1. Introduction

In the Newtonian approximation, the time dependence of the relative position of two distant or spherically symmetric bodies that move in each other’s gravitational field can be written with explicit analytical formulas involving a finite number of terms only when the eccentricity, $e$, is equal to 0 or 1, corresponding to circular and parabolic orbits, respectively [1]. For $0 < e < 1$ and for $e > 1$, such evolution can be obtained by solving for $E$ one of the following two Kepler Equations (KEs) (see e.g., Chapter 4 of Ref. [1]),

$$M = f(e, E) \equiv \begin{cases} E - e \sin E, & e < 1, \\ e \sinh E - E, & e > 1, \end{cases} \tag{1}$$

where $M$ and $E$ are measures of the epoch and the angular position called the mean and the eccentric anomaly, respectively (for convenience, the same symbols are used here for the elliptic and hyperbolic anomalies, even though they are defined in different ways).

For any given value of $e$, Equation (1) can be solved numerically for $E$ by using a root-finding algorithm for the nonlinear equation $f(e, E) - M = 0$, with $f(e, E)$ the right-hand side of Equation (1) (see Ref. [2] for a historical overview). In particular, efficient strategies based on the Newton–Raphson iteration method or one of its variants have been applied to the elliptic [3,4,5,6,7,8,9,10,11,12] and hyperbolic [13,14,15,16] KEs.
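The root-finding approach mentioned above can be illustrated with a minimal Newton–Raphson sketch. It is not taken from any of the cited references: the function name `solve_kepler_newton`, the starting guesses, and the tolerance are assumptions made here for illustration, and the elliptic branch assumes $0 \le M \le \pi$.

```python
import math


def solve_kepler_newton(e, M, tol=1e-13, max_iter=50):
    """Solve the elliptic (e < 1) or hyperbolic (e > 1) Kepler equation for E.

    Assumes 0 <= M <= pi in the elliptic case and M >= 0 in the hyperbolic case.
    """
    if e == 1.0:
        raise ValueError("the parabolic case e = 1 is not covered by this sketch")
    hyperbolic = e > 1.0
    # Starting guesses are conventional choices, not taken from the article.
    if hyperbolic:
        E = math.asinh(M / e)
    else:
        E = M if e < 0.8 else math.pi
    for _ in range(max_iter):
        if hyperbolic:
            residual = e * math.sinh(E) - E - M   # f(e, E) - M for e > 1
            slope = e * math.cosh(E) - 1.0        # df/dE for e > 1
        else:
            residual = E - e * math.sin(E) - M    # f(e, E) - M for e < 1
            slope = 1.0 - e * math.cos(E)         # df/dE for e < 1
        step = residual / slope
        E -= step
        if abs(step) < tol:
            break
    return E
```

Every call requires several evaluations of transcendental functions, which is the cost that polynomial (spline) approximations aim to avoid.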

Moreover, a handful of infinite series solutions of Equation (1) have also been found throughout the centuries (see Chapter 3 in Ref. [2]). The solution for $e < 1$ has been written as an expansion in powers of $e$ [17], or as an expansion in the basis functions $\sin(nM)$ with coefficients proportional to the values $J_n(ne)$ of Bessel functions [18,19]. Levi-Civita [20,21] described a series in powers of the combination $z = e \exp\left(\sqrt{1-e^2}\right) / \left(1+\sqrt{1-e^2}\right)$. Finally, Stumpff found an infinite series expansion in powers of $M$ [22].

This article describes a class of solutions of KEs, Equation (1), in terms of bivariate infinite series in powers of both $e$ and $M$,

$$E = \sum_{k=0}^{\infty} \sum_{q=0}^{\infty} c_{k,q}\, (e - e_c)^k\, (M - M_c)^q, \tag{2}$$

with coefficients $c_{k,q}$ depending on the choice of the base values $(e_c, M_c)$. These solutions converge locally around $(e_c, M_c)$, and can be used to devise 2-D spline algorithms for the numerical computation of the eccentric anomaly $E$ for every $(e, M)$ [23], generalizing the 1-D spline methods that have been described recently [24,25]. Since they do not require the evaluation of transcendental functions in the generation procedure, splines based on polynomial expansions, such as the 1-D cubic spline of Refs. [24,25], or the 2-D quintic spline of Ref. [23], which is based on the solutions presented here, are more convenient for numerical computations than expansions in terms of trigonometric functions.

2. Methods

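Let the unknown exact solution of Equation (1) be

$$E = g(e, M). \tag{3}$$

If the analytical expression of the partial derivatives of $g$ were known, a bivariate Taylor expansion could be written for any choice of base values $e_c$, $M_c$, $E_c = g(e_c, M_c)$, so that Equation (2) would be demonstrated with the coefficients given by

$$c_{k,q} = \frac{1}{k!\, q!} \left. \frac{\partial^{k+q} g}{\partial e^k\, \partial M^q} \right|_{(e_c, M_c)}. \tag{4}$$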

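To obtain such derivatives, we notice that the definitions in Equations (1) and (3) imply the identity,

$$g\big(e, f(e, E)\big) = E. \tag{5}$$

In this expression, where $M = f(e, E)$ is given by Equation (1), $e$ and $E$ are considered to be independent variables. Therefore, by taking the differential, we obtain,

$$\left( \frac{\partial g}{\partial e} + \frac{\partial g}{\partial M} \frac{\partial f}{\partial e} \right) de + \left( \frac{\partial g}{\partial M} \frac{\partial f}{\partial E} - 1 \right) dE = 0. \tag{6}$$

Since $e$ and $E$ are independent, the coefficients of $de$ and $dE$ must cancel separately. This condition can be used to obtain the partial derivatives of $g$. Taking also into account Equations (1) and (3), the cancellation of the coefficient of $dE$ implies,

$$\frac{\partial g}{\partial M} = \frac{\lambda}{1 - e C}, \tag{7}$$

where we have defined the parameter $\lambda$ and the function $C$ such that $\lambda = 1$ and $C = \cos g$, for $e < 1$, or $\lambda = -1$ and $C = \cosh g$, for $e > 1$. As could be expected, Equation (7) coincides with the usual rule for the derivative of the inverse function when $e$ is considered to be a fixed parameter [2,22]. The cancellation of the coefficient of $de$ in Equation (6) implies,

$$\frac{\partial g}{\partial e} = \frac{S}{1 - e C}, \tag{8}$$

with $S$ defined as $S = \sin g$, for $e < 1$, or $S = \sinh g$, for $e > 1$. In the case of the elliptic KE, the result of Equation (8) was used in Ref. [19] to derive an expansion in the basis $\sin(nM)$.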
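The first-order derivatives can be checked numerically. The sketch below assumes that implicit differentiation of the two Kepler equations gives $\partial g/\partial M = \lambda/(1 - eC)$ and $\partial g/\partial e = S/(1 - eC)$, with $\lambda = 1$, $S = \sin E$, $C = \cos E$ for $e < 1$ and $\lambda = -1$, $S = \sinh E$, $C = \cosh E$ for $e > 1$, and compares these closed forms with central finite differences of a Newton solution. The helper names `kepler_E`, `first_derivatives`, and `finite_differences` are hypothetical.

```python
import math


def kepler_E(e, M):
    """Newton solution of the Kepler equation (elliptic for e < 1, hyperbolic for e > 1)."""
    hyperbolic = e > 1.0
    E = math.asinh(M / e) if hyperbolic else (M if e < 0.8 else math.pi)
    for _ in range(60):
        if hyperbolic:
            step = (e * math.sinh(E) - E - M) / (e * math.cosh(E) - 1.0)
        else:
            step = (E - e * math.sin(E) - M) / (1.0 - e * math.cos(E))
        E -= step
        if abs(step) < 1e-14:
            break
    return E


def first_derivatives(e, E):
    """Closed-form (dg/dM, dg/de) evaluated at eccentricity e and eccentric anomaly E."""
    if e < 1.0:
        lam, S, C = 1.0, math.sin(E), math.cos(E)
    else:
        lam, S, C = -1.0, math.sinh(E), math.cosh(E)
    D = 1.0 - e * C
    return lam / D, S / D


def finite_differences(e, M, h=1e-6):
    """Central-difference estimates of (dg/dM, dg/de)."""
    dg_dM = (kepler_E(e, M + h) - kepler_E(e, M - h)) / (2.0 * h)
    dg_de = (kepler_E(e + h, M) - kepler_E(e - h, M)) / (2.0 * h)
    return dg_dM, dg_de
```

For instance, `first_derivatives(0.3, kepler_E(0.3, 1.0))` and `finite_differences(0.3, 1.0)` should agree to within the finite-difference error.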

Equations (

7) and (

8), taken together with Equations (

1) and (

3), can be used for the iterative computation of all the higher order derivatives entering Equation (

2) for

. The calculations can be simplified by expressing all the derivatives in terms of only

,

S, and

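$C$, and using the following identities, which can be derived from the definitions of $S$ and $C$ and Equations (7) and (8),

$$\frac{\partial S}{\partial M} = \frac{\lambda C}{1 - e C}, \qquad \frac{\partial C}{\partial M} = -\frac{S}{1 - e C}, \tag{9}$$

$$\frac{\partial S}{\partial e} = \frac{C S}{1 - e C}, \qquad \frac{\partial C}{\partial e} = -\frac{\lambda S^2}{1 - e C}, \tag{10}$$

where $\lambda = \pm 1$ is the sign parameter entering Equation (7). The second order derivatives can then be obtained by applying the operators $\partial/\partial e$ and $\partial/\partial M$ and the rules of Equations (9) and (10) to the first order derivatives given in Equations (7) and (8). The result is,

$$\frac{\partial^2 g}{\partial M^2} = -\frac{\lambda e S}{(1 - e C)^3}, \tag{11}$$

$$\frac{\partial^2 g}{\partial e\, \partial M} = \frac{\lambda C (1 - e C) - e S^2}{(1 - e C)^3}, \tag{12}$$

$$\frac{\partial^2 g}{\partial e^2} = \frac{2 C S (1 - e C) - \lambda e S^3}{(1 - e C)^3}. \tag{13}$$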

Similarly, the third order derivatives can be obtained by applying the operators $\partial/\partial e$ and $\partial/\partial M$ and the rules of Equations (9) and (10) to the second order derivatives, Equations (11)–(13). The result is,

All the higher order derivatives can be obtained by iterating this procedure. These expressions are exact, but they depend on the unknown function $g$ through $S$ and $C$. Nevertheless, taken together with Equation (3), they can be used to compute 2-D Taylor series solutions of KEs. This can be done by choosing a pair of base values, $e_c$ and $E_c$, corresponding, through Equation (1), to $M_c = f(e_c, E_c)$. The values of the coefficients entering Equations (4) and (2) can then be computed by substituting $\lambda = 1$, $S = \sin E_c$, $C = \cos E_c$, for $e_c < 1$, or $\lambda = -1$, $S = \sinh E_c$, $C = \cosh E_c$, for $e_c > 1$, in the expressions for the derivatives of $g$, and by defining the zeroth order term $c_{0,0} = E_c$. This procedure can be used to build a class of bivariate infinite series solutions of the elliptic and hyperbolic KEs, one for any given choice of base values. Three explicit examples will be given in Section 3.
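The procedure can be sketched in code for the lowest orders. The example below builds only the terms with total degree up to 2, using first- and second-order derivatives obtained by implicit differentiation of the Kepler equations (an assumption of this sketch, worked out here rather than copied from the article). The names `taylor2_coefficients` and `taylor2_eval` and the dictionary layout are hypothetical.

```python
import math


def taylor2_coefficients(e_c, E_c):
    """Return M_c and the Taylor coefficients c[(k, q)] with k + q <= 2.

    k is the power of (e - e_c) and q the power of (M - M_c).
    """
    if e_c < 1.0:
        lam, S, C = 1.0, math.sin(E_c), math.cos(E_c)
        M_c = E_c - e_c * S            # elliptic Kepler equation
    else:
        lam, S, C = -1.0, math.sinh(E_c), math.cosh(E_c)
        M_c = e_c * S - E_c            # hyperbolic Kepler equation
    D = 1.0 - e_c * C
    c = {
        (0, 0): E_c,                                          # zeroth order term
        (1, 0): S / D,                                        # dg/de
        (0, 1): lam / D,                                      # dg/dM
        (2, 0): (2.0 * C * S * D - lam * e_c * S**3) / (2.0 * D**3),  # (1/2) d2g/de2
        (1, 1): (lam * C * D - e_c * S**2) / D**3,            # d2g/(de dM)
        (0, 2): -lam * e_c * S / (2.0 * D**3),                # (1/2) d2g/dM2
    }
    return M_c, c


def taylor2_eval(e, M, e_c, M_c, c):
    """Evaluate the degree-2 bivariate Taylor polynomial at (e, M)."""
    de, dM = e - e_c, M - M_c
    return sum(coef * de**k * dM**q for (k, q), coef in c.items())
```

With `e_c = 0.5` and `E_c = math.pi / 2`, no Kepler equation has to be solved to obtain `M_c`, and the polynomial can be compared against the residual of the Kepler equation at nearby points. Higher degrees would require the third and higher derivatives obtained by iterating the same rules.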

The determination of the radius of convergence for the univariate series solutions of KEs has been a formidable mathematical problem (see Chapter 6 of Ref. [2]). In the case of the bivariate series of Equations (2) and (4), the region of convergence in the $(e, M)$ plane can be estimated numerically as discussed in Section 3.

3. Examples, Discussion and Results

In this section, three examples of bivariate infinite series solutions of KEs are given. They have been obtained from Equations (2) and (4) by applying the methods discussed in Section 2 for the computation of the derivatives of $g$, for three different choices of the base values $(e_c, M_c)$. All the non-vanishing terms up to fifth order are shown explicitly. Since $g(e, -M) = -g(e, M)$, it is sufficient to solve KEs only for positive values of $M$. Moreover, for $e < 1$

the

M domain can be reduced to the interval

, and then the solution for every

M can be obtained by using the periodicity of

f and

g.
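A minimal sketch of such a reduction for the elliptic case follows. It assumes that the target interval is $0 \le M \le \pi$ and uses the two standard properties $g(e, M + 2\pi k) = g(e, M) + 2\pi k$ and $g(e, -M) = -g(e, M)$; the function name `solve_elliptic_reduced` and the inner Newton solver are illustrative choices, not the article's method.

```python
import math


def solve_elliptic_reduced(e, M, tol=1e-13):
    """Solve the elliptic Kepler equation for any real M via range reduction."""
    two_pi = 2.0 * math.pi
    k = round(M / two_pi)             # number of whole revolutions
    M_red = M - two_pi * k            # reduced to [-pi, pi] by periodicity
    sign = -1.0 if M_red < 0.0 else 1.0
    m = abs(M_red)                    # reduced to [0, pi] by antisymmetry
    E = m if e < 0.8 else math.pi     # conventional starting guess (assumption)
    for _ in range(50):
        step = (E - e * math.sin(E) - m) / (1.0 - e * math.cos(E))
        E -= step
        if abs(step) < tol:
            break
    return two_pi * k + sign * E      # undo the reduction
```

Any solver or polynomial approximation valid on the reduced interval can replace the Newton loop without changing the surrounding bookkeeping.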

In all cases, it is convenient to define approximate solutions

obtained by truncating the infinite series of Equation (

2) keeping only the terms with

, so that

with coefficients given by Equation (

4). The errors

of the approximate solutions

can then be evaluated in a self consistent way,

From a practical point of view, the convergence of the infinite series for certain values of

means that

should tend to decrease for increasing

n. This idea is used for obtaining an estimate of the region of convergence in the

parameter space by comparing the average errors for lower and higher values of

n with the following condition,

A more refined criterion of convergence can be obtained by studying the scaling behavior of the solutions. For this purpose, every point of the

plane is expressed in terms of polar variables

,

, defined as

All the polynomials

and their errors

can then be thought of as functions of

and

. For a given value of

, these functions are one dimensional, depending only on

, which is a measure of the distance from the center

in the

plane. Thus,

can play a role similar to that of the embedding parameter

q of the homotopy analysis method [

26], with the difference that

will not be assumed to be smaller than 1. Actually, the parameters

and

will only be used in the intermediate steps and will disappear from the final criteria of convergence.

If the bivariate series of Equations (

2) and (

4) converges in a certain point

along a fixed direction

, the error of the

approximation can be written as

where the derivative entering the definition of

has to be computed for an unknown value

. By plotting the actual numerical errors

in a direction

for a given base point

, it can be seen that the

dependence of

in the convergence region is usually much milder than that of the factor

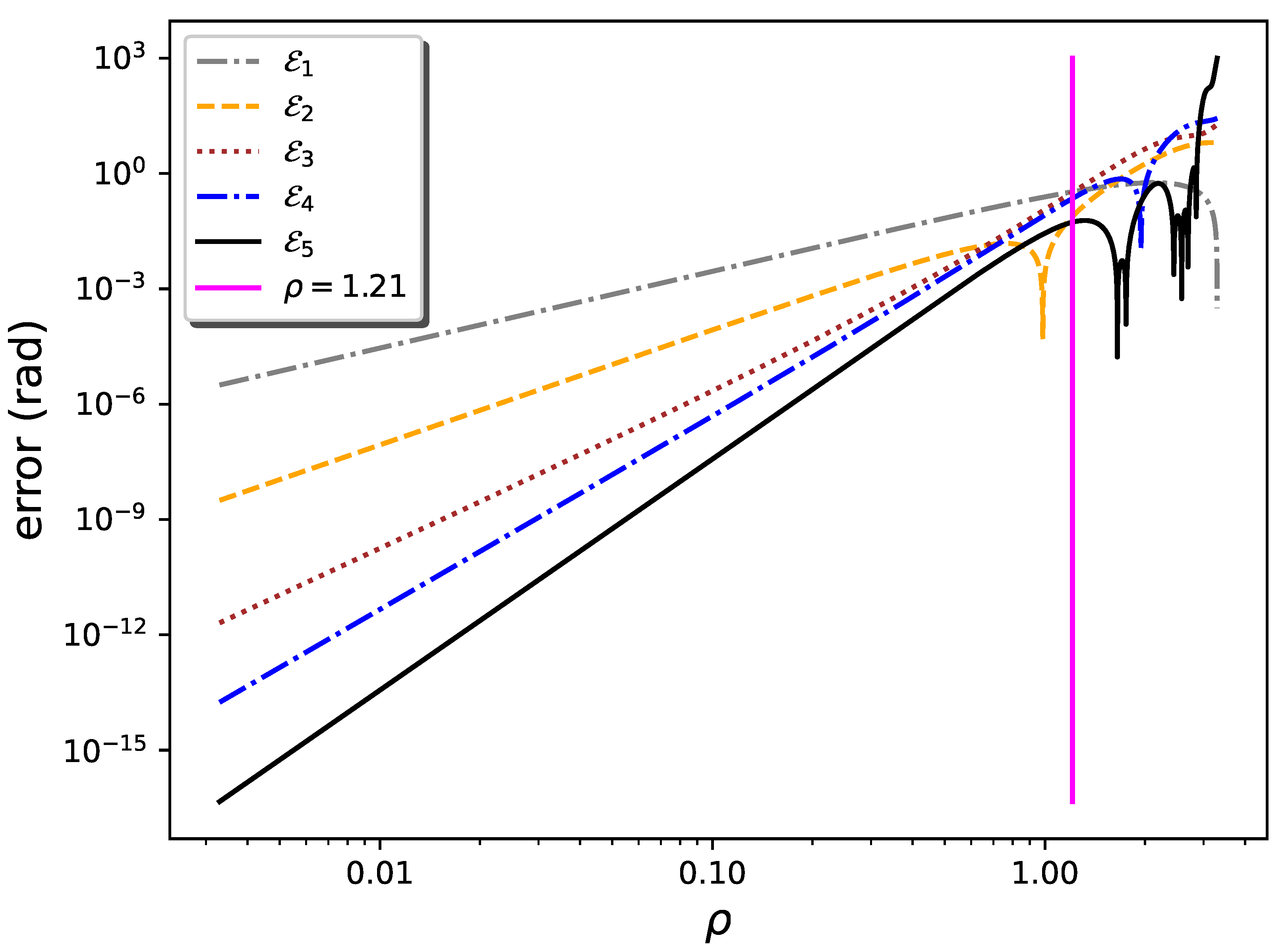

. As an example,

Figure 1 shows such plots for the series centered around the point

, choosing the direction identified by the diagonal line

(corresponding to

). Along this line, the error

is at the level of arithmetic double precision (

) for

. For almost all values of

(vertical magenta line in

Figure 1), corresponding to

and

rad, the errors

decrease as

n increases, as expected for a convergent series, except for the occasional inversion due to cancellations that occur in one of the

(

around

in the figure). For

,

,

, and

mix, and the series can be expected to diverge.

Similar results can be obtained for different directions

and base points

. In general, the linear behavior of

in the convergence region corresponds to

with a very good approximation, so that

and

scale with the same power of

. This behavior can be made more regular by averaging out the possible oscillations that occur in special directions for the individual

. This can be done by summing up different

with the corresponding scale exponent, as in the following combinations:

which scales as

, like

but with greater regularity;

which scales as

, like

; and

which scale as

, like

but, again, with greater regularity. Equation (

22) and these scaling laws are expected to hold only when the Taylor series converges. Therefore, two additional numerical criteria of convergence are given by the inequalities

These conditions ensure that the errors not only tend to decrease for increasing

n, but they also scale as expected when the series is convergent. For the solution based on

and evaluated along the diagonal direction

, these conditions give the limiting value

shown in

Figure 1. By inspecting the figure it can be seen that the bounds of Equation (

26) produce a reliable result in this case. Moreover, as shown in

Section 3.1, Equation (

26) also reproduces the known radius of convergence of Lagrange series [

2,

17] in the limit where it can be compared with our bivariate series. The bounds of Equation (

26) are usually more stringent than those obtained from Equation (

20), but there may be special directions for which the opposite may be true. Hereafter, a conservative definition of the region of convergence will be used by imposing Equations (

20) and (

26) at the same time.

3.1. Bivariate Infinite Series Solution of the Elliptic Kepler Equation around $e_c = 0$, $M_c = 0$

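Choosing $e_c = 0$, $E_c = 0$, so that $M_c = 0$ rad, $\lambda = 1$, $S = 0$, $C = 1$, the series of Equations (2) and (4) becomes,

$$E = M + eM + e^2 M + e^3 M - \frac{e M^3}{6} + e^4 M - \frac{2 e^2 M^3}{3} + \dots \tag{27}$$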

This case can be compared with Lagrange’s [17] and Stumpff’s [22] univariate series, which are,

$$E = M + \sum_{n=1}^{\infty} \frac{e^n}{n!} \frac{d^{\,n-1}}{dM^{\,n-1}} \left( \sin^n M \right), \tag{28}$$

and

$$E = \frac{M}{1-e} - \frac{e}{(1-e)^4} \frac{M^3}{3!} + \frac{9e^2 + e}{(1-e)^7} \frac{M^5}{5!} - \dots \tag{29}$$

(see Ref. [2] Equation (3.25)). It is easy to see that the Taylor expansions (up to fifth order) of Equations (28) and (29) around $M = 0$ and $e = 0$, respectively, coincide with the bivariate series of Equation (27). Of course, their expansions in a neighborhood of $(e, M) = (0, 0)$ have to coincide since all these series solve the same equation around the same point. However, the complete series are different from one another, and their numerical values will also be increasingly different for increasing distance from the base point $(e_c, M_c) = (0, 0)$. As a consequence, their regions of convergence will also be different.
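The agreement near the origin can be illustrated numerically. The sketch below assumes that the terms of total degree up to five of the expansion around $e = 0$, $M = 0$ are $M(1 + e + e^2 + e^3 + e^4) - eM^3/6 - 2e^2M^3/3$, as obtained here by expanding the first terms of the Lagrange and Stumpff series, and compares this polynomial with a three-term partial sum of the Stumpff series. All function names are hypothetical.

```python
import math


def quintic_about_origin(e, M):
    """Terms with total degree <= 5 of the expansion around e = 0, M = 0."""
    return (M * (1.0 + e + e**2 + e**3 + e**4)
            - e * M**3 / 6.0
            - 2.0 * e**2 * M**3 / 3.0)


def stumpff_partial_sum(e, M):
    """First three terms of the series in powers of M (valid for e < 1)."""
    return (M / (1.0 - e)
            - e * M**3 / (6.0 * (1.0 - e)**4)
            + (9.0 * e**2 + e) * M**5 / (120.0 * (1.0 - e)**7))


def kepler_residual(e, M, E):
    """Absolute residual of the elliptic Kepler equation for a trial value E."""
    return abs(E - e * math.sin(E) - M)
```

Close to the origin both approximations leave a very small residual, and the gap between them grows with the distance from the base point, in line with the remark that the complete series differ.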

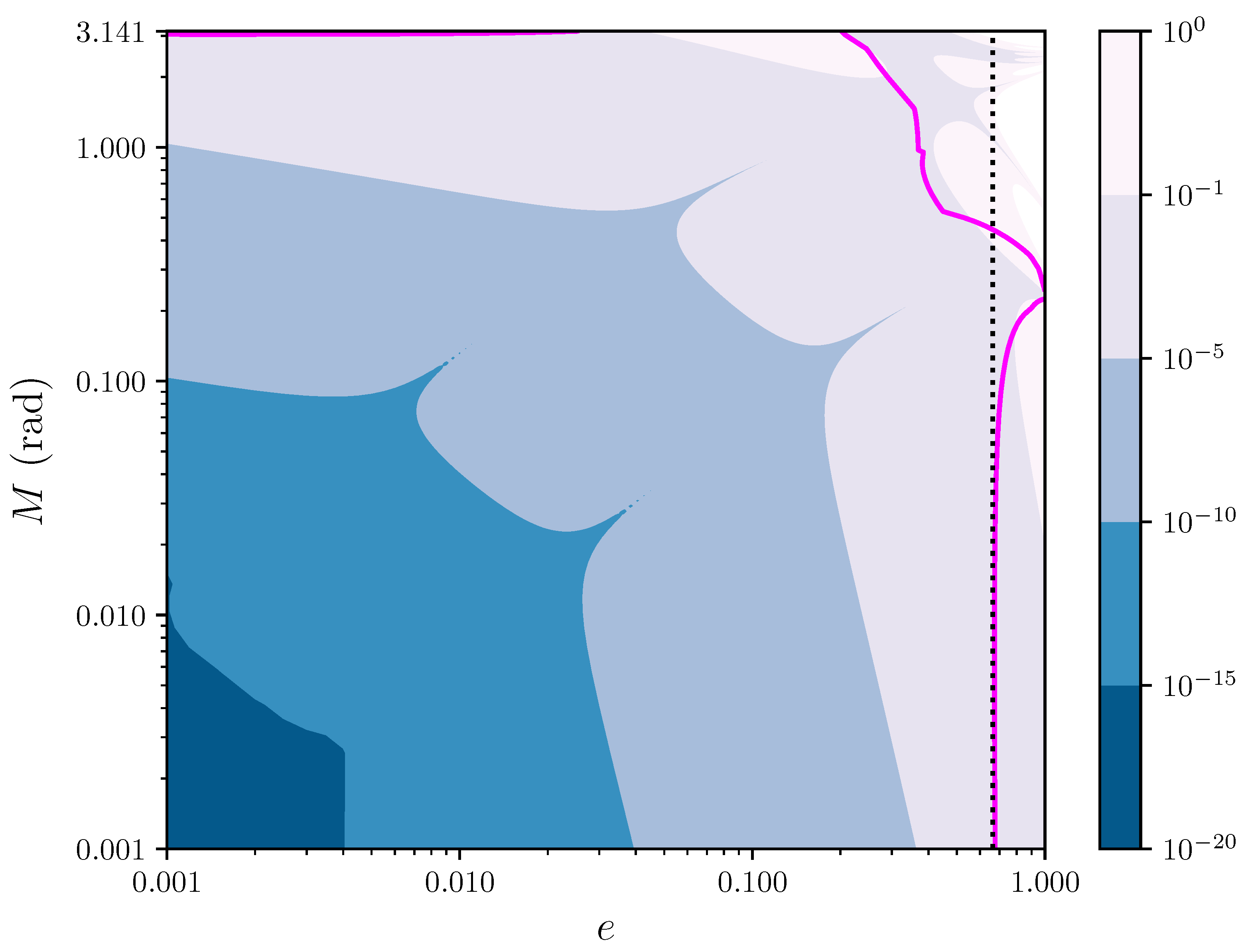

Figure 2 shows the contour levels in the

plane of the error

affecting the fifth degree polynomial approximation,

, as given by Equation (

27). The error

is kept below ∼

rad for

and

, and is reduced to the level ∼

rad for

and

. Moreover, the fifth order approximation reaches machine precision

in an entire neighborhood of size

,

rad around the point $(e_c, M_c) = (0, 0)$. The continuous magenta curve marks the boundary of the region of convergence of the bivariate series of Equation (27), as estimated with Equations (20) and (26). This can be compared with the limit $e < 0.6627\ldots$ (the Laplace limit) for the convergence of Lagrange’s univariate series (see page 26 of Ref. [

2]), which is represented by a vertical dotted line in the figure. For

, our limit for the convergence of the bivariate series agrees very well with that of Lagrange’s series, as it could be expected since in such regime the first terms of the Taylor expansion for

provide a very good approximation. Not surprisingly, for larger values of $M$ the vertical dotted line separates from the magenta line, so that the region of convergence of the bivariate series is different from that of Lagrange.

3.2. Bivariate Infinite Series Solution of the Elliptic Kepler Equation around ,

Choosing

,

, so that

,

,

,

, and defining

and

, the series of Equations (

2) and (

4) becomes,

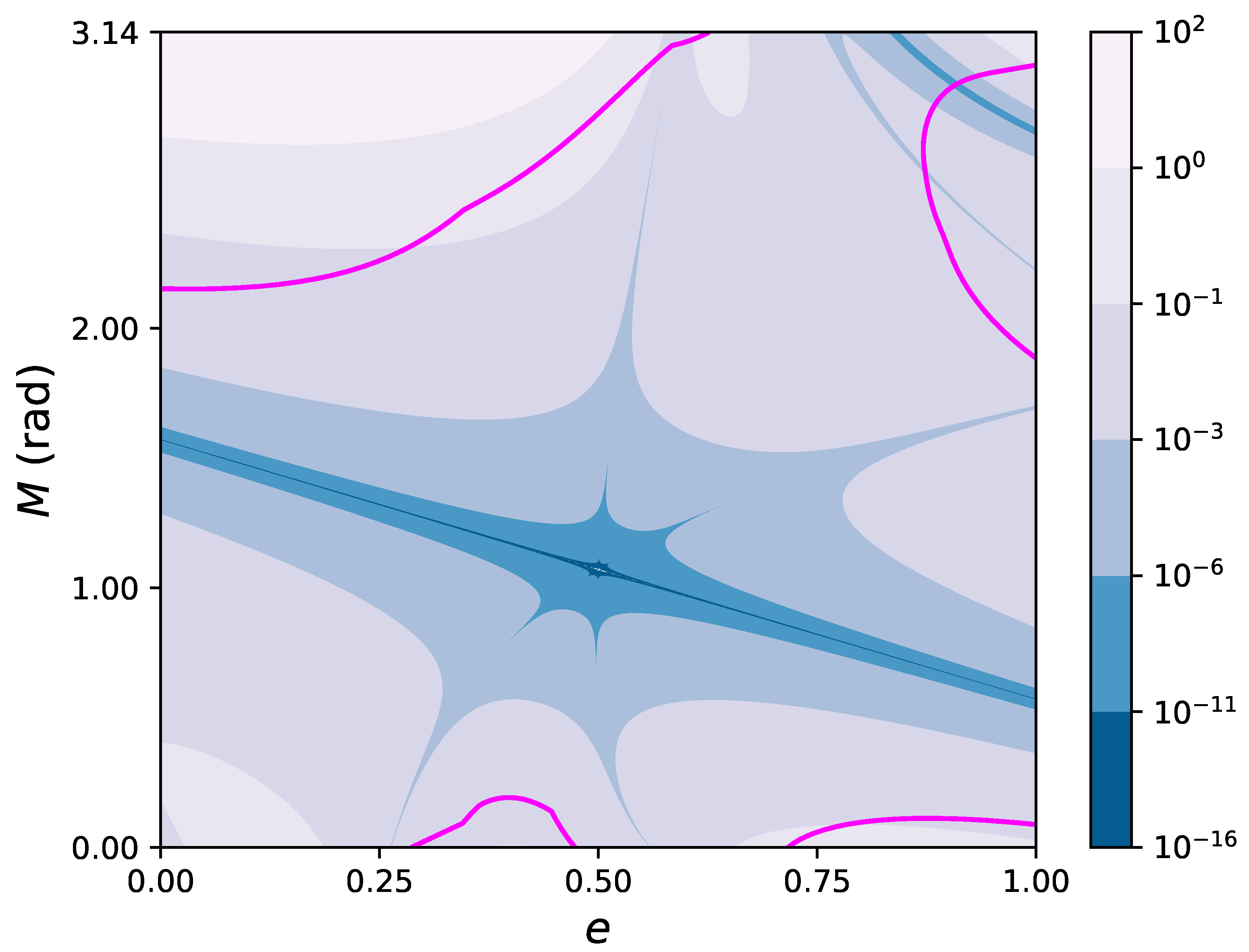

Figure 3 shows the contour levels in the

plane of the error

affecting the fifth degree polynomial approximation,

, as given by Equation (

30). The continuous magenta curve marks the boundary of the region of convergence, as estimated with Equations (

20) and (

26). It can be seen that the Taylor series based at the midpoint

converges in a significant part of the

plane. Moreover, the fifth degree polynomial reaches machine precision

in an elongated neighborhood of the point

along a diagonal line crossing the entire

e domain from

to

, with transverse size (along the

M direction) ranging from ∼

rad close to the endpoints, to ∼

rad around the center

.

3.3. Bivariate Infinite Series Solution of the Hyperbolic Kepler Equation around ,

Choosing

,

, so that

,

,

,

, defining

, the series of Equations (

2) and (

4) becomes,

where now $E$ and $M$ indicate the (dimensionless) hyperbolic anomalies.

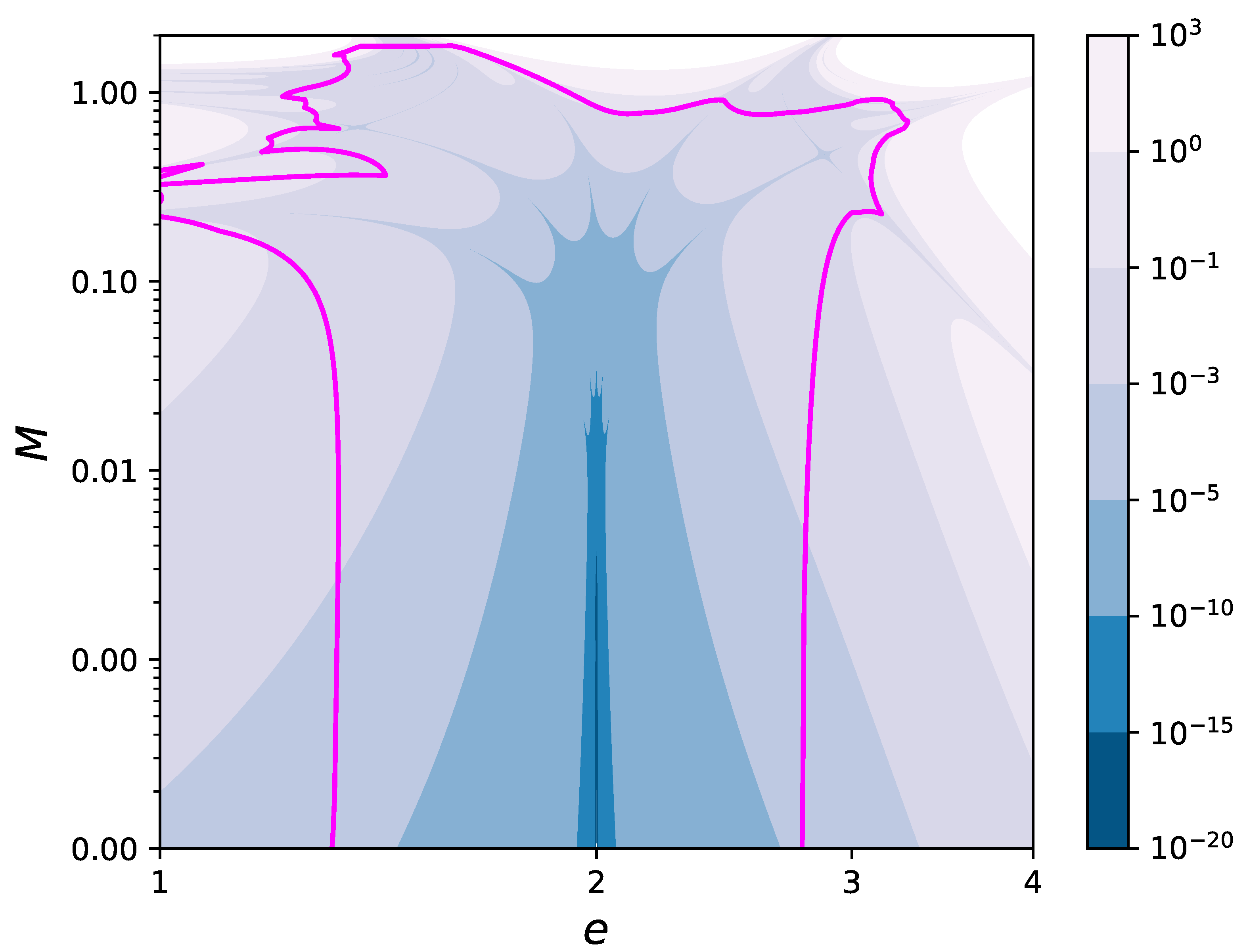

For the hyperbolic motion, the values of $e$ and $M$ can vary in infinite ranges, $e > 1$, $M \ge 0$ (due to the symmetry for $M \to -M$). In

Figure 4, the contour levels in the

plane of the error

for the solution (

31) have been drawn in the region

,

. This region has been chosen in such a way that the plot contains the magenta curve marking the boundary of the region of convergence, as estimated with Equations (

20) and (

26). Moreover, the fifth degree polynomial reaches machine precision

in an entire neighborhood of size

,

, around the point

.

4. Conclusions

I described an analytical procedure for the exact computation of all the higher-order partial derivatives of the elliptic and hyperbolic eccentric anomalies with respect to both the eccentricity $e$ and the mean anomaly $M$. Although such derivatives depend implicitly on the solution of KE, they can be computed explicitly by choosing a pair of base values $e_c$ and $E_c$ for the eccentricity and the eccentric anomaly, so that the corresponding value $M_c$ of the mean anomaly can be obtained without solving KE. For any such choice of $(e_c, E_c)$, an infinite Taylor series expansion in both $M$ and $e$ can then be written, which is expected to converge in a suitable neighborhood of $(e_c, M_c)$. A procedure for estimating the actual size of the region of convergence has also been given.

Three explicit examples of such series were then provided, two for the elliptic and one for the hyperbolic KE. Each of them, for a fixed base point, turns out to converge in large parts of the $(e, M)$ plane. For $(e, M)$ sufficiently close to $(e_c, M_c)$, the polynomial obtained by truncating the infinite series at the fifth degree reaches an accuracy at the level of machine double precision. Further away from $(e_c, M_c)$, but still within the region of convergence, higher order terms should be introduced to maintain such an accuracy.

Since these new solutions converge locally around $(e_c, M_c)$, a suitable set of them, centered around different $(e_c, M_c)$ and truncated at a certain degree, can be used to design an algorithm for the numerical computation of the function $g(e, M)$ for every value of $(e, M)$. The resulting polynomials will form a 2-D spline [23], generalizing the 1-D spline that has been proposed in Refs. [24,25] for solving KE for every $M$ when $e$ is fixed. This bivariate spline may be used for accelerating computations involving the repetitive solution of Kepler’s equation for several different values of $e$ and $M$ [23], as for exoplanet search [27,28] or for the implementation of Encke’s method [1].