1. Introduction

This paper has been motivated by several articles dedicated to estimating the solutions of high-order boundary-value problems (BVPs), including fourth-order [1], sixth-order [2], eighth-order [3–19], -order [20], and higher-order [21–24] BVPs. This paper focuses specifically on eighth-order BVPs because of the volume of research done on them, which is covered in Refs. [3–19]. These references list many existing scientific problems that require solutions of high-order BVPs. For example, eighth-order BVPs appear in the physics of specific hydrodynamic stability problems (an infinite horizontal layer of fluid heated from below and under rotation) when instability sets in as overstability [25], and in orthotropic cylindrical shells under line load [26]. From a theoretical point of view, the existence and uniqueness of solutions of high-order BVPs are presented in Ref. [22] and studied further in Ref. [27].

The technique presented in this paper is rooted in functional interpolation expressions, which are particularly well suited to solving differential equations. This was shown in Ref. [28], the seminal paper on the Theory of Functional Connections (TFC), and in subsequent articles that applied it to linear [29] and nonlinear [30] ODEs. The TFC formalized the method of analytical constraint embedding (a.k.a. functional interpolation), since it provides expressions representing all functions that satisfy a set of specified constraints.

The general equation used to derive these interpolating expressions, named constrained expressions, is

$$y(x, g(x)) = g(x) + \sum_{k=1}^{n} \eta_k \, s_k(x), \tag{1}$$

where $g(x)$ is the free function, $\eta_k$ are unknown coefficients to be solved by imposing the $n$ constraint conditions, and $s_k(x)$ are “support functions,” which are a set of $n$ linearly independent functions. In prior work [29,30], as well as in this paper, the support function set has been selected as the monomial set.
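As a minimal sketch of Equation (1), assume two hypothetical constraints, $y(0) = 1$ and $y(1) = 2$, and the monomial support functions $s_1(x) = 1$ and $s_2(x) = x$. Imposing the constraints yields a 2 × 2 linear system for $\eta_1$ and $\eta_2$ whose right-hand side depends on the free function only through $g(0)$ and $g(1)$. As a result, every choice of $g$ produces a function that meets both constraints. The constraint values, the helper names (`support`, `constrained_expression`), and the sample free functions are illustrative assumptions and do not come from the paper.

```python
import numpy as np

X_C = np.array([0.0, 1.0])   # hypothetical constraint locations
Y_C = np.array([1.0, 2.0])   # hypothetical constraint values: y(0) = 1, y(1) = 2


def support(x):
    """Monomial support functions s_1(x) = 1 and s_2(x) = x, one column each."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    return np.column_stack([np.ones_like(x), x])


def constrained_expression(g):
    """Return y(x) = g(x) + eta_1 s_1(x) + eta_2 s_2(x) for the free function g."""
    S = support(X_C)                          # support functions at the constraint points
    eta = np.linalg.solve(S, Y_C - g(X_C))    # impose y(x_c) = y_c on Equation (1)
    return lambda x: g(np.atleast_1d(np.asarray(x, dtype=float))) + support(x) @ eta


# Three arbitrary free functions; each resulting y satisfies both constraints.
for g in (np.sin, np.exp, lambda x: x**3 - 5.0 * x):
    y = constrained_expression(g)
    print(y(X_C))
```

With $g(x) = 0$, the same sketch gives $\boldsymbol{\eta} = (1, 1)$ and the interpolating line $y(x) = 1 + x$.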

The $\eta_k$ coefficients are computed by imposing the constraints on Equation (1). Once the expressions of the $\eta_k$ coefficients are obtained, they are back-substituted into Equation (1) to produce the constrained expression, which is a functional representing all possible functions that satisfy the specified set of constraints. Constrained expressions have already been applied in many areas of study. These include solving low-order differential equations [29,30], hybrid systems [31], and optimal control problems such as energy-optimal landing, energy-optimal intercept [32], and fuel-optimal landing [33]. The technique has also been used successfully to embed constraints into machine learning frameworks [34,35], in quadratic and nonlinear programming [36], and in a variety of other applications [37]. In addition, it has been generalized to $n$ dimensions [38,39], providing functionals that represent all possible $n$-dimensional manifolds subject to constraints on the value and arbitrary-order derivatives of $(n-1)$-dimensional manifolds.

2. Derivation of the Constrained Expression for Eighth-Order Boundary-Value Problems

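In this paper, we consider the solution of an eighth-order BVP via the TFC. In general, the problem can be posed in its implicit form as

$$F\big(x, y, y^{(1)}, \ldots, y^{(8)}\big) = 0 \quad \text{subject to} \quad y^{(i)}(x_0) = y_0^{(i)}, \quad y^{(i)}(x_f) = y_f^{(i)}, \quad i = 0, 1, 2, 3, \tag{2}$$

where the notation $y^{(n)}$ denotes the $n$th derivative of $y$ with respect to $x$. To embed the eight constraints, we set $n = 8$ in Equation (1), which leads to the expression

$$y(x, g(x)) = g(x) + \boldsymbol{\eta}^{\mathrm{T}}\mathbf{s}(x), \tag{3}$$

where $\boldsymbol{\eta} = \{\eta_1, \eta_2, \ldots, \eta_8\}^{\mathrm{T}}$ and, using monomial support functions, $\mathbf{s}(x) = \{1, x, x^2, \ldots, x^7\}^{\mathrm{T}}$.

According to the theory developed in Ref. [28], a system of equations can be constructed by evaluating the candidate function defined by Equation (3) at the constraint locations and setting it equal to the specified constraint values. For example, the constraint on the function at the initial value (i.e., $y(x_0) = y_0^{(0)}$) is applied as

$$y_0^{(0)} = g(x_0) + \boldsymbol{\eta}^{\mathrm{T}}\mathbf{s}(x_0).$$

The same procedure applies to the remaining seven constraint conditions, and the resulting system of equations can be expressed in the compact form

$$\begin{bmatrix} \mathbf{s}^{\mathrm{T}}(x_0) \\ \mathbf{s}^{(1)\mathrm{T}}(x_0) \\ \mathbf{s}^{(2)\mathrm{T}}(x_0) \\ \mathbf{s}^{(3)\mathrm{T}}(x_0) \\ \mathbf{s}^{\mathrm{T}}(x_f) \\ \mathbf{s}^{(1)\mathrm{T}}(x_f) \\ \mathbf{s}^{(2)\mathrm{T}}(x_f) \\ \mathbf{s}^{(3)\mathrm{T}}(x_f) \end{bmatrix} \boldsymbol{\eta} = \begin{Bmatrix} y_0^{(0)} - g(x_0) \\ y_0^{(1)} - g^{(1)}(x_0) \\ y_0^{(2)} - g^{(2)}(x_0) \\ y_0^{(3)} - g^{(3)}(x_0) \\ y_f^{(0)} - g(x_f) \\ y_f^{(1)} - g^{(1)}(x_f) \\ y_f^{(2)} - g^{(2)}(x_f) \\ y_f^{(3)} - g^{(3)}(x_f) \end{Bmatrix}.$$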

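This system of equations can be solved for the unknown $\boldsymbol{\eta}$ coefficients, and the result can be organized in the form

$$y(x, g(x)) = g(x) + \sum_{i=0}^{3}\phi_{i+1}(x)\Big(y_0^{(i)} - g^{(i)}(x_0)\Big) + \sum_{i=0}^{3}\phi_{i+5}(x)\Big(y_f^{(i)} - g^{(i)}(x_f)\Big), \tag{4}$$

where the $\phi_k(x)$ terms, called switching functions, depend only on the independent variable. This technique is general for any domain $x \in [x_0, x_f]$. For example, the general expression for $\phi_1(x)$ is

$$\phi_1(x) = (1 - t)^4\big(1 + 4t + 10t^2 + 20t^3\big), \qquad t = \frac{x - x_0}{x_f - x_0}.$$

However, all problems presented in this paper except Problem #5 are defined on the domain $x \in [0, 1]$, so for ease of presentation we express the switching functions on this integration range. For completeness, the switching functions of Equation (4) for two general points $x_0$ and $x_f$ are provided in Appendix A. The $\phi_k(x)$ terms for $x \in [0, 1]$ are summarized below in Equations (5)–(12).

$$\phi_1(x) = 1 - 35x^4 + 84x^5 - 70x^6 + 20x^7 \tag{5}$$

$$\phi_2(x) = x - 20x^4 + 45x^5 - 36x^6 + 10x^7 \tag{6}$$

$$\phi_3(x) = \tfrac{1}{2}x^2 - 5x^4 + 10x^5 - \tfrac{15}{2}x^6 + 2x^7 \tag{7}$$

$$\phi_4(x) = \tfrac{1}{6}x^3 - \tfrac{2}{3}x^4 + x^5 - \tfrac{2}{3}x^6 + \tfrac{1}{6}x^7 \tag{8}$$

$$\phi_5(x) = 35x^4 - 84x^5 + 70x^6 - 20x^7 \tag{9}$$

$$\phi_6(x) = -15x^4 + 39x^5 - 34x^6 + 10x^7 \tag{10}$$

$$\phi_7(x) = \tfrac{5}{2}x^4 - 7x^5 + \tfrac{13}{2}x^6 - 2x^7 \tag{11}$$

$$\phi_8(x) = -\tfrac{1}{6}x^4 + \tfrac{1}{2}x^5 - \tfrac{1}{2}x^6 + \tfrac{1}{6}x^7 \tag{12}$$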
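The switching functions can also be computed numerically, which gives an independent check on closed-form expressions. The sketch below assumes the eight constraints are $y, y', y'', y'''$ at $x = 0$, followed by the same four at $x = 1$, with monomial support functions $1, x, \ldots, x^7$. Inverting the 8 × 8 constraint matrix gives the monomial coefficients of all eight switching functions at once. Each switching function should then return one for its own constraint and zero for the other seven. The helper names are hypothetical, and the factored forms in `phi_closed` are our own derivation for this constraint ordering.

```python
import numpy as np
from math import factorial

ORDERS = (0, 1, 2, 3)    # derivative orders constrained at each end (assumed)
POINTS = (0.0, 1.0)      # x0 and xf on the unit domain


def monomial_deriv(x, n, deg=7):
    """n-th derivative of the support functions [1, x, ..., x**deg] at scalar x."""
    return np.array([factorial(k) / factorial(k - n) * x ** (k - n) if k >= n else 0.0
                     for k in range(deg + 1)])


# Row j of M applies constraint j to the support functions.
M = np.array([monomial_deriv(xc, i) for xc in POINTS for i in ORDERS])
# Column j of Phi holds the monomial coefficients of the (j+1)-th switching function.
Phi = np.linalg.inv(M)


def phi_numeric(x, n=0):
    """n-th derivative of all eight switching functions at scalar x."""
    return monomial_deriv(x, n) @ Phi


def phi_closed(x):
    """Factored closed forms on [0, 1] for the ordering above (our derivation)."""
    u = 1.0 - x
    return np.array([
        u**4 * (1 + 4*x + 10*x**2 + 20*x**3),   # y(0)
        x * u**4 * (1 + 4*x + 10*x**2),         # y'(0)
        x**2 / 2 * u**4 * (1 + 4*x),            # y''(0)
        x**3 / 6 * u**4,                        # y'''(0)
        x**4 * (1 + 4*u + 10*u**2 + 20*u**3),   # y(1)
        -u * x**4 * (1 + 4*u + 10*u**2),        # y'(1)
        u**2 / 2 * x**4 * (1 + 4*u),            # y''(1)
        -u**3 / 6 * x**4,                       # y'''(1)
    ])


# Kronecker-delta property: constraint j applied to phi_k gives 1 if j == k, else 0.
D = np.array([phi_numeric(xc, i) for xc in POINTS for i in ORDERS])
print(np.allclose(D, np.eye(8)))
print(max(np.abs(phi_numeric(x) - phi_closed(x)).max() for x in np.linspace(0.0, 1.0, 11)))
```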

With the $\phi_k(x)$ terms solved, the constrained expression is fully defined and represents all possible functions satisfying the boundary-value constraints. Substituting the constrained expression and its derivatives into the original differential equation yields a new differential equation in terms of $g(x)$ and its derivatives. This new differential equation, which has no constraints, can be written in the compact form

$$\tilde{F}\big(x, g, g^{(1)}, \ldots, g^{(8)}\big) = 0. \tag{13}$$

To solve this differential equation, prior work [29,30,32,33,37] has expanded $g(x)$ as a linear combination of $m$ basis functions,

$$g(x) = \boldsymbol{\xi}^{\mathrm{T}}\mathbf{h}(z),$$

where $z = z(x)$, $\boldsymbol{\xi}$ is an $m \times 1$ vector of unknown coefficients, and $\mathbf{h}(z)$ is an $m \times 1$ vector containing the $m$ basis functions (in this paper, Chebyshev orthogonal polynomials are used). Particular attention must be paid when combining this expansion with least-squares. The basis functions in $\mathbf{h}(z)$ must be linearly independent of the support functions $\mathbf{s}(x)$ for the system to be solvable via least-squares. If any term in $\mathbf{h}(z)$ is not linearly independent of the support functions, the matrix to be inverted in the least-squares step becomes rank deficient, and therefore singular or severely ill-conditioned. Such terms must therefore be skipped in the expansion of $g(x)$. In this problem, the support functions span the monomial terms from $x^0$ to $x^7$, so the Chebyshev polynomial expansion must start from $T_8(z)$. In general, the basis functions may also be defined on a range different from the problem domain (e.g., Chebyshev and Legendre polynomials are defined on $z \in [-1, +1]$, Fourier series on $z \in [-\pi, +\pi]$, etc.). The basis domain ($z$) must then be mapped into the problem domain ($x$), which can be done using the simple linear equations

$$z = z_0 + \frac{z_f - z_0}{x_f - x_0}\,(x - x_0) \qquad \text{and} \qquad x = x_0 + \frac{x_f - x_0}{z_f - z_0}\,(z - z_0).$$
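To see why the expansion must start at $T_8$, note that for $k \le 7$ the mapped polynomial $T_k(z(x))$ is itself a polynomial of degree at most seven in $x$. It therefore lies in the span of the monomial support functions and is reproduced exactly by the switching-function part of Equation (4). Its contribution to the constrained expression, namely $T_k(z(x))$ minus its two-point Hermite interpolant, is then identically zero, which produces a zero column in the least-squares matrix. The sketch below checks this numerically on the unit domain $x \in [0, 1]$ mapped to $z \in [-1, 1]$ (so $c = 2$). It assumes the eight constraints are the derivatives of orders 0 to 3 at both ends, and all helper names are hypothetical.

```python
import numpy as np
from math import factorial
from numpy.polynomial import chebyshev as C

X0, XF = 0.0, 1.0                  # unit problem domain
Z0, ZF = -1.0, 1.0                 # Chebyshev basis domain
c = (ZF - Z0) / (XF - X0)          # dz/dx of the linear map (here c = 2)
ORDERS = (0, 1, 2, 3)              # constrained derivative orders at each end (assumed)


def z_of_x(x):
    return Z0 + c * (x - X0)


def monomial_deriv(x, n, deg=7):
    """n-th derivative of the support functions [1, x, ..., x**deg] at scalar x."""
    return np.array([factorial(k) / factorial(k - n) * x ** (k - n) if k >= n else 0.0
                     for k in range(deg + 1)])


def cheb_deriv_x(x, k, n):
    """n-th x-derivative of T_k(z(x)); chebder's scl multiplies by c once per derivative."""
    coef = np.zeros(k + 1)
    coef[k] = 1.0
    return C.chebval(z_of_x(x), C.chebder(coef, m=n, scl=c))


M_inv = np.linalg.inv(np.array([monomial_deriv(xc, i) for xc in (X0, XF) for i in ORDERS]))


def a_column(x, k):
    """T_k(z(x)) minus the switching-function part of Equation (4) applied to T_k."""
    constraints = np.array([cheb_deriv_x(xc, k, i) for xc in (X0, XF) for i in ORDERS])
    phi = monomial_deriv(x, 0) @ M_inv             # the eight switching functions at x
    return cheb_deriv_x(x, k, 0) - phi @ constraints


xs = np.linspace(X0, XF, 51)
for k in range(11):
    # round-off level for k <= 7, clearly nonzero for k >= 8
    print(k, max(abs(a_column(x, k)) for x in xs))
```

For $k = 8$, the Hermite remainder formula gives the nonzero column exactly. $T_8(2x - 1)$ minus its two-point Hermite interpolant equals $32768\,x^4(1 - x)^4$, which is 128 at $x = 0.5$.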

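Furthermore, all subsequent derivatives of the free function are defined as

$$\frac{\mathrm{d}^n g}{\mathrm{d}x^n} = \boldsymbol{\xi}^{\mathrm{T}}\,\frac{\mathrm{d}^n \mathbf{h}(z)}{\mathrm{d}z^n}\left(\frac{\mathrm{d}z}{\mathrm{d}x}\right)^{n},$$

where, by defining

$$c := \frac{\mathrm{d}z}{\mathrm{d}x} = \frac{z_f - z_0}{x_f - x_0}, \tag{14}$$

the expression can be simplified to

$$\frac{\mathrm{d}^n g}{\mathrm{d}x^n} = c^n\,\boldsymbol{\xi}^{\mathrm{T}}\,\frac{\mathrm{d}^n \mathbf{h}(z)}{\mathrm{d}z^n}. \tag{15}$$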
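Equation (15) can be checked against NumPy's `Chebyshev` class. That class accepts a `domain` argument, maps it linearly onto the window $[-1, 1]$, and accounts for the map in its `deriv` method. The sketch below compares this with the explicit $c^n$ scaling of Equation (15), which is applied through the `scl` argument of `numpy.polynomial.chebyshev.chebder`. The domain $[0, 4]$ (so $c = 0.5$) and the polynomial degrees tested are arbitrary choices.

```python
import numpy as np
from numpy.polynomial import Chebyshev
from numpy.polynomial import chebyshev as C

x0, xf = 0.0, 4.0                  # example problem domain (assumption)
z0, zf = -1.0, 1.0                 # Chebyshev basis domain
c = (zf - z0) / (xf - x0)          # Equation (14): dz/dx
x = np.linspace(x0, xf, 9)
z = z0 + c * (x - x0)              # linear map from x to z

for k in (8, 12, 20):
    coef = np.zeros(k + 1)
    coef[k] = 1.0                  # coefficients of T_k alone
    for n in range(9):
        # Equation (15): d^n/dx^n T_k(z(x)) = c^n T_k^(n)(z); scl applies c once per derivative
        ours = C.chebval(z, C.chebder(coef, m=n, scl=c))
        # NumPy maps `domain` onto [-1, 1] and includes that map in deriv()
        ref = Chebyshev.basis(k, domain=[x0, xf]).deriv(n)(x)
        assert np.allclose(ours, ref, rtol=1e-10, atol=1e-12)
print("Equation (15) scaling agrees with numpy.polynomial.Chebyshev")
```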

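This defines all mappings from the basis domain into the problem domain. With $g(x)$ expressed in terms of a known basis, we can rewrite the constrained expression given in Equation (4) in the form

$$y(x, \boldsymbol{\xi}) = \mathcal{A}(x, \boldsymbol{\xi}) + \mathcal{B}(x), \tag{16}$$

where $\mathcal{A}(x, \boldsymbol{\xi})$ is a function that is zero where the constraints are defined and $\mathcal{B}(x)$ is a function that equals the constraints where they are defined. In fact, if $g(x)$ is selected such that $g(x) = 0$ (meaning $\boldsymbol{\xi} = \mathbf{0}$), the expression simplifies to an interpolating function, $y(x) = \mathcal{B}(x)$. Furthermore, since the $\boldsymbol{\xi}$ vector appears linearly in the expression of $\mathcal{A}$, Equation (16) can also be written as

$$y(x, \boldsymbol{\xi}) = \mathbf{a}^{\mathrm{T}}(x)\,\boldsymbol{\xi} + b(x), \tag{17}$$

where $\mathcal{A}$ now becomes the vector expression $\mathbf{a}^{\mathrm{T}}(x)\,\boldsymbol{\xi}$. This can be seen by expanding Equation (17):

$$y(x, \boldsymbol{\xi}) = \left[\mathbf{h}(z) - \sum_{i=0}^{3}\phi_{i+1}(x)\,c^{i}\,\mathbf{h}^{(i)}(z_0) - \sum_{i=0}^{3}\phi_{i+5}(x)\,c^{i}\,\mathbf{h}^{(i)}(z_f)\right]^{\mathrm{T}}\boldsymbol{\xi} + \sum_{i=0}^{3}\phi_{i+1}(x)\,y_0^{(i)} + \sum_{i=0}^{3}\phi_{i+5}(x)\,y_f^{(i)}.$$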

The subsequent derivatives follow by simply differentiating the $\mathbf{a}$ and $b$ terms. That is, the form of the subsequent derivatives of the constrained expression remains the same. We can therefore write the constrained expression and its derivatives up to eighth order in the form of Equation (16),

$$y^{(n)}(x, \boldsymbol{\xi}) = \mathbf{a}^{(n)\mathrm{T}}(x)\,\boldsymbol{\xi} + b^{(n)}(x), \qquad n = 0, 1, \ldots, 8,$$

where $\mathbf{a}^{(n)}$ is the $n$th derivative of the $\mathbf{a}$ function. The $\mathbf{a}^{(n)}$ function also includes the derivative of $\mathbf{h}(z)$, which follows Equation (15) such that

$$\frac{\mathrm{d}^n\mathbf{h}(z)}{\mathrm{d}x^n} = c^n\,\frac{\mathrm{d}^n\mathbf{h}(z)}{\mathrm{d}z^n},$$

where $c$ is defined in Equation (14). With this adjustment to the constrained expression, the transformed differential equation defined in Equation (13) reduces to a function of only $x$ and the unknown vector $\boldsymbol{\xi}$,

$$\tilde{F}(x, \boldsymbol{\xi}) = 0, \tag{19}$$

which may be linear or nonlinear in the unknown parameter $\boldsymbol{\xi}$. Finally, to solve this equation numerically, we must discretize the domain into $N$ points. Since the linear basis $\mathbf{h}(z)$ in this paper consists of Chebyshev orthogonal polynomials, the optimal distribution of the $N$ points is provided by the Chebyshev-Gauss-Lobatto collocation points [40,41], defined as

$$z_k = -\cos\left(\frac{k\pi}{N - 1}\right), \qquad k = 0, 1, \ldots, N - 1.$$

The map from $z$ to $x$ has been defined previously. Compared with a uniform point distribution, the collocation point distribution yields a much slower increase of the condition number as the number of basis functions, $m$, increases. In general, we can define the residual of the differential equation in Equation (19) at each discretized point,

$$r_k(\boldsymbol{\xi}) = \tilde{F}(x_k, \boldsymbol{\xi}). \tag{20}$$
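The collocation points and the conditioning claim can be illustrated with a short sketch. It builds $N$ Chebyshev-Gauss-Lobatto points $z_k = -\cos(k\pi/(N-1))$ and maps them to a problem domain. As a rough proxy for the least-squares matrix, it then compares the 2-norm condition number of the square matrix $[T_j(z_k)]$ at the CGL points with the same matrix at equally spaced points. The proxy matrix, the helper names, and the chosen sizes are our assumptions. The paper's actual matrices also contain the constrained-expression terms and their derivatives.

```python
import numpy as np
from numpy.polynomial import chebyshev as C


def cgl_points(N, x0=0.0, xf=1.0):
    """N Chebyshev-Gauss-Lobatto points on [-1, 1] and their images on [x0, xf]."""
    k = np.arange(N)
    z = -np.cos(k * np.pi / (N - 1))
    x = x0 + (z + 1.0) * (xf - x0) / 2.0
    return z, x


def cheb_matrix_cond(z):
    """2-norm condition number of [T_0(z), ..., T_{N-1}(z)] at the N points z."""
    V = C.chebvander(z, len(z) - 1)
    return np.linalg.cond(V)


for N in (10, 20, 30):
    z_cgl, _ = cgl_points(N)
    z_uni = np.linspace(-1.0, 1.0, N)
    print(N, cheb_matrix_cond(z_cgl), cheb_matrix_cond(z_uni))
```

At CGL points this matrix is a column-signed cosine transform matrix, so its condition number stays bounded as $N$ grows. At equally spaced points, the condition number grows rapidly with $N$.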

For a linear differential equation $F$ (and therefore a linear transformed differential equation $\tilde{F}$), the constrained expression and its derivatives appear linearly, so the equation remains linear in the unknown $\boldsymbol{\xi}$. This leads to the form

$$\mathbb{A}\,\boldsymbol{\xi} = \mathbb{B},$$

where the matrix $\mathbb{A}$ is a linear combination of $\mathbf{a}^{\mathrm{T}}(x)$ and its derivatives, evaluated at the discretized points $\mathbf{x} = \{x_0, \ldots, x_k, \ldots, x_f\}^{\mathrm{T}}$. By our definition, the domain is $x \in [x_0, x_f]$, where $x_0$ is the initial value, $x_f$ is the final value, and $k$ is the index used in the definition of the Chebyshev-Gauss-Lobatto collocation points. It follows that $\mathbb{B}$ is a linear combination of $b(x)$, its derivatives, and a potential forcing term $f(x)$, evaluated at the same discrete values of $x$. This system can now be solved with any available least-squares technique. All numerical solutions in this paper use a scaled QR method to perform the least-squares.
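The linear steps can be combined into a compact solver. The sketch below is a hypothetical implementation and not the authors' code. It handles linear equations of the form $\sum_n p_n(x)\,y^{(n)}(x) = f(x)$ with boundary conditions on $y$ through $y'''$ at both ends. It uses $T_8, \ldots, T_{m+7}$ as the basis, places $N$ CGL points, and solves the least-squares problem with a QR decomposition after scaling each column of $\mathbb{A}$ to unit norm. Column scaling is our assumption about what a "scaled QR" involves. The function name, its arguments, and the test problem $y^{(8)} - y = -8e^x$ on $[0, 1]$ (exact solution $y = (1 - x)e^x$) are chosen for illustration.

```python
import numpy as np
from math import factorial
from numpy.polynomial import chebyshev as C


def tfc_linear_bvp8(p, f, bc0, bcf, x0=0.0, xf=1.0, m=30, N=100):
    """Least-squares TFC sketch for sum_n p[n](x) * y^(n)(x) = f(x) on [x0, xf].

    p        : dict {derivative order n: callable p_n(x)} (hypothetical interface)
    bc0, bcf : [y, y', y'', y'''] at x0 and at xf
    Returns y(x, n=0), the n-th derivative of the approximate solution.
    """
    c = 2.0 / (xf - x0)  # dz/dx for the map onto z in [-1, 1]

    def s_der(x, n):
        """n-th derivative of the support functions 1, x, ..., x^7 (rows = points)."""
        x = np.atleast_1d(np.asarray(x, dtype=float))
        out = np.zeros((x.size, 8))
        for k in range(n, 8):
            out[:, k] = factorial(k) / factorial(k - n) * x ** (k - n)
        return out

    def h_der(x, n):
        """n-th x-derivative of T_8(z), ..., T_{m+7}(z) with z = z(x)."""
        z = -1.0 + c * (np.atleast_1d(np.asarray(x, dtype=float)) - x0)
        cols = []
        for k in range(8, 8 + m):
            coef = np.zeros(k + 1)
            coef[k] = 1.0
            cols.append(C.chebval(z, C.chebder(coef, m=n, scl=c)))  # c**n scaling
        return np.column_stack(cols)

    def stack(fn):
        """Apply the 8 constraints: orders 0..3 at x0, then orders 0..3 at xf."""
        return np.vstack([fn(xc, i) for xc in (x0, xf) for i in range(4)])

    M_inv = np.linalg.inv(stack(s_der))  # s(x)^T @ M_inv gives the switching functions
    Hc = stack(h_der)                    # constraint operators applied to the basis
    kappa = np.concatenate([bc0, bcf]).astype(float)

    def a_b(x, n):
        """Rows of a^(n)(x)^T and values of b^(n)(x), as in y^(n) = a^(n)T xi + b^(n)."""
        phi = s_der(x, n) @ M_inv
        return h_der(x, n) - phi @ Hc, phi @ kappa

    k = np.arange(N)
    x = x0 + (1.0 - np.cos(k * np.pi / (N - 1))) * (xf - x0) / 2.0  # CGL points
    A = np.zeros((N, m))
    B = np.asarray(f(x), dtype=float).copy()
    for n, pn in p.items():
        a_n, b_n = a_b(x, n)
        w = np.broadcast_to(pn(x), x.shape)
        A += w[:, None] * a_n
        B -= w * b_n

    scale = np.linalg.norm(A, axis=0)    # unit-norm columns before the QR solve
    Q, R = np.linalg.qr(A / scale)
    xi = np.linalg.solve(R, Q.T @ B) / scale

    def y(x_eval, n=0):
        a_n, b_n = a_b(x_eval, n)
        return a_n @ xi + b_n

    return y


# Illustrative problem: y^(8) - y = -8 e^x on [0, 1], exact solution y = (1 - x) e^x.
e = np.e
y = tfc_linear_bvp8(p={8: lambda x: 1.0, 0: lambda x: -1.0},
                    f=lambda x: -8.0 * np.exp(x),
                    bc0=[1.0, 0.0, -1.0, -2.0],
                    bcf=[0.0, -e, -2.0 * e, -3.0 * e])
xs = np.linspace(0.0, 1.0, 11)
print(np.abs(y(xs) - (1.0 - xs) * np.exp(xs)).max())
```

The returned `y(x, n)` is built from the analytical constrained expression, so any derivative up to the eighth can be evaluated directly, for example `y(xs, 8)`.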

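For a nonlinear differential equation, Equation (20) can be expressed as a loss function at each discretization point,

$$\mathbb{L}_k(\boldsymbol{\xi}) = \tilde{F}(x_k, \boldsymbol{\xi}),$$

and the system can be solved by an iterative least-squares method whose Jacobian is defined as

$$\mathbb{J}(\boldsymbol{\xi}_p) = \left.\frac{\partial \mathbb{L}(\boldsymbol{\xi})}{\partial \boldsymbol{\xi}}\right|_{\boldsymbol{\xi}_p},$$

where $\mathbb{L}$ stacks the $\mathbb{L}_k$ over all discretization points and $\boldsymbol{\xi}_p$ represents the current step's estimate of the $\boldsymbol{\xi}$ parameter. The parameter update is given by

$$\boldsymbol{\xi}_{p+1} = \boldsymbol{\xi}_p + \Delta\boldsymbol{\xi}_p,$$

where $\Delta\boldsymbol{\xi}_p$ can be computed using classic least-squares,

$$\Delta\boldsymbol{\xi}_p = -\big(\mathbb{J}^{\mathrm{T}}\mathbb{J}\big)^{-1}\mathbb{J}^{\mathrm{T}}\,\mathbb{L}(\boldsymbol{\xi}_p),$$

or, as in this paper, through a QR decomposition method. This process is repeated until either the absolute value of the loss function falls below some tolerance $\varepsilon$ or the $L_2$ norm of the loss function starts to increase, as specified by the conditions

$$\max_k \big|\mathbb{L}_k(\boldsymbol{\xi}_p)\big| < \varepsilon \qquad \text{or} \qquad \big\|\mathbb{L}(\boldsymbol{\xi}_{p+1})\big\|_2 > \big\|\mathbb{L}(\boldsymbol{\xi}_p)\big\|_2.$$

In this paper, the tolerance was set to twice the machine epsilon of double-precision arithmetic, $\varepsilon = 2 \times 2.2204 \times 10^{-16} \approx 4.4409 \times 10^{-16}$.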
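The iteration and its two stopping conditions can be sketched generically. The function below assumes the caller supplies the stacked loss $\mathbb{L}(\boldsymbol{\xi})$ and its Jacobian $\mathbb{J}(\boldsymbol{\xi})$; in the TFC setting, these would come from Equation (20) and the constrained expression. Each update is computed from a QR decomposition of $\mathbb{J}$. The loop stops when the largest absolute loss falls below twice machine epsilon, or when the $L_2$ norm of the loss increases, in which case the previous iterate is kept. Keeping the previous iterate, the iteration cap, and the small overdetermined toy system are our assumptions. The toy problem starts from $(1, 1)$ because its Jacobian is rank deficient at the origin.

```python
import numpy as np


def gauss_newton_qr(loss, jac, xi0, max_iter=50):
    """Iterative least squares with the two stopping conditions described in the text."""
    tol = 2.0 * np.finfo(float).eps               # twice double-precision machine epsilon
    xi = np.asarray(xi0, dtype=float)
    L = loss(xi)
    for _ in range(max_iter):
        if np.max(np.abs(L)) < tol:               # stop: every loss entry below tolerance
            break
        Q, R = np.linalg.qr(jac(xi))
        xi_new = xi - np.linalg.solve(R, Q.T @ L) # least-squares step from the QR factors
        L_new = loss(xi_new)
        if np.linalg.norm(L_new) > np.linalg.norm(L):
            break                                 # stop: L2 norm increased; keep previous iterate
        xi, L = xi_new, L_new
    return xi, L


def toy_loss(v):
    """Overdetermined toy system whose exact solution is (sqrt(2), 1/sqrt(2))."""
    return np.array([v[0] ** 2 - 2.0, v[0] * v[1] - 1.0, v[1] - 0.5 * v[0]])


def toy_jac(v):
    """Jacobian of toy_loss with respect to v."""
    return np.array([[2.0 * v[0], 0.0], [v[1], v[0]], [-0.5, 1.0]])


xi, L = gauss_newton_qr(toy_loss, toy_jac, [1.0, 1.0])
print(xi, np.abs(L).max())
```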

5. Accuracy of the Derivatives

The previous section compared the absolute error of TFC at a number of points along the domain with the absolute error of previous methods. This section discusses the accuracy of the derivatives when using TFC. One of the major advantages of TFC compared to other methods is that the estimated solution is analytical. As a result, further manipulation of the estimated solution is easily achieved, such as taking derivatives. As an example, consider problem #5.

Table 7 shows the mean absolute error of $y$ and its derivatives up to order eight. The second column of Table 7 used 10 basis functions to compute the solution and 11 equidistant points to compute the error, while the third column used 30 basis functions to compute the solution and 100 equidistant points to compute the error.

If enough Chebyshev orthogonal polynomials are used in the free function to estimate the solution, the error in subsequent derivatives should increase by an order of magnitude or less.

Table 7 shows that when 10 basis functions are used, the error increases as the order of the derivative increases. In this case, too few Chebyshev orthogonal polynomials were used, as indicated by the large mean error in the eighth derivative. In other words, 10 basis functions are not nearly enough to accurately estimate the eighth derivative of the solution.

When 30 basis functions are used, the mean error increases with the order of the derivative until the eighth derivative is reached. For derivatives one through seven, the mean error grows by approximately an order of magnitude or less relative to the previous derivative. However, the eighth derivative has less error than the seventh, because the eighth derivative appears in the differential equation, and thus in the residual. Hence, the eighth derivative is directly accounted for when computing the solution, whereas derivatives one through seven are not.

In problem #5, the differential equation contains only the function and its eighth derivative. As a different example, consider the problem solved in [17],

subject to

which has the exact solution

. Hereafter, we refer to this problem as problem #7.

Table 8 shows the mean absolute error of $y$ and its derivatives up to order eight for problem #7. The second column of Table 8 used 10 basis functions to compute the solution and 11 points to compute the error, while the third column used 30 basis functions to compute the solution and 100 points to compute the error.

Table 8 shows that when all derivatives are included in the differential equation, the anomalous decrease in mean error as subsequent derivatives are taken disappears (i.e., the mean solution error from derivative seven to derivative eight increases as expected).

6. Conclusions

This paper explores the application of the techniques developed in [28,29,30] to the solution of high-order differential equations, namely eighth-order BVPs. In all the problems presented, which span the publications [3–19], the solution accuracy ranges from

–

. These results are similar to those obtained in earlier studies on first- and second-order linear [29] and nonlinear [30] differential equations. This application to higher-order systems further highlights the power and robustness of this technique.

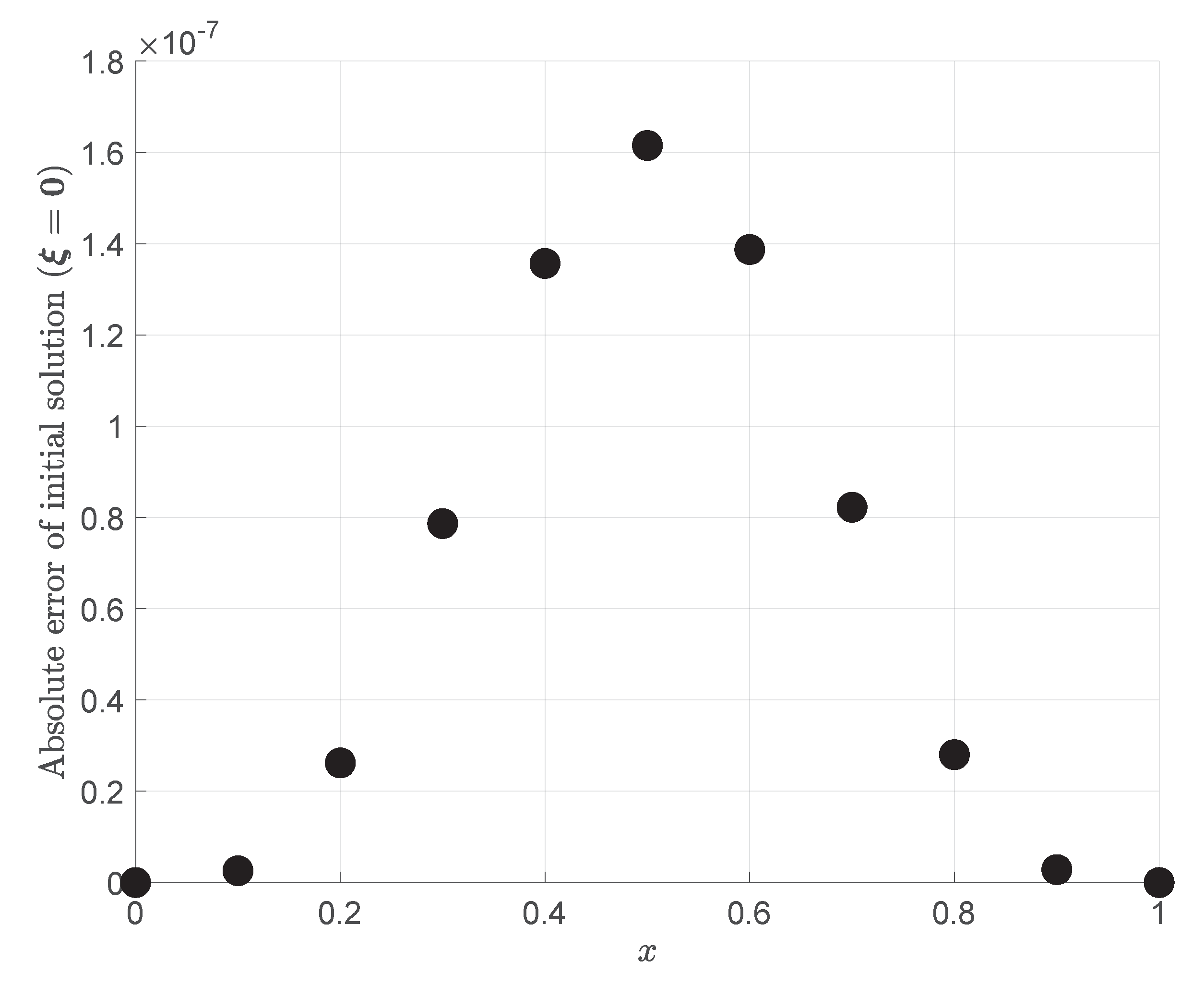

In Section 3, the initialization of the TFC approach for nonlinear differential equations was discussed. In the initialization, the coefficient vector $\boldsymbol{\xi}$ is set to zero, which implies that $g(x) = 0$. It was found that this initial guess already satisfied the differential equation with an accuracy on the order of

. This can be explained by an equation first presented in the seminal paper on TFC [28]. That paper presents an equation (Equation (9) in Ref. [28]) giving the general interpolating expression for a function and its first $n$ derivatives. This equation simplifies to a Taylor series when

. While this is not exactly the case for the boundary-value constraints in this paper, the form of that equation suggests that when $g(x) = 0$, the constrained expression derived here acts as a two-point Taylor series approximation. The relationship between the TFC method and Taylor series has yet to be explored, and extending this Taylor-series-like expansion to $n$ points will be the focus of future work.

Section 5 discussed the accuracy of the derivatives of the estimated solution. The accuracy decreased with each successive derivative, but the highest derivatives lost only a few orders of magnitude, resulting in an overall error on the order of

–

, provided that enough basis functions were used when estimating the solution. In addition, it was shown that the accuracy of a given derivative depends, in part, on whether it explicitly shows up in the differential equation.