Abstract

In this study, we construct a one-parameter optimal derivative-free iterative family for finding multiple roots of nonlinear equations. Many researchers have developed higher-order iterative techniques for multiple roots that require an additional function evaluation or the first- or second-order derivative of the function. However, evaluating derivatives at each iteration is cumbersome. With this motivation, we design a second-order family that uses neither the derivative of the function nor an additional function evaluation. The proposed family is optimal in the sense of the Kung–Traub conjecture, since it attains order two with two function evaluations per iteration. The scheme contains one parameter a, and for a particular value of a, the modified Traub method is a special case of the family. The order of convergence is analyzed using Taylor series expansions. The efficiency of the suggested family is then explored through numerical tests, in which the proposed methods perform better than earlier schemes. Moreover, the basins of attraction of the proposed and earlier schemes are analyzed.

MSC:

65G99; 65H10

1. Introduction

Solving nonlinear equations is one of the challenging tasks in science and engineering. Many problems, such as the design of electric circuits, the parachutist problem, and the ideal gas law [1], are formulated as nonlinear equations. Such equations often cannot be solved by analytical methods, which has led to the development of numerical methods. Many scholars [2,3,4,5,6,7,8,9,10] have constructed higher-order iterative techniques for solving nonlinear equations. Here, we concentrate on finding a multiple root $\alpha$ of a function g, that is, a point such that $g(\alpha) = g'(\alpha) = \cdots = g^{(m-1)}(\alpha) = 0$ and $g^{(m)}(\alpha) \neq 0$, where $\alpha$ is an exact root of g with multiplicity m.

One of the most basic methods for finding a multiple root of a function is the modified Newton method [11], defined as:

$$x_{k+1} = x_k - m\,\frac{g(x_k)}{g'(x_k)}, \qquad k = 0, 1, 2, \ldots \tag{1}$$

where m is the multiplicity of the root and $g'(x_k)$ is the first derivative of g. The modified Newton method converges quadratically when the multiplicity m is known. Another important iterative technique for finding multiple roots of nonlinear equations is the Schröder method [12], defined as:

$$x_{k+1} = x_k - \frac{g(x_k)\,g'(x_k)}{g'(x_k)^2 - g(x_k)\,g''(x_k)}. \tag{2}$$

For this scheme, the multiplicity of the root need not be known in advance, but the first- and second-order derivatives of the function must be evaluated at each step. Many other researchers [13,14,15,16,17,18,19,20,21,22] have developed higher-order iterative schemes involving the first-order derivative to find multiple roots of a scalar equation. Evaluating derivatives at each step is time-consuming and can be difficult for complex problems. Therefore, some authors [23,24,25,26] have introduced derivative-free iterative techniques for multiple roots, building on the second-order modified Traub–Steffensen method [12], which is defined as:

$$x_{k+1} = x_k - m\,\frac{g(x_k)}{g[w_k, x_k]}, \tag{3}$$

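where $w_k = x_k + \beta g(x_k)$, $\beta \in \mathbb{R} \setminus \{0\}$, and $g[w_k, x_k] = \dfrac{g(w_k) - g(x_k)}{w_k - x_k}$ is a first-order divided difference. The modified Traub–Steffensen method thus maintains second-order convergence without evaluating any derivative.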
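To make the three classical schemes concrete, here is a minimal sketch of one step of each: the modified Newton method $x_{k+1} = x_k - m\,g(x_k)/g'(x_k)$, the Schröder method (which needs $g''$ but not m), and the modified Traub–Steffensen method with $w_k = x_k + \beta g(x_k)$. The test function $g(x) = (x-1)^2(x+2)$ with a double root at 1, the value $\beta = 0.01$, the tolerance, and the use of Python's `decimal` module with 60 digits are our own assumptions for illustration, not the paper's settings.

```python
from decimal import Decimal, getcontext
from typing import Callable, Tuple

getcontext().prec = 60  # extended precision: double precision stalls near multiple roots

Func = Callable[[Decimal], Decimal]


def modified_newton_step(g: Func, dg: Func, x: Decimal, m: int) -> Decimal:
    """x_{k+1} = x_k - m g(x_k) / g'(x_k); needs the multiplicity m."""
    gx = g(x)
    return x if gx == 0 else x - m * gx / dg(x)


def schroder_step(g: Func, dg: Func, d2g: Func, x: Decimal) -> Decimal:
    """x_{k+1} = x_k - g g' / (g'^2 - g g''); needs g'' but not m."""
    gx = g(x)
    if gx == 0:
        return x
    d1, d2 = dg(x), d2g(x)
    return x - gx * d1 / (d1 * d1 - gx * d2)


def traub_steffensen_step(g: Func, x: Decimal, m: int,
                          beta: Decimal = Decimal("0.01")) -> Decimal:
    """Derivative-free step with w_k = x_k + beta g(x_k) (beta is an assumed value)."""
    gx = g(x)
    w = x + beta * gx
    if gx == 0 or w == x:  # divided difference no longer resolvable at this precision
        return x
    divided_difference = (g(w) - gx) / (w - x)  # g[w_k, x_k]
    return x - m * gx / divided_difference


def iterate(step: Callable[[Decimal], Decimal], x0: Decimal,
            tol: Decimal = Decimal("1e-25"), max_iter: int = 50) -> Tuple[Decimal, int]:
    """Apply `step` until |x_{k+1} - x_k| < tol; return (last iterate, iterations used)."""
    x = x0
    for k in range(1, max_iter + 1):
        x_new = step(x)
        if abs(x_new - x) < tol:
            return x_new, k
        x = x_new
    return x, max_iter


# Hypothetical test function in factored form (avoids cancellation near the root):
# g(x) = (x - 1)^2 (x + 2), double root at x = 1.
def g(x: Decimal) -> Decimal:
    return (x - 1) ** 2 * (x + 2)


def dg(x: Decimal) -> Decimal:
    return 3 * (x - 1) * (x + 1)


def d2g(x: Decimal) -> Decimal:
    return 6 * x


x0 = Decimal("1.5")
results = {
    "modified Newton": iterate(lambda x: modified_newton_step(g, dg, x, 2), x0),
    "Schroder": iterate(lambda x: schroder_step(g, dg, d2g, x), x0),
    "modified Traub-Steffensen": iterate(lambda x: traub_steffensen_step(g, x, 2), x0),
}
for name, (root, k) in results.items():
    print(f"{name}: x = {root:.30f} after {k} iterations")
```

All three reach the double root at 1. The derivative-free step stops once $w_k$ and $x_k$ coincide at the working precision, which illustrates why such methods are usually tested in multiple-precision arithmetic.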

Very recently, Kumar et al. [26] developed the following optimal second-order scheme:

where the scheme involves a weight function whose argument is defined in [26]. On the other hand, constructing iterative methods with fewer function evaluations plays a key role. In this respect, a method is called optimal if it attains the bound of the Kung–Traub conjecture [27], namely convergence order $2^{n-1}$ for a method without memory that uses n function evaluations per iteration. Motivated by this, we suggest a second-order optimal derivative-free iterative family. Its salient features are a simple structure, no additional function evaluation, a single free parameter, and no computation of derivatives. The rest of the paper is organized as follows. Section 2 presents the construction and convergence analysis of the proposed family. The numerical performance of the suggested family is shown in Section 3. The basins of attraction of the suggested methods and of methods available in the literature are compared in Section 4. Finally, conclusions are drawn in Section 5.
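The optimality notion used here can be made concrete by counting evaluations per iteration. The sketch below applies the Kung–Traub bound $2^{n-1}$ and Ostrowski's efficiency index $p^{1/n}$ to the modified Newton, Schröder and modified Traub–Steffensen methods. Counting each derivative evaluation like a function evaluation is the usual convention, and the evaluation counts are read off the formulas of these methods.

```python
def kung_traub_optimal_order(n_evals: int) -> int:
    """Maximal order 2**(n-1) conjectured for a method without memory
    that uses n_evals function (or derivative) evaluations per iteration."""
    if n_evals < 1:
        raise ValueError("need at least one evaluation per iteration")
    return 2 ** (n_evals - 1)


def efficiency_index(order: float, n_evals: int) -> float:
    """Ostrowski's efficiency index p**(1/n)."""
    return order ** (1.0 / n_evals)


# (name, convergence order, evaluations per iteration)
METHODS = [
    ("modified Newton", 2, 2),            # g(x_k), g'(x_k)
    ("Schroder", 2, 3),                   # g(x_k), g'(x_k), g''(x_k)
    ("modified Traub-Steffensen", 2, 2),  # g(x_k), g(w_k)
]

for name, p, n in METHODS:
    optimal = p == kung_traub_optimal_order(n)
    print(f"{name}: order {p}, {n} evaluations, "
          f"EI = {efficiency_index(p, n):.4f}, optimal = {optimal}")
```

Under this count, the Schröder method is not optimal (three evaluations would allow order four), while the modified Newton and modified Traub–Steffensen methods both reach the bound, the latter without derivatives.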

2. Construction of the Second-Order Scheme

Here, we construct an optimal second-order family of iterative methods for multiple zeros, defined by:

For a particular value of the parameter a, the modified Traub method is a special case of scheme (5).

Convergence Analysis

The following Lemmas 1 and 2 and Theorem 1 show that the constructed family (5) attains the maximal second order of convergence without any additional evaluation of the function or its derivative.

Lemma 1.

Suppose that $\alpha$ is a multiple zero of the function g and that g is analytic in a neighborhood of $\alpha$. Then, the proposed scheme (5) has second-order convergence with the following error equation:

where .

Proof.

Let $e_k = x_k - \alpha$ be the error at the kth iteration. We expand g in a Taylor series about $\alpha$, using $g(\alpha) = g'(\alpha) = \cdots = g^{(m-1)}(\alpha) = 0$ and $g^{(m)}(\alpha) \neq 0$, and obtain:

Set , and take the Taylor series of a function g about the point . We have:

Now,

Substituting Equations (6)–(8) into scheme (5), we obtain the following error equation:

Hence, the scheme (5) has second-order convergence for . □
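The structure of such second-order error equations can be illustrated on the modified Traub–Steffensen method, $x_{k+1} = x_k - m\,g(x_k)/g[w_k, x_k]$ with $w_k = x_k + \beta g(x_k)$. The following is our own sketch for that special case only, not a reproduction of the expansions for the general family (5); the constants $K$ and $C_j$ are notation introduced here.

$$g(x_k) = K e_k^{m}\left(1 + C_1 e_k + C_2 e_k^{2} + \cdots\right), \qquad K = \frac{g^{(m)}(\alpha)}{m!}, \qquad C_j = \frac{m!}{(m+j)!}\,\frac{g^{(m+j)}(\alpha)}{g^{(m)}(\alpha)}.$$

Because $w_k - x_k = \beta g(x_k) = \beta K e_k^{m} + O(e_k^{m+1})$, the divided difference expands as

$$g[w_k, x_k] = K\left[m\,e_k^{m-1} + (m+1)\,C_1 e_k^{m} + \binom{m}{2}\beta K\,e_k^{2m-2} + O\!\left(e_k^{m+1}\right)\right],$$

and therefore

$$e_{k+1} = e_k - m\,\frac{g(x_k)}{g[w_k, x_k]} =
\begin{cases}
\dfrac{C_1 + \beta K}{2}\,e_k^{2} + O\!\left(e_k^{3}\right), & m = 2,\\[2mm]
\dfrac{C_1}{m}\,e_k^{2} + O\!\left(e_k^{3}\right), & m \ge 3.
\end{cases}$$

For $m \ge 3$, the parameter $\beta$ first enters the error at order $e_k^{m}$, so the leading constant coincides with that of the modified Newton method. For $m = 2$, $\beta$ already affects the $e_k^{2}$ term. This is one reason why small multiplicities are often treated separately in such analyses.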

Lemma 2.

Under the same hypotheses as in Lemma 1, scheme (5) has second-order convergence for . It satisfies the following error equation:

Proof.

Let $e_k = x_k - \alpha$ be the error at the kth iteration. We expand g in a Taylor series about $\alpha$, using $g(\alpha) = g'(\alpha) = \cdots = g^{(m-1)}(\alpha) = 0$ and $g^{(m)}(\alpha) \neq 0$, and obtain:

where .

Set , and take the Taylor series of a function g about the point . We have:

Now,

Substituting Equations (10)–(12) into scheme (5), we obtain the following error equation:

Hence, the scheme (5) has second-order convergence for . □

Theorem 1.

Under the same hypotheses as in Lemma 1, scheme (5) has second-order convergence for . It satisfies the following error equation:

Proof.

Let $e_k = x_k - \alpha$ be the error at the kth iteration. We expand g in a Taylor series about $\alpha$, using $g(\alpha) = g'(\alpha) = \cdots = g^{(m-1)}(\alpha) = 0$ and $g^{(m)}(\alpha) \neq 0$, and obtain:

where .

Set , and take the Taylor series of a function g about the point . We have:

Now,

Substituting the preceding expansions into scheme (5), we obtain the following error equation:

Hence, the scheme (5) has second-order convergence with multiplicity . □
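One way to check numerically that the modified Traub–Steffensen special case is second order for several multiplicities is to track $e_{k+1}/e_k^2$. A short Taylor expansion of the divided difference suggests that this ratio approaches $(C_1 + \beta K)/2$ for $m = 2$ and $C_1/m$ for $m \ge 3$, where $K = g^{(m)}(\alpha)/m!$ and $C_1 = g^{(m+1)}(\alpha)/\big((m+1)\,g^{(m)}(\alpha)\big)$. The sketch below assumes a hypothetical test function $g(x) = (x-1)^m (x+2)$ (so $\alpha = 1$, $K = 3$, $C_1 = 1/3$), $\beta = 0.01$, and 400-digit `decimal` arithmetic. It concerns the special case only, not the general family (5).

```python
from decimal import Decimal, getcontext
from typing import List

getcontext().prec = 400  # enough digits to resolve w_k - x_k = beta g(x_k) near the root

BETA = Decimal("0.01")  # assumed value of beta


def g(x: Decimal, m: int) -> Decimal:
    # hypothetical test function with the root alpha = 1 of multiplicity m
    return (x - 1) ** m * (x + 2)


def traub_steffensen_step(x: Decimal, m: int) -> Decimal:
    gx = g(x, m)
    w = x + BETA * gx
    dd = (g(w, m) - gx) / (w - x)  # first-order divided difference g[w_k, x_k]
    return x - m * gx / dd


def error_ratios(m: int, x0: str = "1.3", steps: int = 6) -> List[Decimal]:
    """Return e_{k+1} / e_k**2 for k = 0, ..., steps - 1, with alpha = 1."""
    x = Decimal(x0)
    ratios = []
    for _ in range(steps):
        x_next = traub_steffensen_step(x, m)
        ratios.append((x_next - 1) / (x - 1) ** 2)
        x = x_next
    return ratios


# Predicted asymptotic constants for this g (K = 3, C_1 = 1/3).
C1, K = Decimal(1) / 3, Decimal(3)
PREDICTED = {2: (C1 + BETA * K) / 2, 3: C1 / 3, 4: C1 / 4}

for m, c in PREDICTED.items():
    print(f"m = {m}: last ratio {float(error_ratios(m)[-1]):.6f}, predicted {float(c):.6f}")
```

A ratio that settles to a finite nonzero constant indicates order exactly two. Only for $m = 2$ does the observed constant depend on $\beta$.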

3. Numerical Illustration

In this section, the proposed derivative-free schemes (5) are tested on several numerical problems for different values of the parameter a. We take two particular values of a, and the resulting proposed methods are denoted accordingly in the tables. The results are compared with those of existing methods, namely the modified Traub method [12] and the Kumar methods [26], given below.

The modified Traub method is given by:

The Kumar methods:

The weight functions that define the Kumar methods are listed in Table 1.

Table 1.

The Kumar methods.

In all numerical problems, the free parameter is fixed to a single value. The computations were performed in Mathematica 10 using multiple-precision arithmetic with 1000 digits of mantissa and a fixed stopping criterion. To assess the performance of the proposed methods, Table 2, Table 3, Table 4, Table 5, Table 6 and Table 7 report the error between two consecutive iterations, $|x_{k+1} - x_k|$, the functional error $|g(x_k)|$, and the approximate computational order of convergence (ACOC), denoted by $\rho$. The ACOC is evaluated with the following formula:

$$\rho \approx \frac{\ln\left(\left|x_{k+1} - x_k\right| / \left|x_k - x_{k-1}\right|\right)}{\ln\left(\left|x_k - x_{k-1}\right| / \left|x_{k-1} - x_{k-2}\right|\right)}.$$
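The ACOC uses only the last four iterates, through the consecutive differences $|x_{k+1} - x_k|$, $|x_k - x_{k-1}|$ and $|x_{k-1} - x_{k-2}|$. The following is a minimal double-precision sketch of this computation, not the Mathematica code behind the tables. It is applied to iterates of the modified Newton method with $m = 2$ on a hypothetical function $g(x) = (x-1)^2(x+2)$.

```python
import math
from typing import Sequence


def acoc(xs: Sequence[float]) -> float:
    """ACOC from the last four iterates x_{k-2}, x_{k-1}, x_k, x_{k+1}."""
    if len(xs) < 4:
        raise ValueError("ACOC needs at least four iterates")
    a, b, c, d = xs[-4:]
    return math.log(abs(d - c) / abs(c - b)) / math.log(abs(c - b) / abs(b - a))


# Hypothetical test function: g(x) = (x - 1)^2 (x + 2), double root at x = 1.
def g(x: float) -> float:
    return (x - 1.0) ** 2 * (x + 2.0)


def dg(x: float) -> float:
    return 3.0 * (x - 1.0) * (x + 1.0)


xs = [1.5]
for _ in range(4):
    x = xs[-1]
    xs.append(x - 2 * g(x) / dg(x))  # modified Newton step with m = 2

rho = acoc(xs)
print(f"ACOC = {rho:.4f}")
```

The result is close to 2. In double precision, iterates near a multiple root quickly reach the rounding floor, so longer sequences of ACOC values require the kind of high-precision arithmetic used for the tables.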

Table 2.

Numerical results of Example 1.

Table 3.

Numerical results of Example 2.

Table 4.

Numerical results of Example 3.

Table 5.

Numerical results of Example 4.

Table 6.

Numerical results of Example 5.

Table 7.

Numerical results of Example 6.

Notice that the meaning of is in all the tables.

Example 1.

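Consider the van der Waals equation of state for a real gas [1]:

$$\left(P + \frac{a n^2}{V^2}\right)(V - n b) = n R T,$$

where P is the pressure, V the volume, n the number of moles, R the universal gas constant, T the temperature, and a and b are constants of the particular gas (this a is unrelated to the parameter a of the family (5)).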

This equation describes the behavior of a real gas through parameters that characterize a particular gas. Fixing these parameters for a specific gas, we obtain a nonlinear equation in the volume V of the gas, denoted by x in the following equation:
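For reference, the van der Waals equation $(P + a n^2/V^2)(V - nb) = nRT$ can be multiplied by $V^2$ (with $V \neq 0$) to clear the denominator. This shows that, once numerical values are chosen, the problem is to find a root of a cubic polynomial in V:

$$P V^{3} - \left(n b P + n R T\right) V^{2} + a n^{2} V - a b n^{3} = 0.$$

Here a and b are the van der Waals constants of the gas, not the parameter a of the family (5).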

The desired root is of multiplicity . Table 2 depicts the performance of different schemes with initial guess .

Example 2.

Here, we consider the following nonlinear function of multiplicity :

This equation is solved with the proposed methods and the methods introduced in [26], starting from the initial guess . The proposed methods converge to the desired root , and the computational results are shown in Table 3.

Example 3.

To find the eigenvalues of a large matrix, for instance one of order greater than four, we need to solve its characteristic equation. Determining the roots of such a high-degree characteristic equation with linear algebra approaches is difficult, so numerical techniques are a natural choice. Consider the following square matrix of order nine:

whose characteristic equation yields the following nonlinear equation:

The root of this equation is with multiplicity . Table 4 depicts the better performance of the proposed scheme in comparison to existing techniques by taking initial guess .

Example 4.

Consider another standard nonlinear equation as follows:

which has a root of multiplicity four. The results obtained with the initial guess are shown in Table 5.

Example 5.

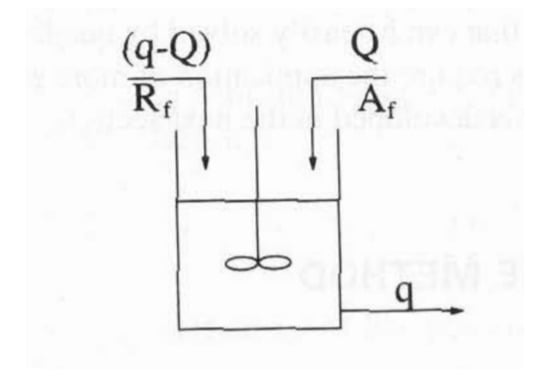

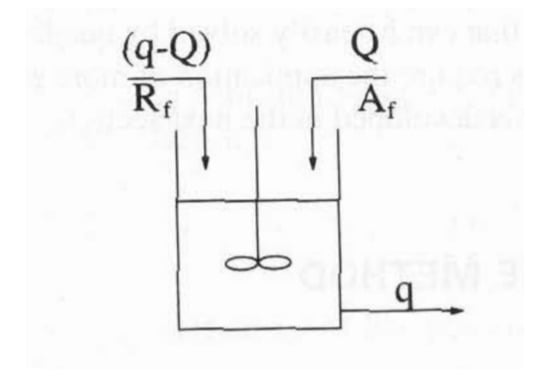

Next, we consider the continuous stirred tank reactor (CSTR) problem [28] shown in Figure 1.

Figure 1.

Continuous stirred tank reactor.

Here, components A and R are fed to the reactor at rates of Q and , respectively. The reactions taking place in the reactor are:

Douglas [29] modeled this system by the following expression:

where the remaining parameter is the gain of the proportional controller. The stability of this control system depends on the value of the gain. For a specific choice of the gain, the poles of the open-loop transfer function are the solutions of the following univariate equation:

This function has three distinct roots, one of which is a multiple root. The computational results are given in Table 6. The suggested methods yield smaller residuals and functional errors than the methods of Traub and Kumar while using a similar number of iterations.

Example 6.

Finally, we consider the multipactor effect, in which the trajectory of an electron in the air gap between two parallel plates [30] is described by:

where the parameters denote, respectively, the charge of the electron at rest, the mass of the electron at rest, the position and velocity of the electron at the initial time, and the RF electric field between the plates. Choosing particular values of these parameters, we obtain the following equation:

This problem has root with multiplicity . We tested the problem at initial guess , and the results are shown in Table 7.

4. Basin of Attraction

In this section, we explore the convergence behavior of the proposed family and of earlier techniques for finding multiple roots of nonlinear equations in the complex plane by means of basins of attraction. Basins of attraction are a useful tool for visualizing the stability of iterative methods in the complex plane: by testing a wide range of initial guesses, they give a better understanding of how the methods behave. Many researchers [31,32,33,34,35,36] have used basins of attraction to assess the effectiveness of iterative techniques.

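For a rational function $R: \hat{\mathbb{C}} \to \hat{\mathbb{C}}$, where $\hat{\mathbb{C}}$ is the Riemann sphere, the orbit of a point $z_0 \in \hat{\mathbb{C}}$ is defined by:

$$\left\{z_0,\ R(z_0),\ R^2(z_0),\ \ldots,\ R^n(z_0),\ \ldots\right\}.$$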

A point $z_F \in \hat{\mathbb{C}}$ is called a fixed point of R if it satisfies $R(z_F) = z_F$. A point $z_P$ is called a periodic point of period $p > 1$ if $R^p(z_P) = z_P$ but $R^k(z_P) \neq z_P$ for each $k < p$. Moreover, a point $z$ is called pre-periodic if it is not periodic but there exists $k > 0$ such that $R^k(z)$ is periodic.

There are different types of fixed points depending on their associated multiplier $|R'(z_F)|$. Taking the multiplier into account, a fixed point $z_F$ is called:

- a superattractor if $|R'(z_F)| = 0$,

- an attractor if $|R'(z_F)| < 1$,

- a repulsor if $|R'(z_F)| > 1$,

- and parabolic if $|R'(z_F)| = 1$.
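The multiplier test can be written as a small classifier. The sketch below uses a hypothetical numerical tolerance for the comparisons with 0 and 1. As examples, it uses two maps whose derivatives are easy to compute by hand: Newton's map for $z^2 - 1$, and plain (unmodified) Newton's map for the double root of $(z-1)^2$.

```python
def classify_fixed_point(multiplier: complex, tol: float = 1e-12) -> str:
    """Classify a fixed point z_F of a rational map R from its multiplier R'(z_F)."""
    r = abs(multiplier)
    if r <= tol:
        return "superattractor"
    if abs(r - 1.0) <= tol:
        return "parabolic"
    return "attractor" if r < 1.0 else "repulsor"


def newton_simple_derivative(z: complex) -> complex:
    # Newton's map for p(z) = z^2 - 1 is N(z) = (z^2 + 1) / (2z), so N'(z) = 1/2 - 1/(2 z^2).
    return 0.5 - 0.5 / z ** 2


def newton_double_derivative(z: complex) -> complex:
    # Plain Newton for p(z) = (z - 1)^2 is N(z) = (z + 1) / 2, so N'(z) = 1/2.
    return 0.5


print(classify_fixed_point(newton_simple_derivative(1.0)))  # simple root z = 1
print(classify_fixed_point(newton_double_derivative(1.0)))  # double root z = 1
```

The second case shows why the multiplicity matters. Plain Newton's method only attracts the double root linearly, whereas the modified Newton step with $m = 2$ maps every point of $(z-1)^2$ directly to 1, so its multiplier at the root is 0.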

Fixed points that do not correspond to roots of the polynomial are called strange fixed points. A critical point $z_C$ is a point satisfying $R'(z_C) = 0$.

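The basin of attraction of an attractor $\bar{z}$ is defined as:

$$\mathcal{A}(\bar{z}) = \left\{ z_0 \in \hat{\mathbb{C}} : R^n(z_0) \to \bar{z},\ n \to \infty \right\}.$$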

The Fatou set of the rational function R, $\mathcal{F}(R)$, is the set of points whose orbits tend to an attractor (fixed point, periodic orbit, or infinity). Its complement in $\hat{\mathbb{C}}$ is the Julia set, $\mathcal{J}(R)$. Hence, the basin of attraction of any attracting fixed point belongs to the Fatou set, and the boundaries of these basins belong to the Julia set.

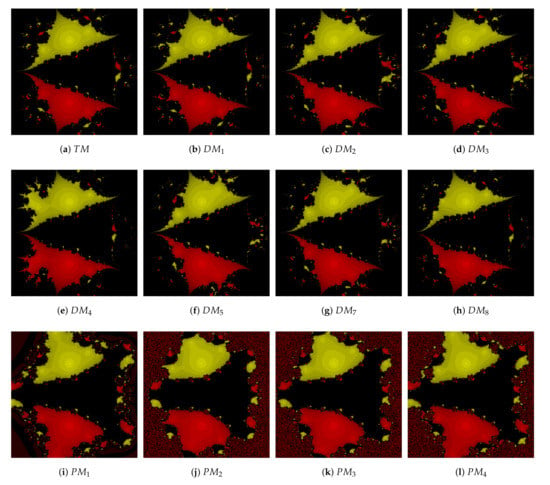

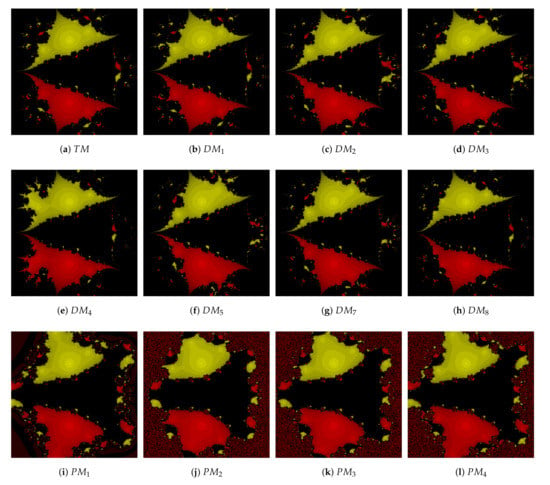

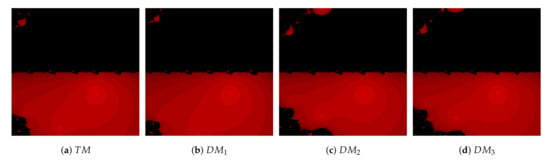

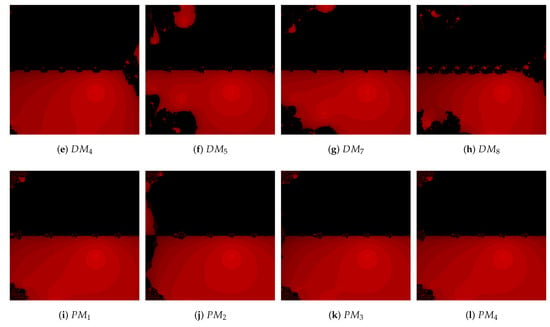

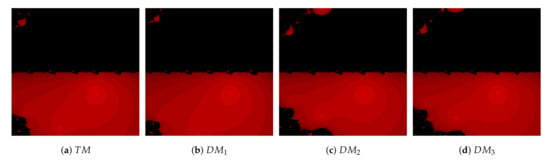

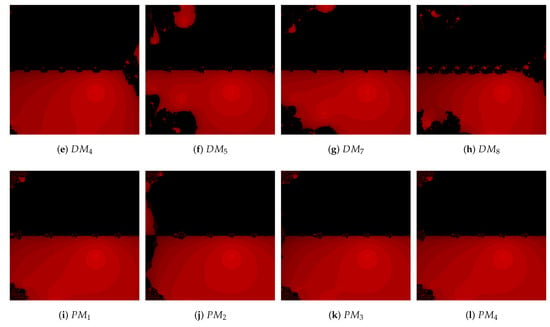

To this end, we apply the methods to nonlinear equations on a region of the complex plane discretized by mesh points. Each mesh point is used as an initial guess to analyze the convergence behavior of the suggested methods and of the comparison methods. Different colors (red, green, yellow, ...) represent the roots of the equation. If a scheme started at a mesh point converges to a root within the prescribed tolerance in fewer than 100 iterations, the point is painted in that root's color. Otherwise, it is painted black, meaning that the iteration does not converge to a root from that point. The following problems with multiple roots are studied.
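The following is a minimal sketch of the mesh procedure just described. It assumes the modified Traub–Steffensen step as the iteration, and a step of the proposed family (5) would replace `traub_steffensen_step` in the same way. The polynomial $p(z) = (z^2 - 1)^2$, the square $[-2, 2]^2$, the 41 × 41 mesh, $\beta = 0.01$ and the tolerance $10^{-6}$ are hypothetical choices, not the settings behind Figures 2–5. Only the 100-iteration cap follows the text.

```python
from typing import List, Optional

# Hypothetical test problem: p(z) = (z^2 - 1)^2, roots +1 and -1, each of multiplicity 2.
ROOTS = (1 + 0j, -1 + 0j)
M = 2
BETA = 0.01  # assumed parameter of the modified Traub-Steffensen step


def p(z: complex) -> complex:
    q = z * z - 1
    return q * q  # plain multiplication: complex ** raises OverflowError on overflow


def traub_steffensen_step(z: complex) -> Optional[complex]:
    """One modified Traub-Steffensen step; None if the divided difference breaks down."""
    pz = p(z)
    w = z + BETA * pz
    if w == z:
        return None
    dd = (p(w) - pz) / (w - z)  # first-order divided difference p[w, z]
    if dd == 0:
        return None
    return z - M * pz / dd


def basin_label(z0: complex, tol: float = 1e-6, max_iter: int = 100) -> int:
    """Index of the root reached from z0 within max_iter steps, or -1 (painted black)."""
    z = z0
    for _ in range(max_iter):
        for i, root in enumerate(ROOTS):
            if abs(z - root) < tol:
                return i
        z_next = traub_steffensen_step(z)
        if z_next is None or abs(z_next) > 1e8:  # treat breakdown or blow-up as divergence
            return -1
        z = z_next
    return -1


def basin_grid(n: int = 41, half_width: float = 2.0) -> List[List[int]]:
    """Labels for an n x n mesh on the square [-half_width, half_width]^2 (top row first)."""
    h = 2 * half_width / (n - 1)
    return [[basin_label(complex(-half_width + j * h, half_width - i * h))
             for j in range(n)]
            for i in range(n)]


grid = basin_grid()
converged = sum(label >= 0 for row in grid for label in row)
print(f"{converged} of {len(grid) ** 2} mesh points converge to a root")
```

Each label can then be mapped to a color, for example red and green for the two roots and black for -1, with any plotting library.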

Problem 1.

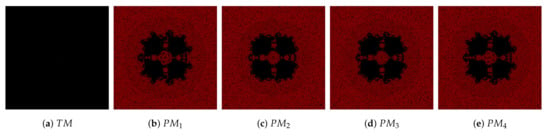

Consider the polynomial equation . The roots of this equation are with multiplicity . Figure 2 shows that each suggested method has a larger convergence region than the corresponding comparison method.

Figure 2.

Comparisons of the convergence plane at for Problem 1.

Problem 2.

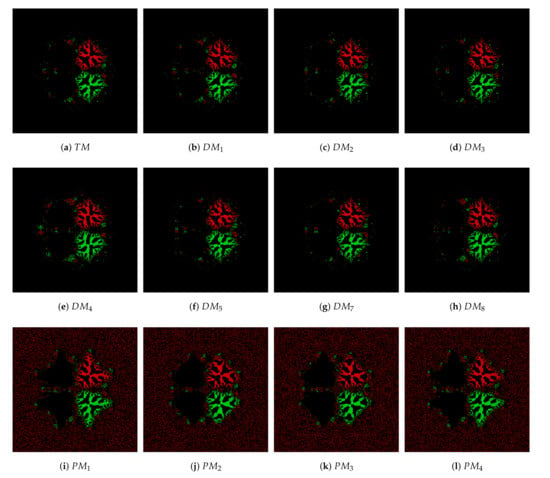

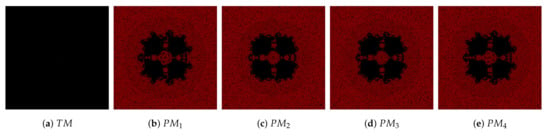

Here, we consider a non-polynomial function whose roots are . The basins of Problem 2 are plotted for two values of the multiplicity in Figure 3 and Figure 4, respectively. For the first multiplicity, the suggested methods converge to the root shown in red and have a smaller divergence region, shown in black, whereas one of the comparison methods diverges on the whole region in Figure 3. The remaining comparison methods likewise fail to converge at any mesh point, so their plots are not shown here. Moreover, Figure 4 reveals that for the second multiplicity, the proposed methods have more convergent points than the earlier methods.

Figure 3.

Comparisons of the convergence plane at for Problem 2 with .

Figure 4.

Comparisons of the convergence plane at for Problem 2 with .

Problem 3.

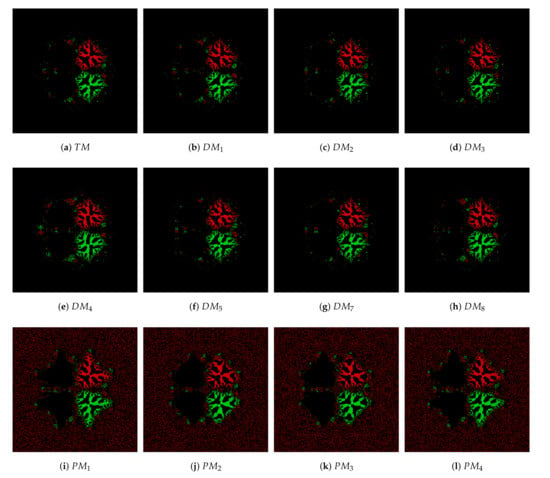

Lastly, we examine with roots , and multiplicity . In Figure 5, the basins of attraction of the proposed schemes again show better convergence than those of the earlier methods.

Figure 5.

Comparisons of the convergence plane at for Problem 3.

5. Conclusions

A new second-order optimal derivative-free family for finding multiple roots of nonlinear equations has been proposed. Different values of the parameter a generate new optimal methods, and the well-known modified Traub method is a special case for a particular value of a. The order of convergence has been analyzed using Taylor series expansions. The efficiency of the proposed methods was verified on several numerical examples, and the results were compared with those of the modified Traub method and other existing methods. The developed methods gave better results than the earlier schemes. The basins of attraction of the proposed schemes also indicate better stability.

Author Contributions

M.K., R.B., and S.B.: conceptualization, methodology, validation, writing—original draft preparation, writing—review & editing. A.S.A. and M.S.: review and editing. All authors read and agreed to the published version of the manuscript.

Funding

The Deanship of Scientific Research (DSR), King Abdulaziz University, Jeddah, under Grant No. (FP-29-42).

Acknowledgments

This work was funded by the Deanship of Scientific Research (DSR), King Abdulaziz University, Jeddah, under grant No. (FP-29-42). The authors, therefore, acknowledge with thanks DSR technical and financial support.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Chapra, S.C.; Canale, R.P. Numerical Methods for Engineers, 7th ed.; McGraw-Hill: New York, NY, USA, 2015.

- Bi, W.; Ren, H.; Wu, Q. Three-step iterative methods with eighth-order convergence for solving nonlinear equations. J. Comput. Appl. Math. 2009, 225, 105–112.

- Bi, W.; Wu, Q.; Ren, H. A new family of eighth-order iterative methods for solving nonlinear equations. Appl. Math. Comput. 2009, 214, 236–245.

- Cordero, A.; Hueso, J.L.; Martínez, E.; Torregrosa, J.R. New modifications of Potra-Pták’s method with optimal fourth and eighth order of convergence. J. Comput. Appl. Math. 2010, 234, 2969–2976.

- Behl, R.; Salimi, M.; Ferrara, M.; Sharifi, S.; Samaher, K.A. Some real life applications of a newly constructed derivative free iterative scheme. Symmetry 2019, 11, 239.

- Salimi, M.; Lotfi, T.; Sharifi, S.; Siegmund, S. Optimal Newton-Secant like methods without memory for solving nonlinear equations with its dynamics. Int. J. Comput. Math. 2017, 94, 1759–1777.

- Salimi, M.; Nik Long, N.M.A.; Sharifi, S.; Pansera, B.A. A multi-point iterative method for solving nonlinear equations with optimal order of convergence. Jpn. J. Ind. Appl. Math. 2018, 35, 497–509.

- Matthies, G.; Salimi, M.; Sharifi, S.; Varona, J.L. An optimal eighth-order iterative method with its dynamics. Jpn. J. Ind. Appl. Math. 2016, 33, 751–766.

- Sharifi, S.; Ferrara, M.; Salimi, M.; Siegmund, S. New modification of Maheshwari method with optimal eighth order of convergence for solving nonlinear equations. Open Math. 2016, 14, 443–451.

- Lotfi, T.; Sharifi, S.; Salimi, M.; Siegmund, S. A new class of three point methods with optimal convergence order eight and its dynamics. Numer. Algor. 2016, 68, 261–288.

- Schröder, E. Über unendlich viele Algorithmen zur Auflösung der Gleichungen. Math. Ann. 1870, 2, 317–365.

- Traub, J.F. Iterative Methods for the Solution of Equations; Prentice-Hall: Englewood Cliffs, NJ, USA, 1964.

- Li, S.; Liao, X.; Cheng, L. A new fourth-order iterative method for finding multiple roots of nonlinear equations. Appl. Math. Comput. 2009, 215, 1288–1292.

- Li, S.G.; Cheng, L.Z.; Neta, B. Some fourth-order nonlinear solvers with closed formulae for multiple roots. Comput. Math. Appl. 2010, 59, 126–135.

- Sharma, J.R.; Sharma, R. Modified Jarratt method for computing multiple roots. Appl. Math. Comput. 2010, 217, 878–881.

- Zhou, X.; Chen, X.; Song, Y. Constructing higher order methods for obtaining the multiple roots of nonlinear equations. J. Comput. Appl. Math. 2011, 235, 4199–4206.

- Sharifi, M.; Babajee, D.K.R.; Soleymani, F. Finding the solution of nonlinear equations by a class of optimal methods. Comput. Math. Appl. 2012, 63, 764–774.

- Soleymani, F.; Babajee, D.K.R.; Lotfi, T. On a numerical technique for finding multiple zeros and its dynamics. J. Egypt. Math. Soc. 2013, 21, 346–353.

- Geum, Y.H.; Kim, Y.I.; Neta, B. A class of two-point sixth-order multiple-zero finders of modified double-Newton type and their dynamics. Appl. Math. Comput. 2015, 270, 387–400.

- Geum, Y.H.; Kim, Y.I.; Neta, B. Constructing a family of optimal eighth-order modified Newton-type multiple-zero finders along with the dynamics behind their purely imaginary extraneous fixed points. J. Comput. Appl. Math. 2018, 333, 131–156.

- Kansal, M.; Kanwar, V.; Bhatia, S. On some optimal multiple root-finding methods and their dynamics. Appl. Appl. Math. 2015, 10, 349–367.

- Behl, R.; Alsolami, A.J.; Pansera, B.A.; Al-Hamdan, W.M.; Salimi, M.; Ferrara, M. A new optimal family of Schröder’s method for multiple zeros. Mathematics 2019, 7, 1076.

- Hueso, J.L.; Martínez, E.; Teruel, C. Determination of multiple roots of nonlinear equations and applications. J. Math. Chem. 2015, 53, 880–892.

- Sharma, J.R.; Kumar, S.; Jäntschi, L. On a class of optimal fourth order multiple root solvers without using derivatives. Symmetry 2019, 11, 1452.

- Sharma, J.R.; Kumar, S.; Jäntschi, L. On Derivative Free Multiple-Root Finders with Optimal Fourth Order Convergence. Mathematics 2020, 8, 1091.

- Kumar, D.; Sharma, J.R.; Argyros, I.K. Optimal One-Point Iterative Function Free from Derivatives for Multiple Roots. Mathematics 2020, 8, 709.

- Kung, H.T.; Traub, J.F. Optimal order of one-point and multipoint iteration. J. Assoc. Comput. Mach. 1974, 21, 643–651.

- Constantinides, A.; Mostoufi, N. Numerical Methods for Chemical Engineers with MATLAB Applications; Prentice Hall PTR: Upper Saddle River, NJ, USA, 1999.

- Douglas, J.M. Process Dynamics and Control; Prentice Hall: Englewood Cliffs, NJ, USA, 1972; Volume 2.

- Maroju, P.; Magreñán, Á.A.; Motsa, S.S.; Sarría, I. Second derivative free sixth order continuation method for solving nonlinear equations with applications. J. Math. Chem. 2018, 56, 2099–2116.

- Scott, M.; Neta, B.; Chun, C. Basin attractors for various methods. Appl. Math. Comput. 2011, 218, 2584–2599.

- Neta, B.; Scott, M.; Chun, C. Basins of attraction for several methods to find simple roots of nonlinear equations. Appl. Math. Comput. 2012, 218, 10548–10556.

- Moysi, A.; Argyros, I.K.; Regmi, S.; González, D.; Magreñán, Á.A.; Sicilia, J.A. Convergence and Dynamics of a Higher-Order Method. Symmetry 2020, 12, 420.

- Cordero, A.; Ramos, H.; Torregrosa, J.R. Some variants of Halley’s method with memory and their applications for solving several chemical problems. J. Math. Chem. 2020, 58, 751–774.

- Zafar, F.; Cordero, A.; Junjua, M.; Torregrosa, J.R. Optimal eighth-order iterative methods for approximating multiple zeros of nonlinear functions. Rev. Real Acad. Cienc. Exactas Fís. Nat. Ser. Mat. 2020, 114, 1–17.

- Zafar, F.; Cordero, A.; Torregrosa, J.R. A family of optimal fourth-order methods for multiple roots of nonlinear equations. Math. Methods Appl. Sci. 2020, 43, 7869–7884.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).