Abstract

In this paper, we consider a nonlinear expectation problem on a special parameter space and give a necessary and sufficient condition for the existence of its solution. We then extend this condition to the two-dimensional moment problem. Moreover, we use the maximum entropy method to construct a concrete solution and analyze the convergence of the maximum entropy solution. Numerical experiments are presented to compute the maximum entropy density functions.

1. Introduction

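The sublinear expectation introduced by Peng [1,2] can be regarded as the supremum of a family of linear expectations $\{E_\theta : \theta \in \Theta\}$, that is,

$$\hat{\mathbb{E}}[\varphi(X)] = \sup_{\theta \in \Theta} E_\theta[\varphi(X)], \tag{1}$$

where $\varphi$ is a locally Lipschitz continuous function and $\Theta$ is the parameter space.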
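To make (1) concrete, the following Python sketch (standard library only) evaluates the supremum over a finite, discretized parameter space. It assumes, purely for illustration, that each $\theta = (\mu, \sigma^2)$ indexes a normal law $N(\mu, \sigma^2)$ and that each linear expectation $E_\theta$ is computed by the trapezoidal rule; the names `normal_expectation`, `sublinear_expectation` and `grid` are hypothetical. The lower expectation is read as $-\hat{\mathbb{E}}[-\varphi(X)]$.

```python
import math


def normal_expectation(phi, mu, var, half_width=10.0, n=4001):
    """E_theta[phi(X)] for X ~ N(mu, var), via the trapezoidal rule on mu +/- 10 sd."""
    sd = math.sqrt(var)
    a = mu - half_width * sd
    h = 2.0 * half_width * sd / (n - 1)
    total = 0.0
    for i in range(n):
        x = a + i * h
        weight = 0.5 if i in (0, n - 1) else 1.0
        dens = math.exp(-((x - mu) ** 2) / (2.0 * var)) / (sd * math.sqrt(2.0 * math.pi))
        total += weight * phi(x) * dens
    return total * h


def sublinear_expectation(phi, thetas):
    """Upper expectation sup_theta E_theta[phi(X)] over a finite list of (mu, var) pairs."""
    return max(normal_expectation(phi, mu, var) for mu, var in thetas)


# Hypothetical discretised parameter space: mu in [0, 1], var in [1, 2].
grid = [(mu, var) for mu in (0.0, 0.5, 1.0) for var in (1.0, 1.5, 2.0)]
upper = sublinear_expectation(lambda x: x * x, grid)    # sup of mu^2 + var over the grid
lower = -sublinear_expectation(lambda x: -x * x, grid)  # inf of mu^2 + var over the grid
```

With $\varphi(x) = x^2$, the upper value is attained at $\mu = 1, \sigma^2 = 2$ and the lower value at $\mu = 0, \sigma^2 = 1$, which shows how the choice of $\Theta$ drives the result.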

Clearly, the sublinear expectation defined by (1) depends on the choice of the parameter space $\Theta$: different parameter spaces result in different nonlinear expectations. In particular, let and:

then the parameter space $\Theta$ can be chosen in the following form:

When , Peng [3] defined independent and identically distributed random variables and proved the weak law of large numbers (LLN) under the sublinear expectation and Condition (2). Furthermore, if , Peng [4,5,6,7,8] defined the G-normal distribution and established a new central limit theorem (CLT) under . This LLN and CLT form the theoretical foundation of the sublinear expectation framework.

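The value of $\hat{\mathbb{E}}[\varphi(X)]$ can be computed by solving the following nonlinear partial differential equation, the G-heat equation:

$$\partial_t u - G(\partial_{xx}^2 u) = 0, \quad u(0, x) = \varphi(x), \qquad G(a) = \frac{1}{2}\left(\overline{\sigma}^2 a^+ - \underline{\sigma}^2 a^-\right), \tag{4}$$

whose solution is $u(t, x) = \hat{\mathbb{E}}[\varphi(x + \sqrt{t} X)]$, so that $\hat{\mathbb{E}}[\varphi(X)] = u(1, 0)$. When the initial value $\varphi$ is a convex function, Peng [8] gave the following expression for $\hat{\mathbb{E}}[\varphi(X)]$:

$$\hat{\mathbb{E}}[\varphi(X)] = \frac{1}{\sqrt{2\pi\overline{\sigma}^2}} \int_{-\infty}^{\infty} \varphi(y) \exp\left(-\frac{y^2}{2\overline{\sigma}^2}\right) dy. \tag{5}$$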

If is a concave function, the variance in (5) is replaced by . For initial conditions that are neither convex nor concave, Hu [9] derived explicit solutions of Problem (4) with the initial condition , . Gong [10] used a fully implicit numerical scheme to compute the nonlinear probability under the G-expectation determined by the G-heat Equation (4) with the initial condition . Here, denotes the indicator function of the set . All of the methods above compute the G-expectation by solving the nonlinear partial differential Equation (4) with a particular initial condition . However, because of the nonlinear term, it is not easy to solve (4) for a general continuous initial function .
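For a general initial function a numerical solver is usually needed. The sketch below is a simple explicit finite-difference scheme for the G-heat equation with $G(a) = \frac{1}{2}(\overline{\sigma}^2 a^+ - \underline{\sigma}^2 a^-)$; it is not the fully implicit scheme of Gong [10]. The grid, the time-step safety factor 0.4, the boundary extrapolation and the name `g_heat_value` are our assumptions. For $\varphi(x) = x^2$ and $\varphi(x) = -x^2$ it reproduces the convex and concave cases: $u(1, 0)$ equals the upper variance $\overline{\sigma}^2$ and minus the lower variance $\underline{\sigma}^2$, respectively.

```python
import math


def g_heat_value(phi, sig_lo2, sig_hi2, t_end=1.0, x_max=8.0, dx=0.1):
    """Approximate u(t_end, 0) for u_t = G(u_xx), u(0, x) = phi(x), where
    G(a) = (sig_hi2 * max(a, 0) - sig_lo2 * max(-a, 0)) / 2,
    using an explicit finite-difference scheme on [-x_max, x_max]."""
    n = int(round(2 * x_max / dx)) + 1
    xs = [-x_max + i * dx for i in range(n)]
    u = [phi(x) for x in xs]
    # Explicit scheme needs dt <= dx^2 / sig_hi2; use 40% of that bound.
    steps = int(math.ceil(t_end * sig_hi2 / (0.4 * dx * dx)))
    dt = t_end / steps
    for _ in range(steps):
        new = u[:]
        for i in range(1, n - 1):
            a = (u[i + 1] - 2.0 * u[i] + u[i - 1]) / (dx * dx)  # central difference for u_xx
            g = 0.5 * (sig_hi2 * max(a, 0.0) - sig_lo2 * max(-a, 0.0))
            new[i] = u[i] + dt * g
        # Boundary nodes: extrapolation that is exact for quadratics (zero third difference).
        new[0] = 3.0 * new[1] - 3.0 * new[2] + new[3]
        new[-1] = 3.0 * new[-2] - 3.0 * new[-3] + new[-4]
        u = new
    return u[n // 2]  # grid node at x = 0


convex_value = g_heat_value(lambda x: x * x, 1.0, 2.0)    # uses the upper variance 2
concave_value = g_heat_value(lambda x: -x * x, 1.0, 2.0)  # uses the lower variance 1
```

The nonlinearity enters only through the sign of the discrete second derivative, which is why the scheme is so short; its weakness is the stability restriction on the time step.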

For these reasons, in this paper we consider the special parameter space defined in (3) and convert the sublinear expectation in (1) into the following two series of moment problems: find probability density functions and such that:

and:

respectively, where and . That is, we approximately determine a special class of nonlinear expectations that satisfies:

and:

where .

The rest of this article is organized as follows. In Section 2, we present an alternative necessary and sufficient condition for the existence of solutions and that satisfy (6) and (7), respectively. In Section 3, we use the maximum entropy method to find concrete solutions and analyze the convergence of the maximum entropy solutions. In Section 4, we conduct numerical simulations to compute the maximum entropy density functions.

2. Existence of Solutions for Moment Problems

According to Theorems 1.35 and 1.36 in Hora and Obata [11], the sequences and must satisfy certain conditions in order to determine probability density functions. Therefore, in this section, we establish necessary and sufficient conditions for the existence of solutions of the moment Problems (6) and (7), respectively.

Let:

be the Hankel matrices of the given sequences and , respectively.
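A Hankel matrix is constant along its anti-diagonals, so it can be built directly from a list of moments. The sketch below uses the convention $H_m[i][j] = \mu_{i+j}$ for $i, j = 0, \ldots, m$; the exact indexing of the lost display is not recoverable here, so this convention and the function name `hankel` are assumptions.

```python
def hankel(moments, m):
    """Return the (m+1) x (m+1) Hankel matrix with entries H[i][j] = moments[i + j].

    `moments` must contain mu_0, mu_1, ..., mu_{2m} (with mu_0 = 1 for a density)."""
    if len(moments) < 2 * m + 1:
        raise ValueError("need the moments mu_0, ..., mu_{2m}")
    return [[moments[i + j] for j in range(m + 1)] for i in range(m + 1)]


# Standard normal moments 1, 0, 1, 0, 3 give H_2 = [[1, 0, 1], [0, 1, 0], [1, 0, 3]].
H2 = hankel([1, 0, 1, 0, 3], 2)
```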

The following theorem gives an alternative necessary and sufficient condition for the existence of satisfying (6).

Theorem 1.

The sequence determines the probability density function if and only if it satisfies one of the following two conditions:

(a) For any nonvanishing vector ,

(b) If there exist and a nonvanishing vector such that , then:

for , where .
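Condition (a) states that the Hankel matrices are positive definite. Numerically, this can be tested with a Cholesky factorization, which succeeds with strictly positive pivots exactly for symmetric positive definite matrices. The sketch below only checks $H_0, \ldots, H_{m_{\max}}$, since a finite computation cannot examine every $m$, and it does not test Condition (b); `satisfies_condition_a` and the tolerance `tol` are hypothetical.

```python
import math


def hankel(moments, m):
    """(m+1) x (m+1) Hankel matrix with entries moments[i + j]."""
    return [[moments[i + j] for j in range(m + 1)] for i in range(m + 1)]


def is_positive_definite(a, tol=1e-12):
    """True iff the Cholesky factorisation of the symmetric matrix a has all pivots > tol."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= tol:
                    return False
                low[i][i] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return True


def satisfies_condition_a(moments, m_max):
    """Check that H_0, ..., H_{m_max} are all positive definite (needs mu_0..mu_{2 m_max})."""
    return all(is_positive_definite(hankel(moments, m)) for m in range(m_max + 1))


normal_ok = satisfies_condition_a([1, 0, 1, 0, 3, 0, 15], 3)  # N(0, 1): True
point_mass_ok = satisfies_condition_a([1, 1, 1, 1, 1], 2)    # mass at x = 1: False
```

A point mass has no density, and accordingly its Hankel matrix $H_1$ is singular.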

Proof.

We first prove necessity. Let X be a continuous random variable with density function , whose raw moments are:

where .

For any -dimensional nonvanishing vector , we can check that:

In fact, taking , we have:

which means that (10) holds for .

Assume that Equation (10) holds for , that is,

Then, we consider the case of and note that the matrix can be rewritten as:

By mathematical induction, (10) holds for . Hence, Equation (10) shows that the Hankel matrices are nonnegative definite.
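Identity (10), read here as $\xi^{T} H_m \xi = \int (\sum_k \xi_k x^k)^2 f(x)\,dx \ge 0$, can be checked numerically for a concrete density. The sketch assumes that $f$ is the standard normal density (moments 1, 0, 1, 0, 3) and uses the trapezoidal rule; the function names are hypothetical.

```python
import math


def quad_form(h, xi):
    """xi^T H xi for a square matrix h given as nested lists."""
    n = len(xi)
    return sum(xi[i] * h[i][j] * xi[j] for i in range(n) for j in range(n))


def expected_square(xi, density, a, b, n=4001):
    """Trapezoidal approximation of int_a^b (sum_k xi_k x^k)^2 f(x) dx."""
    h = (b - a) / (n - 1)
    total = 0.0
    for i in range(n):
        x = a + i * h
        p = sum(c * x ** k for k, c in enumerate(xi))  # polynomial sum_k xi_k x^k
        weight = 0.5 if i in (0, n - 1) else 1.0
        total += weight * p * p * density(x)
    return total * h


def std_normal(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


H2 = [[1, 0, 1], [0, 1, 0], [1, 0, 3]]  # Hankel matrix of the N(0, 1) moments 1, 0, 1, 0, 3
xi = [1, 0, -1]                         # the polynomial 1 - x^2
lhs = quad_form(H2, xi)                 # 1 - 2 * 1 + 3 = 2
rhs = expected_square(xi, std_normal, -10.0, 10.0)
```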

We now show that one of Conditions (a) and (b) must hold. Since is nonnegative definite, there are two possible cases: either for every m-dimensional nonzero vector , or for some -dimensional nonzero vector . We treat the two cases separately.

Case 1: Suppose that for any nonzero vector . By (14), we have:

We regard (15) as a quadratic polynomial in . Since (15) is positive for every value of , its discriminant must be negative, i.e.,

This implies that (a) in Theorem 1 holds for .
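For the smallest case $m = 1$, the discriminant argument can be made explicit. With $\xi = (t, 1)$, the quadratic form $\xi^{T} H_1 \xi$ is $q(t) = \mu_0 t^2 + 2\mu_1 t + \mu_2$, which is positive for all $t$ exactly when its discriminant $4(\mu_1^2 - \mu_0 \mu_2)$ is negative. The sketch below is our own illustration with hypothetical names; it also shows that the minimum of $q$ is $\mu_2 - \mu_1^2/\mu_0$, the variance when $\mu_0 = 1$, and that a point mass gives a zero discriminant.

```python
def discriminant_m1(mu0, mu1, mu2):
    """Discriminant of q(t) = mu0 t^2 + 2 mu1 t + mu2, i.e. xi^T H_1 xi with xi = (t, 1)."""
    return (2 * mu1) ** 2 - 4 * mu0 * mu2


def min_of_q(mu0, mu1, mu2):
    """Minimum of q over real t, attained at t* = -mu1 / mu0 (mu0 = 1 > 0 for a density)."""
    t = -mu1 / mu0
    return mu0 * t * t + 2 * mu1 * t + mu2


# N(0, 1): disc = -4 < 0 and min q = 1 (the variance), so H_1 is positive definite.
# Point mass at x = 2 (moments 1, 2, 4): disc = 0 and min q = 0, the degenerate case.
normal = (discriminant_m1(1, 0, 1), min_of_q(1, 0, 1))
point_mass = (discriminant_m1(1, 2, 4), min_of_q(1, 2, 4))
```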

Case 2: Suppose that for some nonvanishing vector . For , let , . We choose an vector and multiply by and . We treat only the case as an example; the same argument applies to . Then,

Since is nonnegative definite and , it follows from (17) that , that is, (b) holds.

Next, we prove sufficiency via the contrapositive. In other words, we need to show that there is no probability density function whose moments satisfy the following: there exist a positive integer and an -dimensional nonzero vector such that:

(i) ;

(ii) if , then there exists a positive integer such that .

We argue by contradiction. Suppose such a density function exists. If holds for every -dimensional nonvanishing vector, then it follows from (15) that:

The above inequality contradicts Condition (i).

If the moments satisfy Condition (ii), then there exists an -dimensional nonzero vector such that . By Condition (ii), without loss of generality, we let and repeat the derivation of (17) to obtain:

where . Clearly, (19) is a contradiction.

This completes the proof. □

Remark 1.

Using , , and in place of , , and , respectively, in Theorem 1, we obtain the necessary and sufficient conditions for the existence of the probability density function .

Note that Theorem 1 is stated for one-dimensional random variables. We now extend it to the two-dimensional case. Let X and Y be continuous random variables with joint probability density function . The two-dimensional moment problems are defined as follows: find joint probability density functions and such that:

and:

where the set:

Here, we again take the sequence as an example and state the main result in the following theorem without proof. Let:

be the Hankel matrix generated by the given sequence .
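In two dimensions, a natural analogue of the Hankel matrix is the moment matrix indexed by the monomials $x^i y^j$. The sketch below orders the monomials by total degree ($1, x, y, x^2, xy, y^2, \ldots$) and fills entry $((i, j), (k, l))$ with the mixed moment $E[X^{i+k} Y^{j+l}]$. The construction in Theorem 2 may differ, since it is stated in terms of separate vectors, so the ordering and the names `monomials` and `moment_matrix_2d` are assumptions.

```python
def monomials(m):
    """Exponent pairs (i, j) with i + j <= m, graded order: 1, x, y, x^2, xy, y^2, ..."""
    return [(d - j, j) for d in range(m + 1) for j in range(d + 1)]


def moment_matrix_2d(mom, m):
    """Matrix with entry ((i, j), (k, l)) equal to mom[(i + k, j + l)] = E[X^(i+k) Y^(j+l)]."""
    idx = monomials(m)
    return [[mom[(i + k, j + l)] for (k, l) in idx] for (i, j) in idx]


# Independent N(0, 1) variables: E[X^i Y^j] = E[X^i] * E[Y^j].
normal_moments = [1, 0, 1, 0, 3]
mom = {(i, j): normal_moments[i] * normal_moments[j]
       for i in range(5) for j in range(5) if i + j <= 4}
M1 = moment_matrix_2d(mom, 1)  # 3 x 3 identity for the monomials 1, x, y
M2 = moment_matrix_2d(mom, 2)  # 6 x 6 matrix; entry (x^2, x^2) is E[X^4] = 3
```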

Theorem 2.

The sequence determines the density functions if and only if it satisfies one of the following conditions:

(a) For any -dimensional vectors , and m-dimensional vectors , ,

and:

(b) If there exists such that , then:

for , where the vectors and , and , .

3. Maximum Entropy for Moment Problems

In Section 2, we discussed the existence of solutions of the moment problems. We now use the maximum entropy method to obtain solutions of Problems (6) and (7), and we study the convergence of the maximum entropy density functions.

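Given the first N moments $\mu_1, \ldots, \mu_N$, the core idea of the maximum entropy method is to find the probability density function $f_N$ that maximizes the entropy subject to the moment constraints:

$$\max_{f} \left\{ -\int_{\mathbb{R}} f(x) \ln f(x)\, dx \right\} \quad \text{subject to} \quad \int_{\mathbb{R}} x^k f(x)\, dx = \mu_k, \quad k = 0, 1, \ldots, N, \tag{23}$$

where $\mu_0 = 1$.

The Lagrangian functional can be defined as:

$$\mathcal{L}[f] = -\int_{\mathbb{R}} f(x) \ln f(x)\, dx - \sum_{k=0}^{N} \lambda_k \left( \int_{\mathbb{R}} x^k f(x)\, dx - \mu_k \right),$$

where $\lambda_k$, $k = 0, 1, \ldots, N$, are the Lagrange multipliers.

Setting the functional variation with respect to $f$ to zero, and absorbing the resulting additive constant into $\lambda_0$, we have:

$$f_N(x) = \exp\left( -\sum_{k=0}^{N} \lambda_k x^k \right),$$

and (23) holds. The values of $\lambda_k$ can be calculated by solving the system of nonlinear equations given by the moment conditions (23). Here, we take $N = 2$ as an example. On the whole real line, with $\sigma^2 = \mu_2 - \mu_1^2 > 0$, the multipliers are:

$$\lambda_0 = \frac{\mu_1^2}{2\sigma^2} + \frac{1}{2}\ln\left(2\pi\sigma^2\right), \qquad \lambda_1 = -\frac{\mu_1}{\sigma^2}, \qquad \lambda_2 = \frac{1}{2\sigma^2},$$

so that $f_2$ is the normal density with mean $\mu_1$ and variance $\sigma^2$.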

So far, we have established the existence of solutions of the moment Problems (6) and (7) for all . By Theorem 3.3.11 in Durrett [12], the probability density function is, moreover, uniquely determined by its moments, so that the weak limit in (27) is unique, as long as its moments satisfy Conditions (a) and (b) in Theorem 1 and:

By the analysis of Frontini and Tagliani [13], the maximum entropy solutions converge weakly as follows:

Remark 2.

By an argument analogous to the one-dimensional case, the maximum entropy method yields a concrete joint probability density function for the two-dimensional moment problem (20). The detailed procedure is given in the next section.
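For N > 2, the multipliers in the one-dimensional maximum entropy density have no closed form and are usually computed numerically. One standard approach, sketched below and not necessarily the one used in this paper, assumes the form $f(x) = \exp(-\sum_k \lambda_k x^k)$ and minimizes the convex dual $\Gamma(\lambda) = \int \exp(-\sum_k \lambda_k x^k)\,dx + \sum_k \lambda_k \mu_k$ by Newton's method with Armijo backtracking. The gradient of $\Gamma$ is the moment mismatch and its Hessian is the Hankel matrix of the fitted moments. We assume the support is truncated to a finite interval $[a, b]$ and use the trapezoidal rule; all names and tolerances are hypothetical.

```python
import math


def _trapz(values, h):
    return h * (sum(values) - 0.5 * (values[0] + values[-1]))


def _solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[p] = m[p], m[c]
        for r in range(c + 1, n):
            factor = m[r][c] / m[c][c]
            for k in range(c, n + 1):
                m[r][k] -= factor * m[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][k] * x[k] for k in range(r + 1, n))) / m[r][r]
    return x


def max_entropy(mu, a, b, n_grid=2001, tol=1e-9, max_iter=100):
    """Multipliers lambda_0..lambda_N of f(x) = exp(-sum_k lambda_k x^k) on [a, b]
    matching the moments mu = [mu_1, ..., mu_N] (mu_0 = 1 is added here)."""
    target = [1.0] + list(mu)
    n = len(target)
    h = (b - a) / (n_grid - 1)
    xs = [a + i * h for i in range(n_grid)]
    powers = [[x ** k for x in xs] for k in range(2 * n - 1)]

    def density(lam):
        # Exponent capped at 700 so a bad trial step gives a huge value, not OverflowError.
        return [math.exp(min(700.0, -sum(lam[k] * powers[k][i] for k in range(n))))
                for i in range(n_grid)]

    def dual(lam):
        # Gamma(lam) = int f_lam dx + sum_k lam_k mu_k; its minimiser matches the moments.
        return _trapz(density(lam), h) + sum(l * m for l, m in zip(lam, target))

    lam = [math.log(b - a)] + [0.0] * (n - 1)  # start from the uniform density on [a, b]
    for _ in range(max_iter):
        f = density(lam)
        g = [_trapz([powers[k][i] * f[i] for i in range(n_grid)], h) for k in range(2 * n - 1)]
        resid = [g[k] - target[k] for k in range(n)]  # fitted minus prescribed moments
        if max(abs(r) for r in resid) < tol:
            break
        hess = [[g[i + j] for j in range(n)] for i in range(n)]  # Hessian of the dual
        step = _solve(hess, resid)                                  # Newton direction
        decrease = sum(r * s for r, s in zip(resid, step))          # = -grad . step > 0
        t, old = 1.0, dual(lam)
        while True:  # Armijo backtracking on the convex dual
            trial = [l + t * s for l, s in zip(lam, step)]
            if dual(trial) <= old - 1e-4 * t * decrease + 1e-12 or t < 1e-8:
                break
            t *= 0.5
        lam = trial
    return lam
```

For $\mu_1 = 0$, $\mu_2 = 1$ on $[-8, 8]$, the multipliers approach the $N = 2$ closed form $\lambda_0 = \frac{1}{2}\ln(2\pi)$, $\lambda_1 = 0$, $\lambda_2 = \frac{1}{2}$, i.e., the standard normal density; higher moments are passed the same way as a longer list `mu`.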

By Theorem 2 and Theorem 14.20 in Schmüdgen [14], if the marginal moments and satisfy Conditions (a) and (b) in Theorem 2 and the following multivariate Carleman condition [14]:

for , then there exists a unique joint probability density function satisfying (20).
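Carleman-type conditions require a series of even moments to diverge, which numerical partial sums can only suggest, not prove. The sketch below computes $S_N = \sum_{n=1}^{N} m_{2n}^{-1/(2n)}$ for a single marginal, the usual univariate form of Carleman's series, working with log-moments to avoid overflow. For a standard normal marginal, $m_{2n} = (2n-1)!!$ and $S_N$ keeps growing, roughly like $\sqrt{2eN}$; for the log-normal moments $m_{2n} = e^{2n^2}$, which are known not to determine a unique distribution, the terms are $e^{-n}$ and the partial sums converge to $1/(e-1)$. The function names are hypothetical.

```python
import math


def carleman_partial_sums(log_even_moment, n_max):
    """Partial sums S_N = sum_{n=1}^{N} m_{2n}^(-1/(2n)) for N = 1..n_max,
    where log_even_moment(n) returns log m_{2n} (logs avoid overflow)."""
    sums, s = [], 0.0
    for n in range(1, n_max + 1):
        s += math.exp(-log_even_moment(n) / (2 * n))
        sums.append(s)
    return sums


def log_std_normal_even_moment(n):
    """log E[X^(2n)] for X ~ N(0, 1), using E[X^(2n)] = (2n - 1)!!."""
    return sum(math.log(2 * k - 1) for k in range(1, n + 1))


gauss_sums = carleman_partial_sums(log_std_normal_even_moment, 400)  # keeps growing
lognormal_sums = carleman_partial_sums(lambda n: 2.0 * n * n, 60)   # E[e^(2nZ)] = e^(2n^2)
```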

The convergence rate of the maximum entropy density was analyzed by Frontini and Tagliani [13], and the results are analogous to (27).

4. Numerical Experiments

In this section, we conduct numerical experiments to compute the two-dimensional maximum entropy density functions. Specifically, we apply the maximum entropy method of Section 3 to compute the joint probability density functions of the weekly closing price X and the weekly rate of return Y of Shanghai A shares.

By random sampling, we obtain the maximum (Table 1) and minimum (Table 2) values of the mixed sample moments of orders one and two of X and Y. That is,

Table 1.

Maximum values of the mixed sample moments.

Table 2.

Minimum values of the mixed sample moments.
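The sampling procedure behind Tables 1 and 2 is not described in detail. The sketch below shows one plausible reading, purely as an assumption: draw several random subsamples of paired observations $(x, y)$, compute the five mixed sample moments of orders one and two in each, and record the maximum and minimum of each moment across subsamples. All names, the subsample size and the seed are hypothetical, and no market data are included.

```python
import random

# Mixed moment orders (i, j) for E[X^i Y^j]: E[X], E[Y], E[X^2], E[XY], E[Y^2].
ORDERS = [(1, 0), (0, 1), (2, 0), (1, 1), (0, 2)]


def mixed_moments(xs, ys):
    """Mixed sample moments (1/n) sum_t x_t^i y_t^j for the orders in ORDERS."""
    n = len(xs)
    return {(i, j): sum(x ** i * y ** j for x, y in zip(xs, ys)) / n for (i, j) in ORDERS}


def moment_bounds(samples):
    """Maximum and minimum of each mixed sample moment over a list of (xs, ys) samples."""
    ms = [mixed_moments(xs, ys) for xs, ys in samples]
    upper = {k: max(m[k] for m in ms) for k in ORDERS}
    lower = {k: min(m[k] for m in ms) for k in ORDERS}
    return upper, lower


def random_subsamples(xs, ys, size, count, seed=0):
    """Hypothetical scheme: `count` random subsamples of `size` paired observations."""
    rng = random.Random(seed)
    out = []
    for _ in range(count):
        idx = rng.sample(range(len(xs)), size)
        out.append(([xs[i] for i in idx], [ys[i] for i in idx]))
    return out
```

Sampling indices rather than values keeps each $(x, y)$ pair together, which is what mixed moments such as $E[XY]$ require.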

The two-dimensional maximum entropy problem is defined as: find a probability density function such that:

and:

for and .

Note that the joint density factorizes as:

$$f(x, y) = f_X(x)\, f(y \mid x), \tag{30}$$

where $f(y \mid x)$ denotes the maximum entropy conditional density function. From (30), we can obtain the joint density function once we have determined $f_X(x)$ and $f(y \mid x)$. Let:

be the conditional moments of Y given X, which satisfy:

for and .

It is easy to verify that and are positive definite. Hence, by Theorem 1, there exists a density function determined by .

To derive explicit expressions for , we construct the conditional moments on the basis of (32). For simplicity, we suppose that is a constant. Combining this with (32) yields:

By direct calculation, we obtain the following expressions for :

where , , and .

In the same way, using the data in Table 2, we obtain the following probability density function determined by :

where , , , and .
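The factorization (30) reduces the joint problem to a marginal and a conditional density. As a sanity check of this construction, the sketch below multiplies a normal marginal $N(m_X, v_X)$ by a normal conditional $N(a + bx, v_Y)$, which are the forms that $N = 2$ maximum entropy fits give when the conditional mean is linear in $x$ and the conditional variance is constant. It then verifies with a two-dimensional trapezoidal rule that the product integrates to one and that $E[Y] = a + b\,m_X$. The parameter values are invented for illustration and are not the fitted values in (35) and (36); all names are hypothetical.

```python
import math


def gauss(x, mean, var):
    """Normal density N(mean, var) at x."""
    return math.exp(-((x - mean) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


def joint_density(x, y, mx, vx, a, b, vy):
    """f(x, y) = f_X(x) * f(y | x) with the hypothetical choices
    f_X = N(mx, vx) and f(y | x) = N(a + b x, vy)."""
    return gauss(x, mx, vx) * gauss(y, a + b * x, vy)


def integrate_2d(g, xa, xb, ya, yb, n=201):
    """Tensor-product trapezoidal rule for the double integral of g over a rectangle."""
    hx, hy = (xb - xa) / (n - 1), (yb - ya) / (n - 1)
    total = 0.0
    for i in range(n):
        x, wx = xa + i * hx, (0.5 if i in (0, n - 1) else 1.0)
        for j in range(n):
            y, wy = ya + j * hy, (0.5 if j in (0, n - 1) else 1.0)
            total += wx * wy * g(x, y)
    return total * hx * hy


params = dict(mx=1.0, vx=0.25, a=0.5, b=2.0, vy=0.09)
mass = integrate_2d(lambda x, y: joint_density(x, y, **params), -3.0, 5.0, -8.0, 13.0)
mean_y = integrate_2d(lambda x, y: y * joint_density(x, y, **params), -3.0, 5.0, -8.0, 13.0)
```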

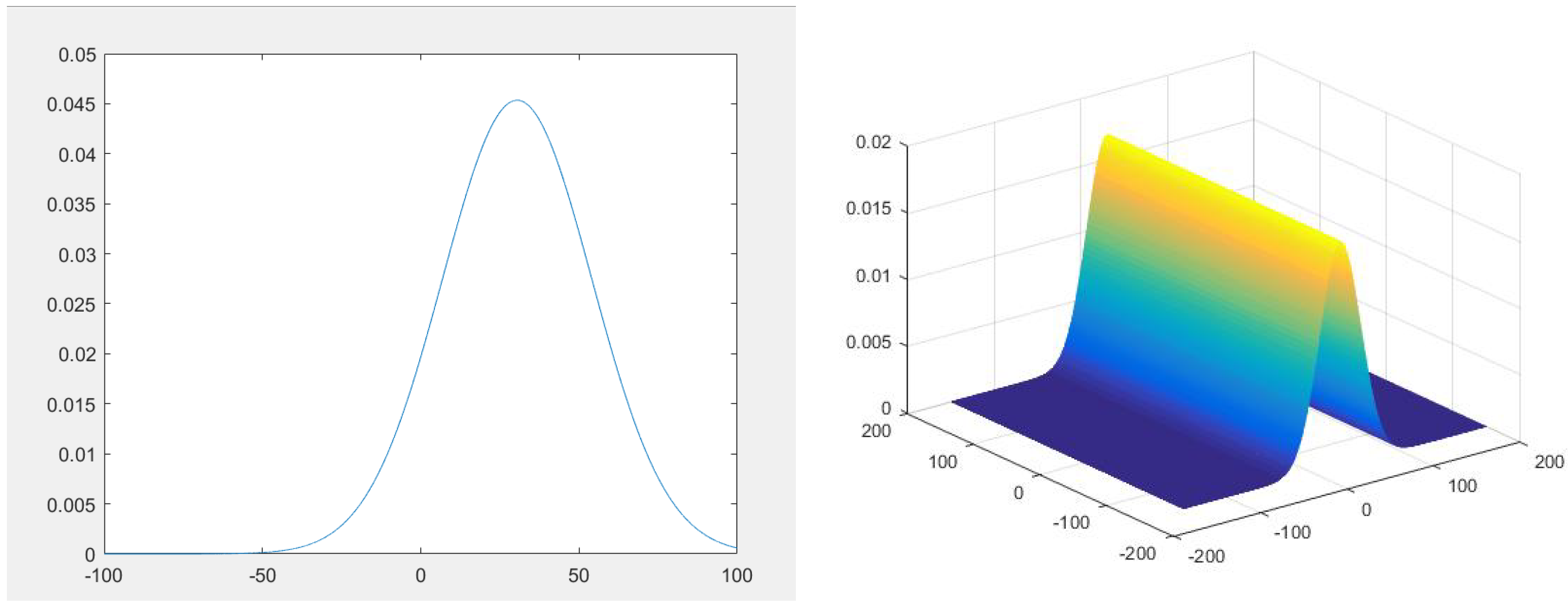

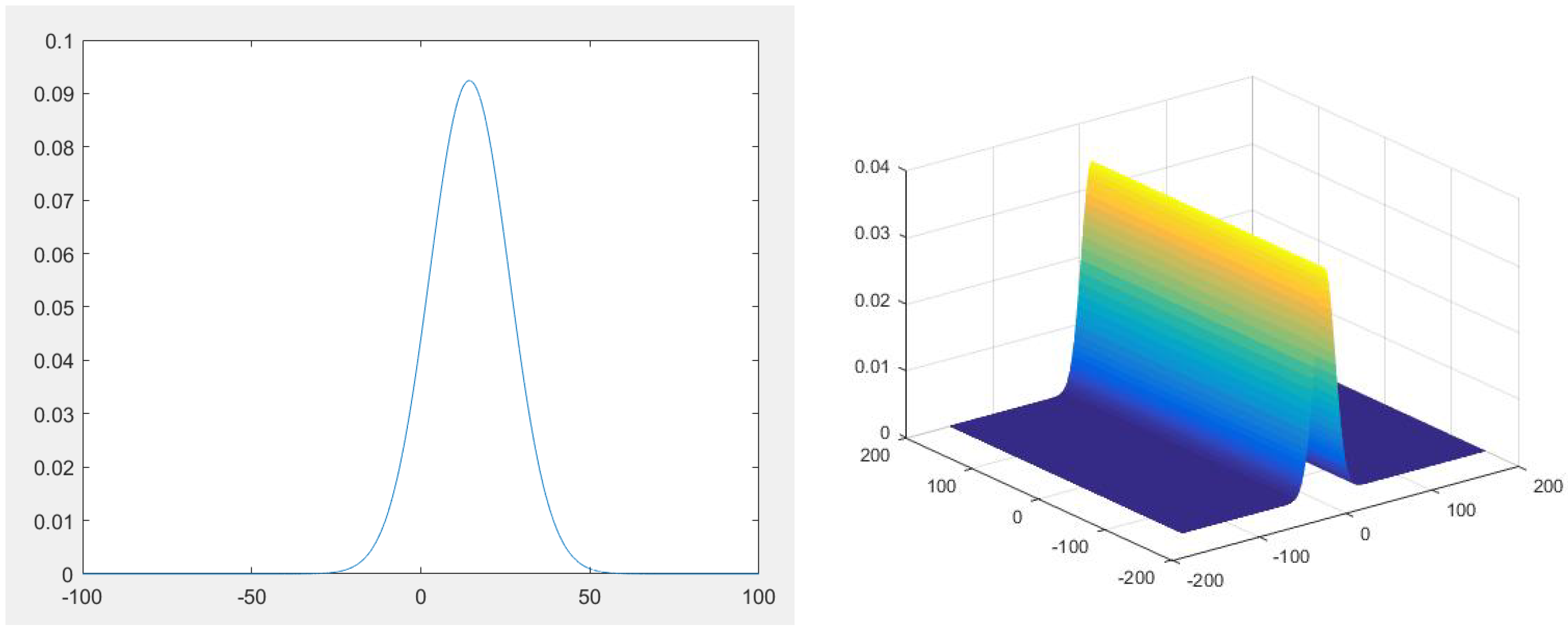

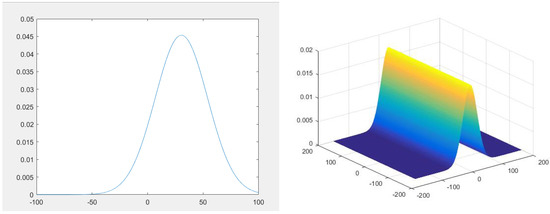

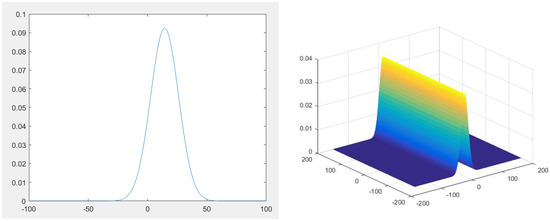

Figures 1 and 2 show the marginal maximum entropy density functions , (derived from (25) with ) and the joint maximum entropy density functions , (derived in (35) and (36)). In each figure, the left panel shows the marginal density and the right panel shows the joint density.

Figure 1.

Maximum entropy marginal density (left) and joint density (right) determined by and , respectively.

Figure 2.

Maximum entropy marginal density (left) and joint density (right) determined by and , respectively.

Author Contributions

Conceptualization, L.G. and D.H.; methodology, D.H. and L.G.; software, L.G.; validation, L.G.; formal analysis, L.G.; investigation, L.G.; resources, L.G.; data curation, L.G.; writing—original draft preparation, L.G.; writing—review and editing, L.G.; visualization, L.G.; supervision, L.G. and D.H.; project administration, L.G.; funding acquisition, D.H.

Funding

This work is supported by the National Natural Science Foundation of China [grant number 11531001] and the National Program on Key Basic Research Project [grant number 2015CB856004].

Conflicts of Interest

The authors declare no conflict of interest.

References

- Peng, S.G. Filtration consistent nonlinear expectations and evaluations of contingent claims. Acta Math. Appl. Sin. 2004, 20, 191–214.

- Peng, S.G. Nonlinear expectations and nonlinear Markov chains. Chin. Ann. Math. Ser. B 2005, 26, 159–184.

- Peng, S.G. Survey on normal distributions, central limit theorem, Brownian motion and the related stochastic calculus under sublinear expectations. Sci. China 2009, 52, 1391–1411.

- Peng, S.G. G-Expectation, G-Brownian motion and related stochastic calculus of Itô type. Stoch. Anal. Appl. 2007, 2, 541–567.

- Peng, S.G. Law of large numbers and central limit theorem under nonlinear expectations. arXiv, 2007; arXiv:math/0702358.

- Peng, S.G. G-Brownian motion and dynamic risk measure under volatility uncertainty. arXiv, 2007; arXiv:0711.2834.

- Peng, S.G. A new central limit theorem under sublinear expectations. Mathematics 2008, 53, 1989–1994.

- Peng, S.G. Multi-dimensional G-Brownian motion and related stochastic calculus under G-expectation. Stoch. Process. Their Appl. 2006, 118, 2223–2253.

- Hu, M.S. Explicit solutions of G-heat equation with a class of initial conditions by G-Brownian motion. Nonlinear Anal. Theory Methods Appl. 2012, 75, 6588–6595.

- Gong, X.S.; Yang, S.Z. The application of G-heat equation and numerical properties. arXiv, 2013; arXiv:1304.1599v2.

- Hora, A.; Obata, N. Quantum Probability and Spectral Analysis of Graphs; Springer: Berlin/Heidelberg, Germany, 2007.

- Durrett, R. Probability: Theory and Examples; Cambridge University Press: Cambridge, UK, 1996.

- Frontini, M.; Tagliani, A. Entropy convergence in Stieltjes and Hamburger moment problem. Appl. Math. Comput. 1997, 88, 39–51.

- Schmüdgen, K. The Moment Problem; Springer: Berlin/Heidelberg, Germany, 2017.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).