1. Introduction

The purpose of this article is to provide a unified framework to obtain analytical, least-squares, approximated solutions of nonhomogeneous linear differential equations (DEs) of order

with nonconstant coefficients subject to a set of

n constraints:

where

and

can be any functions, continuous and nonsingular, within the range of integration, and

indicates the

ith derivative specified at time

. The proposed method unifies how to solve initial value problems (IVPs), boundary value problems (BVPs), or multi-point value problems (MVPs).

While the proposed method works for linear DEs of any order, for brevity of exposition, the method is presented to solve second-order DEs:

This is because the proposed technique does not change if n increases, other than by adding additional terms.

1.1. Background on the Existing Approaches

Various techniques exist to provide numerical approximations of the DEs given in Equation (

1). The most widely used approaches are the Runge–Kutta methods [

1] for IVPs and shooting methods for BVPs. The shooting methods solve BVPs essentially by transforming BVPs into IVPs. These methods provide enough precision for most of the practical problems. However, the estimated solution is not analytic and is provided at discrete points, usually with an error growing along the range of integration. References [

2,

3] provide a collection of the most well-known methods for solving IVPs and BVPs. Other existing alternative integration approaches include, but are not limited to, the Gauss–Jackson method [

4], collocation techniques [

5], and the Modified Chebyshev–Picard Iteration [

6] (a path-length integral approximation), which has recently been proven to be highly effective. All of these methods are based on low-order Taylor expansions, which limits the step size that can be used to propagate the solution. Higher-order Taylor methods have also been analyzed [

7]. More contemporary approaches are the following:

Collocation Techniques. In these techniques, the solution components are approximated by piecewise polynomials on a mesh. The coefficients of the polynomials form the unknowns to be computed. The approximation to the solution must satisfy the constraint conditions and the DEs at collocation points in each mesh subinterval. We note that the placement of the collocation points is not arbitrary. A modified Newton-type method, known as quasi-linearization, is then used to solve the nonlinear equations for the polynomial coefficients. The mesh is then refined by trying to equidistribute the estimated error over the whole interval. An initial estimate of the solution across the mesh is also required. However, a common weakness of all these collocation techniques (low-order Taylor expansion models) is that they are not effective in enforcing algebraic constraints. Enforcing the DE constraints is the strength of the proposed method, as the DE constraints are embedded in the searched solution expressions. This means that the analytical solution obtained, even if completely wrong, perfectly satisfies all the DE constraints.

Spectral methods. Spectral methods are used to numerically solve DEs by writing the solution as a sum of certain “basis functions” (e.g., Fourier series) and then choosing the coefficients in the sum in order to satisfy the DEs as well as possible (e.g., by least-squares). Additionally, in spectral methods, the constraints must then be enforced. This is done by replacing one (or more) of the least-squares equations with the constraint conditions. The proposed method proceeds in the reverse sequence. It first builds a special function, called a “constrained expression”, that has the boundary conditions embedded, no matter what the final estimation is. Then, it uses this expression to minimize the DE residuals by expressing the free function of the constrained expression,

, as a sum of some basis functions (e.g., orthogonal polynomials). The resulting estimation always perfectly satisfies the boundary conditions as long as they are linear. To provide an example, a linear constraint involving four times (

,

,

and

) can be:

It is not clear how the spectral methods can enforce this kind of general linear constraint. Additionally, the spectral methods provide solutions approximating the constraints.

The solution of the proposed method is an analytical function fully complying with the DE constraints, and it approximates the DE by minimizing the residuals in a least-squares sense.

1.2. The Idea Behind the Proposed Method

The least-squares solution of Equation (

2) is obtained using

constrained expressions, which are introduced in [

8] by the

theory of connections, a methodology to provide general interpolation functions with an embedded set of

n linear constraints. These expressions are found using the following general form:

where

are

n assigned functions and

is an unknown function,

independent from the

functions;

are

n coefficients that are derived by imposing the

n linear constraints on the

function. The linear constraints considered in the theory of connections [

8] are any linear combination of the function and/or its derivatives evaluated at specified values of the independent variable,

t. For an assigned set of

n independent functions,

, the

n constraints of the DE allow us to derive the unknown vector of coefficients,

. By substituting this expression into Equation (

3), we obtain an equation satisfying all the constraints, no matter what

is. The problem of solving the DE is, therefore, to find the function

. Because the DE is linear in

and

is linear in

, then, by substituting Equation (

3) into the DE, we obtain a new DE, linear in

. In this paper, the solution,

, is then expressed as a linear combination of orthogonal polynomials. The coefficients of this linear combination constitute the solution of the problem.

Orthogonal polynomials are excellent basis sets to describe the

free function appearing in Equation (

3). However, most of these orthogonal polynomials, such as Chebyshev or Legendre orthogonal polynomials, are defined in some specific defined range,

. (Specifically, Chebyshev and Legendre orthogonal polynomials are defined in

.) Therefore, in order to adopt them, the DE must be rewritten in terms of the new variable,

x, linearly related to

as:

and by introducing the integration range ratio,

, these relationships become:

where

is specified in BVPs while in IVPs it is the integration upper limit. This change of variable also affects the derivatives. Using the notation

for the derivative with respect to

t and

for the derivative with respect to

x, we obtain:

By changing the integration variable, particular attention must be given to the constraints specified in terms of derivatives. In fact, the constraints provided in terms of the first and/or second derivatives also need to comply with the rules given in Equation (

4), meaning that the constraints on the derivatives in terms of the new

x variable depend on the integration time range, through the integration range ratio,

c.

Functions

,

,

and

are also rewritten in terms of

x, where, for instance,

, which can be indicated by

. This means that, using the new variable, we can write

,

,

, and

. Therefore, Equation (

2) can be rewritten as:

2. Least-Squares Solution of IVPs

A second-order DE has two constraints. Therefore, once the DE is written as in Equation (

5), the constrained expression is searched, having the form:

For IVPs, with constraints,

The values of

and

are:

Substituting into Equation (

6),

and setting

and

, we obtain:

Then, substituting into Equation (

6),

The DE, written in terms of

, becomes:

where:

The function

can be set as a linear combination of

m basis functions,

, where

is the vector of

m basis functions, and

is a vector of

m unknown coefficients. Therefore, Equation (

7) becomes:

Equation (

9), for any value of

x, is linear in terms of the unknown coefficients (

). Therefore, this equation can be specified for a set of

N values of

x ranging from the initial value,

, to the final value,

. This can be done, for instance, by a uniform

step. This set of

N equations in

m unknowns (usually,

) can be set in the matrix form:

where:

The linear system of Equation (

10) allows us to compute the solution (coefficient vector

) by least-squares.

The simplest way to write a constrained expression subject to

and

is to select

and

. This provides the values of

and

by inverting a

matrix with its determinant equal to 1. For the variety of constraints considered in this paper, this property can be obtained by selecting the functions

and

to be those provided in

Table 1.

2.1. Accuracy Tests

We consider integrating the following IVP from

to

:

whose general solution is

. We consider solving this IVP using Chebyshev orthogonal polynomials. This implies that, in terms of the new variable, the constraint derivative becomes

. Equation (

11) has been solved using the proposed least-squares approach with

basis functions and a discretization made of

points, and it has been integrated using the MATLAB function ode45 (The numerical comparisons made in this paper are limited to ode45 because it is the most widely used method in commercial applications.), implementing a Runge–Kutta variable-step integrator formula [

1].

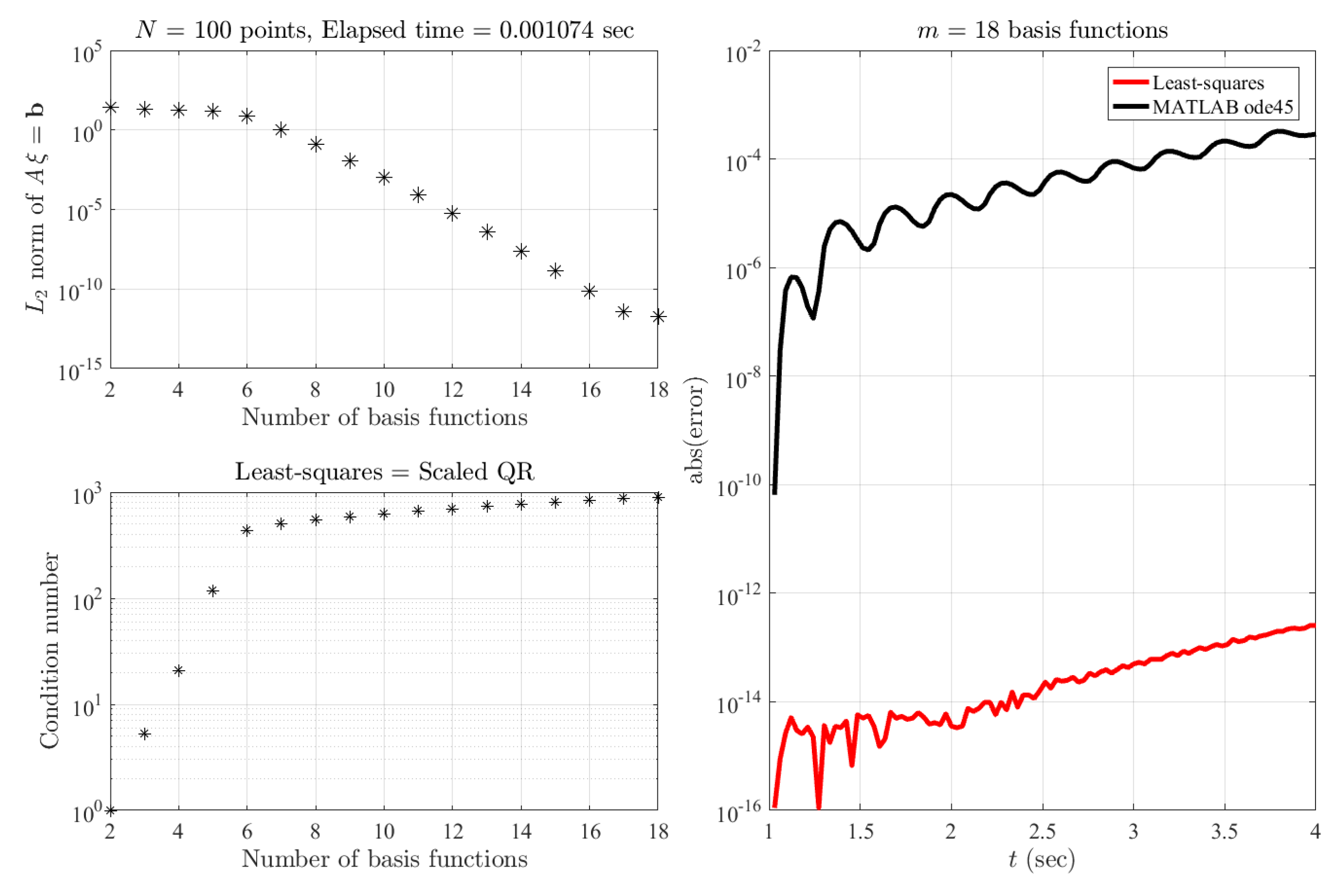

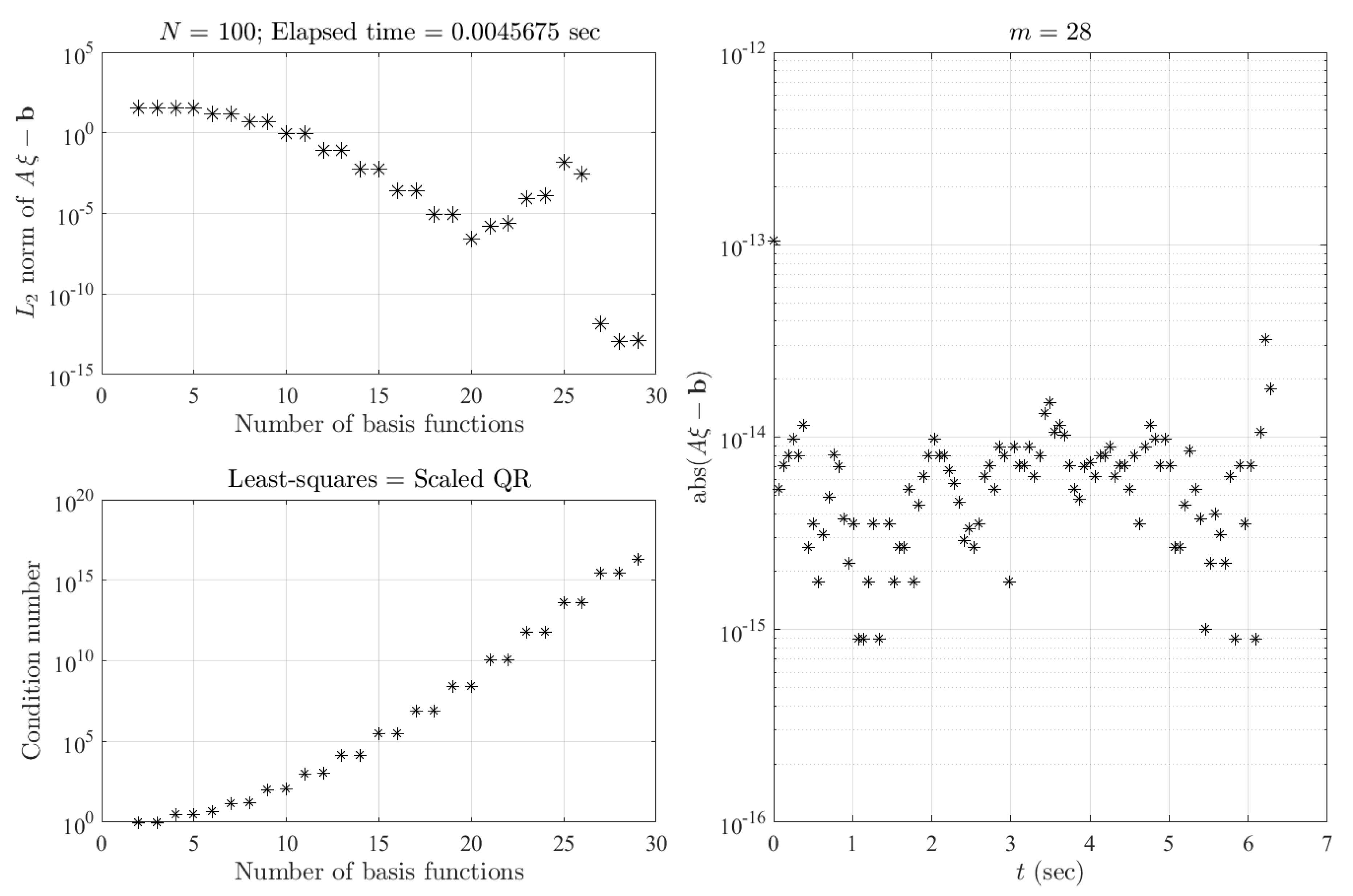

The results obtained are shown in

Figure 1. In the top-left plot, the

norm of

is shown as a function of the number of basis functions, while the bottom-left plot provides the condition number to solve the least-squares. If the solution requires just

basis functions and

is used, then the coefficients from

are all zeros. The values of the

norm of the least-squares residuals identify the minimum number of basis functions. The accuracies obtained using MATLAB’s ode45 [

1] integrator and using the least-squares solution (The least-squares problem has been solved by QR decomposition of a scaled

matrix.) with

basis functions and

points are shown in the right plot. The least-squares solution shows about 9 orders of magnitude of accuracy gain.

In the following subsections, the constrained expressions for two other kinds of IVP—known-function and first-derivative and known first- and second-derivative—are provided.

2.2. Second-Order DE Subject to and

In terms of the new

x variable, the initial conditions are

and

, while a constrained expression is:

Then Equation (

5) becomes:

and the least-squares solutions follows the same steps provided in Equations (

7)–(

10).

We note that, if

and

are known, then

can be derived using Equation (

5) evaluated at

:

provided that

. Therefore, the least-squares solution of the DE given in Equation (

5) with constraints

and

can be solved as in the previous section with constraints

and

.

2.3. Second-Order DE Subject to and

In terms of the new variable, the initial conditions are

and

. In this case, a constrained expression is:

Equation (

5) becomes:

and the least-squares solution follows the same steps provided in Equations (

7)–(

10).

Again, if

and

are known, then

can also be computed by specializing Equation (

5) at

:

Therefore, the solution of the DE given in Equation (

5) with constraints

and

can also be solved as in the previous section with constraints

and

.

3. Least-Squares Solutions of BVPs

A two-point BVP, already written in terms of the new variable and subject to the constraints

and

, has a constrained expression that can be obtained using Equation (

6), where the values of

and

are:

Substituting into Equation (

6),

that is,

which is an equation that can be written as:

Substituting this expression into the DE,

and we obtain:

where:

Therefore, the procedures to solve IVPs and BVPs have little differences. In fact, for BVPs, we obtain the linear equation:

Once the least-squares has been solved and, therefore, the coefficients vector

has been computed, then the solution is:

Numerical integrations of IVPs provide subsequent estimates based on previous estimates. This implies that the error, in general, increases along the integration range. On the contrary, as shown by the next numerical tests, the accuracy provided by the least-squares solution is “uniformly” distributed along the integration range. In addition, if greater accuracy is desired for some specific regions, then by increasing the number of points in those regions, accuracy increase is obtained where desired. Alternatively, by adopting weighted least-squares, the weight’s value gives us an additional option to control the accuracy distribution.

In the following subsections, the proposed least-squares approach is tested, for the four cases of BVPs with known, unknown, no, and infinite solutions.

3.1. Numerical Accuracy Tests for a BVP with Known Solution

We consider the following BVP:

whose solution is

, with derivatives

and

.

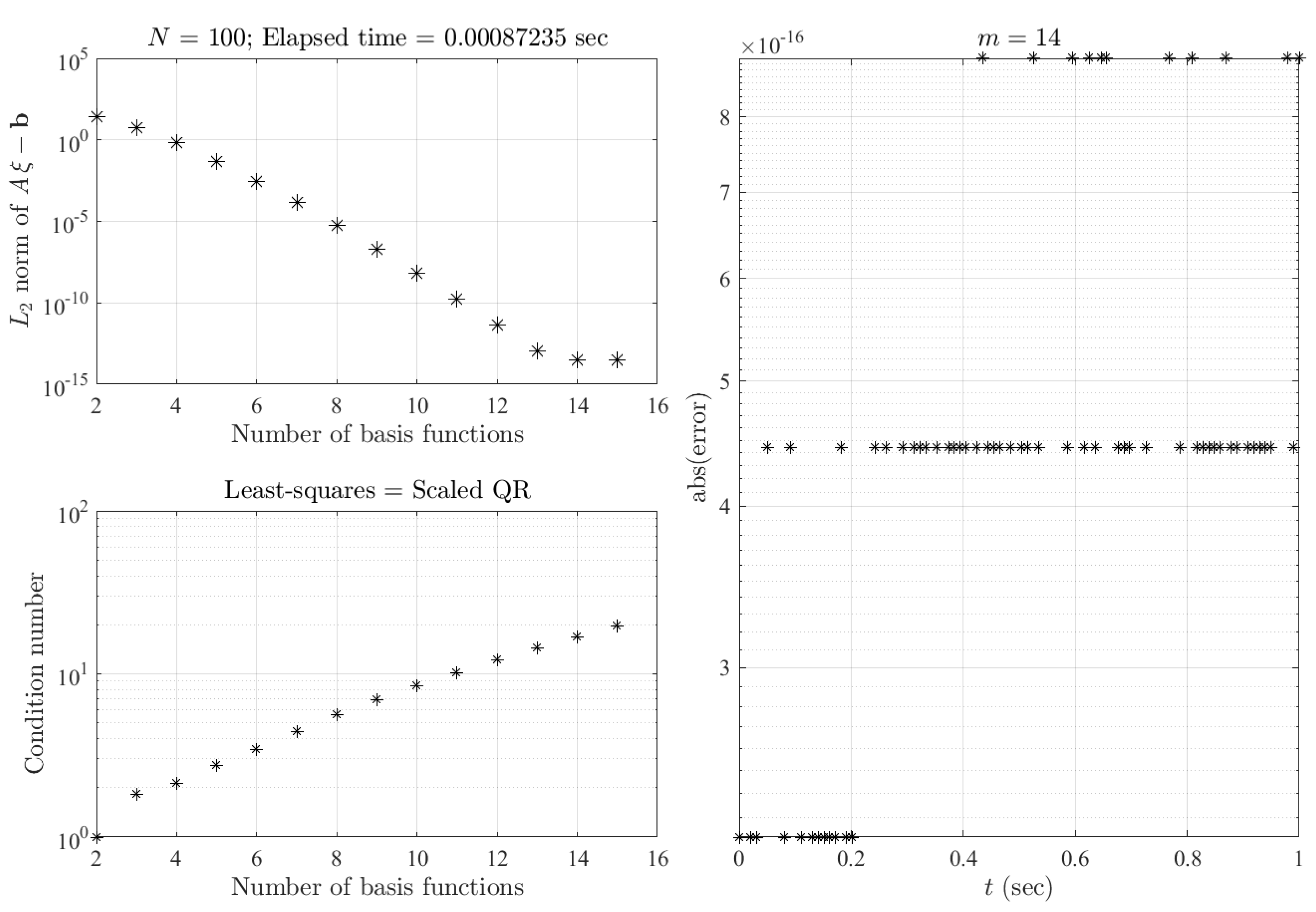

Figure 2 shows the least-squares-approach results for this test in terms of the

norm of

residuals (top-left) and the condition number of matrix

(bottom-left) as a function of the number of basis functions considered. The errors with respect to the true solution obtained with

basis functions (Legendre orthogonal polynomials) and

points (discretization) are provided in the right plot. These plots clearly show that the machine error precision is obtained with excellent numerical stability (low condition number).

3.2. Numerical Accuracy Tests for a BVP with Unknown Solution

We consider the DE (completely invented) with an unknown analytical solution:

subject to

and

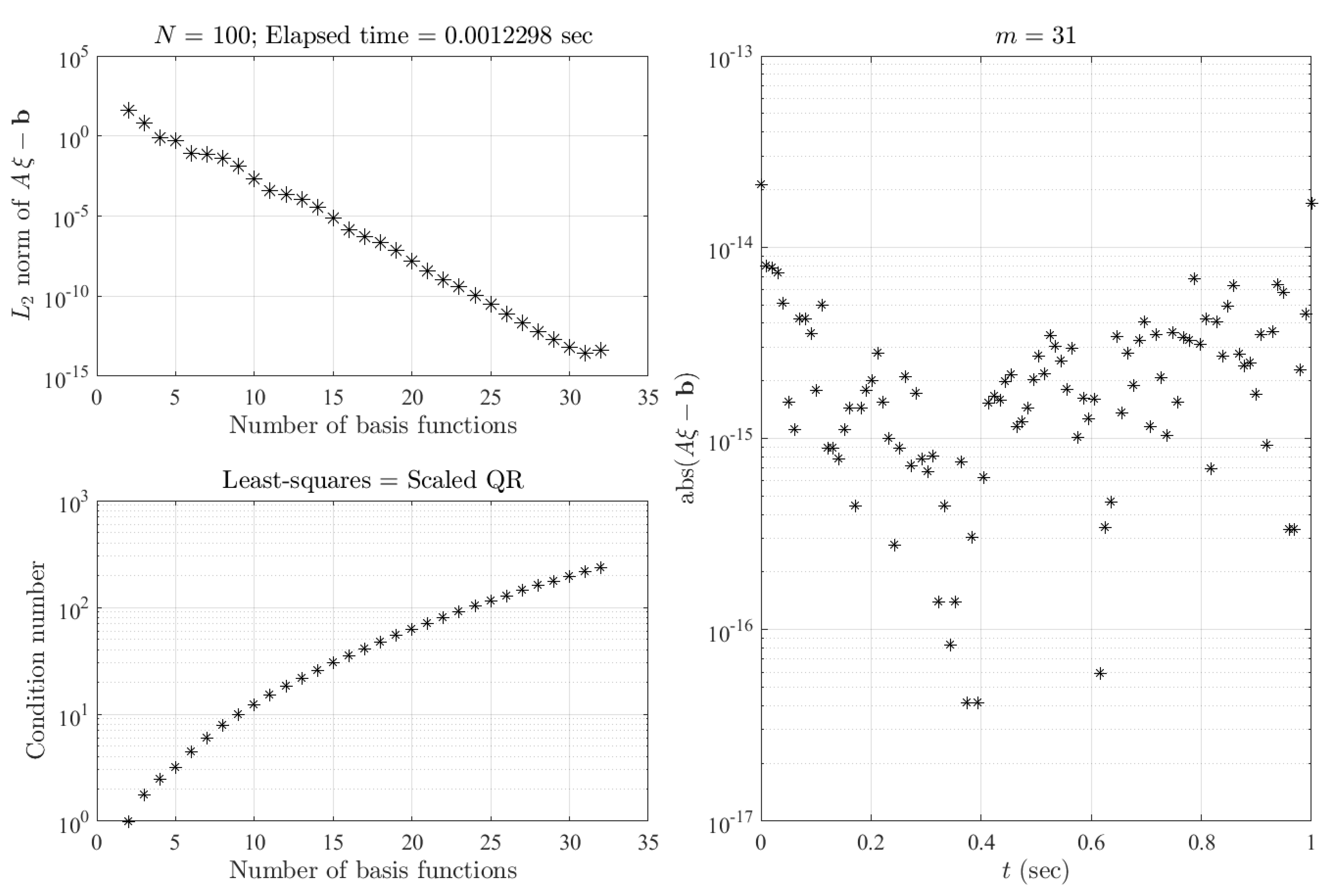

. In this case, the least-squares-solution results are given in the plots of

Figure 3 with the same explanation as for those provided in

Figure 2. The

norm of the residuals (top-left plot) decreases up to

-degree basis functions (Chebyshev orthogonal polynomials of the first kind) while the condition number remains at good values (bottom-left plot). The machine error values of the residuals, obtained for

-degree basis functions and

points, are shown in the right plot.

3.3. Numerical Accuracy Tests for a BVP with No Solution

We consider the following BVP with no solution:

In fact, the general solution of Equation (

17) is

, where the constraint

gives

, while the constraint

gives

, a wrong identity.

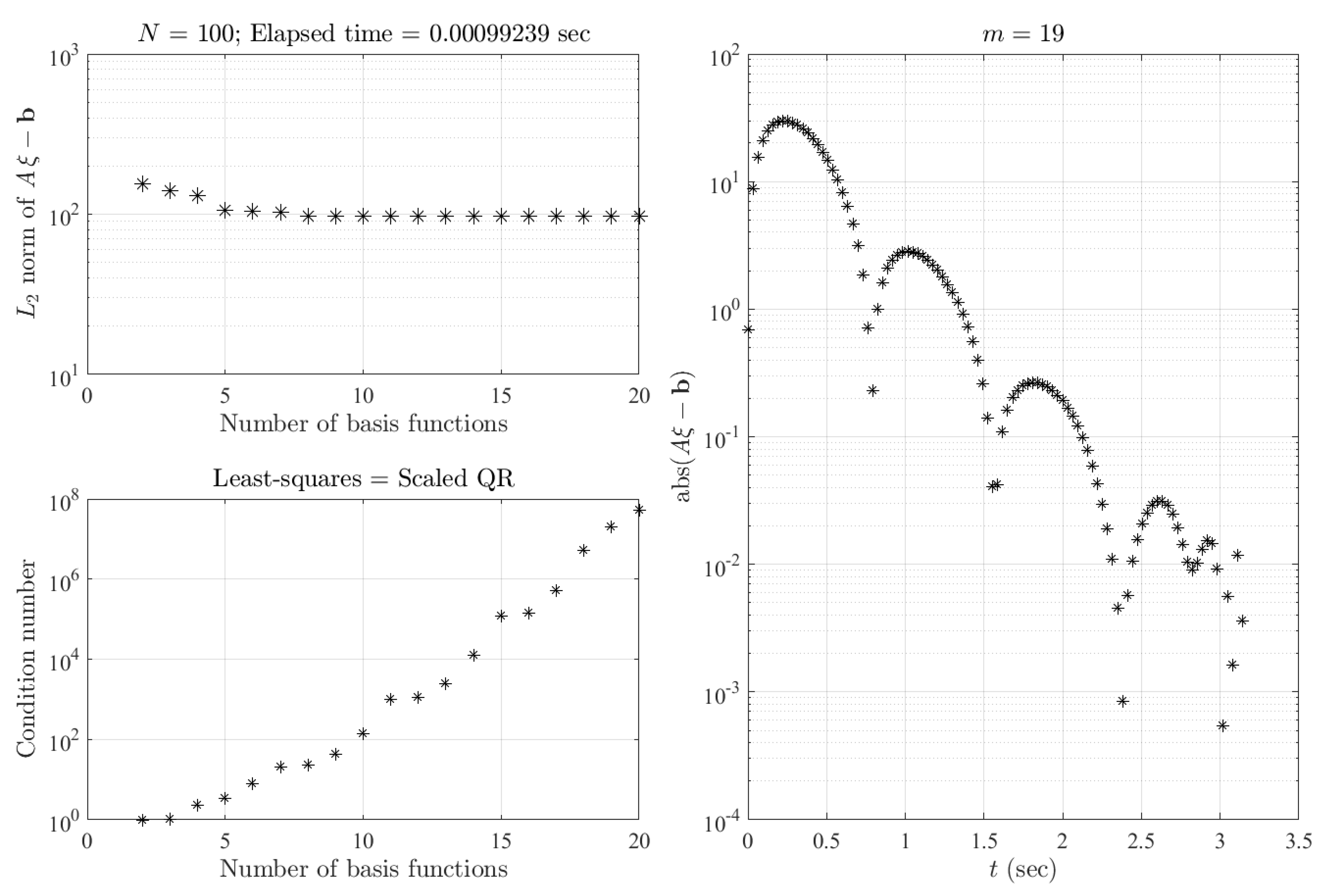

Figure 4 shows the results when trying to solve, by least-squares, the problem given in Equation (

17). For this example, the number of orthogonal polynomials has been increased up to that for which the condition number of the matrix

becomes too large (numerical instability), at an upper bound of

. The number of basis functions has been increased up to

by keeping the number of points to

. By increasing the number of basis functions, the

norm of

does not converge, and the condition number of

becomes huge. These are two potential indicators that the problem has no solution. However, even in this no-solution case, the proposed least-squares approach provides a “solution” complying with the DE constraints.

3.4. Numerical Accuracy Tests for a BVP with Infinite Solutions

Finally, we consider the BVP with infinite solutions:

In fact, the general solution of Equation (

18) is

, consisting of infinite solutions, as

b can have any value. The results of the least-squares approach are given in

Figure 5, showing the convergence, but not at machine-level accuracy.

Figure 5 shows the results obtained for this infinite-solutions case. The main difference with respect to the no-solution case is in the unstable convergence of the

norm of

. In fact, by increasing the number of basis functions from

to

, the

norm actually increases. This unusual behavior is still under analysis, and no explanation is currently available. The instability may be explained by several (infinite) solutions being possible, and some of these may be “close” from a convergence numerical point of view. In this infinite-solutions case, as in the no-solution case, the value of the condition number increases to very high values. In this example, an upper bound has been defined for the number of basis functions (

), while there is no upper bound for the condition number value.

3.5. Constrained Expressions for BVP with Different Constraints

In this section, constrained expressions are provided for BVPs of a second-order DE subject to all (nine) possible constraints.

- (1)

The first constraints considered are the function at the initial time,

, and the first derivative at the final time,

. For this case, the final constraint in terms of the new variable,

, becomes

, and the constrained expression:

can be used. Substituting this into Equation (

5), we obtain:

Then, the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15). Equation (

19) has been tested, providing excellent results, which are not included here for sake of brevity.

- (2)

The second constraints are the function at the initial time,

, and the second derivative at the final time,

. For this case, the final constraint in terms of the new variable is

, and the constrained expression is:

Substituting this into Equation (

5), we obtain:

Then, it follows as in the first case.

- (3)

The third constraints are the first derivative at the initial time,

, and the function at the final time,

. For this case, the initial constraint in terms of the new variable is

, while the constrained equation:

can be used. Substituting this into Equation (

5), we obtain:

and the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15).

- (4)

The fourth constraints are the first derivative at the initial and final times,

and

. This special equation is known as Mathieu’s DE [

9,

10]. For this case, the constraints in terms of the new variable are

and

, while the constrained equation:

can be used. Substituting this into Equation (

5), we obtain:

and the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15).

- (5)

The fifth constraints are the first derivative at the initial time,

, and the second derivative at the final time,

. For this case, the constraints in terms of the new variable are

and

, while the constrained equation:

can be used. Substituting this into Equation (

5), we obtain:

and the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15).

- (6)

The sixth constraints are the second derivative at the initial time,

, and the function at the final time,

. For this case, the first constraint in terms of the new variable is

, while the constrained equation:

can be used. Substituting this into Equation (

5), we obtain:

and the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15).

- (7)

The seventh constraints are the second derivative at the initial time,

, and the first derivative at the final time,

. For this case, the constraints in terms of the new variable are

and

, while the constrained equation:

can be used. Substituting this into Equation (

5), we obtain:

and the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15).

- (8)

The final (eighth) constraints are the second derivative at the initial and final times,

and

. For this case, the constraints in terms of the new variable are

and

, while the constrained equation:

can be used. Substituting this into Equation (

5), we obtain:

and the least-squares solution can be obtained using the procedure described by Equations (

12)–(

15).

4. MVP Example

Finally, to show the potential of the proposed least-squares approach, we consider solving the following third order DE:

where:

and is subject to the multi-point constraints:

whose analytical solution is

. We consider integrating this DE from

to

(beyond the constraint bounds,

) using Legendre orthogonal polynomials (

). Therefore,

Equation (

20) becomes:

subject to:

where

,

, and

.

A constrained expression [

8] satisfying Equation (

22) can be found as:

where

,

, and

have been selected as the first three monomials. The three coefficients,

,

, and

, can be derived from the DE constraints:

Now, setting

,

, and

, the coefficients

,

and

are:

Then, substituting in the constrained expression, we obtain:

whose derivatives are:

These expressions are then substituted back into Equation (

21), and the linear equation provided by the least-squares approach is obtained. The numerical results of this procedure are given in

Figure 6.

The top-left plot of

Figure 6 shows the analytic true solution (black line) with the three constraints: two for the function (black markers) and one for the first derivative (red line). We note that the range of integration,

, goes beyond the constraints’ coordinate range,

. This is purposefully done to show that for the proposed least-squares approach, the range of integration,

, is independent from the constraint range,

.

The bottom-left plot shows that as the number of basis functions considered increases, the condition number to solve the least-squares problem has values that guarantee the numerical stability of the least-squares solution. The number of basis functions continues to increase until the

norm of

decreases, as shown in the top-right plot. The minimum is reached when

. However, the true number of basis functions adopted to describe

is 18 and not 21. The reason is that, to build the constrained expression appearing in Equation (

23), the functions for

,

, and

are constant, linear, and quadratic, respectively. These three functions cannot be included to express

, which is consequently expressed as:

The bottom-right plot of

Figure 6 shows the absolute error obtained when

basis functions are used to express

, while the number of Legendre polynomials used for

is

. This plot clearly shows that, using 18 basis functions for

, the least-squares approach provides a machine error solution.

5. Conclusions

This study presents a new approach to provide least-squares solutions of linear nonhomogeneous DEs of any order with nonconstant coefficients, both continuous and nonsingular in the integration range. Without loss of generality and for the sake of brevity, the implementation of the proposed method has been applied to second-order DEs. This least-squares approach can be adopted to solve IVPs, BVPs, and MVPs with constraints given in terms of the function and/or derivatives.

The proposed method is based on imposing that the solution has a specific expression, called a constrained expression, which is a function with the DE constraints embedded. This expression is given in terms of a new unknown function, . The original DE is rewritten in terms of , thus obtaining a new DE for which the constraints are embedded in the DE itself. Then, the function is expressed as a linear combination of basis functions, . The coefficients of this linear expansion are then computed by least-squares by specializing the new DE for a set of N different values of the independent variable. In this study, the Chebyshev and Legendre orthogonal polynomials have been selected as basis functions.

Numerical tests have been performed for IVPs with known and unknown solutions. A direct comparison has been made with the solution provided by the MATLAB function ode45, implementing a Runge–Kutta variable-step integrator [

1]. In this test, the least-squares approach shows about 9 orders of magnitude of accuracy gain. Numerical tests have been performed for BVPs for the four cases of known, unknown, no, and infinite solutions. In particular, the condition number value and the

norm of the residual can be used to discriminate between whether or not a BVP has a single solution.

Finally, a numerical test has been performed for a third-order MVP with constraints (two on functions and one on the first derivative) defined inside the integration range. In all our tests, the machine error precision has been reached with a limited number of basis functions and the discretization number. All the computations were performed on a laptop using MATLAB software. The elapsed times (in seconds) required to obtain all the solutions are specified in the figures for all the test cases considered.

The proposed method is not iterative, is easy to implement, and, as opposed to numerical approaches, is based on truncated Taylor series (such as Runge–Kutta methods); the solution error distribution does not increase along the integration, but it is approximately uniformly distributed in the integration range. In addition, the proposed technique unifies the way to solve IVPs, BVPs, and MVPs. The method can also be used to solve higher-order linear DEs, as long as the DE constraints are linear.

This study is not complete, as many investigations should be performed before setting this approach as a standard method to integrate linear DEs. In addition, many research areas are still open and under question. A few of these open research areas are the following:

- (1)

Extension to weighted least-squares.

- (2)

Error-bound estimates for the problems admitting solutions.

- (3)

Nonuniform distribution of points and optimal distributions of points to increase accuracy in specific ranges of interest.

- (4)

Comparisons between different function bases and identification of an optimal function base (if it exists).

- (5)

Analysis using Fourier bases.

- (6)

Accuracy analysis of number of basis functions versus points distribution.

- (7)

Extension to nonlinear DEs.

- (8)

Extension to partial DEs.

- (9)

Extension to nonlinear constraints.