1. Introduction

In modern industrial manufacturing processes, quality control is of paramount importance, with surface defect detection playing a critical role in ensuring product performance and safety. Steel is a fundamental industrial material, and its surface defects (such as cracks, pits, and scratches) not only directly affect subsequent processing and service life but may also pose serious safety hazards. Therefore, efficient and precise detection technologies for steel surface defects hold significant engineering and research value [1]. Object detection, as one of the core tasks in computer vision, aims to automatically locate and identify regions of interest in images. It has gradually replaced traditional manual inspection methods and become a key technology in industrial quality inspection processes. However, the existing surface defect detection algorithms still face trade-offs among accuracy, speed, and generalization capability. Therefore, there is an urgent need to design object detection models with high accuracy, high speed, and strong generalization capabilities to further enhance the robustness and engineering adaptability of industrial defect detection systems [2].

Currently, data-driven object detection algorithms are primarily categorized into one-stage and two-stage algorithms. Two-stage algorithms include methods such as the Faster Region-Based Convolutional Neural Network (Faster R-CNN) [3], Mask R-CNN [4], and Cascade R-CNN [5], which have achieved significant advancements in the field of object detection. Xia et al. [6] proposed an improved Faster R-CNN that applies bilateral filtering to smooth the image background and combines ResNet50 featuring deformable convolutions with the Feature Pyramid Network (FPN), which substantially elevated the accuracy of surface defect detection on panels. However, this approach still encounters difficulties in locating and identifying small defects within complex backgrounds. To tackle this, Zhang et al. [7] developed an upgraded Mask R-CNN model by substituting the feature extraction network in the Faster R-CNN with the more robust EfficientNet and refining the FPN structure, thereby boosting the accuracy and efficiency of steel surface defect detection. Nevertheless, this method retains potential for further enhancement in recognizing defects amid high-noise backgrounds and intricate shapes. To address these limitations, Wang et al. [8] put forward a method for the detection of metal surface defects based on an improved Cascade R-CNN, integrating elements such as the Region of Interest (ROI) Align module and a cascaded Region Proposal Network (RPN) to strengthen the detection performance for small defects and complex backgrounds. Experimental findings demonstrate that the approach surpasses the traditional Cascade R-CNN in detection accuracy and improves the model’s adaptability to defects across different scales. However, the method still has challenges, including a complex model structure, lengthy training time, and high computational resource dependency, which limit its deployment efficiency and real-time performance in actual industrial environments. Overall, while two-stage methods achieve high accuracy, their limitations in detecting small objects and their complex deployment make them poorly suited to industrial contexts with stringent real-time requirements.

Compared to the two-stage algorithms, one-stage object detectors built on the You Only Look Once (YOLO) family offer efficient inference speeds and real-time applicability, making them commonly utilized within the domain of industrial flaw detection. Hussain et al. [9] systematically evaluated the evolution of the YOLO series from its original version to YOLOv8 for industrial surface defect detection, confirming its strong balance between computational efficiency and detection accuracy, which is particularly suitable for the comprehensive requirements of industrial automation, including high precision, fast response, and edge deployment. For model lightweighting, Chen et al. [10] incorporated MobileNetV2 into YOLOv3 as the backbone network and fused multi-layer features, enhancing the small-object detection performance and embedded deployment efficiency. However, this approach shows unstable performance when dealing with defects featuring blurred edges, strong noise, or significant scale variations, and its generalization capabilities require further enhancement. Zhou et al. [11] optimized the YOLOv5 architecture by introducing feature enhancement and attention mechanisms to improve small-object detection, achieving promising results on specific datasets. Nevertheless, the increased structural complexity led to reduced inference efficiency, and the lack of systematic validation across multiple scenarios and defect categories limits its generalizability. Ma et al. [12] integrated GhostNet with a multi-scale attention mechanism into the YOLOv8 architecture, reducing the computational overhead while enhancing the feature perception and regression capabilities, demonstrating robust deployment flexibility. However, the model still tends to miss small defects in complex backgrounds, with insufficient detail representation. Xie et al. [13] optimized YOLOv10 by introducing a sliding loss function and modular design, effectively addressing category imbalance and improving the detection accuracy and recall. Wang et al. [14] proposed YOLO-LSDI, an improved model based on YOLOv11, incorporating a fusion module of multi-scale perception and attention-based strategies to boost the ability to detect low-contrast, small-sized, and irregularly shaped defects, which exhibited strong comprehensive performance. However, the network structure becomes more complex, increasing the inference latency and reducing the deployment flexibility. Tian et al. [15] focused on defects in concrete and other multi-category materials, integrating YOLOv11 with dynamic convolutions and enhanced deformable convolutions to improve the adaptability in complex textured backgrounds while balancing the accuracy and computational cost. Nevertheless, detection instability persists in scenarios with overlapping defects or blurred boundaries. Hence, more efficient object detection algorithms urgently need to be developed to meet the diverse requirements of industrial defect detection across various scenarios.

Additionally, common industrial inspection scenarios include weld defect identification, surface scratch detection, and structural defect localization. These tasks often face challenges such as uneven sample distributions, diverse defect types, and subtle differences between defects. Due to the inherent characteristics of industrial defect detection, such as the high sample acquisition costs, imbalanced category distribution, and significant domain differences across application scenarios, traditional supervised learning methods struggle to build robust and generalizable models [16]. Distribution discrepancies between training data and testing environments can substantially degrade the model performance, particularly under pronounced domain shifts, where generalization is difficult to ensure. Transfer learning effectively enhances the model performance in target domains by leveraging knowledge from source domains, especially in data-limited scenarios, thereby mitigating overfitting risks and improving adaptability [17]. When integrated with the YOLO series of deep object detection models, transfer learning further exploits their advantages to provide real-time, accurate, and efficient solutions for industrial defect detection tasks. Researchers have demonstrated the effectiveness of combining YOLO algorithms with transfer learning in various defect detection applications. Situ et al. [18] addressed data constraints and computational overhead in sewer defect detection by proposing a transfer learning framework based on YOLO, integrating 11 pre-trained CNNs to identify five defect types, and comparing it with mainstream methods such as R-CNNs [19]. This approach alleviates data insufficiency through transfer learning, enhancing feature extraction and generalization. Experiments indicate that the transferred YOLO outperforms the compared methods in accuracy, speed, and IoU, with ResNet18 [20] achieving the best performance and optimal defect separation, underscoring its value in real-world monitoring. Li et al. [21] proposed an improved YOLOv4 for fabric surface defect detection, incorporating Density-Based Spatial Clustering of Applications with Noise (DBSCAN) clustering to optimize anchor boxes and DenseNet backbone networks to enhance feature extraction, and introducing a dual-channel feature enhancement module. They also applied transfer learning to migrate prior knowledge from large image datasets, addressing scarcity and diversity issues in fabric defect data. Experimental results show an mAP of 98.97% on an expanded textile defect dataset, with superior computational efficiency compared to the original YOLOv4, highlighting its potential for automated quality control in the textile industry. Zhu et al. [22] developed a small-sample transfer learning approach to boost the identification of defects including inclusions, blemishes, cracks, oxide deposit entrapment, scratches, and pits in Laser Directed Energy Deposition (L-DED)-manufactured components. This method utilizes a YOLOv7 model pre-trained on the NEU-DET dataset [23], achieving a significant improvement with an mAP of 0.62. Chen et al. [24] tackled the insufficient detection accuracy and edge deployment challenges by proposing LWFDD-YOLO, a lightweight framework based on YOLOv8n. This framework incorporates a Generalized Efficient Layer Aggregation Network with Selective Kernel Attention (GELAN_SKA) module for selective kernel attention-based feature optimization, integrates Cascaded Group Attention (CGA) for enhanced diversity, and uses Dy_Sample [25] to reduce the computational load, enabling efficient detection. Additionally, it employs transfer learning to transfer features from YOLOv8l, boosting performance. Experiments on a self-built dataset yielded an mAP of 87.9% and an accuracy of 89.4%, improving by 4.5–8.9% over the original model, with a 23.4% parameter reduction and 163.4 FPS speed, demonstrating its suitability for textile automation. Similarly, to address challenges like the size, aspect ratio, and complex background variability in concrete crack detection, Sohaib et al. [26] proposed a systematic evaluation framework for multiple YOLO models, first training a general model on datasets with and without cracks to extract abstract features and then using transfer learning to fuse specialized datasets simulating real-world scenarios (e.g., occlusion, size changes, and rotation) for improved robustness and generalization. Experiments show that this framework balances the inference speed and detection accuracy effectively, with YOLOv10 achieving a 74.52% mAP and 51 FPS, outperforming other variants and exhibiting high efficiency and applicability.

While the current transfer learning methods based on YOLO have demonstrated some success in fields such as industry, materials, and disaster response, they often exhibit limited adaptability to industrial inspection tasks. From a data perspective, mainstream approaches primarily rely on models pre-trained on general-purpose, open-source datasets (e.g., COCO and ImageNet) for transfer learning. However, objects in these datasets differ significantly in their semantics and structure from surface defects in industrial settings, especially under complex real-world conditions like micron-level scratches, highly reflective backgrounds, and fluid contamination. Consequently, effectively modeling small-scale, low-contrast defect features remains challenging, resulting in high false-positive rates, inconsistent accuracy, and inadequate real-time performance in the existing models, which fail to satisfy the requirements of high-precision industrial inspection. From a transfer strategy perspective, the existing methods typically involve full-network weight transfer, directly applying general features from pre-trained models to new tasks. Yet, in novel industrial scenarios, these methods frequently lack targeted fine-tuning and thus fail to adapt adequately to the target domain’s feature distribution. This leads to suboptimal feature extraction, poor adaptability, and a diminished detection accuracy for small defects in complex backgrounds, thereby constraining the models’ effectiveness and robustness in practical applications [27]. More critically, these methods generally lack mechanisms to model the dynamic nature of domain shifts in industrial tasks, hindering adaptation to evolving feature distributions over time or under varying conditions. Furthermore, the current models, predominantly based on deep neural networks, suffer from parameter redundancy and high inference latency, rendering them unsuitable for real-time, edge computing, and lightweight deployment in industrial production. Consequently, the existing research reveals substantial limitations in data source domain adaptability, network structure efficiency, transfer strategy robustness, and framework adaptability. Thus, the application of transfer learning in industrial defect detection requires urgent optimization toward more targeted and practical directions.

To address the challenges outlined above, namely inaccurate localization, weak feature extraction, and poor model adaptability in industrial defect detection, this paper proposes a multi-stage transfer learning-assisted enhanced object detection algorithm, YOLOv11-EMD, which targets the industrial requirements of high precision, robust generalization, and low training cost. Specifically, to mitigate YOLOv11’s limitations in feature extraction amid intricate backgrounds and in recognizing targets across multiple scales, the InnerEIoU loss function is incorporated to enhance the regression precision of bounding boxes. Furthermore, the multi-scale dilated attention (MSDA) module and the C3k2 block with dynamic convolution (C3k2_DynamicConv) are integrated to strengthen the model’s multi-scale defect identification and its adaptability to diverse defect features in complex environments. Finally, to tackle the prevalent issues of insufficient robustness and weak domain adaptation in cross-domain tasks, a multi-stage transfer learning framework is proposed. This framework employs a dual-driven strategy involving source domain pre-training and target domain fine-tuning to boost the model’s generalization across varying data distributions. The integration of multi-stage transfer learning with the improved YOLOv11 algorithm combines high accuracy, strong generalization, and low training costs, thereby enhancing the reliability and practicality of defect detection in complex industrial scenarios and supporting intelligent, automated quality monitoring. The contributions presented in this study can be summarized as follows:

- (1) With the aim of resolving YOLOv11’s shortcomings in localization accuracy, multi-scale feature extraction, and adaptability to complex defects in industrial detection, this paper introduces an enhanced YOLOv11 algorithm (YOLOv11-EMD). By incorporating InnerEIoU, MSDA, and the C3k2_DynamicConv module, it synergistically improves the feature representation, multi-scale fusion, and localization precision, significantly boosting the model’s robustness and fine-grained recognition in diverse defect scenarios.

- (2) A multi-stage progressive transfer learning framework is proposed to overcome the degraded transfer performance and limited adaptability due to domain-specific differences in defect data distributions. It adopts a collaborative optimization strategy of source domain multi-scene pre-training and target domain adaptive fine-tuning, enabling efficient cross-domain feature transfer and enhancing the model’s generalization and stability in heterogeneous environments.

- (3) The proposed YOLOv11-EMD algorithm and multi-stage transfer learning framework were evaluated on the NEU-DET and GC10-DET datasets. Results demonstrate superior accuracy in defect identification, boundary localization, and robustness in complex backgrounds. Moreover, the framework markedly improves the model adaptability and stability under heterogeneous data distributions, offering efficient and reliable support for high-precision, low-sample defect detection in industrial settings.

The remainder of this paper is structured as follows:

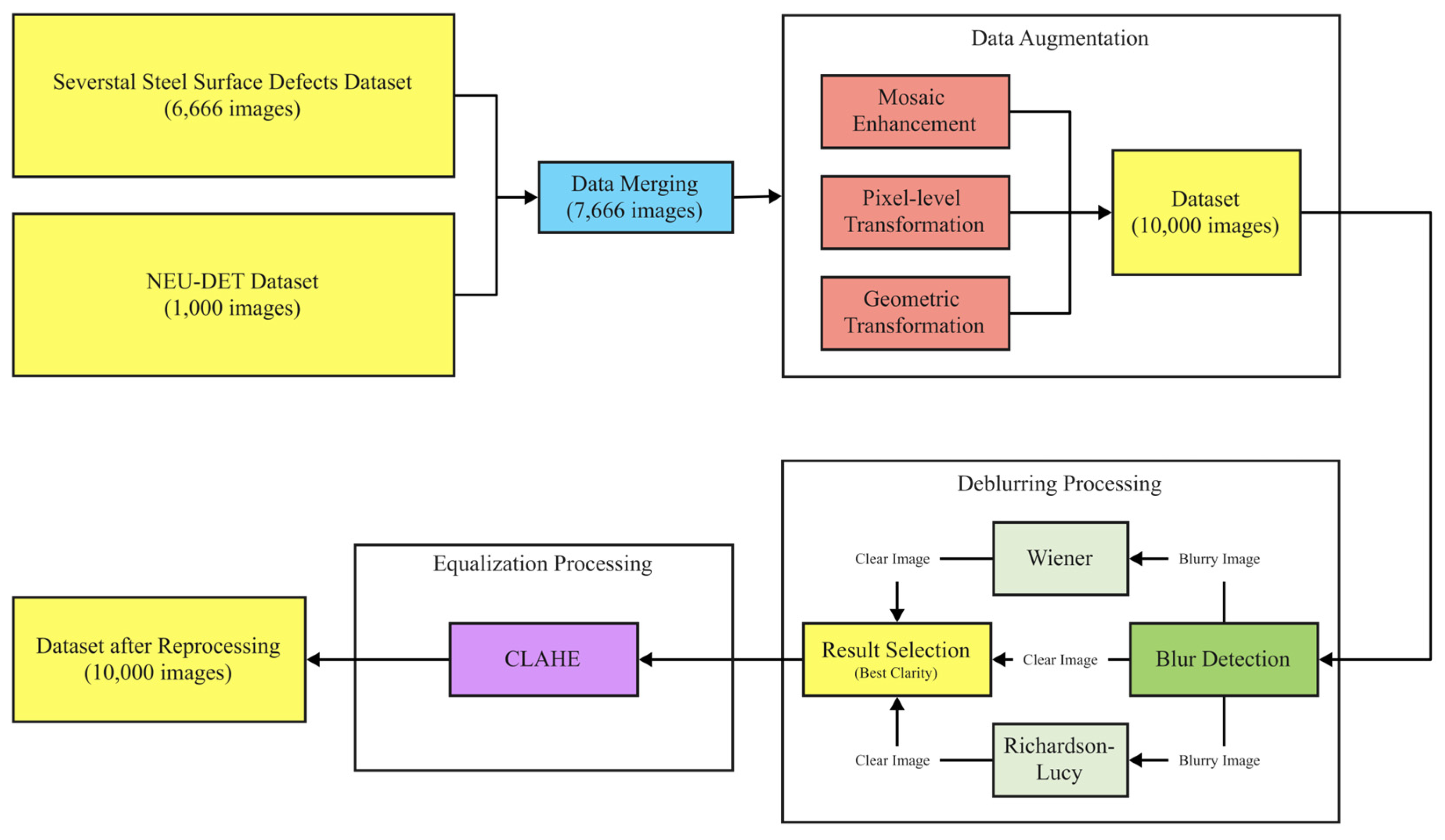

Section 2 describes the construction of the steel surface defect dataset and designs multi-scale data augmentation and preprocessing methods to lay a solid foundation for model training.

Section 3 details the design rationale for the YOLOv11-EMD algorithm, including the structure and functionality of the InnerEIoU loss function, MSDA feature fusion module, and C3k2_DynamicConv module to optimize the detection performance.

Section 4 outlines the multi-stage transfer learning framework, explores its application in cross-domain defect detection, and analyzes the impacts of various transfer stages on the model generalization.

Section 5 presents the experimental design and results analysis, including ablation and comparative studies, to ensure that the efficacy of the proposed method across multiple metrics and its potential in real-world industrial applications are comprehensively verified.

3. YOLOv11-EMD Algorithm

Although the standard YOLOv11 algorithm performs well in general object detection tasks, it faces limitations when applied directly to detecting fine defects on steel surfaces. These include challenges in accurately locating irregularly shaped defects, insufficient capture of multi-scale defect features, and limited adaptability to the complex visual characteristics of various defect types, such as fine scratches or hidden inclusions. To overcome these issues, this study proposes an enhanced YOLOv11-EMD object detection algorithm that fulfills the practical demands for high-precision localization, multi-scale robustness, and complex shape perception in industrial steel surface defect detection. Specifically, to enhance the bounding box regression accuracy, particularly for defects with high aspect ratios or significant scale discrepancies between predicted and ground-truth boxes, the InnerEIoU loss function is introduced. This function employs a refined penalty mechanism that comprehensively accounts for the overlap area, aspect ratio, and center-point distance between predicted and ground-truth boxes, thereby improving the model’s localization precision and enabling more accurate defect boundary delineation. To tackle multi-scale features prevalent in steel defects, the MSDA module is integrated to bolster the model’s extraction of fine-grained details and fusion of large-scale contextual information. This module effectively merges features from various network levels, strengthens multi-scale defect representation, and heightens sensitivity to both large and small defects. Finally, given that steel defects often display diverse and subtle visual patterns, which demand advanced adaptive feature extraction beyond the capabilities of standard convolutions, the C3k2_DynamicConv module is incorporated. 
Its core mechanism allows convolution kernel parameters to adjust dynamically based on input features, significantly enhancing the model’s capacity for feature learning and its ability to adapt, thereby improving the capture of complex, variable defect structures. By integrating these three enhancements (the InnerEIoU loss function, the MSDA module, and the C3k2_DynamicConv module), the proposed YOLOv11-EMD achieves substantial performance gains in steel surface defect detection. The primary contribution of this study lies in its engineering applicability: the integration not only enhances the detection accuracy and efficiency but also improves the feasibility of real-world industrial deployment. Rather than pursuing a purely theoretical analysis, this approach emphasizes practical value in steel manufacturing and surface quality inspection, particularly robustness and real-time performance in complex industrial environments, providing direct technical support for quality control and production processes in the steel industry. The algorithm also exhibits superior scale robustness and morphological adaptability, offering an efficient, tailored solution for industrial challenges in this domain. The enhanced YOLOv11 framework is illustrated in Figure 4.
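To make the idea of input-dependent kernels concrete, the following is a minimal one-dimensional sketch of dynamic convolution in the common "attention over K candidate kernels" form. It is an illustration under stated assumptions, not the C3k2_DynamicConv module itself: the function names, the scalar `router` (one weight and bias per kernel, applied to the global average of the input), and the toy kernels are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dynamic_conv1d(x, kernels, router):
    """Dynamic convolution sketch (hypothetical helper).

    x       : input signal, list of floats
    kernels : K candidate kernels, each a list of odd length k
    router  : K (weight, bias) pairs mapping the global average of x
              to one logit per candidate kernel (assumed routing form)
    Returns (output signal, mixing weights).
    """
    # 1. Summarise the input with global average pooling.
    gap = sum(x) / len(x)
    # 2. Turn the summary into input-dependent mixing weights.
    pi = softmax([a * gap + b for a, b in router])
    # 3. Aggregate the candidate kernels into a single kernel for this input.
    k = len(kernels[0])
    agg = [sum(p * ker[t] for p, ker in zip(pi, kernels)) for t in range(k)]
    # 4. Apply the aggregated kernel (cross-correlation, zero padding).
    half = k // 2
    out = []
    for i in range(len(x)):
        acc = 0.0
        for t in range(k):
            j = i + t - half
            if 0 <= j < len(x):
                acc += agg[t] * x[j]
        out.append(acc)
    return out, pi


if __name__ == "__main__":
    identity, shift = [0.0, 1.0, 0.0], [1.0, 0.0, 0.0]
    router = [(50.0, 0.0), (-50.0, 0.0)]
    print(dynamic_conv1d([1.0, 2.0, 3.0], [identity, shift], router))
    print(dynamic_conv1d([-1.0, -2.0, -3.0], [identity, shift], router))
```

The same layer therefore applies a different effective kernel to each input, which is the property the paragraph above relies on; in a trained network the routing weights would be learned rather than hand-set.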

3.1. Traditional YOLOv11 Algorithm

The YOLO (You Only Look Once) family of models has revolutionized object detection with its single-stage paradigm, striking a compelling balance between speed and accuracy. Building upon this legacy, YOLOv11 [39] introduces several architectural refinements that further advance its core design principles. The model is primarily designed to improve the detection accuracy while maintaining real-time efficiency. To this end, it incorporates a more efficient backbone network, a dynamic receptive field mechanism, and an anchor-free decoupled detection head, collectively enhancing robustness in multi-scale object detection and complex-scene understanding. Moreover, improvements in label assignment strategies and loss function design during training contribute to the greater stability and stronger generalization of the model compared to its predecessors.

The architecture of YOLOv11 is shown in Figure 5. It adheres to the classic paradigm of object detection networks, comprising three primary parts: the backbone, the neck, and the detection head. The backbone extracts multi-level semantic and spatial features from input images, typically utilizing lightweight and efficient architectures such as Cross-Stage Partial Darknet (CSPDarknet), an enhancement of the CSPNet concept, or variants of EfficientNet and the re-parameterized VGG-style network (RepVGG). It bolsters feature extraction through residual connections, dense connections, and receptive field enhancement modules while managing computational complexity. The neck primarily fuses feature maps across scales to improve the detection of multi-scale objects. Common implementations include the Bidirectional Feature Pyramid Network (BiFPN) and Path Aggregation Network (PANet), which merge deep semantic features with shallow details via bidirectional information flow, thereby creating a rich, multi-scale representation that facilitates the identification of steel surface defects of varying sizes. The detection head employs a decoupled design, independently predicting bounding box locations, categories, and confidences. This module generates outputs in parallel across multi-scale feature maps, where different levels exhibit distinct receptive fields: shallow layers excel at detecting small targets, while deep layers are better suited for large ones, enabling the precise localization of defects across sizes.

Although YOLOv11 performs well in general object detection tasks, it encounters several key challenges in high-precision industrial applications, such as steel surface defect detection: (1) Limited bounding box localization accuracy: Steel defects often present elongated, irregular, or diffuse shapes, and YOLOv11’s regression loss function struggles with targets exhibiting extreme aspect ratios or complex morphologies, leading to poor alignment between the predicted bounding boxes and actual defect boundaries. (2) Insufficient multi-scale feature representation: Steel defects vary widely in scale, yet YOLOv11’s standard feature fusion mechanism inadequately balances the preservation of small-target details with contextual awareness for large targets, compromising detection completeness and robustness. (3) Inadequate adaptability in feature extraction: In industrial images with complex backgrounds and diverse textures, standard convolution operations fail to dynamically adjust weights according to input features, hindering the model’s ability to distinguish critical defects from background noise and thus impairing the recognition stability and generalization. Consequently, YOLOv11 requires further optimization to effectively handle the diversity and complexity of steel defects.

3.2. InnerEIoU

In object detection tasks, accurate bounding box regression is essential for enhancing the localization accuracy. However, YOLOv11 continues to encounter challenges, including substantial regression errors and localization offsets, particularly in scenes with significant variations in object scales, complex shapes, or dense distributions. Traditional IoU loss functions fail to account for the internal structures of objects during optimization, which hinders the full exploitation of geometric relationships between bounding boxes and thus limits further advancements in the detection performance. To mitigate these issues, this paper introduces the InnerEIoU [40] module, which integrates an internal structure perception mechanism into the IoU regression process and comprehensively incorporates information on object shape consistency and center-point offsets. This strategy improves the discriminative power in bounding box regression tasks, thereby effectively enhancing the localization precision and stability of the detection network in complex scenarios.

The core concept of the InnerEIoU involves introducing a geometry-aware mechanism to traditional IoU-based loss functions, thereby enhancing the modeling of fine-grained differences in bounding boxes. By integrating a strategy that balances focus on the central region with scale consistency constraints, it optimizes both the target localization accuracy and convergence efficiency. This loss function combines the Inner-IoU and EIoU methods to comprehensively model positional, scale, and shape differences between predicted and ground-truth bounding boxes. In particular, the Inner-IoU strengthens the emphasis on the target’s central region, enabling more precise localization in scenarios involving small objects or high-density distributions. Meanwhile, the EIoU incorporates a direct optimization term for the bounding box’s aspect ratio, which improves fitting by minimizing discrepancies in the width, height, and center-point distances. Overall, the InnerEIoU preserves the geometric essence of the traditional IoU while accounting for factors such as center alignment, scale matching, and distance constraints, thereby endowing the model with enhanced geometric perception during regression and significantly boosting the detection performance and training stability.
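The combination described above can be sketched in a few lines of plain Python. This is a minimal illustration that assumes the commonly published forms of the two ingredients (the EIoU loss with separate center, width, and height penalties, and the Inner-IoU computed on auxiliary boxes that share each box's center and are scaled by a factor `ratio`), combined as L_EIoU + IoU - IoU_inner. The function names, the `(x1, y1, x2, y2)` box format, and the default `ratio=0.75` are assumptions for illustration, not values taken from this paper.

```python
def _iou(a, b, eps=1e-9):
    """Plain IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter + eps)


def _aux_box(box, ratio):
    """Auxiliary box: same center, width and height scaled by ratio."""
    cx, cy = (box[0] + box[2]) / 2, (box[1] + box[3]) / 2
    half_w = (box[2] - box[0]) * ratio / 2
    half_h = (box[3] - box[1]) * ratio / 2
    return (cx - half_w, cy - half_h, cx + half_w, cy + half_h)


def inner_eiou_loss(pred, gt, ratio=0.75, eps=1e-9):
    """Inner-EIoU sketch: L_EIoU + IoU - IoU_inner (assumed combination)."""
    iou = _iou(pred, gt, eps)

    # Width and height of the smallest box enclosing pred and gt.
    c_w = max(pred[2], gt[2]) - min(pred[0], gt[0])
    c_h = max(pred[3], gt[3]) - min(pred[1], gt[1])

    # Center-distance penalty.
    dx = (pred[0] + pred[2]) / 2 - (gt[0] + gt[2]) / 2
    dy = (pred[1] + pred[3]) / 2 - (gt[1] + gt[3]) / 2
    l_dis = (dx * dx + dy * dy) / (c_w ** 2 + c_h ** 2 + eps)

    # Separate width and height penalties (the EIoU "aspect" term).
    dw = (pred[2] - pred[0]) - (gt[2] - gt[0])
    dh = (pred[3] - pred[1]) - (gt[3] - gt[1])
    l_asp = dw * dw / (c_w ** 2 + eps) + dh * dh / (c_h ** 2 + eps)

    l_eiou = (1.0 - iou) + l_dis + l_asp

    # IoU of the scaled auxiliary boxes replaces the plain IoU in the loss.
    inner_iou = _iou(_aux_box(pred, ratio), _aux_box(gt, ratio), eps)
    return l_eiou + iou - inner_iou


if __name__ == "__main__":
    gt = (0.0, 0.0, 10.0, 10.0)
    for shift in (0.0, 1.0, 4.0):
        pred = (shift, 0.0, 10.0 + shift, 10.0)
        print(shift, round(inner_eiou_loss(pred, gt), 4))
```

With `ratio` below 1 the auxiliary boxes are smaller than the originals, so a given offset removes a larger share of their overlap; this is the sense in which the central region is emphasized.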

In the present research, the model substitutes the original CIoU with the enhanced InnerEIoU. This approach not only accelerates model convergence and enhances the bounding box regression accuracy but also incorporates auxiliary boundary constraints into the loss calculation. During training, the InnerEIoU employs smaller auxiliary boundaries for loss computation, which promotes regression for high-IoU samples while moderately suppressing low-IoU samples, thereby optimizing the overall regression performance. Furthermore, to accommodate objects of varying scales, the loss function introduces a scaling factor that dynamically adjusts the auxiliary boundary construction, rendering the loss calculation more adaptive. In the anchor-free detection head of YOLOv11, the network first decodes raw predictions (e.g., center-based coordinates or four-side distances) into explicit rectangular boxes. The InnerEIoU is then computed on these decoded boxes against the ground truth. Because the InnerEIoU operates purely on box geometry, it is parameterization-agnostic and therefore naturally compatible with anchor-free regression: gradients backpropagate through the decode step to the raw outputs. This is consistent with recent anchor-free detectors that apply IoU-style losses directly on decoded boxes [41]. This strategy improves the model’s robustness in multi-scale scenarios and significantly boosts the target prediction speed in industrial applications. Based on Equations (8)–(12), the Inner-IoU integrates effectively into the EIoU loss calculation, yielding a geometrically aware regression optimization scheme:

where the ground-truth (GT) box is denoted as $B^{gt}$ and the anchor box is denoted as $B$. The width of the GT box is $w^{gt}$ and the height is $h^{gt}$, while the anchor box’s width is $w$ and the height is $h$. The scaling factor $ratio$ serves as a supporting parameter controlling the auxiliary box size. The EIoU comprises three components: overlap loss ($L_{IoU}$), center distance loss ($L_{dis}$), and aspect ratio loss ($L_{asp}$). The InnerEIoU provides precise focus on the center of the box, functioning as an advanced bounding box regression loss. By utilizing scale-corrected support boxes for loss calculation, it accelerates convergence and excels at detecting small objects.
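For reference, the standard Inner-IoU and EIoU definitions can be written as follows. This is a sketch based on the commonly published formulations rather than on this paper's own equation numbering. The center coordinates $(x_c^{gt}, y_c^{gt})$ and $(x_c, y_c)$, the auxiliary box edges $b_l, b_r, b_t, b_b$, and the symbols $c_w$, $c_h$ (width and height of the smallest enclosing box) are notation assumed here; $w^{gt}$, $h^{gt}$, $w$, $h$ are the GT and anchor box sizes, and $ratio$ is the scaling factor.

```latex
\begin{aligned}
b_l^{gt} &= x_c^{gt} - \tfrac{w^{gt}\cdot ratio}{2}, \quad
b_r^{gt} = x_c^{gt} + \tfrac{w^{gt}\cdot ratio}{2}, \quad
b_t^{gt} = y_c^{gt} - \tfrac{h^{gt}\cdot ratio}{2}, \quad
b_b^{gt} = y_c^{gt} + \tfrac{h^{gt}\cdot ratio}{2} \\
b_l &= x_c - \tfrac{w\cdot ratio}{2}, \quad
b_r = x_c + \tfrac{w\cdot ratio}{2}, \quad
b_t = y_c - \tfrac{h\cdot ratio}{2}, \quad
b_b = y_c + \tfrac{h\cdot ratio}{2} \\
inter &= \bigl(\min(b_r^{gt}, b_r) - \max(b_l^{gt}, b_l)\bigr)
         \bigl(\min(b_b^{gt}, b_b) - \max(b_t^{gt}, b_t)\bigr) \\
union &= w^{gt} h^{gt}\, ratio^2 + w h\, ratio^2 - inter \\
IoU^{inner} &= \frac{inter}{union} \\
L_{EIoU} &= L_{IoU} + L_{dis} + L_{asp}
          = 1 - IoU + \frac{\rho^2(b, b^{gt})}{c_w^2 + c_h^2}
          + \frac{\rho^2(w, w^{gt})}{c_w^2} + \frac{\rho^2(h, h^{gt})}{c_h^2} \\
L_{Inner\text{-}EIoU} &= L_{EIoU} + IoU - IoU^{inner}
\end{aligned}
```

Here $\rho(\cdot,\cdot)$ denotes the Euclidean distance, so $\rho^2(w, w^{gt}) = (w - w^{gt})^2$. A $ratio$ below 1 yields auxiliary boxes smaller than the originals, which corresponds to the "smaller auxiliary boundaries" described above.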

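Compared to the default CIoU, the InnerEIoU performs better when the aspect ratio of objects changes. Specifically, the CIoU loss function is as follows:

$$L_{CIoU} = 1 - IoU + \frac{\rho^2(b, b^{gt})}{c^2} + \alpha v, \qquad v = \frac{4}{\pi^2}\left(\arctan\frac{w^{gt}}{h^{gt}} - \arctan\frac{w}{h}\right)^2, \qquad \alpha = \frac{v}{(1 - IoU) + v}$$

Among these, $\rho^2(b, b^{gt})$ represents the squared Euclidean distance between the center of the predicted bounding box and the center of the ground-truth bounding box, $c$ is the diagonal length of the minimum bounding rectangle, $v$ is the aspect ratio consistency metric, and $\alpha$ is the trade-off factor. The aspect ratio term indirectly captures differences in width-to-height ratios via the inverse tangent function. However, when the object’s aspect ratio deviates significantly (e.g., from a nearly 1:1 square object to a 1:10 elongated object), the nonlinear nature of $v$ may lead to gradient instability. Specifically, the partial derivatives of $v$ with respect to width ($w$) and height ($h$) are as follows:

$$\frac{\partial v}{\partial w} = -\frac{8}{\pi^2}\left(\arctan\frac{w^{gt}}{h^{gt}} - \arctan\frac{w}{h}\right)\frac{h}{w^2 + h^2}, \qquad \frac{\partial v}{\partial h} = \frac{8}{\pi^2}\left(\arctan\frac{w^{gt}}{h^{gt}} - \arctan\frac{w}{h}\right)\frac{w}{w^2 + h^2}$$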

These partial derivatives depend on the nonlinear transformation of the inverse tangent function, which can easily cause gradient explosion or vanishing problems under extreme aspect ratios, thereby weakening the convergence speed and accuracy of the regression process, especially when the scale of the prediction box is close to the target but the proportion deviation is large. In contrast, the InnerEIoU loss function directly optimizes these dimensions by explicitly separating the width and height penalty terms of its EIoU component, which is expressed as follows:

$$L_{EIoU} = 1 - IoU + \frac{\rho^2(b, b^{gt})}{c_w^2 + c_h^2} + \frac{\rho^2(w, w^{gt})}{c_w^2} + \frac{\rho^2(h, h^{gt})}{c_h^2}$$

where $c_w$ and $c_h$ represent the width and height of the minimum bounding rectangle, respectively. This design avoids the indirect nonlinear representation of the aspect ratio term in the CIoU and instead uses an independent penalty on each dimension. When the aspect ratio of an object changes, the InnerEIoU provides more stable gradient guidance, and the partial derivatives of the width and height penalties with respect to $w$ and $h$ (with $c_w$ and $c_h$ held fixed) are simplified to the following:

$$\frac{\partial}{\partial w}\,\frac{\rho^2(w, w^{gt})}{c_w^2} = \frac{2\,(w - w^{gt})}{c_w^2}, \qquad \frac{\partial}{\partial h}\,\frac{\rho^2(h, h^{gt})}{c_h^2} = \frac{2\,(h - h^{gt})}{c_h^2}$$

These linear gradients ensure that the penalty gradient is directly proportional to the actual deviation in width and height, unaffected by the nonlinearity of the aspect ratio term. This promotes faster and more accurate convergence when the aspect ratio changes significantly (for example, for very wide or very tall objects). The direct separation yields a more robust gradient flow that adapts better to diverse object shapes and improves the overall bounding box regression, which is why the InnerEIoU performs better than the default CIoU when the aspect ratio of objects changes.
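A small numerical check can make this gradient argument concrete. The sketch below assumes the standard CIoU aspect ratio term $v = \frac{4}{\pi^2}(\arctan\frac{w^{gt}}{h^{gt}} - \arctan\frac{w}{h})^2$ and the EIoU width penalty $(w - w^{gt})^2 / c_w^2$ with $c_w$ held fixed; the function names and the example box sizes are illustrative only.

```python
import math


def ciou_v(w, h, w_gt, h_gt):
    """CIoU aspect-ratio consistency term v."""
    diff = math.atan(w_gt / h_gt) - math.atan(w / h)
    return (4.0 / math.pi ** 2) * diff ** 2


def ciou_v_grad_w(w, h, w_gt, h_gt):
    """Analytic derivative of v with respect to the predicted width w."""
    diff = math.atan(w_gt / h_gt) - math.atan(w / h)
    return -(8.0 / math.pi ** 2) * diff * h / (w * w + h * h)


def eiou_width_grad(w, w_gt, c_w):
    """Derivative of the EIoU width penalty (w - w_gt)^2 / c_w^2 w.r.t. w."""
    return 2.0 * (w - w_gt) / (c_w * c_w)


if __name__ == "__main__":
    w_gt, h_gt = 100.0, 10.0  # elongated ground-truth box (10:1)
    cases = [
        (50.0, 5.0),    # same aspect ratio as GT, but half the size
        (80.0, 10.0),   # slightly too narrow
        (100.0, 40.0),  # correct width, wrong height
    ]
    for w, h in cases:
        # For boxes sharing a center, the enclosing width is the larger width.
        c_w = max(w, w_gt)
        print(
            (w, h),
            "dv/dw =", round(ciou_v_grad_w(w, h, w_gt, h_gt), 6),
            "EIoU width grad =", round(eiou_width_grad(w, w_gt, c_w), 6),
        )
```

In the first case the predicted box has the same aspect ratio as the ground truth but half its size: the CIoU aspect term contributes no gradient at all, while the separated width penalty still pushes the width toward the target.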

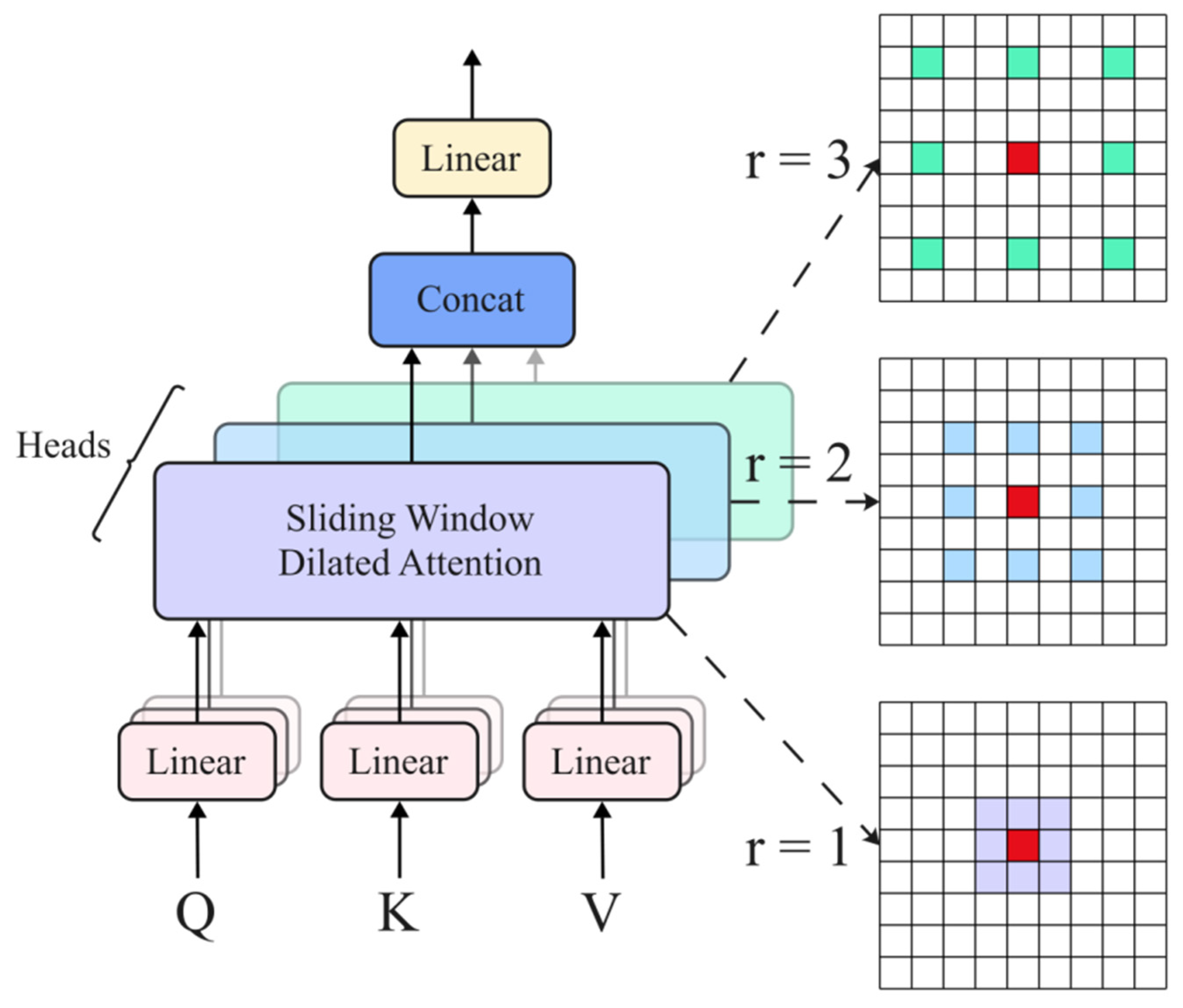

3.3. MSDA

In object detection tasks, multi-scale feature extraction and information fusion are critical for achieving high-precision recognition. Although the traditional Contextualized and Contrastive Pairwise Self-Attention (C2PSA) module in YOLOv11 performs well in channel and spatial attention, it still suffers from poor adaptability to objects of varying scales and inadequate feature representation in industrial detection settings. To overcome these limitations, this paper proposes the integration of the MSDA [42] module. By dynamically adjusting the fusion weights among features at different scales, this module enhances the network’s perception of small objects and complex backgrounds while strengthening the information transmission and fusion across scales. Consequently, it enhances the network’s stability and precision when handling multi-scale objects.

The core concept of MSDA involves introducing a sparse attention mechanism with varying dilation rates within local windows to model multi-scale contextual dependencies while minimizing the computational complexity. This module utilizes a multi-scale dilated attention mechanism to facilitate sparse information exchange across scales, enabling the model to capture long-range dependencies while preserving sensitivity to local structures. It integrates multi-head attention with channel-grouping strategies to achieve information decoupling and reconstruction across scales. Specifically, MSDA divides input features into multiple sub-channels and applies window attention with different dilation rates to each sub-channel. Subsequently, it concatenates and fuses the outputs from various scales to model and dynamically aggregate multi-scale contextual information, thereby enhancing the model’s robustness and expressive power in object recognition and localization tasks.

The MSDA method incorporates a multi-scale dilation rate design based on the Sliding Window Dilated Attention (SWDA) mechanism, which effectively expands the attention mechanism’s receptive field and boosts its representational capabilities. The core concept of SWDA involves introducing a sparse, dilated sampling strategy within a local window, where the dilation rate controls the spatial interval of attention operations. This mechanism is represented as follows:

$$X = \mathrm{SWDA}(Q, K, V, r)$$

$$x_{ij} = \mathrm{Attention}(q_{ij}, K_r, V_r) = \mathrm{Softmax}\left(\frac{q_{ij} K_r^{T}}{\sqrt{d_k}}\right) V_r$$

where $Q$ denotes the query of the input features, $K$ denotes the key of the input features, and $V$ denotes the value of the input features. $r$ represents the dilation rate, which governs the sparsity of the attention region. $q_{ij}$ signifies the query vector at position $(i, j)$. $K_r$ and $V_r$ are the key–value sets sampled from a specific neighborhood based on the dilation rate. By integrating dilation operations into the sliding window, SWDA enables perception of a broader range of contextual information while maintaining low computational complexity.
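To make the sampling pattern concrete, the following minimal sketch implements SWDA in plain Python on a small grid of feature vectors. It assumes a 3 × 3 window, skips neighbors that fall outside the grid instead of padding them, and omits the learned query, key, and value projections. The function name `swda` and its arguments are illustrative and are not taken from the MSDA reference implementation.

```python
import math


def swda(q, k, v, r, window=3):
    """Sliding-window dilated attention on an H x W grid.

    q, k, v are nested lists of shape [H][W][d]. Each query attends to a
    window x window neighborhood whose offsets are multiples of the dilation
    rate r. Out-of-grid neighbors are skipped (an assumption of this sketch).
    """
    height, width = len(q), len(q[0])
    dim_k, dim_v = len(k[0][0]), len(v[0][0])
    half = window // 2
    out = [[None] * width for _ in range(height)]
    for i in range(height):
        for j in range(width):
            # Sparse neighborhood: spacing between sampled keys equals r.
            coords = [
                (i + r * di, j + r * dj)
                for di in range(-half, half + 1)
                for dj in range(-half, half + 1)
                if 0 <= i + r * di < height and 0 <= j + r * dj < width
            ]
            # Scaled dot-product scores between q_ij and the sampled keys.
            scores = [
                sum(a * b for a, b in zip(q[i][j], k[y][x])) / math.sqrt(dim_k)
                for y, x in coords
            ]
            top = max(scores)  # subtract the maximum for a stable softmax
            weights = [math.exp(s - top) for s in scores]
            total = sum(weights)
            out[i][j] = [
                sum(wgt * v[y][x][c] for wgt, (y, x) in zip(weights, coords)) / total
                for c in range(dim_v)
            ]
    return out
```

With a 3 × 3 window, a dilation rate of 1 covers the immediate neighbors of each position, while a rate of 2 reaches positions two cells away with the same number of key–value pairs per query.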

In the SWDA module, the choice of dilation rates is motivated by the need to balance fine-grained detail extraction and global-context modeling. We adopt a fixed dilation schedule of {1, 2, 4}, which progressively enlarges the receptive field and enhances the module’s robustness to multi-scale features without incurring additional computational overhead. Compared to dynamic adaptation strategies, this fixed design avoids potential training instabilities and ensures reproducibility across datasets. Specifically, a smaller dilation rate (e.g., 1) prioritizes the preservation of boundary details, whereas a larger dilation rate (e.g., 4) facilitates the modeling of long-range dependencies. Such multi-scale coverage is particularly beneficial in object detection tasks, where it effectively mitigates missed detections caused by scale variations. This design is inspired by the work of Li et al. [43], who emphasized that fixed dilation rates are a common practice and explicitly provided the sequence {1, 2, 4} as an example.

Building on this foundation, MSDA incorporates a multi-scale design by partitioning input features into multiple attention heads, each of which independently applies SWDA with varying dilation rates for modeling. This approach covers multi-level semantic information ranging from local to global. The computation for the $i$-th attention head proceeds as follows:

$$h_i = \mathrm{SWDA}(Q_i, K_i, V_i, r_i), \quad 1 \le i \le n$$

where $Q_i$, $K_i$, and $V_i$ represent slices of the input feature block, and SWDA operations are applied to these slices. Outputs from all heads are first concatenated, after which feature aggregation takes place through a linear layer, yielding the final output:

$$X = \mathrm{Linear}(\mathrm{Concat}[h_1, \ldots, h_n])$$

Via this design, MSDA captures semantic information at various scales, reduces redundant computations in global attention, and enhances the model performance across multiple visual tasks. Given that MSDA achieves a favorable balance between modeling capability and computational efficiency, this paper integrates it as a key component into the feature extraction module and applies it to detecting defects on steel surfaces, thereby improving the network’s perception of varying-scale defect features. The module’s structural diagram appears in Figure 6.
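The head-wise split, per-head dilation, concatenation, and linear aggregation described above can be sketched as follows. This is a simplified illustration under stated assumptions: each head uses its channel slice directly as query, key, and value (no learned projections), the per-head attention is passed in as a hypothetical callable `attend(q, k, v, r)`, and `proj` is a plain weight matrix standing in for the final linear layer.

```python
def msda(x, dilations, attend, proj):
    """Multi-scale dilated attention over features x of shape [H][W][C].

    dilations: one dilation rate per head, e.g. (1, 2, 4).
    attend:    callable (q, k, v, r) -> [H][W][C // n_heads], e.g. an SWDA.
    proj:      C_out x C weight matrix applied to the concatenated heads.
    """
    height, width, channels = len(x), len(x[0]), len(x[0][0])
    n_heads = len(dilations)
    if channels % n_heads:
        raise ValueError("channels must be divisible by the number of heads")
    head_dim = channels // n_heads

    head_outputs = []
    for i, rate in enumerate(dilations):
        lo, hi = i * head_dim, (i + 1) * head_dim
        # Channel slice assigned to head i, used here as Q_i, K_i, and V_i.
        part = [[pixel[lo:hi] for pixel in row] for row in x]
        head_outputs.append(attend(part, part, part, rate))

    out = []
    for y in range(height):
        row = []
        for col in range(width):
            # Concatenate the head outputs, then aggregate with the linear layer.
            concat = [c for head in head_outputs for c in head[y][col]]
            row.append([sum(w * c for w, c in zip(weights, concat)) for weights in proj])
        out.append(row)
    return out
```

Passing the attention as a callable keeps the sketch independent of any particular SWDA implementation; with `dilations=(1, 2, 4)` it mirrors the fixed schedule adopted in this section.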

Compared to the standard multi-head attention (MHA) mechanism, the MSDA module introduced in this paper has both higher complexity and higher accuracy. Regarding complexity scaling, the computational cost of standard MHA primarily stems from the calculation of the attention matrix, which is $O(N^2 d)$, where $N$ represents the input sequence length and $d$ denotes the embedding dimension. This complexity grows quadratically with the sequence length, limiting its scalability in high-resolution tasks. In contrast, MSDA introduces a multi-scale dilated attention mechanism to capture feature dependencies across different receptive fields. Specifically, the dilation operation sparsely samples query–key pairs at a dilation rate ($r$) during attention computation, thereby reducing the single-scale attention complexity. However, since MSDA uses multiple scales, its overall complexity scales with the number of dilation rate levels and the average dilation rate. While the dilation mechanism mitigates some quadratic overhead, the multi-scale superposition results in an overall complexity higher than that of standard MHA, manifesting as additional consumption of computational resources.

Although MSDA has a high complexity, this trade-off has been empirically proven to be reasonable: through multi-scale feature extraction, MSDA significantly enhances the model’s ability to capture complex patterns (such as multi-level semantic dependencies), resulting in notable improvements in accuracy metrics (such as the mAP or accuracy) on benchmark datasets. This performance gain is particularly prominent in applications where computational resources are limited but accuracy is prioritized, such as real-time detection tasks. In such scenarios, the additional computational overhead of MSDA can be partially offset through parallel optimization (e.g., GPU acceleration), thereby optimizing the overall efficiency. Mathematical analysis further shows that when the sequence length (N) satisfies N > r, although MSDA’s complexity growth rate is higher than MHA’s, its gradient flow is more robust, supporting more stable training convergence, confirming the effective balance between accuracy and computational overhead in this design.

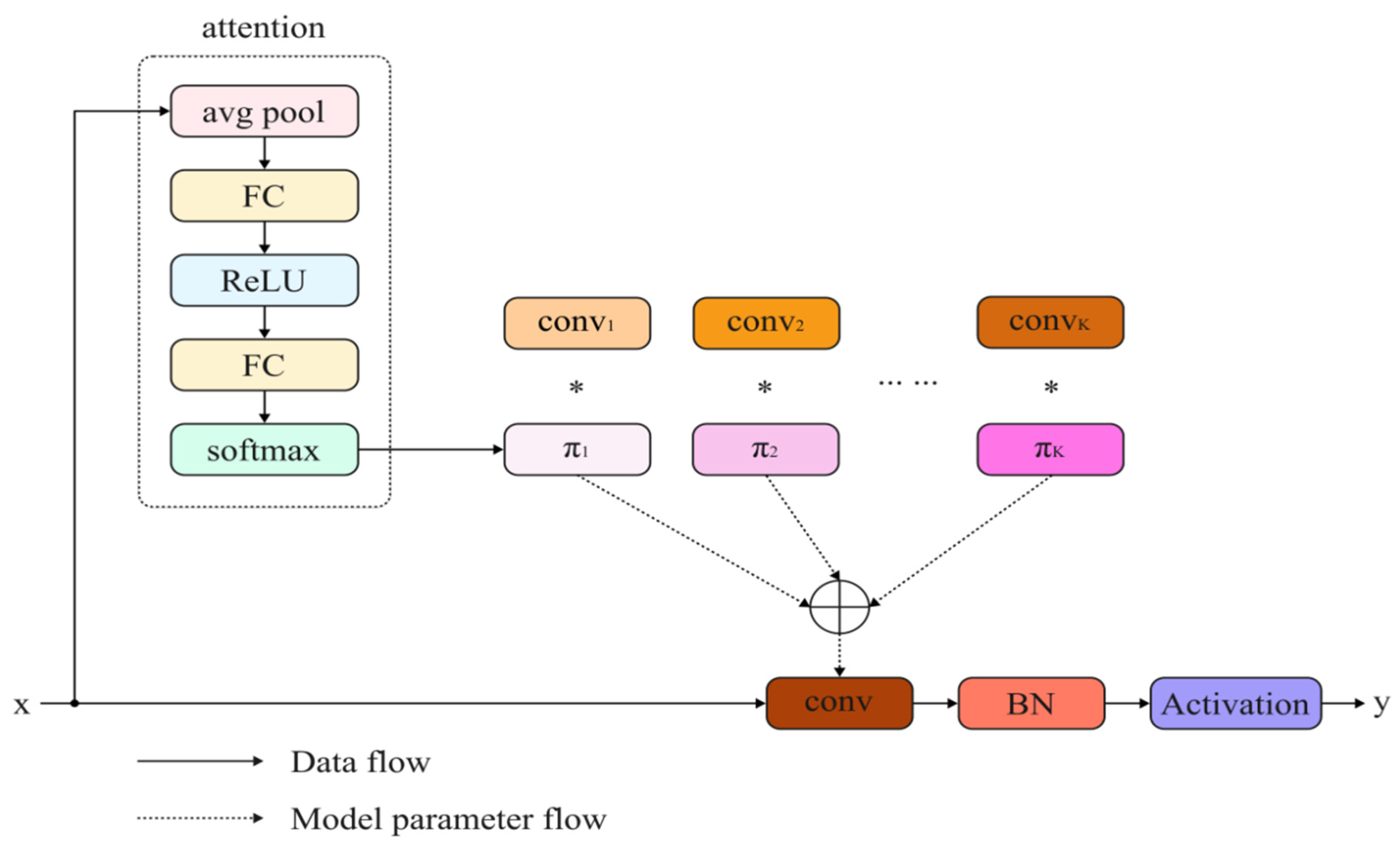

3.4. C3k2_DynamicConv

In steel surface defect detection tasks, CNNs find widespread application owing to their robust feature extraction capabilities. However, traditional convolutional operations in YOLOv11 encounter limitations when processing defects with complex textures or substantial local variations, mainly due to fixed receptive fields and constrained expressive power. To mitigate this issue, this paper proposes an enhanced strategy that incorporates a dynamic convolutional kernel structure into YOLOv11’s C3k2 module, yielding the C3k2_DynamicConv module [44]. This integration bolsters the network’s capacity to model features across diverse scales and complex contexts, thereby improving the feature representation flexibility and accuracy and ultimately enhancing the model detection precision and stability in detecting defects of steel surfaces.

The core concept of C3k2_DynamicConv involves introducing a dynamic convolution mechanism to the traditional convolution structure, enabling the input-adaptive adjustment of kernel weights and, in turn, strengthening the model’s capability of modeling diverse semantic content. This module achieves input-aware kernel combinations through multi-branch convolution kernels and an attention-based weight generation mechanism, ensuring that the kernel weights for each sample during forward propagation are dynamically generated. Specifically, C3k2_DynamicConv employs a lightweight attention module to generate weight coefficients for each convolution branch, extracts features in parallel using K 3×3 kernels, and performs the weighted fusion of the branch outputs based on these weights, thereby improving the model’s flexibility and discriminative power in feature representation. This mechanism not only increases the model’s sensitivity to local details in complex scenes but also avoids the computational overhead associated with deeper networks.

C3k2_DynamicConv employs an input-dependent attention mechanism for weighted aggregation across multiple parallel convolution kernels, thereby dynamically generating convolution weights and enhancing the model’s expressive power. Specifically, let the input feature be denoted as $x$. The module utilizes $K$ predefined static convolution kernels and bias terms, represented as $\{W_k, b_k\}_{k=1}^{K}$. It introduces input-related attention weights ($\pi_k(x)$) to linearly combine these kernels, forming dynamic convolution kernels and bias terms:

$$\tilde{W}(x) = \sum_{k=1}^{K} \pi_k(x)\, W_k, \qquad \tilde{b}(x) = \sum_{k=1}^{K} \pi_k(x)\, b_k$$

where $\pi_k(x)$ represents the attention weight for the $k$-th kernel, satisfying the normalization constraint:

$$0 \le \pi_k(x) \le 1, \qquad \sum_{k=1}^{K} \pi_k(x) = 1$$

These attention weights ($\pi_k(x)$) depend on the input ($x$). This attention distribution typically employs a Squeeze-and-Excitation module: it begins by performing global average pooling on the input feature tensor to extract global contextual features, then processes them through two fully connected layers (with ReLU activation in between), and, finally, generates normalized attention weights via a Softmax function. Upon integrating the dynamically generated kernel and bias into the standard convolution operation, the final output emerges as follows:

$$y = \sigma\left(\tilde{W}(x) * x + \tilde{b}(x)\right)$$

where $*$ denotes the convolution operation, and $\sigma$ represents a nonlinear activation function (e.g., ReLU). This structure adaptively adjusts convolutional behavior based on varying inputs, exhibiting enhanced modeling capabilities for diverse texture features in steel surface defects (e.g., scratches, indentations, peeling).
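The kernel aggregation described above can be illustrated with a small plain-Python sketch. It is a simplified stand-in rather than the C3k2_DynamicConv implementation: it produces a single output channel, uses valid (unpadded) convolution, omits biases in the two fully connected layers, and all function and parameter names (`kernel_attention`, `fc1`, `fc2`, and so on) are hypothetical.

```python
import math


def softmax(logits):
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kernel_attention(x, fc1, fc2):
    """Global average pool -> FC -> ReLU -> FC -> softmax over K kernels.

    x: [C][H][W]; fc1: [hidden][C]; fc2: [K][hidden].
    """
    pooled = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in x]
    hidden = [max(0.0, sum(w * p for w, p in zip(row, pooled))) for row in fc1]
    return softmax([sum(w * h for w, h in zip(row, hidden)) for row in fc2])


def conv2d(x, weight, bias):
    """Valid convolution of x [C][H][W] with one kernel [C][k][k]."""
    k = len(weight[0])
    out_h, out_w = len(x[0]) - k + 1, len(x[0][0]) - k + 1
    return [
        [
            bias + sum(
                weight[c][a][b] * x[c][i + a][j + b]
                for c in range(len(x)) for a in range(k) for b in range(k)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def dynamic_conv(x, kernels, biases, fc1, fc2):
    """Aggregate K static kernels with input-dependent weights, then convolve."""
    pi = kernel_attention(x, fc1, fc2)
    channels, k = len(kernels[0]), len(kernels[0][0])
    # Dynamic kernel and bias: attention-weighted sums of the static ones.
    weight = [
        [
            [sum(p * kern[c][a][b] for p, kern in zip(pi, kernels)) for b in range(k)]
            for a in range(k)
        ]
        for c in range(channels)
    ]
    bias = sum(p * b for p, b in zip(pi, biases))
    pre_activation = conv2d(x, weight, bias)
    return [[max(0.0, val) for val in row] for row in pre_activation], pi
```

Because convolution is linear in its kernel, aggregating the kernels before a single convolution gives the same result as running all $K$ branches and mixing their outputs, which is why the sketch needs only one convolution per input.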

By incorporating the C3k2_DynamicConv module, the defect detection model achieves improved robustness and discrimination accuracy without substantially increasing the computational load. The module’s structural diagram appears in Figure 7.

4. Multi-Stage Transfer Learning Strategy

This paper addresses the limitations of the YOLOv11-EMD algorithm in scenarios involving changes in target categories, defect shapes, or image feature distributions, including insufficient generalization, robustness, and adaptability, as well as inefficient utilization of knowledge from historical data. To overcome these challenges, it proposes a multi-stage transfer learning framework. This framework enhances the model’s boundary adaptability, cross-domain generalization, and feature expression flexibility during task transfer through pre-trained weights and parameter fine-tuning mechanisms. Consequently, it significantly improves YOLOv11-EMD’s robustness, generalization, and integration of historical knowledge in multi-source data environments, ensuring broad adaptability and sustained effectiveness in industrial steel defect detection.

4.1. Transfer Learning Mechanism

Transfer learning [45] constitutes a machine learning technique that transfers knowledge from a source task to a target task, proving particularly suitable for scenarios with limited target domain data and substantial distribution differences. In visual tasks, it effectively leverages general representations from pre-trained models to achieve faster convergence and a superior performance in the target task. Its advantages include sustaining strong generalization even without large-scale labeled data and mitigating overfitting risks arising from data scarcity or class imbalance. In steel surface defect detection, transfer learning holds particular importance. Models trained on specific datasets often suffer performance degradation under varying data distributions or conditions, exhibiting limited generalization and robustness. Moreover, industrial data sources change frequently, accumulating historical data that models fail to inherit and utilize efficiently. Consequently, transfer learning enhances the model adaptability and generalization across heterogeneous domains while facilitating the effective inheritance and reuse of historical knowledge during multi-stage task evolution, thereby offering theoretical and practical support for addressing performance degradation and inefficient knowledge utilization in industrial contexts.

Within the object detection algorithms of the YOLO family, transfer learning primarily involves utilizing the model weights from pre-training on large-scale, general datasets as initial parameters for YOLO networks in new tasks. Specifically, the YOLO model undergoes pre-training on datasets like ImageNet or COCO, which offer rich and diverse visual features, to develop robust feature extraction and generalization capabilities. Subsequently, these pre-trained parameters transfer to the object detection task and undergo fine-tuning with limited task-specific data, enabling rapid adaptation to the new task’s feature distribution and environment. This approach capitalizes on the strengths of large-scale general datasets in visual feature learning, thereby improving the initial detection performance in specific scenarios. Moreover, incorporating pre-trained features enhances the YOLO model’s adaptability to complex backgrounds, dynamic scenes, and varied object shapes, providing reliable detection in industrial applications.

The Ultralytics team fully open-sources pre-trained weights for YOLO-series models, which support various computer vision tasks, including object detection, instance segmentation, pose estimation, image classification, and oriented object detection. For object detection, the YOLOv11 model provides pre-trained weights in five different scales, as shown in Table 1.

YOLOv11’s pre-trained weights primarily originate from public datasets like COCO and VOC, which encompass natural scenes. However, the object categories in these datasets markedly differ from the morphology and distribution of steel surface defects. Consequently, direct application of the pre-trained model to steel surface defect detection yields limited specificity, with inadequate generalization to adapt to domain-specific features characterized by high background similarity and complex texture interference. Steel surface defects often appear as small cracks, pits, or oxidation, accompanied by metallic luster, scratches, and noisy backgrounds, rendering general pre-trained models ineffective at distinguishing defect regions from normal surfaces during feature extraction. Moreover, the embedded knowledge in the pre-trained parameters remains highly general but lacks domain specificity; when transferred to steel surface defect detection, it frequently activates irrelevant parameter paths, increasing the inference burden and compromising the real-time performance and accuracy in industrial settings. Therefore, in steel surface defect detection, targeted pre-training weights based on defect features become essential to heighten the model’s sensitivity and discrimination for small targets and complex defects, thereby enhancing the feature representation adaptability. Simultaneously, optimizing transfer learning strategies enables high-precision detection while preserving the inference speed, fulfilling industrial demands for efficient and accurate defect detection.

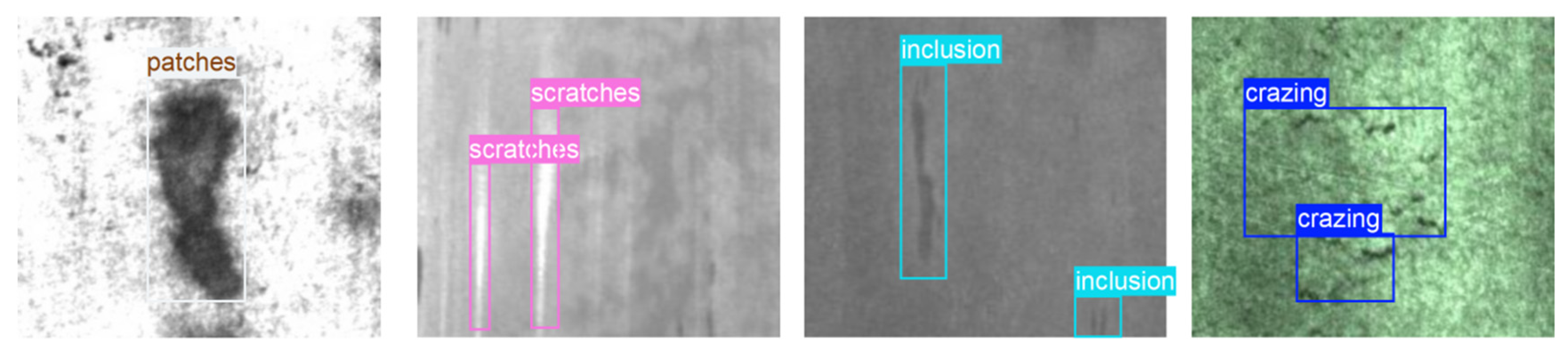

4.2. Source Domain and Target Domain Datasets

In this study, the source domain dataset comprises the Severstal steel surface defect dataset (6666 images) and the NEU-DET dataset (1000 images), merged and preprocessed to yield 10,000 high-quality images. This combined dataset encompasses six typical steel surface defect types: crazing, inclusions, patches, pitted surfaces, rolled-in scale, and scratches, featuring substantial category variations and complex background interference. Data augmentation, deblurring, and balancing preprocessing enhance the data consistency and model training stability. Consequently, the model learns discriminative feature representations amid diverse defect morphologies and intricate textures, improving the transferability and adaptability for downstream tasks. As industrial automation advances and steel surface treatment evolves, defect types diversify, demanding greater accuracy and efficiency in object detection. Thus, balancing robustness and adaptability in model feature representations across varied defect scenarios emerges as a critical research challenge in transfer learning for steel surface defect detection.

To further validate the proposed model’s adaptability and generalization in practical scenarios of detecting steel surface defects, this study constructs a target domain dataset aligned with industrial practices. Specifically, it includes the remaining 800 images from the NEU-DET dataset unused in the source domain training plus 1200 high-quality images from the GC10-DET dataset [46], forming a 2000-image target domain set. While both NEU-DET source and target images are captured under identical conditions, which may result in distributional similarity, this study supplements the target domain with images from the GC10-DET dataset, which is collected under different acquisition settings. This intentional domain discrepancy helps assess the model’s ability to handle real-world shifts in data distribution. During construction, images undergo uniform format standardization and quality screening, with the manual removal of samples featuring missing annotations, blurred boundaries, or low quality to ensure label accuracy and feature discernibility. This dataset encompasses 15 typical steel surface defect types: crazing, patches, inclusions, creases, water spots, rolled pits, silk spots, crescent gaps, welding lines, punching holes, oil spots, pitted surfaces, rolled-in scale, scratches, and waist folds. It features diverse complex backgrounds and fine-grained defect morphologies, offering strong representativeness and challenge. The mixed-domain target set is deliberately constructed. This design reflects realistic industrial scenarios where models are required to generalize not only to unseen domains but also to subsets of the same domain not used during training. Thus, this setup makes it possible to examine YOLOv11-EMD’s intra-dataset generalization (within NEU-DET) and inter-dataset generalization (to GC10-DET) simultaneously.
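The disjoint use of NEU-DET across the two domains and the resulting class inventory can be sketched as below. How the 1000/800 partition was actually drawn is not stated here, so the seeded random split is an assumption, and the function name `split_neu_det` and the image identifiers are hypothetical. The class names follow the lists given in this section, with GC10-DET contributing the ten types not already covered by NEU-DET plus the shared inclusion class.

```python
import random

NEU_DET_CLASSES = {
    "crazing", "inclusions", "patches",
    "pitted surfaces", "rolled-in scale", "scratches",
}
GC10_DET_CLASSES = {
    "punching holes", "welding lines", "crescent gaps", "water spots",
    "oil spots", "silk spots", "inclusions", "rolled pits",
    "creases", "waist folds",
}


def split_neu_det(image_ids, n_source=1000, seed=0):
    """Shuffle once and cut, so no image appears in both domains."""
    ids = sorted(image_ids)
    random.Random(seed).shuffle(ids)
    return ids[:n_source], ids[n_source:]


if __name__ == "__main__":
    neu_ids = [f"neu_{i:04d}" for i in range(1800)]  # placeholder identifiers
    source_ids, target_ids = split_neu_det(neu_ids)
    print(len(source_ids), len(target_ids))
    print(len(NEU_DET_CLASSES | GC10_DET_CLASSES), "target-domain classes")
```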

Figure 8 compares source and target domain samples.

4.3. Multi-Stage Transfer Learning Framework

This paper proposes a multi-stage transfer learning framework to address challenges arising from substantial differences in the distribution, background interference, and feature representation in multi-source heterogeneous steel surface defect data, which complicate feature transfer. The framework exploits shared features across data sources, mitigates cross-domain adaptation barriers, and thereby enhances the model’s generalization and robustness in complex industrial environments with diverse defect types.

Figure 9 illustrates the multi-stage transfer learning framework.

As illustrated in

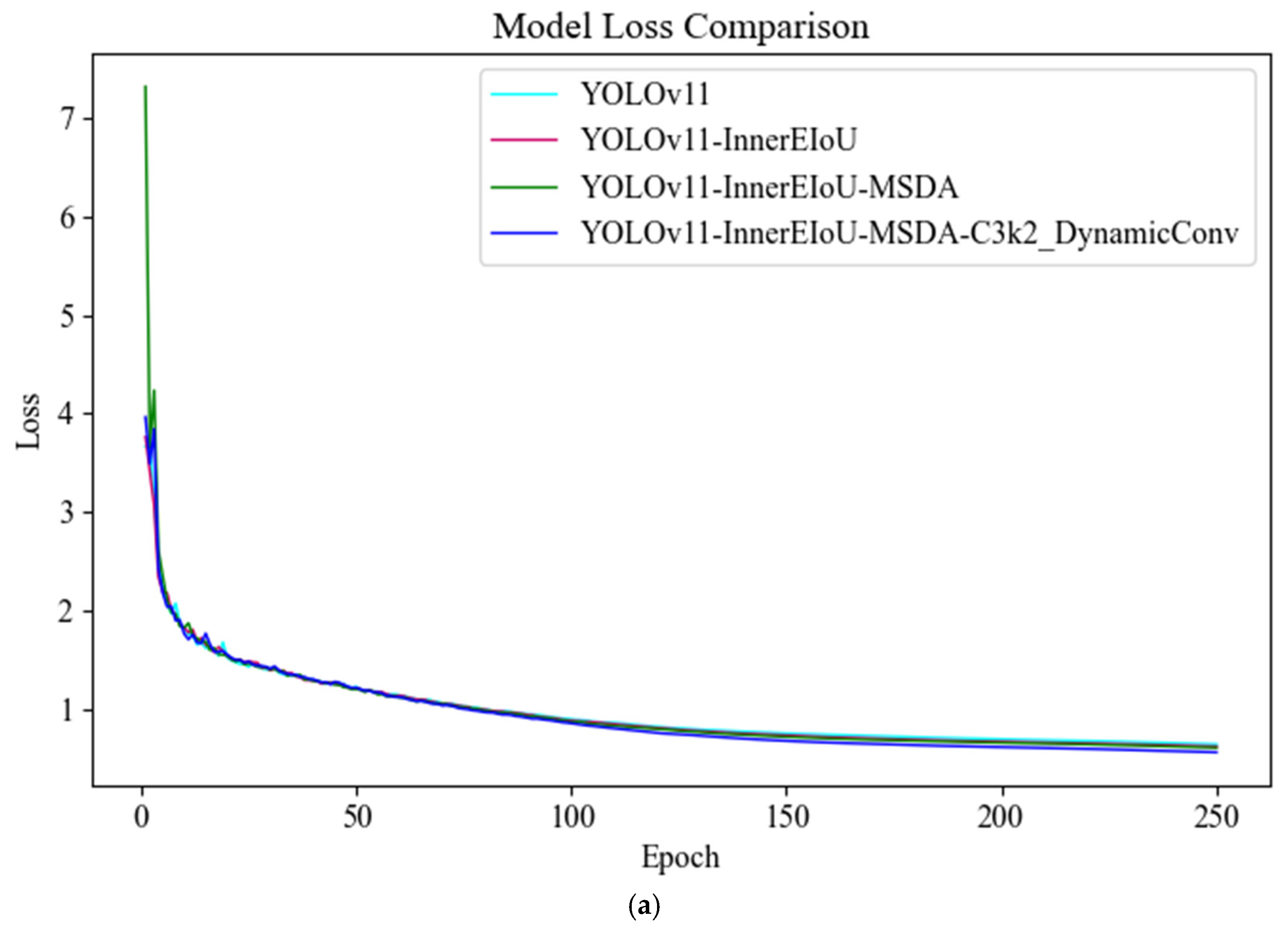

Figure 9a, the first stage involves the deep training of the model on widely sourced source domain data with ample samples. This study selects 10,000 fused steel surface defect images as the source domain data, encompassing six typical defect types with strong representativeness and diversity. To balance training sufficiency and prevent overfitting, the stage sets 250 training epochs, allowing the model to thoroughly learn general defect features from large-scale data. Additionally, to strengthen feature extraction in the source domain and facilitate effective cross-domain transfer, the stage employs the enhanced YOLOv11-EMD model, ensuring high-quality feature representations and weight initialization. This stage’s core objective centers on acquiring generalizable and discriminative representations through adequate training, providing a robust foundation and parameter support for the second stage’s target domain task transfer, which emphasizes small samples and complex distributions.

As illustrated in Figure 9b, in the second stage, the model initializes its parameters using the weights obtained from training on the source domain in the first stage and applies them to the target domain dataset for further training. Given that the target domain contains only 1080 images of steel surface defects, training from scratch may result in poor convergence and an unstable performance. Therefore, this study transfers the general features learned from the source domain, enabling the model to adapt more rapidly to the new data distribution within the target dataset and, in turn, boosting the stability of the training and initial performance. This stage provides a robust basis for follow-up fine-grained feature adjustments and task-specific performance optimization while improving the model’s adaptability and generalization in small-sample scenarios.

As illustrated in Figure 9c, in the third stage, this study fine-tunes the model parameters to further enhance the detection accuracy and feature specificity in the target domain. Building on the target domain training from the second stage, this stage optimizes the strategy while maintaining the model’s core structure, achieving more precise parameter adjustments. Specifically, Adaptive Moment Estimation (Adam) is employed in the final fine-tuning stage, as its adaptive gradient mechanism enabled more stable optimization and yielded better convergence and accuracy than Stochastic Gradient Descent (SGD) in the conducted experiments. Concurrently, via grid search [47] and general experience, the learning rate search range is bounded above by 0.1 and below by $1 \times 10^{-6}$, from which the optimal learning rate is selected. Consequently, the learning rate is reduced from 0.1 to 0.0001, minimizing gradient fluctuations during training and bolstering the model’s stable updates under small-sample conditions. By adopting a more moderate optimization approach, the model deeply adapts to the target domain’s specific features while preserving transferred knowledge, thereby improving the recognition precision and stability in complex tasks involving steel surface defect detection.
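A learning rate search over this range might look like the sketch below. The spacing of the grid is not specified in the text, so the one-candidate-per-decade logarithmic grid is an assumption, and `evaluate` is a hypothetical callable that would train briefly with a given learning rate and return a validation score such as the mAP@50.

```python
import math


def log_grid(upper=0.1, lower=1e-6, per_decade=1):
    """Log-spaced candidates from upper down to lower, both inclusive."""
    decades = round(math.log10(upper / lower))
    steps = decades * per_decade
    return [upper * 10 ** (-s / per_decade) for s in range(steps + 1)]


def select_learning_rate(candidates, evaluate):
    """Return the candidate with the highest validation score."""
    return max(candidates, key=evaluate)


if __name__ == "__main__":
    # Stand-in scoring function that peaks near 1e-4, for illustration only.
    mock_score = lambda lr: -abs(math.log10(lr) + 4.0)
    print(select_learning_rate(log_grid(), mock_score))
```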

Compared to two common strategies in traditional transfer learning, (1) directly training the YOLOv11 model on the source domain to obtain initial weights, and (2) loading a general pre-trained model such as YOLOv11n.pt in the target domain as a starting point, this study proposes a more targeted multi-stage transfer learning method. This method first trains the improved YOLOv11-EMD model on fused steel surface defect source domain data to acquire weights with domain-specific feature representation capabilities, then transfers these weights to the target domain for training, and, finally, performs fine-tuning optimization in the third stage. Compared to traditional approaches, this method effectively improves the alignment between model weights and the target task while preserving general feature extraction capabilities, thereby significantly enhancing the model’s adaptability and generalization performance under small-sample conditions. Ultimately, it achieves superior detection accuracy and robustness in complex steel surface defect detection tasks.
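The three stages can be chained as in the following sketch, which is written against the Ultralytics training interface (`YOLO(path)` and `model.train(data=..., epochs=..., optimizer=..., lr0=..., project=..., name=...)`). Several items are assumptions: the model configuration path for YOLOv11-EMD, the dataset YAML paths, and the epoch counts for the second and third stages, which are not given in this section and are therefore left as required arguments. The model constructor is injected so that the sketch does not depend on a particular installation.

```python
from pathlib import Path


def run_three_stage_transfer(model_factory, emd_config, source_data, target_data,
                             stage2_epochs, stage3_epochs, project="runs_transfer"):
    """Run the three stages; each starts from the previous stage's best weights.

    model_factory: callable mapping a config or weights path to a model object
                   exposing train(**kwargs), e.g. ultralytics.YOLO.
    emd_config:    hypothetical path to the YOLOv11-EMD model definition.
    """
    def best_weights(stage_name):
        # Ultralytics saves checkpoints under <project>/<name>/weights/.
        return str(Path(project) / stage_name / "weights" / "best.pt")

    # Stage 1: train the improved model on the fused source domain (250 epochs).
    model = model_factory(emd_config)
    model.train(data=source_data, epochs=250,
                project=project, name="stage1", exist_ok=True)

    # Stage 2: initialize from the stage-1 weights and train on the target domain.
    model = model_factory(best_weights("stage1"))
    model.train(data=target_data, epochs=stage2_epochs,
                project=project, name="stage2", exist_ok=True)

    # Stage 3: fine-tune with Adam at the reduced learning rate of 0.0001.
    model = model_factory(best_weights("stage2"))
    model.train(data=target_data, epochs=stage3_epochs, optimizer="Adam", lr0=1e-4,
                project=project, name="stage3", exist_ok=True)
    return best_weights("stage3")
```

With Ultralytics installed, `model_factory` would be `ultralytics.YOLO`; loading a YOLOv11-EMD configuration additionally requires the custom modules (MSDA, C3k2_DynamicConv, InnerEIoU) to be registered with the framework, which this sketch does not cover.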

6. Conclusions

This paper addresses issues such as inaccurate localization, weak feature extraction capabilities, and poor model adaptability in steel surface defect detection. It proposes an enhanced YOLOv11-EMD algorithm and integrates a multi-stage transfer learning framework to meet industrial demands for defect detection that is precise, generalizes robustly, and is inexpensive to train. Specifically, to mitigate YOLOv11’s insufficient feature extraction in complex backgrounds and multi-scale target recognition, this paper introduces the InnerEIoU loss function to enhance bounding box localization. It incorporates the MSDA module to improve the recognition of defects across different scales. It also embeds the C3k2_DynamicConv module to boost adaptability and expressiveness for complex and diverse defect features. Additionally, to tackle the common challenges of limited robustness and weak domain adaptation in cross-domain tasks, it proposes a multi-stage transfer learning framework. Through source domain pre-training and target domain fine-tuning, this framework effectively strengthens the model’s generalization across varying data distributions.

The proposed YOLOv11-EMD model underwent systematic validation on a comprehensive dataset constructed from the Severstal steel surface defect dataset and the NEU-DET dataset. The experimental results show that the model exhibits an outstanding performance in steel surface defect detection tasks. It achieved an average precision of 0.942, a recall of 0.868, and an mAP@50 of 0.949. Compared to the original YOLOv11 model, these metrics improved by 3.5%, 0.8%, and 1.6%, respectively. The overall training time remains comparable to that of YOLOv11, without significantly increasing the computational burden. In the generalization evaluation on a cross-scenario mixed dataset (comprising NEU-DET and GC10-DET data), the model achieved an mAP@50 of 0.799. This outperformed current mainstream detection methods such as CLS-YOLO, HCP-YOLO, SDS-YOLO, GMC-YOLO, and UCR-YOLO. Furthermore, when combined with a multi-stage transfer learning framework, YOLOv11-EMD reduced the training time by 3.2% while maintaining the detection performance and improving the mAP by 8.8%. This effectively alleviates performance degradation during multi-source data transfer and validates its excellent transferability and resilience in practical industrial applications.

The integration of YOLOv11-EMD with a multi-stage transfer learning framework significantly enhances the robustness and generalization of steel defect detection models in complex environments. This approach not only adapts to steel surface image detection tasks across diverse scenarios but also effectively reduces the training time and dependence on labeled data. As a result, it achieves efficient, low-cost, and automated quality inspection. The method holds substantial industrial value and research significance. It effectively addresses key challenges in steel manufacturing and inspection, such as “high precision but poor generalization” and “cross-domain performance degradation.” For future work, the following directions merit exploration. First, self-supervised learning mechanisms can be introduced to leverage unlabeled data and enhance the model recognition in scenarios with insufficient defect samples. Second, domain-specific adversarial training techniques can be integrated to further improve the transfer adaptability in extreme conditions, including cross-factory and cross-steel-grade environments.