In order to realize the instantaneous attitude estimation of the space target, this section proposes instantaneous attitude estimation methods based on a deep network, namely ISAR image enhancement based on UNet++ [35] and instantaneous attitude estimation based on the shifted window (swin) transformer [36]. Finally, the training steps of the proposed methods are given.

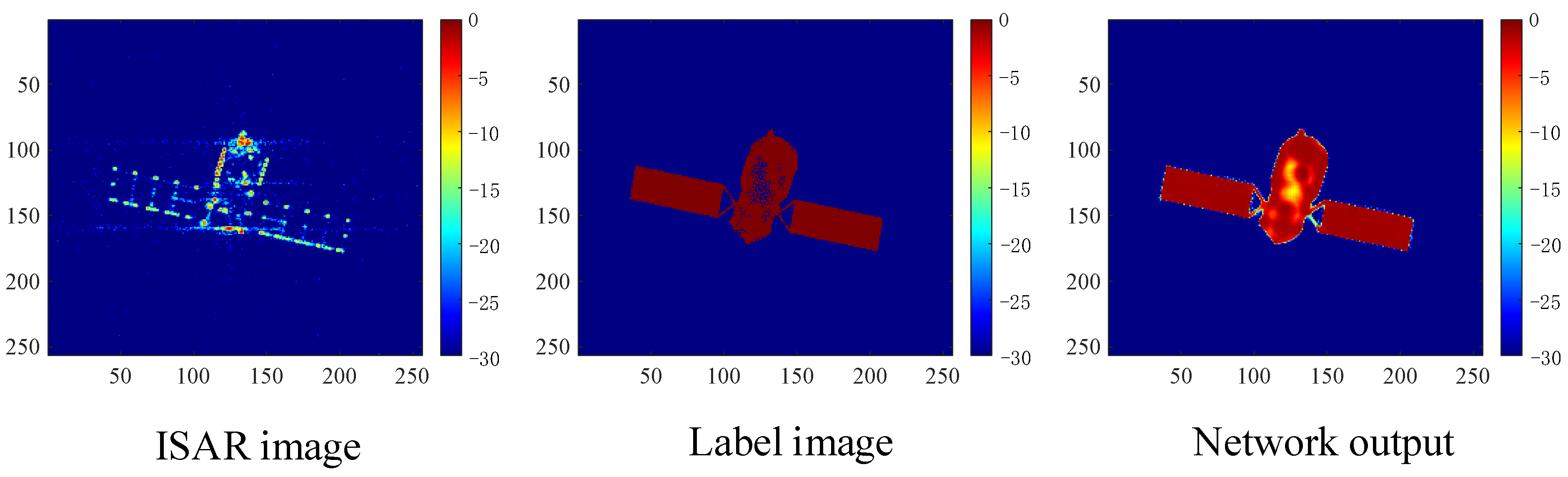

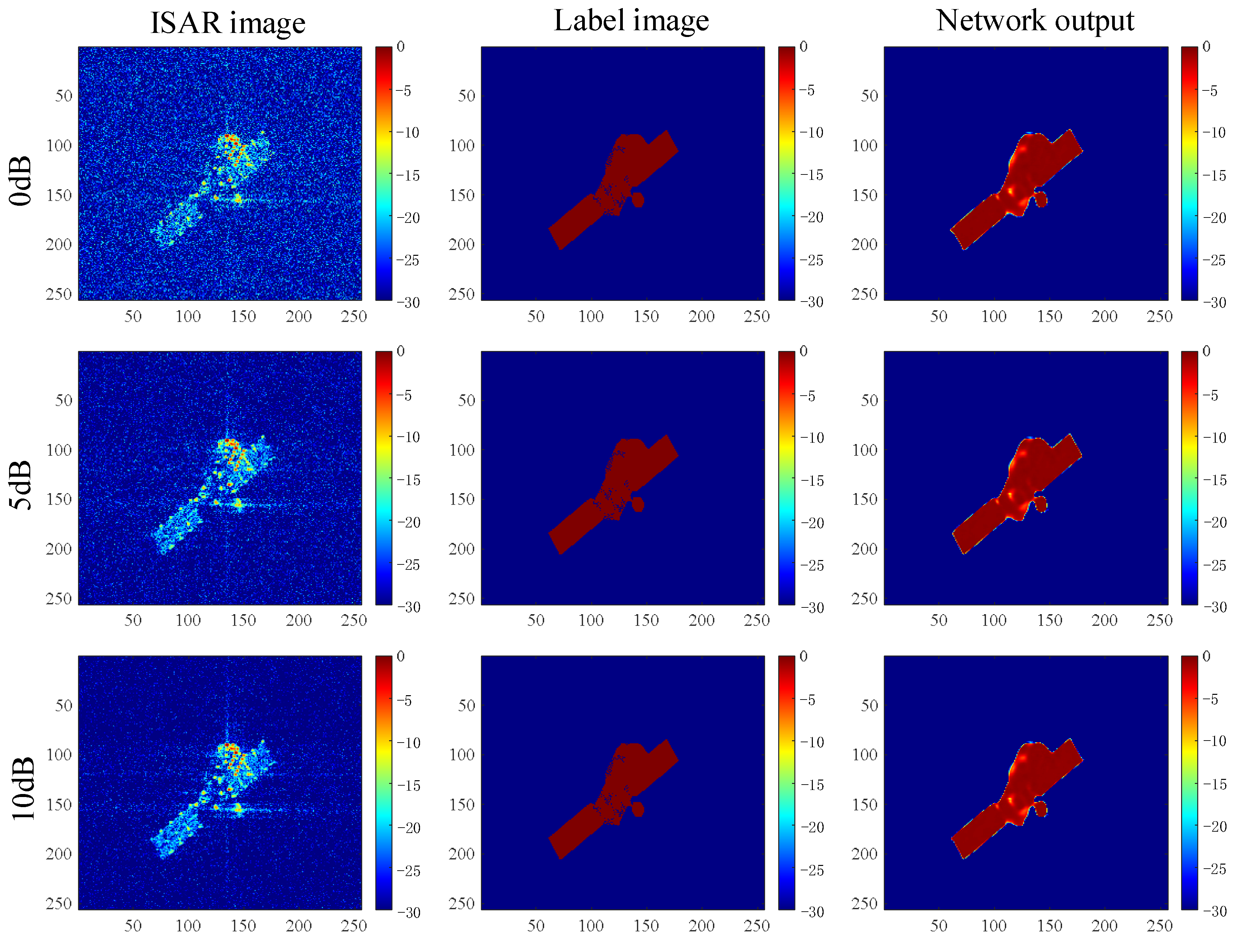

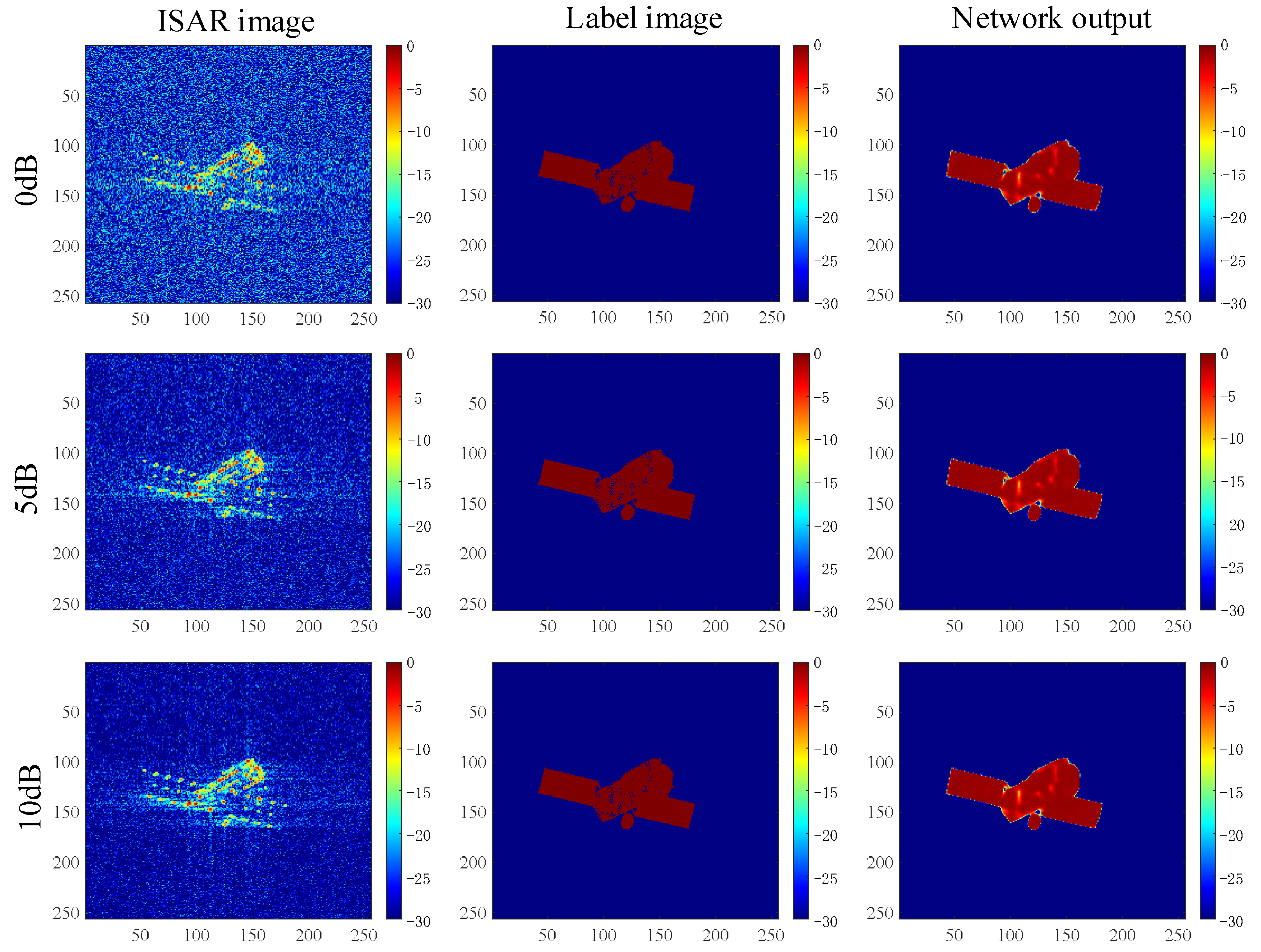

4.3.1. ISAR Image Enhancement Based on UNet++

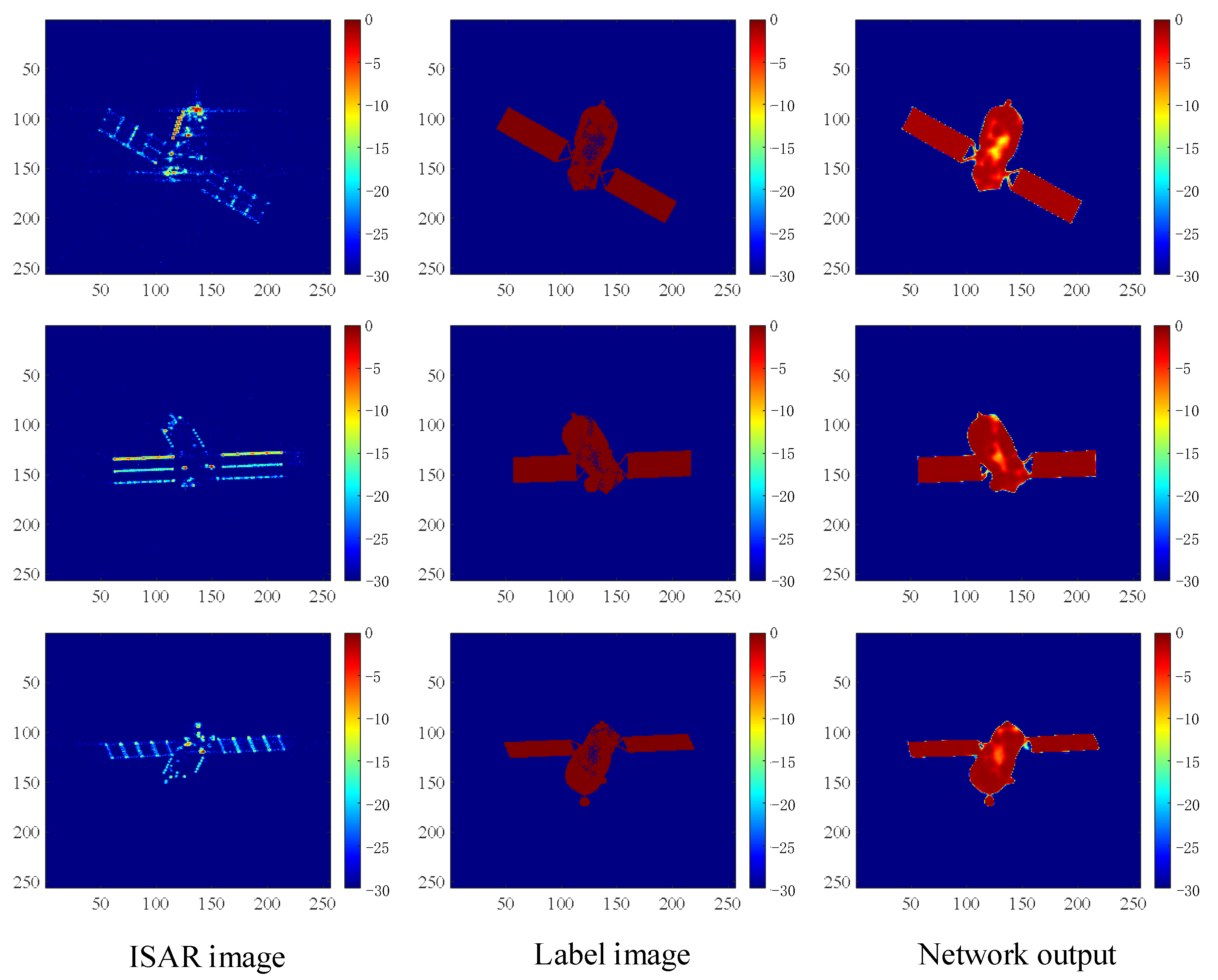

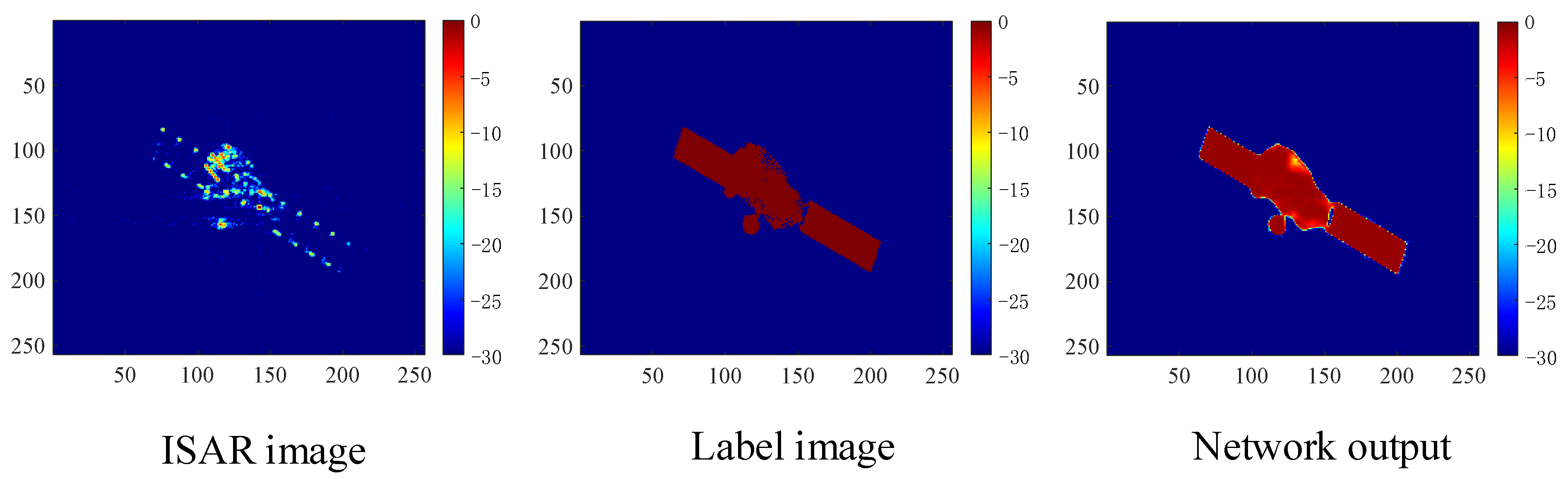

ISAR observes and receives the echoes from non-cooperative targets, compensates the translation components, which are not beneficial to imaging, and then transforms them into turntable models for imaging. However, because of the occlusion effect, the key components of the target can be missing in the imaging results, and the quality of the imaging results can be poor under noisy conditions. These problems can lead to a low-accuracy attitude estimation based on a deep network. To enhance the ISAR imaging results, UNet++, which has strong feature extraction ability, is used to learn the mapping relationship between the ISAR imaging results and theoretical binary projection images, and to provide high-quality imaging results for subsequent attitude estimation.

A flow chart of the ISAR image enhancement based on UNet++ is shown in Figure 6. The network input is the ISAR imaging result, and the deep features of the ISAR image are extracted through a series of convolution and down-sampling operations. The image is then restored by up-sampling, and more high-resolution information is obtained through dense skip connections. Thus, the details of the input image can be restored more completely, and the restoration accuracy can be improved. To make full use of the structural advantages of UNet++ in ISAR image enhancement, a convolution layer with one channel is added after each of the nodes $X^{0,1}$, $X^{0,2}$, $X^{0,3}$, and $X^{0,4}$, and the four outputs are averaged to obtain the final ISAR image enhancement result.

Let $x^{i,j}$ represent the output of node $X^{i,j}$, where $i$ represents the down-sampling layer number of the encoder, and $j$ represents the convolution layer number of the dense skip connection. The output of each node can then be expressed as follows:

$$
x^{i,j} =
\begin{cases}
\mathcal{H}\left(\mathcal{D}\left(x^{i-1,j}\right)\right), & j = 0 \\
\mathcal{H}\left(\left[\left[x^{i,k}\right]_{k=0}^{j-1},\ \mathcal{U}\left(x^{i+1,j-1}\right)\right]\right), & j > 0
\end{cases}
\tag{14}
$$

where $\mathcal{H}(\cdot)$ represents two convolution layers with rectified linear unit (ReLU) activation functions. The convolution kernel size is , and the number of convolution kernels is shown in Table 2. $\mathcal{D}(\cdot)$ represents the down-sampling operation, shown by the red downward arrow in Figure 6, which is realized by a pooling layer with kernels. $\mathcal{U}(\cdot)$ represents the up-sampling operation, shown by the blue upward arrow in Figure 6, which is realized by a deconvolution layer with kernels and a step size of 2. In addition, $[\cdot]$ indicates a splicing (concatenation) operation, and the brown arrow indicates a dense skip connection.
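The dependency pattern of the node outputs can be illustrated with a short, framework-free sketch. It only enumerates which earlier nodes feed each node; the function names and the depth of 4 down-sampling steps are assumptions made for illustration, not details taken from the paper.

```python
def unetpp_nodes(depth):
    """List all (i, j) nodes of a UNet++ grid with `depth` down-sampling steps.

    A node X^{i,j} exists when i + j <= depth (i: encoder level, j: position
    along the dense skip pathway).
    """
    return [(i, j) for j in range(depth + 1) for i in range(depth + 1 - j)]


def node_inputs(i, j):
    """Return the sources feeding node X^{i,j} as (operation, source_node) pairs."""
    if j == 0:
        # Encoder column: X^{0,0} reads the network input; deeper nodes read
        # the down-sampled output of the node one level above.
        return [("input", None)] if i == 0 else [("D", (i - 1, 0))]
    # Dense skip pathway: every earlier node on the same level is concatenated
    # with the up-sampled output of the node one level below.
    same_level = [("skip", (i, k)) for k in range(j)]
    return same_level + [("U", (i + 1, j - 1))]


if __name__ == "__main__":
    depth = 4  # assumed depth, chosen so that the top row has four decoder nodes
    for node in unetpp_nodes(depth):
        print(node, "<-", node_inputs(*node))
```

With this assumed depth, the top-row node with `j = 4` receives four same-level skip inputs plus one up-sampled input, which is the densest connection in the grid.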

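To achieve better ISAR image enhancement results, the proposed method uses theoretical binary projection images as labels for end-to-end training, and the loss function is defined as the normalized mean square error between the network output $\hat{\mathbf{Y}}$ and the label $\mathbf{Y}$,

$$
L_{\mathrm{NMSE}} = \frac{\left\|\hat{\mathbf{Y}} - \mathbf{Y}\right\|_F^2}{\left\|\mathbf{Y}\right\|_F^2}
\tag{15}
$$

where $\|\cdot\|_F$ represents the Frobenius norm.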
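A minimal NumPy sketch of such a loss is given below. It assumes the common convention in which the squared Frobenius norm of the error is divided by the squared Frobenius norm of the label; the function name and the small stabilizing constant `eps` are additions for illustration only.

```python
import numpy as np


def nmse_loss(output, label, eps=1e-12):
    """Normalized mean square error between an enhanced image and its label.

    Assumed form: ||output - label||_F^2 / ||label||_F^2. `eps` (hypothetical)
    only guards against division by zero for an all-zero label.
    """
    error_energy = np.linalg.norm(output - label, ord="fro") ** 2
    label_energy = np.linalg.norm(label, ord="fro") ** 2
    return error_energy / (label_energy + eps)


if __name__ == "__main__":
    label = np.array([[0.0, 1.0], [1.0, 0.0]])    # toy binary projection image
    noisy = label + 0.1 * np.ones_like(label)     # toy network output
    print(nmse_loss(noisy, label))
```

Normalizing by the label energy makes the loss insensitive to how many pixels the target occupies in the binary projection image.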

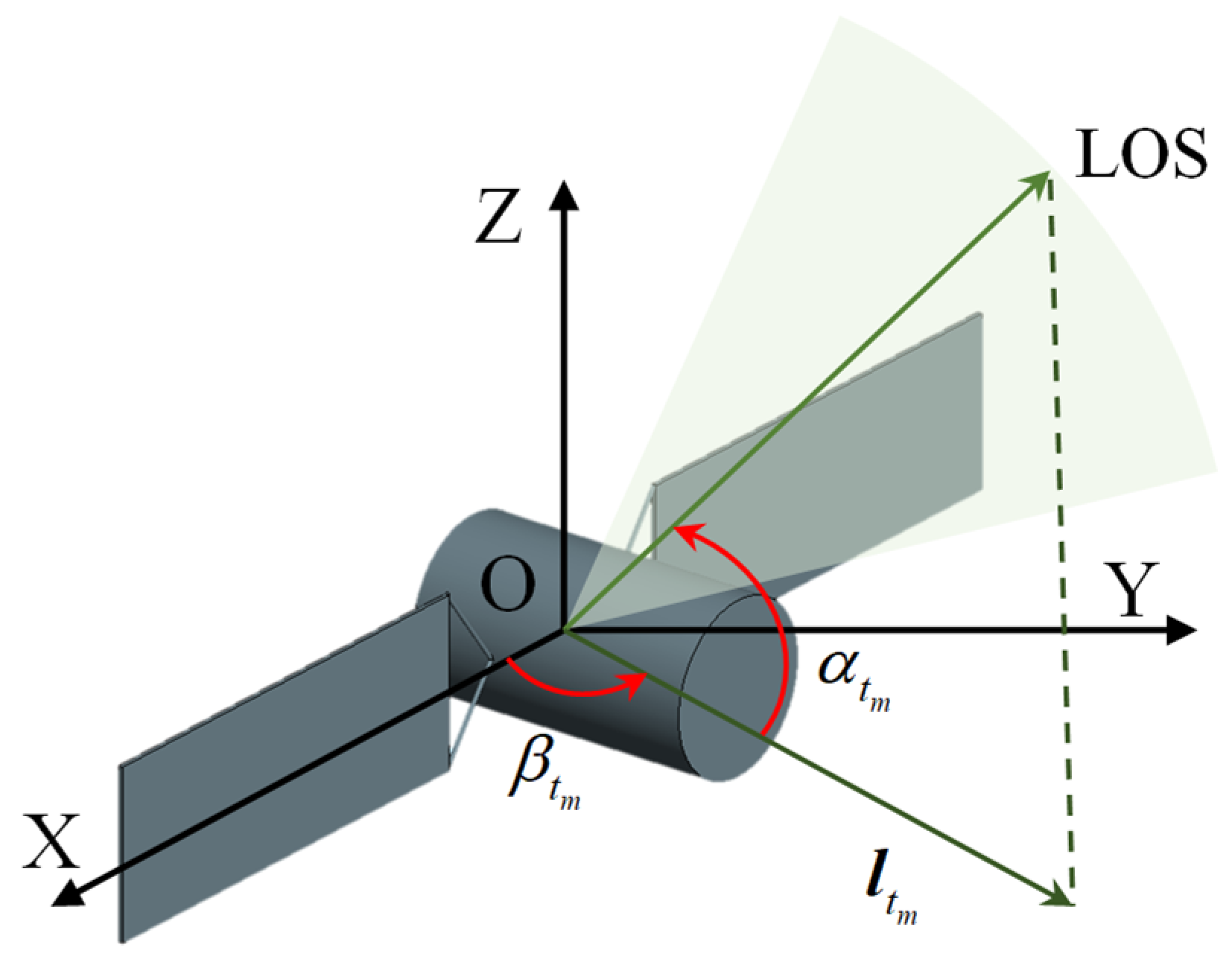

4.3.2. Instantaneous Attitude Estimation Based on Swin Transformer

When the radar line of sight is fixed, the attitude angle has a one-to-one correspondence to the enhanced ISAR image. Therefore, this study used the swin transformer to learn the mapping relationship.

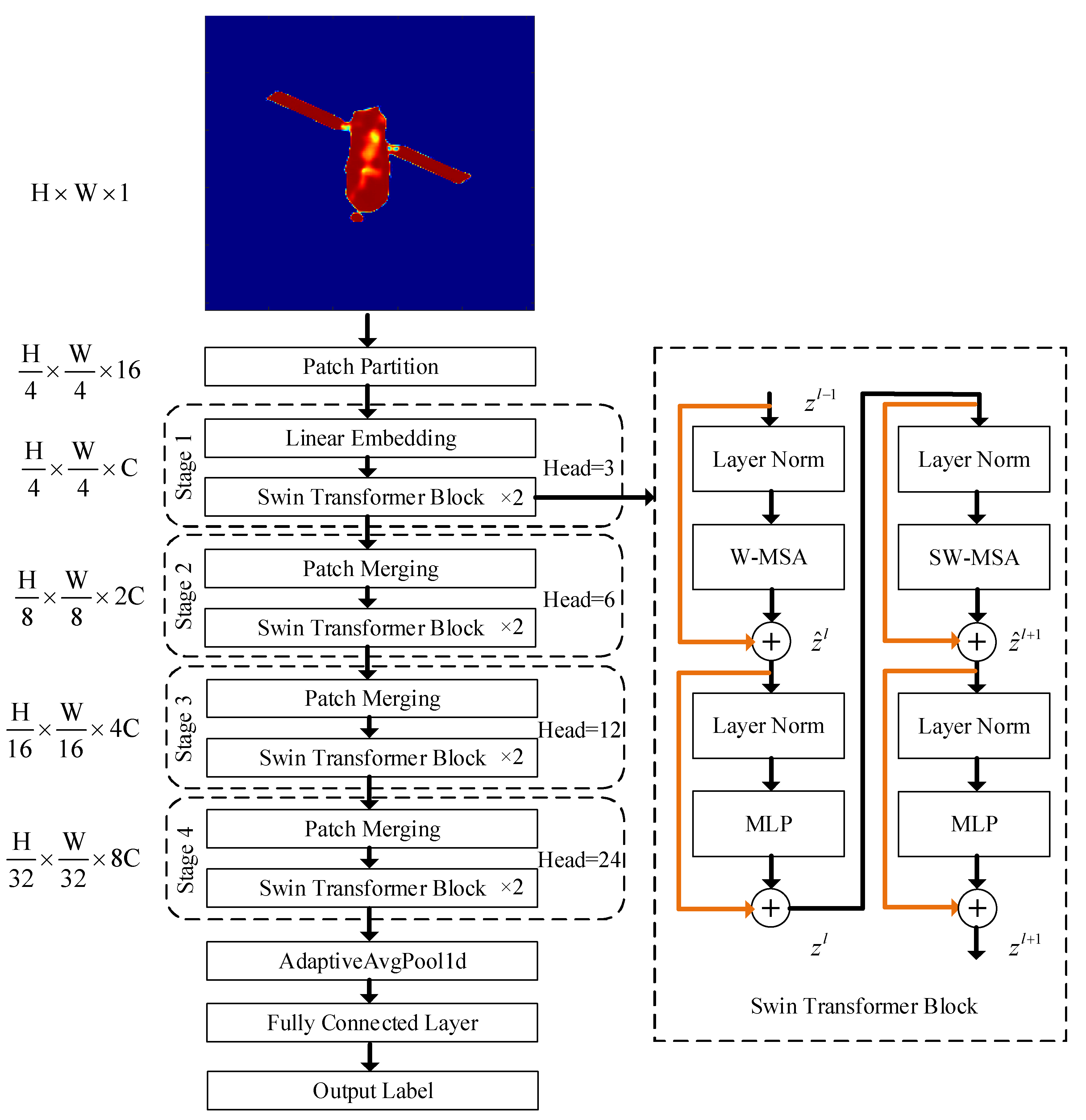

A flow chart of the instantaneous attitude estimation based on the swin transformer is shown in Figure 7. First, an enhanced ISAR imaging result with a size of $H \times W$ is input into the network. It is then divided into non-overlapping patches by patch partition, with each patch covering 4 × 4 adjacent pixels, and each patch is flattened in the channel direction to obtain a feature map of $\frac{H}{4} \times \frac{W}{4} \times 16$. Four stages are then stacked to build feature maps of different sizes for attention calculation. The first stage changes the feature dimension from 16 to $C$ using linear embedding, and the other three stages are down-sampled by patch merging, so that the height and width of the feature maps are halved and the depth is doubled at each of these stages. The resulting feature map sizes are $\frac{H}{8} \times \frac{W}{8} \times 2C$, $\frac{H}{16} \times \frac{W}{16} \times 4C$, and $\frac{H}{32} \times \frac{W}{32} \times 8C$. After the dimension of the feature maps is changed, the swin transformer modules are repeatedly stacked, 2, 2, 6, and 2 times in the four stages, respectively. A single swin transformer module is shown in the dashed box on the right side of Figure 7. It connects a layer normalization (LN) layer to a windows multi-head self-attention (W-MSA) module or a shifted windows multi-head self-attention (SW-MSA) module, in which the LN layer normalizes the different channels of the same sample to ensure the stability of the data feature distribution. The self-attention mechanism [37] is the key module of the transformer, and it is calculated as follows:

$$
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V
\tag{16}
$$

where $Q$ is the query, $K$ is the key, $V$ is the value, and $d_k$ is the query dimension.
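The self-attention calculation can be sketched in a few lines of NumPy. This is the standard scaled dot-product form from [37]; the function names are illustrative, and any additional terms a particular swin implementation may add to the scores are not modeled here.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V.

    Q and K have shape (n, d); V has shape (n, d_v).
    """
    d = Q.shape[-1]
    scores = Q @ K.swapaxes(-1, -2) / np.sqrt(d)   # (n, n) similarity matrix
    weights = softmax(scores, axis=-1)             # each row sums to 1
    return weights @ V


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    Q, K, V = (rng.normal(size=(5, 8)) for _ in range(3))
    print(attention(Q, K, V).shape)
```

Dividing by the square root of the query dimension keeps the scores in a range where the softmax does not saturate as the dimension grows.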

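Multi-head self-attention processes the original input sequence in $h$ self-attention groups (heads), splices the results, and performs a linear transformation to obtain the final output:

$$
\mathrm{MultiHead}(Q, K, V) = \mathrm{Concat}\left(\mathrm{head}_1, \ldots, \mathrm{head}_h\right)W^{O}
\tag{17}
$$

where each self-attention module defines $\mathrm{head}_i = \mathrm{Attention}\left(QW_i^{Q}, KW_i^{K}, VW_i^{V}\right)$, and, for an input feature dimension $d_{\mathrm{model}}$ and per-head key and value dimensions $d_k$ and $d_v$, the weight matrices satisfy the following:

$$
W_i^{Q} \in \mathbb{R}^{d_{\mathrm{model}} \times d_k},\quad
W_i^{K} \in \mathbb{R}^{d_{\mathrm{model}} \times d_k},\quad
W_i^{V} \in \mathbb{R}^{d_{\mathrm{model}} \times d_v},\quad
W^{O} \in \mathbb{R}^{h d_v \times d_{\mathrm{model}}}
\tag{18}
$$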
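As an illustration of how the heads are computed and spliced, the following NumPy sketch follows the multi-head formulation of [37]. The weight shapes, the equal key and value dimension per head (`d_head`), and all names are assumptions made for the example.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def multi_head_attention(X, W_q, W_k, W_v, W_o):
    """Multi-head self-attention on a sequence X of shape (n, d_model).

    W_q, W_k, W_v: (h, d_model, d_head), one projection per head.
    W_o: (h * d_head, d_model), the final linear transformation.
    """
    heads = []
    for Wq_i, Wk_i, Wv_i in zip(W_q, W_k, W_v):
        Q, K, V = X @ Wq_i, X @ Wk_i, X @ Wv_i          # per-head projections
        scores = Q @ K.T / np.sqrt(Q.shape[-1])          # scaled dot products
        heads.append(softmax(scores, axis=-1) @ V)       # (n, d_head)
    return np.concatenate(heads, axis=-1) @ W_o          # splice, then project


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n, d_model, h, d_head = 6, 12, 3, 4                  # toy sizes
    X = rng.normal(size=(n, d_model))
    W_q, W_k, W_v = (rng.normal(size=(h, d_model, d_head)) for _ in range(3))
    W_o = rng.normal(size=(h * d_head, d_model))
    print(multi_head_attention(X, W_q, W_k, W_v, W_o).shape)
```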

W-MSA in the swin transformer module further divides the feature map into non-overlapping windows and calculates the multi-head self-attention within each window. Because the self-attention calculation in W-MSA is confined to each window, information cannot be transmitted between windows. Therefore, the SW-MSA module is introduced. After the non-overlapping windows are divided in the $l$-th layer, the windows are re-divided in the $(l+1)$-th layer with an offset of half the window size, which allows information from different windows to interact across layers. The output is then passed through another LN layer into the multilayer perceptron (MLP). The MLP is a feedforward network that uses the GELU function as its activation function, with the goal of completing the non-linear transformation and improving the fitting ability of the algorithm. In addition, the four stages have 3, 6, 12, and 24 attention heads, respectively. The residual connection added to each swin transformer module is shown by the yellow line in Figure 7. This module has two different structures that must be used in pairs: the first structure uses W-MSA, and the second uses SW-MSA. As the features pass through a pair of modules, the output of each part is given by Equations (19)–(22):

$$
\hat{z}^{l} = \text{W-MSA}\left(\mathrm{LN}\left(z^{l-1}\right)\right) + z^{l-1}
\tag{19}
$$

$$
z^{l} = \mathrm{MLP}\left(\mathrm{LN}\left(\hat{z}^{l}\right)\right) + \hat{z}^{l}
\tag{20}
$$

$$
\hat{z}^{l+1} = \text{SW-MSA}\left(\mathrm{LN}\left(z^{l}\right)\right) + z^{l}
\tag{21}
$$

$$
z^{l+1} = \mathrm{MLP}\left(\mathrm{LN}\left(\hat{z}^{l+1}\right)\right) + \hat{z}^{l+1}
\tag{22}
$$

where $\hat{z}^{l}$ and $z^{l}$ denote the outputs of the (S)W-MSA module and the MLP module of the $l$-th block, respectively.
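The window division and its half-window offset can be sketched with NumPy as follows. Realizing the offset as a cyclic shift of the feature map is an assumption borrowed from common swin implementations, and the masking those implementations apply to wrapped-around regions is omitted; function names are illustrative.

```python
import numpy as np


def window_partition(x, M):
    """Split an (H, W, C) feature map into (num_windows, M, M, C) windows.

    H and W are assumed to be multiples of the window size M.
    """
    H, W, C = x.shape
    x = x.reshape(H // M, M, W // M, M, C)
    return x.transpose(0, 2, 1, 3, 4).reshape(-1, M, M, C)


def shifted_window_partition(x, M):
    """Offset the window grid by half a window, then partition.

    The offset is realized here as a cyclic shift of M // 2 rows and columns
    (an assumed implementation choice).
    """
    shift = M // 2
    shifted = np.roll(x, shift=(-shift, -shift), axis=(0, 1))
    return window_partition(shifted, M)


if __name__ == "__main__":
    x = np.arange(64).reshape(8, 8, 1)           # toy 8 x 8 single-channel map
    regular = window_partition(x, 4)             # used by W-MSA
    shifted = shifted_window_partition(x, 4)     # used by SW-MSA
    print(regular.shape, shifted.shape)
```

In the toy example, the first shifted window covers rows and columns 2 to 5 of the original map, so it overlaps all four regular windows; this overlap is what lets information cross window borders in the next layer.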

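After the last stage, the output feature has 1/32 of the input height and width, and its depth is eight times the embedding dimension of the first stage. One-dimensional adaptive average pooling (AdaptiveAvgPool1d) with an output dimension of 1 reduces it to a feature vector whose length equals this depth, and a fully connected layer with an output dimension of 3 then gives the Euler angle estimates.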
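The evolution of the feature map size through the four stages can be checked with a small helper. The input size of 224 × 224 and the embedding dimension of 96 used in the example call are hypothetical values chosen for illustration; they are not taken from the paper.

```python
def swin_stage_shapes(height, width, embed_dim, patch=4, num_stages=4):
    """Return (height, width, depth) after patch partition and after each stage.

    Assumes a single-channel input, so a flattened patch has patch * patch values.
    """
    h, w = height // patch, width // patch
    shapes = [(h, w, patch * patch)]       # after patch partition
    c = embed_dim
    shapes.append((h, w, c))               # stage 1: linear embedding
    for _ in range(num_stages - 1):        # stages 2-4: patch merging
        h, w, c = h // 2, w // 2, c * 2    # halve height/width, double depth
        shapes.append((h, w, c))
    return shapes


if __name__ == "__main__":
    # Hypothetical input size and embedding dimension.
    for shape in swin_stage_shapes(224, 224, 96):
        print(shape)
    # (56, 56, 16), (56, 56, 96), (28, 28, 192), (14, 14, 384), (7, 7, 768)
```

Under these assumed values, pooling the last feature map over its spatial positions yields a vector of length 768, which the fully connected layer maps to the three Euler angles.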

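The network loss function is defined as the mean square error between the output Euler angles $\hat{\boldsymbol{\theta}}$ and the label Euler angles $\boldsymbol{\theta}$:

$$
L_{\mathrm{MSE}} = \frac{1}{3}\sum_{k=1}^{3}\left(\hat{\theta}_k - \theta_k\right)^2
\tag{23}
$$

where the three Euler angles are represented as $\boldsymbol{\theta} = [\theta_1, \theta_2, \theta_3]^{T}$.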

The swin transformer is characterized by hierarchy, locality, and translation invariance. The hierarchy is reflected in the feature extraction stage, which uses a hierarchical construction similar to that of a CNN: the input image is down-sampled by factors of 4, 8, and 16 to obtain multi-scale feature maps. The locality is mainly reflected in the self-attention calculation, which is constrained to local non-overlapping windows. For a feature map of $h \times w$ patches with $C$ channels, the computational complexity of traditional MSA and W-MSA is as follows:

$$
\Omega(\mathrm{MSA}) = 4hwC^{2} + 2(hw)^{2}C
$$

$$
\Omega(\text{W-MSA}) = 4hwC^{2} + 2M^{2}hwC
$$

where $M$ is the window size for the self-attention calculation. For a fixed $M$, the complexity thus changes from quadratic in the number of patches $hw$ to linear in $hw$, which greatly reduces the amount of calculation and improves the efficiency of the algorithm. In SW-MSA, the division of non-overlapping windows is offset by half a window compared with W-MSA, which allows the information of neighboring windows to interact effectively. Compared with the common sliding window design in a CNN, it retains translation invariance without reducing the accuracy.
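The difference between the two complexity expressions can be made concrete with a short calculation. The formulas below are the standard ones for global and window-based multi-head self-attention; the feature map size, channel count, and window size in the example are hypothetical.

```python
def msa_complexity(h, w, C):
    """Global multi-head self-attention: 4*h*w*C^2 + 2*(h*w)^2*C."""
    return 4 * h * w * C**2 + 2 * (h * w) ** 2 * C


def wmsa_complexity(h, w, C, M):
    """Window-based self-attention with M x M windows: 4*h*w*C^2 + 2*M^2*h*w*C."""
    return 4 * h * w * C**2 + 2 * M**2 * h * w * C


if __name__ == "__main__":
    h = w = 56      # hypothetical number of patches per side
    C, M = 96, 7    # hypothetical channel count and window size
    ratio = msa_complexity(h, w, C) / wmsa_complexity(h, w, C, M)
    print(f"global / windowed cost ratio: {ratio:.1f}")
```

Doubling the number of patches doubles the windowed cost, whereas the second term of the global cost grows fourfold; the two expressions coincide only when a single window covers the whole feature map.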

4.3.3. Network Training

The proposed method consists of two deep networks: UNet++ for ISAR image enhancement and the swin transformer for attitude estimation. During network training, the number of epochs is set to 100, the batch size is set to 32, and the initial learning rate is set to . As the epochs progress, the learning rate decays exponentially with a decay rate of 0.98. The network parameters are optimized using the Adam optimizer. For each epoch, the network training steps can be summarized as follows:

(1) Randomly draw a batch of ISAR imaging results from the training dataset;

(2) Input the ISAR imaging results into UNet++, output the enhanced ISAR imaging results, and calculate the loss function according to Equation (15);

(3) Input the enhanced ISAR imaging results into the swin transformer, output the Euler angle estimation values, and calculate the loss function according to Equation (23);

(4) Update the swin transformer network parameters;

(5) Update the UNet++ network parameters;

(6) Repeat steps 1–5 until all the training data have been used.
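The training schedule described above can be sketched in plain Python. The sketch assumes that the decay is applied once per epoch; the initial learning rate of `1e-3` and the dataset size are placeholders chosen for the example, not values from the paper.

```python
def learning_rate(epoch, initial_lr, decay_rate=0.98):
    """Exponentially decayed learning rate after `epoch` completed epochs."""
    return initial_lr * decay_rate**epoch


def batches_per_epoch(num_samples, batch_size=32):
    """Number of batches needed to use every training sample once."""
    return -(-num_samples // batch_size)   # ceiling division


if __name__ == "__main__":
    initial_lr = 1e-3      # placeholder value (assumption)
    num_samples = 10_000   # placeholder dataset size (assumption)
    print("batches per epoch:", batches_per_epoch(num_samples))
    for epoch in (0, 1, 50, 99):
        print(epoch, learning_rate(epoch, initial_lr))
```

In a PyTorch implementation, the same schedule would typically be obtained with `torch.optim.lr_scheduler.ExponentialLR` with `gamma=0.98`, stepped once per epoch.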

After the network is trained, any ISAR imaging result can be inputted into the network to simultaneously realize ISAR image enhancement and instantaneous attitude estimation.