Take-Home Exams in Higher Education: A Systematic Review

Abstract

1. Introduction

- Q1: What advantages and disadvantages of take-home exams (THEs) are put forward by the community?

- Q2: What are the risks of THEs, and can they be mitigated enough to warrant widespread use?

- Q3: Are THEs appropriate only for certain levels of Bloom’s taxonomy?

- Q4: THEs are non-proctored, students have access to the Internet, and the time span is typically extended. How do these conditions affect the question items on a THE?

- Q5: How do THEs affect students’ study habits during the weeks preceding the exam, and how does that affect their long-term retention of knowledge?

- Q6: Do THEs promote students’ higher-order cognitive skills (HOCS)?

2. Methods

2.1. Keywords

- ‘take-home exam*’ OR ‘take-home test*’ OR ‘take-home assessm*’ (searched in title, abstract, and keywords)

- AND

- ‘higher education’ OR ‘tertiary education’ (searched in all fields)
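The Boolean logic of this query can be illustrated with a short Python sketch. It assumes each database record is a dictionary of text fields with hypothetical names (`title`, `abstract`, `keywords`, plus any others), and it treats a trailing `*` as truncation that matches any word ending. Real databases implement wildcards, phrase matching, and field codes in their own ways, so this shows only how the two term groups combine, not how any of the searched databases actually evaluates the query.

```python
import re

# Hypothetical sketch of the Boolean search query; field names are assumptions.
GROUP_A = ["take-home exam*", "take-home test*", "take-home assessm*"]  # title/abstract/keywords
GROUP_B = ["higher education", "tertiary education"]                    # all fields
RESTRICTED_FIELDS = ("title", "abstract", "keywords")


def term_to_regex(term: str) -> re.Pattern:
    """Compile a phrase; a trailing '*' matches any word ending (truncation)."""
    stem = term.rstrip("*")
    tail = r"\w*" if term.endswith("*") else r"\b"
    return re.compile(r"\b" + re.escape(stem) + tail, re.IGNORECASE)


def any_term_in(texts: list[str], terms: list[str]) -> bool:
    """OR within a group: true if any term occurs in any of the texts."""
    patterns = [term_to_regex(t) for t in terms]
    return any(p.search(text) for p in patterns for text in texts)


def matches_query(record: dict[str, str]) -> bool:
    """Group A in the restricted fields AND group B in any field."""
    restricted = [record.get(f, "") for f in RESTRICTED_FIELDS]
    all_fields = [v for v in record.values() if isinstance(v, str)]
    return any_term_in(restricted, GROUP_A) and any_term_in(all_fields, GROUP_B)


print(matches_query({"title": "Take-Home Exams in Higher Education"}))  # True
print(matches_query({"title": "Open-book exams in tertiary education",
                     "notes": "mentions take-home exams"}))             # False
```

A record whose title is “Take-Home Exams in Higher Education” satisfies both groups, whereas a record that mentions take-home exams only outside the title, abstract, and keywords fails the first group even though it satisfies the second.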

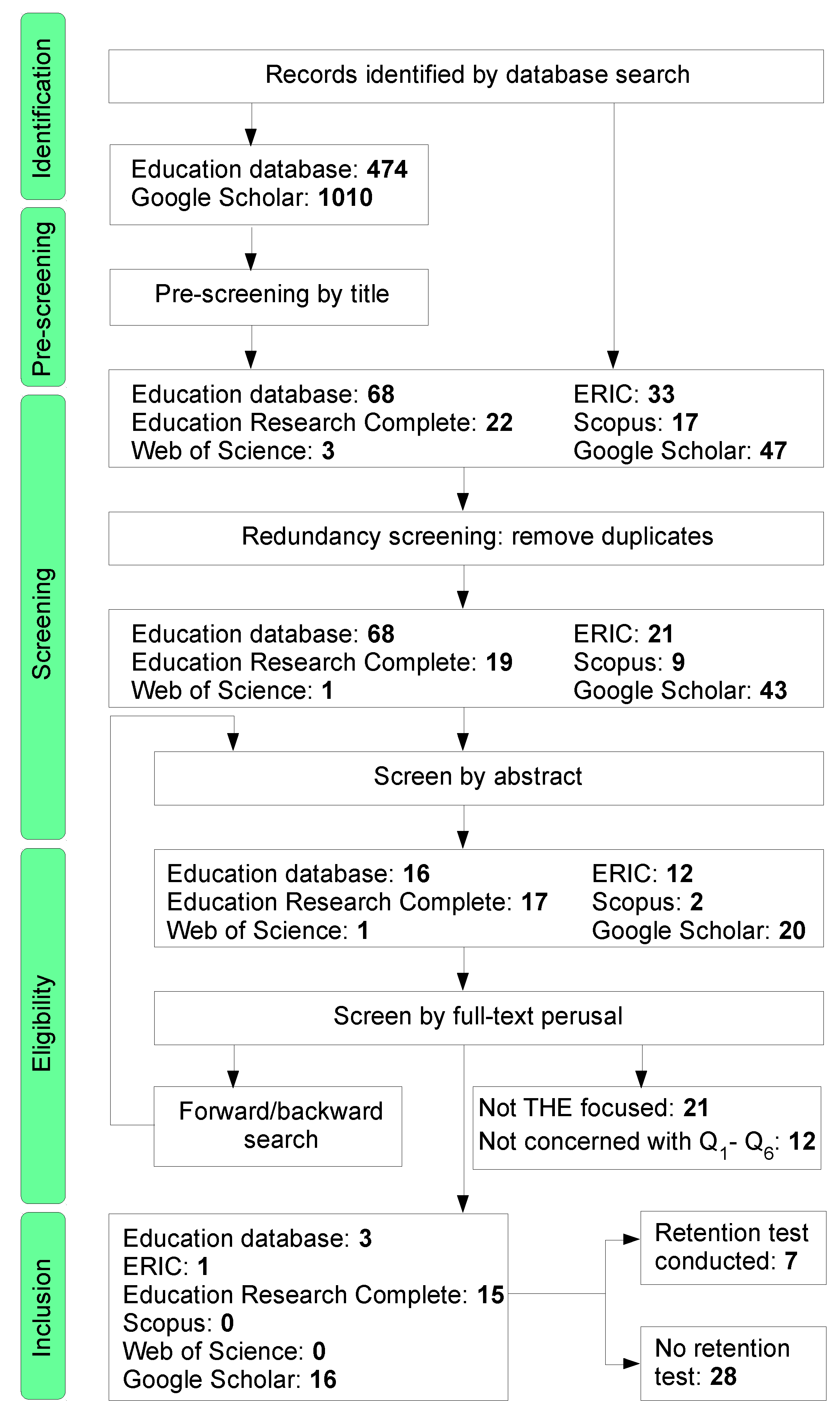

2.2. Screening Algorithm

2.3. Inclusion/Exclusion Criteria

3. Results

4. Discussion

4.1. Community Consensus

4.2. Cheating

4.3. THEs and Study Habits

4.4. Advocates and Objectors

4.5. Stakeholders

5. Conclusions

Recommendations

Funding

Conflicts of Interest

References

1. Williams, B.J.; Wong, A. The efficacy of final examination: A comparative study of closed-book, invigilated exams and open-book, open-web exams. Br. J. Educ. Technol. 2009, 40, 227–236.

2. Mallory, J. Adequate Testing and Evaluation of On-Line Learners. In Proceedings of the Instructional Technology and Education of the Deaf: Supporting Learners, K–College: An International Symposium, Rochester, NY, USA, 25–27 June 2001.

3. López, D.; Cruz, J.-L.; Sánchez, F.; Fernández, A. A take-home exam to assess professional skills. In Proceedings of the 41st ASEE/IEEE Frontiers in Education Conference, Rapid City, SD, USA, 12–15 October 2011.

4. Rich, R. An experimental study of differences in study habits and long-term retention rates between take-home and in-class examination. Int. J. Univ. Teach. Fac. Dev. 2011, 2, 123–129.

5. Biggs, J. Teaching for Quality Learning at University; Oxford University Press: Oxford, UK, 1999.

6. Giammarco, E. An Assessment of Learning Via Comparison of Take-Home Exams Versus In-Class Exams Given as Part of Introductory Psychology Classes at the Collegiate Level. Ph.D. Thesis, Capella University, Minneapolis, MN, USA, 2011.

7. Anderson, L.; Krathwohl, D.; Airasian, P.; Cruikshank, K.; Mayer, R.; Pintrich, P.; Wittrock, M.C. A Taxonomy for Learning, Teaching and Assessing: A Revision of Bloom’s Taxonomy of Educational Objectives; Pearson: London, UK, 2001.

8. Andrada, G.; Linden, K. Effects of two testing conditions on classroom achievement: Traditional in-class versus experimental take-home conditions. In Proceedings of the Annual Meeting of the American Educational Research Association, Atlanta, GA, USA, 12–16 April 1993.

9. Bloom, B. Taxonomy of Educational Objectives. The Classification of Educational Goals, Handbook 1: Cognitive Domain; David McKay: New York, NY, USA, 1956.

10. Svoboda, W. A case for out-of-class exams. Clear. House J. Educ. Strateg. Issues Ideas 1971, 46, 231–233.

11. Bredon, G. Take-home tests in economics. Econ. Anal. Policy 2003, 33, 52–60.

12. Zoller, U. Alternative Assessment as (critical) means of facilitating HOCS-promoting teaching and learning in chemistry education. Chem. Educ. Res. Pract. Eur. 2001, 2, 9–17.

13. Carrier, D. Legislation as a stimulus to innovation. High. Educ. Manag. 1990, 2, 88–98.

14. Aggarwal, A. A Guide to eCourse Management: The stakeholders’ perspectives. In Web-Based Education: Learning from Experience; Aggarwal, A., Ed.; Idea Group Publishing: Baltimore, MD, USA, 2003; pp. 1–23.

15. Hall, L. Take-home tests: Educational fast food for the new Millennium? J. Manag. Organ. 2001, 7, 50–57.

16. Bell, A.; Egan, D. The Case for a Generic Academic Skills Unit. Available online: http://www.qualityresearchinternational.com/esecttools/esectpubs/belleganunit.pdf (accessed on 15 January 2019).

17. Haynie, W. Effects of take-home and in-class tests on delayed retention learning acquired via individualized, self-paced instructional texts. J. Ind. Teach. Educ. 1991, 28, 52–63.

18. Durning, S.; Dong, T.; Ratcliffe, T.; Schuwirth, L.; Artino, A., Jr.; Boulet, J.; Eva, K. Comparing open-book and closed-book examinations: A systematic review. Acad. Med. 2016, 91, 583–599.

19. Ramsey, P.; Carline, J.; Inui, T.; Larsson, E.; Wenrich, M. Predictive validity of certification. Ann. Intern. Med. 1989, 110, 719–726.

20. Gough, D. Weight of evidence: A framework for the appraisal of the quality and relevance of evidence. Appl. Pract. Based Res. 2007, 22, 213–228.

21. Bearman, M.; Smith, C.; Carbone, A.; Slade, S.; Baik, C.; Hughes-Warrington, M.; Neuman, D. Systematic review methodology in higher education. High. Educ. Res. Dev. 2012, 31, 625–640.

22. Freedman, A. The take-home examination. Peabody J. Educ. 1968, 45, 343–347.

23. Marsh, R. Should we discontinue classroom tests? An experimental study. High Sch. J. 1980, 63, 288–292.

24. Weber, L.; McBee, J.; Krebs, J. Take home tests: An experimental study. Res. High. Educ. 1983, 18, 473–483.

25. Marsh, R. A comparison of take-home versus in-class exams. J. Educ. Res. 1984, 78, 111–113.

26. Grzelkowski, K. A Journey Toward Humanistic Testing. Teach. Sociol. 1987, 15, 27–32.

27. Zoller, U.; Ben-Chaim, D. Interaction between examination type, anxiety state and academic achievement in college science; an action-oriented research. J. Res. Sci. Teach. 1989, 26, 65–77.

28. Murray, J. Better testing for better learning. Coll. Teach. 1990, 38, 148–152.

29. Fernald, P.; Webster, S. The merits of the take-home, closed book exam. J. Hum. Educ. Dev. 1991, 29, 130–142.

30. Ansell, H. Learning partners in an engineering class. In Proceedings of the 1996 ASEE Annual Conference, Washington, DC, USA, 23–26 June 1996.

31. Norcini, J.; Lipner, R.; Downing, S. How meaningful are scores on a take-home recertification examination. Acad. Med. 1996, 71, 71–73.

32. Haynie, W. Effects of take-home tests and study questions on retention learning in technology education. J. Technol. Educ. 2003, 14, 6–18.

33. Tsaparlis, G.; Zoller, U. Evaluation of higher vs. lower-order cognitive skills-type examination in chemistry; implications for in-class assessment and examination. Univ. Chem. Educ. 2003, 7, 50–57.

34. Giordano, C.; Subhiyah, R.; Hess, B. An analysis of item exposure and item parameter drift on a take-home recertification exam. In Proceedings of the Annual Meeting of the American Educational Research Association, Montreal, QC, Canada, 11–15 April 2005.

35. Moore, R.; Jensen, P. Do open-book exams impede long-term learning in introductory biology courses? J. Coll. Sci. Teach. 2007, 36, 46–49.

36. Frein, S. Comparing in-class and out-of-class computer-based tests to traditional paper-and-pencil tests in introductory psychology courses. Teach. Psychol. 2011, 38, 282–287.

37. Marcus, J. Success at any cost? There may be a high price to pay. Times Higher Education London, 27 September 2012; 22–25.

38. Tao, J.; Li, Z. A Case Study on Computerized Take-Home Testing: Benefits and Pitfalls. Int. J. Tech. Teach. Learn. 2012, 8, 33–43.

39. Hagström, L.; Scheja, M. Using meta-reflection to improve learning and throughput: Redesigning assessment procedures in a political science course on power. Assess. Eval. High. Educ. 2014, 39, 242–253.

40. Rich, J.; Colon, A.; Mines, D.; Jivers, K. Creating learner-centered assessment strategies for promoting greater student retention and class participation. Front. Psychol. 2014, 5, 1–3.

41. Sample, S.; Bleedorn, J.; Schaefer, S.; Mikla, A.; Olsen, C.; Muir, P. Students’ perception of case-based continuous assessment and multiple-choice assessment in a small animal surgery course for veterinary medical students. Vet. Surg. 2014, 43, 388–399.

42. Johnson, C.; Green, K.; Galbraith, B.; Anelli, C. Assessing and refining group take-home exams as authentic, effective learning experiences. Res. Teach. 2015, 44, 61–71.

43. Downes, M. University scandal, reputation and governance. Int. J. Educ. Integr. 2017, 13, 8.

44. D’Souza, K.; Siegfeldt, D. A conceptual framework for detecting cheating in online and take-home exams. Decis. Sci. J. Innov. Educ. 2017, 15, 370–391.

45. Lancaster, T.; Clarke, R. Rethinking assessment by examination in the age of contract cheating. In Proceedings of the Plagiarism Across Europe and Beyond, Brno, Czech Republic, 24–26 May 2017; ENAI: Brno, Czech Republic, 2017; pp. 215–228.

46. Foley, D. Instructional potential of teacher-made tests. Teach. Psychol. 1981, 8, 243–244.

47. Kaplan, H.; Sadock, B. Learning theory. In Synopsis of Psychiatry: Behavioral Science/Clinical Psychiatry, 8th ed.; Kaplan, H., Sadock, B., Eds.; Williams & Wilkins: Philadelphia, PA, USA, 2000; pp. 148–154.

48. Berrett, D. Harvard Cheating Scandal Points Out Ambiguities of Collaboration. Chron. High. Educ. 2012, 59, 7.

49. Pennington, B. Cheating scandal dulls pride in Athletics at Harvard. The New York Times, 18 September 2012; B11.

50. Conlin, M. Commentary: Cheating or postmodern learning? Bus. Week 2007, 4043, 42.

51. Young, J. Cheating incident involving 34 students at Duke is Business School’s biggest ever. Chronicle of Higher Education, 30 April 2007.

52. Coughlin, E. West Point to Review 823 Exam Papers. Chronicle of Higher Education, 31 May 1976; 12.

53. Vassar, R. Take-home exams are not the solution. The Stanford Daily, 8 October 2012.

54. Agarwal, P.; Karpicke, J.; Kang, S.; Roediger, H., III; McDermott, K. Examining the Testing Effect with Open- and Closed-Book Tests. Appl. Cognit. Psychol. 2008, 22, 861–876.

55. Ebel, R. Essentials of Educational Measurement; Prentice Hall: Englewood Cliffs, NJ, USA, 1972.

| Database | Hits |
|---|---|
| Education database | 474 |
| Education research complete | 22 |
| ERIC | 33 |
| Scopus | 17 |
| Web of Science | 3 |
| Google Scholar | 1010 |

| Work | Q1 | Q2 | Q3 | Q4 | Q5 | Q6 | Retention |
|---|---|---|---|---|---|---|---|
| Freedman, 1968 [22] | ✓ | ✓ | ✓ | ✓ | |||
| Svoboda, 1971 [10] | ✓ | ✓ | ✓ | ✓ | ✓ | ||
| Marsh, 1980 [23] | ✓ | ✓ | ✓ | ✓ | |||
| Weber et al., 1983 [24] | ✓ | ✓ | ✓ | ✓ | |||
| Marsh, 1984 [25] | ✓ | ✓ | ✓ | ✓ | |||
| Grzelkowski, 1987 [26] | ✓ | ✓ | |||||
| Zoller and Ben-Chaim, 1989 [27] | ✓ | ||||||
| Murray, 1990 [28] | ✓ | ✓ | ✓ | ||||
| Fernald and Webster, 1991 [29] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
| Haynie, 1991 [17] | ✓ | ✓ | ✓ | ✓ | ✓ | ||
| Andrada and Linden, 1993 [8] | ✓ | ✓ | ✓ | ✓ | ✓ | ||
| Ansell, 1996 [30] | ✓ | ||||||
| Norcini et al., 1996 [31] | ✓ | ✓ | ✓ | ||||
| Hall, 2001 [15] | ✓ | ✓ | ✓ | ||||
| Mallory, 2001 [2] | ✓ | ||||||
| Zoller, 2001 [12] | ✓ | ||||||
| Haynie, 2003 [32] | ✓ | ✓ | ✓ | ||||
| Bredon, 2003 [11] | ✓ | ✓ | ✓ | ||||
| Tsaparlis and Zoller, 2003 [33] | ✓ | ✓ | ✓ | ✓ | ✓ | ||
| Giordano et al., 2005 [34] | ✓ | ✓ | |||||
| Moore and Jensen, 2007 [35] | ✓ | ✓ | |||||
| Williams and Wong, 2009 [1] | ✓ | ✓ | ✓ | ✓ | ✓ | ||
| Frein, 2011 [36] | ✓ | ||||||
| Giammarco, 2011 [6] | ✓ | ✓ | ✓ | ||||
| Lopez et al., 2011 [3] | ✓ | ✓ | ✓ | ✓ | |||
| Rich, 2011 [4] | ✓ | ✓ | ✓ | ✓ | ✓ | ||
| Marcus, 2012 [37] | ✓ | ||||||
| Tao and Li, 2012 [38] | ✓ | ✓ | ✓ | ||||
| Hagström and Scheja, 2014 [39] | ✓ | ✓ | ✓ | ||||
| Rich et al., 2014 [40] | ✓ | ✓ | |||||
| Sample et al., 2014 [41] | ✓ | ||||||
| Johnson et al., 2015 [42] | ✓ | ✓ | ✓ | ||||
| Downes, 2017 [43] | ✓ | ||||||
| D’Souza and Siegfeldt, 2017 [44] | ✓ | ✓ | |||||
| Lancaster and Clarke, 2017 [45] | ✓ | ✓ |

| Work | Method | Validated |
|---|---|---|
| Freedman, 1968 [22] | Personal reflections | No |
| Svoboda, 1971 [10] | Personal comments based on experience | No |
| Marsh, 1980 [23] | Hypothesis testing | Yes |
| Weber et al., 1983 [24] | Hypothesis testing | Yes |
| Marsh, 1984 [25] | Hypothesis testing | Yes |
| Grzelkowski, 1987 [26] | Case study | No |
| Zoller and Ben-Chaim, 1989 [27] | Survey + Case study | Yes |
| Murray, 1990 [28] | Review | No |
| Fernald and Webster, 1991 [29] | Case study | No |
| Haynie, 1991 [17] | Hypothesis testing | Yes |
| Andrada and Linden, 1993 [8] | Hypothesis testing | Yes |
| Ansell, 1996 [30] | Collaborative THE (voluntary pairing) + final ICE | No |
| Norcini et al., 1996 [31] | Hypothesis testing | Yes |
| Hall, 2001 [15] | Pilot study with optional take-home test | No |
| Mallory, 2001 [2] | Case study | No |
| Zoller, 2001 [12] | Case study | No |
| Haynie, 2003 [32] | Hypothesis testing | Yes |
| Bredon, 2003 [11] | Multiple-choice THE | Yes |
| Tsaparlis and Zoller, 2003 [33] | Synthesizing from others | Yes |
| Giordano et al., 2005 [34] | Hypothesis testing | Yes |
| Moore and Jensen, 2007 [35] | Hypothesis testing | Yes |
| Williams and Wong, 2009 [1] | Online survey, students reminded by e-mail | No |
| Frein, 2011 [36] | Hypothesis testing | Yes |
| Giammarco, 2011 [6] | Hypothesis testing | Yes |
| Lopez et al., 2011 [3] | Case study | No |
| Rich, 2011 [4] | Theoretical work | No |
| Marcus, 2012 [37] | Review | No |
| Tao and Li, 2012 [38] | Hypothesis testing | Yes |
| Hagström and Scheja, 2014 [39] | Hypothesis testing | Yes |
| Rich et al., 2014 [40] | Hypothesis testing | Yes |
| Sample et al., 2014 [41] | Hypothesis testing | Yes |
| Johnson et al., 2015 [42] | Case study | Yes |
| Downes, 2017 [43] | Review | No |
| D’Souza and Siegfeldt, 2017 [44] | Hypothesis testing | Yes |
| Lancaster and Clarke, 2017 [45] | Review | No |

| Purported Advantage | Source |
|---|---|
| Reduce students’ anxiety | Fernald and Webster, 1991 [29]; Giordano et al., 2005 [34]; Hall, 2001 [15]; Johnson et al., 2015 [42]; Rich, 2011 [4]; Rich et al., 2014 [40]; Tao and Li, 2012 [38]; Weber et al., 1983 [24]; Williams and Wong, 2009 [1]; Zoller and Ben-Chaim, 1989 [27] |
| Can be designed to test HOCS | Williams and Wong, 2009 [1]; Andrada and Linden, 1993 [8]; Fernald and Webster, 1991 [29]; Freedman, 1968 [22]; Giordano et al., 2005 [34]; Johnson et al., 2015 [42]; Lopez et al., 2011 [3]; Sample et al., 2014 [41]; Svoboda, 1971 [10]; Zoller, 2001 [12]; Zoller and Ben-Chaim, 1989 [27] |
| Conservation of classroom time | D’Souza and Siegfeldt, 2017 [44]; Fernald and Webster, 1991 [29]; Haynie, 1991 [17]; Svoboda, 1971 [10]; Tao and Li, 2012 [38] |
| Provide a good learning experience | Andrada and Linden, 1993 [8]; Rich et al., 2014 [40]; Zoller and Ben-Chaim, 1989 [27] |
| Places responsibility for learning on the student | Grzelkowski, 1987 [26]; Hall, 2001 [15]; Lopez et al., 2011 [3]; Rich, 2011 [4] |
| Promote learning by testing | Andrada and Linden, 1993 [8]; Freedman, 1968 [22]; Rich, 2011 [4] |
| Students have time to complete time-consuming tasks | Svoboda, 1971 [10] |
| Promote a more realistic study situation | Svoboda, 1971 [10]; Williams and Wong, 2009 [1] |
| Less burdensome for teachers | Lopez et al., 2011 [3]; Svoboda, 1971 [10] |
| Engage students rather than alienate them | Williams and Wong, 2009 [1]; Zoller and Ben-Chaim, 1989 [27] |
| Students spend more time on a THE than on an in-class exam (ICE) | Freedman, 1968 [22]; Rich, 2011 [4] |
| Contribute toward a more interactive teacher/student learning environment | Hall, 2001 [15] |
| Foster the educational process beyond memorization | Foley, 1981 [46] |
| Do not require a venue or supervision | Hall, 2001 [15] |
| Extended involvement of students with course material | Freedman, 1968 [22] |
| Luck is ruled out | Freedman, 1968 [22] |
| Offer flexibility regarding location and time | Williams and Wong, 2009 [1] |
| Bring convenience to both instructor and students | Tao and Li, 2012 [38] |
| Students learn more and study harder | Rich et al., 2014 [40] |
| Force students to consult texts other than the course textbook | Zoller and Ben-Chaim, 1989 [27] |
| Shift perspective from teaching to learning | Lopez et al., 2011 [3] |
| Can also assess the highest Bloom levels | Lopez et al., 2011 [3] |
| More sophisticated questions can be asked | Lopez et al., 2011 [3] |
| Greater rigor in the answers can be demanded | Lopez et al., 2011 [3] |
| Make it possible to assess teamwork | Lopez et al., 2011 [3] |
| Questions can cover the entire syllabus | Lopez et al., 2011 [3] |
| Improve retention | Johnson et al., 2015 [42] |
| Permit testing of content not covered in class/textbook | Johnson et al., 2015 [42] |

| Purported Disadvantage | Source |
|---|---|
| Easily compromised by unethical student behavior | Bredon, 2003 [11]; Downes, 2017 [43]; D’Souza and Siegfeldt, 2017 [44]; Fernald and Webster, 1991 [29]; Frein, 2011 [36]; Hall, 2001 [15]; Lancaster and Clarke, 2017 [45]; Lopez et al., 2011 [3]; Mallory, 2001 [2]; Marsh, 1980 [23]; Marsh, 1984 [25]; Svoboda, 1971 [10]; Tao and Li, 2012 [38]; Williams and Wong, 2009 [1] |
| Students attend fewer lectures | Moore and Jensen, 2007 [35]; Tao and Li, 2012 [38] |
| Students submit fewer extra-credit assignments | Moore and Jensen, 2007 [35]; Tao and Li, 2012 [38] |
| Writing and marking are time-consuming | Andrada and Linden, 1993 [8]; Hall, 2001 [15] |
| Students only hunt for answers | Haynie, 1991 [17] |
| Undermine long-term learning | Moore and Jensen, 2007 [35] |
| Promote lower levels of academic achievement | Moore and Jensen, 2007 [35] |
| Items used on THEs are forfeit | Andrada and Linden, 1993 [8] |
| Generate long answers | Rich et al., 2014 [40] |

| Remedy | Source |
|---|---|
| If questions are designed to require a thorough understanding of course material, they will be costly to contract out | Bredon, 2003 [11] |
| Ask for proofs and justifications for all answers | Lopez et al., 2011 [3]; Svoboda, 1971 [10] |
| Introduce an honor code | Fernald and Webster, 1991 [29]; Frein, 2011 [36] |
| Lower the grade for material copied without reference | Freedman, 1968 [22] |
| Submit electronically to permit plagiarism checking | Williams and Wong, 2009 [1] |
| Answers must make direct reference to course-specific material | Williams and Wong, 2009 [1] |
| Make questions “highly contextualized” | Williams and Wong, 2009 [1] |
| Cohort cheating can be detected by statistical means | D’Souza and Siegfeldt, 2017 [44]; Weber et al., 1983 [24] |
| Narrow the timeframe for completing the test | Frein, 2011 [36]; Lancaster and Clarke, 2017 [45] |
| Randomly scramble the order of questions and answers | Frein, 2011 [36]; Tao and Li, 2012 [38] |
| Use a secure browser to prevent printing and saving of the exam | Tao and Li, 2012 [38] |
| Implement remote invigilation services | Lancaster and Clarke, 2017 [45]; Mallory, 2001 [2] |
| Assign questions randomly | Murray, 1990 [28] |
| Ask for hand-written answers | Lopez et al., 2011 [3] |
| Print exam with watermarks | Lopez et al., 2011 [3] |
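Two of the remedies above, randomly scrambling the order of questions and answers (Frein, 2011 [36]; Tao and Li, 2012 [38]) and assigning questions randomly (Murray [28]), can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure used in the cited studies: it assumes a bank of multiple-choice items, and the names `Item`, `seed_for`, and `scramble_exam` are hypothetical. Each student's version is derived from a seed computed from the student and exam identifiers, so the same version and answer key can be regenerated at grading time.

```python
import hashlib
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Item:
    """Hypothetical multiple-choice item; `correct` indexes into `options`."""
    prompt: str
    options: list[str]
    correct: int


def seed_for(student_id: str, exam_id: str) -> int:
    """Derive a stable per-student seed (built-in hash() of str is salted per process)."""
    digest = hashlib.sha256(f"{exam_id}:{student_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def scramble_exam(pool: list[Item], student_id: str, exam_id: str,
                  k: Optional[int] = None) -> tuple[list[Item], list[int]]:
    """Draw k items from the pool (all of them if k is None) in random order,
    shuffle each item's options, and return the version plus its answer key."""
    rng = random.Random(seed_for(student_id, exam_id))
    n = len(pool) if k is None else k
    chosen = rng.sample(range(len(pool)), n)  # random subset, already in random order
    version: list[Item] = []
    for i in chosen:
        item = pool[i]
        perm = list(range(len(item.options)))
        rng.shuffle(perm)  # perm[new_position] == original option index
        options = [item.options[j] for j in perm]
        version.append(Item(item.prompt, options, perm.index(item.correct)))
    key = [it.correct for it in version]
    return version, key


pool = [Item("Q1", ["a", "b", "c"], 0),
        Item("Q2", ["x", "y"], 1),
        Item("Q3", ["p", "q", "r", "s"], 3)]
version, key = scramble_exam(pool, student_id="s001", exam_id="exam-2019")
for item in version:
    print(item.prompt, item.options, "correct:", item.options[item.correct])
```

Deriving the seed with SHA-256 rather than Python's built-in `hash()` is deliberate: string hashing is randomized per interpreter process, so versions would otherwise not be reproducible across runs. Scrambling alone does not stop students from sharing answers by question content; it mainly raises the cost of copying answer positions.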

© 2019 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Bengtsson, L. Take-Home Exams in Higher Education: A Systematic Review. Educ. Sci. 2019, 9, 267. https://doi.org/10.3390/educsci9040267