Assessment of Competencies in Scientific Inquiry Through the Application of Rasch Measurement Techniques

Abstract

1. Introduction

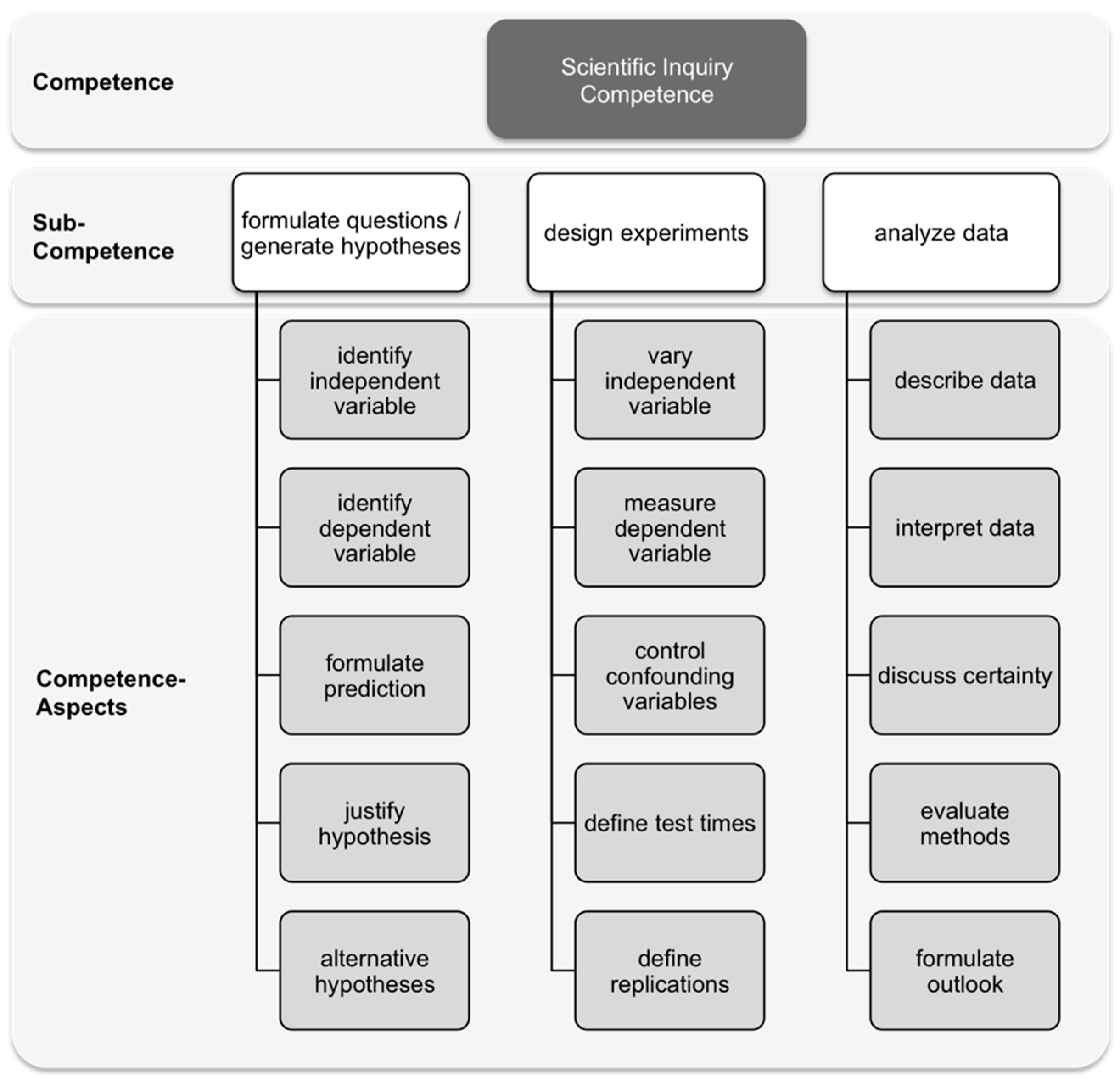

1.1. Scientific Inquiry Competence

1.2. Assessments of Scientific Inquiry Competences

2. Materials and Methods

- Item contexts include challenging, curriculum-relevant topics for biology instruction in upper grades, e.g., ecology, neurophysiology, ethology, and enzymology.

- Students can answer items without specific content knowledge. When content knowledge is needed, it is provided in the item context [57].

- Items that concern “formulating hypotheses” present the research question in the item.

- Items that emphasize “designing an experiment” include both the research question and the hypothesis.

- Items that emphasize “analyzing data” include the hypothesis to be tested, the experimental design, and the data to be analyzed.

2.1. Final Instrument and Rubric

2.2. Sample and Setting

2.3. Psychometric Analysis and Rasch Partial-Credit Model
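
As a minimal sketch of the model named in this heading, the Rasch partial-credit model gives the probability of each score category from a person measure and the step difficulties of an item. The function name `pcm_probabilities` and the example values are illustrative assumptions and are not taken from the study's analysis.

```python
import math


def pcm_probabilities(theta, step_difficulties):
    """Category probabilities under the Rasch partial-credit model.

    theta: person measure in logits.
    step_difficulties: step difficulties delta_1..delta_m of one item.
    Returns a list of m + 1 probabilities for scores 0..m.
    """
    # Score x has the cumulative logit sum_{k<=x} (theta - delta_k);
    # score 0 corresponds to the empty sum, which is 0.
    cumulative = [0.0]
    for delta in step_difficulties:
        cumulative.append(cumulative[-1] + (theta - delta))

    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(cumulative)
    weights = [math.exp(value - peak) for value in cumulative]
    total = sum(weights)
    return [weight / total for weight in weights]


# Hypothetical item with three steps (scores 0 to 3), person at 0.5 logits.
print(pcm_probabilities(0.5, [-1.0, 0.2, 1.5]))
```

With a single step difficulty the function reduces to the dichotomous Rasch model, which is a quick way to sanity-check it.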

3. Results

3.1. Item Fit

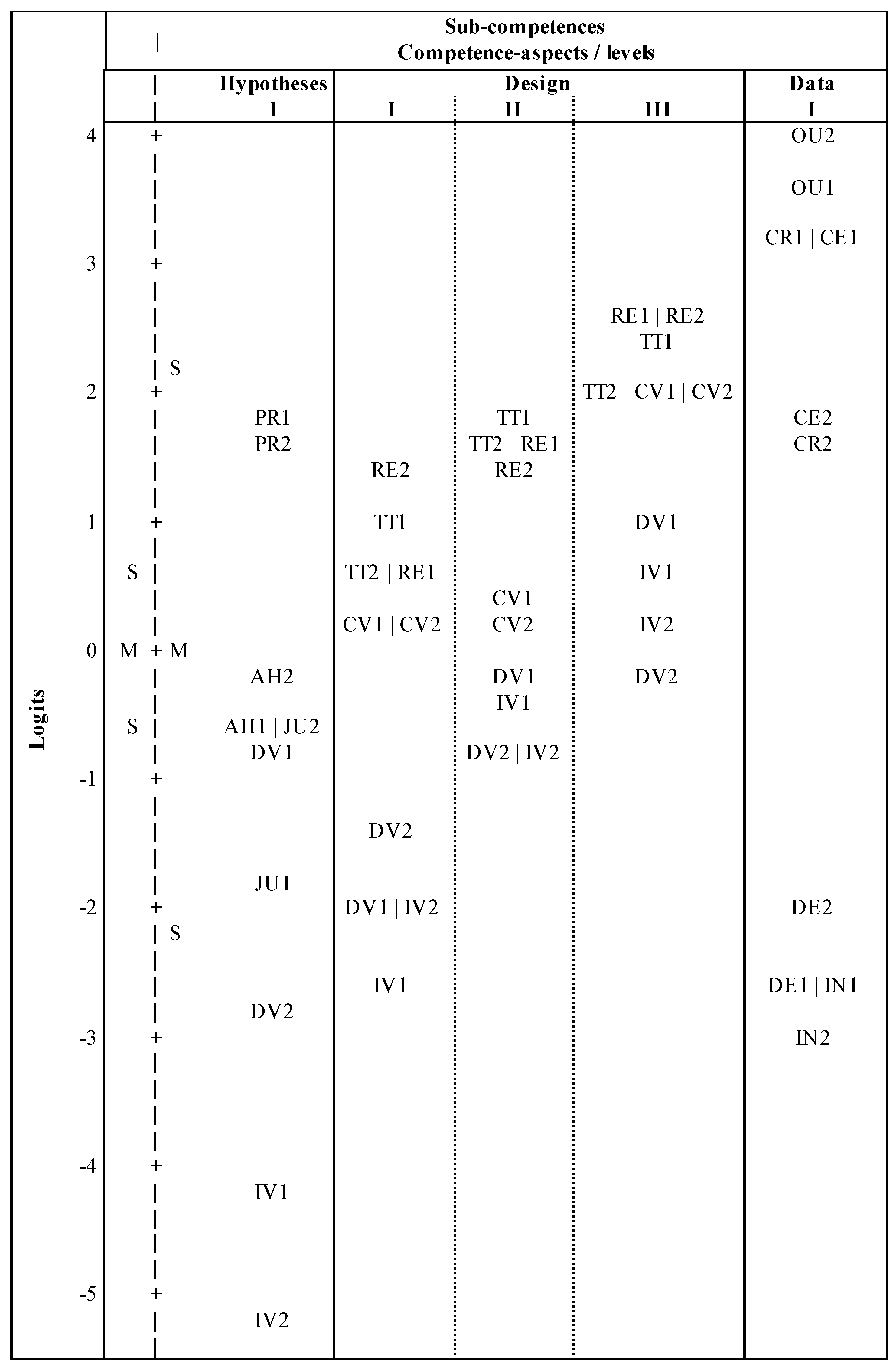

3.2. Item-Difficulty Order

- ‘Generating hypotheses’: independent variable <> dependent variable < justification < alternative hypotheses. For “prediction”, no expected item difficulty could be found in the literature, but we hypothesize that this aspect is more difficult than “independent variable” and “dependent variable” because students tend to think of possible causes first before mentioning a result.

- ‘Designing Experiments’: independent variable <> dependent variable < confounding variables < test times <> replication.

- ‘Analyzing Data’: interpretation <> description < certainty <> criticism. For “outlook”, no expected item difficulty could be found in the literature, but we hypothesize that this aspect is more difficult than “description” and “interpretation”.
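
A hypothesized difficulty order of this kind can be checked against estimated item measures. The sketch below assumes that “<>” means no difference is predicted, so aspects joined by “<>” are placed in the same tier, and that “<” means every aspect in the easier tier has a lower measure than every aspect in the harder tier. The function name `order_holds` and the example measures are hypothetical and are not results from this study.

```python
def order_holds(tiers, measures):
    """Check that each tier is strictly easier than the next one.

    tiers: list of lists of aspect names, from easiest to hardest.
    measures: dict mapping aspect name to item difficulty in logits.
    """
    for easier, harder in zip(tiers, tiers[1:]):
        hardest_of_easier = max(measures[aspect] for aspect in easier)
        easiest_of_harder = min(measures[aspect] for aspect in harder)
        if hardest_of_easier >= easiest_of_harder:
            return False
    return True


# Hypothesized order for 'Generating hypotheses' from the list above.
HYPOTHESIS_TIERS = [
    ["independent variable", "dependent variable"],
    ["justification"],
    ["alternative hypotheses"],
]

# Illustrative measures only (assumed values, not study data).
example_measures = {
    "independent variable": -1.2,
    "dependent variable": -0.9,
    "justification": 0.4,
    "alternative hypotheses": 1.6,
}

print(order_holds(HYPOTHESIS_TIERS, example_measures))
```

A stricter check would also take the standard errors of the measures into account before treating two tiers as different.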

3.3. Reliability and Sensitivity

4. Discussion

4.1. Instrument Evaluation

4.2. Potentials for Curricula Development and Teaching

4.3. Potentials for Individual Diagnosis and Feedback

4.4. Limitations of the Study

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

Appendix A

| Author(s) | Beaumont-Walters & Soyibo (2001) | Chinn & Malhotra (2002) | Dillashaw & Okey (1980) & Burns, Okey & Wise (1985) | Fraser (1980) | Germann, Aram, & Burke (1996); Germann & Aram (1996a, 1996b) | Harwood (2004) | Hofstein, Navon, Kipnis, & Mamlok-Naaman (2005) | Klahr & Dunbar (1988) | Lin & Lehman (1999) | Mayer et al. (2008)/Kremer et al. (2013) | Meier & Mayer (2011) | Phillips & Germann (2002) | Rönnebeck et al. (2016) | Tamir, Nussinovitz & Friedler (1982) | Temiz, Tasar & Tan (2006) | Tobin & Capie (1982) | Vorholzer et al. (2016) | Wellnitz & Mayer (2013) | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Sub-Competences Aspects | |||||||||||||||||||

| Formulating questions | X | X | X | X | X | X | X | X | X | X | X | X | |||||||

| Dependent variable | X | X | |||||||||||||||||

| Independent variable | X | X | |||||||||||||||||

| Causal question | X | X | X | ||||||||||||||||

| Generating hypotheses | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | ||||

| Dependent variable | X | X | X | X | X | X | |||||||||||||

| Independent variable | X | X | X | X | X | X | X | ||||||||||||

| Prediction | X | X | X | X | X | X | X | X | |||||||||||

| Justification | X | X | X | X | X | X | X | ||||||||||||

| Alternative hypotheses | X | X | X | X | |||||||||||||||

| Designing and conducting an experiment | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | ||

| Dependent variable | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | ||||

| Independent variable | X | X | X | X | X | X | X | X | X | X | X | X | X | X | |||||

| Confounding variables | X | X | X | X | X | X | X | X | X | X | X | ||||||||

| Test times | X | X | |||||||||||||||||

| Repetition | X | X | X | X | X | X | |||||||||||||

| Analyzing Data | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | |

| Description | X | X | X | X | X | X | X | X | |||||||||||

| Interpretation | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | |

| Certainty | X | X | X | X | X | X | X | X | X | ||||||||||

| Criticism | X | X | X | X | X | X | X | ||||||||||||

| Outlook | X | X | X | X | X | X | |||||||||||||

| Source | Instrument | Grade Level | Test Format | Test Theory |
|---|---|---|---|---|
| Beaumont-Walters & Soyibo (2001) | Test of Integrated Science Process Skills (TISPS) | 9–10 | Open-ended (Hands-on) | CTT |
| Dillashaw & Okey (1980) & Burns, Okey & Wise (1985) | Test of Integrated Process Skills (TIPS) | 7–12 | Multiple-choice | CTT |
| Fraser (1980) | Test of Enquiry Skills (TOES) | 7–10 | Multiple-choice | CTT |
| Germann, Aram & Burke (1996); Germann & Aram (1996a, 1996b) | Science Process Skills Inventory (SPSI) | 7 | Open-ended (Hands-on) | CTT |
| Mayer et al. (2008) | Biology in Context (BiK) | 5–10 | Open-ended | IRT |
| Nowak et al. (2013) | Model of cross-linking scientific inquiry between biology and chemistry (VerE) | 9–10 | Multiple-choice | IRT |
| Tamir, Nussinovitz & Friedler (1982) | Practical Tests Assessment Inventory (PTAI) | 12 | Open-ended (Hands-on) | CTT |
| Temiz, Tasar & Tan (2006) | Multiple format test of science process skills (MFT-SPS) | 9 | Open-ended (Hands-on) | CTT |
| Tobin & Capie (1982) | Test of Integrated Science Processes (TISP) | 6–9 | Multiple-choice | CTT |
| Vorholzer et al. (2016) | Experimental Thinking and Working Methods Test (EDAWT) | 11 | Multiple-choice | IRT |
| Kremer et al. (2012) | Evaluation of National Educational Standards (ESNaS) | 9–10 | Multiple-choice, short answers & open-ended | IRT |

| Aspect | Level | Description |
|---|---|---|
| Sub-competence: Hypotheses | | |
| Dependent variable | | The variable to be observed or measured is named. |
| Independent variable | | One variable that might cause change in the dependent variable is named. |
| Prediction | | The relationship between dependent and independent variable is formulated as a conditional sentence (e.g., using “if” and “then”). |
| Justification | | The choice of independent variable is justified. |
| Alternative hypothesis | | At least one alternative hypothesis/independent variable is named. |
| Sub-competence: Design | | |
| Dependent variable | I | Something is observed unspecifically without mentioning or operationalizing any specific dependent variable. |
| | II | Specific dependent variable is named, but not operationalized quantitatively. |
| | III | Specific dependent variable is named and operationalized quantitatively. |
| Independent variable | I | Independent variable is varied without any specification. |
| | II | Independent variable is varied using qualitative specifications of variation. |
| | III | Independent variable is varied using at least one quantitative specification of variation. |
| Confounding variables | I | Global mentioning of controlling confounding variables. |
| | II | One or two specific confounding variables are named/controlled. |
| | III | More than two specific confounding variables are named/controlled. |
| Test times | I | One specification about test times is given (start, duration or intervals of measurement). |
| | II | Two specifications about test times are given (start and/or duration and/or intervals of measurement). |
| | III | Three specifications about test times are given (start and duration and intervals of measurement). |
| Repetition | I | Planning repetition of the test using other objects. |
| | II | Planning repetition of the test using the same object. |
| | III | Planning repetition of the test using the same object and other objects. |
| Sub-competence: Data | | |
| Description | | Data is described objectively. |
| Interpretation | | Data is interpreted with respect to hypothesis. |
| Certainty | | Interpretation is evaluated critically/limited to some extent. |
| Criticism | | Procedure is evaluated critically/ideas for improvement are given. |
| Outlook | | Implications for further research/further research questions are formulated. |
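
The graded levels in the rubric above can be read as simple counting rules. The following sketch encodes two of them, “Test times” and “Confounding variables”, under the assumption that a response earning no credit is scored as level 0 (the rubric itself lists only levels I to III). The function names and the example inputs are hypothetical and only illustrate the rules.

```python
# The three test-time specifications named in the rubric.
TEST_TIME_SPECS = {"start", "duration", "intervals"}


def score_test_times(specifications):
    """Level = number of distinct test-time specifications given (0 to 3).

    Level 0 (nothing specified) is an assumption; the rubric starts at I.
    """
    return len(TEST_TIME_SPECS & set(specifications))


def score_confounding(named_variables, global_mention=False):
    """Level for 'Confounding variables'.

    III: more than two specific variables named/controlled.
    II:  one or two specific variables named/controlled.
    I:   only a global mention of controlling confounding variables.
    0:   nothing mentioned (assumed no-credit level).
    """
    count = len(set(named_variables))
    if count > 2:
        return 3
    if count >= 1:
        return 2
    return 1 if global_mention else 0


print(score_test_times(["start", "duration"]))        # two specifications
print(score_confounding(["temperature", "light"]))     # two named variables
print(score_confounding([], global_mention=True))      # global mention only
```

In practice such levels are assigned by trained raters from open-ended answers; the code only makes the level boundaries explicit.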

References

- Abd-El-Khalick, F.; BouJaoude, S.; Duschl, R.; Lederman, N.G.; Mamlok-Naaman, R.; Hofstein, A.; Niaz, M.; Treagust, D.; Tuan, H.L. Inquiry in Science Education: International Perspectives. Sci. Educ. 2004, 88, 397–419.

- Schwartz, R.; Lederman, N.; Crawford, B. Developing views of nature of science in an authentic context: An explicit approach to bridging the gap between nature of science and scientific inquiry. Sci. Educ. 2004, 88, 610–645.

- Arnold, J.C.; Kremer, K.; Mayer, J. Understanding Students’ Experiments—What kind of support do they need in inquiry tasks? Int. J. Sci. Educ. 2014, 36, 2719–2749.

- DfES/QCA (Department for Education and Skills/Qualification and Curriculum Authority). Science—The National Curriculum for England; HMSO: London, UK, 2004.

- Kultusministerkonferenz (KMK). Beschlüsse der Kultusministerkonferenz—Bildungsstandards im Fach Biologie für den Mittleren Schulabschluss; Luchterhand: München, Germany, 2005.

- NRC/National Research Council. National Science Education Standards; National Academy Press: Washington, DC, USA, 1996.

- NRC/National Research Council. Inquiry and the National Science Education Standards; National Academy Press: Washington, DC, USA, 2000.

- NGSS Lead States. Next Generation Science Standards: For States. Available online: http://www.nextgenscience.org/next-generation-science-standards (accessed on 12 January 2013).

- Beaumont-Walters, Y.; Soyibo, K. An Analysis of High School Students’ Performance on Five Integrated Science Process Skills. Res. Sci. Technol. Educ. 2001, 19, 133–145.

- Burns, J.C.; Okey, J.R.; Wise, K.C. Development of an Integrated Process Skill Test: TIPS II. J. Res. Sci. Teach. 1985, 22, 169–177.

- Dillashaw, F.G.; Okey, J.R. Test of the Integrated Science Process Skills for Secondary Science Students. Sci. Educ. 1980, 64, 601–608.

- Nehring, A.; Nowak, K.H.; zu Belzen, A.U.; Tiemann, R. Predicting Students’ Skills in the Context of Scientific Inquiry with Cognitive, Motivational, and Sociodemographic Variables. Int. J. Sci. Educ. 2015, 37, 1343–1363.

- Nehring, A.; Nowak, K.H.; zu Belzen, A.U.; Tiemann, R. Doing Inquiry in Chemistry and Biology—The Context’s Influence on the Students’ Cognitive Load. La Chimica Nella Scuola XXXIV-3 2012, 3, 227–258.

- Mayer, J.; Wellnitz, N. Die Entwicklung von Kompetenzstrukturmodellen. In Methoden in der Naturwissenschaftsdidaktischen Forschung; Krüger, D., Parchmann, I., Schecker, H., Eds.; Springer: Berlin, Germany, 2014; pp. 19–29.

- Tamir, P.; Nussinovitz, R.; Friedler, Y. The design and use of a Practical Tests Assessment Inventory. J. Biol. Educ. 1982, 16, 42–50.

- Liu, O.L.; Lee, H.-S.; Linn, M.C. Measuring knowledge integration: Validation of four-year assessments. J. Res. Sci. Teach. 2011, 48, 1079–1107.

- Stecher, B.M.; Klein, S.P. The cost of Science Performance Assessments in Large-Scale Testing Programs. Educ. Eval. Policy Anal. 1997, 19, 1–14.

- Klahr, D.; Dunbar, K. Dual space search during scientific reasoning. Cogn. Sci. 1988, 12, 1–48.

- Mayer, J. Erkenntnisgewinnung als wissenschaftliches Problemlösen. In Theorien in der Biologiedidaktischen Forschung; Krüger, D., Vogt, H., Eds.; Springer: Berlin, Germany, 2007; pp. 177–186.

- Wellnitz, N.; Mayer, J. Modelling and Assessing Scientific Methods. In Proceedings of the Annual Meeting of the National Association of Research in Science Teaching (NARST), Orlando, FL, USA, 3–6 April 2011.

- Kremer, K.; Fischer, H.E.; Kauertz, A.; Mayer, J.; Sumfleth, E.; Walpuski, M. Assessment of Standard-based Learning Outcomes in Science Education: Perspectives from the German Project ESNaS. In Making It Tangible—Learning Outcomes in Science Education; Bernholt, S., Neumann, K., Nentwig, P., Eds.; Waxmann: Münster, Germany, 2012; pp. 217–235.

- Osborne, J.; Collins, S.; Ratcliffe, M.; Millar, R.; Duschl, R. What ‘Ideas-about-Science’ Should Be Taught in School Science? A Delphi Study of the Expert Community. J. Res. Sci. Teach. 2003, 40, 692–720.

- Bauer, H.H. Scientific Literacy and the Myth of the Scientific Method; University of Illinois Press: Chicago, IL, USA, 1994.

- McComas, W.F. Ten myths of science: Reexamining what we think we know about the nature of science. Sch. Sci. Math. 1996, 96, 10–15.

- Arnold, J.; Kremer, K.; Mayer, J. Wissenschaftliches Denken beim Experimentieren—Kompetenzdiagnose in der Sekundarstufe II. In Erkenntnisweg Biologiedidaktik 11; Krüger, D., Upmeier zu Belzen, A., Schmiemann, P., Möller, A., Elster, D., Eds.; Universitätsdruckerei: Kassel, Germany, 2013; pp. 7–20.

- Zimmerman, C. The Development of Scientific Reasoning Skills. Dev. Rev. 2000, 20, 99–149.

- Harwood, W.S. A New Model for Inquiry—Is the Scientific Method Dead? J. Coll. Sci. Teach. 2004, 33, 29–33.

- Hofstein, A.; Navon, O.; Kipnis, M.; Mamlok-Naaman, R. Developing students’ ability to ask more and better questions resulting from inquiry-type chemistry laboratories. J. Res. Sci. Teach. 2005, 42, 791–806.

- Rönnebeck, S.; Bernholt, S.; Ropohl, M. Searching for a common ground—A literature review of empirical research on scientific inquiry activities. Stud. Sci. Educ. 2016, 52, 161–197.

- Vorholzer, A.; von Aufschnaiter, C.; Kirschner, S. Entwicklung und Erprobung eines Tests zur Erfassung des Verständnisses experimenteller Denk- und Arbeitsweisen. Z. Für Didakt. Der Naturwiss. 2016, 22, 25–41.

- Germann, P.J.; Aram, R.J. Student Performance on Asking Questions, Identifying Variables, and Formulating Hypotheses. Sch. Sci. Math. 1996, 4, 192–201.

- Mayer, J.; Grube, C.; Möller, A. Kompetenzmodell naturwissenschaftlicher Erkenntnisgewinnung. In Lehr-und Lernforschung in der Biologiedidaktik; Harms, U., Sandmann, A., Eds.; Studienverlag: Innsbruck, Austria, 2008; pp. 63–79.

- Phillips, K.A.; Germann, P.J. The Inquiry ‘I’: A Tool for Learning Scientific Inquiry. Am. Biol. Teach. 2002, 64, 512–520.

- Lin, X.; Lehman, J.D. Supporting learning of variable control in a computer-based biology environment: Effects of prompting college students to reflect on their own thinking. J. Res. Sci. Teach. 1999, 36, 837–858.

- Lawson, A.E. The Generality of Hypothetico-Deductive Reasoning: Making Scientific Thinking Explicit. Am. Biol. Teach. 2000, 62, 482–495.

- Temiz, B.K.; Tasar, M.F.; Tan, M. Development and validation of a multiple format test of science process skills. Int. Educ. J. 2006, 7, 1007–1027.

- Germann, P.J.; Aram, R.; Burke, G. Identifying Patterns and Relationships among the Responses of Seventh-Grade Students to the Science Process Skill of Designing Experiments. J. Res. Sci. Teach. 1996, 33, 79–99.

- Tobin, K.G.; Capie, W. Development and Validation of a Group Test of Integrated Science Processes. J. Res. Sci. Teach. 1982, 19, 133–141.

- Meier, M.; Mayer, J. Selbständiges Experimentieren: Entwicklung und Einsatz eines anwendungsbezogenen Aufgabendesigns. Mathematisch und naturwissenschaftlicher Unterricht 2014, 67, 4–10.

- Fraser, B.J. Development and Validation of a Test of Enquiry Skills. J. Res. Sci. Teach. 1980, 17, 7–16.

- Germann, P.J.; Aram, R.J. Student Performances on the Science Processes of Recording Data, Analyzing Data, Drawing Conclusions, and Providing Evidence. J. Res. Sci. Teach. 1996, 33, 773–798.

- Chinn, C.A.; Malhotra, B.A. Epistemologically authentic inquiry in schools: A theoretical framework for evaluating inquiry tasks. Sci. Educ. 2002, 86, 175–218.

- Kuhn, D.; Dean, D. Is developing scientific thinking all about learning to control variables? Psychol. Sci. 2005, 16, 866.

- de Jong, T.; van Joolingen, W.R. Scientific discovery learning with computer simulations of conceptual domains. Rev. Educ. Res. 1998, 68, 179–201.

- Klahr, D.; Fay, A.L.; Dunbar, K. Heuristics for Scientific Experimentation: A Developmental Study. Cogn. Psychol. 1993, 25, 111–146.

- Dunbar, K. Concept Discovery in a Scientific Domain. Cogn. Sci. 1993, 17, 397–434.

- Schauble, L.; Glaser, R.; Duschl, R.A.; Schulze, S.; John, J. Students’ Understanding of the Objectives and Procedures of Experimentation in the Science Classroom. J. Learn. Sci. 1995, 4, 131–166.

- Hammann, M.; Hoi Phan, T.T.; Ehmer, M.; Grimm, T. Assessing pupils’ skills in experimentation. J. Biol. Educ. 2008, 42, 66–72.

- Chen, Z.; Klahr, D. All Other Things Being Equal: Acquisition and Transfer of the Control of Variables Strategy. Child Dev. 1999, 70, 1098–1120.

- Duggan, S.; Johnson, P.; Gott, R. A critical point in investigative work: Defining variables. J. Res. Sci. Teach. 1996, 33, 461–474.

- Duggan, S.; Gott, R. Intermediate General National Vocational Qualification (GNVQ) Science: A Missed Opportunity for a Focus on Procedural Understanding? Res. Sci. Technol. Educ. 2000, 18, 201–214.

- Lubben, F.; Millar, R. Children’s ideas about the reliability of experimental data. Int. J. Sci. Educ. 1996, 18, 955–968.

- Roberts, R.; Gott, R. A written test for procedural understanding: A way forward for assessment in the UK science curriculum? Res. Sci. Technol. Educ. 2004, 22, 5–21.

- Enger, S.K.; Yager, R.E. The Iowa Assessment Handbook; The Iowa-SS&C Project, The University of Iowa: Iowa City, IA, USA, 1998.

- Arnold, J. Die Wirksamkeit von Lernunterstützungen beim Forschenden Lernen: Eine Interventionsstudie zur Förderung des Wissenschaftlichen Denkens in der Gymnasialen Oberstufe; Logos: Berlin, Germany, 2015.

- Roberts, R.; Gott, R. Assessment of Biology Investigations. J. Biol. Educ. 2003, 37, 114–121.

- Harlen, W. Purposes and Procedures for Assessing Science Process Skills. Assess. Educ. Princ. Policy Pract. 1999, 6, 129–144.

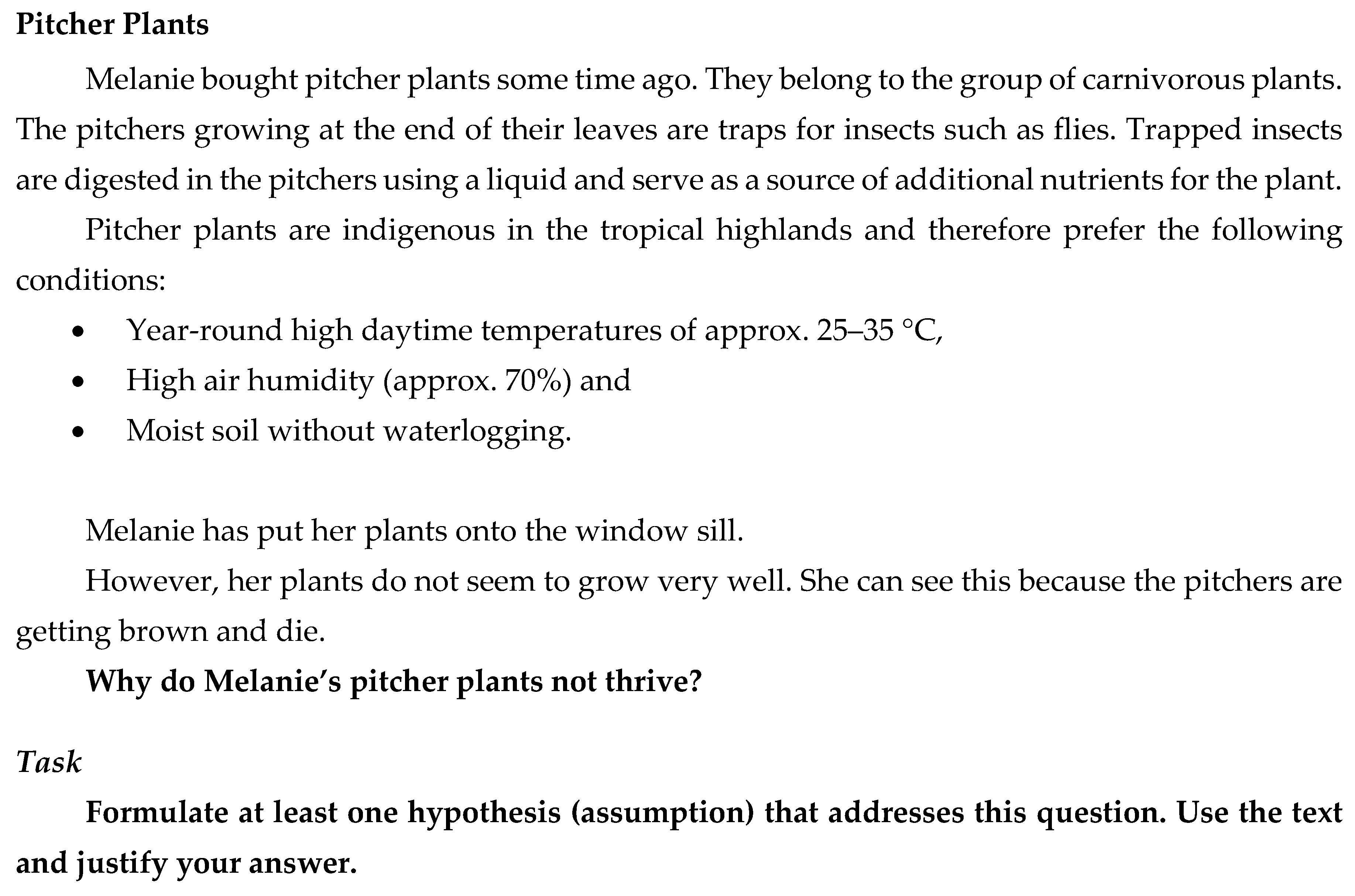

- Arnold, J.; Kremer, K. Hilfe für Kannenpflanzen. In Experimentieren Sie! Biologieunterricht mit Aha-Effekt—Selbstständiges, kompetenzorientiertes Erarbeiten von Lehrplaninhalten; Schmiemann, P., Mayer, J., Eds.; Cornelsen: Berlin, Germany, 2013; pp. 26–28.

- Landis, J.R.; Koch, G.G. The Measurement of Observer Agreement for Categorical Data. Biometrics 1977, 33, 159–174.

- Linacre, J.M. Winsteps® Rasch Measurement Computer Program User’s Guide; Winsteps.com: Beaverton, OR, USA, 2015.

- Wright, B.D. Rack and Stack: Time 1 vs. Time 2 or Pre-Test vs. Post-Test. Rasch Meas. Trans. 2003, 17, 905–906.

- Baghaei, P. The Rasch Model as a Construct Validation Tool. Rasch Meas. Trans. 2008, 22, 1145–1146.

- Linacre, J.M. Test Validity and Rasch Measurement: Construct, Content, etc. Rasch Meas. Trans. 2004, 18, 970–971.

- Boone, W.J.; Staver, J.R.; Yale, M.S. Rasch Analysis in the Human Sciences; Springer: Dordrecht, The Netherlands, 2014.

- Boone, W.J.; Scantlebury, K. The Role of Rasch Analysis When Conducting Science Education Research Utilizing Multiple-Choice Tests. Sci. Educ. 2006, 90, 253–269.

- Bond, T.G.; Fox, C.M. Applying the Rasch Model—Fundamental Measurement in the Human Sciences, 2nd ed.; Routledge: New York, NY, USA, 2012.

- Kremer, K.; Specht, C.; Urhahne, D.; Mayer, J. The relationship in biology between the nature of science and scientific inquiry. J. Biol. Educ. 2013, 48, 1–8.

- Wright, B.D.; Stone, M.H. Best Test Design—Rasch Measurement; MESA Press: Chicago, IL, USA, 1979.

- Boone, W.; Rogan, J. Rigour in quantitative analysis: The promise of Rasch analysis techniques. Afr. J. Res. SMT Educ. 2005, 9, 25–38.

- Fox, C.M.; Jones, J.A. Uses of Rasch Modeling in Counseling Psychology Research. J. Couns. Psychol. 1998, 45, 30–45.

- Nowak, K.H.; Nehring, A.; Tiemann, R.; zu Belzen, A.U. Assessing students’ abilities in processes of scientific inquiry in biology using a paper-and-pencil test. J. Biol. Educ. 2013, 47, 182–188.

- Wright, B.D.; Masters, G.N. Rating Scale Analysis; MESA Press: Chicago, IL, USA, 1982.

- Andrich, D. Rasch Models for Measurement. Quantitative Applications in the Social Sciences; Sage Publications: Thousand Oaks, CA, USA, 1988.

- Combrinck, C.; Scherman, V.; Maree, D. Evaluating anchor items and reframing assessment results through a practical application of the Rasch measurement model. S. Afr. J. Psychol. 2017, 47, 316–329.

- Cunningham, J.D.; Bradley, K.D. Applying the Rasch model to measure change in student performance over time. In Proceedings of the American Educational Research Association Annual Meeting, Denver, CO, USA, 30 April–4 May 2010.

- Doyle, P.J.; Hula, W.D.; McNeil, M.; Mikolic, J.M.; Mathews, C. An application of Rasch analysis to the measurement of communicative functioning. J. Speech Lang. Hear. Res. 2005, 48, 1412–1428.

- Lundgren-Nilsson, A.; Tennant, A.; Grimby, G.; Sunnerhagen, K.S. Cross-diagnostic validity in a generic instrument: An example from the functional independence measure in Scandinavia. Health Qual. Life Outcomes 2006, 23, 4–55.

- Miller, K.J.; Slade, A.L.; Pallant, J.F.; Galea, M.P. Evaluation of the psychometric properties of the upper limb subscales of the motor assessment scale using a Rasch analysis model. J. Rehabil. Med. 2010, 42, 315–322.

| Sub-Competence | Items (Context) |
|---|---|
| Hypothesis | Pitcher Plant (Ecology); Apple Juice (Enzymology) |
| Design | Dummy Experiment (Ethology); Food Preservation (Enzymology) |
| Data | Nicotine (Neurophysiology); Fever (Enzymology) |

| Statistic | Measure (Enzymes) | Error (Enzymes) | Infit MNSQ (Enzymes) | Outfit MNSQ (Enzymes) | Measure (Other) | Error (Other) | Infit MNSQ (Other) | Outfit MNSQ (Other) |
|---|---|---|---|---|---|---|---|---|
| Min. | −4.29 | 0.05 | 0.94 | 0.66 | −5.15 | 0.06 | 0.84 | 0.64 |
| Max. | 3.64 | 0.38 | 1.07 | 1.84 | 4.05 | 0.58 | 1.15 | 1.22 |
| Mean | 0.15 | 0.16 | 1.02 | 1.05 | −0.15 | 0.17 | 0.99 | 0.95 |
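
Mean-square fit summaries like those in the table above are often screened against a tolerance band. The sketch below uses the commonly cited rule of thumb of 0.5 to 1.5 for mean-square values; this band is an assumption for illustration and is not necessarily the criterion applied in this study. The infit and outfit values are copied from the table, while the names `FIT_SUMMARY` and `flag_out_of_range` are hypothetical.

```python
# Infit/outfit mean-square summaries copied from the table above,
# keyed by (item context group, summary statistic).
FIT_SUMMARY = {
    ("Enzymes", "Min."): {"infit": 0.94, "outfit": 0.66},
    ("Enzymes", "Max."): {"infit": 1.07, "outfit": 1.84},
    ("Enzymes", "Mean"): {"infit": 1.02, "outfit": 1.05},
    ("Other", "Min."): {"infit": 0.84, "outfit": 0.64},
    ("Other", "Max."): {"infit": 1.15, "outfit": 1.22},
    ("Other", "Mean"): {"infit": 0.99, "outfit": 0.95},
}


def flag_out_of_range(summary, low=0.5, high=1.5):
    """Return (key, statistic, value) for every value outside [low, high].

    The default band is an assumed rule of thumb, not the study's criterion.
    """
    flagged = []
    for key, stats in summary.items():
        for name, value in stats.items():
            if not (low <= value <= high):
                flagged.append((key, name, value))
    return flagged


print(flag_out_of_range(FIT_SUMMARY))
```

Under this assumed band, only the maximum outfit value in the enzyme contexts (1.84) is flagged; all infit values fall inside it.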

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Arnold, J.C.; Boone, W.J.; Kremer, K.; Mayer, J. Assessment of Competencies in Scientific Inquiry Through the Application of Rasch Measurement Techniques. Educ. Sci. 2018, 8, 184. https://doi.org/10.3390/educsci8040184
