Effects of Modeling Instruction Professional Development on Biology Teachers’ Scientific Reasoning Skills

Abstract

1. Introduction

1.1. Scientific Reasoning

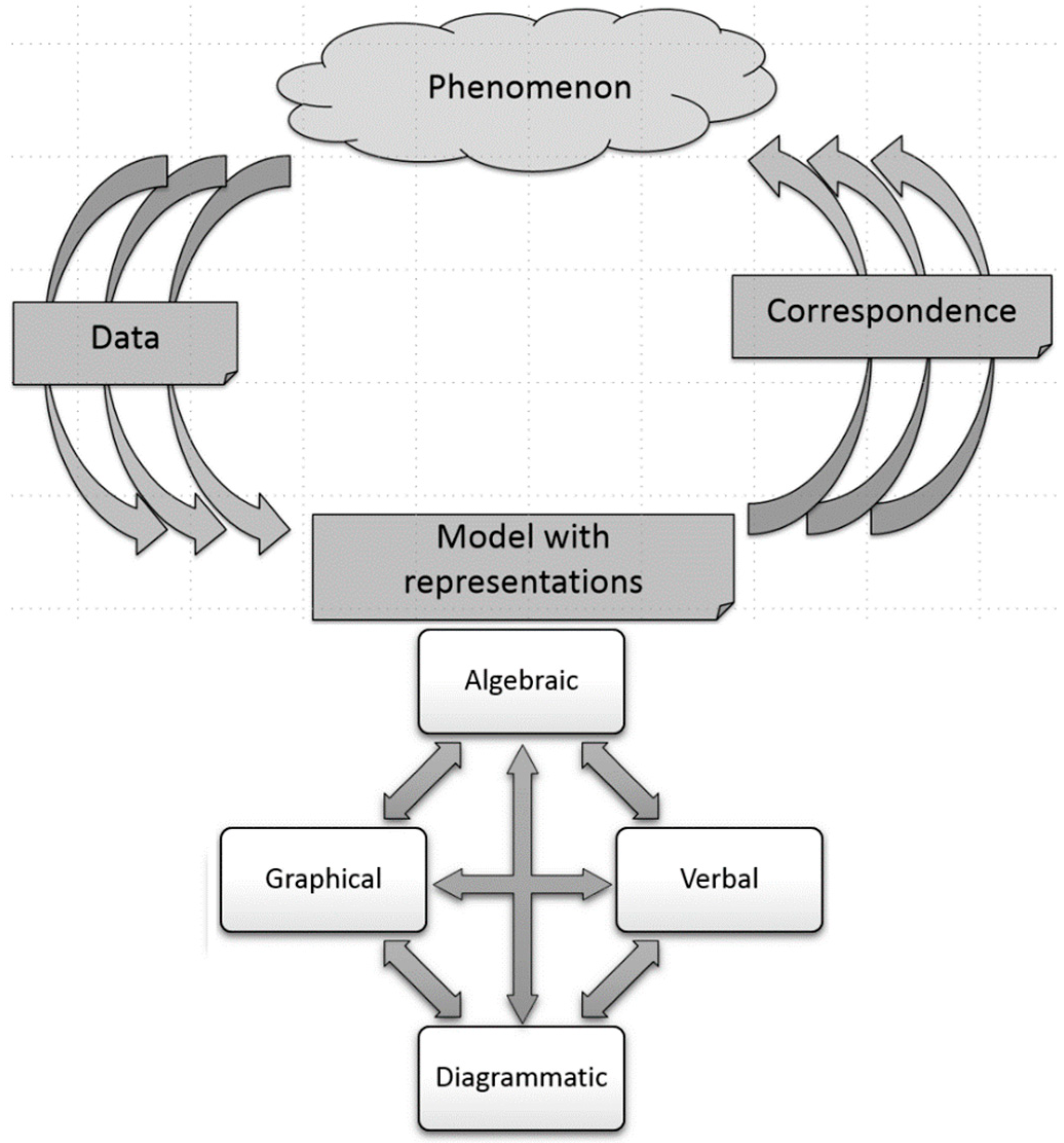

1.2. Modeling Instruction (MI)

Effectiveness of Modeling Instruction on Student Learning and Teacher Learning

1.3. Quality Teacher Workshop Characteristics

2. Materials and Methods

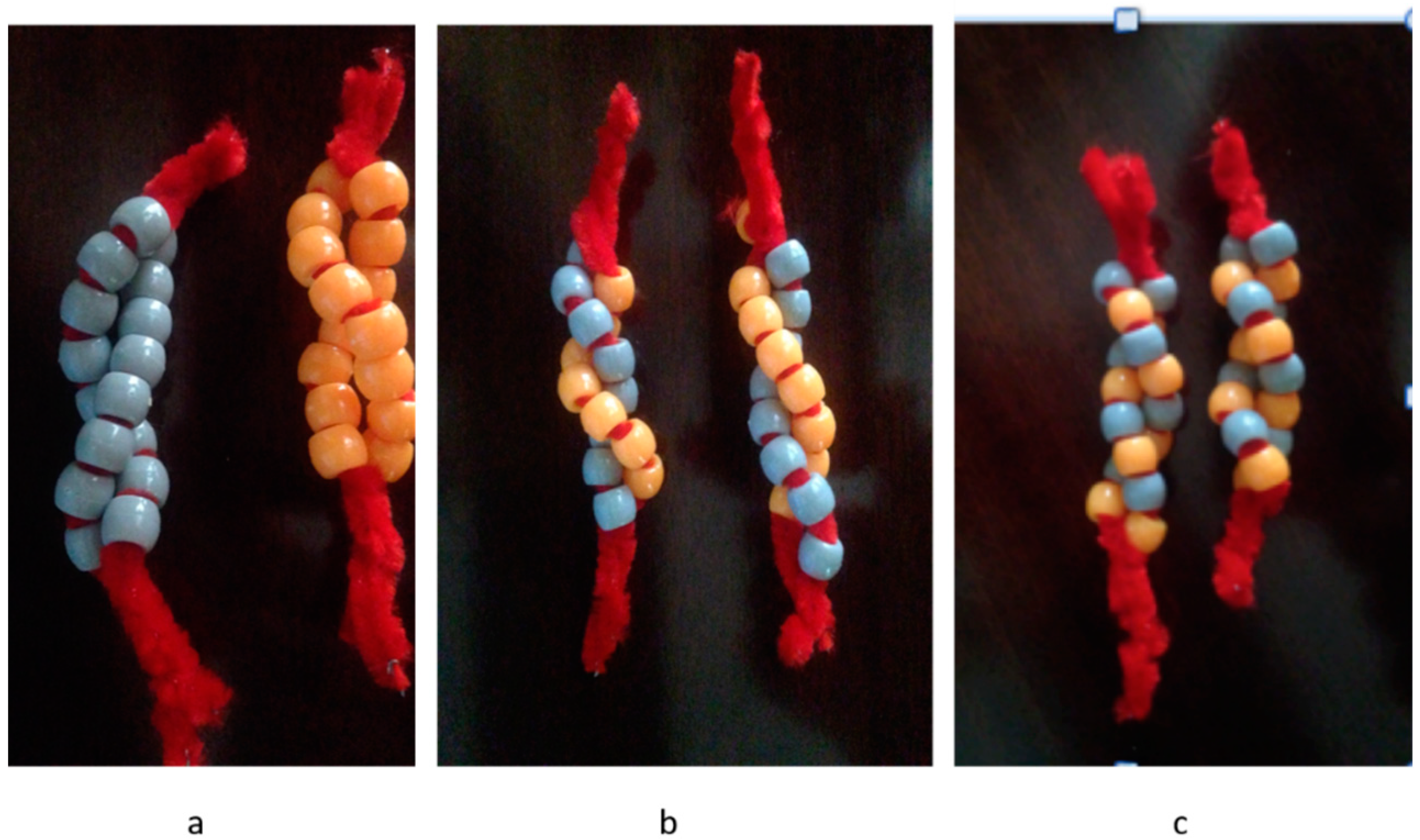

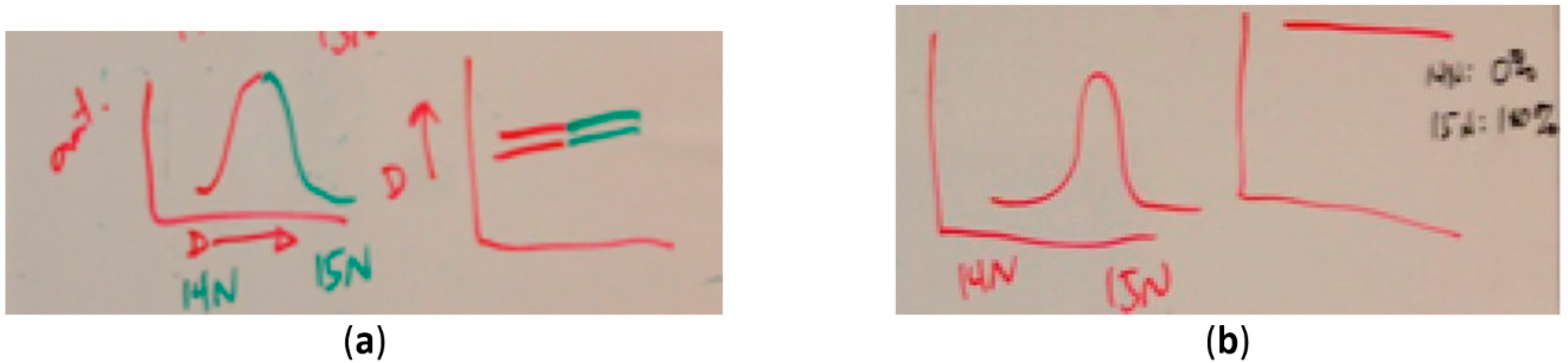

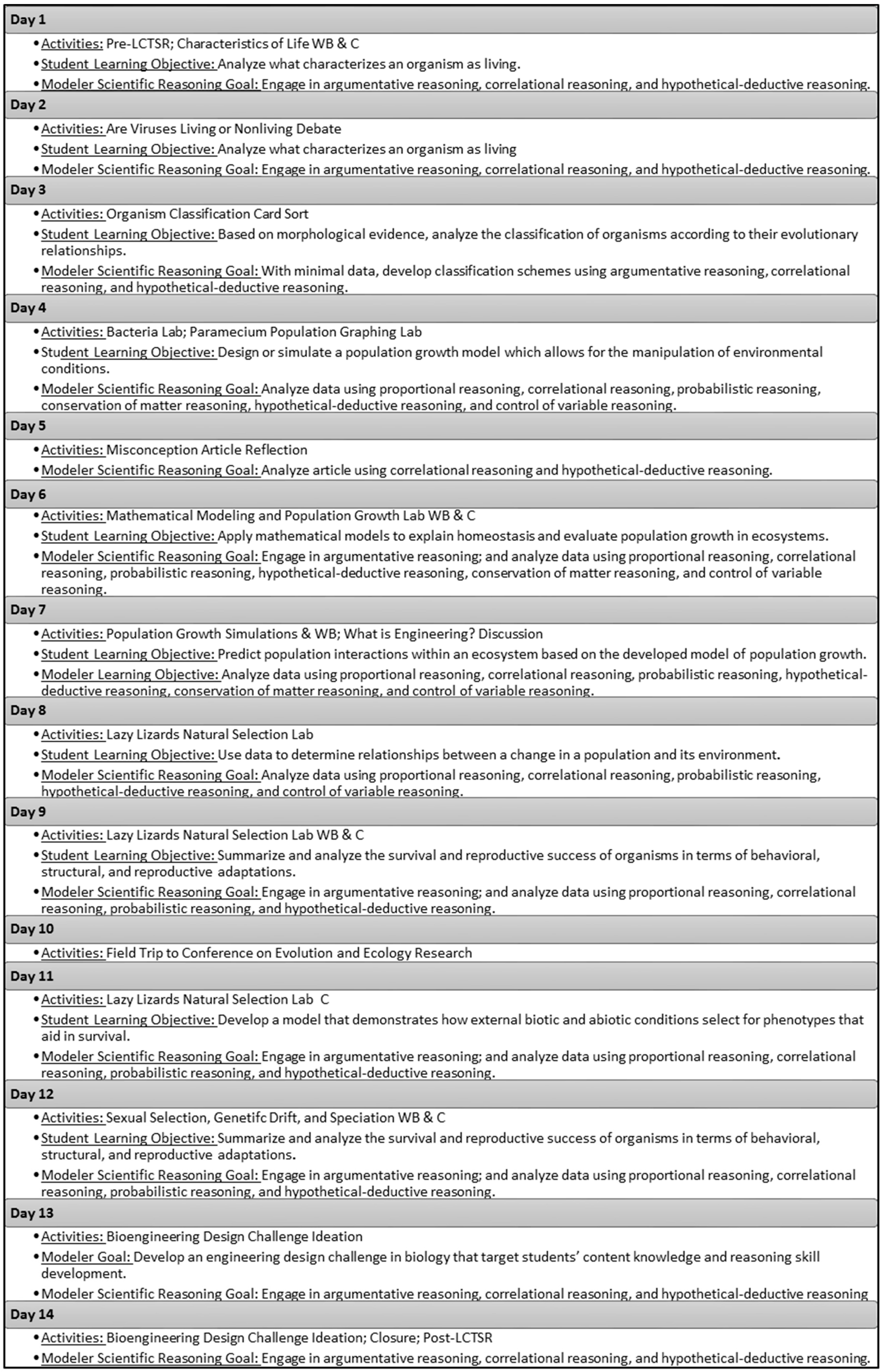

2.1. Workshop Context

2.2. Workshop Participants (Modelers)

2.3. Measures

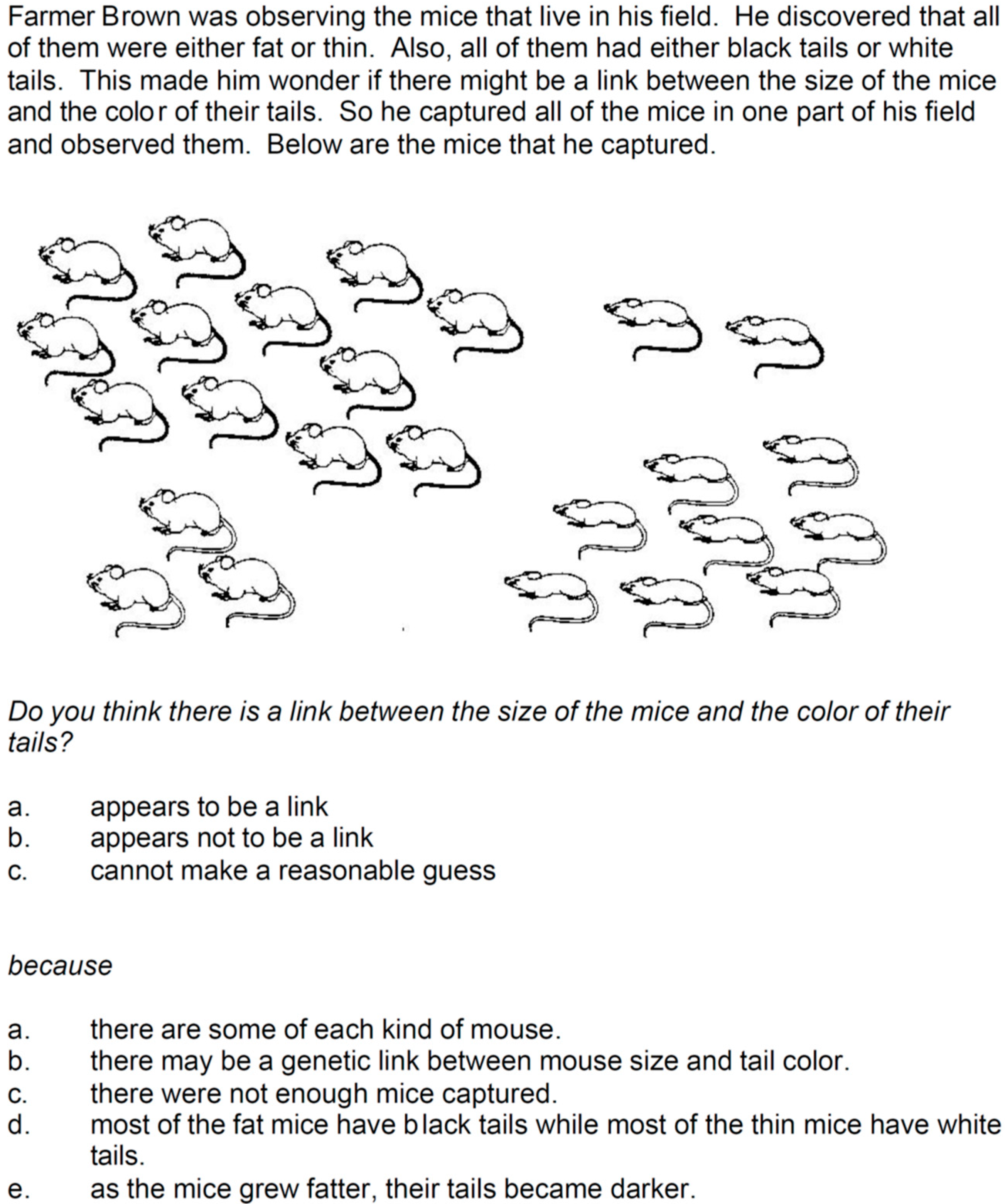

2.3.1. LCTSR

2.3.2. Teacher Focus Groups, Interviews, and Writing Samples

- After the workshop, do you have new ways to help your students experience biology topics in your classroom?

- What do you think your students will learn about biology from incorporating models into your classroom?

- What do you think your students will learn about biology from incorporating modeling instruction into your classroom?

3. Results

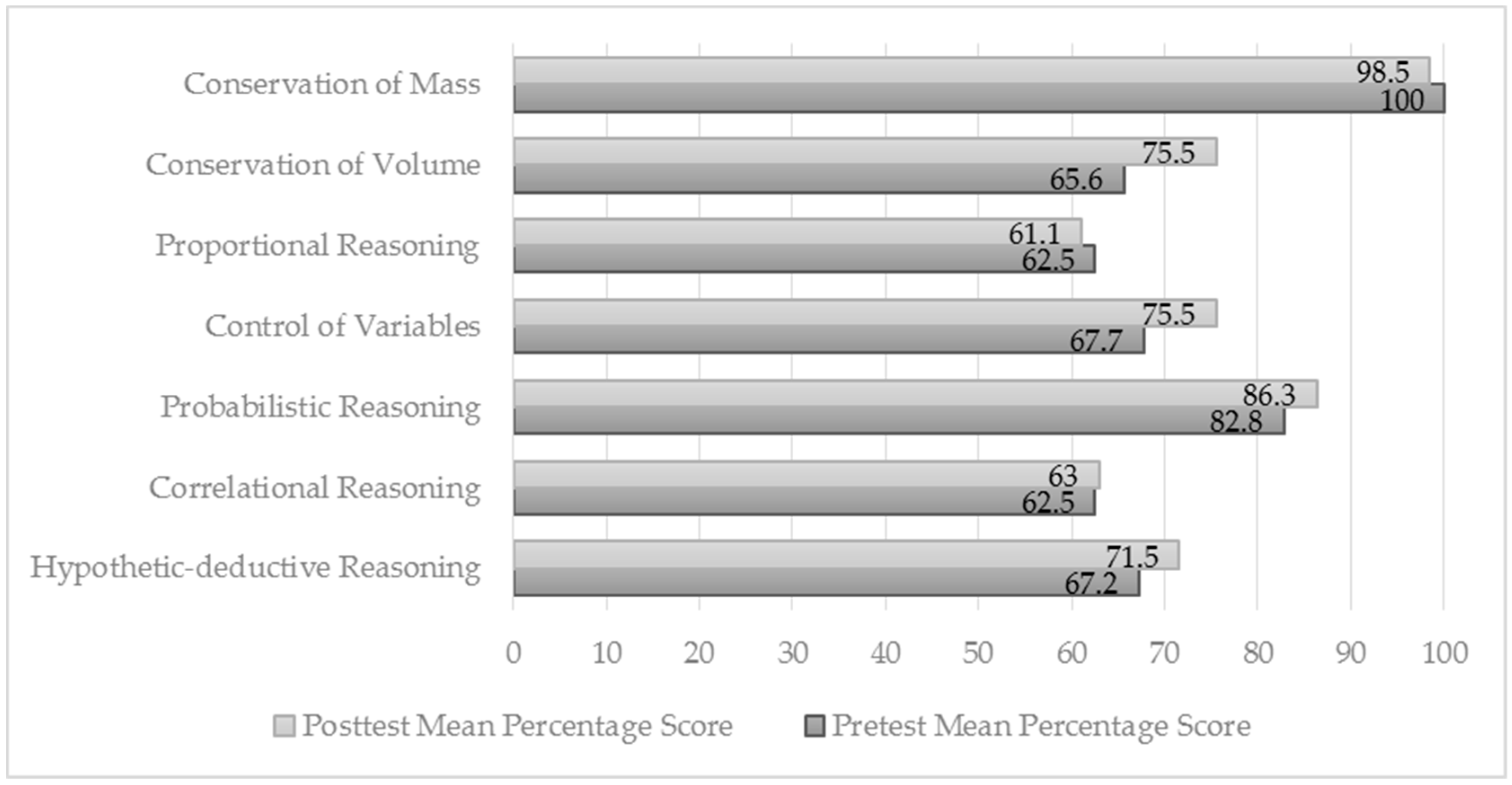

3.1. Single-Tier Item Analysis

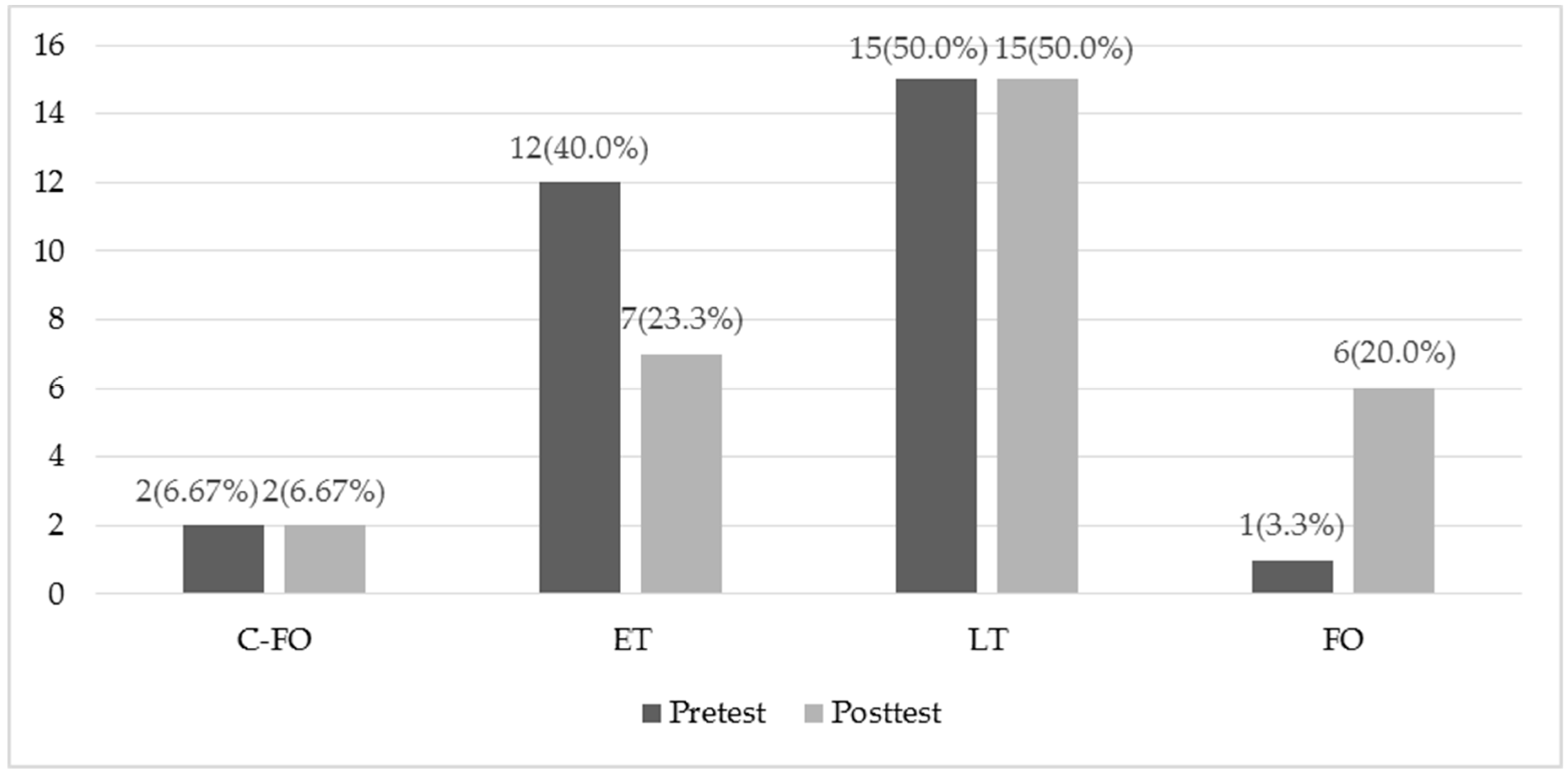

3.2. Two-Tier Item Analysis

3.3. Focus Groups, Interviews, and Writing Samples

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Main Code | Examples Coded from Interviews |
|---|---|
| Control of Variables | FG1: "start with research question and generate data"; FG4: "how to gather data" |
| Proportional Reasoning | FG1: "look for patterns that represent the data" |
| Hypothetico-Deductive Reasoning | FG2: "a process of thinking"; FG3: "[conclusions are] evidence based; evidence and reasoning" |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Stammen, A.N.; Malone, K.L.; Irving, K.E. Effects of Modeling Instruction Professional Development on Biology Teachers’ Scientific Reasoning Skills. Educ. Sci. 2018, 8, 119. https://doi.org/10.3390/educsci8030119
