The Index Number Problem with DEA: Insights from European University Efficiency Data

Abstract

1. Introduction

2. Ranking Systems and Research Data

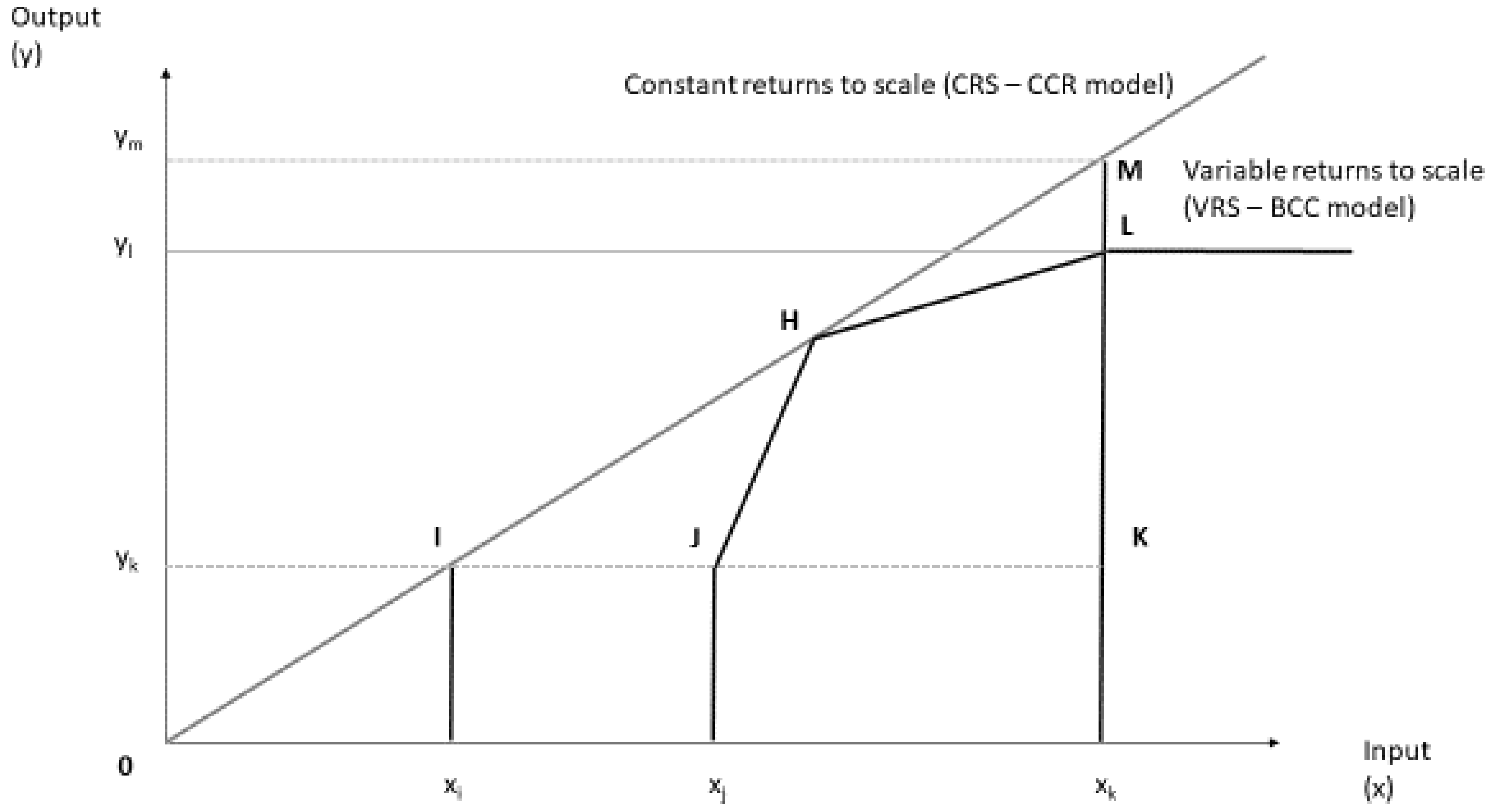

3. Research Methodology and Index Numbers

- For each input and output, numerical, positive data are available for all decision-making units (DMUs).

- The selected variables (inputs, outputs, and the chosen DMUs) should reflect the decision-makers' interest in the relative efficiency evaluation.

- The DMUs are homogeneous, i.e., they use identical inputs to produce identical outputs.

- Input and output indicator units and scales are congruent.
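Some of these prerequisites can be screened mechanically before any DEA model is run. The following is a minimal, stdlib-only sketch, not the authors' procedure. It assumes the data are held as a dict that maps each DMU to its indicator values, and it checks only two things: whether every DMU reports the same inputs and outputs, and whether every value is a finite, strictly positive number. Relevance to decision-makers and the congruence of units and scales still require judgment. The function name `check_dea_data` and the indicator names in the usage lines are hypothetical.

```python
import math
from numbers import Real


def check_dea_data(data, inputs, outputs):
    """Return a list of problems; an empty list means the checks passed.

    data    -- dict mapping DMU name -> dict of indicator name -> value
    inputs  -- list of input indicator names
    outputs -- list of output indicator names
    """
    problems = []
    indicators = list(inputs) + list(outputs)
    for dmu, values in data.items():
        # Homogeneity: every DMU must report exactly the same indicators.
        missing = [k for k in indicators if k not in values]
        extra = [k for k in values if k not in indicators]
        if missing:
            problems.append(f"{dmu}: missing {missing}")
        if extra:
            problems.append(f"{dmu}: unexpected {extra}")
        # Numerical, positive data for every input and output.
        for k in indicators:
            if k not in values:
                continue  # already reported as missing
            v = values[k]
            if isinstance(v, bool) or not isinstance(v, Real) or not math.isfinite(v):
                problems.append(f"{dmu}: {k} is not a finite number ({v!r})")
            elif v <= 0:
                problems.append(f"{dmu}: {k} must be positive ({v!r})")
    return problems


# Hypothetical usage: two DMUs, two inputs, one output.
data = {
    "DMU A": {"staff": 120, "budget": 3.5, "citations": 900},
    "DMU B": {"staff": 80, "budget": 0.0, "citations": 450},
}
print(check_dea_data(data, inputs=["staff", "budget"], outputs=["citations"]))
# ['DMU B: budget must be positive (0.0)']
```

Zero or negative values are reported rather than silently replaced, because the way such values are treated (for example, by translation or by dropping the DMU) is a modelling decision.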

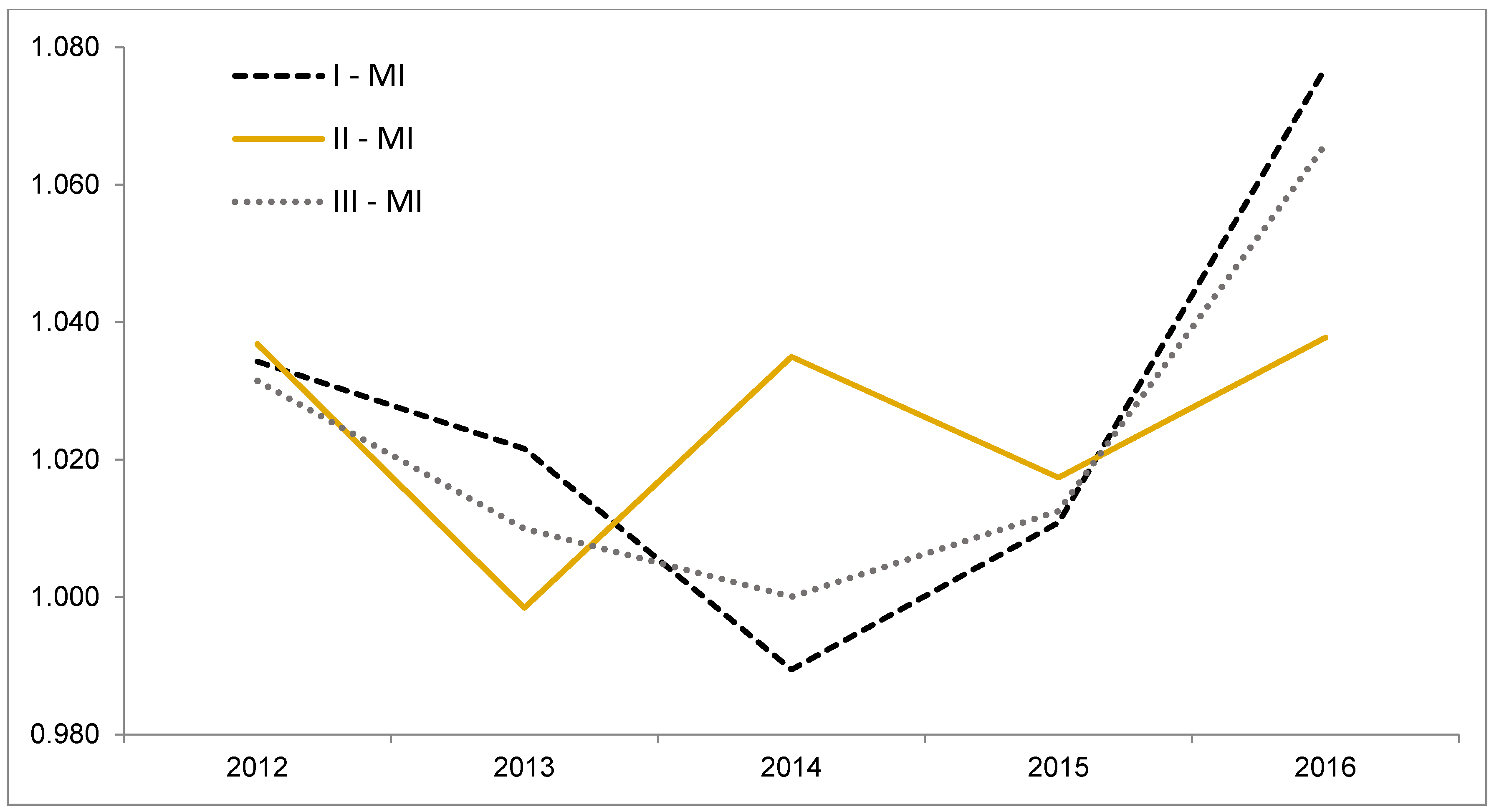
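On the index-number side, Appendix A reports a Malmquist productivity index for each university and year, together with its catch-up and frontier-shift components. The sketch below assumes the standard geometric-mean formulation. It also assumes that the four efficiency scores (distance-function values) have already been obtained from DEA: two own-period evaluations and two cross-period evaluations. The sketch does not solve the DEA linear programs, and it does not fix the orientation or returns-to-scale assumptions of the underlying model. The function and parameter names are hypothetical.

```python
import math
from typing import NamedTuple


class MalmquistResult(NamedTuple):
    malmquist: float
    catch_up: float
    frontier_shift: float


def malmquist(d_t_t, d_t_t1, d_t1_t, d_t1_t1):
    """Decompose productivity change of one DMU between periods t and t+1.

    d_s_r is the efficiency score of the DMU's period-r data evaluated
    against the period-s frontier (all scores must be > 0):
      d_t_t   : period-t data,     period-t frontier
      d_t_t1  : period-(t+1) data, period-t frontier
      d_t1_t  : period-t data,     period-(t+1) frontier
      d_t1_t1 : period-(t+1) data, period-(t+1) frontier
    """
    if any(s <= 0 for s in (d_t_t, d_t_t1, d_t1_t, d_t1_t1)):
        raise ValueError("efficiency scores must be positive")
    # Catch-up: change in the DMU's distance to its contemporaneous frontier.
    catch_up = d_t1_t1 / d_t_t
    # Frontier shift: geometric mean of the frontier movement measured at
    # the period-t and at the period-(t+1) data.
    frontier_shift = math.sqrt((d_t_t / d_t1_t) * (d_t_t1 / d_t1_t1))
    # The Malmquist index is the product of the two components.
    return MalmquistResult(catch_up * frontier_shift, catch_up, frontier_shift)
```

Take the hypothetical scores 0.8, 1.0, 0.7 and 0.9, passed in the parameter order above. The catch-up is 0.9 / 0.8 = 1.125, the frontier shift is sqrt((0.8 / 0.7) × (1.0 / 0.9)), roughly 1.127, and the index is therefore roughly 1.268. A value above 1 indicates productivity growth and a value below 1 indicates decline.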

4. Results

5. Discussion

6. Conclusions and Outlook

Funding

Conflicts of Interest

Appendix A
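In every run of the following table, each Malmquist index is the product of its catch-up and frontier-shift components. Because all three values are published rounded to four decimals, the identity holds only up to a propagated rounding error. The helper below is a hypothetical illustration for screening rows for transcription errors. It uses an error bound derived from half a unit in the last place of each reported value. The sample rows are copied from the table.

```python
def consistent(mi, cu, fs, decimals=4):
    """Check that a reported Malmquist index equals catch-up x frontier shift
    up to the rounding of the published values.

    Each reported value is off by at most h = half a unit in the last place.
    Propagating that error through the product gives the bound
    h * (1 + cu + fs) + 3 * h**2; a tiny slack absorbs float error.
    """
    h = 0.5 * 10 ** (-decimals)
    tolerance = h * (1 + cu + fs) + 3 * h * h + 1e-12
    return abs(mi - cu * fs) <= tolerance


rows = [
    # (university, year, run, Malmquist index, catch-up, frontier shift)
    ("Aarhus University", 2012, "RUN I", 1.1500, 1.1335, 1.0146),
    ("Delft University of Technology", 2012, "RUN II", 0.9936, 0.9282, 1.0705),
    ("LSE London", 2015, "RUN II", 0.9619, 1.8963, 0.5072),
]
for name, year, run, mi, cu, fs in rows:
    print(name, year, run, consistent(mi, cu, fs))
```

A fixed tolerance of 0.0001 would only narrowly accept the LSE London 2015 row, where the product of the rounded components is about 0.96180. The bound therefore scales with the size of the components.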

| University | Year * | RUN I (THE): Malmquist Index | RUN I (THE): Catch-Up | RUN I (THE): Frontier Shift | RUN II (CWTS): Malmquist Index | RUN II (CWTS): Catch-Up | RUN II (CWTS): Frontier Shift | RUN III (THE & CWTS): Malmquist Index | RUN III (THE & CWTS): Catch-Up | RUN III (THE & CWTS): Frontier Shift |
|---|---|---|---|---|---|---|---|---|---|---|

| Aarhus University | 2011 | |||||||||

| Aarhus University | 2012 | 1.1500 | 1.1335 | 1.0146 | 1.0367 | 0.9989 | 1.0378 | 1.1500 | 1.1335 | 1.0146 |

| Aarhus University | 2013 | 1.0259 | 1.0577 | 0.9699 | 1.0442 | 1.0844 | 0.9629 | 1.0259 | 1.0577 | 0.9699 |

| Aarhus University | 2014 | 0.9372 | 0.9462 | 0.9905 | 1.0814 | 1.0480 | 1.0318 | 0.9374 | 0.9467 | 0.9902 |

| Aarhus University | 2015 | 0.9786 | 0.9935 | 0.9851 | 1.0764 | 1.0582 | 1.0172 | 0.9804 | 0.9943 | 0.9860 |

| Aarhus University | 2016 | 1.1883 | 1.1423 | 1.0403 | 1.0696 | 1.0425 | 1.0260 | 1.1870 | 1.1408 | 1.0405 |

| Bielefeld University | 2011 | |||||||||

| Bielefeld University | 2012 | 0.8061 | 0.8252 | 0.9768 | 1.0055 | 0.9916 | 1.0140 | 0.8061 | 0.8252 | 0.9768 |

| Bielefeld University | 2013 | 1.0855 | 1.1112 | 0.9768 | 1.0482 | 1.1397 | 0.9198 | 1.0855 | 1.1112 | 0.9768 |

| Bielefeld University | 2014 | 0.9529 | 0.9926 | 0.9600 | 1.0547 | 1.0250 | 1.0290 | 0.9529 | 0.9926 | 0.9600 |

| Bielefeld University | 2015 | 0.9247 | 0.9202 | 1.0050 | 1.0250 | 1.0408 | 0.9849 | 0.9247 | 0.9202 | 1.0050 |

| Bielefeld University | 2016 | 1.3023 | 1.0984 | 1.1856 | 1.0516 | 1.0312 | 1.0198 | 1.3006 | 1.0984 | 1.1841 |

| Delft University of Technology | 2011 | |||||||||

| Delft University of Technology | 2012 | 1.0024 | 1.0000 | 1.0024 | 0.9936 | 0.9282 | 1.0705 | 1.0027 | 1.0000 | 1.0027 |

| Delft University of Technology | 2013 | 1.0361 | 1.0000 | 1.0361 | 1.0266 | 1.0471 | 0.9804 | 1.0352 | 1.0000 | 1.0352 |

| Delft University of Technology | 2014 | 0.9631 | 1.0000 | 0.9631 | 1.0484 | 1.0238 | 1.0240 | 0.9647 | 1.0000 | 0.9647 |

| Delft University of Technology | 2015 | 1.0110 | 1.0000 | 1.0110 | 1.0945 | 1.0744 | 1.0187 | 1.0133 | 1.0000 | 1.0133 |

| Delft University of Technology | 2016 | 1.0328 | 1.0000 | 1.0328 | 1.1385 | 1.1006 | 1.0345 | 1.0328 | 1.0000 | 1.0328 |

| Durham University | 2011 | |||||||||

| Durham University | 2012 | 1.0284 | 1.0082 | 1.0200 | 0.9602 | 0.9268 | 1.0361 | 1.0278 | 1.0071 | 1.0206 |

| Durham University | 2013 | 0.9888 | 1.0000 | 0.9888 | 0.9487 | 0.9764 | 0.9716 | 0.9846 | 1.0000 | 0.9846 |

| Durham University | 2014 | 0.9453 | 1.0000 | 0.9453 | 1.0045 | 0.9266 | 1.0841 | 0.9465 | 1.0000 | 0.9465 |

| Durham University | 2015 | 1.0133 | 0.9781 | 1.0360 | 1.1159 | 1.1384 | 0.9802 | 1.0146 | 0.9801 | 1.0352 |

| Durham University | 2016 | 1.0161 | 0.9514 | 1.0680 | 0.9590 | 0.9632 | 0.9957 | 1.0229 | 0.9817 | 1.0419 |

| Eindhoven University of Tec. | 2011 | |||||||||

| Eindhoven University of Tec. | 2012 | 1.0376 | 1.0000 | 1.0376 | 1.0599 | 1.0206 | 1.0385 | 1.0376 | 1.0000 | 1.0376 |

| Eindhoven University of Tec. | 2013 | 1.0213 | 1.0000 | 1.0213 | 1.0501 | 1.0968 | 0.9575 | 1.0213 | 1.0000 | 1.0213 |

| Eindhoven University of Tec. | 2014 | 0.9704 | 1.0000 | 0.9704 | 1.0545 | 1.0257 | 1.0281 | 0.9751 | 1.0000 | 0.9751 |

| Eindhoven University of Tec. | 2015 | 0.9776 | 1.0000 | 0.9776 | 1.0136 | 1.0237 | 0.9901 | 0.9776 | 1.0000 | 0.9776 |

| Eindhoven University of Tec. | 2016 | 0.8549 | 0.8495 | 1.0063 | 1.1259 | 1.1159 | 1.0090 | 0.8670 | 0.8633 | 1.0042 |

| Erasmus Univ. Rotterdam | 2011 | |||||||||

| Erasmus Univ. Rotterdam | 2012 | 1.0539 | 1.0159 | 1.0373 | 1.0838 | 1.0000 | 1.0838 | 1.0171 | 1.0000 | 1.0171 |

| Erasmus Univ. Rotterdam | 2013 | 1.1899 | 1.1013 | 1.0805 | 0.9814 | 1.0000 | 0.9814 | 1.1164 | 1.0000 | 1.1164 |

| Erasmus Univ. Rotterdam | 2014 | 0.9577 | 1.0000 | 0.9577 | 1.0566 | 1.0000 | 1.0566 | 0.9752 | 1.0000 | 0.9752 |

| Erasmus Univ. Rotterdam | 2015 | 0.9998 | 1.0000 | 0.9998 | 0.9726 | 1.0000 | 0.9726 | 0.9744 | 1.0000 | 0.9744 |

| Erasmus Univ. Rotterdam | 2016 | 1.1147 | 1.0000 | 1.1147 | 0.9864 | 1.0000 | 0.9864 | 1.0427 | 1.0000 | 1.0427 |

| ETH Lausanne | 2011 | |||||||||

| ETH Lausanne | 2012 | 1.0331 | 1.0000 | 1.0331 | 1.0786 | 1.0144 | 1.0633 | 1.0327 | 1.0000 | 1.0327 |

| ETH Lausanne | 2013 | 1.0006 | 1.0000 | 1.0006 | 1.0041 | 1.0591 | 0.9481 | 0.9995 | 1.0000 | 0.9995 |

| ETH Lausanne | 2014 | 0.9859 | 1.0000 | 0.9859 | 1.0679 | 1.0364 | 1.0304 | 0.9907 | 1.0000 | 0.9907 |

| ETH Lausanne | 2015 | 1.0108 | 1.0000 | 1.0108 | 1.2424 | 1.2268 | 1.0127 | 1.0154 | 1.0000 | 1.0154 |

| ETH Lausanne | 2016 | 1.0169 | 1.0000 | 1.0169 | 1.1238 | 1.0628 | 1.0574 | 1.0186 | 1.0000 | 1.0186 |

| Ghent University | 2011 | |||||||||

| Ghent University | 2012 | 1.0083 | 1.0002 | 1.0081 | 1.0455 | 1.0131 | 1.0320 | 1.0146 | 1.0069 | 1.0077 |

| Ghent University | 2013 | 0.9927 | 0.9877 | 1.0050 | 1.0153 | 1.0394 | 0.9768 | 0.9860 | 1.0017 | 0.9843 |

| Ghent University | 2014 | 0.9923 | 0.9930 | 0.9993 | 1.0488 | 1.0245 | 1.0237 | 1.0128 | 1.0000 | 1.0128 |

| Ghent University | 2015 | 0.9504 | 0.9432 | 1.0076 | 1.0076 | 0.9968 | 1.0109 | 0.9545 | 0.9442 | 1.0110 |

| Ghent University | 2016 | 1.0279 | 0.9757 | 1.0536 | 0.9998 | 0.9809 | 1.0193 | 1.0240 | 0.9894 | 1.0349 |

| Goethe University Frankfurt | 2011 | |||||||||

| Goethe University Frankfurt | 2012 | 1.0781 | 1.0447 | 1.0320 | 1.0368 | 1.0186 | 1.0179 | 1.0781 | 1.0447 | 1.0320 |

| Goethe University Frankfurt | 2013 | 0.9831 | 1.0010 | 0.9821 | 1.0279 | 1.0086 | 1.0191 | 0.9831 | 1.0010 | 0.9821 |

| Goethe University Frankfurt | 2014 | 1.0863 | 1.0819 | 1.0040 | 1.0374 | 1.0077 | 1.0295 | 1.0869 | 1.0842 | 1.0025 |

| Goethe University Frankfurt | 2015 | 0.9759 | 0.9850 | 0.9907 | 1.0117 | 1.0095 | 1.0022 | 0.9760 | 0.9832 | 0.9927 |

| Goethe University Frankfurt | 2016 | 1.0063 | 0.9841 | 1.0226 | 1.0129 | 0.9889 | 1.0243 | 1.0064 | 0.9838 | 1.0229 |

| Heidelberg University | 2011 | |||||||||

| Heidelberg University | 2012 | 1.1011 | 1.0530 | 1.0456 | 1.0282 | 1.0138 | 1.0142 | 1.1011 | 1.0530 | 1.0456 |

| Heidelberg University | 2013 | 0.9621 | 0.9816 | 0.9801 | 1.0452 | 1.0109 | 1.0340 | 0.9621 | 0.9816 | 0.9801 |

| Heidelberg University | 2014 | 1.0065 | 1.0064 | 1.0000 | 1.0455 | 1.0067 | 1.0386 | 1.0065 | 1.0064 | 1.0000 |

| Heidelberg University | 2015 | 1.0704 | 1.0761 | 0.9948 | 1.0314 | 1.0121 | 1.0191 | 1.0707 | 1.0761 | 0.9950 |

| Heidelberg University | 2016 | 1.0959 | 1.0621 | 1.0318 | 1.0338 | 1.0048 | 1.0289 | 1.0959 | 1.0621 | 1.0318 |

| Humboldt University of Berlin | 2011 | |||||||||

| Humboldt University of Berlin | 2012 | 1.0599 | 1.0543 | 1.0053 | 1.0357 | 1.0000 | 1.0357 | 1.0917 | 1.0000 | 1.0917 |

| Humboldt University of Berlin | 2013 | 0.9692 | 1.0000 | 0.9692 | 0.8913 | 1.0000 | 0.8913 | 0.9215 | 1.0000 | 0.9215 |

| Humboldt University of Berlin | 2014 | 0.8934 | 1.0000 | 0.8934 | 1.0125 | 1.0000 | 1.0125 | 0.9527 | 1.0000 | 0.9527 |

| Humboldt University of Berlin | 2015 | 1.0525 | 1.0000 | 1.0525 | 0.9987 | 1.0000 | 0.9987 | 1.0130 | 1.0000 | 1.0130 |

| Humboldt University of Berlin | 2016 | 1.2664 | 1.0000 | 1.2664 | 1.0083 | 1.0000 | 1.0083 | 1.2122 | 1.0000 | 1.2122 |

| Imperial College London | 2011 | |||||||||

| Imperial College London | 2012 | 0.9955 | 1.0000 | 0.9955 | 1.0644 | 1.0000 | 1.0644 | 1.0220 | 1.0000 | 1.0220 |

| Imperial College London | 2013 | 0.9743 | 1.0000 | 0.9743 | 0.9991 | 1.0000 | 0.9991 | 0.9914 | 1.0000 | 0.9914 |

| Imperial College London | 2014 | 0.9492 | 1.0000 | 0.9492 | 0.9794 | 1.0000 | 0.9794 | 0.9636 | 1.0000 | 0.9636 |

| Imperial College London | 2015 | 0.9927 | 1.0000 | 0.9927 | 1.0026 | 1.0000 | 1.0026 | 0.9961 | 1.0000 | 0.9961 |

| Imperial College London | 2016 | 0.9892 | 1.0000 | 0.9892 | 1.0244 | 1.0000 | 1.0244 | 0.9918 | 1.0000 | 0.9918 |

| Karlsruhe Institute of Tec. | 2011 | |||||||||

| Karlsruhe Institute of Tec. | 2012 | 0.9963 | 0.9847 | 1.0118 | 1.0589 | 1.0187 | 1.0394 | 1.0091 | 0.9924 | 1.0168 |

| Karlsruhe Institute of Tec. | 2013 | 1.1554 | 1.1597 | 0.9963 | 0.9848 | 1.0329 | 0.9534 | 1.1325 | 1.1455 | 0.9886 |

| Karlsruhe Institute of Tec. | 2014 | 0.9790 | 1.0012 | 0.9778 | 1.1020 | 1.0811 | 1.0192 | 0.9790 | 1.0012 | 0.9778 |

| Karlsruhe Institute of Tec. | 2015 | 1.0006 | 0.9992 | 1.0014 | 1.1541 | 1.1434 | 1.0093 | 1.0077 | 1.0024 | 1.0053 |

| Karlsruhe Institute of Tec. | 2016 | 1.1948 | 1.1865 | 1.0070 | 1.0734 | 1.0572 | 1.0153 | 1.1875 | 1.1827 | 1.0041 |

| Karolinska Institute | 2011 | |||||||||

| Karolinska Institute | 2012 | 1.0719 | 1.0000 | 1.0719 | 1.0239 | 1.0000 | 1.0239 | 1.1080 | 1.0000 | 1.1080 |

| Karolinska Institute | 2013 | 1.0015 | 0.9942 | 1.0073 | 0.9719 | 0.9599 | 1.0125 | 0.9600 | 1.0000 | 0.9600 |

| Karolinska Institute | 2014 | 0.9369 | 0.9904 | 0.9459 | 1.0233 | 0.9811 | 1.0430 | 0.9617 | 1.0000 | 0.9617 |

| Karolinska Institute | 2015 | 0.9972 | 0.9965 | 1.0007 | 1.0306 | 1.0303 | 1.0004 | 0.9956 | 1.0000 | 0.9956 |

| Karolinska Institute | 2016 | 1.1510 | 1.0191 | 1.1295 | 1.0088 | 1.0219 | 0.9872 | 1.0926 | 1.0000 | 1.0926 |

| King’s College London | 2011 | |||||||||

| King’s College London | 2012 | 1.0562 | 1.0441 | 1.0115 | 1.0355 | 0.9695 | 1.0681 | 1.0590 | 1.0403 | 1.0179 |

| King’s College London | 2013 | 0.9630 | 0.9626 | 1.0005 | 0.9886 | 0.9970 | 0.9916 | 0.9366 | 0.9621 | 0.9736 |

| King’s College London | 2014 | 1.0336 | 1.0567 | 0.9782 | 1.0588 | 1.0759 | 0.9841 | 1.0314 | 1.0363 | 0.9952 |

| King’s College London | 2015 | 1.0220 | 1.0133 | 1.0086 | 1.0693 | 1.0526 | 1.0159 | 1.0171 | 1.0052 | 1.0119 |

| King’s College London | 2016 | 1.0816 | 1.0241 | 1.0562 | 1.1455 | 1.0856 | 1.0551 | 1.0809 | 1.0232 | 1.0565 |

| KTH Royal Institute of Tec. | 2011 | |||||||||

| KTH Royal Institute of Tec. | 2012 | 1.0212 | 1.0000 | 1.0212 | 0.9900 | 0.9477 | 1.0446 | 1.0212 | 1.0000 | 1.0212 |

| KTH Royal Institute of Tec. | 2013 | 1.0091 | 1.0000 | 1.0091 | 0.9753 | 0.9630 | 1.0128 | 1.0091 | 1.0000 | 1.0091 |

| KTH Royal Institute of Tec. | 2014 | 0.9793 | 1.0000 | 0.9793 | 1.0622 | 1.0262 | 1.0350 | 0.9793 | 1.0000 | 0.9793 |

| KTH Royal Institute of Tec. | 2015 | 0.9919 | 1.0000 | 0.9919 | 1.0434 | 1.0327 | 1.0103 | 0.9924 | 1.0000 | 0.9924 |

| KTH Royal Institute of Tec. | 2016 | 0.9396 | 1.0000 | 0.9396 | 1.0689 | 1.0667 | 1.0020 | 0.9525 | 1.0000 | 0.9525 |

| KU Leuven | 2011 | |||||||||

| KU Leuven | 2012 | 1.0214 | 1.0131 | 1.0082 | 1.0024 | 0.9679 | 1.0357 | 1.0194 | 1.0000 | 1.0194 |

| KU Leuven | 2013 | 1.0064 | 1.0000 | 1.0064 | 0.9823 | 1.0154 | 0.9674 | 0.9986 | 1.0000 | 0.9986 |

| KU Leuven | 2014 | 0.9942 | 1.0000 | 0.9942 | 1.0189 | 0.9896 | 1.0296 | 0.9999 | 1.0000 | 0.9999 |

| KU Leuven | 2015 | 1.0038 | 1.0000 | 1.0038 | 0.9959 | 0.9805 | 1.0157 | 0.9991 | 1.0000 | 0.9991 |

| KU Leuven | 2016 | 1.0496 | 1.0000 | 1.0496 | 0.9944 | 0.9704 | 1.0247 | 1.0485 | 1.0000 | 1.0485 |

| Lancaster University | 2011 | |||||||||

| Lancaster University | 2012 | 0.9467 | 0.9836 | 0.9624 | 1.0133 | 0.9802 | 1.0339 | 0.9358 | 0.9612 | 0.9736 |

| Lancaster University | 2013 | 0.9607 | 1.0133 | 0.9481 | 0.8593 | 1.0328 | 0.8320 | 0.9597 | 1.0113 | 0.9490 |

| Lancaster University | 2014 | 1.0022 | 1.0402 | 0.9635 | 1.0445 | 1.0260 | 1.0180 | 0.9958 | 1.0401 | 0.9574 |

| Lancaster University | 2015 | 0.9867 | 0.9902 | 0.9964 | 0.7532 | 0.8437 | 0.8927 | 0.9867 | 0.9902 | 0.9964 |

| Lancaster University | 2016 | 1.0644 | 1.0506 | 1.0131 | 1.0442 | 1.0305 | 1.0133 | 1.0648 | 1.0513 | 1.0128 |

| Leiden University | 2011 | |||||||||

| Leiden University | 2012 | 0.8690 | 0.8779 | 0.9898 | 1.1860 | 1.1101 | 1.0684 | 0.9546 | 0.9635 | 0.9907 |

| Leiden University | 2013 | 1.1281 | 1.0755 | 1.0490 | 1.0293 | 1.0317 | 0.9977 | 1.0762 | 1.0369 | 1.0379 |

| Leiden University | 2014 | 0.9939 | 1.0405 | 0.9551 | 1.0382 | 1.0187 | 1.0192 | 0.9991 | 1.0009 | 0.9981 |

| Leiden University | 2015 | 0.9858 | 0.9736 | 1.0125 | 0.9484 | 0.9562 | 0.9918 | 0.9845 | 0.9954 | 0.9890 |

| Leiden University | 2016 | 1.0431 | 0.9436 | 1.1054 | 0.9703 | 0.9796 | 0.9904 | 1.0252 | 0.9489 | 1.0805 |

| LMU Munich | 2011 | |||||||||

| LMU Munich | 2012 | 1.1073 | 1.0757 | 1.0294 | 1.0341 | 1.0228 | 1.0111 | 1.1073 | 1.0757 | 1.0294 |

| LMU Munich | 2013 | 1.0102 | 1.0306 | 0.9802 | 1.0184 | 0.9841 | 1.0348 | 1.0102 | 1.0306 | 0.9802 |

| LMU Munich | 2014 | 0.9740 | 0.9759 | 0.9981 | 1.0210 | 0.9746 | 1.0475 | 0.9742 | 0.9763 | 0.9979 |

| LMU Munich | 2015 | 1.1686 | 1.1505 | 1.0157 | 1.0025 | 0.9567 | 1.0479 | 1.1683 | 1.1501 | 1.0158 |

| LMU Munich | 2016 | 1.0230 | 1.0000 | 1.0230 | 1.0134 | 0.9834 | 1.0305 | 1.0230 | 1.0000 | 1.0230 |

| LSE London | 2011 | |||||||||

| LSE London | 2012 | 1.1377 | 1.0000 | 1.1377 | 1.0873 | 1.0371 | 1.0485 | 1.1377 | 1.0000 | 1.1377 |

| LSE London | 2013 | 0.9903 | 1.0000 | 0.9903 | 1.0185 | 1.2124 | 0.8401 | 0.9903 | 1.0000 | 0.9903 |

| LSE London | 2014 | 0.9642 | 1.0000 | 0.9642 | 0.9132 | 0.8884 | 1.0278 | 0.9555 | 1.0000 | 0.9555 |

| LSE London | 2015 | 1.0283 | 1.0000 | 1.0283 | 0.9619 | 1.8963 | 0.5072 | 1.0283 | 1.0000 | 1.0283 |

| LSE London | 2016 | 1.1503 | 1.0000 | 1.1503 | 1.1364 | 1.0000 | 1.1364 | 1.1503 | 1.0000 | 1.1503 |

| Lund University | 2011 | |||||||||

| Lund University | 2012 | 1.0333 | 1.0335 | 0.9999 | 0.9876 | 0.9657 | 1.0228 | 1.0194 | 0.9722 | 1.0486 |

| Lund University | 2013 | 1.0478 | 1.0463 | 1.0014 | 0.9684 | 0.9503 | 1.0191 | 1.0216 | 1.0230 | 0.9986 |

| Lund University | 2014 | 0.9217 | 0.9556 | 0.9645 | 1.0108 | 0.9790 | 1.0325 | 0.9467 | 0.9643 | 0.9817 |

| Lund University | 2015 | 1.0297 | 1.0154 | 1.0141 | 1.0190 | 1.0069 | 1.0120 | 1.0318 | 1.0312 | 1.0006 |

| Lund University | 2016 | 1.1367 | 1.0901 | 1.0428 | 1.0357 | 1.0190 | 1.0165 | 1.1320 | 1.0662 | 1.0617 |

| Newcastle University | 2011 | |||||||||

| Newcastle University | 2012 | 0.9924 | 0.9494 | 1.0453 | 0.9831 | 0.9946 | 0.9884 | 0.9954 | 0.9946 | 1.0008 |

| Newcastle University | 2013 | 1.0037 | 1.0305 | 0.9740 | 0.9507 | 1.0054 | 0.9456 | 0.9550 | 1.0054 | 0.9498 |

| Newcastle University | 2014 | 0.9732 | 0.9761 | 0.9970 | 1.0983 | 1.0000 | 1.0983 | 1.0577 | 1.0000 | 1.0577 |

| Newcastle University | 2015 | 0.9953 | 0.9660 | 1.0304 | 0.9812 | 1.0000 | 0.9812 | 0.9648 | 1.0000 | 0.9648 |

| Newcastle University | 2016 | 1.1384 | 1.1351 | 1.0029 | 1.0045 | 1.0000 | 1.0045 | 1.0393 | 1.0000 | 1.0393 |

| Queen Mary Univ. of London | 2011 | |||||||||

| Queen Mary Univ. of London | 2012 | 1.0081 | 0.9810 | 1.0276 | 1.1627 | 1.1185 | 1.0395 | 1.0081 | 0.9810 | 1.0276 |

| Queen Mary Univ. of London | 2013 | 0.9961 | 1.0329 | 0.9644 | 1.0241 | 1.0623 | 0.9640 | 0.9961 | 1.0329 | 0.9644 |

| Queen Mary Univ. of London | 2014 | 1.0088 | 1.0022 | 1.0065 | 1.1593 | 1.1178 | 1.0371 | 1.0088 | 1.0022 | 1.0065 |

| Queen Mary Univ. of London | 2015 | 1.0099 | 0.9869 | 1.0233 | 1.0394 | 1.0479 | 0.9919 | 1.0099 | 0.9869 | 1.0233 |

| Queen Mary Univ. of London | 2016 | 1.0516 | 1.0016 | 1.0499 | 1.0517 | 1.0382 | 1.0130 | 1.0529 | 1.0074 | 1.0452 |

| RWTH Aachen University | 2011 | |||||||||

| RWTH Aachen University | 2012 | 1.0727 | 1.0382 | 1.0331 | 1.0296 | 1.0006 | 1.0289 | 1.0727 | 1.0382 | 1.0331 |

| RWTH Aachen University | 2013 | 1.0700 | 1.0799 | 0.9908 | 1.0685 | 1.0318 | 1.0356 | 1.0700 | 1.0799 | 0.9908 |

| RWTH Aachen University | 2014 | 1.0611 | 1.0446 | 1.0158 | 1.0785 | 1.0348 | 1.0421 | 1.0611 | 1.0446 | 1.0158 |

| RWTH Aachen University | 2015 | 0.9718 | 1.0276 | 0.9457 | 1.0561 | 1.0221 | 1.0333 | 0.9718 | 1.0276 | 0.9457 |

| RWTH Aachen University | 2016 | 1.1986 | 1.1760 | 1.0192 | 1.0441 | 1.0129 | 1.0308 | 1.1986 | 1.1760 | 1.0192 |

| Stockholm University | 2011 | |||||||||

| Stockholm University | 2012 | 1.1557 | 1.1324 | 1.0206 | 1.0440 | 1.0191 | 1.0244 | 1.1557 | 1.1324 | 1.0206 |

| Stockholm University | 2013 | 1.0315 | 1.0604 | 0.9727 | 1.1025 | 1.1015 | 1.0009 | 1.0315 | 1.0604 | 0.9727 |

| Stockholm University | 2014 | 0.9535 | 0.9832 | 0.9697 | 1.0529 | 1.0250 | 1.0272 | 0.9535 | 0.9832 | 0.9697 |

| Stockholm University | 2015 | 1.0287 | 1.0007 | 1.0281 | 1.0282 | 1.0130 | 1.0150 | 1.0287 | 1.0007 | 1.0281 |

| Stockholm University | 2016 | 0.9288 | 0.8881 | 1.0458 | 1.0500 | 1.0399 | 1.0097 | 0.9288 | 0.8881 | 1.0458 |

| Swedish U. of Agri. Sciences | 2011 | |||||||||

| Swedish U. of Agri. Sciences | 2012 | 0.9937 | 1.0000 | 0.9937 | 1.0239 | 0.9898 | 1.0344 | 0.9937 | 1.0000 | 0.9937 |

| Swedish U. of Agri. Sciences | 2013 | 0.9957 | 1.0000 | 0.9957 | 0.9956 | 1.0063 | 0.9894 | 0.9957 | 1.0000 | 0.9957 |

| Swedish U. of Agri. Sciences | 2014 | 1.0093 | 1.0000 | 1.0093 | 1.0768 | 1.0398 | 1.0356 | 1.0043 | 1.0000 | 1.0043 |

| Swedish U. of Agri. Sciences | 2015 | 1.0318 | 1.0000 | 1.0318 | 1.1221 | 1.1271 | 0.9956 | 1.0413 | 1.0000 | 1.0413 |

| Swedish U. of Agri. Sciences | 2016 | 1.0248 | 1.0000 | 1.0248 | 1.0599 | 1.0478 | 1.0116 | 1.0248 | 1.0000 | 1.0248 |

| Tec. University of Denmark | 2011 | |||||||||

| Tec. University of Denmark | 2012 | 1.0056 | 0.9805 | 1.0256 | 1.0546 | 1.0182 | 1.0357 | 1.0056 | 0.9805 | 1.0256 |

| Tec. University of Denmark | 2013 | 1.0470 | 1.0371 | 1.0095 | 1.0816 | 1.0985 | 0.9846 | 1.0468 | 1.0371 | 1.0093 |

| Tec. University of Denmark | 2014 | 1.0061 | 0.9969 | 1.0092 | 1.0175 | 1.0512 | 0.9680 | 1.0063 | 0.9981 | 1.0083 |

| Tec. University of Denmark | 2015 | 1.0017 | 0.9986 | 1.0031 | 0.9632 | 0.9424 | 1.0221 | 1.0024 | 0.9984 | 1.0039 |

| Tec. University of Denmark | 2016 | 0.8786 | 0.8736 | 1.0057 | 1.0629 | 1.0411 | 1.0209 | 0.8918 | 0.8820 | 1.0112 |

| Tec. University of Munich | 2011 | |||||||||

| Tec. University of Munich | 2012 | 0.9105 | 0.8875 | 1.0259 | 1.0539 | 1.0235 | 1.0297 | 0.9077 | 0.8871 | 1.0233 |

| Tec. University of Munich | 2013 | 1.0031 | 1.0267 | 0.9770 | 1.0626 | 1.0254 | 1.0362 | 1.0031 | 1.0267 | 0.9770 |

| Tec. University of Munich | 2014 | 1.0547 | 1.0530 | 1.0016 | 1.0604 | 1.0132 | 1.0466 | 1.0547 | 1.0531 | 1.0015 |

| Tec. University of Munich | 2015 | 0.9763 | 0.9798 | 0.9964 | 1.0580 | 1.0147 | 1.0427 | 0.9763 | 0.9797 | 0.9966 |

| Tec. University of Munich | 2016 | 1.2287 | 1.2016 | 1.0225 | 1.0331 | 1.0024 | 1.0306 | 1.2287 | 1.2016 | 1.0225 |

| Trinity College Dublin | 2011 | |||||||||

| Trinity College Dublin | 2012 | 1.0144 | 1.0000 | 1.0144 | 1.0857 | 0.9985 | 1.0873 | 1.0174 | 1.0000 | 1.0174 |

| Trinity College Dublin | 2013 | 0.9802 | 1.0000 | 0.9802 | 1.0942 | 1.1177 | 0.9790 | 0.9866 | 1.0000 | 0.9866 |

| Trinity College Dublin | 2014 | 0.9721 | 1.0000 | 0.9721 | 1.0564 | 1.0339 | 1.0217 | 0.9747 | 1.0000 | 0.9747 |

| Trinity College Dublin | 2015 | 0.9675 | 0.9427 | 1.0262 | 1.1399 | 1.1160 | 1.0214 | 0.9869 | 0.9841 | 1.0028 |

| Trinity College Dublin | 2016 | 1.0000 | 0.9603 | 1.0413 | 1.0274 | 1.0076 | 1.0197 | 1.0080 | 0.9798 | 1.0289 |

| University College Dublin | 2011 | |||||||||

| University College Dublin | 2012 | 0.9440 | 0.9155 | 1.0311 | 1.1026 | 1.0659 | 1.0345 | 0.9564 | 0.9236 | 1.0355 |

| University College Dublin | 2013 | 0.9258 | 0.9643 | 0.9601 | 1.0606 | 1.0803 | 0.9818 | 0.9411 | 0.9809 | 0.9594 |

| University College Dublin | 2014 | 1.0322 | 1.0268 | 1.0053 | 1.0412 | 1.0013 | 1.0399 | 1.0339 | 1.0196 | 1.0140 |

| University College Dublin | 2015 | 0.9762 | 0.9578 | 1.0192 | 1.0953 | 1.1020 | 0.9939 | 0.9984 | 0.9914 | 1.0070 |

| University College Dublin | 2016 | 1.0871 | 1.0559 | 1.0296 | 0.9853 | 0.9734 | 1.0122 | 1.0679 | 1.0367 | 1.0301 |

| University College London | 2011 | |||||||||

| University College London | 2012 | 1.0244 | 1.0041 | 1.0202 | 1.0483 | 1.0000 | 1.0483 | 1.0468 | 1.0000 | 1.0468 |

| University College London | 2013 | 0.9823 | 0.9928 | 0.9894 | 0.9847 | 1.0000 | 0.9847 | 0.9847 | 1.0000 | 0.9847 |

| University College London | 2014 | 0.9909 | 0.9936 | 0.9973 | 1.0373 | 1.0000 | 1.0373 | 1.0224 | 1.0000 | 1.0224 |

| University College London | 2015 | 1.0088 | 0.9985 | 1.0103 | 1.0207 | 1.0000 | 1.0207 | 1.0207 | 1.0000 | 1.0207 |

| University College London | 2016 | 1.0538 | 1.0228 | 1.0304 | 1.0519 | 1.0000 | 1.0519 | 1.0484 | 1.0000 | 1.0484 |

| University of Aberdeen | 2011 | |||||||||

| University of Aberdeen | 2012 | 0.9930 | 0.9693 | 1.0244 | 1.0297 | 0.9813 | 1.0493 | 0.9958 | 0.9735 | 1.0229 |

| University of Aberdeen | 2013 | 0.9973 | 1.0276 | 0.9705 | 0.9195 | 0.9815 | 0.9369 | 0.9729 | 1.0089 | 0.9643 |

| University of Aberdeen | 2014 | 0.9857 | 0.9635 | 1.0231 | 0.9715 | 0.9169 | 1.0596 | 0.9950 | 0.9738 | 1.0218 |

| University of Aberdeen | 2015 | 1.0011 | 0.9853 | 1.0161 | 0.9508 | 0.9349 | 1.0171 | 1.0021 | 0.9840 | 1.0184 |

| University of Aberdeen | 2016 | 1.0827 | 1.0826 | 1.0000 | 0.9691 | 0.9517 | 1.0183 | 1.0670 | 1.0625 | 1.0042 |

| University of Amsterdam | 2011 | |||||||||

| University of Amsterdam | 2012 | 1.1270 | 1.1432 | 0.9858 | 1.0435 | 1.0067 | 1.0365 | 1.0459 | 1.0075 | 1.0381 |

| University of Amsterdam | 2013 | 1.1002 | 1.1002 | 1.0000 | 1.0404 | 1.0262 | 1.0138 | 1.0508 | 1.0430 | 1.0075 |

| University of Amsterdam | 2014 | 0.9673 | 1.0150 | 0.9529 | 1.0595 | 1.0302 | 1.0284 | 1.0353 | 1.0129 | 1.0222 |

| University of Amsterdam | 2015 | 1.0404 | 1.0257 | 1.0143 | 1.0616 | 1.0477 | 1.0133 | 1.0620 | 1.0506 | 1.0108 |

| University of Amsterdam | 2016 | 1.1430 | 1.0616 | 1.0766 | 1.0299 | 1.0156 | 1.0141 | 1.0501 | 1.0147 | 1.0350 |

| University of Basel | 2011 | |||||||||

| University of Basel | 2012 | 1.0341 | 1.0060 | 1.0280 | 0.9524 | 0.9215 | 1.0335 | 1.0312 | 1.0038 | 1.0273 |

| University of Basel | 2013 | 0.9924 | 1.0101 | 0.9825 | 1.0373 | 1.0216 | 1.0154 | 0.9922 | 1.0101 | 0.9822 |

| University of Basel | 2014 | 1.0281 | 1.0000 | 1.0281 | 1.1148 | 1.0739 | 1.0381 | 1.0281 | 1.0000 | 1.0281 |

| University of Basel | 2015 | 0.9964 | 1.0000 | 0.9964 | 1.0935 | 1.0893 | 1.0038 | 0.9990 | 1.0000 | 0.9990 |

| University of Basel | 2016 | 1.0416 | 1.0000 | 1.0416 | 1.0873 | 1.0914 | 0.9962 | 1.0414 | 1.0000 | 1.0414 |

| University of Bergen | 2011 | |||||||||

| University of Bergen | 2012 | 1.0059 | 0.9715 | 1.0354 | 1.0456 | 1.0268 | 1.0183 | 1.0059 | 0.9715 | 1.0354 |

| University of Bergen | 2013 | 0.9707 | 0.9917 | 0.9788 | 1.0396 | 1.0374 | 1.0021 | 0.9703 | 0.9945 | 0.9756 |

| University of Bergen | 2014 | 0.9428 | 0.9464 | 0.9961 | 1.0453 | 1.0168 | 1.0280 | 0.9413 | 0.9471 | 0.9939 |

| University of Bergen | 2015 | 1.0226 | 0.9894 | 1.0336 | 1.0359 | 1.0207 | 1.0149 | 1.0260 | 0.9945 | 1.0318 |

| University of Bergen | 2016 | 1.1952 | 1.1739 | 1.0182 | 1.0330 | 1.0248 | 1.0080 | 1.1901 | 1.1637 | 1.0226 |

| University of Birmingham | 2011 | |||||||||

| University of Birmingham | 2012 | 0.9456 | 0.9513 | 0.9940 | 0.9804 | 0.9530 | 1.0288 | 0.9616 | 0.9492 | 1.0131 |

| University of Birmingham | 2013 | 0.9947 | 1.0229 | 0.9724 | 0.9759 | 0.9739 | 1.0021 | 0.9628 | 1.0081 | 0.9551 |

| University of Birmingham | 2014 | 1.0266 | 1.0486 | 0.9789 | 0.9928 | 0.9675 | 1.0261 | 1.0274 | 1.0123 | 1.0149 |

| University of Birmingham | 2015 | 1.0440 | 1.0279 | 1.0157 | 1.0276 | 1.0071 | 1.0203 | 1.0495 | 1.0352 | 1.0139 |

| University of Birmingham | 2016 | 1.1072 | 1.0871 | 1.0185 | 1.0322 | 1.0183 | 1.0136 | 1.0956 | 1.0639 | 1.0298 |

| University of Bonn | 2011 | |||||||||

| University of Bonn | 2012 | 1.1156 | 1.0775 | 1.0353 | 1.0051 | 0.9855 | 1.0198 | 1.1156 | 1.0775 | 1.0353 |

| University of Bonn | 2013 | 1.1055 | 1.1274 | 0.9806 | 1.0772 | 1.0600 | 1.0162 | 1.1055 | 1.1274 | 0.9806 |

| University of Bonn | 2014 | 1.0037 | 0.9970 | 1.0067 | 1.0411 | 1.0118 | 1.0290 | 1.0038 | 0.9972 | 1.0066 |

| University of Bonn | 2015 | 1.0381 | 1.0474 | 0.9912 | 0.9747 | 0.9751 | 0.9996 | 1.0381 | 1.0472 | 0.9913 |

| University of Bonn | 2016 | 1.0179 | 0.9936 | 1.0244 | 1.0254 | 0.9996 | 1.0259 | 1.0179 | 0.9936 | 1.0244 |

| University of Bristol | 2011 | |||||||||

| University of Bristol | 2012 | 1.0856 | 1.0597 | 1.0244 | 1.0436 | 0.9878 | 1.0564 | 1.0705 | 1.0115 | 1.0583 |

| University of Bristol | 2013 | 0.9537 | 0.9752 | 0.9780 | 0.8462 | 0.8799 | 0.9618 | 0.9103 | 0.9531 | 0.9551 |

| University of Bristol | 2014 | 0.9793 | 1.0138 | 0.9659 | 1.0375 | 1.0648 | 0.9743 | 1.0011 | 1.0210 | 0.9805 |

| University of Bristol | 2015 | 1.0057 | 0.9847 | 1.0213 | 0.9423 | 0.9329 | 1.0101 | 0.9955 | 0.9801 | 1.0158 |

| University of Bristol | 2016 | 1.0771 | 1.0506 | 1.0252 | 0.9511 | 0.9263 | 1.0267 | 1.0562 | 1.0111 | 1.0446 |

| University of Cambridge | 2011 | |||||||||

| University of Cambridge | 2012 | 1.0243 | 1.0000 | 1.0243 | 1.0055 | 1.0000 | 1.0055 | 1.0132 | 1.0000 | 1.0132 |

| University of Cambridge | 2013 | 0.9964 | 1.0000 | 0.9964 | 1.0162 | 1.0000 | 1.0162 | 1.0162 | 1.0000 | 1.0162 |

| University of Cambridge | 2014 | 0.9952 | 1.0000 | 0.9952 | 1.0513 | 1.0000 | 1.0513 | 1.0303 | 1.0000 | 1.0303 |

| University of Cambridge | 2015 | 0.9858 | 1.0000 | 0.9858 | 1.0154 | 1.0000 | 1.0154 | 1.0131 | 1.0000 | 1.0131 |

| University of Cambridge | 2016 | 1.0044 | 1.0000 | 1.0044 | 1.0383 | 1.0000 | 1.0383 | 1.0251 | 1.0000 | 1.0251 |

| University of Copenhagen | 2011 | |||||||||

| University of Copenhagen | 2012 | 1.3003 | 1.2325 | 1.0550 | 1.0775 | 1.0391 | 1.0370 | 1.1659 | 1.1227 | 1.0385 |

| University of Copenhagen | 2013 | 1.0206 | 1.0271 | 0.9936 | 1.0687 | 1.0775 | 0.9918 | 1.0018 | 1.0238 | 0.9785 |

| University of Copenhagen | 2014 | 1.0027 | 1.0019 | 1.0008 | 1.0829 | 1.0452 | 1.0360 | 1.0537 | 1.0348 | 1.0183 |

| University of Copenhagen | 2015 | 0.9924 | 0.9877 | 1.0048 | 1.0390 | 1.0179 | 1.0208 | 1.0273 | 1.0102 | 1.0170 |

| University of Copenhagen | 2016 | 1.1718 | 1.1435 | 1.0248 | 1.0763 | 1.0449 | 1.0300 | 1.0917 | 1.0554 | 1.0344 |

| University of Dundee | 2011 | |||||||||

| University of Dundee | 2012 | 1.0718 | 1.0326 | 1.0380 | 1.0915 | 1.0035 | 1.0878 | 1.0696 | 1.0000 | 1.0696 |

| University of Dundee | 2013 | 0.9191 | 0.9400 | 0.9778 | 0.8434 | 0.8612 | 0.9794 | 0.9090 | 0.9521 | 0.9547 |

| University of Dundee | 2014 | 1.0310 | 1.0639 | 0.9691 | 0.9775 | 0.9621 | 1.0160 | 1.0292 | 1.0503 | 0.9800 |

| University of Dundee | 2015 | 0.9954 | 0.9481 | 1.0498 | 0.9141 | 0.9031 | 1.0121 | 0.9916 | 0.9505 | 1.0433 |

| University of Dundee | 2016 | 1.0652 | 1.0431 | 1.0212 | 1.0379 | 1.0339 | 1.0039 | 1.0643 | 1.0406 | 1.0228 |

| University of East Anglia | 2011 | |||||||||

| University of East Anglia | 2012 | 1.0977 | 1.1141 | 0.9853 | 0.9797 | 0.9934 | 0.9862 | 1.1203 | 1.1098 | 1.0095 |

| University of East Anglia | 2013 | 0.9916 | 1.0149 | 0.9771 | 0.8696 | 0.9210 | 0.9442 | 0.9562 | 1.0002 | 0.9560 |

| University of East Anglia | 2014 | 0.9623 | 1.0048 | 0.9577 | 0.9627 | 0.8880 | 1.0842 | 0.9543 | 0.9931 | 0.9609 |

| University of East Anglia | 2015 | 1.0265 | 0.9662 | 1.0624 | 1.0423 | 1.0221 | 1.0198 | 1.0283 | 0.9718 | 1.0581 |

| University of East Anglia | 2016 | 1.1003 | 1.1074 | 0.9936 | 1.0118 | 0.9906 | 1.0214 | 1.0953 | 1.1004 | 0.9953 |

| University of Edinburgh | 2011 | |||||||||

| University of Edinburgh | 2012 | 1.0718 | 1.0307 | 1.0398 | 1.0476 | 1.0169 | 1.0302 | 1.0718 | 1.0307 | 1.0398 |

| University of Edinburgh | 2013 | 0.9874 | 1.0066 | 0.9809 | 1.0035 | 0.9925 | 1.0111 | 0.9864 | 1.0066 | 0.9799 |

| University of Edinburgh | 2014 | 0.9514 | 0.9693 | 0.9815 | 1.0398 | 1.0110 | 1.0285 | 0.9574 | 0.9751 | 0.9819 |

| University of Edinburgh | 2015 | 1.0068 | 1.0054 | 1.0014 | 0.9989 | 0.9838 | 1.0154 | 1.0037 | 0.9995 | 1.0042 |

| University of Edinburgh | 2016 | 1.0996 | 1.0581 | 1.0392 | 1.0233 | 0.9983 | 1.0251 | 1.0996 | 1.0581 | 1.0392 |

| University of Exeter | 2011 | |||||||||

| University of Exeter | 2012 | 1.0916 | 1.0564 | 1.0333 | 1.0698 | 1.0341 | 1.0345 | 1.0916 | 1.0564 | 1.0333 |

| University of Exeter | 2013 | 1.0588 | 1.0899 | 0.9715 | 1.0855 | 1.1271 | 0.9631 | 1.0588 | 1.0899 | 0.9715 |

| University of Exeter | 2014 | 1.0112 | 1.0177 | 0.9936 | 1.0016 | 0.9351 | 1.0712 | 1.0114 | 1.0182 | 0.9933 |

| University of Exeter | 2015 | 1.0060 | 0.9766 | 1.0301 | 0.9250 | 0.9389 | 0.9852 | 1.0065 | 0.9774 | 1.0298 |

| University of Exeter | 2016 | 1.1610 | 1.1233 | 1.0335 | 1.2387 | 1.2357 | 1.0025 | 1.1543 | 1.1255 | 1.0256 |

| University of Freiburg | 2011 | |||||||||

| University of Freiburg | 2012 | 1.0299 | 1.0153 | 1.0144 | 1.0357 | 1.0046 | 1.0309 | 1.0299 | 1.0153 | 1.0144 |

| University of Freiburg | 2013 | 1.0119 | 1.0197 | 0.9924 | 1.0237 | 1.0155 | 1.0081 | 1.0119 | 1.0197 | 0.9924 |

| University of Freiburg | 2014 | 1.0413 | 1.0232 | 1.0177 | 1.0585 | 1.0240 | 1.0336 | 1.0413 | 1.0232 | 1.0177 |

| University of Freiburg | 2015 | 0.9808 | 0.9874 | 0.9933 | 1.0222 | 1.0215 | 1.0006 | 0.9808 | 0.9874 | 0.9933 |

| University of Freiburg | 2016 | 1.1739 | 1.1458 | 1.0245 | 1.0250 | 0.9971 | 1.0279 | 1.1736 | 1.1458 | 1.0242 |

| University of Geneva | 2011 | |||||||||

| University of Geneva | 2012 | 1.0184 | 1.0352 | 0.9838 | 1.0394 | 0.9876 | 1.0524 | 1.0183 | 1.0202 | 0.9981 |

| University of Geneva | 2013 | 0.9741 | 0.9997 | 0.9744 | 0.9960 | 0.9659 | 1.0312 | 0.9763 | 0.9993 | 0.9770 |

| University of Geneva | 2014 | 1.0117 | 1.0087 | 1.0030 | 1.0662 | 1.0242 | 1.0410 | 1.0122 | 1.0007 | 1.0115 |

| University of Geneva | 2015 | 1.0076 | 1.0000 | 1.0076 | 1.0162 | 1.0096 | 1.0066 | 1.0084 | 1.0000 | 1.0084 |

| University of Geneva | 2016 | 1.0210 | 1.0000 | 1.0210 | 1.0037 | 1.0018 | 1.0019 | 1.0211 | 1.0000 | 1.0211 |

| University of Glasgow | 2011 | |||||||||

| University of Glasgow | 2012 | 1.1143 | 1.0741 | 1.0374 | 1.0345 | 1.0045 | 1.0299 | 1.1143 | 1.0741 | 1.0374 |

| University of Glasgow | 2013 | 0.9411 | 0.9574 | 0.9830 | 0.9690 | 0.9617 | 1.0076 | 0.9419 | 0.9576 | 0.9836 |

| University of Glasgow | 2014 | 1.0117 | 1.0436 | 0.9695 | 1.0227 | 0.9962 | 1.0266 | 1.0183 | 1.0498 | 0.9700 |

| University of Glasgow | 2015 | 1.0439 | 1.0193 | 1.0241 | 1.0565 | 1.0527 | 1.0036 | 1.0426 | 1.0214 | 1.0208 |

| University of Glasgow | 2016 | 1.0150 | 0.9651 | 1.0518 | 1.0103 | 0.9820 | 1.0289 | 1.0109 | 0.9571 | 1.0561 |

| University of Göttingen | 2011 | |||||||||

| University of Göttingen | 2012 | 0.9091 | 0.8753 | 1.0387 | 1.2455 | 1.1875 | 1.0488 | 0.9095 | 0.8753 | 1.0391 |

| University of Göttingen | 2013 | 1.0985 | 1.1234 | 0.9778 | 1.1114 | 1.0845 | 1.0248 | 1.0986 | 1.1234 | 0.9780 |

| University of Göttingen | 2014 | 0.9676 | 0.9719 | 0.9956 | 0.6741 | 0.6477 | 1.0407 | 0.9660 | 0.9719 | 0.9938 |

| University of Göttingen | 2015 | 1.0270 | 1.0275 | 0.9995 | 1.0238 | 1.0186 | 1.0051 | 1.0270 | 1.0275 | 0.9995 |

| University of Göttingen | 2016 | 0.7960 | 0.7713 | 1.0321 | 1.0304 | 1.0085 | 1.0217 | 0.7960 | 0.7713 | 1.0321 |

| University of Groningen | 2011 | |||||||||

| University of Groningen | 2012 | 1.0521 | 1.0110 | 1.0407 | 1.0701 | 1.0372 | 1.0318 | 1.0812 | 1.0395 | 1.0401 |

| University of Groningen | 2013 | 1.2216 | 1.1876 | 1.0286 | 1.0497 | 1.0331 | 1.0161 | 1.1758 | 1.1930 | 0.9856 |

| University of Groningen | 2014 | 0.9529 | 0.9929 | 0.9598 | 1.0423 | 1.0079 | 1.0342 | 1.0122 | 1.0093 | 1.0029 |

| University of Groningen | 2015 | 1.0081 | 0.9980 | 1.0101 | 1.0448 | 1.0209 | 1.0235 | 1.0188 | 0.9980 | 1.0209 |

| University of Groningen | 2016 | 1.0935 | 1.0327 | 1.0588 | 1.0323 | 1.0230 | 1.0090 | 1.0429 | 1.0020 | 1.0409 |

| University of Helsinki | 2011 | |||||||||

| University of Helsinki | 2012 | 0.9988 | 0.9734 | 1.0261 | 1.0014 | 0.9675 | 1.0350 | 0.9983 | 0.9624 | 1.0374 |

| University of Helsinki | 2013 | 1.0232 | 1.0500 | 0.9745 | 1.0003 | 1.0316 | 0.9696 | 1.0071 | 1.0512 | 0.9580 |

| University of Helsinki | 2014 | 0.9845 | 1.0113 | 0.9735 | 1.0193 | 0.9916 | 1.0279 | 1.0064 | 1.0185 | 0.9881 |

| University of Helsinki | 2015 | 1.0212 | 1.0066 | 1.0145 | 1.0467 | 1.0325 | 1.0138 | 1.0256 | 1.0065 | 1.0190 |

| University of Helsinki | 2016 | 1.0599 | 1.0168 | 1.0423 | 1.0320 | 1.0099 | 1.0219 | 1.0399 | 0.9730 | 1.0687 |

| University of Konstanz | 2011 | |||||||||

| University of Konstanz | 2012 | 0.9118 | 1.0000 | 0.9118 | 0.9276 | 1.0000 | 0.9276 | 0.9118 | 1.0000 | 0.9118 |

| University of Konstanz | 2013 | 1.0922 | 1.0000 | 1.0922 | 1.0105 | 1.0000 | 1.0105 | 1.0922 | 1.0000 | 1.0922 |

| University of Konstanz | 2014 | 0.9744 | 1.0000 | 0.9744 | 1.0791 | 1.0000 | 1.0791 | 0.9744 | 1.0000 | 0.9744 |

| University of Konstanz | 2015 | 1.0052 | 1.0000 | 1.0052 | 0.9270 | 1.0000 | 0.9270 | 1.0056 | 1.0000 | 1.0056 |

| University of Konstanz | 2016 | 1.3417 | 1.0000 | 1.3417 | 1.0163 | 1.0000 | 1.0163 | 1.3320 | 1.0000 | 1.3320 |

| University of Lausanne | 2011 | |||||||||

| University of Lausanne | 2012 | 1.0792 | 1.0642 | 1.0140 | 0.9938 | 0.9300 | 1.0685 | 1.0738 | 1.0598 | 1.0132 |

| University of Lausanne | 2013 | 0.9647 | 0.9889 | 0.9755 | 1.0164 | 1.0044 | 1.0120 | 0.9592 | 0.9828 | 0.9760 |

| University of Lausanne | 2014 | 1.0176 | 1.0095 | 1.0081 | 1.0554 | 1.0196 | 1.0351 | 1.0189 | 1.0091 | 1.0097 |

| University of Lausanne | 2015 | 0.9974 | 0.9828 | 1.0149 | 1.0241 | 1.0283 | 0.9959 | 0.9979 | 0.9858 | 1.0123 |

| University of Lausanne | 2016 | 1.0518 | 1.0220 | 1.0291 | 0.9537 | 0.9595 | 0.9939 | 1.0491 | 1.0252 | 1.0233 |

| University of Leeds | 2011 | |||||||||

| University of Leeds | 2012 | 1.0597 | 1.0530 | 1.0064 | 1.0332 | 1.0073 | 1.0257 | 1.0920 | 1.0754 | 1.0155 |

| University of Leeds | 2013 | 1.0558 | 1.0676 | 0.9889 | 0.9659 | 0.9636 | 1.0024 | 0.9857 | 1.0241 | 0.9625 |

| University of Leeds | 2014 | 1.0458 | 1.0703 | 0.9771 | 1.0237 | 0.9977 | 1.0261 | 1.0505 | 1.0654 | 0.9860 |

| University of Leeds | 2015 | 0.9683 | 0.9506 | 1.0186 | 1.0294 | 1.0172 | 1.0120 | 0.9797 | 0.9604 | 1.0201 |

| University of Leeds | 2016 | 1.1377 | 1.1161 | 1.0194 | 1.0576 | 1.0233 | 1.0335 | 1.1274 | 1.0909 | 1.0334 |

| University of Liverpool | 2011 | |||||||||

| University of Liverpool | 2012 | 1.0702 | 1.0651 | 1.0048 | 1.0298 | 1.0020 | 1.0278 | 1.0984 | 1.0854 | 1.0120 |

| University of Liverpool | 2013 | 1.0304 | 1.0638 | 0.9686 | 0.9665 | 0.9586 | 1.0082 | 1.0035 | 1.0414 | 0.9635 |

| University of Liverpool | 2014 | 1.0125 | 1.0044 | 1.0080 | 1.0224 | 0.9927 | 1.0300 | 1.0212 | 1.0094 | 1.0116 |

| University of Liverpool | 2015 | 1.0616 | 1.0433 | 1.0175 | 1.0406 | 1.0233 | 1.0169 | 1.0600 | 1.0478 | 1.0117 |

| University of Liverpool | 2016 | 1.0926 | 1.0620 | 1.0288 | 1.0185 | 1.0090 | 1.0094 | 1.0807 | 1.0495 | 1.0297 |

| University of Manchester | 2011 | |||||||||

| University of Manchester | 2012 | 1.0398 | 1.0298 | 1.0097 | 1.0079 | 0.9713 | 1.0377 | 1.0170 | 0.9973 | 1.0198 |

| University of Manchester | 2013 | 0.9863 | 0.9876 | 0.9987 | 0.9740 | 0.9898 | 0.9840 | 0.9612 | 0.9835 | 0.9773 |

| University of Manchester | 2014 | 1.0014 | 1.0132 | 0.9883 | 1.0094 | 0.9766 | 1.0336 | 1.0344 | 1.0248 | 1.0094 |

| University of Manchester | 2015 | 1.0210 | 1.0111 | 1.0097 | 1.0100 | 1.0034 | 1.0066 | 1.0147 | 1.0038 | 1.0108 |

| University of Manchester | 2016 | 1.0622 | 1.0425 | 1.0189 | 1.0177 | 0.9903 | 1.0277 | 1.0575 | 1.0278 | 1.0289 |

| University of Nottingham | 2011 | |||||||||

| University of Nottingham | 2012 | 0.9697 | 0.9699 | 0.9998 | 1.0577 | 1.0265 | 1.0304 | 0.9979 | 0.9815 | 1.0167 |

| University of Nottingham | 2013 | 0.9804 | 0.9931 | 0.9872 | 0.9872 | 0.9919 | 0.9953 | 0.9359 | 0.9809 | 0.9541 |

| University of Nottingham | 2014 | 0.9782 | 0.9964 | 0.9817 | 1.0441 | 1.0188 | 1.0249 | 1.0178 | 1.0013 | 1.0165 |

| University of Nottingham | 2015 | 1.0039 | 0.9909 | 1.0131 | 1.0371 | 1.0270 | 1.0098 | 1.0294 | 1.0100 | 1.0192 |

| University of Nottingham | 2016 | 1.1428 | 1.1322 | 1.0093 | 1.0123 | 0.9922 | 1.0203 | 1.1006 | 1.0676 | 1.0309 |

| University of Oxford | 2011 | |||||||||

| University of Oxford | 2012 | 1.0369 | 1.0000 | 1.0369 | 1.0599 | 1.0000 | 1.0599 | 1.0403 | 1.0000 | 1.0403 |

| University of Oxford | 2013 | 1.0090 | 1.0000 | 1.0090 | 1.0463 | 1.0000 | 1.0463 | 1.0568 | 1.0000 | 1.0568 |

| University of Oxford | 2014 | 1.0251 | 1.0000 | 1.0251 | 1.0717 | 1.0000 | 1.0717 | 1.0540 | 1.0000 | 1.0540 |

| University of Oxford | 2015 | 0.9496 | 1.0000 | 0.9496 | 1.0293 | 1.0000 | 1.0293 | 0.9695 | 1.0000 | 0.9695 |

| University of Oxford | 2016 | 1.0056 | 1.0000 | 1.0056 | 1.0588 | 1.0000 | 1.0588 | 1.0306 | 1.0000 | 1.0306 |

| University of Sheffield | 2011 | |||||||||

| University of Sheffield | 2012 | 1.0638 | 1.0509 | 1.0123 | 0.9818 | 0.9585 | 1.0243 | 1.0649 | 1.0127 | 1.0516 |

| University of Sheffield | 2013 | 1.0137 | 1.0392 | 0.9755 | 0.9530 | 0.9521 | 1.0010 | 0.9603 | 0.9998 | 0.9605 |

| University of Sheffield | 2014 | 0.9940 | 1.0232 | 0.9714 | 1.0055 | 0.9811 | 1.0248 | 1.0139 | 1.0305 | 0.9839 |

| University of Sheffield | 2015 | 1.0199 | 0.9995 | 1.0203 | 0.9914 | 0.9751 | 1.0167 | 1.0219 | 1.0074 | 1.0144 |

| University of Sheffield | 2016 | 1.0857 | 1.0557 | 1.0284 | 1.0053 | 0.9903 | 1.0151 | 1.0719 | 1.0437 | 1.0270 |

| University of Southampton | 2011 | |||||||||

| University of Southampton | 2012 | 1.0255 | 1.0078 | 1.0175 | 0.9999 | 0.9682 | 1.0327 | 1.0476 | 1.0172 | 1.0299 |

| University of Southampton | 2013 | 1.0199 | 1.0406 | 0.9801 | 0.9904 | 0.9884 | 1.0020 | 0.9793 | 1.0194 | 0.9606 |

| University of Southampton | 2014 | 0.9811 | 0.9834 | 0.9977 | 1.0419 | 1.0164 | 1.0251 | 1.0039 | 1.0046 | 0.9994 |

| University of Southampton | 2015 | 1.0379 | 1.0273 | 1.0104 | 1.0705 | 1.0550 | 1.0147 | 1.0462 | 1.0338 | 1.0120 |

| University of Southampton | 2016 | 1.0668 | 1.0493 | 1.0167 | 1.0193 | 0.9982 | 1.0212 | 1.0559 | 1.0280 | 1.0271 |

| University of St Andrews | 2011 | |||||||||

| University of St Andrews | 2012 | 0.9444 | 1.0000 | 0.9444 | 1.0725 | 1.0000 | 1.0725 | 0.9640 | 1.0000 | 0.9640 |

| University of St Andrews | 2013 | 0.9987 | 1.0000 | 0.9987 | 0.9844 | 1.0000 | 0.9844 | 1.0274 | 1.0000 | 1.0274 |

| University of St Andrews | 2014 | 0.9756 | 1.0000 | 0.9756 | 0.9039 | 1.0000 | 0.9039 | 0.8899 | 1.0000 | 0.8899 |

| University of St Andrews | 2015 | 1.0312 | 1.0000 | 1.0312 | 0.9912 | 1.0000 | 0.9912 | 1.0327 | 1.0000 | 1.0327 |

| University of St Andrews | 2016 | 1.0959 | 1.0000 | 1.0959 | 0.9796 | 1.0000 | 0.9796 | 1.0954 | 1.0000 | 1.0954 |

| University of Sussex | 2011 | |||||||||

| University of Sussex | 2012 | 0.8821 | 1.0000 | 0.8821 | 1.0305 | 1.0000 | 1.0305 | 0.8821 | 1.0000 | 0.8821 |

| University of Sussex | 2013 | 1.0175 | 1.0000 | 1.0175 | 0.9044 | 1.0000 | 0.9044 | 1.0178 | 1.0000 | 1.0178 |

| University of Sussex | 2014 | 0.9227 | 1.0000 | 0.9227 | 1.0760 | 1.0000 | 1.0760 | 0.9571 | 1.0000 | 0.9571 |

| University of Sussex | 2015 | 1.0354 | 1.0000 | 1.0354 | 0.8338 | 0.9551 | 0.8730 | 1.0354 | 1.0000 | 1.0354 |

| University of Sussex | 2016 | 0.9965 | 1.0000 | 0.9965 | 0.9897 | 0.9737 | 1.0164 | 0.9965 | 1.0000 | 0.9965 |

| University of Tübingen | 2011 | |||||||||

| University of Tübingen | 2012 | 1.1367 | 1.0764 | 1.0560 | 1.0126 | 1.0014 | 1.0112 | 1.1367 | 1.0764 | 1.0560 |

| University of Tübingen | 2013 | 1.0913 | 1.1137 | 0.9799 | 0.9947 | 0.9826 | 1.0123 | 1.0913 | 1.1137 | 0.9799 |

| University of Tübingen | 2014 | 0.9729 | 0.9659 | 1.0072 | 1.0178 | 0.9889 | 1.0292 | 0.9737 | 0.9712 | 1.0025 |

| University of Tübingen | 2015 | 1.0586 | 1.0622 | 0.9965 | 1.0248 | 1.0240 | 1.0008 | 1.0548 | 1.0565 | 0.9985 |

| University of Tübingen | 2016 | 1.0768 | 1.0395 | 1.0359 | 1.0159 | 0.9902 | 1.0259 | 1.0768 | 1.0395 | 1.0359 |

| University of Twente | 2011 | |||||||||

| University of Twente | 2012 | 1.0509 | 0.9501 | 1.1060 | 0.9631 | 0.9396 | 1.0250 | 1.0341 | 0.9287 | 1.1136 |

| University of Twente | 2013 | 1.0914 | 1.0460 | 1.0434 | 1.0123 | 1.0839 | 0.9340 | 1.0914 | 1.0460 | 1.0434 |

| University of Twente | 2014 | 0.9968 | 1.0543 | 0.9455 | 1.1994 | 1.1369 | 1.0550 | 1.0092 | 1.0844 | 0.9306 |

| University of Twente | 2015 | 1.0635 | 1.0594 | 1.0039 | 0.8912 | 0.8872 | 1.0044 | 1.0398 | 1.0300 | 1.0095 |

| University of Twente | 2016 | 1.0798 | 1.0775 | 1.0021 | 1.0687 | 1.0632 | 1.0051 | 1.0798 | 1.0775 | 1.0021 |

| University of Würzburg | 2011 | |||||||||

| University of Würzburg | 2012 | 1.0250 | 0.9889 | 1.0365 | 0.9977 | 0.9696 | 1.0290 | 1.0250 | 0.9889 | 1.0365 |

| University of Würzburg | 2013 | 1.1244 | 1.1498 | 0.9779 | 0.9704 | 0.9664 | 1.0041 | 1.1244 | 1.1498 | 0.9779 |

| University of Würzburg | 2014 | 1.0860 | 1.0788 | 1.0067 | 1.0185 | 0.9904 | 1.0284 | 1.0860 | 1.0788 | 1.0067 |

| University of Würzburg | 2015 | 1.0574 | 1.0653 | 0.9926 | 1.0219 | 1.0079 | 1.0140 | 1.0574 | 1.0653 | 0.9926 |

| University of Würzburg | 2016 | 0.9717 | 0.9530 | 1.0196 | 1.0099 | 0.9870 | 1.0232 | 0.9717 | 0.9530 | 1.0196 |

| University of York | 2011 | |||||||||

| University of York | 2012 | 0.8835 | 0.8938 | 0.9885 | 1.0080 | 0.9653 | 1.0442 | 0.8890 | 0.8912 | 0.9974 |

| University of York | 2013 | 1.1014 | 1.1019 | 0.9996 | 0.8685 | 0.8919 | 0.9738 | 1.0910 | 1.1010 | 0.9909 |

| University of York | 2014 | 1.0287 | 1.0802 | 0.9522 | 0.9785 | 0.9191 | 1.0646 | 1.0310 | 1.0802 | 0.9544 |

| University of York | 2015 | 1.0105 | 0.9715 | 1.0401 | 0.9494 | 0.9543 | 0.9948 | 1.0098 | 0.9726 | 1.0382 |

| University of York | 2016 | 0.9359 | 0.9127 | 1.0255 | 1.0838 | 1.0855 | 0.9985 | 0.9384 | 0.9184 | 1.0218 |

| Uppsala University | 2011 | |||||||||

| Uppsala University | 2012 | 0.9847 | 0.9519 | 1.0345 | 1.0019 | 0.9787 | 1.0236 | 0.9666 | 0.9410 | 1.0271 |

| Uppsala University | 2013 | 0.9708 | 0.9435 | 1.0289 | 0.9745 | 0.9711 | 1.0035 | 0.9533 | 0.9555 | 0.9976 |

| Uppsala University | 2014 | 0.9764 | 1.0235 | 0.9540 | 1.0272 | 0.9997 | 1.0275 | 0.9733 | 0.9907 | 0.9824 |

| Uppsala University | 2015 | 1.0505 | 1.0378 | 1.0123 | 0.9783 | 0.9589 | 1.0203 | 1.0348 | 1.0169 | 1.0176 |

| Uppsala University | 2016 | 1.1425 | 1.0734 | 1.0644 | 1.0445 | 1.0264 | 1.0177 | 1.1357 | 1.0667 | 1.0647 |

| Utrecht University | 2011 | |||||||||

| Utrecht University | 2012 | 1.3740 | 1.3267 | 1.0356 | 1.0458 | 1.0155 | 1.0298 | 1.0518 | 1.0155 | 1.0358 |

| Utrecht University | 2013 | 1.0370 | 1.0510 | 0.9867 | 1.0596 | 1.0093 | 1.0499 | 1.0427 | 1.0093 | 1.0331 |

| Utrecht University | 2014 | 0.9979 | 1.0246 | 0.9740 | 1.0429 | 1.0000 | 1.0429 | 1.0214 | 1.0000 | 1.0214 |

| Utrecht University | 2015 | 0.9801 | 0.9844 | 0.9956 | 0.9900 | 1.0000 | 0.9900 | 0.9929 | 1.0000 | 0.9929 |

| Utrecht University | 2016 | 1.0933 | 1.0169 | 1.0751 | 1.0108 | 1.0000 | 1.0108 | 1.0313 | 1.0000 | 1.0313 |

| VU University Amsterdam | 2011 | |||||||||

| VU University Amsterdam | 2012 | 0.9843 | 0.9397 | 1.0474 | 1.0399 | 0.9940 | 1.0462 | 1.0162 | 0.9768 | 1.0403 |

| VU University Amsterdam | 2013 | 1.0151 | 1.0303 | 0.9852 | 1.0051 | 0.9898 | 1.0155 | 1.0018 | 0.9934 | 1.0084 |

| VU University Amsterdam | 2014 | 0.9981 | 1.0232 | 0.9754 | 1.0776 | 1.0731 | 1.0041 | 1.0157 | 1.0012 | 1.0145 |

| VU University Amsterdam | 2015 | 1.0139 | 0.9886 | 1.0256 | 1.0146 | 1.0122 | 1.0024 | 1.0207 | 1.0052 | 1.0154 |

| VU University Amsterdam | 2016 | 1.1810 | 1.1220 | 1.0526 | 1.0910 | 1.0719 | 1.0178 | 1.1198 | 1.0643 | 1.0522 |

| Wageningen University & R. | 2011 | |||||||||

| Wageningen University & R. | 2012 | 1.0528 | 1.0000 | 1.0528 | 1.0783 | 1.0625 | 1.0149 | 1.0689 | 1.0000 | 1.0689 |

| Wageningen University & R. | 2013 | 1.0135 | 1.0000 | 1.0135 | 0.9279 | 0.9686 | 0.9580 | 0.9980 | 1.0000 | 0.9980 |

| Wageningen University & R. | 2014 | 0.9382 | 1.0000 | 0.9382 | 1.0278 | 0.9667 | 1.0632 | 0.9664 | 1.0000 | 0.9664 |

| Wageningen University & R. | 2015 | 1.0024 | 1.0000 | 1.0024 | 1.0561 | 1.0558 | 1.0003 | 1.0033 | 1.0000 | 1.0033 |

| Wageningen University & R. | 2016 | 1.0744 | 1.0000 | 1.0744 | 1.1048 | 1.1032 | 1.0014 | 1.0815 | 1.0000 | 1.0815 |
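In every run, the Malmquist index in the table equals the product of its catch-up (efficiency change) and frontier-shift (technical change) columns. This is the standard multiplicative decomposition. The sketch below uses only values copied from the table. It checks that identity on a few rows, allowing for four-decimal rounding, and then chains Oxford's annual RUN I indices into a cumulative 2011 to 2016 figure. The helper names (`decomposition_holds`, `chained_index`, `geometric_mean`) are illustrative and are not part of the study's tooling. Adjacent-period Malmquist indices are not transitive in general, so the chained value is a convenience summary, not a direct 2011-versus-2016 comparison.

```python
from math import isclose, prod

# Rows transcribed from the appendix table:
# (university, year, run, malmquist, catch_up, frontier_shift)
ROWS = [
    ("University of Glasgow", 2012, "I", 1.1143, 1.0741, 1.0374),
    ("University of Göttingen", 2014, "II", 0.6741, 0.6477, 1.0407),
    ("University of Sussex", 2015, "II", 0.8338, 0.9551, 0.8730),
    ("University of Groningen", 2013, "III", 1.1758, 1.1930, 0.9856),
]

# Annual Malmquist indices for the University of Oxford, RUN I (THE), 2012-2016.
OXFORD_RUN_I = [1.0369, 1.0090, 1.0251, 0.9496, 1.0056]


def decomposition_holds(mi, cu, fs, tol=5e-4):
    """True if MI equals catch-up x frontier shift, up to 4-decimal rounding."""
    return isclose(mi, cu * fs, abs_tol=tol)


def chained_index(annual):
    """Cumulative change over the window: the product of the annual indices."""
    return prod(annual)


def geometric_mean(annual):
    """Average annual change implied by the chained index."""
    return chained_index(annual) ** (1.0 / len(annual))


if __name__ == "__main__":
    for uni, year, run, mi, cu, fs in ROWS:
        print(f"{uni} {year} RUN {run}: MI={mi:.4f}, CU*FS={cu * fs:.4f}",
              decomposition_holds(mi, cu, fs))
    print(f"Oxford RUN I, chained 2011->2016: {chained_index(OXFORD_RUN_I):.4f}")
    print(f"Oxford RUN I, average annual change: {geometric_mean(OXFORD_RUN_I):.4f}")
```

Oxford's catch-up is 1.0000 in every year of RUN I. Its chained index therefore reflects frontier shift alone: the university stays on the frontier, and only the frontier moves.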

References

- Altbach, P.G.; Knight, J. The internationalization of higher education: Motivations and realities. J. Stud. Int. Educ. 2007, 11, 290–305.

- Albers, S. Esteem indicators: Membership in editorial boards or honorary doctorates, discussion of quantitative and qualitative rankings of scholars by Rost and Frey. Schmalenbach Bus. Rev. 2011, 63, 92–98.

- Teichler, U. Social Contexts and Systematic Consequences of University Rankings: A Meta-Analysis of the Ranking Literature. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 55–69.

- Klumpp, M.; de Boer, H.; Vossensteyn, H. Comparing national policies on institutional profiling in Germany and the Netherlands. Comp. Educ. 2014, 50, 156–176.

- Groot, T.; García-Valderrama, T. Research quality and efficiency—An analysis of assessments and management issues in Dutch economics and business research programs. Res. Policy 2006, 35, 1362–1376.

- Costas, R.; van Leeuwen, T.N.; Bordons, M. A bibliometric classificatory approach for the study and assessment of research performance at the individual level: The effects of age on productivity and impact. J. Am. Soc. Inf. Sci. Technol. 2010, 61, 1564–1581.

- Krapf, M. Research evaluation and journal quality weights: Much ado about nothing. J. Bus. Econ. 2011, 81, 5–27.

- Rost, K.; Frey, B.S. Quantitative and qualitative rankings of scholars. Schmalenbach Bus. Rev. 2011, 63, 63–91.

- Sarrico, C.S. On performance in higher education—Towards performance governance. Tert. Educ. Manag. 2010, 16, 145–158.

- Kreiman, G.; Maunsell, J.H.R. Nine criteria for a measure of scientific output. Front. Comput. Neurosci. 2011, 5, 48.

- Wilkins, S.; Huisman, J. Stakeholder perspectives on citation and peer-based rankings of higher education journals. Tert. Educ. Manag. 2015, 21, 1–15.

- Hicks, D.; Wouters, P.; Waltman, L.; de Rijcke, S.; Rafols, I. The Leiden Manifesto for research metrics. Nature 2015, 520, 429–431.

- Parteka, A.; Wolszczak-Derlacz, J. Dynamics of productivity in higher education: Cross-European evidence based on bootstrapped Malmquist indices. J. Prod. Anal. 2013, 40, 67–82.

- Dundar, H.; Lewis, D.R. Departmental productivity in American universities: Economies of scale and scope. Econ. Educ. Rev. 1995, 14, 199–244.

- Hashimoto, K.; Cohn, E. Economies of scale and scope in Japanese private universities. Educ. Econ. 1997, 5, 107–115.

- Casu, B.; Thanassoulis, E. Evaluating cost efficiency in central administrative services in UK universities. Omega 2006, 34, 417–426.

- Sarrico, C.S.; Teixeira, P.N.F.L.; Rosa, M.J.; Cardoso, M.F. Subject mix and productivity in Portuguese universities. Eur. J. Oper. Res. 2009, 197, 287–295.

- Mainardes, E.; Alves, H.; Raposo, M. Using expectations and satisfaction to measure the frontiers of efficiency in public universities. Tert. Educ. Manag. 2014, 20, 339–353.

- Daraio, C.; Bonaccorsi, A.; Simar, L. Efficiency and economies of scale and specialization in European universities—A directional distance approach. J. Informetr. 2015, 9, 430–448.

- Millot, B. International rankings: Universities vs. higher education systems. Int. J. Educ. Dev. 2015, 40, 156–165.

- Dixon, R.; Hood, C. Ranking Academic Research Performance: A Recipe for Success? Sociol. Trav. 2016, 58, 403–411.

- Lee, B.L.; Worthington, A.C. A network DEA quantity and quality-orientated production model: An application to Australian university research services. Omega 2016, 60, 26–33.

- Olcay, G.A.; Bulu, M. Is measuring the knowledge creation of universities possible? A review of university rankings. Technol. Forecast. Soc. Change 2016.

- Charnes, A.; Cooper, W.W.; Rhodes, E.L. Measuring the efficiency of decision making units. Eur. J. Oper. Res. 1978, 2, 429–444.

- Banker, R.D.; Charnes, A.; Cooper, W.W. Some models for estimating technical and scale inefficiencies in data envelopment analysis. Manag. Sci. 1984, 30, 1078–1092.

- Bessent, A.M.; Bessent, E.W.; Charnes, A.; Cooper, W.W.; Thorogood, N. Evaluation of educational program proposals by means of DEA. Educ. Admin. Q. 1983, 19, 82–107.

- Johnes, G.; Johnes, J. Measuring the research performance of UK economics departments: An application of data envelopment analysis. Oxf. Econ. Pap. 1993, 45, 332–347.

- Athanassopoulos, A.D.; Shale, E. Assessing the comparative efficiency of higher education institutions in the UK by means of data envelopment analysis. Educ. Econ. 1997, 5, 117–134.

- McMillan, M.L.; Datta, D. The relative efficiencies of Canadian universities: A DEA perspective. Can. Public Policy 1998, 24, 485–511.

- Ng, Y.C.; Li, S.K. Measuring the research performance of Chinese higher education institutions: An application of data envelopment analysis. Educ. Econ. 2000, 8, 139–156.

- Feng, Y.J.; Lu, H.; Bi, K. An AHP/DEA method for measurement of the efficiency of R&D management activities in universities. Int. Trans. Oper. Res. 2004, 11, 181–191.

- Johnes, J. Measuring efficiency: A comparison of multilevel modelling and data envelopment analysis in the context of higher education. Bull. Econ. Res. 2006, 58, 75–104.

- Kocher, M.G.; Luptacik, M.; Sutter, M. Measuring productivity of research in economics: A cross-country study using DEA. Socio-Econ. Plan. Sci. 2006, 40, 314–332.

- Malmquist, S. Index numbers and indifference surfaces. Trabajos de Estadística 1953, 4, 209–242.

- Grifell-Tatjé, E.; Lovell, C.A.K.; Pastor, J.T. A quasi-Malmquist productivity index. J. Prod. Anal. 1998, 10, 7–20.

- Wang, Y.M.; Lan, Y.X. Measuring Malmquist productivity index: A new approach based on double frontiers data envelopment analysis. Math. Comp. Model. 2011, 54, 2760–2771.

- Castano, M.C.N.; Cabanda, E. Sources of efficiency and productivity growth in the Philippine state universities and colleges: A non-parametric approach. Int. Bus. Econ. Res. J. 2007, 6, 79–90.

- Worthington, A.C.; Lee, B.L. Efficiency, technology and productivity change in Australian universities, 1998–2003. Econ. Educ. Rev. 2008, 27, 285–298.

- Times Higher Education. World University Rankings 2015–2016 Methodology. Available online: https://www.timeshighereducation.com/news/ranking-methodology-2016 (accessed on 12 April 2018).

- Academic Ranking of World Universities: Methodology. Available online: http://www.shanghairanking.com/ARWU-Methodology-2016.html (accessed on 12 April 2018).

- Morphew, C.C.; Swanson, C. On the Efficacy of Raising Your University’s Rankings. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 185–199.

- Federkeil, G.; van Vught, F.A.; Westerheijden, D.F. Classifications and Rankings. In Multidimensional Ranking—The Design and Development of U-Multirank; van Vught, F.A., Ziegele, F., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 25–37.

- Hazelkorn, E. Rankings and the Reshaping of Higher Education: The Battle for World-Class Excellence; Palgrave Macmillan: London, UK, 2011.

- Farrell, M.J. The Measurement of Productive Efficiency. J. R. Stat. Soc. Ser. A 1957, 120, 253–290.

- Ylvinger, S. Industry performance and structural efficiency measures: Solutions to problems in firm models. Eur. J. Oper. Res. 2000, 121, 164–174.

- Teichler, U. The Future of University Rankings. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 259–265.

- Shin, J.C. Organizational Effectiveness and University Rankings. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 19–34.

- Yeravdekar, V.R.; Tiwari, G. Global Rankings of Higher Education Institutions and India’s Effective Non-Presence. Procedia Soc. Behav. Sci. 2014, 157, 63–83.

- O’Connell, C. An examination of global university rankings as a new mechanism influencing mission differentiation: The UK context. Tert. Educ. Manag. 2015, 21, 111–126.

- Bonaccorsi, A.; Cicero, T. Nondeterministic ranking of university departments. J. Informetr. 2016, 10, 224–237.

- Kehm, B.M.; Stensaker, B. University Rankings, Diversity and the New Landscape of Higher Education; Sense Publishers: Rotterdam, The Netherlands, 2009.

- Hazelkorn, E. Higher Education’s Future: A New Global World Order. In Resilient Universities—Confronting Changes in a Challenging World; Karlsen, J.E., Pritchard, R.M.O., Eds.; Peter Lang: Oxford, UK, 2013; pp. 53–89.

- Federkeil, G.; van Vught, F.A.; Westerheijden, D.F. An Evaluation and Critique of Current Rankings. In Multidimensional Ranking—The Design and Development of U-Multirank; van Vught, F.A., Ziegele, F., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 39–70.

- Times Higher Education. World University Rankings 2016–2017 Methodology. Available online: https://www.timeshighereducation.com/world-university-rankings/2017/world-ranking#!/page/0/length/25/sort_by/rank/sort_order/asc/cols/stats (accessed on 12 April 2018).

- Institute for Higher Education Policy. College and University Ranking Systems—Global Perspectives and American Challenges; Institute for Higher Education Policy: Washington, DC, USA, 2007.

- Rauhvargers, A. Global University Rankings and Their Impact—EUA Report on Rankings 2013; European University Association (EUA): Brussels, Belgium, 2013.

- Longden, B. Ranking Indicators and Weights. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 73–104.

- Selkälä, A.; Ronkainen, S.; Alasaarela, E. Features of the Z-scoring method in graphical two-dimensional web surveys: The case of ZEF. Qual. Quant. 2011, 45, 609–621.

- University of Leiden. CWTS Leiden Ranking 2011/12—Methodology; Centre for Science and Technology Studies: Leiden, The Netherlands, 2012.

- Waltman, L.; van Eck, N.J.; Tijssen, R.; Wouters, P. Moving Beyond Just Ranking—The CWTS Leiden Ranking 2016. Available online: https://www.cwts.nl/blog?article=n-q2w254 (accessed on 12 April 2018).

- ETER Project: European Tertiary Education Register. Available online: https://www.eter-project.com (accessed on 12 April 2018).

- Humboldt, W. von. Über die innere und äussere Organisation der höheren wissenschaftlichen Anstalten zu Berlin. In Gelegentliche Gedanken Über Universitäten; Müller, E., Ed.; Reclam: Leipzig, Germany, 1809; pp. 273–283.

- Anderson, R. Before and after Humboldt: European universities between the eighteenth and the nineteenth century. Hist. High. Educ. Annu. 2000, 20, 5–14.

- Ash, M.G. Bachelor of What, Master of Whom? The Humboldt Myth and Historical Transformations of Higher Education in German-Speaking Europe and the US. Eur. J. Educ. 2006, 41, 245–267.

- Koopmans, T.C. Analysis of Production as an Efficient Combination of Activities. In Activity Analysis of Production and Allocation, Proceedings of a Conference; Koopmans, T.C., Ed.; Wiley: New York, NY, USA, 1951; pp. 33–97.

- Debreu, G. The Coefficient of Resource Utilization. Econometrica 1951, 19, 273–292.

- Diewert, W.E. Functional Forms for Profit and Transformation Functions. J. Econ. Theory 1973, 6, 284–316.

- Cohn, E.; Rhine, S.L.W.; Santos, M.C. Institutions of higher education as multiproduct firms: Economies of scale and scope. Rev. Econ. Stat. 1989, 71, 284–290.

- Flegg, T.A.; Allen, D.O.; Field, K.; Thurlow, T.W. Measuring the Efficiency of British Universities: A Multi-period Data Envelopment Analysis. Educ. Econ. 2004, 12, 231–249.

- Agasisti, T.; Johnes, G. Beyond frontiers: Comparing the efficiency of higher education decision-making units across more than one country. Educ. Econ. 2009, 17, 59–79.

- Ramón, N.; Ruiz, J.L.; Sirvent, I. Using Data Envelopment Analysis to Assess Effectiveness of the Processes at the University with Performance Indicators of Quality. Int. J. Oper. Quant. Manag. 2010, 16, 87–103.

- Bolli, T.; Olivares, M.; Bonaccorsi, A.; Daraio, C.; Aracil, A.G.; Lepori, B. The differential effects of competitive funding on the production frontier and the efficiency of universities. Econ. Educ. Rev. 2016, 52, 91–104.

- Dyckhoff, H.; Clermont, M.; Dirksen, A.; Mbock, E. Measuring balanced effectiveness and efficiency of German business schools’ research performance. J. Bus. Econ. 2013, 39–60.

- Cooper, W.W.; Seiford, L.M.; Tone, K. Data Envelopment Analysis—A Comprehensive Text with Models, Applications, References and DEA-Solver Software; Springer: New York, NY, USA, 2007.

- Ding, L.; Yang, Q.; Sun, L.; Tong, J.; Wang, Y. Evaluation of the Capability of Personal Software Process based on Data Envelopment Analysis. In Unifying the Software Process Spectrum: International Software Process Spectrum; Li, M., Boehm, B., Osterweil, L.J., Eds.; Springer: Berlin, Germany, 2006; pp. 235–248.

- Fisher, I. The Purchasing Power of Money, Its Determination and Relation to Credit, Interest and Crises; Macmillan: New York, NY, USA, 1911.

- Fisher, I. The Making of Index Numbers; Houghton Mifflin: Boston, MA, USA, 1922.

- Divisia, F. L’indice monétaire et la théorie de la monnaie. Rev. Econ. Politique 1926, 40, 49–81.

- Nordhaus, W.D. Quality Change in Price Indices. J. Econ. Perspect. 1998, 12, 59–68.

- Pollak, R.A. The Consumer Price Index: A Research Agenda and Three Proposals. J. Econ. Perspect. 1998, 12, 69–78.

- Giannetti, B.F.; Agostinho, F.; Almeida, C.M.V.B.; Huisingh, D. A review of limitations of GDP and alternative indices to monitor human wellbeing and to manage eco-system functionality. J. Clean. Prod. 2015, 87, 11–25.

- Pal, D.; Mitra, S.K. Asymmetric oil product pricing in India: Evidence from a multiple threshold nonlinear ARDL model. Econ. Model. 2016, 59, 314–328.

- Petrou, P.; Vandoros, S. Pharmaceutical price comparisons across the European Union and relative affordability in Cyprus. Health Policy Technol. 2016, 5, 350–356.

- Frisch, R. Annual Survey of General Economic Theory: The Problem of Index Numbers. Econometrica 1936, 4, 1–38.

- Theil, H. Best Linear Index Numbers of Prices and Quantities. Econometrica 1960, 28, 464–480.

- Kloek, T.; Theil, H. International Comparisons of Prices and Quantities Consumed. Econometrica 1965, 33, 535–556.

- Gilbert, M. The Problem of Quality Changes and Index Numbers. Mon. Lab. Rev. 1961, 84, 992–997.

- Kloek, T.; de Wit, G.M. Best Linear and Best Linear Unbiased Index Numbers. Econometrica 1961, 29, 602–616.

- Jiménez-Sáez, F.; Zabala-Iturriagagoitia, J.M.; Zofío, J.L.; Castro-Martínez, E. Evaluating research efficiency within National R&D Programmes. Res. Policy 2011, 40, 230–241.

- Juo, J.C.; Fu, T.T.; Yu, M.M.; Lin, Y.H. Non-radial profit performance: An application to Taiwanese banks. Omega 2016, 65, 111–121.

- Gulati, R.; Kumar, S. Assessing the impact of the global financial crisis on the profit efficiency of Indian banks. Econ. Model. 2016, 58, 167–181.

- Lazov, I. Profit management of car rental companies. Eur. J. Oper. Res. 2016, 258, 307–314.

- Pasternack, P. Die Exzellenzinitiative als politisches Programm—Fortsetzung der normalen Forschungsförderung oder Paradigmenwechsel? In Making Excellence—Grundlagen, Praxis und Konsequenzen der Exzellenzinitiative; Bloch, R., Keller, A., Lottmann, A., Würmann, C., Eds.; Bertelsmann: Bielefeld, Germany, 2008; pp. 13–36.

- Örkcü, H.H.; Balıkçı, C.; Dogan, M.I.; Genç, A. An evaluation of the operational efficiency of Turkish airports using data envelopment analysis and the Malmquist productivity index: 2009–2014 case. Transp. Policy 2016, 48, 92–104.

- Sueyoshi, T.; Goto, M. DEA environmental assessment in time horizon: Radial approach for Malmquist index measurement on petroleum companies. Energy Econ. 2015, 51, 329–345.

- Emrouznejad, A.; Yang, G. A framework for measuring global Malmquist-Luenberger productivity index with CO2 emissions on Chinese manufacturing industries. Energy 2016, 115, 840–856.

- Fandel, G. On the performance of universities in North Rhine-Westphalia, Germany: Government’s redistribution of funds judged using DEA efficiency measures. Eur. J. Oper. Res. 2007, 176, 521–533.

- Johnes, J. Data envelopment analysis and its application to the measurement of efficiency in higher education. Econ. Educ. Rev. 2006, 25, 273–288.

- Fuentes, R.; Fuster, B.; Lillo-Bañuls, A. A three-stage DEA model to evaluate learning-teaching technical efficiency: Key performance indicators and contextual variables. Expert Syst. Appl. 2016, 48, 89–99.

- Alsabawy, A.Y.; Cater-Steel, A.; Soar, J. Determinants of perceived usefulness of e-learning systems. Comput. Hum. Behav. 2016, 64, 843–858.

- Chang, V. E-learning for academia and industry. Int. J. Inf. Manag. 2016, 36, 476–485.

- Tiemann, O.; Schreyögg, J. Effects of Ownership on Hospital Efficiency in Germany. Bus. Res. 2009, 2, 115–145.

- Harlacher, D.; Reihlen, M. Governance of professional service firms: A configurational approach. Bus. Res. 2014, 7, 125–160.

- Bottomley, A.; Dunworth, J. Rate of return analysis and economies of scale in higher education. Socio-Econ. Plan. Sci. 1974, 8, 273–280.

- Bornmann, L. Peer Review and Bibliometric: Potentials and Problems. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 145–164.

- Osterloh, M.; Frey, B.S. Academic Rankings between the Republic of Science and New Public Management. In The Economics of Economists; Lanteri, A., Vromen, J., Eds.; Cambridge University Press: Cambridge, UK, 2014; pp. 77–78.

- Harman, G. Competitors of Rankings: New Directions in Quality Assurance and Accountability. In University Rankings—Theoretical Basis, Methodology and Impacts on Global Higher Education; Shin, J.C., Toutkoushian, R.K., Teichler, U., Eds.; Springer: Dordrecht, The Netherlands, 2011; pp. 35–53.

- Eisend, M. Is VHB-JOURQUAL2 a Good Measure of Scientific Quality? Assessing the Validity of the Major Business Journal Ranking in German-Speaking Countries. Bus. Res. 2011, 4, 241–274.

- Lorenz, D.; Löffler, A. Robustness of personal rankings: The Handelsblatt example. Bus. Res. 2015, 8, 189–212.

- Schrader, U.; Hennig-Thurau, T. VHB-JOURQUAL2: Method, Results, and Implications of the German Academic Association for Business Research’s Journal Ranking. Bus. Res. 2009, 2, 180–204.

- Blackmore, P. Universities Face a Choice between Prestige and Efficiency, 5 April 2016. Available online: https://www.timeshighereducation.com/blog/universities-face-choice-between-prestige-and-efficiency (accessed on 12 April 2018).

- Destatis. Bildung und Kultur: Finanzen der Hochschulen (Education and Culture: University Finances); Fachserie 11, Reihe 4.5; Statistisches Bundesamt: Wiesbaden, Germany, 2014.

- Abramo, G.; Cicero, T.; D’Angelo, C.A. A sensitivity analysis of researchers’ productivity rankings to the time of citation observation. J. Informetr. 2012, 6, 192–201.

- Baruffaldi, S.H.; Landoni, P. Return mobility and scientific productivity of researchers working abroad: The role of home country linkages. Res. Policy 2012, 41, 1655–1665.

- Beaudry, C.; Larivière, V. Which gender gap? Factors affecting researchers’ scientific impact in science and medicine. Res. Policy 2016, 45, 1790–1817.

- Fedderke, J.W.; Goldschmidt, M. Does massive funding support of researchers work: Evaluating the impact of the South African research chair funding initiative. Res. Policy 2015, 44, 467–482.

| Output Field | Weight | Indicator | Weight |
|---|---|---|---|
| THE Teaching * | 30.00% | Academic reputation survey (THE) | 15.00% |
| | | Doctorates awarded-to-academic staff ratio ** | 6.00% |
| | | Staff-to-student ratio | 4.50% |
| | | Doctorate-to-bachelor’s ratio | 2.25% |
| | | Institutional income *** | 2.25% |
| THE International Outlook * | 7.50% | International-to-domestic-student ratio | 2.50% |
| | | International-to-domestic-staff ratio | 2.50% |
| | | International collaboration (proportion of research journal publications with at least one international co-author) ** | 2.50% |
| THE Research * | 30.00% | Academic reputation survey (THE) | 18.00% |
| | | Research income | 6.00% |
| | | Research productivity (publications in Scopus indexed academic journals per scholar) ** | 6.00% |
| THE Citations * | 30.00% | Number of times a university’s published work is cited by scholars globally, compared with the number of citations a publication of similar type and subject is expected to have. (Bibliometric data supplier Elsevier examined more than 51 million citations to 11.3 million journal articles, published over five years. The data are drawn from the 23,000 academic journals indexed by Scopus and include all indexed publications from 2010 to 2014. Only three types of publications are analysed: journal articles, conference proceedings and reviews; citations to these papers from 2010 to 2015 are collected.) | 30.00% |
| THE Industry Income * | 2.50% | Research income an institution earns from industry *** | 2.50% |
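As a consistency check on the weighting scheme above, the following Python sketch encodes the field and indicator weights, verifies that the indicator weights add up to each field weight and that the five fields add up to 100%, and then forms a simple linear aggregate of field scores. The shortened indicator labels and the helpers `check_weights` and `weighted_aggregate` are hypothetical; the aggregate only illustrates how the listed weights combine field scores and is not claimed to reproduce THE's published overall score. As input it uses the University of Oxford's 2016 field scores reported in this appendix.

```python
import math

# Field weight and indicator weights (in percent) from the THE weighting table.
# Structure: field -> (field weight, {shortened indicator label: indicator weight}).
THE_WEIGHTS = {
    "Teaching": (30.00, {
        "Academic reputation survey": 15.00,
        "Doctorates awarded-to-academic staff ratio": 6.00,
        "Staff-to-student ratio": 4.50,
        "Doctorate-to-bachelor's ratio": 2.25,
        "Institutional income": 2.25,
    }),
    "International Outlook": (7.50, {
        "International-to-domestic-student ratio": 2.50,
        "International-to-domestic-staff ratio": 2.50,
        "International collaboration": 2.50,
    }),
    "Research": (30.00, {
        "Academic reputation survey": 18.00,
        "Research income": 6.00,
        "Research productivity": 6.00,
    }),
    "Citations": (30.00, {"Normalised citation impact": 30.00}),
    "Industry Income": (2.50, {"Research income from industry": 2.50}),
}


def check_weights(weights):
    """Raise ValueError unless indicators sum to their field weight and fields sum to 100%."""
    for field, (field_weight, indicators) in weights.items():
        if not math.isclose(sum(indicators.values()), field_weight):
            raise ValueError(f"indicator weights of {field!r} do not sum to {field_weight}%")
    total = sum(field_weight for field_weight, _ in weights.values())
    if not math.isclose(total, 100.0):
        raise ValueError(f"field weights sum to {total}%, not 100%")
    return True


def weighted_aggregate(field_scores, weights):
    """Hypothetical linear composite: each field score times its field weight, summed."""
    return sum(weights[field][0] / 100.0 * score for field, score in field_scores.items())


# 2016 THE field scores of the University of Oxford, as reported in this appendix.
OXFORD_2016 = {"Teaching": 86.50, "International Outlook": 94.40, "Research": 98.90,
               "Citations": 98.80, "Industry Income": 73.10}

if __name__ == "__main__":
    check_weights(THE_WEIGHTS)
    print(round(weighted_aggregate(OXFORD_2016, THE_WEIGHTS), 2))  # 94.17
```

Keeping the weights as nested data makes it easy to see that Teaching, Research and Citations together carry 90% of the total weight, while International Outlook and Industry Income carry only 10%.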

| Indicator | U. Oxford (UK) 2011 | U. Oxford 2016 | U. Oxford Change 2011–2016 | U. Oxford Arith. Mean ** | U. Würzburg (DE) 2011 | U. Würzburg 2016 | U. Würzburg Change 2011–2016 | U. Würzburg Arith. Mean ** |
|---|---|---|---|---|---|---|---|---|
| THE Teaching * | 88.20 | 86.50 | −1.93% | | 48.70 | 34.60 | −28.95% | |
| THE Int. Outlook * | 77.20 | 94.40 | 22.28% | | 40.30 | 50.90 | 26.30% | |
| THE Research * | 93.90 | 98.90 | 5.32% | | 40.90 | 35.80 | −12.47% | |
| THE Citations * | 95.10 | 98.80 | 3.89% | | 60.40 | 79.10 | 30.96% | |
| THE Industry Income * | 73.50 | 73.10 | −0.54% | 5.80% | 27.90 | 47.90 | 71.68% | 17.51% |
| CWTS_P | 10,701.00 | 13,300.00 | 24.29% | | 3219.00 | 3349.00 | 4.04% | |
| CWTS_TCS | 89,149.00 | 127,888.00 | 43.45% | | 22,932.00 | 26,866.00 | 17.16% | |
| CWTS_TNCS | 15,464.00 | 20,373.00 | 31.74% | | 3748.00 | 3998.00 | 6.67% | |
| CWTS_P_top1 | 215.00 | 311.00 | 44.65% | | 36.00 | 51.00 | 41.67% | |
| CWTS_P_top50 | 6593.00 | 8408.00 | 27.53% | 34.33% | 1883.00 | 1899.00 | 0.85% | 14.08% |
| Budget (Mil. €, Input) | 1119.77 | 1349.76 | 20.54% | | 806.97 | 823.58 | 2.06% | |
| Academic Staff (Input) | 5375.00 | 6120.00 | 13.86% | 17.20% | 3281.00 | 3420.00 | 4.24% | 3.15% |
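The Change and Arith. Mean columns can be reproduced from the 2011 and 2016 values. The Python sketch below assumes, as the figures indicate, that each change is the relative difference (2016 − 2011) / 2011 and that each Arith. Mean is the unweighted mean of the changes within its block (THE, CWTS, inputs). Under these assumptions it reproduces every reported change and block mean to two decimals. The names `pct_change` and `summarize` are illustrative only.

```python
from statistics import mean

# (2011 value, 2016 value) per indicator, grouped into the table's three blocks.
DATA = {
    "U. Oxford": {
        "THE": {"Teaching": (88.20, 86.50), "Int. Outlook": (77.20, 94.40),
                "Research": (93.90, 98.90), "Citations": (95.10, 98.80),
                "Industry Income": (73.50, 73.10)},
        "CWTS": {"P": (10701, 13300), "TCS": (89149, 127888), "TNCS": (15464, 20373),
                 "P_top1": (215, 311), "P_top50": (6593, 8408)},
        "Input": {"Budget": (1119.77, 1349.76), "Academic Staff": (5375, 6120)},
    },
    "U. Würzburg": {
        "THE": {"Teaching": (48.70, 34.60), "Int. Outlook": (40.30, 50.90),
                "Research": (40.90, 35.80), "Citations": (60.40, 79.10),
                "Industry Income": (27.90, 47.90)},
        "CWTS": {"P": (3219, 3349), "TCS": (22932, 26866), "TNCS": (3748, 3998),
                 "P_top1": (36, 51), "P_top50": (1883, 1899)},
        "Input": {"Budget": (806.97, 823.58), "Academic Staff": (3281, 3420)},
    },
}


def pct_change(start, end):
    """Relative change from start to end, in percent."""
    return (end - start) / start * 100.0


def summarize(groups):
    """Per-indicator changes and the unweighted arithmetic mean of each block."""
    result = {}
    for group, indicators in groups.items():
        changes = {name: pct_change(a, b) for name, (a, b) in indicators.items()}
        result[group] = (changes, mean(changes.values()))
    return result


if __name__ == "__main__":
    for university, groups in DATA.items():
        for group, (changes, group_mean) in summarize(groups).items():
            print(f"{university:12s} {group:6s} mean change {group_mean:6.2f}%")
```

Because the block means are unweighted, every indicator counts equally within its block, regardless of the weight THE assigns to the underlying field.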

| n = 420 | Budget | Acad. Staff | THE Teaching | THE Int. Outlook | THE Research | THE Citations | THE Ind. Inc. | CWTS_P | CWTS_TCS | CWTS_TNCS | CWTS_P_top1 | CWTS_P_top50 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Budget | 1.000 | 0.860 | 0.533 | −0.084 | 0.386 | 0.265 | 0.077 | 0.678 | 0.683 | 0.654 | 0.594 | 0.665 |
| Acad. Staff | | 1.000 | 0.490 | −0.130 | 0.378 | 0.172 | 0.186 | 0.681 | 0.631 | 0.639 | 0.579 | 0.656 |
| THE Teaching | | | 1.000 | 0.228 | 0.890 | 0.231 | 0.213 | 0.690 | 0.724 | 0.733 | 0.746 | 0.711 |
| THE Int. Outlook | | | | 1.000 | 0.173 | 0.375 | −0.142 | 0.053 | 0.149 | 0.138 | 0.240 | 0.092 |
| THE Research | | | | | 1.000 | 0.198 | 0.273 | 0.700 | 0.709 | 0.733 | 0.734 | 0.718 |
| THE Citations | | | | | | 1.000 | −0.252 | 0.296 | 0.423 | 0.368 | 0.408 | 0.334 |
| THE Industry Income | | | | | | | 1.000 | 0.167 | 0.128 | 0.178 | 0.173 | 0.174 |
| CWTS_P | | | | | | | | 1.000 | 0.963 | 0.980 | 0.921 | 0.995 |
| CWTS_TCS | | | | | | | | | 1.000 | 0.985 | 0.959 | 0.978 |
| CWTS_TNCS | | | | | | | | | | 1.000 | 0.974 | 0.994 |
| CWTS_P_top1 | | | | | | | | | | | 1.000 | 0.949 |
| CWTS_P_top50 | | | | | | | | | | | | 1.000 |
| “Loading” (arith. mean r) | 0.483 | 0.468 | 0.563 | 0.099 | 0.536 | 0.256 | 0.107 | 0.648 | 0.666 | 0.671 | 0.662 | 0.661 |
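The “Loading” row appears to be the mean correlation of each variable with the eleven other variables, excluding the unit diagonal. The Python sketch below rebuilds the full symmetric matrix from the printed upper triangle and computes that mean. This definition is an assumption inferred from the numbers, not a procedure stated in the table; because the sketch starts from correlations rounded to three decimals, it agrees with the reported row only to within ±0.001. The helpers `full_matrix` and `loadings` are hypothetical names.

```python
# Variable order of the correlation matrix (n = 420 observations).
NAMES = ["Budget", "Acad. Staff", "THE Teaching", "THE Int. Outlook", "THE Research",
         "THE Citations", "THE Industry Income", "CWTS_P", "CWTS_TCS", "CWTS_TNCS",
         "CWTS_P_top1", "CWTS_P_top50"]

# Strict upper triangle, row by row, without the unit diagonal (values as printed).
UPPER = [
    [0.860, 0.533, -0.084, 0.386, 0.265, 0.077, 0.678, 0.683, 0.654, 0.594, 0.665],
    [0.490, -0.130, 0.378, 0.172, 0.186, 0.681, 0.631, 0.639, 0.579, 0.656],
    [0.228, 0.890, 0.231, 0.213, 0.690, 0.724, 0.733, 0.746, 0.711],
    [0.173, 0.375, -0.142, 0.053, 0.149, 0.138, 0.240, 0.092],
    [0.198, 0.273, 0.700, 0.709, 0.733, 0.734, 0.718],
    [-0.252, 0.296, 0.423, 0.368, 0.408, 0.334],
    [0.167, 0.128, 0.178, 0.173, 0.174],
    [0.963, 0.980, 0.921, 0.995],
    [0.985, 0.959, 0.978],
    [0.974, 0.994],
    [0.949],
]


def full_matrix(upper):
    """Rebuild the symmetric correlation matrix from its strict upper triangle."""
    n = len(upper) + 1
    r = [[1.0] * n for _ in range(n)]
    for i, row in enumerate(upper):
        if len(row) != n - 1 - i:
            raise ValueError(f"row {i} should hold {n - 1 - i} values, got {len(row)}")
        for k, value in enumerate(row):
            j = i + 1 + k
            r[i][j] = r[j][i] = value
    return r


def loadings(r):
    """Assumed definition: mean correlation of each variable with all other variables."""
    n = len(r)
    return [sum(r[i][j] for j in range(n) if j != i) / (n - 1) for i in range(n)]


if __name__ == "__main__":
    for name, value in zip(NAMES, loadings(full_matrix(UPPER))):
        print(f"{name:20s} {value:.3f}")
```

Read this way, the CWTS indicators and THE Teaching and Research share most of their variance with the inputs and with each other, while THE International Outlook and THE Industry Income are nearly uncorrelated with the rest.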

| University | Run I—Eff. Score | Returns to Scale | Run II—Eff. Score | Returns to Scale | Run III—Eff. Score | Returns to Scale |
|---|---|---|---|---|---|---|
| Aarhus University | 66.00% | Decrease | 45.00% | Decrease | 66.00% | Decrease |
| Bielefeld University | 83.10% | Decrease | 32.30% | Increase | 83.10% | Decrease |
| Delft University of Technology | 100.00% | Constant | 54.00% | Decrease | 100.00% | Constant |
| Durham University | 99.20% | Decrease | 66.70% | Increase | 99.30% | Decrease |
| ETH Lausanne | 100.00% | Constant | 54.10% | Decrease | 100.00% | Constant |
| Eindhoven University of Technology | 100.00% | Constant | 45.50% | Decrease | 100.00% | Constant |
| Erasmus University Rotterdam | 89.40% | Decrease | 100.00% | Constant | 100.00% | Constant |
| Ghent University | 97.80% | Decrease | 71.40% | Decrease | 99.10% | Decrease |
| Goethe University Frankfurt | 74.40% | Decrease | 40.10% | Decrease | 74.40% | Decrease |
| Heidelberg University | 76.30% | Decrease | 53.90% | Decrease | 76.30% | Decrease |
| Humboldt University of Berlin | 94.90% | Decrease | 100.00% | Constant | 100.00% | Constant |
| Imperial College London | 100.00% | Constant | 100.00% | Constant | 100.00% | Constant |
| KTH Royal Institute of Technology | 100.00% | Constant | 58.70% | Increase | 100.00% | Constant |
| KU Leuven | 98.70% | Decrease | 76.70% | Decrease | 100.00% | Constant |
| Karlsruhe Institute of Technology | 73.40% | Decrease | 43.90% | Decrease | 73.70% | Decrease |
| Karolinska Institute | 100.00% | Constant | 100.00% | Constant | 100.00% | Constant |
| King’s College London | 89.00% | Decrease | 68.00% | Decrease | 91.90% | Decrease |
| LMU Munich | 80.30% | Decrease | 59.30% | Decrease | 80.30% | Decrease |
| LSE London | 100.00% | Constant | 47.20% | Increase | 100.00% | Constant |
| Lancaster University | 92.30% | Decrease | 85.80% | Increase | 94.70% | Decrease |
| Leiden University | 100.00% | Constant | 82.70% | Decrease | 100.00% | Constant |
| Lund University | 76.50% | Decrease | 83.20% | Decrease | 84.70% | Decrease |
| Newcastle University | 90.00% | Decrease | 100.00% | Constant | 100.00% | Constant |
| Queen Mary University of London | 98.50% | Decrease | 33.60% | Increase | 98.50% | Decrease |
| RWTH Aachen University | 69.30% | Decrease | 35.00% | Decrease | 69.30% | Decrease |
| Stockholm University | 82.50% | Decrease | 36.70% | Decrease | 82.50% | Decrease |
| Swedish U. of Agricultural Sciences | 100.00% | Constant | 38.50% | Increase | 100.00% | Constant |
| Technical University of Denmark | 98.30% | Decrease | 45.20% | Decrease | 98.30% | Decrease |
| Technical University of Munich | 87.80% | Decrease | 44.70% | Decrease | 87.90% | Decrease |
| Trinity College Dublin | 100.00% | Constant | 65.50% | Increase | 100.00% | Constant |
| University College Dublin | 100.00% | Constant | 50.60% | Increase | 100.00% | Constant |
| University College London | 97.80% | Decrease | 100.00% | Constant | 100.00% | Constant |
| University of Aberdeen | 97.70% | Decrease | 74.60% | Increase | 100.00% | Constant |
| University of Amsterdam | 67.00% | Decrease | 88.10% | Decrease | 88.10% | Decrease |
| University of Basel | 98.40% | Decrease | 49.50% | Decrease | 98.60% | Decrease |
| University of Bergen | 81.60% | Decrease | 41.00% | Decrease | 81.60% | Decrease |
| University of Birmingham | 76.00% | Decrease | 63.10% | Decrease | 81.30% | Decrease |
| University of Bonn | 69.50% | Decrease | 39.90% | Decrease | 69.50% | Decrease |
| University of Bristol | 88.10% | Decrease | 92.30% | Increase | 98.30% | Decrease |
| University of Cambridge | 100.00% | Constant | 100.00% | Constant | 100.00% | Constant |
| University of Copenhagen | 61.60% | Decrease | 71.90% | Decrease | 71.90% | Decrease |
| University of Dundee | 96.80% | Decrease | 69.90% | Increase | 100.00% | Constant |
| University of East Anglia | 82.30% | Decrease | 60.00% | Increase | 84.80% | Decrease |
| University of Edinburgh | 92.90% | Decrease | 66.10% | Decrease | 92.90% | Decrease |
| University of Exeter | 77.50% | Decrease | 44.70% | Increase | 77.50% | Decrease |
| University of Freiburg | 83.40% | Decrease | 37.80% | Decrease | 83.40% | Decrease |
| University of Geneva | 95.80% | Decrease | 54.20% | Decrease | 98.00% | Decrease |
| University of Glasgow | 81.30% | Decrease | 59.40% | Decrease | 81.30% | Decrease |
| University of Groningen | 77.00% | Decrease | 79.60% | Decrease | 79.90% | Decrease |
| University of Göttingen | 99.20% | Decrease | 53.80% | Decrease | 99.20% | Decrease |
| University of Helsinki | 80.80% | Decrease | 70.00% | Decrease | 85.40% | Decrease |
| University of Konstanz | 100.00% | Constant | 100.00% | Constant | 100.00% | Constant |
| University of Lausanne | 87.20% | Decrease | 54.80% | Decrease | 88.10% | Decrease |
| University of Leeds | 64.80% | Decrease | 60.00% | Decrease | 67.30% | Decrease |
| University of Liverpool | 71.80% | Decrease | 55.10% | Decrease | 72.80% | Decrease |
| University of Manchester | 81.90% | Decrease | 83.80% | Decrease | 87.00% | Decrease |
| University of Nottingham | 76.60% | Decrease | 63.50% | Decrease | 82.30% | Decrease |
| University of Oxford | 100.00% | Constant | 100.00% | Constant | 100.00% | Constant |
| University of Sheffield | 71.80% | Decrease | 69.70% | Decrease | 79.20% | Decrease |
| University of Southampton | 82.20% | Decrease | 65.50% | Decrease | 84.70% | Decrease |
| University of St Andrews | 100.00% | Constant | 100.00% | Constant | 100.00% | Constant |