Student Engagement and Blended Learning: Making the Assessment Connection

Abstract

1. Introduction

- (1) Active and collaborative learning
- (2) Student interactions with faculty members
- (3) Level of academic challenge
- (4) Enriching educational experiences
- (5) Supportive campus environment

- (1) How are instructors designing assessment activities to incorporate student use of collaborative learning applications in blended courses?
- (2) How do students perceive the value of these digital tools?
- (3) Is there a correlation between the use of these tools, the level of perceived student engagement, and academic achievement in blended courses?

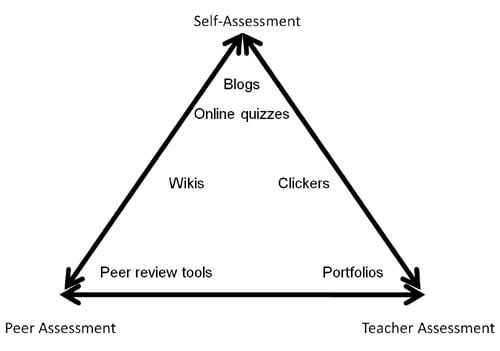

2. Theoretical Framework

3. Methods of Investigation

3.1. Data Collection

3.2. Data Analysis

3.2.1. Quantitative Data

3.2.2. Qualitative Data

4. Findings

- (1) How are instructors designing assessment activities to incorporate student use of collaborative learning applications in blended courses?
- (2) How do students perceive the value of these digital tools?
- (3) Is there a correlation between the use of these tools, the level of perceived student engagement in these courses, and academic achievement in blended courses?

4.1. Demographic Profile and Technology Ownership of the Study Participants

Student Demographics

| Item | Percentage/Number |
|---|---|
| Female | 69% |
| 24 years of age or less | 82% |
| Employed (part-time 62%; full-time 13%) | 75% |
| Average hours of work per week | 16 |
| Off-campus accommodation within driving distance | 84% |
| First year of studies | 75% |
| Average number of courses enrolled in/semester | 4 |
| Core course in program | 78% |

4.2. Student Digital Technology Access and Use in the Classroom

| Technology Access and Proficiency | Percentage |
|---|---|
| Personal rating of computer skills as intermediate/advanced | 63/34 |
| Access to high-speed home Internet connection | 96 |
| Have your own cell phone | 95 |
| Have your own MP3 digital music player | 88 |
| Have your own laptop computer | 82 |

| Technology | Often/Very Often | Never/Sometimes |
|---|---|---|
| Accessed course materials online (e.g., via Blackboard site, course wiki) | 96% | 4% |
| Used email or a discussion forum to communicate with the instructor(s) of this course | 49% | 51% |
| Worked in teams or groups using information and communication technology (e.g., clickers, Blackboard, wikis, blogs, Google Docs) | 48% | 52% |
| Used an MRC Library online database (e.g., EBSCO, ProQuest) to find material for a course assignment or project | 38% | 62% |
| Used real-time communication tools (e.g., Elluminate, cell phone, chat group, Internet, instant messaging) to discuss or complete an assignment with classmates in this course | 38% | 62% |
| Used a social networking application (e.g., Twitter, Facebook, MySpace, Ning) for discussion of course material, assignments or project work | 34% | 66% |
| Used clickers (i.e., personal response systems) in class | 32% | 68% |
| Used a computer and/or a digital projector to make a class presentation | 32% | 68% |
| Wiki or other collaborative writing tool (e.g., Google Docs) for course assignments or projects | 27% | 73% |
| Media sharing application (e.g., YouTube, Flickr, Podomatic, Slideshare) to create, share or access information for a course assignment or project | 18% | 82% |
| Blog for course-related work such as assignments or projects | 13% | 87% |
| Social bookmarking tool (e.g., Delicious, Furl, Connotea) to manage/organize and share online resources in this course | 5% | 95% |
| Virtual world application (e.g., Second Life, The Palace, Moove) for course assignments or project work | 2% | 98% |
| Mashup application (e.g., Visuwords, Quintura, Intel’s Mash Maker) for course assignments or project work | 1% | 99% |

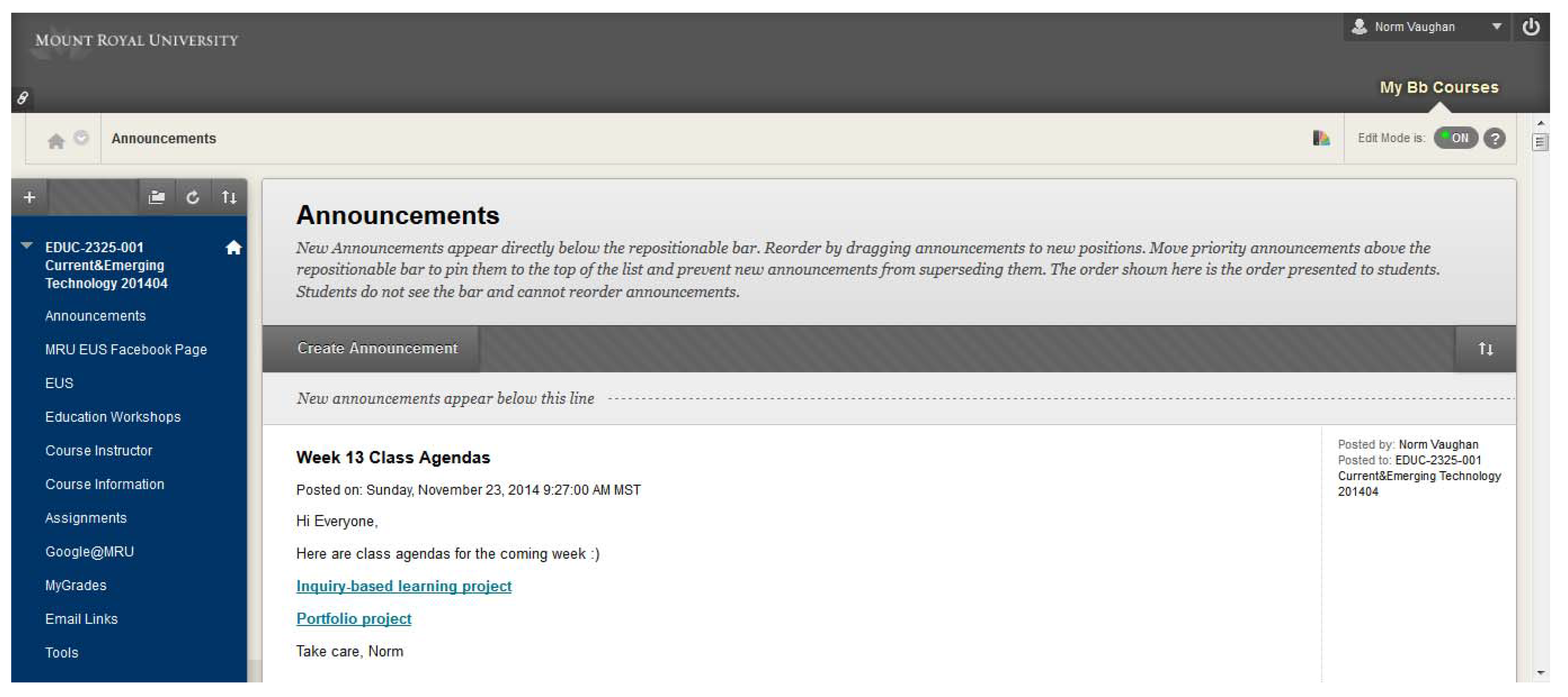

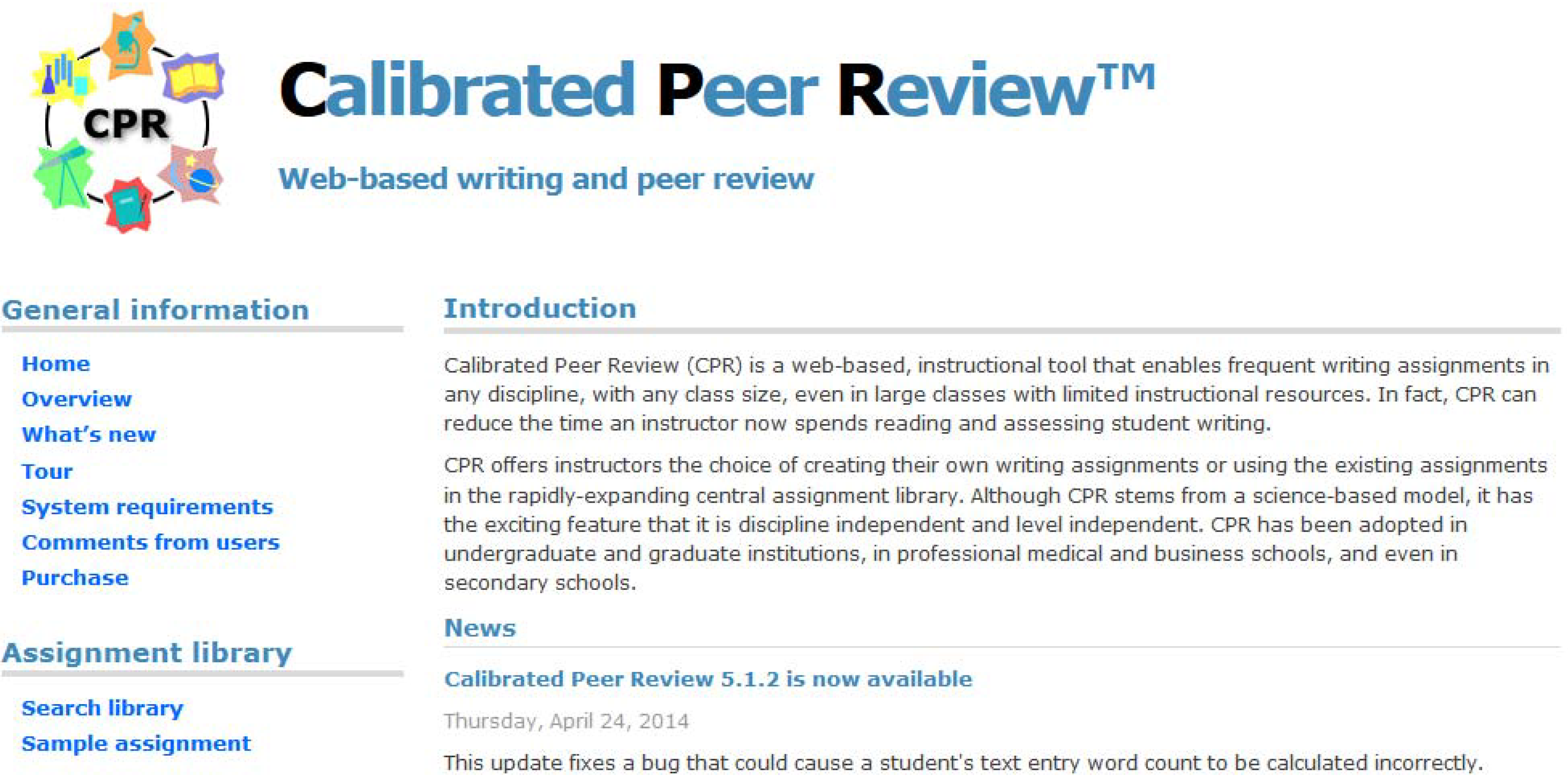

4.3. Assessment Practices and Collaborative Learning Applications

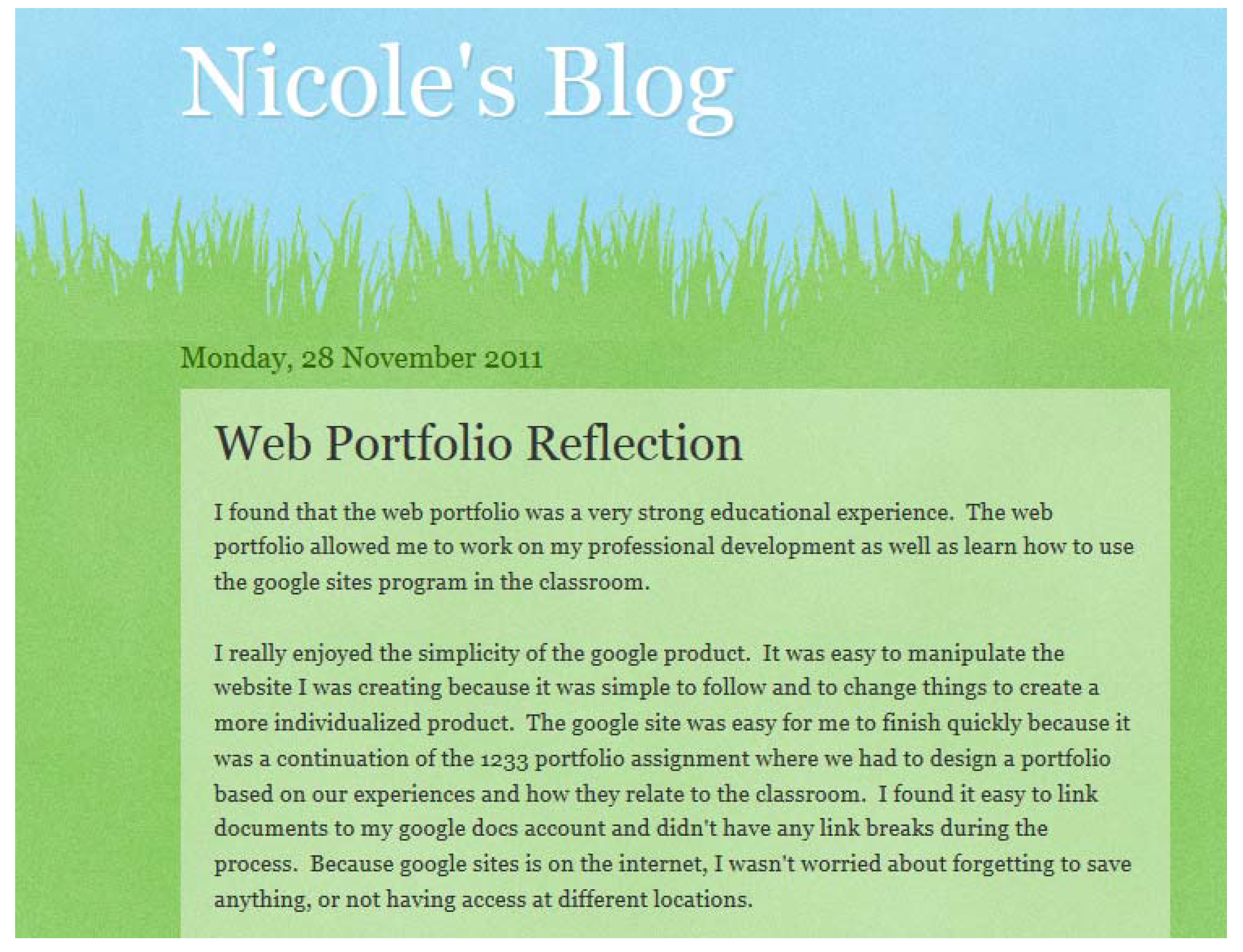

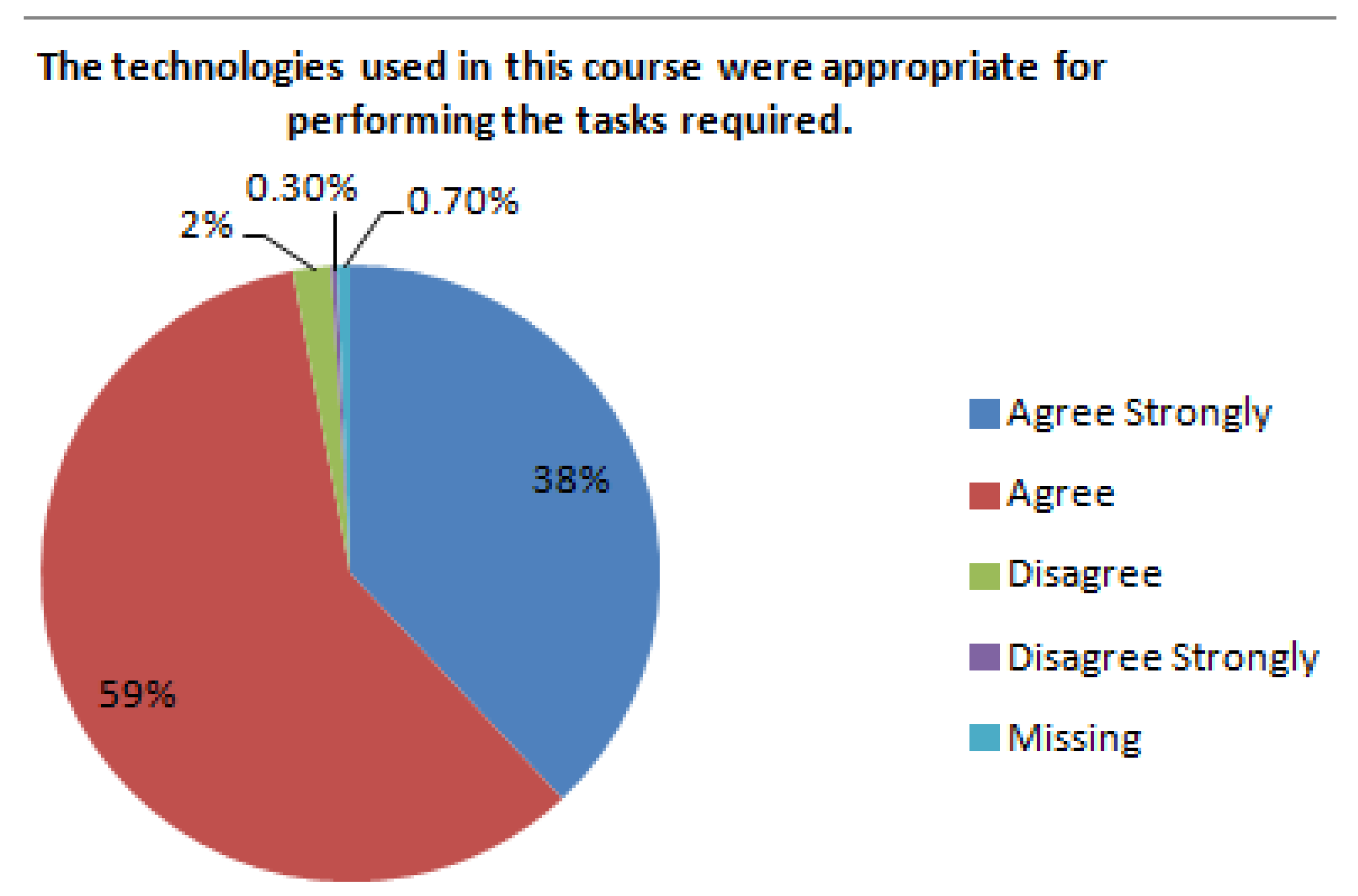

4.4. Student Perceptions of Assessment Practices and Collaborative Learning Applications

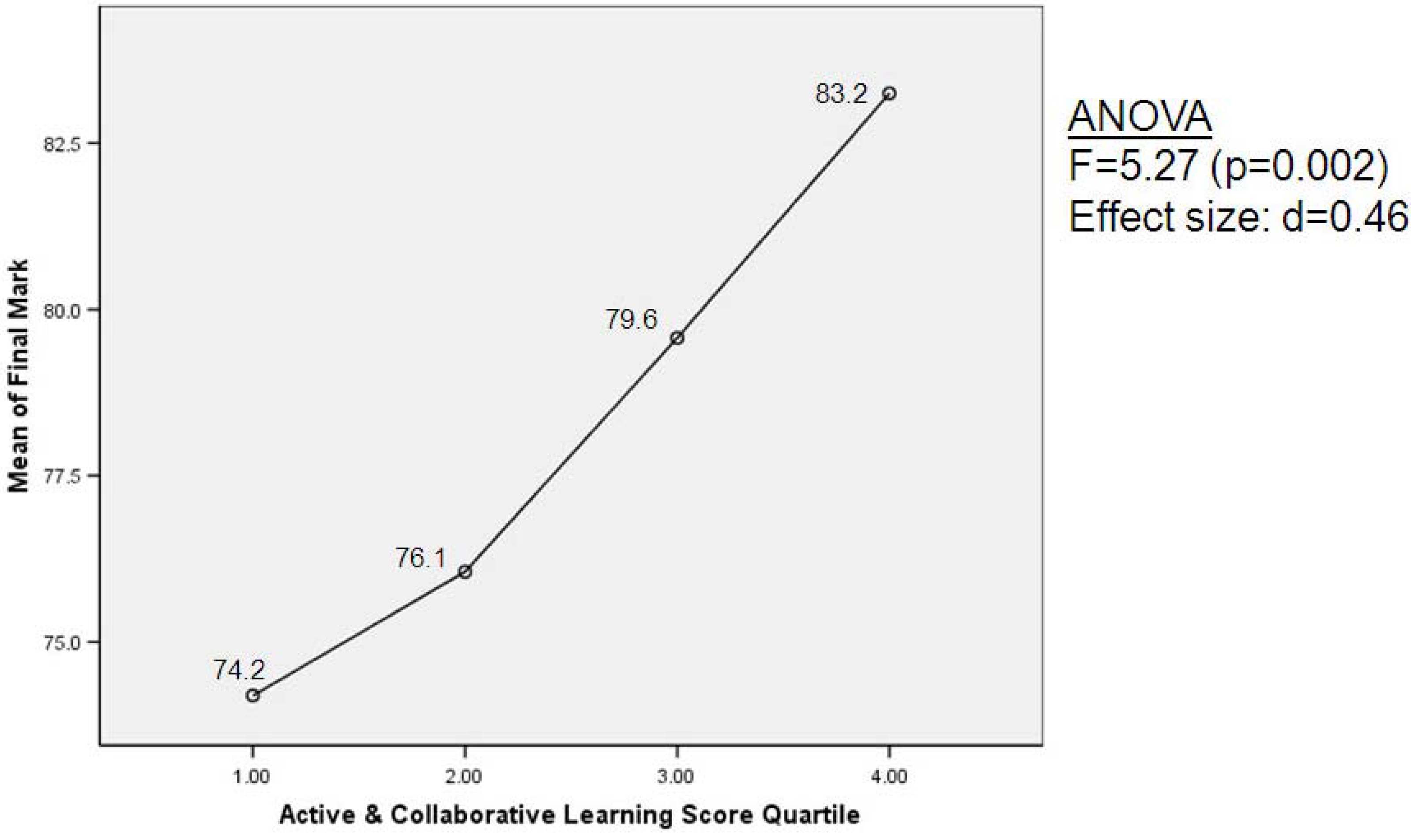

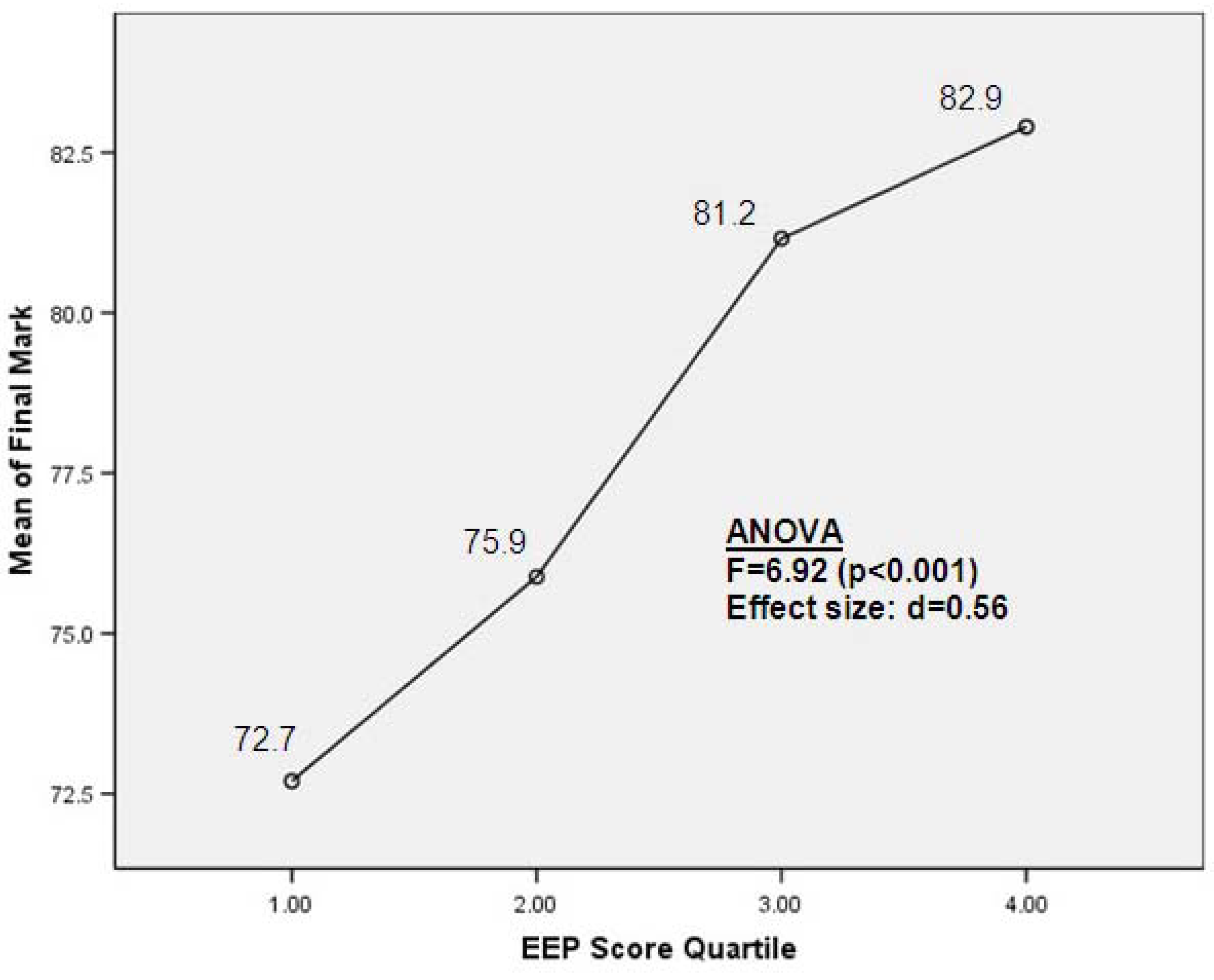

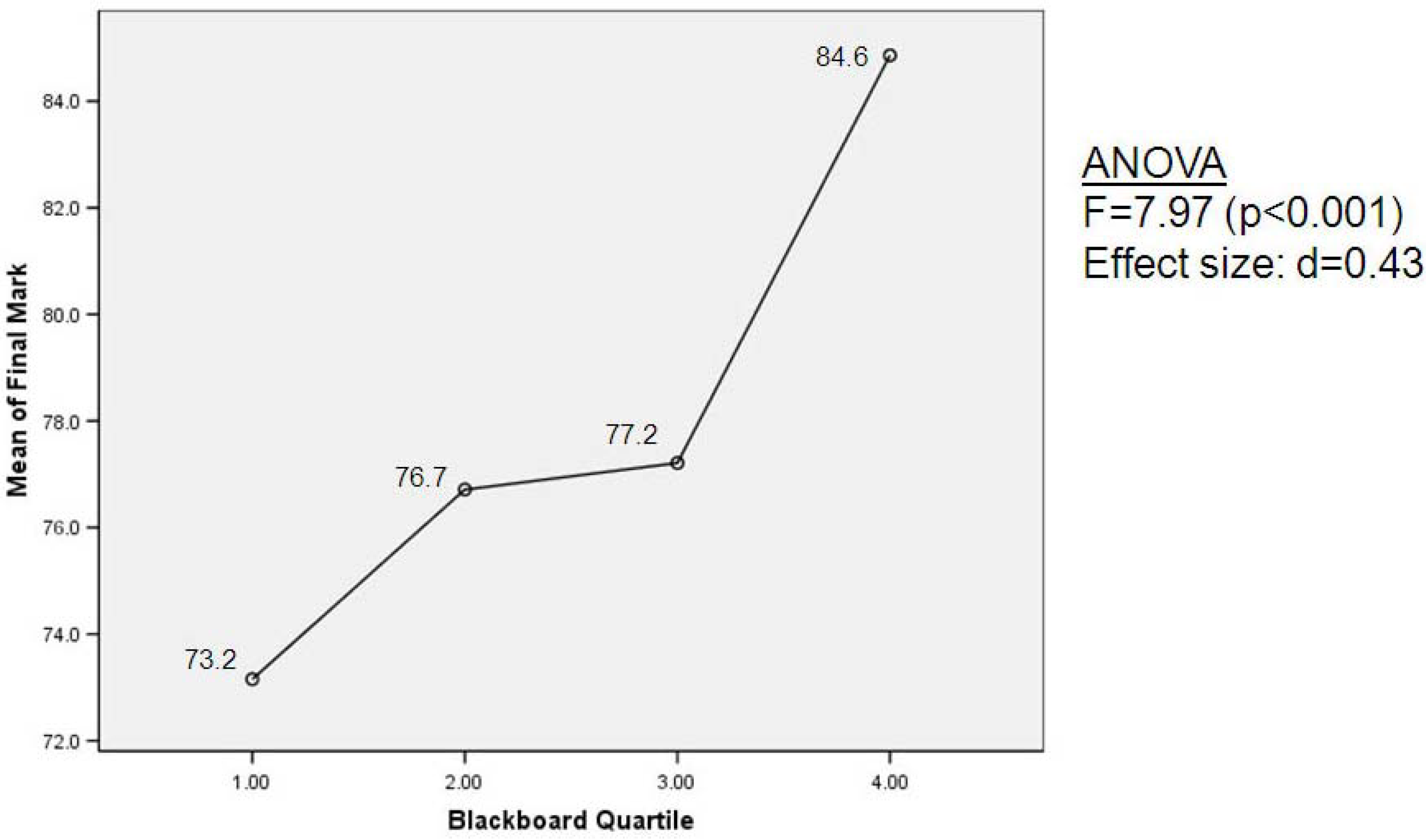

4.5. Associations between the Use of Collaborative Learning Applications, Engagement and Academic Achievement

| Scale | Cronbach's Alpha |
|---|---|
| Engagement in effective educational practices (20 items) | 0.83 |
| Active and collaborative learning (7 items) | 0.78 |
| Student-faculty interaction (6 items) | 0.71 |
| Level of academic challenge (6 items) | 0.68 |
| Intensity of course-related technology use (12 items) | 0.69 |
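The reliability coefficients in the table above are Cronbach's alpha values, one per scale. As a minimal sketch of how such a coefficient can be computed from item-level responses, the Python below applies the standard formula, alpha = k/(k-1) × (1 − Σ item variances / variance of total scores). The function name `cronbach_alpha` and the small response matrix are hypothetical and purely illustrative; they are not the study's instrument or data.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    Illustrative helper (hypothetical name), using sample variances throughout.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(row) != k for row in responses):
        raise ValueError("every respondent must answer all k items")

    # Variance of each item across respondents.
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Variance of each respondent's summed scale score.
    total_variance = variance([sum(row) for row in responses])

    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical responses: 4 respondents x 3 items (NOT data from the study).
toy_responses = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 4, 3],
    [4, 4, 5],
]
alpha = cronbach_alpha(toy_responses)
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 indicate that the items of a scale vary together; a scale whose items are perfectly parallel yields exactly 1.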

| Variables | r |
|---|---|
| Engagement in effective educational practices | 0.303 ** |
| Active and Collaborative Learning (ACL) | 0.260 ** |
| Level of Academic Challenge (LAC) | 0.181 * |
| Student Interactions with Faculty Members (SFI) | 0.148 * |

| Engagement Indicators | Blackboard Use | Intensity of Course-Related Technology Use |
|---|---|---|
| Engagement in effective educational practices | r = 0.270 ** | r = 0.643 ** |
| Active and collaborative learning | r = 0.177 ** | r = 0.482 ** |
| Student-faculty interaction | r = 0.189 ** | r = 0.413 ** |
| Level of academic challenge | r = 0.187 ** | r = 0.339 ** |
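The r values in the tables above are correlation coefficients. Assuming they are Pearson product-moment correlations (this section does not name the estimator), the following minimal Python sketch shows how one such coefficient is computed for a pair of variables. The function name `pearson_r` and the example scores are hypothetical and are not taken from the study.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences.

    Illustrative helper (hypothetical name); assumes neither sequence is constant.
    """
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")

    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Sum of cross-products and sums of squared deviations from the means.
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)

    return s_xy / sqrt(s_xx * s_yy)


# Hypothetical scores for five students (NOT data from the study):
# a technology-use intensity score and an engagement scale score.
technology_use = [10, 14, 18, 22, 30]
engagement = [40, 38, 52, 55, 61]
r = pearson_r(technology_use, engagement)
print(f"r = {r:.3f}")
```

A coefficient near 0 indicates little linear association, while values approaching 1 or -1 indicate a strong positive or negative linear association. A correlation of this kind describes association only and does not by itself establish that technology use causes engagement.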

5. Discussion

- (1) How are instructors designing course assessment activities to incorporate student use of collaborative learning applications in blended courses?
- (2) How do students perceive the value of these digital tools?
- (3) Is there a correlation between the use of these tools, the level of perceived student engagement in these courses, and academic achievement in blended courses?

5.1. Assessment Practices and Collaborative Learning Applications

5.2. Student Perceptions of Assessment Practices and Collaborative Learning Applications

5.3. Associations between the Use of Collaborative Learning Applications, Engagement and Academic Achievement

6. Conclusions

Conflicts of Interest


© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).

Vaughan, N. Student Engagement and Blended Learning: Making the Assessment Connection. Educ. Sci. 2014, 4, 247-264. https://doi.org/10.3390/educsci4040247