4. Results

RQ1: What are the students’ perceptions of the quality and relevance of the course content when they are unaware that it is AI-designed?

The themes of the data analysis were divided into four categories: (1) attitudes and perceptions of the course materials, (2) noticed changes in the course materials, (3) competency in AI application, and (4) overall course experience.

Regarding their attitudes and perceptions of the course materials, all the participants agreed that the content was well-organized and easy to understand. As such, their attitudes and perceptions of the course materials were positive overall. Some examples are mentioned in their reflection notes:

In terms of curriculum design, the content was designed to stimulate motivation or actual interest before the lesson compared to previous sessions. This content increased interest and focus in the lesson, and the connection to the lesson seemed seamless (extracted from Teacher B’s reflection notes).

With respect to delivery methods, the content matches well with the expectations of every lesson. They contain the main points briefly and in an understandable way (extracted from Teacher C’s reflection notes).

During the interviews, most participants said that they liked how the professor conveyed the course materials. Here is another example.

The professor provided sufficient information in the course on the PPT slides, making it easy for me to catch up. My English was not good, so I thought it could be challenging to follow the lecture at the beginning of the semester. However, I could receive sufficient background information from the course materials, so it was good for me to follow up (Teacher C, second interview).

(2) Noticed changes in the course materials

Among the three participants, Teacher A noticed critical changes in the course materials during the semester. Specifically, Teacher A noted the changes in the content displayed because she thought the first and second halves of the PPT slides looked very different. The following is the part of the transcript from her second interview.

In the second half of the course, I noticed that the PPT materials were changed. In the first half of the semester, I felt like the professor made those PPT slides by himself. However, in the second half of the semester, the content display and formatting were changed and different. Thus, I thought the professor used the AI tool for course PPT slides for reasons… I’m not too fond of the second half of the PPT slides because… AI tools created those (Teacher A, second interview).

In the reflection note, Teacher A mentioned the following:

Week 11 and 12: The PPTs could disrupt the course flow and make it harder for me to grasp the material. The PPTs seemed to be made by AI, not by my professor (extracted from Teacher A’s reflection notes).

On the other hand, the other two participants did not notice changes in the content displayed in the course materials. The authors mentioned that the background of the PPT slides was slightly changed, but the content display and delivery methods were not changed.

In the second half of the semester, the background and format of the PPT slides were changed, but this did not impact my understanding of the content. Additionally, the professor told us that he was using the AI tool to create the PPT background, so this was okay (Teacher B, second interview).

The first half of the semester is mainly arranged with sentences. The second half of the semester consists of more diverse and concise designs and pictures, which are good for understanding. Both designs are appropriate for the curriculum because, in the early phase of the semester, we need to familiarize ourselves with the lesson, so we need more detailed written explanations (extracted from Teacher C’s reflection notes).

In sum, the participants held different opinions about the changes in content and materials, depending on their understanding and experience of the course.

(3) Their confidence in AI applications

All three participants mentioned that their self-confidence had improved when they compared their competency at the start of the semester with their competency at the end. However, prior background and skills related to AI affected their actual ability to apply AI in their classrooms. Specifically, participants with prior knowledge and skills in AI, such as Teacher C, showed high competency.

I am fairly confident in working with AI applications. I would say 8 out of 10 scale in competency levels. My previous experience developing apps and tools helped me get better ideas for what I will do for my future research projects. I am working on making apps to help university applications for high school kids, so the course helped me build new ideas and make them real for final assignments. I am enjoying it now (Teacher C, second interview).

However, participants who lacked prior knowledge and skills in AI showed low competency. For instance, Teacher B, who reported low competency, mentioned in the interview:

I thought I was at the beginner level of AI application, so my competency in AI application was not that high. What I can do is create a lesson plan that incorporates AI components more effectively. However, I need somebody’s help making AI technology in real practice. So, I wish I could create something new, like app development for the final project assignment, but it was challenging now (Teacher B, second interview).

Some teachers’ reflection notes also showed a lack of competency in practical AI applications.

I can understand what AI is, how it works, and how it can have an impact on society. However, as a teacher, I still struggle to design and apply AI systems as pedagogical tools for my lessons (extracted from Teacher A’s reflection notes).

In sum, each participant’s competency in AI applications differed according to their prior knowledge, expertise, and experience with AI.

(4) Overall experiences while taking the course

Regarding overall course experiences, all participants agreed that the course environment was learner-friendly and encouraged a collaborative learning process. Additionally, the professor effectively facilitated the participants’ in-class activities and participation. Thus, all of them were quite satisfied with the course. Some examples are below.

I liked the classroom atmosphere because we were working together during the course, and it was like American higher education with a small number of graduate students. I prefer this kind of course format because I do not like lecture-only classes in the programs with other courses. I like to receive hands-on activities and assignments that I could use for my teaching classrooms (Teacher A, second interview).

This course was one of the practical courses we took from teacher preparation programs. We took the lecture part and had enough chance to work on collaborative or individual projects afterward. It was an encouraging learning environment that we all enjoyed (Teacher B, second interview).

RQ2: What are the affordances and limitations of AI-developed courses and lesson designs?

All course information, including course topics, lesson content, midterm projects, and final projects, was created with AI. Although the content was created by AI, there was an iterative process of refinement through prompts to elaborate on the existing information. This process concentrated on developing existing information rather than explicitly requesting new information that the instructor identified as potentially relevant but not initially provided by AI.

4.1. AI Affordances

To assess the quality and coherence of topics generated by ChatGPT, four distinct queries were conducted, each requesting twelve topics. All four outputs consistently featured lessons on chatbots and virtual assistants, natural language processing, and learning analytics, among others. However, there were some minor differences in some of the outputs. For example, the first query separated machine learning and deep learning into separate lessons, whereas the second query incorporated this information into the first lesson (introduction to AI in education) and the second lesson (AI and personalized learning). Although machine learning and deep learning are important aspects of AI, dedicating entire lessons to these topics was not suitable for educators. Instead, these subjects were integrated into other topics. The four different queries generated topics that were appropriate and met the expectations for a course focused on AI and education with education majors.
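The comparison across the four queries was done by reading the outputs. As a rough illustration only, the same check could be sketched in Python by counting how many query outputs each topic appears in. The function names and the abbreviated topic lists below are hypothetical; they are not the actual ChatGPT outputs and not the procedure used in this study.

```python
from collections import Counter


def normalize(topic: str) -> str:
    """Lowercase a topic title and collapse whitespace so titles compare equal."""
    return " ".join(topic.lower().split())


def topic_consistency(query_outputs):
    """Split topics into those present in every query output and those in only some."""
    counts = Counter()
    for topics in query_outputs:
        # Use a set so a topic is counted at most once per query output.
        counts.update({normalize(t) for t in topics})
    n = len(query_outputs)
    shared = sorted(t for t, c in counts.items() if c == n)
    partial = sorted(t for t, c in counts.items() if c < n)
    return shared, partial


# Abbreviated, illustrative lists (assumption: NOT the real outputs of the four queries).
queries = [
    ["Chatbots and Virtual Assistants", "Natural Language Processing",
     "Learning Analytics", "Machine Learning", "Deep Learning"],
    ["Chatbots and virtual assistants", "Natural language processing",
     "Learning analytics", "AI and Personalized Learning"],
    ["Chatbots and Virtual Assistants", "Natural Language Processing",
     "Learning Analytics"],
    ["Chatbots and Virtual Assistants", "Natural Language Processing",
     "Learning Analytics"],
]

shared, partial = topic_consistency(queries)
print("In all four outputs:", shared)
print("In only some outputs:", partial)
```

Exact title matching is a simplification: a topic that is folded into another lesson under a different title, as happened with machine learning in the second query, would still need to be judged by a human reader.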

Another affordance offered by AI was the ability to generate subtopics within the theme of each lesson. During the first part of the semester, ChatGPT-3.5 and ChatGPT-4 generated topic information when prompted to create a constructivist lesson plan. In the second part of the semester, the ‘brainstorming’ feature of the teachersbuddy website offered numerous potential subtopics for the main subject. As with the 12 course topics, the subtopics generated for each lesson were comprehensive and coherent.

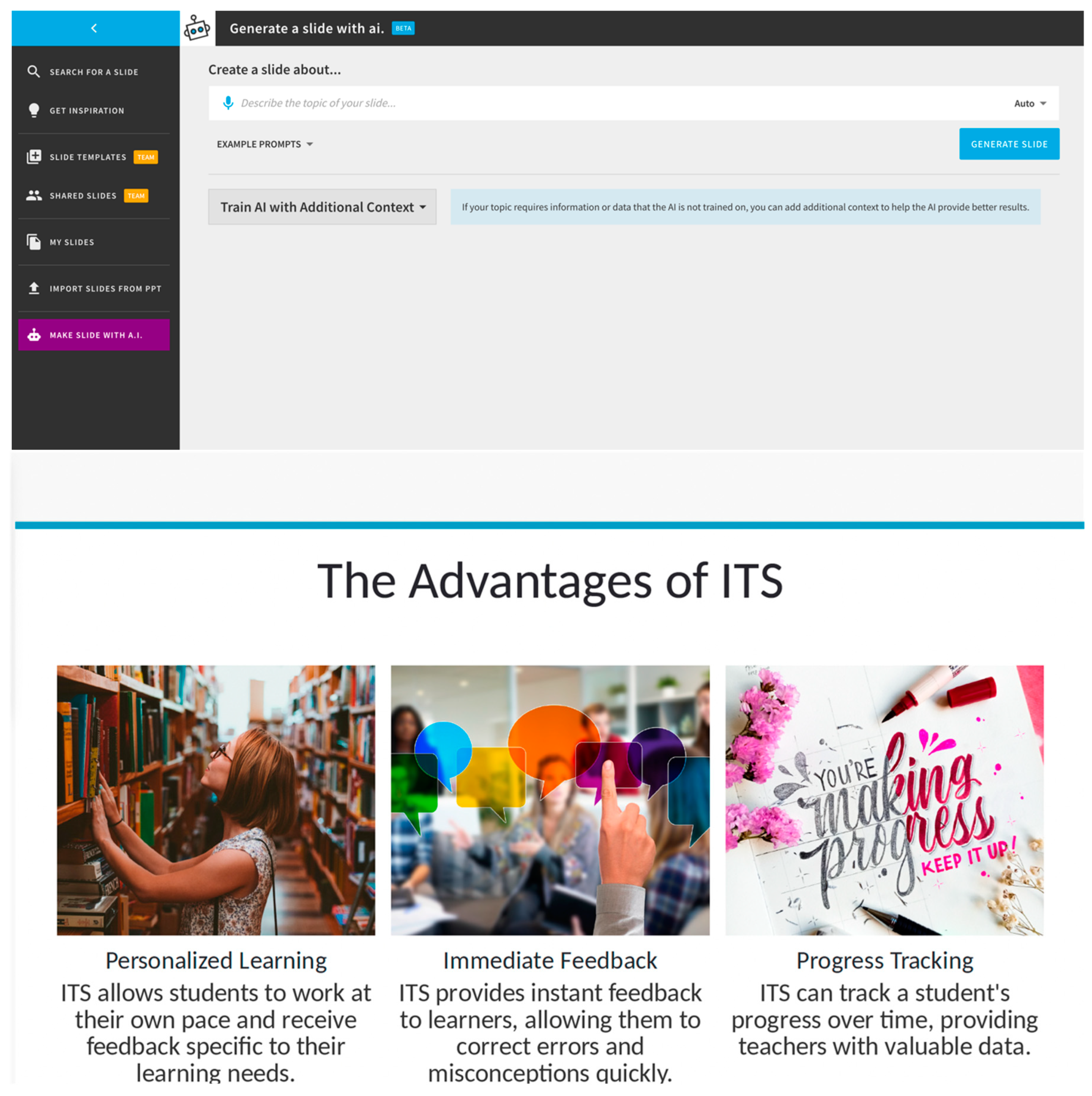

Finally, an additional AI-based program designed for presentations (beautiful.ai) was used in tandem with teachersbuddy and ChatGPT in the second half of the semester. The AI-generated content from teachersbuddy and ChatGPT was placed inside the ‘AI slide generator bot’ of beautiful.ai to create the slides. This bot could discern important information from short paragraphs, reducing the time required for manual slide preparation from approximately five hours per lesson to just one hour. Additionally, suitable images and icons were selected from an AI comprehensive library of professional resources. AI was able to summarize text into key points, create an appropriate slide design (bullet points, paragraphs, timelines, text boxes, etc.), and identify pictures and icons that matched the idea of the point being explained.

Figure 1 shows the AI generator bot and a sample output.

When creating a new course with no prior content, both ChatGPT and teachersbuddy allow designers to identify the key topics and relevant information. Generally, designers spend days or weeks searching for different resources on the Internet or in the library to collect materials. AI can streamline the process to a single day. For designers who are already familiar with the subject matter, ChatGPT can turn the laborious process of searching for information into a more efficient exercise of recognition, making the process quicker and less cognitively demanding. In addition, AI-powered slide generators not only save time but also enhance the visual quality of the presentations.

4.2. AI Limitations

Despite these advantages, there are inherent limitations associated with using AI for course and instructional design.

One primary constraint for AI is effective prompting. When using ChatGPT, prompting is essential for generating desired outputs. This becomes particularly important when newer versions of AI software are released, as each version may require changes in prompting strategies. For example, ChatGPT-3.5 and ChatGPT-4.0 generated lesson plans with bullet points but differed significantly when expanding on that information. In version 3.5, the AI had to be prompted to discuss the information as if it were giving a presentation, whereas in ChatGPT-4, simple prompts to request that the AI expand on the output provided more detailed information. The presentation prompts of ChatGPT-3.5 did not work as well with ChatGPT-4. When using ChatGPT, the details of the prompts and the version used are important factors for extracting the desired information. Teachersbuddy.com offered less control over prompts than ChatGPT, making it less flexible, but it allowed inputs, such as age group or educational methodology.
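One way to keep track of version-specific prompting is to store the follow-up prompt for each version separately and select it explicitly. The sketch below is a minimal illustration under that assumption. The version keys, the template wording, and the `build_follow_up` helper are all hypothetical; the exact prompts used in the course are not reproduced here, and no ChatGPT API call is made.

```python
# Hypothetical follow-up templates, paraphrasing the two strategies described above.
FOLLOW_UP_TEMPLATES = {
    # Version 3.5 needed a "presentation" framing to elaborate on bullet points.
    "gpt-3.5": (
        "Discuss the following lesson plan section as if you were giving "
        "a presentation on it:\n{section}"
    ),
    # Version 4 responded to a simple request to expand.
    "gpt-4": "Expand on the following lesson plan section in more detail:\n{section}",
}


def build_follow_up(version: str, section: str) -> str:
    """Return the follow-up prompt recorded for the given model version."""
    try:
        template = FOLLOW_UP_TEMPLATES[version]
    except KeyError:
        # A new version should trigger a deliberate review, not a silent fallback.
        raise ValueError(f"No prompting strategy recorded for {version!r}") from None
    return template.format(section=section)


print(build_follow_up("gpt-3.5", "- Chatbots in the classroom"))
print(build_follow_up("gpt-4", "- Chatbots in the classroom"))
```

Raising an error for an unknown version reflects the point made above: a prompt that worked with one version should be re-evaluated, rather than assumed to work, when a new version is released.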

Another limitation is the potential for outdated or inaccurate information. When using ChatGPT, users are informed that the system’s training data extend only to September 2021, which limits its ability to reflect recent developments in technology. Moreover, the AI generated references to nonexistent research articles, attributed to well-known authors and journals in the field and complete with DOI numbers. These inaccuracies made it unsuitable for finding external reading materials for graduate-level coursework. The risk of outdated or incorrect information was an issue when using AI to generate lesson plans and activities.

Finally, the most significant challenges associated with using AI-generated lesson plans were the lack of depth of information provided by iterative prompting and the propensity to generate the same information. Throughout the semester, AI consistently incorporated the same generic sections on ethics and inclusivity when covering any aspect of AI and education. Ultimately, the further the course went into the semester, the more the information became repetitive. The teachersbuddy website exhibited similar limitations, requiring additional iterations through ChatGPT to add depth, only to result in repetitiveness. Therefore, from a designer’s perspective, the depth of information about the subject and how it connects with other topics was not expansive. AI appeared unable to connect present information with past and future information, a skill that human educators naturally possess.
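The repetition described here was identified by the instructor while reviewing the materials. As a hedged illustration of how a designer might screen for it, the sketch below compares lesson texts pairwise using Jaccard similarity over word sets and flags pairs above a threshold. The lesson snippets, the `flag_redundant` helper, and the 0.6 threshold are assumptions made for this example and were not part of the study.

```python
import re
from itertools import combinations


def word_set(text: str) -> set:
    """Return the set of lowercase alphabetic words in a text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity of two texts: shared words divided by all distinct words."""
    sa, sb = word_set(a), word_set(b)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def flag_redundant(lessons: dict, threshold: float = 0.6) -> list:
    """List lesson pairs whose word overlap meets or exceeds the threshold."""
    flagged = []
    for (name_a, text_a), (name_b, text_b) in combinations(lessons.items(), 2):
        score = jaccard(text_a, text_b)
        if score >= threshold:
            flagged.append((name_a, name_b, round(score, 2)))
    return flagged


# Hypothetical snippets (assumption: not taken from the actual course materials).
lessons = {
    "week 3": "AI tools raise ethical concerns about privacy and bias and must be inclusive",
    "week 5": "Learning analytics dashboards summarize student activity data",
    "week 9": "AI tools raise ethical concerns about bias and privacy and must be inclusive",
}

for name_a, name_b, score in flag_redundant(lessons):
    print(f"{name_a} and {name_b} overlap heavily (Jaccard = {score})")
```

Word overlap only catches near-verbatim repetition. Judging whether a lesson builds on earlier material or merely restates it still requires the human review described above.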

5. Discussion

The use of AI for course design and instructional design offers potential benefits for educators but also has several limitations that warrant consideration. From the student perspective, satisfaction with the course content was high, and the course was considered well-organized. The only issue expressed was not the content itself but rather the use of beautiful.ai, which created more dynamic slides than conventional PowerPoint presentations. The lack of critical assessment of the course content suggests that students might be able to evaluate their experience in the course [23], but they might not have the capacity to properly evaluate the curriculum [24]. Student assessments often include factors such as the approach to learning, the academic environment, and instructional theories held by teachers [25]. These elements matched comments such as ‘American higher education’ (Teacher A) or ‘collaborative or individual projects’ (Teacher B). Korean graduate students viewed the content and teaching of this course from their prior experiences as learners; although two were in-service educators, curriculum design and instructional design were not assessed through the lens of a teacher or designer. Notably, the students did not seem to notice the redundancy of information presented across multiple lessons.

From the educator’s perspective, some of the findings aligned with previously reported affordances and issues. ChatGPT-3.5, ChatGPT-4, and teachersbuddy, which used ChatGPT (version unknown), were able to identify appropriate topics and subtopics for the course and create detailed lesson plans. This finding aligns with Zhu et al. (2023) [18]. The authors found that ChatGPT could create a long-term and detailed lesson plan that would be appropriate for a course and customized to meet the learner’s needs. Both versions of ChatGPT can greatly reduce the workload of educators, and Kersting et al. (2014) [10] suggested that this was a benefit of AI in education. These affordances allow the educator or designer to minimize the amount of time spent outlining and identifying internal structures. Although this approach is not perfect and requires careful assessment, both versions of ChatGPT are sophisticated enough to dramatically reduce workload and time commitment.

These research findings also support issues identified in previous research, such as incorrect or completely fabricated data [18] and a lack of understanding or depth when elaborating on information [17,18]. Although ChatGPT excels at structuring information, it may lack depth and is susceptible to generating repetitive or inaccurate content. For educators or designers who are well-versed in their subjects, identifying these limitations will be straightforward. Individuals who lack experience or advanced knowledge may need to exercise appropriate skepticism when reviewing ChatGPT outputs.

Finally, this research identified two limitations that are seldom discussed with respect to ChatGPT. First, ChatGPT-3.5 and ChatGPT-4 may require different prompts for effective information retrieval. Karakose et al. (2023) [19] noted that ChatGPT-3.5 was broader and that ChatGPT-4 was more concise when asked to generate information on teachers’ technology integration. For this study, the same prompts generated different outputs, and the prompts were reworked to retrieve useful information. This suggests that users need to become proficient in prompting to achieve the desired results. However, even after mastering optimal prompting techniques, changes in versions could require the reevaluation of these strategies. That said, ChatGPT-4 was easier to prompt than ChatGPT-3.5.

Lastly, the greatest issue with creating course and lesson plans with ChatGPT is that AI lacks the ability to understand how to strategically connect information that has been previously used or will be used in course content. Although capable of organizing topics and subtopics, it cannot integrate the previous or upcoming course materials as a human educator might, leading to redundant explanations across multiple lessons, as if the information was being presented for the first time. Additionally, educators must recognize that ChatGPT’s outputs are generalized information and may not always align perfectly with specific contexts. In other words, while ChatGPT can provide topical information on a subject, it may lack more detailed insights that integrate social, cultural, or context-specific elements relevant to the students and their learning environment. For instance, the information needed by Korean graduate students learning about AI and education might require a nuanced understanding due to the specificities of the Korean context. As an example, nearly every school in Korea has access to high-speed internet, which influences how AI and related tools are taught, differing from contexts like the United States where such infrastructure may not be as uniformly available in schools. Although the course materials generated by ChatGPT may not always make a coherent connection between past and future information, and despite its potential limitations in recognizing specific needs for certain student groups or contexts, ChatGPT can still be valuable in generating content ideas and providing basic information for lessons. Therefore, ChatGPT can be viewed as an extremely powerful search engine that is capable of aggregating information and presenting it in a centralized format. Nonetheless, the educator still plays a crucial role in making the information relevant and applicable to the specific student group and teaching context.