Moodle Quizzes as a Continuous Assessment in Higher Education: An Exploratory Approach in Physical Chemistry

Abstract

1. Introduction

2. Materials and Methods

2.1. Sample

2.2. Development of the Experience: Didactic Strategy

3. Results and Discussion

3.1. Participation in the Quizzes

3.2. Statistical and Psychometric Data of the Quizzes and Each Item

3.3. Students’ Opinion on the Educational Activities

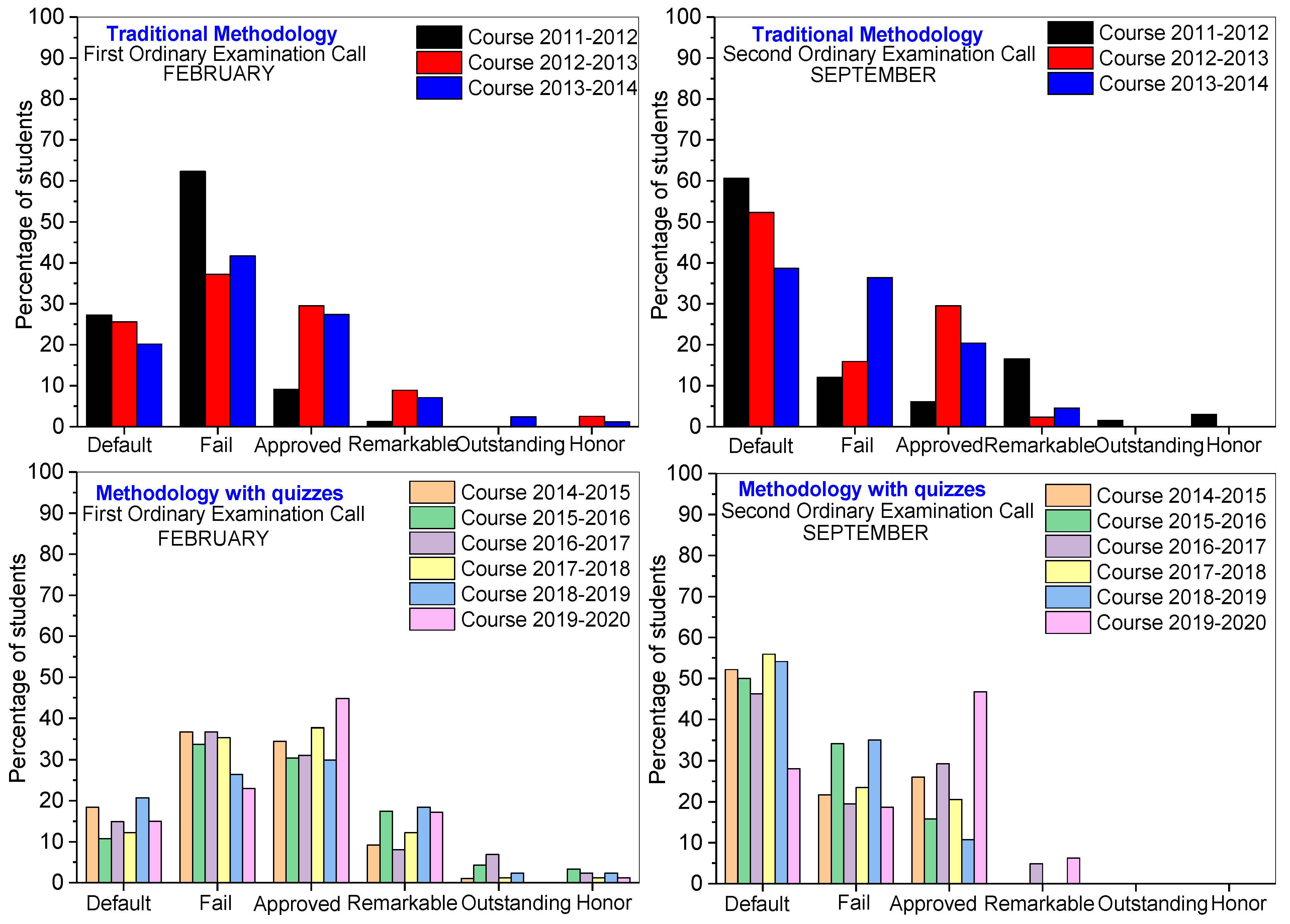

3.4. Final Scores in General Physical Chemistry

4. Conclusions

Supplementary Materials

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Theme | Brief Description of the Topic | Thematic Block | No. of Moodle Items |
|---|---|---|---|
| 1, 2, 3 | Matter: laws of chemical combination; gaseous state; liquid and solid states | 1 | 27 True/False, 53 Multiple choice, 9 Matching, 10 Numerical |
| 4, 5 | Solutions; colligative properties of solutions | 2 | 34 True/False, 49 Multiple choice, 6 Matching, 9 Numerical |
| 6, 7, 8 | Zeroth and First Laws of Thermodynamics; Second and Third Laws of Thermodynamics; chemical equilibrium | 3 | 31 True/False, 62 Multiple choice, 14 Matching, 15 Numerical |
| 9, 10 | Electrolytes and electrolytic solutions; electrochemistry: electrolytic and galvanic cells | 4 | 20 True/False, 50 Multiple choice, 7 Matching, 7 Numerical |
| 11 | Chemical kinetics | 5 | 13 True/False, 29 Multiple choice, 2 Matching, 9 Numerical |
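As a quick arithmetic check on the size of the item bank, the counts in the theme table can be tallied per group of themes and per question type. The Python sketch below only transcribes those counts; the names `ITEM_BANK`, `totals_per_group` and `totals_per_type` are illustrative and not part of the original study.

```python
# Moodle item counts transcribed from the theme table, keyed by the themes
# each group covers: (True/False, Multiple choice, Matching, Numerical).
ITEM_BANK = {
    "1-3": (27, 53, 9, 10),
    "4-5": (34, 49, 6, 9),
    "6-8": (31, 62, 14, 15),
    "9-10": (20, 50, 7, 7),
    "11": (13, 29, 2, 9),
}
QUESTION_TYPES = ("True/False", "Multiple choice", "Matching", "Numerical")


def totals_per_group(bank):
    """Number of items available for each group of themes."""
    return {themes: sum(counts) for themes, counts in bank.items()}


def totals_per_type(bank):
    """Number of items of each question type across all groups."""
    return {
        qtype: sum(counts[i] for counts in bank.values())
        for i, qtype in enumerate(QUESTION_TYPES)
    }


if __name__ == "__main__":
    print(totals_per_group(ITEM_BANK))
    print(totals_per_type(ITEM_BANK))
    print(sum(totals_per_group(ITEM_BANK).values()))  # size of the whole bank
```

With these counts the five groups hold 99, 98, 122, 84 and 53 items (456 in total), of which 243 are multiple-choice questions.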

| | 2014–2015 | | | | | 2015–2016 | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| BQ | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) |
| 1 | 68–94 | 8.24 ± 1.20 | 24.36 | −1.29 | 78.85 | 31–95 | 6.98 ± 1.43 | 21.34 | −0.28 | 54.55 |
| 2 | 78–97 | 9.04 ± 0.80 | 16.50 | −2.90 | 76.50 | 83–97 | 9.35 ± 0.63 | 13.40 | −2.38 | 74.30 |
| 3 | 88–98 | 9.47 ± 0.65 | 10.26 | −2.20 | 59.52 | 80–100 | 9.24 ± 0.76 | 12.94 | −1.90 | 65.23 |
| 4 | 86–97 | 9.11 ± 0.79 | 15.66 | −2.38 | 74.45 | 85–100 | 9.46 ± 0.66 | 9.50 | −2.18 | 50.73 |
| 5 | 90–99 | 9.41 ± 0.61 | 14.89 | −2.99 | 82.97 | 90–98 | 9.44 ± 0.65 | 12.04 | −2.33 | 70.83 |
| 6 | 81–95 | 8.36 ± 0.78 | 21.20 | −2.50 | 86.40 | 15–97 | 8.48 ± 0.73 | 17.90 | −2.62 | 83.60 |
| 7 | 71–100 | 9.22 ± 0.59 | 10.50 | −1.66 | 67.90 | 47–97 | 8.53 ± 0.73 | 15.40 | −2.86 | 77.60 |
| 8 | 85–98 | 9.36 ± 0.66 | 13.90 | −2.46 | 77.30 | 93–97 | 9.56 ± 0.53 | 13.20 | −5.47 | 84.00 |
| 9 | 95–100 | 9.77 ± 0.45 | 6.80 | −3.38 | 56.40 | 89–98 | 9.62 ± 0.56 | 9.10 | −2.93 | 62.30 |
| 10 | 80–92 | 8.46 ± 0.85 | 20.80 | −2.08 | 83.50 | 37–90 | 6.51 ± 0.10 | 27.80 | −0.54 | 84.70 |
| 11 | 93–100 | 9.68 ± 0.48 | 10.00 | −5.42 | 76.90 | 58–100 | 8.93 ± 0.89 | 10.50 | −1.38 | 27.80 |

| | 2016–2017 | | | | | 2017–2018 | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| BQ | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) |
| 1 | 81–98 | 9.35 ± 0.78 | 13.07 | −2.85 | 64.11 | 84–98 | 8.93 ± 0.88 | 19.54 | −2.07 | 79.34 |
| 2 | 85–100 | 9.54 ± 0.63 | 8.30 | −2.09 | 42.50 | 87–98 | 9.38 ± 0.63 | 14.80 | −2.78 | 81.60 |
| 3 | 97–100 | 9.86 ± 0.37 | 3.50 | −2.10 | −15.84 | 74–98 | 9.01 ± 0.85 | 14.09 | −1.53 | 62.9 |
| 4 | 77–100 | 9.25 ± 0.73 | 13.16 | −1.98 | 68.83 | 87–96 | 9.22 ± 0.75 | 14.19 | −1.83 | 71.78 |
| 5 | 91–98 | 9.63 ± 0.53 | 10.03 | −3.47 | 72.16 | 85–98 | 9.04 ± 0.78 | 17.62 | −1.78 | 80.33 |
| 6 | 52–95 | 8.48 ± 0.88 | 21.30 | −2.11 | 82.90 | 77–98 | 8.66 ± 0.82 | 23.60 | −1.96 | 87.90 |
| 7 | 62–100 | 9.11 ± 0.68 | 10.30 | −1.24 | 56.00 | 83–100 | 8.98 ± 0.71 | 16.80 | −1.76 | 82.10 |
| 8 | 86–96 | 9.10 ± 0.82 | 14.70 | −1.75 | 68.70 | 92–98 | 9.48 ± 0.64 | 11.60 | −2.20 | 70.10 |
| 9 | 82–100 | 9.35 ± 0.72 | 10.90 | −1.71 | 56.10 | 91–100 | 9.53 ± 0.62 | 10.00 | −2.49 | 61.30 |
| 10 | 51–94 | 7.73 ± 1.02 | 27.00 | −1.39 | 85.80 | 74–97 | 8.36 ± 0.79 | 25.00 | −1.90 | 90.10 |
| 11 | 88–100 | 9.55 ± 0.60 | 9.60 | −2.96 | 60.80 | 82–100 | 9.43 ± 0.68 | 10.10 | −1.53 | 54.90 |

| | 2018–2019 | | | | | 2019–2020 | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| BQ | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) |
| 1 | 81–100 | 9.16 ± 0.78 | 18.01 | −2.36 | 80.85 | 65–95 | 8.59 ± 0.89 | 21.37 | −1.57 | 76.43 |
| 2 | 90–97 | 9.54 ± 0.59 | 11.10 | −2.90 | 71.50 | 89–99 | 9.34 ± 0.67 | 12.40 | −2.38 | 66.50 |
| 3 | 93–98 | 9.65 ± 0.53 | 8.88 | −2.90 | 64.26 | 93–100 | 9.46 ± 0.62 | 10.81 | −2.72 | 62.93 |
| 4 | 89–97 | 9.47 ± 0.64 | 11.37 | −2.64 | 68.22 | 86–97 | 9.26 ± 0.61 | 16.98 | −3.36 | 84.93 |
| 5 | 93–100 | 9.48 ± 0.61 | 12.41 | −2.77 | 76.22 | 91–100 | 9.52 ± 0.52 | 13.70 | −3.21 | 84.02 |
| 6 | 82–97 | 8.88 ± 0.79 | 22.40 | −2.26 | 87.60 | 75–99 | 9.05 ± 0.65 | 16.20 | −2.07 | 80.30 |
| 7 | 96–100 | 9.83 ± 0.31 | 7.50 | −5.40 | 82.90 | 85–97 | 9.25 ± 0.53 | 15.70 | −2.40 | 86.30 |
| 8 | 92–100 | 9.69 ± 0.53 | 7.50 | −3.05 | 50.50 | 93–100 | 9.79 ± 0.42 | 6.20 | −4.47 | 51.90 |
| 9 | 92–100 | 9.83 ± 0.39 | 5.00 | −3.96 | 40.50 | 84–100 | 9.45 ± 0.55 | 13.90 | −3.56 | 81.80 |
| 10 | 60–100 | 9.29 ± 0.50 | 10.80 | −4.08 | 78.70 | 70–98 | 9.05 ± 0.59 | 18.90 | −3.26 | 87.70 |
| 11 | 50–100 | 8.24 ± 0.96 | 11.90 | −0.54 | 34.90 | 93–100 | 9.90 ± 0.31 | 3.60 | −3.80 | 25.50 |
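The "Average Score ± Error" column appears to follow the standard-error relation used in Moodle's quiz statistics report, where the error ratio is √(1 − ICC) and the standard error is that ratio multiplied by the standard deviation. The Python sketch below assumes those definitions, with SD and ICC given in percent and average scores on a 10-point scale; the function name `standard_error` and its `max_grade` parameter are illustrative, not part of the study.

```python
import math


def standard_error(sd_percent, icc_percent, max_grade=10.0):
    """Standard error of a quiz score on a 0..max_grade scale.

    Assumes Moodle-style definitions: error ratio = sqrt(1 - ICC) and
    standard error = error ratio * SD, with SD and ICC given in percent.
    A negative ICC (seen in a few rows) simply gives an error ratio above 1.
    """
    if icc_percent > 100:
        raise ValueError("ICC cannot exceed 100 %")
    error_ratio = math.sqrt(1 - icc_percent / 100)
    return max_grade * (sd_percent / 100) * error_ratio


if __name__ == "__main__":
    # (SD %, ICC %) pairs taken from the BQ2 and BQ3 rows for 2014-2015
    for sd, icc in [(16.50, 76.50), (10.26, 59.52)]:
        print(round(standard_error(sd, icc), 2))
```

Under these assumptions the BQ2 and BQ3 rows for 2014–2015 give 0.80 and 0.65, matching the table, and the same relation reproduces, for instance, TBQ1 in 2014–2015 (1.57) and TBQ4 in 2018–2019 (1.76). Not every row agrees exactly, so it is best read as an approximate consistency check.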

| | 2014–2015 | | | | | 2015–2016 | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| TBQ | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) |
| 1 | 62–76 | 6.93 ± 1.57 | 20.20 | −0.76 | 39.60 | 53–78 | 6.36 ± 1.66 | 17.70 | −0.49 | 12.40 |
| 2 | 52–69 | 6.08 ± 1.66 | 19.00 | −0.14 | 23.40 | 50–64 | 5.74 ± 1.68 | 21.60 | 0.17 | 39.60 |
| 3 | 48–53 | 5.16 ± 1.62 | 20.90 | −0.30 | 39.80 | 48–58 | 5.18 ± 1.59 | 21.60 | −0.09 | 45.40 |
| 4 | 34–52 | 4.48 ± 1.63 | 25.30 | −0.13 | 58.70 | 39–66 | 5.30 ± 1.64 | 22.60 | −0.24 | 47.50 |
| 5 | 28–51 | 3.93 ± 1.70 | 21.90 | 0.18 | 40.00 | 38–54 | 4.70 ± 1.68 | 24.30 | −0.37 | 52.20 |

| | 2016–2017 | | | | | 2017–2018 | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| TBQ | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) |
| 1 | 61–81 | 6.97 ± 1.55 | 19.80 | −0.58 | 38.60 | 62–74 | 7.01 ± 1.52 | 21.60 | −0.78 | 50.40 |
| 2 | 61–76 | 5.80 ± 1.57 | 18.50 | −0.72 | 28.20 | 50–64 | 5.23 ± 1.72 | 21.50 | −0.66 | 35.90 |
| 3 | 40–60 | 5.23 ± 1.62 | 22.90 | −0.03 | 50.00 | 42–61 | 5.14 ± 1.62 | 23.70 | −0.07 | 53.10 |
| 4 | 48–66 | 5.86 ± 1.68 | 22.40 | −0.30 | 43.80 | 46–76 | 5.82 ± 1.65 | 21.40 | −0.73 | 40.40 |
| 5 | 42–64 | 5.68 ± 1.67 | 19.40 | −0.35 | 26.50 | 50–73 | 5.88 ± 1.57 | 23.10 | −0.33 | 54.10 |

| | 2018–2019 | | | | | 2019–2020 | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| TBQ | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) | FI (%) | Average Score ± Error | SD (%) | Bias | ICC (%) |
| 1 | 55–78 | 6.81 ± 1.57 | 19.50 | −0.64 | 35.50 | 61–75 | 6.99 ± 1.09 | 19.4 | −1.02 | 35.40 |
| 2 | 51–70 | 6.24 ± 1.65 | 20.60 | −0.50 | 35.20 | 50–68 | 6.00 ± 1.00 | 21.0 | −0.28 | 38.40 |
| 3 | 43–58 | 5.15 ± 1.67 | 19.40 | 0.07 | 25.70 | 49–57 | 5.31 ± 0.86 | 22.3 | −0.51 | 46.50 |
| 4 | 41–62 | 5.32 ± 1.76 | 16.80 | 0.03 | −10.30 | 49–65 | 5.73 ± 0.93 | 19.9 | −0.07 | 32.30 |
| 5 | 44–56 | 4.96 ± 1.69 | 18.70 | 0.02 | 18.30 | 43–56 | 4.95 ± 0.81 | 20.6 | −0.48 | 36.50 |
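The facility index (FI) and the internal consistency coefficient (the ICC column, presumably Moodle's coefficient of internal consistency) are classical-test-theory statistics. Assuming the definitions in Moodle's quiz statistics documentation, FI is an item's mean mark expressed as a percentage of its mark range, and the internal consistency coefficient takes the form of Cronbach's alpha. The Python sketch below computes both for a small, hypothetical matrix of marks (rows are students, columns are items); the data and the function names are invented for illustration and are not taken from the study.

```python
from statistics import mean, variance


def facility_index(item_marks, max_mark=1.0, min_mark=0.0):
    """Facility index (%) of one item: mean mark relative to the item's mark range."""
    return 100 * (mean(item_marks) - min_mark) / (max_mark - min_mark)


def internal_consistency(marks):
    """Coefficient of internal consistency (%) in Cronbach's alpha form.

    marks[s][i] is the mark of student s on item i; at least two students
    and two items are required (sample variances are used throughout).
    """
    n_items = len(marks[0])
    item_variances = [variance([row[i] for row in marks]) for i in range(n_items)]
    total_variance = variance([sum(row) for row in marks])
    return 100 * n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical marks of five students on four 1-mark items.
MARKS = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]

if __name__ == "__main__":
    for i in range(len(MARKS[0])):
        fi = facility_index([row[i] for row in MARKS])
        print(f"item {i + 1}: FI = {fi:.0f} %")
    print(f"internal consistency = {internal_consistency(MARKS):.1f} %")
```

For this toy matrix the four items have facility indices of 80, 60, 40 and 20 % and the internal consistency is 80 %. In the tables, FI appears to be reported as the range of these per-item values within each quiz.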

| | Statements | Responses (Average Values) | | |
|---|---|---|---|---|
| | Basic Quizzes (BQ) | YES | NO | NK/NA |
| 1 | The difficulty of the questions in these quizzes has been adequate for a basic level | 89.9% | 5.4% | 4.7% |
| 2 | The time limit of one hour is sufficient to take the quiz | 89.2% | 5.4% | 5.4% |
| 3 | The quizzes allow you to study the subject continuously | 59.4% | 32.4% | 8.2% |
| 4 | The quizzes are used for self-evaluation of the subject and to find out the level of knowledge acquired | 67.6% | 27.1% | 5.3% |

| | Statements | Responses (Average Values) | | |
|---|---|---|---|---|
| | Thematic Block Quizzes (TBQ) | YES | NO | NK/NA |
| 1 | The level of difficulty of these quizzes is higher than that of the basic ones | 91.9% | 5.4% | 2.7% |
| 2 | The time limit of one hour is sufficient to take the quiz | 51.3% | 45.9% | 2.8% |
| 3 | These quizzes, held on scheduled dates, allow you to study the topics before the evaluation | 62.1% | 35.1% | 2.8% |
| 4 | These quizzes serve to strengthen the acquired knowledge | 59.4% | 37.8% | 2.8% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

López-Tocón, I. Moodle Quizzes as a Continuous Assessment in Higher Education: An Exploratory Approach in Physical Chemistry. Educ. Sci. 2021, 11, 500. https://doi.org/10.3390/educsci11090500
