1. Introduction

Maintaining cleanliness in water pools and similar aquatic environments is a recurring challenge. Manual cleaning methods are labor-intensive and inefficient, and they expose workers to potential safety risks. Autonomous underwater vehicles (AUVs) provide a promising alternative by automating the cleaning process, which aligns with recent reviews on underwater robots and AUV-based inspection and maintenance tasks [1, 2, 3]. However, practical deployment requires more than reliable hardware; it depends on a unified software framework that integrates perception, planning, and control into a complete and robust system.

Many existing studies improve individual modules, such as vision-based detection or path planning, but these are often evaluated in isolation under simplified conditions. Recent surveys on AUV path planning and mobile robot navigation further highlight that most planning methods focus on navigation or obstacle avoidance rather than coverage-oriented underwater cleaning tasks [4, 5]. This separation makes it difficult to achieve consistent performance in real-world cleaning tasks, where accurate dirt detection, efficient coverage planning, and dependable control must operate together in real time. Particularly on resource-constrained embedded platforms, the challenge lies not in optimizing a single algorithm but in designing a system that achieves reliable end-to-end performance.

This paper presents a vision-based autonomous cleaning system designed for water pools. The system combines global camera and AprilTag-based localization, dirt detection using a customized YOLOv8 model, a multi-scale A* algorithm for efficient coverage path planning, and a hierarchical control scheme. In the current implementation, perception and planning modules are executed on a PC, while motion commands are transmitted to a NanoPi-based low-level controller over Ethernet using a lightweight JSON-RPC protocol. Although experiments are conducted in a PC–NanoPi setup, the overall architecture is modular and designed with embedded deployment in mind.

The main contributions of this work are as follows:

Perception-Driven Multi-Scale A* Planning: We introduce a novel perception-guided multi-scale A* algorithm in which local high-resolution refinement is activated directly by YOLO-detected dirt regions. Unlike prior multi-resolution planners that refine resolution based on geometric complexity or heuristic expansion, our approach reshapes the search space using real-time semantic information, enabling precise, coverage-oriented planning with significantly reduced computational cost.

Task-Specific Integrated Architecture for Underwater Cleaning: We design a tightly coupled perception–planning–control framework tailored to aquatic cleaning tasks. The architecture allows perception outputs to directly influence both planning resolution and control targets, forming a task-driven integration pipeline distinct from traditional tool- or data-oriented robotic frameworks.

A Fully Implemented and Experimentally Validated Autonomous Cleaning System: We develop a practical underwater cleaning robot integrating a single overhead camera, a lightweight YOLOv8 detector, the proposed multi-scale planner, and a NanoPi-based hierarchical controller. The complete system is validated through physical experiments, providing a reproducible and low-cost baseline for real-world pool and aquaculture cleaning applications.

The remainder of this paper is organized as follows. Section 2 reviews related work in underwater robotics, perception systems, and coverage path planning, highlighting the research gaps that motivate this study. Section 3 introduces the overall system architecture and describes the interactions among perception, planning, and control modules. Section 4 details the design and implementation of the proposed system, including camera pre-processing, AprilTag-based localization, YOLOv8 dirt detection, the multi-scale A* planner, and the hierarchical control architecture. Section 5 presents the experimental setup and reports comprehensive evaluations of both the path-planning module and the fully integrated system. Finally, Section 6 concludes the paper and discusses future extensions, including potential applications of the proposed framework to broader AUV-related tasks.

2. Related Work

Our work lies at the intersection of path planning, coverage algorithms, and integrated robotic systems. This section reviews the main developments in these areas and highlights the research gap that motivates our study.

2.1. Path Planning Algorithms

Path planning is a fundamental problem in mobile robotics. The A* algorithm is a widely used heuristic search method that guarantees optimality for graph-based shortest path problems [6]. Its performance, however, is highly dependent on grid resolution: coarse grids enable faster computation but result in poor path quality, while fine grids improve accuracy at the expense of excessive computation and memory usage. Variants such as D*, Lifelong Planning A* (LPA*), and Anytime Repairing A* (ARA*) enhance adaptability through efficient replanning or anytime strategies [7, 8, 9], but they remain focused on dynamic navigation rather than systematic coverage.

Beyond grid-based methods, sampling-based planners such as Rapidly-exploring Random Trees (RRTs) and Probabilistic Roadmaps (PRMs) excel in high-dimensional spaces [10, 11]. However, the irregular and non-systematic paths they generate make them unsuitable for cleaning applications, where complete and orderly coverage is essential.

In addition to these classical methods, hierarchical and multi-resolution A* planners have been extensively studied for accelerating large-scale pathfinding. The well-known Hierarchical Path-Finding A* (HPA*) [12] partitions large grids into abstract regions to reduce search complexity, while multi-resolution planning frameworks such as the one proposed in [13] provide theoretical analysis and efficient implementations for mixed-resolution search. These methods, however, primarily aim to improve computational efficiency and maintain near-optimal navigation paths. They neither incorporate perception-driven refinement nor address task-specific coverage requirements such as aligning the robot’s cleaning footprint with detected dirt regions.

2.2. Coverage Path Planning

Coverage Path Planning (CPP) addresses the need to generate paths that ensure full coverage of a target area [14, 15]. Classical strategies such as boustrophedon decomposition or spiral patterns guarantee coverage in obstacle-free settings but quickly become inefficient and redundant in environments with obstacles.

To manage computational complexity, hierarchical and multi-resolution methods have been explored. Quadtree decompositions and multi-level grid maps enable coarse-to-fine planning [16]. More recently, multi-resolution heuristic search algorithms such as Multi-Resolution A* (MRA*) have been proposed to combine the benefits of different discretization resolutions [17]. Other work, such as E* and interpolated navigation functions, focuses on continuous cost interpolation and dynamic replanning for smooth paths rather than coverage completeness [18]. However, most of these approaches have been studied in the context of exploration or navigation for ground and aerial robots, with an emphasis on computational speed. Their effectiveness in underwater cleaning tasks, where the robot’s physical cleaning radius must be considered to avoid blind spots, remains underexplored.

2.3. Integrated Robotic Systems

A further challenge lies in transitioning from isolated algorithms to functional robotic systems. Several robotic platforms have been proposed for floor cleaning or inspection, many of which rely on predefined coverage patterns and specific mechanical designs. For instance, reconfigurable floor-cleaning robots can change their morphology to enhance coverage efficiency and adapt to cluttered indoor environments [19]. For underwater environments, surveys on underwater robots summarize a broad range of technologies and applications, including inspection, monitoring, and cleaning tasks [1]. In particular, AUV navigation and localization techniques, such as dead reckoning, acoustic positioning, and visual methods, have been extensively reviewed, highlighting the challenges of reliable pose estimation in GPS-denied underwater environments [2]. More recent work emphasizes the role of autonomous underwater robots in inspection and maintenance of industrial infrastructure, where replacing manual operations is crucial for safety and cost reduction [3].

At the software level, many robotic systems rely on middleware frameworks such as ROS, which provide message-passing infrastructures, standardized interfaces, and a rich ecosystem of perception and control modules [20]. While such frameworks greatly accelerate development and integration, they can introduce non-negligible overhead and complexity, making them less suitable for lightweight, resource-constrained embedded platforms. In addition, many existing cleaning or inspection systems assume pre-known maps or static dirt distributions and perform most computation on a powerful onboard computer, which limits feasibility in cost-sensitive scenarios.

The integration of modern perception with real-time planning and control for cleaning tasks remains relatively limited. Deep-learning-based detectors such as YOLO have become standard tools for real-time object detection in robotics [21], yet their use in closed-loop AUV cleaning systems, where detection outputs directly drive coverage-oriented planners, is still rare. Likewise, lightweight communication frameworks (for example, simple HTTP- or RPC-based protocols instead of full-featured middleware) are often overlooked in academic research, despite their practical advantages for rapid prototyping and modular deployment on low-cost hardware.

2.4. Our Positioning

In summary, while substantial progress has been made in path planning, coverage algorithms, and robotic system design, important gaps remain. Classical A* and its incremental or anytime extensions [6, 7, 8, 9] are well suited for navigation and replanning, but they do not directly address the efficiency–coverage trade-off in cleaning tasks under constrained computational resources. CPP methods and surveys [14, 15] highlight the need for systematic coverage, yet most existing planners are designed for ground or aerial robots and rarely incorporate real-time visual detections into their decision-making process. Multiresolution path-planning approaches [16, 17] and interpolated navigation functions [18] provide efficient multi-scale search or smooth potential fields, but they are generally evaluated in navigation scenarios without explicit consideration of cleaning radius or underwater visual constraints.

Our work addresses this gap by proposing a tightly integrated system that combines a novel multi-scale A* planner for coverage, a real-time vision pipeline for perception, and a hierarchical control scheme, all validated in a realistic cleaning scenario. In contrast to prior works that primarily evaluate algorithms in simulation, our system demonstrates integrated performance through physical experiments using real hardware.

3. System Architecture

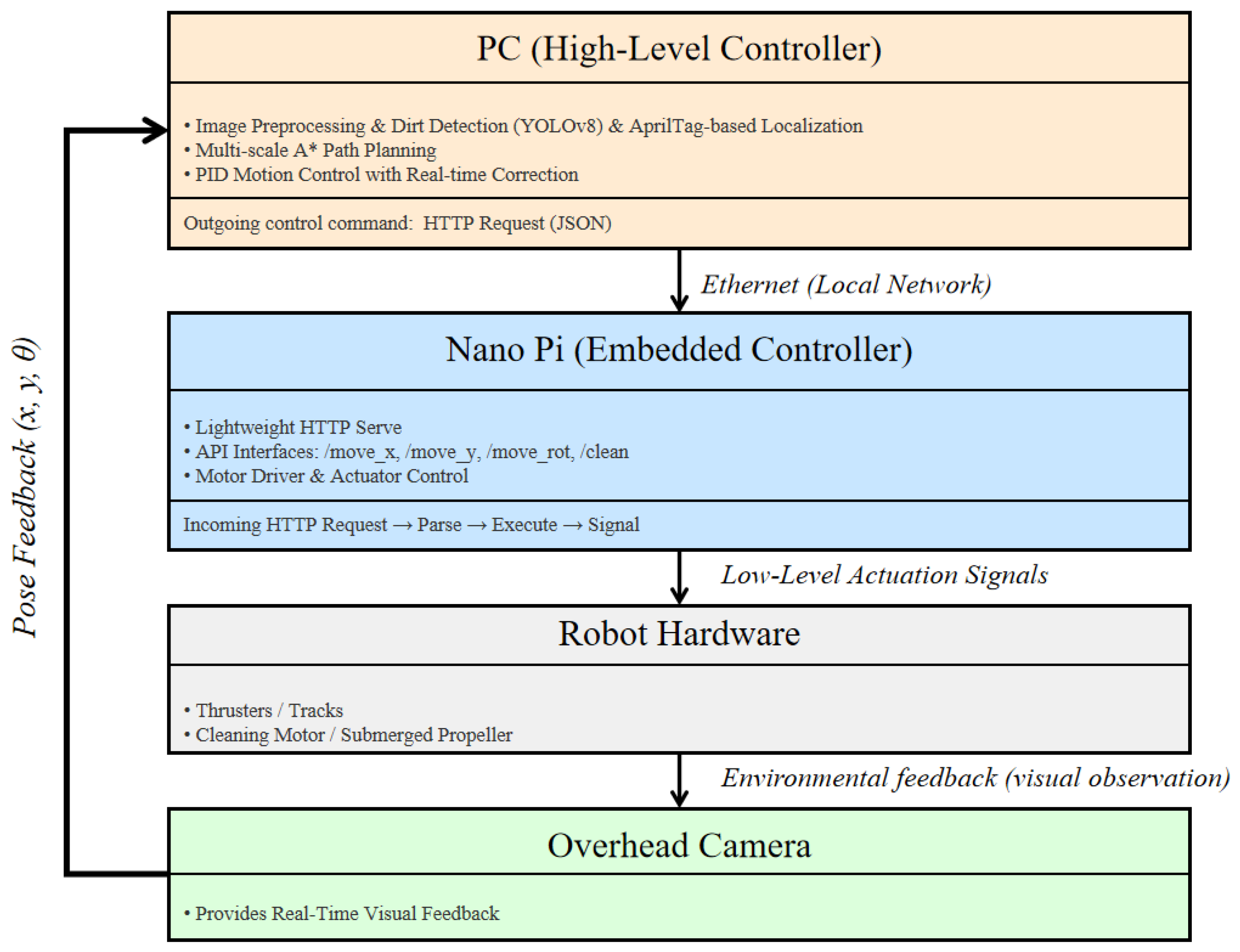

This section provides a high-level overview of the proposed autonomous cleaning system. The system adopts a modular and hierarchical architecture to ensure robustness, scalability, and suitability for deployment under resource constraints. As illustrated in Figure 1, the workflow forms a closed perception–planning–action loop, encompassing four major modules: Visual Localization & Perception, Multi-scale Path Planning, Hierarchical Control, and the Robot Hardware Platform. The system operates as follows. First, a global overhead camera captures images of the underwater environment.

The Visual Localization & Perception Module, running on a PC, processes these images through two parallel streams. One stream applies AprilTag detection to estimate the robot’s pose in the global coordinate frame, while the other employs a custom-trained YOLOv8 model to identify and localize dirt regions. The outputs of this module—the robot’s current position and the coordinates of detected dirt—are passed to the planning layer.

The Multi-scale Path Planning Module, also executed on the PC, receives these perceptual inputs. Using our novel multi-scale A* algorithm, it generates an efficient, coverage-optimized path that balances global efficiency with local refinement, thereby avoiding cleaning blind spots.

The resulting path is then transmitted to the hierarchical control module. In the current implementation, this communication occurs between the PC and a NanoPi via a lightweight JSON-RPC protocol over Ethernet. This architecture decouples computationally intensive perception and planning from real-time actuation. The NanoPi functions as the low-level controller, translating high-level path information into motor commands and executing them on the robot hardware platform, which includes the propulsion units and the cleaning actuators.
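As a rough illustration of the PC-to-NanoPi link described above, the following minimal sketch builds and parses messages in the JSON-RPC 2.0 format. The protocol version, the method name `follow_path`, and the `waypoints` parameter are assumptions made for this example; the text states only that a lightweight JSON-RPC protocol over Ethernet is used.

```python
import json
from itertools import count

_request_ids = count(1)  # monotonically increasing request ids


def make_path_request(waypoints, method="follow_path"):
    """Serialize world-frame waypoints as a JSON-RPC 2.0 request.

    The method name and parameter layout are hypothetical; the actual
    interface exposed by the low-level controller is not specified.
    """
    return json.dumps({
        "jsonrpc": "2.0",
        "method": method,
        "params": {"waypoints": [[float(x), float(y)] for x, y in waypoints]},
        "id": next(_request_ids),
    })


def parse_response(raw):
    """Return the result field of a JSON-RPC 2.0 response, or raise on error."""
    msg = json.loads(raw)
    if msg.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    if "error" in msg:
        err = msg["error"]
        raise RuntimeError(f"RPC error {err.get('code')}: {err.get('message')}")
    return msg["result"]


# Example: a two-waypoint path, ready to be sent over any byte transport.
request = make_path_request([(0.5, 0.5), (1.0, 0.5)])
```

Because the payload is plain JSON text, the same message can be carried over a raw TCP socket or HTTP without changing the planner or the controller logic.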

This modular and hierarchical design offers several advantages. It enables computationally demanding algorithms, such as deep learning models, to be executed on a PC, while keeping the embedded controller simple, robust, and energy-efficient. The use of JSON-RPC provides interoperability and flexibility for future system extensions. The subsequent sections detail the technical implementation of each module.

Comparison with Existing System Integration Frameworks

Classical system-integration research identifies three major paradigms: (1) tool-oriented integration, in which heterogeneous modules are combined through a shared execution environment; (2) data-oriented integration, where subsystems exchange information through a unified data representation; and (3) process-oriented integration, which coordinates modules through workflow scheduling or rule-based execution [22]. The proposed architecture aligns most closely with the data-oriented paradigm, as perception, planning, and control interact through well-defined spatial and semantic data structures such as robot pose, dirt coordinates, and path waypoints.

However, unlike traditional CAD/CAM integration frameworks, which operate offline, emphasize static model consistency, and typically rely on manually prepared datasets [22], the present system performs real-time integration within a closed feedback loop. Visual perception continuously updates the planning layer, which in turn interacts with the hierarchical controller responsible for executing refined motor-level commands. This tight perception–planning–action coupling is essential for autonomous robotic cleaning and is not addressed in conventional data-oriented integration models.

Furthermore, the proposed architecture incorporates task-driven coupling between modules: dirt detections do not merely trigger information exchange but actively reshape the search space of the multi-scale A* planner, while the resulting trajectory dynamically influences motion control. This bidirectional, perception-driven interaction goes beyond the predominantly unidirectional data exchange seen in traditional integration schemes.

Thus, the novelty of the proposed integrated framework lies not simply in combining multiple subsystems but in designing a real-time, perception-guided hierarchical architecture tailored to underwater cleaning, where sensing, planning, and execution must operate continuously, reliably, and under embedded hardware constraints.

4. System Design and Implementation

This section presents the design and implementation of the core modules that constitute the autonomous cleaning system.

4.1. Visual Localization and Perception Module

The system employs a single overhead global camera to provide visual information for robot localization and dirt detection in the experimental setup considered in this study. The camera is fixed to the ceiling at a height of approximately 3.5 m directly above the center of the pool, providing a near-orthographic top view that fully covers the experimental pool after lens undistortion and homography rectification. This configuration ensures continuous visibility of both the robot and detectable dirt regions on the pool bottom throughout the experiments.

The use of a single global camera is an engineering choice tailored to the controlled pool environment considered in this work, where full coverage can be achieved with a single top-mounted viewpoint. It does not represent a fundamental limitation of the proposed perception–planning–control framework. Rather, the framework relies on the availability of sufficiently complete environmental observations to support global localization and task-relevant perception.

In the present implementation, several assumptions are made: (1) the water is clear or moderately clear, allowing the pool bottom to be observed from above; (2) the pool geometry permits near-complete coverage from an overhead viewpoint, as is typical for rectangular or mildly irregular basins; and (3) illumination conditions provide adequate visual contrast for the YOLO-based dirt detector to operate reliably. These assumptions correspond to common controlled scenarios such as household, recreational, or aquaculture pools and define the experimental conditions under which the system is evaluated.

In environments where a single overhead camera is insufficient—such as in turbid or muddy water, or in pools with complex geometries that introduce occlusions—the proposed framework can be extended by incorporating multiple cameras, including additional overhead cameras or onboard sensors. When multiple visual sources are available, their outputs can be fused to form a more complete global perception of the workspace, while the subsequent planning and hierarchical control components remain unchanged. Such configurations fall beyond the scope of the current experimental study but are compatible with the proposed system architecture.

The Visual Localization and Perception Module processes the incoming video stream on the host PC and provides essential environmental information for subsequent planning. Two parallel tasks are performed: robot localization and dirt region detection. Robot pose is estimated using AprilTag detection and planar homography projection, while dirt regions are identified through a custom-trained YOLOv8 model, whose detections are mapped into the pool coordinate frame. The outputs of this module—robot pose and dirt coordinates—form the input to the multi-scale path planning algorithm.

4.1.1. Robot Localization via AprilTags

The robot’s planar pose $(x, y, \theta)$ within the pool is estimated using a fiducial marker-based localization system built on AprilTags [23]. A single AprilTag is mounted on the top surface of the robot, and a global overhead camera continuously captures top-view images that include this tag. Prior to deployment, the camera is calibrated offline to obtain intrinsic parameters, radial–tangential distortion coefficients, and a planar homography that maps image coordinates to the pool-plane coordinate frame. These parameters are applied during real-time processing to ensure geometric consistency throughout the visual perception pipeline.

During operation, the localization procedure consists of the following steps:

Image Pre-processing: Each incoming frame from the overhead camera is corrected using the intrinsic parameters and distortion coefficients obtained during offline calibration following Zhang’s checkerboard-based method [24]. The calibration was performed using a checkerboard pattern captured at multiple orientations to ensure numerical stability. After undistortion, four manually measured reference points on the pool boundary are used to compute a planar homography matrix that maps the corrected image to a metric top-down coordinate frame [25]. As shown in Figure 2, this process removes lens distortion and rectifies perspective deformation, producing a geometrically consistent view of the pool for both localization and dirt detection.

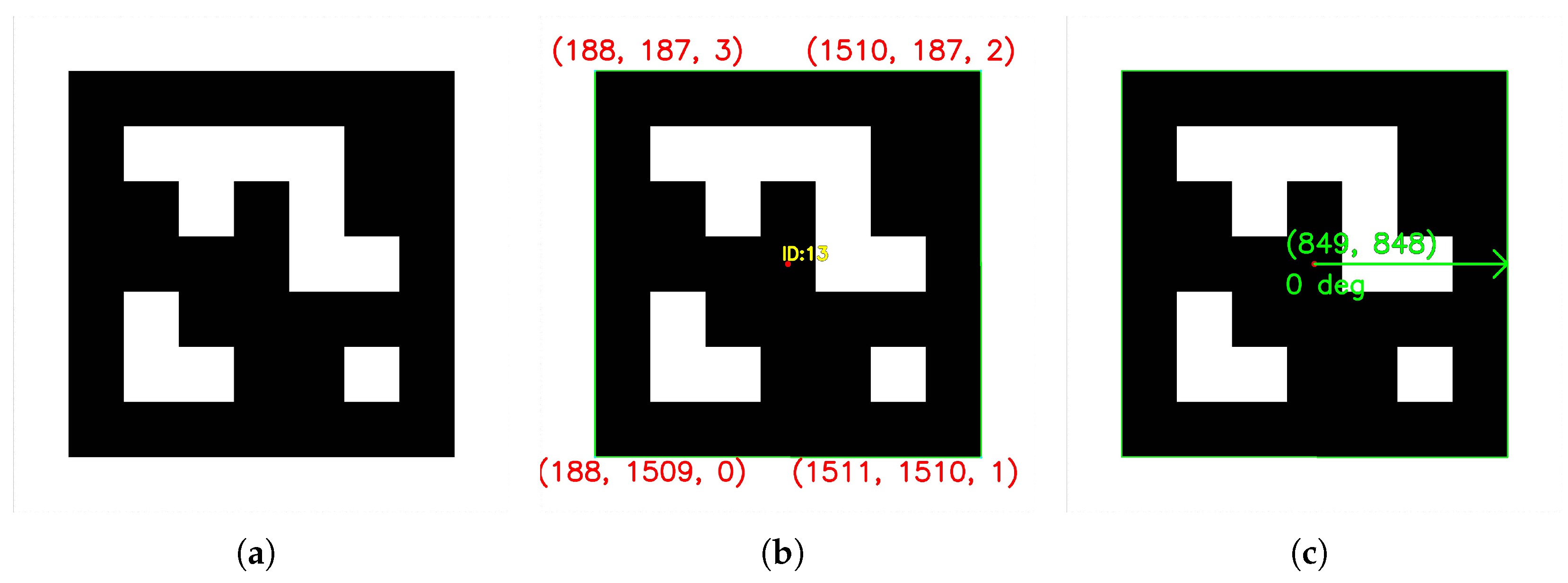

AprilTag Detection: As shown in Figure 3a, the input image contains an AprilTag of the Tag25h9 family. The AprilTag detection library processes this image and outputs the pixel coordinates of the tag’s four corner points. Each corner is indexed from 0 to 3 in counterclockwise order starting from the upper-left vertex, as illustrated in Figure 3b. These indices are used solely to distinguish the detected vertices and do not correspond to the robot’s pose parameters $(x, y, \theta)$.

2D Pose Estimation: The planar position and orientation of the robot are derived from the detected tag geometry. As illustrated in Figure 3, the robot’s 2D position $(x, y)$ is computed as the centroid of the four tag corner coordinates, representing the geometric center of the robot. The orientation angle $\theta$ is determined by connecting the tag’s center point to the midpoint of one of its designated edges (typically the edge between corner 1 and corner 2). The direction of this line, measured relative to the image’s horizontal axis (taken as the zero-angle reference), defines the robot’s heading. The resulting pixel-based pose is then projected onto the real-world pool plane via the precomputed homography matrix, ensuring spatial consistency with the path-planning coordinate frame [25].

This approach provides an accurate and computationally efficient 2D localization method using only a single top-view monocular camera. By leveraging offline calibration and geometric relationships in the AprilTag pattern [23], the system achieves stable and repeatable pose estimation without requiring additional onboard sensors.
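The pose-estimation step can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the function names are hypothetical, the homography `H` is assumed to be a 3 × 3 row-major matrix obtained from the offline calibration, and the heading is carried into the pool frame by projecting both the tag center and the edge midpoint, which is one possible way to realize the projection described above.

```python
import math


def apply_homography(H, u, v):
    """Map pixel (u, v) to the pool plane using a 3x3 homography H (nested lists)."""
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    return x / w, y / w


def tag_pose(corners, H):
    """Estimate (x, y, theta) from four tag corners given in pixel coordinates.

    corners: [(u0, v0), (u1, v1), (u2, v2), (u3, v3)], indexed 0..3 as in the text.
    The heading points from the tag center to the midpoint of edge 1-2.
    """
    # Tag center: centroid of the four corners
    cu = sum(u for u, _ in corners) / 4.0
    cv = sum(v for _, v in corners) / 4.0
    # Midpoint of the designated edge (corner 1 to corner 2)
    mu = (corners[1][0] + corners[2][0]) / 2.0
    mv = (corners[1][1] + corners[2][1]) / 2.0
    # Project both points, then measure the heading in the pool frame
    x, y = apply_homography(H, cu, cv)
    mx, my = apply_homography(H, mu, mv)
    theta = math.atan2(my - y, mx - x)  # radians, zero along the horizontal axis
    return x, y, theta
```

With an identity homography the function reduces to the pixel-space computation described in the text, which makes it easy to check against hand-measured tag positions.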

4.1.2. Dirt Detection Using YOLOv8

Detecting dirt on a pool bottom requires a detector that can operate in real time, reliably identify small and low-contrast targets, and remain robust under visual disturbances caused by water refraction, illumination changes, and mild turbidity. Since the detection results directly drive the downstream multi-scale A* planner, high recall is essential to avoid missed dirt regions, while high precision prevents unnecessary refinement operations. These requirements make a lightweight yet accurate one-stage detector particularly suitable for the proposed system.

YOLOv8 [26] is adopted in this work because it provides a favorable balance between detection accuracy, model complexity, and deployability. Compared with earlier variants such as YOLOv5 [27] and YOLOv7 [28], YOLOv8 introduces a decoupled detection head and an improved CSP-based backbone, which enhances feature extraction for small and low-contrast dirt patches commonly appearing in underwater top-view imagery. More recent versions, including YOLOv9 [29], YOLOv10 [30], and YOLOv11 [26], feature architectural refinements aimed at large-scale multi-class benchmarks, on-device computational efficiency, or maximal accuracy through heavier backbones. These features offer limited benefits for a single-class, small-object detection task with a modest dataset such as the one in this study. In contrast, YOLOv8 offers stable training behavior, strong built-in augmentation support, and excellent generalization for the scale and characteristics of our data, making it well suited for integration into the perception–planning pipeline of the proposed cleaning system.

After selecting YOLOv8 as the detection backbone, the model was fine-tuned on a custom dataset collected in the experimental pool. The dataset consists of 267 manually annotated images captured from two overhead viewpoints and an underwater camera. To enhance robustness under varying illumination, turbidity, and noise conditions, the dataset was expanded to 1869 images (a sevenfold increase) using extensive data augmentation, including pixel dropout, sharpening, affine transformations, brightness and hue adjustment, horizontal flipping, and the random insertion of several noise types. The dataset was divided into 70% training, 20% validation, and 10% testing.

Model training was performed using the default YOLOv8n architecture with a 640 × 640 input resolution, 300 training epochs, and a batch size of 16, using the SGD optimizer with standard momentum and weight-decay regularization. The pretrained YOLOv8n weights were used as initialization, and mixed-precision training (AMP) was enabled. YOLO’s built-in augmentation pipeline—including HSV perturbation, geometric transformations, Mosaic augmentation, RandAugment, and random erasing—was activated, while Mixup and Copy-Paste were disabled. Early stopping (patience = 50) was used during training.
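For readers who wish to reproduce a comparable setup, the reported hyperparameters can be collected as keyword arguments for the Ultralytics `train()` call, as in the sketch below. The dataset file name `pool_dirt.yaml` is hypothetical, momentum and weight decay are left at the library defaults because their values are not reported, and augmentation settings other than Mixup and Copy-Paste are likewise left at their defaults.

```python
def training_config(data_yaml="pool_dirt.yaml"):
    """Collect the hyperparameters reported in the text as train() keyword arguments."""
    return {
        "data": data_yaml,    # hypothetical dataset description file
        "imgsz": 640,         # 640 x 640 input resolution
        "epochs": 300,
        "batch": 16,
        "optimizer": "SGD",
        "patience": 50,       # early stopping
        "amp": True,          # mixed-precision training
        "mixup": 0.0,         # Mixup disabled
        "copy_paste": 0.0,    # Copy-Paste disabled
    }


def train(config):
    """Fine-tune from pretrained YOLOv8n weights (requires the ultralytics package)."""
    # Imported lazily so that the configuration can be inspected without the package.
    from ultralytics import YOLO

    model = YOLO("yolov8n.pt")
    return model.train(**config)
```

Keeping the configuration in a plain dictionary makes it straightforward to log alongside the resulting metrics.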

The fine-tuned model achieved 0.98343 mAP@50, 0.70981 mAP@50–95, 0.97581 precision, and 0.98536 recall on the validation set, demonstrating strong detection reliability. High recall minimizes missed dirt regions, while high precision prevents unnecessary activation of the multi-scale refinement module, contributing to both cleaning completeness and computational efficiency.

During operation, each pre-processed frame from the overhead camera is fed into the trained YOLOv8 network. The model outputs bounding boxes corresponding to detected dirt regions on the pool bottom. The centroid of each bounding box is then computed and projected onto the world coordinate plane, ensuring consistency with the localization module. As illustrated in Figure 4, the model successfully detects multiple dirt regions on the pool floor, represented by red bounding boxes with confidence scores, from above-water top-view images. These detections provide accurate and real-time input coordinates for the subsequent path-planning process.
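The conversion from bounding boxes to planner targets can be sketched as follows. The detection tuple layout `(x1, y1, x2, y2, confidence)`, the function names, and the confidence threshold `min_conf` are assumptions made for this example; the text specifies only that box centroids are projected onto the world plane.

```python
def apply_homography(H, u, v):
    """Map pixel (u, v) to the pool plane using a 3x3 homography H (nested lists)."""
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    return x / w, y / w


def dirt_targets(detections, H, min_conf=0.5):
    """Convert pixel-space boxes into world-frame dirt coordinates.

    detections: iterable of (x1, y1, x2, y2, confidence) in pixels.
    min_conf is a hypothetical threshold; the paper does not report one.
    """
    targets = []
    for x1, y1, x2, y2, conf in detections:
        if conf < min_conf:
            continue  # drop low-confidence boxes
        # Centroid of the bounding box in pixel coordinates
        cu, cv = (x1 + x2) / 2.0, (y1 + y2) / 2.0
        targets.append(apply_homography(H, cu, cv))
    return targets
```

Using the same homography as the localization step keeps robot pose and dirt coordinates in a single frame.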

4.2. Multi-Scale Path Planning Module

The Multi-scale Path Planning Module serves as the central decision-making component of the system. It receives the robot’s current pose from the localization module and the target coordinates from the perception module and generates an efficient, coverage-oriented path for execution. This module is built upon an enhanced multi-scale A* algorithm, specifically designed to address the trade-off between computational efficiency and cleaning coverage completeness.

4.2.1. Algorithm Overview and Integration

The conventional A* algorithm operates on a single grid resolution [6], which poses an inherent trade-off: a coarse grid offers fast computation but may overlook areas smaller than a cell size, while a fine grid ensures full coverage at the expense of excessive computation time and memory usage. In standard A* search, each node $n$ is evaluated using the cost function

$$f(n) = g(n) + h(n),$$

where $g(n)$ denotes the accumulated cost from the start node to $n$, and $h(n)$ is a heuristic estimate of the cost from $n$ to the goal. This formulation serves as the mathematical basis for both the coarse- and fine-grid searches in the proposed multi-scale framework.
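To make the cost function concrete, the following is a minimal single-resolution A* sketch on an occupancy grid. It assumes unit step costs, four-connected moves, and a Manhattan-distance heuristic; these are illustrative choices and not necessarily the settings used in the proposed planner.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid where grid[r][c] == 1 marks an obstacle.

    Returns a list of (row, col) cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    g = {start: 0}                       # accumulated cost g(n)
    parent = {start: None}
    open_heap = [(h(start), 0, start)]   # entries are (f(n), g(n), n)
    closed = set()
    while open_heap:
        _, g_n, node = heapq.heappop(open_heap)
        if node in closed:
            continue
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        closed.add(node)
        r, c = node
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (r + dr, c + dc)
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            if grid[nxt[0]][nxt[1]]:
                continue
            new_g = g_n + 1
            if new_g < g.get(nxt, float("inf")):
                g[nxt] = new_g
                parent[nxt] = node
                heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None
```

The same routine can be run on either grid level, since only the occupancy map and the start and goal cells change.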

The proposed multi-scale approach mitigates this limitation by adopting a hierarchical dual-resolution strategy inspired by multiresolution search ideas [16, 17]. Unlike conventional multiresolution planners that refine resolution based on geometric complexity or search heuristics, our approach is explicitly task-driven and tailored to coverage-oriented underwater cleaning.

In this framework, two distinct grid levels are defined: a large grid for rapid global path exploration and a small grid for precise local refinement. In this work, the large-grid cell size is approximately three times that of the small grid, providing a balance between search efficiency and spatial precision. The planner first performs a global A* search on the large grid to obtain a coarse path from the robot’s current location to the detected dirt coordinate. This global path efficiently bypasses large-scale obstacles and determines the general direction of motion, while the small grid is subsequently used to refine the final segment of the route and ensure precise alignment with the dirt region.

The specific grid resolutions used in this work (a cell size of 1 for the coarse grid and 1/3 for the fine grid) were selected based on the physical characteristics of the cleaning task and the typical size of dirt patches observed in the pool. In our experimental environment, most dirt regions ranged from approximately 10 cm to 30 cm in diameter, while the robot’s effective cleaning width was about 35–40 cm. The coarse grid enables fast global search without oversensitivity to small variations in dirt shape or distribution, whereas the fine grid provides sufficient spatial granularity to align the robot’s cleaning footprint with the centroid of each detected dirt region and avoid blind spots. Finer resolutions were tested during development but led to significantly higher planning time without measurable improvements in cleaning outcomes. Although the chosen values work well for the tested scenario, the multi-scale framework does not depend on fixed grid sizes; both resolutions can be adjusted to suit different pool geometries, robot dimensions, or dirt characteristics in other deployment contexts.
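The relationship between the two grid levels can be illustrated with a short sketch. It assumes square cells aligned at the world origin and treats the coarse cell size as one length unit, since the unit is not stated in the text; all function names are hypothetical.

```python
import math

COARSE = 1.0        # coarse cell size, in the paper's (unstated) length unit
FINE = COARSE / 3   # fine cell size: one third of the coarse cell


def to_cell(x, y, cell_size):
    """Index of the grid cell containing world point (x, y)."""
    return (math.floor(x / cell_size), math.floor(y / cell_size))


def cell_center(ix, iy, cell_size):
    """World coordinates of the center of cell (ix, iy)."""
    return ((ix + 0.5) * cell_size, (iy + 0.5) * cell_size)


def fine_children(ix, iy, ratio=3):
    """Fine-grid cells covered by coarse cell (ix, iy) for the given size ratio."""
    return [(ix * ratio + dx, iy * ratio + dy)
            for dy in range(ratio) for dx in range(ratio)]


def max_center_offset(cell_size):
    """Largest distance from any point in a cell to that cell's center."""
    return cell_size * math.sqrt(2) / 2
```

Under these assumptions, snapping a dirt centroid to a cell center can be off by up to about 0.71 units on the coarse grid but only about 0.24 units on the fine grid, which is the geometric reason for refining near the target.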

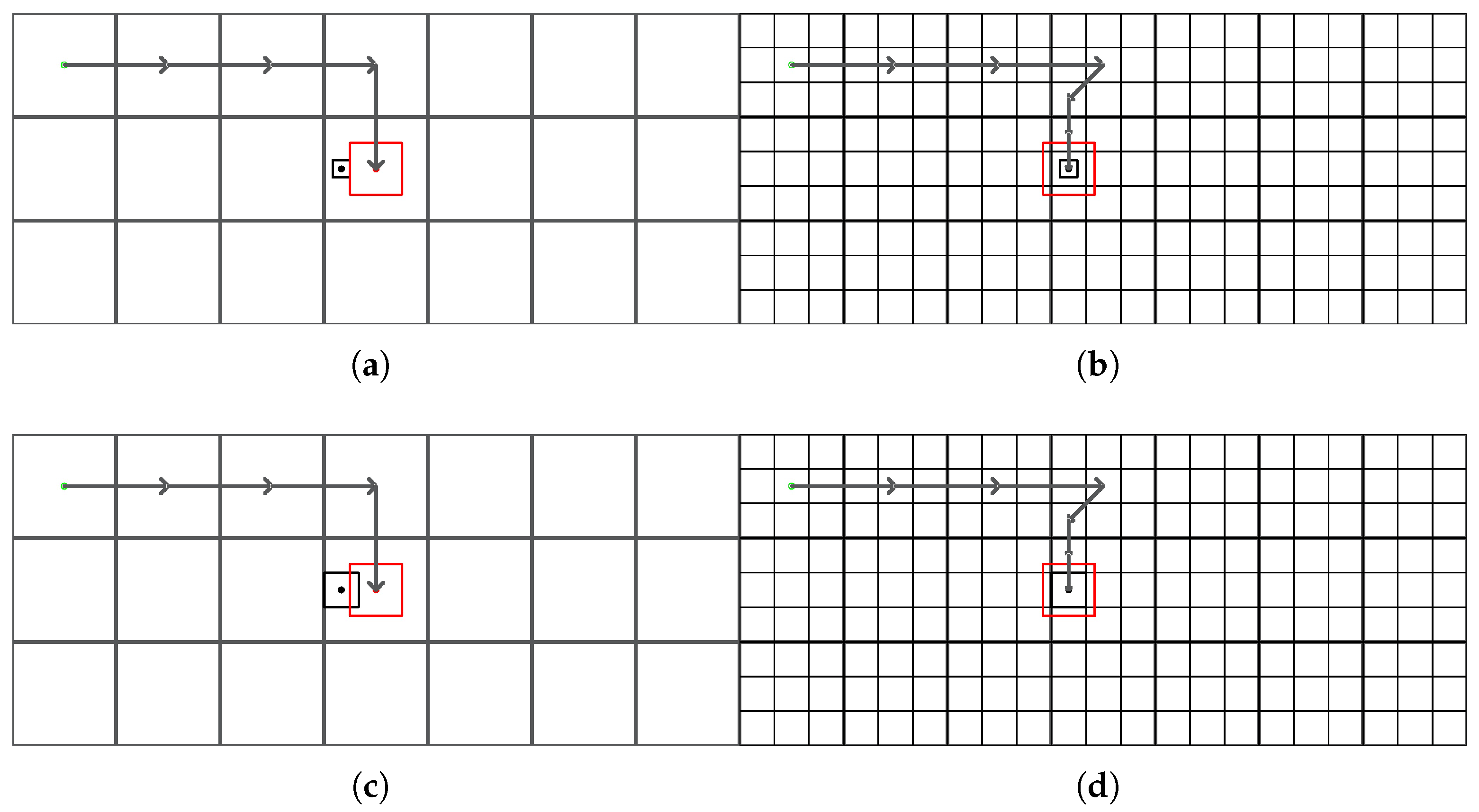

As illustrated in Figure 5, the overall workflow of the proposed multi-scale A* path-planning algorithm consists of four main stages: (1) grid initialization, (2) hierarchical A* searches on large and small grids, (3) multi-endpoint traversal for target sequencing, and (4) merging of global and local paths into the final continuous trajectory. This hierarchical process enables the planner to combine global efficiency with local precision, ensuring that the robot follows an optimized and coverage-oriented path toward each dirt region.

To provide a clearer understanding of Figure 5, the four stages refer specifically to the data flow and module interactions illustrated in the diagram, complementing the algorithmic concepts described earlier.

In the first stage (Grid Initialization), the system constructs both coarse and fine grid maps of the pool environment. These maps encode obstacles, dirt regions, and the robot’s initial pose at different spatial resolutions, forming the structural basis for subsequent hierarchical planning.

In the second stage (Hierarchical A* Search), two coordinated searches are performed. The coarse-grid A* search identifies a globally efficient route around large obstacles and directs the robot toward the neighborhood of the dirt region. When this coarse trajectory reaches a predefined proximity threshold, a fine-grid A* search is invoked to compute a precise local path that aligns the robot with the dirt centroid.

The third stage (Multi-Endpoint Traversal) handles cases with multiple dirt regions. Here, the planner evaluates geometric distances between all detected dirt points and determines an efficient visiting order that reduces redundant movement while preserving coverage quality.

In the final stage (Global–Local Path Merging), the coarse and fine trajectories are combined into a single continuous waypoint sequence expressed in world coordinates. Optional smoothing and resolution adjustments ensure compatibility with the robot’s motion controller. This merged trajectory preserves global optimality while providing the local accuracy required to avoid blind spots and ensure effective cleaning.
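Stages three and four can be sketched as follows. The visiting order here uses a greedy nearest-neighbor rule, which is an assumption made for illustration; the text states only that geometric distances between dirt points are evaluated to obtain an efficient order. The function names are hypothetical.

```python
import math


def visiting_order(start, targets):
    """Order targets greedily by always moving to the nearest unvisited one."""
    remaining = list(targets)
    order = []
    current = start
    while remaining:
        nearest = min(remaining, key=lambda p: math.dist(current, p))
        remaining.remove(nearest)
        order.append(nearest)
        current = nearest
    return order


def merge_segments(segments):
    """Concatenate path segments into one waypoint list.

    Consecutive duplicates are dropped, so a waypoint shared by the end of
    one segment and the start of the next appears only once.
    """
    merged = []
    for segment in segments:
        for waypoint in segment:
            if not merged or merged[-1] != waypoint:
                merged.append(waypoint)
    return merged
```

A greedy order is not guaranteed to be the shortest tour, but it is inexpensive to compute and easy to recompute when new detections arrive.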

4.2.2. Path Refinement and Blind Spot Avoidance

When the robot approaches the target region (as determined by a predefined proximity threshold), the planner switches from the large grid to the small grid for precise local path generation. The endpoint of the large-grid path is used as the starting node for a new A* search on the small grid, with the dirt centroid serving as the goal. This two-stage planning mechanism allows the robot to follow a globally efficient route while achieving fine local positioning to align its cleaning mechanism with the detected dirt area, thereby eliminating potential cleaning blind spots. Such blind spots are a known issue in coverage path planning when cell size and cleaning footprint are not properly coordinated [14,15].
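The two-stage mechanism can be illustrated with the sketch below. It uses a plain 4-connected A* with a Manhattan heuristic and assumes that the proximity threshold is measured in coarse cells (Chebyshev distance) and that the fine search starts from the center fine cell of the last coarse cell. These choices, and the names `astar` and `two_stage_plan`, are assumptions for illustration and may differ from the implemented planner.

```python
import heapq


def astar(grid, start, goal):
    """4-connected A* on a boolean occupancy grid (True = blocked)."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    g_cost = {start: 0}
    parent = {start: None}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if g > g_cost[cell]:
            continue  # stale heap entry
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and not grid[nr][nc]:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    parent[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None


def two_stage_plan(coarse, fine, ratio, start, dirt_fine, threshold=1):
    """Coarse A* toward the dirt, then fine A* from the handover cell."""
    coarse_goal = (dirt_fine[0] // ratio, dirt_fine[1] // ratio)
    coarse_path = astar(coarse, start, coarse_goal)
    if coarse_path is None:
        return None
    # Cut the coarse path at the first cell within the proximity threshold.
    for i, (r, c) in enumerate(coarse_path):
        if max(abs(r - coarse_goal[0]), abs(c - coarse_goal[1])) <= threshold:
            coarse_path = coarse_path[: i + 1]
            break
    hr, hc = coarse_path[-1]
    handover = (hr * ratio + ratio // 2, hc * ratio + ratio // 2)
    fine_path = astar(fine, handover, dirt_fine)
    if fine_path is None:
        return None
    return coarse_path, fine_path


coarse = [[False] * 4 for _ in range(3)]
fine = [[False] * 12 for _ in range(9)]
coarse_path, fine_path = two_stage_plan(coarse, fine, 3, (0, 0), (7, 10))
```

In this sketch the fine search covers the whole fine grid for simplicity; restricting it to a window around the dirt region would keep the fine search space small.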

To further enhance motion safety and trajectory smoothness, an adaptive motion strategy is applied, as illustrated in Figure 6. In this strategy, the planner dynamically adjusts the number of allowable movement directions according to the surrounding environment: eight-directional movement in open areas to improve efficiency, and four-directional movement near obstacles to minimize collision risk.

Figure 6 depicts a local grid neighborhood centered on the robot’s current position (shown as the black, bold-outlined cell), with surrounding cells marked as either free or occupied by obstacles (red cells). The green arrows indicate the movement directions permitted at the current step.

When the robot is located in an open, obstacle-free region, all eight possible movement directions (up, down, left, right, and the four diagonal directions) are enabled. Allowing diagonal motion in such regions shortens path length and improves navigation efficiency.

However, when the robot approaches obstacles, the planner automatically restricts movement to the four cardinal directions only. This prevents diagonal transitions that could cause the robot’s footprint to intersect an obstacle, an issue common in grid-based planners if diagonal motion is not properly constrained. In Figure 6, this is shown by the absence of diagonal arrows near red obstacle cells.

By switching between 8-direction and 4-direction neighborhoods based on local obstacle configuration, the adaptive motion strategy improves both safety and smoothness of the planned trajectory, ensuring reliable behavior during fine-grid refinement near dirt regions or pool boundaries.
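A minimal sketch of such a neighborhood rule is given below. It assumes that a cell counts as "near an obstacle" when any of its eight surrounding cells is occupied or lies outside the grid (so that pool boundaries are treated like obstacles). This definition and the function name `allowed_moves` are illustrative assumptions.

```python
CARDINAL = [(-1, 0), (1, 0), (0, -1), (0, 1)]
DIAGONAL = [(-1, -1), (-1, 1), (1, -1), (1, 1)]


def allowed_moves(grid, cell):
    """Return the permitted (d_row, d_col) moves from a cell.

    grid is a 2D list of booleans (True = occupied). Eight directions are
    allowed in open areas; only the four cardinal directions are allowed
    when any surrounding cell is blocked or out of bounds.
    """
    rows, cols = len(grid), len(grid[0])
    r, c = cell

    def blocked(rr, cc):
        return not (0 <= rr < rows and 0 <= cc < cols) or grid[rr][cc]

    near_obstacle = any(blocked(r + dr, c + dc) for dr, dc in CARDINAL + DIAGONAL)
    candidates = CARDINAL if near_obstacle else CARDINAL + DIAGONAL
    return [(dr, dc) for dr, dc in candidates if not blocked(r + dr, c + dc)]


grid = [[False] * 5 for _ in range(5)]
open_moves = allowed_moves(grid, (2, 2))    # open area: all eight directions
grid[1][1] = True
guarded_moves = allowed_moves(grid, (2, 2))  # obstacle nearby: cardinal only
```

Plugging a function like this into the neighbor-expansion step of A* switches the connectivity per cell without changing the rest of the search.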

4.2.3. Final Path Generation

The module outputs a sequence of waypoints in the world coordinate frame, representing the robot’s desired coverage trajectory. As illustrated in Figure 7, the multi-scale A* algorithm combines global and local planning to achieve both efficient navigation and accurate coverage of detected dirt regions. In this process, the blue circles mark the centers of the large-grid cells that form the coarse global path, while the green circles correspond to the centers of the small-grid cells associated with the detected dirt locations. The red squares indicate the dirt areas identified by the perception module, and the blue line depicts the final planned trajectory connecting these regions.

This integration of global and local grids enables the planner to generate paths that balance speed and precision, achieving efficient overall navigation while ensuring complete local coverage [14,15]. The resulting waypoints are then transmitted to the hierarchical control module for real-time execution, where the high-level commands are converted into low-level motor actions to maintain reliable motion under computational constraints.

4.2.4. Comparison with Existing Multi-Resolution A* Methods

Multi-resolution and multi-scale variants of graph-based search have been widely explored in recent years. Multi-Resolution A* (MRA*) [17] performs search across multiple discretizations of the environment, allowing coarse resolutions to accelerate global progress while fine resolutions preserve local accuracy. AMRA* further extends this idea by introducing an anytime, multi-heuristic framework that continuously refines the solution quality across multiple resolution layers [31].

In the maritime domain, multi-scale improvements have been applied to long-distance navigation tasks. Representative examples include the multi-scale Theta* algorithm for unmanned surface vehicles [32] and multi-scale A* approaches incorporating collision-risk assessment for ship routing in congested waterways [33]. Beyond maritime applications, more recent work has investigated multi-scale grid structures for large-scale scene navigation, such as scale-elastic maps that support flexible resolution adaptation during search [34].

Although these methods share the overarching strategy of combining coarse and fine resolutions, their refinement mechanisms and evaluation objectives differ substantially from the requirements of underwater cleaning. In existing work, resolution refinement is typically triggered by geometric complexity (e.g., obstacle density, map boundaries) or by search-efficiency considerations. Correspondingly, the evaluation metrics emphasize path length, planning time, or collision-risk minimization. Moreover, these approaches are almost exclusively validated through simulation, without deployment in a complete robotic system.

In contrast, the multi-scale A* used in this work is designed specifically for task-driven coverage in underwater cleaning. Instead of refining the resolution based on geometric complexity or real-time robot proximity, the planner incorporates perception information directly into the search space: fine-grid refinement is activated in regions surrounding the dirt detected by the vision system, while the rest of the workspace is represented using a coarse grid. As a result, high-resolution search is applied precisely where accurate alignment with the dirt center affects cleaning completeness, and coarse-grid search handles the remaining global navigation. This strategy guides the robot through the central part of each dirt region to reduce blind spots, while avoiding the computational burden of running single-scale A* on a uniformly fine grid, as demonstrated in Section 5.2. Furthermore, unlike prior multi-resolution planning approaches that are validated primarily in simulation, the proposed method is fully deployed on a real underwater cleaning robot and integrated with global-camera localization, dirt detection, and embedded motor control, confirming its practical effectiveness in real underwater environments.

4.3. Hierarchical Control Architecture

The system adopts a hierarchical control structure in which high-level and low-level functions are executed on separate computational units. The host PC is responsible for global-camera perception, localization, multi-scale path planning, and PID-based motion command generation, while the onboard NanoPi controller handles real-time actuator driving and motor control. By distributing decision-making and actuation across two processors, the architecture reduces the computational load on the embedded platform and improves modularity and robustness.

4.3.1. System Architecture and Communication Protocol

Within this framework, the hierarchical control module serves as the execution layer that bridges the perception–planning subsystem on the PC and the actuator drivers on the robot. The PC continuously updates motion commands based on real-time localization feedback, while the NanoPi provides deterministic and stable motor actuation. This division of responsibilities enables accurate trajectory tracking while keeping the onboard controller lightweight.

It is important to clarify that the robot in this study adopts a tethered design, which is common in underwater inspection and cleaning systems. The tether bundle, including both the power line and an Ethernet data line, is physically connected to the robot at one end and to a surface control station at the other end. The surface station serves as an intermediate interface between the robot and the host PC.

In the experimental setup, the host PC is connected to the surface control station via a standard Ethernet cable. Through this configuration, the PC communicates with the NanoPi onboard the robot over a continuous wired Ethernet link, while the power supply is simultaneously provided through the tether. This arrangement enables stable, low-latency communication and continuous operation without reliance on onboard batteries.

For pool-scale deployments, including large swimming pools, this tethered communication scheme provides a practical and reliable solution. Tether management is handled at the surface station, which minimizes excessive drag forces on the robot and ensures uninterrupted data transmission during extended cleaning tasks.

PC (High-Level Controller):

The PC connects to the overhead camera via USB to acquire real-time visual data. It performs image pre-processing, dirt detection, AprilTag-based localization, and multi-scale A* path planning. A discrete PID motion controller then compares the robot’s current pose with the next waypoint and computes the corresponding heading correction. The control signal is generated according to

$$u_k = K_p e_k + K_i \sum_{j=0}^{k} e_j + K_d \left(e_k - e_{k-1}\right),$$

where $e_k$ denotes the heading error at step $k$ and $K_p$, $K_i$, and $K_d$ are the proportional, integral, and derivative gains. To ensure that the controller output remains within a suitable range for motor actuation, the raw PID output $u_k$ is further processed through a scaling and saturation operation,

$$\tilde{u}_k = \max\left(-u_{\max}, \min\left(u_{\max}, \lambda u_k\right)\right),$$

where $\lambda$ is a scaling factor used to regulate the overall magnitude of the control signal and $u_{\max}$ denotes the maximum allowable command. This operation prevents excessively large control outputs that could lead to abrupt motor commands or actuator saturation, thereby improving execution stability. In our implementation for heading control, the gains $K_p$, $K_i$, and $K_d$, together with $\lambda$ and $u_{\max}$, are set to fixed values. The resulting forward-speed and heading commands are sent to the NanoPi for low-level execution.
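The following sketch shows one way a discrete heading PID with scaling and saturation could be written. It uses the textbook positional PID form with the sample time absorbed into the gains; the gain values, scaling factor, and command limit in the usage lines are placeholders chosen for illustration, not the values used in the experiments, and the class name `HeadingPID` is hypothetical.

```python
import math


def wrap_angle(angle_rad):
    """Wrap an angle to the interval (-pi, pi]."""
    return math.atan2(math.sin(angle_rad), math.cos(angle_rad))


class HeadingPID:
    """Discrete PID on heading error with output scaling and saturation."""

    def __init__(self, kp, ki, kd, scale, u_max):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.scale = scale      # scaling factor applied to the raw output
        self.u_max = u_max      # maximum allowable command magnitude
        self.integral = 0.0
        self.prev_error = None

    def step(self, heading_rad, target_heading_rad):
        error = wrap_angle(target_heading_rad - heading_rad)
        self.integral += error
        # No derivative contribution on the first sample.
        derivative = 0.0 if self.prev_error is None else error - self.prev_error
        self.prev_error = error
        raw = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Scale, then clamp to [-u_max, u_max].
        return max(-self.u_max, min(self.u_max, self.scale * raw))


# Placeholder parameters for illustration only.
pid = HeadingPID(kp=2.0, ki=0.0, kd=0.0, scale=1.0, u_max=1.0)
small = pid.step(0.0, 0.25)    # proportional response, below the limit
large = pid.step(0.0, 3.0)     # large error, clamped to u_max
```

Wrapping the error keeps the controller from commanding a turn of almost a full revolution when the heading and target lie on opposite sides of the ±π boundary.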

NanoPi (Low-Level Controller):

The NanoPi hosts a lightweight HTTP server that exposes backend control interfaces for the robot’s actuators, including thrusters, tracks, and the cleaning motor. The PC sends JSON-RPC commands to this server over Ethernet. Upon receiving a request, the NanoPi parses the JSON message, decodes the target motion or cleaning action, and converts the high-level parameters into low-level motor drive signals. These signals are then applied to the track motors and suction/cleaning motor to realize behaviors such as start, stop, speed adjustment, and directional motion.

Communication:

The PC sends JSON-RPC commands to the NanoPi over a local Ethernet connection. This HTTP-based communication is lightweight and human-readable, making it easy to debug and ensuring compatibility between the Python 3.8.20-based implementation on the PC and the C/C++ implementation on the NanoPi. Compared with more complex middleware frameworks such as ROS [20], the chosen JSON-RPC interface simplifies deployment on resource-constrained embedded platforms while preserving modularity and network transparency.
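For illustration, a PC-side client for such an interface might look like the sketch below. It assumes the JSON-RPC 2.0 message layout; the method name `set_motion`, its parameters, and the URL are hypothetical, since the actual backend interface is not listed here.

```python
import json
import urllib.request


def build_request(method, params, request_id):
    """Build a JSON-RPC 2.0 request object as a Python dict."""
    return {"jsonrpc": "2.0", "method": method, "params": params, "id": request_id}


def send_request(url, payload, timeout_s=0.5):
    """POST a JSON-RPC payload over HTTP and return the decoded reply."""
    data = json.dumps(payload).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return json.loads(response.read().decode("utf-8"))


# Hypothetical method name and parameters.
payload = build_request("set_motion", {"speed": 0.2, "heading": -0.1}, 1)
wire_text = json.dumps(payload)
# reply = send_request("http://192.168.1.10:8080/rpc", payload)  # hypothetical address
```

Because the message is plain JSON text, it can be inspected directly during debugging and parsed by any JSON library on the embedded side.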

As illustrated in Figure 8, the hierarchical control architecture separates high-level perception and planning on the PC from low-level motor execution on the NanoPi, enabling modular and reliable system operation.

4.3.2. Path Execution and Low-Level Control

During execution, the planned trajectory is followed through a closed-loop control process coordinated between the PC and the NanoPi. At each control cycle, the PC retrieves the robot’s updated pose from the localization module and uses the PID controller to compute the required forward velocity and heading adjustment relative to the next waypoint.

These motion commands are transmitted to the NanoPi as JSON-RPC messages. After receiving a command, the NanoPi immediately invokes the corresponding backend routine and converts the command parameters into PWM or voltage outputs that drive the left and right track motors and the cleaning motors.

Because the NanoPi performs only low-level actuation and does not participate in perception or planning, it is able to maintain stable and timely motor execution even if the PC experiences temporary delays. This division of responsibilities ensures that the robot follows the planned path smoothly and preserves the robustness of the overall closed-loop system.

5. Experiments and Results

This section presents a comprehensive evaluation of the proposed autonomous cleaning system. The experiments were designed to validate two main aspects:

The performance improvement of the multi-scale A* algorithm compared with conventional single-scale methods; and

The overall effectiveness and robustness of the integrated vision–planning–control system in performing end-to-end cleaning tasks.

All experiments were conducted in a controlled pool simulation environment that closely approximates real-world aquatic cleaning conditions.

5.1. Experimental Setup

The system was deployed in a simulated pool measuring . A global overhead camera was used for both robot localization and dirt detection. The cleaning robot was equipped with a NanoPi controller and maintained communication with the host PC via a local Ethernet connection using the JSON-RPC protocol.

For the path planning module, the multi-scale A* algorithm was configured with a large grid cell size of approximately 1 m and a small grid cell size set to one-third of that value. This 3:1 ratio allows the planner to perform a fast global search on the coarse grid and refine the trajectory locally on the finer grid. For comparison, a standard single-scale A* algorithm was implemented using both the coarse (1 m) grid and the fine (1/3 m) grid resolutions.

The evaluation metrics included:

Planning time (s): total computation time required for path generation;

Memory usage (MB): peak memory consumed during planning;

Coverage rate (%): proportion of the workspace effectively traversed during cleaning.
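The measurement procedure is not detailed here. As one possible approach, planning time and peak memory of a Python planner can be recorded with the standard `time` and `tracemalloc` modules, and coverage can be computed as a ratio of cell sets. The helper names below are hypothetical, and `tracemalloc` reports only memory allocated by the Python interpreter, which may differ from process-level figures.

```python
import time
import tracemalloc


def profile_planner(plan_fn, *args, **kwargs):
    """Run a planner once; return (result, seconds, peak memory in MB)."""
    tracemalloc.start()
    t0 = time.perf_counter()
    result = plan_fn(*args, **kwargs)
    elapsed_s = time.perf_counter() - t0
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return result, elapsed_s, peak_bytes / (1024 * 1024)


def coverage_rate(covered_cells, workspace_cells):
    """Percentage of workspace cells that were traversed."""
    workspace = set(workspace_cells)
    return 100.0 * len(set(covered_cells) & workspace) / len(workspace)


# Stand-in workload in place of a real planner call.
result, elapsed_s, peak_mb = profile_planner(lambda n: list(range(n)), 10000)
rate = coverage_rate([(0, 0), (0, 1)], [(0, 0), (0, 1), (1, 0), (1, 1)])
```

Tracing allocations slows execution, so timing and memory are better measured in separate runs when precise timing matters.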

As illustrated in Figure 9, the experimental setup includes four components. Figure 9a shows an overview of the pool environment, where a global overhead camera is installed above the water surface to provide perception for both localization and dirt detection. Figure 9b presents the prototype of the cleaning robot. The platform is equipped with two tracked drive units for forward, backward, and turning motions, and two suction thruster modules that generate the intake flow required for dirt removal. An AprilTag marker is mounted on the top of the robot to support global pose estimation. During operation, as shown in Figure 9c, the robot performs underwater cleaning tasks in the pool environment. Finally, Figure 9d displays the visualization interface running on the PC, which shows the planned trajectory generated by the multi-scale A* path planner during real-time testing.

5.2. Performance of Multi-Scale A* Path Planning

To quantitatively evaluate the planning module, two experimental scenarios with different obstacle densities were tested.

Quantitative Analysis

The qualitative differences among the three planners, as illustrated in Figure 10, are further supported by the quantitative performance metrics summarized in Figure 11, which presents the computation time (Figure 11a) and memory usage (Figure 11b) for different A* configurations. The results confirm that while the coarse-grid single-scale A* is the fastest, it compromises spatial precision; the fine-grid single-scale A* provides the highest accuracy but at the cost of computation time and memory. The proposed multi-scale A* achieves a desirable balance: its computation time is approximately 20–25% lower than that of the fine-grid A*, and its memory consumption is also reduced while maintaining a comparable coverage rate.

Although the three A* configurations exhibit similar computation times (<1 s) and memory usage (around 6 MB) in our experiments, this is mainly due to the limited spatial scale of the test pool, which restricts the total number of grid cells and therefore keeps the search space small. When the environment size grows or when finer grid resolutions are required, the number of grid cells increases quadratically with map dimensions. Because the computational complexity of grid-based A* grows approximately with the number of expanded nodes—which scale with the total number of cells—the planning time and memory usage of single-scale fine-grid A* rise rapidly as the grid resolution increases.

The proposed multi-scale A* avoids this quadratic explosion by restricting fine-grid search to compact regions around detected dirt while maintaining coarse resolution elsewhere. Even in an offline “plan-then-execute” workflow such as the one adopted in this study, this structure substantially reduces the computation required for generating each cleaning path, particularly when the target region is small relative to the global workspace. This advantage becomes increasingly significant in larger pools or aquaculture ponds, where a uniformly fine grid can easily reach tens of thousands of cells, making a single fine-grid A* plan considerably more expensive in both time and memory. In contrast, the multi-scale approach keeps global planning overhead close to that of a coarse grid while applying high-resolution search only where task accuracy matters.
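A rough cell-count comparison illustrates the scaling argument. The pond dimensions and the number of refined coarse cells below are hypothetical, and the count is only a proxy for search-space size, not a measured planning cost.

```python
def uniform_fine_cells(width_m, height_m, coarse_cell_m, ratio):
    """Number of cells when the whole workspace uses the fine resolution."""
    coarse = round(width_m / coarse_cell_m) * round(height_m / coarse_cell_m)
    return coarse * ratio * ratio


def multiscale_cells(width_m, height_m, coarse_cell_m, ratio, refined_coarse_cells):
    """Coarse cells everywhere plus fine cells only inside refined regions."""
    coarse = round(width_m / coarse_cell_m) * round(height_m / coarse_cell_m)
    return coarse + refined_coarse_cells * ratio * ratio


# Hypothetical 50 m x 25 m pond, 1 m coarse cells, 3:1 ratio,
# with fine refinement in 4 coarse cells around detected dirt.
fine_total = uniform_fine_cells(50, 25, 1.0, 3)
multi_total = multiscale_cells(50, 25, 1.0, 3, 4)
```

In this hypothetical case the uniform fine grid has 11,250 cells, whereas the multi-scale representation has 1,286, and the gap widens as the workspace grows while the refined area stays fixed.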

Thus, while the advantages of multi-scale planning appear modest in our small experimental pool due to its limited grid size, the method provides clear scalability benefits for realistic large-area cleaning scenarios, even when planning is performed offline rather than in a continuous replanning loop.

5.3. Analysis of Cleaning Blind Spots

A key advantage of the multi-scale approach lies in its ability to reduce cleaning blind spots—areas left uncleaned due to mismatches between grid resolution and the robot’s effective cleaning radius.

5.3.1. Blind Spot Formation in Single-Scale Planning

When using a coarse grid, the planned path may not align precisely with small or irregular dirt regions. As illustrated in Figure 12a, even when the computed trajectory is geometrically correct, the robot’s cleaning footprint (red bounding box) may fail to fully cover the dirt area (black square), resulting in incomplete cleaning.

5.3.2. Effectiveness of Multi-Scale Refinement

The multi-scale A* algorithm effectively addresses this issue by switching to a finer grid near the target region. As shown in Figure 12b, the local refinement enables the robot to adjust its final approach and accurately align its cleaning mechanism with the detected dirt, achieving more thorough coverage. Experimental observations confirmed that the multi-scale strategy consistently provided smoother trajectories and significantly improved cleaning completeness compared to single-scale planning.

In addition, the selection of the fine-grid resolution is directly related to the physical cleaning capability of the robot. Since the proposed system employs a top-down global camera and performs planning in a planar workspace, the coverage effect can be analyzed geometrically in the same coordinate frame. In our experimental setup, the robot’s effective cleaning width is approximately 35–40 cm. By selecting a fine-grid resolution of 1/3 m, which is equal to or slightly smaller than the cleaning footprint, adjacent waypoints generated by the fine-grid planner inevitably produce overlapping cleaning trajectories. This ensures that the target region is fully covered without leaving uncovered gaps between neighboring passes.

From a coverage perspective, this relationship guarantees that the spatial discretization of the planner does not exceed the physical coverage resolution of the cleaning mechanism. As a result, blind spots caused by a mismatch between grid resolution and cleaning footprint are structurally avoided in the proposed multi-scale framework.
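The relationship can be checked numerically with the figures quoted above (a cleaning width of 35–40 cm, a fine cell of 1/3 m, and a coarse cell of about 1 m). The helper name `pass_overlap_m` is hypothetical, and the check assumes parallel passes spaced exactly one cell apart.

```python
def pass_overlap_m(cleaning_width_m, cell_m):
    """Overlap between adjacent passes spaced one cell apart.

    A positive value means neighboring passes overlap; a negative value
    is the width of the uncleaned strip left between them.
    """
    return cleaning_width_m - cell_m


fine_overlap = pass_overlap_m(0.35, 1.0 / 3)   # narrowest footprint, fine grid
coarse_overlap = pass_overlap_m(0.40, 1.0)     # widest footprint, coarse grid
```

Even with the narrowest footprint, the fine grid leaves a small positive overlap of roughly 1.7 cm, whereas on the coarse grid the widest footprint would still leave a strip of about 60 cm between adjacent passes.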

5.3.3. Scenario with Enlarged Dirt Area

For larger dirt patches, the coarse-grid A* planner often achieves only partial coverage, as illustrated in Figure 12c. In contrast, Figure 12d demonstrates that the multi-scale planner enables the robot to traverse the key central region of the dirt area, ensuring more complete cleaning coverage and reducing the likelihood of missed spots.

5.4. End-to-End System Validation

To validate the performance of the fully integrated cleaning system, an end-to-end experiment was conducted, combining visual perception, multi-scale path planning, and robot control within a closed-loop workflow.

In the implemented experimental setup, the control framework followed a client–server architecture. The PC acted as the high-level controller, running the YOLOv8-based dirt detection module and the multi-scale A* path-planning algorithm. The NanoPi onboard the robot functioned as a lightweight low-level controller, executing motion commands received from the PC via a JSON-RPC protocol over a local Ethernet connection. This design enabled reliable and low-latency communication while avoiding the complexity of heavy middleware frameworks.

Figure 13 presents the visualization interface displayed on the PC during system operation. The global overhead camera continuously captured the experimental environment, and the processed results showed the robot’s position, orientation, planned trajectory, and detected dirt regions in real time. The figure clearly demonstrates that the robot successfully followed the planned path and reached each dirt location for cleaning, confirming the system’s effective coordination between perception, planning, and control.

Multiple trials were conducted with different dirt distributions to evaluate the robustness and stability of the system. Throughout all experiments, the integrated perception–planning–control loop operated smoothly and consistently without failure. The robot was accurately localized, dirt regions were reliably detected, and the NanoPi executed the planned trajectories in real time with stable communication performance.

These results confirm that the proposed system demonstrates high operational stability and dependable autonomy, successfully completing the cleaning process under all tested configurations and validating its feasibility and effectiveness for autonomous underwater cleaning tasks.

5.5. Overall Analysis and Discussion

The experimental results collectively verify the effectiveness and practicality of the proposed autonomous cleaning system. Through systematic testing under multiple environmental configurations, both the performance of individual modules and the functionality of the fully integrated system have been comprehensively validated.

5.5.1. Synthesis of Key Findings

The proposed multi-scale A* algorithm effectively resolves the inherent trade-off between computational efficiency and cleaning coverage in traditional path planning. As demonstrated in Section 5.2 and Section 5.3, the algorithm achieves noticeably shorter planning times and lower memory consumption than the fine-grid single-scale A*, while maintaining consistently high coverage performance. This improvement results from the hierarchical design that combines fast global planning on the coarse grid with precise local refinement near the target regions. The analysis of cleaning blind spots further confirms that the multi-scale approach enhances coverage completeness, ensuring that even small and irregular dirt regions can be effectively cleaned. This feature is particularly valuable for long-term maintenance, as missed regions tend to accumulate dirt over time and degrade cleaning effectiveness.

5.5.2. System-Level Integration Benefits

The end-to-end experiments confirm the robustness of the hierarchical architecture. The Ethernet-based JSON-RPC communication between the PC and the NanoPi provides reliable and low-latency interaction, ensuring smooth coordination between the perception, planning, and control layers. This hierarchical and decoupled framework ensures that occasional computation delays in visual processing do not compromise the stability of robot motion.

Integrating real-time perception with adaptive path planning allows the system to shift from uniform, blind cleaning to goal-oriented cleaning. The robot autonomously identifies dirt regions, plans optimized trajectories, and executes them through the embedded controller without manual intervention. The closed-loop tests across multiple configurations consistently demonstrated stable and efficient cleaning performance, verifying the practical feasibility of the proposed approach.

5.5.3. Comparative Advantages and Limitations

Compared with existing underwater or pool-cleaning systems—many of which rely on random motion or predefined Z- or S-shaped sweeping patterns—the proposed framework offers several practical advantages. First, the system provides high-precision global perception using a single calibrated overhead camera. Unlike conventional cleaning robots that operate without explicit dirt awareness, the integration of AprilTag-based localization and YOLOv8 dirt detection enables the robot to perform goal-driven, dirt-aware cleaning. This reduces redundant coverage in clean regions and ensures that areas with concentrated dirt receive sufficient cleaning attention.

Second, the task-driven multi-scale A* planner offers a significant improvement over traditional navigation-oriented A* variants. By activating fine-grid refinement only around vision-detected dirt regions, the method achieves precise alignment of the robot’s cleaning footprint with detected targets while avoiding the computational burden of a uniformly fine grid. This perception-guided refinement is tailored specifically for coverage tasks rather than generic shortest-path navigation.

Third, the hierarchical control architecture separates high-level perception and planning from low-level deterministic actuation. This allows computationally intensive modules—such as YOLO inference and multi-scale A* planning—to run on the PC, while the embedded NanoPi focuses on stable motor control. This design ensures reliable operation on low-cost hardware and increases deployment flexibility.

Finally, the system is validated end-to-end on real hardware rather than in simulation alone. Experiments confirm stable detection, accurate localization, efficient path planning, and smooth execution across various dirt distributions. These results highlight the system’s practical viability and demonstrate clear advantages over existing commercial approaches that lack adaptive sensing or intelligent path planning.

Despite these advantages, the system also exhibits several limitations. The reliance on a fixed overhead camera restricts deployment to environments where the pool bottom is clearly visible from above, limiting the system’s applicability in turbid water or irregularly shaped pools. The dirt detection model, trained on a modest dataset, may require additional data and domain adaptation techniques to generalize well under challenging lighting or water conditions. Furthermore, the current planning workflow follows a “plan-then-execute” procedure without online replanning; thus, the system cannot yet respond dynamically to moving obstacles or evolving dirt patterns. Addressing these limitations—such as through onboard sensing, expanded training data, or real-time replanning—will be the focus of future work.

Overall, the integration of global visual sensing, task-aware multi-scale planning, and hierarchical control yields an efficient and reliable solution that surpasses existing random-motion or pattern-based cleaning approaches. Experimental results across multiple configurations confirm the system’s adaptability, robustness, and strong potential for broader applications in aquatic maintenance, including pool cleaning, aquaculture environments, and other underwater service tasks.

5.5.4. System Resilience Discussion

Recent literature distinguishes robustness, which concerns maintaining performance under bounded disturbances, from resilience, which emphasizes the system’s ability to recover gracefully and sustain functionality under unexpected or disruptive conditions [35]. Although the present study focuses on autonomous underwater cleaning in controlled pool environments, several aspects of the proposed system contribute to its resilience.

First, the hierarchical control architecture enhances disturbance tolerance. The high-level PC performs perception and planning, while the low-level NanoPi executes deterministic motor actuation. Even if the visual processing on the PC experiences temporary latency, the NanoPi continues to apply the latest valid command, preventing abrupt motion changes and preserving operational stability.

Second, although the water in our experimental pool exhibits minimal flow disturbance, the robot employs a PID-based heading controller whose proportional–derivative structure offers intrinsic disturbance rejection. Small lateral drift caused by mild currents or hydrodynamic fluctuations is corrected in real time, ensuring convergence toward planned waypoints. This capability suggests that the system would remain functional under the more typical low-speed flows present in swimming pools or aquaculture tanks.

Third, the system incorporates perception redundancy through continuous AprilTag-based localization. Occasional detection errors—for example, due to momentary glare or partial occlusion—are mitigated by the frame-to-frame consistency of the homography projection and the inertia of the PID controller. These mechanisms allow the robot to recover normal operation after short-term perceptual disturbances.

It is important to note that the current system adopts an offline “plan-then-execute” workflow rather than continuous replanning. Thus, resilience here refers to maintaining stable execution rather than adaptive route modification. Future extensions involving online replanning could further improve resilience by enabling the robot to react to dynamic obstacles or time-varying flow conditions.

Overall, in the sense defined by Sun et al. [35], the proposed system exhibits resilience by sustaining acceptable functionality and recovering stable operation after transient perceptual or control disturbances, rather than merely maintaining nominal performance under bounded uncertainties.

6. Conclusions and Future Work

This paper presented an integrated, vision-based autonomous underwater cleaning system that combines real-time localization, deep-learning-based dirt detection, a multi-scale A* path planning algorithm, and a hierarchical control architecture. The system is implemented in a practical PC–NanoPi setup using a lightweight JSON-RPC protocol, forming a complete perception–planning–control loop suitable for resource-constrained platforms.

Comprehensive experiments conducted in a controlled pool environment demonstrate reliable closed-loop performance of the system. Specifically, the YOLOv8-based dirt detector, trained on an augmented environment-specific dataset, achieved an mAP@50 of 0.983, precision of 0.976, and recall of 0.985, providing accurate and robust dirt localization for downstream planning. Quantitative comparisons across different A* configurations show that the proposed multi-scale planner maintains coverage quality comparable to a fine-grid single-scale A* while reducing planning time by approximately 20–25% and lowering memory usage. Qualitative trajectory analysis further confirms that the fine-grid refinement stage consistently eliminates small-area blind spots that occur when planning solely on a coarse grid. End-to-end trials with varying dirt distributions additionally verify stable perception, consistent localization, and smooth execution of planned paths through the NanoPi-based controller.

While the system performs effectively in the tested scenarios, several limitations suggest directions for future work. First, the current perception module depends on a fixed overhead camera, which constrains deployment to pools where the bottom surface remains visible from above. Future research will investigate the integration of onboard sensing—such as downward-looking cameras, side-view cameras, or acoustic sensors—to enable localization and perception in larger or more complex underwater environments. Second, the dirt detection model is trained on a finite static dataset; extending the dataset and incorporating adaptive or online learning schemes could further improve robustness under varying illumination, turbidity, and background conditions. Third, the present multi-scale planner assumes static obstacles and slowly varying dirt distributions. Extending the framework to support dynamic replanning in the presence of moving obstacles or newly detected dirt would enhance autonomy in more realistic, time-varying environments. Finally, migrating perception and planning modules from the host PC to an onboard embedded platform will be an important step toward a fully self-contained commercial cleaning robot. In future work, we also plan to extend the system to three-dimensional cleaning tasks (e.g., wall and sloped-surface cleaning) and explore multi-robot coordination strategies for large-scale aquatic maintenance.

Beyond underwater cleaning, the proposed system architecture is designed as a general perception–planning–control framework that can be extended to a variety of AUV-related tasks, provided that sufficient task-relevant environmental information can be obtained.

When global or near-complete visual information of the workspace is available, the system can be directly adapted to vision-driven underwater applications such as structural defect inspection, bridge-pier safety assessment, biofouling or attachment removal, and localized mineral or debris cleaning. In these scenarios, the perception module can be reconfigured to detect task-specific targets (e.g., cracks, corrosion regions, biological attachments, or inspection landmarks), while the multi-scale A* planner can generate coverage- or waypoint-oriented paths that guide the AUV to regions of interest with task-aware precision.

In environments where optical visibility is insufficient to fully support perception—such as in turbid water, low-light conditions, or complex three-dimensional structures—the proposed framework can be extended by incorporating complementary sensing modalities, including acoustic imaging, sonar, or other onboard sensors. In such cases, visual and non-visual sensor outputs can be fused to form a unified information flow that drives planning and control, while the overall architecture and hierarchical decision structure remain unchanged. The perception layer is thus generalized from purely vision-based input to multi-modal environmental awareness.

From a system perspective, this modular design allows the same perception–planning–control pipeline to be reused across different underwater tasks by replacing task-specific perception models, cost functions, and control objectives, rather than redesigning the entire system. Therefore, the proposed approach should be viewed not only as a solution for pool cleaning, but as a flexible and extensible foundation for autonomous underwater operations in inspection, maintenance, and service-oriented AUV applications.
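
The reuse argument above can be illustrated with a small sketch in which the three stages are plain callables that can be swapped independently. Everything here is hypothetical: the `Pipeline` class, the detector and planner functions, and the dictionary-based frame are stand-ins chosen for brevity, not the interfaces of the implemented system. The greedy nearest-first ordering replaces the multi-scale A* planner only to keep the example short.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Cell = Tuple[int, int]
Frame = Dict[str, List[Cell]]  # hypothetical stand-in for a camera frame


@dataclass
class Pipeline:
    """Hypothetical perception-planning-control loop with swappable stages."""
    detect: Callable[[Frame], List[Cell]]           # frame -> target cells
    plan: Callable[[Cell, List[Cell]], List[Cell]]  # pose, targets -> waypoints
    act: Callable[[Cell, Cell], Cell]               # pose, waypoint -> new pose

    def step(self, frame: Frame, pose: Cell) -> Cell:
        targets = self.detect(frame)
        for waypoint in self.plan(pose, targets):
            pose = self.act(pose, waypoint)
        return pose


def detect_dirt(frame: Frame) -> List[Cell]:
    return frame.get("dirt", [])      # placeholder for a dirt detector


def detect_cracks(frame: Frame) -> List[Cell]:
    return frame.get("cracks", [])    # placeholder for an inspection detector


def nearest_first(pose: Cell, targets: List[Cell]) -> List[Cell]:
    """Greedy ordering by Manhattan distance (placeholder planner)."""
    remaining, order = list(targets), []
    while remaining:
        nxt = min(remaining, key=lambda t: abs(t[0] - pose[0]) + abs(t[1] - pose[1]))
        remaining.remove(nxt)
        order.append(nxt)
        pose = nxt
    return order


def ideal_move(pose: Cell, waypoint: Cell) -> Cell:
    return waypoint                   # placeholder controller: reaches the waypoint exactly


frame = {"dirt": [(5, 5), (1, 1)], "cracks": [(2, 0)]}
cleaning = Pipeline(detect_dirt, nearest_first, ideal_move)
inspection = Pipeline(detect_cracks, nearest_first, ideal_move)  # only the detector changes
print(cleaning.step(frame, (0, 0)))
print(inspection.step(frame, (0, 0)))
```

Only the `detect` argument differs between the two pipelines, which mirrors the claim that a new task can be supported by replacing a task-specific component rather than redesigning the loop.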

In summary, this study presents a practical framework for vision-guided autonomous underwater cleaning. By integrating computer vision, heuristic search, and a hierarchical control architecture into a unified system, the proposed approach performed reliably in controlled pool trials and shows potential for real-world aquatic maintenance applications.

Author Contributions

Conceptualization, Z.S. and E.C.; methodology, Z.S. and Z.L.; software, Z.L.; validation, Z.L. and X.F.; formal analysis, Z.L.; investigation, Z.L. and X.F.; resources, J.C. and P.C.; data curation, Z.L.; writing—original draft preparation, Z.L.; writing—review and editing, Z.S., E.C., P.C. and X.F.; visualization, Z.L.; supervision, Z.S.; project administration, Z.S.; funding acquisition, Z.S. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the project “Research on Image Matching Methods in Complex Scenes” (ZQ2024060) and Jimei University.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The data presented in this study are available on request from the corresponding author.

Acknowledgments

The authors thank all colleagues who provided helpful discussions and technical support during the course of this research. We also extend our sincere thanks to Jimei University for providing the valuable research facilities and technical support that made this work possible.

Conflicts of Interest

Author Jiancheng Chen was employed by Xiamen Intretech Inc. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| A* | A-star algorithm |
| AUV | Autonomous Underwater Vehicle |
| CPP | Coverage Path Planning |
| JSON-RPC | JavaScript Object Notation Remote Procedure Call |
| PID | Proportional-Integral-Derivative |
| YOLO | You Only Look Once |

References

- Bogue, R. Underwater Robots: A Review of Technologies and Applications. Ind. Robot. 2015, 42, 186–191.

- Paull, L.; Saeedi, S.; Seto, M.; Li, H. AUV Navigation and Localization: A Review. IEEE J. Ocean. Eng. 2013, 39, 131–149.

- Nauert, F.; Kampmann, P. Inspection and Maintenance of Industrial Infrastructure with Autonomous Underwater Robots. Front. Robot. AI 2023, 10, 1240276.

- Cheng, C.; Sha, Q.; He, B.; Li, G. Path Planning and Obstacle Avoidance for AUV: A Review. Ocean Eng. 2021, 235, 109355.

- Qin, H.; Shao, S.; Wang, T.; Yu, X.; Jiang, Y.; Cao, Z. Review of Autonomous Path Planning Algorithms for Mobile Robots. Drones 2023, 7, 211.

- Hart, P.E.; Nilsson, N.J.; Raphael, B. A Formal Basis for the Heuristic Determination of Minimum Cost Paths. IEEE Trans. Syst. Sci. Cybern. 1968, 4, 100–107.

- Koenig, S.; Likhachev, M. D* Lite. In Proceedings of the 18th National Conference on Artificial Intelligence (AAAI), Edmonton, AB, Canada, 28 July–1 August 2002; pp. 476–483.

- Koenig, S.; Likhachev, M.; Furcy, D. Lifelong Planning A*. Artif. Intell. 2004, 155, 93–146.

- Likhachev, M.; Ferguson, D.I.; Gordon, G.J.; Stentz, A.; Thrun, S. Anytime Dynamic A*: An Anytime, Replanning Algorithm. In Proceedings of the International Conference on Automated Planning and Scheduling (ICAPS), Monterey, CA, USA, 5–10 June 2005; pp. 262–271.

- LaValle, S. Rapidly-Exploring Random Trees: A New Tool for Path Planning. In Research Report 9811; Iowa State University: Ames, IA, USA, 1998.

- Kavraki, L.E.; Svestka, P.; Latombe, J.-C.; Overmars, M.H. Probabilistic Roadmaps for Path Planning in High-Dimensional Configuration Spaces. IEEE Trans. Robot. Autom. 1996, 12, 566–580.

- Botea, A.; Müller, M.; Schaeffer, J. Near Optimal Hierarchical Path-Finding. J. Game Dev. 2004, 1, 7–28.

- Likhachev, M.; Ferguson, D.; Gordon, G.; Stentz, A. Multi-Resolution Path Planning: Theoretical Analysis, Efficient Implementation, and Extensions to Dynamic Environments. In Proceedings of the 49th IEEE Conference on Decision and Control (CDC), Atlanta, GA, USA, 15–17 December 2010; pp. 1384–1390.

- Choset, H. Coverage for Robotics—A Survey of Recent Results. Ann. Math. Artif. Intell. 2001, 31, 113–126.

- Galceran, E.; Carreras, M. A Survey on Coverage Path Planning for Robotics. Robot. Auton. Syst. 2013, 61, 1258–1276.

- Kambhampati, S.; Davis, L. Multiresolution Path Planning for Mobile Robots. IEEE J. Robot. Autom. 1986, 2, 135–145.

- Du, W.; Islam, F.; Likhachev, M. Multi-Resolution A*. In Proceedings of the International Symposium on Combinatorial Search, Online, 8–12 June 2020; pp. 29–37.

- Philippsen, R.; Siegwart, R. An Interpolated Dynamic Navigation Function. In Proceedings of the 2005 IEEE International Conference on Robotics and Automation, Barcelona, Spain, 18–22 April 2005; pp. 3782–3789.

- Prabakaran, V.; Elara, M.R.; Pathmakumar, T.; Nansai, S. Floor Cleaning Robot with Reconfigurable Mechanism. Autom. Constr. 2018, 91, 155–165.

- Quigley, M.; Conley, K.; Gerkey, B.; Faust, J.; Foote, T.; Leibs, J.; Wheeler, R.; Ng, A.Y. ROS: An Open-Source Robot Operating System. In Proceedings of the ICRA Workshop on Open Source Software, Kobe, Japan, 17 May 2009.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 779–788.

- Zhang, W. An Integrated Environment for CAD/CAM of Mechanical Systems. Ph.D. Thesis, Delft University of Technology, Delft, The Netherlands, 1994.

- Olson, E. AprilTag: A Robust and Flexible Visual Fiducial System. In Proceedings of the 2011 IEEE International Conference on Robotics and Automation, Shanghai, China, 9–13 May 2011; pp. 3400–3407.

- Zhang, Z. A Flexible New Technique for Camera Calibration. IEEE Trans. Pattern Anal. Mach. Intell. 2000, 22, 1330–1334.

- Hartley, R.; Zisserman, A. Multiple View Geometry in Computer Vision, 2nd ed.; Cambridge University Press: Cambridge, UK, 2003.

- Jocher, G.; Chaurasia, A.; Qiu, J. YOLOv8: State-of-the-Art YOLO Model for Object Detection, Segmentation, and Classification. Available online: https://github.com/ultralytics/ultralytics (accessed on 20 January 2024).

- Jocher, G. YOLOv5 by Ultralytics. Available online: https://github.com/ultralytics/yolov5 (accessed on 10 January 2024).

- Wang, C.-Y.; Bochkovskiy, A.; Liao, H.-Y.M. YOLOv7: Trainable Bag-of-Freebies Sets New State-of-the-Art for Real-Time Object Detectors. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, New Orleans, LA, USA, 19–20 June 2022; pp. 7464–7475.

- Wang, C.-Y.; Yeh, I.-H.; Liao, H.-Y.M. YOLOv9: Learning What You Want to Learn Using Programmable Gradient Information. In Computer Vision—ECCV 2024, Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024; Springer: Cham, Switzerland, 2024; pp. 1–21.

- Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J. YOLOv10: Real-Time End-to-End Object Detection. Adv. Neural Inf. Process. Syst. 2024, 37, 107984–108011.

- Saxena, D.M.; Kusnur, T.; Likhachev, M. AMRA*: Anytime Multi-Resolution Multi-Heuristic A*. In Proceedings of the 2022 IEEE International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022.

- Han, X.; Zhang, X. Multi-Scale Theta* Algorithm for the Path Planning of Unmanned Surface Vehicle. Proc. Inst. Mech. Eng. Part M J. Eng. Marit. Environ. 2022, 236, 427–435.

- Song, C.; Guo, T.; Sui, J. Ship Path Planning Based on Improved Multi-Scale A* Algorithm of Collision Risk Function. Sci. Rep. 2024, 14, 30418.

- Sun, Y.; Tong, X.; Lei, Y.; Guo, C.; Lei, Y.; Song, H.; An, Z.; Tang, J.; Wu, Y. A Multi-Scale Path-Planning Method for Large-Scale Scenes Based on a Framed Scale-Elastic Grid Map. Int. J. Digit. Earth 2024, 17, 2383852.

- Sun, Z.; Yang, G.; Zhang, B.; Zhang, W. On the Concept of the Resilient Machine. In Proceedings of the 6th IEEE Conference on Industrial Electronics and Applications (ICIEA), Beijing, China, 21–23 June 2011; pp. 357–360.

Figure 1.

Overall architecture of the autonomous cleaning system. The workflow forms a closed perception–planning–action loop, integrating global visual sensing, PC-based perception and path planning, hierarchical control execution, and NanoPi-level motor actuation.

Figure 2.

Image pre-processing results. (a) Original image. (b) Undistorted image. (c) Perspective-transformed top view.

Figure 3.

AprilTag detection and orientation estimation process. (a) Original input image containing the AprilTag. (b) Detection result showing the tag ID and the numbered corner coordinates. (c) Orientation estimation by connecting the tag center to the midpoint of the reference edge; the resulting line indicates the heading direction. Different colored annotations are used solely for visualization purposes to distinguish the tag ID, corner coordinates, and orientation reference.

Figure 4.

Example of YOLOv8-based dirt detection results on the pool bottom.

Figure 5.

Workflow of the proposed multi-scale A* path-planning framework. The red boxes indicate the current robot positions, which also represent the corresponding cleaning coverage areas. The red arrows denote path segments planned on the coarse (big-grid) resolution, while the black arrows represent path segments refined on the fine (small-grid) resolution.

Figure 5.