Scalable MLOps Pipeline with Complexity-Driven Model Selection Using Microservices

Abstract

1. Introduction

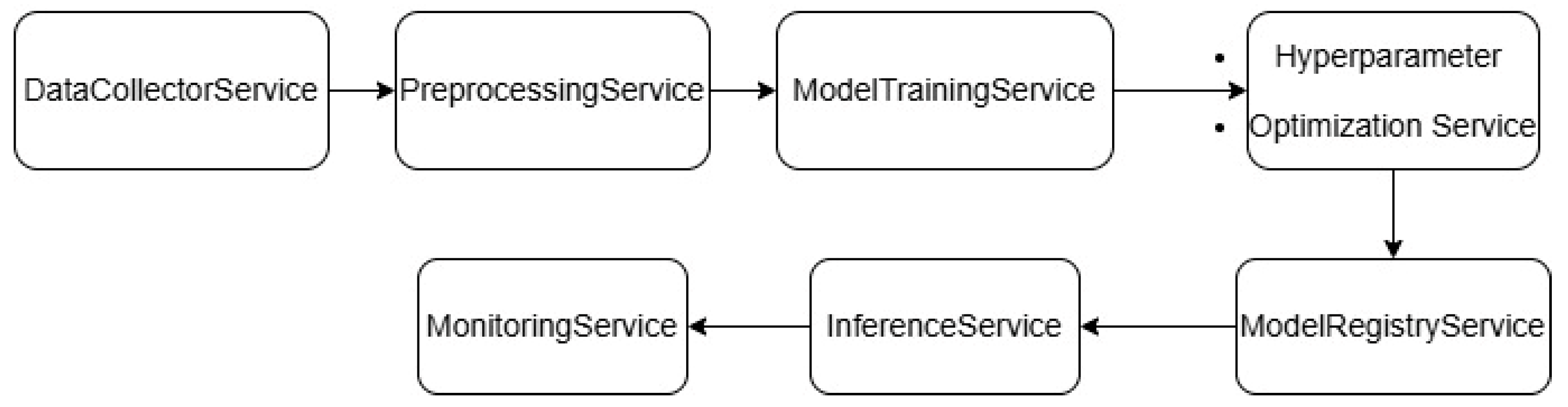

- A method is proposed for selecting a convolutional neural network model for image classification based on the characteristics of the input dataset. Within the developed ML pipeline, it balances classification quality against processing time and so makes better use of resources.

- A universal adaptive ML pipeline is developed that automatically selects a model according to data complexity and available resources. Its microservice design improves reliability, scalability, and modularity and supports distributed deep learning in a cloud environment.

- A quantitative characteristics classification module based on the ensemble method is developed within the proposed ML pipeline. An optimizer selects the combination of classifiers, which yields higher accuracy and shorter processing time.

- The advantages of the proposed approach over existing MLOps solutions are demonstrated, particularly for highly loaded systems, large datasets, and deep neural networks.

2. Literature Review

3. Materials and Methods

3.1. Generalized Proposed Pipeline

- The frontend module presents the main module settings graphically on a database-backed website, so users can work with the service without significant programming knowledge. The interface is built with Twitter Bootstrap as the front-end framework to keep it responsive. The module is packaged in Docker (version 29.1.3) containers and includes separate functionality that simplifies working with the API Gateway.

- The API Gateway is a small but important module that listens on port 8080 and acts as an intermediary between the frontend and the individual microservices.

- The server side, which runs the website, is implemented with the Laravel framework and a MySQL (version 8.4) database. Its primary purpose is to store user information, support conducting research, handle client-server communication, and serve the web application.

- The model selection module for classification and segmentation is the key component of this architecture. It matches the input dataset to a specific type of network to obtain the best trade-off among classification quality, execution time, and resource usage. Models fall into three main categories: easy, medium, and heavy.

- The quantitative characteristics module performs segmentation and computes quantitative characteristics of micro-objects, such as area, perimeter, circumference, and axis length. It allows the required parameters to be selected and stored locally in a database for subsequent classification or clustering.

- The ensemble-based classification module draws on more than 10 classification algorithms and selects the best-performing combinations for voting in soft or hard mode.

- Monitoring is implemented as a separate microservice that uses Prometheus (version 3.8.1) and Grafana (version 12.3.0).

- The database is an essential component, implemented as separate storage that combines mechanisms for storing media objects, images, and text data.
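To make the quantitative characteristics module more concrete, the following minimal sketch computes a few shape descriptors for one segmented micro-object whose contour is given as a closed polygon. It is an illustration under stated assumptions, not the module's actual implementation: the function name `contour_features`, the dictionary keys, and the choice of the shoelace formula and the circularity definition 4πA/P² are all assumptions made here.

```python
import math


def contour_features(points):
    """Return shape descriptors for a closed polygon.

    `points` is a list of (x, y) vertices in order around the contour.
    The function name and the returned keys are hypothetical.
    """
    n = len(points)
    if n < 3:
        raise ValueError("a contour needs at least three vertices")

    doubled_area = 0.0  # twice the signed area (shoelace formula)
    perimeter = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the contour
        doubled_area += x1 * y2 - x2 * y1
        perimeter += math.hypot(x2 - x1, y2 - y1)
    area = abs(doubled_area) / 2.0

    # Aspect ratio of the axis-aligned bounding box (width / height).
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    width, height = max(xs) - min(xs), max(ys) - min(ys)

    return {
        "contour_area": area,
        "contour_perimeter": perimeter,
        # Circularity equals 1.0 for a perfect circle and is smaller otherwise.
        "contour_circularity": 4.0 * math.pi * area / perimeter ** 2,
        "aspect_ratio": width / height if height else float("inf"),
    }


square = [(0, 0), (4, 0), (4, 4), (0, 4)]
features = contour_features(square)
```

For the 4 × 4 square, the area and perimeter are both 16, the aspect ratio is 1, and the circularity is π/4. In practice such values would be computed from contours produced by the segmentation step and stored as feature rows for the later classification or clustering stage.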

3.2. Self-Optimizing ML Pipeline

- Light;

- Balanced;

- Heavy.
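The three categories can be read as routing targets for the model selection step. The sketch below shows one way such routing might look, assuming a complexity score in [0, 1] and a memory budget in gigabytes. The thresholds, the memory requirements, the names `Tier` and `select_tier`, and the pairing of each tier with one of the CNNs evaluated in this article are all illustrative assumptions rather than the pipeline's actual rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    example_model: str    # assumed pairing, for illustration only
    min_memory_gb: float  # assumed resource requirement


# Ordered from cheapest to most expensive.
TIERS = [
    Tier("light", "MobileNetV3", 2.0),
    Tier("balanced", "ResNet-50", 6.0),
    Tier("heavy", "EfficientNet-B7", 16.0),
]


def select_tier(complexity: float, memory_gb: float) -> Tier:
    """Pick a tier from a complexity score, then respect the memory budget."""
    if not 0.0 <= complexity <= 1.0:
        raise ValueError("complexity must lie in [0, 1]")

    # Hypothetical thresholds that split the score range into three bands.
    if complexity < 0.33:
        wanted = 0
    elif complexity < 0.66:
        wanted = 1
    else:
        wanted = 2

    # Fall back to a lighter tier when the preferred one does not fit.
    for index in range(wanted, -1, -1):
        if TIERS[index].min_memory_gb <= memory_gb:
            return TIERS[index]
    raise RuntimeError("not enough memory even for the light tier")


choice = select_tier(complexity=0.9, memory_gb=8.0)
```

Here a complex dataset would prefer the heavy tier, but with 8 GB available the selector falls back to the balanced tier. Keeping the fallback separate from the complexity bands lets either rule change without touching the other.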

3.3. Ensemble Methods

- Loading a CSV file with prepared data divided into categories and classes;

- Defining the model pool via ModelProvider;

- Selecting the best models with the adaptive BayesianModelSelector;

- Building the ensemble with hard or soft voting;

- Determining feature importance;

- Presenting the results graphically.
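The difference between the two voting modes in the ensemble-building step can be shown with a small, dependency-free sketch. It is not the article's implementation: the helper names `hard_vote` and `soft_vote`, the class labels, and the probability values are hypothetical and chosen so that the two modes disagree.

```python
from collections import Counter


def hard_vote(label_predictions):
    """Majority vote over per-model label lists of equal length.

    Ties go to the label that appears first among the votes for that sample.
    """
    return [
        Counter(sample_votes).most_common(1)[0][0]
        for sample_votes in zip(*label_predictions)
    ]


def soft_vote(probabilities, classes):
    """Average per-class probabilities across models and take the argmax."""
    results = []
    for sample_probs in zip(*probabilities):
        n_models = len(sample_probs)
        mean = [
            sum(p[c] for p in sample_probs) / n_models
            for c in range(len(classes))
        ]
        results.append(classes[mean.index(max(mean))])
    return results


classes = ["class_a", "class_b"]
# One list per model, one [P(class_a), P(class_b)] pair per sample.
probabilities = [
    [[0.90, 0.10], [0.40, 0.60]],  # model 1
    [[0.40, 0.60], [0.45, 0.55]],  # model 2
    [[0.45, 0.55], [0.90, 0.10]],  # model 3
]
# Each model's hard label is its most probable class.
labels = [
    [classes[p.index(max(p))] for p in model_probs]
    for model_probs in probabilities
]

hard = hard_vote(labels)
soft = soft_vote(probabilities, classes)
```

With these numbers, two of three models narrowly prefer `class_b` for each sample, so hard voting returns `class_b` twice. Soft voting returns `class_a` twice, because the one confident model outweighs the two marginal ones. Which behavior is preferable depends on how well calibrated the member probabilities are.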

4. Results

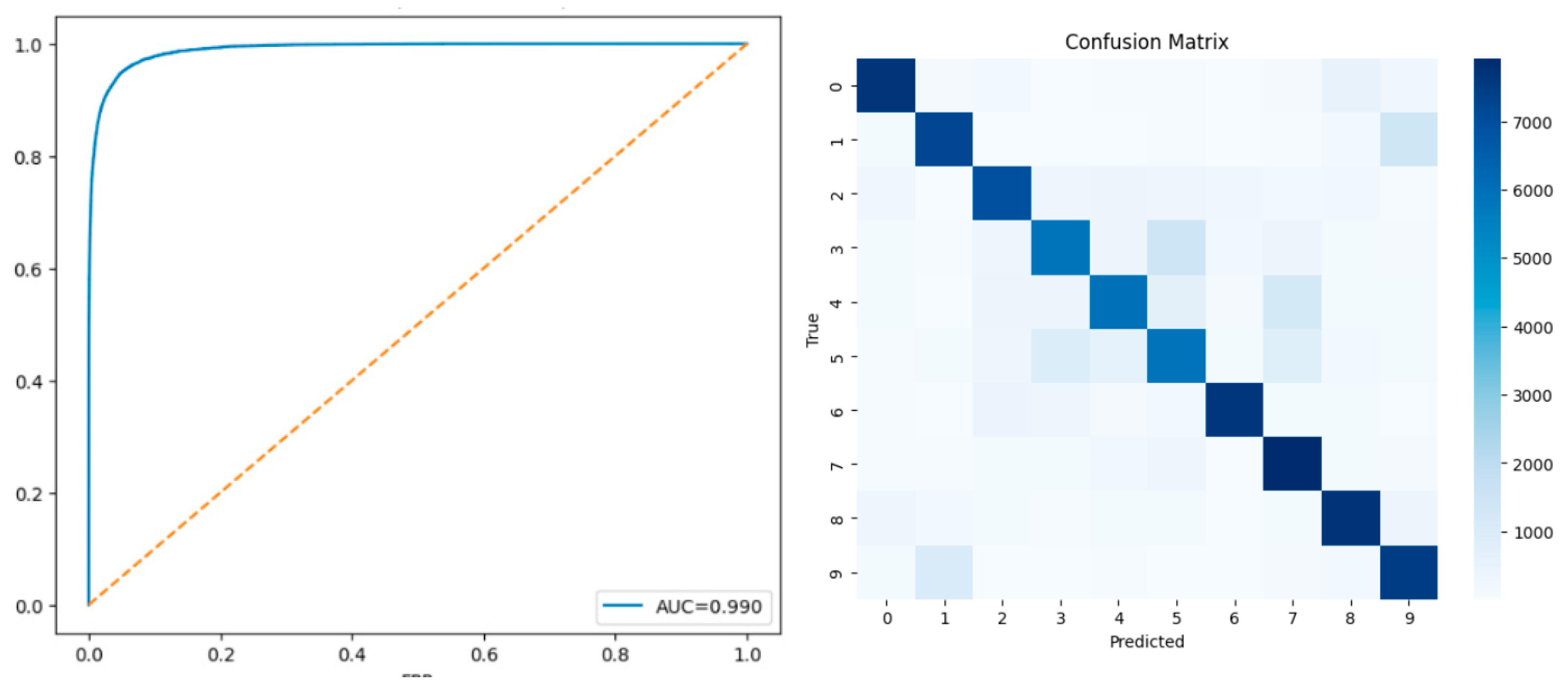

4.1. Image Classification

4.2. Ensemble Classification

- Models are evaluated independently;

- The best models are selected;

- The selected models form an ensemble.
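These three steps can be sketched as a simple ranking procedure. The sketch swaps in plain top-k selection for the adaptive Bayesian selector named in Section 3.3, so it shows only the overall flow. The function name `select_ensemble` and its parameters are hypothetical, and the scores are the per-algorithm values that the article's results table lists for rf, gb, lr, and svc.

```python
def select_ensemble(scores, k=2, min_score=0.0):
    """Rank independently evaluated models and keep the best k.

    `scores` maps a model name to its validation score. Models that score
    below `min_score` are never admitted, even if fewer than k remain.
    """
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [name for name, score in ranked if score >= min_score][:k]


# Step 1: each model has been evaluated on its own.
scores = {"rf": 0.94, "gb": 0.98, "lr": 0.6, "svc": 0.49}

# Step 2: the best models are selected.
members = select_ensemble(scores, k=2)

# Step 3: the selected names define the ensemble to be built for voting.
ensemble = {name: scores[name] for name in members}
```

With `k=2`, the selection keeps `gb` and `rf` and drops the two weaker classifiers. A score floor such as `min_score=0.5` is one simple way to stop a weak model from entering the ensemble when `k` is large.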

| Approach | Model Selection Complexity | Pipeline Overhead | Scalability |
|---|---|---|---|
| Static CNN pipeline | O(1) | Low | Limited |
| Traditional MLOps | O(1) | Moderate | Moderate |
| Resource-aware inference | O(k) | Low | Moderate |
| Proposed pipeline | O(k) | Moderate | High |

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Term | Definition |
|---|---|
| DIS | Data Intake Service |
| APS | Adaptive Pre-processing Service |
| MSRS | Model Selection & Routing Service |
| NAS | Neural Architecture Search |
| API gateway | A central entry point that sits between clients and backend services |
| Grafana | Open-source analytics and monitoring solution |
| Prometheus | Collects and stores time-series metrics (such as CPU and memory usage) |
| MySQL | Free relational database management system |
| Docker | Toolkit for managing isolated Linux containers |

References

- Artamonov, Y.; Plotytsia, S.; Radchenko, K.; Kotsiur, A. Microservice architecture of intelligent educational platforms with elements of ml pipeline self-optimization. Sci. Technol. 2025, 10, 1059–1073.

- Sharma, P.; Sarangdevot, S.S. Optimizing Machine Learning Pipelines via Adaptive Hybrid Classification Models: Toward Scalable, Self-Updating AI Architectures. Int. J. Sci. Res. Sci. Eng. Technol. 2025, 12, 84–96.

- Yin, H.; Jin, D.; Hong, H.; Moon, J.; Gu, Y.H. IAQ-STL-ML: A novel indoor air quality prediction pipeline using a meta-learning framework with STL decomposition. Environ. Technol. Innov. 2025, 38, 104107.

- Gómez, C.; López, L.; Ayala, C.; López, M. MLSToolbox Code Generator: A tool for generating quality ML pipelines for ML systems. SoftwareX 2025, 32, 102379.

- DeGroat, W.; Venkat, V.; Pierre-Louis, W.; Abdelhalim, H.; Ahmed, Z. Hygieia: AI/ML pipeline integrating healthcare and genomics data to investigate genes associated with targeted disorders and predict disease. Softw. Impacts 2023, 16, 100493.

- Khattab, O.; Singhvi, A.; Maheshwari, P.; Zhang, Z.; Santhanam, K.; Vardhamanan, S.; Haq, S.; Sharma, A.; Joshi, T.T.; Moazam, H.; et al. DSPy: Compiling Declarative Language Model Calls into Self-Improving Pipelines. arXiv 2023, arXiv:2310.03714.

- Tamim, I.; Shami, A.; Ong, L. ML-Based Strategies to Optimize O-RAN VNFs for Latency and Reliability. In Proceedings of the 2023 IEEE Future Networks World Forum (FNWF), Baltimore, MD, USA, 13–15 November 2023; pp. 1–7.

- Al Marouf, A.; Ahmed; Rokne, J.G.; Alhajj, R. Identification of Potential Biomarkers in Prostate Cancer Microarray Gene Expression Leveraging Explainable Machine Learning Classifiers. Cancers 2025, 17, 3853.

- Vincenzo, L.; Coelli, S.; Gorlini, C.; Forzanini, F.; Rinaldo, S.; Andreasi, N.G.; Romito, L.; Eleopra, R.; Bianchi, A.M. The Role of MER Processing Pipelines for STN Functional Identification During DBS Surgery: A Feature-Based Machine Learning Approach. Bioengineering 2025, 12, 1300.

- Conte, L.; De Nunzio, G.; Raso, G.; Cascio, D. Multi-Class Segmentation and Classification of Intestinal Organoids: YOLO Stand-Alone vs. Hybrid Machine Learning Pipelines. Appl. Sci. 2025, 15, 11311.

- Koukaras, P.; Tjortjis, C. Data Preprocessing and Feature Engineering for Data Mining: Techniques, Tools, and Best Practices. AI 2025, 6, 257.

- Thilagavathy, R.; Veeramani, T.; Sundaravadivazhagan, B.; Deebalakshmi, R. Evolution of software engineering: From traditional to modern approaches. In Advances in Computers; Elsevier: Amsterdam, The Netherlands, 2025.

- Pitsun, O.; Shymchuk, M. A high-load architecture for image processing based on microservices. In Proceedings of the CIAW-2025: Computational Intelligence Application Workshop, Lviv, Ukraine, 26–27 September 2025.

- Zhu, J.; Bai, W.; Zhang, H.; Lin, W.; Zhou, T.; Li, K. Adaptive multi-objective swarm intelligence for containerized microservice deployment. Future Gener. Comput. Syst. 2026, 174, 108012.

- Ponce, F.; Verdecchia, R.; Miranda, B.; Soldani, J. Microservices testing: A systematic literature review. Inf. Softw. Technol. 2025, 188, 107870.

- Kaushik, N.; Kumar, H.; Raj, V. A systematic review of QoS enhancement techniques in microservices architecture. Comput. Electr. Eng. 2025, 127, 110550.

- Alshuqayran, N.; Ali, N.; Evans, R. A model-driven architecture approach for recovering microservice architectures: Defining and evaluating MiSAR. Inf. Softw. Technol. 2025, 186, 107808.

- Oyeniran, O.C.; Adewusi, A.O.; Adeleke, A.G.; Akwawa, L.A.; Azubuko, C.F. Microservices architecture in cloud-native applications: Design patterns and scalability. Comput. Sci. IT Res. J. 2024, 5, 2107–2124.

- Lucian, A.C.; Bocu, R. Authentication Challenges and Solutions in Microservice Architectures. Appl. Sci. 2025, 15, 12088.

- Al-Qora’n, L.F.; Ahmad, A.A.-S. Modular Monolith Architecture in Cloud Environments: A Systematic Literature Review. Future Internet 2025, 17, 496.

- Yuanbo, L.; Li, Y.; Wang, G.; Hu, H. An Adaptive Dynamic Defense Strategy for Microservices Based on Deep Reinforcement Learning. Electronics 2025, 14, 4096.

- Hossam, H.; Abdel-Fattah, M.A.; Mohamed, W. A Pattern-Based Framework for Automated Migration of Monolithic Applications to Microservices. Big Data Cogn. Comput. 2025, 9, 253.

- Rakshith, S.; Sierla, S.; Vyatkin, V. From DevOps to MLOps: Overview and Application to Electricity Market Forecasting. Appl. Sci. 2022, 12, 9851.

- CobParro; Carlos, A.; Lalangui, Y.; Lazcano, R. Fostering Agricultural Transformation through AI: An Open-Source AI Architecture Exploiting the MLOps Paradigm. Agronomy 2024, 14, 259.

- Andrej, R.; Kotuliak, I.; Sobolev, D. Evaluating Deployment of Deep Learning Model for Early Cyberthreat Detection in On-Premise Scenario Using Machine Learning Operations Framework. Computers 2025, 14, 506.

- Park, Y.; Mun, J.; Lee, Y.; Um, J.; Choi, J.; Choi, J. Data-Driven Optimization of Healthcare Recommender System Retraining Pipelines in MLOps with Wearable IoT Data. Sensors 2025, 25, 6369.

- Daniel, Z.R.; Bărbulescu, C.; Constantinescu, R. A Practical Approach to Defining a Framework for Developing an Agentic AIOps System. Electronics 2025, 14, 1775.

- Caruana, R.; Niculescu-Mizil, A.; Crew, G.; Ksikes, A. Ensemble selection from libraries of models. In Proceedings of the Twenty-First International Conference on Machine Learning (ICML '04), Banff, AB, Canada, 4–8 July 2004; Association for Computing Machinery: New York, NY, USA, 2004; p. 18.

- Yao, Y.; Pirš, G.; Vehtari, A.; Gelman, A. Bayesian Hierarchical Stacking: Some Models Are (Somewhere) Useful. Bayesian Anal. 2022, 17, 1043–1071.

- Berezsky, O.; Datsko, T.; Melnyk, G. Cytological and Histological Images of Breast Cancer [Data Set]; Zenodo: Geneva, Switzerland, 2023.

- Database. IHCDBI Digital Immunohistochemical Image Database of Breast Cancer. /10.05.2023 Bulletin No. 76. Dated 31 July 2023//Copyright Registration Certificate Number 118979. Available online: https://iprop-ua.com/cr/0r6kml00/ (accessed on 4 January 2026).

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Yang, C.; Wang, W.; Zhang, Y.; Zhang, Z.; Shen, L.; Li, Y.; See, J. MLife: A lite framework for machine learning lifecycle initialization. Mach. Learn. 2021, 110, 2993–3013.

- Zhang, C.; Shan, G.; Roh, B.H.; Zhu, F.; Jiang, J. FEI-Hi: Federated Edge Intelligence for Healthcare Informatics. IEEE J. Biomed. Health Inform. 2025. Preprint.

- Berezsky, O.; Pitsun, O.; Melnyk, G.; Derysh, B.; Liashchynskyi, P. Application Of MLOps Practices for Biomedical Image Classification. In Proceedings of the 5th International Conference on Informatics & Data-Driven Medicine, Ceur Workshop Proceedings, Bratislava, Slovakia, 17–19 November 2023; Volume 3302, pp. 69–77.

| Model | Metric | CIFAR-10 | CINIC-10 | IHCDBI [30] |
|---|---|---|---|---|
| MobileNetV3 | Accuracy | 0.9 | 0.77 | 0.84 |
| MobileNetV3 | Precision | 0.9 | 0.78 | 0.84 |
| MobileNetV3 | Recall | 0.9 | 0.77 | 0.84 |
| MobileNetV3 | F1 | 0.9 | 0.77 | 0.84 |
| ResNet-50 | Accuracy | 0.88 | 0.78 | 0.87 |
| ResNet-50 | Precision | 0.89 | 0.78 | 0.89 |
| ResNet-50 | Recall | 0.88 | 0.78 | 0.87 |
| ResNet-50 | F1 | 0.88 | 0.77 | 0.85 |
| EfficientNet-B7 | Accuracy | 0.91 | 0.79 | 0.92 |
| EfficientNet-B7 | Precision | 0.91 | 0.79 | 0.92 |
| EfficientNet-B7 | Recall | 0.91 | 0.79 | 0.92 |
| EfficientNet-B7 | F1 | 0.9 | 0.79 | 0.92 |

| Feature | Importance |
|---|---|
| contour_area | 0.309368 |
| contour_circularity | 0.295311 |
| contour_perimetr | 0.260561 |
| aspect_ratio | 0.134759 |

| Algorithm | rf | gb | lr | svc |
|---|---|---|---|---|
| | 0.94 | 0.98 | 0.6 | 0.49 |

| Parameter | Kubeflow Pipelines | Apache Airflow | Proposed Pipeline |
|---|---|---|---|
| Type of architecture | Kubernetes-based | DAG-workflow | Microservice architecture with autonomous modules |
| Scalability | High | Medium | High, dynamic autoscalability |
| Service isolation | Partial | Limited | Complete isolation through lightweight services |
| Automation | High | High | Autonomous optimization |
| Flexibility of integrations | Kubernetes orientation | Mixed scenarios | Multi-environment, multi-cloud integration |
| Adaptability of ML processes | +/- | +/- | Automatic model and configuration selection |
| Support for model auto-tuning | Third-party components | Third-party components | Built-in AutoModelSelector component |
| Image orientation (CV) | - | - | Special task complexity profiles and CNN architectures |

| Feature | Static ML Pipeline | Traditional MLOps Solutions | Proposed Pipeline |
|---|---|---|---|
| Dynamic model selection | - | Limited | + |
| Data complexity awareness | - | - | + |
| Adaptive computation graph | - | - | + |
| Support for high-load systems | Limited | Moderate | High |
| Ensemble-based complexity classification | - | - | + |

| Approach | Time to Deploy a Project from Scratch (Before the Launch Stage) |
|---|---|
| CNN | 3–6 min |
| Unet | 4–8 min |
| Ensemble methods (quantitative characteristics) | 2–4 min |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Pitsun, O.; Shymchuk, M. Scalable MLOps Pipeline with Complexity-Driven Model Selection Using Microservices. Technologies 2026, 14, 45. https://doi.org/10.3390/technologies14010045
