Abstract

A state space model with an unobserved multivariate random walk and a linear observation equation is studied. The purpose is to find out when the extracted trend cointegrates with its estimator, in the sense that a linear combination is asymptotically stationary. It is found that this result holds for the linear combination of the trend that appears in the observation equation. If identifying restrictions are imposed on either the trend or its coefficients in the linear observation equation, it is shown that there is cointegration between the identified trend and its estimator, if and only if the estimators of the coefficients in the observation equations are consistent at a faster rate than the square root of sample size. The same results are found if the observations from the state space model are analysed using a cointegrated vector autoregressive model. The findings are illustrated by a small simulation study.

JEL Classification: C32

1. Introduction and Summary

This paper is inspired by a study on long-run causality, see Hoover et al. (2014). Causality is usually studied for a sequence of multivariate i.i.d. variables using conditional independence, see Spirtes et al. (2000) or Pearl (2009). For stationary autoregressive processes, causality is discussed in terms of the variance of the shocks, that is, the variance of the i.i.d. error term. For nonstationary cointegrated variables, the common trends play an important role for long-run causality. In Hoover et al. (2014), the concept is formulated in terms of independent common trends and their causal impact coefficients on the nonstationary observations. Thus, the emphasis is on independent trends, and how they enter the observation equations, rather than on the variance of the measurement errors.

The trend is modelled as an m-dimensional Gaussian random walk, starting at ,

where are i.i.d. , that is, Gaussian in m dimensions with mean zero and variance . This trend has an impact on future values of the p-dimensional observation modelled by

where are i.i.d. and . It is also assumed that the and are independent for all s and t. In the following the joint distribution of conditional on a given value of is considered.
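The data generating process can be sketched in a few lines of code. This is a minimal simulation under the assumption that the model takes the form $T_{t+1} = T_t + \eta_{t+1}$, $y_{t+1} = B T_t + \varepsilon_{t+1}$, as suggested by the description of a random walk trend acting on future observations. The function name `simulate_common_trend` and its argument names are hypothetical and chosen only for illustration.

```python
import numpy as np

def simulate_common_trend(B, omega_eta, omega_eps, n, T0=None, seed=0):
    """Simulate an assumed common trend model.

    Assumed form (not taken verbatim from the paper):
        T_{t+1} = T_t + eta_{t+1},      eta ~ N_m(0, omega_eta)
        y_{t+1} = B T_t + eps_{t+1},    eps ~ N_p(0, omega_eps)
    Returns T with n + 1 rows (T_0, ..., T_n) and y with n rows (y_1, ..., y_n).
    """
    rng = np.random.default_rng(seed)
    p, m = B.shape
    eta = rng.multivariate_normal(np.zeros(m), omega_eta, size=n)
    eps = rng.multivariate_normal(np.zeros(p), omega_eps, size=n)
    T = np.zeros((n + 1, m))
    if T0 is not None:
        T[0] = T0
    y = np.zeros((n, p))
    for t in range(n):
        y[t] = B @ T[t] + eps[t]      # observation loads on the lagged trend
        T[t + 1] = T[t] + eta[t]      # random walk update
    return T, y
```

With this convention, `y - T[:-1] @ B.T` recovers the measurement errors, which is the stationary combination of observations and trend discussed in the text.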

The observations are collected in the matrices , , and , , which are defined as

The processes and are obviously nonstationary, but the conditional distribution of given is well defined. We define

Then the density of conditional on is given by the prediction error decomposition

where given is p dimensional Gaussian with mean and variance

In this model it is clear that and cointegrate, that is, is stationary, and the same holds for and the extracted trend . Note that in the statistical model defined by (1) and (2) with parameters , and , only the matrices and are identified because for any matrix of full rank, and give the same likelihood, by redefining the trend as .

Let be an estimator of . The paper investigates whether there is cointegration between and given two different estimation methods: A simple cointegrating regression and the maximum likelihood estimator in an autoregressive representation of the state space model.

Section 2, on the probability analysis of the data generating process, formulates the model as a common trend state space model, and summarizes some results in three Lemmas. Lemma 1 contains the Kalman filter equations and the convergence of , see Durbin and Koopman (2012), and shows how its limit can be calculated by solving an eigenvalue problem. Lemma 1 also shows how can be represented in terms of its prediction errors , . This result is used in Lemma 2 to represent in steady state as an infinite order cointegrated vector autoregressive model, see (Harvey 2006, p. 373). Section 3 discusses the statistical analysis of the data and the identification of the trends and their loadings. Two examples are discussed. In the first example, only B is restricted and the trends are allowed to be correlated. In the second example, B is restricted but the trends are uncorrelated, so that also the variance matrix is restricted. Lemma 3 analyses the data from (1) and (2) using a simple cointegrating regression, see Harvey and Koopman (1997), and shows that the estimator of the coefficient B suitably normalized is n-consistent.

Section 4 shows in Theorem 1 that the spread between and its estimator is asymptotically stationary irrespective of the identification of B and . Then Theorem 2 shows that the spread between and its estimator is asymptotically stationary if and only if B has been identified so that the estimator of B is superconsistent, that is, consistent at a rate faster than $n^{1/2}$.

The findings are illustrated with a small simulation study in Section 5. Data are generated from (1) and (2) with , and the observations are analysed using the cointegrating regression discussed in Lemma 3. If the trends and their coefficients are identified by the trends being independent, the trend extracted by the state space model does not cointegrate with its estimator. If, however, the trends are identified by restrictions on the coefficients alone, they do cointegrate.

2. Probability Analysis of the Data Generating Model

This section first presents two examples that illustrate the problem to be solved. Then a special parametrization of the common trends model is defined, and some, mostly known, results on the Kalman filter recursions are given in Lemma 1. Lemma 2 concerns the representation of the steady state solution as an autoregressive process. All proofs are given in the Appendix.

2.1. Two Examples

Two examples are given which illustrate the problem investigated. The examples are analysed further by a simulation study in Section 5.

Example 1.

In the first example the two random walks and are allowed to be dependent, so is unrestricted, and identifying restrictions are imposed only on their coefficients B. The equations are

for . Thus, , , and

Moreover, is , is , and both are unrestricted positive definite. Simulations indicate that is stationary, and this obviously implies that the same holds for the first two coordinates and . ■

Example 2.

The second example concerns two independent random walks and , and the three observation equations

In this example

and is and unrestricted positive definite. Thus the nonstationarity is caused by two independent trends. The first, , is the cause of the nonstationarity of , whereas both trends are causes of the nonstationarity of . From the first equation it is seen that and cointegrate. It is to be expected that also the extracted trend cointegrates with , and also that cointegrates with its estimator . This is all supported by the simulations. Similarly, it turns out that

is asymptotically stationary. In this case, however, is not asymptotically stationary, and the paper provides an answer to why this is the case. ■

The problem to be solved is why, in the first example, cointegration was found between the extracted trends and their estimators, whereas in the second example they do not cointegrate. The answer is that it depends on the way the trends and their coefficients are identified. For some identification schemes the estimator of B is n-consistent, and then stationarity of can be proved. But if identification is achieved by imposing restrictions also on the covariance of the trends, as in Example 2, then the estimator for B is only $n^{1/2}$-consistent, and that is not enough to get asymptotic stationarity of .

2.2. Formulation of the Model as a Common Trend State Space Model

The common trend state space model with constant coefficients is defined by

, see Durbin and Koopman (2012) or Harvey (1989), with initial state . Here is the unobserved m−dimensional state variable and the p−dimensional observation and B is of rank . The errors and are as specified in the discussion of the model given by (1) and (2).

Defining , gives the model (1) and (2). Note that in this notation is the predicted value of the trend , which means that it is easy to formulate the Kalman filter.

The Kalman filter calculates the prediction and its conditional variance by the equations

starting with and .

Lemma 1 contains the result that converges for to a finite limit V, which can be calculated by solving an eigenvalue problem. Equation (11) is an algebraic Riccati equation, see Chan et al. (1984), where the convergence result can be found. The recursion (10) is used to represent in terms of its cumulated prediction errors , as noted by Harvey (2006, Section 7.3.2).
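The convergence of the conditional variance can be checked numerically. The sketch below uses the textbook Kalman filter recursions for a random walk state with a linear observation equation. It is an assumption that these coincide with the recursions (10) and (11) of the paper, and the names `kalman_step`, `steady_state_variance`, `a`, `V`, `omega_eta`, and `omega_eps`, as well as the default start value `V = 0`, are hypothetical. The limit is found here by simply iterating the variance recursion, not by the eigenvalue method of Lemma 1.

```python
import numpy as np

def kalman_step(a, V, y, B, omega_eta, omega_eps):
    """One standard Kalman prediction step (assumed form of the recursions)."""
    F = B @ V @ B.T + omega_eps            # prediction error variance
    K = V @ B.T @ np.linalg.inv(F)         # Kalman gain
    v = y - B @ a                          # prediction error
    a_next = a + K @ v                     # updated trend prediction
    V_next = V + omega_eta - K @ B @ V     # Riccati difference equation
    return a_next, V_next, v, F

def steady_state_variance(B, omega_eta, omega_eps, V0=None,
                          tol=1e-12, max_iter=10_000):
    """Iterate the variance recursion until it stops changing."""
    m = B.shape[1]
    V = np.zeros((m, m)) if V0 is None else np.array(V0, dtype=float)
    for _ in range(max_iter):
        F = B @ V @ B.T + omega_eps
        V_next = V + omega_eta - V @ B.T @ np.linalg.solve(F, B @ V)
        if np.max(np.abs(V_next - V)) < tol:
            return V_next
        V = V_next
    raise RuntimeError("variance recursion did not converge")
```

At the limit V the update leaves V unchanged, so V satisfies the algebraic Riccati equation $\Omega_\eta = V B'(B V B' + \Omega_\varepsilon)^{-1} B V$ in the notation assumed here. In the scalar case with unit loading and unit variances this gives $V^2 - V - 1 = 0$, so V is the golden ratio.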

Lemma 1.

Let and .

(a) The recursion for , (11), can be expressed as

where for . Moreover,

which has positive eigenvalues less than one, such that is a contraction, that is, , .

(b) The limit of can be found by solving the eigenvalue problem

for eigenvectors W and eigenvalues , such that and . Hence, for

The prediction errors are independent Gaussian with mean zero and variances

such that in steady state the prediction errors are i.i.d. , and (16) shows that is approximately an process, for which the reduced form autoregressive representation can be found, see (Harvey 2006, Section 7.3.2).

Lemma 2.

If the system (7) is in steady state, prediction errors are i.i.d. and

Applying the Granger Representation Theorem, is given by

Here and .

2.3. Cointegration among the Observations and Trends

In model (1) and (2), the equation shows that and are cointegrated. It also holds that is asymptotically stationary because

which shows that is asymptotically stationary. Multiplying by , the same holds for .

In model (18) the extracted trend is

and (16) shows that in steady state, is stationary, so that cointegrates with . Thus, the process and the trends , , and all cointegrate, in the sense that suitable linear combinations are asymptotically stationary. The next section investigates when similar results hold for the estimated trends.

3. Statistical Analysis of the Data

In this section it is shown how the parameters of (7) can be estimated from the CVAR (18) using results of Saikkonen (1992) and Saikkonen and Lütkepohl (1996), or using a simple cointegrating regression, see (Harvey and Koopman 1997, p. 276) as discussed in Lemma 3. For both the state space model (1)–(2) and for the CVAR in (18) there is an identification problem between and its coefficient B, or between and , because for any matrix of full rank, one can use as parameter and as trend and as variance, and similarly for and . In order to estimate B, T, and , it is therefore necessary to impose identifying restrictions. Examples of such identification are given next.

Identification 1.

Because B has rank m, the rows can be permuted such that , where is and has full rank. Then the parameters and trend are redefined as

Note that and . This parametrization is the simplest which separates parameters that are n-consistently estimated, , from those that are $n^{1/2}$-consistently estimated, , see Lemma 3. Note that the (correlated) trends are redefined by choosing as the trend in , then as the trend in , as in Example 1.

A more general parametrization, which also gives n-consistency, is defined, as in simultaneous equations, by imposing linear restrictions on each of the m columns and requiring the identification condition to hold, see Fisher (1966). ■
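The normalization in Identification 1 can be illustrated numerically. The sketch below assumes that the rows of B have already been permuted so that the top m × m block is invertible, and that the redefinition amounts to post-multiplying B by the inverse of that block and transforming the trend variance accordingly. The function name `normalize_loadings` and the symbol names are hypothetical.

```python
import numpy as np

def normalize_loadings(B, omega_eta):
    """Rescale loadings so that the top m x m block is the identity.

    Assumes B = (B1', B2')' with B1 invertible. The trend T is replaced
    by B1 @ T, so that the product of loadings and trend is unchanged.
    """
    m = B.shape[1]
    B1 = B[:m, :]
    B_star = B @ np.linalg.inv(B1)          # top block I_m, lower block B2 B1^{-1}
    omega_star = B1 @ omega_eta @ B1.T      # variance of the redefined trend
    return B_star, omega_star, B1
```

Because `B_star @ (B1 @ T)` equals `B @ T` for any trend vector `T`, and `B_star @ omega_star @ B_star.T` equals `B @ omega_eta @ B.T`, the two parametrizations describe the same distribution of the observations.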

Identification 2.

The normalization with diagonality of is part of the next identification, because this is the assumption in the discussion of long-run causality. Let be a Cholesky decomposition of . That is, is lower-triangular with one in the diagonal, corresponding to an ordering of the variables. Using this decomposition the new parameters and the trend are

such that and .

Identification of the trends is achieved in this case by defining the trends to be independent and constraining how they load into the observations. In Example 2, was defined as the trend in , and as the trend in , but orthogonalized on , such that the trend in is a combination of and . ■
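A decomposition with a unit lower-triangular factor, as described in Identification 2, can be obtained from the ordinary Cholesky factor by rescaling its columns. The sketch below is an illustration under that assumption. The function name `unit_cholesky` and the symbols `C` and `D` are hypothetical names for the triangular factor and the diagonal variance of the orthogonalized trends.

```python
import numpy as np

def unit_cholesky(omega):
    """Return C (unit lower triangular) and D (diagonal) with omega = C D C'."""
    L = np.linalg.cholesky(omega)   # omega = L L', L lower triangular
    d = np.diag(L)                  # positive diagonal of L
    C = L / d                       # divide column j by L[j, j]
    D = np.diag(d ** 2)
    return C, D
```

With this factorization, the transformed trend `np.linalg.solve(C, T)` has diagonal variance `D`, and the loadings become `B @ C`, so that `(B @ C) @ D @ (B @ C).T` reproduces `B @ omega @ B.T`.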

3.1. The Vector Autoregressive Model

When the process is in steady state, the infinite order CVAR representation is given in (18). The model is approximated by a sequence of finite lag models, depending on sample size n,

where the lag length is chosen to depend on n such that increases to infinity with n, but so slowly that converges to zero. Thus one can choose for instance or , for some . With this choice of asymptotics, the parameters , , , , and the residuals, , can be estimated consistently, see Johansen and Juselius (2014) for this application of the results of Saikkonen and Lütkepohl (1996).

This defines for each sample size consistent estimators , , and , as well as residuals . In particular the estimator of the common trend is . Thus, , and . If is identified as , then . In steady state, the relations

hold, see (11) and Lemma 2. It follows that

Finally, an estimator for can be found as

Note that is not a symmetric matrix in model (18), but its estimator converges in probability towards the symmetric matrix .

3.2. The State Space Model

The state space model is defined by (1) and (2). It can be analysed using the Kalman filter to calculate the diffuse likelihood function, see Durbin and Koopman (2012), and an optimizing algorithm can be used to find the maximum likelihood estimator for the parameters , , and B, once B is identified.

In this paper, an estimator is used which is simpler to analyse and which gives an n-consistent estimator for B suitably normalized, see (Harvey and Koopman 1997, p. 276).

The estimators are functions of and , and therefore do not involve the initial value . Irrespective of the identification, the relations

hold, and they give rise to two moment estimators, which determine and , once B has been identified and estimated.
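The idea behind such moment estimators can be sketched as follows. Assuming the model has the form $y_{t+1} = B T_t + \varepsilon_{t+1}$ with a random walk trend, the differences satisfy $\Delta y_{t+1} = B\eta_t + \varepsilon_{t+1} - \varepsilon_t$, so that $\mathrm{Var}(\Delta y_t) = B\Omega_\eta B' + 2\Omega_\varepsilon$ and $\mathrm{Cov}(\Delta y_t, \Delta y_{t+1}) = -\Omega_\varepsilon$. Whether these are exactly the relations used in the paper is an assumption, and the function name `moment_estimators` is hypothetical.

```python
import numpy as np

def moment_estimators(y):
    """Moment estimators based on the differenced observations.

    Returns estimates of omega_eps and of B omega_eta B' under the
    assumed moment relations
        Var(dy_t)           = B omega_eta B' + 2 omega_eps
        Cov(dy_t, dy_{t+1}) = -omega_eps
    """
    dy = np.diff(y, axis=0)
    n = dy.shape[0]
    S0 = dy.T @ dy / n                      # sample variance of the differences
    S1 = dy[:-1].T @ dy[1:] / (n - 1)       # first-order sample autocovariance
    omega_eps_hat = -(S1 + S1.T) / 2        # symmetrized for convenience
    long_run_hat = S0 - 2 * omega_eps_hat   # estimate of B omega_eta B'
    return omega_eps_hat, long_run_hat
```

Only the differences of the observations enter, so the estimates do not depend on the initial value of the trend.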

Consider the identified parametrization (19), where , and take . Then define and , such that and , that is,

This equation defines the regression estimator :

To describe the asymptotic properties of , two Brownian motions are introduced

Lemma 3.

(a) From (21) and (22) it follows that

define $n^{1/2}$-consistent asymptotically Gaussian estimators for and , irrespective of the identification of B.

(b) If B and are identified as , , and is adjusted accordingly, then in (24) is n-consistent with asymptotic Mixed Gaussian distribution

(c) If B is identified as , , and , then , but (27) still holds for , so that some linear combinations of are consistent.
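The fast convergence in part (b) can be illustrated with an ordinary least squares regression of the last p − m observed series on the first m. This is a minimal sketch and not necessarily the exact estimator (24). It assumes loadings normalized so that the top block is the identity, and the function name `cointegrating_regression` is hypothetical.

```python
import numpy as np

def cointegrating_regression(y, m):
    """Regress the last p - m columns of y on the first m columns by OLS.

    Returns an m x (p - m) coefficient matrix g such that
    y[:, m:] is approximately y[:, :m] @ g.
    """
    y1, y2 = y[:, :m], y[:, m:]
    g_hat, *_ = np.linalg.lstsq(y1, y2, rcond=None)
    return g_hat
```

Since the regressors are dominated by the random walk trend, their sum of squares grows like n squared while the cross product with the stationary error grows like n, which is the source of the n-consistency. Running the function on simulated data for increasing n is one way to see the estimation error shrink roughly in proportion to 1/n.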

In the simulations of Examples 1 and 2 the initial value is , so the Kalman filter with is used to calculate the extracted trend using observations and known parameters. Similarly the estimator of the extracted trend is calculated using observations and estimated parameters based on Lemma 3. The next section investigates to what extent these estimated trends cointegrate with the extracted trends, and if they cointegrate with each other.

4. Cointegration between Trends and Their Estimators

This section gives the main results in two theorems with proofs in the Appendix. In Theorem 1 it is shown, using the state space model to extract the trends and the estimator from Lemma 3, that is asymptotically stationary. For the CVAR model it holds that , such that this spread is asymptotically stationary. Finally, the estimated trends in the two models are compared, and it is shown that is asymptotically stationary. The conclusion is that in terms of cointegration of the trends and their estimators, it does not matter which model is used to extract the trends, as long as the focus is on the identified trends and .

Theorem 1.

(a) If the state space model is used to extract the trends, and Lemma 3 is used for estimation, then is asymptotically stationary.

(b) If the vector autoregressive model is used to extract the trends and for estimation, then .

(c) Under assumptions of (a) and (b), it holds that is asymptotically stationary.

In Theorem 2 a necessary and sufficient condition for asymptotic stationarity of , , and is given.

Theorem 2.

In the notation of Theorem 1, any of the spreads , or is asymptotically stationary if and only if B and the trend are identified such that the corresponding estimator for B satisfies and .

The missing cointegration between and , say, can be explained in terms of the identity

Here the second term, , is asymptotically stationary by Theorem 1. But the first term, , is not necessarily asymptotically stationary, because in general, that is, depending on the identification of the trend and B, it holds that and , see (16).

The parametrization ensures n-consistency of , so there is asymptotic stationarity of , , and in this case. This is not so surprising because

is stationary. Another situation where the estimator for B is n-consistent is if satisfies linear restrictions on the columns, , or equivalently for some , and the condition for identification is satisfied

see Fisher (1966). For a just-identified system, one can still use , and then solve for the identified parameters. For overidentified systems, the parameters can be estimated by a nonlinear regression of on reflecting the overidentified parametrization. In either case the estimator is n-consistent such that , , and are asymptotically stationary.

If the identification involves the variance , however, the estimator of B is only $n^{1/2}$-consistent, and hence no cointegration is found between the trend and estimated trend.

The analogy with the results for the CVAR, where and need to be identified, is that if is identified using linear restrictions (29) then is n-consistent, whereas if is identified by restrictions on then is $n^{1/2}$-consistent. An example of the latter is if is identified as the first m rows of the matrix , corresponding to , then is $n^{1/2}$-consistent and asymptotically Gaussian, see (Johansen 2010, Section 4.3).

5. A Small Simulation Study

The two examples introduced in Section 2.1 are analysed by simulation. The equations are given in (5) and (3). Both examples have and . The parameters B and contain parameters, but the matrix is of rank 2 and has only 5 estimable parameters. Thus, 4 restrictions must be imposed to identify the parameters. In both examples the Kalman filter with is used to extract the trends, and the cointegrating regression in Lemma 3 is used to estimate the parameters.

Example 1 continued.

To illustrate the results, data are simulated with observations starting with and parameter values , , and , such that

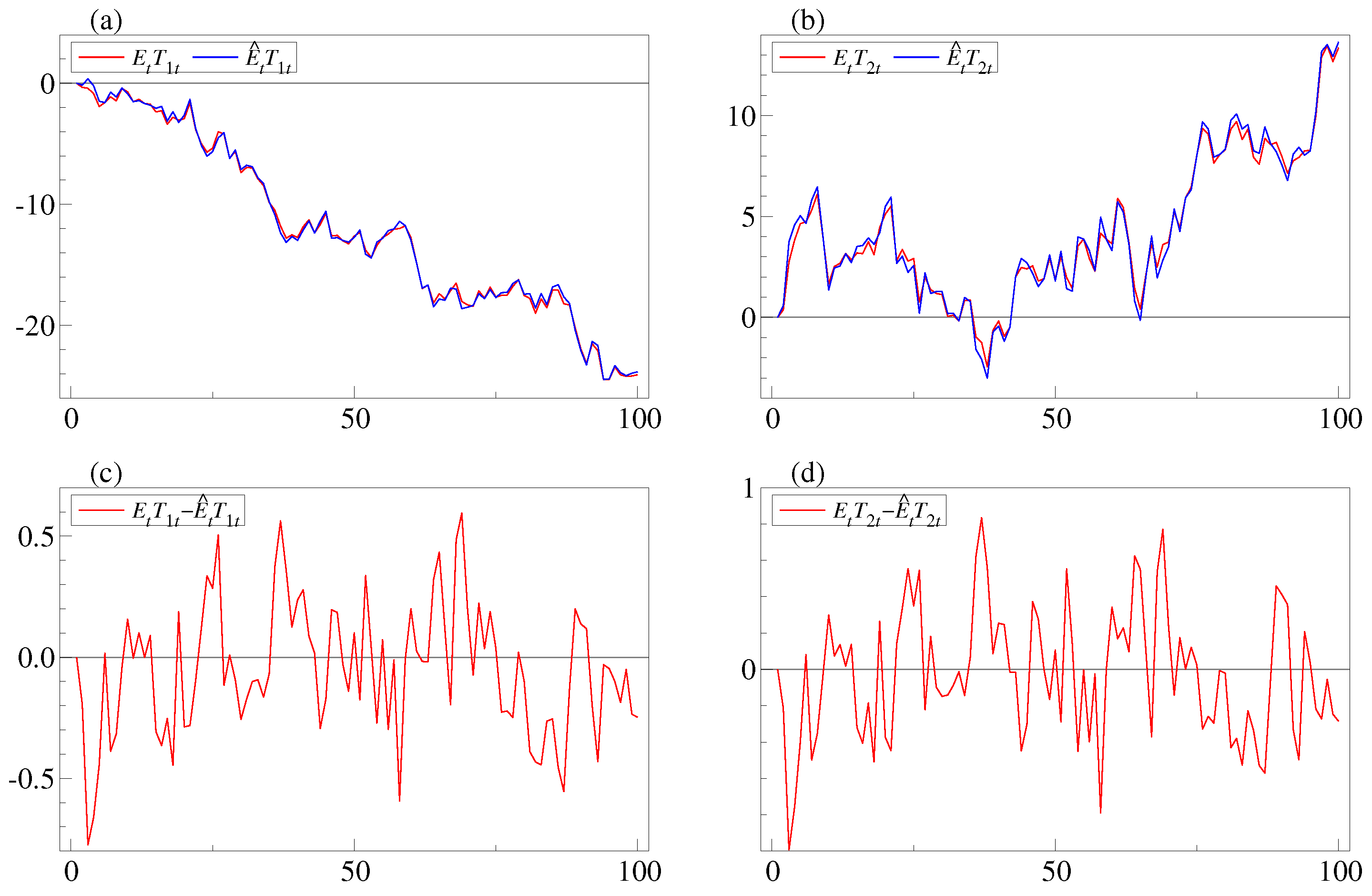

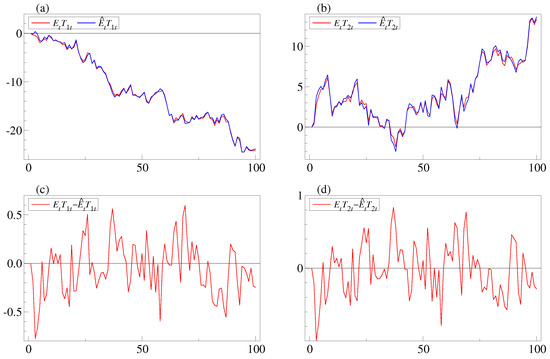

The parameters are estimated by (28) and the estimates become , , , , and . The extracted and estimated trends are plotted in Figure 1. Panels a and b show plots of and , respectively, and it is seen that they co-move. In panels c and d the differences and both appear to be stationary in this parametrization of the model. ■

Figure 1.

The figure shows the extracted and estimated trends for the simulated data in Example 1 with the identification in (19). Panels a and b show plots of and , and and , respectively. Note that in both cases, the processes seem to co-move. In panels c and d, and are plotted and appear stationary, because they are both recovered from as the first two coordinates, see (19).

Example 2 continued.

The parameter B in this example is given in (6) such that

By the results in Theorem 1(a), all three rows are asymptotically stationary, in particular . Moreover, the second row of (32), , is asymptotically stationary. Thus, asymptotic stationarity of requires asymptotic stationarity of the term

Here, the second term, , is asymptotically stationary because is. However, the first term, , is not asymptotically stationary because is $n^{1/2}$-consistent. In this case , which has a Gaussian distribution, and , where is the Brownian motion generated by the sum of . It follows that their product

converges in distribution to the product of Z and , , and this limit is nonstationary. It follows that is not asymptotically stationary for the identification in this example. This argument is a special case of the proof of Theorem 2.

To illustrate the results, data are simulated from the model with observations starting with and parameter values , , and , which is identical to (31).

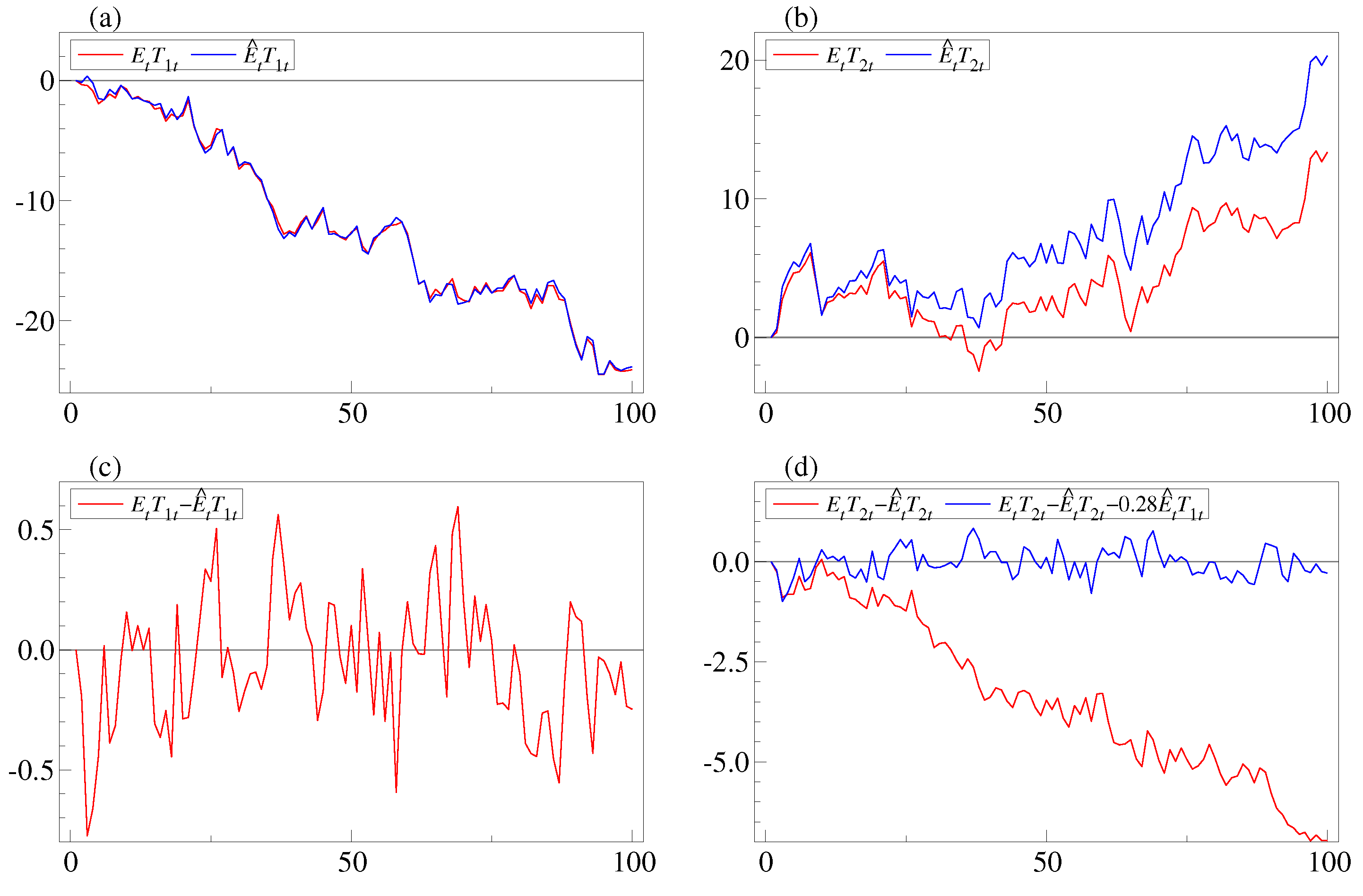

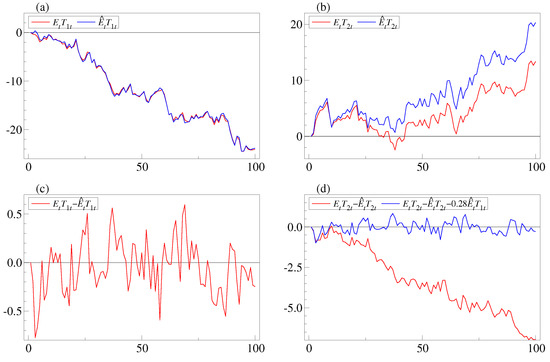

The parameters are estimated as in Example 1 and we find , , , , and , which are solved for , , , , and . The extracted and estimated trends are plotted in Figure 2. The panels a and b show plots of and , respectively. It is seen that and co-move, whereas and do not co-move. In panels c and d, the differences and are plotted. Note that the first looks stationary, whereas the second is clearly nonstationary. When comparing with the plot of in panel a, it appears that the process can explain the nonstationarity of . This is consistent with Equation (33) with and . In panel d, is plotted and it is indeed stationary. ■

Figure 2.

The figure shows the extracted and estimated trends for the simulated data in Example 2 with the identification in (20). Panels a and b show plots of and , and and , respectively. Note that and seem to co-move, whereas and do not. In panel c, is plotted and appears stationary, but in panel d the spread is nonstationary, whereas is stationary.

6. Conclusions

The paper analyses a sample of n observations from a common trend model, where the state is an unobserved multivariate random walk and the observation is a linear combination of the lagged state variable and a noise term. For such a model, the trends and their coefficients in the observation equation need to be identified before they can be estimated separately. The model leads naturally to cointegration between observations, trends, and the extracted trends. Using simulations it was discovered that the extracted trends do not necessarily cointegrate with their estimators. This problem is investigated, and it is found to be related to the identification of the trends and their coefficients in the observation equation. It is shown in Theorem 1 that, provided only the linear combinations of the trends from the observation equation are considered, there is always cointegration between extracted trends and their estimators. If the trends and their coefficients are defined by identifying restrictions, the same result holds if and only if the estimated identified coefficients in the observation equation are consistent at a rate faster than $n^{1/2}$. For the causality study mentioned in the introduction, where the components of the unobserved trend are assumed independent, the result has the following implication: For the individual extracted trends to cointegrate with their estimators, overidentifying restrictions must be imposed on the trend’s causal impact coefficients on the observations, such that the estimators of these become super-consistent.

Acknowledgments

S.J. is grateful to CREATES—Center for Research in Econometric Analysis of Time Series (DNRF78), funded by the Danish National Research Foundation. M.N.T. is grateful to the Carlsberg Foundation (grant reference 2013_01_0972). We have benefitted from discussions with Siem Jan Koopman and Eric Hillebrand on state space models and thankfully acknowledge the insightful comments from two anonymous referees.

Author Contributions

S.J. has contributed most of the mathematical derivations. M.N.T. has performed the simulations and posed the problem to be solved.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A

Proof of Lemma 1

Proof of (a): Let , , such that

where

Proof of (b): If the recursion starts with , then can be diagonalized by W for all t and the limit satisfies , where

This has solution given in (15).

Proof of (c): The first result follows by summation from the recursion for in (10), and the second from . ■

Proof of Lemma 2

The polynomial describes (17) as

Note that is singular and , satisfies is nonsingular, where . This means that the Granger Representation Theorem (Johansen 1996, Theorem 4.5) can be applied and gives the expansion (18) for and . ■

Proof of Lemma 3

Proof of (a): Consider first the product moments (21) and (22). The result (26) follows from the Law of Large Numbers and the asymptotic Gaussian distribution of and follows from the Central Limit Theorem.

Proof of (b): It follows from (23), (24), and (25) that the least squares estimator satisfies (27). Let , then

Proof of (c): Note that for the other parametrization, (20), where , it holds that , such that for both parametrizations (27) holds. The estimator of B, in the parametrization (20), is , where is derived from the $n^{1/2}$-consistent estimator of , such that for this parametrization, estimation of B is not n-consistent, but only $n^{1/2}$-consistent and . ■

Proof of Theorem 1.

Proof of (a): Let , then

Here is stationary, so that it is enough to show that is asymptotically stationary. From the definition of and the Kalman filter recursion (10) calculated for and for the estimated parameters, it holds that

Subtracting the expressions gives

which gives the recursion

Note that is not a contraction, because eigenvalues are one. Hence it is first proved that is small and then a contraction is found for . From the definition of , it follows from (27), that

Next define and , such that . From (A2) it follows by multiplying by and using , that

because and .

From (14) it is seen that and . This shows that and hence is asymptotically a stationary process.

Proof of (b): The CVAR (18) is expressed as , and the parameters are estimated using maximum likelihood with lag length and . This gives estimators and residuals . The representation of in terms of is given by

where . This relation also holds for the estimated parameters and residuals, and subtracting one finds

It is seen that the right hand side is and hence asymptotically stationary.

Proof of (c): Each estimated trend is compared with the corresponding trend which gives

Here the first term is asymptotically stationary using Theorem 1(a), the middle term is asymptotically stationary, and the last is by Theorem 1(b). ■

Proof of Theorem 2.

The proof is the same for all the spreads, so consider , and the identity

The left hand side is asymptotically stationary by Theorem 1(a) and therefore is asymptotically stationary if and only if

is asymptotically stationary. Here the second factor converges to a nonstationary process,

see (16), so for the term to be asymptotically stationary it is necessary and sufficient that . ■

References

- Chan, Siew Wah, Graham Clifford Goodwin, and Kwai Sang Sin. 1984. Convergence properties of the Riccati difference equation in optimal filtering of nonstabilizable systems. IEEE Transactions on Automatic Control 29: 110–18.

- Durbin, Jim, and Siem Jan Koopman. 2012. Time Series Analysis by State Space Methods, 2nd ed. Oxford: Oxford University Press.

- Fisher, Frank M. 1966. The Identification Problem in Econometrics. New York: McGraw-Hill.

- Harvey, Andrew. 1989. Forecasting, Structural Time Series Models and the Kalman Filter. Cambridge: Cambridge University Press.

- Harvey, Andrew C. 2006. Forecasting with Unobserved Components Time Series Models. In Handbook of Economic Forecasting. Edited by G. Elliott, C. Granger and A. Timmermann. Amsterdam: North Holland, pp. 327–412.

- Harvey, Andrew C., and Siem Jan Koopman. 1997. Multivariate structural time series models. In System Dynamics in Economics and Financial Models. Edited by C. Heij, J.M. Schumacher, B. Hanzon and C. Praagman. New York: John Wiley and Sons.

- Hoover, Kevin D., Søren Johansen, Katarina Juselius, and Morten Nyboe Tabor. 2014. Long-run Causal Order: A Progress Report. Unpublished manuscript.

- Johansen, Søren. 1996. Likelihood-Based Inference in Cointegrated Vector Autoregressive Models, 2nd ed. Oxford: Oxford University Press.

- Johansen, Søren. 2010. Some identification problems in the cointegrated vector autoregressive model. Journal of Econometrics 158: 262–73.

- Johansen, Søren, and Katarina Juselius. 2014. An asymptotic invariance property of the common trends under linear transformations of the data. Journal of Econometrics 178: 310–15.

- Pearl, Judea. 2009. Causality: Models, Reasoning and Inference, 2nd ed. Cambridge: Cambridge University Press.

- Saikkonen, Pentti. 1992. Estimation and testing of cointegrated systems by an autoregressive approximation. Econometric Theory 8: 1–27.

- Saikkonen, Pentti, and Helmut Lütkepohl. 1996. Infinite order cointegrated vector autoregressive processes. Estimation and Inference. Econometric Theory 12: 814–44.

- Spirtes, Peter, Clark Glymour, and Richard Scheines. 2000. Causation, Prediction, and Search, 2nd ed. Cambridge: MIT Press.

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).