Wireless Networks for Traffic Light Control on Urban and Aerotropolis Roads

Abstract

1. Introduction

2. Related Work

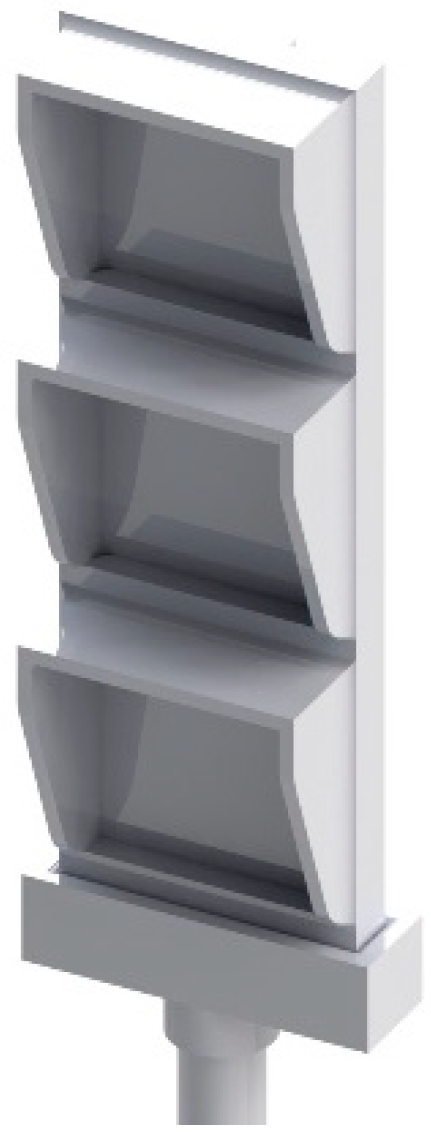

3. System Development

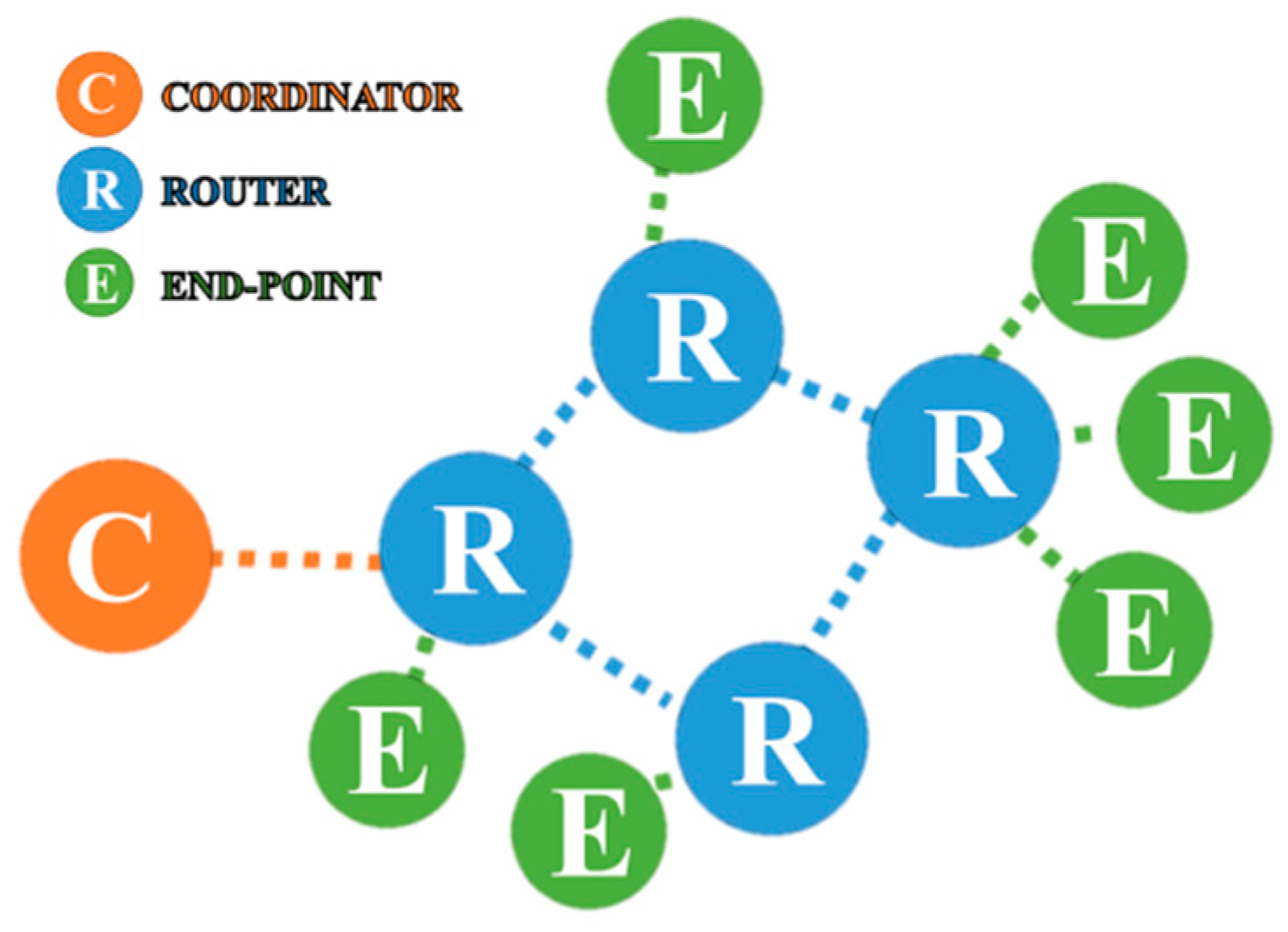

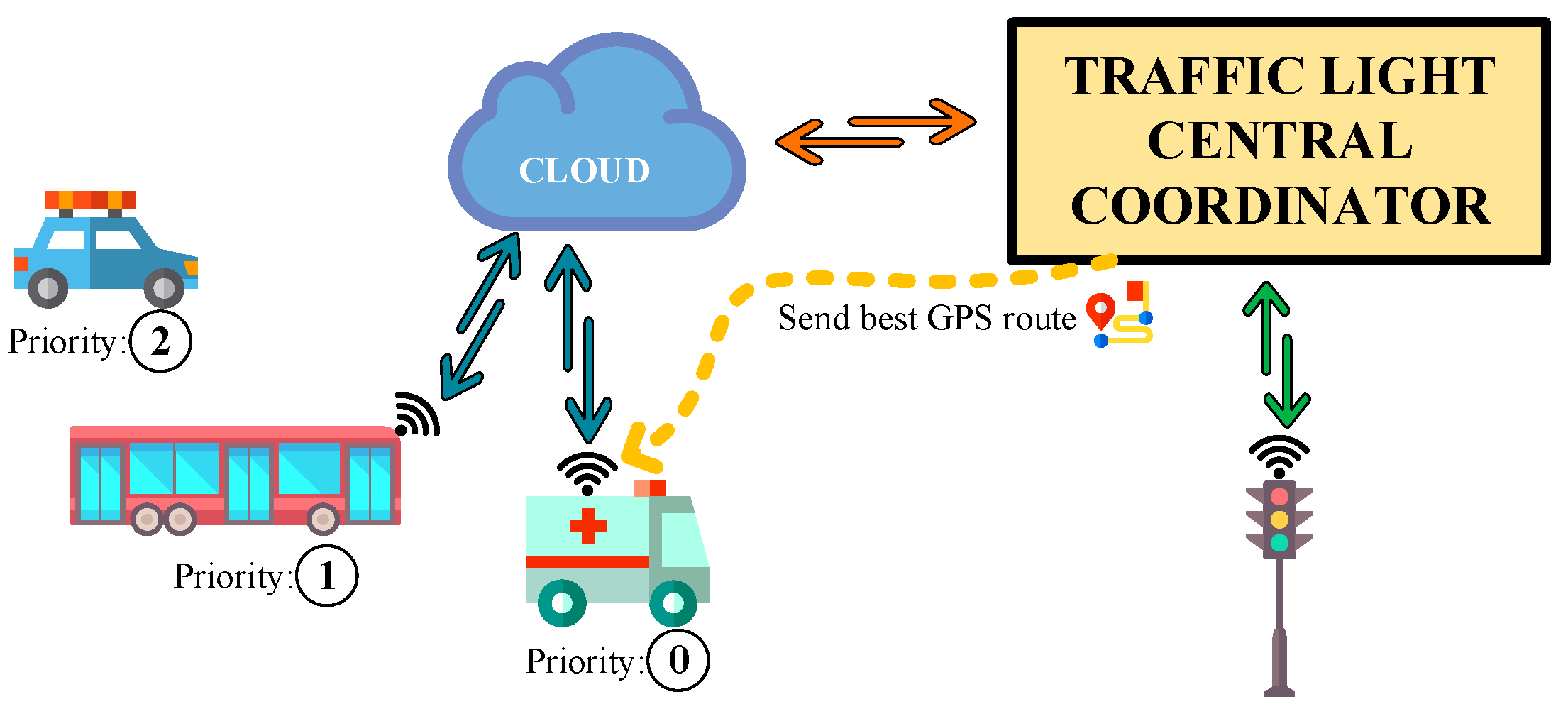

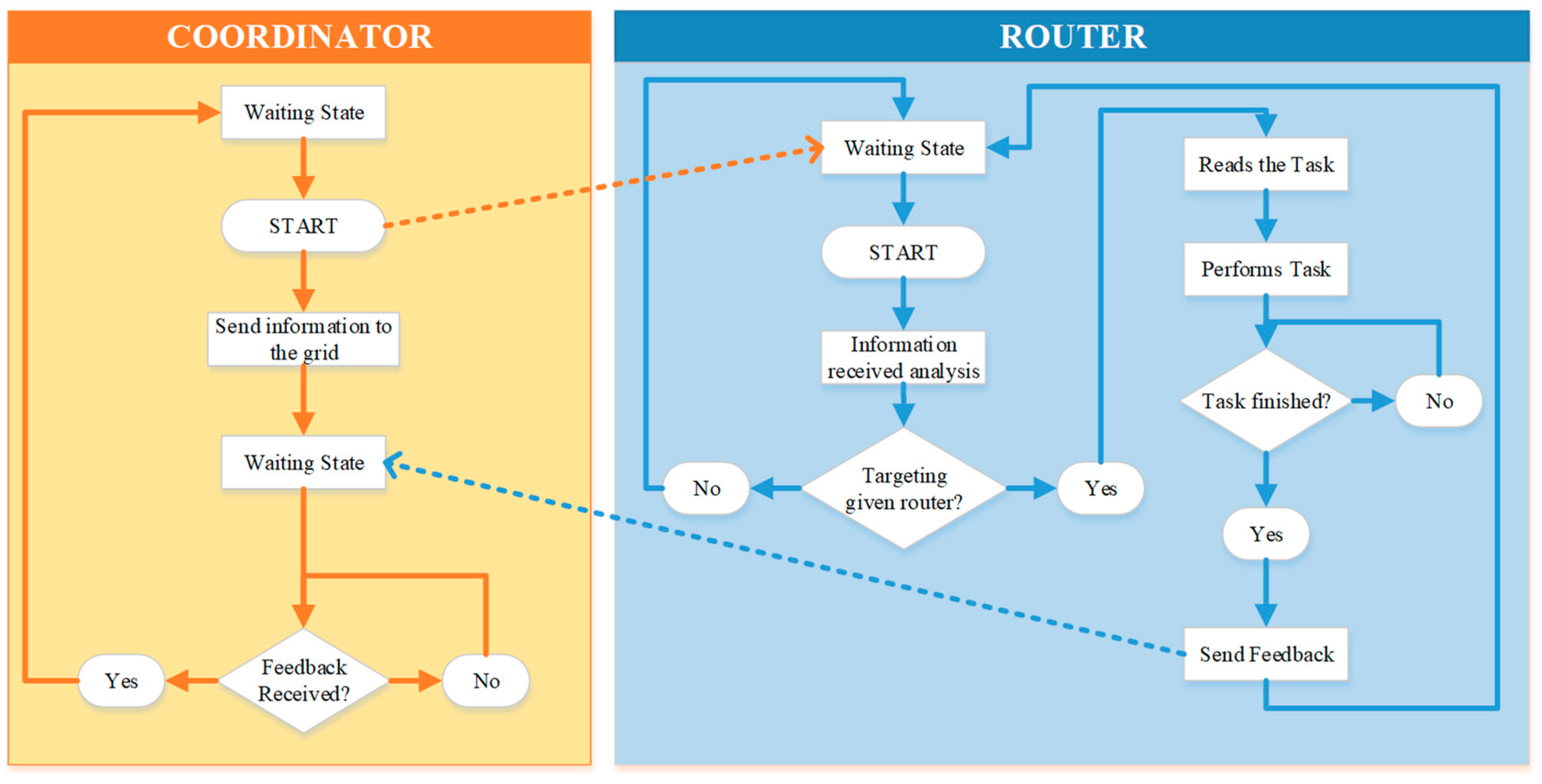

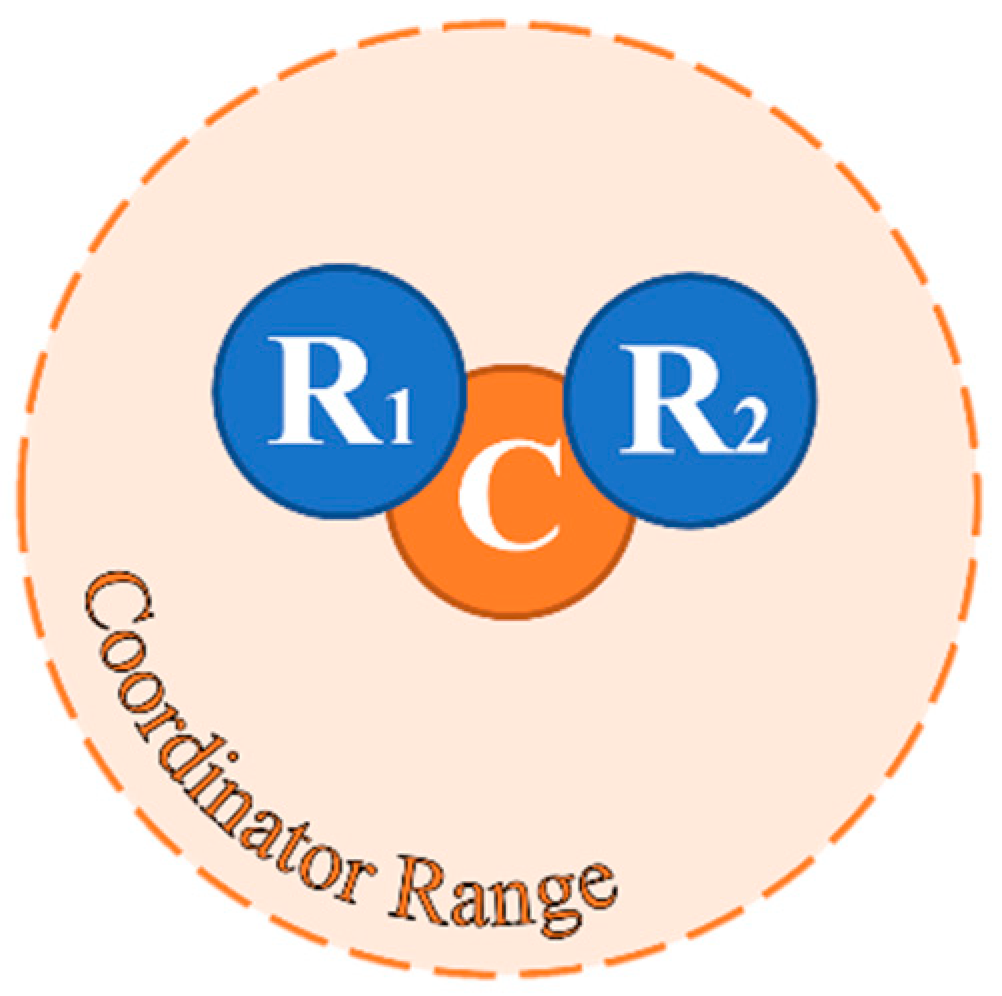

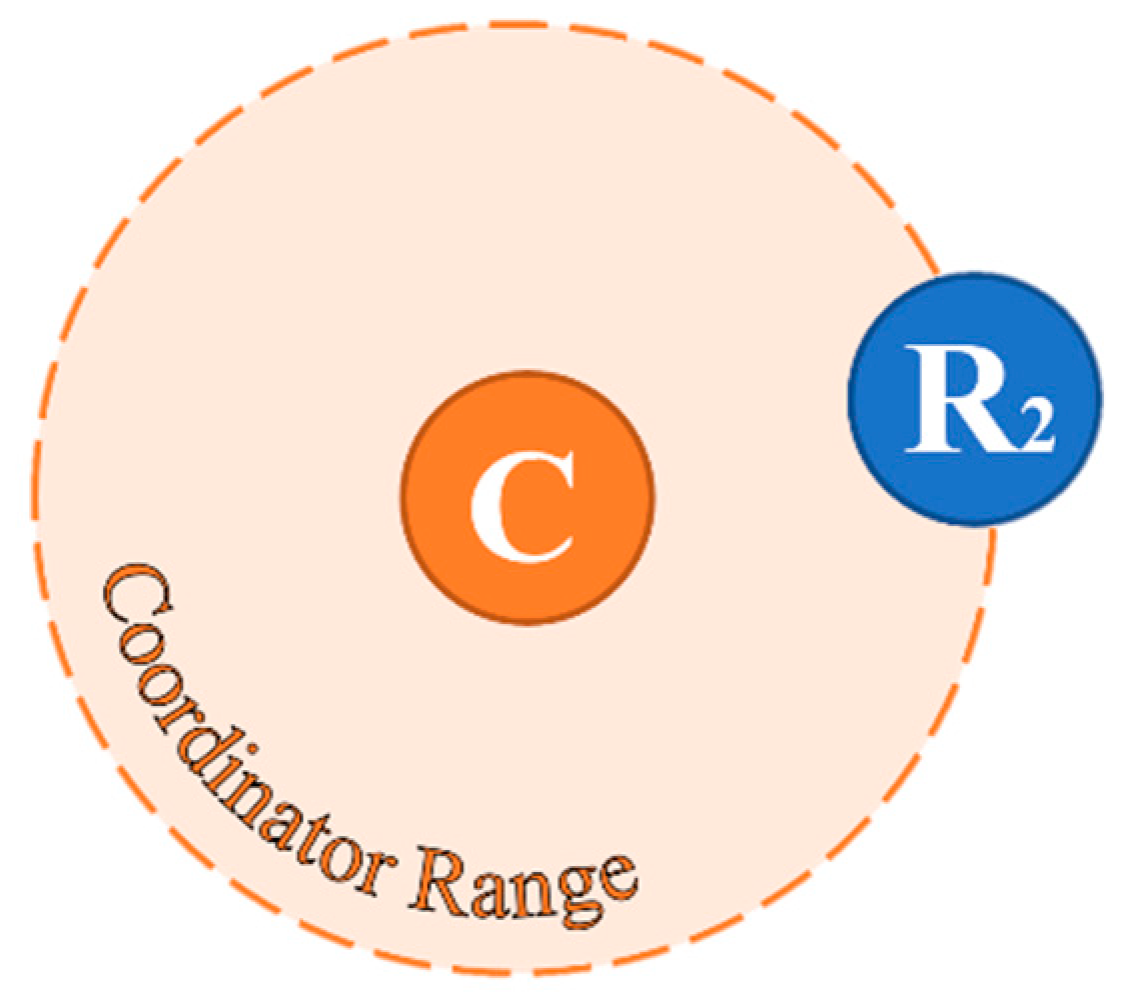

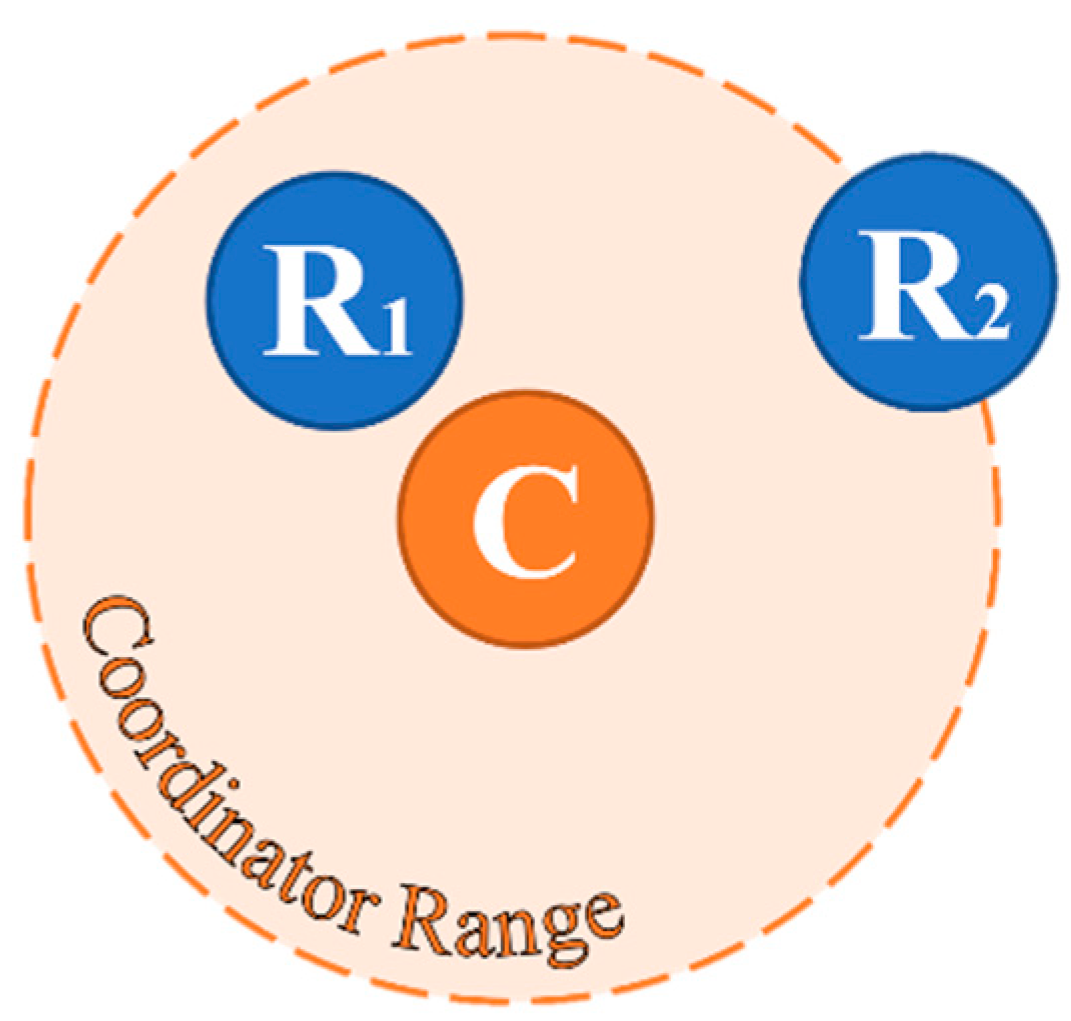

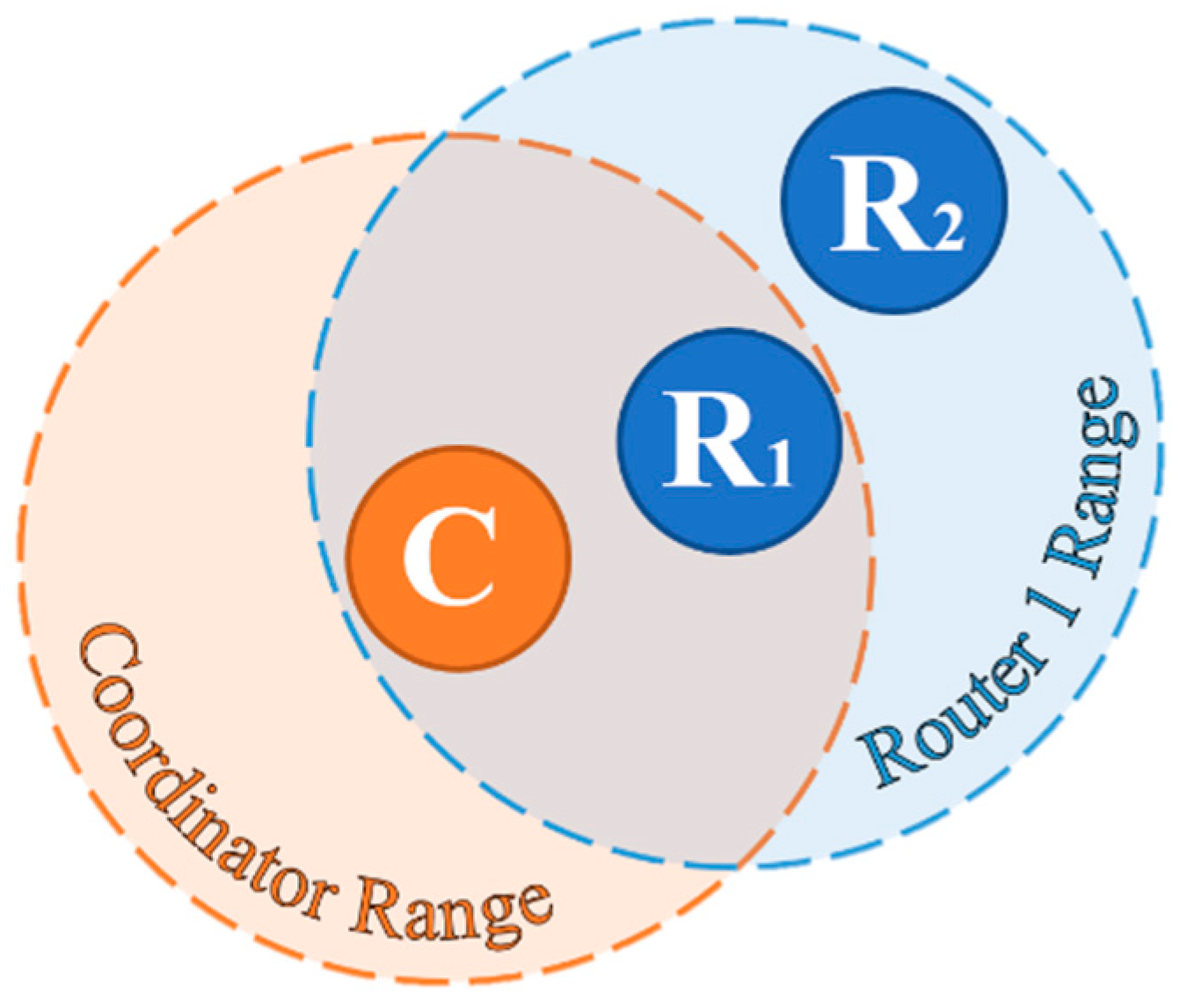

3.1. Configuration

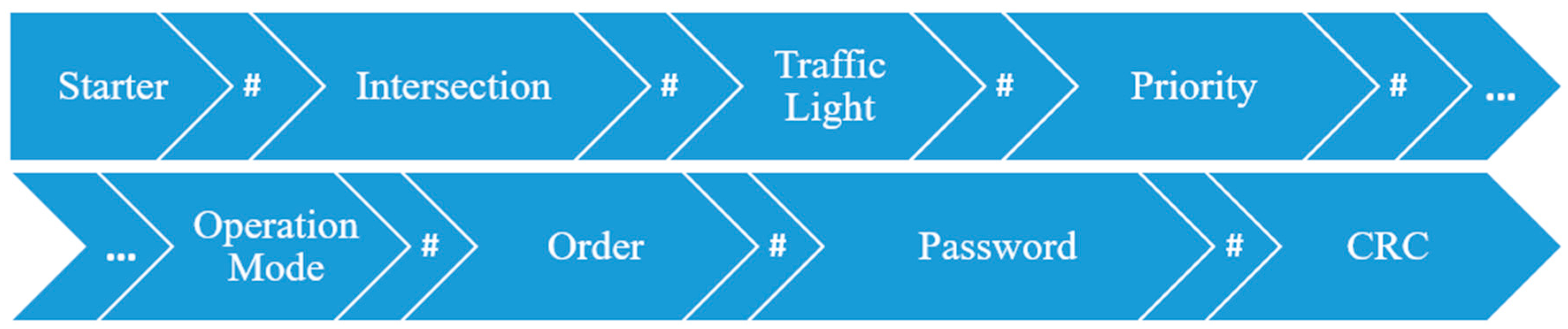

3.2. Protocol and Security

4. Experimental Setup

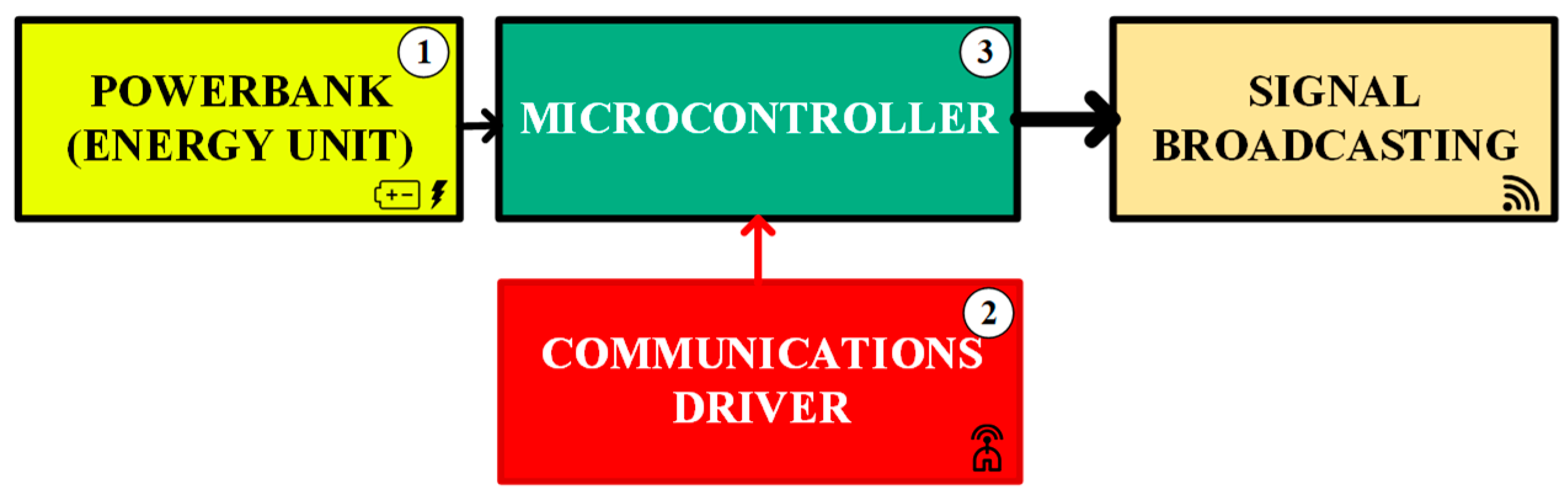

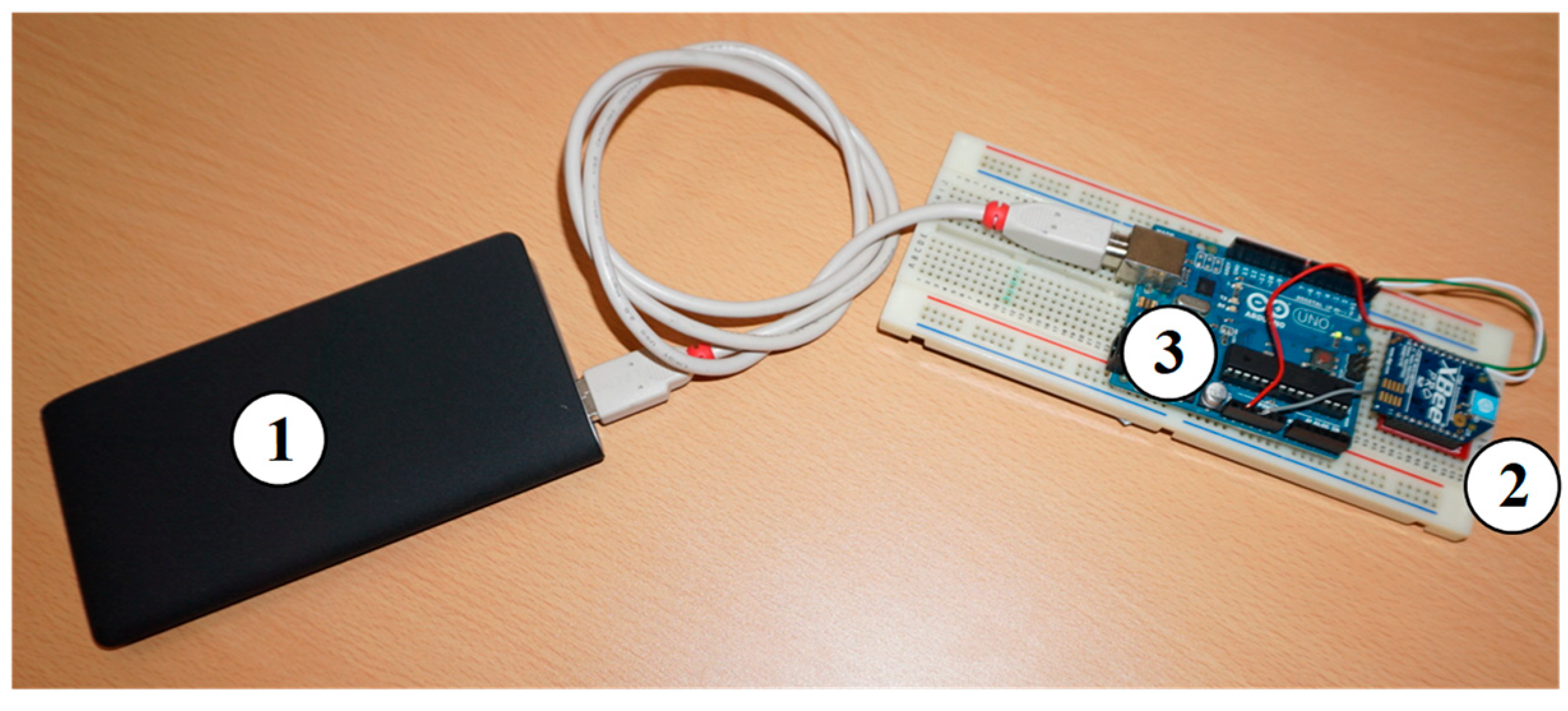

4.1. Case Study A—Communication Devices

4.2. Case Study B—Interference Frequency of Communication Devices

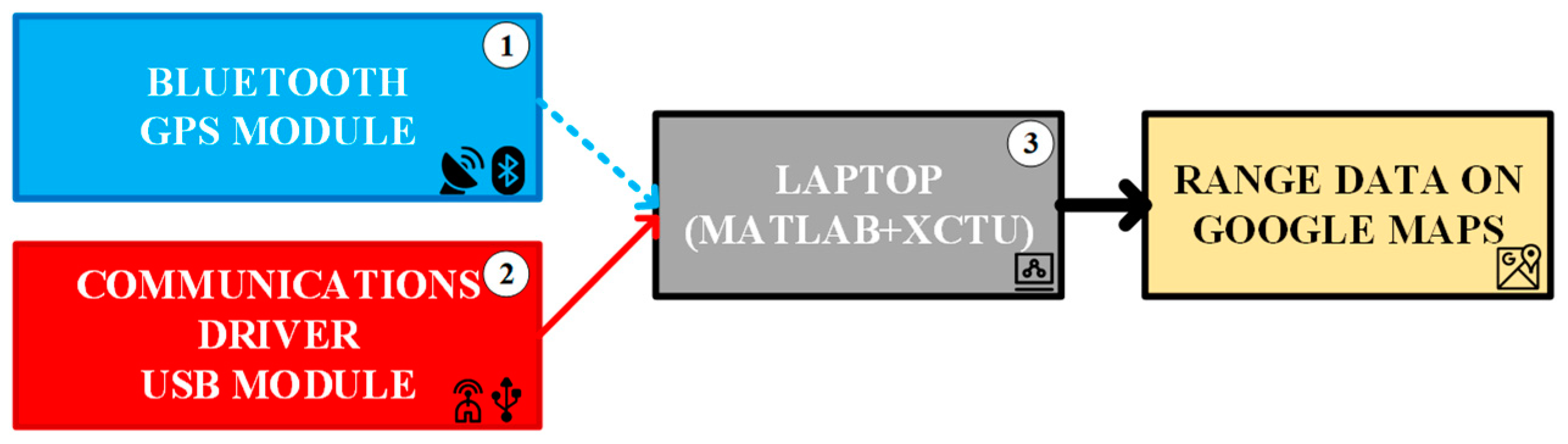

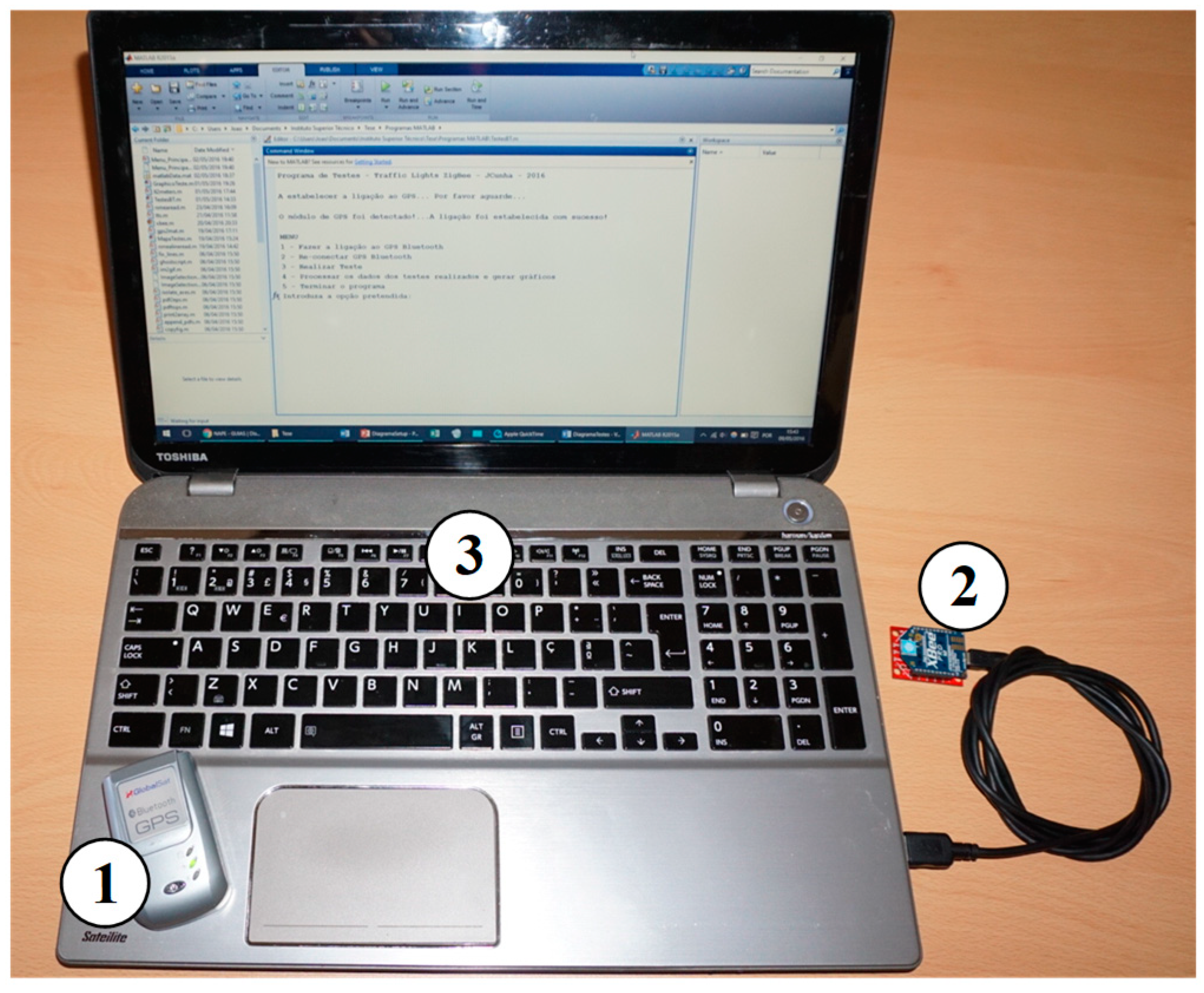

4.3. Case Study C—Range of Communication Devices and Signal Quality

4.4. Case Study D—Traffic Light Operation

5. Experimental Results

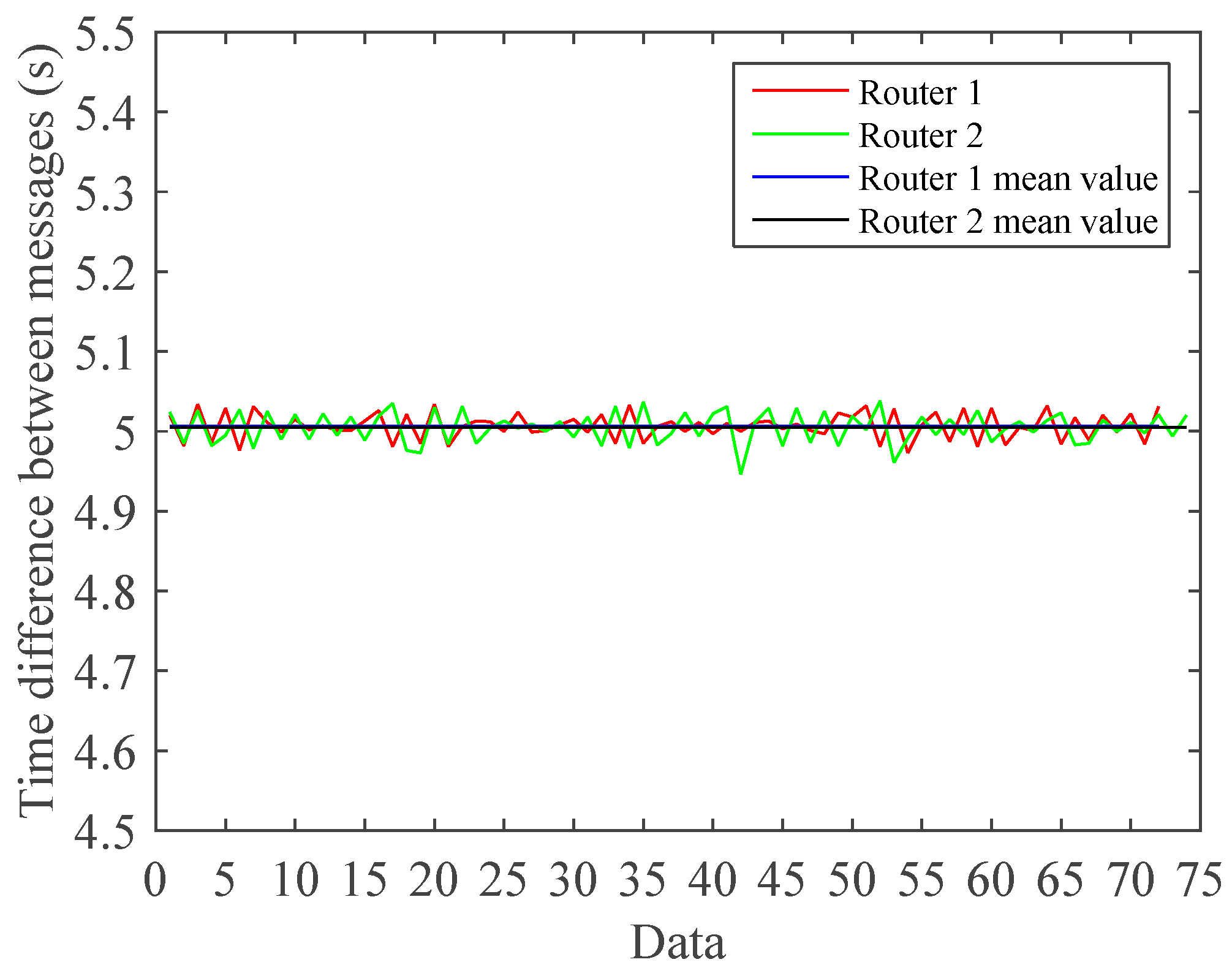

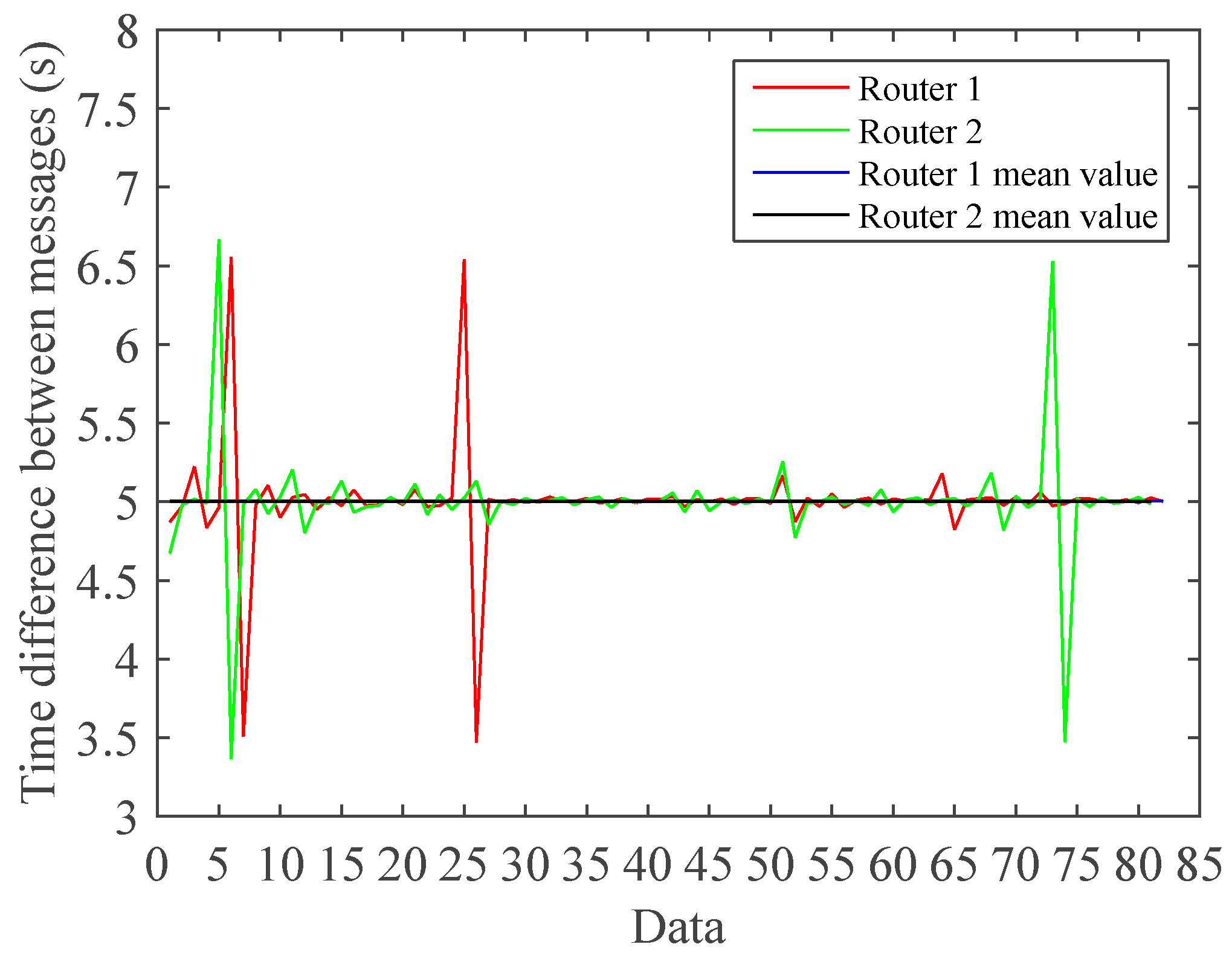

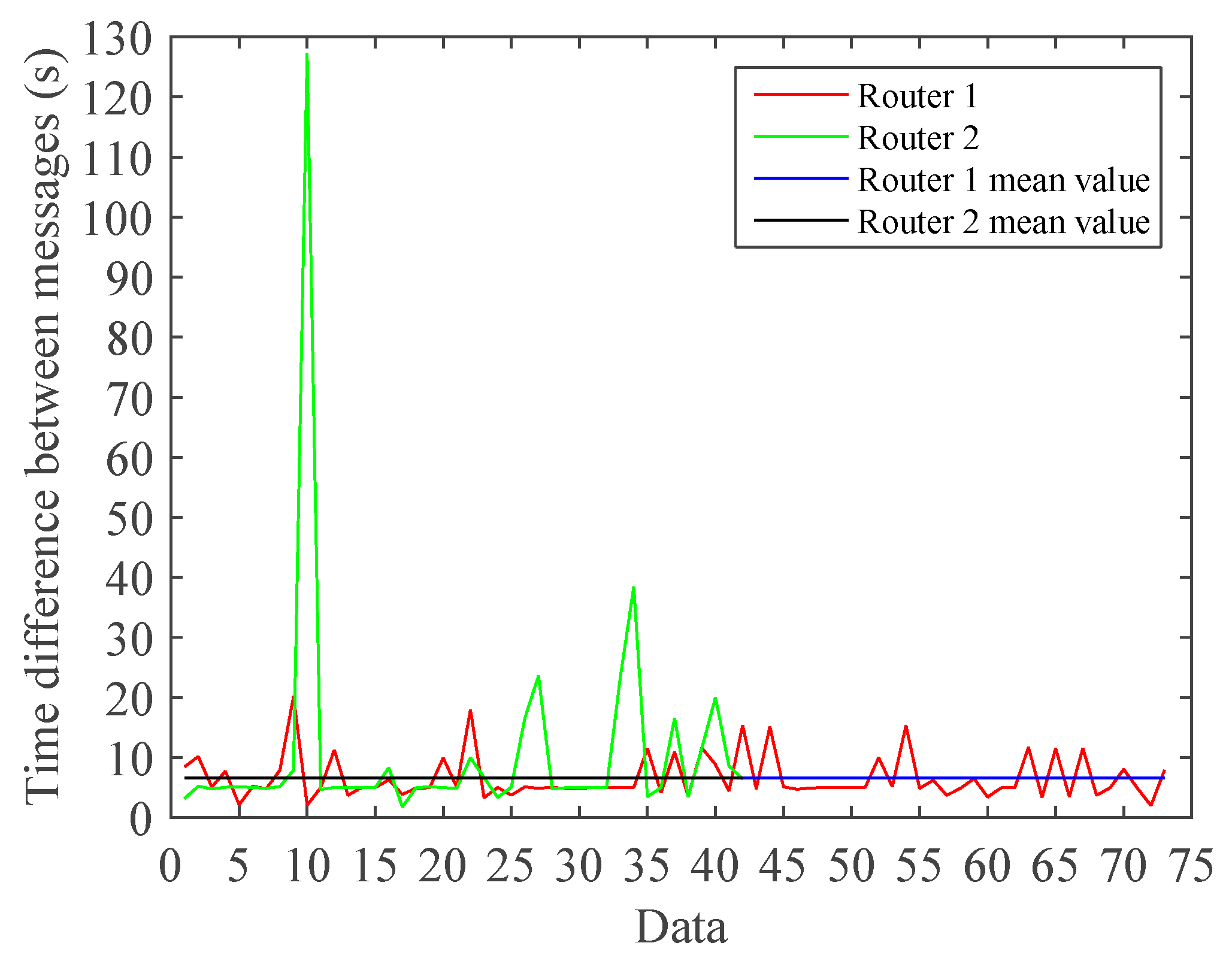

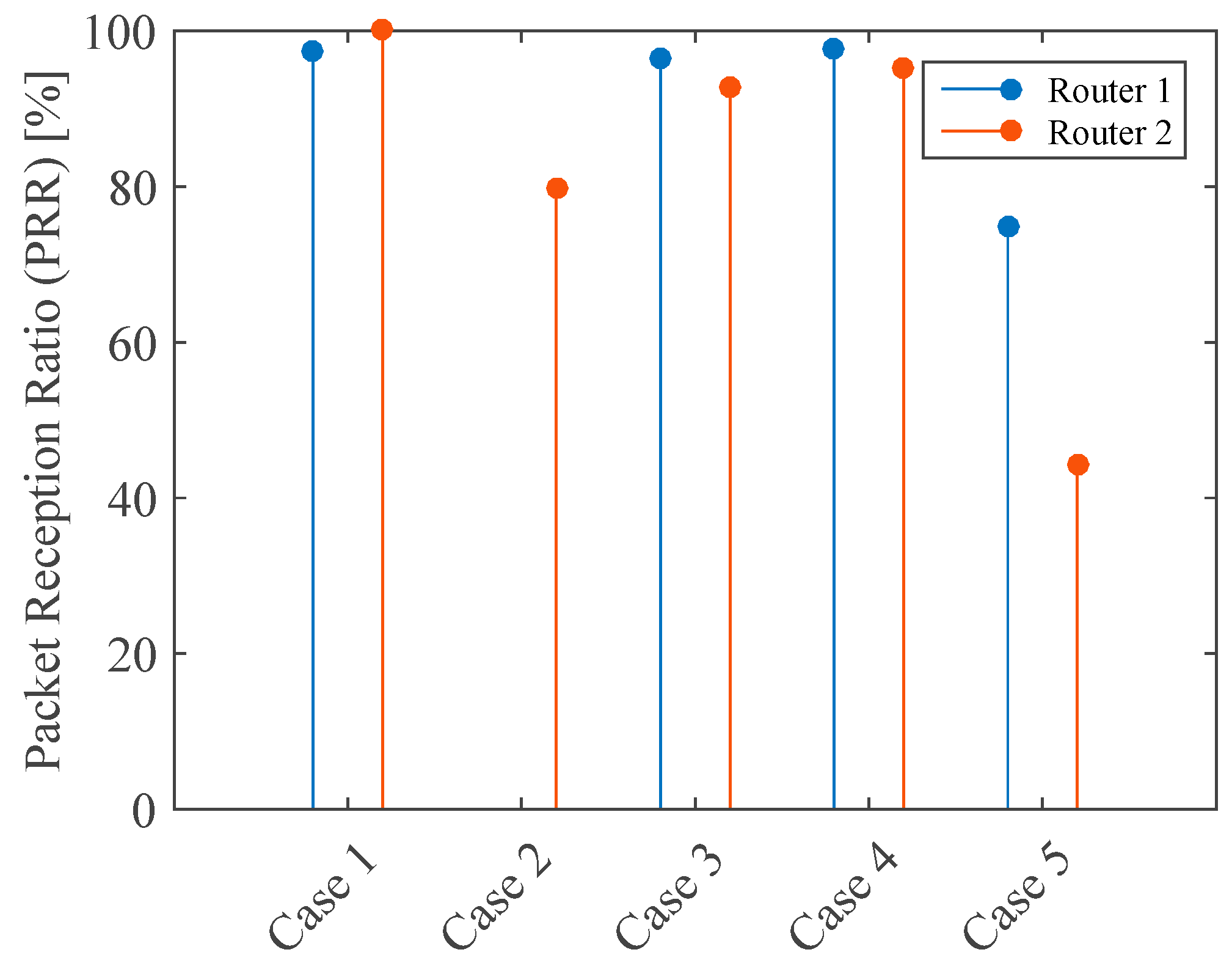

5.1. Experimental Results for Case Study A

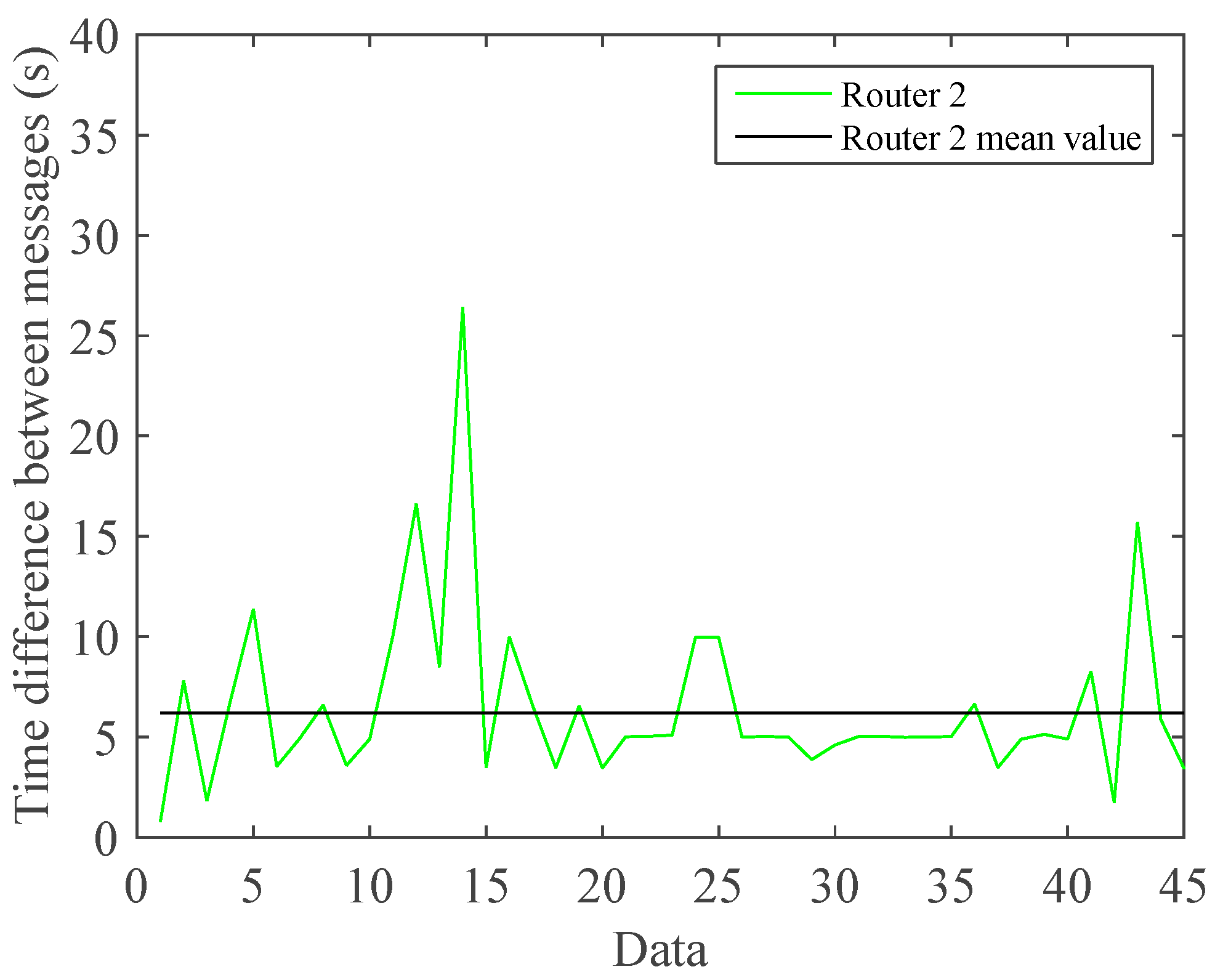

5.2. Experimental Results for Case Study B

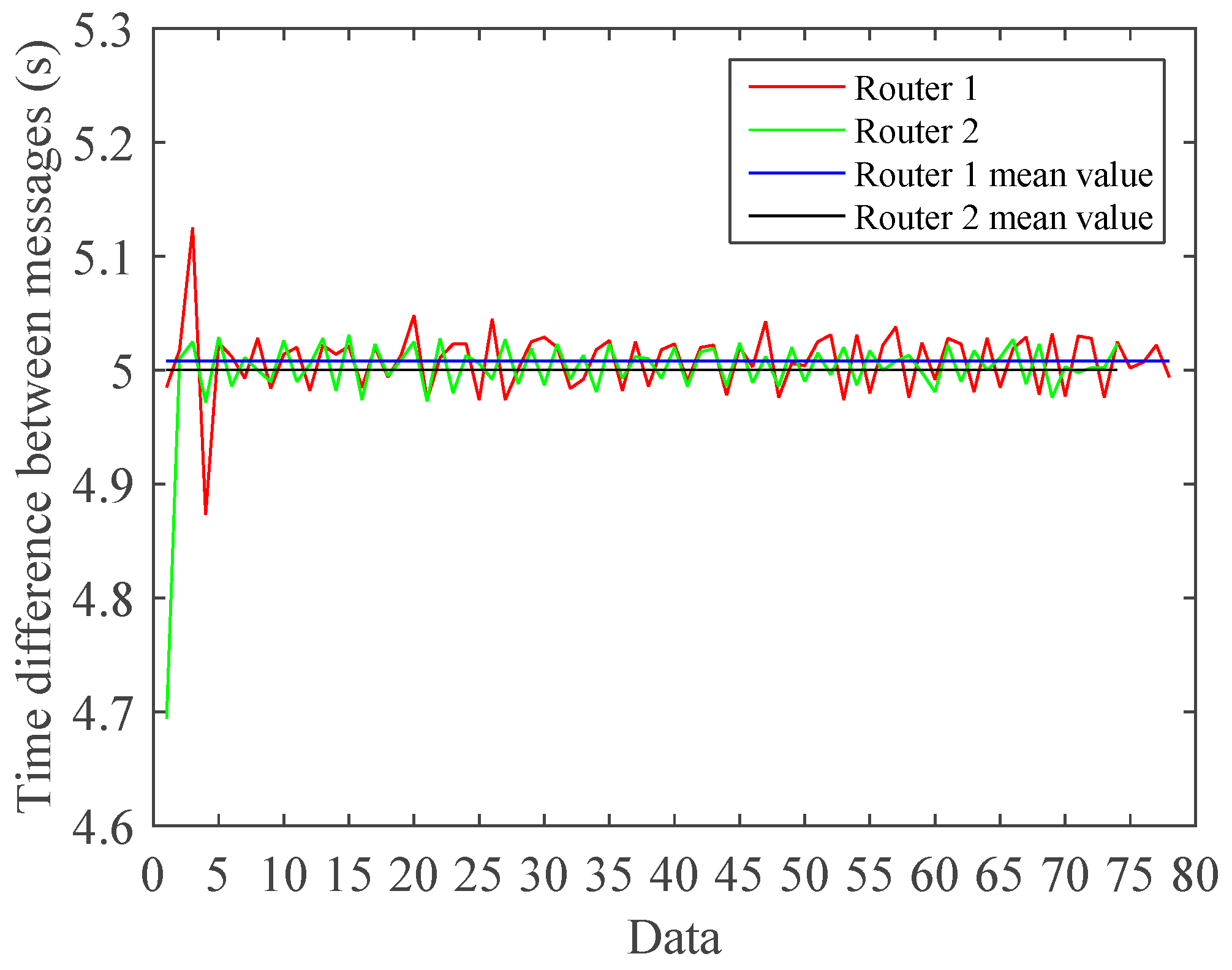

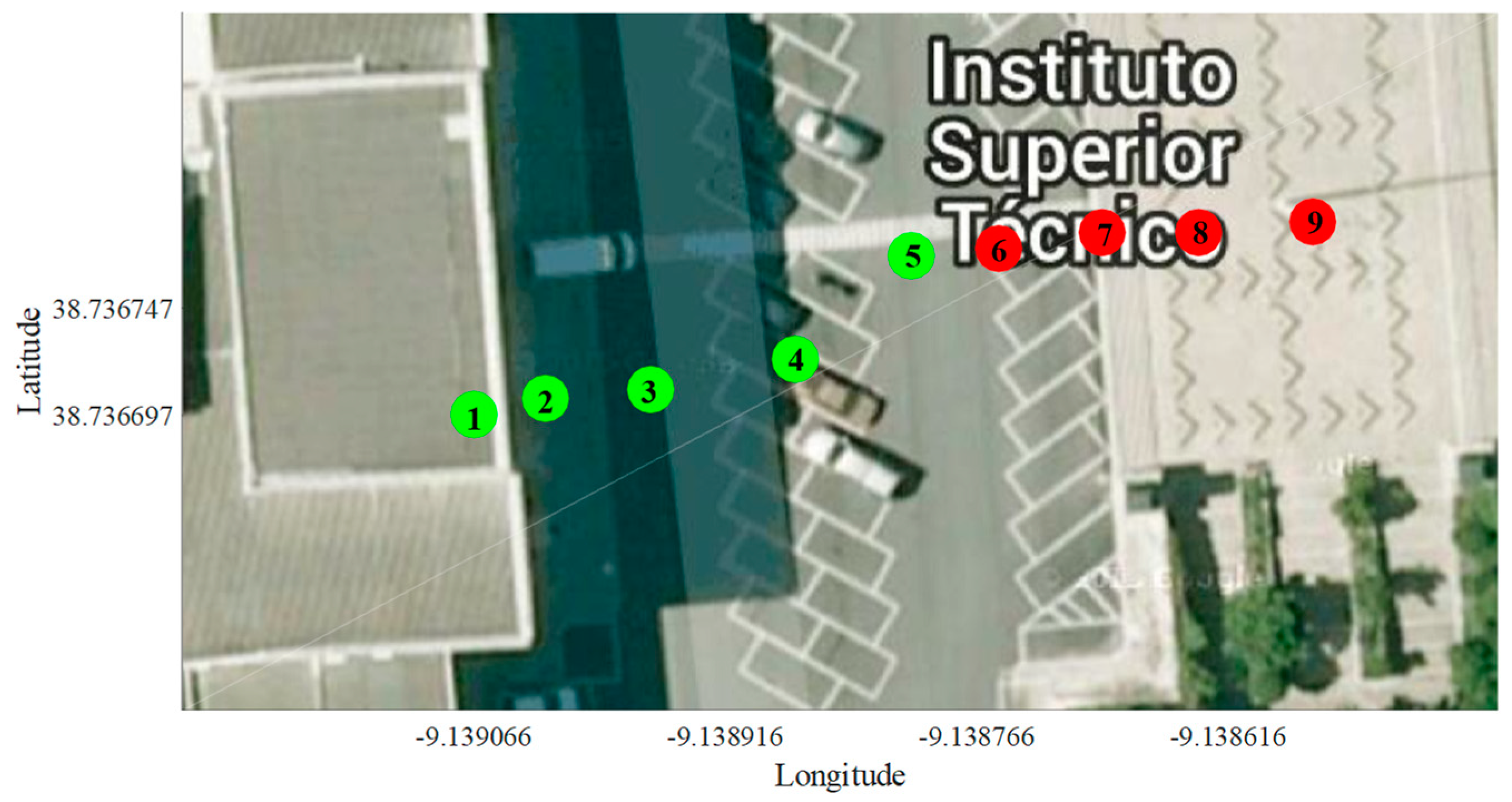

5.3. Experimental Results for Case Study C

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Nomenclature

| Abbreviation | Definition |
|---|---|
| AES | Advanced encryption standard |
| API | Application programming interface |
| CP | Communication protocol |
| IoT | Internet of Things |
| ITS | Intelligent transport system |
| LQI | Link quality indicator |
| PRR | Packet reception ratio |
| SCs | Smart Cities |
| SNR | Signal-to-noise ratio |
| TC | Traffic control |
| WCT | Wireless communication technology |

| Case | Source | Mean Time per Packet (s) | Maximum Time per Packet (s) | Minimum Time per Packet (s) | Packet Reception Ratio (PRR) (%) |
|---|---|---|---|---|---|
| Case 1 | Router 1 | 5.0068 | 5.0340 | 4.9730 | 97.33 |
| Case 1 | Router 2 | 5.0047 | 5.0380 | 4.9460 | 100 |
| Case 2 | Router 1 (OFF) | NA | NA | NA | NA |
| Case 2 | Router 2 | 6.1841 | 26.4100 | 0.7650 | 79.80 |
| Case 3 | Router 1 | 5.0083 | 5.1250 | 4.8730 | 96.34 |
| Case 3 | Router 2 | 5.3491 | 31.1570 | 4.6940 | 92.68 |
| Case 4 | Router 1 | 5.0059 | 6.5560 | 3.4710 | 97.65 |
| Case 4 | Router 2 | 5.0003 | 6.6680 | 3.3680 | 95.35 |
| Case 5 | Router 1 | 6.6024 | 20.1980 | 2.0120 | 74.75 |
| Case 5 | Router 2 | 10.8373 | 127.3110 | 1.7620 | 44.33 |
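The per-packet timing and PRR columns above can be derived from a log of packet arrival times. The following is a minimal sketch under stated assumptions, not the authors' processing code: it assumes "time per packet" means the interval between consecutive received packets, and that PRR is the number of packets received divided by the number sent, expressed as a percentage. The function name `packet_stats` and the sample timestamps are hypothetical and purely illustrative.

```python
from statistics import mean


def packet_stats(arrival_times_s, packets_sent):
    """Summarise inter-arrival times and the packet reception ratio (PRR).

    arrival_times_s: arrival timestamps of received packets, in seconds,
                     in increasing order (hypothetical log format).
    packets_sent:    number of packets the source transmitted.
    """
    if packets_sent <= 0:
        raise ValueError("packets_sent must be positive")

    # Assumed definition: PRR = received / sent, as a percentage.
    prr_percent = 100.0 * len(arrival_times_s) / packets_sent

    # Time between consecutive received packets, in seconds.
    intervals = [b - a for a, b in zip(arrival_times_s, arrival_times_s[1:])]
    if not intervals:
        # Fewer than two packets received: no interval statistics exist.
        return {"mean_s": None, "max_s": None, "min_s": None,
                "prr_percent": prr_percent}

    return {
        "mean_s": mean(intervals),
        "max_s": max(intervals),
        "min_s": min(intervals),
        "prr_percent": prr_percent,
    }


# Illustrative data only: four packets received out of five sent.
example = packet_stats([0.0, 5.0, 10.1, 15.0], packets_sent=5)
print(example)
```

Under this reading, a lost packet shows up twice: it lowers the PRR and it stretches the interval between its two neighbours, which would be consistent with the large maximum times that accompany the low PRR values in the table.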

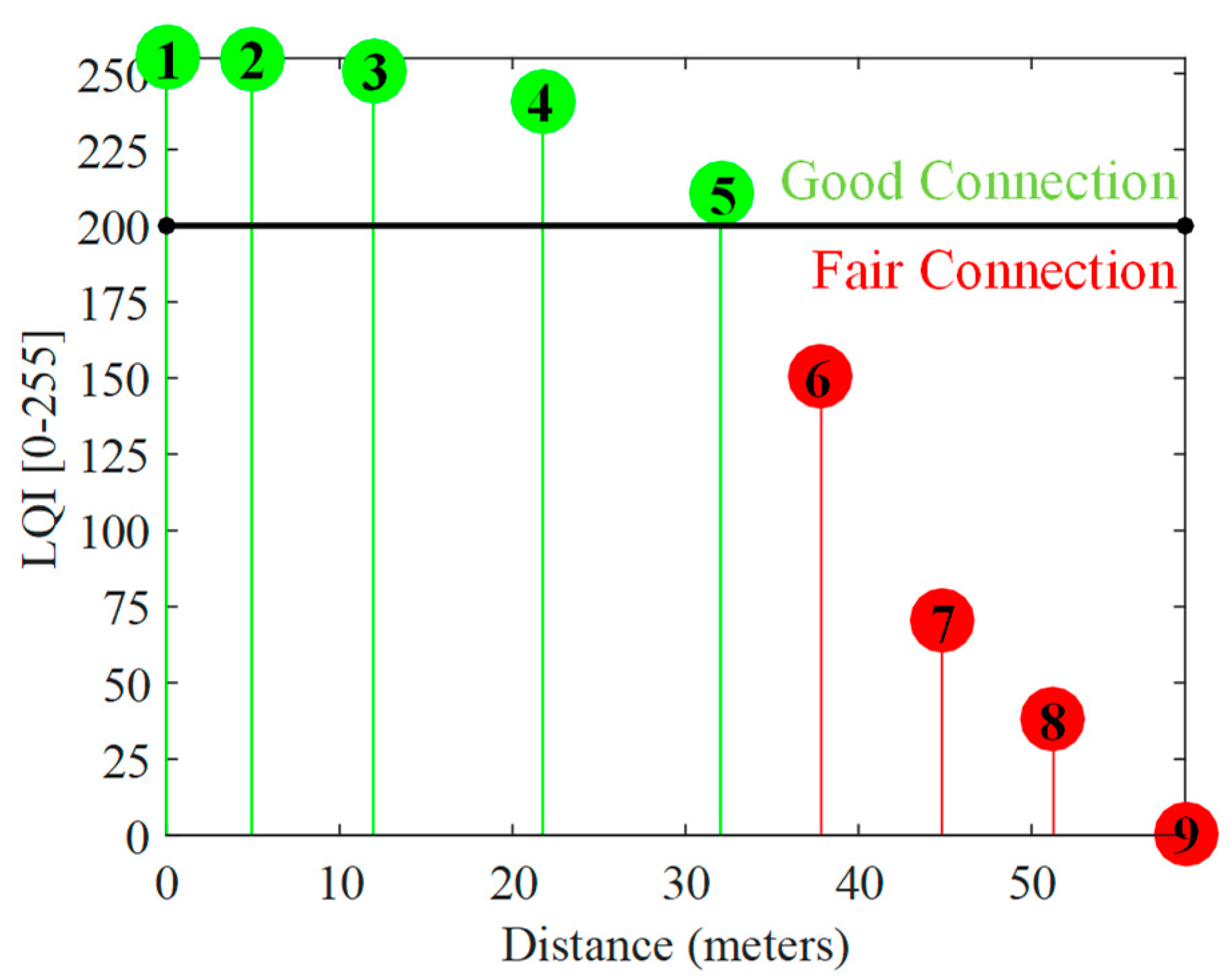

| Point | Longitude | Latitude | Mean Link Quality Indicator (LQI) (0–255) | Observations |
|---|---|---|---|---|
| Point 1 | -9.139066 | 38.736697 | 255 | Connection established |
| Point 2 | -9.139023 | 38.736705 | 254.7 | Good connection |
| Point 3 | -9.138960 | 38.736709 | 250.7 | Good connection |
| Point 4 | -9.138874 | 38.736723 | 241 | Good connection |
| Point 5 | -9.138805 | 38.736771 | 210.3 | Good connection |
| Point 6 | -9.138753 | 38.739775 | 150.3 | Fair connection |
| Point 7 | -9.138691 | 38.736782 | 70.7 | Fair connection |
| Point 8 | -9.138633 | 38.736782 | 38.3 | Fair connection |
| Point 9 | -9.138565 | 38.736787 | 0 | Connection lost |
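To relate the LQI readings above to physical range, the coordinates of two measurement points can be converted into a ground distance. The sketch below uses the standard haversine formula. It is an illustration under stated assumptions rather than the authors' method: the helper name `haversine_m` and the mean Earth radius constant are assumptions, and only the coordinates of Point 1 and Point 9 are taken from the table.

```python
import math

# Mean Earth radius in metres (assumed constant for this sketch).
EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lon1, lat1, lon2, lat2):
    """Great-circle distance in metres between two (lon, lat) points in degrees."""
    phi1 = math.radians(lat1)
    phi2 = math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)

    # Haversine of the central angle between the two points.
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


# (longitude, latitude) pairs copied from the table.
point_1 = (-9.139066, 38.736697)  # connection established, LQI 255
point_9 = (-9.138565, 38.736787)  # connection lost, LQI 0

distance_m = haversine_m(*point_1, *point_9)
print(f"Point 1 to Point 9: {distance_m:.1f} m")
```

The same helper can be applied to each intermediate point to plot mean LQI against distance from Point 1.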

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Cunha, J.; Batista, N.; Cardeira, C.; Melicio, R. Wireless Networks for Traffic Light Control on Urban and Aerotropolis Roads. J. Sens. Actuator Netw. 2020, 9, 26. https://doi.org/10.3390/jsan9020026
