Big Sensed Data Meets Deep Learning for Smarter Health Care in Smart Cities

Abstract

1. Introduction

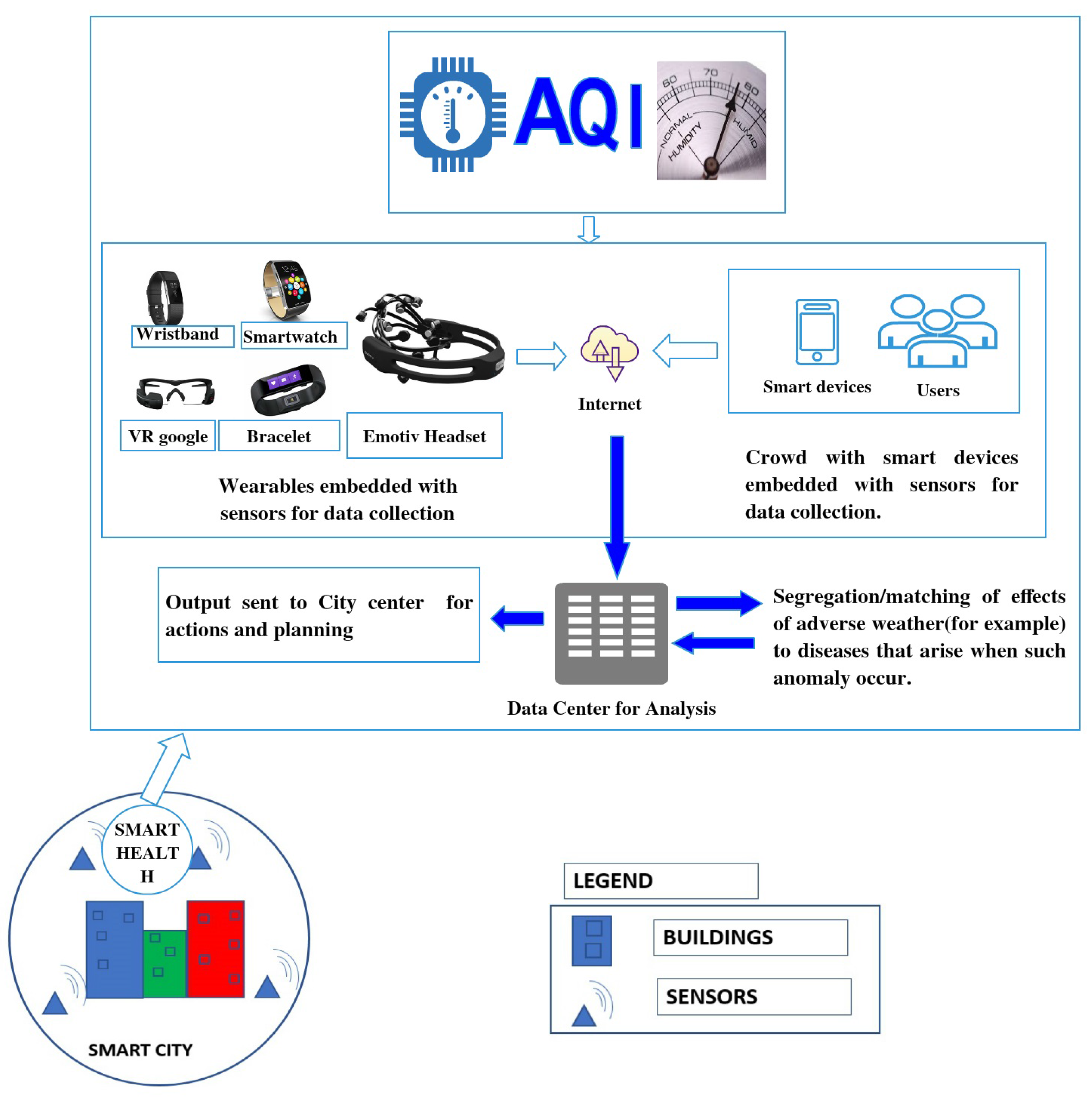

2. Analysis of Sensory Data in E-Health

2.1. Conventional Machine Learning on Sensed Health Data

2.2. Deep Learning on Sensed Health Data

3. Deep Learning Methods and Big Sensed Data

3.1. Deep Learning on Sensor Network Applications

3.2. Major Deep Learning Methods in Medical Sensory Data

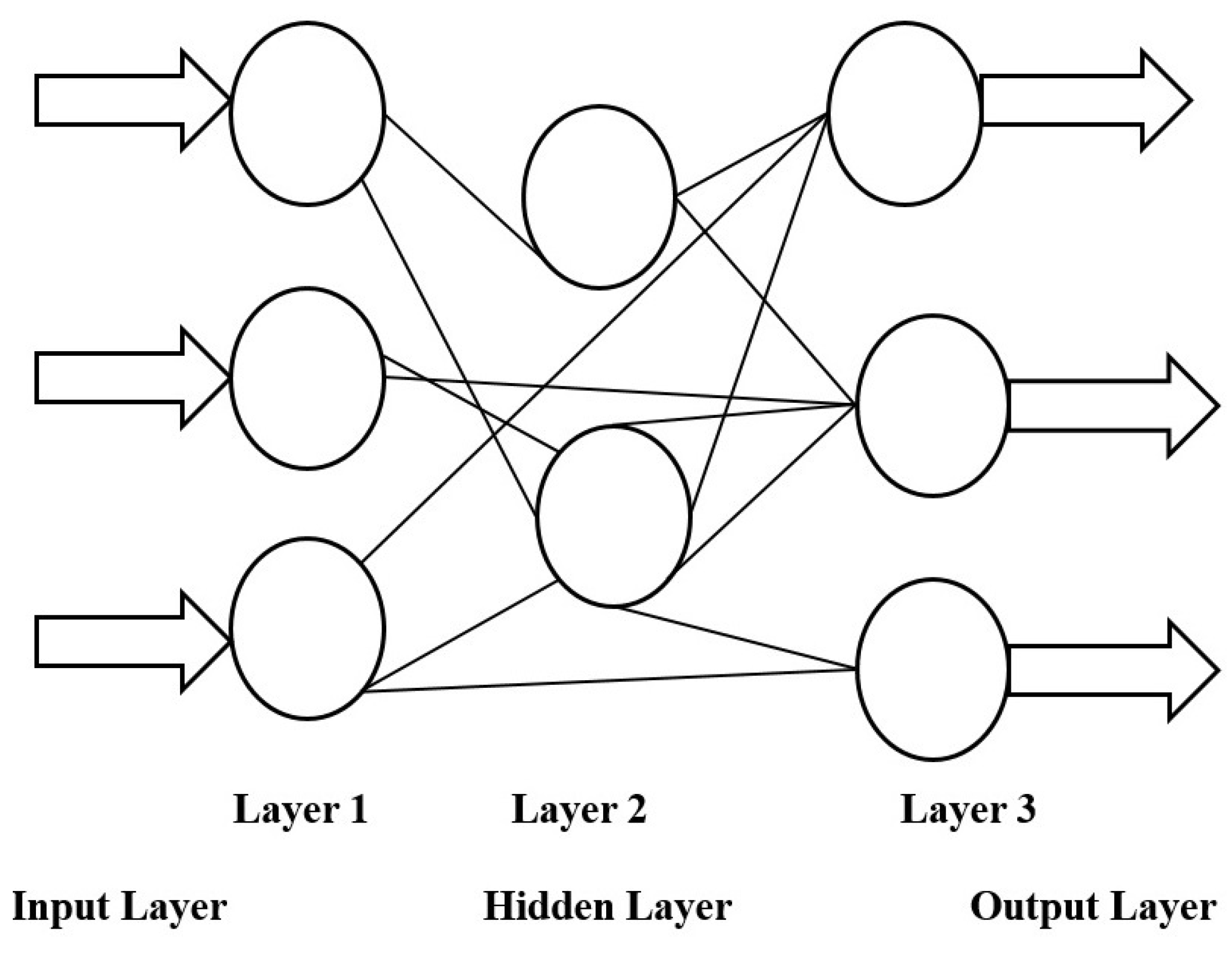

3.2.1. Deep Feedforward Networks

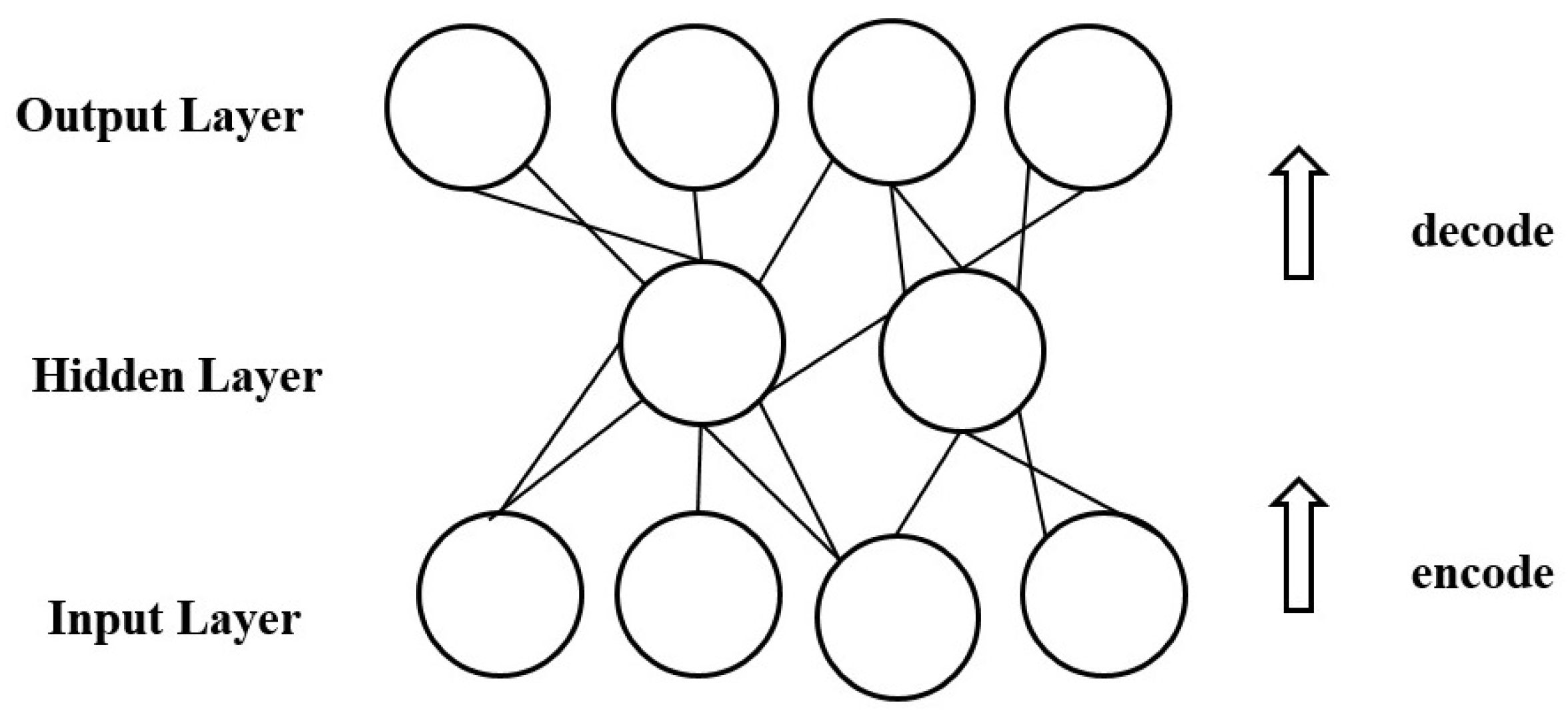

3.2.2. Autoencoder

- Undercomplete autoencoders [54] have a code whose dimension is smaller than the dimension of the input. This constraint forces the network to capture the most important features of the data during training.

- Regularized autoencoders [56] make it possible to train any autoencoder architecture successfully, with the code dimension and the capacity of the encoder/decoder chosen according to the complexity of the distribution to be modelled.

- Sparse autoencoders [54] have a training criterion that adds a sparsity penalty on the code layer to the reconstruction error. Sparse autoencoders are typically used to learn features for another task, such as classification.

- Denoising autoencoders [22] change the reconstruction error term of the cost function instead of adding a penalty to the cost function. Thus, a denoising autoencoder minimizes $L(x, g(f(\tilde{x})))$, where f and g denote the encoder and the decoder, and $\tilde{x}$ is a copy of x that has been distorted by noise.

- Contractive autoencoders [57] introduce an explicit regularizer that encourages the derivatives of the encoder f to be as small as possible. Contractive autoencoders are thus trained to resist perturbations of the input; as such, they map a neighbourhood of input points to a smaller neighbourhood of output points.
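The denoising and contractive criteria listed above can be illustrated with a minimal NumPy sketch. It is not the formulation of any cited work: the one-layer sigmoid encoder, the tied decoder weights, the squared-error loss, the Gaussian corruption and all names (`encode`, `decode`, `denoising_loss`, `contractive_penalty`) are assumptions made for illustration only.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def encode(x, W, b):
    """Encoder f: maps an input x of shape (d,) to a code of shape (m,)."""
    return sigmoid(W @ x + b)

def decode(h, W, c):
    """Decoder g (tied weights, an assumption): maps a code back to input space."""
    return sigmoid(W.T @ h + c)

def denoising_loss(x, W, b, c, noise_std, rng):
    """Squared reconstruction error between the clean x and g(f(x_tilde))."""
    x_tilde = x + rng.normal(0.0, noise_std, size=x.shape)  # corrupted copy of x
    x_hat = decode(encode(x_tilde, W, b), W, c)
    return float(np.sum((x - x_hat) ** 2))

def contractive_penalty(x, W, b):
    """Squared Frobenius norm of the encoder Jacobian df/dx evaluated at x."""
    h = encode(x, W, b)
    # For a sigmoid encoder the Jacobian is diag(h * (1 - h)) @ W.
    jacobian = (h * (1.0 - h))[:, None] * W
    return float(np.sum(jacobian ** 2))

rng = np.random.default_rng(0)
d, m = 8, 3                              # undercomplete: code dimension m < input dimension d
W = rng.normal(0.0, 0.1, size=(m, d))    # untrained placeholder weights
b = np.zeros(m)
c = np.zeros(d)
x = rng.random(d)

dae = denoising_loss(x, W, b, c, noise_std=0.1, rng=rng)
cae = contractive_penalty(x, W, b)
print(dae, cae)
```

In a training loop, the denoising loss would replace the plain reconstruction error, whereas the contractive penalty would be added to it with a weighting coefficient.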

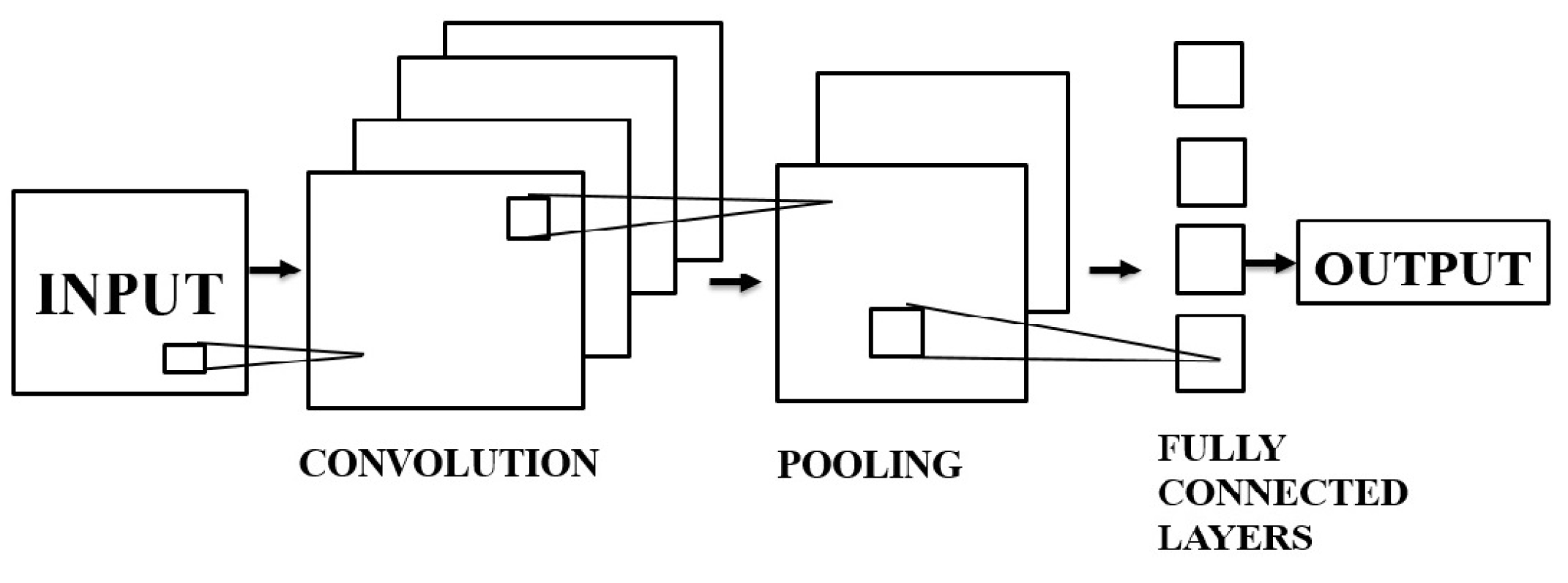

3.2.3. Convolutional Neural Networks

- Convolutional layer: The convolutional layer takes an $m \times m \times r$ image as the input, where m and r denote the height/width of the image and the number of channels, respectively. The convolutional layer contains k filters (or kernels) of size $n \times n \times q$, where $n < m$ and q can be less than or equal to the number of channels r (i.e., $q \leq r$). Here, q may vary for each kernel, and the feature map in this case has a size of $m - n + 1$.

- Pooling layers: Pooling is a key component of CNNs and is generally applied after the convolutional layers. A pooling layer subsamples its input, most commonly by applying a max operation to the output of each filter. Pooling does not have to cover the complete matrix; it can also be applied over smaller windows. With respect to classification, pooling gives an output matrix of fixed size, thereby reducing the dimensionality of the output while keeping the important information.

- Fully-connected layers: In these layers, every unit is connected to all units of the preceding and subsequent layers.
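The shapes involved in the convolutional and pooling layers can be checked with a small NumPy sketch. It assumes stride 1, no padding, kernels that span all r channels (q = r) and non-overlapping max pooling; the function names `conv_valid` and `max_pool` are hypothetical, and the loops favour readability over speed.

```python
import numpy as np

def conv_valid(image, kernels):
    """Valid cross-correlation of an (m, m, r) image with k kernels of shape (n, n, r)."""
    m = image.shape[0]
    k, n = kernels.shape[0], kernels.shape[1]
    out = m - n + 1                       # side length of each feature map
    fmap = np.zeros((out, out, k))
    for f in range(k):
        for i in range(out):
            for j in range(out):
                fmap[i, j, f] = np.sum(image[i:i + n, j:j + n, :] * kernels[f])
    return fmap

def max_pool(fmap, p):
    """Non-overlapping p x p max pooling; rows/columns that do not fill a window are dropped."""
    h, w, k = fmap.shape
    h2, w2 = h // p, w // p
    trimmed = fmap[:h2 * p, :w2 * p, :]
    return trimmed.reshape(h2, p, w2, p, k).max(axis=(1, 3))

rng = np.random.default_rng(0)
m, r, n, k = 6, 3, 3, 2
image = rng.random((m, m, r))
kernels = rng.normal(size=(k, n, n, r))

feature_maps = conv_valid(image, kernels)   # shape (m - n + 1, m - n + 1, k)
pooled = max_pool(feature_maps, p=2)
print(feature_maps.shape, pooled.shape)
```

With these example sizes, each of the k feature maps has side length 6 - 3 + 1 = 4, and 2 x 2 pooling halves it to 2.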

3.2.4. Deep Belief Network

- The generative weights are learned through a layer-by-layer process, with the purpose of determining how the variables in layer ℓ depend on the variables in the layer above it.

- Once data are observed in the bottom layer, the values of the latent variables in every layer can be inferred in a single bottom-up pass.
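The second property can be sketched as a single upward sweep through a stack of layers. This is only an illustration under stated assumptions: the weights below are random placeholders rather than the result of layer-by-layer training, the units are sigmoid, and `bottom_up_pass` is a hypothetical helper name.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def bottom_up_pass(v, weights, biases):
    """Return the activation probabilities of every hidden layer in one upward sweep."""
    activations = []
    layer_input = v
    for W, b in zip(weights, biases):
        layer_input = sigmoid(layer_input @ W + b)   # probabilities of the next layer up
        activations.append(layer_input)
    return activations

rng = np.random.default_rng(0)
sizes = [6, 4, 3]                                    # visible layer, then two hidden layers
weights = [rng.normal(0.0, 0.1, size=(sizes[i], sizes[i + 1]))
           for i in range(len(sizes) - 1)]           # untrained placeholder weights
biases = [np.zeros(s) for s in sizes[1:]]
v = rng.integers(0, 2, size=sizes[0]).astype(float)  # binary data observed in the bottom layer

hidden = bottom_up_pass(v, weights, biases)
print([h.shape for h in hidden])
```

No iterative sampling is needed for this approximate inference step: each layer is computed once from the layer below it.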

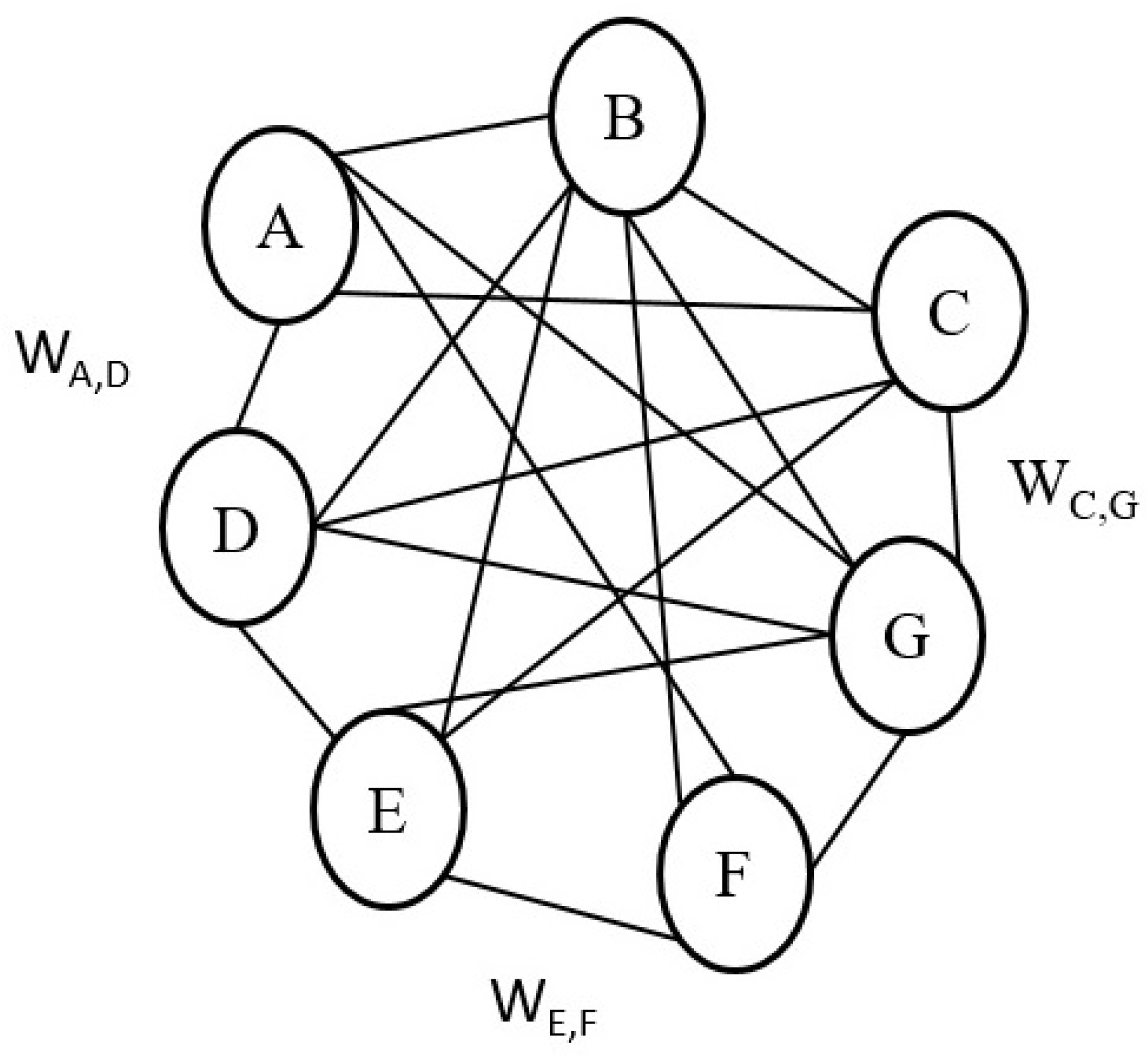

3.2.5. Boltzmann Machine

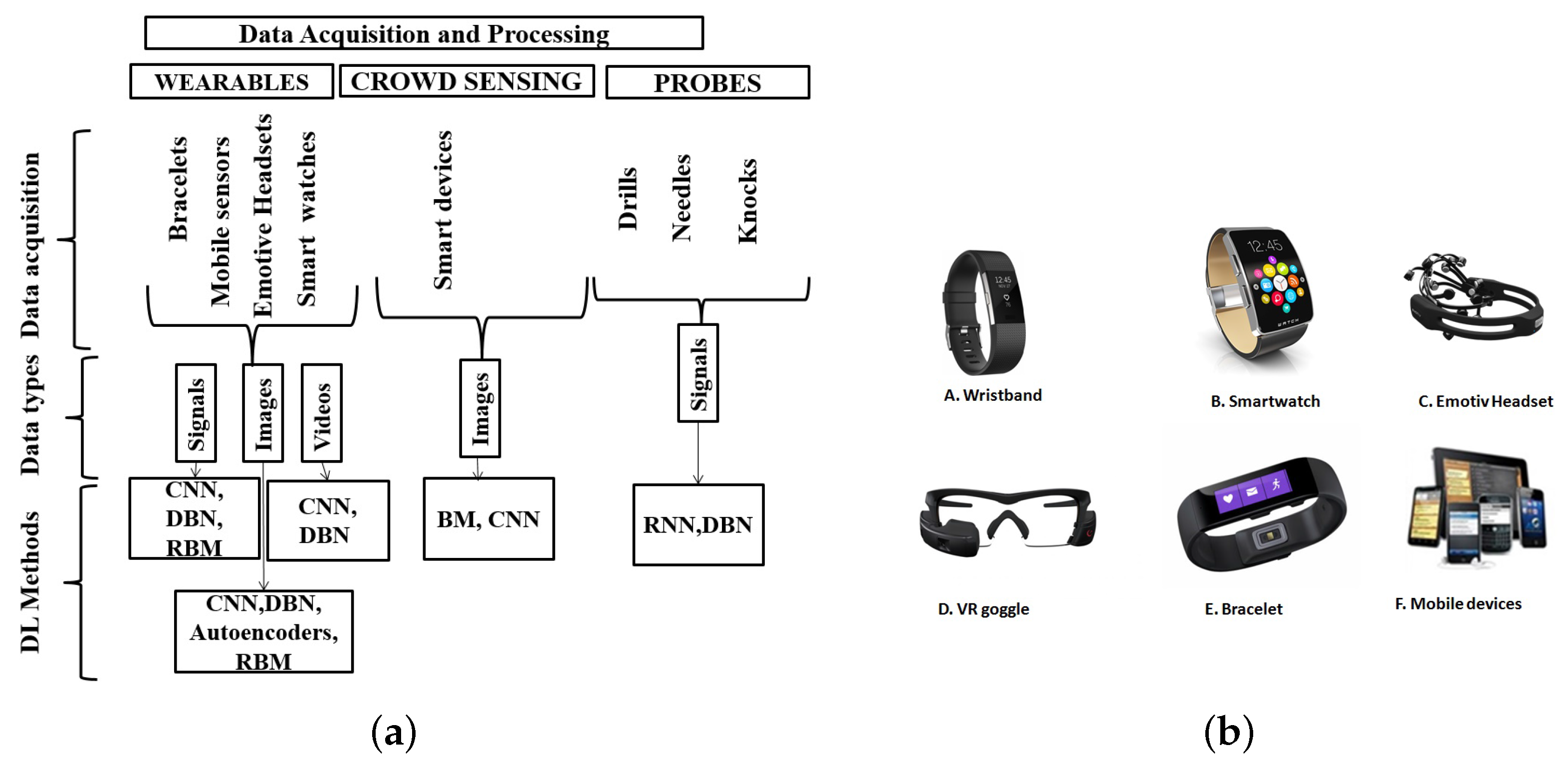

4. Sensory Data Acquisition and Processing Using Deep Learning in Smart Health

4.1. Sensory Data Acquisition and Processing via Wearables and Carry-Ons

- Image processing: Deep learning techniques play a major role in image processing for health care, most prominently CNNs, DBNs, autoencoders and RBMs. The authors in [65] use CNNs to build a new network architecture for multi-channel data acquisition and supervised feature learning. Extracting features from brain images (e.g., magnetic resonance imaging (MRI) and functional magnetic resonance imaging (fMRI)) can help in the early diagnosis and prognosis of severe diseases such as glioma. Moreover, the authors in [66] use a DBN to classify mammography images in order to detect calcifications that may indicate breast cancer. With high detection accuracy, proper diagnosis of breast cancer becomes possible in radiology. Kuang and He [67] modify a DBN and use it to classify attention deficit hyperactivity disorder (ADHD) from fMRI images. In a similar fashion, Li et al. [68] use an RBM to train on and process data generated from MRI and positron emission tomography (PET) scans, with the aim of accurately diagnosing Alzheimer’s disease. Using a deep CNN and clinical images, Esteva et al. [69] detect and classify melanoma, a type of skin cancer; according to their research, this method outperforms existing skin cancer classification techniques. In the same context, Sabouri and GholamHosseini [70] show that a CNN performs better at lesion segmentation in clinical and dermoscopy images of the skin, and argue that a CNN requires less preprocessing than other known methods.

- Signal processing: Signal processing is an area of applied computing that has been evolving since its inception, and it is an essential tool in diverse fields, including the processing of medical sensory data. As methods for accurate signal processing of sensory data continue to improve, deep learning has emerged as a robust candidate technique. For instance, Ha and Choi [71] use improved versions of CNNs to process the signals from sensors embedded in mobile devices in order to recognize human activities. Human activity recognition is an important aspect of ubiquitous computing; one of its applications is the diagnosis of, and the provision of support and care for, people with limited mobility. The authors in [72] apply a CNN-based methodology to data from wearable sensors to predict sleep quality, with the objective of classifying the factors that contribute to good and poor sleeping habits. Furthermore, deep CNNs and deep feed-forward networks have been applied to data acquired via wearable sensors for human activity recognition [73].
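Before a CNN can consume a stream of wearable-sensor readings, the stream is commonly cut into fixed-length segments. The sketch below shows one such preprocessing step; it is not taken from the cited studies. The window length, the step size, the six channels (for example a three-axis accelerometer plus a three-axis gyroscope) and the synthetic signal are all assumptions chosen for illustration.

```python
import numpy as np

def sliding_windows(signal, window, step):
    """Split a (T, channels) signal into overlapping (window, channels) segments."""
    starts = range(0, signal.shape[0] - window + 1, step)
    return np.stack([signal[s:s + window] for s in starts])

def standardize(segments):
    """Zero-mean, unit-variance scaling per channel, computed over all segments."""
    mean = segments.mean(axis=(0, 1), keepdims=True)
    std = segments.std(axis=(0, 1), keepdims=True) + 1e-8
    return (segments - mean) / std

T, channels = 100, 6                        # hypothetical recording: 100 samples, 6 channels
rng = np.random.default_rng(0)
signal = rng.normal(size=(T, channels))     # synthetic stand-in for real sensor data

segments = standardize(sliding_windows(signal, window=20, step=10))
print(segments.shape)  # (9, 20, 6)
```

Each segment of shape (window, channels) can then be treated like a small single-channel image or a multi-channel 1D sequence, depending on the network architecture.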

4.2. Data Acquisition via Probes

4.3. Data Acquisition via Crowd-Sensing

5. Deep Learning Challenges in Big Sensed Data: Opportunities in Smart Health Applications

5.1. Challenges and Open Issues

5.2. Opportunities in Smart Health Applications for Deep Learning

- Medical imaging: Deep learning techniques have improved health care through accurate disease detection and recognition. An example is the detection of melanoma: deep learning algorithms learn important melanoma-related features from a set of medical images and then run their learning-based prediction algorithm to detect the presence or likelihood of the disease. Furthermore, using images from MRI, fMRI and other sources, deep learning has supported 3D brain reconstruction using autoencoders and deep CNNs [90], neural cell classification using CNNs [65], brain tissue classification using DBNs [67,68], tumour detection using DNNs [65,66] and Alzheimer’s diagnosis using DNNs [91].

- Bioinformatics: Deep learning has seen a resurgence in bioinformatics for the diagnosis and treatment of many terminal diseases. Examples include cancer diagnosis, where deep autoencoders take gene expression data as the input [92], and gene selection/classification and gene variant analysis from micro-array data with the aid of deep belief networks [93]. Moreover, deep belief networks play a key role in protein slicing/sequencing [94,95].

- Predictive analysis: Disease prediction has gained momentum with the advent of learning-based systems. Because deep learning can predict the occurrence of diseases accurately, predictive analysis of the future likelihood of diseases has progressed significantly. The techniques used for predictive analysis of diseases include autoencoders [96], recurrent neural networks [97] and CNNs [97,98]. To improve prediction accuracy, however, sensory data that monitor medical phenomena have to be coupled with sensory data that monitor human behaviour. Coupling the data acquired from medical and behavioural sensors enables effective analysis of human behaviour and reveals patterns that could help in disease prediction and prevention.

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

1. Guelzim, T.; Obaidat, M.; Sadoun, B. Chapter 1—Introduction and overview of key enabling technologies for smart cities and homes. In Smart Cities and Homes; Obaidat, M.S., Nicopolitidis, P., Eds.; Morgan Kaufmann: Boston, MA, USA, 2016; pp. 1–16.

2. Liu, D.; Huang, R.; Wosinski, M. Development of Smart Cities: Educational Perspective. In Smart Learning in Smart Cities; Springer: Singapore, 2017; pp. 3–14.

3. Nam, T.; Pardo, T.A. Conceptualizing smart city with dimensions of technology, people, and institutions. In Proceedings of the 12th Annual International Digital Government Research Conference: Digital Government Innovation in Challenging Times, College Park, MD, USA, 12–15 June 2011; ACM: New York, NY, USA, 2011; pp. 282–291.

4. Anthopoulos, L.G. The Rise of the Smart City. In Understanding Smart Cities: A Tool for Smart Government or an Industrial Trick? Springer: Cham, Switzerland, 2017; pp. 5–45.

5. Munzel, A.; Meyer-Waarden, L.; Galan, J.P. The social side of sustainability: Well-being as a driver and an outcome of social relationships and interactions on social networking sites. Technol. Forecast. Soc. Change 2017, in press.

6. Fan, M.; Sun, J.; Zhou, B.; Chen, M. The smart health initiative in China: The case of Wuhan, Hubei province. J. Med. Syst. 2016, 40, 62.

7. Ndiaye, M.; Hancke, G.P.; Abu-Mahfouz, A.M. Software Defined Networking for Improved Wireless Sensor Network Management: A Survey. Sensors 2017, 17, 1031.

8. Pramanik, M.I.; Lau, R.Y.; Demirkan, H.; Azad, M.A.K. Smart health: Big data enabled health paradigm within smart cities. Expert Syst. Appl. 2017, 87, 370–383.

9. Nef, T.; Urwyler, P.; Büchler, M.; Tarnanas, I.; Stucki, R.; Cazzoli, D.; Müri, R.; Mosimann, U. Evaluation of three state-of-the-art classifiers for recognition of activities of daily living from smart home ambient data. Sensors 2015, 15, 11725–11740.

10. Ali, Z.; Muhammad, G.; Alhamid, M.F. An Automatic Health Monitoring System for Patients Suffering from Voice Complications in Smart Cities. IEEE Access 2017, 5, 3900–3908.

11. Hossain, M.S.; Muhammad, G.; Alamri, A. Smart healthcare monitoring: A voice pathology detection paradigm for smart cities. Multimedia Syst. 2017.

12. Mehta, Y.; Pai, M.M.; Mallissery, S.; Singh, S. Cloud enabled air quality detection, analysis and prediction—A smart city application for smart health. In Proceedings of the 2016 3rd MEC International Conference on Big Data and Smart City (ICBDSC), Muscat, Oman, 15–16 March 2016; pp. 1–7.

13. Sahoo, P.K.; Thakkar, H.K.; Lee, M.Y. A Cardiac Early Warning System with Multi Channel SCG and ECG Monitoring for Mobile Health. Sensors 2017, 17, 711.

14. Kim, T.; Park, J.; Heo, S.; Sung, K.; Park, J. Characterizing dynamic walking patterns and detecting falls with wearable sensors using Gaussian process methods. Sensors 2017, 17, 1172.

15. Yeh, Y.T.; Hsu, M.H.; Chen, C.Y.; Lo, Y.S.; Liu, C.T. Detection of potential drug-drug interactions for outpatients across hospitals. Int. J. Environ. Res. Public Health 2014, 11, 1369–1383.

16. Venkatesh, J.; Aksanli, B.; Chan, C.S.; Akyurek, A.S.; Rosing, T.S. Modular and Personalized Smart Health Application Design in a Smart City Environment. IEEE Internet Things J. 2017, PP, 1.

17. Rajaram, M.L.; Kougianos, E.; Mohanty, S.P.; Sundaravadivel, P. A wireless sensor network simulation framework for structural health monitoring in smart cities. In Proceedings of the 2016 IEEE 6th International Conference on Consumer Electronics-Berlin (ICCE-Berlin), Berlin, Germany, 5–7 September 2016; pp. 78–82.

18. Hijazi, S.; Page, A.; Kantarci, B.; Soyata, T. Machine Learning in Cardiac Health Monitoring and Decision Support. IEEE Comput. 2016, 49, 38–48.

19. Ota, K.; Dao, M.S.; Mezaris, V.; Natale, F.G.B.D. Deep Learning for Mobile Multimedia: A Survey. ACM Trans. Multimedia Comput. Commun. Appl. 2017, 13, 34.

20. Yu, D.; Deng, L. Deep Learning and Its Applications to Signal and Information Processing [Exploratory DSP]. IEEE Signal Process. Mag. 2011, 28, 145–154.

21. Larochelle, H.; Bengio, Y. Classification using discriminative restricted Boltzmann machines. In Proceedings of the 25th International Conference on Machine Learning, Helsinki, Finland, 5–9 July 2008; pp. 536–543.

22. Vincent, P.; Larochelle, H.; Lajoie, I.; Bengio, Y.; Manzagol, P.A. Stacked denoising autoencoders: Learning useful representations in a deep network with a local denoising criterion. J. Mach. Learn. Res. 2010, 11, 3371–3408.

23. Wang, L.; Sng, D. Deep Learning Algorithms with Applications to Video Analytics for A Smart City: A Survey. arXiv, 2015; arXiv:1512.03131.

24. Cavazza, J.; Morerio, P.; Murino, V. When Kernel Methods Meet Feature Learning: Log-Covariance Network for Action Recognition From Skeletal Data. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 21–26 July 2017; pp. 1251–1258.

25. Keceli, A.S.; Kaya, A.; Can, A.B. Action recognition with skeletal volume and deep learning. In Proceedings of the 2017 25th Signal Processing and Communications Applications Conference (SIU), Antalya, Turkey, 15–18 May 2017; pp. 1–4.

26. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

27. Alsheikh, M.A.; Lin, S.; Niyato, D.; Tan, H.P. Machine Learning in Wireless Sensor Networks: Algorithms, Strategies, and Applications. IEEE Commun. Surv. Tutor. 2014, 16, 1996–2018.

28. Mohri, M.; Rostamizadeh, A.; Talwalkar, A. Foundations of Machine Learning; MIT Press: Cambridge, MA, USA, 2012.

29. Clifton, L.; Clifton, D.A.; Pimentel, M.A.F.; Watkinson, P.J.; Tarassenko, L. Predictive Monitoring of Mobile Patients by Combining Clinical Observations with Data from Wearable Sensors. IEEE J. Biomed. Health Inform. 2014, 18, 722–730.

30. Tsiouris, K.M.; Gatsios, D.; Rigas, G.; Miljkovic, D.; Seljak, B.K.; Bohanec, M.; Arredondo, M.T.; Antonini, A.; Konitsiotis, S.; Koutsouris, D.D.; et al. PD_Manager: An mHealth platform for Parkinson’s disease patient management. Healthcare Technol. Lett. 2017, 4, 102–108.

31. Hu, K.; Rahman, A.; Bhrugubanda, H.; Sivaraman, V. HazeEst: Machine Learning Based Metropolitan Air Pollution Estimation from Fixed and Mobile Sensors. IEEE Sens. J. 2017, 17, 3517–3525.

32. Tariq, O.B.; Lazarescu, M.T.; Iqbal, J.; Lavagno, L. Performance of Machine Learning Classifiers for Indoor Person Localization with Capacitive Sensors. IEEE Access 2017, 5, 12913–12926.

33. Jahangiri, A.; Rakha, H.A. Applying Machine Learning Techniques to Transportation Mode Recognition Using Mobile Phone Sensor Data. IEEE Trans. Intell. Transp. Syst. 2015, 16, 2406–2417.

34. Schmidhuber, J. Deep Learning in Neural Networks: An Overview. Neural Netw. 2014, 61, 85–117.

35. Deng, L.; Yu, D. Deep learning: Methods and applications. Found. Trends Signal Process. 2014, 7, 197–387.

36. Hinton, G.E.; Osindero, S.; Teh, Y.W. A fast learning algorithm for deep belief nets. Neural Comput. 2006, 18, 1527–1554.

37. Do, T.M.T.; Gatica-Perez, D. The places of our lives: Visiting patterns and automatic labeling from longitudinal smartphone data. IEEE Trans. Mob. Comput. 2014, 13, 638–648.

38. Al Rahhal, M.M.; Bazi, Y.; AlHichri, H.; Alajlan, N.; Melgani, F.; Yager, R.R. Deep learning approach for active classification of electrocardiogram signals. Inform. Sci. 2016, 345, 340–354.

39. Acharya, U.R.; Fujita, H.; Lih, O.S.; Adam, M.; Tan, J.H.; Chua, C.K. Automated Detection of Coronary Artery Disease Using Different Durations of ECG Segments with Convolutional Neural Network. Knowl.-Based Syst. 2017, 132, 62–71.

40. Hosseini, M.P.; Tran, T.X.; Pompili, D.; Elisevich, K.; Soltanian-Zadeh, H. Deep Learning with Edge Computing for Localization of Epileptogenicity Using Multimodal rs-fMRI and EEG Big Data. In Proceedings of the 2017 IEEE International Conference on Autonomic Computing (ICAC), Columbus, OH, USA, 17–21 July 2017; pp. 83–92.

41. Zheng, W.L.; Zhu, J.Y.; Peng, Y.; Lu, B.L. EEG-based emotion classification using deep belief networks. In Proceedings of the 2014 IEEE International Conference on Multimedia and Expo (ICME), Chengdu, China, 14–18 July 2014; pp. 1–6.

42. Gu, W. Non-intrusive blood glucose monitor by multi-task deep learning: PhD forum abstract. In Proceedings of the 16th ACM/IEEE International Conference on Information Processing in Sensor Networks, Pittsburgh, PA, USA, 18–20 April 2017; ACM: New York, NY, USA, 2017; pp. 249–250.

43. Anagnostopoulos, T.; Zaslavsky, A.; Kolomvatsos, K.; Medvedev, A.; Amirian, P.; Morley, J.; Hadjieftymiades, S. Challenges and Opportunities of Waste Management in IoT-Enabled Smart Cities: A Survey. IEEE Trans. Sustain. Comput. 2017, 2, 275–289.

44. Taleb, S.; Al Sallab, A.; Hajj, H.; Dawy, Z.; Khanna, R.; Keshavamurthy, A. Deep learning with ensemble classification method for sensor sampling decisions. In Proceedings of the 2016 International Wireless Communications and Mobile Computing Conference (IWCMC), Paphos, Cyprus, 5–9 September 2016; pp. 114–119.

45. Costilla-Reyes, O.; Scully, P.; Ozanyan, K.B. Deep Neural Networks for Learning Spatio-Temporal Features from Tomography Sensors. IEEE Trans. Ind. Electron. 2018, 65, 645–653.

46. Fang, S.H.; Fei, Y.X.; Xu, Z.; Tsao, Y. Learning Transportation Modes From Smartphone Sensors Based on Deep Neural Network. IEEE Sens. J. 2017, 17, 6111–6118.

47. Xu, X.; Yin, S.; Ouyang, P. Fast and low-power behavior analysis on vehicles using smartphones. In Proceedings of the 2017 6th International Symposium on Next Generation Electronics (ISNE), Keelung, Taiwan, 23–25 May 2017; pp. 1–4.

48. Eskofier, B.M.; Lee, S.I.; Daneault, J.F.; Golabchi, F.N.; Ferreira-Carvalho, G.; Vergara-Diaz, G.; Sapienza, S.; Costante, G.; Klucken, J.; Kautz, T.; et al. Recent machine learning advancements in sensor-based mobility analysis: Deep learning for Parkinson’s disease assessment. In Proceedings of the 2016 IEEE 38th Annual International Conference of the Engineering in Medicine and Biology Society (EMBC), Orlando, FL, USA, 16–20 August 2016; pp. 655–658.

49. Zhu, J.; Pande, A.; Mohapatra, P.; Han, J.J. Using deep learning for energy expenditure estimation with wearable sensors. In Proceedings of the 2015 17th International Conference on E-health Networking, Application & Services (HealthCom), Boston, MA, USA, 14–17 October 2015; pp. 501–506.

50. Jankowski, S.; Szymański, Z.; Dziomin, U.; Mazurek, P.; Wagner, J. Deep learning classifier for fall detection based on IR distance sensor data. In Proceedings of the 2015 IEEE 8th International Conference on Intelligent Data Acquisition and Advanced Computing Systems: Technology and Applications (IDAACS), Warsaw, Poland, 24–26 September 2015; Volume 2, pp. 723–727.

51. Menotti, D.; Chiachia, G.; Pinto, A.; Schwartz, W.R.; Pedrini, H.; Falcao, A.X.; Rocha, A. Deep representations for iris, face, and fingerprint spoofing detection. IEEE Trans. Inform. Forensics Secur. 2015, 10, 864–879.

52. Yin, Y.; Liu, Z.; Zimmermann, R. Geographic information use in weakly-supervised deep learning for landmark recognition. In Proceedings of the 2017 IEEE International Conference on Multimedia and Expo (ICME), Hong Kong, China, 10–14 July 2017; pp. 1015–1020.

53. Barreto, T.L.; Rosa, R.A.; Wimmer, C.; Moreira, J.R.; Bins, L.S.; Cappabianco, F.A.M.; Almeida, J. Classification of Detected Changes from Multitemporal High-Res Xband SAR Images: Intensity and Texture Descriptors From SuperPixels. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2016, 9, 5436–5448.

54. Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

55. Hinton, G.E.; Salakhutdinov, R.R. Reducing the dimensionality of data with neural networks. Science 2006, 313, 504–507.

56. Alain, G.; Bengio, Y.; Rifai, S. Regularized auto-encoders estimate local statistics. Proc. CoRR 2012, 1–17.

57. Rifai, S.; Bengio, Y.; Dauphin, Y.; Vincent, P. A generative process for sampling contractive auto-encoders. arXiv, 2012; arXiv:1206.6434.

58. Abdulnabi, A.H.; Wang, G.; Lu, J.; Jia, K. Multi-Task CNN Model for Attribute Prediction. IEEE Trans. Multimedia 2015, 17, 1949–1959.

59. Deng, L.; Abdelhamid, O.; Yu, D. A deep convolutional neural network using heterogeneous pooling for trading acoustic invariance with phonetic confusion. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013; pp. 6669–6673.

60. Aghdam, H.H.; Heravi, E.J. Guide to Convolutional Neural Networks: A Practical Application to Traffic-Sign Detection and Classification; Springer: Cham, Switzerland, 2017.

61. Huang, G.; Lee, H.; Learned-Miller, E. Learning hierarchical representations for face verification with convolutional deep belief networks. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 2518–2525.

62. Ackley, D.H.; Hinton, G.E.; Sejnowski, T.J. A learning algorithm for Boltzmann machines. Cognit. Sci. 1985, 9, 147–169.

63. Salakhutdinov, R.; Mnih, A.; Hinton, G. Restricted Boltzmann machines for collaborative filtering. In Proceedings of the 24th International Conference on Machine Learning, Corvallis, OR, USA, 20–24 June 2007; pp. 791–798.

64. Ribeiro, B.; Gonçalves, I.; Santos, S.; Kovacec, A. Deep Learning Networks for Off-Line Handwritten Signature Recognition. In Proceedings of the 2011 CIARP 16th Iberoamerican Congress on Pattern Recognition, Pucón, Chile, 15–18 November 2011; pp. 523–532.

65. Nie, D.; Zhang, H.; Adeli, E.; Liu, L.; Shen, D. 3D Deep Learning for Multi-Modal Imaging-Guided Survival Time Prediction of Brain Tumor Patients; Springer: Cham, Switzerland, 2016.

66. Rose, D.C.; Arel, I.; Karnowski, T.P.; Paquit, V.C. Applying deep-layered clustering to mammography image analytics. In Proceedings of the 2010 Biomedical Sciences and Engineering Conference, Oak Ridge, TN, USA, 25–26 May 2010; pp. 1–4.

67. Kuang, D.; He, L. Classification on ADHD with Deep Learning. In Proceedings of the 2014 International Conference on Cloud Computing and Big Data, Wuhan, China, 12–14 November 2014; pp. 27–32.

68. Li, F.; Tran, L.; Thung, K.H.; Ji, S.; Shen, D.; Li, J. A Robust Deep Model for Improved Classification of AD/MCI Patients. IEEE J. Biomed. Health Inform. 2015, 19, 1610–1616.

69. Esteva, A.; Kuprel, B.; Novoa, R.A.; Ko, J.; Swetter, S.M.; Blau, H.M.; Thrun, S. Dermatologist-level classification of skin cancer with deep neural networks. Nature 2017, 542, 115–118.

70. Sabouri, P.; GholamHosseini, H. Lesion border detection using deep learning. In Proceedings of the 2016 IEEE Congress on Evolutionary Computation (CEC), Vancouver, BC, Canada, 24–29 July 2016; pp. 1416–1421.

71. Ha, S.; Choi, S. Convolutional neural networks for human activity recognition using multiple accelerometer and gyroscope sensors. In Proceedings of the 2016 International Joint Conference on Neural Networks (IJCNN), Vancouver, BC, Canada, 24–29 July 2016; pp. 381–388.

72. Sathyanarayana, A.; Joty, S.; Fernandez-Luque, L.; Ofli, F.; Srivastava, J.; Elmagarmid, A.; Arora, T.; Taheri, S. Sleep quality prediction from wearable data using deep learning. JMIR mHealth uHealth 2016, 4, e125.

73. Hammerla, N.Y.; Halloran, S.; Ploetz, T. Deep, convolutional, and recurrent models for human activity recognition using wearables. arXiv, 2016; arXiv:1604.08880.

74. Baccouche, M.; Mamalet, F.; Wolf, C.; Garcia, C.; Baskurt, A. Sequential deep learning for human action recognition. In International Workshop on Human Behavior Understanding; Springer: Berlin/Heidelberg, Germany, 2011; pp. 29–39.

75. Ji, S.; Xu, W.; Yang, M.; Yu, K. 3D convolutional neural networks for human action recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 221–231.

76. Karpathy, A.; Toderici, G.; Shetty, S.; Leung, T.; Sukthankar, R.; Fei-Fei, L. Large-scale video classification with convolutional neural networks. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1725–1732.

77. Cheron, G.; Draye, J.P.; Bourgeios, M.; Libert, G. A dynamic neural network identification of electromyography and arm trajectory relationship during complex movements. IEEE Trans. Biomed. Eng. 1996, 43, 552–558.

78. Page, A.; Hijazi, S.; Askan, D.; Kantarci, B.; Soyata, T. Research Directions in Cloud-Based Decision Support Systems for Health Monitoring Using Internet-of-Things Driven Data Acquisition. Int. J. Serv. Comput. 2016, 4, 18–34.

79. Guo, B.; Han, Q.; Chen, H.; Shangguan, L.; Zhou, Z.; Yu, Z. The Emergence of Visual Crowdsensing: Challenges and Opportunities. IEEE Commun. Surv. Tutor. 2017, PP, 1.

80. Ma, H.; Zhao, D.; Yuan, P. Opportunities in mobile crowd sensing. IEEE Commun. Mag. 2014, 52, 29–35.

81. Haddawy, P.; Frommberger, L.; Kauppinen, T.; De Felice, G.; Charkratpahu, P.; Saengpao, S.; Kanchanakitsakul, P. Situation awareness in crowdsensing for disease surveillance in crisis situations. In Proceedings of the Seventh International Conference on Information and Communication Technologies and Development, Singapore, 15–18 May 2015; p. 38.

82. Cardone, G.; Foschini, L.; Bellavista, P.; Corradi, A.; Borcea, C.; Talasila, M.; Curtmola, R. Fostering participaction in smart cities: A geo-social crowdsensing platform. IEEE Commun. Mag. 2013, 51, 112–119.

83. Pan, Z.; Yu, H.; Miao, C.; Leung, C. Crowdsensing Air Quality with Camera-Enabled Mobile Devices. In Proceedings of the Twenty-Ninth IAAI Conference, San Francisco, CA, USA, 6–9 February 2017; pp. 4728–4733.

84. Mittal, G.; Yagnik, K.B.; Garg, M.; Krishnan, N.C. SpotGarbage: Smartphone app to detect garbage using deep learning. In Proceedings of the 2016 ACM International Joint Conference on Pervasive and Ubiquitous Computing, Heidelberg, Germany, 12–16 September 2016; pp. 940–945.

85. Habibzadeh, H.; Qin, Z.; Soyata, T.; Kantarci, B. Large Scale Distributed Dedicated- and Non-Dedicated Smart City Sensing Systems. IEEE Sens. J. 2017, 17, 7649–7658.

86. Xu, L.; Hao, X.; Lane, N.D.; Liu, X.; Moscibroda, T. More with Less: Lowering User Burden in Mobile Crowdsourcing through Compressive Sensing. In Proceedings of the 2015 ACM International Joint Conference on Pervasive and Ubiquitous Computing, Osaka, Japan, 7–11 September 2015; ACM: New York, NY, USA, 2015; pp. 659–670.

87. Pouryazdan, M.; Kantarci, B. The Smart Citizen Factor in Trustworthy Smart City Crowdsensing. IT Prof. 2016, 18, 26–33.

88. Pouryazdan, M.; Kantarci, B.; Soyata, T.; Song, H. Anchor-Assisted and Vote-Based Trustworthiness Assurance in Smart City Crowdsensing. IEEE Access 2016, 4, 529–541.

89. Farahani, B.; Firouzi, F.; Chang, V.; Badaroglu, M.; Constant, N.; Mankodiya, K. Towards fog-driven IoT eHealth: Promises and challenges of IoT in medicine and healthcare. Future Gener. Comput. Syst. 2018, 78, 659–676.

90. Kleesiek, J.; Urban, G.; Hubert, A.; Schwarz, D.; Maier-Hein, K.; Bendszus, M.; Biller, A. Deep MRI brain extraction: A 3D convolutional neural network for skull stripping. Neuroimage 2016, 129, 460–469.

91. Fritscher, K.; Raudaschl, P.; Zaffino, P.; Spadea, M.F.; Sharp, G.C.; Schubert, R. Deep Neural Networks for Fast Segmentation of 3D Medical Images. In Medical Image Computing and Computer-Assisted Intervention—MICCAI; Springer: Cham, Switzerland, 2016.

92. Fakoor, R.; Ladhak, F.; Nazi, A.; Huber, M. Using deep learning to enhance cancer diagnosis and classification. In Proceedings of the 30th International Conference on Machine Learning, Atlanta, GA, USA, 16–21 June 2013.

93. Khademi, M.; Nedialkov, N.S. Probabilistic Graphical Models and Deep Belief Networks for Prognosis of Breast Cancer. In Proceedings of the 2015 IEEE 14th International Conference on Machine Learning and Applications, Miami, FL, USA, 9–11 December 2016; pp. 727–732.

94. Angermueller, C.; Lee, H.J.; Reik, W.; Stegle, O. DeepCpG: Accurate prediction of single-cell DNA methylation states using deep learning. Genome Biol. 2017, 18, 67.

95. Tian, K.; Shao, M.; Wang, Y.; Guan, J.; Zhou, S. Boosting Compound-Protein Interaction Prediction by Deep Learning. Methods 2016, 110, 64–72.

96. Che, Z.; Purushotham, S.; Khemani, R.; Liu, Y. Distilling Knowledge from Deep Networks with Applications to Healthcare Domain. Ann. Chirurgie 2015, 40, 529–532.

97. Lipton, Z.C.; Kale, D.C.; Elkan, C.; Wetzell, R. Learning to Diagnose with LSTM Recurrent Neural Networks. In Proceedings of the International Conference on Learning Representations (ICLR 2016), San Juan, Puerto Rico, 2–4 May 2016.

98. Liang, Z.; Zhang, G.; Huang, J.X.; Hu, Q.V. Deep learning for healthcare decision making with EMRs. In Proceedings of the IEEE International Conference on Bioinformatics and Biomedicine, Belfast, UK, 2–5 November 2014; pp. 556–559.

| Notation | Definition |
|---|---|
| x | Samples |
| y | Outputs |
| v | Visible vector |
| h | Hidden vector |
| q | State vector |
| W | Matrix of weight vectors |
| M | Total number of units for the hidden layer |
|  | Weights vector between hidden unit and visible unit |
|  | Binary state of a vector |
|  | Binary state assigned to unit i by state vector q |
| Z | Partition factor |
|  | Biased weights for the j-th hidden units |
|  | Biased weights for the i-th visible units |
|  | Total i-th inputs |
|  | Visible unit i |
|  | Weight vector from the k-th unit in the hidden Layer 2 to the j-th output unit |
|  | Weight vector from the j-th unit in the hidden Layer 1 to the i-th output unit |
|  | Matrix of weights from the j-th unit in the hidden Layer 1 to the i-th output unit |
|  | Energy of a state vector q |
|  | Activation function |
|  | Probability of a state vector q |
|  | Energy function with respect to visible and hidden units |
|  | Probability distribution with respect to visible and hidden units |

| Data Acquisition Technique | Data Type | Deep Learning Technique |
|---|---|---|
| Wearables | Image | CNN [65,69,70], DBN [66,67], RBM [68] |
|  | Signal | CNN [71,72,73] |
|  | Video | CNN [74,75,76] |
| Probes | Signal | RNN [77] |
| Crowd-sensing | Image | BM [83], CNN [84] |

| Application | Problem | Deep Learning Techniques | References |
|---|---|---|---|
| Medical Imaging | Neural Cells Classification | CNN | [65] |
|  | 3D Brain Reconstruction | Deep CNN | [90] |
|  | Brain Tissue Classification | DBN | [67,68] |
|  | Tumour Detection | DNN | [65,66] |
|  | Alzheimer’s Diagnosis | DNN | [91] |
| Bioinformatics | Cancer Diagnosis | Deep Autoencoder | [92] |
|  | Gene Classification | DBN | [93] |
|  | Protein Slicing | DBN | [94,95] |
| Predictive Analysis | Disease Prediction and Analysis | Autoencoder | [96] |
|  |  | RNN | [97] |
|  |  | CNN | [97,98] |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Obinikpo, A.A.; Kantarci, B. Big Sensed Data Meets Deep Learning for Smarter Health Care in Smart Cities. J. Sens. Actuator Netw. 2017, 6, 26. https://doi.org/10.3390/jsan6040026
