1. Introduction

The number of older people living alone and in need of care has grown into one of the great societal challenges of the most developed countries (e.g., Europe, USA, Japan, Australia). Indeed, high-income countries have the oldest population profiles, with more than 20% of the population predicted to be over 65 in 2050, when the number of citizens older than 80 will be triple that of today. This is a challenge for social care systems, which are already struggling to meet the demand for assistance for vulnerable adults because of budget limitations and the difficulty of recruiting new skilled workers. Socially assistive robots are increasingly seen as one possible new technology able to address human resource and economic pressures on social care systems. Indeed, scientific research is increasingly providing evidence of their possible successful application, which is driving the development of robotic platforms focused on services for ageing well, with the specific aim of supporting and accompanying elderly users. For instance, recent research, e.g., [1,2,3], shows that humanoid robots can be successfully employed in health-care environments thanks to their ability to provide personalized treatments by making use of adaptable social skills.

A promising area yet to be fully explored is the application of robotics in the diagnostic process. To this end, preliminary studies have found that humanoid social robots may support professionals in the detection of initial signs of impairment and can also be used at patients’ homes. The possibility of using them outside the clinic makes it possible to reach more patients and thus allows large-scale screenings. For this reason, various researchers have begun to investigate this new field of application for robotics, showing promising initial results. Humanoid social robots have been used, in fact, to improve Autistic Spectrum Disorder (ASD) diagnosis in children [4] or to administer Patient-Reported Outcome measurement questionnaires to senior adults [5]. The success of this approach can be favoured by the preference for interacting with a humanoid social robot rather than a non-embodied computer screen, as found in young children and older adults [6,7]. Users are more likely to consider human-robot interaction satisfying when the robot can exhibit human-like behaviours [7,8]; indeed, when people interact with an embodied physical agent, they are typically more engaged and motivated by the interaction than with other technologies [9]. These characteristics make social robots with human-like appearance and behaviours a viable solution for automatic psychometric evaluation. Most importantly, previous work [10,11] suggested that a robot-led cognitive assessment could have many advantages, such as test standardization and assessor neutrality [12], which are naturally guaranteed, and good usability [13], which should be achieved with a correct design of the interaction.

Preliminary results, presented in [10,12], showed the viability of robotic-led data collection and cloud AI evaluation for cognitive level screening. The studies found no statistically significant differences between the scores of two paper-and-pencil tests, the Montreal Cognitive Assessment (MoCA) and the Addenbrooke’s Cognitive Examination Revised (ACE-R), and those of a novel prototype of a robotic-led cognitive test administered by a social humanoid robot, the SoftBank Pepper. However, in this context, several factors that could affect Human-Robot Interaction (HRI) have to be accounted for, such as the user’s cognitive profile. To this end, it is critical to consider individual factors, such as attitude, when assessing people’s responses to the interaction with a social robot, because people may be naturally inclined to like or dislike robots as an entity, regardless of the actual interaction.

Building upon previous literature showing the effect of personality factors, e.g., personal innovativeness [14] and openness to experience [15], on the evaluation of and intention to use new technologies, in this work we further analyze the influence of personality traits both on the acceptance of robotic technology and on users’ cognitive test performance. Moreover, we investigate the impact that the subject’s ability to feel empathy has on both acceptance and performance. To this aim, we recruited a sample of senior adults, who volunteered to complete a robotic-led cognitive assessment test and several traditional paper-and-pencil tests: the Addenbrooke’s Cognitive Examination Revised (ACE-R) test [16]; the NEO Personality Inventory-3 (NEO-PI-3) [17]; the Empathy Quotient (EQ) [18]; the eye test [19]; and a questionnaire in Italian based on the constructs of the Unified Theory of Acceptance and Use of Technology (UTAUT). The paper-and-pencil tests were administered by a chartered psychologist and used to evaluate cognitive level, personality traits, empathy, and technology acceptance.

In summary, starting from our preliminary study in [12], which presented a psychometric assessment process supported by a social robot, we extend this work by (i) investigating the cognitive and personality factors that might influence the human-robot interaction and user performance with a fully autonomous robot working as a cognitive assessment tool with a population of 19 elderly participants; (ii) analyzing the influence of empathy both on the acceptance of robotic technology and on users’ cognitive test performance; (iii) analyzing possible changes in technology acceptance after the very first interaction with the robot.

Results showed that personality traits such as openness to experience, which the literature has shown to correlate with robot acceptance, also influence the user’s performance during psychometric tests. Moreover, empathy has an impact on user acceptance: it showed a positive correlation with the robot’s perceived sociability in the case of the EQ test, but a negative one in the case of the eye test, which may be due to the lack of facial expressions of the considered robot. Finally, after this first interaction with the robot, the perceived anxiety is reduced.

The rest of the paper is organised as follows: Section 2 introduces the background and some related work; Section 3 describes the materials used in this work, while Section 4 describes the methodology applied in our experiments. Section 5 presents and discusses the results. Finally, Section 6 gives our conclusions.

2. Background and Related Works

In the last decade, many social robotics studies have focused on the use of humanoid robots to support educational and psychological interventions with children [20] or elderly people [21]. In particular, in support of elderly people with dementia or Mild Cognitive Impairment (MCI), social robots have proved effective in facilitating independence and improving the well-being of both caregivers and senior people. People with MCI are generally more independent than those with dementia. However, they are at a higher risk than the general population of developing worse conditions if not adequately supported and constantly monitored [22].

Currently, the literature in assistive robotics that addresses psychometric evaluation is quite limited. This is probably due to the skepticism of practitioners [23] and to users’ perception of the reliability shown by robots [24]. However, an essential characteristic of psycho-diagnostic tests is that the stimuli and the methods for their administration should be rigorously standardized to guarantee the reliability (i.e., the repeatability at different times and places) and the validity of the results. We therefore argue that assistive robots can represent a valuable way to meet these requirements and provide a reliable automatic tool for psychometric assessment [10,11,12,13,25,26]. Additionally, not only is a psychometric test as reliable and valid as its stimuli are representative of the cognitive function or of the area of the personality that one wants to study [27], but the acceptability of robotic tools is also affected by individual users’ personality and psychological variables [28,29]. This suggests that, for a successful deployment of socially assistive robots [30] in this context, knowledge of personality factors is of paramount importance, since they can affect both reliability and acceptability.

The scientific literature increasingly suggests, in fact, that acceptance is related to the psychological variables of the individual, but also to the social and physical environment in which the robots operate [31,32,33]. These variables interact with each other to positively or negatively influence the acceptance of social robots [31,34,35]. For example, success factors for robots in the context of social care include being enjoyable and easy to use [36,37,38] and fulfilling their function without error [39,40]. For these reasons, to fully engage with a social robot, it is crucial that users feel at ease while interacting with it [23]. In contrast to the majority of studies, which focus on the design of the robot “personality” in terms of the physical and expressive characteristics that it should have [41,42] and its effects on human-robot interaction [43,44,45], we are mainly interested in the relationship between the users’ personality traits and the acceptability of the robots by the users, e.g., [12,46].

It is well established that personality traits act as antecedents to users’ attitudes and cognitive behaviours and, in turn, to their engagement with new technologies [47,48,49]. These factors have also been evaluated with respect to the use of computer tools or social networks [50], but less so in relation to the interaction with robotic devices [51]. In this paper, we propose the use of a robot as a psychometric tool, which represents a new context in this field, by considering the users’ personality factors that can affect its acceptability and reliability. Previous studies investigating the relationship between personality traits and computer acceptance typically consider the aspects of the “Five Factors Model” [17,52], which defines a five-dimensional structure for personality traits: extroversion, agreeableness, conscientiousness, emotional stability, and openness to experience. Current literature review papers in this field [53,54] state that additional personality factors (beyond the Big Five) may be necessary to capture how people differ qualitatively in their mental models of advanced, intelligent and autonomous robots. Accordingly, in this work we propose the use of the NEO Personality Inventory-3 test (NEO-PI-3) [17], which does not limit itself to investigating the five main personality traits but, for each of them, considers six facets, allowing a deeper understanding of the user profile. This greater level of detail allowed us to better grasp the subjective nuances (sub-factors) related to each dimension with respect to the state of the art.

Attitudes to social robots can be influenced not only by their ability to act upon things (mind agency) but also by their ability to feel (mind experience), which also influences the degree of emotional response that robots may evoke. For this reason, we also evaluate the emotional aspects of participants when interacting with the robot via the administration of the Empathy Quotient and the EQ-Eye Test.

We applied our robotic psychometric tool to a senior population; thus, we had to take into account that investigating the perception of robots by senior adults is not as simple as with young adults. Indeed, identifying unmet needs and expectations is usually more complicated with senior adults, who have reduced awareness of their own needs due to habituation and, more often, are unwilling to acknowledge limitations because of stigmatization or fear of losing independence [55]. This may have an impact on robot acceptability by older and disabled individuals. In general, before people have their first direct experience of robots, they form a mental model about them, which conditions their perception of the robot and the interaction with it. Mental models are influenced by past personal experience with technology and by second-hand sources of information external to the individual, such as information found on the internet, including social networks, the media (newspapers, magazines), and science fiction [56,57,58]. Previous studies in human-robot interaction reported evidence of how gender, education, age, and prior computer experience impact attitudes towards robots. In particular, Heerink [34] explored the influence of these variables on acceptance by senior adults and showed that participants with more education were less open to perceiving the robot as a social entity. Nomura et al. [59] found that different age groups adopted different approaches when learning how to use unfamiliar technologies: young people used trial and error, adults read instructions, whereas senior adults preferred to ask for help. Stafford et al. [60] investigated whether perceptions about the robot’s artificial mind can predict usage of the Healthbot robot and how these perceptions may affect attitudes towards robots. The results show that participants who attributed more autonomy to the robot were more cautious and less willing to use it, but their attitudes improved while interacting with the robot, as they realised its limited ability to think and remember. In this work, the attitudes toward robots and technology in general are evaluated by administering the UTAUT questionnaire before and after the interaction, to assess how the acceptance of such technology may vary after the very first interaction with a robot. For information about the UTAUT, see [61,62].

4. Methodology

The study followed a within-subjects design with two stages. This design allows testing the effects of the human-robot interaction by evaluating the same participant before and after the interaction with the robot. The advantage of a within-subjects design is that it requires fewer participants; however, it also required the use of two different psychometric instruments to avoid a learning effect for the participants, a typical issue of this approach.

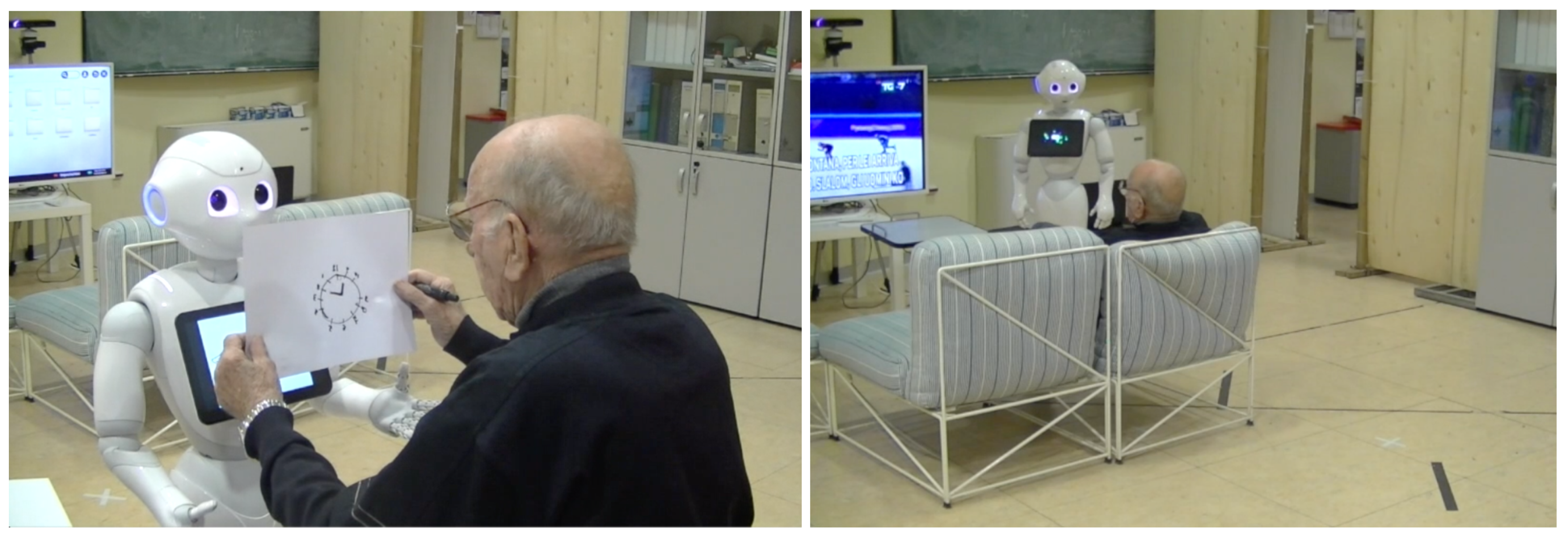

The robotic platform used in our experiments is the humanoid Pepper robot produced by SoftBank Robotics. Tests were performed in a large laboratory area, where common home furnishings were used to simulate a home environment (see Figure 1). The idea was to put the senior participants at ease by simulating a domestic environment, in view of evaluating the robotic platform for in-home applications.

4.1. Experimental Procedure

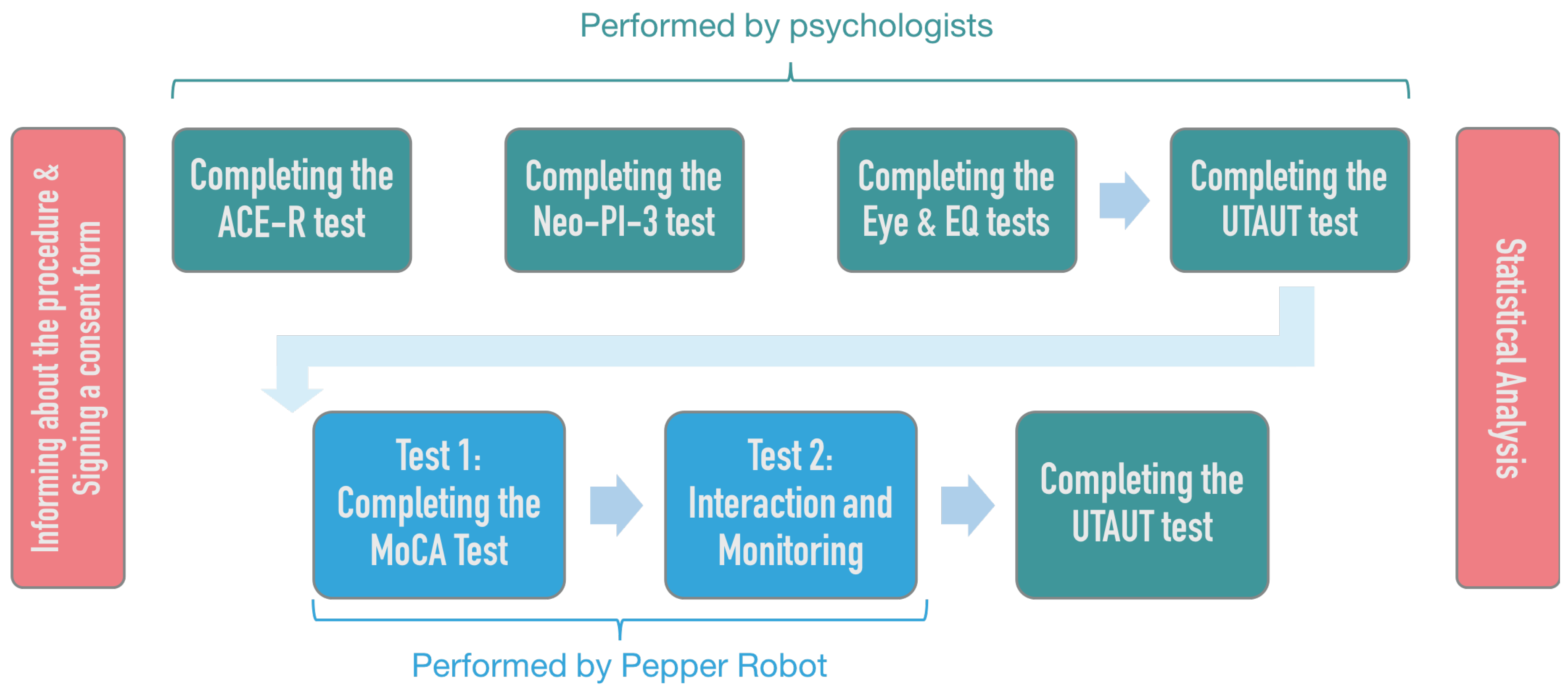

The different stages of the experiments are summarized in Figure 2. Upon arrival, participants were informed about the procedure and signed a consent form. In the first stage, with a human psychologist, the elderly participants were led to a first room, where the following were administered: (i) the Addenbrooke’s Cognitive Examination Revised (ACE-R); (ii) the NEO Personality Inventory-3 (NEO-PI-3); (iii) the Italian version of the “Reading the Mind in the Eyes” test; and (iv) the short Italian version of the Empathy Quotient test. The last two evaluate the emotional aspect of the theory of mind and various aspects of empathy, respectively. After completing the abovementioned tests and questionnaires, all participants filled in a questionnaire based on the UTAUT model. This questionnaire was also administered to participants at the end of the experiment to evaluate possible changes in acceptance before and after the physical and social interaction with the robot. The order of the administered tests is shown in Figure 2. It was the same for all subjects so as not to affect the performance evaluation. In particular, to avoid a possible effect of fatigue, the most complex cognitive tests were administered first.

After completing the psychological testing, participants were invited to enter the home-like environment (Figure 1) and then to interact with the humanoid robot Pepper. In the first task, Pepper engaged the participants to administer the different psychometric tasks of the MoCA test [26] and to record and store the users’ scores. The robotic administration of the MoCA is performed by providing verbal instructions for the tasks using text-to-speech and animated speech. To receive and analyze the user’s input, we rely on speech recognition and face tracking facilities, image analysis, and the robot’s tablet. See Figure 1 (left) for an example.

In the second task with Pepper (Test 2), the subject was asked to perform different activities in the home environment, while the robot from time to time approached the user to monitor his/her behavior (but without any interaction with him/her). The results of the second test can be found in [67].

Screenshots of the first and the second tasks with the robot are shown in Figure 3 on the right. At the end of the interaction with the robot, the UTAUT questionnaire was administered by the psychologists to evaluate the level of technology acceptance by the participants after the physical and social interaction with the robot.

To allow the experimenter to monitor the tests without interfering with the user-robot interaction, two cameras were positioned within the testing environment, enabling both real-time monitoring (giving the experimenter the possibility to intervene in case of problems) and the storage of video recordings for deeper subsequent analysis. Additionally, Pepper took photos during the drawing tasks of the MoCA and produced a dialogue file with the transcription of the verbal conversation between the robot and the participants. In this way, at the end of the experiments, psychologists were able to review the automatic score computed by the robot with the support of the additional data collected by the robot (recordings, photos, transcriptions of the administrations), thus providing the actual score achieved by the user.

4.2. Participants

A total of 21 native Italian-speaking senior adults were enrolled in this study and recruited following the ethical guidelines of the Department of Psychology of the University of Campania “Luigi Vanvitelli”. All the participants signed a consent form. Among these, 19 subjects (8 females, 11 males) completed, in separate sessions, both the traditional paper-and-pencil cognitive and personality evaluations and the robotic-led cognitive assessment. Participants’ average age was 61 years (range = 53–82, standard deviation = 7), while the average number of years spent in education was 12 (range = 8–18, standard deviation = 4).

4.3. Statistical Analysis

The SPSS software version 24 was used to analyse the data and calculate the statistics.

The statistical analysis comprises the usual descriptive statistics (mean, minimum, maximum, standard deviation) for the scores of the psychometric (paper-and-pencil and robotic-led) tests. For deeper analysis, we calculated the correlations among the variables. Spearman’s or Pearson’s correlation was chosen according to the type of data and the shape of its distribution. Once correlations were found among variables, regression analysis was used to confirm the predictive role of one factor (predictor variable) on another (dependent variable).
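
As an illustration of the rank-based correlation mentioned above, the following minimal Python sketch computes Spearman’s coefficient as Pearson’s correlation applied to average ranks. It is not the SPSS procedure used in the study: the function names (`pearson`, `average_ranks`, `spearman`) and the sample values are hypothetical and serve only to show the computation.

```python
from math import sqrt
from statistics import mean


def pearson(x, y):
    """Pearson's r between two equal-length numeric sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson's r computed on the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Made-up illustrative values, NOT the data collected in this study.
openness = [42, 55, 38, 61, 47, 50]
auto_score = [11, 15, 9, 18, 12, 16]
print(round(spearman(openness, auto_score), 3))  # 0.943
```

Because the coefficient depends only on ranks, it is less sensitive than Pearson’s r to the shape of the score distribution, which is why it is a common choice for ordinal or non-normal data.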

Furthermore, we calculated the Cronbach’s Alpha (CA) coefficient, which estimates the internal consistency of a composite score. This coefficient is considered a measure of reliability, which is important to confirm the use of constructs as a single entity, as in the case of the UTAUT. Paired t-tests were performed to verify whether there was a significant difference in the UTAUT scores before and after the human-robot interaction. The t-test was chosen because it remains applicable with small samples, and its validity was supported by the approximately normal distribution of the differences between the paired pre- and post-interaction scores, the type of variable, and the absence of outliers in the differences between the two dependent samples.

5. Results Analysis

In this section, we present and analyse the results of our tests, first considering the impact of personality on the scores of the psychometric tests (both paper-and-pencil and robot-administered). We then analyze these values in relation to the UTAUT results before and after the interaction with the robot. Finally, we discuss the effect of empathy.

5.1. Descriptive Statistics: NEO-PI-3, Empathy, and Eye Test

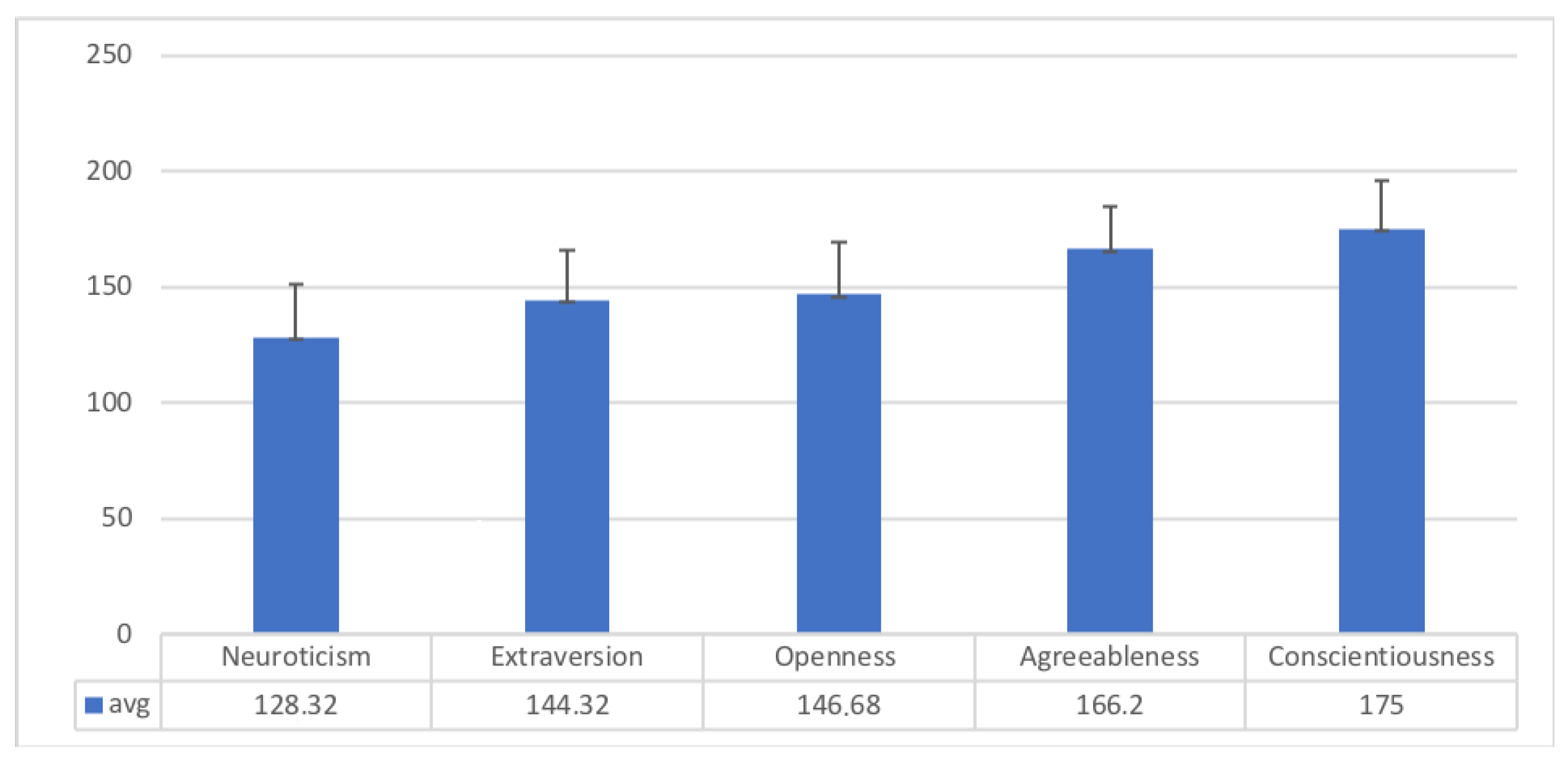

Figure 4 shows the average global scores of the NEO-PI-3 personality factors. Table 1 reports the mean and standard deviation of the sub-factors for each personality dimension. The average score of the Eyes test is 22.67 (range = 16–29; SD = 3.49) and the average EQ score is 45.14 (range = 35–61; SD = 8.44).

5.2. Descriptive Statistics: ACE-R and Robotic Assessment

The paper-and-pencil administration of the ACE-R resulted in a mean total score of 90.42 (range = 78–96; SD = 4.17). The automatic scoring of the robotic test gave a global mean score of 13.74 (range = 7–20; SD = 3.43). After the professional revision of the data collected by the robot, this increased to 21.16, the mean supervised global score for the robotic cognitive assessment (range = 13–27; SD = 3.98). Table 2 shows the supervised and automatic scores for each subtest.

5.3. Correlations among Cognitive Scores and Personality Domains

The relationships between traditional/robotic cognitive assessments and NEO-PI-3 personality domains were investigated via Spearman correlations (see Table 3). The only strong and significant relationship was found between the openness to experience dimension and the robotic automatic score (ρ = 0.58; p < 0.01). Going deeper, openness to experience strongly and significantly correlated with the robotic automatic language sub-test (ρ = 0.52; p < 0.05). In addition, the automatic score positively correlated with the sub-factors of openness, specifically “fantasy” (r = 0.51; p < 0.05), “ideas” (r = 0.50; p < 0.05) and “values” (r = 0.67; p < 0.01). This positive association between openness to experience and the cognitive test performance on the robot-assisted MoCA might indicate that this specific trait can make a relevant contribution to increasing the performance of elderly people when a neuro-psychological test is administered by a humanoid robot. This finding could be further explained considering that openness is a personality domain related to the tendency to be receptive to new ideas and experiences. Indeed, social and physical interaction with a social robot may represent a novel experience for elderly participants. In other words, the correlation between the automatic scoring and openness to experience suggests that a positive attitude toward the novel technology may facilitate the unsupervised application of the robotic instrument for cognitive assessment. Moreover, this highlights the importance of effectively designing the multi-modal interfaces of the robot for the elderly, because this could not only facilitate those who are not inclined to use the social robot, but also favour those who want to use it. The present results might also suggest that better performance on cognitive tests is related to the tendency to have a vivid and active imagination, which contributes to enriching one’s life, and to the development of new ideas, even unconventional ones.

Moreover, the robotic automatic attention subtest strongly and significantly correlates with extraversion (r = 0.63; p < 0.01), and the robotic automatic naming subtest with neuroticism (r = 0.47; p < 0.05). No other significant correlations involving personality factors were found.

Linear Regression Analysis

We performed a linear regression analysis to confirm the relationship between personality domains and the robotic automatic score. As shown in the previous section, openness to experience was the only factor that significantly correlated with the robotic automatic score. Therefore, we considered this domain as the only independent variable (predictor) for the regression analysis, with the automatic score of the robot-led cognitive assessment as the dependent variable; the resulting relation is shown in Table 4. The regression analysis supports the hypothesis that openness to experience could facilitate the interaction with the robot and therefore improve the accuracy of the automatic score calculated without human supervision.
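
A regression with a single predictor, as described above, can be sketched as an ordinary least-squares fit of a straight line. The snippet below is an illustrative Python sketch under that assumption, not the SPSS analysis behind Table 4; the function `simple_ols` and the toy values are hypothetical and introduced here only for illustration.

```python
from statistics import mean


def simple_ols(x, y):
    """Least-squares fit of y = intercept + slope * x.

    Returns (slope, intercept, r_squared).
    """
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    # R^2 is the share of the variance of y explained by the fitted line.
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return slope, intercept, 1 - ss_res / ss_tot


# Toy values lying exactly on a line, NOT the study's data.
predictor = [1, 2, 3, 4]
outcome = [3, 5, 7, 9]
slope, intercept, r_squared = simple_ols(predictor, outcome)
print(slope, intercept, r_squared)
```

With one predictor, the coefficient of determination equals the square of Pearson’s correlation between predictor and outcome, so the regression and the correlation analysis describe the same linear association from two angles.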

5.4. UTAUT before and after the Interaction

First, we evaluated the reliability of the UTAUT questionnaire through Cronbach’s alpha analysis, computed for each of the 12 constructs. Then, we present the descriptive statistics (average, minimum, maximum, and standard deviation) of the UTAUT responses before and after the interaction with the robot. Finally, we computed the parametric paired t-test on the UTAUT scores collected before and after the interaction with the robot. The test compares means from the same individuals at two different times (e.g., pre-test and post-test, with the intervention of the robotic psychometric tool between the two time points). The purpose of the test is to determine whether there is statistical evidence that the mean difference between paired observations on a particular outcome is significantly different from zero. Additionally, we analyzed the possible correlation between the UTAUT and the EQ-Eye Test to evaluate the emotional aspects of participants when interacting with the robot.

5.4.1. Cronbach Alpha (CA) Analysis on the UTAUT Sub-Scores (before and after the Tests)

Before starting the analysis of the collected data, we computed the CA associated with the subscales of the UTAUT test performed by the participants before and after the interaction with the robot (see Table 5). The estimated CA was 0.946, i.e., the reliability of the composite score combining the 41 items submitted to the analysis, which correspond to the 41 UTAUT questions answered by participants before the interaction with the robot, was about 95%. In other words, about 95% of the variance of the composite score can be considered true score variance, that is, internally consistent and reliable.

The CA computed on the sub-scores of the UTAUT questionnaire filled in by the participants after the interaction with the robot shows a similarly high reliability: the CA was equal to 0.938.
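
For reference, Cronbach’s alpha for k items is commonly computed as k/(k − 1) multiplied by one minus the ratio between the sum of the item variances and the variance of the total score. The following Python sketch implements this standard formula; the function name `cronbach_alpha` and the toy item scores are assumptions made for illustration, and are not the UTAUT data or the SPSS routine used in the study.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of items.

    `items` holds one list per questionnaire item, each containing
    one score per participant (same participant order in every list).
    """
    k = len(items)
    item_variance_sum = sum(variance(scores) for scores in items)
    # Total score of each participant across all items.
    totals = [sum(person) for person in zip(*items)]
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Toy scores for 3 items and 4 participants, NOT the study's data.
toy_items = [
    [4, 3, 5, 2],
    [4, 2, 5, 3],
    [5, 3, 4, 2],
]
alpha = cronbach_alpha(toy_items)
print(round(alpha, 3))  # 0.892
```

Values close to 1 indicate that the items vary together across participants, which is what justifies summing them into a single composite score.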

5.4.2. Descriptive Statistics: UTAUT Scores before and after the Interaction

The average UTAUT construct scores and their differences (before and after the interaction) are shown in Table 6. In general, participants considered the robotic platform enjoyable and easy to use (high values for PAD and PENJ). They had a fairly positive perception of the main characteristics of the robot, such as adaptability, sociability (PS, SP), and usefulness, while the sense of trust and the social influence of the robot received lower scores. In addition, the intention to use the technology and the facilitating conditions received lower scores. This could be due to the fact that participants were not at all familiar with robotic applications. Finally, the subjects showed moderate anxiety (ANX) towards the robot before the interaction, and the ANX score increased slightly after the interaction. Note that an increased ANX score corresponds to a decrease in perceived anxiety, since this item is reverse-scored.
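
Reverse scoring, as used for the ANX construct, can be illustrated with a short Python sketch. The response scale is not stated in this section, so the 1 to 5 Likert range below is purely an assumption, as is the helper name `reverse_score`.

```python
def reverse_score(raw, low=1, high=5):
    """Reverse a Likert response so that high raw values become low ones.

    The default 1-5 range is an assumption made for illustration only.
    """
    if not low <= raw <= high:
        raise ValueError(f"response {raw} is outside the {low}-{high} scale")
    return low + high - raw


# A raw answer of 4 ("rather anxious") becomes 2 after reversal, so a
# higher reversed score means lower perceived anxiety.
print(reverse_score(4))  # 2
```

Under this convention, an increase in the reversed ANX score after the interaction is read as a reduction of the anxiety reported by the participant.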

5.4.3. t-Test on the UTAUT Scores before and after the Interaction with the Robot

This analysis aims at investigating whether the interaction with the robot affected the UTAUT results. Specifically, we adopted a paired-samples t-test on the UTAUT items collected before and after the HRI in order to observe whether the mean value of the UTAUT items computed before the interaction with the robot is statistically significantly different from that of the items computed after the interaction.
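
The statistic of a paired-samples t-test is the mean of the per-participant differences divided by its standard error. The Python sketch below computes it with the standard library only. It assumes that differences are taken as before minus after, so that a score that increases after the interaction yields a negative t; the function name `paired_t` and the toy scores are hypothetical, and the p-value (obtained from the t distribution with n − 1 degrees of freedom) is left out.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(before, after):
    """Return (t statistic, degrees of freedom) for paired samples.

    Differences are computed as before - after (an assumed convention).
    """
    diffs = [b - a for b, a in zip(before, after)]
    n = len(diffs)
    standard_error = stdev(diffs) / sqrt(n)
    return mean(diffs) / standard_error, n - 1


# Toy scores for five participants, NOT the study's data.
toy_before = [3, 3, 4, 2, 3]
toy_after = [4, 3, 5, 3, 3]
t, df = paired_t(toy_before, toy_after)
print(round(t, 3), df)  # -2.449 4
```

The test relies on the differences being approximately normally distributed and free of outliers, which matches the conditions checked in the statistical analysis described in Section 4.3.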

The results of the t-test are summarized in Table 6. There were no significant differences between the UTAUT scores before and after the HRI test, except for the Anxiety (ANX) score, which increased after the interaction with the robot, and, marginally, the Perceived Adaptability (PAD) of the robot from the user’s point of view, which decreased after the interaction. In particular, we observed a significant difference (t = −2.154, p < 0.05) between the ANX scores before and after the interaction, and a marginal, not statistically significant difference (t = 2.010, p = 0.058) between the PAD scores before and after the interaction with the robot.

5.4.4. Correlations among UTAUT Constructs and Cognitive Scores

Table 7 reports the correlations among the UTAUT constructs and the cognitive scores, which showed a significant moderate correlation between the supervised evaluation score for visuospatial capabilities and anxiety. This could indicate that a high level of anxiety when using a novel system could increase user attention and improve cognitive test performance. Indeed, the literature [68] shows that a state of moderate anxiety does not have a negative impact on cognition in elderly people, but rather can improve the selectivity of attention [69]. In practice, the interaction with a robot was an unusual and novel situation for elderly participants, who could have had some performance anxiety before the beginning of the interaction, which decreased by the end, as shown by the slight increase in the ANX construct of the UTAUT. This could also have facilitated the selectivity of attention [70] on visuospatial tasks.

Of interest is the inverse correlation between trust and the supervised evaluation of attention. This might indicate that elderly people with good attention capabilities may tend not to follow the instructions of the robot. Moreover, a moderate correlation between the supervised evaluation of delayed recall (DR) and the intention to use (ITU) suggests that senior people with higher memory performance could be more willing to use the robot system over a longer period. This more positive intention of the participants might be associated with the idea that the technology can improve their memory, stimulate the learning of new information and help people to preserve their mind against age-dependent decline, as previously noted by Tanaka et al. [71]. In addition, the social presence (SP) and the visuospatial automatic score (VS(A)) were inversely correlated; however, the automatic visuospatial scoring was highly affected by the robot’s low performance in recognizing the subjects’ drawings, so these results should be interpreted cautiously.

5.4.5. Correlation of UTAUT with EQ and Eye Test

We found a significant negative correlation between the score on the Eyes test and the Perceived Sociability (PS) subscale of the UTAUT recorded after the interaction with the robot (rho = −0.507; p = 0.019). This result might indicate that, after a direct social interaction with the robot, people with high levels of emotion recognition (evaluated by the Eyes test) perceive the robot as a machine that is neither social nor living. Moreover, note that the Pepper robot cannot express emotions through changes in its face and eyes, which could have had an impact on this result.

Conversely, we found significant positive correlations between the EQ score and the Perceived Sociability (PS) and Social Presence (SP) subscales of the UTAUT recorded after the interaction with the robot (rho = 0.492, p = 0.024; rho = 0.453, p = 0.039, respectively). These results suggest that people who perceived themselves as having high levels of empathy tended to perceive the robot as a social agent capable of performing sociable behavior and perceiving the person.

5.5. Limitations

This study has some limitations, the first of which is the small number of participants. However, this is a common limitation of studies that require older people to visit research labs to experience robotic systems.

The difference between the instrument administered by the psychologists (ACE-R) and the one administered by the robot (MoCA) could affect our results because of their different targets (ACE-R focuses on dementia, while MoCA more generally targets MCI). However, several comparative studies have found that they perform similarly in measuring the cognitive level, e.g., [72]. Moreover, using a different instrument that measures the same variable is a standard method for validating a new psychometric test [73]. Of course, the results are not expected to be identical; some differences, like those we observed in our analyses, are to be expected.

Finally, the Hawthorne effect, also known as the observer effect, whereby subjects alter their conduct because they are aware of being observed, may have affected the subjects’ performance during the tests as well as their evaluation of the interaction. However, by analyzing the subjects’ behaviors in the video, we noted a natural and relaxed attitude. Indeed, during our experiments, two participants cheated by writing down the words that should have been recalled later. On the one hand, this suggests that the Hawthorne effect does not influence all experimental conditions and participants; on the other hand, it draws attention to the need to investigate possible cheating behaviors during the robot assessment.

6. Conclusions

This article presented an approach to cognitive assessment that makes use of social robots to administer psychometric tests and collect the data for the assessment via a pre-programmed human-robot interaction. In particular, the investigation focused on the factors that might influence the human-robot interaction and user performance. The approach was tested in a simulated home environment by elderly participants (N = 19), who completed the same test (MoCA) both with the robot and with a human assessor, the latter in the standard paper-and-pencil modality. The participants also completed a personality test (NEO-PI-3) and the Eyes and EQ tests, and filled in a questionnaire aimed at investigating the UTAUT factors.

The analysis of the results showed that the Openness to Experience personality trait has a positive influence on the performance obtained during the interaction with the robot, and in particular on the automatic MoCA evaluation performed by the robot. Thus, while the interaction with a robot may represent a novel experience for elderly people, a high openness trait can facilitate the unsupervised application of the robotic instrument for cognitive assessment. Moreover, neuroticism and extraversion correlate with the automatic evaluation of the naming and attention tasks, respectively.

No statistically significant differences were found in the UTAUT evaluation before and after the interaction with the robot. The only exception is the anxiety construct, whose score slightly increases after this first and novel interaction with the robot, which corresponds to a decrease in the perceived anxiety after the interaction. Indeed, it has a moderate correlation with the evaluation of visuospatial capabilities. This could indicate that a high level of anxiety experienced before using a novel system could help cognitive test performance, but further investigation is required to assess the effect on long-term interaction.

As expected, empathy has an impact on user acceptance. In particular, the results of the Eyes and EQ tests both significantly correlate with the UTAUT results for the Perceived Sociability (PS) of the robot after the interaction. In detail, EQ scores directly correlated with Perceived Sociability and Social Presence, meaning that the users still perceived the robot as a social agent capable of performing social behaviors. However, Pepper’s inability to express emotions with its face probably caused the negative correlation between PS and the Eyes Test.

In conclusion, the results of the present study suggest designing a novel psychometric test that enhances the strengths and compensates for the weaknesses of using a social robot in cognitive assessment. This new test should be validated in new trials with larger samples and over a longer time, in order to provide further evidence and insights about the practical use of this new technological tool in the clinical context. Indeed, in future work, we will further explore the use of the most advanced AI Cloud services, which have been shown to improve the automatic scoring of the tests [10]. The use of cloud services will enable automated storage and remote analysis by doctors. This will save time for both patients and doctors and will increase the number of people who have access to mental health services.

Furthermore, future work should focus on a new psychometric instrument, created and tailored to be administered by social robots and evaluated by artificial intelligence technologies. To take full advantage of the opportunities offered by these novel technologies, the new system should be able to proactively engage the user, adapt to their technological level, and rely less on speech recognition (because it is not fully reliable at the moment). The novel instrument should be co-designed with experts, refined with user evaluation feedback, and then validated via clinical trials with longitudinal study designs (e.g., administration of the instrument every 6 months to monitor the subject’s cognitive condition). Comparisons with alternative computerised solutions should also be performed, considering user attitudes toward technologies along with cultural components and differences in social habits.