The Use of UTAUT and Post Acceptance Models to Investigate the Attitude towards a Telepresence Robot in an Educational Setting

Abstract

1. Introduction

2. Related Work

3. Materials and Methods

3.1. Technology Acceptance Model (TAM)

3.2. Post Acceptance Model

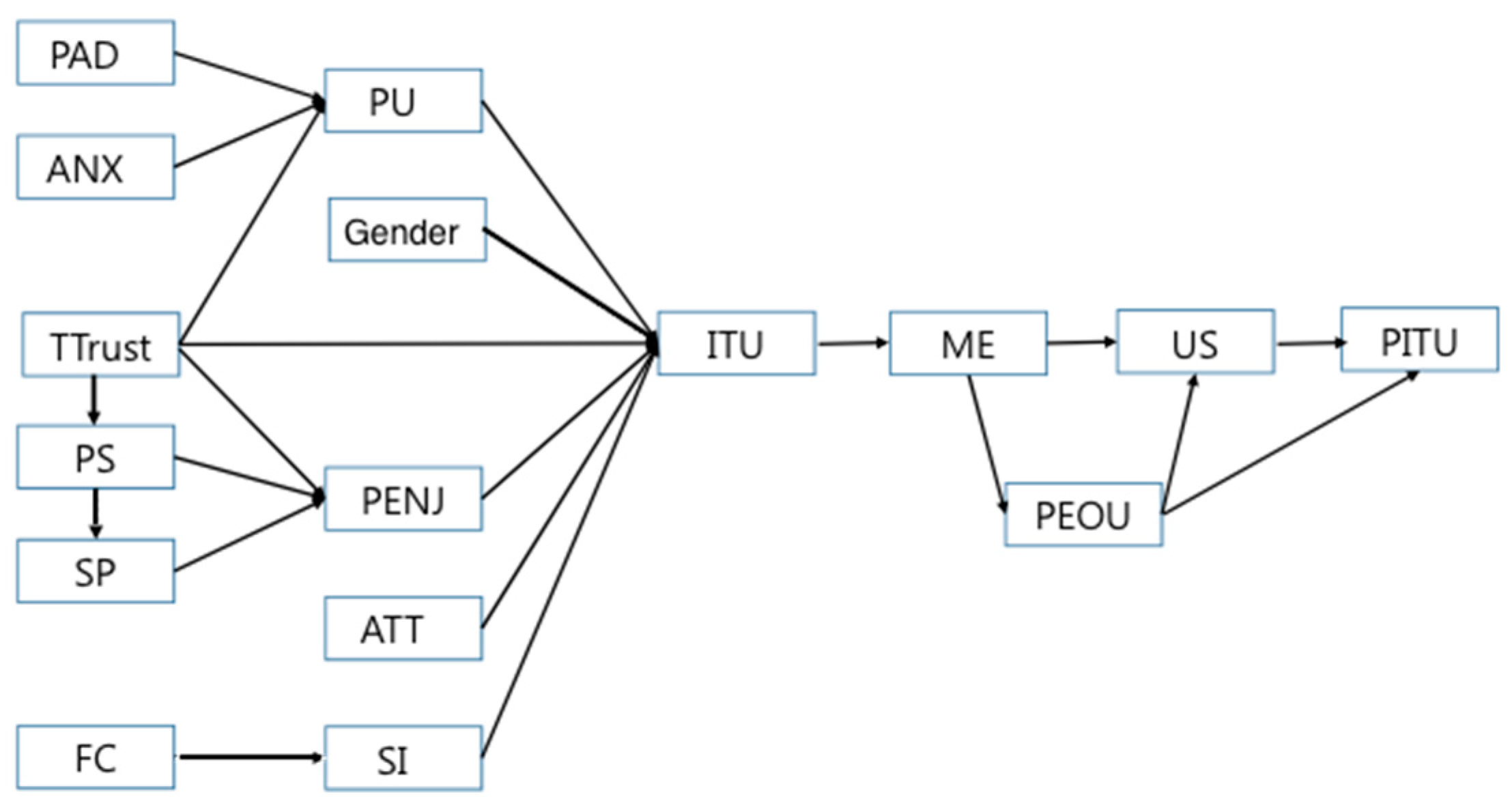

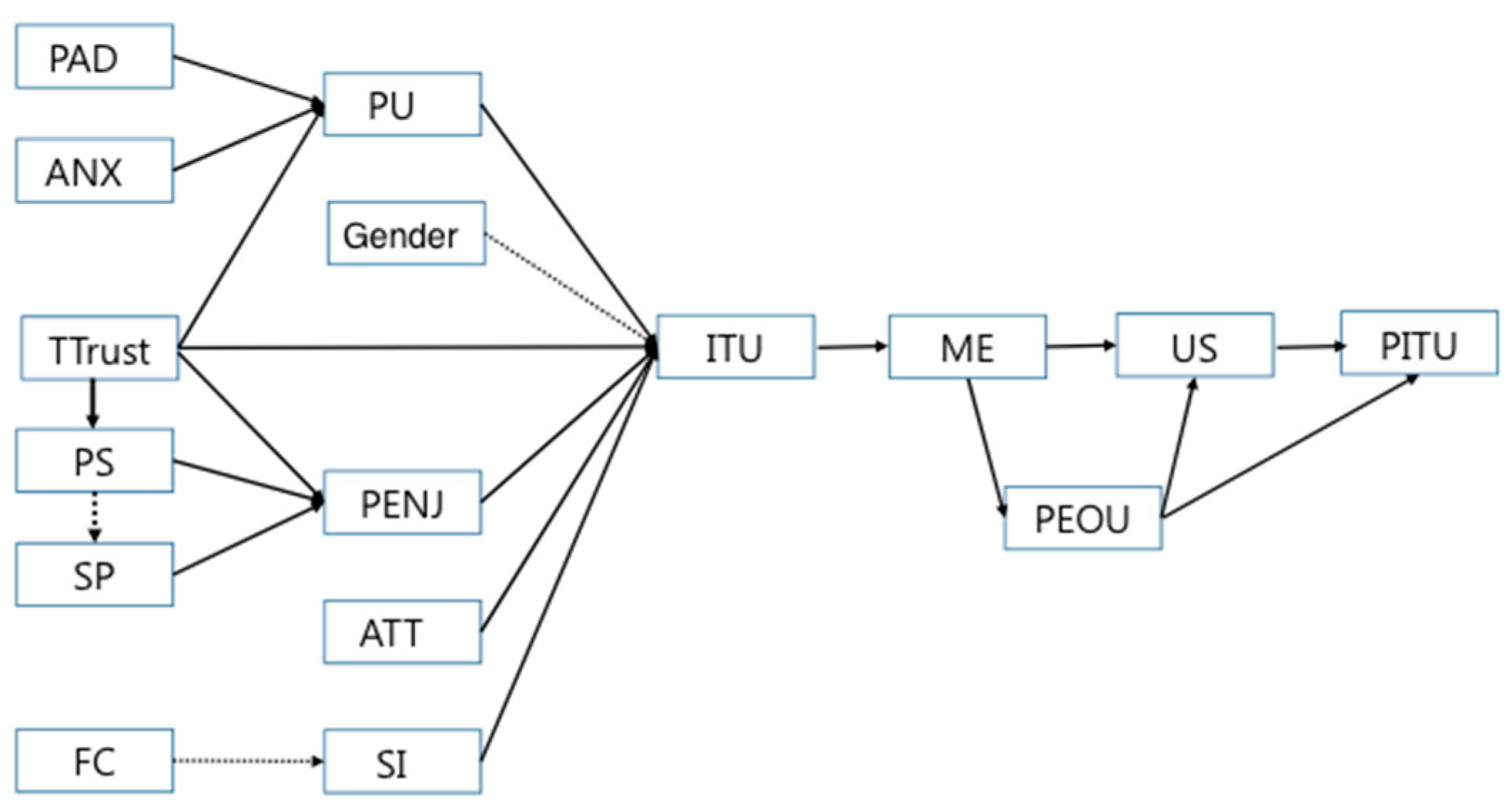

3.3. Overview of Construct Interrelations

- H1—Intention to use (ITU) is determined by (a) perceived usefulness (PU), (b) perceived enjoyment (PENJ), (c) attitude (ATT), (d) trust of technology (TTrust), (e) social influence (SI), and (f) gender.

- H2—Perceived usefulness (PU) is influenced by (a) perceived adaptability (PAD), (b) anxiety (ANX), and (c) trust of technology (TTrust).

- H3—Perceived enjoyment (PENJ) is influenced by (a) social presence (SP), (b) perceived sociability (PS), and (c) trust of technology (TTrust).

- H4—Perceived sociability (PS) is influenced by trust of technology (TTrust).

- H5—Social influence (SI) is influenced by facilitating conditions (FC).

- H6—Social presence (SP) is influenced by perceived sociability (PS).

- H7—Post-intention to use (PITU) is determined by (a) user satisfaction (US) and (b) perceived ease of use (PEOU).

- H8—User satisfaction (US) is influenced by (a) met expectation (ME) and (b) perceived ease of use (PEOU).

- H9—Perceived ease of use (PEOU) is influenced by met expectation (ME).

- H10—Met expectation (ME) is influenced by the intention to use (ITU).
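The ten hypotheses above describe a directed graph over the constructs, which makes it easy to check which constructs are hypothesised to sit upstream of a given outcome. The following is a minimal sketch of that reading, not part of the original study: the dictionary simply transcribes H1 to H10 as (predictors, outcome) pairs, and the helper names `build_graph` and `reachable` are illustrative.

```python
from collections import defaultdict

# Hypothesised paths transcribed from H1-H10: (predictors, outcome).
# "Gender" (H1f) is a demographic variable, not a questionnaire construct.
HYPOTHESES = {
    "H1": (["PU", "PENJ", "ATT", "TTrust", "SI", "Gender"], "ITU"),
    "H2": (["PAD", "ANX", "TTrust"], "PU"),
    "H3": (["SP", "PS", "TTrust"], "PENJ"),
    "H4": (["TTrust"], "PS"),
    "H5": (["FC"], "SI"),
    "H6": (["PS"], "SP"),
    "H7": (["US", "PEOU"], "PITU"),
    "H8": (["ME", "PEOU"], "US"),
    "H9": (["ME"], "PEOU"),
    "H10": (["ITU"], "ME"),
}


def build_graph(hypotheses):
    """Map each predictor to the set of outcomes it is hypothesised to influence."""
    graph = defaultdict(set)
    for predictors, outcome in hypotheses.values():
        for predictor in predictors:
            graph[predictor].add(outcome)
    return graph


def reachable(graph, start):
    """Return every construct downstream of `start` along hypothesised paths."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


if __name__ == "__main__":
    graph = build_graph(HYPOTHESES)
    # Constructs hypothesised to depend, directly or indirectly, on ITU.
    print(sorted(reachable(graph, "ITU")))  # ['ME', 'PEOU', 'PITU', 'US']
```

Read this way, H10 is the only link between the acceptance constructs (H1 to H6) and the post-acceptance constructs (H7 to H9): every path from a pre-use construct to PITU passes through ITU and ME.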

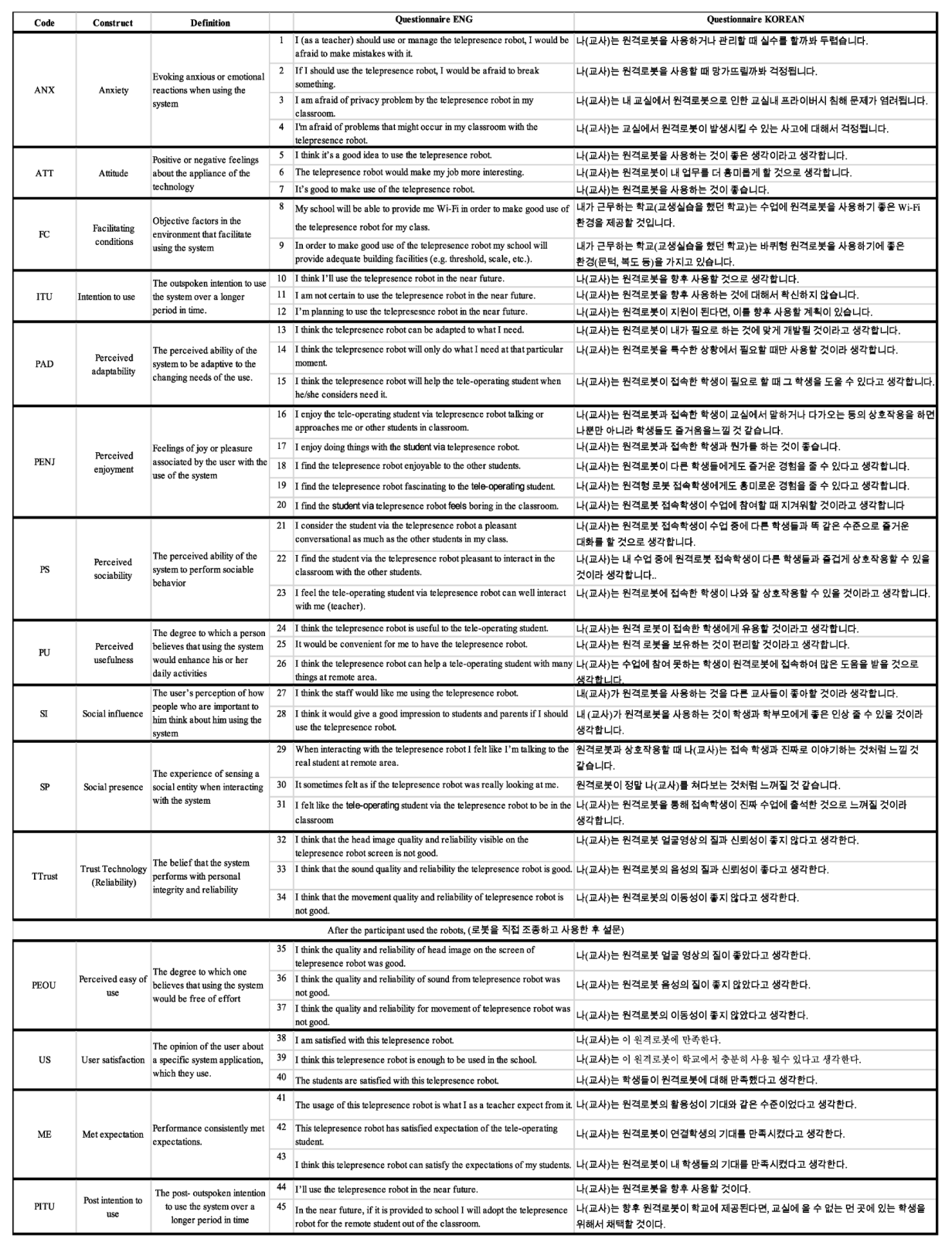

3.4. The Instrument

3.5. Experimental Setup

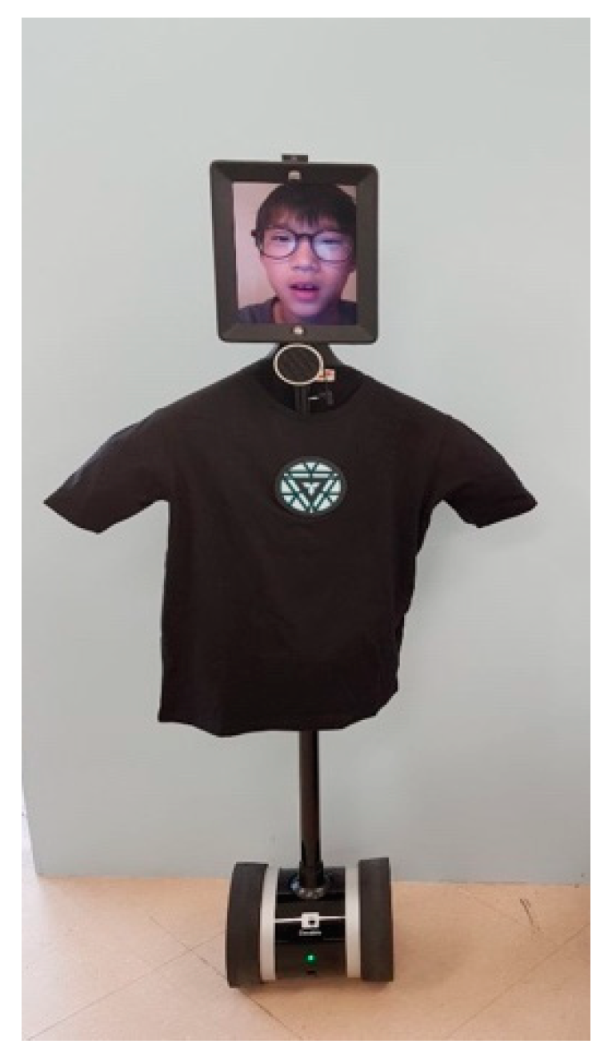

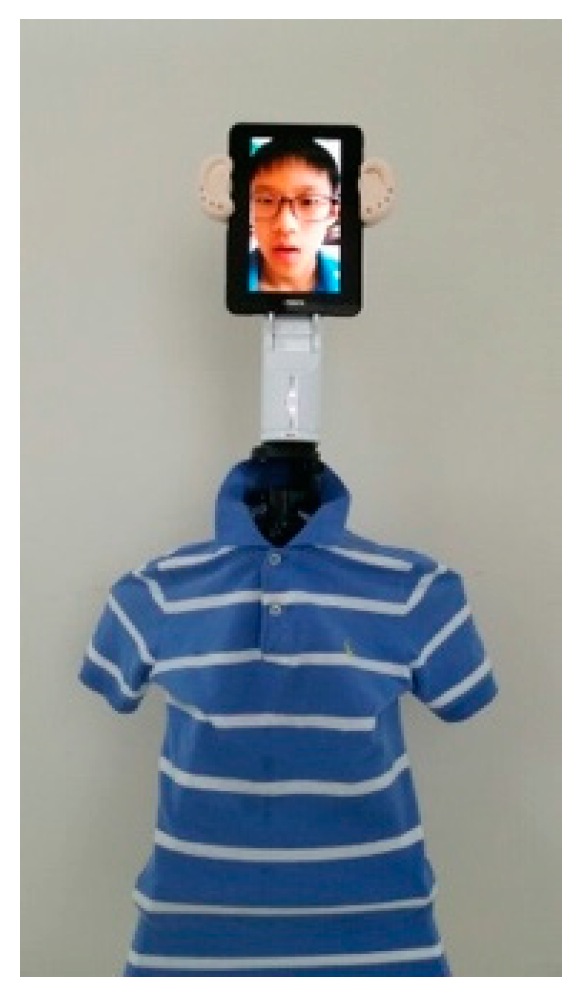

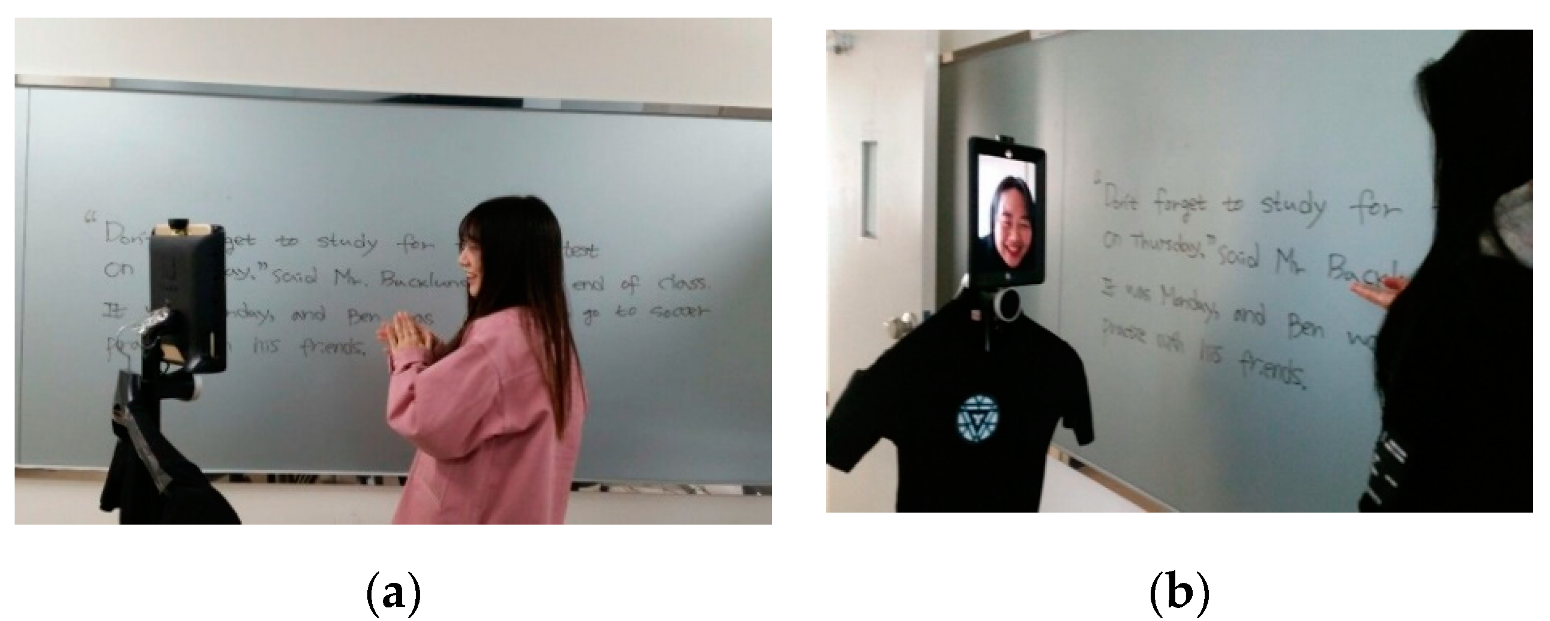

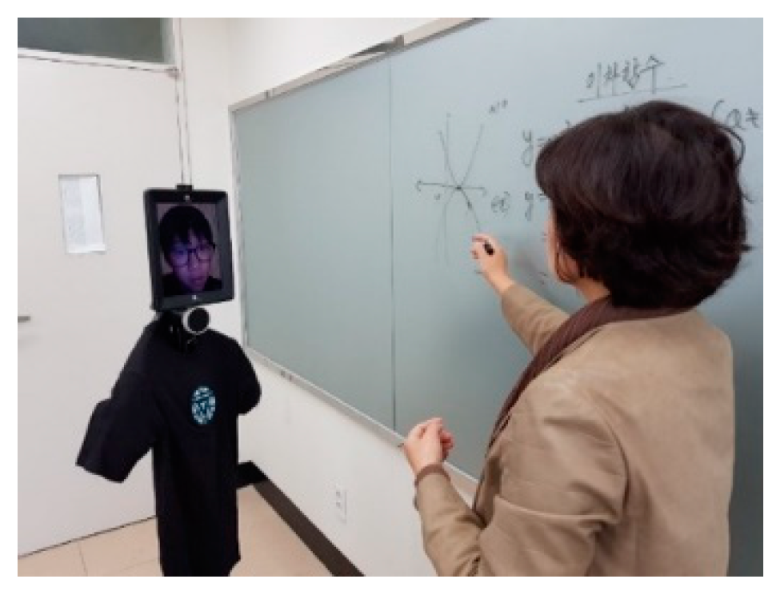

3.5.1. The Telepresence Robotic Platforms

3.5.2. Participants

3.5.3. Experimental Procedure

3.6. Data Analyses

4. Results

5. Discussion and Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Ethical Approval

Informed Consent

Appendix A

References

- ISO; DIS. 8373: 2012. Robots and Robotic Devices–Vocabulary; International Standardization Organization (ISO): Geneva, Switzerland, 2012.

- Feil-Seifer, D.; Mataric, M.J. Defining Socially Assistive Robotics. In Proceedings of the 9th International Conference on Rehabilitation Robotics, Chicago, IL, USA, 28 June–1 July 2005; pp. 465–468.

- Conti, D.; Cirasa, C.; di Nuovo, S.; di Nuovo, A. Robot, tell me a tale!: A Social Robot as tool for Teachers in Kindergarten. Interact. Stud. 2020, 21, 221–243.

- Conti, D.; di Nuovo, S.; Buono, S.; di Nuovo, A. Robots in education and care of children with developmental disabilities: A study on acceptance by experienced and future professionals. Int. J. Soc. Robot. 2017, 9, 51–62.

- Conti, D.; Cattani, A.; di Nuovo, S.; di Nuovo, A. Are Future Psychologists Willing to Accept and Use a Humanoid Robot in Their Practice? Italian and English Students’ Perspective. Front. Psychol. 2019, 10, 1–13.

- Venkatesh, V.; Morris, M.; Davis, G.; Davis, F. User acceptance of information technology: Toward a unified view. MIS Q. 2003, 27, 425–478.

- Bhattacherjee, A. Understanding information systems continuance: An expectation-confirmation model. MIS Q. 2001, 351–370.

- Whelan, S.; Murphy, K.; Barrett, E.; Krusche, C.; Santorelli, A.; Casey, D. Factors affecting the acceptability of social robots by older adults including people with dementia or cognitive impairment: A literature review. Int. J. Soc. Robot. 2018, 10, 643–668.

- Mubin, O.; Stevens, C.J.; Shahid, S.; al Mahmud, A.; Dong, J.-J. A Review of the Applicability of Robots in Education. Technol. Educ. Learn. 2013, 1, 13.

- Frey, C.B.; Osborne, M.A. The future of employment: How susceptible are jobs to computerisation? Technol. Forecast. Soc. Chang. 2017, 114, 254–280.

- European Commission. Special Eurobarometer 382: Public Attitudes towards Robots; European Commission: Brussels, Belgium, 2012.

- Taipale, S.; de Luca, F.; Sarrica, M.; Fortunati, L. Robot shift from industrial production to social reproduction. In Social Robots from a Human Perspective; Springer: Berlin/Heidelberg, Germany, 2015; pp. 11–24.

- European Commission. Special Eurobarometer 460: Attitudes towards the Impact of Digitisation and Automation on Daily Life; European Commission: Brussels, Belgium, 2017.

- Broadbent, E.; Stafford, R.; MacDonald, B. Acceptance of Healthcare Robots for the Older Population: Review and Future Directions. Int. J. Soc. Robot. 2009, 1, 319–330.

- Kanda, T.; Miyashita, T.; Osada, T.; Haikawa, Y.; Ishiguro, H. Analysis of humanoid appearances in human–robot interaction. IEEE Trans. Robot. 2008, 24, 725–735.

- Venkatesh, V.; Davis, F.D. A Theoretical Extension of the Technology Acceptance Model: Four Longitudinal Field Studies. Manag. Sci. 2000, 46, 186–204.

- Fishbein, M.; Ajzen, I. Belief, attitude, intention, and behavior: An introduction to theory and research; Contemporary Sociology; Addison-Wesley: Reading, MA, USA, 1975.

- Ajzen, I. Attitudes, traits, and actions: Dispositional prediction of behavior in personality and social psychology. In Advances in Experimental Social Psychology; Elsevier: Amsterdam, The Netherlands, 1987; Volume 20, pp. 1–63.

- Fabrigar, L.R.; Wegener, D.T. Attitude structure. In Advanced Social Psychology: The State of the Science; Oxford University Press: Oxford, UK, 2010; pp. 177–216.

- Wagner, W.; Kronberger, N.; Seifert, F. Collective symbolic coping with new technology: Knowledge, images and public discourse. Br. J. Soc. Psychol. 2002, 41, 323–343.

- Broadbent, E.; Tamagawa, R.; Kerse, N.; Knock, B.; Patience, A.; MacDonald, B. Retirement home staff and residents’ preferences for healthcare robots. In Proceedings of the RO-MAN 2009-The 18th IEEE International Symposium on Robot and Human Interactive, Toyama, Japan, 27 September–2 October 2009; pp. 645–650.

- Conti, D.; Commodari, E.; Buono, S. Personality factors and acceptability of socially assistive robotics in teachers with and without specialized training for children with disability. Life Span Disabil. 2017, 20, 251–272.

- Wu, Y.; Wrobel, J.; Cornuet, M.; Kerhervé, H.; Damnée, S.; Rigaud, A.-S. Acceptance of an assistive robot in older adults: A mixed-method study of human–robot interaction over a 1-month period in the Living Lab setting. Clin. Interv. Aging 2014, 9, 801.

- Heerink, M. Exploring the influence of age, gender, education and computer experience on robot acceptance by older adults. In Proceedings of the 2011 6th ACM/IEEE International Conference on Human-Robot Interaction (HRI), Lausanne, Switzerland, 8–11 March 2011; pp. 147–148.

- Scopelliti, M.; Giuliani, M.V.; Fornara, F. Robots in a domestic setting: A psychological approach. Univers. Access Inf. Soc. 2005, 4, 146–155.

- Nomura, T.; Sugimoto, K.; Syrdal, D.S.; Dautenhahn, K. Social acceptance of humanoid robots in Japan: A survey for development of the Frankenstein syndrome questionnaire. In Proceedings of the 2012 12th IEEE-RAS International Conference on Humanoid Robots (Humanoids 2012), Osaka, Japan, 29 November–1 December 2012; pp. 242–247.

- Arras, K.O.; Cerqui, D. Do We Want to Share Our Lives and Bodies with Robots? A 2000-People Survey; Technical Report; Swiss Federal Institute of Technology: Zurich, Switzerland, 2005; Volume 605.

- Gross, H.-M.; Schroeter, C.; Mueller, S.; Volkhardt, M.; Einhorn, E.; Bley, A.; Langner, T.; Merten, M.; Huijnen, C.; van den Heuvel, H.; et al. Further progress towards a home robot companion for people with mild cognitive impairment. In Proceedings of the 2012 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Seoul, Korea, 14–17 October 2012; pp. 637–644.

- de Graaf, M.M.; Allouch, S.B.; Klamer, T. Sharing a life with Harvey: Exploring the acceptance of and relationship-building with a social robot. Comput. Hum. Behav. 2015, 43, 1–14.

- Torta, E.; Werner, F.; Johnson, D.O.; Juola, J.F.; Cuijpers, R.H.; Bazzani, M.; Oberzaucher, J.; Lemberger, J.; Lewy, H.; Bregman, J. Evaluation of a small socially-assistive humanoid robot in intelligent homes for the care of the elderly. J. Intell. Robot. Syst. 2014, 76, 57–71.

- Olson, J.M.; Maio, G.R. Attitudes and Social Behavior. In Handbook of Psychology; Weiner, I.B., Ed.; John Wiley & Sons: New Jersey, NJ, USA, 2003; Volume 5.

- Savela, N.; Turja, T.; Oksanen, A. Social acceptance of robots in different occupational fields: A systematic literature review. Int. J. Soc. Robot. 2018, 10, 493–502.

- Cherry, C.O.; Chumbler, N.R.; Richards, K.; Huff, A.; Wu, D.; Tilghman, L.M.; Butler, A. Expanding stroke telerehabilitation services to rural veterans: A qualitative study on patient experiences using the robotic stroke therapy delivery and monitoring system program. Disabil. Rehabil. Assist. Technol. 2017, 12, 21–27.

- Holm, S.G.; Angelsen, R.O. A descriptive retrospective study of time consumption in home care services: How do employees use their working time? BMC Health Serv. Res. 2014, 14, 439.

- Benitti, F.B.V. Exploring the educational potential of robotics in schools: A systematic review. Comput. Educ. 2012, 58, 978–988.

- Tanaka, F.; Movellan, J.R.; Fortenberry, B.; Aisaka, K. Daily HRI evaluation at a classroom environment: Reports from dance interaction experiments. In Proceedings of the 1st ACM SIGCHI/SIGART Conference on Human-Robot Interaction, Salt Lake City, UT, USA, 2–3 March 2006; pp. 3–9.

- Chang, C.-W.; Lee, J.-H.; Wang, C.-Y.; Chen, G.-D. Improving the authentic learning experience by integrating robots into the mixed-reality environment. Comput. Educ. 2010, 55, 1572–1578.

- Reich-Stiebert, N.; Eyssel, F. Learning with educational companion robots? Toward attitudes on education robots, predictors of attitudes, and application potentials for education robots. Int. J. Soc. Robot. 2015, 7, 875–888.

- Scassellati, B.; Admoni, H.; Matarić, M. Robots for Use in Autism Research. Annu. Rev. Biomed. Eng. 2012, 14, 275–294.

- di Nuovo, A.; Conti, D.; Trubia, G.; Buono, S.; di Nuovo, S. Deep learning systems for estimating visual attention in robot-assisted therapy of children with autism and intellectual disability. Robotics 2018, 7, 25.

- Rogers, E. Diffusion of Innovations, 5th ed.; Free Press: New York, NY, USA, 2003.

- Davis, F. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q. 1989, 13, 319–340.

- Ajzen, I. The theory of planned behavior. Organ. Behav. Hum. Decis. Process. 1991, 50, 179–211.

- Festinger, L. A Theory of Cognitive Dissonance; Stanford University Press: Redwood City, CA, USA, 1957; Volume 2.

- Davis, F.D.; Bagozzi, R.P.; Warshaw, P.R. User acceptance of computer technology: A comparison of two theoretical models. Manag. Sci. 1989, 35, 982–1003.

- Taylor, S.; Todd, P.A. Understanding information technology usage: A test of competing models. Inf. Syst. Res. 1995, 6, 144–176.

- Bhattacherjee, A. An empirical analysis of the antecedents of electronic commerce service continuance. Decis. Support Syst. 2001, 32, 201–214.

- Oliver, R.L. A cognitive model of the antecedents and consequences of satisfaction decisions. J. Mark. Res. 1980, 17, 460–469.

- Oliver, R.L.; Linda, G. Effect of satisfaction and its antecedents on consumer preference and intention. Adv. Consum. Res. 1981, 8, 88–93.

- Tse, D.K.; Wilton, P.C. Models of consumer satisfaction formation: An extension. J. Mark. Res. 1988, 25, 204–212.

- Hunt, H.K. CS/D-overview and future research directions. In Conceptualization and Measurement of Consumer Satisfaction and Dissatisfaction; Marketing Science Institute: Cambridge, MA, USA, 1977; pp. 455–488.

- Heerink, M.; Kröse, B.; Evers, V.; Wielinga, B. Assessing acceptance of assistive social agent technology by older adults: The Almere model. Int. J. Soc. Robot. 2010, 2, 361–375.

- Churchill, G.A., Jr.; Surprenant, C. An investigation into the determinants of customer satisfaction. J. Mark. Res. 1982, 19, 491–504.

- Oliver, R.L. Cognitive, affective, and attribute bases of the satisfaction response. J. Consum. Res. 1993, 20, 418–430.

- Zmud, R.W. Diffusion of modern software practices: Influence of centralization and formalization. Manag. Sci. 1982, 28, 1421–1431.

- Kwon, T.H.; Zmud, R.W. Unifying the fragmented models of information systems implementation. In Critical Issues in Information Systems Research; Wiley: Hoboken, NJ, USA, 1987; pp. 227–251.

- Cooper, R.B.; Zmud, R.W. Information technology implementation research: A technological diffusion approach. Manag. Sci. 1990, 36, 123–139.

- Venkatesh, V. Determinants of Perceived Ease of Use: Integrating Control, Intrinsic Motivation, and Emotion into the Technology Acceptance Model. Inf. Syst. Res. 2000, 11, 342–365.

- Moore, G.C.; Benbasat, I. Development of an instrument to measure the perceptions of adopting an information technology innovation. Inf. Syst. Res. 1991, 2, 192–222.

- Venkatesh, V.; Morris, M.G. Why don’t men ever stop to ask for directions? Gender, social influence, and their role in technology acceptance and usage behavior. MIS Q. 2000, 115–139.

- Vallerand, R.J. Toward a hierarchical model of intrinsic and extrinsic motivation. In Advances in Experimental Social Psychology; Elsevier: Amsterdam, The Netherlands, 1997; Volume 29, pp. 271–360.

- Davis, F.D.; Bagozzi, R.P.; Warshaw, P.R. Extrinsic and intrinsic motivation to use computers in the workplace. J. Appl. Soc. Psychol. 1992, 22, 1111–1132.

- Han, J.-H. UTAUT Model of Pre-service Teachers for Telepresence Robot-Assisted Learning. J. Creat. Inf. Cult. 2018, 4, 95–102.

- Tay, B.; Jung, Y.; Park, T. When stereotypes meet robots: The double-edge sword of robot gender and personality in human–robot interaction. Comput. Hum. Behav. 2014, 38, 75–84.

- Robert, L.; Alahmad, R.; Esterwood, C.; Kim, S.; You, S.; Zhang, Q. A Review of Personality in Human–Robot Interactions. Found. Trends Inf. Syst. 2020, 4, 107–212.

- Han, J. Emerging technologies: Robot assisted language learning. Lang. Learn. Technol. 2012, 16, 1–9.

- Han, J.; Jo, M.; Park, S.; Kim, S. The educational use of home robots for children. In Proceedings of the ROMAN 2005. IEEE International Workshop on Robot and Human Interactive Communication, Nashville, TN, USA, 13–15 August 2005.

- Hyun, E.; Kim, S.; Jang, S.; Park, S. Comparative study of effects of language instruction program using intelligence robot and multimedia on linguistic ability of young children. In Proceedings of the RO-MAN 2008-The 17th IEEE International Symposium on Robot and Human Interactive Communication, Munich, Germany, 1–3 August 2008; pp. 187–192.

| Code | Construct | No. Items [4] | No. Items HANCON | Cronbach’s Alpha |
|---|---|---|---|---|
| ANX | Anxiety | 4 | 4 | 0.626 |
| ATT | Attitude | 3 | 3 | 0.887 |
| FC | Facilitating Conditions | 2 | 2 | 0.509 |
| ITU | Intention To Use | 3 | 3 | 0.827 |
| PAD | Perceived ADaptability | 3 | 3→2 | 0.443→0.681 |
| PENJ | Perceived ENJoyment | 5 | 5 | 0.869 |
| PS | Perceived Sociability | 4 | 4 | 0.868 |
| PU | Perceived Usefulness | 3 | 3 | 0.701 |
| SI | Social Influence | 2 | 2 | 0.737 |
| SP | Social Presence | 5 | 3 | 0.803 |
| TTrust | Trust Technology (Reliability) | 2 | 3 | 0.619 |
| PEOU | Perceived Ease of Use | - | 3 | 0.610 |
| US | User Satisfaction | - | 3 | 0.878 |
| ME | Met Expectation | - | 3 | 0.901 |
| PITU | Post Intention To Use | - | 2 | 0.614 |
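The table above reports Cronbach's alpha per construct, and the PAD row shows alpha rising from 0.443 to 0.681 once the construct is reduced from three items to two. The sketch below shows the standard alpha formula, α = k/(k−1) · (1 − Σ item variances / variance of the summed score), together with an "alpha if item deleted" check of the kind that typically motivates dropping an item. The function names and the demo scores are hypothetical; the study's raw responses are not available here.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for one construct.

    `items` is a list of k columns, one per questionnaire item, each holding
    one score per respondent (same respondent order in every column).
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if any(len(column) != n for column in items):
        raise ValueError("every item needs one score per respondent")
    totals = [sum(scores) for scores in zip(*items)]  # summed score per respondent
    item_variance_sum = sum(variance(column) for column in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def alpha_if_item_deleted(items):
    """Alpha recomputed with each item left out in turn (needs k >= 3)."""
    if len(items) < 3:
        raise ValueError("need at least three items to drop one")
    return [cronbach_alpha(items[:i] + items[i + 1:]) for i in range(len(items))]


if __name__ == "__main__":
    # Hypothetical 5-point Likert scores for a three-item construct, 5 respondents.
    demo = [
        [1, 2, 3, 4, 5],
        [1, 2, 3, 4, 5],
        [5, 1, 4, 2, 3],  # an item that does not track the other two
    ]
    print(round(cronbach_alpha(demo), 3))
    print([round(a, 3) for a in alpha_if_item_deleted(demo)])
```

In the demo data the third item is unrelated to the first two, so leaving it out gives a higher alpha than keeping all three items, which is the pattern the PAD row shows.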

| Code | Average | Shapiro-Wilk Statistic | df | p-Value |
|---|---|---|---|---|
| ANX | 3.34 | 0.973 | 105 | 0.030 |
| ATT | 3.71 | 0.931 | 105 | <0.001 |
| FC | 3.05 | 0.966 | 105 | 0.008 |
| ITU | 3.36 | 0.967 | 105 | 0.009 |
| PAD | 3.38 | 0.966 | 105 | 0.008 |
| PENJ | 3.77 | 0.949 | 105 | 0.001 |
| PS | 3.33 | 0.943 | 105 | <0.001 |
| PU | 3.84 | 0.927 | 105 | <0.001 |
| SI | 3.32 | 0.907 | 105 | <0.001 |
| SP | 3.20 | 0.961 | 105 | 0.003 |
| TTrust | 3.24 | 0.973 | 105 | 0.028 |
| PEOU | 3.38 | 0.961 | 105 | 0.003 |
| US | 3.63 | 0.932 | 105 | <0.001 |
| ME | 3.63 | 0.918 | 105 | <0.001 |
| PITU | 4.05 | 0.902 | 105 | <0.001 |

| | ANX | ATT | ITU | PAD | PENJ | PS | PU | SI | SP | TTrust | PEOU | US | ME | PITU |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ANX | 1 | | | | | | | | | | | | | |
| ATT | −0.234 * | 1 | | | | | | | | | | | | |
| ITU | −0.250 | 0.673 *** | 1 | | | | | | | | | | | |
| PAD | −0.145 | 0.561 *** | 0.627 *** | 1 | | | | | | | | | | |
| PENJ | −0.207 * | 0.626 *** | 0.582 *** | 0.574 *** | 1 | | | | | | | | | |
| PS | −0.212 * | 0.594 *** | 0.564 *** | 0.452 *** | 0.695 *** | 1 | | | | | | | | |
| PU | −0.193 * | 0.595 *** | 0.583 *** | 0.633 *** | 0.642 *** | 0.605 *** | 1 | | | | | | | |
| SI | −0.121 | 0.509 *** | 0.504 *** | 0.476 *** | 0.543 *** | 0.582 *** | 0.554 *** | 1 | | | | | | |
| SP | −0.215 * | 0.414 *** | 0.479 *** | 0.370 *** | 0.564 *** | 0.628 *** | 0.553 ** | 0.681 *** | 1 | | | | | |
| TTrust | −0.310 ** | 0.351 *** | 0.409 *** | 0.234 *** | 0.394 *** | 0.502 *** | 0.421 *** | 0.460 *** | 0.569 *** | 1 | | | | |
| PEOU | −0.262 ** | 0.244 * | 0.288 ** | 0.269 *** | 0.425 *** | 0.485 *** | 0.274 ** | 0.284 ** | 0.383 *** | 0.485 *** | 1 | | | |
| US | −0.237 * | 0.435 *** | 0.460 ** | 0.499 *** | 0.587 *** | 0.562 *** | 0.571 ** | 0.408 *** | 0.454 *** | 0.527 *** | 0.568 *** | 1 | | |
| ME | −0.186 | 0.481 *** | 0.401 *** | 0.440 *** | 0.579 *** | 0.512 *** | 0.453 *** | 0.330 ** | 0.392 *** | 0.486 *** | 0.550 *** | 0.780 *** | 1 | |
| PITU | −0.140 | 0.398 *** | 0.486 *** | 0.549 *** | 0.516 *** | 0.459 *** | 0.621 *** | 0.389 *** | 0.463 *** | 0.314 ** | 0.415 *** | 0.663 *** | 0.547 *** | 1 |

| Models | Hypothesis | Independent Variable | Dependent Variable | Intercept | Beta | Tau | p-Value |
|---|---|---|---|---|---|---|---|
| TAM | H1 | PU | ITU | −0.232 | 0.971 | 0.470 | <0.001 *** |
| TAM | H1 | PENJ | ITU | 0.759 | 0.714 | 0.462 | <0.001 *** |
| TAM | H1 | TTrust | ITU | 1.83 | 0.500 | 0.323 | <0.001 *** |
| TAM | H1 | ATT | ITU | 0.662 | 0.752 | 0.566 | <0.001 *** |
| TAM | H1 | SI | ITU | 1.03 | 0.660 | 0.414 | <0.001 *** |
| TAM | H2 | PAD | PU | 1.36 | 0.660 | 0.538 | <0.001 *** |
| TAM | H2 | ANX | PU | 4.37 | −0.124 | −0.150 | <0.05 * |
| TAM | H2 | TTrust | PU | 2.76 | 0.340 | 0.324 | <0.001 *** |
| TAM | H3 | TTrust | PENJ | 2.41 | 0.448 | 0.309 | <0.001 *** |
| TAM | H3 | PS | PENJ | 1.77 | 0.606 | 0.560 | <0.001 *** |
| TAM | H3 | SP | PENJ | 1.81 | 0.600 | 0.441 | <0.001 *** |
| TAM | H4 | TTrust | PS | 1.46 | 0.602 | 0.401 | <0.001 *** |
| TAM | H6 | PS | SP | 0.670 | 0.777 | 0.521 | 0.222 |
| PAM | H7 | US | PITU | 1.91 | 0.599 | 0.558 | <0.001 *** |
| PAM | H7 | PEOU | PITU | 2.88 | 0.373 | 0.327 | <0.001 *** |
| PAM | H8 | ME | US | 0.060 | 0.985 | 0.689 | <0.001 *** |
| PAM | H8 | PEOU | US | 1.73 | 0.599 | 0.461 | <0.001 *** |
| PAM | H9 | ME | PEOU | 1.39 | 0.571 | 0.449 | <0.001 *** |
| PAM | H10 | ITU | ME | 2.68 | 0.330 | 0.329 | <0.001 *** |
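Each row of the table above pairs one predictor with one outcome and reports an intercept, a slope (Beta), and a Tau. One rank-based procedure that yields this trio is Kendall's tau combined with a Theil-Sen line. This section does not state which procedure the authors used, so the sketch below is an assumption offered only to illustrate how such quantities can be computed. The function names are hypothetical, and no p-value is computed.

```python
from itertools import combinations
from math import sqrt
from statistics import median


def kendall_tau_b(x, y):
    """Kendall's tau-b, which corrects for ties (common with Likert-type scores)."""
    concordant = discordant = tied_x_only = tied_y_only = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x2 - x1, y2 - y1
        if dx == 0 and dy == 0:
            continue  # tied on both variables: excluded from every count
        if dx == 0:
            tied_x_only += 1
        elif dy == 0:
            tied_y_only += 1
        elif dx * dy > 0:
            concordant += 1
        else:
            discordant += 1
    untied = concordant + discordant
    denominator = sqrt((untied + tied_x_only) * (untied + tied_y_only))
    if denominator == 0:
        raise ValueError("tau is undefined when x or y is constant")
    return (concordant - discordant) / denominator


def theil_sen_line(x, y):
    """Return (intercept, slope): slope is the median of all pairwise slopes."""
    slopes = [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in combinations(zip(x, y), 2)
        if x2 != x1
    ]
    if not slopes:
        raise ValueError("need at least two distinct x values")
    slope = median(slopes)
    # One common convention: intercept is the median residual offset.
    intercept = median(yi - slope * xi for xi, yi in zip(x, y))
    return intercept, slope


if __name__ == "__main__":
    # Hypothetical construct scores for six respondents (not study data).
    predictor = [2.0, 2.5, 3.0, 3.5, 4.0, 4.5]
    outcome = [2.0, 3.0, 2.5, 3.5, 4.0, 4.5]
    print(kendall_tau_b(predictor, outcome))
    print(theil_sen_line(predictor, outcome))
```

Under this reading, a fitted row is applied as outcome ≈ Intercept + Beta × predictor. For the H1 row with PU as predictor, a PU score of 4 gives ITU ≈ −0.232 + 0.971 × 4 = 3.652.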

Students = pre-service teachers.

| Construct | Students: Mean | Students: Max | Students: Min | Students: SD | Students: POS (%) | Students: NEG (%) | Teachers: Mean | Teachers: Max | Teachers: Min | Teachers: SD | Teachers: POS (%) | Teachers: NEG (%) | Mean Difference |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ANX | 3.34 | 5.00 | 1.00 | 0.75 | 62 | 23 | 3.28 | 5.00 | 1.00 | 1.15 | 57 | 32 | 0.07 |
| ATT | 3.71 | 5.00 | 1.00 | 0.90 | 77 | 15 | 3.18 | 5.00 | 1.00 | 1.15 | 45 | 45 | 3.11 ** |
| FC | 3.05 | 5.00 | 1.00 | 0.97 | 42 | 34 | 2.69 | 5.00 | 1.00 | 1.06 | 31 | 51 | 2.18 * |
| PAD | 3.38 | 5.00 | 1.00 | 0.76 | 82 | 9 | 4.17 | 5.00 | 1.00 | 0.91 | 80 | 5 | −2.90 ** |
| PENJ | 3.77 | 5.00 | 1.00 | 0.79 | 77 | 12 | 3.75 | 5.00 | 1.00 | 0.90 | 83 | 12 | 0.11 |
| PS | 3.33 | 5.00 | 1.00 | 0.90 | 61 | 29 | 3.35 | 5.00 | 1.00 | 0.99 | 54 | 29 | 0.21 |
| PU | 3.84 | 5.00 | 1.00 | 0.70 | 85 | 5 | 3.68 | 5.00 | 1.00 | 0.93 | 75 | 15 | 0.94 |
| SI | 3.32 | 5.00 | 1.00 | 0.85 | 58 | 19 | 3.28 | 5.00 | 1.00 | 1.04 | 51 | 25 | 0.55 |
| SP | 3.20 | 5.00 | 1.00 | 0.92 | 56 | 34 | 3.34 | 5.00 | 1.00 | 1.06 | 48 | 31 | 0.72 |
| TTrust | 3.24 | 4.67 | 1.00 | 0.76 | 55 | 29 | 3.17 | 5.00 | 1.00 | 0.82 | 43 | 29 | 0.99 |
| ITU | 3.36 | 5.00 | 1.00 | 0.86 | 60 | 22 | 3.26 | 5.00 | 1.00 | 0.96 | 55 | 31 | 0.67 |
| PEOU | 3.38 | 5.00 | 1.67 | 0.78 | 62 | 27 | 3.20 | 5.00 | 1.00 | 1.04 | 46 | 28 | 1.12 |
| US | 3.63 | 5.00 | 1.00 | 0.83 | 71 | 17 | 3.53 | 5.00 | 1.00 | 0.94 | 60 | 20 | 0.83 |
| ME | 3.63 | 5.00 | 1.00 | 0.81 | 71 | 11 | 3.62 | 5.00 | 1.00 | 0.99 | 68 | 15 | 0.05 |
| PITU | 4.05 | 5.00 | 1.00 | 0.72 | 86 | 3 | 3.70 | 5.00 | 1.00 | 1.10 | 69 | 20 | 1.68 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Han, J.; Conti, D. The Use of UTAUT and Post Acceptance Models to Investigate the Attitude towards a Telepresence Robot in an Educational Setting. Robotics 2020, 9, 34. https://doi.org/10.3390/robotics9020034
