Motion Signal Processing for a Remote Gas Metal Arc Welding Application

Abstract

1. Introduction

2. Materials and Methods

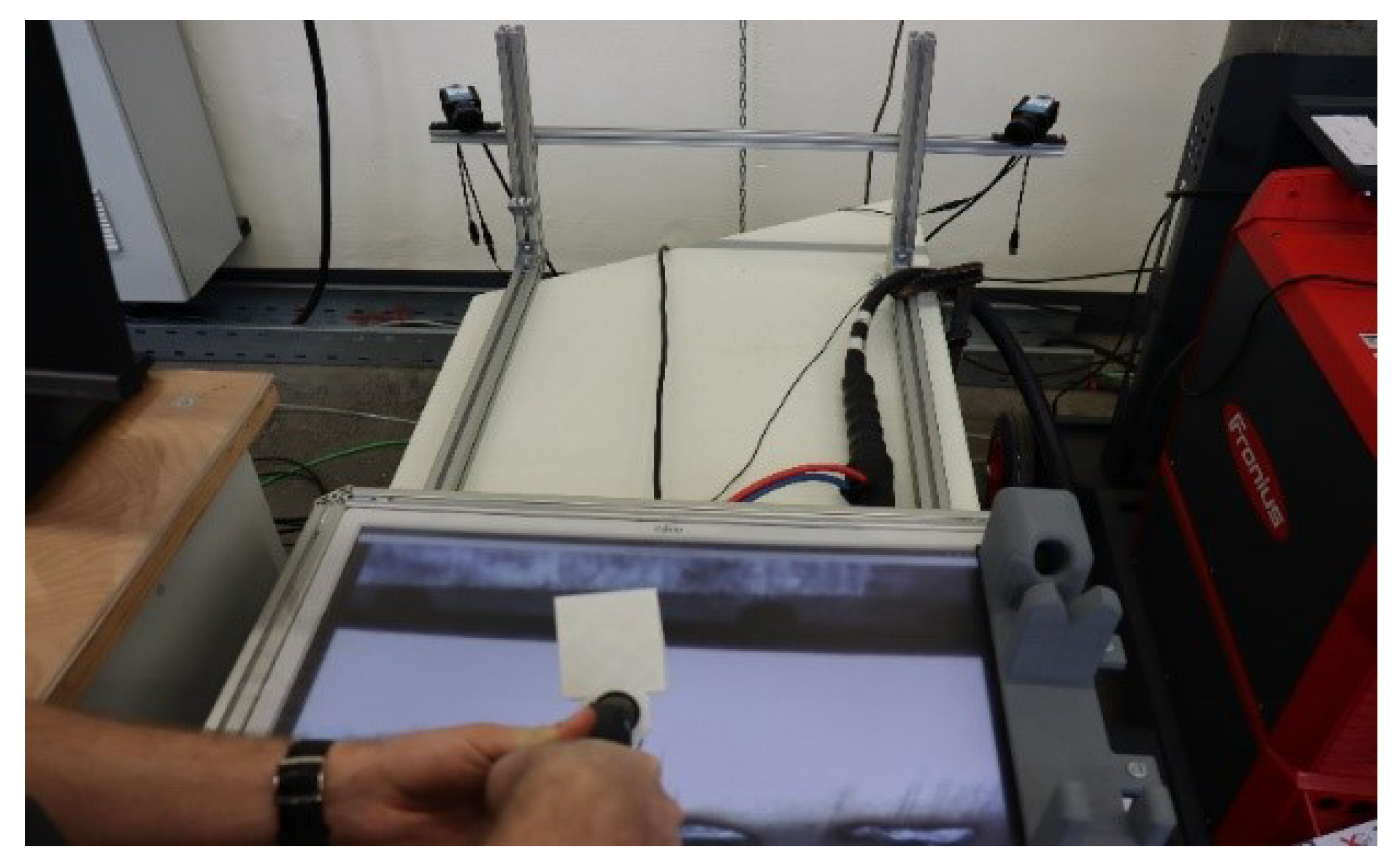

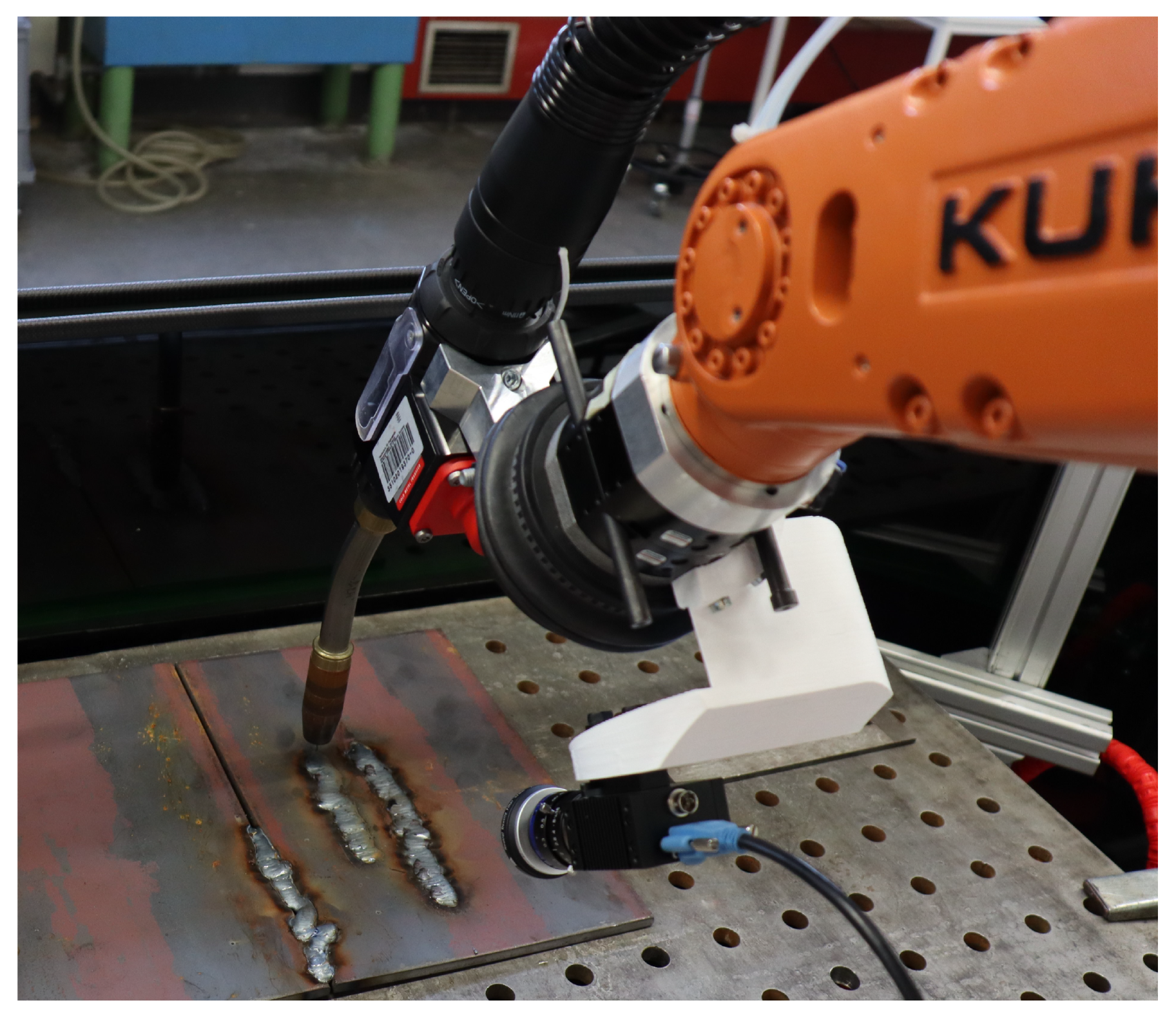

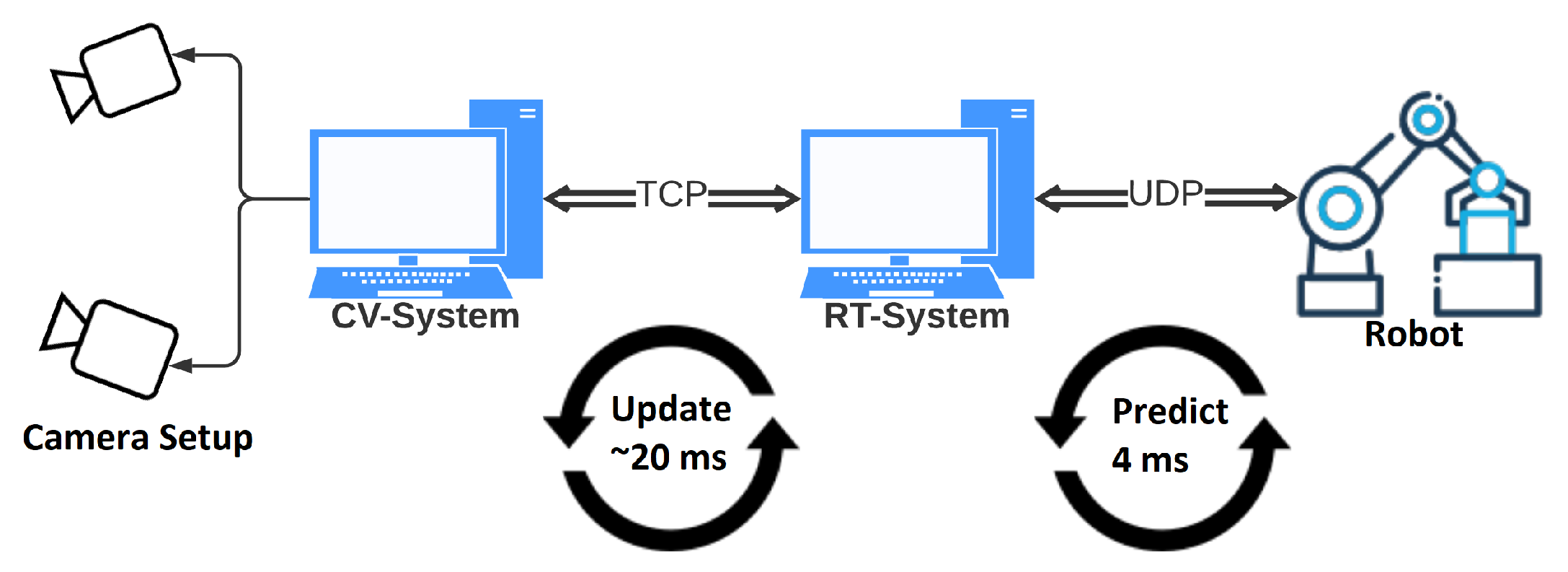

2.1. Experimental Setup

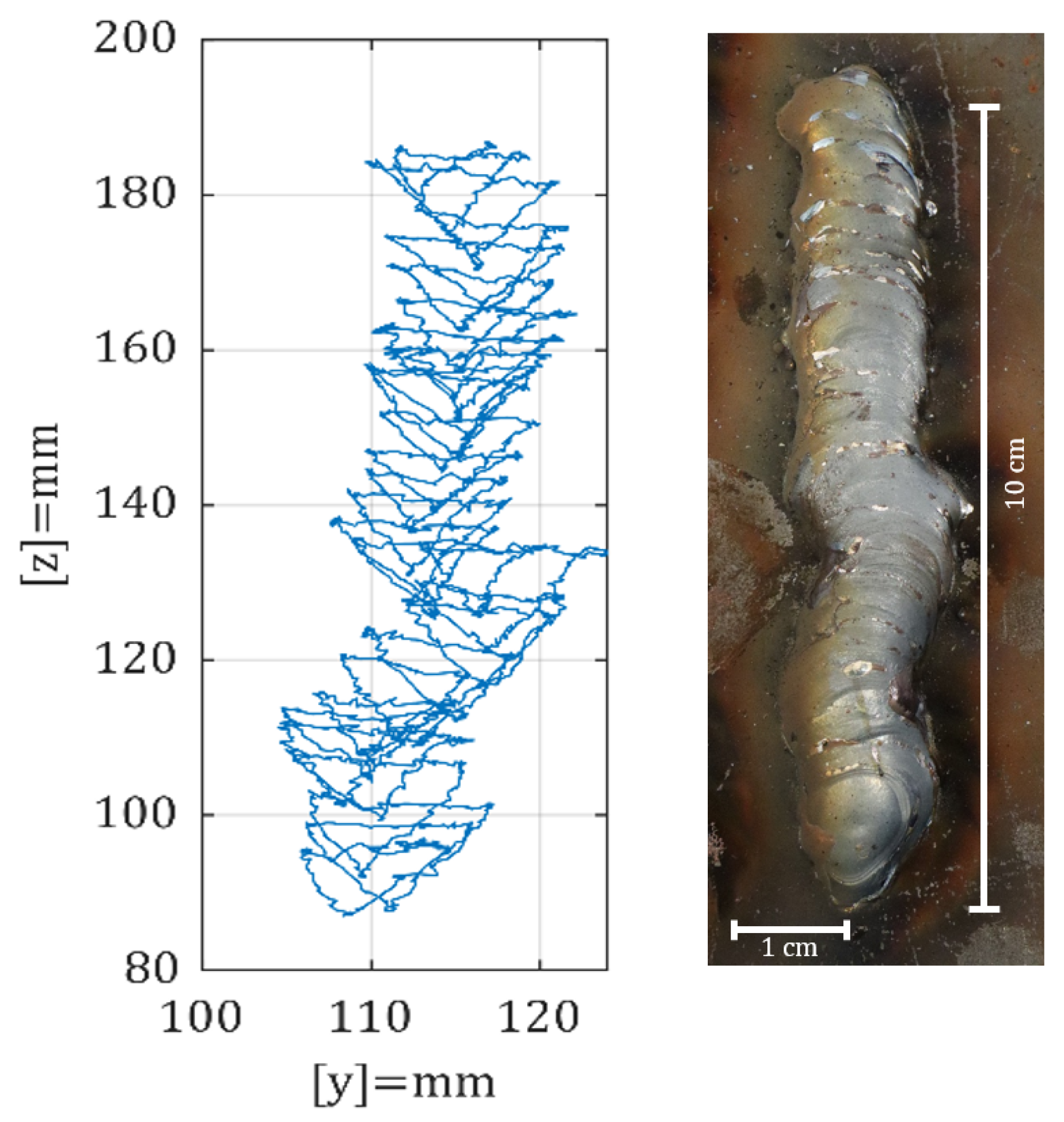

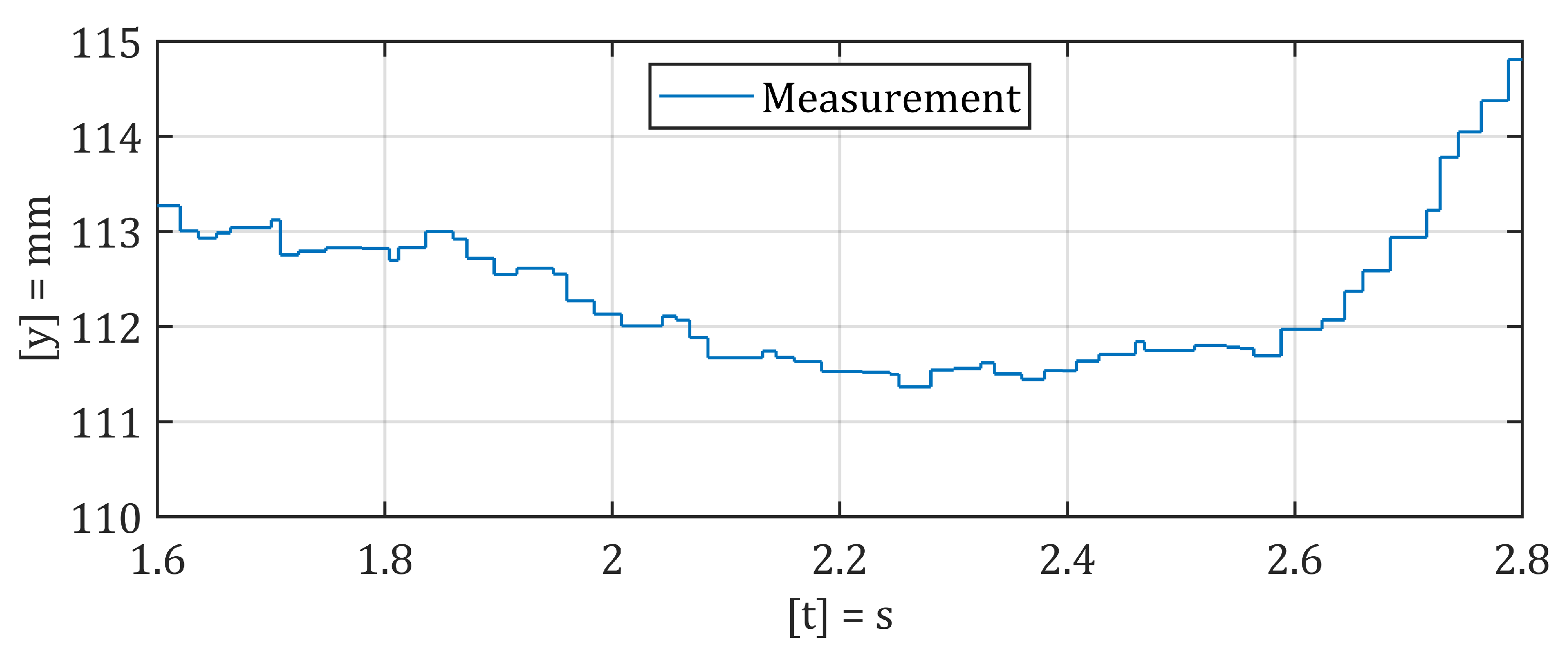

2.2. Motion Signal Analysis

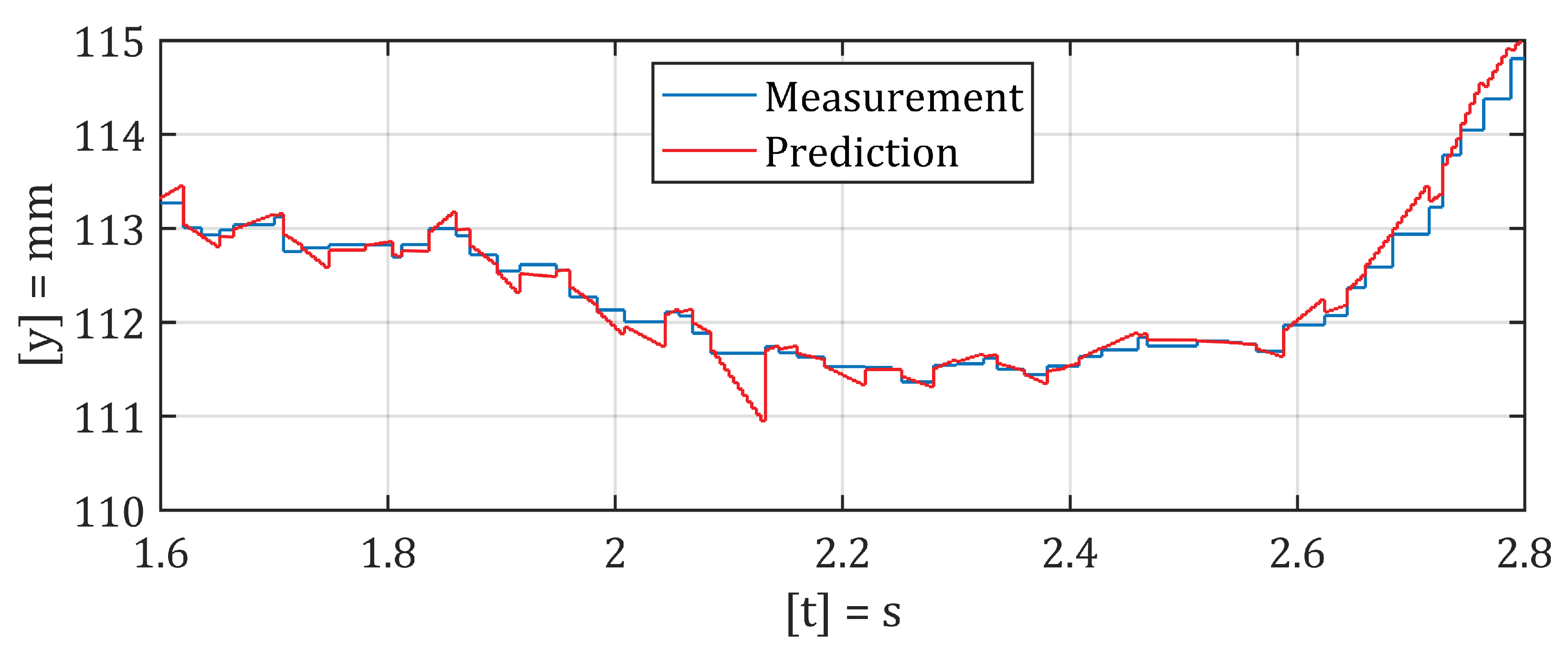

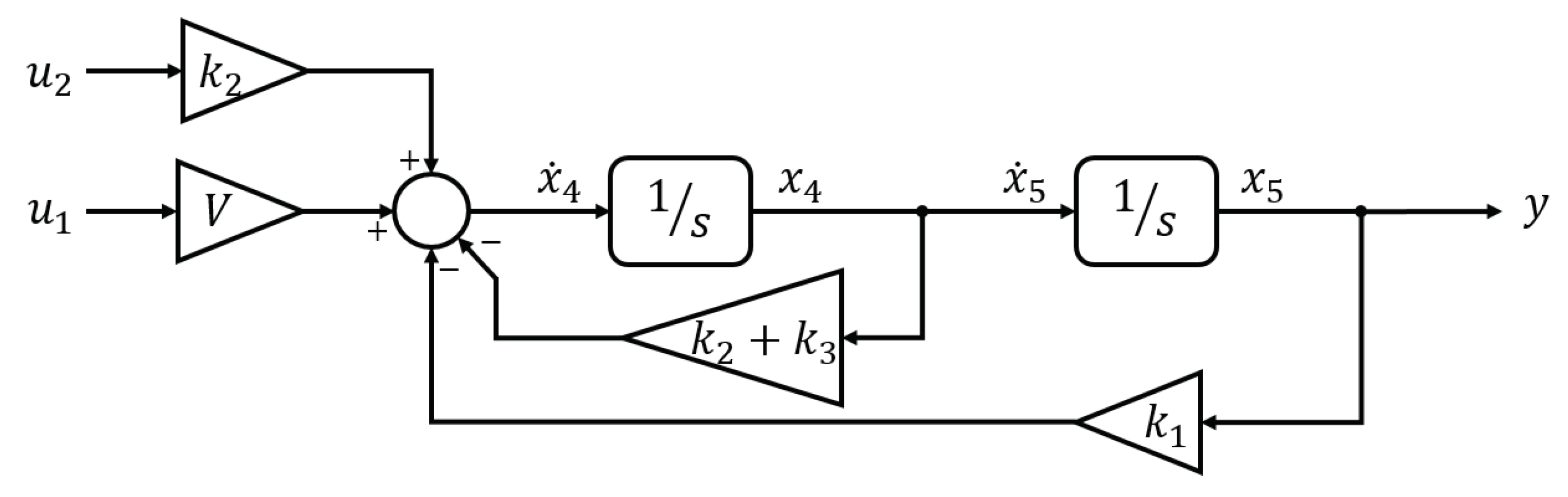

2.3. Prediction Filter Design

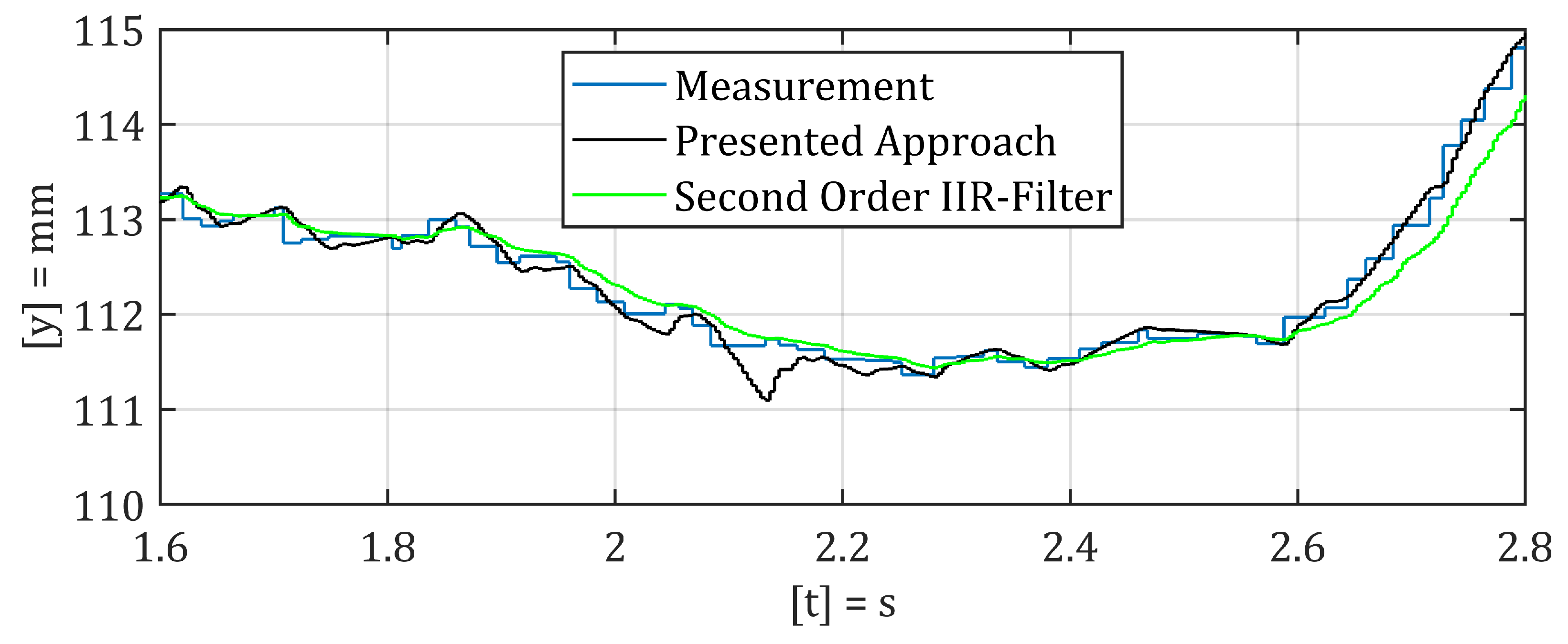

3. Results and Discussion

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| KUKA | Keller und Knappich Augsburg |
| RSI | Robot sensor interface |
| MAG | Metal active gas |
| $C^2$ | Twice continuously differentiable |


© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ebel, L.C.; Zuther, P.; Maass, J.; Sheikhi, S. Motion Signal Processing for a Remote Gas Metal Arc Welding Application. Robotics 2020, 9, 30. https://doi.org/10.3390/robotics9020030
