From an implementation point of view, our system coordinates two files: a Lisp file named Models, which contains the individual ACT-R models that implement the characters, and a second file named Environment, which manages the choices made by the players, who influence the narrative path of the story's characters.

The implementation strategies focus on the memory of the ACT-R model, the type of modules to be used, and the degree of generalization of the applicable rules. The sub-symbolic components provided by the architecture are used to give the models greater flexibility; partial matching, for example, relaxes the constraints on the buffers and thus yields greater variability. The storytelling is obtained through the dialogue between the characters, which are implemented as independent cognitive models.

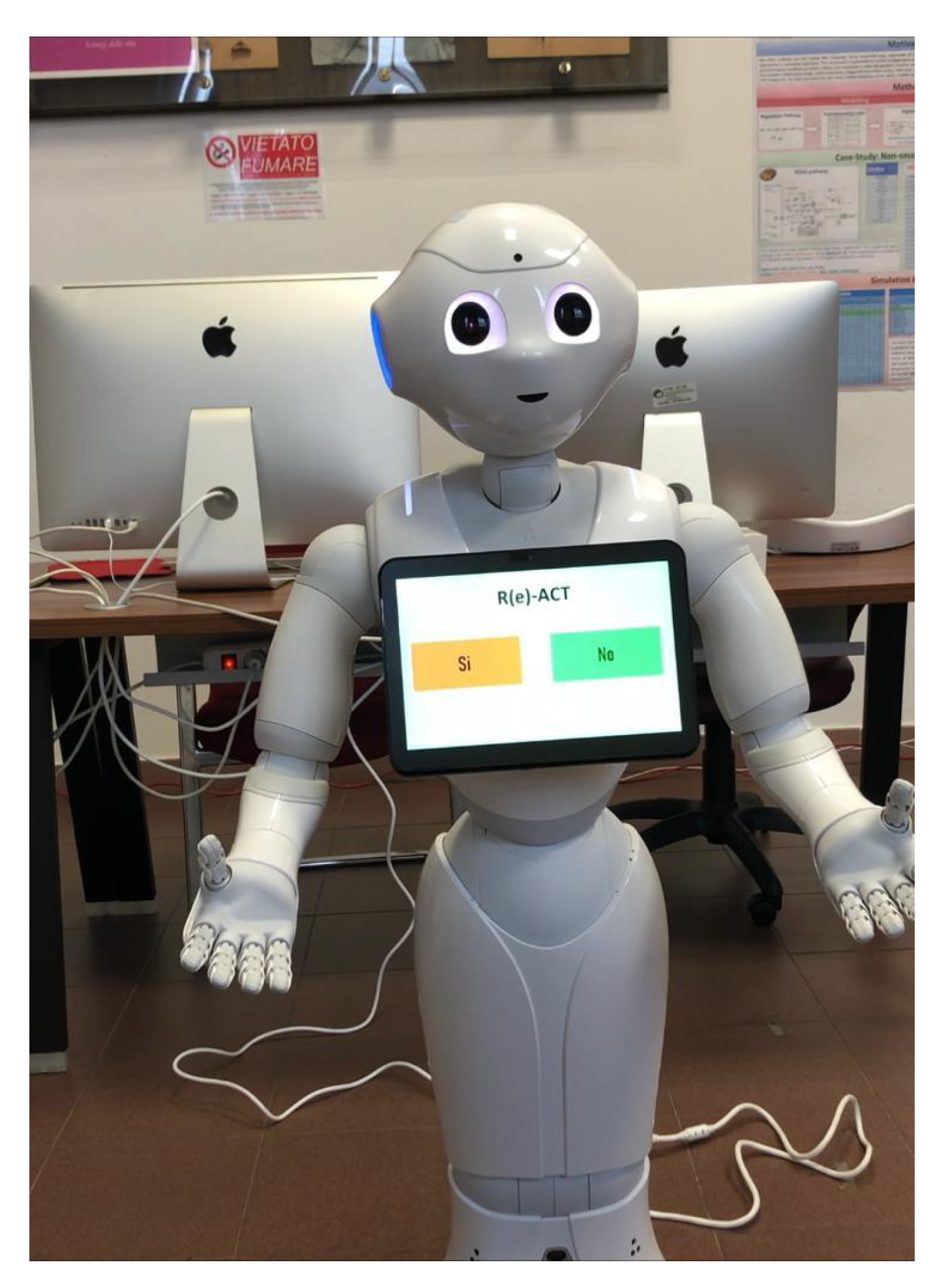

The following sections describe in detail the implementation of the system and its use through interaction with a humanoid robot.

4.1. Story Plotting and Evolution

In this preliminary phase of the work, the proposed solution is largely inspired by the FearNot! plot. The story told in FearNot! addresses the delicate issue of bullying among children. The main character of the story is John, a child who has recently moved to the Meadow View school and who, due to his shyness, struggles to make new friends. Some of the boys, led by Luke and emboldened by their popularity, decide to target John by hindering his activities and humiliating him whenever possible.

However, although our story leaves a few points of the FearNot! story unchanged, for example the chocolate theft scene and the names of the characters, the rest of the story has been rewritten. Our episodes were planned and described so as to provide the agents with all the information required to implement their internal cognitive processes. We therefore chose to manage only some episodes, those necessary to represent a complete dramatic arc, in order to focus on the knowledge of the world to be provided to the agent, on its ability to reason about situations and events and, as a consequence, on letting it deliberate the most appropriate actions to achieve its goals. In particular, we wanted to dwell on the reasoning and motivations that push a character to perform one action rather than another, and less on the type of action carried out by an agent.

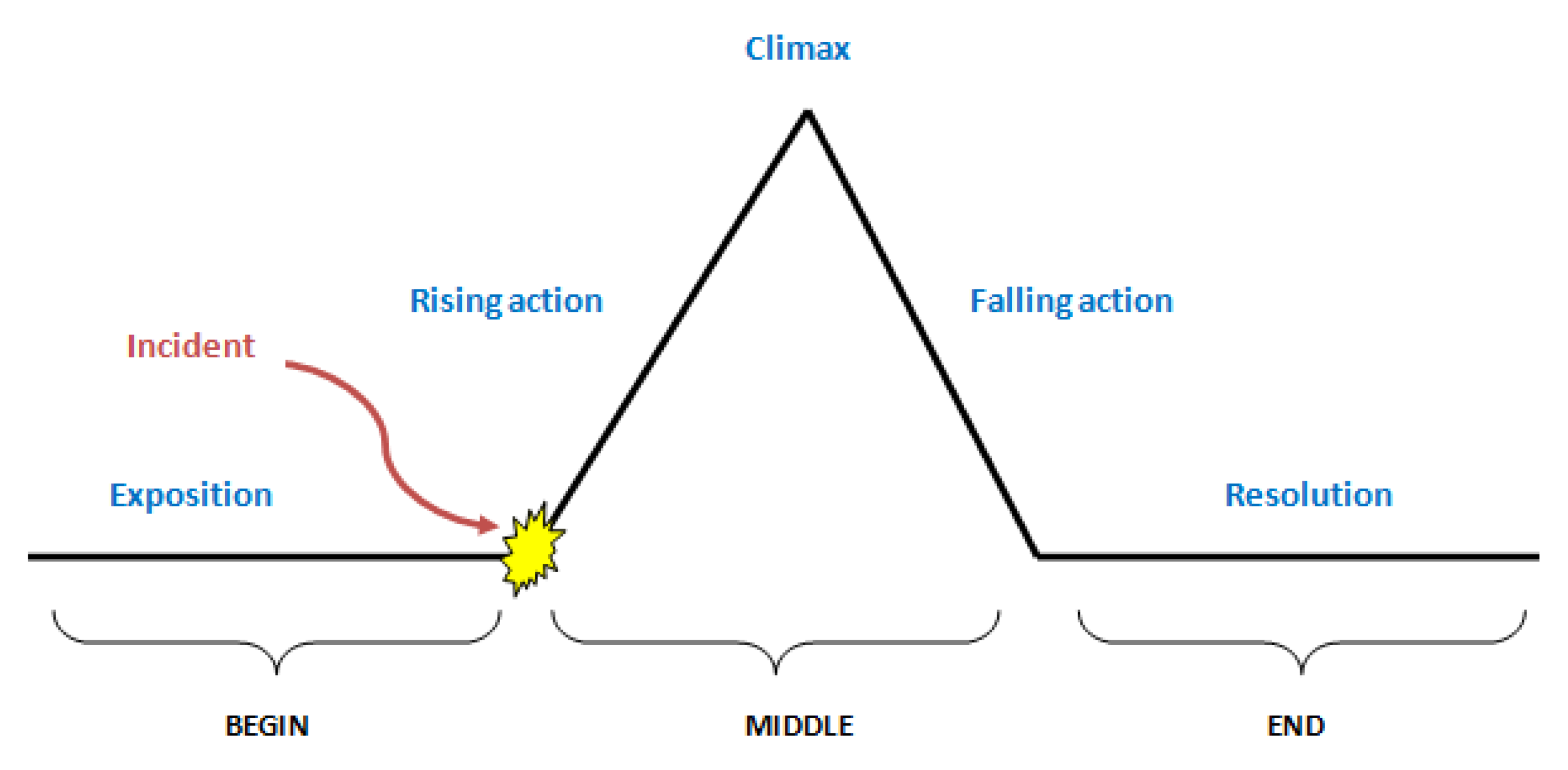

The story evolves according to a typical dramatic arc, following the Freytag dramatic structure (Figure 2) [33], starting from an exposition phase and continuing with a set of events, among which there is an incident that leads to the point of maximum tension (the climax). Finally, the climax is resolved to reach an epilogue.

Specifically, the initial event is directly managed by the narrator, who introduces the context of the events and the main characters to the player. The goals pursued by the different characters (according to their cognitive models) inevitably change along the course of the story, according to the interactions with the other models. The different goals pursued by the protagonist and the antagonist lead to the presentation of a complication for the main character. The complication is triggered by the behavior of the antagonist who carries out one or more actions whose ultimate aim is to move the protagonist away from its goal.

The complication leads to the climax, for which a solution must be found to close the conflict. The subsequent actions and the intervention of the player, who is asked for an opinion in stalemate situations, bring the narrative arc towards the end of the story, the final event.

4.2. Characters Cognitive Models

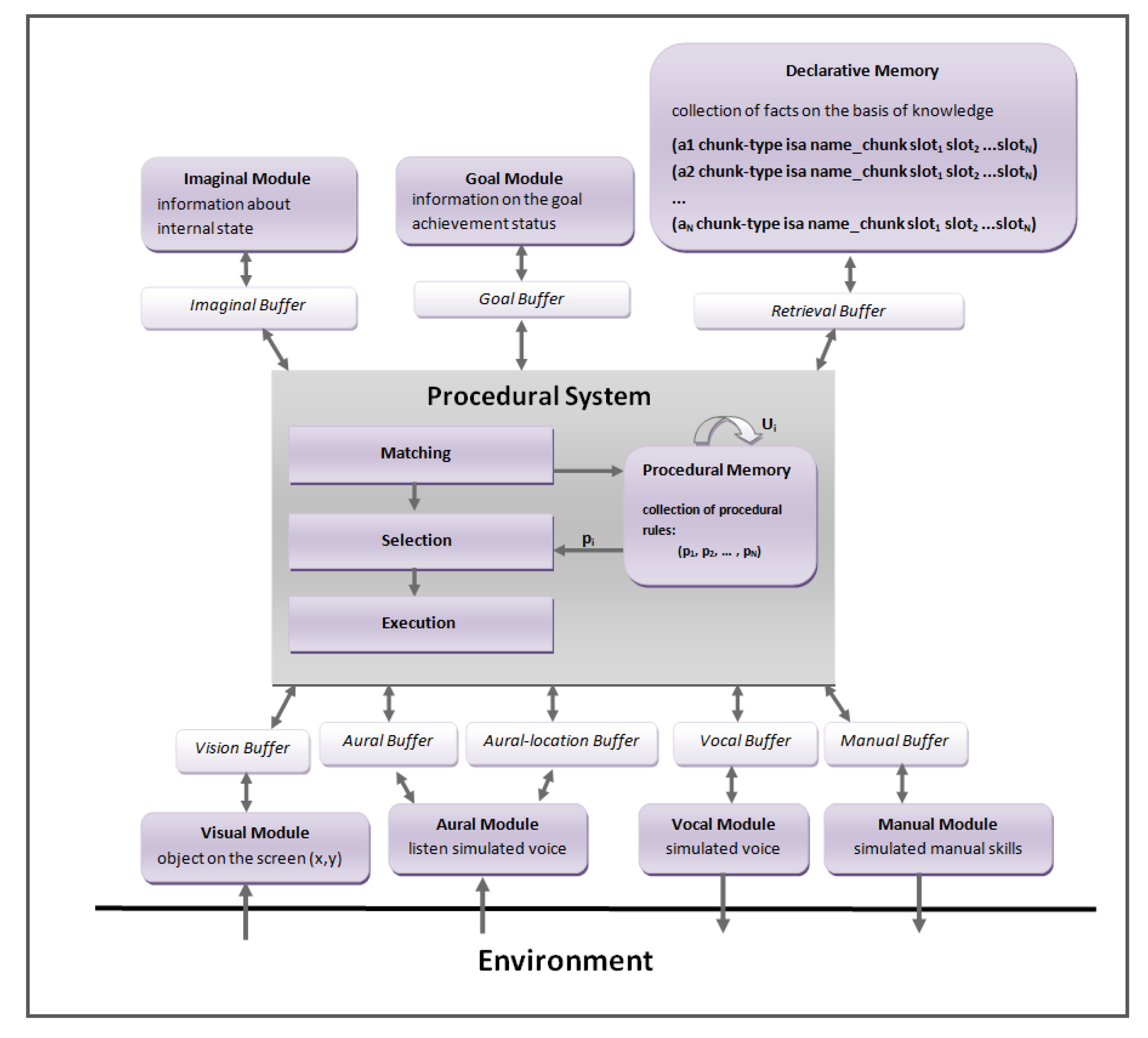

The ACT-R architecture, as seen above, is characterized by different modules, each of which has the important function of implementing a specific human cognitive function.

In the proposed storytelling system, the story takes place in the form of a dialogue between the characters, who interact with each other within the same environment. For our purposes, therefore, we chose to use only those modules of the architecture that are needed to simulate the dialogue and the cognitive reasoning of the characters. The modules used to implement the models of our system are:

Declarative Module

Procedural Module

Speech Module

Aural Module

The Declarative Module plays an important role in the implementation of the actors, which behave as ACT-R cognitive agents. The knowledge of these agents is stored as chunks in declarative memory. It comprises information about the other agents and information about the semantic meaning of some words, which allows the dialogue between the characters to evolve. The Procedural Module, instead, holds all the rules that the model uses to process inputs coming from the outside world and to manage its knowledge. Applying these rules allows the agent to carry out actions. The Speech and Aural Modules are used together to simulate the dialogue between the characters of the story. The Speech Module synthesizes vocal strings and emits them through its vocal buffer, while the Aural Module is responsible for the cognitive process of listening. This module processes the source of the heard sound-word and its semantic content through its two buffers (aural-location and aural).

Each character in the story is represented as an ACT-R cognitive model. Three different models were defined:

M1: The victim’s model;

M2: The bully’s model;

M3: The moderator model.

In particular, the moderator leads the narration of the events and facilitates the resolution of deadlock situations through the help of the human player. This ensures the interactivity of the system and gives the listener the opportunity to establish an empathetic relationship with the characters of the story.

The interaction between the models within the environment represents one of the fundamental points of our work; it would not be possible to generate the dynamics of the story without it. This interaction between the characters is made possible by exploiting the Speech and Aural Modules, which make it possible to simulate a real dialogue. Each model uses these two modules to interact with the other models and to generate changes within the environment.

The models, running in the same narrative environment, use these modules as sensors to filter new inputs and to generate changes in the environment. When a model synthesizes a vocal string through the Speech Module, the string is reproduced within the environment. Each running model uses its aural-location buffer to intercept the sound strings emitted into the environment by the other agents. When this buffer identifies a string, it recognizes its source and sends a request to the associated aural buffer to create a chunk of type sound, which holds the perceived string.

The definition of this particular type of chunk is typical of the ACT-R architecture. After identifying a vocal string through the appropriate modules, the cognitive processes of the model search the declarative memory for a possible match between what was heard and what is represented in its knowledge base.
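The exchange described above can be pictured with a small stand-alone sketch. It does not use ACT-R: the `Model` and `Environment` classes, the `hear` and `speak` methods, and the `source` field are all hypothetical names introduced only for illustration, and the sketch assumes that every model other than the speaker perceives each spoken string.

```python
from dataclasses import dataclass, field


@dataclass
class Model:
    """Hypothetical stand-in for one ACT-R character model."""
    name: str
    aural: list = field(default_factory=list)  # perceived sound chunks

    def hear(self, source: str, text: str) -> None:
        # Mimics the aural buffer receiving a chunk of type sound.
        self.aural.append({"isa": "sound", "source": source, "content": text})


class Environment:
    """Hypothetical shared narrative environment that relays speech."""

    def __init__(self, models):
        self.models = {m.name: m for m in models}

    def speak(self, speaker: str, text: str) -> None:
        # Assumption: every model except the speaker perceives the string.
        for name, model in self.models.items():
            if name != speaker:
                model.hear(speaker, text)


m1, m2, m3 = Model("m1"), Model("m2"), Model("m3")
env = Environment([m1, m2, m3])
env.speak("m1", "hello")
```

After the call to `speak`, the lists of `m2` and `m3` each hold one sound chunk whose content is the spoken string, while the speaker's own list stays empty.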

As mentioned above, each model will try to pursue its goals during events that could be modified due to interactions with other models. These goals are managed by the Goal Module of ACT-R, while the Imaginal Module is used to keep track of the internal states necessary to achieve the goals among the various steps.

The declarative memory, made up of chunks, is used to express the facts owned by the model. The collection of facts represents, thus, the agent’s knowledge of the world.

In this phase of the work, we chose to equip the model with some information that can be used during the interaction with the other models, such as basic information about the other characters in the story and a set of notions regarding the meaning of some terms, to manage the problem of understanding specific sound words. For example, regarding the M1 model, we defined the info chunk-type with atomic information such as details about the name, the role played within the story, and the age of models M2 and M3.

    (chunk-type info model role name age)

Starting from this definition of the chunk-type, it is possible to instantiate in the declarative memory of the M1 model three different chunks (called p1, p2, p3), containing information regarding each actor of the story:

    (p1 ISA info model m1 role victim name "Luke" age 11)
    (p2 ISA info model m2 role bully name "Paul" age 11)
    (p3 ISA info model m3 role assistant name "Pepper")

To improve the management of the dialogue and allow the model to understand speech, we instead defined the semantic chunk-type in our code. It gives the model the ability to understand the meaning of a word exchanged with the other models. The set of instances of this chunk-type thus allows the model to think about what it has just heard and to make the correct evaluations based on its knowledge. It is formalized as follows:

    (chunk-type semantic meaning word)

Among the instances of this chunk-type contained in the declarative memory are the following, which associate positive or negative feedback with certain words:

    (p isa semantic meaning positive-feedback word "yes")
    (q isa semantic meaning negative-feedback word "no")

The following listening-comprehension production shows how the semantic chunk-type is used by the model in the process of understanding. This production is used when the model listens to a sound-word. At this moment, the word just heard is available in the aural buffer, which is responsible for the listening activity. The model is thus ready to search for its meaning by forwarding a request to the retrieval buffer, which accesses the declarative memory to find a match with what the model knows.

    (p listening-comprehension
       =aural>                 ; a sound chunk is in the aural buffer
          isa      sound
          content  =word
       ?retrieval>             ; the declarative module is not busy
          state    free
    ==>
       +retrieval>             ; look up the meaning of the heard word
          isa      semantic
          word     =word
       !output! (M1 start reasoning..)
    )

At this point, the procedural system evaluates which action must be executed considering the current state of the buffers.

If no match is found between the sound-word heard and one of the chunks present in the declarative memory, then the model ignores what it has heard, and it continues the activity it was carrying out. Instead, if the match is successful, the chunk extrapolated from the declarative memory will be placed in the retrieval buffer, and it can be evaluated by the Procedural System for the identification of an applicable rule. For example, the retrieval buffer forwards to its module the request for information regarding a sound-word listened to. The Declarative Module finds the match and loads the identified chunk into its buffer, and, according to the values identified by the chunk’s slots, the Procedural System chooses to activate one rule instead of another one. In the specific case shown below, the listened word has a meaning relative to an answer, or feedback, expected by the model. The model evaluates the sequence of actions to be performed based on the value corresponding to the meaning slot.

In this particular example, if the value contained in this slot is negative-feedback, the model chooses a closed, self-protective reaction, such as “ignoring the interlocutor”, as defined in the following rule:

    (p negative_feedback
       =retrieval>
          isa      semantic
          meaning  negative-feedback
       ?vocal>
          state    free
    ==>
       +vocal>
          cmd      speak
          string   "ignore"
       !output! (Ignore model...)
    )

In this rule, after evaluating the value contained in the chunk located in the retrieval buffer, a test is carried out on the vocal buffer. If this buffer is free, a specific vocal string can be synthesized. If, instead, the content of the meaning slot is positive-feedback, the model can consider an action aimed at strengthening the affiliation with its interlocutor; for example, it can propose to start a collective activity or to engage in a discussion on a given topic.
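The retrieval and rule-selection steps described above can be summarized in a short stand-alone sketch. It is not ACT-R code: the list-of-dictionaries memory, the `retrieve` and `react` functions, and the `"propose-activity"` label are assumptions made only for illustration, while the two semantic chunks and the `"ignore"` string are taken from the excerpts above.

```python
# Declarative memory as a list of slot/value dictionaries (illustrative only).
DECLARATIVE_MEMORY = [
    {"name": "p", "isa": "semantic", "meaning": "positive-feedback", "word": "yes"},
    {"name": "q", "isa": "semantic", "meaning": "negative-feedback", "word": "no"},
]


def retrieve(request, memory=DECLARATIVE_MEMORY):
    """Return the first chunk matching every slot in the request, else None."""
    for chunk in memory:
        if all(chunk.get(slot) == value for slot, value in request.items()):
            return chunk
    return None


def react(word):
    """Map a heard word to the string the model would speak, or None."""
    chunk = retrieve({"isa": "semantic", "word": word})
    if chunk is None:
        return None            # no match: the model keeps its current activity
    if chunk["meaning"] == "negative-feedback":
        return "ignore"        # string spoken by the negative_feedback rule
    return "propose-activity"  # hypothetical label for an affiliative action
```

Here `react("no")` selects the self-protective reaction, `react("yes")` selects the affiliative one, and an unknown word returns `None`, which corresponds to the model ignoring what it heard.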

The choice of the collective activity to propose, or of the topic to discuss, is not subject to further constraints. Since in our system the sub-symbolic activation values of the chunks contained in the declarative memory of the models are all set to the same value, the chunk selected from the set of chunks that satisfy a request is chosen freely. This degree of freedom introduces a certain level of uncertainty into the behavior chosen by the model from its set of known feasible behaviors, and this inevitably induces variability in the story. Providing more knowledge to the model therefore yields a character capable of performing actions that can affect the course of the story. However, the continuous identification of new actions could alter the structure of the story so that Freytag’s dramatic arc is no longer respected; for instance, the models could get stuck in the initial phase without ever reaching the central phase of the conflict. To avoid this problem, the narrator, played by the M3 model, observes the interactions between the models and the progress of the events, and intervenes only to redirect the story when its structure risks departing from the one described by Freytag, by providing new elements and new narrative contexts to the characters. The structure of the story is therefore kept stable by the narrator, while the uncertainty is linked to the behaviors adopted by the models in certain contexts.
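The effect of equal activation values can be illustrated with a minimal sketch. It assumes that ties among the most active candidates are broken uniformly at random; the way ACT-R actually resolves such ties depends on its activation noise settings, which the text does not specify. The activity names and the `pick_chunk` function are hypothetical.

```python
import random


def pick_chunk(candidates, activations, rng):
    """Choose among the most active candidates; ties are broken at random."""
    best = max(activations[c] for c in candidates)
    tied = [c for c in candidates if activations[c] == best]
    return rng.choice(tied)


# Hypothetical activity chunks, all with the same activation value.
activities = ["play-football", "talk-about-school", "share-snack"]
activations = {name: 0.0 for name in activities}

rng = random.Random(0)  # seeded only to make the sketch repeatable
picks = {pick_chunk(activities, activations, rng) for _ in range(200)}
```

With identical activations, repeated requests return different activities, which is the source of variability discussed above. Raising the activation of a single chunk would make that chunk win every time.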

In ACT-R, the sub-symbolic components provide a powerful tool to make a model dynamic and flexible within the environment. Each rule can be associated with a utility value; this value can either be static or vary dynamically during the execution of the model through a learning process based on the Rescorla-Wagner learning rule [34]. In this phase of the work, we applied this learning rule only to the rules most frequently used by the model, such as listening-comprehension. We also exploited the partial matching feature, which allows the model to consider rules whose conditions only partially match the current state of the buffers. Since the rules refer to actions or behaviors that the model can perform in certain situations, this feature gives the story a certain degree of indeterminacy concerning the actions performed by the characters.
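As an illustration of the utility learning mentioned above, ACT-R updates the utility of a rule with a difference-learning equation of the form U ← U + α(R − U), where R is the reward received and α is the learning rate (0.2 by default in ACT-R). The sketch below applies this update in plain Python; the reward value of 10 and the starting utility of 0 are arbitrary assumptions, not values taken from our models.

```python
def update_utility(utility, reward, alpha=0.2):
    """One difference-learning step: move the utility toward the reward."""
    return utility + alpha * (reward - utility)


# Hypothetical run: the same rule is rewarded three times in a row.
u = 0.0
history = []
for _ in range(3):
    u = update_utility(u, reward=10.0)
    history.append(u)
```

Each step closes a fixed fraction of the gap between the current utility and the reward, so a rule that is rewarded repeatedly becomes progressively more likely to be selected over competing rules.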

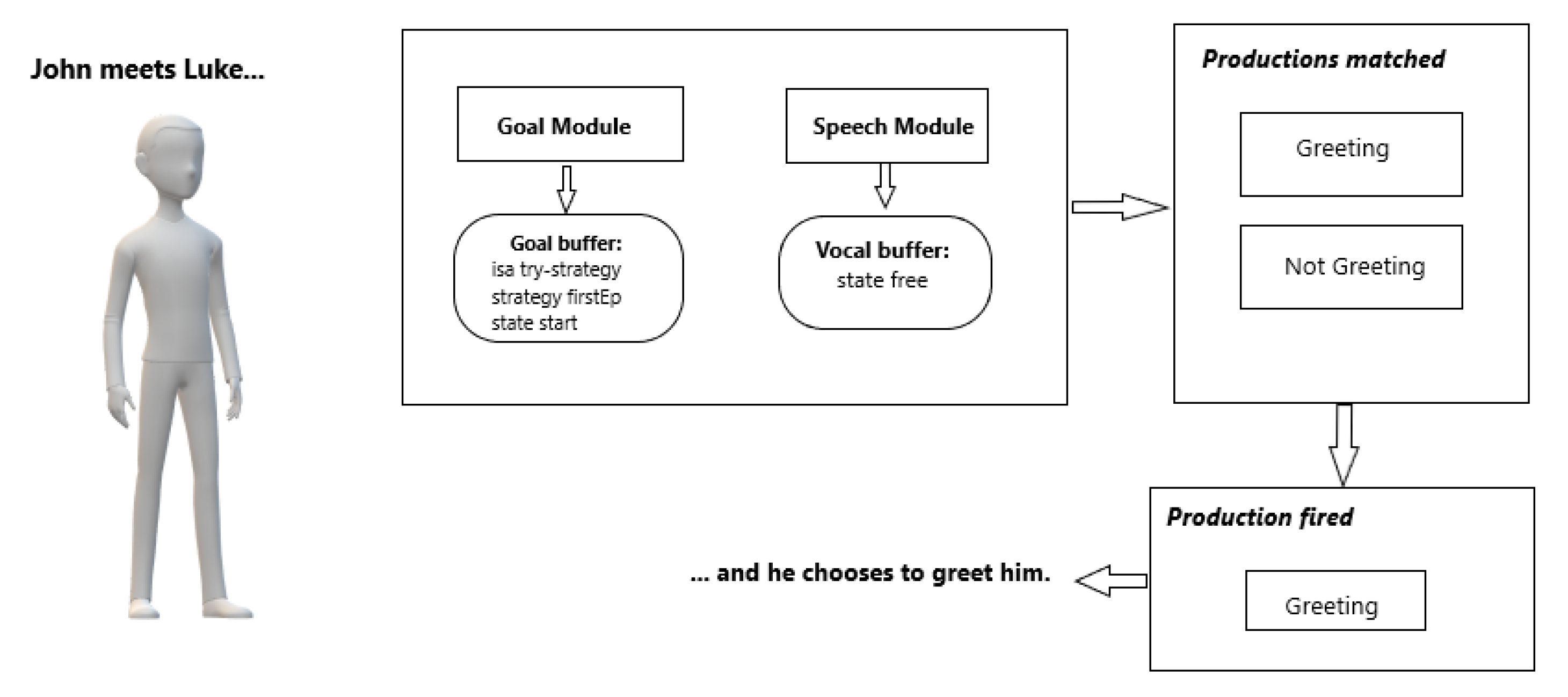

Figure 3 and Figure 4 show two different states of the active buffers in the model of John, the main character, in an initial phase of the story, where there is no conflict with Luke. Here John is walking towards school and meets Luke. The model will evaluate the status of its buffers and the applicable productions, and finally, it will choose to execute one of the possible actions (Status A depicted in Figure 3).

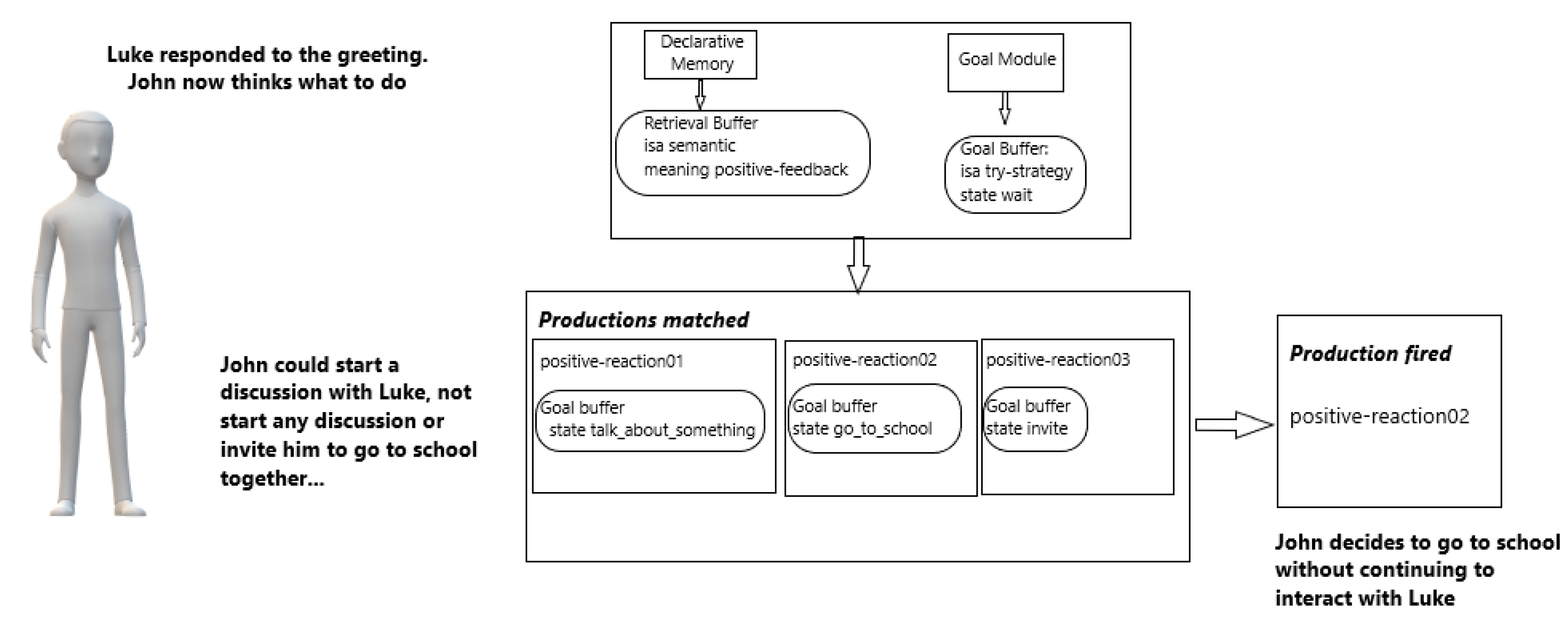

The choice made by the model is important, since it can speed up or delay the evolution of the conflict within the story. For example, if John decides to greet Luke, he will expect his greeting to be returned. If this happens, he can choose to start a friendly discussion with Luke or to continue with his initial goal of going to school (Status B depicted in Figure 4).

Another important aspect of our system is the interaction between the characters and the player. This is implemented by marking particular points in the plot, called turning points, which the narrator uses to take control of the story. At these points, the main character is called upon to make a choice that could have important effects on him and on the other characters involved in the story. In this case, the narrator directly addresses the player, inviting him to choose one of the two proposed actions to be performed by the protagonist.

In our system, we implemented several similar situations, including the following one: "It’s breakfast time and John, the bullying victim of the story, starts eating his chocolate. The bully thus decides to oppose John even in this situation and chooses to steal the chocolate bar from the victim solely to humiliate him in front of the other companions."

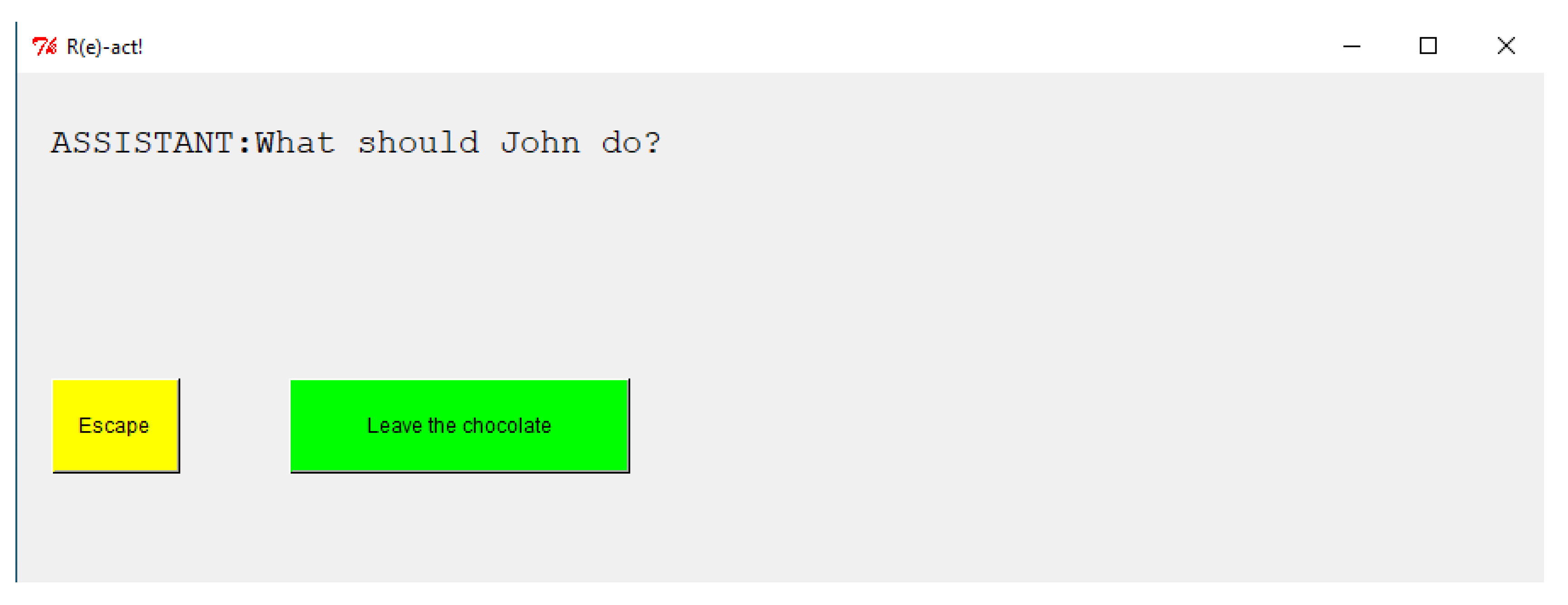

In this particular episode, the victim can do two things: run away with the chocolate or leave it to Luke. Hence, the narrator proposes these two alternatives to the player by showing a screen on which one of them can be selected. Figure 5 depicts the selection window that is shown at this point of the narration.

When the player makes his choice, the chunk in the model’s goal buffer is modified accordingly. For example, if the player chooses “Escape”, the goal of the M1 model is modified as follows:

    import actr  # ACT-R Python interface

    # player1 is assumed to hold the name of the M1 model
    actr.set_current_model(player1)
    actr.define_chunks(['goal', 'isa', 'strategy', 'state', 'escape'])
    actr.goal_focus('goal')

In this way a new goal is set in the respective buffer and the following escape rule is activated.

    (p escape
       =goal>
          state    escape
       ?vocal>
          state    free        ; the vocal buffer must be free before speaking
    ==>
       +vocal>
          cmd      speak
          string   "escape"
       =goal>
          mode     escape
       !output! (I escape with chocolate)
    )

If instead the player chooses “Leave the chocolate”, the chunk placed in the goal buffer is modified accordingly:

    actr.set_current_model(player1)
    actr.define_chunks(['goal', 'isa', 'strategy', 'state', 'leave'])
    actr.goal_focus('goal')

and the Procedural System of the model will activate the following rule:

    (p leave-chocolate
       =goal>
          state    leave
       ?vocal>
          state    free
    ==>
       +vocal>
          cmd      speak
          string   "leave"
       =goal>
          mode     leave
       !output! (I leave chocolate bar...)
    )
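The two branches above differ only in the value of the state slot, so the step from the player's selection to the goal chunk can be sketched as a single lookup. This is an illustrative sketch, not the code of our Environment file: the `CHOICE_TO_STATE` table and the `goal_chunk_spec` function are hypothetical names, and the sketch only builds the list of symbols without calling ACT-R.

```python
# Menu labels shown to the player, mapped to the value of the goal's state slot.
CHOICE_TO_STATE = {
    "Escape": "escape",
    "Leave the chocolate": "leave",
}


def goal_chunk_spec(choice):
    """Build the chunk description for the goal matching the player's choice."""
    try:
        state = CHOICE_TO_STATE[choice]
    except KeyError:
        raise ValueError(f"unknown choice: {choice!r}") from None
    return ["goal", "isa", "strategy", "state", state]
```

The returned list has the same shape as the argument passed to `actr.define_chunks` in the excerpts above, so it could be handed to that call and followed by `actr.goal_focus('goal')`. Rejecting unknown labels keeps an unexpected menu entry from silently setting a goal that no rule matches.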

The player is thus called to take on an important role in the course of the story. The goal is to be able to create an empathetic link between the end-user himself and the main character of the story he is trying to help. In this way, the user will be assigned a certain degree of responsibility during the evolution process of the story, which will end with a positive outcome or failure.