Augmented Reality for Robotics: A Review

Abstract

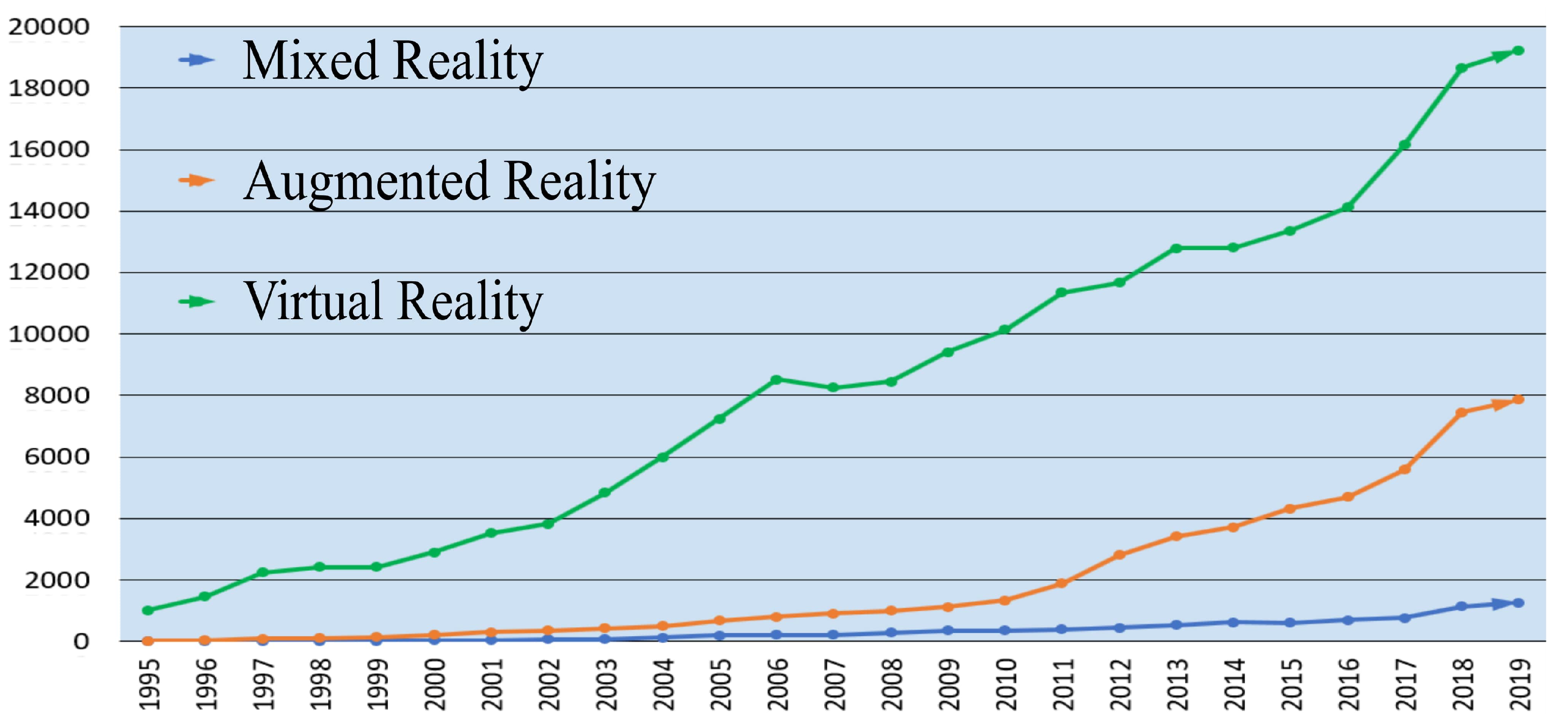

1. Introduction

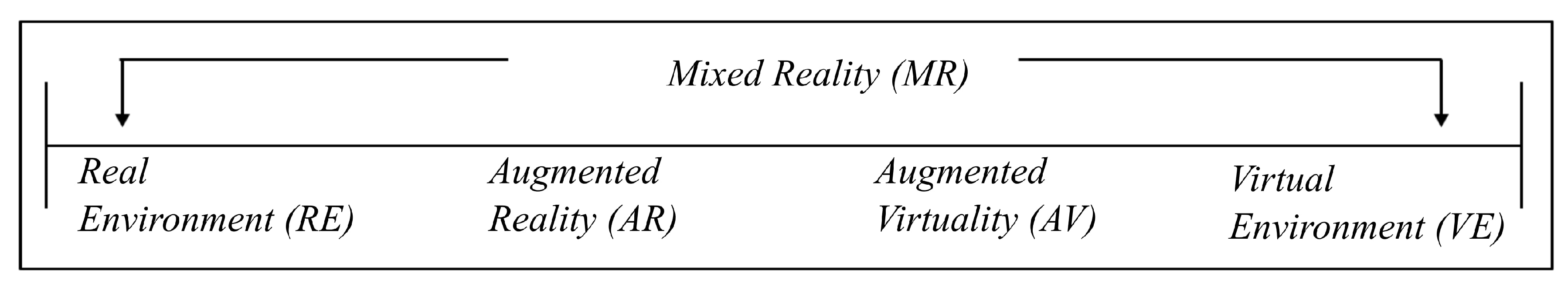

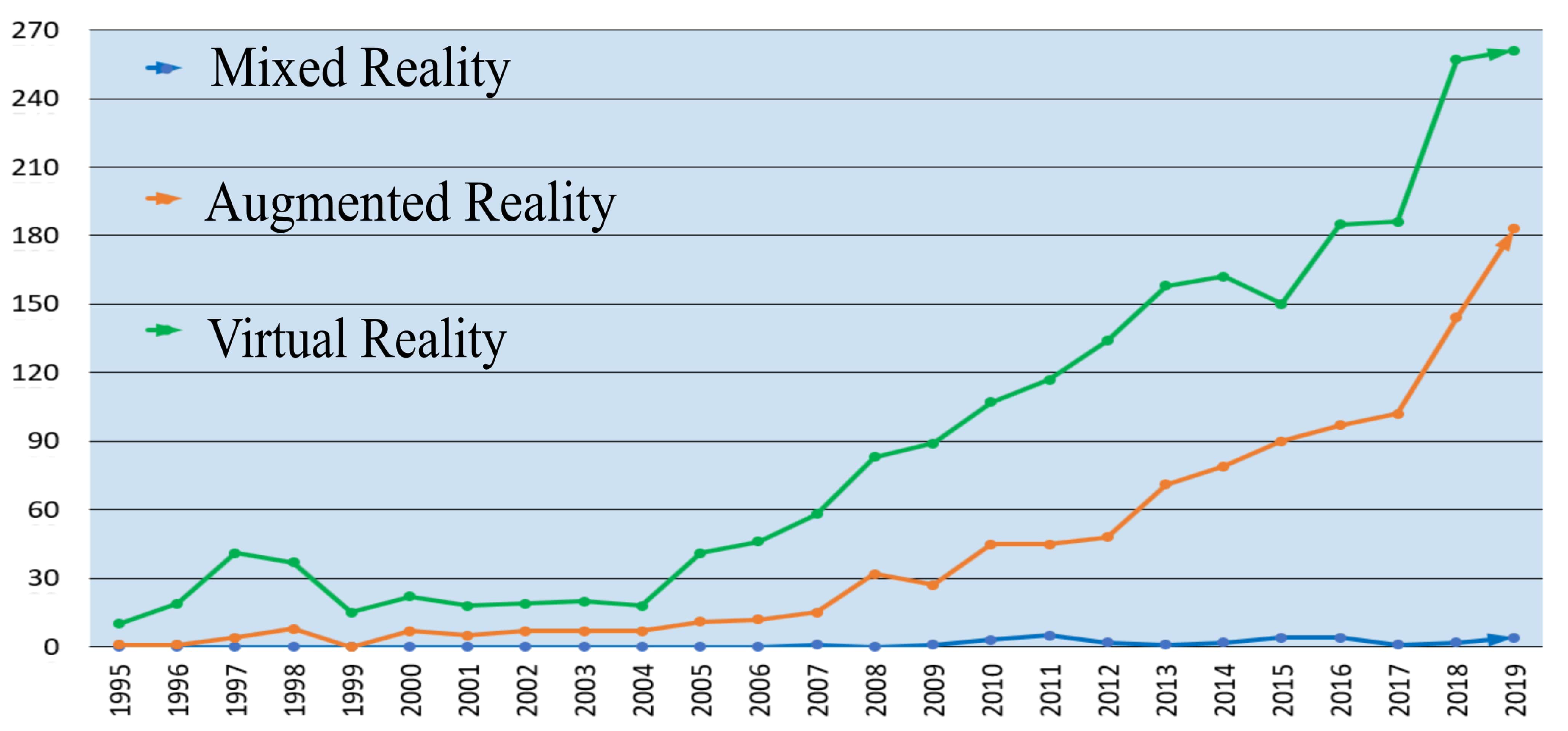

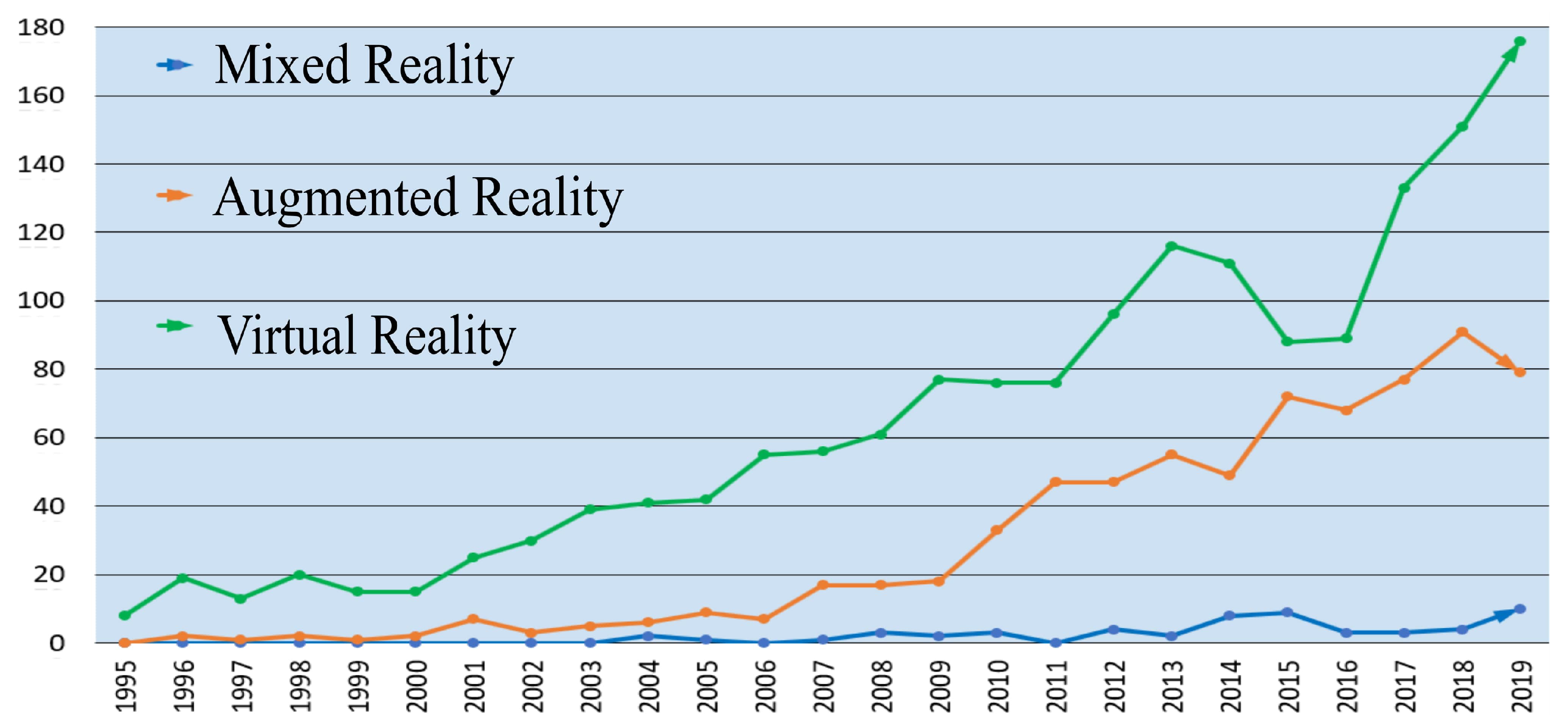

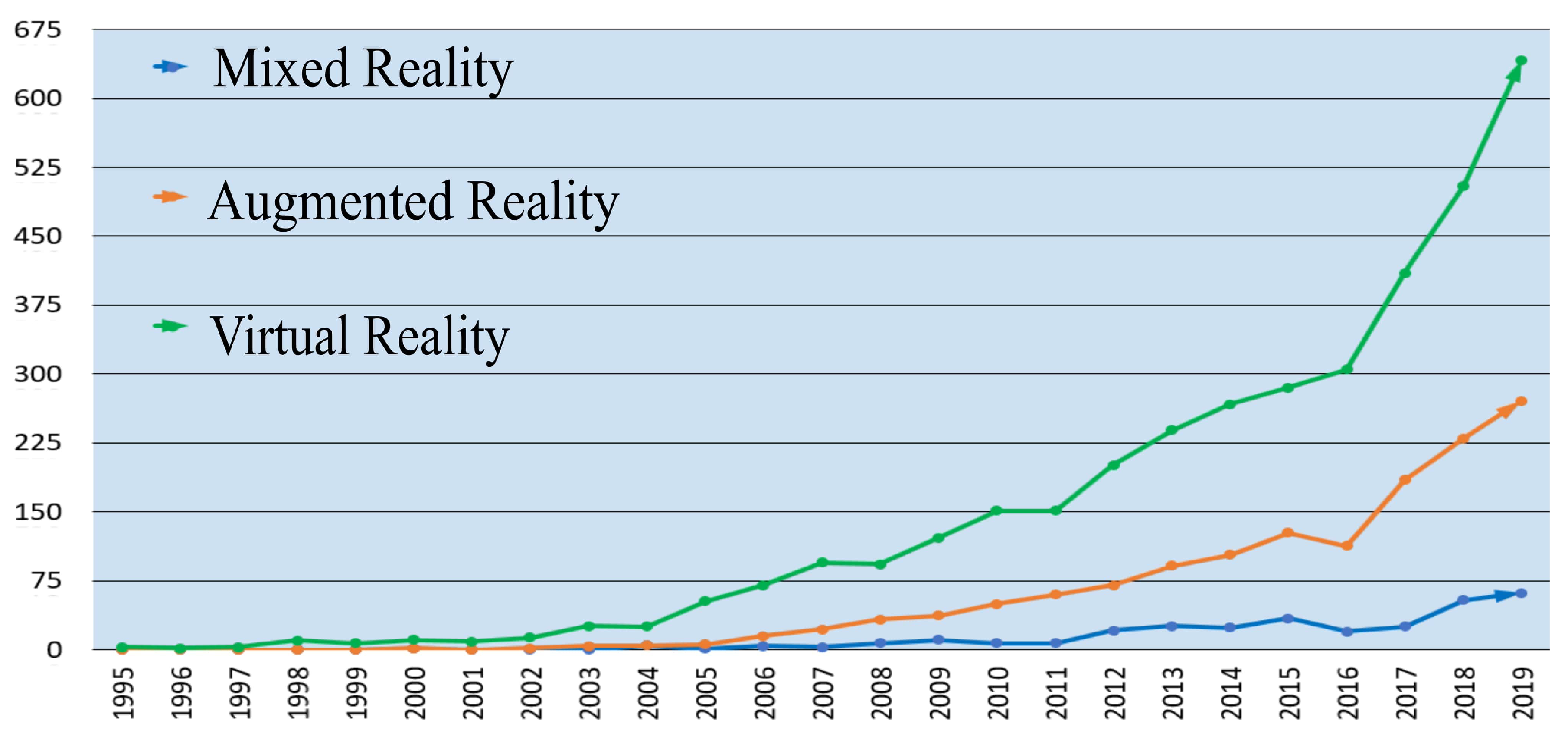

2. A Brief History of AR

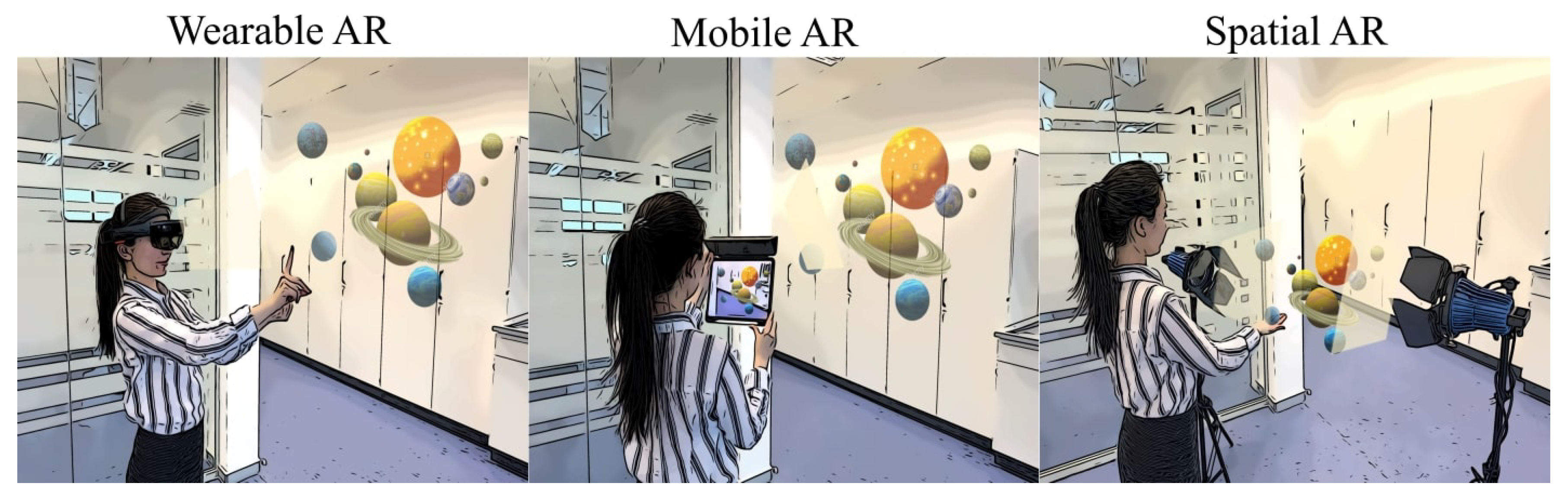

3. AR Technology

4. AR Applications in Robotics

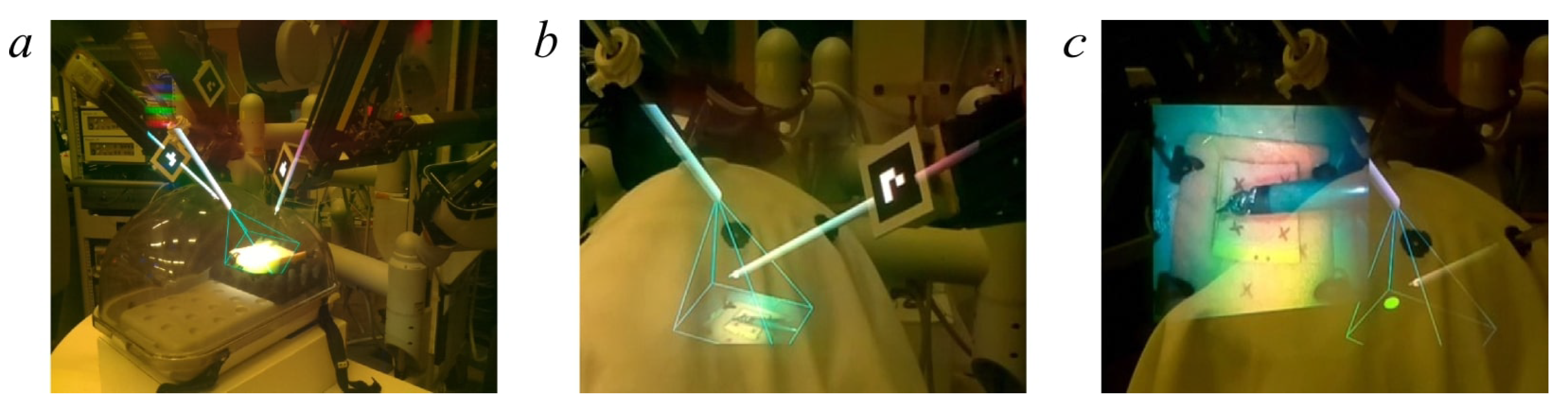

4.1. AR in Medical Robotics

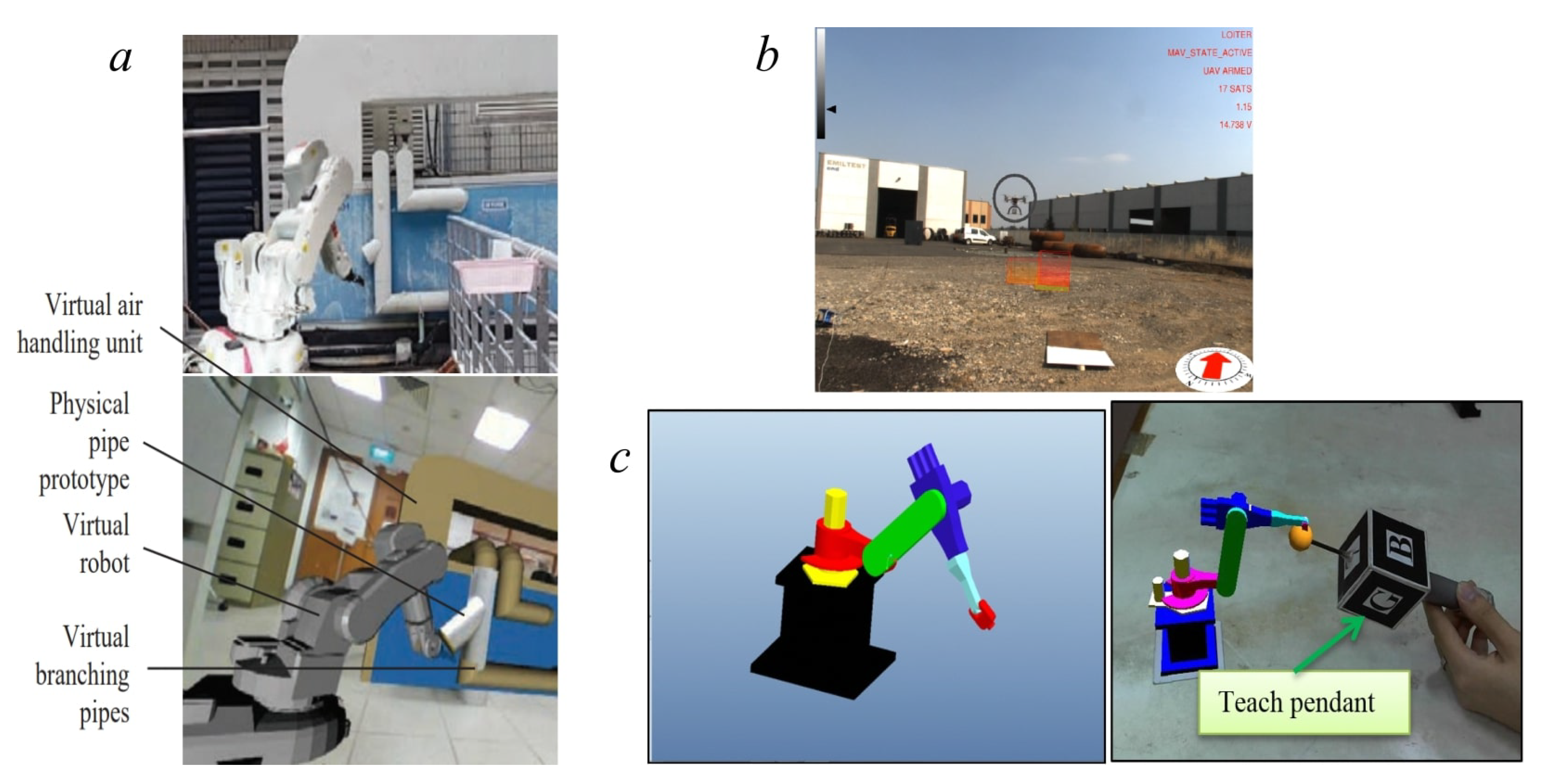

4.2. AR in Robot Control and Planning

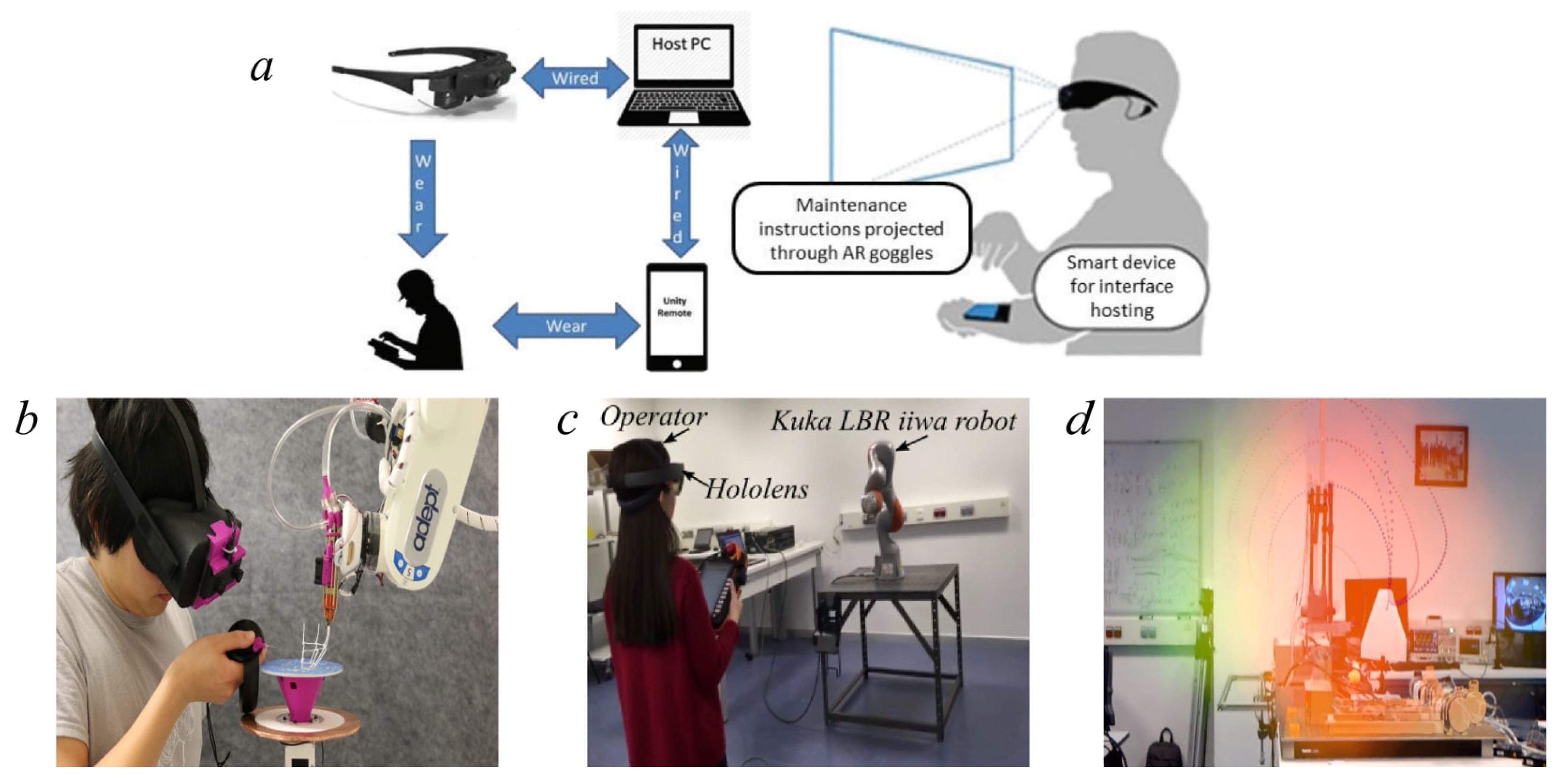

4.3. AR for Human-Robot Interaction

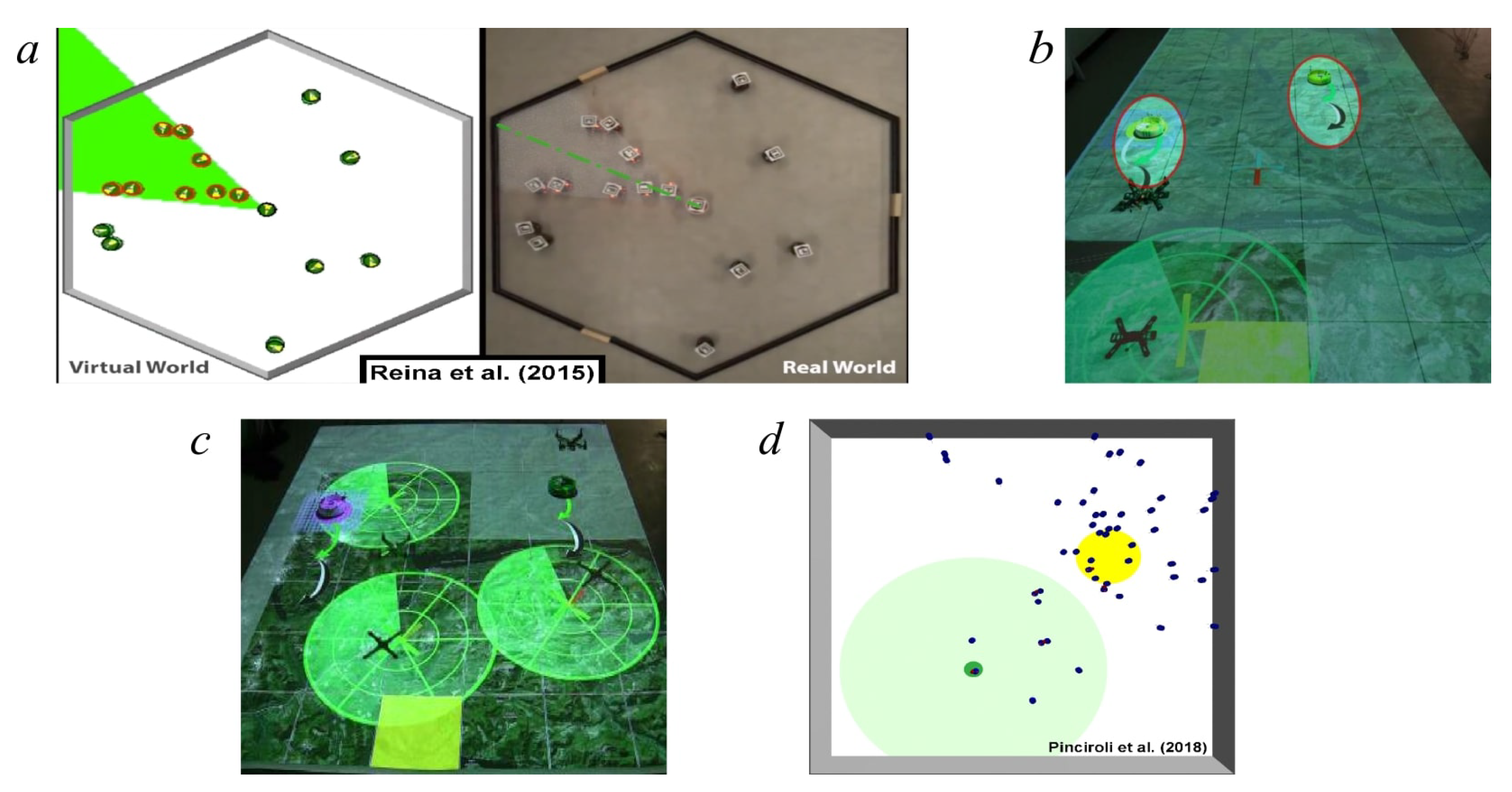

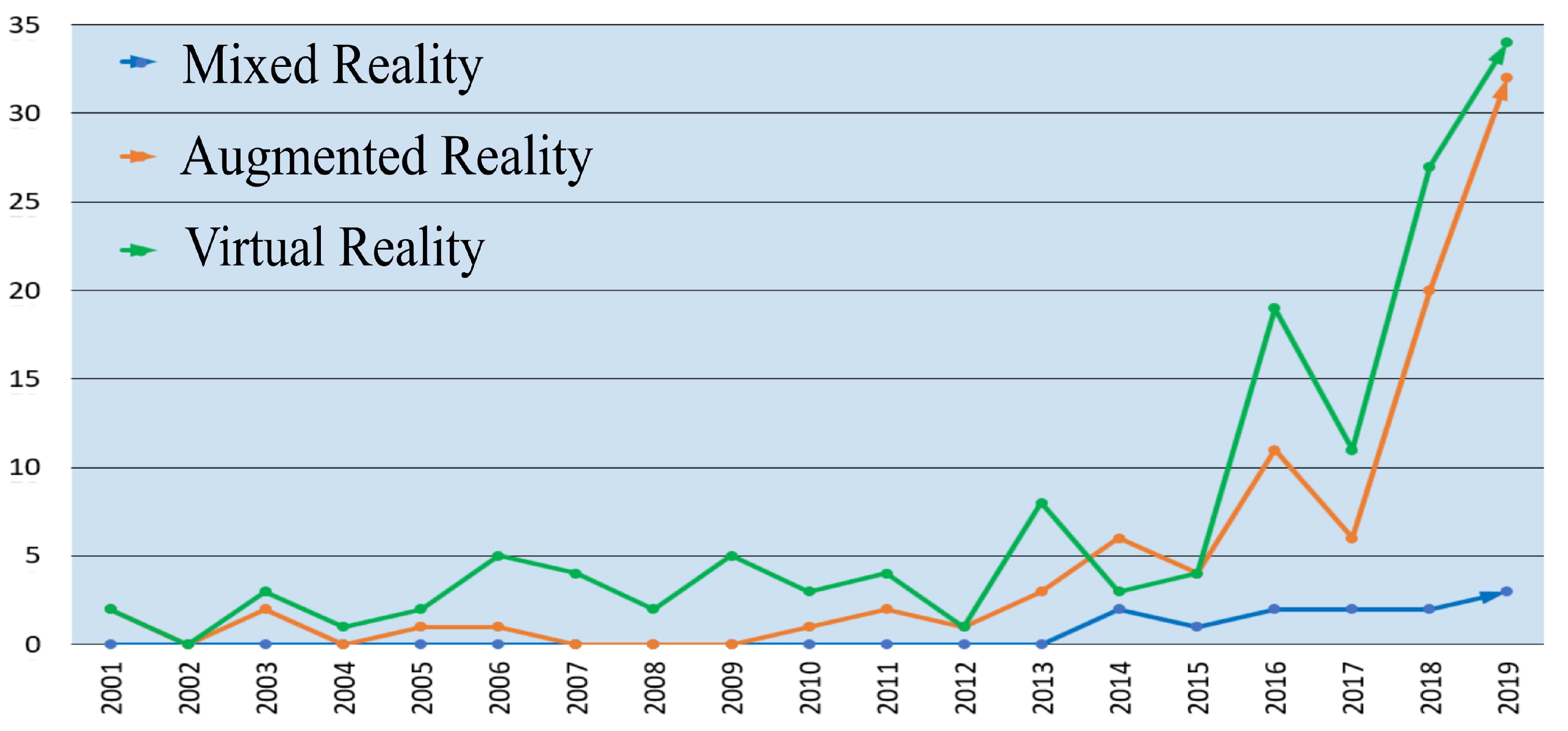

4.4. AR-Based Swarm Robot Research

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AR | Augmented Reality |
| BCI | Brain-Computer Interface |
| CNC | Computer Numerical Control |
| DL | Deep Learning |
| DoF | Degrees-of-Freedom |
| HMD | Head-Mounted Display |
| HRI | Human-Robot Interaction |
| MR | Mixed Reality |
| RAS | Robot Assisted Surgery |
| ROS | Robot Operating System |
| SAR | Spatial Augmented Reality |
| SLAM | Simultaneous Localization and Mapping |
| VR | Virtual Reality |

References

- Gorecky, D.; Schmitt, M.; Loskyll, M.; Zühlke, D. Human-machine-interaction in the Industry 4.0 era. In Proceedings of the 12th IEEE International Conference on Industrial Informatics (INDIN), Porto Alegre, Brazil, 27–30 July 2014; pp. 289–294.

- Hockstein, N.G.; Gourin, C.; Faust, R.; Terris, D.J. A history of robots: From science fiction to surgical robotics. J. Robot. Surg. 2007, 1, 113–118.

- Kehoe, B.; Patil, S.; Abbeel, P.; Goldberg, K. A survey of research on cloud robotics and automation. IEEE Trans. Autom. Sci. Eng. 2015, 12, 398–409.

- Azuma, R.T. A survey of augmented reality. Presence: Teleoperators Virtual Env. 1997, 6, 355–385.

- Azuma, R.; Baillot, Y.; Behringer, R.; Feiner, S.; Julier, S.; MacIntyre, B. Recent advances in augmented reality. IEEE Comput. Graph. Appl. 2001, 21, 34–47.

- Kato, H.; Billinghurst, M. Marker tracking and HMD calibration for a video-based augmented reality conferencing system. In Proceedings of the 2nd IEEE and ACM International Workshop on Augmented Reality (IWAR’99), San Francisco, CA, USA, 20–21 October 1999; pp. 85–94.

- Zhang, X.; Fronz, S.; Navab, N. Visual marker detection and decoding in AR systems: A comparative study. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Darmstadt, Germany, 1 October 2002; pp. 97–106.

- Welch, G.; Foxlin, E. Motion tracking survey. IEEE Comput. Graph. Appl. 2002, 22, 24–38.

- Zhou, F.; Duh, H.B.L.; Billinghurst, M. Trends in augmented reality tracking, interaction and display: A review of ten years of ISMAR. In Proceedings of the IEEE/ACM International Symposium on Mixed and Augmented Reality (ISMAR), Cambridge, UK, 15–18 September 2008; pp. 193–202.

- Wang, X.; Ong, S.K.; Nee, A.Y. A comprehensive survey of augmented reality assembly research. Adv. Manuf. 2016, 4, 1–22.

- Fuentes-Pacheco, J.; Ruiz-Ascencio, J.; Rendón-Mancha, J.M. Visual simultaneous localization and mapping: A survey. Artif. Intell. Rev. 2015, 43, 55–81.

- Marchand, E.; Uchiyama, H.; Spindler, F. Pose estimation for augmented reality: A hands-on survey. IEEE Trans. Vis. Comput. Graph. 2015, 22, 2633–2651.

- Mekni, M.; Lemieux, A. Augmented reality: Applications, challenges and future trends. Appl. Comput. Sci. 2014, 205–214.

- Nicolau, S.; Soler, L.; Mutter, D.; Marescaux, J. Augmented reality in laparoscopic surgical oncology. Surg. Oncol. 2011, 20, 189–201.

- Nee, A.Y.; Ong, S.; Chryssolouris, G.; Mourtzis, D. Augmented reality applications in design and manufacturing. CIRP Annals 2012, 61, 657–679.

- Chatzopoulos, D.; Bermejo, C.; Huang, Z.; Hui, P. Mobile augmented reality survey: From where we are to where we go. IEEE Access 2017, 5, 6917–6950.

- Sutherland, I.E. The Ultimate Display. In Proceedings of the IFIP Congress; Macmillan and Co.: London, UK, 1965; pp. 506–508.

- Sutherland, I.E. A head-mounted three dimensional display. In Proceedings of the Fall Joint Computer Conference, New York, NY, USA, 9–11 December 1968; pp. 757–764.

- Teitel, M.A. The Eyephone: A head-mounted stereo display. In Stereoscopic Displays and Applications; SPIE: Bellingham, WA, USA, 1990; Volume 1256, pp. 168–171.

- MacKenzie, I.S. Input devices and interaction techniques for advanced computing. In Virtual Environments and Advanced Interface Design; Oxford University Press: Oxford, UK, June 1995; pp. 437–470.

- Rosenberg, L.B. The Use of Virtual Fixtures as Perceptual Overlays to Enhance Operator Performance in Remote Environments; Technical Report; Stanford University Center for Design Research: Stanford, CA, USA, 1992.

- Rosenberg, L.B. Virtual fixtures: Perceptual tools for telerobotic manipulation. In Proceedings of the IEEE Virtual Reality Annual International Symposium, Seattle, WA, USA, 18–22 September 1993; pp. 76–82.

- Milgram, P.; Kishino, F. A taxonomy of mixed reality visual displays. IEICE Trans. Inf. Syst. 1994, 77, 1321–1329.

- Durrant-Whyte, H.; Bailey, T. Simultaneous Localization and Mapping: Part I. IEEE Robot. Autom. Mag. 2006, 13, 99–110.

- Rapp, D.; Müller, J.; Bucher, K.; von Mammen, S. Pathomon: A Social Augmented Reality Serious Game. In Proceedings of the International Conference on Virtual Worlds and Games for Serious Applications (VS-Games), Würzburg, Germany, 5–7 September 2018.

- Bodner, J.; Wykypiel, H.; Wetscher, G.; Schmid, T. First experiences with the da Vinci operating robot in thoracic surgery. Eur. J. Cardio-Thorac. Surg. 2004, 25, 844–851.

- Vuforia, S. Vuforia Developer Portal. Available online: https://developer.vuforia.com/ (accessed on 28 January 2020).

- Malý, I.; Sedláček, D.; Leitao, P. Augmented reality experiments with industrial robot in Industry 4.0 environment. In Proceedings of the International Conference on Industrial Informatics (INDIN), Poitiers, France, 19–21 July 2016; pp. 176–181.

- ARKit. Developer Documentation, Apple Inc. Available online: https://developer.apple.com/documentation/arkit (accessed on 10 January 2020).

- ARCore. Developer Documentation, Google Inc. Available online: https://developers.google.com/ar (accessed on 15 January 2020).

- Balan, A.; Flaks, J.; Hodges, S.; Isard, M.; Williams, O.; Barham, P.; Izadi, S.; Hiliges, O.; Molyneaux, D.; Kim, D.; et al. Distributed Asynchronous Localization and Mapping for Augmented Reality. U.S. Patent 8,933,931, 13 January 2015.

- Balachandreswaran, D.; Njenga, K.M.; Zhang, J. Augmented Reality System and Method for Positioning and Mapping. U.S. Patent App. 14/778,855, 21 July 2016.

- Lalonde, J.F. Deep Learning for Augmented Reality. In Proceedings of the IEEE Workshop on Information Optics (WIO), Quebec, Canada, 16–19 July 2018; pp. 1–3.

- Park, Y.J.; Ro, H.; Han, T.D. Deep-ChildAR bot: Educational activities and safety care augmented reality system with deep learning for preschool. In Proceedings of the ACM SIGGRAPH Posters, Los Angeles, CA, USA, 28 July–1 August 2019; p. 26.

- Azuma, R.; Bishop, G. Improving static and dynamic registration in an optical see-through HMD. In Proceedings of the Annual Conference on Computer Graphics and Interactive Techniques, Orlando, FL, USA, 24–29 July 1994; pp. 197–204.

- Takagi, A.; Yamazaki, S.; Saito, Y.; Taniguchi, N. Development of a stereo video see-through HMD for AR systems. In Proceedings of the IEEE/ACM International Symposium on Augmented Reality (ISAR), Munich, Germany, 5–6 October 2000; pp. 68–77.

- Behzadan, A.H.; Timm, B.W.; Kamat, V.R. General-purpose modular hardware and software framework for mobile outdoor augmented reality applications in engineering. Adv. Eng. Inform. 2008, 22, 90–105.

- Yew, A.; Ong, S.; Nee, A. Immersive augmented reality environment for the teleoperation of maintenance robots. Procedia CIRP 2017, 61, 305–310.

- Brizzi, F.; Peppoloni, L.; Graziano, A.; Di, S.E.; Avizzano, C.A.; Ruffaldi, E. Effects of augmented reality on the performance of teleoperated industrial assembly tasks in a robotic embodiment. IEEE Trans. Hum. Mach. Syst. 2017, 48, 197–206.

- Peng, H.; Briggs, J.; Wang, C.Y.; Guo, K.; Kider, J.; Mueller, S.; Baudisch, P.; Guimbretière, F. RoMA: Interactive fabrication with augmented reality and a robotic 3D printer. In Proceedings of the ACM/CHI Conference on Human Factors in Computing Systems, Montreal, QC, Canada, 21–26 April 2018; p. 579.

- von Mammen, S.; Hamann, H.; Heider, M. Robot gardens: An augmented reality prototype for plant-robot biohybrid systems. In Proceedings of the ACM Conference on Virtual Reality Software and Technology, Munich, Germany, 2–4 November 2016; pp. 139–142.

- Lee, A.; Jang, I. Robust Multithreaded Object Tracker through Occlusions for Spatial Augmented Reality. ETRI J. 2018, 40, 246–256.

- Diana, M.; Marescaux, J. Robotic surgery. Br. J. Surg. 2015, 102, e15–e28.

- Chowriappa, A.; Raza, S.J.; Fazili, A.; Field, E.; Malito, C.; Samarasekera, D.; Shi, Y.; Ahmed, K.; Wilding, G.; Kaouk, J.; et al. Augmented-reality-based skills training for robot-assisted urethrovesical anastomosis: A multi-institutional randomised controlled trial. BJU Int. 2015, 115, 336–345.

- Costa, N.; Arsenio, A. Augmented reality behind the wheel-human interactive assistance by mobile robots. In Proceedings of the IEEE International Conference on Automation, Robotics and Applications (ICARA), Queenstown, New Zealand, 17–19 February 2015; pp. 63–69.

- Ocampo, R.; Tavakoli, M. Visual-Haptic Colocation in Robotic Rehabilitation Exercises Using a 2D Augmented-Reality Display. In Proceedings of the IEEE International Symposium on Medical Robotics (ISMR), Atlanta, GA, USA, 3–5 April 2019.

- Pessaux, P.; Diana, M.; Soler, L.; Piardi, T.; Mutter, D.; Marescaux, J. Towards cybernetic surgery: Robotic and augmented reality-assisted liver segmentectomy. Langenbeck’s Arch. Surg. 2015, 400, 381–385.

- Liu, W.P.; Richmon, J.D.; Sorger, J.M.; Azizian, M.; Taylor, R.H. Augmented reality and cone beam CT guidance for transoral robotic surgery. J. Robot. Surg. 2015, 9, 223–233.

- Navab, N.; Hennersperger, C.; Frisch, B.; Fürst, B. Personalized, relevance-based multimodal robotic imaging and augmented reality for computer assisted interventions. Med. Image Anal. 2016, 33, 64–71.

- Dickey, R.M.; Srikishen, N.; Lipshultz, L.I.; Spiess, P.E.; Carrion, R.E.; Hakky, T.S. Augmented reality assisted surgery: A urologic training tool. Asian J. Androl. 2016, 18, 732–734.

- Lin, L.; Shi, Y.; Tan, A.; Bogari, M.; Zhu, M.; Xin, Y.; Xu, H.; Zhang, Y.; Xie, L.; Chai, G. Mandibular angle split osteotomy based on a novel augmented reality navigation using specialized robot-assisted arms: A feasibility study. J. Cranio Maxillofac. Surg. 2016, 44, 215–223.

- Wang, J.; Suenaga, H.; Yang, L.; Kobayashi, E.; Sakuma, I. Video see-through augmented reality for oral and maxillofacial surgery. Int. J. Med. Robot. Comput. Assist. Surg. 2017, 13, e1754.

- Zhou, C.; Zhu, M.; Shi, Y.; Lin, L.; Chai, G.; Zhang, Y.; Xie, L. Robot-assisted surgery for mandibular angle split osteotomy using augmented reality: Preliminary results on clinical animal experiment. Aesthetic Plast. Surg. 2017, 41, 1228–1236.

- Bostick, J.E.; Ganci, J.J.M.; Keen, M.G.; Rakshit, S.K.; Trim, C.M. Augmented Control of Robotic Prosthesis by a Cognitive System. U.S. Patent 9,717,607, 1 August 2017.

- Qian, L.; Deguet, A.; Kazanzides, P. ARssist: Augmented reality on a head-mounted display for the first assistant in robotic surgery. Healthc. Technol. Lett. 2018, 5, 194–200.

- Porpiglia, F.; Checcucci, E.; Amparore, D.; Autorino, R.; Piana, A.; Bellin, A.; Piazzolla, P.; Massa, F.; Bollito, E.; Gned, D.; et al. Augmented-reality robot-assisted radical prostatectomy using hyper-accuracy three-dimensional reconstruction (HA 3D) technology: A radiological and pathological study. BJU Int. 2019, 123, 834–845.

- Bernhardt, S.; Nicolau, S.A.; Soler, L.; Doignon, C. The status of augmented reality in laparoscopic surgery as of 2016. Med. Image Anal. 2017, 37, 66–90.

- Madhavan, K.; Kolcun, J.P.C.; Chieng, L.O.; Wang, M.Y. Augmented-reality integrated robotics in neurosurgery: Are we there yet? Neurosurg. Focus 2017, 42, E3.

- Qian, L.; Deguet, A.; Wang, Z.; Liu, Y.H.; Kazanzides, P. Augmented reality assisted instrument insertion and tool manipulation for the first assistant in robotic surgery. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Montreal, Canada, 20–24 May 2019; pp. 5173–5179.

- Hanna, M.G.; Ahmed, I.; Nine, J.; Prajapati, S.; Pantanowitz, L. Augmented reality technology using Microsoft HoloLens in anatomic pathology. Arch. Pathol. Lab. Med. 2018, 142, 638–644.

- Quero, G.; Lapergola, A.; Soler, L.; Shabaz, M.; Hostettler, A.; Collins, T.; Marescaux, J.; Mutter, D.; Diana, M.; Pessaux, P. Virtual and augmented reality in oncologic liver surgery. Surg. Oncol. Clin. 2019, 28, 31–44.

- Qian, L.; Zhang, X.; Deguet, A.; Kazanzides, P. ARAMIS: Augmented Reality Assistance for Minimally Invasive Surgery Using a Head-Mounted Display. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Shenzhen, China, 13–17 October 2019; pp. 74–82.

- Qian, L.; Wu, J.Y.; DiMaio, S.P.; Navab, N.; Kazanzides, P. A Review of Augmented Reality in Robotic-Assisted Surgery. IEEE Trans. Med. Robot. Bionics 2020, 2, 1–16.

- Gacem, H.; Bailly, G.; Eagan, J.; Lecolinet, E. Finding objects faster in dense environments using a projection augmented robotic arm. In Proceedings of the IFIP Conference on Human-Computer Interaction, Bamberg, Germany, 14–18 September 2015; pp. 221–238.

- Kuriya, R.; Tsujimura, T.; Izumi, K. Augmented reality robot navigation using infrared marker. In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (ROMAN), Kobe, Japan, 31 August–4 September 2015; pp. 450–455.

- Dias, T.; Miraldo, P.; Gonçalves, N.; Lima, P.U. Augmented reality on robot navigation using non-central catadioptric cameras. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015; pp. 4999–5004.

- Stadler, S.; Kain, K.; Giuliani, M.; Mirnig, N.; Stollnberger, G.; Tscheligi, M. Augmented reality for industrial robot programmers: Workload analysis for task-based, augmented reality-supported robot control. In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (ROMAN), New York City, NY, USA, 26–31 August 2016; pp. 179–184.

- Papachristos, C.; Alexis, K. Augmented reality-enhanced structural inspection using aerial robots. In Proceedings of the IEEE International Symposium on Intelligent Control (ISIC), Buenos Aires, Argentina, 19–22 September 2016; pp. 185–190.

- Aleotti, J.; Micconi, G.; Caselli, S.; Benassi, G.; Zambelli, N.; Bettelli, M.; Zappettini, A. Detection of nuclear sources by UAV teleoperation using a visuo-haptic augmented reality interface. Sensors 2017, 17, 2234.

- Kundu, A.S.; Mazumder, O.; Dhar, A.; Lenka, P.K.; Bhaumik, S. Scanning camera and augmented reality based localization of omnidirectional robot for indoor application. Procedia Comput. Sci. 2017, 105, 27–33.

- Zhu, D.; Veloso, M. Virtually adapted reality and algorithm visualization for autonomous robots. In Robot World Cup; Springer: Berlin/Heidelberg, Germany, 2016; pp. 452–464.

- Pai, Y.S.; Yap, H.J.; Dawal, S.Z.M.; Ramesh, S.; Phoon, S.Y. Virtual planning, control, and machining for a modular-based automated factory operation in an augmented reality environment. Sci. Rep. 2016, 6, 27380.

- Lee, A.; Lee, J.H.; Kim, J. Data-Driven Kinematic Control for Robotic Spatial Augmented Reality System with Loose Kinematic Specifications. ETRI J. 2016, 38, 337–346.

- Kamoi, T.; Inaba, G. Robot System Having Augmented Reality-compatible Display. U.S. Patent App. 14/937,883, 9 June 2016.

- Guhl, J.; Tung, S.; Kruger, J. Concept and architecture for programming industrial robots using augmented reality with mobile devices like Microsoft HoloLens. In Proceedings of the IEEE International Conference on Emerging Technologies and Factory Automation (ETFA), Limassol, Cyprus, 12–15 September 2017; pp. 1–4.

- Malayjerdi, E.; Yaghoobi, M.; Kardan, M. Mobile robot navigation based on Fuzzy Cognitive Map optimized with Grey Wolf Optimization Algorithm used in Augmented Reality. In Proceedings of the IEEE/RSI International Conference on Robotics and Mechatronics (ICRoM), Tehran, Iran, 25–27 October 2017; pp. 211–218.

- Ni, D.; Yew, A.; Ong, S.; Nee, A. Haptic and visual augmented reality interface for programming welding robots. Adv. Manuf. 2017, 5, 191–198.

- Liu, H.; Zhang, Y.; Si, W.; Xie, X.; Zhu, Y.; Zhu, S.C. Interactive robot knowledge patching using augmented reality. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 1947–1954.

- Quintero, C.P.; Li, S.; Pan, M.K.; Chan, W.P.; Van der Loos, H.M.; Croft, E. Robot programming through augmented trajectories in augmented reality. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 1838–1844.

- Gradmann, M.; Orendt, E.M.; Schmidt, E.; Schweizer, S.; Henrich, D. Augmented Reality Robot Operation Interface with Google Tango. In Proceedings of the International Symposium on Robotics (ISR), Munich, Germany, 20–21 June 2018; pp. 170–177.

- Hoffmann, H.; Daily, M.J. System and Method for Robot Supervisory Control with an Augmented Reality User Interface. U.S. Patent 9,880,553, 30 January 2018.

- Chan, W.P.; Quintero, C.P.; Pan, M.K.; Sakr, M.; Van der Loos, H.M.; Croft, E. A Multimodal System using Augmented Reality, Gestures, and Tactile Feedback for Robot Trajectory Programming and Execution. In Proceedings of the ICRA Workshop on Robotics in Virtual Reality, Brisbane, Australia, 21–25 May 2018.

- Mourtzis, D. Simulation in the design and operation of manufacturing systems: State of the art and new trends. Int. J. Prod. Res. 2019, 1–23.

- Mourtzis, D.; Zogopoulos, V. Augmented reality application to support the assembly of highly customized products and to adapt to production re-scheduling. Int. J. Adv. Manuf. Technol. 2019, 1–12.

- Mourtzis, D.; Samothrakis, V.; Zogopoulos, V.; Vlachou, E. Warehouse Design and Operation using Augmented Reality technology: A Papermaking Industry Case Study. Procedia CIRP 2019, 79, 574–579.

- Ong, S.; Yew, A.; Thanigaivel, N.; Nee, A. Augmented reality-assisted robot programming system for industrial applications. Robot. Comput. Integr. Manuf. 2020, 61, 101820.

- Ong, S.K.; Nee, A.Y.C.; Yew, A.W.W.; Thanigaivel, N.K. AR-assisted robot welding programming. Adv. Manuf. 2020, 8, 40–48.

- Avalle, G.; De Pace, F.; Fornaro, C.; Manuri, F.; Sanna, A. An Augmented Reality System to Support Fault Visualization in Industrial Robotic Tasks. IEEE Access 2019, 7, 132343–132359.

- Gong, L.; Ong, S.; Nee, A. Projection-based augmented reality interface for robot grasping tasks. In Proceedings of the International Conference on Robotics, Control and Automation, Guangzhou, China, 26–28 July 2019; pp. 100–104.

- Gurevich, P.; Lanir, J.; Cohen, B. Design and implementation of teleadvisor: A projection-based augmented reality system for remote collaboration. Comput. Support. Coop. Work (CSCW) 2015, 24, 527–562.

- Lv, Z.; Halawani, A.; Feng, S.; Ur, R.S.; Li, H. Touch-less interactive augmented reality game on vision-based wearable device. Pers. Ubiquitous Comput. 2015, 19, 551–567.

- Clemente, F.; Dosen, S.; Lonini, L.; Markovic, M.; Farina, D.; Cipriani, C. Humans can integrate augmented reality feedback in their sensorimotor control of a robotic hand. IEEE Trans. Hum. Mach. Syst. 2016, 47, 583–589.

- Gong, L.; Gong, C.; Ma, Z.; Zhao, L.; Wang, Z.; Li, X.; Jing, X.; Yang, H.; Liu, C. Real-time human-in-the-loop remote control for a life-size traffic police robot with multiple augmented reality aided display terminals. In Proceedings of the IEEE International Conference on Advanced Robotics and Mechatronics (ICARM), Hefei & Tai’an, China, 27–31 August 2017; pp. 420–425.

- Piumatti, G.; Sanna, A.; Gaspardone, M.; Lamberti, F. Spatial augmented reality meets robots: Human-machine interaction in cloud-based projected gaming environments. In Proceedings of the IEEE International Conference on Consumer Electronics (ICCE), Berlin, Germany, 3–6 September 2017; pp. 176–179.

- Dinh, H.; Yuan, Q.; Vietcheslav, I.; Seet, G. Augmented reality interface for taping robot. In Proceedings of the IEEE International Conference on Advanced Robotics (ICAR), Hong Kong, China, 10–12 July 2017; pp. 275–280.

- Lin, Y.; Song, S.; Meng, M.Q.H. The implementation of augmented reality in a robotic teleoperation system. In Proceedings of the IEEE International Conference on Real-time Computing and Robotics (RCAR), Angkor Wat, Cambodia, 6–9 June 2016; pp. 134–139.

- Shchekoldin, A.I.; Shevyakov, A.D.; Dema, N.U.; Kolyubin, S.A. Adaptive head movements tracking algorithms for AR interface controlled telepresence robot. In Proceedings of the IEEE International Conference on Methods and Models in Automation and Robotics (MMAR), Miedzyzdroje, Poland, 28–31 August 2017; pp. 728–733.

- Lin, K.; Rojas, J.; Guan, Y. A vision-based scheme for kinematic model construction of re-configurable modular robots. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 2751–2757.

- Mourtzis, D.; Zogopoulos, V.; Vlachou, E. Augmented reality application to support remote maintenance as a service in the robotics industry. Procedia CIRP 2017, 63, 46–51.

- Makhataeva, Z.; Zhakatayev, A.; Varol, H.A. Safety Aura Visualization for Variable Impedance Actuated Robots. In Proceedings of the IEEE/SICE International Symposium on System Integration (SII), Paris, France, 14–16 January 2019; pp. 805–810.

- Walker, M.; Hedayati, H.; Lee, J.; Szafir, D. Communicating robot motion intent with augmented reality. In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), Chicago, IL, USA, 5–8 March 2018; pp. 316–324.

- Walker, M.E.; Hedayati, H.; Szafir, D. Robot Teleoperation with Augmented Reality Virtual Surrogates. In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), Daegu, Korea, 11–14 March 2019; pp. 202–210.

- Hedayati, H.; Walker, M.; Szafir, D. Improving collocated robot teleoperation with augmented reality. In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), Chicago, IL, USA, 5–8 March 2018; pp. 78–86.

- Lee, D.; Park, Y.S. Implementation of Augmented Teleoperation System Based on Robot Operating System (ROS). In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 5497–5502.

- He, Y.; Fukuda, O.; Ide, S.; Okumura, H.; Yamaguchi, N.; Bu, N. Simulation system for myoelectric hand prosthesis using augmented reality. In Proceedings of the IEEE International Conference on Robotics and Biomimetics (ROBIO), Parisian Macao, China, 5–8 December 2017; pp. 1424–1429.

- Meli, L.; Pacchierotti, C.; Salvietti, G.; Chinello, F.; Maisto, M.; De Luca, A.; Prattichizzo, D. Combining wearable finger haptics and Augmented Reality: User evaluation using an external camera and the Microsoft HoloLens. IEEE Robot. Autom. Lett. 2018, 3, 4297–4304.

- Wang, Y.; Zeng, H.; Song, A.; Xu, B.; Li, H.; Zhu, L.; Wen, P.; Liu, J. Robotic arm control using hybrid brain-machine interface and augmented reality feedback. In Proceedings of the IEEE/EMBS International Conference on Neural Engineering (NER), Shanghai, China, 25–28 May 2017; pp. 411–414.

- Zeng, H.; Wang, Y.; Wu, C.; Song, A.; Liu, J.; Ji, P.; Xu, B.; Zhu, L.; Li, H.; Wen, P. Closed-loop hybrid gaze brain-machine interface based robotic arm control with augmented reality feedback. Front. Neurorobotics 2017, 11, 60.

- Si-Mohammed, H.; Petit, J.; Jeunet, C.; Argelaguet, F.; Spindler, F.; Evain, A.; Roussel, N.; Casiez, G.; Anatole, L. Towards BCI-based Interfaces for Augmented Reality: Feasibility, Design and Evaluation. IEEE Trans. Vis. Comput. Graph. 2018, 26, 1608–1621.

- Urbani, J.; Al-Sada, M.; Nakajima, T.; Höglund, T. Exploring Augmented Reality Interaction for Everyday Multipurpose Wearable Robots. In Proceedings of the IEEE International Conference on Embedded and Real-Time Computing Systems and Applications (RTCSA), Hakodate, Japan, 28–31 August 2018; pp. 209–216.

- Williams, T.; Szafir, D.; Chakraborti, T.; Ben, A.H. Virtual, augmented, and mixed reality for human–robot interaction. In Proceedings of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), Chicago, IL, USA, 5–8 March 2018; pp. 403–404.

- Sheridan, T.B. Human-robot interaction: Status and challenges. Hum. Factors 2016, 58, 525–532.

- Chan, W.P.; Karim, A.; Quintero, C.P.; Van der Loos, H.M.; Croft, E. Virtual barriers in augmented reality for safe human–robot collaboration in manufacturing. In Proceedings of the Robotic Co-workers 4.0, Madrid, Spain, 5 October 2018.

- Luebbers, M.B.; Brooks, C.; Kim, M.J.; Szafir, D.; Hayes, B. Augmented reality interface for constrained learning from demonstration. In Proceedings of the International Workshop on Virtual, Augmented, and Mixed Reality for HRI (VAM-HRI), Daegu, Korea, 11–14 March 2019.

- Reina, A.; Salvaro, M.; Francesca, G.; Garattoni, L.; Pinciroli, C.; Dorigo, M.; Birattari, M. Augmented reality for robots: Virtual sensing technology applied to a swarm of e-pucks. In Proceedings of the NASA/ESA Conference on Adaptive Hardware and Systems (AHS), Montreal, QC, Canada, 15–18 June 2015.

- Omidshafiei, S.; Agha-Mohammadi, A.A.; Chen, Y.F.; Ure, N.K.; Liu, S.Y.; Lopez, B.T.; Surati, R.; How, J.P.; Vian, J. Measurable augmented reality for prototyping cyberphysical systems: A robotics platform to aid the hardware prototyping and performance testing of algorithms. IEEE Control Syst. Mag. 2016, 36, 65–87.

- Pinciroli, C.; Talamali, M.S.; Reina, A.; Marshall, J.A.; Trianni, V. Simulating Kilobots within ARGoS: Models and experimental validation. In Proceedings of the International Conference on Swarm Intelligence (ICSI), Shanghai, China, 17–22 June 2018; pp. 176–187.

- Reina, A.; Cope, A.J.; Nikolaidis, E.; Marshall, J.A.; Sabo, C. ARK: Augmented reality for Kilobots. IEEE Robot. Autom. Lett. 2017, 2, 1755–1761.

- Valentini, G.; Antoun, A.; Trabattoni, M.; Wiandt, B.; Tamura, Y.; Hocquard, E.; Trianni, V.; Dorigo, M. Kilogrid: A novel experimental environment for the kilobot robot. Swarm Intell. 2018, 12, 245–266.

- Reina, A.; Ioannou, V.; Chen, J.; Lu, L.; Kent, C.; Marshall, J.A. Robots as Actors in a Film: No War, A Robot Story. arXiv 2019, arXiv:1910.12294.

- Llenas, A.F.; Talamali, M.S.; Xu, X.; Marshall, J.A.; Reina, A. Quality-sensitive foraging by a robot swarm through virtual pheromone trails. In Proceedings of the International Conference on Swarm Intelligence, Shanghai, China, 17–22 June 2018; pp. 135–149.

- Talamali, M.S.; Bose, T.; Haire, M.; Xu, X.; Marshall, J.A.; Reina, A. Sophisticated Collective Foraging with Minimalist Agents: A Swarm Robotics Test. Swarm Intell. 2019, 6, 30–34.

| Work | Application | AR System Components | Robot | Limitations |

|---|---|---|---|---|

| [44] | RAS: training tool | Haptic-enabled AR-based training system (HoST), Robot-assisted Surgical Simulator (RoSS) | da Vinci Surgical System | Limitations in the evaluation of the cognitive load when using the simulation system, visual errors when the surgical is places into the different position |

| [45] | Human Interactive Assistance | SAR system: digital projectors, camera, and Kinect depth camera | Mobile robot | Spatial distortion due to the robot movement during projection, error prone robot localization |

| [47] | RAS | Robotic binocular camera, CT scan, video mixer (MX 70; Panasonic, Secaucus, NJ), VR-RENDER® software, Virtual Surgical Planning (VSP®, IRCAD) | da Vinci™ (Intuitive Surgical, Inc., Sunnyvale, CA) | Use of the fixed virtual model leading to the limited AR accuracy during the interaction with mobile and soft tissues |

| [48] | RAS | Video augmentation of the primary stereo endoscopy, volumetric CBCT scan, Visualization Toolkit, Slicer 3D bidirectional socket-based communication interface, 2D X-rays | Da Vinci si robot | Sensitivity to the marker occlusion and distortions in orientation leading to the low accuracy of vision-based resection tool |

| [50] | Augmented Reality Assisted Surgery | Google Glass® optical head-mounted display | Andrologic training tool | Technical limitation: low battery life, overheating, complexity in software integration |

| [51] | RAS: AR navigation | AR Toolkit software, display system, rapid prototyping (RP) technology(ProJet 660 Pro, 3DSYSTEM, USA) models, MicronTracker (Claron Company, Canada): optical sensors with 3 cameras, nVisor ST60 (NVIS Company, US) | Robot-assisted arms | Limited precision in cases of soft tissues within operational area |

| [52] | Oral and maxillofacial surgery | Markerless video see-through AR, video camera, optical flow tracker, cascade detector, integrator, online labeling tool, OpenGL software | | Target registration errors, uncertainty in 3D pose estimation (minimize the distance between camera and tracked object), increased time when tracking is performed |

| [53] | Mandibular angle split osteotomy | Imaging device (Siemens Somatom Definition Edge), Mimics CAD/CAM software for 3D virtual models | 7 DoF serial arm | Errors due to deviation between planned and actual drilling axes, errors during the target registration |

| [54] | Wearable devices (prosthesis) | Camera, AR glasses, AR glasses transceiver, AR glasses camera, robotic, server, cognitive system | robotic prosthetic device | |

| [55] | RAS | ARssist system: HMD (Microsoft HoloLens), endoscope camera, fiducial markers, vision-based tracking algorithm | da Vinci Research kit | Kinematic inaccuracies, marker tracking error due to the camera calibration and limited intrinsic resolution, AR system latency |

| [56] | RAS | Video augmentation of the primary stereo endoscopy | da Vinci Si robot | Sensitivity to marker occlusion and distortions in orientation |

| [46] | Rehabilitation | 2D spatial AR projector (InFocus IN116A), Unity Game Engine | 2 DoF planar rehabilitation robot (Quanser) | Occlusion problems, error-prone calibration of the projection system |

| Work | Application | AR System Components | Robot | Limitations |

|---|---|---|---|---|

| [65] | Robot navigation | Infrared camera, projector, IR filter (Fuji Film IR-76), infrared marker, ARToolkit | Wheeled mobile robot | Positioning error of the robot along the path |

| [66] | Robot navigation | Non-central catadioptric camera (perspective camera, spherical mirror) | Mobile robot (Pioneer 3-DX) | Projection error of the 3D virtual object onto the 2D plane, high computational effort |

| [67] | Robot programming | Tablet-based AR interface: Unity, Vuforia library, smartphone | Sphero 2.0 robot ball | Expertise reversal effect (a simple interface works well for expert users, and the reverse holds for beginners) |

| [68] | Robot path planning | Stereo camera, IMU, Visual–Inertial SLAM framework, nadir–facing PSEye camera system, smartphone, VR headset | Micro Aerial Vehicle | Complexity of the system, errors during the automatic generation of the optimized path |

| [70] | Robot localization | Scanning camera (360 degree), display device, visual/infrared markers, web cam | Omni wheel robot | Error-prone localization readings from detected markers during the scan, limited update rate |

| [72] | Simulation system | Camera, HMD, webcam, simulation markers | CNC machine, KUKA KR 16 KS robot | Deviation error of different modules between simulation and real setups, positioning errors due to user’s hand movements |

| [75] | Remote robot programming | HMD (Microsoft HoloLens), Tablet (Android, Windows), PC, marker | Universal Robots UR 5, Comau NJ, KUKA KR 6 | System communication errors |

| [76] | Robot navigation | Webcam, marker, Fuzzy Cognitive Map, GRAFT library | Rohan mobile robot | Error-prone registration of the camera position and marker direction, errors from the optimization process |

| [77] | Remote robot programming | Depth camera, AR display, haptic device, Kinect sensor, PC camera | Welding robot | Error-prone registration of the depth data, difference between virtual and actual paths |

| [38] | Robot teleoperation | Optical tracking system, handheld manipulator, HMD (Oculus Rift DK2), camera, fiducial marker | ABB IRB 140 robot arm | Low accuracy of optical tracking, limited performance due to dynamic obstacles |

| [69] | UAV teleoperation | 3 DoF haptic device, ground fixed camera, virtual camera, virtual compass, PC screen, GPS receiver, marker | Unmanned aerial vehicle | Error-prone registration of buildings within the real environment, limited field of view, camera calibration errors |

| [78] | Human robot interaction | HMD (Microsoft HoloLens), LeapMotion sensor, DSLR camera, Kinect camera | Rethink Baxter robot | Errors in AR marker tracking and robot localization |

| [79] | Robot programming (simulation) | HMD (Microsoft HoloLens), speech/gesture inputs, MYO armband (1 DoF control input) | 7 DoF Barrett Whole-Arm Manipulator | Accuracy of robot localization degrades with time and user movements |

| [80] | Robot operation | RGB camera, depth camera, wide-angle camera, tablet, marker | KUKA LBR robot | Time-consuming process of object detection and registration |

| [87] | Robot welding | HMD, motion capture system (three Optitrack Flex 3 cameras) | Industrial robot | Small deviation between planned and actual robot paths |

| [89] | Robot grasping | Microsoft Kinect camera, laser projector, OptiTrack motion capture system | ABB robot | Error-prone object detection due to sensor limitations, calibration errors between sensors |

| Work | Application | AR System Components | Robot | Limitations |

|---|---|---|---|---|

| [90] | Remote collaboration | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [91] | Interaction | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [92] | Prosthetic device | AR glasses (M100 Smart Glasses), data glove (Cyberglove), 4-in mobile device screen, personal computer | Robot hand (IH2 Azzurra) | Increased time necessary for grasping and completing the pick-and-lift task |

| [93] | Robot remote control | AR glasses (MOVERIO BT-200), Raspberry Pi camera remote video stream, Kinect toolkit, display terminals | Life-size Traffic Police Robot IWI | Latency within the video frames, inaccuracies in the estimation of the depth of field, memory limitations of the system |

| [94] | AR-based gaming | RGB-D camera, OpenPTrack library, control module, websocket communication | Mobile robot (bomber) | Delay in gaming due to the ROS infrastructure utilized in the system |

| [95] | Interactive interface | AR goggles (Epson Moverio BT-200), motion sensors, Kinect scanner, handheld wearable device, markers | Semi-automatic taping robotic system | Errors during the user localization and calibration of robot-object position |

| [96] | Teleoperation | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [97] | Teleoperation | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [98] | Robot programming | Kinect camera, fiducial markers, AR tag tracking | Modular manipulator | Error-prone tag placement and marker detection |

| [101] | Robot communication | HMD (Microsoft HoloLens), virtual drone, waypoint delegation interface, motion tracking system | AscTec Hummingbird drone | Limited localization ability, narrow field of view of the HMD, limited generalizability in more cluttered spaces |

| [102] | Teleoperation | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [103] | Robotic teleoperation | HMD (Microsoft HoloLens), Xbox controller, video display, motion tracking cameras | Parrot Bebop quadcopter | Limited visual feedback from the system during operation of the teleoperated robot |

| [104] | Robotic teleoperation | RGB-D sensor, haptic hand controller, KinectFusion, HMD, marker | Baxter robot | Limited sensing accuracy, irregularity in the 3D reconstruction of the surface |

| [105] | Prosthetic device | Microsoft Kinect, RGB camera, PC display, virtual prosthetic hand | Myoelectric hand prosthesis | Error-prone alignment of the virtual hand prosthesis with the user’s forearm |

| [106] | Wearable robotics | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [107] | BMI robot control | Monocular camera, desktop eye tracker (EyeX), AR interface in PC | 5 DoF desktop robotic arm (Dobot) | Errors due to calibration of the camera, gaze tracking errors |

| [108] | BMI for robot control | Webcam, marker-based tracking | 5 DoF robotic arm (Dobot) | Error-prone encoding of the camera position and orientation in the 3D world, calibration process |

| [109] | BCI system | Projector, camera, user interface (mouse-based GUI on PC), mobile base | Robotic arm | Distortions introduced by optics as the camera moves, unstable tracking mechanism |

| [110] | Wearable robots | HMD, fiducial markers, Android smartphone, WebSockets | Shape-shifting wearable robot | Tracking limitations due to change in lighting conditions and camera focus, latency in wireless connection |

| Work | Application | AR System Components | Robot | Limitations |

|---|---|---|---|---|

| [115] | Robot perception | Arena tracking system (4 by 4 matrix of HD cameras), 16-core server, QR code, ARGoS simulator, virtual sensors | 15 e-puck mini mobile robots | Tracking failure when objects bigger than the robot occlude the view of the ceiling cameras |

| [118] | Swarm robot control | Overhead controller, 4 cameras, unique markers, virtual sensors, infrared sensors | Kilobot swarms | Error-prone automatic system calibration and ID assignment technique |

| [117] | Motion study | ARGoS simulator, AR for Kilobots platform, virtual light sensors | Kilobot swarms | Internal noise in the motion of the Kilobots, leading to precision errors during real-world experiments |

| [119] | Virtualization environment | Overhead controller (OHC), infrared signals, virtual sensors (infrared/proximity), virtual actuators, KiloGUI application | Kilobot swarms | Communication limitations between Kilobots and the control module of the Kilogrid simulator, problems with identification of position and orientation by Kilobots at the borders of the arena |

| [122] | Sophisticated collective foraging | Overhead control board (OHC), RGB LED, wireless IR communication, AR for Kilobots simulator, IR-OHC, control module (computer) | Kilobots | |

| [41] | Biohybrid design | HMD (Oculus DK2), stereoscopic camera, gamepad, QR-code | Plant-robot system | Limitations in spatial capabilities of the hardware setup, limited immersion performance |

| [116] | Prototyping Cyberphysical systems | Motion-capture system: 18 Vicon T-Series motion-capture cameras, projection system: six ceiling-mounted Sony VPL-FHZ55 ground projectors, motion-capture markers | Autonomous aerial vehicles | |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Makhataeva, Z.; Varol, H.A. Augmented Reality for Robotics: A Review. Robotics 2020, 9, 21. https://doi.org/10.3390/robotics9020021
