Do You Care for Robots That Care? Exploring the Opinions of Vocational Care Students on the Use of Healthcare Robots

Abstract

1. Introduction

How do care and welfare students at diverse levels of vocational education differentially evaluate healthcare robotics in relation to their daily routines?

- RQ-1: Do prospective care professionals perceive different types of robots (Assistive, Monitoring, and Companion) differently in terms of occupational ethics (Beneficence, Maleficence, Justice, Autonomy)?

- RQ-2: Do perceptions of healthcare robots differ between vocational students at lower and middle levels? Relatedly, do these students perceive the various robot types differently?

- RQ-3: How do prospective care professionals evaluate care robots in terms of utility and possible use intentions? Relatedly, how do these evaluations differ per robot type?

2. Methods

Participants

3. Data Collection

4. Measures

5. Results

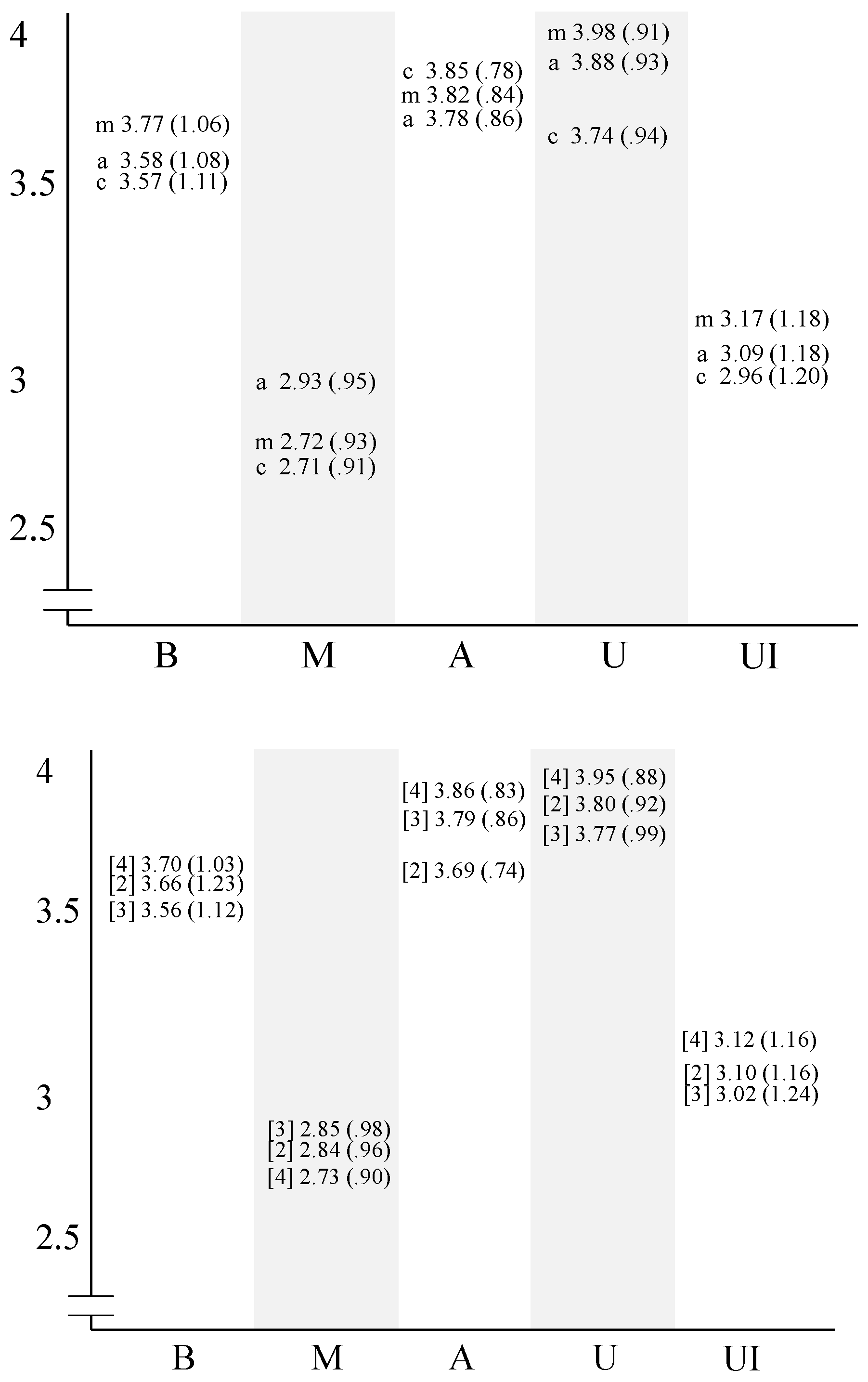

Rating of Appraisal Domains through Ethics

Rating of Appraisal Domains by Robot Type

Rating of Appraisal Domains by Educational Levels

Overall Ratings of Appraisal Domains

6. Conclusions

7. Discussion

Possible Explanations for the Hesitation in Using Care Robots

Education Could Make a Difference

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Item | Domain, item number, and sign |
|---|---|
| Handy | Utility 1 + |
| Clumsy | Utility 2 − |
| Unusable | Utility 3 − |
| Suitable for his work | Utility 4 + |
| Harmful | Maleficence 1 + |
| Dangerous | Maleficence 2 + |
| Caring | Beneficence 1 + |
| Does the patient good | Beneficence 2 + |
| Makes someone more independent | Autonomy 1 + |
| Disadvantages other patients | Justice 1 − |
| Limits freedom of the patient | Autonomy 2 − |
| Ensures that a patient can take better care of himself | Autonomy 3 + |
| Hurts the patient | Maleficence 3 + |
| Makes the patient independent | Autonomy 4 − |
| Makes life worse | Maleficence 4 + |
| Favours some patients | Justice 2 − |
| Splits attention evenly | Justice 3 + |
| Increases the quality of life | Beneficence 3 + |
| Lets the patient make his own choice | Justice 4 + |
| Is not going to be used | Use Intention 1 − |
| Makes life better | Beneficence 4 + |
| Can help the patient | Beneficence 5 + |
| Treats everybody equally | Justice 5 + |
| Diminishes quality of life | Maleficence 5 + |
| Diminishes self-reliance | Autonomy 5 − |
| Makes no distinction between people | Justice 6 + |
| Neglects the patient | Maleficence 6 + |
| Returns freedom to the patient | Autonomy 6 + |
| Is taking good care of the patient | Beneficence 6 + |
| Does things behind your back | Justice 7 − |
| Limits the patient in his freedom of choice | Justice 8 − |
| With this robot I would like to work | Use Intention 2 + |
| This robot seems suitable for the job | Use Intention 3 + |
| I would like to use such a robot | Use Intention 4 + |
| I would leave the robot in the closet | Use Intention 5 − |
| I would rather do the work myself | Use Intention 6 − |
| Working with a robot takes time | Utility 5 − |
| Working with a robot saves time | Utility 6 + |

As an extra control question regarding Use Intention: “It is more than likely that I will use this robot in the near future” (yes/no).

The + and − signs indicate reversed items for counter-indicative purposes. The number refers to the respective question item. All domains have at least six items per category. Answers were scored with Likert-type items, each rated on a six-point scale (1 = strongly disagree, 2 = disagree, 3 = slightly disagree, 4 = slightly agree, 5 = agree, and 6 = strongly agree).
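The scoring described in the note above can be illustrated with a small sketch. It assumes that items marked − are reverse-scored by mirroring the rating on the six-point scale (1 becomes 6, 2 becomes 5, and so on) and that a domain score is the mean of its items after reversal; the note does not spell out either step, so both are assumptions. The function names and the example ratings are hypothetical and are not data from the study.

```python
from statistics import mean

# Six-point scale from the appendix note: 1 = strongly disagree ... 6 = strongly agree.
SCALE_MIN, SCALE_MAX = 1, 6


def reverse_score(rating: int) -> int:
    """Mirror a rating on the scale, so 1 <-> 6, 2 <-> 5, 3 <-> 4."""
    if not SCALE_MIN <= rating <= SCALE_MAX:
        raise ValueError(f"rating {rating} is outside {SCALE_MIN}..{SCALE_MAX}")
    return SCALE_MIN + SCALE_MAX - rating


def domain_score(ratings: dict[str, int], reversed_items: set[str]) -> float:
    """Mean of one respondent's item ratings after reversing the listed items.

    Assumption: the domain score is a simple mean of the recoded items.
    """
    recoded = [
        reverse_score(value) if item in reversed_items else value
        for item, value in ratings.items()
    ]
    return mean(recoded)


# Utility items carrying a minus sign in Appendix A.
REVERSED_UTILITY_ITEMS = {"Utility 2", "Utility 3", "Utility 5"}

# Hypothetical ratings from a single respondent (illustration only).
example_ratings = {
    "Utility 1": 5,  # Handy
    "Utility 2": 2,  # Clumsy (reversed)
    "Utility 3": 1,  # Unusable (reversed)
    "Utility 4": 4,  # Suitable for his work
    "Utility 5": 3,  # Working with a robot takes time (reversed)
    "Utility 6": 5,  # Working with a robot saves time
}

print(round(domain_score(example_ratings, REVERSED_UTILITY_ITEMS), 2))
```

After reversal, a higher score consistently points in the same direction for every item in a domain, which is what makes averaging the items meaningful.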

| | Mean | SD | Cronbach’s Alpha |
|---|---|---|---|
| Appraisal Domains | | | |
| Beneficence | 3.640 | 1.088 | 0.88 |
| Maleficence | 2.788 | 1.088 | 0.82 |
| Autonomy | 3.818 | 0.832 | 0.78 |
| Utility | 3.868 | 0.929 | 0.79 |
| Use Intention | 3.080 | 1.190 | 0.90 |
| Justice | - | - | 0.57 |
| | Percentage | | |
| Gender | | | |
| Female | 92.9 | - | |
| Male | 7.1 | - | |
| Level of Education | | | |
| Vocational Level 2 | 9.1 | - | |
| Vocational Level 3 | 39.0 | - | |
| Vocational Level 4 | 51.9 | - | |
| Robot Type | | | |
| Assisting | 33.25 | - | |
| Monitoring | 33.46 | - | |
| Companion | 33.29 | - | |
| Skilled with Computer | | | |
| Yes | 93.6 | - | |
| No | 6.4 | - | |
| Skilled in Technology | | | |
| Yes | 45.8 | - | |
| No | 54.2 | - | |
| Enjoys Working with Computers | | | |
| Yes | 72.3 | - | |
| No | 27.7 | - | |
| Age | | | |
| ≤16 | 6.5 | - | |
| 17 | 18.3 | - | |
| 18 | 19.5 | - | |
| 19 | 15.1 | - | |
| 20 | 10.4 | - | |
| 21 | 5.3 | - | |
| 22 | 3.5 | - | |
| 23 | 2.9 | - | |
| ≥24 | 18.5 | - | |
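For readers who want to see how a value such as those in the Cronbach’s Alpha column is obtained, the following is a minimal sketch of the standard formula, alpha = k/(k − 1) · (1 − Σ item variances / variance of the summed scores), where k is the number of items. It is not the authors’ analysis script; the function name and the sample responses are hypothetical, and items are assumed to be reverse-scored already where needed.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[int]]) -> float:
    """Cronbach's alpha for a respondents-by-items matrix of ratings.

    Each inner list holds one respondent's ratings on the k items of a
    single domain. Sample variances (n - 1 denominator) are used throughout.
    """
    n_respondents = len(responses)
    n_items = len(responses[0])
    if n_respondents < 2 or n_items < 2:
        raise ValueError("need at least two respondents and two items")

    # Variance of each item across respondents (columns of the matrix).
    item_variances = [variance(column) for column in zip(*responses)]
    # Variance of each respondent's summed score (rows of the matrix).
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("summed scores have zero variance; alpha is undefined")

    return n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings: four respondents, three items (illustration only).
sample_responses = [
    [4, 5, 4],
    [2, 3, 2],
    [5, 5, 6],
    [3, 3, 4],
]

print(round(cronbach_alpha(sample_responses), 2))
```

Alpha rises when the items of a domain vary together across respondents, so that most of the variance in the summed score comes from shared rather than item-specific variation.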

| | Lower Vocational, Mean (SD) | Middle Vocational, Mean (SD) | Total, Mean (SD) | Chi-Square |
|---|---|---|---|---|
| Assistive | | | | |
| Beneficence | 3.54 (1.14) | 3.62 (1.02) | 3.58 (1.08) | 35.80 |
| Maleficence | 2.97 (0.99) | 2.89 (0.92) | 2.93 (0.95) | 29.52 |
| Autonomy | 3.72 (0.88) | 3.83 (0.84) | 3.78 (0.86) | 43.20 |
| Utility | 3.77 (1.00) | 3.98 (0.85) | 3.88 (0.93) | 42.93 |
| Use Intention | 3.07 (1.24) | 3.13 (1.13) | 3.10 (1.18) | 27.97 |
| Monitoring | | | | |
| Beneficence | 3.67 (1.10) | 3.87 (1.02) | 3.77 (1.06) | 46.56 * |
| Maleficence | 2.80 (0.96) | 2.66 (0.91) | 2.72 (0.93) | 34.24 |
| Autonomy | 3.77 (0.84) | 3.86 (0.85) | 3.82 (0.84) | 40.55 |
| Utility | 3.88 (0.92) | 4.08 (0.89) | 3.98 (0.91) | 44.19 * |
| Use Intention | 3.07 (1.20) | 3.27 (1.16) | 3.17 (1.18) | 39.62 |
| Companion | | | | |
| Beneficence | 3.53 (1.19) | 3.60 (1.04) | 3.57 (1.11) | 33.73 |
| Maleficence | 2.77 (0.96) | 2.66 (0.85) | 2.71 (0.91) | 23.87 |
| Autonomy | 3.81 (0.79) | 3.90 (0.78) | 3.86 (0.79) | 42.67 |
| Utility | 3.68 (1.00) | 3.80 (0.87) | 3.75 (0.94) | 42.70 |
| Use Intention | 2.97 (1.23) | 2.96 (1.17) | 2.97 (1.20) | 42.16 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

van Kemenade, M.A.M.; Hoorn, J.F.; Konijn, E.A. Do You Care for Robots That Care? Exploring the Opinions of Vocational Care Students on the Use of Healthcare Robots. Robotics 2019, 8, 22. https://doi.org/10.3390/robotics8010022