Blast Hole Seeking and Dipping: Navigation and Perception Framework in a Mine Site Inspection Robot

Abstract

1. Introduction

1.1. Control and Planning

1.2. Sensing and Perception

1.3. Data Availability

1.4. Contributions and Scope

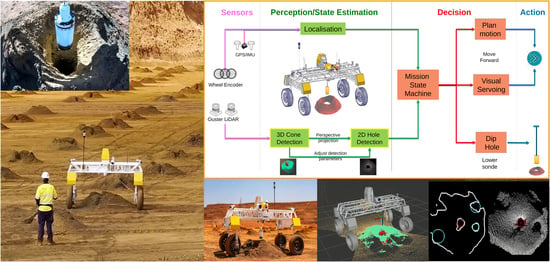

2. System Overview

3. Navigation Strategy

3.1. Decoupled Localisation

3.2. Control Paradigm

4. Target Detection and Tracking

4.1. Ground Tilt Correction

4.2. Cone Detection

4.3. Virtual Image Formation

4.4. Tracking with Distance-Dependent Image Projection

4.4.1. Point Sparsity

4.4.2. Cone Face Coverage

4.4.3. Hole Size

4.4.4. Cone Height

5. Hole Detection

- 1.

- 2. Apply morphological operators and a Gaussian blur to fill the gaps between sparse points. After binary thresholding, the cone appears as a single well-rendered object that is segmented from the hole (see Figure 8c).

- 3. Identify the hole object using simple moment analysis (see Figure 8d).

- 4. Apply fine-stage projection using the blob centre as the projection point, so that the hole object lies at the centre of the new image and appears nearly circular (see Figure 8e).

- 5. Apply similar morphological operations to form a connected cone object, then extract features to identify circular regions (see Figure 8f).

- 6.

- 7. Compute the hole’s 3D position by first back-projecting the 2D centre using the stored depth map value and Equation (4), then applying a second transform to obtain the robot-centred coordinates.
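The coarse-stage steps of gap filling, thresholding and moment analysis can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. The synthetic annulus image, the function names (`close_gaps`, `find_hole_centre`, `synthetic_cone`) and all parameter values are assumptions made for illustration only. The sketch also treats every background pixel enclosed by the cone as the hole, which is a simplification.

```python
from collections import deque
import numpy as np

def dilate(mask, r):
    """Binary dilation with a (2r+1) x (2r+1) square kernel."""
    h, w = mask.shape
    padded = np.pad(mask, r, mode="constant", constant_values=False)
    out = np.zeros((h, w), dtype=bool)
    for dy in range(2 * r + 1):
        for dx in range(2 * r + 1):
            out |= padded[dy:dy + h, dx:dx + w]
    return out

def close_gaps(mask, r):
    """Morphological closing: dilation followed by erosion."""
    dilated = dilate(mask, r)
    return ~dilate(~dilated, r)  # erosion written as the dual of dilation

def gaussian_blur(img, sigma):
    """Separable Gaussian blur built from 1D convolutions."""
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x**2 / (2 * sigma**2))
    k /= k.sum()
    conv = lambda v: np.convolve(v, k, mode="same")
    rows = np.apply_along_axis(conv, 1, img)
    return np.apply_along_axis(conv, 0, rows)

def enclosed_background(mask):
    """Background pixels that cannot be reached from the image border."""
    h, w = mask.shape
    outside = np.zeros((h, w), dtype=bool)
    queue = deque()
    for y in range(h):
        for x in range(w):
            on_border = y in (0, h - 1) or x in (0, w - 1)
            if on_border and not mask[y, x]:
                outside[y, x] = True
                queue.append((y, x))
    while queue:  # 4-connected flood fill from the border
        y, x = queue.popleft()
        for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
            if 0 <= ny < h and 0 <= nx < w and not mask[ny, nx] and not outside[ny, nx]:
                outside[ny, nx] = True
                queue.append((ny, nx))
    return ~mask & ~outside

def find_hole_centre(sparse, close_radius=2, sigma=1.0, thresh=0.5):
    """Return (cx, cy, area) of the enclosed hole blob, or None if absent."""
    closed = close_gaps(sparse, close_radius)
    cone = gaussian_blur(closed.astype(float), sigma) > thresh
    hole = enclosed_background(cone)
    ys, xs = np.nonzero(hole)
    m00 = xs.size                 # zeroth moment (area in pixels)
    if m00 == 0:
        return None
    return xs.sum() / m00, ys.sum() / m00, m00  # m10/m00, m01/m00, m00

def synthetic_cone(size=80, centre=(39, 39), r_in=8, r_out=26, step=3):
    """Hypothetical test image: an annulus sampled on a sparse lattice."""
    yy, xx = np.mgrid[0:size, 0:size]
    rr = np.hypot(xx - centre[0], yy - centre[1])
    annulus = (rr >= r_in) & (rr <= r_out)
    lattice = (xx % step == 0) & (yy % step == 0)
    return annulus & lattice

print(find_hole_centre(synthetic_cone()))
```

On this synthetic input the recovered centroid should lie close to the annulus centre at (39, 39). In practice a library such as OpenCV would replace the hand-written morphology and blur, and candidate blobs would be filtered by area or circularity before one is accepted as the hole.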

5.1. Coarse Detection

5.2. Fine Detection

5.2.1. FRST Feature and ROI Extraction

5.2.2. RANSAC and Circle Fitting

5.2.3. Non-Maximum Suppression

6. Experiments

6.1. DIPPeR Design

6.1.1. Hardware Configuration

6.1.2. Software Architecture

6.2. Feature Tests

6.2.1. Detection Accuracy Analysis—In-Lab Tests

6.2.2. Single-Cone Visual Servoing—In-Lab Tests

6.2.3. Detection Robustness Analysis—On Real-World Data

6.2.4. Cone Detection Result—Simulated Data

6.2.5. Approach Behaviour Analysis—Real-World Data

6.2.6. Mission Test—On USyd Campus

6.3. Comparison to Learning-Based Method—Real-World Data

6.4. Long-Duration Mission Test—Simulation

6.5. Site Trials

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. FRST Feature Extraction

Appendix B. Taubin’s Circle Fitting Algorithm—Conversion from Constrained Optimisation to Unconstrained Optimisation


| Navigation Strategy | Ours | Map | Odom |
|---|---|---|---|
| Total missions attempted | 15 | 7 | 5 |
| Successful missions | 15 | 0 | 0 |
| Total holes dipped | 60 | 1 | 2 |
| Tracks after rotation | Y | N | N |

| Test Category | Ours: Confusion Matrix | Ours: Example | YOLO: Confusion Matrix | YOLO: Example |
|---|---|---|---|---|
| (a) General, 260 samples |  |  |  |  |
| (b) Feature sensitivity, 38 samples |  |  |  |  |
| (c) Phantom hole, 109 samples |  |  |  |  |
| (d) Hole centrality, 92 samples |  |  |  |  |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Liu, L.; Mihankhah, E.; Wallace, N.D.; Martinez, J.; Hill, A.J. Blast Hole Seeking and Dipping: Navigation and Perception Framework in a Mine Site Inspection Robot. Robotics 2025, 14, 173. https://doi.org/10.3390/robotics14120173
