A Perspective Review on Integrating VR/AR with Haptics into STEM Education for Multi-Sensory Learning †

Abstract

1. Introduction

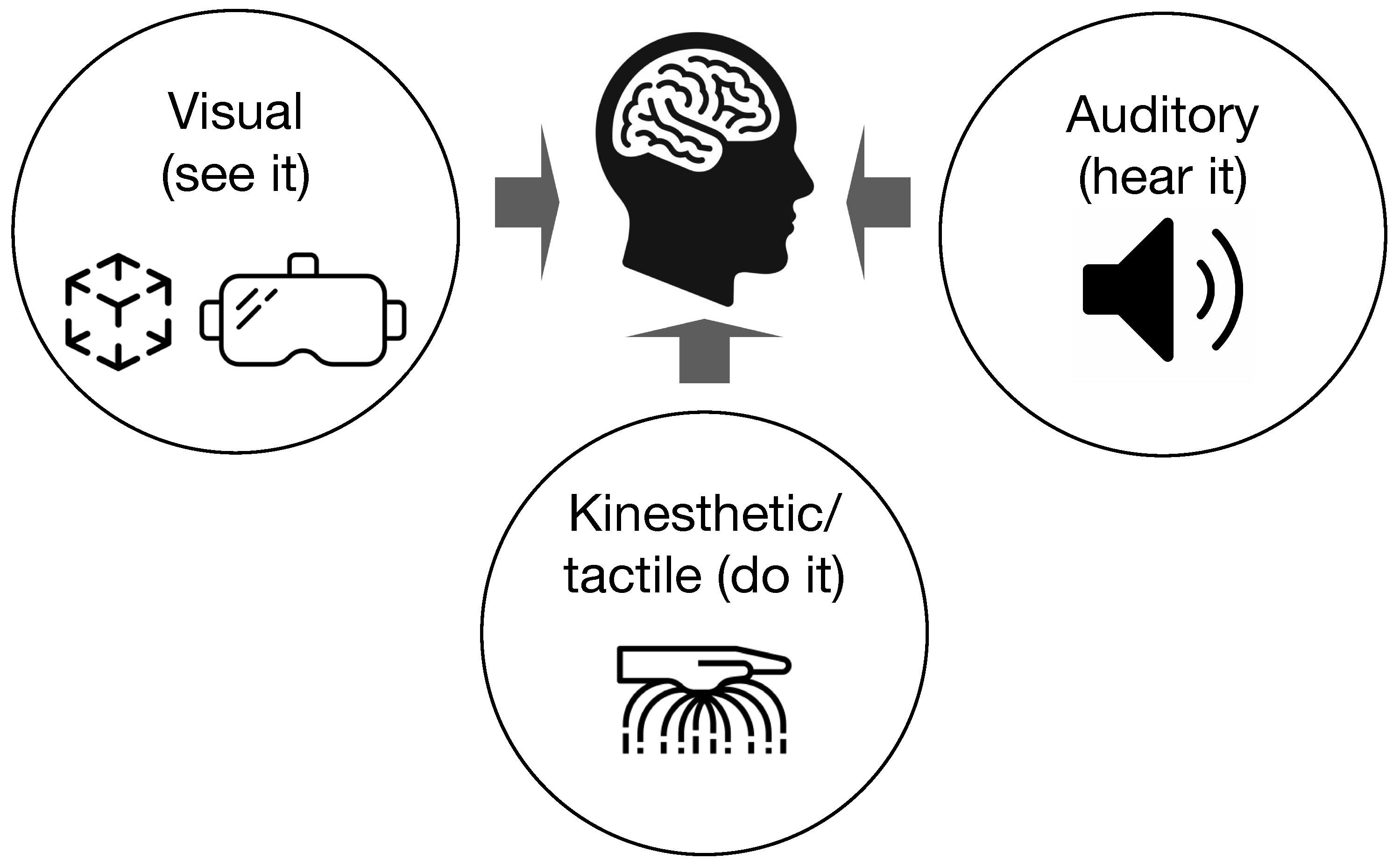

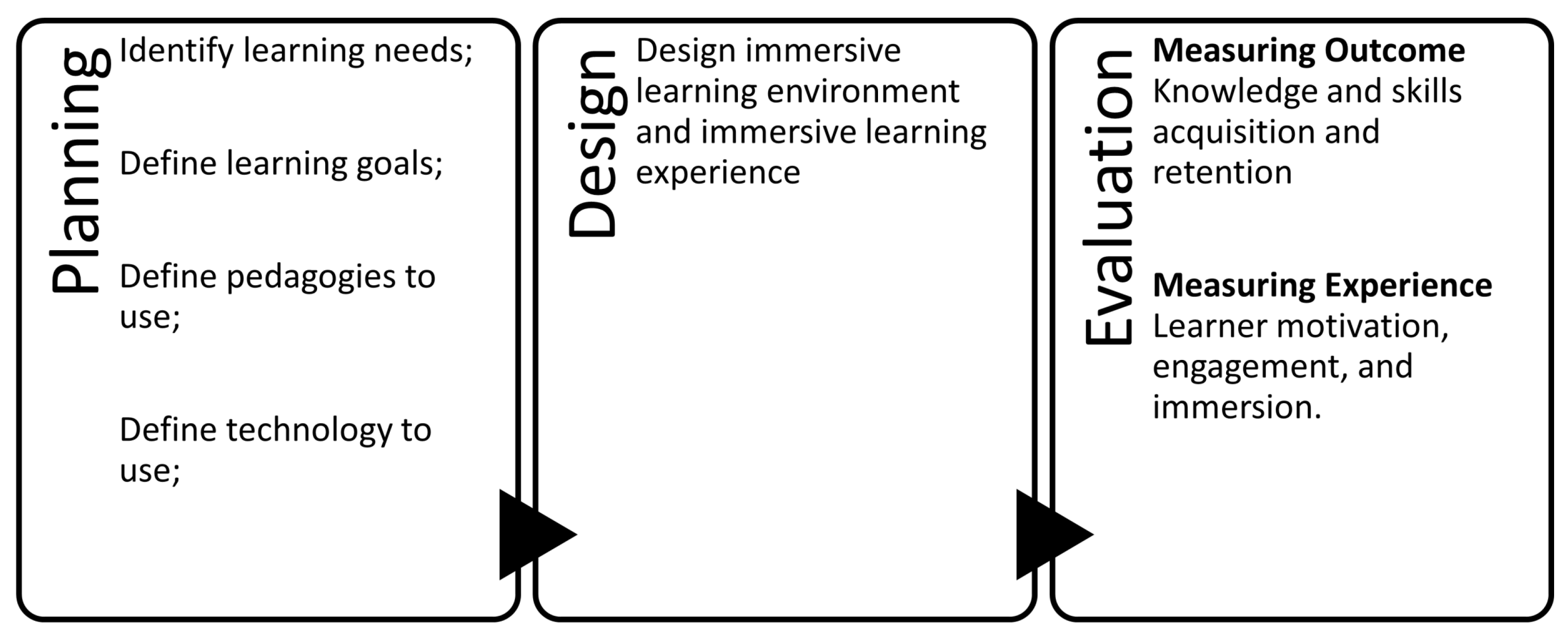

2. Learning Theories

3. Perspective Strategy

3.1. Required School Tools

3.2. Potential Home Solutions

3.3. Requirements to Facilities

- Spacious: the room should be free of obstructions, giving learners enough space to act without worrying about hitting solid objects such as walls or tables while they use VR/AR and are disconnected from “reality”.

- Flexible use: the room should serve both private and public purposes. A private setting suits those who want to learn alone or in a small group, because VR/AR apps require one to perform certain movements, or even to repeat prompts from the auditory feedback, in order to complete the VR tasks. This arrangement gives an opportunity to minimise the psychological discomfort for those who need it. The same space could also be used by a larger group as a means to learn together and to get peer feedback.

- Health and safety: physiological discomfort has been reported in many studies [35,36]. In the facility requirement guideline, we therefore recommend having more than one person in the room, so that others can help if a learner experiences an unwanted event such as motion sickness symptoms.
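The three requirements above can be read as a simple checklist. The following is a minimal sketch of such a check, not a prescribed procedure: the `RoomPlan` fields, the `check_room` helper, and in particular the `MIN_CLEAR_SIDE_M` threshold are hypothetical names and values chosen for illustration and do not come from the text.

```python
from dataclasses import dataclass


@dataclass
class RoomPlan:
    """Hypothetical description of a room intended for VR/AR sessions."""
    clear_width_m: float      # obstacle-free floor width
    clear_depth_m: float      # obstacle-free floor depth
    can_be_partitioned: bool  # usable privately and by a larger group
    people_present: int       # learners plus anyone able to assist


# Assumed threshold, for illustration only; the text gives no figure.
MIN_CLEAR_SIDE_M = 2.0


def check_room(plan: RoomPlan) -> list[str]:
    """Return the names of unmet requirements (empty if all are met)."""
    problems = []
    # Spacious: both sides of the clear area must reach the assumed minimum.
    if min(plan.clear_width_m, plan.clear_depth_m) < MIN_CLEAR_SIDE_M:
        problems.append("spacious")
    # Flexible use: the room must support private and group settings.
    if not plan.can_be_partitioned:
        problems.append("flexible use")
    # Health and safety: more than one person should be in the room.
    if plan.people_present < 2:
        problems.append("health and safety")
    return problems
```

Calling `check_room(RoomPlan(3.0, 2.5, True, 2))` returns an empty list, while a cramped, single-occupant room that cannot be partitioned fails all three checks. Any real threshold should come from the headset vendor's guidance and local safety rules rather than from this sketch.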

4. VR Technology

5. AR Technology

6. Haptic Technology

7. Evaluation, Assessment and Eye-Tracking Technology

8. Discussion

8.1. Implications

- Practitioners will find much need to advance the current technology and to find ways to apply it effectively and efficiently.

- For theory, much additional work is needed. Our work has shown that the gaps in understanding VR, AR and haptics in education are still large, and that they are widening rather than closing. We expect that more targeted research will be needed, and that this research will need to be multi-disciplinary to keep pace with technological development while grasping the consequences for teaching.

- Educators will be able to use rich tools in the near future, but they will need much help in doing so. Selecting appropriate courses, fitting the didactics, setting up equipment, and evaluating its use will all need support. Moreover, close collaboration with those who develop VR/AR environments with haptics will be required.

- Developers and designers of virtual worlds and augmented reality applications will need better tools to create multi-sensory learning environments that have high educational value. Work is also needed to improve their interface to educators, making exchange easy and fruitful.

8.2. Limitations

8.3. Future Research

- to further operationalise the multisensory learning concept for STEM education by taking advantage of current developments in VR, AR and haptic technologies, with a focus on the more affordable technologies;

- to focus on hands-on laboratory work and pedagogical tools that provide practical experiences for the students;

- to provide assessment tools for educators, including competency evaluation for the use of VR, AR and wearable haptics in STEM education;

- to explore open-source libraries for VR, AR and wearable haptics that can be used in re-designed study modules and engineering laboratories;

- to develop teaching modules and to test our concept with engineering students in an experimental setting, to evaluate the applicability of the concept.

9. Concluding Remarks

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| STEM | Science, technology, engineering, and mathematics |
| COTS | Commercially available off-the-shelf |
| VR | Virtual reality |
| AR | Augmented reality |
| VAKT | Visual, auditory, kinesthetic, and tactile |

References

- United Nations Educational, Scientific and Cultural Organization (UNESCO). National Learning Platforms and Tools. 2021. Available online: https://en.unesco.org/covid19/educationresponse/nationalresponses (accessed on 6 May 2021).

- United Nations Educational, Scientific and Cultural Organization (UNESCO). Distance Learning Solutions. 2021. Available online: https://en.unesco.org/covid19/educationresponse/solutions (accessed on 6 May 2021).

- Colthorpe, K.; Ainscough, L. Do-it-yourself physiology labs: Can hands-on laboratory classes be effectively replicated online? Adv. Physiol. Educ. 2021, 45, 95–102.

- Thompson, P. Learning by doing. In Handbook of the Economics of Innovation; Elsevier: Amsterdam, The Netherlands, 2010; Volume 1, pp. 429–476.

- Wood, D.F. Problem based learning. BMJ 2003, 326, 328–330.

- Settles, B. Active Learning Literature Survey; Technical Report 1648; University of Wisconsin—Madison Department of Computer Sciences: Madison, WI, USA, 2009.

- Sanfilippo, F.; Osen, O.L.; Alaliyat, S. Recycling A Discarded Robotic Arm For Automation Engineering Education. In Proceedings of the 28th European Conference on Modelling and Simulation (ECMS), Brescia, Italy, 27–30 May 2014; pp. 81–86.

- Sanfilippo, F.; Austreng, K. Enhancing teaching methods on embedded systems with project-based learning. In Proceedings of the IEEE International Conference on Teaching, Assessment and Learning for Engineering (TALE), Wollongong, Australia, 4–7 December 2018; pp. 169–176.

- Sanfilippo, F.; Austreng, K. Sustainable Approach to Teaching Embedded Systems with Hands-On Project-Based Visible Learning. Int. J. Eng. Educ. 2021, 37, 814–829.

- Shams, L.; Seitz, A.R. Benefits of multisensory learning. Trends Cogn. Sci. 2008, 12, 411–417.

- Sanfilippo, F.; Blažauskas, T.; Salvietti, G.; Ramos, I.; Vert, S.; Radianti, J.; Majchrzak, T.A. Integrating VR/AR with Haptics into STEM Education. In Proceedings of the 4th International Conference on Intelligent Technologies and Applications (INTAP 2021), Grimstad, Norway, 11–13 October 2021; accepted for publication.

- Alizadehsalehi, S.; Hadavi, A.; Huang, J.C. From BIM to extended reality in AEC industry. Autom. Constr. 2020, 116, 103254.

- Hernández-de Menéndez, M.; Guevara, A.V.; Martínez, J.C.T.; Alcántara, D.H.; Morales-Menendez, R. Active learning in engineering education. A review of fundamentals, best practices and experiences. Int. J. Interact. Des. Manuf. 2019, 13, 909–922.

- Bonwell, C.C.; Eison, J.A. Active Learning: Creating Excitement in the Classroom. 1991 ASHE-ERIC Higher Education Reports; ERIC: Washington, DC, USA, 1991.

- Christie, M.; De Graaff, E. The philosophical and pedagogical underpinnings of Active Learning in Engineering Education. Eur. J. Eng. Educ. 2017, 42, 5–16.

- Lucas, B.; Hanson, J. Thinking like an engineer: Using engineering habits of mind and signature pedagogies to redesign engineering education. Int. J. Eng. Pedagog. 2016, 6, 4–13.

- Roberts, D.; Roberts, N.J. Maximising sensory learning through immersive education. J. Nurs. Educ. Pract. 2014, 4, 74–79.

- Holly, M.; Pirker, J.; Resch, S.; Brettschuh, S.; Gütl, C. Designing VR Experiences–Expectations for Teaching and Learning in VR. Educ. Technol. Soc. 2021, 24, 107–119.

- Fromm, J.; Radianti, J.; Wehking, C.; Stieglitz, S.; Majchrzak, T.A.; vom Brocke, J. More than Experience?—On the Unique Opportunities of Virtual Reality to Afford an Holistic Experiential Learning Cycle. Internet High. Educ. 2021, 50, 100804.

- Radianti, J.; Majchrzak, T.A.; Fromm, J.; Wohlgenannt, I. A systematic review of immersive virtual reality applications for higher education: Design elements, lessons learned, and research agenda. Comput. Educ. 2020, 147, 103778.

- Radianti, J.; Majchrzak, T.A.; Fromm, J.; Stieglitz, S.; vom Brocke, J. Virtual Reality Applications for Higher Educations: A Market Analysis. In Proceedings of the 54th Hawaii International Conference on Systems Science (HICSS-54), Maui, HI, USA, 4–9 January 2021.

- Ip, H.H.S.; Li, C.; Leoni, S.; Chen, Y.; Ma, K.F.; Wong, C.H.t.; Li, Q. Design and evaluate immersive learning experience for massive open online courses (MOOCs). IEEE Trans. Learn. Technol. 2018, 12, 503–515.

- Bhattacharjee, D.; Paul, A.; Kim, J.H.; Karthigaikumar, P. An immersive learning model using evolutionary learning. Comput. Electr. Eng. 2018, 65, 236–249.

- Fracaro, S.G.; Glassey, J.; Bernaerts, K.; Wilk, M. Immersive technologies for the training of operators in the process industry: A Systematic Literature Review. Comput. Chem. Eng. 2022, 160, 107691.

- Makransky, G.; Petersen, G.B. The cognitive affective model of immersive learning (CAMIL): A theoretical research-based model of learning in immersive virtual reality. Educ. Psychol. Rev. 2021, 33, 937–958.

- De Back, T.T.; Tinga, A.M.; Louwerse, M.M. CAVE-based immersive learning in undergraduate courses: Examining the effect of group size and time of application. Int. J. Educ. Technol. High. Educ. 2021, 18, 56.

- Swensen, H. Potential of augmented reality in sciences education. A literature review. In Proceedings of the 9th International Conference of Education, Research and Innovation (ICERI), Seville, Spain, 14–16 November 2016; pp. 2540–2547.

- Unity Real-Time Development Platform. 2021. Available online: https://unity.com/ (accessed on 6 May 2021).

- Walkington, C. Exploring Collaborative Embodiment for Learning (EXCEL): Understanding Geometry Through Multiple Modalities. 2022. Available online: https://ies.ed.gov/funding/grantsearch/details.asp?ID=4484 (accessed on 23 February 2022).

- Culbertson, H.; López Delgado, J.J.; Kuchenbecker, K.J. One hundred data-driven haptic texture models and open-source methods for rendering on 3D objects. In Proceedings of the 2014 IEEE Haptics Symposium (HAPTICS), Houston, TX, USA, 23–26 February 2014; pp. 319–325.

- Pacchierotti, C.; Sinclair, S.; Solazzi, M.; Frisoli, A.; Hayward, V.; Prattichizzo, D. Wearable haptic systems for the fingertip and the hand: Taxonomy, review, and perspectives. IEEE Trans. Haptics 2017, 10, 580–600.

- Haptics in Apple User Interaction. 2022. Available online: https://developer.apple.com/design/human-interface-guidelines/ios/user-interaction/haptics/ (accessed on 18 March 2022).

- Ma, R.; Dollar, A. Yale openhand project: Optimizing open-source hand designs for ease of fabrication and adoption. IEEE Robot. Autom. Mag. 2017, 24, 32–40.

- AugmentedWearEdu. Available online: https://augmentedwearedu.uia.no/ (accessed on 27 March 2022).

- Chattha, U.A.; Janjua, U.I.; Anwar, F.; Madni, T.M.; Cheema, M.F.; Janjua, S.I. Motion sickness in virtual reality: An empirical evaluation. IEEE Access 2020, 8, 130486–130499.

- Tychsen, L.; Foeller, P. Effects of immersive virtual reality headset viewing on young children: Visuomotor function, postural stability, and motion sickness. Am. J. Ophthalmol. 2020, 209, 151–159.

- Zhou, N.N.; Deng, Y.L. Virtual reality: A state-of-the-art survey. Int. J. Autom. Comput. 2009, 6, 319–325.

- Mütterlein, J. The three pillars of virtual reality? Investigating the roles of immersion, presence, and interactivity. In Proceedings of the 51st Hawaii International Conference on System Sciences, Waikoloa Village, HI, USA, 2–6 January 2018.

- Freina, L.; Ott, M. A literature review on immersive virtual reality in education: State of the art and perspectives. Int. Sci. Conf. Elearning Softw. Educ. 2015, 1, 10–1007.

- Zhao, J.; Allison, R.S.; Vinnikov, M.; Jennings, S. Estimating the motion-to-photon latency in head mounted displays. In Proceedings of the IEEE Virtual Reality (VR), Los Angeles, CA, USA, 18–22 March 2017; pp. 313–314.

- Clay, V.; König, P.; Koenig, S. Eye tracking in virtual reality. J. Eye Mov. Res. 2019, 12.

- Munafo, J.; Diedrick, M.; Stoffregen, T.A. The virtual reality head-mounted display Oculus Rift induces motion sickness and is sexist in its effects. Exp. Brain Res. 2017, 235, 889–901.

- Logitech. VR Ink Stylus. 2021. Available online: https://www.logitech.com/en-roeu/promo/vr-ink.html (accessed on 6 May 2021).

- Sipatchin, A.; Wahl, S.; Rifai, K. Eye-tracking for low vision with virtual reality (VR): Testing status quo usability of the HTC Vive Pro Eye. bioRxiv 2020.

- Ogdon, D.C. HoloLens and VIVE pro: Virtual reality headsets. J. Med. Libr. Assoc. 2019, 107, 118.

- Stengel, M.; Grogorick, S.; Eisemann, M.; Eisemann, E.; Magnor, M.A. An affordable solution for binocular eye tracking and calibration in head-mounted displays. In Proceedings of the 23rd ACM international conference on Multimedia, Brisbane, Australia, 26–30 October 2015; pp. 15–24.

- Syed, R.; Collins-Thompson, K.; Bennett, P.N.; Teng, M.; Williams, S.; Tay, D.W.W.; Iqbal, S. Improving Learning Outcomes with Gaze Tracking and Automatic Question Generation. In Proceedings of the Web Conference, Taipei, Taiwan, 20–25 April 2020; pp. 1693–1703.

- Muender, T.; Bonfert, M.; Reinschluessel, A.V.; Malaka, R.; Döring, T. Haptic Fidelity Framework: Defining the Factors of Realistic Haptic Feedback for Virtual Reality. 2022; preprint.

- Kang, N.; Lee, S. A meta-analysis of recent studies on haptic feedback enhancement in immersive-augmented reality. In Proceedings of the 4th International Conference on Virtual Reality, Hong Kong, China, 24–26 February 2018; pp. 3–9.

- Edwards, B.I.; Bielawski, K.S.; Prada, R.; Cheok, A.D. Haptic virtual reality and immersive learning for enhanced organic chemistry instruction. Virtual Real. 2019, 23, 363–373.

- HaptX. HaptX Gloves. 2022. Available online: https://haptx.com/ (accessed on 23 February 2021).

- Gu, X.; Zhang, Y.; Sun, W.; Bian, Y.; Zhou, D.; Kristensson, P.O. Dexmo: An inexpensive and lightweight mechanical exoskeleton for motion capture and force feedback in VR. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 1991–1995.

- Interhaptics. Haptics for Virtual Reality (VR) and Mixed Reality (MR). 2022. Available online: https://www.interhaptics.com/ (accessed on 23 February 2021).

- Azuma, R.T. A survey of augmented reality. Presence Teleoperators Virtual Environ. 1997, 6, 355–385.

- Azuma, R.T. Making augmented reality a reality. In Applied Industrial Optics: Spectroscopy, Imaging and Metrology; Optical Society of America: Washington, DC, USA, 2017; p. JTu1F-1.

- Bacca Acosta, J.L.; Baldiris Navarro, S.M.; Fabregat Gesa, R.; Graf, S.; Kinshuk, D. Augmented reality trends in education: A systematic review of research and applications. J. Educ. Technol. Soc. 2014, 17, 133–149.

- Chen, P.; Liu, X.; Cheng, W.; Huang, R. A review of using Augmented Reality in Education from 2011 to 2016. In Innovations in Smart Learning; Springer: Berlin/Heidelberg, Germany, 2017; pp. 13–18.

- Garzón, J.; Pavón, J.; Baldiris, S. Systematic review and meta-analysis of augmented reality in educational settings. Virtual Real. 2019, 23, 447–459.

- Craig, A.B. Understanding Augmented Reality: Concepts and Applications Newnes; Morgan Kaufmann: Burlington, MA, USA, 2013.

- Wang, J.; Zhu, M.; Fan, X.; Yin, X.; Zhou, Z. Multi-Channel Augmented Reality Interactive Framework Design for Ship Outfitting Guidance. IFAC Pap. Online 2020, 53, 189–196.

- Ren, G.; Wei, S.; O’Neill, E.; Chen, F. Towards the design of effective haptic and audio displays for augmented reality and mixed reality applications. Adv. Multimed. 2018, 2018, 4517150.

- Ibáñez, M.B.; Delgado-Kloos, C. Augmented reality for STEM learning: A systematic review. Comput. Educ. 2018, 123, 109–123.

- Rieger, C.; Majchrzak, T.A. Towards the Definitive Evaluation Framework for Cross-Platform App Development Approaches. J. Syst. Softw. 2019, 153, 175–199.

- Radu, I. Augmented reality in education: A meta-review and cross-media analysis. Pers. Ubiquitous Comput. 2014, 18, 1533–1543.

- Fromm, J.; Eyilmez, K.; Baßfeld, M.; Majchrzak, T.A.; Stieglitz, S. Social Media Data in an Augmented Reality System for Situation Awareness Support in Emergency Control Rooms. Inf. Syst. Front. 2021, 1–24.

- Sırakaya, M.; Alsancak Sırakaya, D. Augmented reality in STEM education: A systematic review. Interact. Learn. Environ. 2020, 1–14.

- Sanfilippo, F.; Weustink, P.B.; Pettersen, K.Y. A coupling library for the force dimension haptic devices and the 20-sim modelling and simulation environment. In Proceedings of the 41st Annual Conference (IECON) of the IEEE Industrial Electronics Society, Yokohama, Japan, 9–12 November 2015; pp. 168–173.

- Williams, R.L., II; Chen, M.Y.; Seaton, J.M. Haptics-augmented high school physics tutorials. Int. J. Virtual Real. 2001, 5, 167–184.

- Williams, R.L.; Srivastava, M.; Conaster, R.; Howell, J.N. Implementation and evaluation of a haptic playback system. Haptics-e Electron. J. Haptics Res. 2004. Available online: http://hdl.handle.net/1773/34888 (accessed on 27 March 2022).

- Teklemariam, H.G.; Das, A. A case study of phantom omni force feedback device for virtual product design. Int. J. Interact. Des. Manuf. 2017, 11, 881–892.

- Salvietti, G.; Meli, L.; Gioioso, G.; Malvezzi, M.; Prattichizzo, D. Multicontact Bilateral Telemanipulation with Kinematic Asymmetries. IEEE/ASME Trans. Mechatron. 2017, 22, 445–456.

- Leonardis, D.; Barsotti, M.; Loconsole, C.; Solazzi, M.; Troncossi, M.; Mazzotti, C.; Castelli, V.P.; Procopio, C.; Lamola, G.; Chisari, C.; et al. An EMG-controlled robotic hand exoskeleton for bilateral rehabilitation. IEEE Trans. Haptics 2015, 8, 140–151.

- Leonardis, D.; Solazzi, M.; Bortone, I.; Frisoli, A. A wearable fingertip haptic device with 3 DoF asymmetric 3-RSR kinematics. In Proceedings of the 2015 IEEE World Haptics Conference (WHC), Evanston, IL, USA, 22–26 June 2015; pp. 388–393.

- Minamizawa, K.; Fukamachi, S.; Kajimoto, H.; Kawakami, N.; Tachi, S. Gravity grabber: Wearable haptic display to present virtual mass sensation. In Proceedings of the ACM SIGGRAPH 2007 Emerging Technologies, San Diego, CA, USA, 5–9 August 2007; p. 8.

- Prattichizzo, D.; Chinello, F.; Pacchierotti, C.; Malvezzi, M. Towards wearability in fingertip haptics: A 3-dof wearable device for cutaneous force feedback. IEEE Trans. Haptics 2013, 6, 506–516.

- Maisto, M.; Pacchierotti, C.; Chinello, F.; Salvietti, G.; De Luca, A.; Prattichizzo, D. Evaluation of wearable haptic systems for the fingers in augmented reality applications. IEEE Trans. Haptics 2017, 10, 511–522.

- Pacchierotti, C.; Salvietti, G.; Hussain, I.; Meli, L.; Prattichizzo, D. The hRing: A wearable haptic device to avoid occlusions in hand tracking. In Proceedings of the 2016 IEEE Haptics Symposium (HAPTICS), Philadelphia, PA, USA, 8–11 April 2016; pp. 134–139.

- Baldi, T.L.; Scheggi, S.; Aggravi, M.; Prattichizzo, D. Haptic guidance in dynamic environments using optimal reciprocal collision avoidance. IEEE Robot. Autom. Lett. 2017, 3, 265–272.

- Chinello, F.; Malvezzi, M.; Pacchierotti, C.; Prattichizzo, D. Design and development of a 3RRS wearable fingertip cutaneous device. In Proceedings of the IEEE International Conference on Advanced Intelligent Mechatronics (AIM), Busan, Korea, 7–11 July 2015; pp. 293–298.

- Hayward, V.; Astley, O.R.; Cruz-Hernandez, M.; Grant, D.; Robles-De-La-Torre, G. Haptic interfaces and devices. Sens. Rev. 2004, 24, 16–29.

- Pacchierotti, C.; Meli, L.; Chinello, F.; Malvezzi, M.; Prattichizzo, D. Cutaneous haptic feedback to ensure the stability of robotic teleoperation systems. Int. J. Robot. Res. 2015, 34, 1773–1787.

- Salazar, S.V.; Pacchierotti, C.; de Tinguy, X.; Maciel, A.; Marchal, M. Altering the stiffness, friction, and shape perception of tangible objects in virtual reality using wearable haptics. IEEE Trans. Haptics 2020, 13, 167–174.

- Kreimeier, J.; Hammer, S.; Friedmann, D.; Karg, P.; Bühner, C.; Bankel, L.; Götzelmann, T. Evaluation of different types of haptic feedback influencing the task-based presence and performance in virtual reality. In Proceedings of the 12th ACM International Conference on PErvasive Technologies Related to Assistive Environments, Rhodes, Greece, 5–7 June 2019; pp. 289–298.

- Heeneman, S.; Oudkerk Pool, A.; Schuwirth, L.W.; van der Vleuten, C.P.; Driessen, E.W. The impact of programmatic assessment on student learning: Theory versus practice. Med. Educ. 2015, 49, 487–498.

- Kamińska, D.; Zwoliński, G.; Wiak, S.; Petkovska, L.; Cvetkovski, G.; Barba, P.D.; Mognaschi, M.E.; Haamer, R.E.; Anbarjafari, G. Virtual Reality-Based Training: Case Study in Mechatronics. Technol. Knowl. Learn. 2021, 26, 1043–1059.

- Fucentese, S.F.; Rahm, S.; Wieser, K.; Spillmann, J.; Harders, M.; Koch, P.P. Evaluation of a virtual-reality-based simulator using passive haptic feedback for knee arthroscopy. Knee Surg. Sport. Traumatol. Arthrosc. 2015, 23, 1077–1085.

- Yurdabakan, İ. The view of constructivist theory on assessment: Alternative assessment methods in education. Ank. Univ. J. Fac. Educ. Sci. 2011, 44, 51–78.

- Schuwirth, L.W.; Van der Vleuten, C.P. Programmatic assessment: From assessment of learning to assessment for learning. Med. Teach. 2011, 33, 478–485.

- Vraga, E.; Bode, L.; Troller-Renfree, S. Beyond self-reports: Using eye tracking to measure topic and style differences in attention to social media content. Commun. Methods Meas. 2016, 10, 149–164.

- Alemdag, E.; Cagiltay, K. A systematic review of eye tracking research on multimedia learning. Comput. Educ. 2018, 125, 413–428.

- Wu, C.; Cha, J.; Sulek, J.; Zhou, T.; Sundaram, C.P.; Wachs, J.; Yu, D. Eye-tracking metrics predict perceived workload in robotic surgical skills training. Hum. Factors 2020, 62, 1365–1386.

- Da Silva, A.C.; Sierra-Franco, C.A.; Silva-Calpa, G.F.M.; Carvalho, F.; Raposo, A.B. Eye-tracking Data Analysis for Visual Exploration Assessment and Decision Making Interpretation in Virtual Reality Environments. In Proceedings of the 2020 22nd Symposium on Virtual and Augmented Reality (SVR), Porto de Galinhas, Brazil, 7–10 November 2020; pp. 39–46.

- Pernalete, N.; Raheja, A.; Segura, M.; Menychtas, D.; Wieczorek, T.; Carey, S. Eye-Hand Coordination Assessment Metrics Using a Multi-Platform Haptic System with Eye-Tracking and Motion Capture Feedback. In Proceedings of the 2018 40th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Honolulu, HI, USA, 17–21 July 2018; pp. 2150–2153.

- Sanfilippo, F. A multi-sensor system for enhancing situational awareness in offshore training. In Proceedings of the IEEE International Conference On Cyber Situational Awareness, Data Analytics And Assessment (CyberSA), London, UK, 13–16 June 2016; pp. 1–6.

- Sanfilippo, F. A multi-sensor fusion framework for improving situational awareness in demanding maritime training. Reliab. Eng. Syst. Saf. 2017, 161, 12–24.

- Ziv, G. Gaze behavior and visual attention: A review of eye tracking studies in aviation. Int. J. Aviat. Psychol. 2016, 26, 75–104.

- Chen, Y.; Jermias, J.; Panggabean, T. The role of visual attention in the managerial Judgment of Balanced-Scorecard performance evaluation: Insights from using an eye-tracking device. J. Account. Res. 2016, 54, 113–146.

- Fan, S.; Shen, Z.; Jiang, M.; Koenig, B.L.; Xu, J.; Kankanhalli, M.S.; Zhao, Q. Emotional attention: A study of image sentiment and visual attention. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 7521–7531.

- Sanfilippo, F.; Blažauskas, T.; Girdžiūna, M.; Janonis, A.; Kiudys, E.; Salvietti, G. A Multi-Modal Auditory-Visual-Tactile e-Learning Framework. In Proceedings of the 4th International Conference on Intelligent Technologies and Applications (INTAP 2021), Grimstad, Norway, 11–13 October 2021; accepted for publication.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sanfilippo, F.; Blazauskas, T.; Salvietti, G.; Ramos, I.; Vert, S.; Radianti, J.; Majchrzak, T.A.; Oliveira, D. A Perspective Review on Integrating VR/AR with Haptics into STEM Education for Multi-Sensory Learning. Robotics 2022, 11, 41. https://doi.org/10.3390/robotics11020041
