Unsupervised Online Grounding for Social Robots

Abstract

1. Introduction

2. Background

3. Related Work

4. Proposed Framework

- Perceptual feature extraction
  - Input: Video stream.
  - Output: 156 audio features.
- Perceptual feature classification
  - Input: 156 audio features.
  - Output: Concrete representations of percepts.
- Language grounding
  - Input: Natural language descriptions, concrete representations of percepts, previously detected auxiliary words, and word and percept occurrence information.
  - Output: Set of auxiliary words and word to concrete representation mappings.

4.1. Perceptual Feature Extraction

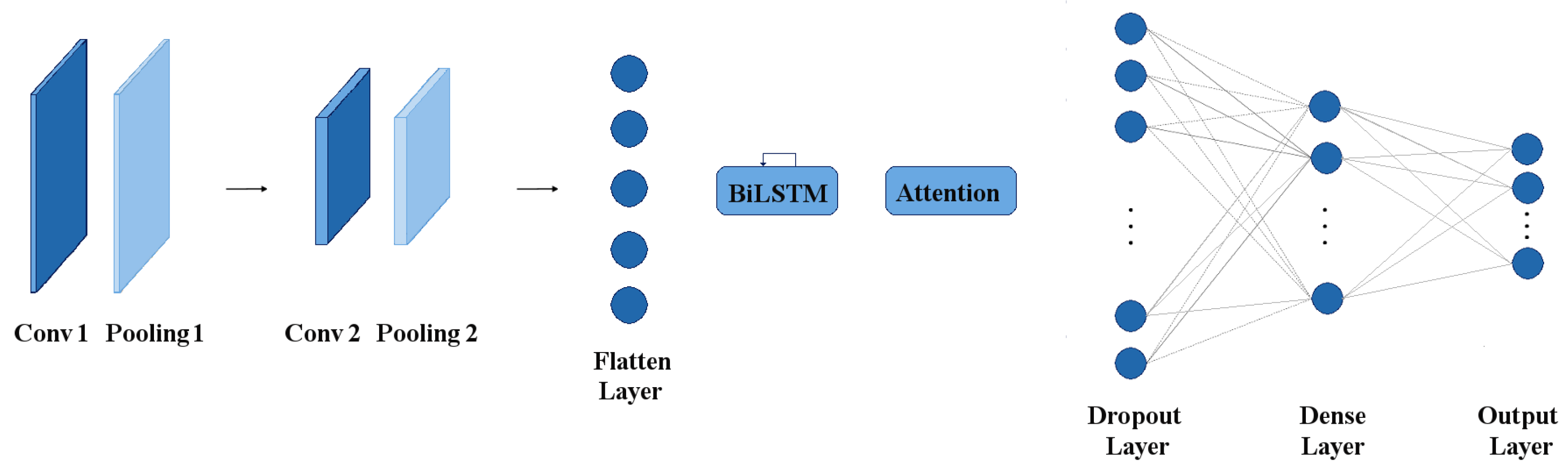

4.2. Perceptual Feature Classification

4.3. Language Grounding

Algorithm 1. The grounding procedure takes as input all words (W) and concrete representations (CR) of the current situation, the sets of all previously obtained word-concrete representation (WCRPS) and concrete representation-word (CRWPS) pairs, and the set of auxiliary words (AW) and returns the sets of grounded words (GW) and grounded concrete representations (GCR).
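Algorithm 1 builds on cross-situational learning: a word's meaning is whatever stays consistent across the situations in which the word is heard. As a rough illustration of that principle only, the sketch below keeps word-CR co-occurrence counts across situations and maps each non-auxiliary word to the CR it has co-occurred with most often. This count-based rule is an assumption chosen for illustration, not the authors' Algorithm 1. The function `update_and_ground` and its `cooc`/`word_count` structures are hypothetical stand-ins for the WCRPS and CRWPS pair sets.

```python
from collections import Counter, defaultdict


def update_and_ground(words, crs, cooc, word_count, aux_words):
    """Hypothetical count-based cross-situational grounding step.

    words      -- tokens of the sentence describing the current situation
    crs        -- concrete representations (CR numbers) perceived in it
    cooc       -- defaultdict(Counter): word -> co-occurrence count per CR
    word_count -- Counter: number of situations in which each word occurred
    aux_words  -- words treated as auxiliary and therefore skipped
    Returns a dict mapping every content word seen so far to the CR it has
    co-occurred with most often (ties are broken arbitrarily).
    """
    for w in set(words) - set(aux_words):
        word_count[w] += 1
        cooc[w].update(set(crs))  # each CR counted once per situation
    return {w: cooc[w].most_common(1)[0][0] for w in word_count if cooc[w]}
```

Fed the four situations "she is very angry" (CRs 10, 9, 3), "he is very sad" (11, 9, 2), "she is slightly sad" (10, 8, 2) and "he is slightly angry" (11, 8, 3) with "is" marked as auxiliary, every word ends up mapped to its CR number from the synonym table, even though no single situation disambiguates it. Counting instead of intersecting candidate sets keeps synonyms (two words, one CR) and occasional misclassified percepts from eliminating the correct mapping.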

Algorithm 2. The auxiliary word detection procedure takes as input the sets of word and concrete representation occurrences (WO and CRO), and the set of previously detected auxiliary words (AW) and returns an updated AW.
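A simple occurrence-count heuristic with the same inputs and output as Algorithm 2 can be sketched as follows. The reasoning behind it is our assumption, not necessarily the paper's rule: if a word always denotes one CR and perception is correct, the word cannot occur in more situations than that CR. A word that occurs more often than even the most frequent CR therefore cannot be grounded to any single CR and is flagged as auxiliary. The function name `detect_auxiliary_words` is hypothetical.

```python
def detect_auxiliary_words(word_occ, cr_occ, aux_words):
    """Hypothetical heuristic: mark words that occur more often than any CR.

    word_occ  -- mapping word -> number of situations it occurred in (WO)
    cr_occ    -- mapping CR -> number of situations it occurred in (CRO)
    aux_words -- previously detected auxiliary words (AW)
    Returns the updated set of auxiliary words; words are only ever added.
    """
    if not cr_occ:
        return set(aux_words)
    max_cr = max(cr_occ.values())
    return set(aux_words) | {w for w, n in word_occ.items() if n > max_cr}
```

After the four example situations used for grounding, "is" has occurred four times while no CR has occurred more than twice, so only "is" is flagged. The rule breaks down when some CR is present in every situation (for example, if all speakers had the same gender), because no word can then exceed that CR's count.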

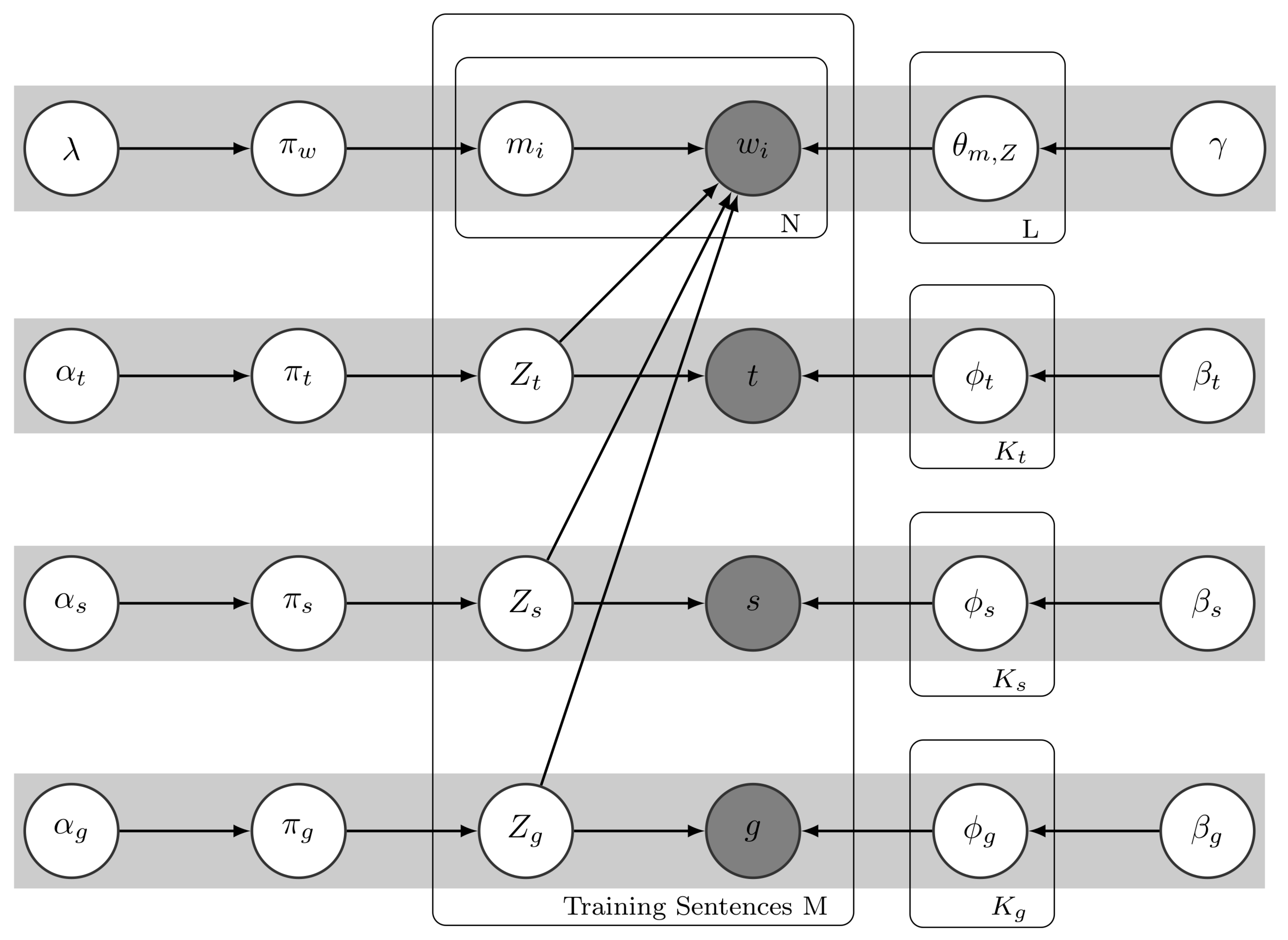

5. Baseline Framework

Algorithm 3. Inference of the model’s latent variables. was set to 100.
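The baseline infers its latent variables by Gibbs sampling. The sketch below is a minimal collapsed Gibbs sampler for a simplified model that we assume from the parameter table at the end of the document; it is not the authors' exact model. Each word token i receives a latent modality z_i ∈ {Type, Strength, Gender, AW}. Given z_i = m, the word is drawn from a word distribution indexed by m and the situation's observed state s_m, while AW uses a single state-independent distribution. With symmetric Dirichlet priors α (over modalities) and β (over words), and counts n that exclude token i, the sampled conditional is P(z_i = m | ·) ∝ (n_m + α)(n_{m,s_m,w_i} + β) / (n_{m,s_m} + Vβ). The names `gibbs_modalities`, `alpha`, `beta`, `vocab_size` and `n_iter` are hypothetical.

```python
import random
from collections import defaultdict

MODALITIES = ("Type", "Strength", "Gender", "AW")


def gibbs_modalities(situations, vocab_size, n_iter=100, alpha=1.0,
                     beta=0.1, seed=0):
    """Collapsed Gibbs sampling of per-token modality indices (assumed model).

    situations -- list of (words, states); states maps "Type", "Strength"
                  and "Gender" to the observed CR of that situation.
    Returns z, where z[s][i] is the sampled modality of word i in situation s.
    """
    rng = random.Random(seed)
    n_mod = defaultdict(int)  # tokens per modality
    n_kw = defaultdict(int)   # tokens per (modality, state, word)
    n_k = defaultdict(int)    # tokens per (modality, state)

    def key(m, states):
        # Auxiliary words share one state-independent word distribution.
        return (m, None) if m == "AW" else (m, states[m])

    def update(m, states, w, delta):
        k = key(m, states)
        n_mod[m] += delta
        n_kw[k + (w,)] += delta
        n_k[k] += delta

    # Random initialisation of every token's modality.
    z = []
    for words, states in situations:
        zs = [rng.choice(MODALITIES) for _ in words]
        for w, m in zip(words, zs):
            update(m, states, w, +1)
        z.append(zs)

    for _ in range(n_iter):
        for s, (words, states) in enumerate(situations):
            for i, w in enumerate(words):
                update(z[s][i], states, w, -1)  # exclude token i from counts
                weights = []
                for m in MODALITIES:
                    k = key(m, states)
                    p_w = (n_kw[k + (w,)] + beta) / (n_k[k] + vocab_size * beta)
                    weights.append((n_mod[m] + alpha) * p_w)
                z[s][i] = rng.choices(MODALITIES, weights=weights)[0]
                update(z[s][i], states, w, +1)
    return z
```

Collapsing the Dirichlet-distributed parameters turns each update into a ratio of counts, so the sampler never stores explicit distributions; the word-to-modality mapping can be read off from the final counts or from the sampled z.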

6. Experimental Setup

- The video representing the current situation is given to OpenEAR, which extracts 384 features (Section 4.1);

- 156 (MFCC and PCM RMS) of the 384 features are provided as input to the employed deep neural networks to determine the concrete representations of the expressed emotion, its intensity and the gender of the person expressing it (Section 4.2);

- The concrete representations are provided to the agent together with a sentence describing the emotion type, intensity and gender of the person in the video, e.g., “She is very angry.”;

- The agent uses cross-situational learning to ground words through corresponding concrete representations (Section 4.3 and Section 5).
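The figure of 156 out of 384 features is consistent with one decomposition of the 384-dimensional Interspeech 2009 Emotion Challenge feature set. That set applies 12 statistical functionals to 16 low-level descriptors (zero-crossing rate, RMS energy, F0, harmonics-to-noise ratio, MFCC 1-12) and to their first-order deltas: 16 × 2 × 12 = 384. Keeping only the static (non-delta) MFCC and RMS-energy descriptors gives 13 × 12 = 156. That this is exactly the selection used in the experiments is an assumption. The feature names below follow a hypothetical scheme, not OpenEAR's actual column names.

```python
# Hypothetical naming scheme modelled on the Interspeech 2009 feature set;
# OpenEAR's real column names may differ.
FUNCTIONALS = ["max", "min", "range", "maxPos", "minPos", "amean",
               "linregc1", "linregc2", "linregerrQ", "stddev",
               "skewness", "kurtosis"]
LLDS = (["pcm_zcr", "pcm_RMSenergy", "F0", "HNR"]
        + [f"mfcc{i}" for i in range(1, 13)])


def feature_names():
    """All 16 LLDs x {static, delta} x 12 functionals = 384 names."""
    return [f"{lld}{suffix}_{func}"
            for lld in LLDS
            for suffix in ("_sma", "_sma_de")
            for func in FUNCTIONALS]


def select_mfcc_rms(names):
    """Keep static MFCC 1-12 and RMS-energy features, dropping delta ones."""
    return [n for n in names
            if (n.startswith("mfcc") or n.startswith("pcm_RMSenergy"))
            and "_sma_de_" not in n]
```

Selecting by name rather than by column position keeps the classifier input stable if the extractor's output order changes.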

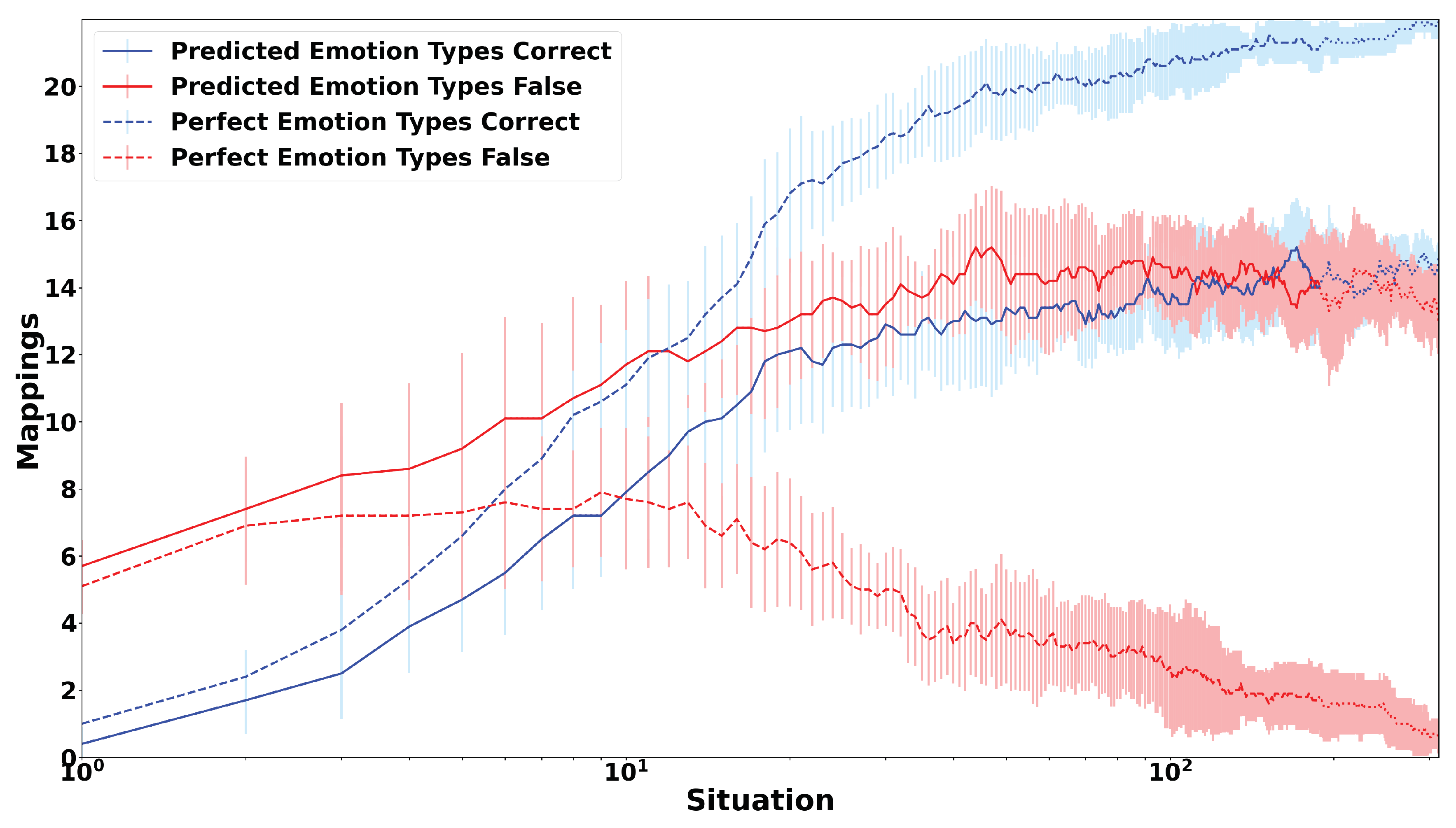

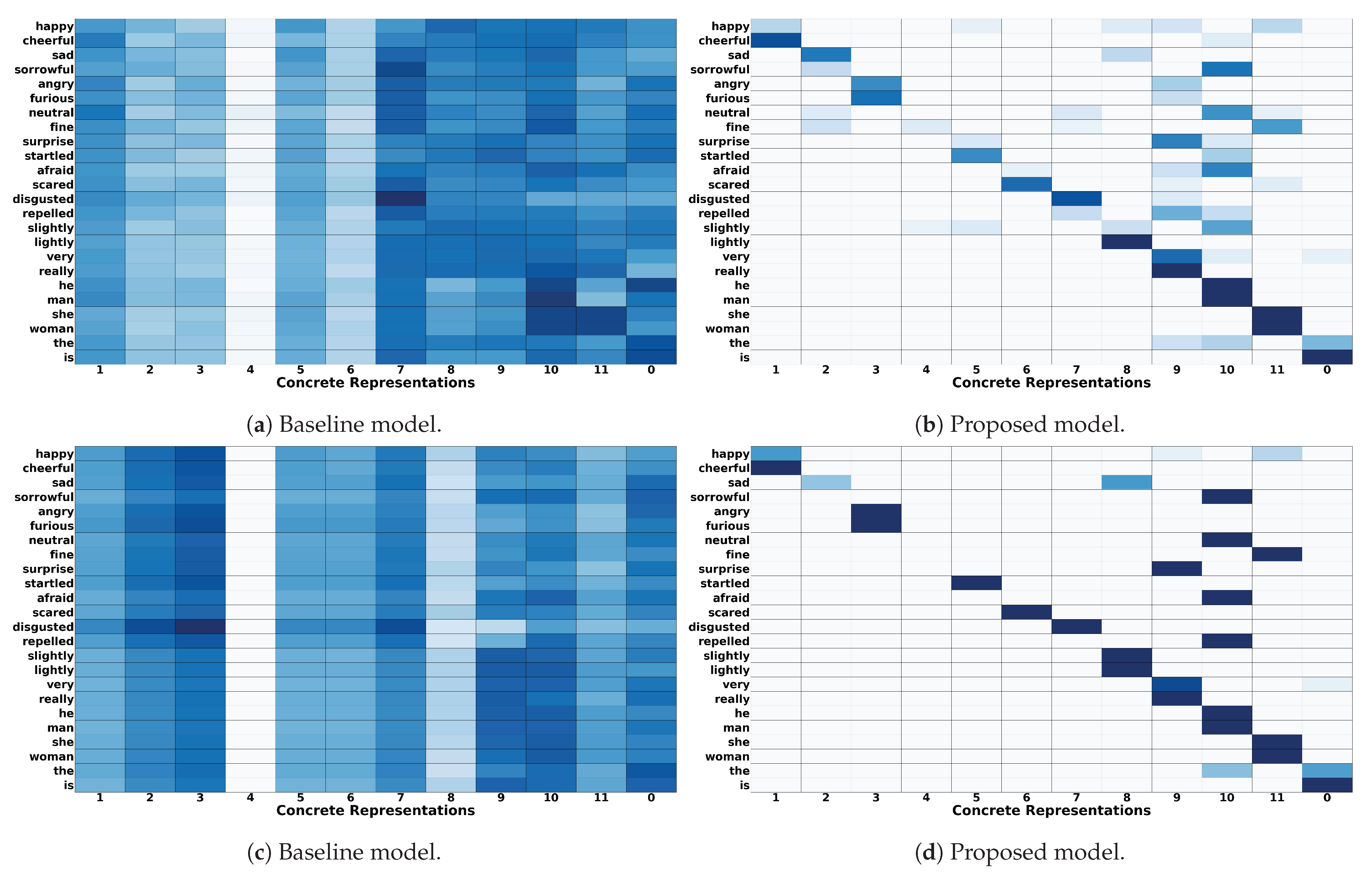

7. Results and Discussion

8. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Feil-Seifer, D.; Matarić, M.J. Defining Socially Assistive Robotics. In Proceedings of the 2005 IEEE 9th International Conference on Rehabilitation Robotics (ICORR), Chicago, IL, USA, 28 June–1 July 2005; pp. 465–468.

- Bemelmans, R.; Gelderblom, G.J.; Jonker, P.; de Witte, L. Socially Assistive Robots in Elderly Care: A Systematic Review into Effects and Effectiveness. J. Am. Med Dir. Assoc. (JAMDA) 2012, 13, 114–120.

- Kachouie, R.; Sedighadeli, S.; Khosla, R.; Chu, M.T. Socially Assistive Robots in Elderly Care: A Mixed-Method Systematic Literature Review. Int. J. Hum. Comput. Interact. 2014, 30, 369–393.

- Cao, H.L.; Esteban, P.G.; Bartlett, M.; Baxter, P.; Belpaeme, T.; Billing, E.; Cai, H.; Coeckelbergh, M.; Costescu, C.; David, D.; et al. Robot-Enhanced Therapy: Development and Validation of Supervised Autonomous Robotic System for Autism Spectrum Disorders Therapy. IEEE Robot. Autom. Mag. 2019, 26, 49–58.

- Coeckelbergh, M.; Pop, C.; Simut, R.; Peca, A.; Pintea, S.; David, D.; Vanderborght, B. A Survey of Expectations About the Role of Robots in Robot-Assisted Therapy for Children with ASD: Ethical Acceptability, Trust, Sociability, Appearance, and Attachment. Sci. Eng. Ethics 2016, 22, 47–65.

- Brose, S.; Weber, D.; Salatin, B.; Grindle, G.; Wang, H.; Vazquez, J.; Cooper, R. The Role of Assistive Robotics in the Lives of Persons with Disability. Am. J. Phys. Med. Rehabil. 2010, 89, 509–521.

- Brave, S.; Nass, C.; Hutchinson, K. Computers that care: Investigating the effects of orientation of emotion exhibited by an embodied computer agent. Int. J. Hum. Comput. Stud. 2005, 62, 161–178.

- Rasool, Z.; Masuyama, N.; Islam, M.N.; Loo, C.K. Empathic Interaction Using the Computational Emotion Model. In Proceedings of the IEEE Symposium Series on Computational Intelligence, Cape Town, South Africa, 7–10 December 2015.

- Gibbs, J.P. Norms: The Problem of Definition and Classification. Am. J. Sociol. 1965, 70, 586–594.

- Mackie, G.; Moneti, F. What Are Social Norms? How Are They Measured? Technical Report; UNICEF/UCSD Center on Global Justice: New York, NY, USA; San Diego, CA, USA, 2014.

- Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction; MIT Press: Cambridge, MA, USA, 1998.

- Argall, B.D.; Chernova, S.; Veloso, M.; Browning, B. A survey of robot learning from demonstration. Robot. Auton. Syst. 2009, 57, 469–483.

- Huang, C.M.; Mutlu, B. Learning-based modeling of multimodal behaviors for humanlike robots. In Proceedings of the 2014 ACM/IEEE International Conference on Human-Robot Interaction (HRI), Bielefeld, Germany, 3–6 March 2014; pp. 57–64.

- Liu, P.; Glas, D.F.; Kanda, T.; Ishiguro, H.; Hagita, N. How to Train Your Robot—Teaching Service Robots to Reproduce Human Social Behavior. In Proceedings of the 23rd IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), Edinburgh, UK, 25–29 August 2014.

- Gao, Y.; Yang, F.; Frisk, M.; Hernandez, D.; Peters, C.; Castellano, G. Learning Socially Appropriate Robot Approaching Behavior Toward Groups using Deep Reinforcement Learning. In Proceedings of the 28th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), New Delhi, India, 14–18 October 2019.

- Bagheri, E.; Roesler, O.; Cao, H.L.; Vanderborght, B. A Reinforcement Learning Based Cognitive Empathy Framework for Social Robots. Int. J. Soc. Robot. 2020.

- Harnad, S. The Symbol Grounding Problem. Physica D 1990, 42, 335–346.

- Dawson, C.R.; Wright, J.; Rebguns, A.; Escárcega, M.V.; Fried, D.; Cohen, P.R. A generative probabilistic framework for learning spatial language. In Proceedings of the IEEE Third Joint International Conference on Development and Learning and Epigenetic Robotics (ICDL), Osaka, Japan, 18–22 August 2013.

- Roesler, O.; Aly, A.; Taniguchi, T.; Hayashi, Y. Evaluation of Word Representations in Grounding Natural Language Instructions through Computational Human-Robot Interaction. In Proceedings of the 14th ACM/IEEE International Conference on Human-Robot Interaction (HRI), Daegu, Korea, 11–14 March 2019; pp. 307–316.

- Tellex, S.; Kollar, T.; Dickerson, S.; Walter, M.R.; Banerjee, A.G.; Teller, S.; Roy, N. Approaching the symbol grounding problem with probabilistic graphical models. AI Mag. 2011, 32, 64–76.

- Aly, A.; Taniguchi, T. Towards Understanding Object-Directed Actions: A Generative Model for Grounding Syntactic Categories of Speech through Visual Perception. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Brisbane, QLD, Australia, 21–25 May 2018.

- Pinker, S. Learnability and Cognition; MIT Press: Cambridge, MA, USA, 1989.

- Fisher, C.; Hall, D.G.; Rakowitz, S.; Gleitman, L. When it is better to receive than to give: Syntactic and conceptual constraints on vocabulary growth. Lingua 1994, 92, 333–375.

- Blythe, R.A.; Smith, K.; Smith, A.D.M. Learning Times for Large Lexicons Through Cross-Situational Learning. Cogn. Sci. 2010, 34, 620–642.

- Smith, A.D.M.; Smith, K. Cross-Situational Learning. In Encyclopedia of the Sciences of Learning; Seel, N.M., Ed.; Springer: Boston, MA, USA, 2012; pp. 864–866.

- Akhtar, N.; Montague, L. Early lexical acquisition: The role of cross-situational learning. First Lang. 1999, 19, 347–358.

- Gillette, J.; Gleitman, H.; Gleitman, L.; Lederer, A. Human simulations of vocabulary learning. Cognition 1999, 73, 135–176.

- Smith, L.; Yu, C. Infants rapidly learn word-referent mappings via cross-situational statistics. Cognition 2008, 106, 1558–1568.

- Carey, S. The child as word-learner. In Linguistic Theory and Psychological Reality; Halle, M., Bresnan, J., Miller, G.A., Eds.; MIT Press: Cambridge, MA, USA, 1978; pp. 265–293.

- Carey, S.; Bartlett, E. Acquiring a single new word. Pap. Rep. Child Lang. Dev. 1978, 15, 17–29.

- Vogt, P. Exploring the Robustness of Cross-Situational Learning Under Zipfian Distributions. Cogn. Sci. 2012, 36, 726–739.

- Bleys, J.; Loetzsch, M.; Spranger, M.; Steels, L. The Grounded Color Naming Game. In Proceedings of the 18th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), Toyama, Japan, 27 September–2 October 2009.

- Spranger, M. Grounded Lexicon Acquisition—Case Studies in Spatial Language. In Proceedings of the IEEE Third Joint International Conference on Development and Learning and Epigenetic Robotics (ICDL-Epirob), Osaka, Japan, 18–22 August 2013.

- Steels, L.; Loetzsch, M. The Grounded Naming Game. In Experiments in Cultural Language Evolution; Steels, L., Ed.; John Benjamins: Amsterdam, The Netherlands, 2012; pp. 41–59.

- She, L.; Yang, S.; Cheng, Y.; Jia, Y.; Chai, J.Y.; Xi, N. Back to the Blocks World: Learning New Actions through Situated Human-Robot Dialogue. In Proceedings of the SIGDIAL 2014 Conference, Philadelphia, PA, USA, 18–20 June 2014; pp. 89–97.

- Siskind, J.M. A computational study of cross-situational techniques for learning word-to-meaning mappings. Cognition 1996, 61, 39–91.

- Smith, K.; Smith, A.D.M.; Blythe, R.A. Cross-Situational Learning: An Experimental Study of Word-Learning Mechanisms. Cogn. Sci. 2011, 35, 480–498.

- Roesler, O. Unsupervised Online Grounding of Natural Language during Human-Robot Interaction. In Proceedings of the Second Grand Challenge and Workshop on Multimodal Language at ACL 2020, Seattle, WA, USA, 10 July 2020.

- Eyben, F.; Wöllmer, M.; Schuller, B. OpenEAR—Introducing the Munich Open-Source Emotion and Affect Recognition Toolkit. In Proceedings of the 3rd International Conference on Affective Computing and Intelligent Interaction and Workshops, Amsterdam, The Netherlands, 10–12 September 2009.

- Livingstone, S.R.; Russo, F.A. The Ryerson Audio-Visual Database of Emotional Speech and Song (RAVDESS): A dynamic, multimodal set of facial and vocal expressions in North American English. PLoS ONE 2018, 13, e0196391.

- Schuller, B.; Steidl, S.; Batliner, A. The Interspeech 2009 Emotion Challenge. In Proceedings of the Tenth Annual Conference of the International Speech Communication Association, Brighton, UK, 6–10 September 2009; pp. 312–315.

- Kingma, D.P.; Ba, L.J. Adam: A Method for Stochastic Optimization. In Proceedings of the International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

- Bagheri, E.; Roesler, O.; Cao, H.L.; Vanderborght, B. Emotion Intensity and Gender Detection via Speech and Facial Expressions. In Proceedings of the 31st Benelux Conference on Artificial Intelligence (BNAIC), Leiden, The Netherlands, 19–20 November 2020.

- Geman, S.; Geman, D. Stochastic Relaxation, Gibbs Distributions, and the Bayesian Restoration of Images. IEEE Trans. Pattern Anal. Mach. Intell. (TPAMI) 1984, 6, 721–741.

- Ekman, P. Strong evidence for universals in facial expressions: A reply to Russell’s mistaken critique. Psychol. Bull. 1994, 115, 268–287.

| Emotion Type | | | | | | | Emotion Intensity | | Gender | |
|---|---|---|---|---|---|---|---|---|---|---|
| Happiness | Sadness | Anger | Neutral | Surprise | Fear | Disgust | Normal | Strong | Male | Female |
| 50% | 77.08% | 77.08% | 37.5% | 62.5% | 52.08% | 56.25% | 41.66% | 80.5% | 99.35% | 78.02% |
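Suppose the percentages above are per-class recognition rates of the three classifiers and their errors are independent (both are assumptions made only for this illustration). Then the chance that every concrete representation of a situation is correct is the product of the individual rates. For an utterance of strong anger by a female speaker:

$$
P(\text{all three CRs correct}) \approx 0.7708 \times 0.805 \times 0.7802 \approx 0.484
$$

Under these assumptions, roughly half of such situations would give the grounding component at least one wrong concrete representation. This is why the word-to-CR mappings have to be inferred statistically across many situations rather than from any single one.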

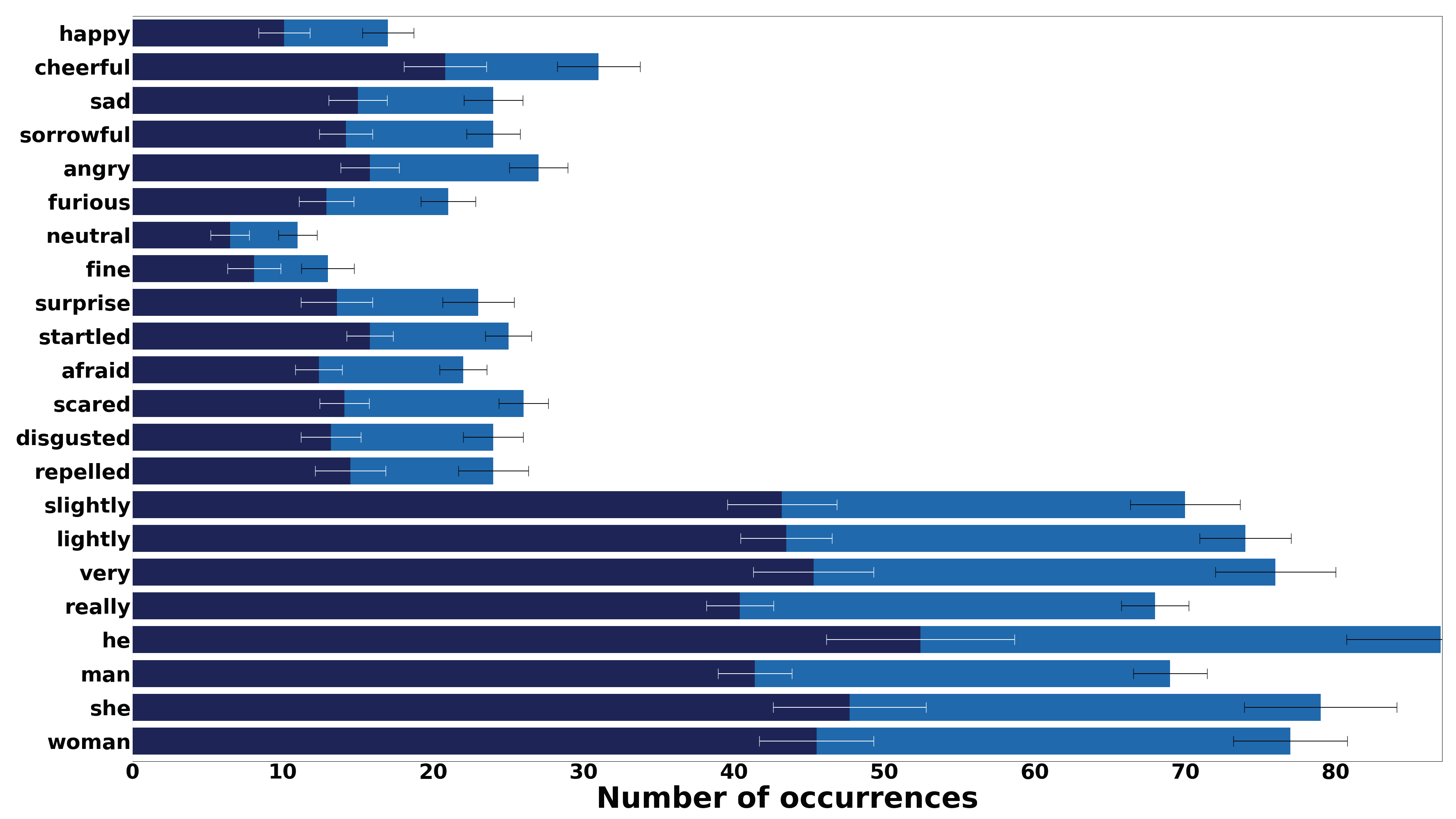

| Type | Concept | Synonyms | CR# |
|---|---|---|---|
| Emotion Type | Happiness | happy, cheerful | 1 |
| | Sadness | sad, sorrowful | 2 |
| | Anger | angry, furious | 3 |
| | Neutral | neutral, fine | 4 |
| | Surprise | surprised, startled | 5 |
| | Fear | afraid, scared | 6 |
| | Disgust | disgusted, appalled | 7 |
| Emotion Intensity | Normal¹ | slightly, lightly | 8 |
| | Strong | very, really | 9 |
| Gender | Female | she, woman | 10 |
| | Male | he, man | 11 |
| Auxiliary word | - | the, is | 0 |
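The table can be read as a lookup from perceived concepts to CR numbers and candidate words. The sketch below uses it to build one training situation: the CR set of an utterance plus a sentence describing it, such as “She is very angry.”. The sentence template (subject, "is", intensity word, emotion word) and the function name `describe` are assumptions inferred from the example sentence in Section 6, not the authors' sentence generator.

```python
import random

# Concept -> CR number and candidate words, transcribed from the table above.
CR = {"Happiness": 1, "Sadness": 2, "Anger": 3, "Neutral": 4,
      "Surprise": 5, "Fear": 6, "Disgust": 7,
      "Normal": 8, "Strong": 9, "Female": 10, "Male": 11}
SYNONYMS = {"Happiness": ["happy", "cheerful"], "Sadness": ["sad", "sorrowful"],
            "Anger": ["angry", "furious"], "Neutral": ["neutral", "fine"],
            "Surprise": ["surprised", "startled"], "Fear": ["afraid", "scared"],
            "Disgust": ["disgusted", "appalled"],
            "Normal": ["slightly", "lightly"], "Strong": ["very", "really"],
            "Female": ["she", "woman"], "Male": ["he", "man"]}


def describe(emotion, intensity, gender, rng):
    """Return (sentence, set of CRs) for one hypothetical training situation."""
    subject = rng.choice(SYNONYMS[gender])
    if subject in ("woman", "man"):
        subject = "the " + subject  # "the" is one of the auxiliary words
    text = (f"{subject} is {rng.choice(SYNONYMS[intensity])} "
            f"{rng.choice(SYNONYMS[emotion])}")
    sentence = text[0].upper() + text[1:] + "."
    return sentence, {CR[emotion], CR[intensity], CR[gender]}
```

Only the CR set reaches the grounding component. Which synonym names which CR is never given to the agent, so it has to be recovered from co-occurrence across situations.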

| Parameter | Definition |
|---|---|
| | Hyperparameter of the distribution |
| | Hyperparameters of the distributions and |
| | Modality index of each word (modality index ∈ {Type, Strength, Gender, AW}) |
| | Indices of type, strength and gender distributions |
| | Word indices |
| | Observed states representing types, strengths and genders |
| | Hyperparameter of the distribution |
| | Hyperparameters of the distributions and |
| | Word distribution over modalities |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Roesler, O.; Bagheri, E. Unsupervised Online Grounding for Social Robots. Robotics 2021, 10, 66. https://doi.org/10.3390/robotics10020066
