1. Introduction

Since their introduction in 1996 [1,2], collaborative robots (cobots) have become increasingly relevant for manufacturing industries as a key technology for improving the production line, in line with the Industry 4.0 paradigm [3]. Today they are adopted in the new collaborative workstations and in the hybrid meta-collaborative workstations [4], where humans and robots collaborate thanks to a common communication method. One of the key features of cobots is their easy reconfiguration on the production line, which allows them to be placed wherever they are needed [5]. They are designed to be flexible; in fact, a single manipulator may perform a wide range of tasks, thanks to (i) the fast reconfiguration of its workspace and (ii) its easy reprogramming, even by non-expert users, by means of intuitive programming methods. Moreover, compared to industrial robots they are lightweight; hence, they are more easily moved around the production line according to the needs [6,7,8]. However, because of their reconfigurability, cobots need to be calibrated frequently. In fact, while an industrial robot is usually adopted for a specific task and placed inside a robotic cell to perform it continuously, a cobot may be used for a given task for a certain period and then repositioned and reprogrammed according to the needs of the production line. Moreover, because of their reduced weight, cobots may be placed on moving carts; thus, they may be displaced by accident. This difference is the reason why the user frame calibration is performed frequently on cobots; hence, it is necessary to adopt an intuitive, fast, and high-performing calibration method suited to the specific set-up of the cobot workstation.

Today, cobots have finally gained their rightful importance; thus, their performance is required to be at least competitive with that of industrial robots, even if their mechanical characteristics are sometimes extremely different. Several studies have compared the two, as described in Reference [9], focusing especially on their positioning accuracy.

As detailed in the UNI EN ISO 9283 standard [10], two statistical indexes are typically required to describe the positioning performance of a robot: (i) the positioning repeatability and (ii) the positioning accuracy [11]. Usually, operators prefer to describe the locations at which the robot operates (called “user frame” from now on) using custom reference systems, while the robot task is planned in the reference system centered on the robot base (called “robot frame” from now on). Therefore, to properly move the robot to the user frame coordinates, it is necessary to establish a one-to-one relationship that converts user frame coordinates to robot frame coordinates, and vice versa [12].

The positioning repeatability is the robot's ability to repeatedly reach the exact same pose or configuration (i.e., position and orientation of the end-effector). It is important to note that this index refers only to the unidirectional repeatability, which is the ability to repeat the same pose starting from the same initial position, thus reducing the effect of backlash to a minimum. In fact, since the encoders are usually placed on the motor axes and high reduction ratios are adopted for most joints, the unidirectional repeatability is mostly affected by (i) hysteresis, (ii) backlash, (iii) torsional elasticity [13], and (iv) gear friction [14] rather than by the encoder resolution, which contributes very little to the observed positioning repeatability. Moreover, over long time periods, this value may also be affected by thermal expansion [15].

On the other hand, the positioning accuracy may be described as the error between the target position and the one actually reached by the robot, as measured by external sensors and devices. Although it is influenced by the same physical factors as repeatability, the positioning accuracy is mostly affected by (i) the mathematical errors that may occur when the original point in the user frame is converted to the robot frame and (ii) the geometrical discrepancies and elasticities of the robot links and transmissions [11].

However, both positioning repeatability and accuracy may be greatly improved by performing a calibration procedure.

As stated in Reference [16], robot calibration may be classified according to which object needs to be calibrated with respect to the robot: (i) joint calibration, (ii) robot-equipment calibration, and (iii) robot-user frame calibration.

In the first case, the robot joints are calibrated with respect to each other, thus establishing a relationship between the robot base coordinate system and a secondary coordinate system usually centered on the end-effector, as in the work presented in Reference [17]. This is particularly important because the robot positioning may be affected by slight changes or drifts caused by the mechanical wear of parts or by dimensional drifts caused by thermal effects or vibrations [18].

In the second case, the calibration takes place between the robot base coordinate system and other equipment, which may be, for example, an external tool needed for the task, a monitoring camera, or even another robot operating in the same workspace [19].

Finally, in the third case, the workpiece coordinate system is calibrated with respect to the robot base coordinate system. By calibrating the user frame, the robot task programming is made easier for the operator, both online and offline, because the user frame coordinate system is usually more intuitive to use and stays the same regardless of where the robot is positioned. So, considering the user frame as the fixed reference system, it is possible to change the manipulator configuration and position according to the needs, a fundamental requirement for today’s flexible production lines.

However, the robot-user frame calibration works properly only if the two coordinate systems stay fixed. This means that even small movements of the user frame or of the robot with respect to their original position may affect the final positioning of the end-effector. While industrial robots and heavy machinery are usually kept in the same position, collaborative robots are often moved around the production line. Therefore, cobots need a fast calibration method that guarantees low positioning errors.

The positioning accuracy of cobots using the user frame as a reference system is given by the combination of both the robot repeatability and the accuracy of the user frame calibration with respect to the fixed reference system of the robot. For this reason, different calibration methods could lead to different positioning accuracy even with the same movement repeatability of the cobots.

Two methods are usually adopted to perform a calibration procedure: (i) mechanical methods and (ii) vision-based methods.

Mechanical calibration methods are thoroughly described in Reference [20]: they have been used for decades by companies and scientists since they are the most accurate approaches for determining the unique relationship between the two reference systems to calibrate. They usually involve markers or reference points on the user frame to which the robot end-effector is accurately moved, either online or offline. Especially in applications where accurate positioning is required, external sensors, such as lasers or proximity sensors, may be used to measure the distance between the marker and the robot tip, as in the procedure described in the UNI EN ISO 9283 standard [10]. Another example is shown in Reference [21], where a laser pointer was mounted on the robot end-effector and used as a reference to perform the robot joint calibration. Similarly, in Reference [22], a geometric calibration system based on the position of the laser pointer dot is presented as a fast yet rough calibration method suited for tasks that do not require high positioning performance. Another example of an automated method to calibrate the robot joints using external sensors is shown in Reference [23], where the authors used a physical model of the robot and a theodolite system to estimate all the significant sources of pose deviation, including elastic deformations and internal gear geometrical discrepancies.

Vision-based calibration methods are designed to be faster, yet usually less accurate, than mechanical methods. However, since the calibration accuracy achieved by these approaches is often sufficient for the task, they have recently sparked the interest of the scientific community working on cobots. A plethora of innovative procedures have been developed in recent years adopting computer vision algorithms capable of extracting useful information from images. The authors of Reference [24] describe a calibration procedure based on a custom L-shaped 3D printed tool with three holes that is carefully placed on the workpiece to calibrate with respect to a CNC machine tool. The holes are accurately detected by a Circular Hough Transform algorithm applied to the RGB image of the calibration tool acquired by the stereo vision system mounted on the machine arm. The work presented in Reference [25] shows a robot pose estimation system based on a single camera mounted on the robot end-effector. The algorithm adopted, which is an improved version of the camera calibration algorithm described in Reference [26], allows users to extract the robot pose (hence, the joint positions and orientations) by analyzing a single image of the calibration chessboard. Other automatic calibration methods based on the camera calibration algorithm are presented in References [27,28], where the user frame is estimated by analyzing the chessboard pattern, thus extracting the camera coordinate system and parameters. Another vision-based approach, in the field of surgical robots, is shown in Reference [29], where the authors demonstrated their innovative method to calibrate the surgical robot arms in a collaborative environment without using external sensors or calibration templates. Instead, their method directly uses images obtained by the endoscopic camera combined with the robot encoder data.

It is worth noting that hybrid approaches may also be of interest. In fact, the authors of Reference [30] developed a teleoperation system based on a frame calibration chain involving both vision and mechanical methods.

Thanks to the advances in the field of augmented reality, an innovative vision-based solution involving fiducial markers has been developed as an alternative to standard methods adopting calibration templates, such as chessboards. Fiducial markers are asymmetrical matrices of black and white squares representing a certain pattern that is easily recognized by computer vision algorithms. By analyzing the distortion of the pattern, it is possible to estimate the pose of the object (position and orientation) in the reference system of choice [31,32]. Compared to traditional methods, the adoption of fiducial markers to calibrate the user frame results in a faster and more intuitive procedure. This is an important aspect in today’s industries because the operators working with cobots may not be robot experts but will be required to perform the user frame calibration when necessary [33]. Moreover, fiducial markers may be small; hence, they may be placed on a wide variety of surfaces to calibrate without interfering much with the overall set-up. This is a requirement that may be extremely limiting in some cases, for example when the workpiece is small or some portions of it cannot be occluded by large markers [34].

However, although most cobots are supplied with built-in user frame calibration software, users may need to perform a custom calibration procedure, depending on the specific needs of the workstation and on the characteristics of the software and of the cobot adopted. Therefore, this research work presents a quantitative study of the repeatability achieved by a one-arm Rethink Robotics Sawyer manipulator adopting different user frame calibration methods. The aim is to analyze their pros and cons and to determine the key factors users should keep in mind when designing custom calibration procedures, such as: (i) which calibration method to adopt, whether vision-based or traditional, (ii) the number of calibration points to adopt according to the set-up, and (iii) the overall calibration time. To our knowledge, this information is not sufficiently discussed in research works, and very few comparisons between vision-based and traditional methods have been performed on cobots.

2. Calibration Procedure

2.1. Three-Points Calibration

Let us consider a set of i points in the user frame, expressed in homogeneous coordinates, and the corresponding set of points in the robot frame. It is possible to estimate the affine transformation matrix needed to convert the point coordinates from one frame to the other from the fundamental equation that converts a point in one reference frame to the other [35] (Equation (1)), where the matrix is defined as in Equation (2).
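For reference, a common way to write this relation is sketched below. The notation is assumed here for illustration and may differ from the original typesetting: $\mathbf{p}_{U,i}$ and $\mathbf{p}_{R,i}$ denote the i-th point in the user and robot frames, $\mathbf{M}$ is the affine transformation matrix, $\hat{\mathbf{x}}$, $\hat{\mathbf{y}}$, $\hat{\mathbf{z}}$ are the versors of the user frame axes expressed in the robot frame, and $\mathbf{o}$ is the user frame origin in robot coordinates.

```latex
\mathbf{p}_{R,i} = \mathbf{M}\,\mathbf{p}_{U,i},
\qquad
\mathbf{p}_{U,i} = \begin{bmatrix} x_{U,i} & y_{U,i} & z_{U,i} & 1 \end{bmatrix}^{\top},
\qquad
\mathbf{M} =
\begin{bmatrix}
\hat{x}_x & \hat{y}_x & \hat{z}_x & o_x \\
\hat{x}_y & \hat{y}_y & \hat{z}_y & o_y \\
\hat{x}_z & \hat{y}_z & \hat{z}_z & o_z \\
0 & 0 & 0 & 1
\end{bmatrix}
```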

It is worth noting that the aluminum markers (AMs) used to calibrate the system have slightly different heights; hence, to avoid hitting their surfaces with the sensor, a z-axis offset has been experimentally determined for each point. By moving the robot on top of the AMs at each calibration point, it was possible to read the corresponding coordinates from the robot encoders.

To obtain a reliable reading, the proximity sensor must be positioned close to the AM, within its measurement range. Furthermore, considering that the AMs may be positioned at different heights due to the non-planarity of the table, an offset affecting the z coordinate has been obtained for each point as a preliminary step. This offset is derived from the distance along z read by the proximity sensor after it has been moved closer to or away from the AM to keep the sensor inside its measurement range, together with the z coordinate of the point, and it may be different for each point. The robot is therefore moved to a new position that includes the offset.

Since the result of this procedure varies according to the proximity sensor readings, the robot has been moved to each point n times, and, for each point, the offset has been saved. Hence, a different set of points is obtained at each cycle. These points have been used to calibrate the plane.

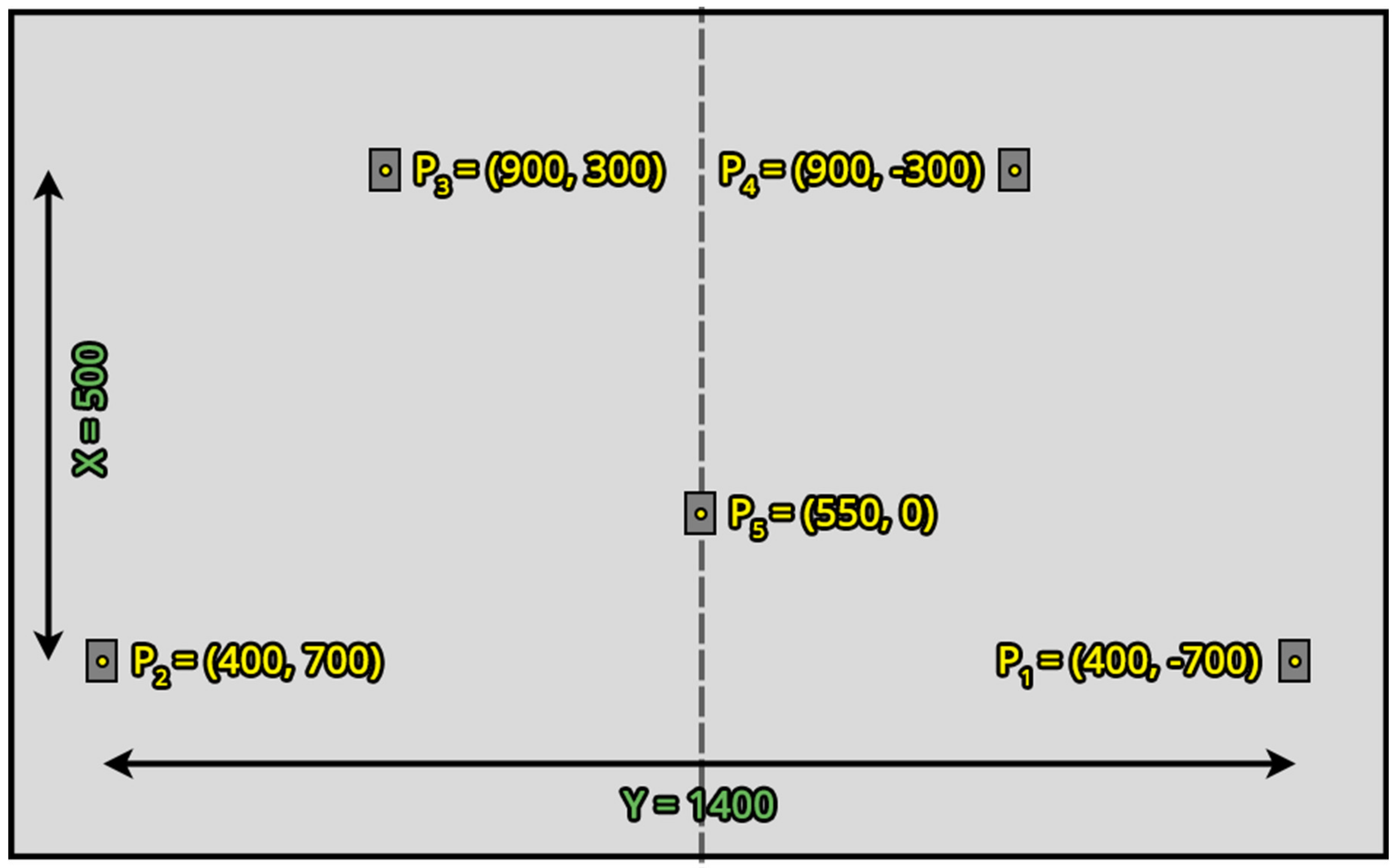

Let us consider four points lying on a plane and arranged in a rectangular shape, plus a fifth one positioned in the middle of it, as described in the UNI EN ISO 9283 standard [10]. The affine transformation matrix can be easily calculated given three points in the user frame and their corresponding points in the robot frame, chosen so as to form an edge (see Figure 6). In fact, the origin point in the middle of the rectangle (see Figure 6) is easily estimated by choosing these three points and averaging their coordinates.

To compute the remaining entries of the matrix, which correspond to the rotation parameters, it is sufficient to evaluate the two vectors that connect pairs of the three chosen points.

From these vectors, it is possible to find the versors representing the rotations along the three axes (Equation (4)).

Therefore, the matrix is obtained by substituting the versor values calculated in Equation (4) and the origin point coordinates into Equation (2).

Again, to comply with the standard [10], the procedure just described has been repeated n times. In practice, after the user frame points and the corresponding robot frame points have been defined, the offsets have been added to each point, obtaining the offset-corrected points. Therefore, a total of n affine transformation matrices have been calculated.
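The three-point construction can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' implementation: the function names, the role assigned to each point (a corner, a point along the x edge, a point along the y edge), and the use of cross products to obtain an orthonormal set of versors are all assumptions. The origin is passed in explicitly, since the text estimates it separately.

```python
import math

def _sub(a, b):
    return [a[i] - b[i] for i in range(3)]

def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def _unit(v):
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("degenerate (zero-length) vector: points are coincident or collinear")
    return [c / norm for c in v]

def frame_from_three_points(p_corner, p_along_x, p_along_y, origin):
    """Build a 4x4 homogeneous matrix (user frame -> robot frame).

    All inputs are robot-frame coordinates (hypothetical argument names).
    """
    x_axis = _unit(_sub(p_along_x, p_corner))                  # first edge vector
    z_axis = _unit(_cross(x_axis, _sub(p_along_y, p_corner)))  # plane normal
    y_axis = _cross(z_axis, x_axis)                            # completes a right-handed frame
    rows = [[x_axis[r], y_axis[r], z_axis[r], origin[r]] for r in range(3)]
    rows.append([0.0, 0.0, 0.0, 1.0])
    return rows

def user_to_robot(matrix, p_user):
    """Apply the homogeneous matrix to a 3D user-frame point."""
    p_h = list(p_user) + [1.0]
    return [sum(matrix[r][c] * p_h[c] for c in range(4)) for r in range(3)]

# Made-up example points: a user frame rotated by 90 degrees about z.
corner, along_x, along_y = [0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [-1.0, 0.0, 0.0]
M = frame_from_three_points(corner, along_x, along_y, origin=corner)
print(user_to_robot(M, [1.0, 0.0, 0.0]))
```

Recomputing the y versor from the cross product keeps the rotation part orthonormal even when the two measured edges are not exactly perpendicular, which is a design choice of this sketch rather than something stated in the text.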

2.2. Five-Points Calibration

The matrix may be calculated with only three points, i.e., using only three vectors to calibrate the robot frame; this results in a simpler method that may, however, lead to inaccurate positioning. To reduce these potential errors, more than three points should be adopted.

In the case of multiple pairs of points representing the same position in the two reference systems, the matrix may be estimated by solving a linear system using the least squares method [36]. The more points are used to estimate it, the more accurate the resulting matrix is, provided that the set contains very few outliers.

Hence, given the procedure detailed in Section 2.1, in this case, to obtain the robot points according to Equation (1), it is necessary to calculate the optimal matrix that best fits the points by solving a linear system. This system is solved by providing two matrices, containing the coordinates of the five points in the two reference frames, to the least squares method.

In a similar way to the procedure described in Section 2.1, this has been performed n times, hence obtaining n calibration matrices by using n sets of five points.
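The least-squares estimate can be sketched with NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' code: the function name `fit_affine`, the point layout, and the synthetic robot-frame points are invented for the example, and `numpy.linalg.lstsq` stands in for whichever least-squares routine was actually used.

```python
import numpy as np

def fit_affine(user_pts, robot_pts):
    """Least-squares 4x4 matrix M such that robot ~= M @ user (homogeneous coordinates)."""
    U = np.asarray(user_pts, dtype=float)    # N x 3 user-frame points
    R = np.asarray(robot_pts, dtype=float)   # N x 3 robot-frame points
    U_h = np.hstack([U, np.ones((len(U), 1))])  # N x 4, homogeneous
    # Solve U_h @ X ~= R for X (4 x 3) in the least-squares sense.
    X, *_ = np.linalg.lstsq(U_h, R, rcond=None)
    M = np.eye(4)
    M[:3, :] = X.T
    return M

# Five user-frame points: four rectangle corners plus the center (made-up values, in meters).
user_pts = [[0.0, 0.0, 0.0], [0.3, 0.0, 0.0], [0.3, 0.2, 0.0],
            [0.0, 0.2, 0.0], [0.15, 0.1, 0.0]]
# Synthetic robot-frame points (a pure translation), used only to exercise the function.
robot_pts = [[x + 0.5, y - 0.1, z + 0.2] for x, y, z in user_pts]

M = fit_affine(user_pts, robot_pts)
print(np.round(M @ np.array([0.1, 0.1, 0.0, 1.0]), 3))
```

Note that when all calibration points lie on one plane (z = 0 in the user frame here), the data do not constrain the column of the matrix that multiplies the user z coordinate; `lstsq` then returns the minimum-norm solution, which sets that column to zero. The result is adequate for points on the calibrated plane but should not be read as a full rigid transformation.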

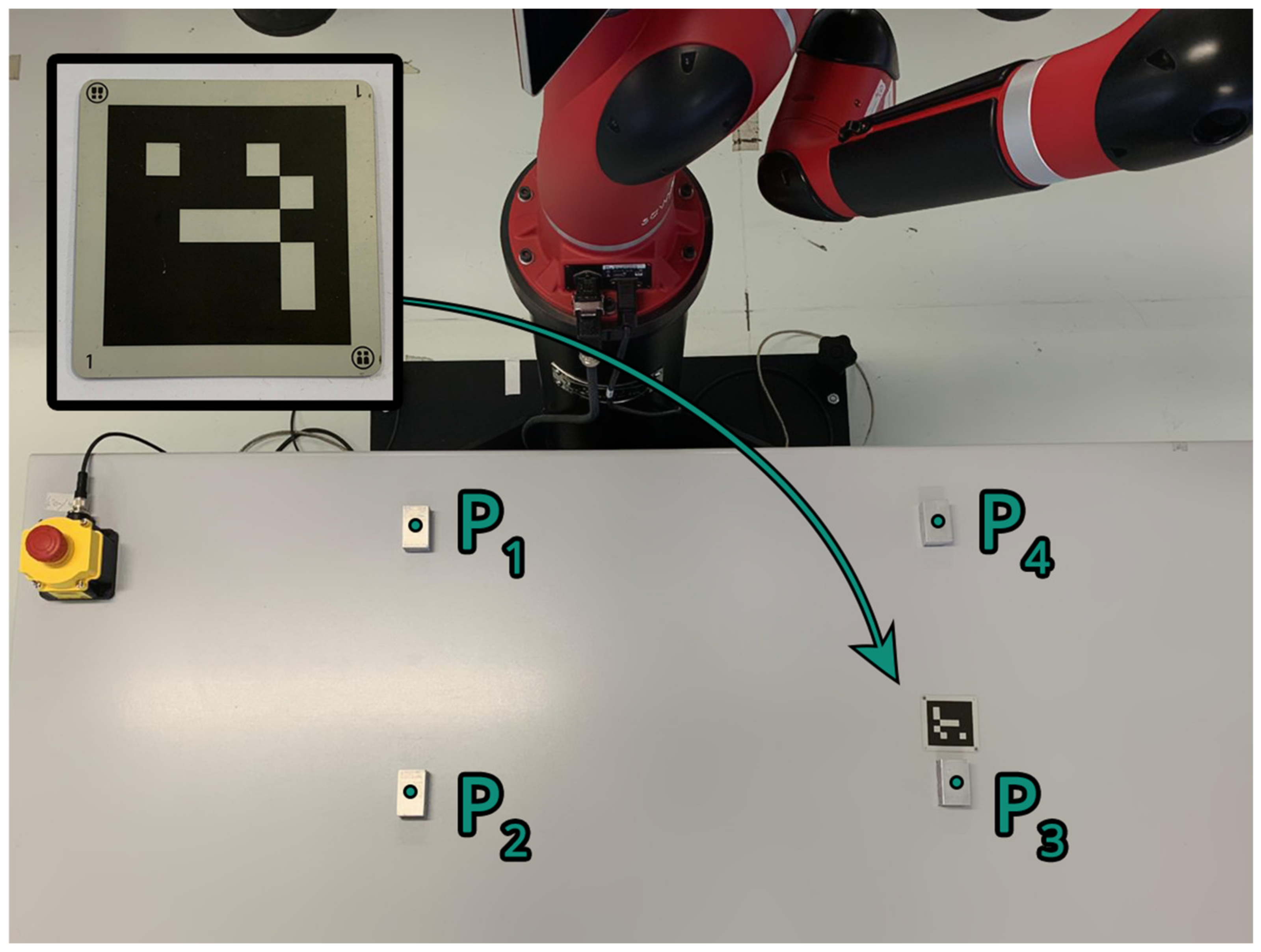

2.3. Robot Positioning System Calibration

The robot's built-in calibration method, called Robot Positioning System (RPS) [37], is a vision-based calibration method that leverages the monochrome wrist camera of the Sawyer robot. Thanks to this system, users may quickly calibrate a working plane by placing a fiducial marker called “landmark” on the flat surface of choice (Figure 2). The system calculates the plane position and orientation by analyzing the spatial distortions of the landmark grid in the monochrome images taken by the wrist camera, thus computing the camera projection of the landmark grid points. This procedure is similar to the standard fiducial marker calibration described in Reference [32]. As detailed in Reference [37], to perform this calibration, the user moves the robot arm in manual guidance over the landmark placed on the surface to calibrate. Then, the vision system mounted on the robot wrist takes some pictures of the scene and finds the landmark, and the built-in software automatically computes a plane that fits the position and orientation of the object by analyzing the distortion of its black and white grid.

However, this procedure computes planes locally around the landmark. Hence, even if more landmarks are placed on the surface, they are not used to compute a single calibration plane that considers their individual contributions; instead, local planes are computed around each fiducial marker.

Therefore, in this work, this calibration procedure has been carried out by placing a landmark between two of the calibration points (Figure 2). After the plane has been created by the software, the resulting affine transformation matrix has been used to move the robot to all the points of the set-up. It is worth noting that Sawyer robots possess (i) an internal operating system called Intera and (ii) a ROS (Robot Operating System) interface, leaving users the choice of which one to use to operate the robot. However, Intera cannot be modified by users, especially non-expert ones, to implement custom-made software or even to export internal variables, such as the affine transformation matrix that the RPS system computes. This limits the procedure, forcing users who wish to implement new functionalities to create custom software for the ROS interface instead.

3. Positioning Repeatability Calculation

To estimate the positioning repeatability of the robot, it is necessary to calculate the distance of each actual position reached by the end-effector from a reference point. To simplify the set-up, this was performed by using a custom-made rectangular aluminum object (alloy 6061-O) of size 50 × 30 × 20 mm³ (aluminum marker, AM) and measuring the distance from the end-effector along the z-axis only, using a proximity sensor mounted on the end-effector. The acquisition has been repeated n times; hence, n distance values have been obtained for each point. To properly compute the deviation of the data, it is necessary to calculate (i) the mean of the data for each point as in Equation (9), (ii) the distance of each single datum from the mean as in Equation (10), and (iii) the mean of these distances as in Equation (11).

Then, the deviation of the data is computed for each point, and the positioning repeatability of each point is obtained from it.

Finally, a single parameter is defined to summarize the overall performance of the robot and to allow comparisons between tests. This value is an average of the individual repeatability values obtained for each point.
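The computation chain described above can be sketched in Python. The sketch assumes the usual ISO 9283 form of the pose repeatability, RP = l̄ + 3·S_l, restricted to the z-axis readings, and assumes the overall parameter is a plain arithmetic mean of the per-point values; the function names and the sample readings are hypothetical.

```python
import math
from statistics import mean

def point_repeatability(z_readings):
    """Repeatability of one point from n z-axis distance readings (ISO 9283-style)."""
    z_bar = mean(z_readings)                     # mean of the data
    l = [abs(z - z_bar) for z in z_readings]     # distance of each datum from the mean
    l_bar = mean(l)                              # mean of those distances
    # Sample standard deviation of the distances (n - 1 in the denominator).
    s_l = math.sqrt(sum((lj - l_bar) ** 2 for lj in l) / (len(l) - 1))
    return l_bar + 3.0 * s_l

def overall_repeatability(readings_per_point):
    """Average of the per-point repeatability values (assumed to be a plain mean)."""
    return mean([point_repeatability(z) for z in readings_per_point])

# Made-up readings for two points, used only to exercise the functions.
readings = [
    [1.00, 1.20, 0.80, 1.00],
    [2.00, 2.00, 2.00, 2.00],
]
print([round(point_repeatability(z), 4) for z in readings])
print(round(overall_repeatability(readings), 4))
```

At least two readings per point are needed, since the sample standard deviation divides by n − 1.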

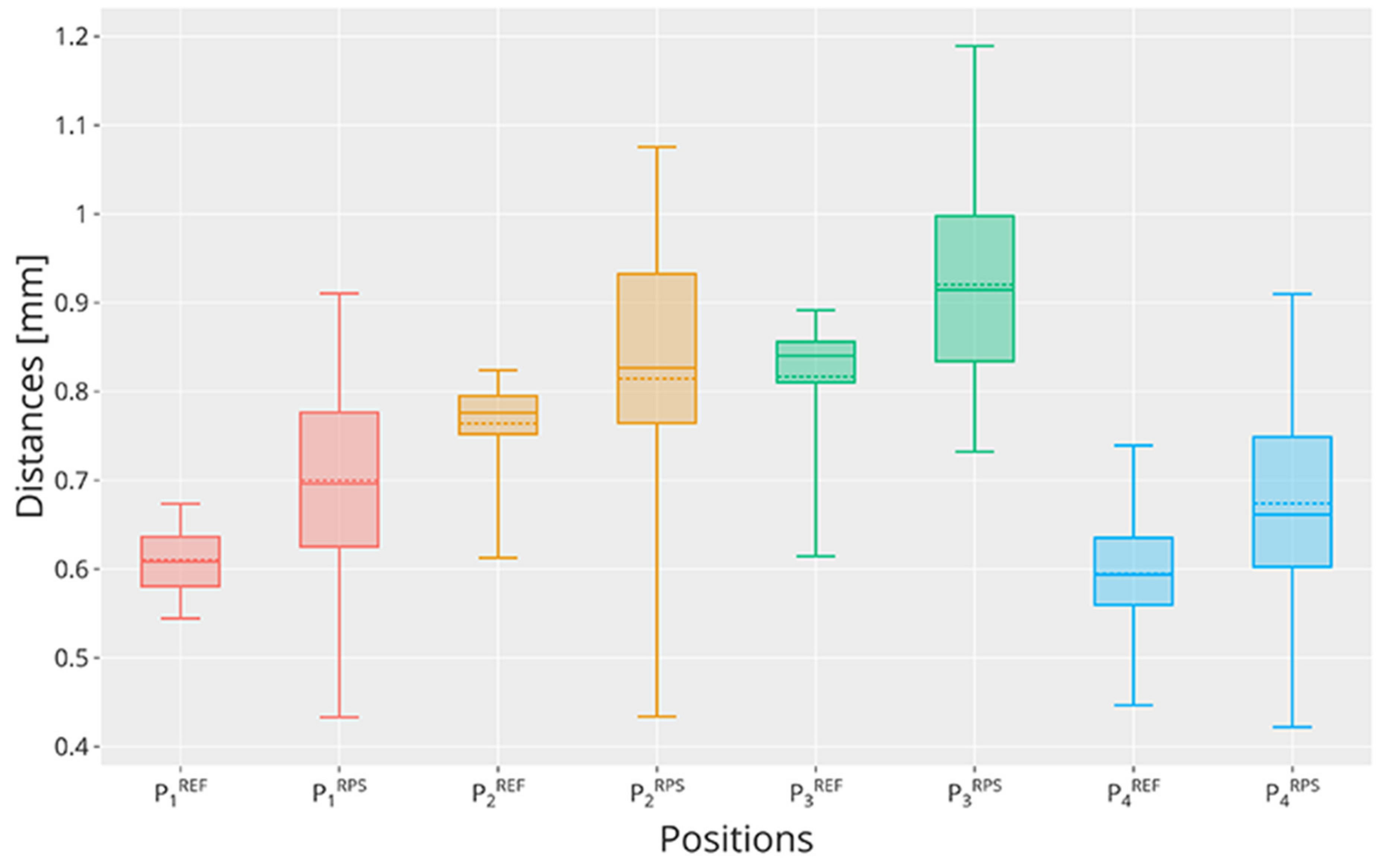

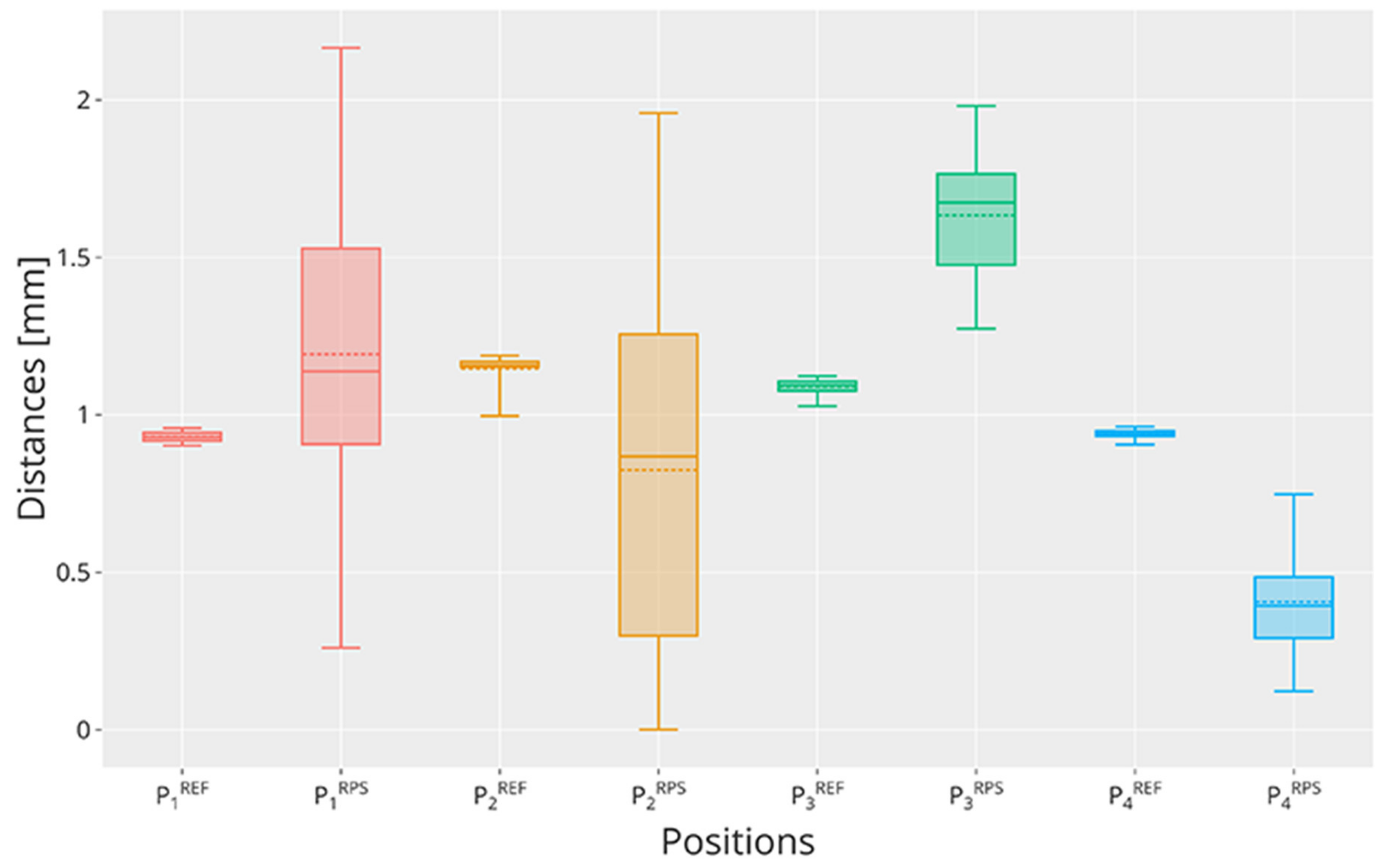

It is worth noting that the repeatability calculated in the following experiments may differ depending on whether or not the point to which the robot moves has been calculated by applying a calibration matrix. In the first case, the resulting repeatability refers to the calibration itself; hence, it is a measure of how well the user frame has been calibrated with respect to the robot. On the other hand, by moving the manipulator to a certain known position (by sending to its controller the corresponding waypoint in robot coordinates), the resulting repeatability refers to the robot (reference repeatability).

5. Conclusions and Future Work

This paper aimed to analyze different user frame calibration methods (vision-based and traditional mechanical methods) that may be used by practitioners working with cobots in industrial processing lines. The analysis aimed to highlight the key factors to keep in mind when designing custom user frame calibration software to achieve the best results. To our knowledge, these details are not sufficiently discussed in the literature, which leads to incorrect and imprecise calibration procedures being performed by operators working on the production line. These factors are: (i) which calibration method to adopt, whether vision-based or traditional, (ii) the number of calibration points to adopt according to the set-up, and (iii) the overall calibration time.

As shown by the literature analysis, the choice of the calibration method usually depends on the working conditions of the robot and on the available equipment and software. In fact, if the cobot does not possess a built-in camera, adopting vision-based calibration methods may be difficult or not reliable enough; for example, mounting an external camera on the robot wrist may not be a feasible or stable solution that guarantees the right conditions for the method to work properly. On the other hand, traditional user frame calibration systems adopt rigid markers that may not fit certain set-ups and often require users to manually move the end-effector near the markers. Hence, if the cobot is supplied with a wrist camera, it is suggested to adopt calibration procedures based on fiducial markers because the markers are usually inexpensive (in most cases, they can be printed by users and glued onto the surfaces) and the calibration procedure is more intuitive.

Considering the Rethink Robotics Sawyer robot adopted in this work and the limitations of the built-in calibration procedure presented in Section 2.3, the quantitative analysis first focused on the evaluation of the robot repeatability achieved by adopting the RPS method. This resulted in a more complete analysis of the method’s limitations, which emerged from the literature but, to our knowledge, had never been studied in detail before. We then compared two traditional methods involving rigid markers to quantitatively measure the performance variations occurring when more points are adopted to obtain the user frame calibration plane.

The positioning repeatability evaluation has been performed by moving the Sawyer cobot (i) without calibration, hence obtaining a reference repeatability, (ii) after the user frame has been calibrated by a vision-based method, and (iii) after the user frame has been calibrated by two traditional mechanical methods. The vision-based method adopts the proprietary RPS system of Rethink Robotics, which uses a fiducial marker and the monochrome camera mounted on the cobot wrist to estimate the position and orientation of the landmark and compute the affine transformation matrix. The two traditional methods considered in this study are standard procedures that compute the affine transformation matrix needed to calibrate the user frame by solving a three- or five-point linear system, as described in the UNI EN ISO 9283 standard.

First, the analysis has been conducted on the RPS system alone, which has been tested for all axes independently. It is important to stress that, since the RPS system is proprietary software of Rethink Robotics, it was not possible to modify the calibration procedure, which only adopts one landmark at a time and computes a local plane around it. Hence, the affine transformation matrix resulting from the procedure is not accurate and does not model the entire surface in a satisfactory way. Moreover, even if the surface is covered by landmarks, the resulting planes are computed locally; hence, the procedure does not consider the contribution of several landmarks in the calculation of the affine transformation matrix. This is a serious issue that non-expert users may overlook, and it is made evident by the results of this experiment. In fact, the repeatability values of the tested points are extremely different and depend on the position of the landmark used: values less than 1 may be observed for points closer to the landmark, while values close to 2 may be observed for the other two. This is particularly worrying since the surface to calibrate was of limited size, which indicates that the local plane computed by the RPS system is very small.

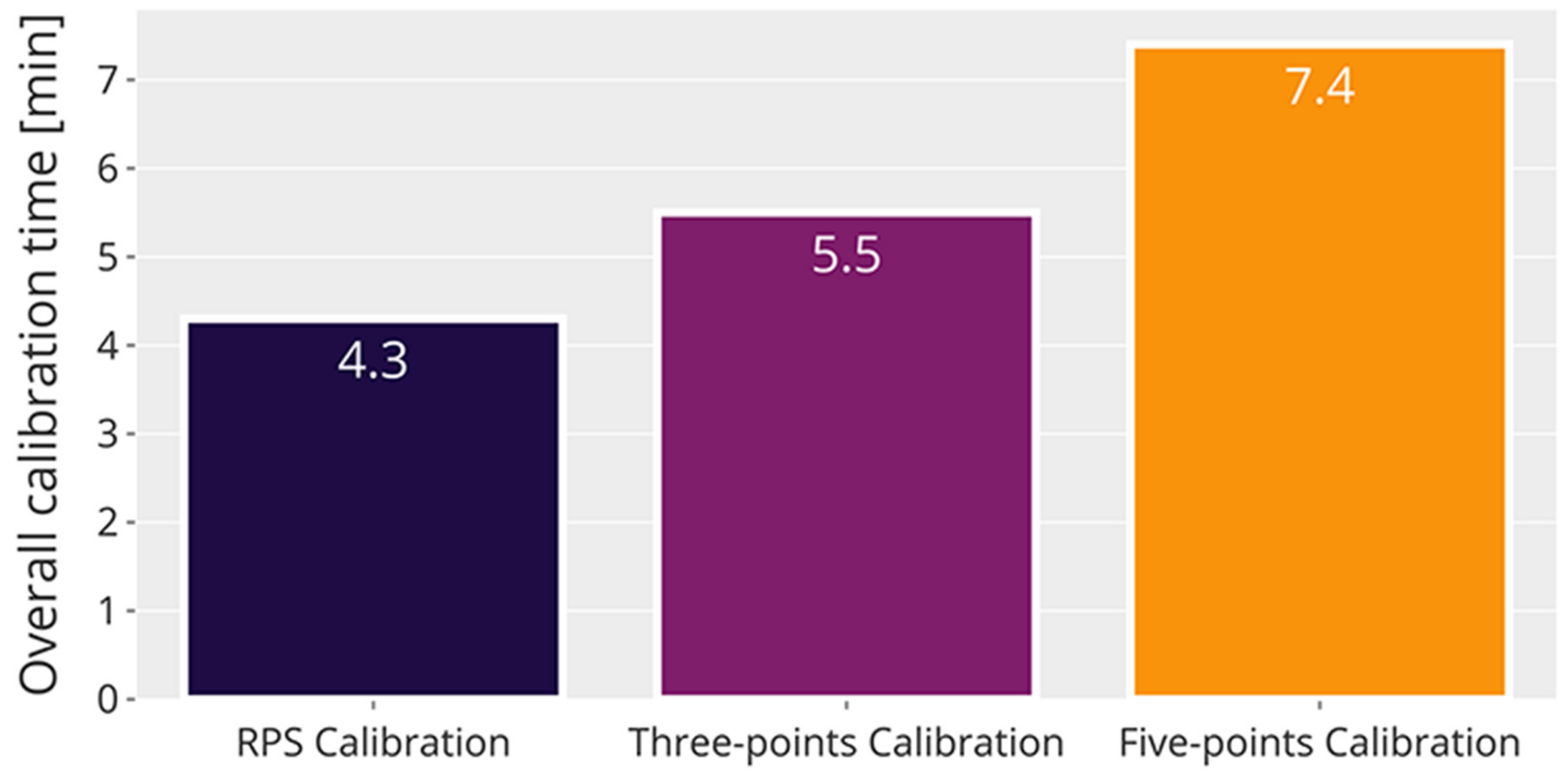

Second, the analysis focused on the comparison of two traditional calibration methods involving rigid markers and was aimed at determining the ideal number of calibration points to adopt in a user frame calibration procedure. As expected, adopting five points leads to the best results because, by optimizing the transformation matrix using more points, the estimation of the point coordinates in the new reference system is typically better. However, it is shown that increasing the number of points also increases the overall calibration time. Furthermore, since the repeatability values cannot be better than the reference repeatability, it is also shown that increasing the number of calibration points beyond five does not guarantee major performance improvements. It is also worth noting that, in some cases, the number of calibration points that can be used may be limited by the user frame itself, for example because of occlusions or geometry issues of the user frame or of the cobot adopted. In this respect, it is shown that using only three points still yields good overall repeatability in exchange for a reduced calibration time. Nevertheless, it should be investigated whether other conditions may have affected the measurements, for example (i) the environmental conditions, such as temperature and vibrations, (ii) the robot stability, and (iii) the sample size. Such considerations deserve a separate and more rigorous study in the future to correctly address the contribution of each of these conditions.

Given the serious issues of the RPS calibration procedure that emerged from the experiments, in future work we aim to develop a ROS-based calibration procedure for the Sawyer cobot involving more landmarks, hence obtaining a refined transformation matrix that could account for possible distortions of the plane on which the user frame lies. In fact, it is shown that, when calibrating a wider space, the results generally get worse for each calibration method; thus, a better calibration procedure should involve more points if the surface is particularly wide or irregular. However, this behavior is also influenced by the mechanical characteristics of the cobot, such as vibrations and the geometrical limitations of its limbs. Therefore, if the cobot must operate in a wide workspace, it is suggested to plan the task accordingly to reduce these effects. Possible solutions may be to reduce the robot operating cycle or its speed and to avoid wide limb movements whenever possible.