Optical Camera Communications: Principles, Modulations, Potential and Challenges

Abstract

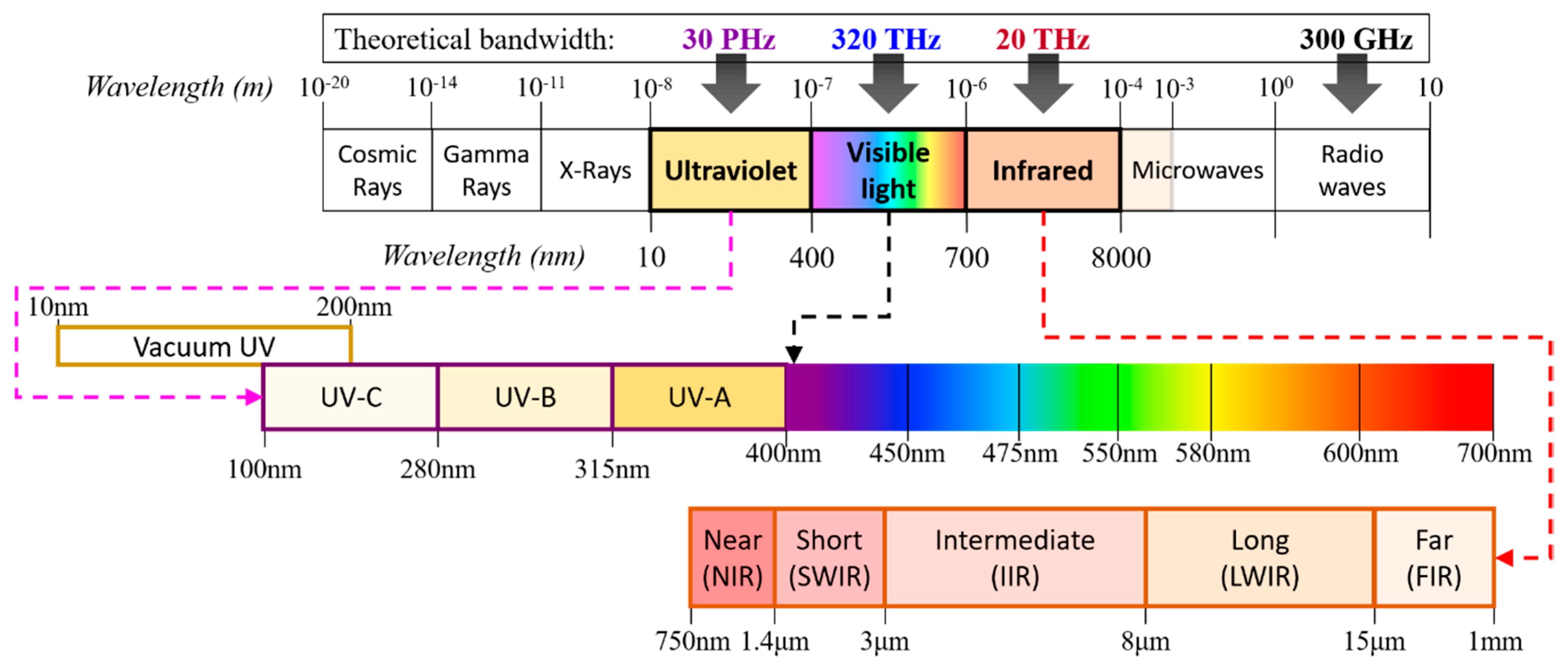

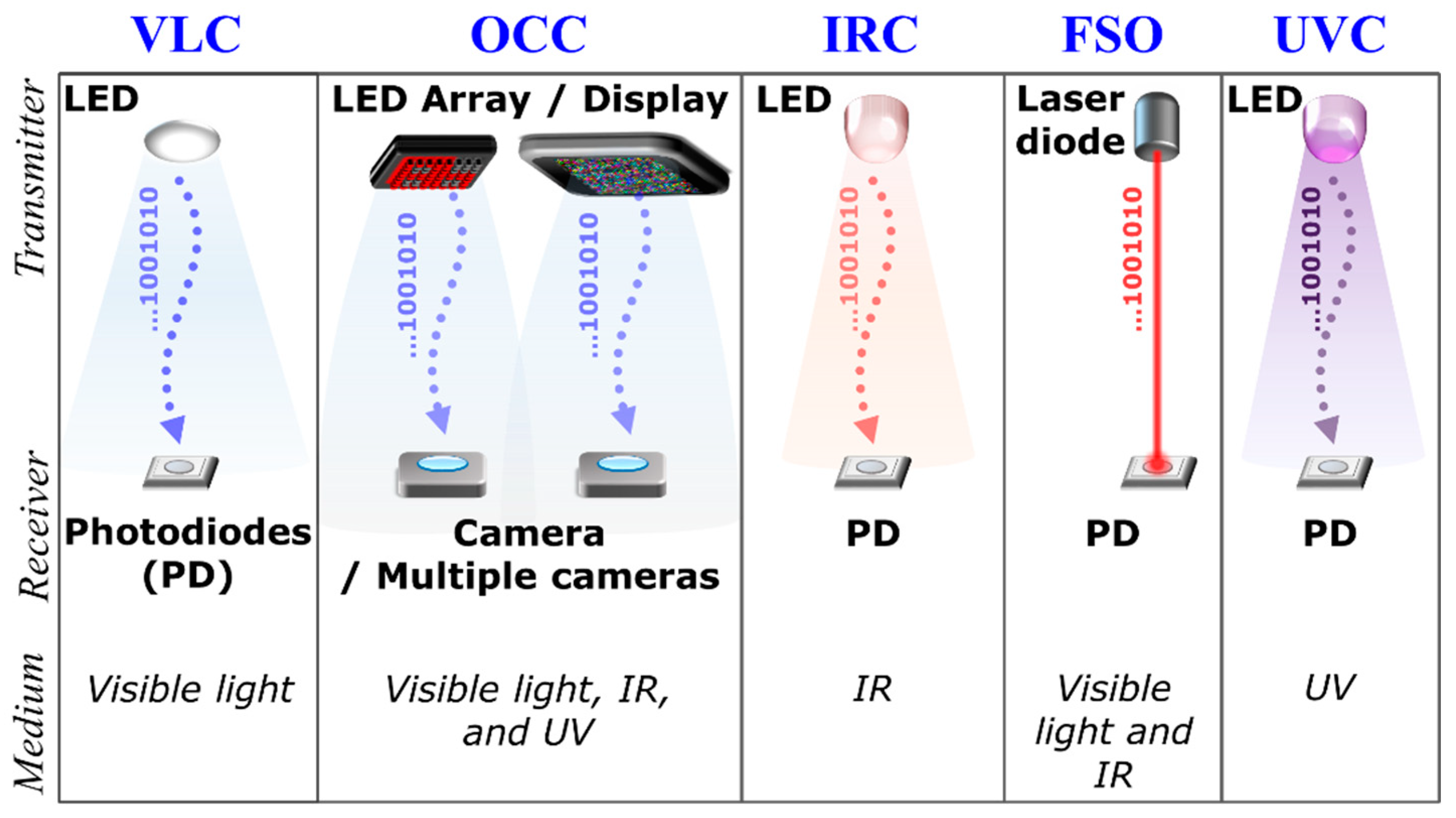

1. Introduction

2. Optical Camera Communication

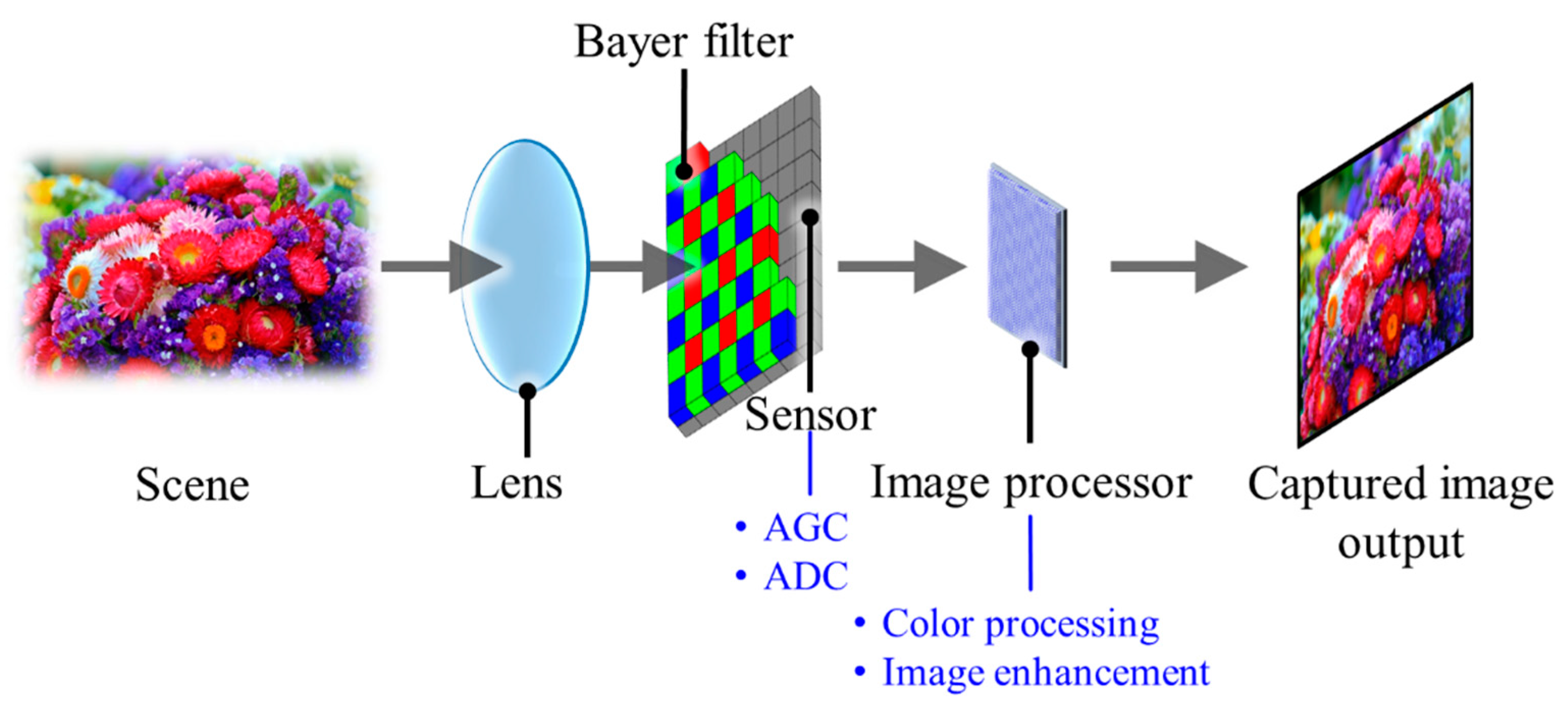

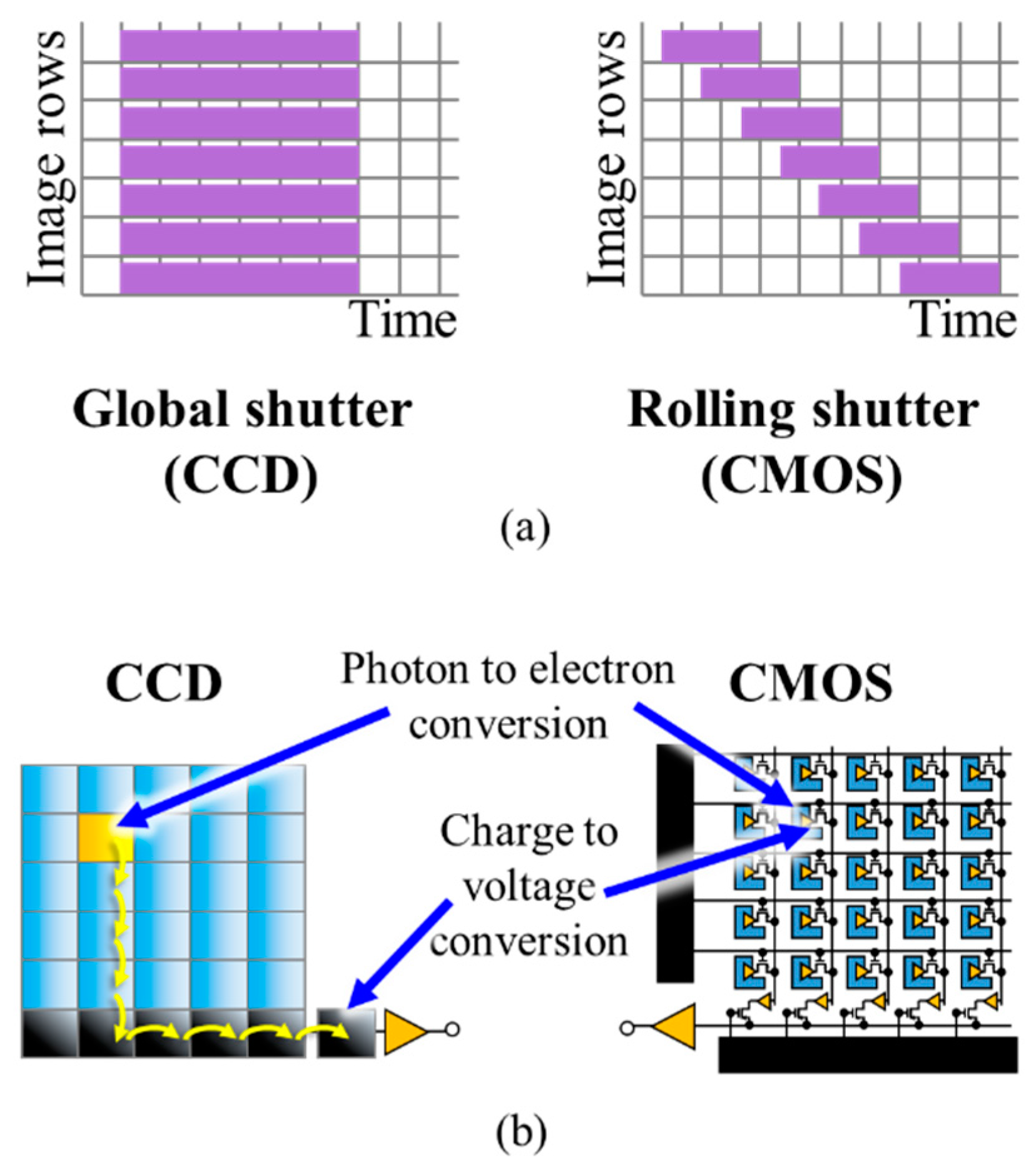

2.1. Principles

2.2. Standardization

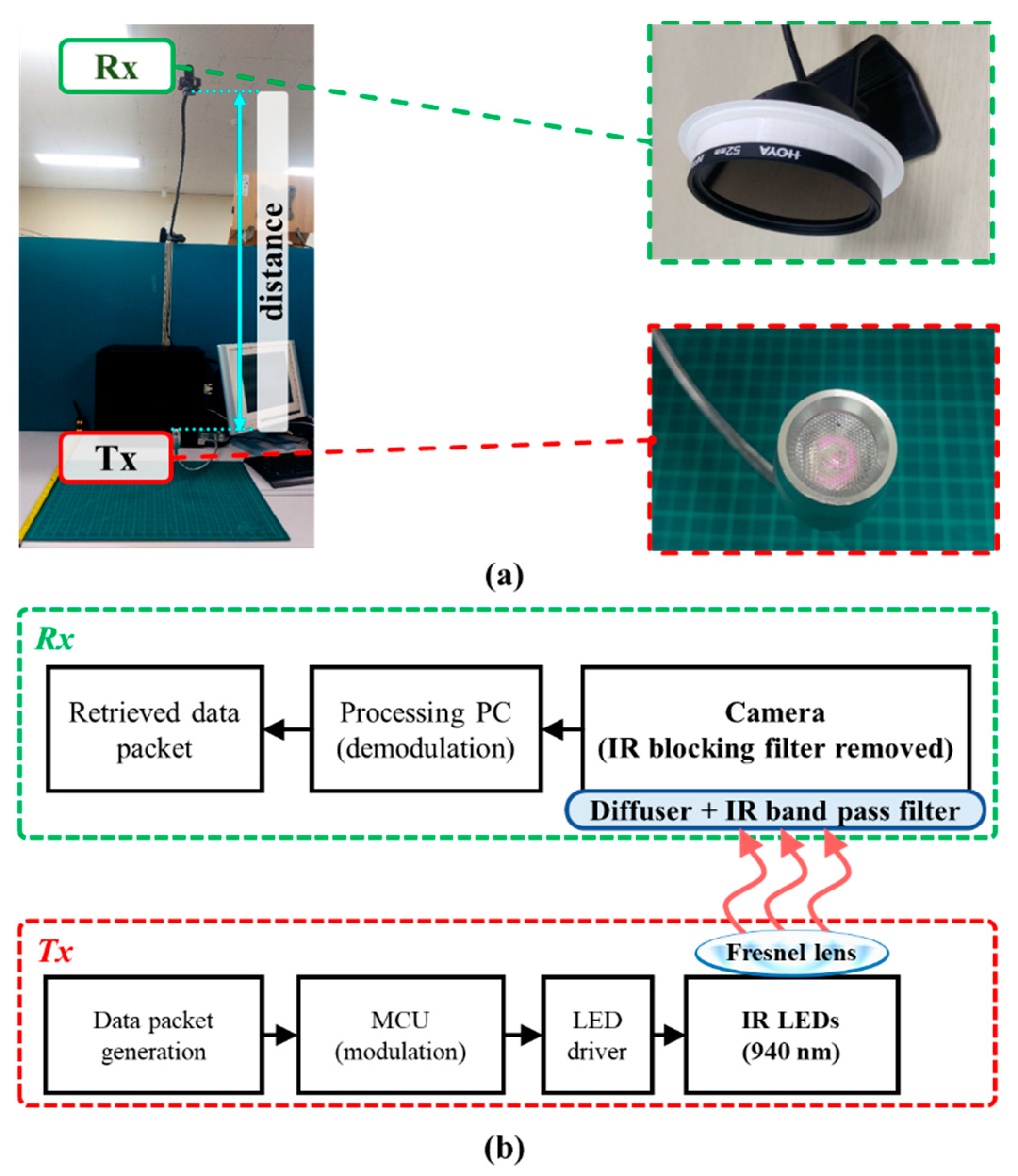

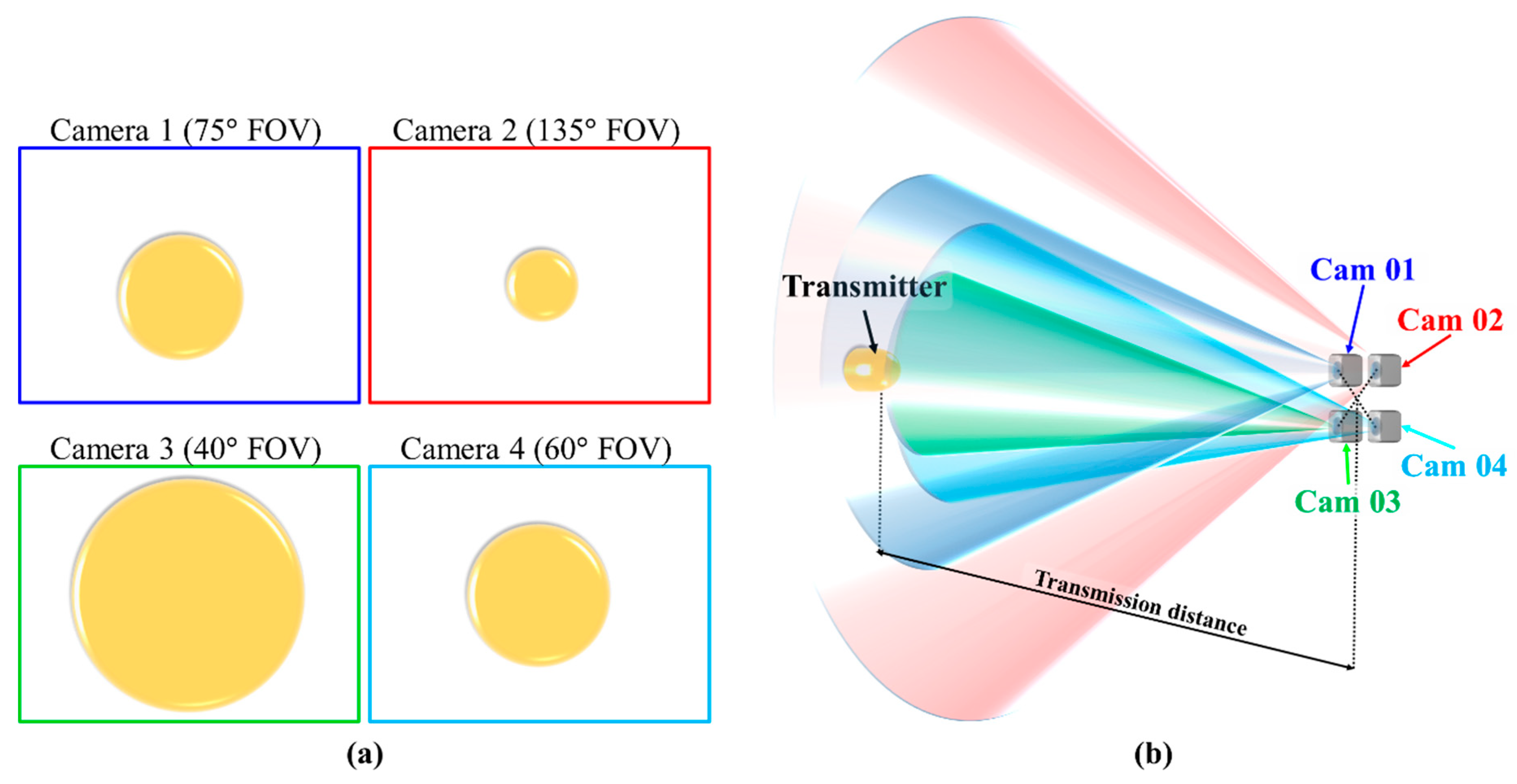

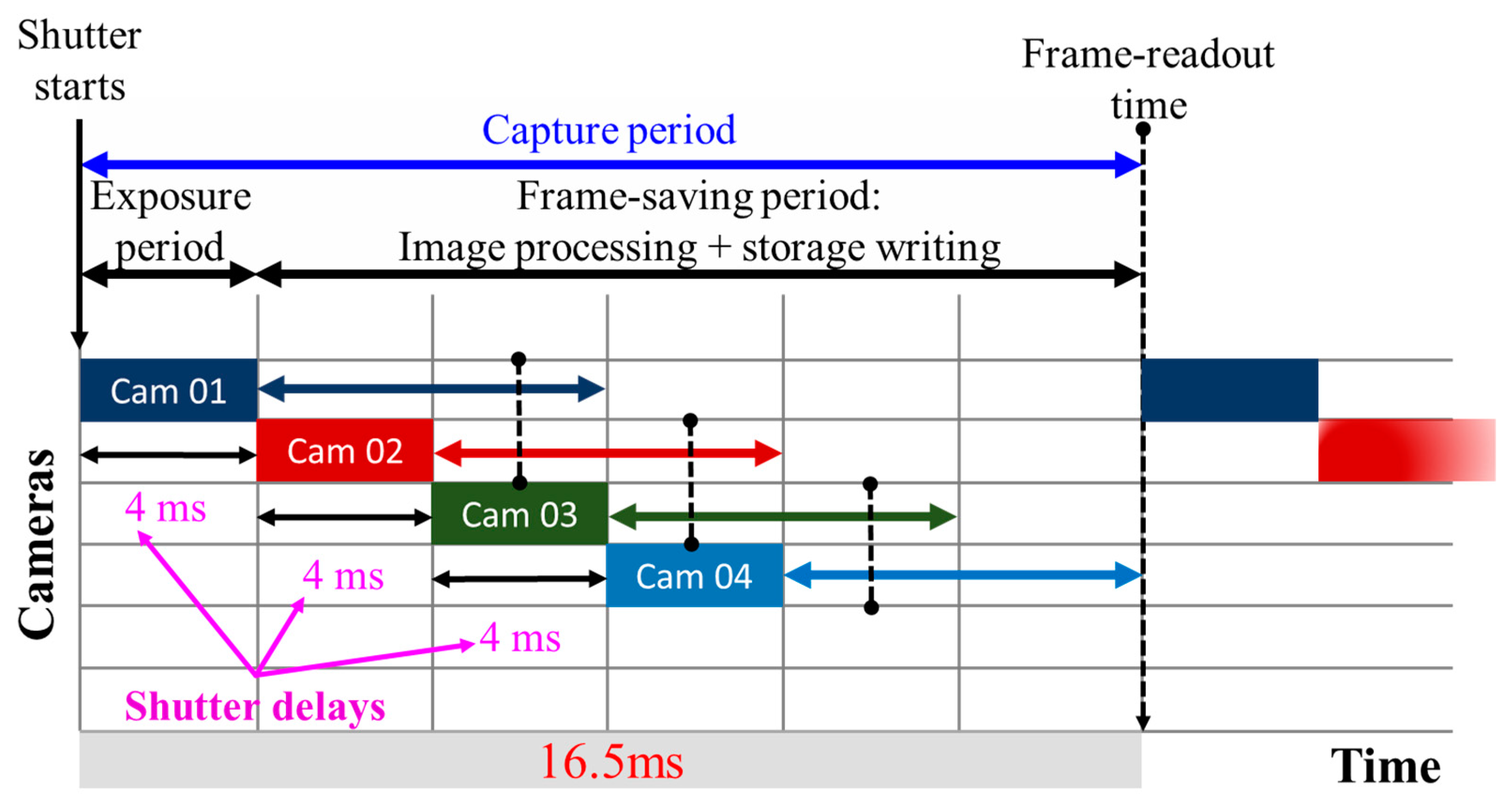

2.3. Transceivers

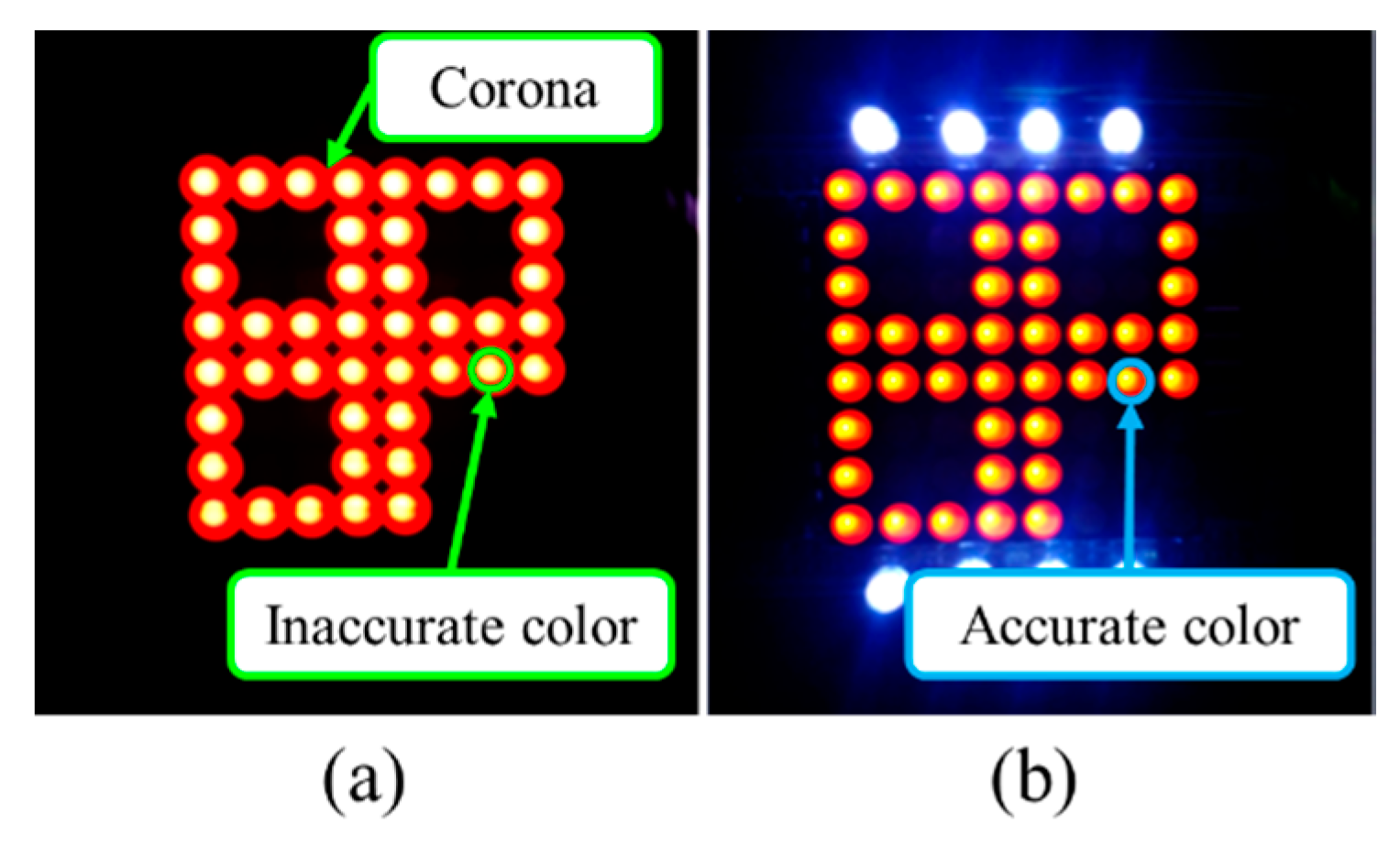

2.4. Channel Characteristics

3. Modulations

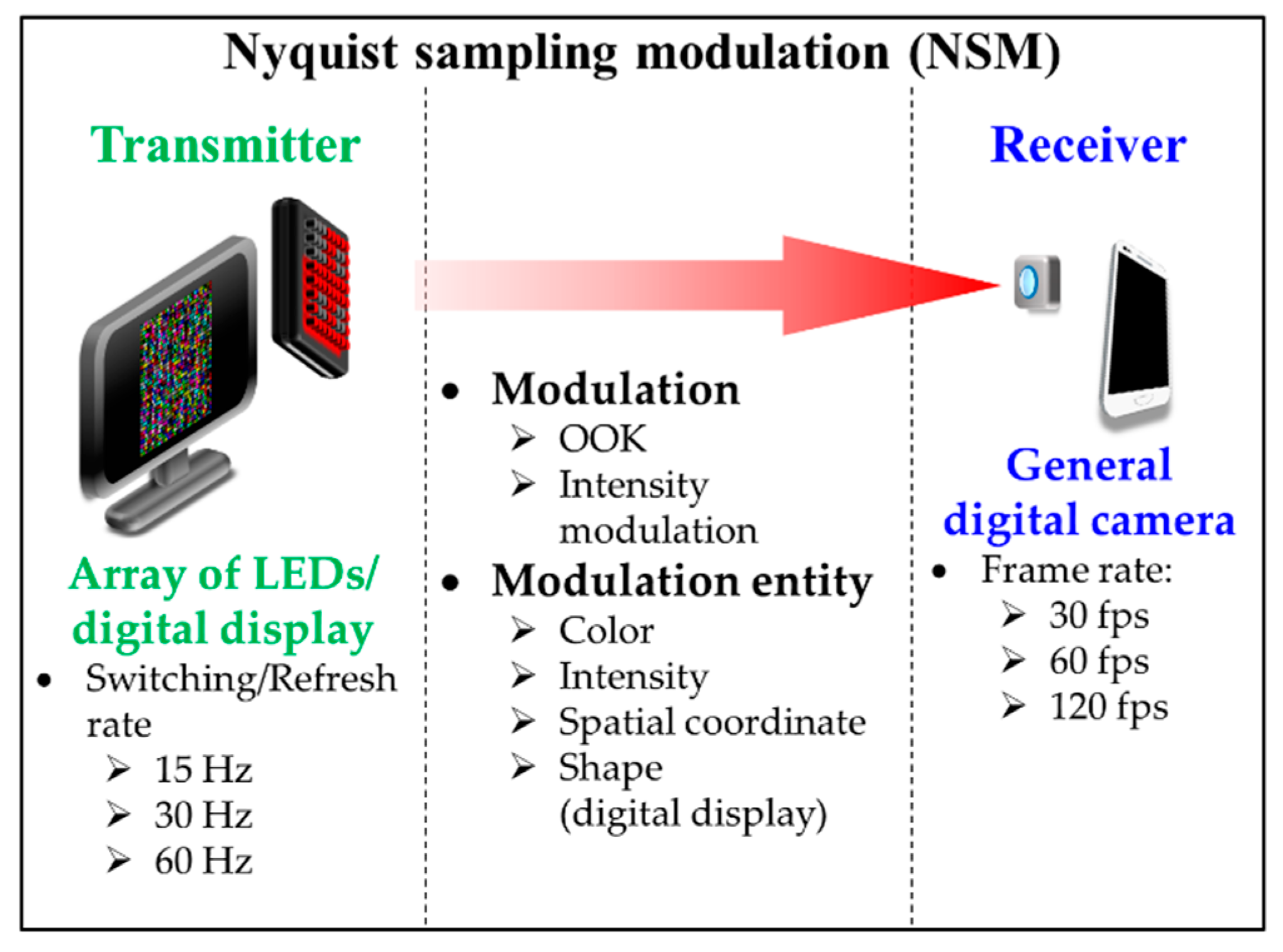

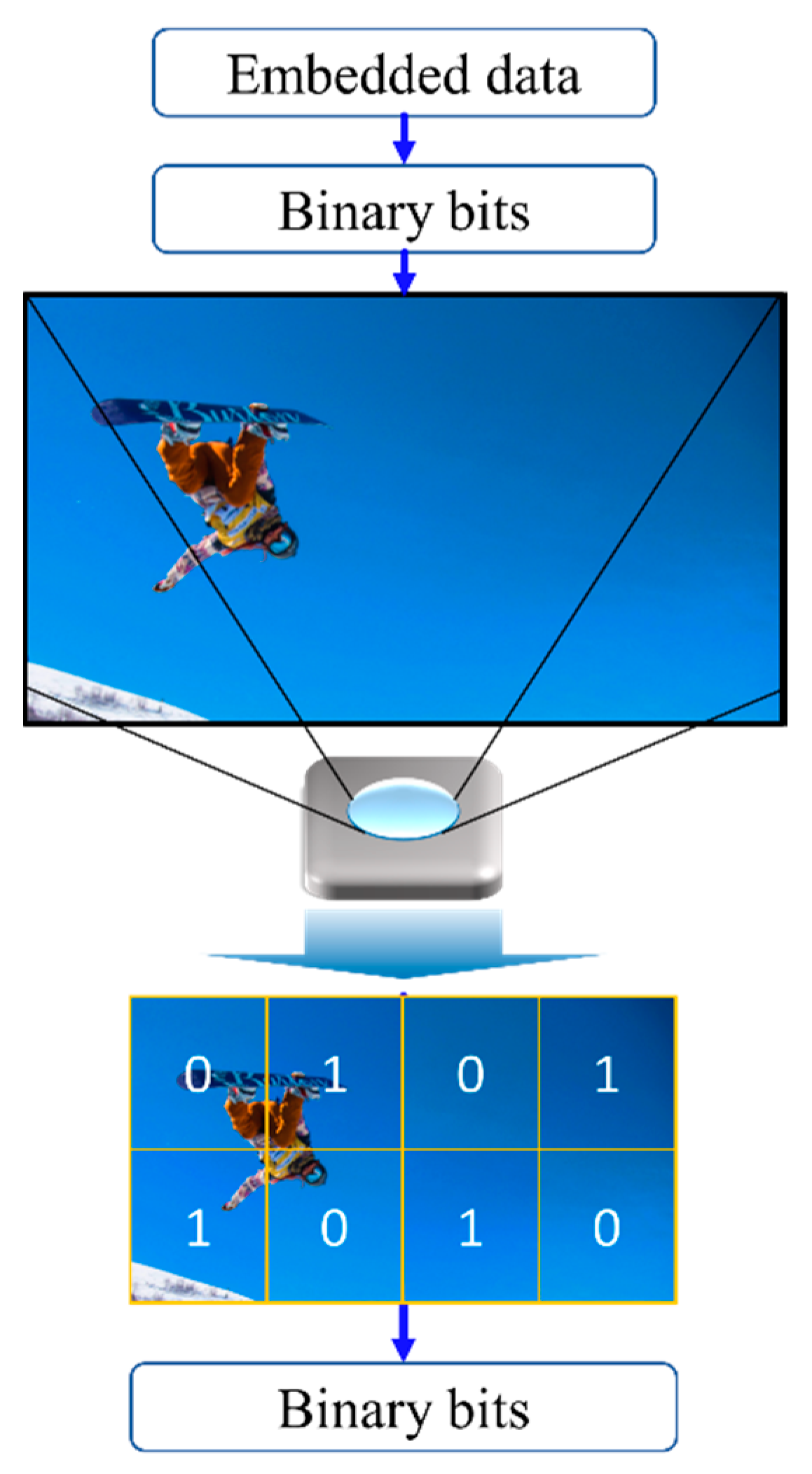

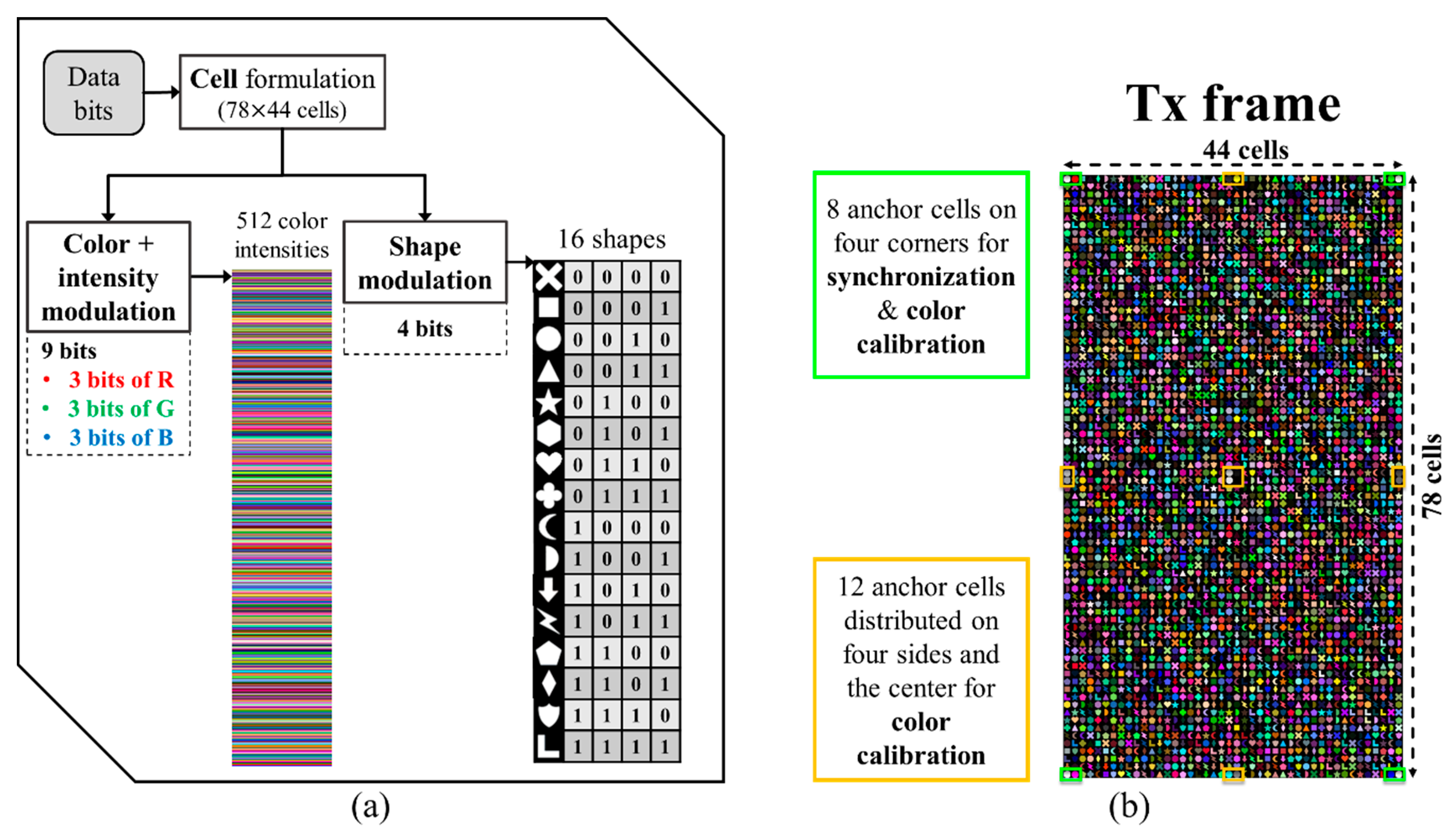

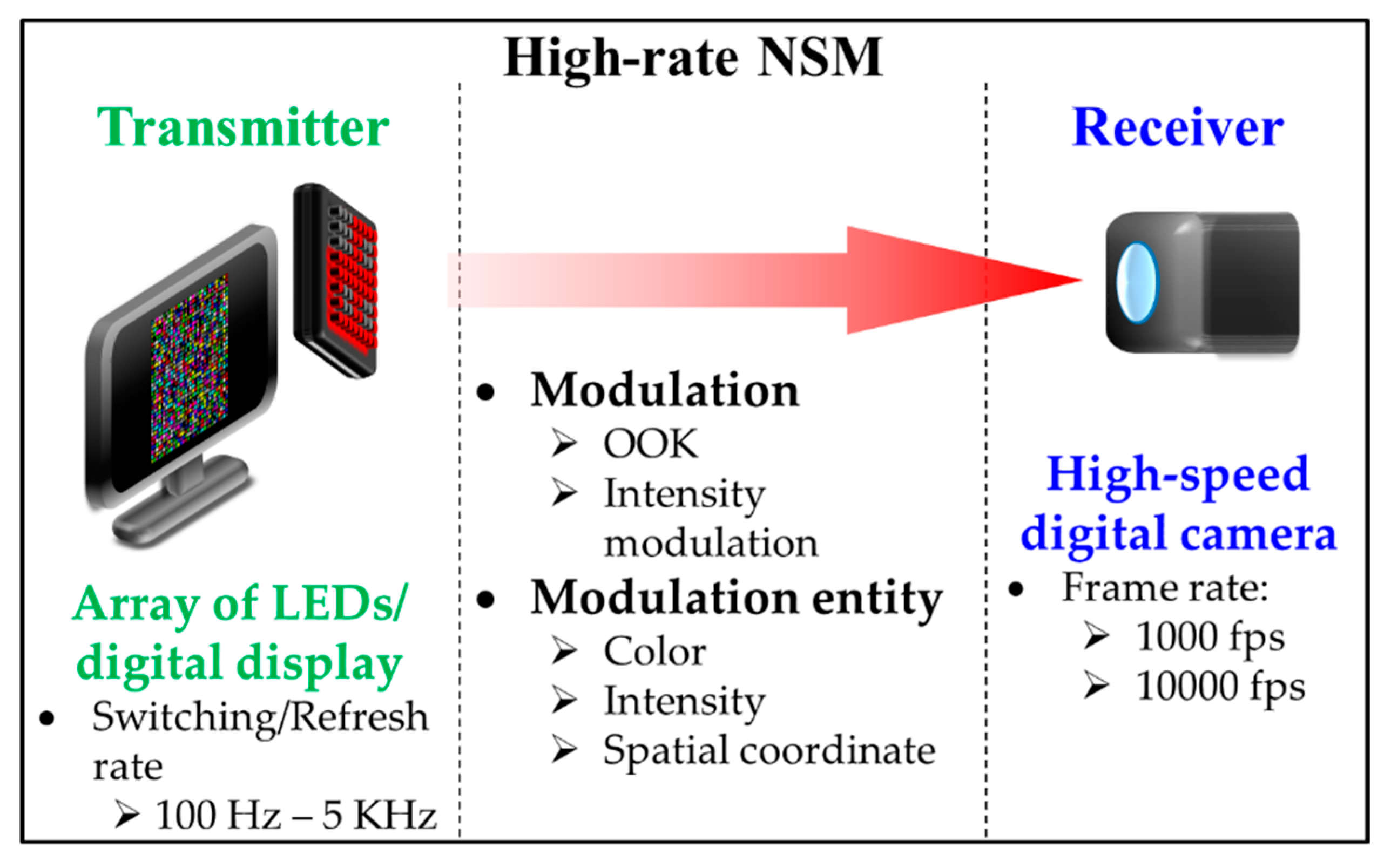

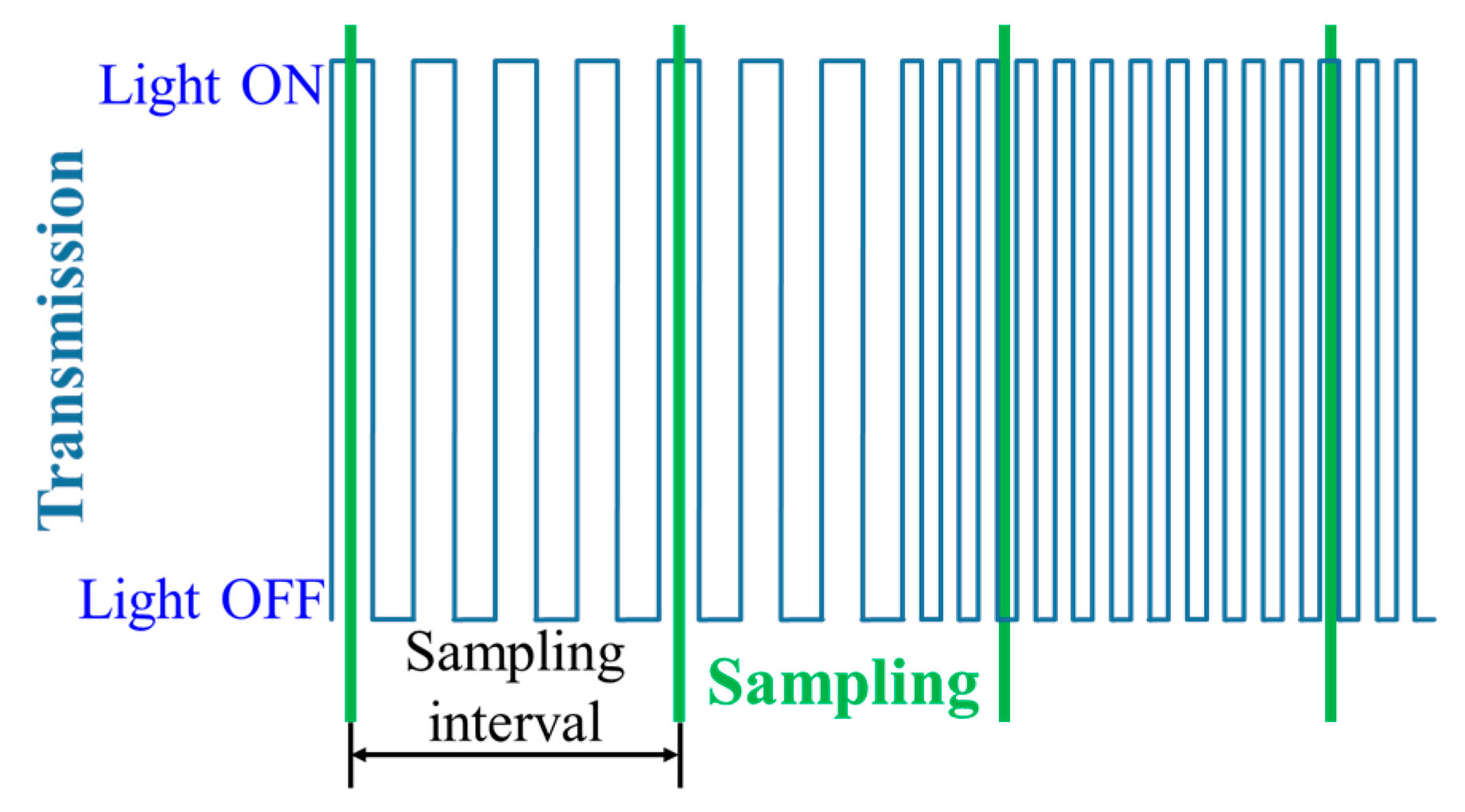

3.1. Nyquist Sampling Modulation

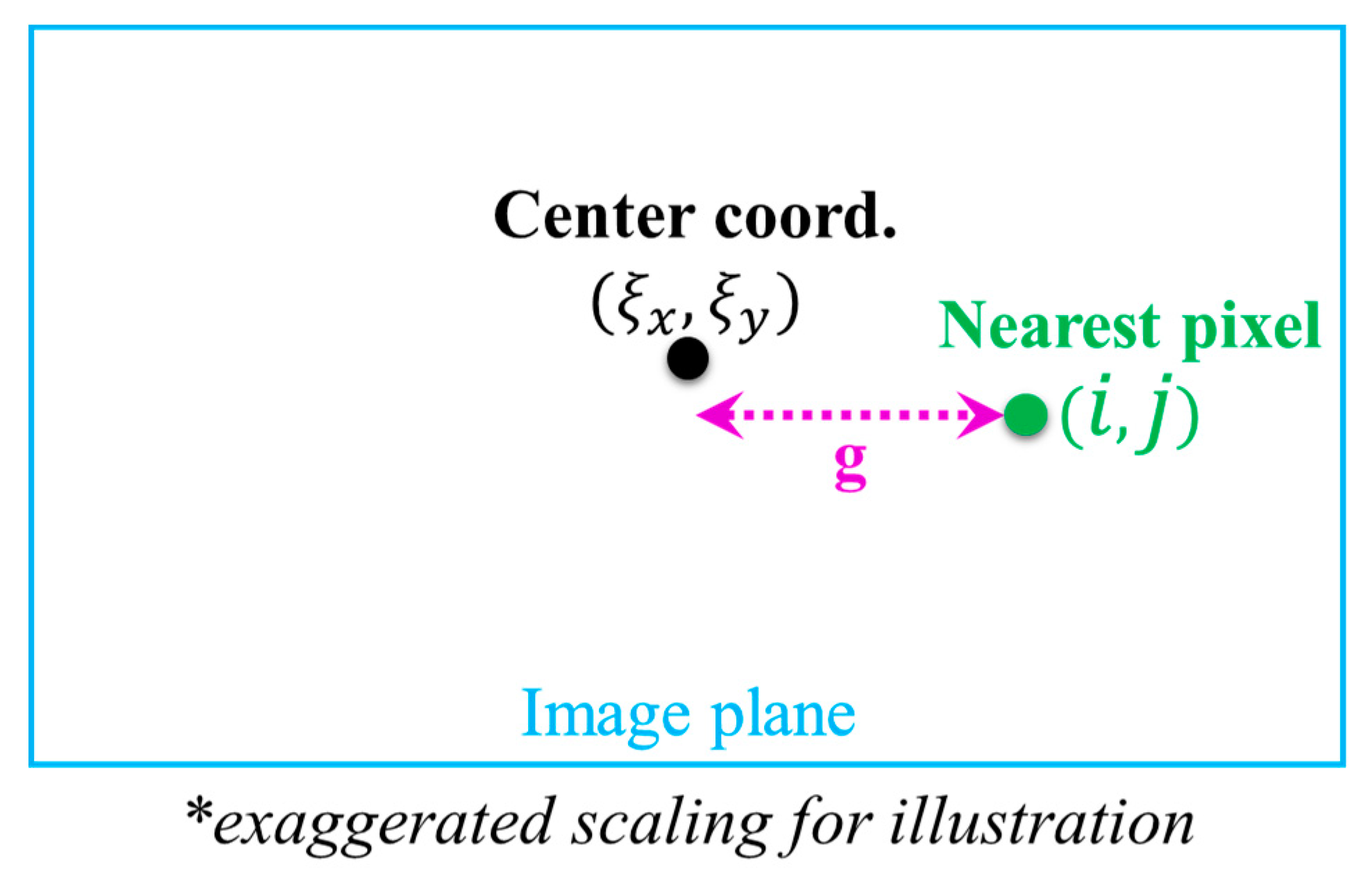

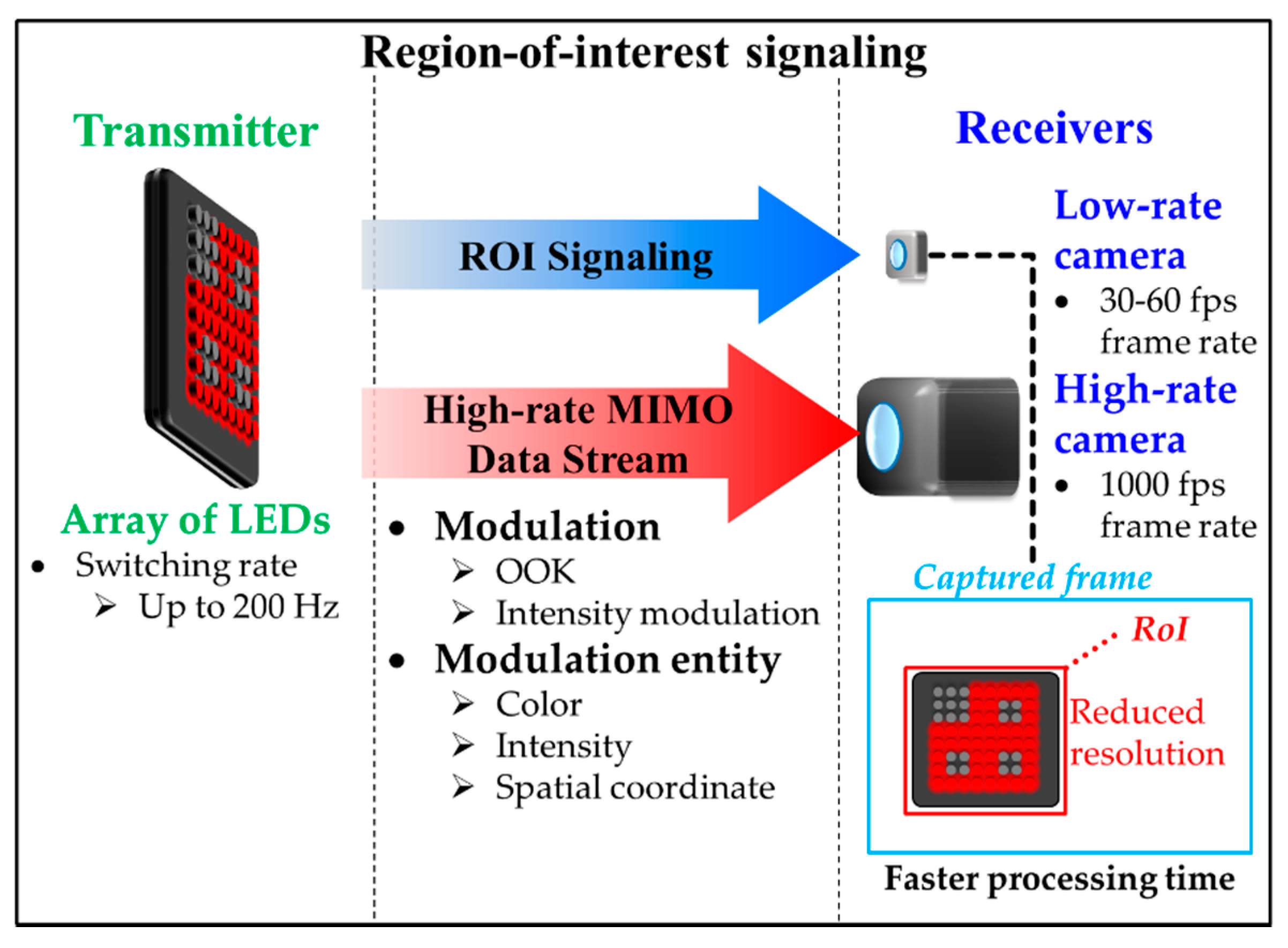

3.2. Region-of-Interest Signaling

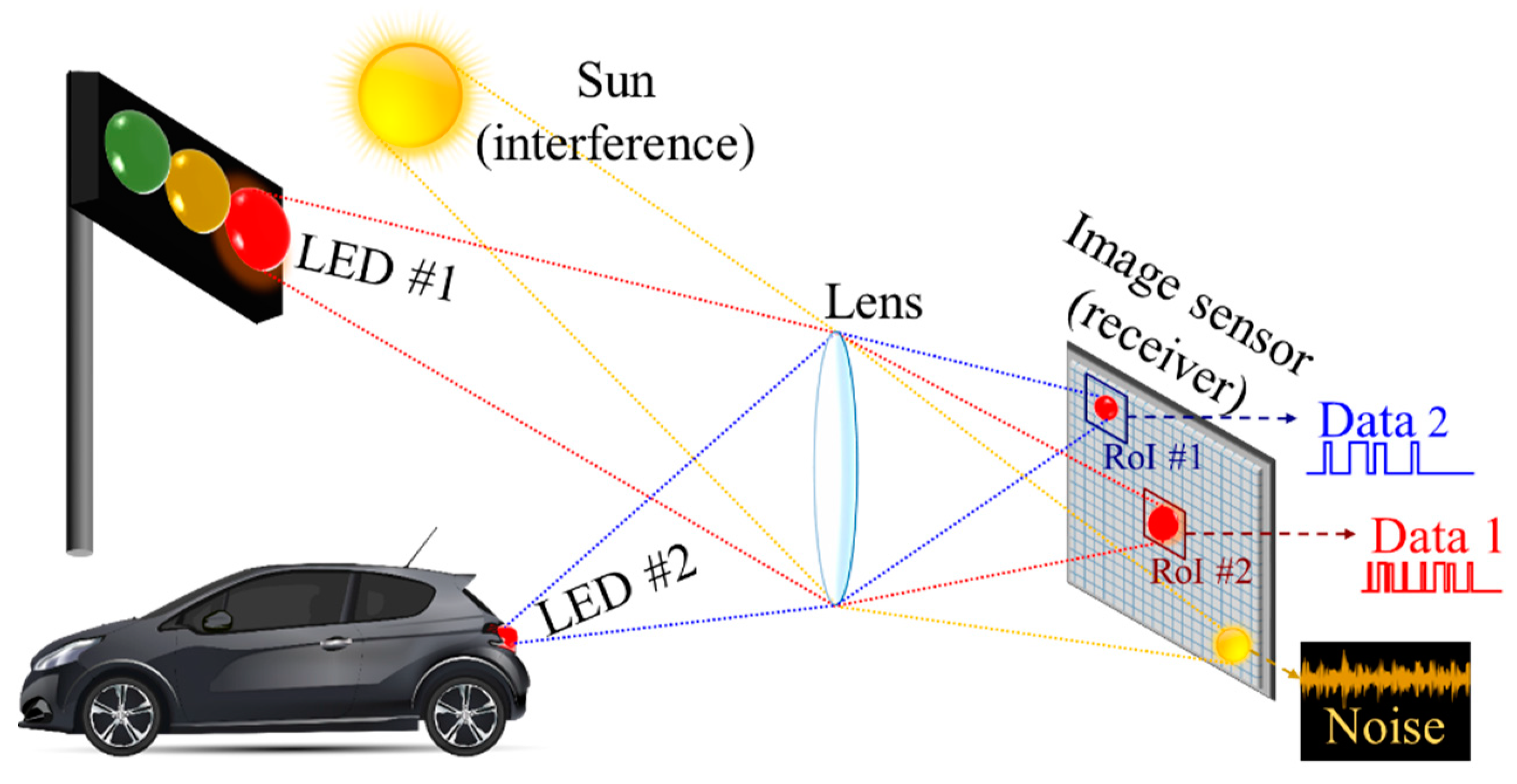

3.2.1. RoI Signaling

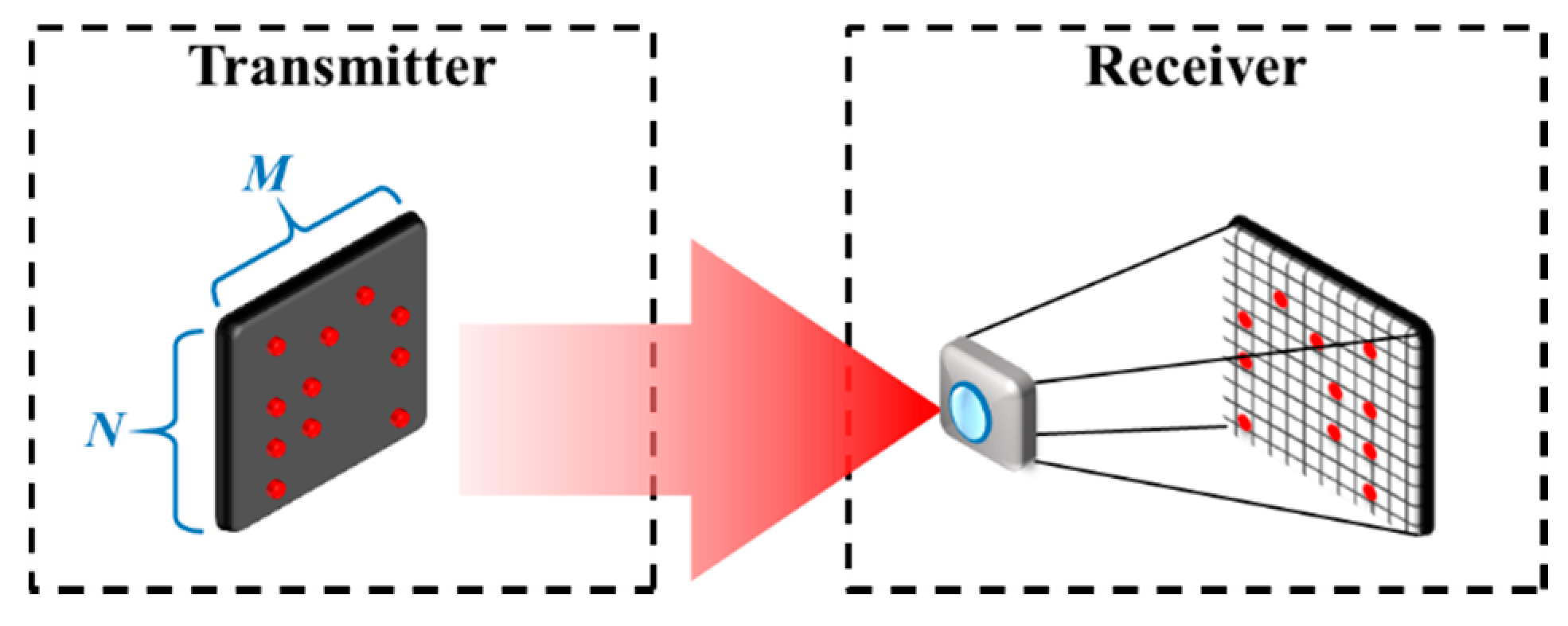

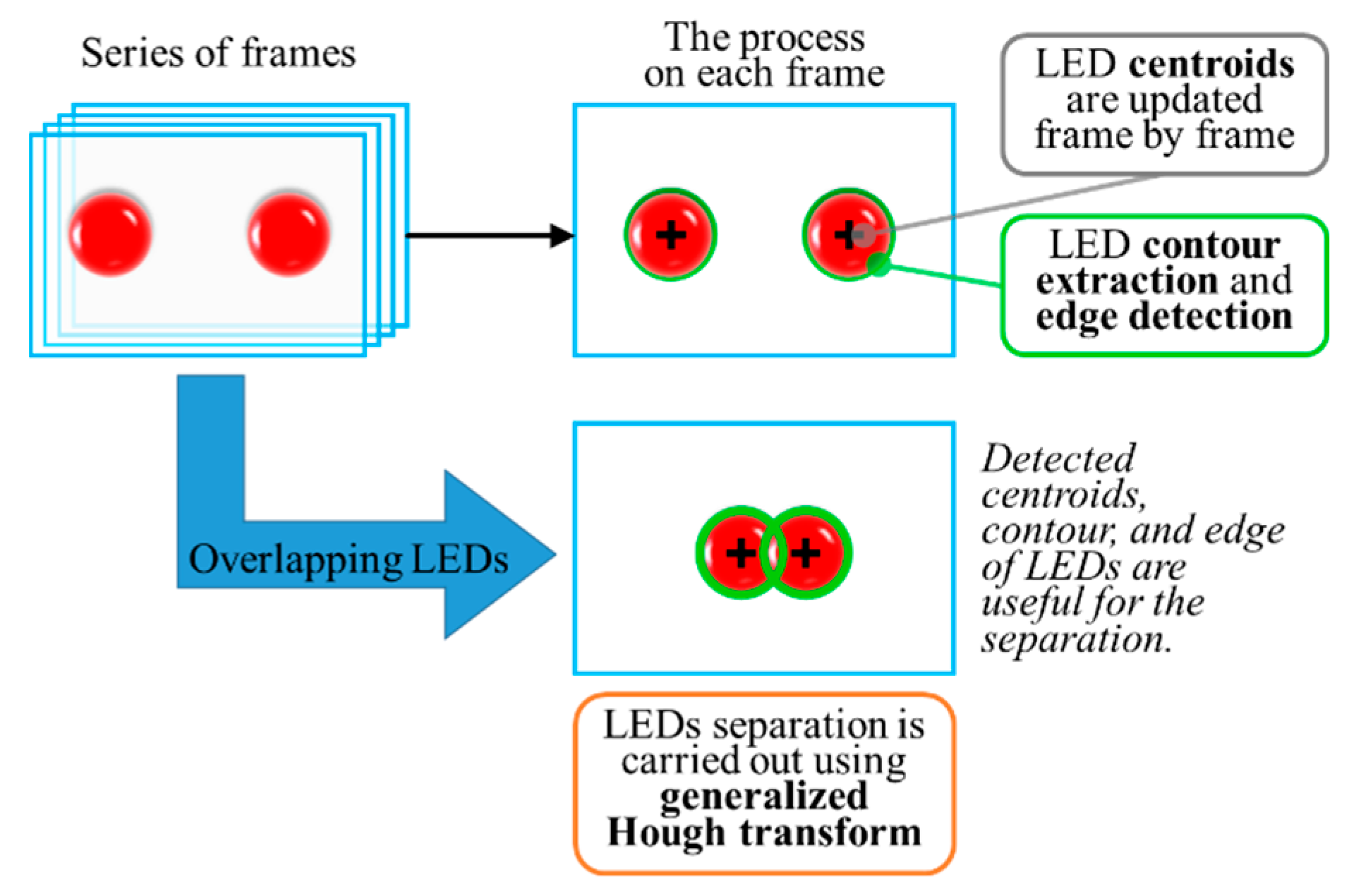

3.2.2. High Rate MIMO Data Stream

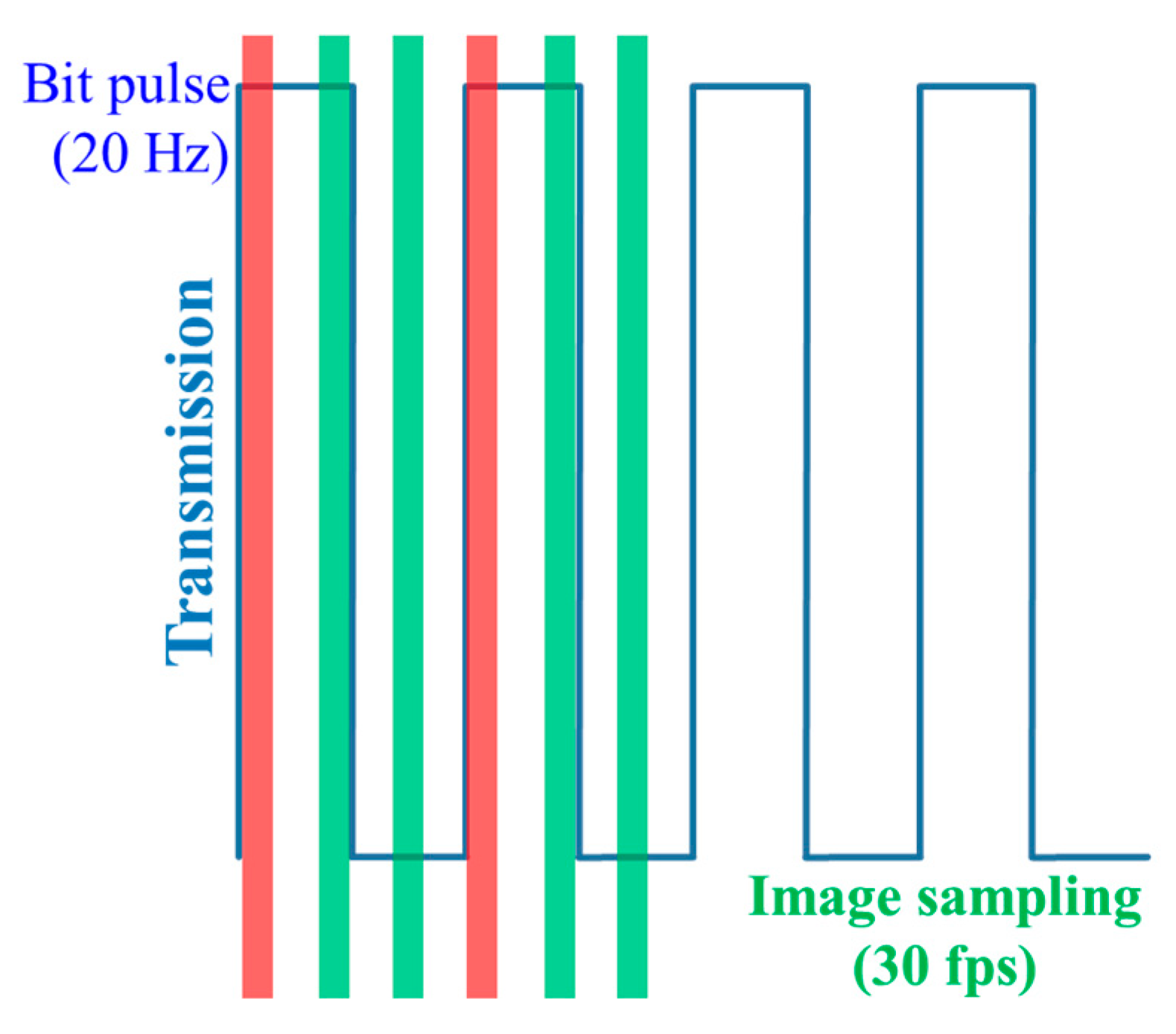

3.2.3. Compatible Encoding Scheme in the Time Domain

3.3. Hybrid Camera-PD Modulation

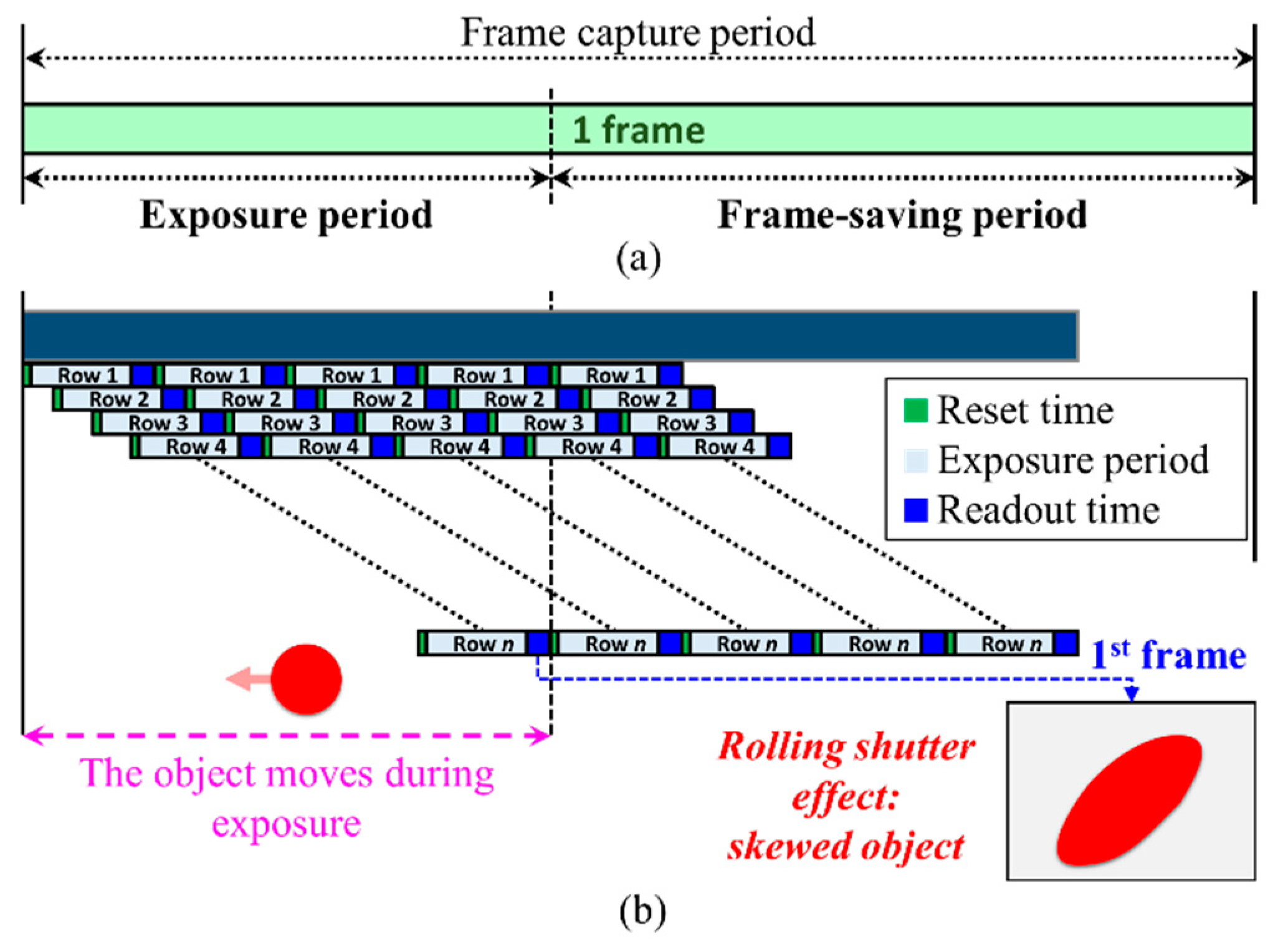

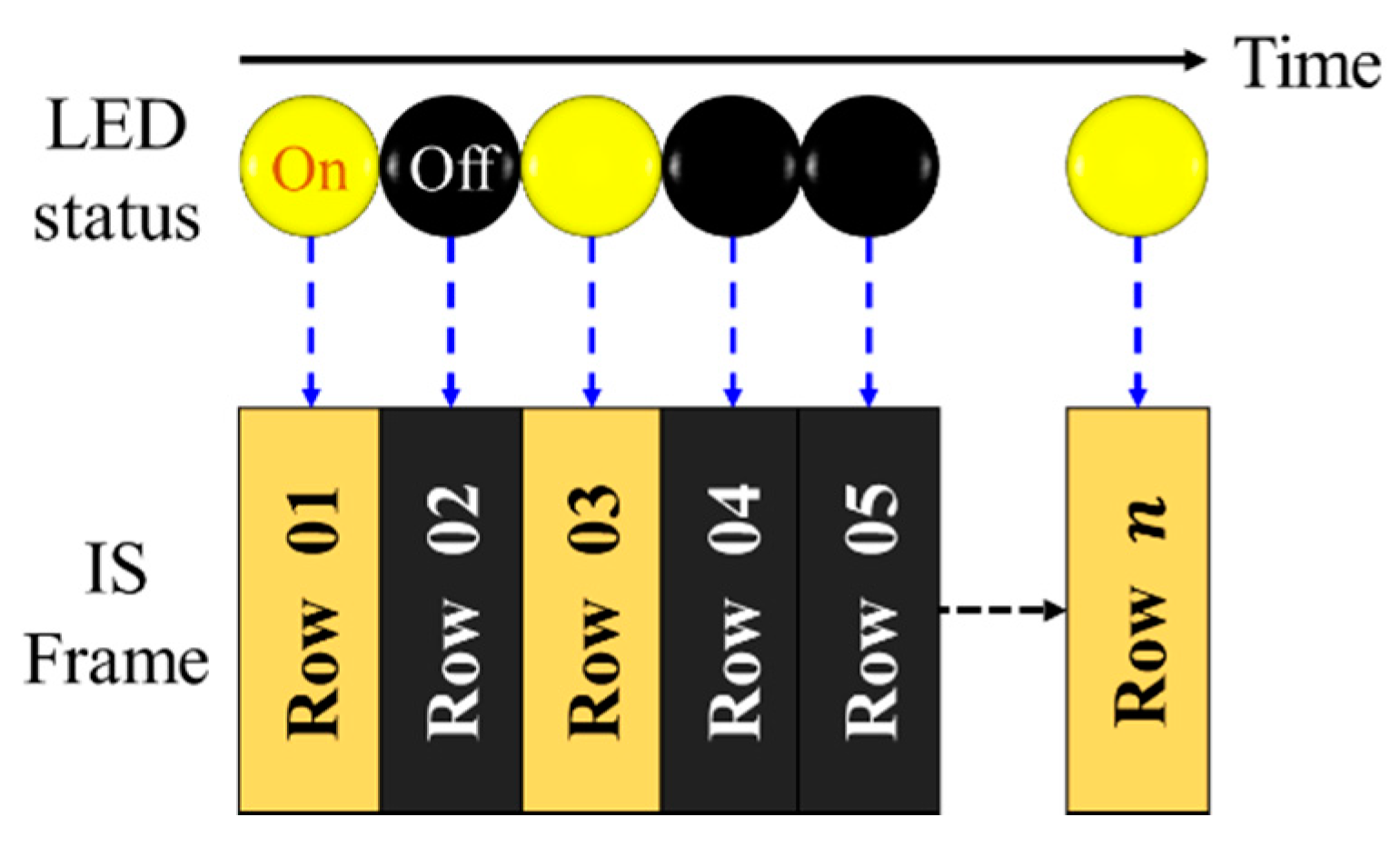

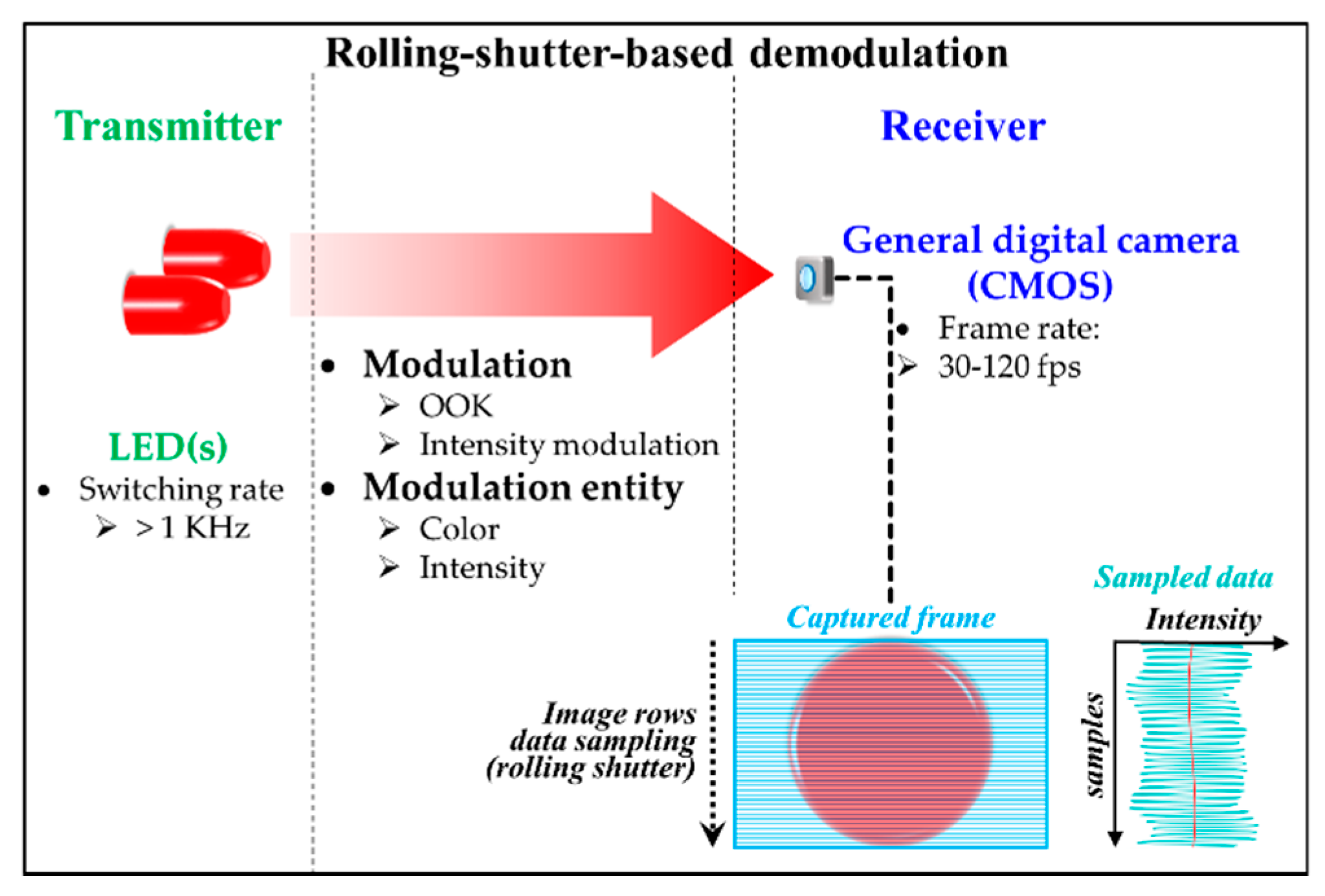

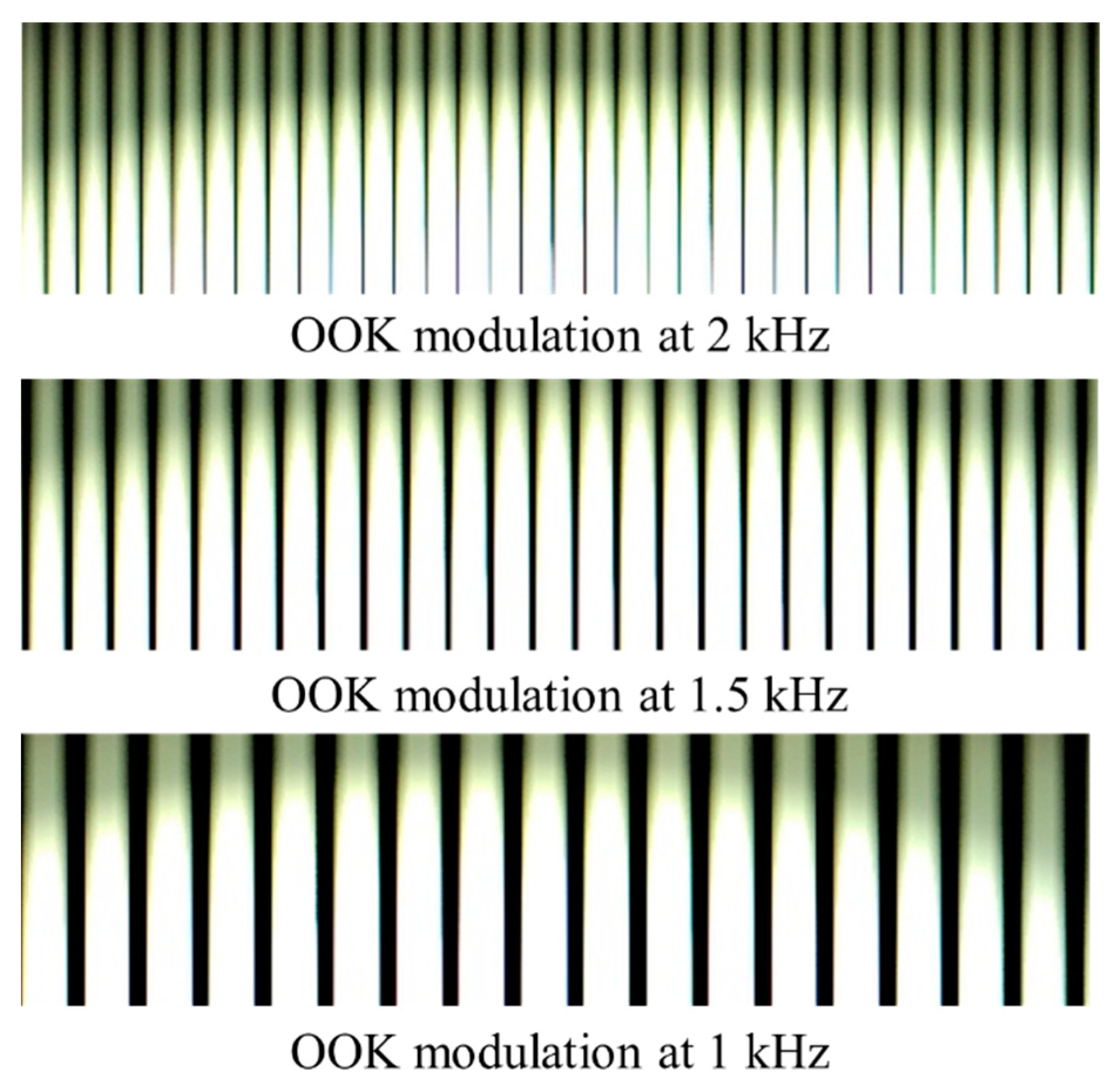

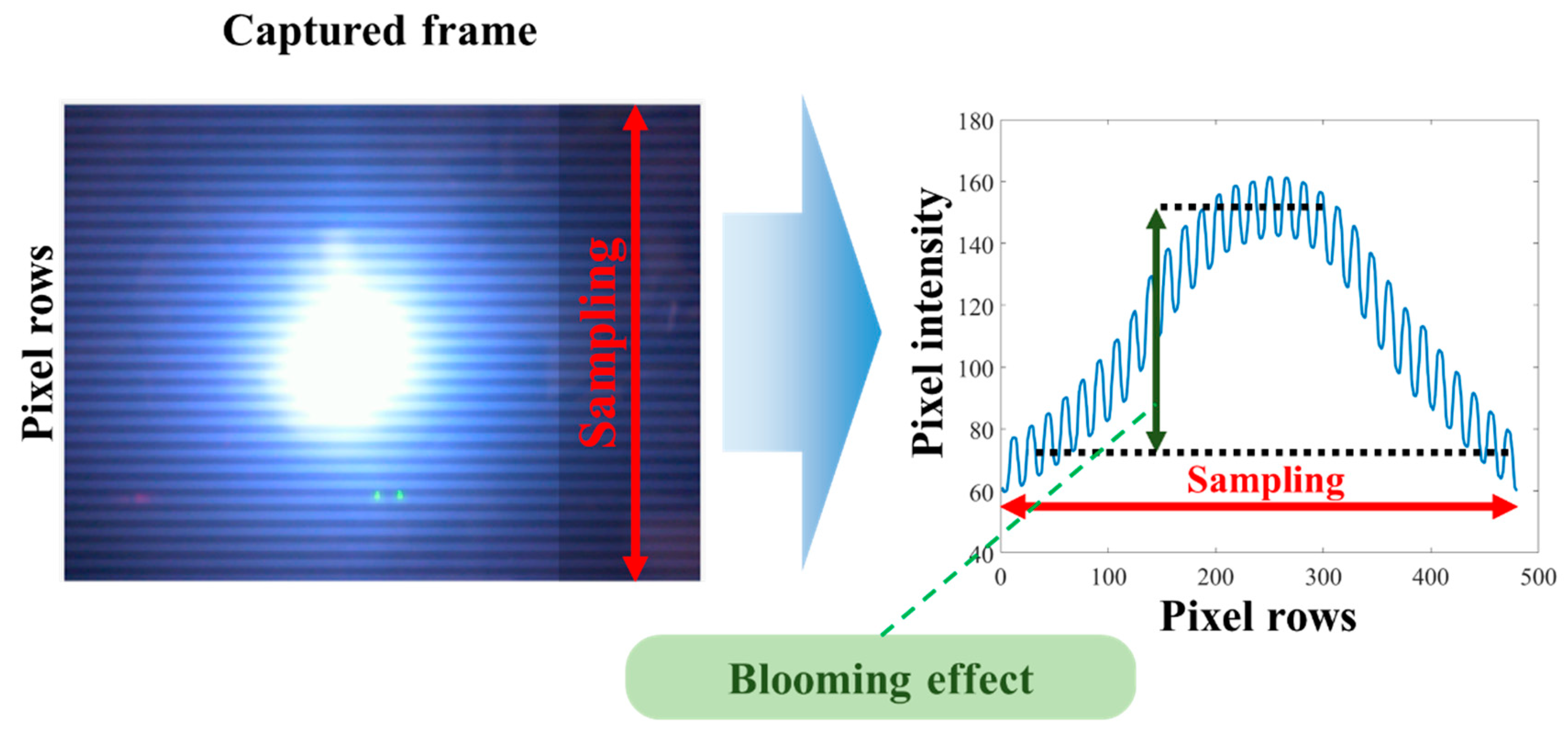

3.4. Rolling-Shutter-Based OCC

3.5. Performance Comparison of Modulation Schemes

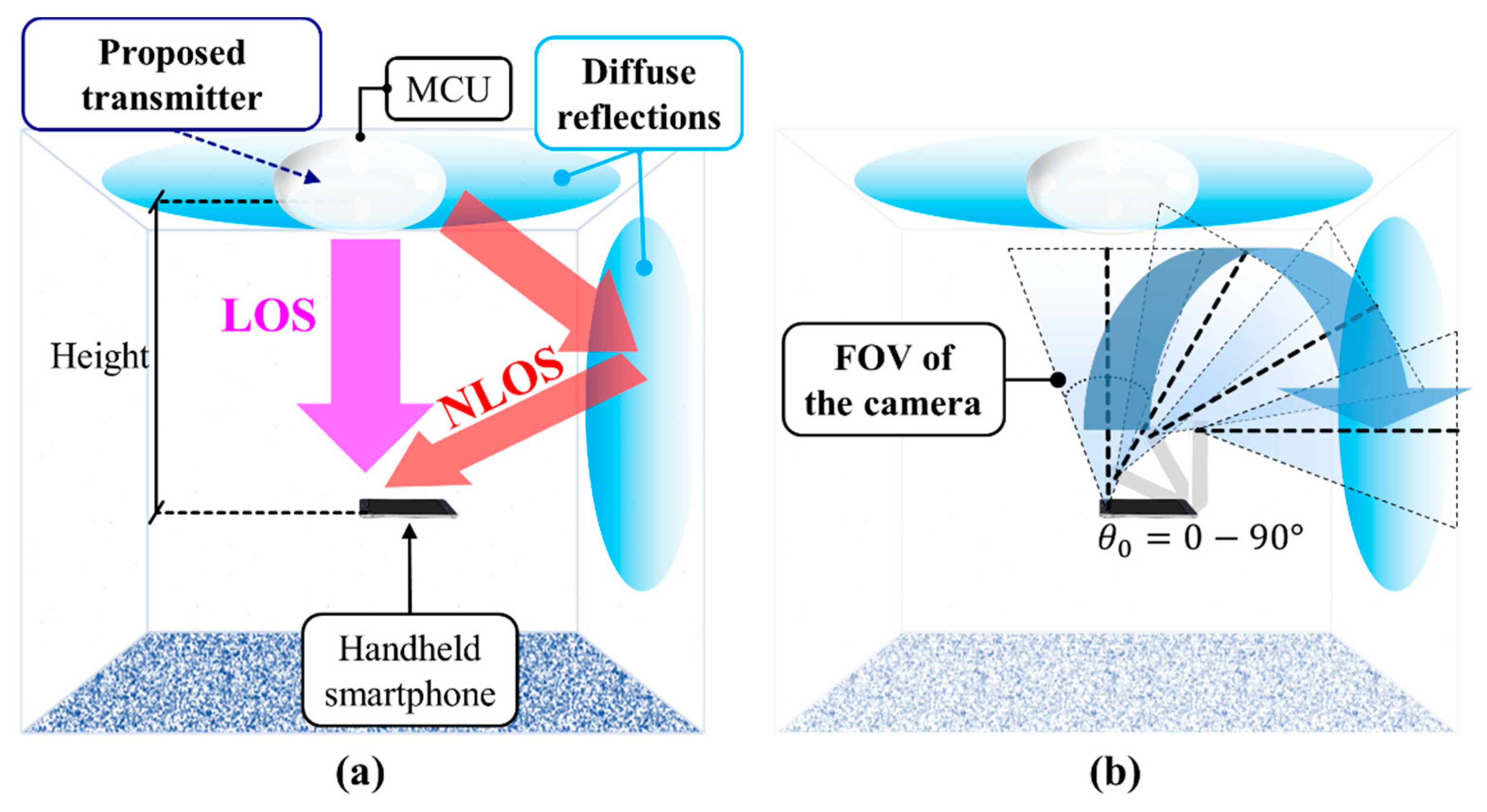

4. Mobility, Coverage, and Interference

4.1. Mobility and Coverage

4.2. Interference Mitigation

5. Applications

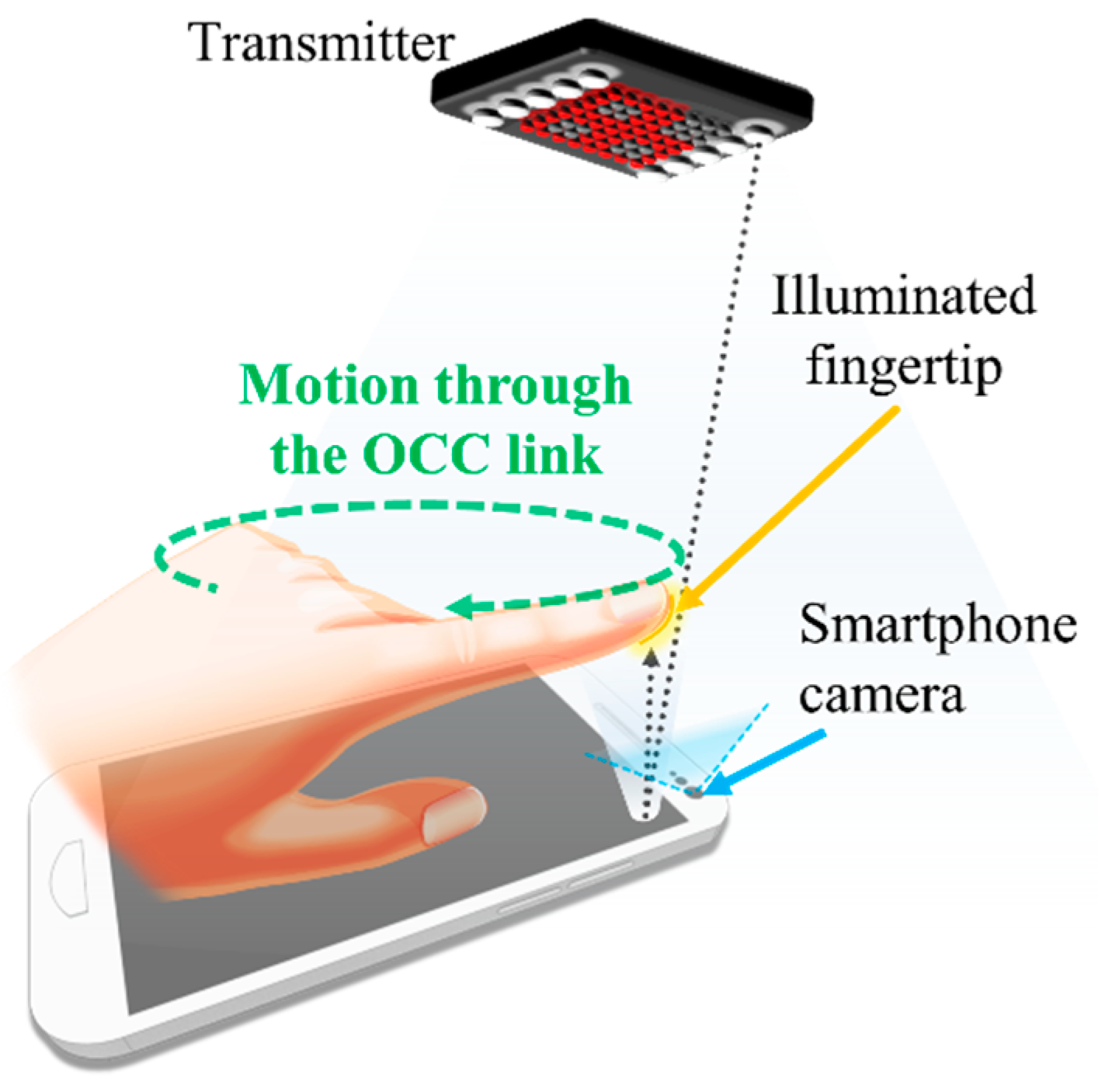

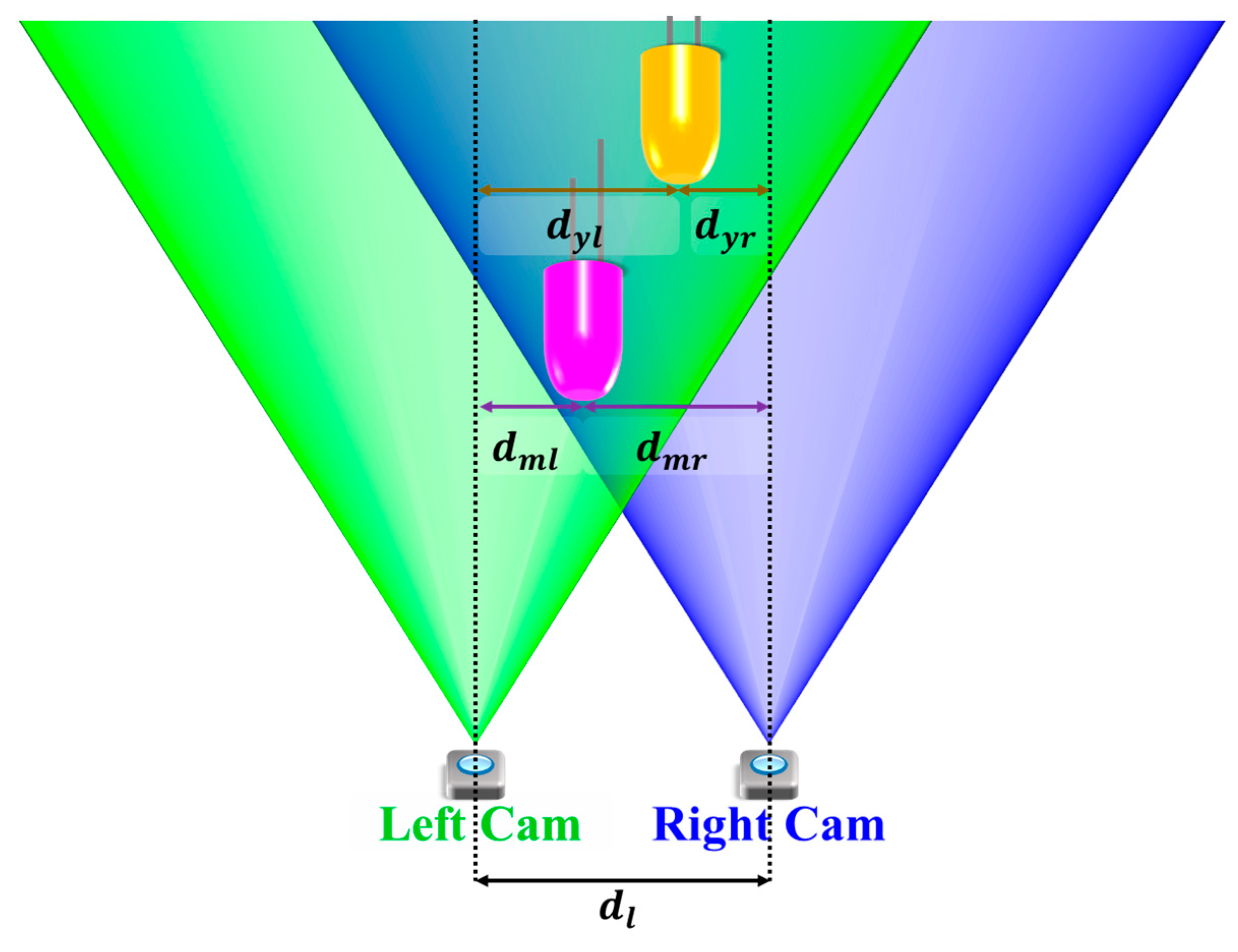

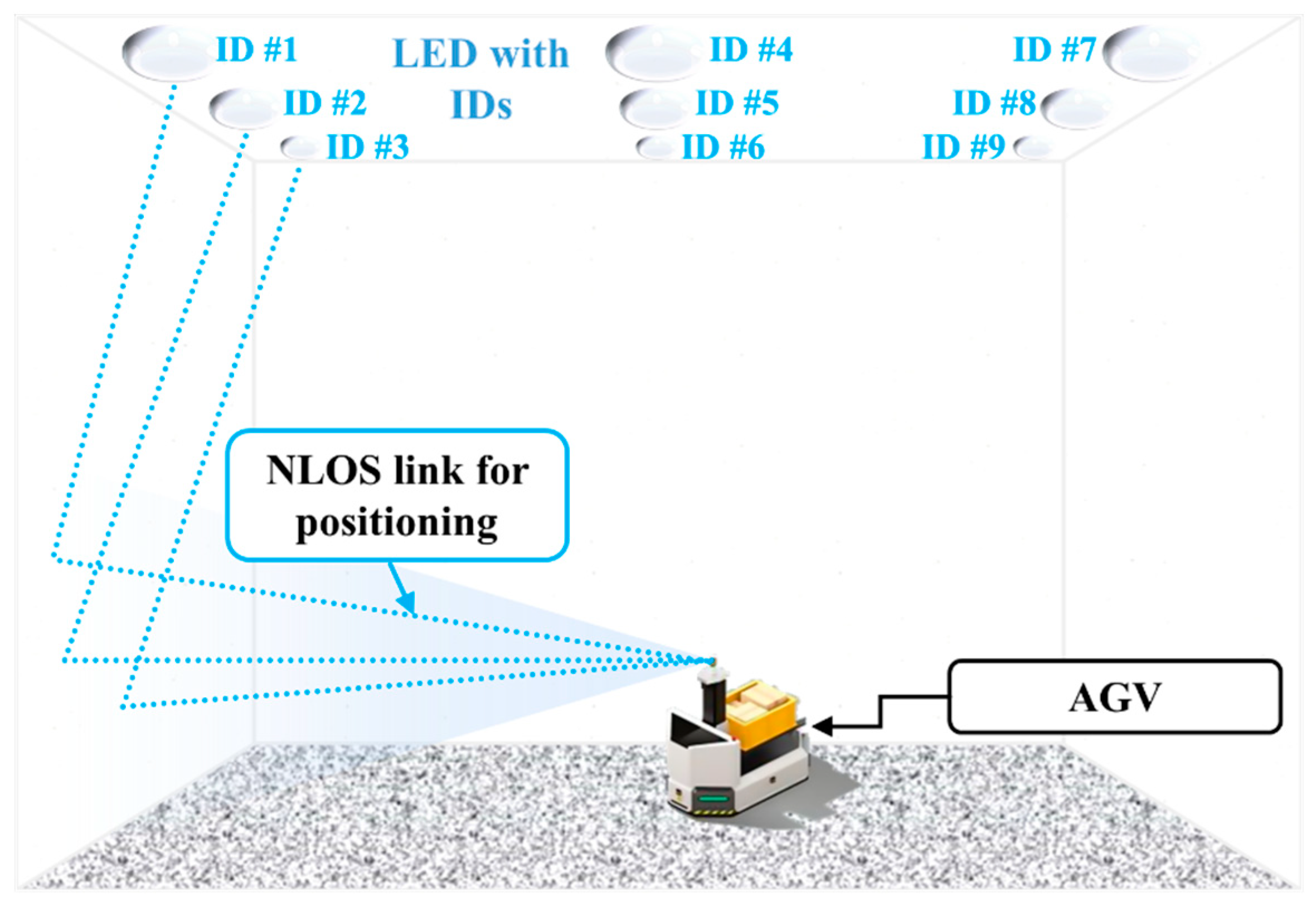

5.1. Indoor Schemes

5.2. Outdoor Schemes

6. Potential and Challenges

6.1. Potential Developments of Optical Camera Communication

6.1.1. Camera Hardware Advancements

6.1.2. Neural Network Assistance

6.1.3. Multiple Cameras Development

6.1.4. Advanced Modulation and Communication Medium

6.1.5. Automated Indoor Navigation

6.1.6. Beyond 5G

6.2. Challenges

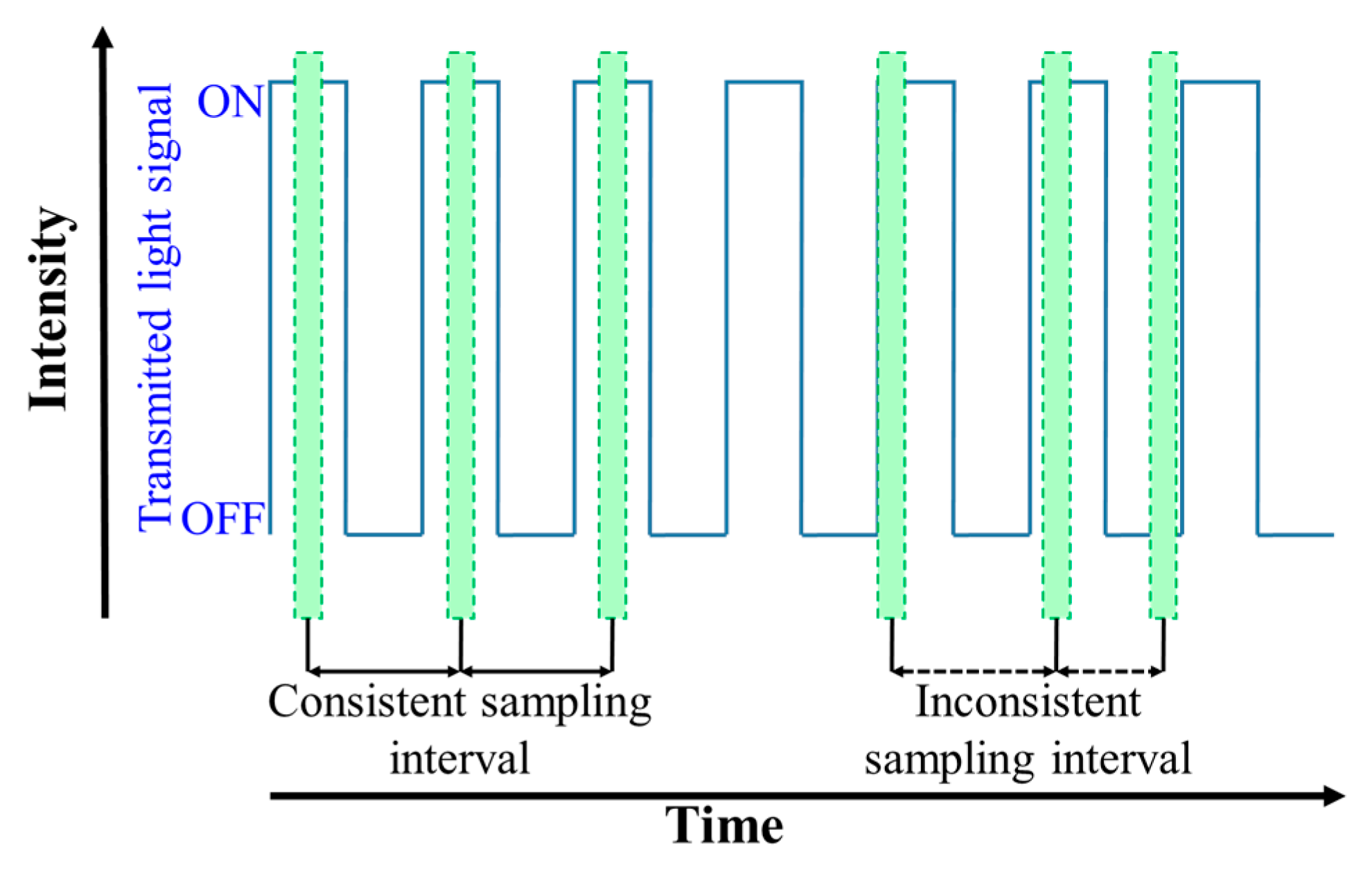

6.2.1. Synchronization

6.2.2. Data Rate Efficiency

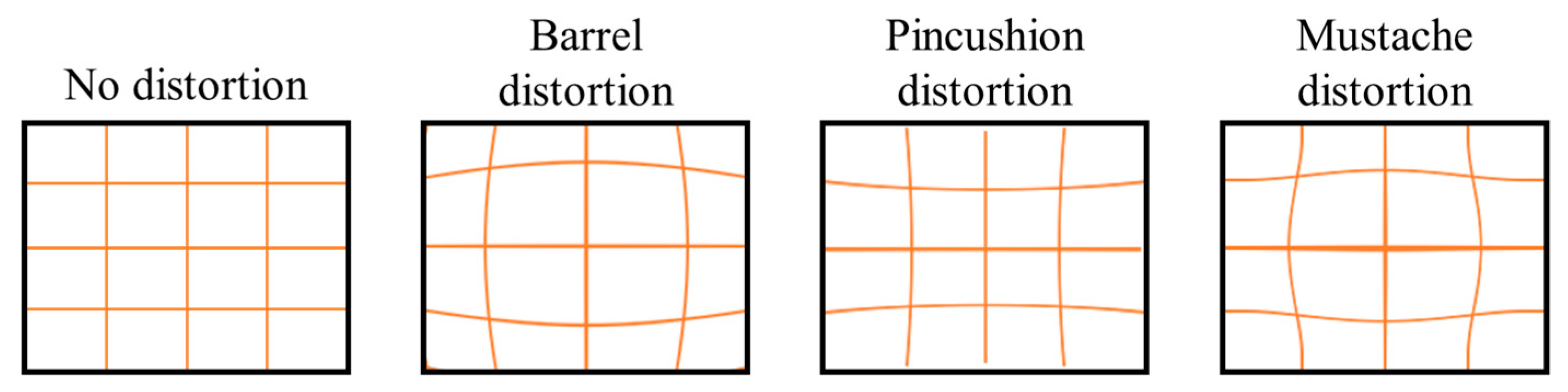

6.2.3. Camera Lens Distortion

6.2.4. Flicker-Free Modulation Formats

6.2.5. Higher Order Modulation Formats

7. Summary

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Acronym | Full Title |
|---|---|
| 2D | Two-dimensional |
| 5G | Fifth-generation wireless networks |
| A-QL | Asynchronous quick link |
| ACO-OFDM | Asymmetrically clipped optical OFDM |
| ADC | Analog-to-digital converter |
| Adv | Advanced |
| AGC | Automatic gain control |
| AI | Artificial intelligence |
| AR | Augmented reality |
| bps | Bits per second |
| C-OOK | Camera OOK |
| CFA | Color filter array |
| CM-FSK | Camera multiple frequency shift keying |
| CMOS | Complementary metal-oxide semiconductor |
| CoC | Circle of confusion |
| Comm | Communication |
| CSK | Color shift keying |
| DC-biased | Direct-current-biased |
| DCO-OFDM | Direct-current-biased optical OFDM |
| Dev | Development |
| DMT | Discrete multitone |
| ER | Extinction ratio |
| eU-OFDM | Enhanced unipolar OFDM |
| FHD | Full high definition |
| FOV | Field of view |
| fps | Frames per second |
| FR | Frame rate |
| FSO | Free space optics |
| Gbps | Gigabits per second |
| GPS | Global positioning system |
| HA-QL | Hidden asynchronous quick link |
| HDM | High density modulation |
| HS-PSK | Hybrid spatial phase shift keying |
| IAC | Illumination augmented OCC |
| IOCC | Infrared-based OCC |
| IoT | Internet of Things |
| IPS | Indoor positioning system |
| IPU | Image processing unit |
| IR | Infrared |
| IRC | Infrared communications |
| IS | Image sensor |
| ISC | Image sensor communications |
| Kbps | Kilobits per second |
| LCD | Liquid crystal display |
| LED | Light-emitting diode |
| LED-ID | LED identification |
| LOS | Line of sight |
| Mbps | Megabits per second |
| MIMO | Multiple-input multiple-output |
| MR | Mixed reality |
| MSE | Mean squared error |
| NIR | Near infrared |
| NLOS | Non-line of sight |
| NN | Neural network |
| NSM | Nyquist sampling modulation |
| OCC | Optical camera communications |
| OFDM | Orthogonal frequency division multiplexing |
| OLED | Organic light-emitting diode |
| OOK | On-off keying |
| OWC | Optical wireless communications |
| PD | Photodiode/photodetector |
| PHY | Physical layer |
| PPM | Pulse position modulation |
| PWM | Pulse width modulation |
| RF | Radio frequency |
| RFID | RF identification |
| RGB | Red, green, and blue |
| RoI | Region of interest |
| RoI-S | RoI signaling |
| RPO-OFDM | Reverse polarity optical OFDM |
| RS | Rolling shutter |
| RS-FSK | Rolling shutter frequency shift keying |
| RS-OCC | Rolling-shutter-based OCC |
| RX | Receiver |
| S2-PSK | Spatial 2-phase shift keying |
| SNR | Signal-to-noise ratio |
| sps | Samples per second |
| SVLC | Smartphone VLC |
| TX | Transmitter |
| UAV | Unmanned aerial vehicle |
| UFSOOK | Undersampled frequency shift OOK |
| UPSOOK | Undersampled phase shift OOK |
| UV | Ultraviolet |
| UVC | UV communications |
| UWB | Ultra-wideband |
| V2I | Vehicle-to-infrastructure communications |
| V2V | Vehicle-to-vehicle communications |
| VLC | Visible light communications |
| VPPM | Variable pulse position modulation |
| VTASC | Variable transparent amplitude shape code |
| Wi-Fi | Wireless fidelity |
| WRO | Wide receiver orientation |

References

- Paathak, P.H.; Feng, X.; Hu, P.; Mohapatra, P. Visible Light Communication, Networking, and Sensing: A Survey, Potential and Challenges. IEEE Commun. Surv. Tutor. 2015, 17, 2047–2077. [Google Scholar] [CrossRef]

- Ghassemlooy, Z.; Popoola, W.; Rajbhandari, S. Optical Wireless Communications; CRC Press: Boca Raton, FL, USA, 2013. [Google Scholar]

- Koonen, T. Indoor Optical Wireless Systems: Technology, Trends, and Applications. J. Lightwave Technol. 2018, 36, 1459–1467. [Google Scholar] [CrossRef]

- O’Brien, D. Optical wireless communications: Current status and future prospects. In Proceedings of the 2016 IEEE Photonics Society Summer Topical Meeting Series, Newport Beach, CA, USA, 11–13 July 2016. [Google Scholar]

- Nguyen, T.; Islam, A.; Hossan, M.T.; Jang, Y.M. Current Status and Performance Analysis of Optical Camera Communication Technologies for 5G Networks. IEEE Access 2017, 5, 4574–4594. [Google Scholar] [CrossRef]

- Arya, S.; Chung, Y.H. Non-line-of-sight Ultraviolet Communication with Receiver Diversity in Atmospheric Turbulence. IEEE Photonics Technol. Lett. 2018, 30, 895–898. [Google Scholar] [CrossRef]

- Ayyash, M.; Elgala, H.; Khreishah, A.; Little, T.; Shao, S.; Rahaim, M.; Schulz, D.; Hilt, J.; Freund, R. Coexistence of WiFi and LiFi toward 5G: Concepts, Opportunities, and Challenges. IEEE Commun. Mag. 2016, 54, 64–71. [Google Scholar] [CrossRef]

- Elgala, H.; Mesleh, R.; Haas, H. Indoor Optical Wireless Communication: Potential and State-of-the-art. IEEE Commun. Mag. 2011, 49, 56–62. [Google Scholar] [CrossRef]

- Ghassemlooy, Z.; Alves, L.N.; Zvanovec, S.; Khalighi, M.-A. Visible Light Communications: Theory and Applications; CRC Press: Boca Raton, FL, USA, 2017. [Google Scholar]

- Ghassemlooy, Z.; Popoola, W.O.; Tang, X.; Zvanovec, S.; Rajbhandar, S. Visible Light Communications—A Review. IEEE COMSOC MMTC Commun. Front. 2017, 12, 6–13. [Google Scholar]

- Saha, N.; Ifthekhar, M.; Shareef, N.; Le, T.; Jang, Y.M. Survey on optical camera communications: Challenges and opportunities. IET Optoelectron. 2015, 9, 172–183. [Google Scholar] [CrossRef]

- Le, N.T. Invisible watermarking optical camera communication and compatibility issues of IEEE 802.15.7r1 specification. Opt. Commun. 2017, 390, 144–155. [Google Scholar] [CrossRef]

- Haruta, T.; Nakajima, T.; Hashizume, J.; Umebayashi, T.; Takahashi, H.; Taniguchi, K.; Kuroda, M.; Sumihiro, H.; Enoki, K.; Yamasaki, T.; et al. 4.6 A 1/2.3inch 20Mpixel 3-layer stacked CMOS. In Proceedings of the IEEE International Solid-State Circuits Conference, San Fransisco, CA, USA, 5–9 February 2017. [Google Scholar]

- Nguyen, T.; Islam, A.; Yamazato, T.; Jang, Y.M. Technical Issues on IEEE 802.15.7m Image Sensor Communication Standardization. IEEE Commun. Mag. 2018, 56, 213–218. [Google Scholar] [CrossRef]

- IEEE. IEEE 802.15.7-2011—IEEE Standard for Local and Metropolitan Area Networks—Part 15.7: Short-Range Wireless Optical Communication Using Visible Light. IEEE Standards Association. 6 September 2011. Available online: https://standards.ieee.org/standard/802_15_7-2011.html (accessed on 23 October 2018).

- IEEE. IEEE 802.15 WPANTM 15.7 Maintenance: Short-Range Optical Wireless Communications Task Group (TG 7m). 23 October 2018. Available online: http://www.ieee802.org/15/pub/IEEE%20802_15%20WPAN%2015_7%20Revision1%20Task%20Group.htm (accessed on 23 October 2018).

- Le, N.T.; Hossain, M.A.; Jang, Y.M. A Survey of Design and Implementation for Optical Camera Communication. Signal Process. Image Commun. 2017, 53, 95–109. [Google Scholar] [CrossRef]

- Saeed, N.; Guo, S.; Park, K.-H.; Al-Naffouri, T.Y.; Alouini, M.-S. Optical camera communications: Survey, use cases, challenges, and future trends. Phys. Commun. 2019, 37, 100900. [Google Scholar] [CrossRef]

- Rappaport, T.S.; Xing, Y.; Kanhere, O.; Ju, S.; Madanayake, A.; Mandal, S.; Alkhateeb, A.; Trichopoulos, G.C. Wireless Communications and Applications Above 100 GHz: Opportunities and Challenges for 6G and Beyond. IEEE Access 2019, 7, 78729–78757. [Google Scholar] [CrossRef]

- Wikipedia. IEEE 802.15. Wikimedia Foundation, 19 September 2018. Available online: https://en.wikipedia.org/wiki/IEEE_802.15 (accessed on 20 October 2018).

- IEEE. IEEE 802.15 WPAN™ 15.7 Amendment—Optical Camera Communications Study Group (SG 7a). 23 October 2018. Available online: http://www.ieee802.org/15/pub/SG7a.html (accessed on 23 October 2018).

- Cahyadi, W.A.; Kim, Y.H.; Chung, Y.H.; Ahn, C.-J. Mobile Phone Camera-Based Indoor Visible Light Communications with Rotation Compensation. IEEE Photonics J. 2016, 8, 1–8. [Google Scholar] [CrossRef]

- Chow, C.W.; Chen, C.Y.; Chen, S.H. Visible light communication using mobile-phone camera with data rate higher than frame rate. Opt. Express 2015, 23, 26080–26085. [Google Scholar] [CrossRef] [PubMed]

- Danakis, C.; Afgani, M.; Povey, G.; Underwood, I.; Haas, H. Using a CMOS camera sensor for visible light communication. In Proceedings of the 2012 IEEE Globecom Workshops, Anaheim, CA, USA, 3–7 December 2012. [Google Scholar]

- Cha, J. IEEE 802 Optical Wireless Communication (IEEE 802 OWC Technology Trends and Use Cases). In Proceedings of the IEEE Blockchain Summit Korea, Seoul, Korea, 7–8 June 2018. [Google Scholar]

- Serafimovski, N.; Jungnickel, V.; Jang, Y.; Qiang, J. An Overview on High Speed Optical Wireless/Light Communication. 3 July 2017. Available online: https://mentor.ieee.org/802.11/dcn/17/11-17-0962-01-00lc-an-overview-on-high-speed-optical-wireless-light-communications.pdf (accessed on 14 March 2019).

- Jang, Y.M. IEEE 802.15 Documents: 15.7m Closing Report. 14 November 2018. Available online: https://mentor.ieee.org/802.15/dcn/18/15-18-0596-00-007a-15-7m-closing-report-nov-2018.pptx (accessed on 8 February 2019).

- IEEE. IEEE 802.15.7-2018—IEEE Draft Standard for Local and Metropolitan Area Networks—Part 15.7: Short-Range Optical Wireless Communications. 5 December 2018. Available online: https://standards.ieee.org/standard/802_15_7-2018.html (accessed on 12 February 2019).

- Gamal, A.E.; Eltoukhy, H. CMOS Image Sensors. IEEE Circuits Devices Mag. 2005, 21, 6–20. [Google Scholar] [CrossRef]

- Merritt, R. TSMC Goes Photon to Cloud; EE Times: San Jose, CA, USA, 2018. [Google Scholar]

- Celebi, M.E.; Lecca, M.; Smolka, B. Image Demosaicing. In Color Image and Video Enhancement; Springer International Publishing: Cham, Switzerland, 2015; pp. 13–54. [Google Scholar]

- Tanaka, M. Give Me Four: Residual Interpolation for Image Demosaicing and Upsampling; Tokyo Institute of Technology: Tokyo, Japan, 2018; Available online: http://www.ok.sc.e.titech.ac.jp/~mtanaka/research.html (accessed on 8 November 2018).

- Meroli, S. Stefano Meroli Lecture: CMOS vs CCD Pixel Sensor. 17 August 2005. Available online: Meroli.web.cern.ch/lecture_cmos_vs_ccd_pixel_sensor.html (accessed on 22 October 2018).

- Chen, Z.; Wang, X.; Pacheco, S.; Liang, R. Impact of CCD camera SNR on polarimetric accuracy. Appl. Opt. 2014, 53, 7649–7656. [Google Scholar] [CrossRef]

- Goto, Y.; Takai, I.; Yamazato, T.; Okada, H.; Fujii, T. A New Automotive VLC System Using Optical Communication Image Sensor. IEEE Photonics J. 2016, 8, 1–17. [Google Scholar] [CrossRef]

- Teli, S.; Chung, Y.H. Selective Capture based High-speed Optical Vehicular Signaling System. Signal Process. Image Commun. 2018, 68, 241–248. [Google Scholar] [CrossRef]

- Cahyadi, W.A.; Chung, Y.H. Smartphone Camera based Device-to-device Communication Using Neural Network Assisted High-density Modulation. Opt. Eng. 2018, 57, 096102. [Google Scholar] [CrossRef]

- Boubezari, R.; Minh, H.; Ghassemlooy, Z.; Bouridane, A. Smartphone Camera Based Visible Light Communication. J. Lightwave Technol. 2016, 34, 4121–4127. [Google Scholar] [CrossRef]

- QImaging. Rolling Shutter vs. Global Shutter: CCD and CMOS Sensor Architecture and Readout Modes. 2014. Available online: https://www.selectscience.net/application-notes/Rolling-Shutter-vs-Global-Shutter-CCD-and-CMOS-Sensor-Architecture-and-Readout-Modes/?artID=33351 (accessed on 21 March 2018).

- Do, T.-H.; Yoo, M. Performance Analysis of Visible Light Communication Using CMOS Sensors. Sensors 2016, 16, 309. [Google Scholar] [CrossRef] [PubMed]

- Shlomi Arnon, B.G. Visible Light Communication; Cambridge University Press: Cambridge, UK, 2015. [Google Scholar]

- National Instruments, Calculating Camera Sensor Resolution and Lens Focal Length. 15 February 2018. Available online: http://www.ni.com/product-documentation/54616/en/ (accessed on 6 November 2018).

- Cahyadi, W.A.; Kim, Y.H.; Chung, Y.H. Dual Camera based Split Shutter for High-rate and Long-distance Optical Camera Communications. Opt. Eng. 2016, 55, 110504. [Google Scholar] [CrossRef]

- Hassan, N.; Ghassemlooy, Z.; Zvanovec, S.; Luo, P.; Le-Minh, H. Non-line-of-sight 2 × N indoor optical camera communications. Appl. Opt. 2018, 57, 144–149. [Google Scholar] [CrossRef] [PubMed]

- Chau, J.C.; Little, T.D. Analysis of CMOS active pixel sensors as linear shift-invariant receivers. In Proceedings of the 2015 IEEE International Conference on Communication Workshop, London, UK, 8–12 June 2015. [Google Scholar]

- Hassan, N.B. Impact of Camera Lens Aperture and the Light Source Size on Optical Camera Communications. In Proceedings of the 11th International Symposium on Communication Systems, Networks & Digital Signal Processing (CSNDSP), Budapest, Hungary, 12–18 July 2018. [Google Scholar]

- Hossain, M.A.; Hong, C.H.; Ifthekhar, M.S.; Jang, Y.M. Mathematical modeling for calibrating illuminated image in image sensor communication. In Proceedings of the 2015 International Conference on Information and Communication Technology Convergence (ICTC), Jeju, Korea, 28–30 October 2015. [Google Scholar]

- Huynh-Thu, Q.; Ghanbari, M. Scope of validity of PSNR in image/video quality assessment. Electron. Lett. 2008, 44, 800–801. [Google Scholar] [CrossRef]

- Iizuka, N. CASIO Response to 15.7r1 CFA. March 2015. Available online: https://mentor.ieee.org/802.15/dcn/15/15-15-0173-01-007a-casioresponse-to-15-7r1-cfa.pdf (accessed on 24 January 2019).

- Perli, S.D.; Ahmed, N.; Katabi, D. PixNet: Interference-free wireless links using LCD-camera pairs. In Proceedings of the MobiCom ACM, New York, NY, USA, 20–24 September 2010. [Google Scholar]

- Hao, T.; Zhou, R.; Xing, G. COBRA: Color barcode streaming for smartphone system. In Proceedings of the MobiSys ACM, Ambleside, UK, 25–29 June 2012. [Google Scholar]

- Hu, W.; Gu, H.; Pu, Q. LightSync: Unsynchronized visual communication over screen-camera links. In Proceedings of the 19th Annual International Conference on Mobile Computing & Networking, New York, NY, USA, 30 September–4 October 2013. [Google Scholar]

- Nguyen, T.; Jang, Y.M. Novel 2D-sequential color code system employing Image Sensor Communications for Optical Wireless Communications. ICT Express 2016, 2, 57–62. [Google Scholar] [CrossRef]

- Hossain, M.A.; Lee, Y.T.; Lee, H.; Lyu, W.; Hong, C.H.; Nguyen, T.; Le, N.T.; Jang, Y.M. A Symbiotic Digital Signage system based on display to display communication. In Proceedings of the 2015 Seventh International Conference on Ubiquitous and Future Networks, Sapporo, Japan, 7–10 July 2015. [Google Scholar]

- Huang, W.; Tian, P.; Xu, Z. Design and implementation of a real-time CIMMIMO optical camera communication system. Opt. Express 2016, 24, 24567–24579. [Google Scholar] [CrossRef]

- Chen, S.H.; Chow, C. Color-filter-free WDM MIMO RGB-LED visible light communication system using mobile-phone camera. In Proceedings of the 2014 13th International Conference on Optical Communications and Networks (ICOCN), Suzhou, China, 9–10 November 2014. [Google Scholar]

- Chen, S.; Chow, C.W. Color-Shift Keying and Code-Division Multiple-Access Transmission for RGB-LED Visible Light Communications Using Mobile Phone Camera. IEEE Photonics J. 2014, 6, 1–6. [Google Scholar] [CrossRef]

- Basu, J.K.; Bhattacharyya, D.; Kim, T. Use of Artifical Neural Network in Pattern Recognition. Int. J. Softw. Eng. Appl. 2010, 4, 23–34. [Google Scholar]

- Yu, P.; Anastassopoulos, V.; Venetsanopoulos, A.N. Pattern classification and recognition based on morphology and neural networks. Can. J. Electr. Comput. Eng. 1992, 17, 58–64. [Google Scholar] [CrossRef]

- Yamazato, T.; Takai, I.; Okada, H.; Fujii, T.; Yendo, T.; Arai, S. Image-Sensor-Based Visible Light Communication for Automotive Applications. IEEE Commun. Mag. 2014, 52, 88–97. [Google Scholar] [CrossRef]

- Photron. Photron Fastcam SA-Z. 2018. Available online: https://photron.com/fastcam-sa-z/ (accessed on 8 November 2018).

- Davis, J. Humans perceive flicker artifacts at 500 Hz. Sci. Rep. 2015, 5, 1–4. [Google Scholar] [CrossRef] [PubMed]

- Ebihara, K.; Kamakura, K.; Yamazato, T. Layered transmission of space-time coded signals for image-sensor-based visible light communications. J. Lightwave Technol. 2015, 33, 4193–4206. [Google Scholar] [CrossRef]

- Nguyen, T.; Islam, A.; Jang, Y.M. Region-of-interest signaling vehicular system using optical camera communications. IEEE Photonics J. 2017, 9, 1–20. [Google Scholar] [CrossRef]

- Yuan, W.; Dana, K.; Varga, M.; Ashok, A.; Gruteser, M.; Mandayam, N. Computer vision methods for visual MIMO optical system. In Proceedings of the 2011 Computer Vision and Pattern Recognition Workshops (CVPRW), Colorado Springs, CO, USA, 20–25 June 2011. [Google Scholar]

- Roberts, R.D. A MIMO protocol for camera communications (CamCom) using undersampled frequency shift ON-OFF keying (UFSOOK). In Proceedings of the IEEE Globecom Workshops, Atlanta, GA, USA, 9–13 December 2013. [Google Scholar]

- Roberts, R.D. Intel Proposal in IEEE 802.15.7r1. 28 March 2017. Available online: https://mentor.ieee.org/802.15/dcn/16/15-16-0006-01-007a-intel-occ-proposal.pdf (accessed on 28 January 2019).

- Luo, P.; Zhang, M.; Ghassemlooy, Z.; Zvanovec, S.; Feng, S.; Zhang, P. Undersampled-Based Modulation Schemes for Optical Camera Communications. IEEE Commun. Mag. 2018, 56, 204–212. [Google Scholar] [CrossRef]

- Luo, P.; Zhang, M.; Ghassemlooy, Z. Experimental Demonstration of RGB LED-Based Optical Camera Communications. IEEE Photonics J. 2015, 7, 1–12. [Google Scholar] [CrossRef]

- Jang, Y.M.; Nguyen, T. Kookmin University PHY Sub-Proposal for ISC Using Dimmable Spatial M-PSK (DSM-PSK). January 2016. Available online: https://mentor.ieee.org/802.15/dcn/16/15-16-0015-02-007a-kookmin-university-phy-sub-proposal-for-isc-using-dimmable-spatial-m-psk-dsm-psk.pptx (accessed on 29 January 2019).

- Nguyen, T.; Hossain, M.A.; Jang, Y.M. Design and Implementation of a Novel Compatible Encoding Scheme in the Time Domain for Image Sensor Communication. Sensors 2016, 16, 736. [Google Scholar] [CrossRef]

- Takai, I.; Ito, S.; Yasutomi, K.; Kagawa, K.; Andoh, M.; Kawahito, S. LED and CMOS image sensor based optical wireless communication system for automotive applications. IEEE Photonics J. 2013, 5, 6801418. [Google Scholar] [CrossRef]

- Itoh, S.; Takai, I.; Sarker, M.Z.; Hamai, M.; Yasutomi, K.; Andoh, M.; Kawahito, S. BA CMOS image sensor for 10 Mb/s 70 m-range LED-based spatial optical communication. In Proceedings of the IEEE International Solid-State Circuits Conference, San Fransisco, CA, USA, 7–11 February 2010. [Google Scholar]

- Liu, Y. Decoding mobile-phone image sensor rolling shutter effect for visible light communications. Opt. Eng. 2016, 55, 016103. [Google Scholar] [CrossRef]

- Chow, C.; Chen, C.; Chen, S. Enhancement of signal performance in LED visible light communications using mobile phone camera. IEEE Photonics J. 2015, 7, 7903607. [Google Scholar] [CrossRef]

- Wang, W.C.; Chow, C.W.; Wei, L.; Liu, Y.; Yeh, C.H. Long distance non-line-of-sight (NLOS) visible light signal detection based on rolling-shutter-patterning of mobile-phone camera. Opt. Express 2017, 25, 10103–10108. [Google Scholar] [CrossRef] [PubMed]

- Chen, C.; Chow, C.; Liu, Y.; Yeh, C. Efficient demodulation scheme for rollingshutter-patterning of CMOS image sensor based visible light communications. Opt. Express 2017, 25, 24362–24367. [Google Scholar] [CrossRef] [PubMed]

- Liang, K.; Chow, C.; Liu, Y. Mobile-phone based visible light communication using region-grow light source tracking for unstable light source. Opt. Express 2016, 24, 17505–17510. [Google Scholar] [CrossRef] [PubMed]

- Liu, Y.; Chow, C.; Liang, K.; Chen, H.; Shu, C.; Chen, C.; Chen, S. Comparison of thresholding schemes for visible light communication using mobile-phone image sensor. Opt. Express 2016, 24, 1973–1978. [Google Scholar] [CrossRef] [PubMed]

- Gonzales, R.; Woods, R. Digital Image Processing; Pearson: Tennessee, TN, USA, 2002. [Google Scholar]

- Wellner, P. Adaptive thresholding for the digital desk. Xerox 1993, EPC-1993-110, 1–19. [Google Scholar]

- Wang, W.; Chow, C.; Chen, C.; Hsieh, H.; Chen, Y. Beacon Jointed Packet Reconstruction Scheme for Mobile-Phone Based Visible Light Communications Using Rolling Shutter. IEEE Photonics J. 2017, 9, 7907606. [Google Scholar] [CrossRef]

- Chow, C.; Shiu, R.; Liu, Y.; Yeh, C. Non-flickering 100 m RGB visible light communication transmission based on a CMOS image sensor. Opt. Express 2018, 26, 7079–7084. [Google Scholar] [CrossRef]

- Yang, Y.; Hao, J.; Luo, J. CeilingTalk: Lightweight indoor broadcast through LED-camera communication. IEEE Trans. Mob. Comput. 2017, 16, 3308–3319. [Google Scholar] [CrossRef]

- Nguyen, T.; Le, N.; Jang, Y. Asynchronous Scheme for Optical Camera Communication-Based Infrastructure-to-Vehicle Communication. Int. J. Distrib. Sens. Netw. 2015, 11, 908139. [Google Scholar] [CrossRef]

- Cahyadi, W.A.; Chung, Y.H. Wide Receiver Orientation Using Diffuse Reflection in Camera-based Indoor Visible Light Communication. Opt. Commun. 2018, 431, 19–28. [Google Scholar] [CrossRef]

- Do, T.-H.; Yoo, M. A Multi-Feature LED Bit Detection Algorithm in Vehicular Optical Camera Communication. IEEE Access 2019, 7, 95797. [Google Scholar] [CrossRef]

- Chow, C.-W.; Li, Z.-Q.; Chuang, Y.-C.; Liao, X.-L.; Lin, K.-H.; Chen, Y.-Y. Decoding CMOS Rolling Shutter Pattern in Translational or Rotational Motions for VLC. IEEE Photonics J. 2019, 11, 7902305. [Google Scholar] [CrossRef]

- Shi, J.; He, J.; Jiang, Z.; Zhou, Y.; Xiao, Y. Enabling user mobility for optical camera communication using mobile phone. Opt. Express 2018, 26, 21762–21767. [Google Scholar] [CrossRef] [PubMed]

- Han, B.; Hranilovic, S. A Fixed-Scale Pixelated MIMO Visible Light Communication. IEEE J. Sel. Areas Commun. 2018, 36, 203–211. [Google Scholar] [CrossRef]

- Cahyadi, W.A.; Chung, Y.H. Experimental Demonstration of Indoor Uplink Near-infrared LED Camera Communication. Opt. Express 2018, 26, 19657–19664. [Google Scholar] [CrossRef]

- Huynh, T.-H.; Pham, T.-A.; Yoo, M. Detection Algorithm for Overlapping LEDs in Vehicular Visible Light Communication System. IEEE Access 2019, 7, 109945. [Google Scholar] [CrossRef]

- Chow, C.; Shiu, R.; Liu, Y.; Liao, X.; Lin, K. Using advertisement light-panel and CMOS image sensor with frequency-shift-keying for visible light communication. Opt. Express 2018, 26, 12530–12535. [Google Scholar] [CrossRef]

- Kuraki, K.; Kato, K.; Tanaka, R. ID-Embedded LED Lighting Technology for Providing Object-Related Information. January 2016. Available online: http://www.fujitsu.com/global/documents/about/resources/publications/fstj/archives/vol52-1/paper12.pdf (accessed on 6 February 2019).

- Ifthekhar, M.; Saha, N.; Jang, Y.M. Neural network based indoor positioning technique in optical camera communication system. In Proceedings of the 2014 International Conference on Indoor Positioning and Indoor Navigation (IPIN), Busan, Korea, 27–30 October 2014. [Google Scholar]

- Teli, S.; Cahyadi, W.; Chung, Y. Optical Camera Communication: Motion Over Camera. IEEE Commun. Mag. 2017, 55, 156–162. [Google Scholar] [CrossRef]

- Teli, S.; Cahyadi, W.A.; Chung, Y.H. Trained neurons-based motion detection in optical camera communications. Opt. Eng. 2018, 57, 040501. [Google Scholar] [CrossRef]

- Liu, Y.; Chen, H.; Liang, K.; Hsu, C.; Chow, C.; Yeh, C. Visible Light Communication Using Receivers of Camera Image Sensor and Solar Cell. IEEE Photonics J. 2016, 8, 1–7. [Google Scholar] [CrossRef]

- Rida, M.E.; Liu, F.; Jadi, Y. Indoor Location Position Based on Bluetooth Signal Strength. In Proceedings of the International Conference on Information Science and Control Engineering IEEE, Shanghai, China, 24–26 April 2015. [Google Scholar]

- Yang, C.; Shao, H. WiFi-based indoor positioning. IEEE Commun. Mag. 2015, 53, 150–157. [Google Scholar] [CrossRef]

- Huang, C. Real-Time RFID Indoor Positioning System Based on Kalman-Filter Drift Removal and Heron-Bilateration Location Estimation. IEEE Trans. Instrum. Meas. 2015, 64, 728–739. [Google Scholar] [CrossRef]

- Li, Y.; Ghassemlooy, Z.; Tang, X.; Lin, B.; Zhang, Y. A VLC Smartphone Camera Based Indoor Positioning System. IEEE Photonics Technol. Lett. 2018, 30, 1171. [Google Scholar] [CrossRef]

- Zhang, R.; Zhong, W.; Qian, K.; Wu, D. Image Sensor Based Visible Light Positioning System with Improved Positioning Algorithm. IEEE Access 2017, 5, 6087–6094. [Google Scholar] [CrossRef]

- Gomez, A.; Shi, K.; Quintana, C.; Faulkner, G.; Thomsen, B.C.; O’Brien, D. A 50 Gb/s transparent indoor optical wireless communications link with an integrated localization and tracking system. J. Lightwave Technol. 2016, 34, 2517. [Google Scholar] [CrossRef]

- Kuo, Y.; Pannuto, P.; Hsiao, K.; Dutta, P. Luxapose: Indoor Positioning with Mobile Phones and Visible Light. In Proceedings of the 20th Annual International Conference on Mobile Computing and Networking (MobiCom), Maui, HI, USA, 7–11 September 2014. [Google Scholar]

- Wang, A.; Wang, L.; Chi, X.; Liu, S.; Shi, W.; Deng, J. The research of indoor positioning based on visible light communication. China Commun. 2015, 12, 85–92. [Google Scholar] [CrossRef]

- Brena, R.F.; García-Vázquez, J.P.; Galván-Tejada, C.E.; Muñoz-Rodriguez, D.; Vargas-Rosales, C.; Fangmeyer, J.J. Evolution of indoor positioning technologies: A survey. J. Sens. 2017, 2017. [Google Scholar] [CrossRef]

- Kim, H. Three-Dimensional Visible Light Indoor Localization Using AOA and RSS With Multiple Optical Receivers. Lightwave Technol. J. 2014, 32, 2480–2485. [Google Scholar]

- Hassan, N.; Naeem, A.; Pasha, M.; Jadoon, T. Indoor Positioning Using Visible LED Lights: A Survey. ACM Comput. Surv. 2015, 48, 1–32. [Google Scholar] [CrossRef]

- Renesas. Eye Safety for Proximity Sensing Using Infrared Light-Emitting Diodes. 28 April 2016. Available online: https://www.renesas.com/doc/application-note/an1737.pdf (accessed on 29 October 2018).

- Khan, F.N. Joint OSNR monitoring and modulation format identification in digital coherent receivers using deep neural networks. Opt. Express 2017, 25, 17767. [Google Scholar] [CrossRef]

- Philips Lighting (Signify), Enabling the New World of Retail: A White Paper on Indoor Positioning. 2017. Available online: http://www.lighting.philips.com/main/inspiration/smart-retail/latest-thinking/indoor-positioning-retail (accessed on 10 February 2019).

- Carroll, A.; Heiser, G. An analysis of power consumption in a smartphone. In Proceedings of the 2010 USENIX Conference on USENIX Annual Technical Conference, Boston, MA, USA, 22–25 June 2010. [Google Scholar]

- Bekaroo, G.; Santokhee, A. Power consumption of the Raspberry Pi: A comparative analysis. In Proceedings of the 2016 IEEE International Conference on Emerging Technologies and Innovative Business Practices for the Transformation of Societies (EmergiTech), Balaclava, Mauritius, 3–6 August 2016. [Google Scholar]

- Akram, M.; Aravinda, L.; Munaweera, M.; Godaliyadda, G.; Ekanayake, M. Camera based visible light communication system for underwater applications. In Proceedings of the IEEE International Conference on Industrial and Information Systems (ICIIS), Peradeniya, Sri Lanka, 15–16 December 2017. [Google Scholar]

- Takai, I.; Andoh, M.; Yasutomi, K.; Kagawa, K.; Kawahito, S. Optical Vehicle-to-Vehicle Communication System Using LED Transmitter and Camera Receiver. IEEE Photonics J. 2014, 6, 1–14. [Google Scholar] [CrossRef]

- Ifthekhar, M.; Saha, N.; Jang, Y. Stereo-vision-based cooperative vehicle positioning using OCC and neural networks. Opt. Commun. 2015, 352, 166–180. [Google Scholar] [CrossRef]

- Yamazato, T.; Kinoshita, M.; Arai, S.; Souke, E.; Yendo, T.; Fujii, T.; Kamakura, K.; Okada, H. Vehicle Motion and Pixel Illumination Modeling for Image Sensor Based Visible Light Communication. IEEE J. Sel. Areas Commun. 2015, 33, 1793–1805. [Google Scholar] [CrossRef]

- Kawai, Y.; Yamazato, T.; Okada, H.; Fujii, T.; Yendo, T.; Arai, S. Tracking of LED headlights considering NLOS for an image sensor based V2I-VLC. In Proceedings of the International Conference and Exhibition on Visible Light Communications, Yokohama, Japan, 25–26 October 2015. [Google Scholar]

- Akram, M.; Godaliyadda, R.; Ekanayake, P. Design and analysis of an optical camera communication system for underwater applications. IET Optoelectron. 2019, 14, 10–21. [Google Scholar] [CrossRef]

- Wikichip. Wikichip Semiconductor & Computer Engineering. Wikimedia Foundation. 12 January 2019. Available online: https://en.wikichip.org/wiki/hisilicon/kirin/970 (accessed on 21 January 2019).

- Kleinfelder, S.; Chen, Y.; Kwiatkowski, K.; Shah, A. High-Speed CMOS Image Sensor Circuits with In Situ Frame Storage. IEEE Trans. Nucl. Sci. 2004, 51, 1648–1656. [Google Scholar] [CrossRef]

- Millet, L. A 5500-frames/s 85-GOPS/W 3-D Stacked BSI Vision Chip Based on Parallel In-Focal-Plane Acquisition and Processing. IEEE J. Solid State Circuits 2019, 54, 1096–1105. [Google Scholar] [CrossRef]

- Gu, J.; Hitomi, Y.; Mitsunaga, T.; Nayar, S. Coded rolling shutter photography: Flexible space-time sampling. In Proceedings of the IEEE International Conference on Computational Photography (ICCP), Cambridge, MA, USA, 29–30 March 2010. [Google Scholar]

- Wilkins, A.; Veitch, J.; Lehman, B. LED lighting flicker and potential health concerns: IEEE standard PAR1789 update. In Proceedings of the 2010 IEEE Energy Conversion Congress and Exposition, Atlanta, GA, USA, 12–16 September 2010. [Google Scholar]

- Alphabet Deepmind, AlphaGo. Alphabet (Google). 2018. Available online: https://deepmind.com/research/alphago/ (accessed on 31 October 2018).

- iClarified. Google Uses Machine Learning to Improve Photos App. 13 October 2016. Available online: http://www.iclarified.com/57301/google-uses-machine-learning-to-improve-photos-app (accessed on 8 February 2019).

- Carneiro, T.; Da Nóbrega, R.M.; Nepomuceno, T.; Bian, G.; De Albuquerque, V.; Filho, P. Performance Analysis of Google Colaboratory as a Tool for Accelerating Deep Learning Applications. IEEE Access 2018, 6, 61677–61685.

- Banerjee, A. A Microarchitectural Study on Apple’s A11 Bionic Processor; Arkansas State University: Jonesboro, AR, USA, 2018.

- Sims, G. What is the Kirin 970’s NPU?—Gary Explains. Android Authority. 22 December 2017. Available online: https://www.androidauthority.com/what-is-the-kirin-970s-npu-gary-explains-824423/ (accessed on 21 January 2019).

- Haigh, P.; Ghassemlooy, Z.; Rajbhandari, S.; Papakonstantinou, I.; Popoola, W. Visible Light Communications: 170 Mb/s Using an Artificial Neural Network Equalizer in a Low Bandwidth White Light Configuration. J. Lightwave Technol. 2014, 32, 1807–1813.

- Liu, J.; Kong, X.; Xia, F.; Wang, L.; Qing, Q.; Lee, I. Artificial Intelligence in the 21st Century. IEEE Access 2018, 6, 34403–34421.

- Nguyen, T.; Thieu, M.; Jang, Y. 2D-OFDM for Optical Camera Communication: Principle and Implementation. IEEE Access 2019, 7, 29405–29424.

- Xu, Z.; Sadler, B.M. Ultraviolet Communications: Potential and State-Of-The-Art. IEEE Commun. Mag. 2008, 46, 67–73.

- Huo, Y.; Dong, X.; Lu, T.; Xu, W.; Yuen, M. Distributed and Multilayer UAV Networks for Next-Generation Wireless Communication and Power Transfer: A Feasibility Study. IEEE Internet Things J. 2019, 6, 7103–7115.

- Huo, Y.; Dong, X.; Xu, W.; Yuen, M. Enabling Multi-Functional 5G and Beyond User Equipment: A Survey and Tutorial. IEEE Access 2019, 7, 116975–117008.

- Ahmed, M.; Farag, A. Nonmetric calibration of camera lens distortion: Differential methods and robust estimation. IEEE Trans. Image Process. 2005, 14, 1215–1230.

- Tian, H.; Srikanthan, T.; Asari, K.; Lam, S. Study on the effect of object to camera distance on polynomial expansion coefficients in barrel distortion correction. In Proceedings of the Fifth IEEE Southwest Symposium on Image Analysis and Interpretation, Santa Fe, NM, USA, 7–9 April 2002.

- Tang, Z.; Von Gioi, R.G.; Monasse, P.; Morel, J. A Precision Analysis of Camera Distortion Models. IEEE Trans. Image Process. 2017, 26, 2694–2704.

- Drew Gray Photography. Distortion 101—Lens vs. Perspective. Drew Gray Photography. 8 November 2014. Available online: http://www.drewgrayphoto.com/learn/distortion101 (accessed on 22 March 2019).

- Brauers, J.; Aach, T. Geometric Calibration of Lens and Filter Distortions for Multispectral Filter-Wheel Cameras. IEEE Trans. Image Process. 2010, 20, 496–505.

- Xu, Y.; Zhou, Q.; Gong, L.; Zhu, M.; Ding, X.; Teng, R.K.F. High-Speed Simultaneous Image Distortion Correction Transformations for a Multicamera Cylindrical Panorama Real-time Video System Using FPGA. IEEE Trans. Circuits Syst. Video Technol. 2014, 24, 1061–1069.

| PHY | Description | Modulation Scheme |
|---|---|---|
| I | Existing IEEE 802.15.7-2011 | |
| II | Existing IEEE 802.15.7-2011 | |
| III | Existing IEEE 802.15.7-2011 | |
| IV | Image sensor communication modes (added to TG7m) | |
| V | Image sensor communication modes (added to TG7m) | |

| Parameter | High Frame Rate Processing | RoI Signaling Technique | Rolling Shutter Technique | Screen/Projector |
|---|---|---|---|---|
| Modulation type | Flicker-free | Flicker-free | Flicker-free | Flicker |
| Distance | <100 m | Hundreds of meters | Several meters | Several meters |
| Data rate | Tens of Kbps | | <10 Kbps | Kbps to Mbps |
| Intended system | | | | |
| Characteristics | | | | |

| Scheme/Name | TX Rate (Hz) | RX (Camera) Frame Rate (fps) | Typical Distance (m) | Effective Data Rate (bps) |
|---|---|---|---|---|
| NSM/PixNet [50] | 3 | 60 (DSLR) | 0–10 | 6.4 K–12 M |
| NSM/Cobra [51] | 15 | 30 (phone) | 0–0.15 | 80–225 K |
| NSM/2D Seq. code [53] | 15 | 30 (webcam) | <0.1 | 6.09–18.27 K |
| NSM/Invisible Watermarking [12] | 10 | 20 (webcam) | 0.5–0.7 | 60–140 |
| NSM/CIM-MIMO [55] | 82.5 | 330 (FPGA-cam) | 1.0 | 126.72 K |
| NSM/IAC [22] | 20 | 30 (phone) | 0.3 | 1.28 K |
| NSM/Split shutter [43] | 60 | 120 (phone) | 2 | 11.52 K |
| NSM/HDM [37] | 60 | 120 (phone) | 0.2–0.5 | 326.82 K–2.66 M |
| High-rate NSM/V2V-VLC [60] | 500 | 1000 (Fastcam) | 30–50 | 10 M |
| RoI-S/Spatial 2-PSK [64] | 125–200 | 30 and 1000 | Up to 30 | Up to 10 M |
| RoI-S/Selective Capture [36] | 217 | 435 (Raspicam) | 1.75 | 3.46 K |
| RoI-S/UPSOOK [68] | 200–250 | 50 (DSLR) | 0.25–0.8 | 50–150 |
| Hybrid Camera-PD/OCI [35] | 14.5 M | 35 MHz (OCI) | 1.5 | 55 M |
| RS/EVA [77] | 200 | 60 (phone) | <0.1 | 7.68 K |
| RS/Beacon Jointed Packet [82] | 1.92–14.04 K | 30 (phone) | 0.1–0.25 | 5.76–10.32 K |
| RS/Ceiling-Talk [84] | 80 K | 30 (phone) | 5 | 1 K |
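Nyquist sampling modulation, as its name suggests, relies on the camera sampling the transmitter at least twice per transmitted frame, and the scheme comparison table above makes that ratio easy to check. The following is a minimal sketch for illustration only, not code from any of the cited works. It recomputes the ratio of camera frame rate to TX rate for the single-valued NSM and RoI-S rows, and, assuming the effective data rate equals the payload bits per transmitted frame multiplied by the TX rate, the implied bits per TX frame. The names `OccScheme`, `oversampling_ratio`, `bits_per_tx_frame` and `report` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class OccScheme:
    name: str
    tx_rate_hz: float     # transmitter (screen/LED) update rate
    cam_fps: float        # receiver camera frame rate
    data_rate_bps: float  # reported effective data rate


# Single-valued rows copied from the comparison table (ranges omitted).
SCHEMES = [
    OccScheme("NSM/CIM-MIMO [55]", 82.5, 330, 126_720),
    OccScheme("NSM/IAC [22]", 20, 30, 1_280),
    OccScheme("NSM/Split shutter [43]", 60, 120, 11_520),
    OccScheme("High-rate NSM/V2V-VLC [60]", 500, 1000, 10_000_000),
    OccScheme("RoI-S/Selective Capture [36]", 217, 435, 3_460),
]


def oversampling_ratio(s: OccScheme) -> float:
    """Camera frames captured per transmitted frame (f_cam / f_tx)."""
    return s.cam_fps / s.tx_rate_hz


def bits_per_tx_frame(s: OccScheme) -> float:
    """Payload per TX frame, ASSUMING rate = bits per frame * TX rate."""
    return s.data_rate_bps / s.tx_rate_hz


def report(schemes):
    """Return (name, ratio, meets_2x, bits_per_frame) for each scheme."""
    rows = []
    for s in schemes:
        ratio = oversampling_ratio(s)
        rows.append((s.name, ratio, ratio >= 2.0, bits_per_tx_frame(s)))
    return rows


if __name__ == "__main__":
    for name, ratio, ok, bits in report(SCHEMES):
        print(f"{name:32s} fcam/ftx = {ratio:5.2f}  "
              f"meets 2x: {ok!s:5s}  bits/TX frame = {bits:,.0f}")
```

Among the NSM rows of the table, NSM/IAC [22] (a 20 Hz transmitter against a 30 fps phone camera, ratio 1.50) is the only entry below 2x, so the factor of two reads as a common design point rather than a rule every listed scheme follows. The check is only meaningful for frame-based NSM: the RS rows and RoI-S/UPSOOK run their transmitters faster than the camera frame rate.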

| Device | Average Frame Rate (fps) | Minimum (fps) | Maximum (fps) |
|---|---|---|---|
| Samsung Smartphone (SHV-N916L) | 29.656 | 15.77 | 31.44 |
| LG Smartphone (F700L) | 29.97 | 22.85 | 30.13 |
| Sony Smartphone (G8141) | 30.00 | 29.654 | 30.36 |
| Thinkpad webcam (Sunplus IT) | 30.00 | 20.819 | 31.94 |
| Microsoft webcam (HD-3000) | 29.87 | 23.09 | 30.80 |
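The frame-rate table shows how far real cameras drift from a nominal 30 fps, which bears directly on the synchronization challenge (Section 6.2.1). The minimal sketch below converts each device's minimum and maximum rates into frame intervals and checks the camera-to-transmitter ratio when the camera runs at its slowest. The helper names `frame_rate_stats` and `worst_case_oversampling` are hypothetical, as are the two constants: a 30 fps nominal rate, and a 15 Hz transmitter chosen to match the Cobra and 2D Seq. code rows of the scheme table.

```python
# Assumed nominal frame rate targeted by all five devices.
NOMINAL_FPS = 30.0

# (average, minimum, maximum) frame rates in fps, copied from the table.
FRAME_RATES = {
    "Samsung Smartphone (SHV-N916L)": (29.656, 15.77, 31.44),
    "LG Smartphone (F700L)": (29.97, 22.85, 30.13),
    "Sony Smartphone (G8141)": (30.00, 29.654, 30.36),
    "Thinkpad webcam (Sunplus IT)": (30.00, 20.819, 31.94),
    "Microsoft webcam (HD-3000)": (29.87, 23.09, 30.80),
}


def frame_rate_stats(avg_fps, min_fps, max_fps, nominal_fps=NOMINAL_FPS):
    """Summarize how far a camera's frame timing strays from nominal."""
    return {
        "spread_fps": max_fps - min_fps,
        "avg_error_pct": 100.0 * (avg_fps - nominal_fps) / nominal_fps,
        # The longest gap between frames occurs at the minimum frame rate.
        "longest_interval_ms": 1000.0 / min_fps,
        "shortest_interval_ms": 1000.0 / max_fps,
    }


def worst_case_oversampling(min_fps, tx_rate_hz):
    """Camera-to-transmitter rate ratio when the camera is slowest."""
    return min_fps / tx_rate_hz


if __name__ == "__main__":
    TX_RATE_HZ = 15.0  # hypothetical TX designed for 2x at nominal 30 fps
    for device, (avg, lo, hi) in FRAME_RATES.items():
        st = frame_rate_stats(avg, lo, hi)
        ratio = worst_case_oversampling(lo, TX_RATE_HZ)
        print(
            f"{device:32s} spread {st['spread_fps']:5.2f} fps, "
            f"frame gap {st['shortest_interval_ms']:.1f}-"
            f"{st['longest_interval_ms']:.1f} ms, "
            f"worst-case fcam/ftx {ratio:.2f}"
        )
```

Under these assumptions every device falls below the 2x margin at its minimum frame rate, from about 1.05 for the Samsung SHV-N916L to about 1.98 for the Sony G8141. A fixed transmitter clock tuned to the nominal rate therefore cannot rely on the camera alone to keep sampling aligned.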

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Cahyadi, W.A.; Chung, Y.H.; Ghassemlooy, Z.; Hassan, N.B. Optical Camera Communications: Principles, Modulations, Potential and Challenges. Electronics 2020, 9, 1339. https://doi.org/10.3390/electronics9091339
