1. Introduction

Deep learning (DL) [1] and its particular embodiment in the form of convolutional neural networks (CNNs) have become the de-facto approach to address many computer vision tasks, e.g., image recognition, object detection, or segmentation. New training and processing techniques together with the availability of large datasets and high computational power are the main underlying factors supporting the distinctive feature of CNNs versus classical algorithms, i.e., their high accuracy. The flip side of this high accuracy is a notable increase in processing and memory requirements, which has prompted multiple research efforts on designing efficient network architectures [2,3,4,5,6,7,8] and reducing the computational load of CNNs through techniques such as network pruning, data quantization, and network compression [9,10,11].

Despite these efforts, the implementation of CNNs still constitutes a major challenge for edge visual inference. Application scenarios such as robotics [12], environmental monitoring [13], or the Internet of Things (IoT) [14] can greatly benefit from incorporating vision capabilities in ultra-low-power, low-cost devices. However, DL-based processing pipelines deplete the scarce resources available in such devices, significantly affecting the timely completion of other tasks. Furthermore, CNNs can degrade their own performance (and therefore the performance of the whole system) as a result of thermal throttling, precluding continuous inference at a prescribed throughput over a long runtime period.

In this study, we focus expressly on the problem of thermal throttling, i.e., the automatic reduction of processor performance caused by excessive temperature. It is a preventive action to avoid thermal damage to system components and implies a reduction of the clock frequency. Our analysis is based on an extensive set of indoor measurements comprising state-of-the-art CNN models and software frameworks deployed on the latest version of arguably the most popular embedded CPU platform, i.e., Raspberry Pi 4B (RPi4B). In addition to the standard 32-bit operating system (OS) usually running on the RPi4B, we also characterized the recently released 64-bit beta version. This vast set of measurements is intrinsically valuable as a basis to compare and select the optimal combination of components according to prescribed specifications for edge vision. Concerning thermal throttling, we examined the long-term performance of 80 combinations, with and without active cooling, in terms of throughput; CPU usage, temperature, and frequency; and fan usage. The best combinations for each parameter are pointed out and conclusions are drawn. For instance, we show that the 64-bit OS is not always the best option, even though it is supposed to better exploit the 64-bit architecture of the RPi4B CPU. We also analyze the case of outdoor operation to elucidate the impact of varying ambient temperature with no active cooling. This is critical for remote sensing applications in which the extra power consumption of the fan may not be affordable.

The remainder of this paper is organized as follows. In Section 2, related studies reported in the literature are briefly reviewed and our contribution is emphasized. The experimental setup for assessing thermal throttling during CNN inference together with the evaluated hardware and software components are introduced in Section 3. The characterization methodology is described in Section 4. The indoor experimental results are presented and discussed in Section 5 whereas the outdoor case is addressed in Section 6. Finally, we draw relevant conclusions in Section 7.

2. Related Work

Throughput, energy budget, and memory restrictions are major concerns when designing an embedded system for edge vision. Nowadays, this requires carefully considering the heterogeneity of CNN models and of the specialized hardware and software available for DL-based inference. Regarding the ever-growing zoo of CNN models, comparative studies facilitate model selection according to the prescribed application accuracy and the model size suitable for the target platform [15,16]. However, there is a strong dependence on the underlying hardware integration and software framework. In recent studies, the inference time and energy consumption of CNNs on various edge platforms and hardware accelerators were measured [17,18,19]. At the software level, the performance of a diversity of frameworks for mobile applications was also evaluated in [20], where it is stated that the software stack contributes to the inference performance in the same proportion as model complexity does. The recent MLPerf project [21] constitutes an attempt to address this complex scenario holistically. It is a collaborative benchmark framework to provide reliable performance figures for multiple models, hardware platforms, and software environments.

In view of these benchmarking efforts, it is remarkable that thermal issues derived from running CNNs on edge devices have hardly been addressed in previous works. In [22], different algorithms were studied for managing both platform overheating and power consumption during extensive workloads on IoT devices. To this end, the authors applied well-known techniques such as dynamic power management and dynamic voltage and frequency scaling (DVFS). However, they focused on basic vision tasks and did not analyze the impact of thermal throttling. Two power control policies for embedded systems were evaluated in [23]. CNNs were characterized under DVFS thermal management based on such policies. In a more recent study [24], the same authors analyzed the impact of model topology scaling on power-constrained systems over both functional (accuracy) and non-functional (latency and temperature) constraints. In both works [23,24], the authors stressed the importance of thermally induced performance degradation and the scarcity of studies on the topic.

In this context, the first major contribution of our study is a thorough assessment of the impact of thermal throttling on a broad set of state-of-the-art CNN workloads running on a low-cost CPU platform under stable ambient temperature. Performance metrics are compared with the case of hysteresis-based active cooling, which prevented performance degradation for all the workloads. A second contribution is the evaluation of fan usage during active cooling, which allows us to quantify the extra power consumption required to maintain maximum performance; interestingly, the fan usage varied widely over the benchmarking set. Finally, a third important contribution is the analysis of outdoor performance over a wide range of ambient temperatures with no cooling. We consider that the discussion and conclusions about our results will also be of interest to the related research community.

3. Experimental Setup

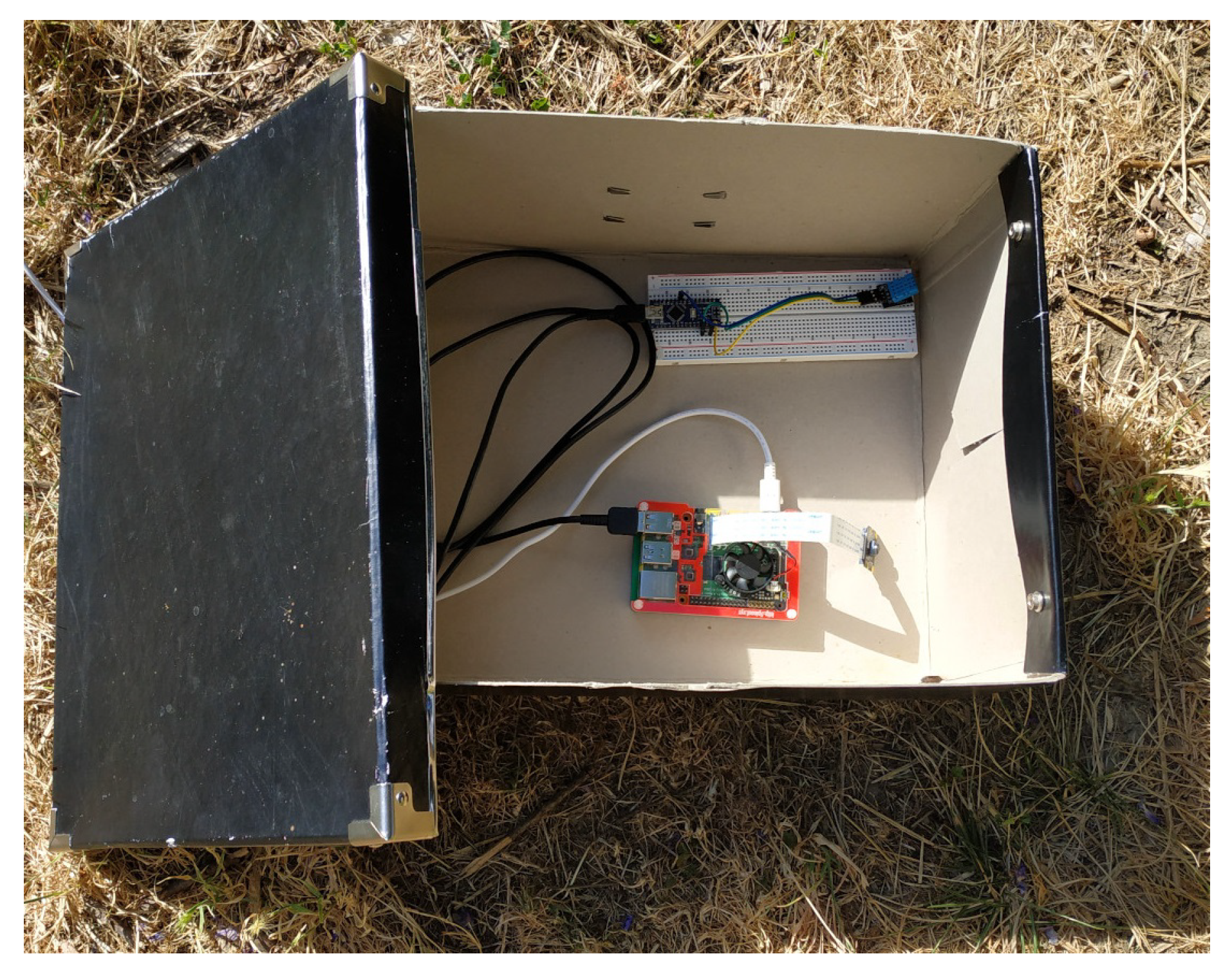

Figure 1 shows the experimental setup employed for the different characterizations performed in this study. The central device is the RPi4B. An off-the-shelf camera module [25] was also integrated, but it was ultimately not used for testing. Instead, images previously stored in memory provided a constant input flow, thereby ensuring that no bias on performance occurred due to changes in illumination conditions. The ambient temperature was sensed by a DHT11 temperature sensor [26] connected to an Arduino Nano microcontroller, which transmitted the temperature values to the RPi4B through the serial port. Next, we provide further details about the RPi4B platform and briefly describe the different components of the software stack and the evaluated CNN models.

3.1. Hardware Platform

CNN-based computer vision was implemented on the RPi4B [27]. Its Broadcom BCM2711 system-on-chip includes a quad-core ARMv8 Cortex-A72 64-bit CPU that can work at frequencies ranging from 600 MHz to 1.5 GHz. It also incorporates a Broadcom VideoCore VI GPU, but it cannot be easily exploited for CNN inference at the present time, i.e., leveraging open-source DL software for GPU acceleration. We specifically used an RPi4B model featuring 8 GB LPDDR4-3200 SDRAM.

Heavy processing workloads resulting from running CNNs cause CPU overheating, which is automatically counteracted through a DVFS strategy encoded in the RPi4B firmware [28]. When the temperature of the quad-core ARM processor exceeds a critical value (80 °C for RPi4B), the clock frequency of the cores is progressively reduced from 1.5 GHz to, eventually, 600 MHz, thereby gradually degrading CNN inference performance. Alternatively, CPU overheating can be mitigated through an external cooling fan. We employed a commercial module [29] compatible with RPi4B consisting of a fan unit mounted on a circuit board. This board allows the user to control the fan with a hysteresis approach in accordance with the CPU temperature through a Python library [30]. The fan consumes 0.65 W when it is on. Given that the power consumption of the RPi4B ranges from 3 W (idle) to 6.25 W (under stress) [31], the fan increases the power consumption of the system by at least approximately 10% while it is running.
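The overhead figure quoted above follows from dividing the fan power by the board power. The short sketch below reproduces that arithmetic for the two operating points given in the text; the function and constant names are illustrative and are not taken from the authors' code.

```python
# Power figures taken from the text (fan: 0.65 W; RPi4B: 3 W idle, 6.25 W under stress).
FAN_POWER_W = 0.65
RPI_IDLE_POWER_W = 3.0
RPI_STRESS_POWER_W = 6.25


def fan_overhead_pct(board_power_w, fan_power_w=FAN_POWER_W):
    """Extra power drawn by a running fan, as a percentage of the board power."""
    if board_power_w <= 0:
        raise ValueError("board power must be positive")
    return 100.0 * fan_power_w / board_power_w


# The smallest relative overhead occurs when the board draws the most power.
print(f"under stress: {fan_overhead_pct(RPI_STRESS_POWER_W):.1f}%")  # 10.4%
print(f"idle:         {fan_overhead_pct(RPI_IDLE_POWER_W):.1f}%")    # 21.7%
```

The stressed-board case gives the lower bound of roughly 10%, since CNN inference keeps the board close to its maximum consumption.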

3.2. Software Stack

3.2.1. Operating System

The Raspberry Pi Foundation officially provides a 32-bit OS for the RPi4B, known as Raspbian [32]. Nonetheless, a 64-bit beta version of this OS was recently released [33]. This second version aims to fully leverage the 64-bit ARM processor of the platform. Experiments were conducted on both Raspbian flavors.

3.2.2. DL Frameworks

Training, testing, and deployment of DL-based applications are facilitated by DL software frameworks. Within the diversity of tools publicly available these days, we selected the following frameworks specifically oriented for embedded inference:

- OpenCV [34] is a popular library that allows importing DNN models previously trained with other tools (e.g., Caffe, TensorFlow, or Torch) to perform visual inference. We built OpenCV version 4.3.0-dev by setting the pertinent compilation flags to exploit the ARM NEON instruction set and enable VFPv3 optimizations. This library was accessed through its C++ interface.

- Tengine [35], developed by OPEN AI LAB [36], is a tool intended for running neural networks on embedded devices and IoT scenarios. It is specifically designed to make the most of ARM architectures. Pre-trained models from TensorFlow, Caffe, and MXNet, in addition to ONNX models, can be converted to the particular model format employed by Tengine. We used the standalone version of Tengine v1.12.0 and C++ coding.

- NCNN [37] is an inference framework optimized for mobile platforms. Its high-performance implementation, exclusively encoded in C++, supports ARM NEON optimizations and multi-core parallel computing acceleration. It can import models from Caffe, PyTorch, MXNet, ONNX, and DarkNet.

- ArmNN SDK [38] is a set of open-source Linux software tools that enables machine learning workloads on power-efficient devices. It provides a bridge between existing neural network frameworks and low-power Cortex-A CPUs, ARM Mali GPUs, and ARM Ethos neural processing units. Consequently, it is supposed to fully leverage the RPi4B cores. This software can take networks from other DL frameworks, translate them into the internal ArmNN format, and deploy them efficiently. It supports Caffe, TensorFlow, TensorFlow Lite, and ONNX models. We used ArmNN v20.05 through its Python API (pyArmNN).

3.3. Convolutional Neural Networks

We benchmarked the five CNNs listed in Table 1. All of them were trained over the ImageNet dataset [39] for 1000-category image classification. The set includes complex (ResNets) and lightweight (MobileNets and SqueezeNet) models. Note that there is no clear correlation between an increasing number of weights and a corresponding higher accuracy. This has a significant impact on the achievable performance, as will be demonstrated in Section 5. We downloaded the files with the corresponding pre-trained network weights compatible with each framework from the public repositories shown in Table 2.

4. Characterization Methodology

The long-term performance of each and every combination among the aforementioned OSs, software frameworks, and CNN models was evaluated on the RPi4B according to the methodology described next.

4.1. Metrics

Key performance metrics such as throughput, CPU usage, CPU temperature, and CPU frequency were periodically monitored during continuous inference periods. The experiments were repeated for two cases:

- Case (a): raw version of the RPi4B hardware, i.e., no active cooling.

- Case (b): application of active cooling through the external fan unit.

A C++ test program was coded to extract the metrics (in the case of ArmNN, the test program was coded in Python, given that we used the Python interface of this framework). This program proceeded as follows:

- Images from ImageNet were resized to fit the input resolution of the pre-trained CNNs. This pre-processing time was not included in the throughput.

- Real-time CNN inference with batch size 1 was performed and the instantaneous throughput was measured.

- CPU usage, temperature, and frequency were monitored through calls to Linux tools, in particular vcgencmd and mpstat.
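As an illustration of the monitoring step, the sketch below reads CPU temperature and clock frequency through `vcgencmd`. It is a minimal Python sketch, not the authors' test program: the text does not state which `vcgencmd` subcommands were used, so `measure_temp` and `measure_clock arm` are assumptions, as are all function names. The parsers are kept separate from the subprocess call so that they can be checked on any machine.

```python
import re
import subprocess


def parse_temp_c(text):
    """Parse `vcgencmd measure_temp` output such as "temp=48.3'C" into degrees Celsius."""
    match = re.search(r"temp=([0-9]+(?:\.[0-9]+)?)", text)
    if match is None:
        raise ValueError(f"unexpected temperature output: {text!r}")
    return float(match.group(1))


def parse_clock_hz(text):
    """Parse `vcgencmd measure_clock arm` output such as "frequency(48)=1500345728" into Hz."""
    match = re.search(r"=(\d+)", text)
    if match is None:
        raise ValueError(f"unexpected clock output: {text!r}")
    return int(match.group(1))


def run(cmd):
    """Run a command and return its stripped standard output."""
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return result.stdout.strip()


def sample_cpu():
    """Take one sample of CPU temperature and frequency (works only on a Raspberry Pi)."""
    temp_c = parse_temp_c(run(["vcgencmd", "measure_temp"]))
    freq_mhz = parse_clock_hz(run(["vcgencmd", "measure_clock", "arm"])) / 1e6
    return {"temp_c": temp_c, "freq_mhz": freq_mhz}
```

On the target board, calling `sample_cpu()` periodically alongside the inference loop would yield the temperature and frequency traces; CPU usage would come from a separate tool such as `mpstat`, which is not covered here.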

The instantaneous ambient temperature was also periodically recorded during the tests. For the indoor experiments, this information allowed us to ensure that all the tests were conducted at ambient temperatures as stable and similar as possible, always within the range 27–29 °C. For the outdoor experiments, the ambient temperature was correlated with the system performance under no-cooling conditions to evaluate the impact of the former on the latter.

Finally, we also evaluated the fan usage, denoted by $F_u$, for case (b) according to the following equation:

$$F_u = \frac{t_{\mathrm{on}}}{t_{\mathrm{total}}} \times 100\%$$

where $t_{\mathrm{on}}$ denotes the sum of time intervals in which the fan was on within the total test time, denoted by $t_{\mathrm{total}}$. Note that when applying active cooling, a hysteresis approach is typically employed to reduce $F_u$ as much as possible. We set the hysteresis on/off temperatures at 75 °C and 65 °C, respectively, to prevent thermal throttling on the RPi4B CPU.
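The hysteresis policy and the fan usage metric (fan on-time divided by total test time, as a percentage) can be sketched together as follows. This is an illustrative Python sketch under stated assumptions: temperatures are sampled at a uniform rate, the fan switches on at or above 75 °C and off at or below 65 °C (whether the comparisons are strict is not stated in the text), and the temperature trace is made up for the example.

```python
FAN_ON_C = 75.0   # fan switches on at this CPU temperature (from the text)
FAN_OFF_C = 65.0  # fan switches off at this CPU temperature (from the text)


def hysteresis_states(temps_c, on_c=FAN_ON_C, off_c=FAN_OFF_C):
    """Return the fan state (True = on) for each uniformly sampled temperature."""
    fan_on = False
    states = []
    for temp in temps_c:
        if not fan_on and temp >= on_c:
            fan_on = True       # too hot: start cooling
        elif fan_on and temp <= off_c:
            fan_on = False      # cooled down enough: stop cooling
        states.append(fan_on)
    return states


def fan_usage_pct(states):
    """Fan usage: share of samples with the fan on, in percent."""
    if not states:
        raise ValueError("no samples")
    return 100.0 * sum(states) / len(states)


# Hypothetical temperature trace (degrees Celsius), one sample per time step.
trace = [60, 70, 76, 72, 68, 64, 66, 74, 75, 70]
states = hysteresis_states(trace)
print(states)
print(fan_usage_pct(states))  # 50.0
```

Between 65 °C and 75 °C the fan keeps its previous state, which is what avoids rapid on/off switching around a single threshold.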

4.2. Tests

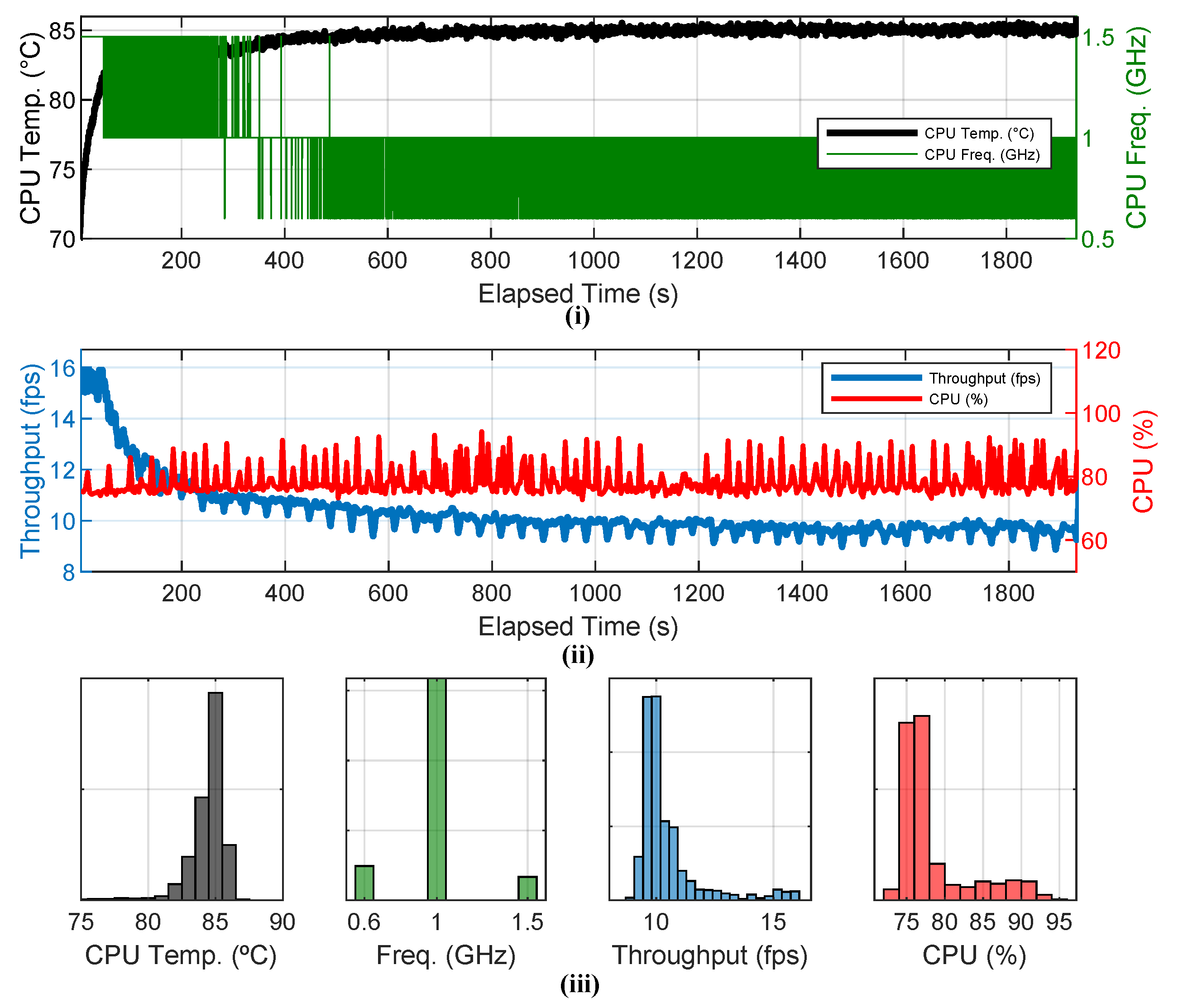

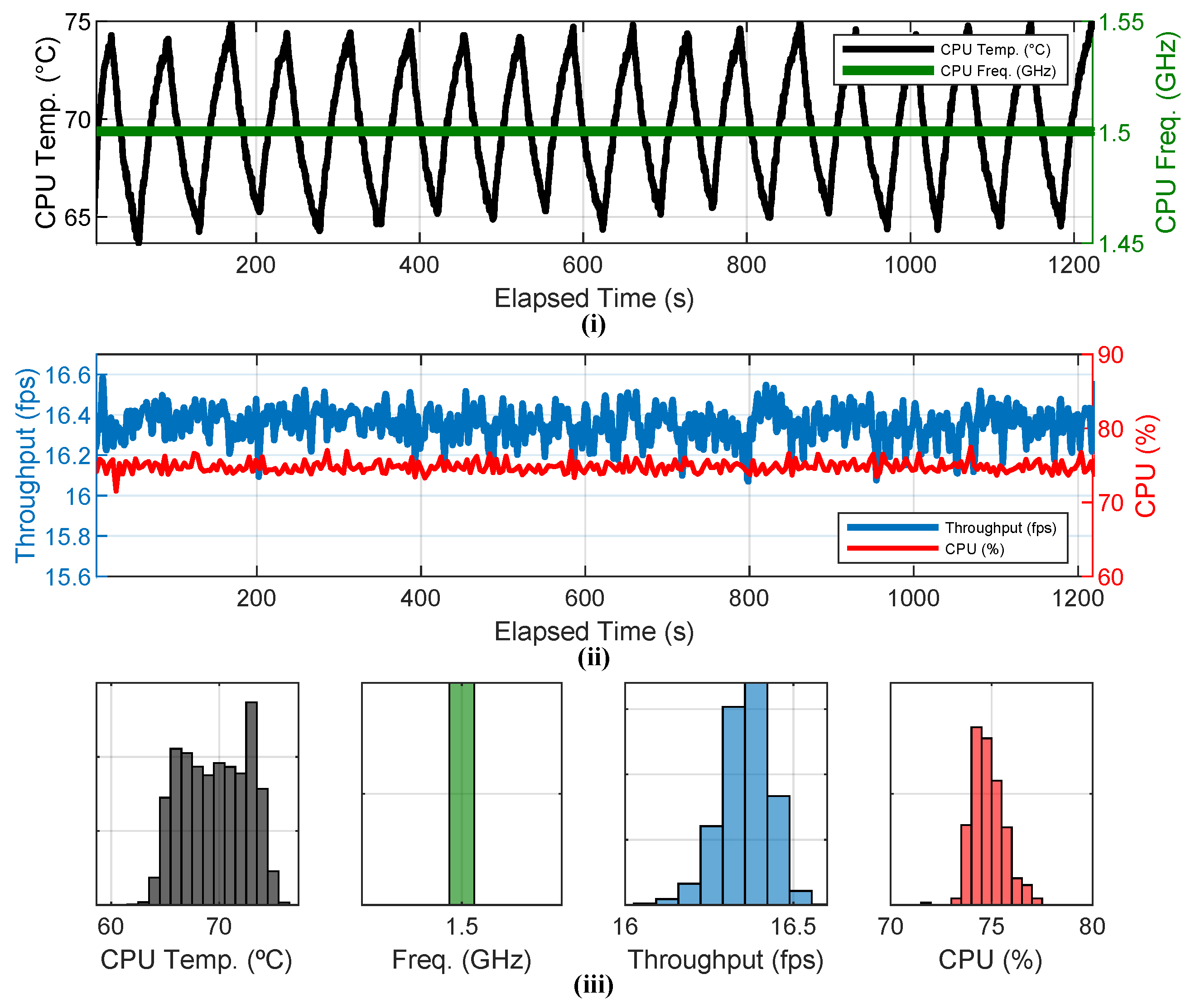

Each individual experiment was started when the CPU temperature was cool and stable, far away from thermal throttling. The two considered cases, (a) no cooling and (b) active cooling, were analyzed by averaging the aforementioned metrics at steady state. In case (a), steady state means that CPU overheating gave rise to thermal throttling and the DVFS strategy encoded in the RPi4B achieved a stable system performance. In case (b), active cooling maintained a steady performance throughout the total duration of all the tests. We exemplify these behaviors in Figure 2 and Figure 3 for 64-bit OS/OpenCV/SqueezeNet.

Figure 2 shows the long-term behavior of CNN inference without active cooling. The CPU temperature reached the throttling threshold after approximately 50 s of workload, causing thermal throttling and hence a reduction of the CPU frequency from that instant on. Consequently, the throughput was notably affected, eventually reaching a stable value of approximately 10 fps, which is significantly lower than its initial value of around 16 fps. This occurred after approximately 1000 s of continuous inference, when the underlying DVFS strategy achieved a stable temperature around 85 °C. The histograms in the bottom plot reveal that the processor operated at 1 GHz most of the time, with peaks of CPU usage reaching 95%.

Figure 3 depicts the same CNN inference but applying active cooling. The impact of the fan hysteresis cycle on the CPU temperature is evident. Thermal throttling never occurred. Both CPU frequency and usage remained stable at 1.5 GHz and approximately 75%, respectively. Likewise, the throughput fluctuated slightly around 16.4 fps. The histograms in the bottom plot confirm this steady behavior. Note that the CPU temperature swept a wider interval than in Figure 2 but always stayed below the throttling threshold.

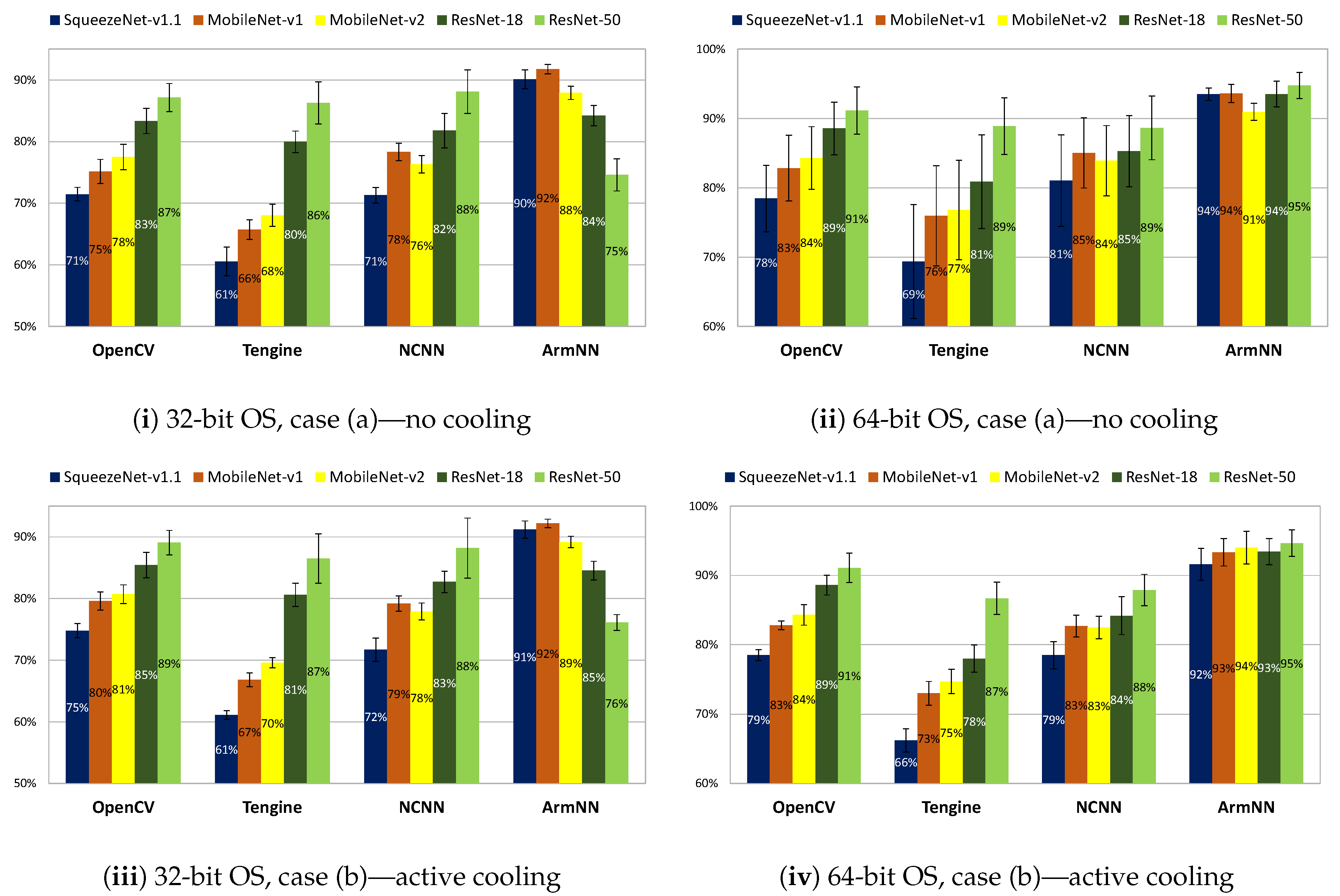

5. Indoor Tests: Results and Analysis

Indoor experiments were conducted under stable ambient temperature. Considering every possible combination of the aforementioned OSs, DL frameworks, and CNNs (ArmNN v20.05 did not support SqueezeNet at the moment of performing these tests; thus, we specifically employed v20.08 for this CNN) along with the two general cases, (a) and (b), a total of 80 inference scenarios were characterized. For all of them, the tests were extended until reaching steady-state performance. As an example, the longest indoor test lasted 5340 s for 32-bit OS/OpenCV/ResNet-50 with no cooling. The results are summarized in Figure 4, Figure 5, Figure 6, and Figure 7 and discussed below. Note that Figure 6 only shows the average steady-state CPU frequency for case (a), given that this parameter always remained at its maximum (1.5 GHz) when active cooling was applied. Likewise, Figure 7 only depicts the fan usage for case (b). As a general comment, the variability of these results demonstrates the importance of carefully selecting a set of hardware and software components suitable for the target application.

Throughput. Figure 4 shows the average steady-state throughput (expressed in frames per second) for cases (a), no cooling, and (b), active cooling. As expected, there is a clear inverse correlation between the complexity of the models, reflected by the rightmost column in Table 1, and the average frame rate: the higher the network complexity, the lower the throughput. As pointed out in Section 3.3, the accuracy does not follow such correlation so markedly. Thus, throughput is not always traded off for a more precise inference. In such cases, the global performance is significantly degraded.

Table 3 and Table 4 show the best combination of OS and DL framework for each CNN. Remarkably, Tengine achieves the highest throughput in all cases but one (ResNet-50, no cooling), for which Tengine nevertheless performs close to ArmNN. Note that, as expected, active cooling always leads to better performance than no cooling, regardless of the combination considered. However, this is not the case for the 64-bit OS versus the 32-bit OS. Indeed, with no cooling, many combinations performed better on the 32-bit OS. We conjecture that this is due to the specificity of each framework when it comes to exploiting the underlying system architecture. Finally, note that there are combinations for which active cooling is particularly beneficial. For instance, this is the case of OpenCV on the 64-bit OS, for which active cooling improves the throughput by approximately 82% on average for the five CNNs, with an absolute maximum of approximately 90% for ResNet-18. Interestingly, the average improvement for OpenCV on the 32-bit OS is only 27.5%.
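The improvement percentages quoted above are relative gains of case (b) over case (a). The sketch below assumes the usual definition, i.e., the throughput gain divided by the no-cooling throughput; the text does not spell out the formula, so this is an assumption. The example uses the approximate steady-state values read from Section 4.2 for 64-bit OS/OpenCV/SqueezeNet (about 10 fps without cooling and about 16.4 fps with cooling), so the result is indicative only.

```python
def relative_improvement_pct(tp_no_cooling, tp_cooling):
    """Throughput gain of active cooling relative to no cooling, in percent."""
    if tp_no_cooling <= 0:
        raise ValueError("no-cooling throughput must be positive")
    return 100.0 * (tp_cooling - tp_no_cooling) / tp_no_cooling


# Approximate steady-state throughputs (fps) quoted in Section 4.2.
print(f"{relative_improvement_pct(10.0, 16.4):.0f}%")  # 64%
```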

CPU usage. Figure 5 shows the average and standard deviation of the steady-state CPU usage. This metric is particularly interesting because it provides information about the CPU power available to carry out other tasks. The first aspect to point out is that, generally speaking, the application of active cooling hardly affects the average CPU usage. However, it does have an impact on the standard deviation, in particular for the 64-bit OS, achieving greater stability (i.e., smaller standard deviation) at steady state. Note that Tengine, in addition to achieving the highest frame rates, is the most efficient framework in terms of CPU usage for the majority of combinations. By contrast, ArmNN is the least efficient in most of the cases.

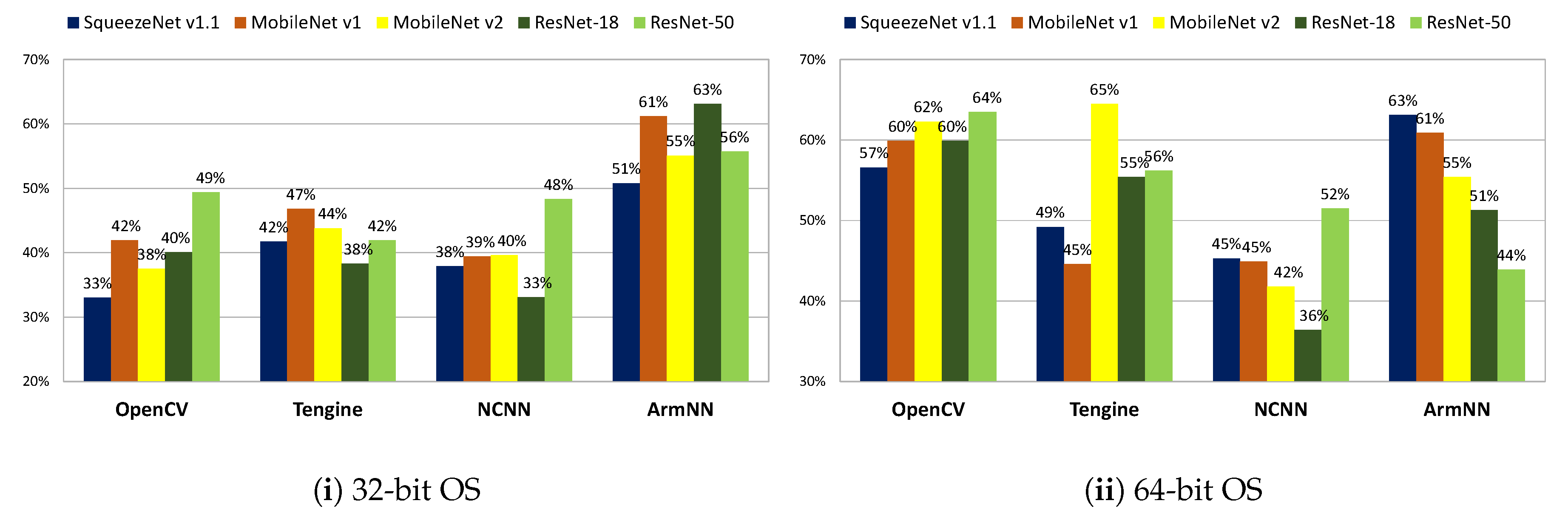

CPU frequency. The average CPU frequency for case (a), no cooling, is shown in Figure 6 (the fact that we calculate the average explains the granularity of the plotted values; the instantaneous CPU frequency actually takes values from a reduced set within the interval [600 MHz, 1.5 GHz]). This metric is directly related to power consumption. For the 32-bit OS, ArmNN operates at the lowest frequency for four out of the five network models whereas OpenCV runs at the highest frequency, also for four of the CNNs. Interestingly, this changes for the 64-bit OS, on which OpenCV operates at the lowest CPU frequency for four models and NCNN clearly reaches the highest frequency in all cases. Again, keep in mind that for case (b), active cooling, the CPU frequency never decreased from its maximum value, i.e., 1.5 GHz.

Fan usage. Figure 7 shows the fan usage for case (b), active cooling. This parameter determines the extra consumption required to preclude thermal throttling. Note that NCNN is the framework demanding the lowest usage of active cooling in most of the cases for both the 32-bit and 64-bit OSs. By contrast, ArmNN is clearly the framework requiring the longest fan operation for the 32-bit OS. Concerning the 64-bit OS, OpenCV presents high fan usage, although not necessarily the highest, for the five CNN models. Globally, the fan usage ranges from 33% to 65%.

Two important conclusions can be drawn from the above discussions. First, active cooling is worthwhile as long as its power consumption is affordable. Note that the extra consumption with respect to no cooling stems from two sources: (i) the consumption associated with the fan to prevent thermal throttling; (ii) the higher consumption derived from the fact that the CPU constantly operates at its highest frequency. If affordable, active cooling always improves the throughput, with a significant increase in some cases. Second, Tengine seems to be the best option, regardless of the OS version or whether active cooling is applied. This framework achieves the best throughput at the lowest CPU utilization in most scenarios, with moderate CPU frequency in the case of no cooling and moderate fan usage in the case of active cooling.

6. Outdoor Tests: Results and Analysis

Outdoor visual inference typically suffers from unregulated ambient temperatures that can affect the processor performance. According to the results presented in the previous section, thermal throttling can be prevented through active cooling. However, there are power budgets in outdoor application scenarios (e.g., remote environmental monitoring) that cannot afford the extra consumption of the fan. Thus, we conducted a number of outdoor tests to evaluate the impact of an ambient temperature sweeping a wide interval while performing continuous CNN processing on the RPi4B with no active cooling.

The setup for outdoor tests is shown in Figure 8. The whole system was placed in a black cardboard box for protection and homogenization of the temperature of all the components. Following the same procedure described in Section 4.1, we periodically monitored the instantaneous CNN runtime, the CPU temperature and frequency, and the ambient temperature.

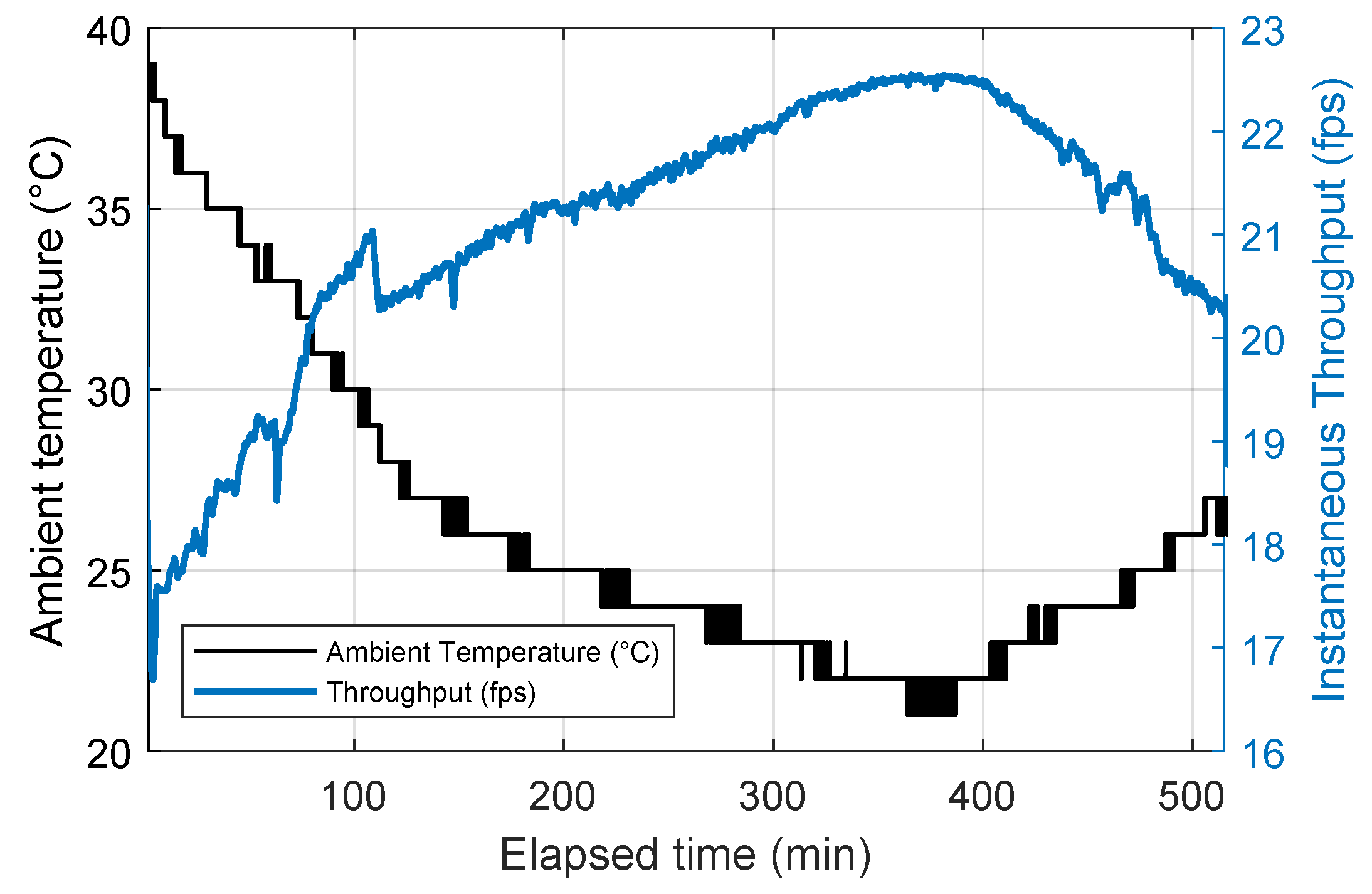

Figure 9 shows an example of collected temporal data. It corresponds to approximately 520 min of continuous inference with 32-bit OS/Tengine/SqueezeNet. In this particular test, the ambient temperature inside the box ranged from 38 °C in direct sun in the afternoon to 21 °C at night. The throughput correspondingly varied from 17.7 fps to 22.5 fps. To better understand this temperature dependency,

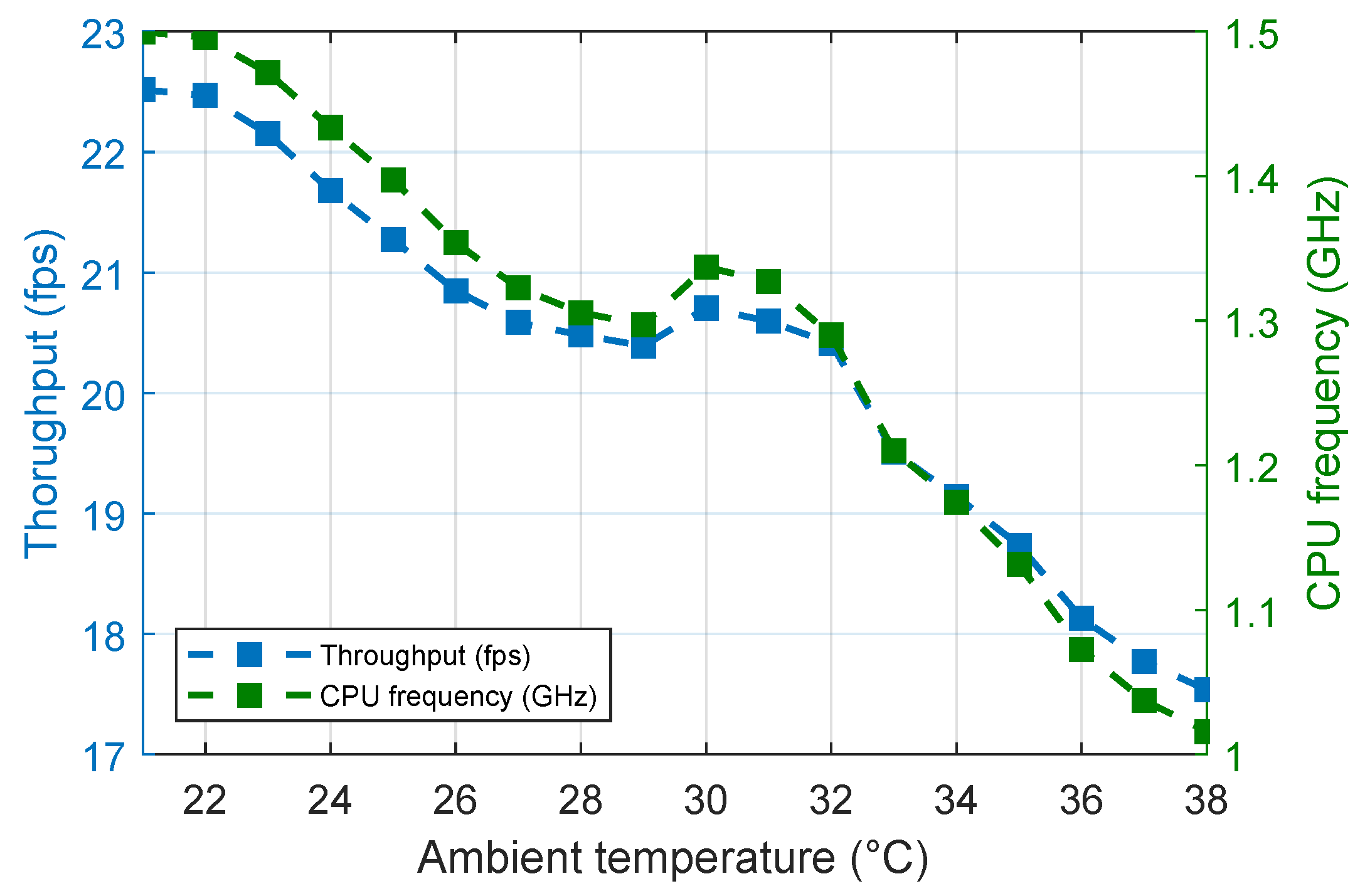

Figure 10 depicts throughput and CPU frequency as a function of the ambient temperature. Data on the

y-axis represent the average of all the measurements taken for each step of 1 °C (

x-axis). The correlation is evident: higher ambient temperature gives rise to worse inference performance (blue dots) due to thermal throttling expressed in terms of CPU frequency (green dots).
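The per-degree averaging behind this kind of plot can be sketched as below. This is an illustrative Python sketch, not the authors' processing script: it assumes each measurement is a pair of ambient temperature and throughput, and that a 1 °C step groups samples by the integer part of the temperature (the exact bin edges are not stated in the text). The sample values are made up for the example.

```python
import math
from collections import defaultdict


def average_per_step(samples, step_c=1.0):
    """Average the measured values within each ambient-temperature step.

    samples: iterable of (ambient_temp_c, value) pairs.
    Returns a dict mapping the lower edge of each step to the mean value.
    """
    bins = defaultdict(list)
    for temp_c, value in samples:
        lower_edge = math.floor(temp_c / step_c) * step_c
        bins[lower_edge].append(value)
    return {edge: sum(vals) / len(vals) for edge, vals in sorted(bins.items())}


# Hypothetical (ambient temperature in degrees Celsius, throughput in fps) samples.
samples = [(22.1, 22.5), (22.8, 22.3), (23.4, 21.9), (35.6, 17.9), (35.9, 17.7)]
for edge, mean_fps in average_per_step(samples).items():
    print(f"{edge:.0f} C: {mean_fps:.2f} fps")
```

The same function can be applied to CPU frequency samples to obtain the second curve.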

The proposed metric to analyze the impact of ambient temperature is the maximum relative throughput variation, denoted by $\delta_{\max}$. It measures the loss of throughput with respect to the maximum value recorded during a particular experiment, i.e., $TP_{\max}$. Thus, $\delta_{\max}$ is expressed as follows:

$$\delta_{\max} = \frac{\Delta TP}{TP_{\max}} \times 100\%$$

where $\Delta TP$ represents the range of throughput variation during the corresponding outdoor test.
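As a worked example of this metric, the sketch below computes the maximum relative throughput variation as the throughput range divided by the maximum throughput, in percent. The input uses the raw extremes quoted for the Figure 9 test (22.5 fps and 17.7 fps) plus one made-up intermediate value; because the reported results are based on per-degree averages over a narrower temperature range, this number is not expected to match the value reported for the same combination in Table 5. The function name is illustrative.

```python
def max_relative_variation_pct(throughputs_fps):
    """Range of throughput divided by its maximum, in percent."""
    tp_max = max(throughputs_fps)
    tp_min = min(throughputs_fps)
    if tp_max <= 0:
        raise ValueError("maximum throughput must be positive")
    return 100.0 * (tp_max - tp_min) / tp_max


# Extremes taken from the Figure 9 example; 20.1 is a hypothetical intermediate sample.
print(f"{max_relative_variation_pct([22.5, 20.1, 17.7]):.1f}%")  # 21.3%
```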

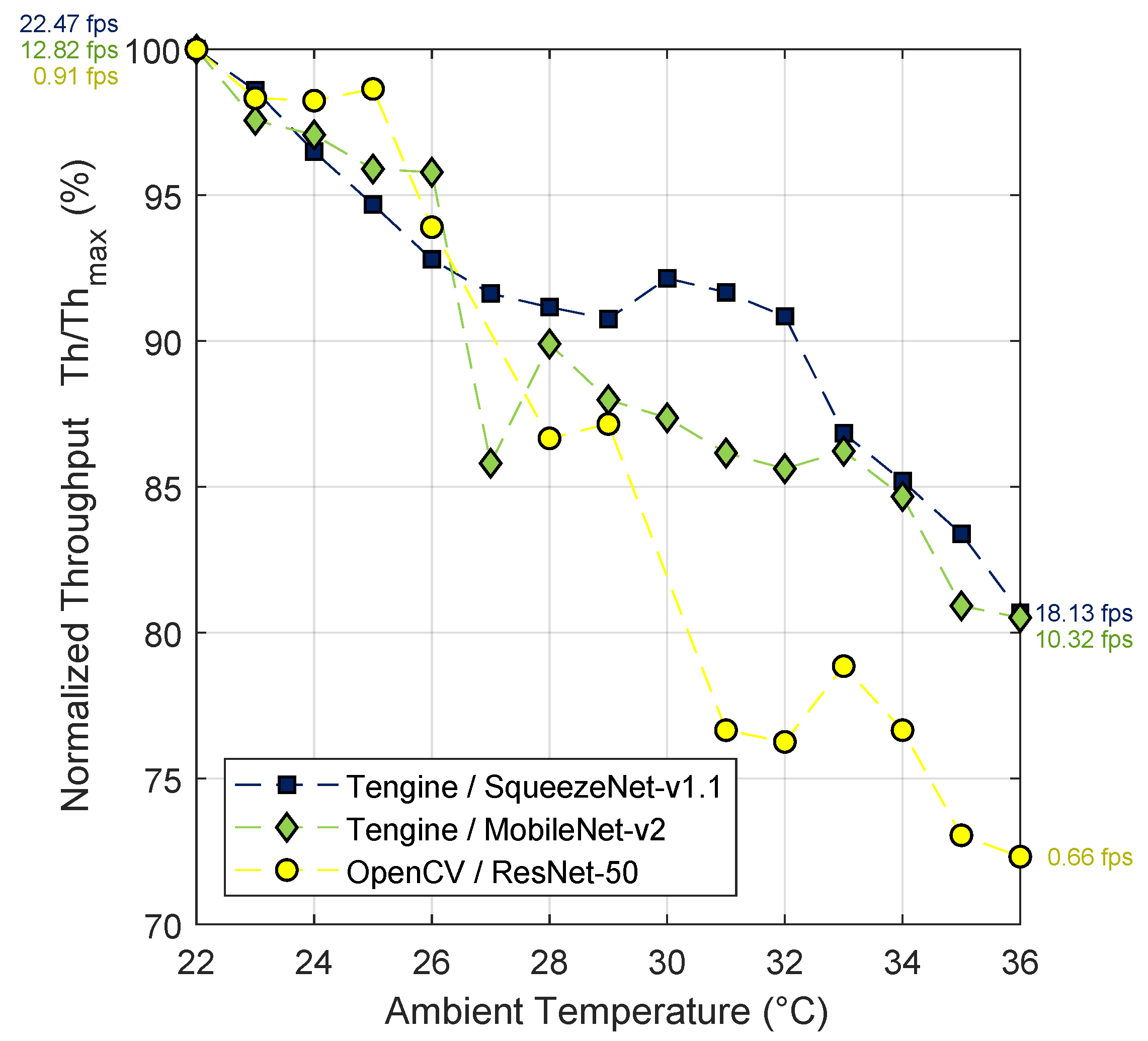

Note that the conducted outdoor tests were time-consuming (typically a few hours were required for the ambient temperature to vary over a wide interval) and strongly dependent on external thermal conditions (it was difficult to replicate experiments sweeping the same range of temperatures). Eventually, we were able to successfully complete tests for three representative combinations of framework/model on the 32-bit OS: Tengine/SqueezeNet, Tengine/MobileNet-v2, and OpenCV/ResNet-50. These range from a case featuring low CPU usage and high throughput, i.e., Tengine/SqueezeNet, to a combination characterized by a high computational load such as OpenCV/ResNet-50, with Tengine/MobileNet-v2 as an intermediate case. For these combinations, we were able to reproduce similar conditions, with the ambient temperature always in the range 22 °C–36 °C.

Figure 11 summarizes the results. It shows the average throughput for each temperature step normalized with respect to its maximum, that is, the ratio of the average throughput at each step to the maximum throughput of the test. This maximum occurred at 22 °C in all cases; the particular values of throughput at this point are included in the plot. Likewise, the minimum occurred at 36 °C in all cases; the particular values of throughput at that point are also included in the plot. The corresponding values of the maximum relative throughput variation are reported in Table 5. Remarkably, the impact of varying ambient conditions on continuous inference is not negligible at all. Even for the lightest model (SqueezeNet), the maximum relative throughput variation takes a value of 19.3%. ResNet-50 on OpenCV is even more sensitive to the ambient temperature, with a variation reaching 27.7%. Depending on the requirements at application level, this variation could be critical to continuously meet the expected performance in real operation conditions.

7. Conclusions

In this study, we demonstrate that thermal effects cannot be neglected when it comes to designing embedded vision systems for real application scenarios. Thermal throttling can notably decrease the throughput during long-term continuous inference, with further degradation in the case of high ambient temperature. Active cooling can prevent it, but at the cost of extra power consumption. In both cases (no cooling and active cooling), the resulting performance varies significantly depending on the OS, software framework, and CNN model. Thus, benchmarking and tests conducted under real operation conditions become fundamental to gain reliable insight into the expected behavior. Future work will address the development of techniques to maximize the performance of embedded systems tailored for remote sensing in terms of throughput, accuracy, and battery lifetime. These techniques will range from advanced temperature and power management algorithms to dynamic adaptation of the image resolution according to the scene content to optimize the use of the available computational power.

Author Contributions

T.B.-C.: system integration, experimental set-up, measurements, and first paper draft; D.V.-M.: supervision of experimental tests, realization of figures, revision of first paper draft; J.F.-B.: proposal of research topic, supervision, comprehensive revision of the manuscript before submission. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported by Spanish Government MICINN (European Region Development Fund, ERDF/FEDER) through project RTI2018-097088-B-C31, European Union H2020 MSCA through project ACHIEVE-ITN (Grant No. 765866), and by the US Office of Naval Research through Grant No. N00014-19-1-2156.

Conflicts of Interest

The authors declare no conflict of interest.

References

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep Learning. Nature 2015, 521, 436–444. [Google Scholar] [CrossRef] [PubMed]

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going Deeper with Convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 1–9. [Google Scholar] [CrossRef]

- Zhang, X.; Zhou, X.; Lin, M.; Sun, J. ShuffleNet: An Extremely Efficient Convolutional Neural Network for Mobile Devices. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake, UT, USA, 12–18 June 2018; pp. 6848–6856. [Google Scholar] [CrossRef]

- Ma, N.; Zhang, X.; Zheng, H.-T.; Sun, J. ShuffleNet V2: Practical Guidelines for Efficient CNN Architecture Design. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: London, UK, 2018; pp. 122–138. [Google Scholar]

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications. arXiv 2017, arXiv:1704.04861. [Google Scholar]

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.-C. MobileNetV2: Inverted Residuals and Linear Bottlenecks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake, UT, USA, 12–18 June 2018; pp. 4510–4520. [Google Scholar] [CrossRef]

- Iandola, F.N.; Han, S.; Moskewicz, M.W.; Ashraf, K.; Dally, W.J.; Keutzer, K. SqueezeNet: AlexNet-Level Accuracy with 50× Fewer Parameters and <1 MB model size. arXiv 2016, arXiv:1602.07360. [Google Scholar]

- Tan, M.; Le, Q. EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. In Proceedings of the 36th International Conference on Machine Learning, Long Beach, CA, USA, 10–15 June 2019; Volume 97, pp. 6105–6114. [Google Scholar]

- Zhou, A.; Yao, A.; Guo, Y.; Xu, L.; Chen, Y. Incremental Network Quantization: Towards Lossless CNNs with Low-Precision Weights. In Proceedings of the International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017. [Google Scholar]

- Molchanov, P.; Tyree, S.; Karras, T.; Aila, T.; Kautz, J. Pruning Convolutional Neural Networks for Resource Efficient Inference. In Proceedings of the 5th International Conference on Learning Representations (ICLR), Toulon, France, 24–26 April 2017. [Google Scholar]

- Kim, Y.D.; Park, E.; Yoo, S.; Choi, T.; Yang, L.; Shin, D. Compression of Deep Convolutional Neural Networks for Fast and Low Power Mobile Applications. In Proceedings of the 4th International Conference on Learning Representations (ICLR), San Juan, Puerto Rico, 2–4 May 2016. [Google Scholar]

- Thomas, A.; Hedley, J. FumeBot: A Deep Convolutional Neural Network Controlled Robot. Robotics 2019, 8, 62. [Google Scholar] [CrossRef]

- Fernández-Berni, J.; Carmona-Galán, R.; Martínez-Carmona, J.F.; Rodríguez-Vázquez, Á. Early Forest Fire Detection by Vision-Enabled Wireless Sensor Networks. Int. J. Wildland Fire 2012, 21, 938–949. [Google Scholar] [CrossRef]

- Lin, J.; Yu, W.; Zhang, N.; Yang, X.; Zhang, H.; Zhao, W. A Survey on Internet of Things: Architecture, Enabling Technologies, Security and Privacy, and Applications. IEEE Internet Things J. 2017, 4, 1125–1142. [Google Scholar] [CrossRef]

- Canziani, A.; Paszke, A.; Culurciello, E. An Analysis of Deep Neural Network Models for Practical Applications. arXiv 2016, arXiv:1605.07678. [Google Scholar]

- Bianco, S.; Cadene, R.; Celona, L.; Napoletano, P. Benchmark Analysis of Representative Deep Neural Network Architectures. IEEE Access 2018, 6, 64270–64277. [Google Scholar] [CrossRef]

- Ostrowski, E.; Kaufmann, S. Survey of Alternative Hardware for Neural Network Computation in the Context of Computer Vision; Technical Report; University of Cologne and IT-Designers GmbH: Cologne, Germany, 2020. [Google Scholar]

- Torelli, P.; Bangale, M. Measuring Inference Performance of Machine-Learning Frameworks on Edge-Class Devices with the MLMark Benchmark; Technical Report; Embedded Microprocessor Benchmark Consortium (EEMBC): Vancouver, WA, USA, 2019. [Google Scholar]

- Ignatov, A.; Timofte, R.; Chou, W.; Wang, K.; Wu, M.; Hartley, T.; Van Gool, L. AI Benchmark: Running Deep Neural Networks on Android Smartphones. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2019; pp. 288–314. [Google Scholar]

- Luo, C.; He, X.; Zhan, J.; Wang, L.; Gao, W.; Dai, J. Comparison and Benchmarking of AI Models and Frameworks on Mobile Devices. arXiv 2020, arXiv:2005.05085.

- Reddi, V.J.; Cheng, C.; Kanter, D.; Mattson, P.; Schmuelling, G.; Wu, C.-J.; Anderson, B.; Breughe, M.; Charlebois, M.; Chou, W.; et al. MLPerf Inference Benchmark. In Proceedings of the ACM/IEEE 47th Annual International Symposium on Computer Architecture, Valencia, Spain, 30 May–3 June 2020; pp. 446–459.

- Choi, J.; Jung, B.; Choi, Y.; Son, S. An Adaptive and Integrated Low-Power Framework for Multicore Mobile Computing. Mob. Inf. Syst. 2017, 1–11.

- Peluso, V.; Rizzo, R.G.; Calimera, A. Performance Profiling of Embedded ConvNets under Thermal-Aware DVFS. Electronics 2019, 8, 1423.

- Peluso, V.; Rizzo, R.G.; Calimera, A. Efficacy of Topology Scaling for Temperature and Latency Constrained Embedded ConvNets. J. Low Power Electron. Appl. 2020, 10, 10.

- Raspberry Pi Camera Module. Available online: https://www.raspberrypi.org/documentation/hardware/camera/ (accessed on 16 September 2020).

- DHT11 Datasheet. Available online: https://www.mouser.com/datasheet/2/758/DHT11-Technical-Data-Sheet-Translated-Version-1143054.pdf (accessed on 16 September 2020).

- Raspberry Pi 4. Available online: https://www.raspberrypi.org/products/raspberry-pi-4-model-b/ (accessed on 16 September 2020).

- Raspberry Pi Documentation—Frequency Management and Thermal Control. Available online: https://www.raspberrypi.org/documentation/hardware/raspberrypi/frequency-management.md (accessed on 16 September 2020).

- Fan Shim for Raspberry Pi. Available online: https://shop.pimoroni.com/products/fan-shim (accessed on 16 September 2020).

- Fan Shim Github. Available online: https://github.com/pimoroni/fanshim-python (accessed on 16 September 2020).

- Raspberry Pi Documentation—FAQs. Available online: https://www.raspberrypi.org/documentation/faqs/ (accessed on 16 September 2020).

- Raspberry Pi OS. Available online: https://www.raspberrypi.org/downloads/raspberry-pi-os/ (accessed on 16 September 2020).

- Raspberry Pi OS 64 bit Beta. Available online: https://www.raspberrypi.org/forums/viewtopic.php?f=117&t=275370 (accessed on 16 September 2020).

- OpenCV. Available online: https://github.com/opencv/opencv (accessed on 16 September 2020).

- Tengine. Available online: https://github.com/OAID/Tengine (accessed on 16 September 2020).

- OPEN AI LAB. Available online: http://www.openailab.com/ (accessed on 16 September 2020).

- NCNN. Available online: https://github.com/Tencent/ncnn (accessed on 16 September 2020).

- ArmNN. Available online: https://github.com/ARM-software/armnn (accessed on 16 September 2020).

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Li, F.-F. ImageNet: A Large-Scale Hierarchical Image Database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Miami, FL, USA, 20–25 June 2009; pp. 248–255.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- SqueezeNet v1.1 Caffemodel. Available online: https://github.com/forresti/SqueezeNet/tree/master/SqueezeNet_v1.1 (accessed on 16 September 2020).

- NCNN Pre-Trained Models. Available online: https://github.com/Tencent/ncnn/tree/master/benchmark (accessed on 16 September 2020).

- SqueezeNet v1.1 TFlite Model. Available online: https://storage.googleapis.com/download.tensorflow.org/models/tflite/model_zoo/upload_20180427/squeezenet_2018_04_27.tgz (accessed on 16 September 2020).

- MobileNet Caffemodel. Available online: https://github.com/shicai/MobileNet-Caffe (accessed on 16 September 2020).

- MobileNet v1 TFlite Model. Available online: https://storage.googleapis.com/download.tensorflow.org/models/mobilenet_v1_2018_02_22/mobilenet_v1_1.0_224.tgz (accessed on 16 September 2020).

- MobileNet v2 TFlite Model. Available online: https://storage.googleapis.com/download.tensorflow.org/models/tflite_11_05_08/mobilenet_v2_1.0_224.tgz (accessed on 16 September 2020).

- ResNet-18 Caffemodel. Available online: https://github.com/HolmesShuan/ResNet-18-Caffemodel-on-ImageNet (accessed on 16 September 2020).

- ResNet-18 Keras Model. Available online: https://github.com/qubvel/classification_models/releases/download/0.0.1/resnet18_imagenet_1000.h5 (accessed on 16 September 2020).

- ResNet-50 Caffemodel. Available online: https://github.com/KaimingHe/deep-residual-networks (accessed on 16 September 2020).

- ResNet-50 Keras Model. Available online: https://github.com/keras-team/keras-applications/releases/download/resnet/resnet50_weights_tf_dim_ordering_tf_kernels.h5 (accessed on 16 September 2020).

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).