1. Introduction

Compressed sensing (CS) [1,2,3] theory has attracted significant attention over the past few years. It asserts that a signal can be acquired by compressive sampling at a rate much lower than the Nyquist rate. The signal processing chain of an electrical circuit includes an analog-to-digital converter (ADC). The ADC receives an analog input signal, samples it according to a sampling clock signal and converts the sampled signal into a digital output signal. With compressed sensing, the analog signal can be sampled at a rate lower than the Nyquist sampling rate. CS theory mainly involves three core issues [4]: (1) sparse signal representation, that is, designing a sparsity basis or an over-complete dictionary capable of sparse representation; (2) compressive measurement of the sparse (or compressive) signal, that is, designing a sensing matrix that satisfies the incoherence of atoms or the restricted isometry property (RIP) [5]; and (3) sparse signal reconstruction, that is, designing an efficient signal recovery algorithm. For sparse representation and sensing matrix design, several good solutions already exist. However, extending CS theory to practical applications hinges on a crucial step, namely the design of the signal recovery algorithm. Therefore, recovery algorithm design remains an important topic in CS research.

Currently, several mature signal recovery algorithms have been proposed. Among the existing recovery algorithms, the two major approaches are $\ell_1$-norm minimization (or convex optimization) and $\ell_0$-norm minimization (or greedy pursuit) methods. Convex optimization methods approximate the signal by relaxing the non-convex problem into a convex one; examples include the basis pursuit (BP) [6] algorithm, the gradient projection for sparse reconstruction (GPSR) [7] algorithm, the interior-point method Bregman iteration (BT) [8] and total-variation (TV) [9]. While convex optimization methods work correctly for all sparse signals and provide theoretical performance guarantees, their high computational complexity may prevent them from being applied to practical large-scale recovery problems. The other category is the greedy pursuit algorithm, which iteratively identifies the true support of the original signal and constructs an approximation of the signal from the chosen support set until the stopping condition is satisfied. Such algorithms can solve large-scale data recovery problems more efficiently. An early, typical greedy algorithm is the matching pursuit (MP) [10] algorithm. The orthogonal matching pursuit (OMP) [11] algorithm was developed from MP and improves it by orthogonalizing the atoms of the support set. However, OMP adds only one atom (column) to the candidate atom set per iteration, which increases the number of iterations and thereby slows the algorithm down. To address this shortcoming, the stage-wise OMP (StOMP) [12] algorithm was proposed; StOMP uses thresholds to select multiple atoms to add to the support atom set at each iteration. Introducing regularization into OMP provides a strong theoretical guarantee; the resulting recovery algorithm is called regularized OMP (ROMP) [13]. The computational complexity of these algorithms is significantly lower than that of the convex optimization methods; however, they require more measurements for exact recovery and have poor reconstruction performance in noisy environments. Subsequently, the subspace pursuit (SP) [14] and compressive sampling matching pursuit (CoSaMP) [15,16] algorithms were proposed, which incorporate a backtracking strategy. These algorithms offer strong theoretical guarantees and are robust to noise. However, both require the sparsity $K$ as prior information, which may not be available in most practical applications. To overcome this weakness, the sparsity adaptive matching pursuit (SAMP) [17] algorithm was proposed for blind signal recovery when the sparsity is unknown. SAMP divides the recovery process into several stages with a fixed step-size and needs no prior information on the sparsity. Because the step-size is fixed at the initial stage, additional iterations are required if it is much smaller than the signal's sparsity, which leads to a long reconstruction time. Furthermore, a fixed step-size cannot estimate the real sparsity precisely, because the estimated sparsity can only be an integer multiple of the step-size. Although these traditional greedy pursuit algorithms are widely used owing to their simple structure, convenient calculation and good reconstruction quality, they still have many drawbacks. They do not directly solve the original optimization problem, so the quality of the recovered signal is poorer than that of the convex optimization methods based on the $\ell_1$-norm. In addition, these greedy pursuit algorithms suffer from high computational complexity and large storage requirements for large-scale data recovery.
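To make the fixed step-size limitation concrete, the following minimal sketch counts how many stages a fixed step-size needs to reach a given sparsity and which sparsity estimate it ends on. It is not the SAMP algorithm itself: the function name, the assumption that the estimate starts at one step and grows by one step per stage, and the example sparsity of 23 are all illustrative.

```python
import math


def fixed_step_stages(true_sparsity, step):
    """Return (number of stages, final sparsity estimate) for a fixed step-size.

    Assumption for illustration: the estimate starts at `step` and grows by
    `step` per stage until it is at least `true_sparsity`.
    """
    stages = math.ceil(true_sparsity / step)
    return stages, stages * step


if __name__ == "__main__":
    for step in (1, 5, 10):
        stages, estimate = fixed_step_stages(23, step)
        print(f"step={step}: stages={stages}, estimate={estimate}")
```

Under these assumptions, a step-size of 1 reaches a sparsity of 23 exactly but needs 23 stages, whereas a step-size of 10 needs only 3 stages and overshoots to 30.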

Because traditional greedy algorithms spend a large number of calculations on the orthogonal projection, their recovery efficiency declines. To overcome this shortcoming, Blumensath et al. first proposed the gradient pursuit (GP) [18] algorithm. This algorithm replaces the calculation of the orthogonal projection with an update along the gradient direction, which reduces the computational complexity of greedy pursuit algorithms. Its successors include the Newton pursuit (NP) [19] algorithm, the conjugate gradient pursuit (CGP) [20] algorithm, the approximate conjugate gradient pursuit (ACGP) [21] algorithm and the variable metric method-based gradient pursuit (VMMGP) [22] algorithm. These methods reduce the computational complexity and storage requirements of the traditional greedy algorithms for large-scale recovery problems, but their reconstruction performance still requires improvement. Therefore, building on the GP algorithm, the stage-wise weak gradient pursuit (SwGP) [23] algorithm was proposed to improve the reconstruction efficiency and convergence speed of GP via a weak selection strategy. Although SwGP makes atom selection more flexible and improves the reconstruction precision, the time taken for atom selection is greatly increased. Recently, motivated by stochastic gradient descent methods, the stochastic gradient matching pursuit (StoGradMP) [24] algorithm was proposed for optimization problems with sparsity constraints. StoGradMP not only improves the reconstruction efficiency of greedy recovery for large-scale data recovery problems but also reduces the computational complexity. However, StoGradMP still requires the sparsity of the signal as a priori information, which limits its applicability in practical situations. This study proposes a sparsity pre-evaluation strategy that estimates the sparsity of the signal and uses the estimate as the input parameter of the algorithm. This strategy removes the algorithm's dependence on the true signal sparsity and decreases the number of iterations. The algorithm then approaches the real sparsity of the signal by adjusting its initial sparsity estimate, thereby expanding the support atom set and reconstructing the signal.
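The idea behind gradient pursuit, replacing the orthogonal projection with a step along the gradient direction, can be sketched as follows. This is a minimal illustration for a least-squares data term, not the implementation from [18]; the function name `gradient_pursuit_update` and the use of an exact line search are assumptions made for the example.

```python
import numpy as np


def gradient_pursuit_update(Phi, y, x, support):
    """One gradient-direction update of x restricted to the index set `support`.

    Instead of solving a least-squares problem on the support, move along the
    negative gradient of 0.5 * ||y - Phi x||^2 with an exact line search.
    """
    r = y - Phi @ x                          # current residual
    g = np.zeros_like(x)
    g[support] = Phi[:, support].T @ r       # descent direction on the support
    c = Phi @ g
    denom = c @ c
    if denom == 0.0:                         # direction is zero: nothing to update
        return x
    step = (r @ c) / denom                   # step length that minimizes the residual
    return x + step * g
```

Each update costs two matrix-vector products, whereas an orthogonal projection requires solving a least-squares problem on the current support.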

In recent years, a variety of reconstruction algorithms have been proposed, which have further broadened the application prospects of CS theory in signal processing, for example in channel estimation and blind source separation. Research on such applications further highlights the importance of reconstruction algorithms. In Reference [25], novel subspace-based blind schemes were proposed and applied to the sparse channel identification problem; moreover, an adaptive sparse subspace tracking method was proposed to provide efficient real-time implementations. In Reference [26], a novel unmixing method based on simultaneously sparse and low-rank constrained non-negative matrix factorization (NMF) was applied to remote sensing image analysis.

2. Preliminaries and Problem Statement

In CS theory, let $x \in \mathbb{R}^{N}$, where $N$ is the length of the signal $x$. If the number of non-zero entries in the original signal is $K$, then we regard the signal $x$ as a $K$-sparse signal or compressive signal (in noiseless environments). Generally, the signal $x$ can be expressed as follows:

$$x = \sum_{i=1}^{N} \alpha_i \psi_i = \Psi \alpha \qquad (1)$$

where $\psi_i$ are the basis vectors of the sparse basis matrix $\Psi$, that is, $\Psi$ is the matrix constituted by the $\psi_i$. $\alpha$ is the projection coefficient vector, and $\|\alpha\|_0$ denotes the number of non-zero entries in the projection coefficient vector $\alpha$.

When the sparse representation of the original signal is completed, we need to construct a measurement matrix $\Phi$ for the compressive measurement of the sparse signal $x$ to obtain the observation values $y$. This process can be described as follows:

$$y = \Phi x \qquad (3)$$

where $y \in \mathbb{R}^{M}$, $\Phi \in \mathbb{R}^{M \times N}$ and $M < N$. According to Equation (3), the observation vector contains nearly the whole information of the $N$-dimensional signal $x$. Furthermore, this process is non-adaptive, which ensures that the crucial information of the original signal is not lost when the signal dimension is decreased from $N$ to $M$. $M$ is called the number of observation values in the later description.

When the original signal $x$ itself is not sparse, the measurement process in Equation (3) cannot be applied to it directly. Instead, the compressive measurement is applied to the projection coefficient vector $\alpha$ to obtain the measurement values. According to Equations (1) and (3), we obtain the following equation:

$$y = \Phi \Psi \alpha = \Theta \alpha \qquad (4)$$

where $\Theta = \Phi \Psi$ is the sensing matrix. According to Equation (4), the dimension of the observation vector $y$ is much lower than the dimension of the signal $x$, that is, $M \ll N$. Therefore, Equation (4) is an under-determined problem with an infinite number of solutions. That is to say, it is hard to reconstruct the projection coefficient vector $\alpha$ from the observation vector $y$.
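The under-determined nature of the measurement equation can be checked numerically. The sketch below is an illustration only: the sizes, the Gaussian sensing matrix `Theta` and the variable names are assumptions, not values from this paper. It builds a sparse coefficient vector, measures it, and then constructs two different dense vectors that reproduce exactly the same observations.

```python
import numpy as np

rng = np.random.default_rng(0)
N, M, K = 64, 24, 4                      # illustrative sizes, not from the paper

# K-sparse coefficient vector and its compressive measurement
alpha = np.zeros(N)
alpha[rng.choice(N, K, replace=False)] = rng.standard_normal(K)
Theta = rng.standard_normal((M, N)) / np.sqrt(M)
y = Theta @ alpha

# Minimum-energy solution of the under-determined system (generally dense)
Theta_pinv = np.linalg.pinv(Theta)
alpha_min_norm = Theta_pinv @ y

# Adding any null-space vector of Theta yields another exact solution
v = rng.standard_normal(N)
null_vec = v - Theta_pinv @ (Theta @ v)
alpha_other = alpha_min_norm + null_vec

if __name__ == "__main__":
    print("non-zeros in sparse vector:     ", np.count_nonzero(alpha))
    print("non-zeros in min-norm solution: ",
          np.count_nonzero(np.abs(alpha_min_norm) > 1e-8))
```

Both `alpha_min_norm` and `alpha_other` satisfy the measurement equation, so the observations alone do not single out the sparse vector; the sparsity constraint is what makes recovery possible.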

However, according to the literature [27], a sufficient condition for exact sparse signal recovery is that the sensing matrix $\Theta$ satisfies the RIP condition. Thus, if the sensing matrix satisfies the RIP condition, the reconstruction of the signal $x$ is equivalent to the $\ell_0$-norm optimization problem [28]:

$$\min_{\alpha} \|\alpha\|_0 \quad \text{s.t.} \quad y = \Theta \alpha \qquad (5)$$

where $\|\alpha\|_0$ represents the number of non-zero entries in the projection coefficient $\alpha$. Unfortunately, Equation (5) is an NP-hard optimization problem. When the isometry constant of the sensing matrix $\Theta$ is sufficiently small, Equation (5) is equivalent to the $\ell_1$-norm optimization problem:

$$\min_{\alpha} \|\alpha\|_1 \quad \text{s.t.} \quad y = \Theta \alpha \qquad (6)$$

where $\|\alpha\|_1$ denotes the sum of the absolute values of the entries in the projection coefficient $\alpha$. Equation (6) is a convex optimization problem. Meanwhile, when the sparse basis is determined, the measurement matrix $\Phi$ must meet certain conditions to ensure that the sensing matrix $\Theta$ also satisfies the RIP condition. In References [29,30], the researchers found that when the measurement matrix $\Phi$ is a random matrix with a Gaussian distribution, the sensing matrix $\Theta$ satisfies the RIP condition with high probability. This greatly reduces the difficulty of designing the measurement matrix.
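The near-isometry behaviour of a Gaussian measurement matrix on sparse vectors can be spot-checked empirically. The following sketch is only an illustration under assumed sizes; the function name `energy_ratios` and all parameter values are hypothetical. It samples random sparse vectors and records how well the measurement preserves their energy. Such sampling does not certify the RIP, which would require a bound over all sparse vectors.

```python
import numpy as np


def energy_ratios(M=256, N=1024, K=10, trials=100, seed=0):
    """Return ||Phi x||^2 / ||x||^2 for random K-sparse vectors x.

    Phi has i.i.d. Gaussian entries with variance 1/M, so the ratio is
    expected to concentrate around 1.
    """
    rng = np.random.default_rng(seed)
    Phi = rng.standard_normal((M, N)) / np.sqrt(M)
    ratios = np.empty(trials)
    for t in range(trials):
        x = np.zeros(N)
        x[rng.choice(N, K, replace=False)] = rng.standard_normal(K)
        ratios[t] = np.sum((Phi @ x) ** 2) / np.sum(x ** 2)
    return ratios


if __name__ == "__main__":
    r = energy_ratios()
    print("min ratio:", r.min(), "max ratio:", r.max())
```

Ratios that stay close to 1 are consistent with the statement that Gaussian matrices behave like near-isometries on sparse signals.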

However, in most practical applications, the original signal ordinarily contains noise. In this setting, the sensing process can be represented by the following equation:

$$y = \Theta \alpha + e \qquad (7)$$

where $e$ is the noise signal. In this study, for simplicity, we suppose that the signal $x$ itself is $K$-sparse; thus, the original signal $x$ and the sensing matrix $\Theta$ are equal to the projection coefficient $\alpha$ and the measurement matrix $\Phi$, respectively. Accordingly, Equation (7) can be written as $y = \Phi x + e$. We minimize the following equation to reconstruct the original sparse signal $x$:

where

is the residual of the original signal

, which is represented as

. That is,

.

represents the square of

-norm of the signal residual vector

. To analyze Equation (8), we combined Equation (1). In Equation (1),

is the projection coefficient of the sparse signal

. This notion is general enough to address many important sparse models such as group sparsity and low rankness (see studies [

31,

32] for examples). Then, we can express Equation (9) in the form of

where

is a smooth function, that is, it is a non-convex function.

is defined as the norm that captures the sparsity of signal

.

For a sparse signal recovery problem, the sparse basis

consists of

basic vectors, each of size

in the Euclidean space. This problem can be regarded as a special case of Equation (9) with

and

. The observation vector

is decomposed into the non-overlapping block observation vectors

with a size of

.

denotes the block-matrix of the measurement matrix of size

. According to Equations (8) and (9), the objective function

can be represented as in the following form:

where

, which is a positive integer. According to the equation, each smooth function

can be represented as

. Obviously, in this case, each sub-function

accounts for a collection (or block) of measurements of size

, rather than only one observation. Here, the smooth function

is divided into multiple smooth sub-functions

and the measurement matrix

block into multiple block matrix

, which will contribute to the computation of the gradient in the stochastic gradient matching pursuit algorithm, thereby improving the reconstruction performance of the algorithm.
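The block decomposition can be illustrated numerically. The sketch below assumes the least-squares form commonly used with StoGradMP, in which the full objective is the squared residual scaled by twice the number of measurements and each sub-function is the same quantity on one block of rows. The exact scaling used in this paper is not recoverable from this excerpt, so the function names and normalization are assumptions.

```python
import numpy as np


def full_objective(Phi, y, x):
    """Assumed full objective: ||y - Phi x||^2 / (2 M)."""
    M = Phi.shape[0]
    return np.sum((y - Phi @ x) ** 2) / (2 * M)


def block_objective(Phi, y, x, i, b):
    """Assumed sub-function on block i (rows i*b .. (i+1)*b - 1)."""
    rows = slice(i * b, (i + 1) * b)
    return np.sum((y[rows] - Phi[rows] @ x) ** 2) / (2 * b)


def block_gradient(Phi, y, x, i, b):
    """Gradient of the sub-function on block i; uses only b rows of Phi."""
    rows = slice(i * b, (i + 1) * b)
    return Phi[rows].T @ (Phi[rows] @ x - y[rows]) / b
```

With this normalization, the average of the sub-functions over all blocks equals the full objective, and the average of the block gradients equals the full gradient, so a randomly chosen block gradient is an unbiased but much cheaper estimate of the full gradient.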

3. StoGradMP Algorithm

The CoSaMP algorithm is fast for small-scale, low-dimensional signals, but for large-scale, high-dimensional signals corrupted by noise, its reconstruction precision is limited and its robustness is poor. Therefore, in Reference [30], the researchers generalized the idea of the CoSaMP algorithm and proposed the GradMP algorithm for the reconstruction of large-scale signals with sparsity constraints and noise. However, the GradMP algorithm needs to calculate the full gradient of the smooth objective function, which increases its computational complexity. Subsequently, Needell et al. proposed a stochastic version of the GradMP algorithm called the StoGradMP [24] algorithm, which computes the gradient of only one sub-function at each iteration.

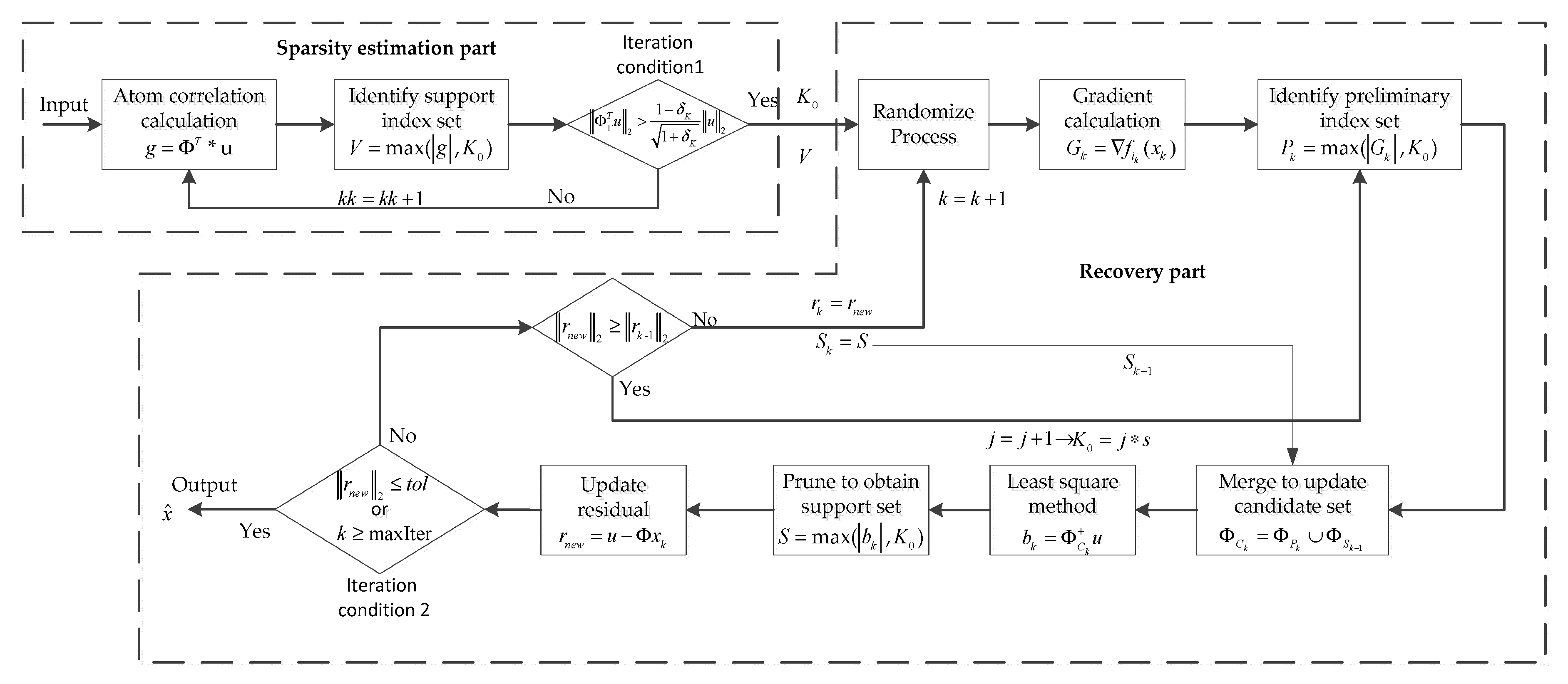

According to the literature [24], the StoGradMP algorithm is described in Algorithm 1, which consists of the following steps at each iteration:

Randomize: The measurement matrix is randomly divided into blocks; that is, a set of row indices of the measurement matrix is selected and the corresponding rows form a block matrix. Then, according to Equation (10) and the block matrix, the corresponding sub-function is evaluated.

Proxy: Compute the gradient of the selected sub-function; the gradient is a column vector.

Identify: Sort the absolute values of the gradient entries in descending order, select the leading entries, find the column indices (atomic indices) of the measurement matrix corresponding to those entries and form the preliminary index set.

Merge: Form the candidate atomic index set, which consists of the preliminary index set and the support index set of the previous iteration.

Estimation: Compute a transitional estimate of the signal on the candidate index set by the least squares method.

Prune: Sort the absolute values of the transitional estimate in descending order, select the leading entries and find the atomic indices of the measurement matrix corresponding to those entries, which form the support atomic index set.

Update: Update the final estimate of the signal at the current iteration, which is supported on the support atomic index set.

Check: When the $\ell_2$-norm of the signal residual is less than the tolerance error of the StoGradMP algorithm, the iteration is halted. Likewise, if the loop index exceeds the maximum number of iterations, the method ends and outputs the approximation of the signal. Otherwise, the iteration continues until the halting condition is met.
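The steps above can be sketched in NumPy for a least-squares objective. This is a minimal illustration, not the authors' implementation or Algorithm 1 verbatim: the function name `sto_grad_mp`, the uniform block sampling, the choice of 2K entries in the Identify step and K entries in the Prune step (the usual CoSaMP-style choice), and the default tolerance are assumptions.

```python
import numpy as np


def sto_grad_mp(Phi, y, K, block_size, max_iter=300, tol=1e-8, seed=0):
    """Sketch of StoGradMP for y = Phi x with a K-sparse x (assumed settings)."""
    rng = np.random.default_rng(seed)
    M, N = Phi.shape
    n_blocks = M // block_size
    x = np.zeros(N)
    support = np.array([], dtype=int)
    for _ in range(max_iter):
        # Randomize: pick one block of rows uniformly at random
        i = rng.integers(n_blocks)
        rows = slice(i * block_size, (i + 1) * block_size)
        A_b, y_b = Phi[rows], y[rows]
        # Proxy: gradient of the selected least-squares sub-function
        grad = A_b.T @ (A_b @ x - y_b) / block_size
        # Identify: indices of the 2K largest gradient magnitudes (assumed count)
        prelim = np.argsort(np.abs(grad))[-2 * K:]
        # Merge: union with the support of the previous iterate
        merged = np.union1d(prelim, support)
        # Estimation: least squares on the merged index set, all measurements
        b_hat = np.zeros(N)
        b_hat[merged] = np.linalg.lstsq(Phi[:, merged], y, rcond=None)[0]
        # Prune: keep the K largest entries of the transitional estimate
        support = np.argsort(np.abs(b_hat))[-K:]
        # Update: final estimate supported on the pruned index set
        x = np.zeros(N)
        x[support] = b_hat[support]
        # Check: stop once the residual is below the tolerance
        if np.linalg.norm(y - Phi @ x) < tol:
            break
    return x
```

Only the Proxy step uses a single block; the Estimation step in this sketch still fits all measurements on the small merged index set, so its cost is governed by the size of that set rather than by the signal length.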

6. Discussion

In this section, we used the signal with different -sparsity as the original signal. The measurement matrix was randomly generated with a Gaussian distribution. All performances were an average calculated after running the simulation 100 times using a computer with a 32-core, 64-bit processor, two processors and a 32 G memory. We also set the recovery error of all recovery methods as and the tolerance error as . The maximum number of iterations of the recovery part of the proposed method was .

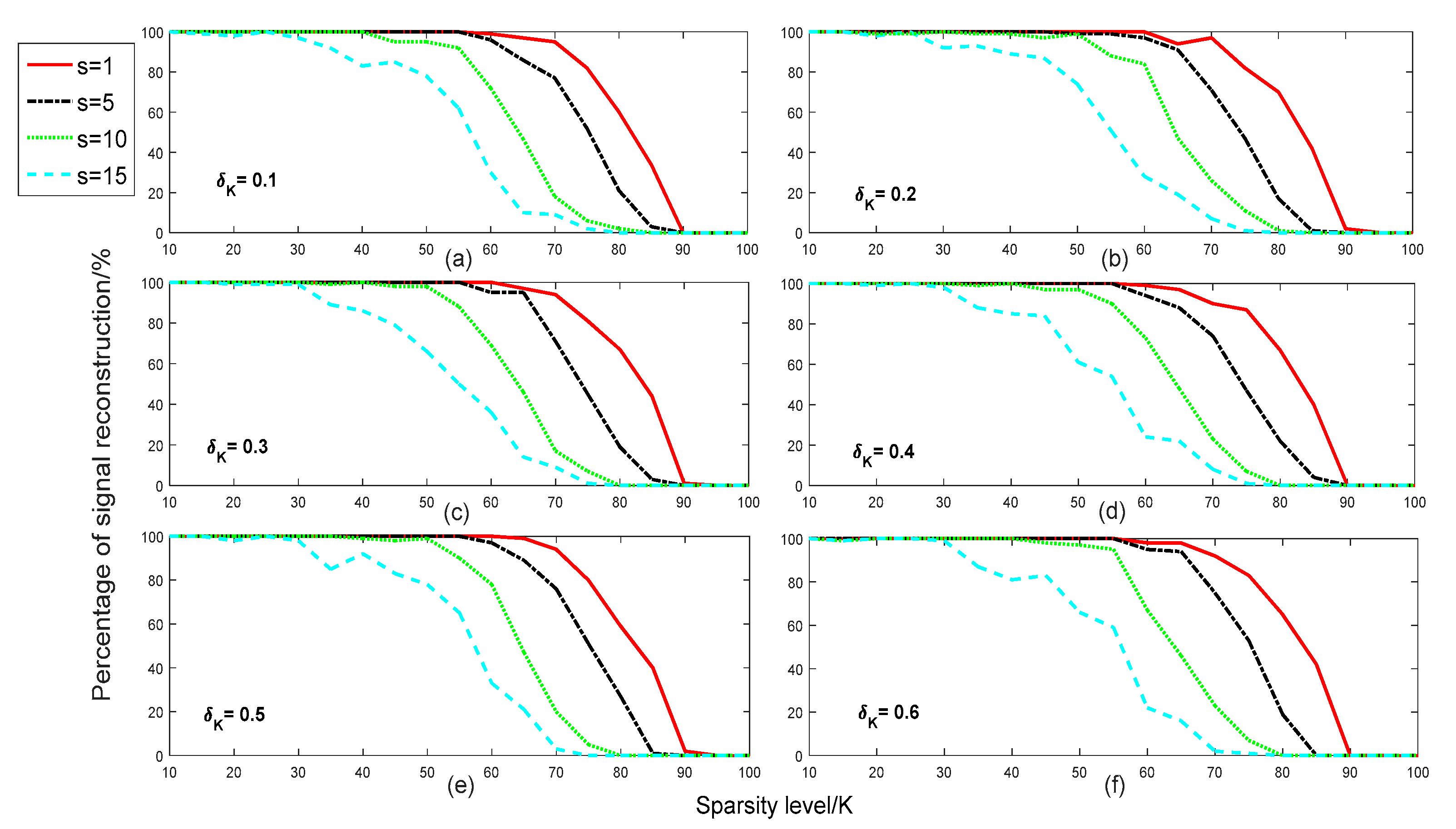

In

Figure 2, we compared the reconstruction percentage of different step-sizes of the proposed method with different sparsities in different isometry constants. We set the step size set and the range of sparsity as

and

, respectively. The isometry constant parameter set was

. From

Figure 2, we can see that the reconstruction percentage was very close, with almost no difference for all isometry constants

. This means that the selection of the isometry constants had almost no effect on the reconstruction percentage of the signal.

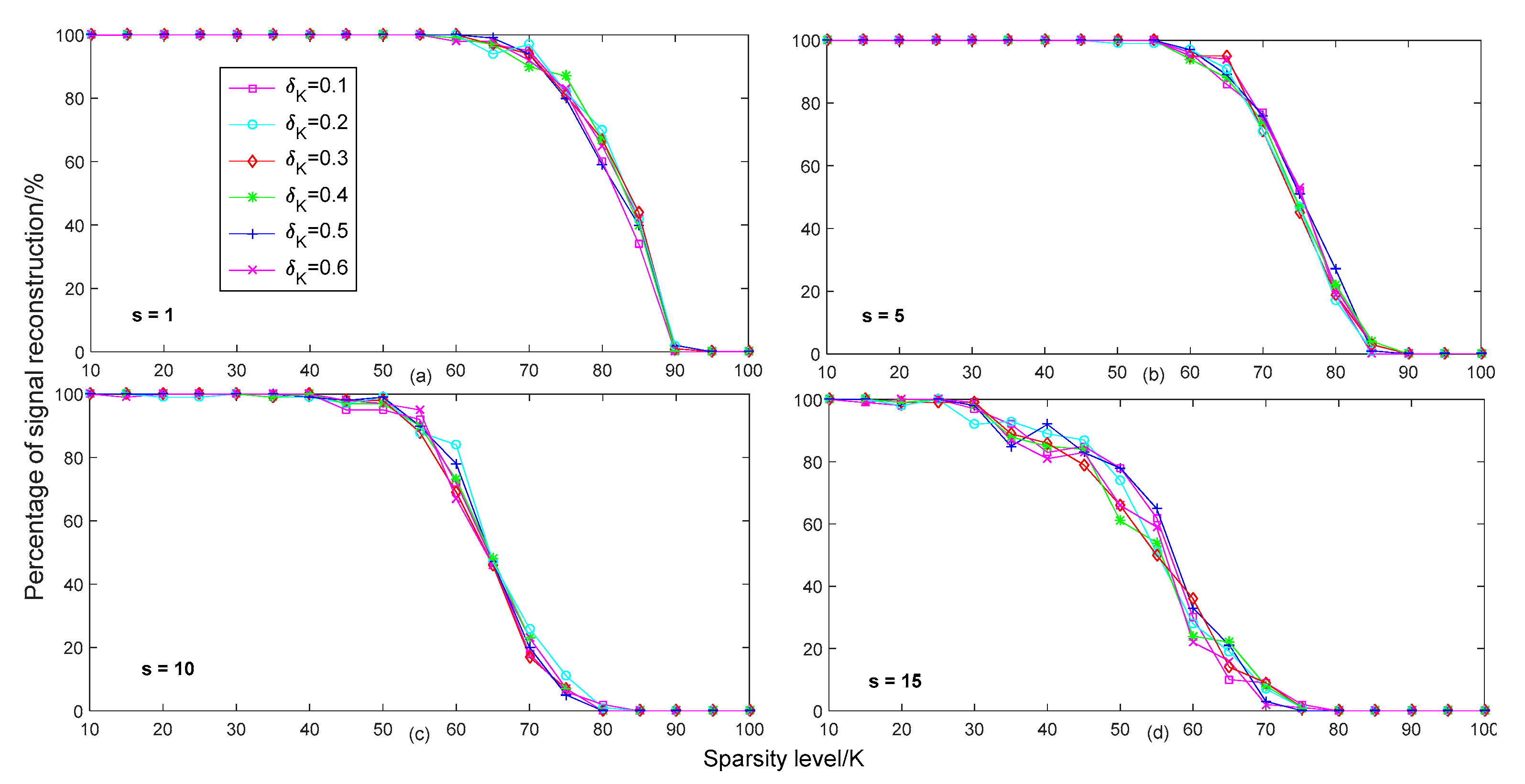

In Figure 3, we compared the reconstruction percentage for different isometry constants and different sparsities under different step-size conditions. To better analyze the effect of the step-size on the reconstruction percentage, the parameter settings in Figure 3 were consistent with those in Figure 2. From Figure 3, we can see that when the step-size was 1, the reconstruction performance was the best for all isometry constants. As the step-size increased, the reconstruction percentage of the proposed method gradually declined. In particular, when the step-size was 15, the reconstruction performance was the worst. This shows that a smaller step-size benefits the reconstruction of the signal.

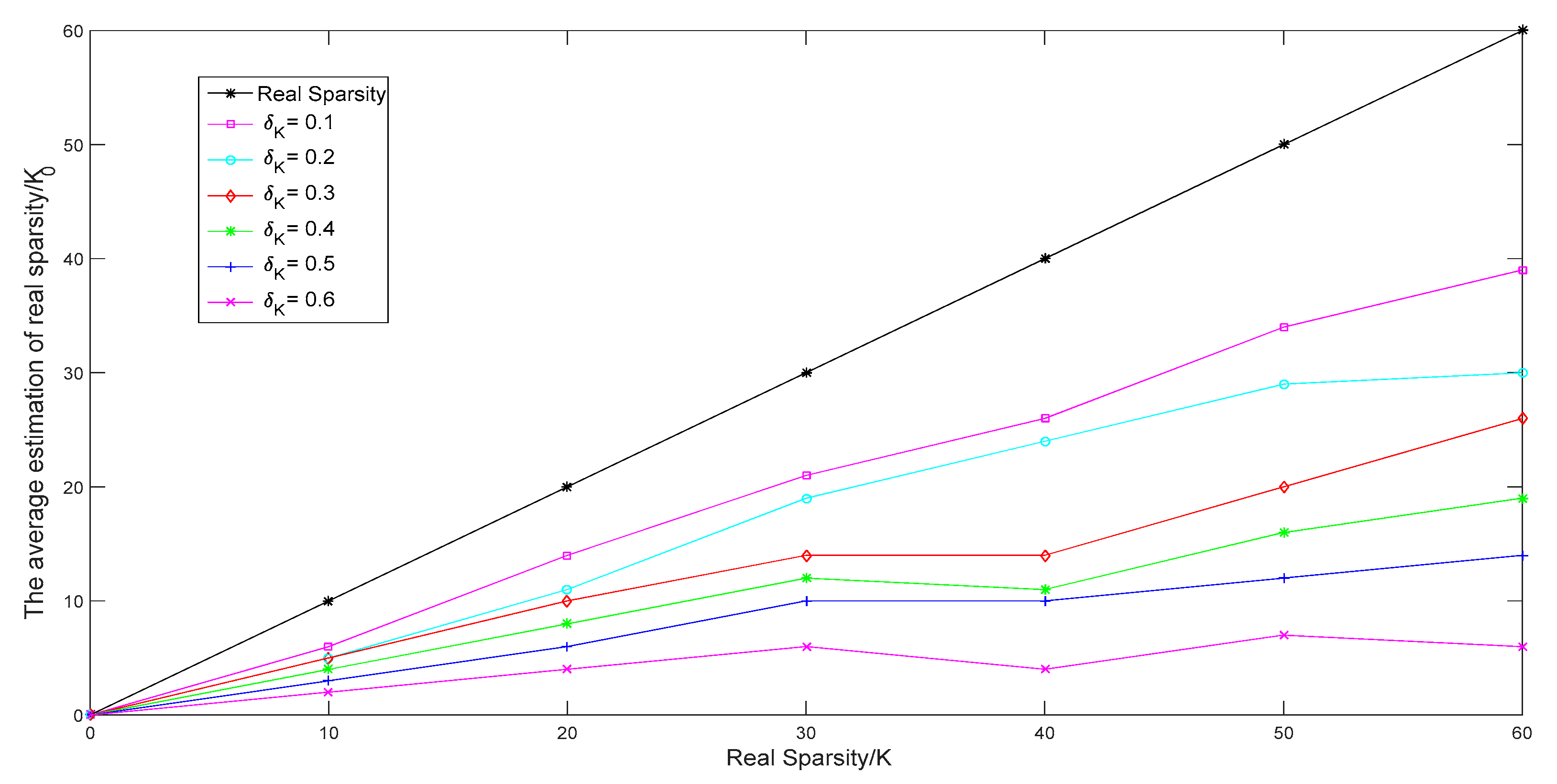

In

Figure 4, we compared the average estimate of the sparsity of different isometry constants

of the proposed method with different real sparsity

. We set the range of the real sparsity and isometry constant set as

and

, respectively. From Figure 4, we can see that when the isometry constant was equal to 0.1, the estimated sparsity was closer to the real sparsity of the original signal than for the other isometry constants. When the isometry constant was equal to 0.6, the estimated sparsity was much lower than the real sparsity of the signal. Therefore, a smaller isometry constant appears to be more useful for estimating the sparsity. Furthermore, this indicates that a smaller isometry constant can reduce the runtime spent on sparsity adjustments, allowing the recovery algorithm to approach the real sparsity of the signal more quickly and thereby decreasing the overall recovery runtime of the proposed method.

In

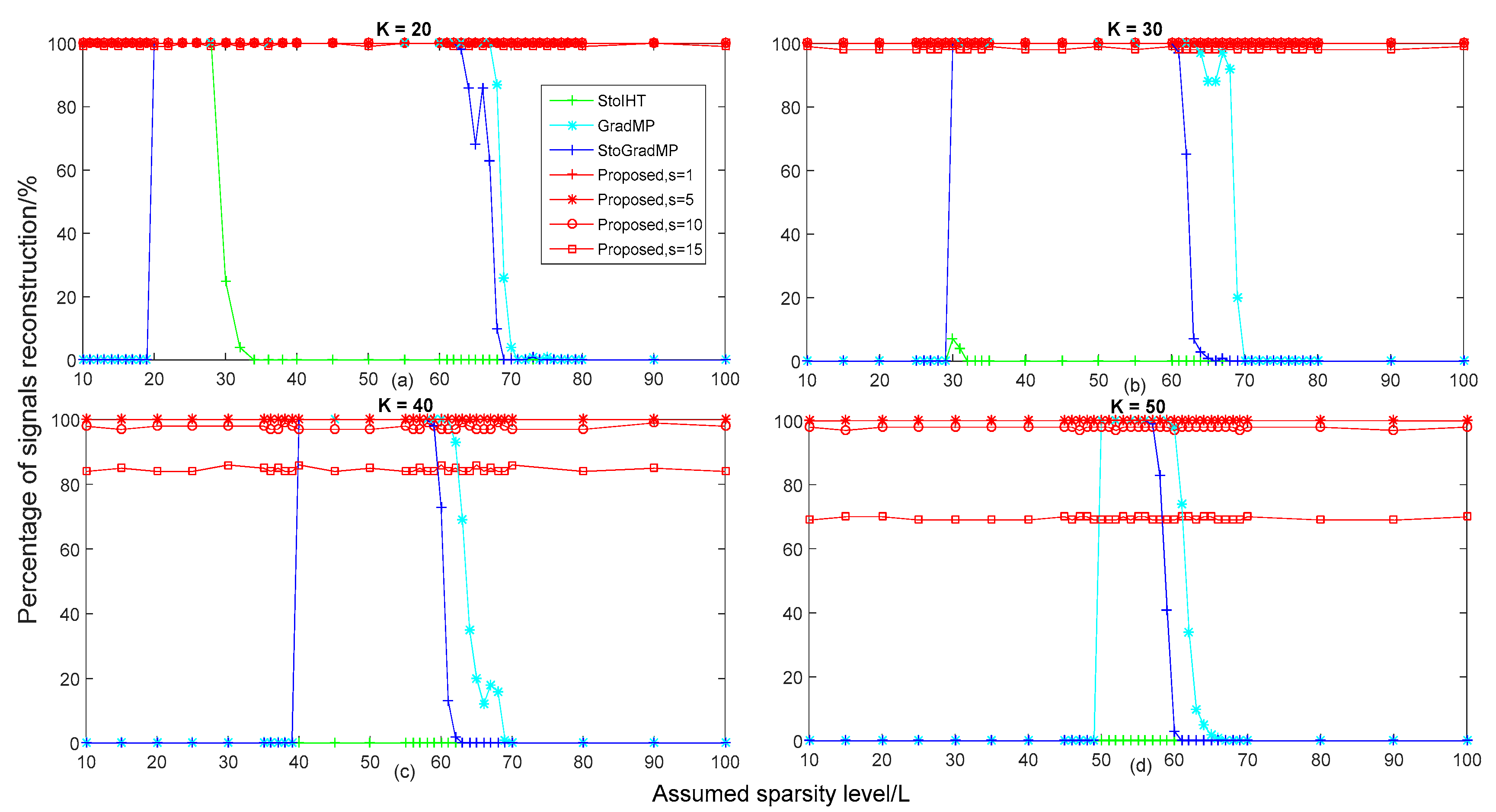

Figure 5, we compared the reconstruction percentage of different algorithms with different sparsities in different real sparsity conditions. We set the range of the real sparsity of the original signal and the assumed sparsity as

and

, respectively. From Figure 4, we can see that when the isometry constant was equal to 0.1, the estimated sparsity was higher than for the other isometry constants. Therefore, we set the isometry constant to 0.1 in the simulation in Figure 5. In Figure 5a,b, we can see that the proposed method had a higher reconstruction percentage than the other algorithms when the real sparsity was equal to 20 and 30, respectively, reaching 100% in almost all cases. In

Figure 5a, for real sparsity

, we can see that when the assumed sparsity

, the reconstruction percentage of the StoIHT, GradMP and StoGradMP algorithms was 0%, that is to say, these algorithms could not complete the signal recovery. When

, all recovery methods almost achieved a higher reconstruction percentage. When

, the reconstruction percentage of the StoIHT algorithm began to decline from approximately 100% to 0%, while the other algorithms still had a higher reconstruction percentage. When

, the reconstruction percentage of the StoIHT algorithm was 0%. For

, the reconstruction percentage of the GradMP and StoGradMP algorithms began to decline from approximately 100% to 0%. Moreover, the reconstruction percentage of the GradMP algorithm was higher than the StoGradMP algorithm in the variation range of this sparsity. In

Figure 5b, we can see that the reconstruction percentage of the StoIHT algorithm was still 0% for all assumed sparsity. When

, the reconstruction percentage of the GradMP and StoGradMP algorithms was equal to 0%, while the proposed method had a higher reconstruction percentage and was approximately 100%. For

, the reconstruction percentage of all recovery methods was approximately equal to 100%. When

, the reconstruction percentage of the StoGradMP algorithm began to decline from approximately 99% to 1%, while the GradMP algorithm still had a higher reconstruction percentage. In

Figure 5c,d, we can see that the reconstruction percentage of the proposed method with

decreased from approximately 99% to 84% and 69%, respectively. Furthermore, from all of the sub-figures in

Figure 5, we can see that when the assumed sparsity was close to the real sparsity, the reconstruction percentage of the GradMP and StoGradMP algorithms were very close, with almost no difference. In addition, when the real sparsity of the original signal gradually increased, the range of sparsity that maintained a higher reconstruction percentage became smaller. This means that the GradMP and StoGradMP algorithms were more sensitive to larger real sparsity.

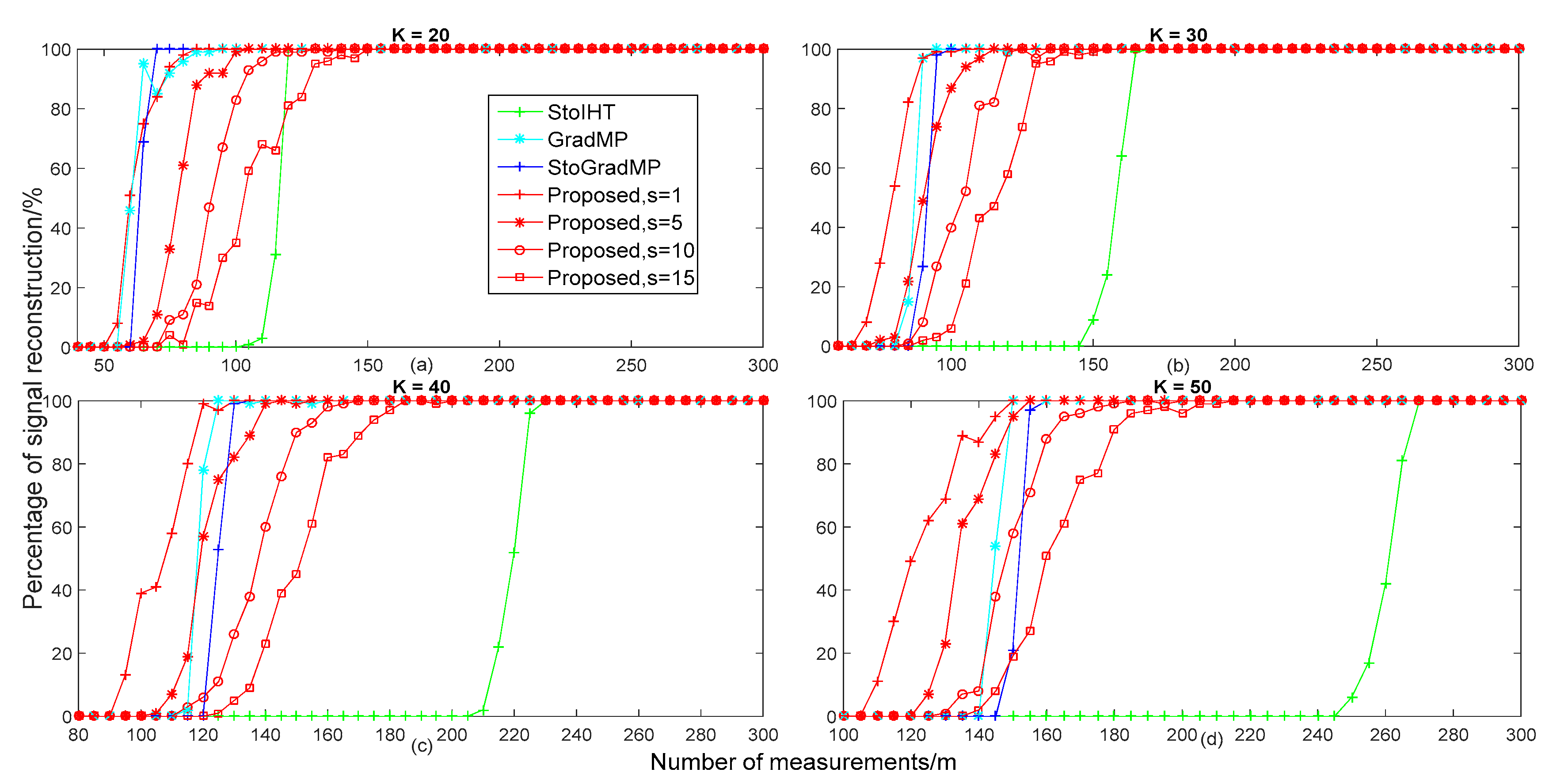

In

Figure 6, we compared the reconstruction percentage of different algorithms with different measurements in different real sparsity conditions. We set the range of real sparsity as

in the simulation of

Figure 6 to keep it consistent with

Figure 5. The range of the measurement was

. From

Figure 6, we can see that when the real sparsity ranged from 20 to 50, the proposed method was gradually higher than the other algorithms. In

Figure 6a, we can see that when

, the reconstruction percentage of the proposed method with

was higher than other methods. For

, the reconstruction percentage of the proposed method was lower than the StoGradMP and GradMP algorithms, except for the StoIHT algorithm. When

, the reconstruction percentage that the StoIHT algorithm was superior to the proposed method was

. When

, all of the recovery methods almost achieved higher reconstruction probabilities. In

Figure 6b, we can see that when

, the reconstruction percentage of the proposed method with

and

was higher than the StoGradMP and StoIHT algorithms. When

, the recovery percentage of the proposed method with

was higher than the StoIHT algorithm, except for the StoGradMP and GradMP algorithms. For

, the reconstruction percentage of the proposed method with

was higher than the proposed method with

and

, while the StoIHT algorithm still could not complete a recovery of the signal. When

, the reconstruction percentage of the StoIHT algorithm began to increase dramatically from approximately 0% to 100%, while the other algorithms still had a higher recovery percentage. When

, all of the methods almost achieved higher reconstruction probabilities. In

Figure 6c, we can see that when

, the reconstruction percentage of the proposed method with

and

was superior to the StoGradMP and StoIHT algorithms. For

, the recovery percentage of the proposed method with

was higher than the proposed method with

and

and the StoIHT algorithm, except for the StoGradMP algorithm. In

Figure 6d, we can see that the reconstruction percentage of the proposed method with

still had a higher recovery percentage than the other methods. When

, the reconstruction percentage of the proposed method with a random step-size was higher than the StoGradMP and StoIHT algorithms. For

, the reconstruction percentage of the proposed method with

and

was higher than the other methods. When

, the reconstruction percentage of the proposed method with

and

was superior to the StoIHT algorithm. When

, the reconstruction percentage of the StoIHT algorithm ranged from approximately 0% to 100%. When

, all of the methods could achieve complete recovery. Overall, based on all of the sub-figures in

Figure 6, we can see that the reconstruction performance of the proposed method with

was the best and the proposed method was more suitable for signal recovery under larger sparsity conditions.

Based on the above analysis, in a noise-free signal interference environment, the proposed method with and has a better recovery performance for different sparsity and measurements in comparison to other methods. Furthermore, the proposed method is more sensitive to larger sparsity signals. In other words, signals are more easily recovered in large sparsity environments.

In

Figure 7, we compared the average runtime of different algorithms with different sparsities. From

Figure 5a, we can see that the reconstruction percentage was 100% for the StoIHT algorithm with sparsity

and the real sparsity of

and for the GradMP, StoGradMP and the proposed method with

with

. Therefore, we set the range of the assumed sparsity as

and

in

Figure 7a, respectively. From

Figure 7a, we can see that the average runtime of the proposed algorithm with

was less than the StoGradMP algorithm, except for the proposed method

.

From

Figure 5b, we can see that the reconstruction percentage of all algorithms was 100% when the range of the assumed sparsity was

and the real sparsity was

, except for the StoIHT and the proposed method with

. Therefore, the range of the assumed sparsity was set as

in the simulation of

Figure 7b. From

Figure 7b, we can see that the average runtime of the proposed method with

was still lower than the StoGradMP algorithm.

From

Figure 5c,d, we can see that the reconstruction percentage of all reconstruction methods was 100% when the assumed sparsity level was

and

, respectively, except for the StoIHT and the proposed method with

and

. Therefore, we set the range of the assumed sparsity as

and

in the simulation of

Figure 7c,d, respectively. From

Figure 7c,d, we can see that the proposed algorithm with

had a shorter runtime than the StoGradMP algorithm. Although the proposed method with

had a longer runtime than the other methods, it required fewer measurements to achieve the same reconstruction percentage as the others, as shown in

Figure 6. Furthermore, from all sub-figures in

Figure 7, we discovered that the average runtime of all algorithms increased when the assumed sparsity was gradually greater than the real sparsity, except for in the proposed method. This means that the inaccuracy of the sparsity estimation will increase the computational complexity of these algorithms. Meanwhile, it is indicated that the proposed method removes the dependence of the state-of-the-art algorithms on real sparsity and enhances the practical application capacity of the proposed algorithm.

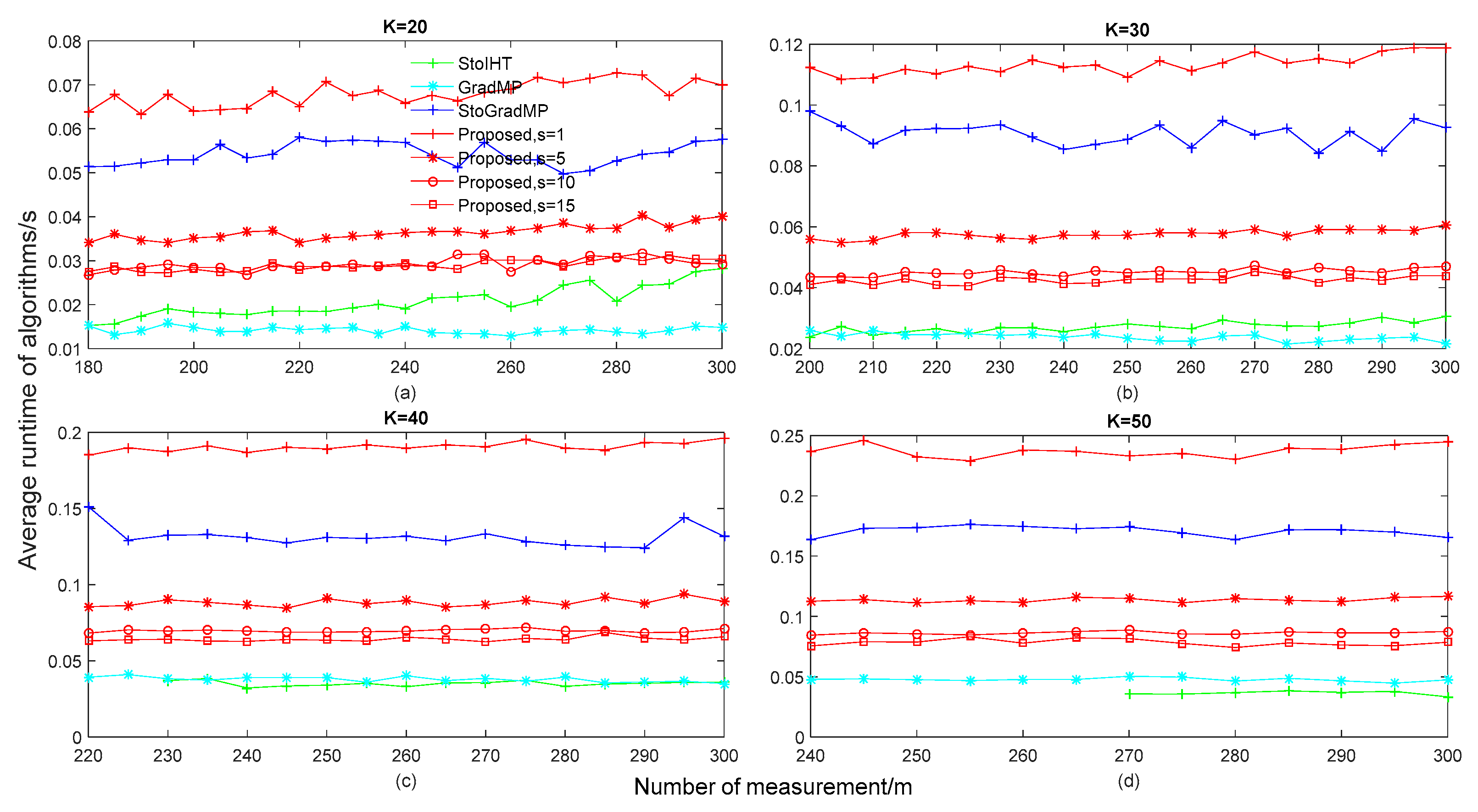

In

Figure 8, we compared the average runtime of different algorithms with different measurements in different real sparsity conditions. From

Figure 6, for the different sparsity levels, we can see that all algorithms could achieve 100% reconstruction when the number of measurements was greater than 180, 200, 220 and 240, respectively, except for the StoIHT algorithm. Therefore, we set the range of measurements as

,

,

, and

in

Figure 8a–d, respectively. In particular, in

Figure 6c,d, we can see that the reconstruction percentage was 100% when the number of measurements of the StoIHT algorithm was greater than 230 and 270, respectively. Therefore, we set the range of measurements as

and

in the simulation of

Figure 8c,d, respectively.

From

Figure 8, we can see that the GradMP algorithm had the lowest runtime, the next lowest were the StoIHT algorithm, the proposed algorithm with

and the StoGradMP algorithm. This means that the proposed method with

had a lower computational complexity than the StoGradMP algorithm, except for the GradMP and StoIHT algorithms. Meanwhile, in terms of the proposed algorithm, we can see that when the size of the step-size was

, the average runtime was the shortest, the next shortest were the proposed method with

, the proposed method with

and the proposed method with

, respectively. This shows that a larger step-size will be beneficial to approach the real sparsity

of the original signal, thereby reducing the computational complexity of the proposed method. Furthermore, from

Figure 6 and

Figure 8, although the proposed method with

had the highest runtime, it could achieve reconstruction with fewer measurements than the other algorithms.

Based on the above analysis, in a noise-free environment, the proposed algorithm had a lower computational complexity with a larger step-size than with a smaller step-size. Although the proposed method had a higher computational complexity than some existing algorithms under some conditions, it is more suitable for applications in which the sparsity information is unknown.

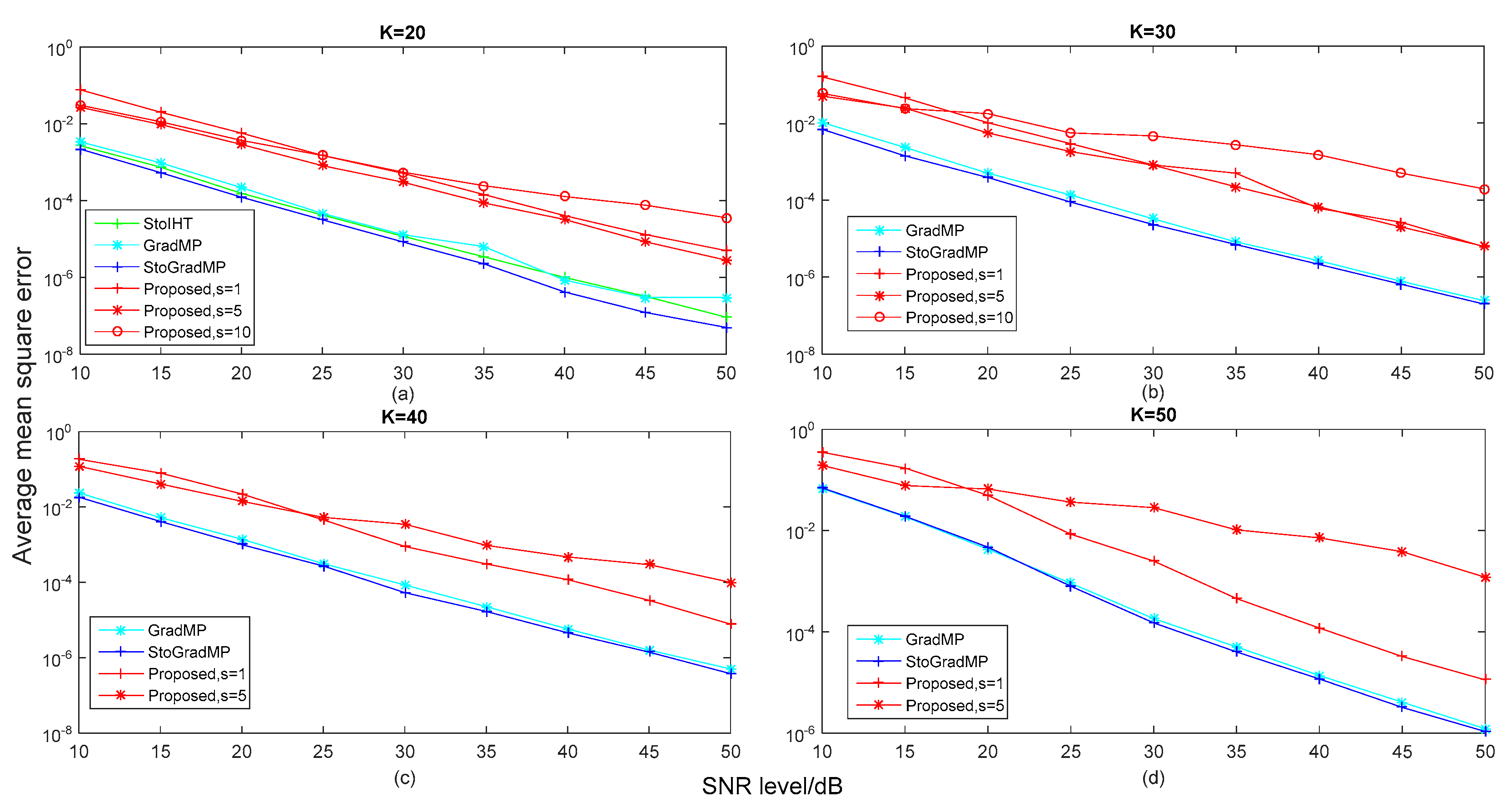

In

Figure 9, we compared the average mean square error of different algorithms with different

levels in different real sparsity conditions to better analyze the reconstruction performance of the different algorithms when the original sparse signal was corrupted with different levels of noise. We set the range of the noise signal level as

in simulation of

Figure 9. Furthermore, to better analyze the reconstruction performance of all reconstruction algorithms in different real sparsity levels conditions, we set the real sparsity level

as 20, 30, 40 and 50, respectively. Here, the noise signal was a Gaussian white noise signal. In particular, all of the experimental parameters were consistent with

Figure 5. In

Figure 5a,b, the reconstruction percentage of the proposed method was 100% with a step-size of

. Therefore, we set the size of

as 1, 5 and 10 in the simulation of

Figure 9a,b, respectively. In

Figure 5c,d, the reconstruction percentage of the proposed method was 100% with a step-size of

. Thus, we set the size of the step-size of the proposed method as 1 and 5 in

Figure 9c,d, respectively.
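For readers who wish to reproduce this kind of noise experiment, the sketch below shows one common way to corrupt a signal with white Gaussian noise at a prescribed SNR and to compute the mean square error. The SNR definition (ratio of average signal power to average noise power, in dB), the point at which the noise is added, and the function names are assumptions, since this excerpt does not specify them.

```python
import numpy as np


def add_white_gaussian_noise(signal, snr_db, rng):
    """Return (noisy signal, noise) with the noise scaled to the requested SNR.

    Assumed definition: SNR_dB = 10 * log10(mean(signal^2) / mean(noise^2)).
    """
    noise = rng.standard_normal(signal.shape)
    signal_power = np.mean(signal ** 2)
    target_noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    # Rescale so the empirical noise power matches the target exactly
    noise *= np.sqrt(target_noise_power / np.mean(noise ** 2))
    return signal + noise, noise


def mean_square_error(x_true, x_hat):
    """Average squared difference between the true and reconstructed signals."""
    return np.mean((np.asarray(x_true) - np.asarray(x_hat)) ** 2)
```

Averaging `mean_square_error` over repeated trials at each SNR level gives an error curve of the kind compared across algorithms here.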

From

Figure 9, we can see that the proposed methods with different step-sizes had a higher error than other algorithms for different

levels. This is because the proposed method assumed that the sparsity of the source signal was unknown, whereas the other methods used the real sparsity as prior information. The sparsity estimated by the proposed method still differed from the real sparsity, which gave the proposed method a higher error than the others. In particular, at larger SNR the error was very small for all algorithms and had little effect on the reconstructed signal. Although the proposed method was inferior to the other algorithms in terms of reconstruction performance when the original sparse signal was corrupted by different levels of noise, it provides a reconstruction scheme that is more suitable for practical applications. In this paper, we mainly focused on noise-free environments. Recently, in References [33,34,35,36], researchers focused on reconstruction of the original signal in the presence of noise corruption and proposed several algorithms. In the future, we can draw on their ideas to improve the anti-noise performance of our proposed method.

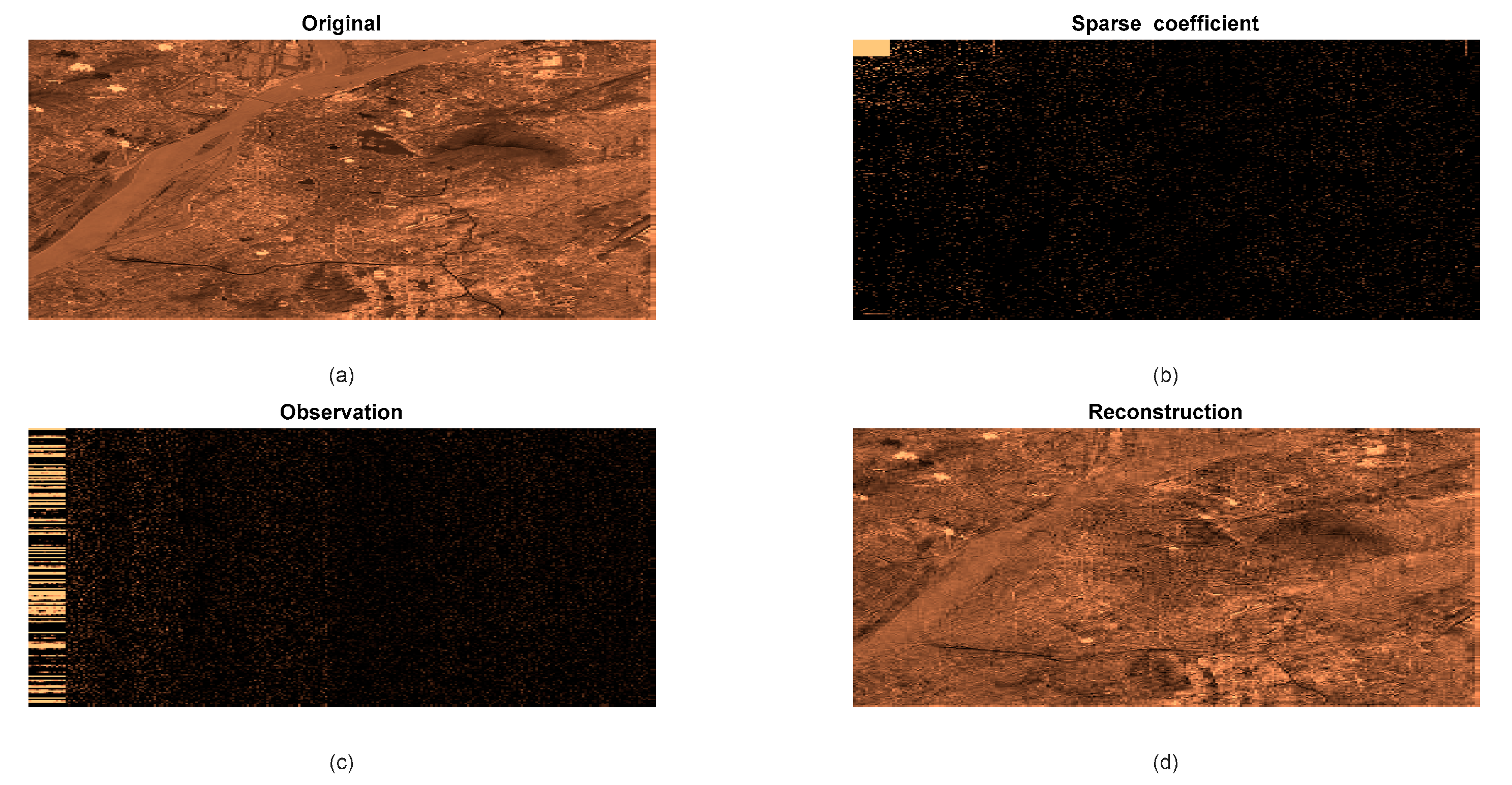

In Figure 10, we tested the effectiveness of our proposed method in remote sensing image compression and reconstruction. Figure 10a–d show the original remote sensing image, its sparse coefficients, the compressed image (observation signal) and the image reconstructed by our proposed method, respectively. By comparing Figure 10a with Figure 10d, we can see that our proposed method reconstructs the compressed remote sensing image successfully.

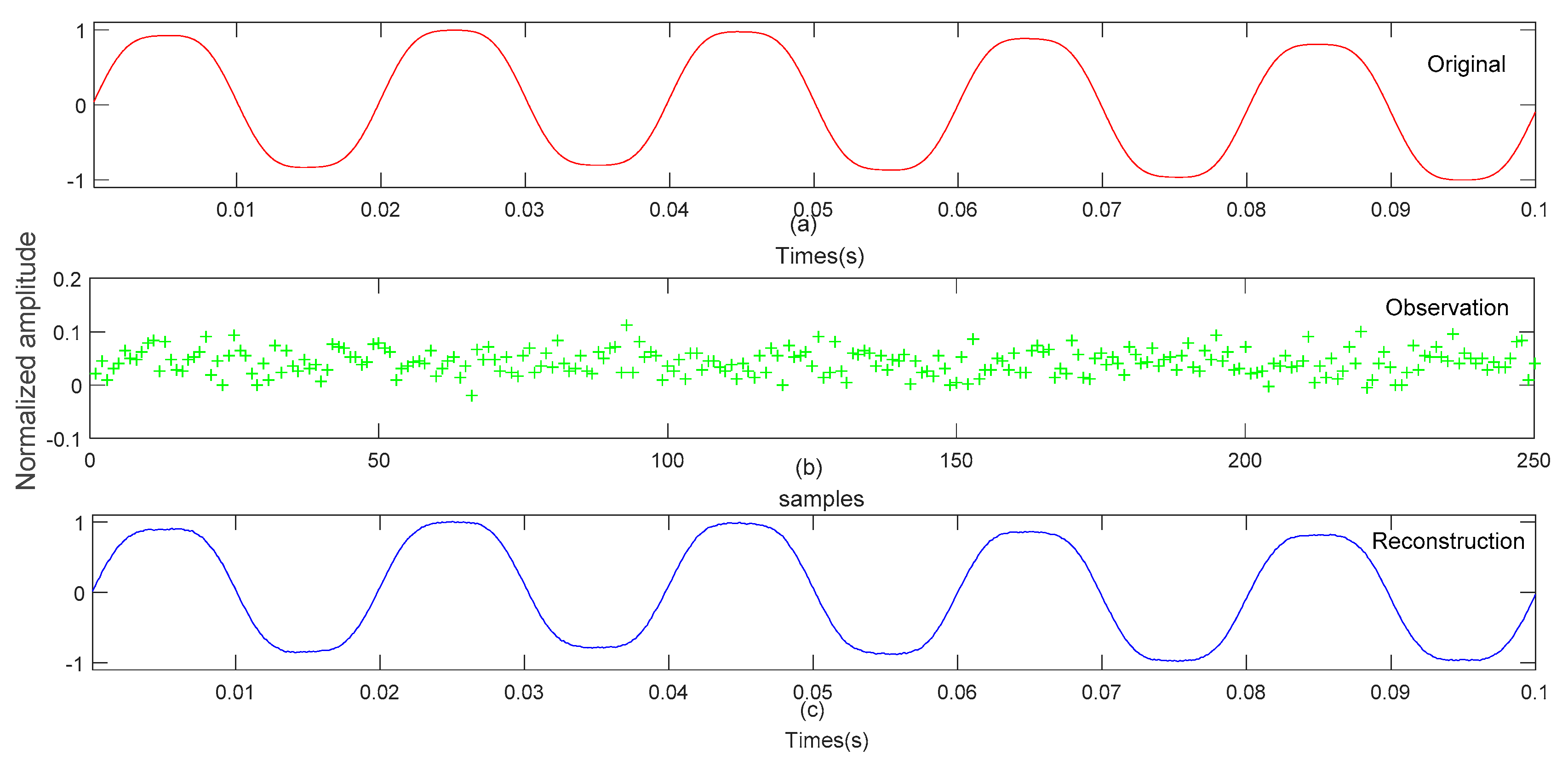

In Figure 11, we tested the effectiveness of our proposed method in power quality signal compression and reconstruction. Figure 11a–c show the inter-harmonic signal, the compressed signal (observation signal) and the inter-harmonic signal reconstructed by our proposed method, respectively. It can be seen from Figure 11a,c that the two waveforms are basically the same. This indicates that our proposed method is effective for inter-harmonic reconstruction.

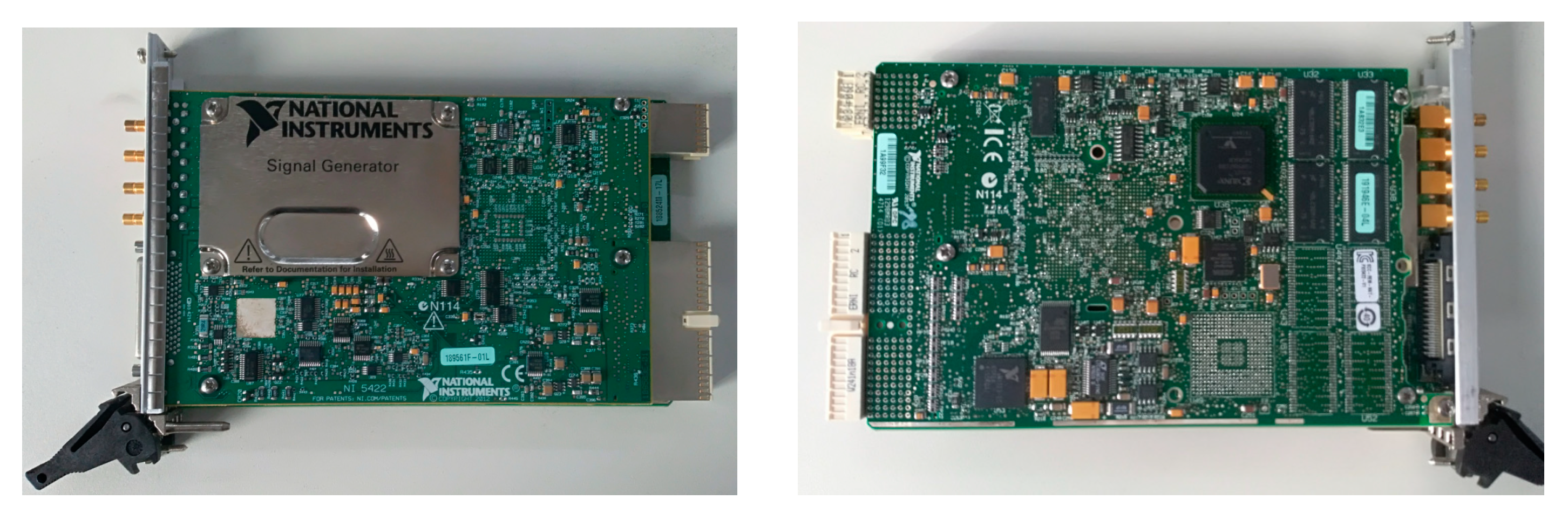

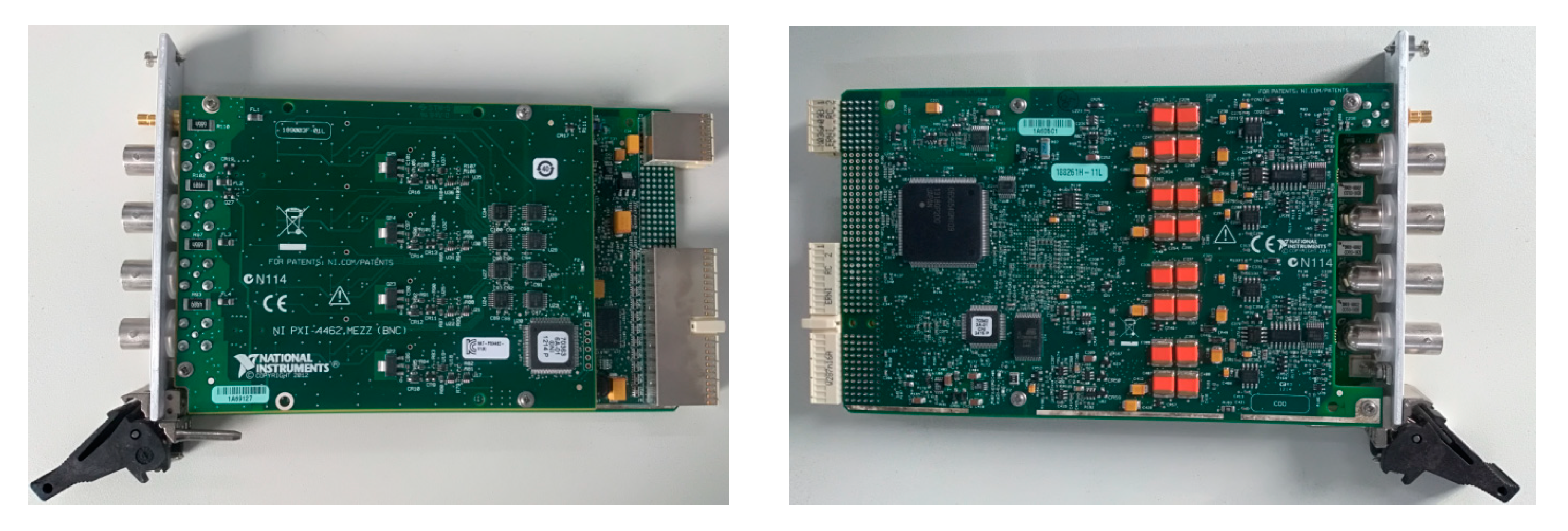

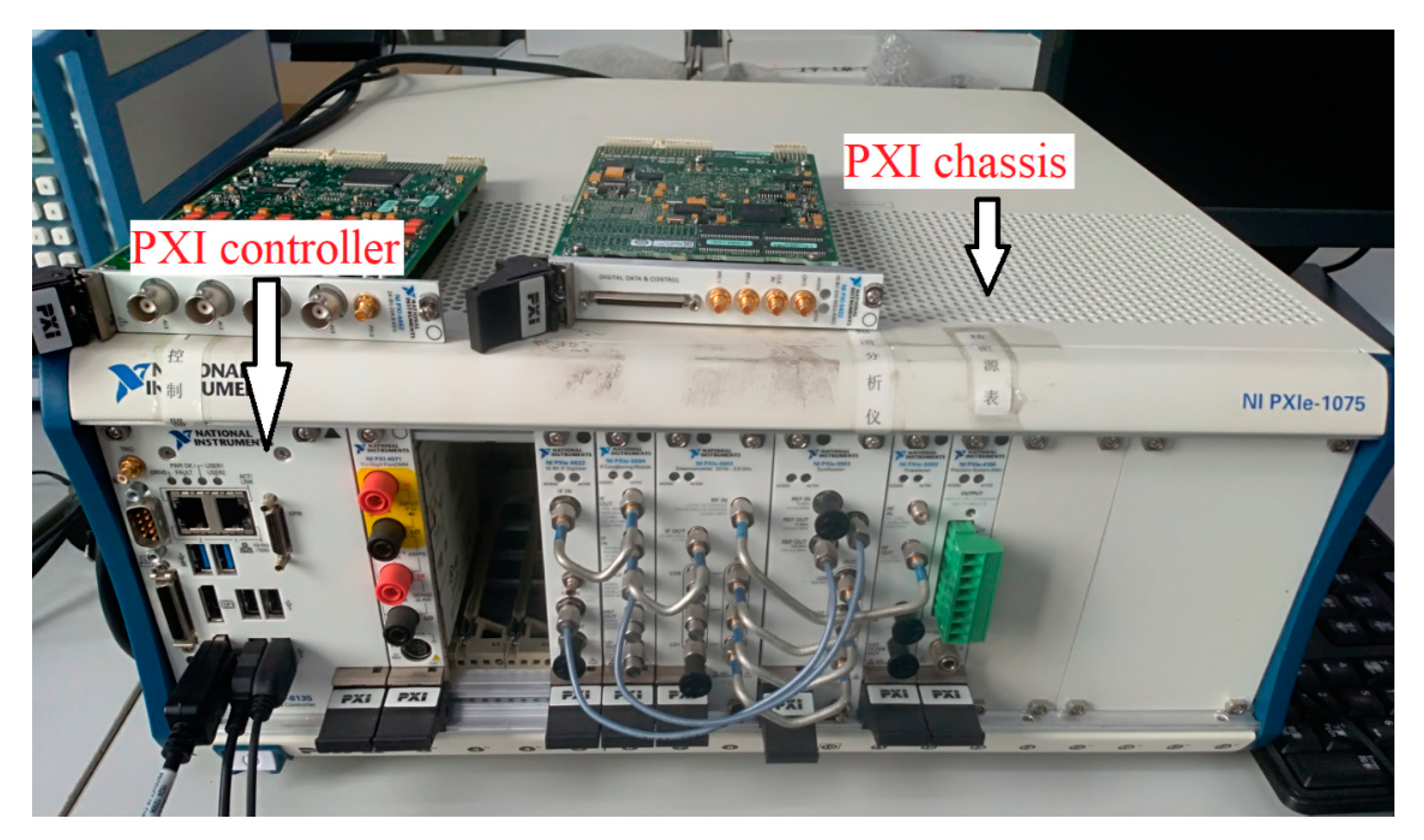

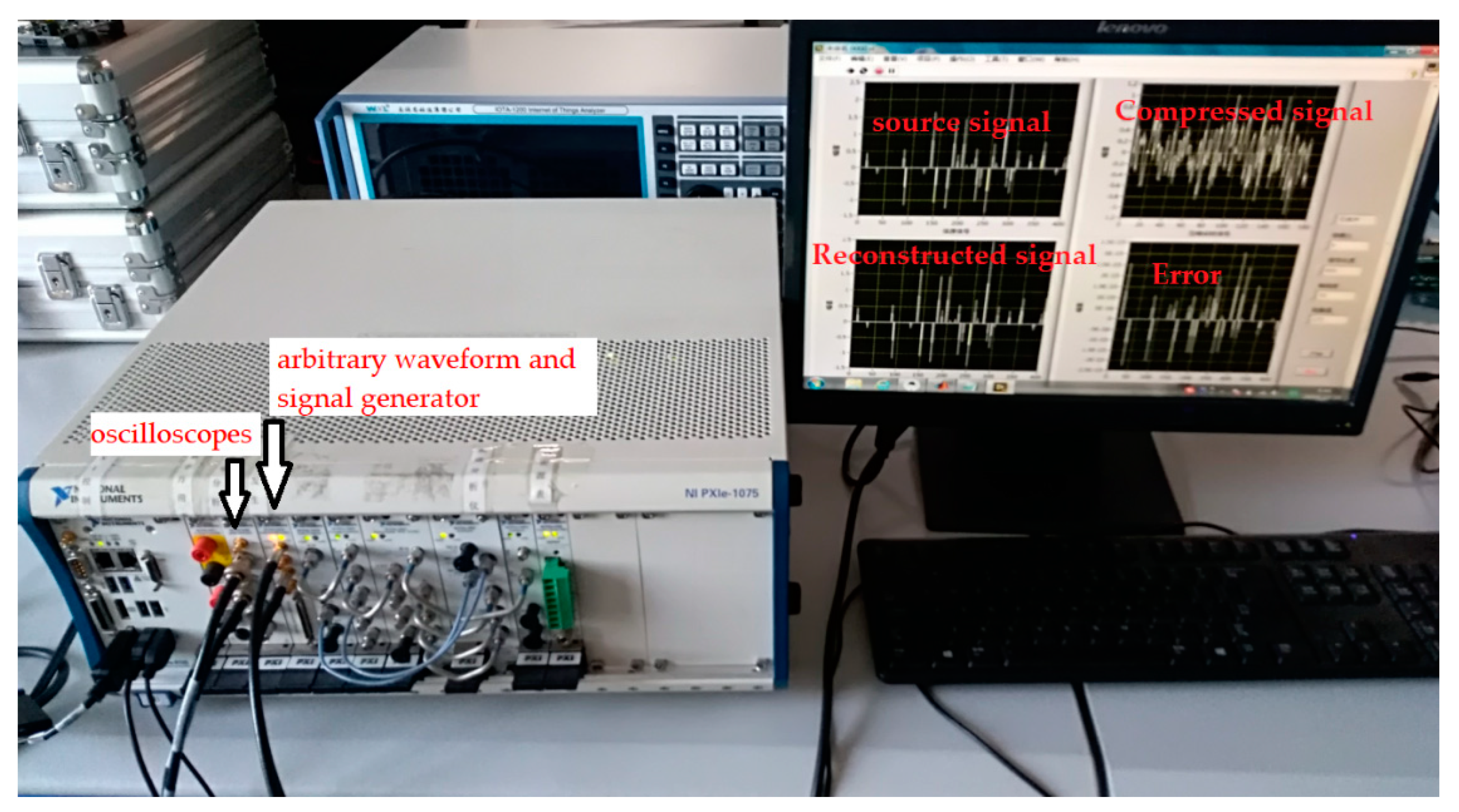

We also used the National Instruments PXI (peripheral component interconnect extensions for instrumentation) system to test the effectiveness of our proposed method in application. The hardware of the PXI system includes an arbitrary waveform and signal generator and oscilloscopes. The hardware architecture of the arbitrary waveform and signal generator is shown in Figure 12, and the hardware architecture of the oscilloscopes is shown in Figure 13. Figure 14 shows the PXI chassis and controller, which are used to control the arbitrary waveform and signal generator and the oscilloscopes. We inserted the arbitrary waveform and signal generator and the oscilloscopes into the PXI chassis to construct the complete measurement device, as shown in Figure 15. Mixed programming in LabVIEW and MATLAB was used to implement the compression and reconstruction algorithm. From the experimental results, it can be seen that the proposed method successfully reconstructed the source signal from the compressed signal.