This section presents the experimental validation and performance evaluation of the bio-inspired gecko robot system developed in this study.

4.2. YOLOv5 Detection Performance

The model is trained on the training set, starting from a pre-trained model and fine-tuning the network through transfer learning. The hyperparameters used in the experiment are listed in Table 4.

To further analyze the robustness of the selected hyperparameters, we examined the influence of key parameters on training stability and detection performance.

Learning rate. The initial learning rate was set to 0.01. Increasing the learning rate beyond this value resulted in noticeable oscillations in the training loss and reduced convergence stability. Conversely, reducing the learning rate below 0.005 slowed convergence and increased training time without yielding significant improvement in mAP. Therefore, 0.01 provides a balanced trade-off between convergence speed and stability.

Batch size. A batch size of 16 was selected considering GPU memory constraints and gradient stability. Increasing the batch size slightly improved gradient smoothness but led to higher memory consumption and longer per-epoch training time. Reducing the batch size below 8 introduced larger fluctuations in loss curves, which negatively affected convergence stability.

Number of epochs. Training for fewer than 80 epochs resulted in incomplete convergence and slightly lower mAP values. Increasing the number of epochs beyond 150 did not produce significant accuracy gains but increased training time, indicating diminishing returns.

Input image size. The input resolution was chosen as a compromise between detection accuracy and computational cost. Increasing the resolution marginally improved the detection of small objects but increased inference latency. Decreasing the resolution reduced the computational load but slightly degraded detection precision.

Overall, the selected hyperparameter configuration ensures stable convergence and balanced performance under the computational constraints of the gecko-inspired robotic platform. To provide a clearer overview of the parameter sensitivity analysis, the effects of varying key hyperparameters are summarized in Table 5. This summary illustrates the rationale for the selected configuration and its balance between convergence stability, detection accuracy, and computational efficiency.
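As a compact illustration of the configuration discussed above, the following sketch records the values stated in the text (initial learning rate 0.01, batch size 16, 100 training epochs) together with the qualitative sensitivity observations. The class name, field names, and helper function are hypothetical and are not part of the YOLOv5 code base. The image size is left unset because its value is not given in this section.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class TrainConfig:
    """Training settings stated in the text; names are illustrative only."""
    lr0: float = 0.01                # initial learning rate
    batch_size: int = 16
    epochs: int = 100                # number of training epochs reported
    img_size: Optional[int] = None   # not given in this section (see Table 4)


def sensitivity_notes(cfg: TrainConfig) -> List[str]:
    """Return the qualitative effects reported for values outside the tested range."""
    notes = []
    if cfg.lr0 > 0.01:
        notes.append("lr0 > 0.01: loss oscillations, reduced convergence stability")
    if cfg.lr0 < 0.005:
        notes.append("lr0 < 0.005: slower convergence, no significant mAP gain")
    if cfg.batch_size < 8:
        notes.append("batch_size < 8: larger fluctuations in the loss curves")
    if cfg.epochs < 80:
        notes.append("epochs < 80: incomplete convergence, slightly lower mAP")
    if cfg.epochs > 150:
        notes.append("epochs > 150: longer training, diminishing returns")
    return notes


if __name__ == "__main__":
    print(sensitivity_notes(TrainConfig()))            # selected configuration
    print(sensitivity_notes(TrainConfig(lr0=0.02)))    # a larger learning rate
```

The selected configuration produces no notes, which matches the observation that it sits inside the stable range on every axis examined.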

The model was trained on the dataset using the hyperparameters described above, for a total of 100 epochs. During training, the generated plots and prediction images provided an intuitive view of the model’s progress and performance at different stages.

Figure 8 shows the prediction results for the model validation set, where each box represents the object category identified by the model and its corresponding detection confidence score. From the figure, it can be seen that the confidence scores for most objects are at a high level. Each sub-figure represents a different image frame, showcasing object detection results in various scenes and demonstrating the model’s performance under diverse conditions. This reflects the model’s accuracy and reliability.

Figure 9 shows the YOLOv5 training results on the dataset. It displays the loss curves for both the training set and the validation set, together with the evolution of evaluation metrics such as precision, recall, and mean average precision (mAP). Box_loss measures the difference between the predicted box and the ground-truth box. It decreases steadily as training progresses, indicating that spatial localization improves. Obj_loss is the target confidence (objectness) loss. It also decreases gradually, indicating that the model becomes more confident in target detection. Cls_loss is the classification loss, which decreases as training progresses, indicating improved classification performance. Val/box_loss, val/obj_loss, and val/cls_loss all follow a decreasing trend similar to the training losses, indicating that the model also performs well on the validation set and shows no obvious overfitting. Recall is the proportion of ground-truth targets that are successfully detected. Its value is close to 1, indicating that the model misses very few real targets. mAP_0.5 and mAP_0.5:0.95 reflect the overall detection ability of the model under different IoU thresholds. Both values increase gradually during training, indicating improved detection performance; the closer an mAP value is to 1, the better the overall performance of the model. The training curves show stable learning behavior in both training and validation, with continuously decreasing losses and increasing precision and recall. Overall, the training results indicate stable convergence and suitability for practical object detection tasks.
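For reference, the quantities behind these curves can be written out in a few lines. The sketch below is a minimal illustration and not the YOLOv5 evaluation code: it computes the IoU of two axis-aligned boxes and the precision and recall from counts of true positives, false positives, and false negatives. The function names and the `(x1, y1, x2, y2)` box format are assumptions made for this example. mAP_0.5 counts a detection as correct when its IoU with a ground-truth box is at least 0.5, while mAP_0.5:0.95 averages over IoU thresholds from 0.5 to 0.95 in steps of 0.05.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0.0 else 0.0


def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    return precision, recall


if __name__ == "__main__":
    print(iou((0, 0, 2, 2), (1, 1, 3, 3)))   # overlap 1, union 7
    print(precision_recall(tp=9, fp=1, fn=3))
```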

The two graphs in Figure 10 show how the precision and recall of the model vary with the confidence threshold. As the threshold increases from 0, overall precision rises, because a higher threshold retains only the more certain detections. The precision over all categories reaches 1.00 at a confidence threshold of 0.947, meaning that every detection retained at this threshold is correct. Individual categories such as “chair” and “cabinet” already achieve relatively high precision at low confidence thresholds, and their precision tends to saturate as the threshold becomes high. Recall remains high over most of the confidence range and is particularly stable at low thresholds, indicating that the model detects most of the targets. Precision and recall are good across the different categories, so the threshold can be chosen to balance the two according to the needs of the application.

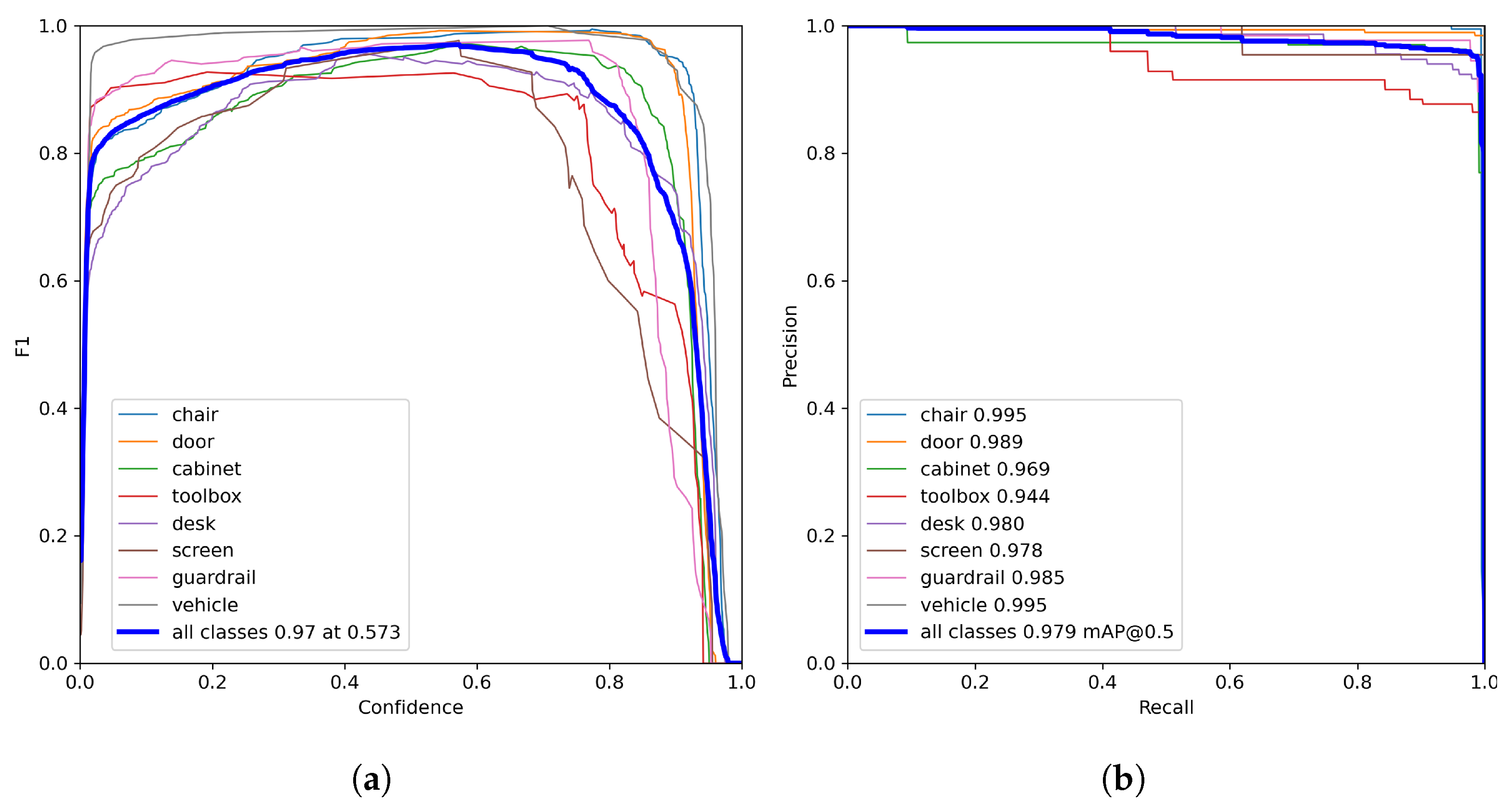

The two graphs in Figure 11 illustrate the variation of the F1-score and the precision–recall (PR) curves of the proposed YOLOv5 model under different confidence thresholds. These metrics are commonly used to evaluate detection performance and to determine an appropriate operating point for practical deployment.

As shown in Figure 11a, the F1-score of all categories increases with the confidence threshold and reaches its maximum value of 0.97 at a threshold of 0.573. Since the F1-score represents the harmonic mean of precision and recall, this operating point provides the best overall balance between false positives and missed detections. Therefore, the threshold corresponding to the maximum F1-score is considered the most suitable choice for practical obstacle detection.
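The selection of the operating point by maximum F1-score can be sketched as a sweep over candidate thresholds. The example below is a simplified illustration under stated assumptions: each detection is assumed to be already labeled as a true or false positive at a fixed IoU threshold, and the function name, arguments, and toy data are hypothetical rather than taken from the experiments.

```python
from typing import Sequence, Tuple


def best_f1_threshold(
    scores: Sequence[float],
    is_true_positive: Sequence[bool],
    num_ground_truth: int,
    thresholds: Sequence[float],
) -> Tuple[float, float]:
    """Return (threshold, F1) for the threshold with the highest F1-score."""
    best_t, best_f1 = thresholds[0], -1.0
    for t in thresholds:
        # Keep only detections whose confidence is at least the threshold.
        kept = [tp for s, tp in zip(scores, is_true_positive) if s >= t]
        tp = sum(kept)
        fp = len(kept) - tp
        precision = tp / (tp + fp) if kept else 0.0
        recall = tp / num_ground_truth if num_ground_truth else 0.0
        denom = precision + recall
        # F1 is the harmonic mean of precision and recall.
        f1 = 2.0 * precision * recall / denom if denom else 0.0
        if f1 > best_f1:
            best_t, best_f1 = t, f1
    return best_t, best_f1


if __name__ == "__main__":
    # Toy detections, not data from the paper.
    scores = [0.95, 0.90, 0.80, 0.60, 0.40, 0.30]
    labels = [True, True, True, False, True, False]
    print(best_f1_threshold(scores, labels, 5, [0.2, 0.5, 0.7, 0.92]))
```

In the toy data, the highest threshold gives perfect precision but low recall, so its F1-score is lower than that of a moderate threshold, which mirrors the reasoning used to choose the operating point.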

The PR curves in Figure 11b further demonstrate the detection capability of the model across different recall levels. Most object categories exhibit high precision even at large recall values, particularly for classes such as chair, guardrail, and vehicle, indicating strong robustness and consistent detection performance. The overall mAP reaches 0.979, confirming the effectiveness of the trained model.

It is also observed that when the confidence threshold is increased to 0.947, the precision of all categories reaches 1.00, meaning that all remaining predicted bounding boxes are correct at this operating point. However, this perfect precision is obtained at the expense of recall, as a large number of low-confidence detections are suppressed, resulting in missed obstacle detections.

In object detection systems, the confidence threshold directly controls the trade-off between precision and recall. A higher threshold reduces false positives but increases the risk of missed detections, whereas a lower threshold improves recall while introducing additional false alarms. The confidence threshold mainly affects detection quality during post-processing and has negligible influence on inference speed.

For robotic obstacle avoidance tasks, missed detections pose a greater safety risk than occasional false positives. Consequently, although a threshold of 0.947 achieves perfect precision, it is not selected as the operating point in practice. Instead, a moderate confidence threshold corresponding to the maximum F1-score is adopted, as it provides a more reliable balance between detection accuracy and robustness for real-time navigation.

4.3. Comparative Experiment of Models

To further evaluate model suitability under the system constraints of the proposed robotic platform, comparative experiments were conducted at two levels: (1) comparison between different detection architectures (YOLOv5 vs. YOLOv11), and (2) ablation-style analysis among different YOLOv5 variants.

4.3.1. Comparison Between YOLOv5 and YOLOv11

We conducted training experiments using YOLOv11 under identical dataset and training settings. The objective of this comparison is not to establish a benchmark ranking, but to analyze whether more recent model architectures provide practical advantages under the system constraints of the proposed robotic platform.

Table 6 summarizes the comparative training results between YOLOv5 and YOLOv11 under identical dataset and training settings. Both models achieve comparable precision and recall values, and no significant improvement in mAP is observed with YOLOv11. However, the YOLOv11 training curves exhibit larger oscillations in the early epochs, indicating increased sensitivity to training dynamics.

4.3.2. Ablation Analysis of YOLOv5 Variants

Given that the objective of this work is to ensure stable real-time perception under the hardware and system constraints of a gecko-inspired robotic platform, model suitability is evaluated based on detection reliability, training stability, computational efficiency, and deployment maturity. Under these criteria, YOLOv5 provides a more balanced and robust solution for the current task.

To further analyze model suitability under the system constraints of the proposed gecko-inspired climbing robot, we conducted an ablation-style comparison among different YOLOv5 variants, including YOLOv5n, YOLOv5s, YOLOv5m, and YOLOv5l. The purpose of this comparison is not to perform state-of-the-art benchmarking, but to investigate how model scale and network configuration affect detection performance and real-time feasibility within a unified system framework.

For fairness, all YOLOv5 variants were trained under identical experimental conditions, including the same input image resolution, data augmentation strategies, number of training epochs, and hyperparameter settings. Only the model scale and internal network configuration were varied. The evaluation metrics include precision, recall, mAP@0.5, and mAP@0.5:0.95, as summarized in Table 7. To provide a clearer visual comparison of the performance differences among the models, a corresponding bar chart is presented in Figure 12.

YOLOv5s has a precision of 0.915, the lowest among the four models. YOLOv5n and YOLOv5m are slightly higher, at 0.926 and 0.942, respectively, and YOLOv5l has the highest precision, 0.947. The recall of all models is close to 0.97 or higher, indicating that they miss few true targets; YOLOv5l has the highest recall. On mAP@0.5, the models perform consistently, ranging from 0.96 to 0.97, indicating that all of them detect targets effectively under a relatively relaxed IoU threshold. Under the stricter mAP@0.5:0.95 criterion, YOLOv5l also leads with a score of 0.84, demonstrating its higher robustness. Overall, YOLOv5l performs well on all metrics.

4.4. CPG Motion Performance

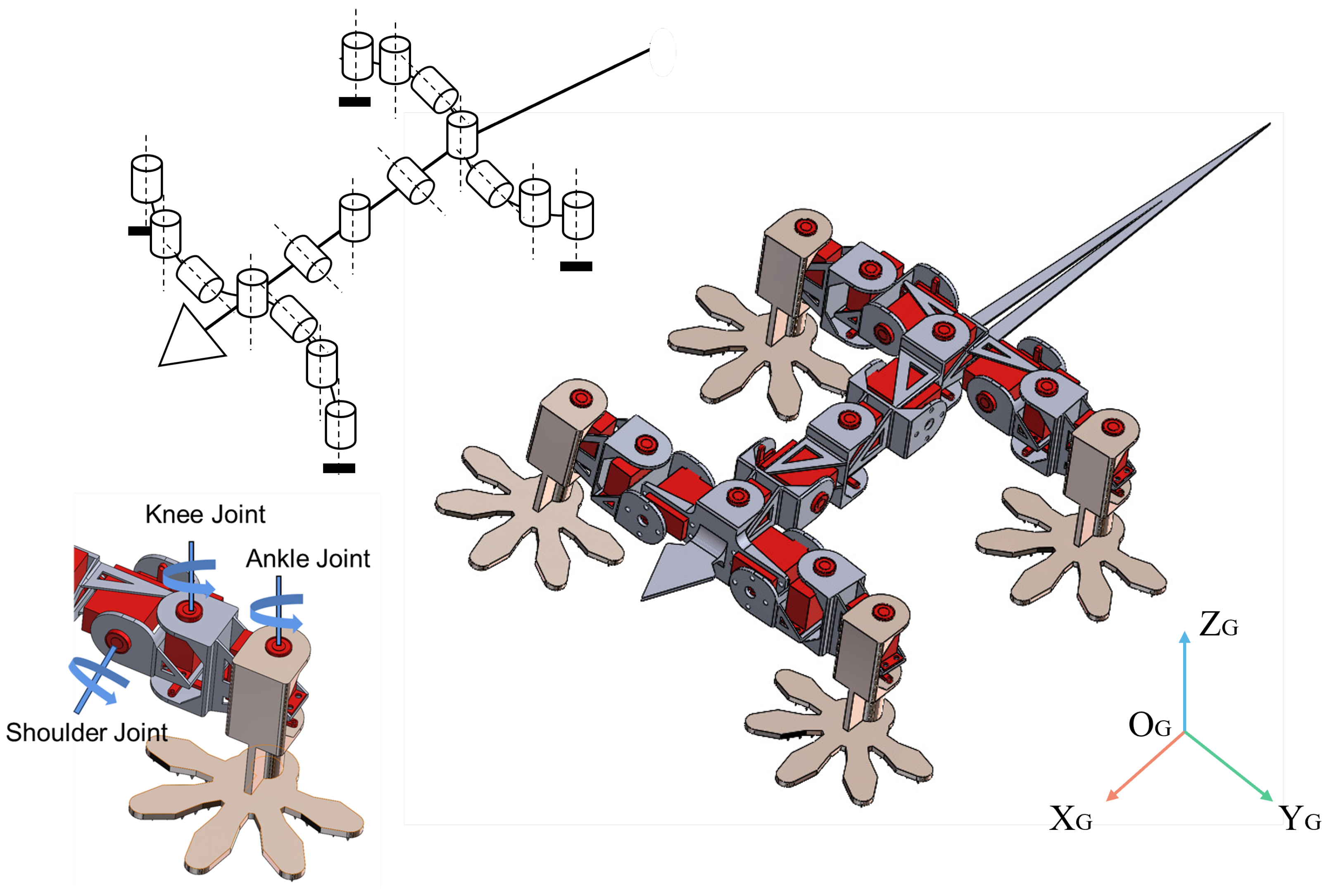

The first step in the CPG control algorithm is to build the oscillation centers of the gecko-inspired model, as shown in Figure 13. Based on the kinematic model design, a total of 11 oscillation centers were set up: 8 are located at the shoulder and knee joints of the limbs, and the other 3 are located on the flexible spine. With this design, the oscillation centers can spontaneously and continuously generate joint control signals through learning.

The CPG signal generated by the Hopf oscillator network successfully produces diagonal gait control. The joint-angle time curves of the knee and shoulder joints are shown in Figure 14 and Figure 15. The left front leg (LF) and right hind leg (RH) move in synchrony, as do the right front leg (RF) and left hind leg (LH), with a phase difference of 0 within each pair. A phase difference of $\pi$ is present between the two groups of diagonal legs, which meets the diagonal gait requirements. The knee joint controls the forward motion of the robot, with the swing phase at 0 to 30 degrees and the support phase at 40 to 60 degrees. The two phases are distinguished by the sign of the oscillator state variable $y$ in Equation (5). The shoulder joint controls the leg-lifting action, with a joint angle range of 0 to 60 degrees, corresponding to the linear mapping in Equation (7). All joints oscillate at a frequency of 1 Hz ($\omega = 2\pi$ rad/s).
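To make the gait generation concrete, the following sketch integrates a single Hopf oscillator at 1 Hz and derives the four leg signals by rotating its state by each leg's phase offset. This is a simplified stand-in for the coupled oscillator network and is not the authors' implementation: Equation (5) is not reproduced in this section, so the standard Hopf form is assumed, and the convergence gain `alpha`, the amplitude parameter `mu`, the Euler step size, and all function names are hypothetical.

```python
import math

# Diagonal gait: LF and RH in phase, RF and LH half a cycle later.
LEG_PHASE = {"LF": 0.0, "RH": 0.0, "RF": math.pi, "LH": math.pi}


def hopf_step(x, y, dt, mu=1.0, omega=2.0 * math.pi, alpha=10.0):
    """One explicit Euler step of a standard Hopf oscillator (assumed form).

    The limit cycle has radius sqrt(mu) and angular frequency omega.
    """
    r2 = x * x + y * y
    dx = alpha * (mu - r2) * x - omega * y
    dy = alpha * (mu - r2) * y + omega * x
    return x + dt * dx, y + dt * dy


def leg_outputs(x, y):
    """Rotate the oscillator state by each leg's phase offset."""
    return {
        leg: x * math.cos(phi) - y * math.sin(phi)
        for leg, phi in LEG_PHASE.items()
    }


def simulate(duration=3.0, dt=0.001):
    """Return a list of (x, y, leg_outputs) samples starting off the limit cycle."""
    x, y = 0.1, 0.0
    trace = []
    for _ in range(int(round(duration / dt))):
        x, y = hopf_step(x, y, dt)
        trace.append((x, y, leg_outputs(x, y)))
    return trace


if __name__ == "__main__":
    x, y, legs = simulate()[-1]
    print(round(math.hypot(x, y), 3), legs)
```

Starting from a small initial state, the oscillator converges to its limit cycle without any external timing input, which is the property that makes this class of oscillator attractive for rhythmic gait generation.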

The three lateral swing joints of the spine exhibit wave-like motion with a phase offset between them. As shown in Figure 16, the maximum deflection angles are ±15°, ±10°, and ±8°, respectively, which can effectively assist steering.
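The spinal wave can be illustrated with three sinusoids that share the 1 Hz gait frequency and use the maximum deflections reported above. This is only a sketch: the sinusoidal form, the function name, and the assumption that the lag accumulates from one joint to the next are illustrative, and the phase lag is a required argument because its value is not given in this section.

```python
import math
from typing import List

# Maximum lateral deflections of the three spine joints, in degrees.
SPINE_AMPLITUDES_DEG = (15.0, 10.0, 8.0)


def spine_angles(t: float, lag_rad: float, freq_hz: float = 1.0) -> List[float]:
    """Joint angles (degrees) of the three spine joints at time t.

    lag_rad is the phase lag added per joint along the spine; the caller
    must supply it because the value used on the robot is not stated here.
    """
    base = 2.0 * math.pi * freq_hz * t
    return [
        amp * math.sin(base - i * lag_rad)
        for i, amp in enumerate(SPINE_AMPLITUDES_DEG)
    ]


if __name__ == "__main__":
    # Arbitrary example lag, chosen only to show the call.
    print(spine_angles(t=0.25, lag_rad=0.5))
```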

As shown in Figure 17, the proportion of the support phase is about 60%, indicating the spatio-temporal coordination of diagonal gait. When one leg enters the swing phase, its opposite leg always remains in a supporting state, which avoids violent fluctuations in the center of gravity and increases the speed and stability of movement.
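The effect of a 60% support phase on ground contact can be checked with a small timing model. The sketch below assumes that each leg is in support during the first 60% of its own cycle and that the two diagonal pairs are offset by half a cycle. The function names and the choice of which part of the cycle counts as support are assumptions for illustration, and "opposite leg" is interpreted here as a leg of the other diagonal pair.

```python
# Cycle offsets as fractions of one gait period (diagonal gait).
LEG_OFFSET = {"LF": 0.0, "RH": 0.0, "RF": 0.5, "LH": 0.5}


def in_support(leg: str, t: float, freq_hz: float = 1.0, duty: float = 0.6) -> bool:
    """True if the leg is in its support phase at time t (seconds)."""
    cycle = (t * freq_hz + LEG_OFFSET[leg]) % 1.0
    return cycle < duty


def support_count(t: float) -> int:
    """Number of legs on the ground at time t."""
    return sum(in_support(leg, t) for leg in LEG_OFFSET)


if __name__ == "__main__":
    times = [k / 1000.0 for k in range(1000)]
    counts = [support_count(t) for t in times]
    print(min(counts), max(counts))
```

Because the support phase exceeds half of the cycle, the two diagonal pairs overlap briefly on the ground, so at least two legs are in support at every instant in this model.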

The joint angles output by the CPG were mapped to each servo motor through PWM signals, and a crawling experiment was conducted on a horizontal plane. Figure 18 shows the straight-line motion of the gecko-inspired robot in the diagonal gait. It can be seen that the flexible spine produces periodic lateral deflection, accompanied by alternating fore-and-aft swings of the limbs. The robot moves smoothly in a straight line on the experimental platform, which demonstrates the feasibility of the CPG control algorithm.
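The mapping from a commanded joint angle to a servo PWM signal can be sketched as a linear interpolation. The pulse range of 500 to 2500 µs over 0 to 180 degrees and the 20 ms frame period are typical hobby-servo values assumed for illustration; the calibration of the servos used on the robot is not given in this section, and the function names are hypothetical.

```python
def angle_to_pulse_us(
    angle_deg: float,
    min_us: float = 500.0,      # assumed pulse width at 0 degrees
    max_us: float = 2500.0,     # assumed pulse width at max_angle_deg
    max_angle_deg: float = 180.0,
) -> float:
    """Linearly map a joint angle to a servo pulse width in microseconds."""
    clamped = min(max(angle_deg, 0.0), max_angle_deg)
    return min_us + (max_us - min_us) * clamped / max_angle_deg


def pulse_to_duty(pulse_us: float, period_us: float = 20000.0) -> float:
    """Duty cycle of the PWM signal for an assumed 20 ms (50 Hz) frame."""
    return pulse_us / period_us


if __name__ == "__main__":
    for angle in (0.0, 30.0, 60.0):
        pulse = angle_to_pulse_us(angle)
        print(angle, pulse, pulse_to_duty(pulse))
```

Clamping the angle before conversion keeps an out-of-range CPG output from driving the servo past its mechanical limits.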

4.5. Discussion on Future Integration

Based on the system architecture described in the previous section, this part discusses potential extensions toward a fully integrated perception–decision–motion closed-loop obstacle avoidance system.

The core integration concept of this study is to transform visual perception outputs into modulation of locomotion parameters within the CPG controller. Specifically, obstacle information obtained from YOLO-based detection is abstracted into low-dimensional descriptors, such as obstacle direction and relative proximity, which are mapped to oscillation amplitude, frequency, and phase offsets of the Hopf oscillator network. This parameter-level coupling provides a structured interface between perception and locomotion, enabling biologically inspired sensorimotor coordination at the framework level.

In a potential closed-loop implementation, the robot would acquire forward-view images through an onboard camera, detect obstacles in real time, and estimate their relative positions using depth information or geometric models. The system would then determine whether obstacles fall within a predefined avoidance region and adjust the CPG parameters accordingly. For example, obstacles on the left could increase the oscillation amplitude and phase advance of the right limbs to induce a rightward turn, while frontal obstacles at close range could reduce oscillation frequency to enable in-place turning. When no obstacle is detected within the critical region, the CPG maintains nominal gait generation. The resulting oscillator states are mapped to joint angle commands and transmitted to the servo drivers. The conceptual architecture is illustrated in Figure 19.
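One way such a rule-based coupling might look is sketched below. It is a conceptual illustration only, consistent with the fact that the closed loop has not been implemented: the data classes, the sign convention for the bearing, the avoidance radius, the frontal sector width, and all gain values are hypothetical placeholders. The rule for obstacles on the right is an assumed mirror image of the left-side rule described in the text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Obstacle:
    bearing_rad: float   # assumed convention: negative = left, positive = right
    distance_m: float


@dataclass
class CpgParams:
    freq_hz: float = 1.0          # nominal gait frequency
    amp_left: float = 1.0         # amplitude scale, left limbs
    amp_right: float = 1.0        # amplitude scale, right limbs
    phase_adv_left: float = 0.0   # phase advance, left limbs (rad)
    phase_adv_right: float = 0.0  # phase advance, right limbs (rad)


def modulate(
    obstacle: Optional[Obstacle],
    avoid_radius_m: float = 0.5,          # placeholder avoidance region
    frontal_half_angle_rad: float = 0.2,  # placeholder frontal sector
    amp_gain: float = 1.3,                # placeholder amplitude gain
    phase_step_rad: float = 0.3,          # placeholder phase advance
    slow_factor: float = 0.5,             # placeholder frequency reduction
) -> CpgParams:
    """Map one obstacle descriptor to CPG parameters with fixed rules."""
    params = CpgParams()
    if obstacle is None or obstacle.distance_m > avoid_radius_m:
        return params  # nothing in the avoidance region: nominal gait
    if abs(obstacle.bearing_rad) <= frontal_half_angle_rad:
        params.freq_hz *= slow_factor  # frontal obstacle: slow down
    elif obstacle.bearing_rad < 0.0:
        params.amp_right *= amp_gain   # obstacle on the left
        params.phase_adv_right = phase_step_rad
    else:
        params.amp_left *= amp_gain    # obstacle on the right (assumed mirror)
        params.phase_adv_left = phase_step_rad
    return params


if __name__ == "__main__":
    print(modulate(None))
    print(modulate(Obstacle(bearing_rad=-0.6, distance_m=0.3)))
    print(modulate(Obstacle(bearing_rad=0.0, distance_m=0.2)))
```

Keeping the interface to a handful of scalar parameters means the detector and the oscillator network can be developed and tested separately, which matches the subsystem-level validation reported here.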

However, it is important to emphasize that the current experiments validate the perception and locomotion subsystems independently. Real-time perception–motion closed-loop control, including software integration, timing synchronization, latency analysis, and system-level debugging, has not yet been experimentally implemented. In particular, the spinal motion demonstrated in this study corresponds to rhythmic oscillation under preset CPG parameters, and visually triggered spinal modulation remains a conceptual extension rather than an experimentally verified capability.

From a systems perspective, transitioning from subsystem validation to true autonomous obstacle avoidance involves several major challenges. Reliable real-time mapping between perception descriptors and locomotion parameters must be established to ensure responsive motion adaptation. In addition, synchronization between perception update rates and high-frequency motor control loops is required to prevent timing conflicts and instability. Robustness mechanisms must also be developed to handle perception uncertainty, environmental disturbances, and varying surface conditions encountered in climbing scenarios. Finally, long-duration integrated experiments are necessary to evaluate stability, adaptability, and performance under continuous interaction.

These challenges define the gap between the current framework-level formulation and fully autonomous obstacle avoidance. Accordingly, the obstacle avoidance capability emphasized in this study should be interpreted as a research objective and system architecture rather than a completed autonomous function.

Future research will focus on implementing real-time perception–CPG coupling, including quantitative mapping strategies, synchronization analysis, and robustness enhancement. Adaptive or learning-based modulation mechanisms may be introduced to improve disturbance rejection and environment-dependent gait optimization. In addition, multimodal sensing integration, such as tactile and proprioceptive feedback, will be investigated to enhance closed-loop stability in complex climbing environments.

Regarding reproducibility, the experiments are conducted using a self-collected dataset tailored to the robot-centric viewpoint of a gecko-inspired climbing robot. Public benchmark datasets are not directly applicable due to differences in scene geometry and obstacle characteristics. Detailed descriptions of data acquisition, annotation standards, training configurations, and hyperparameters are provided in Section 3. Although the dataset is not publicly released at this stage, it can be made available upon reasonable academic request.

Overall, this work should be regarded as a foundational step toward integrated perception–motion systems for climbing robots, providing a structured framework and experimental baseline for future closed-loop development.

4.6. Limitations of the Proposed System

Despite the results obtained from subsystem validation, several limitations of the proposed framework should be acknowledged.

First, the perception and locomotion modules are evaluated independently, and real-time perception–motion closed-loop integration has not been experimentally implemented. Consequently, the obstacle avoidance capability remains at the framework level rather than a fully realized autonomous behavior.

Second, the perception–CPG coupling mechanism adopts a deterministic rule-based mapping, which does not incorporate adaptive learning, disturbance rejection, or environment-dependent optimization. This limits the system’s ability to cope with highly dynamic or uncertain environments.

Third, the experimental validation is conducted using a self-collected dataset under controlled conditions. Although it reflects the robot-centric viewpoint, further evaluation is required to assess generalization across diverse real-world environments.

Finally, long-duration integrated experiments and system-level robustness analysis under external disturbances have not yet been performed.

These limitations highlight the gap between the current subsystem-level verification and fully autonomous obstacle avoidance, and they define key directions for future research.