1. Introduction

The Internet of Things (IoT) is a network of interconnected physical devices, ranging from smart home appliances and wearable gadgets to industrial machines and vehicles, embedded with sensors, software, and connectivity. These smart objects collect, share, and analyze data, enabling automation and intelligent decision-making across various domains. Smart homes, one of the application domains of IoT, are residential environments equipped with interconnected IoT devices for automation [1]. Smart home ecosystems encompass the broader network of devices and services and have transformed daily living through seamless automation, remote control, and monitoring [2]. However, this convenience comes at the cost of adding numerous endpoints to smart home networks, the underlying infrastructure that carries communication among devices. The resulting complexity can leave these networks without robust security safeguards [1,3], and cybercriminals can exploit the devices to compromise privacy and safety [1,4,5]. Recent reports estimate that over 60% of smart home devices harbor exploitable firmware flaws or default credentials, making them prime targets for botnets, data exfiltration, and lateral movement attacks [6]. Traditional intrusion detection systems (IDS) often rely on static signature databases or behavioral rules, which rapidly become obsolete against polymorphic or zero-day threats [2]. Given the evolving nature of cyber threats and the diversity of IoT devices, such systems are frequently inadequate, which creates a need for more effective cyber threat detection and mitigation strategies [2,7].

Generative AI models offer a powerful alternative by learning compact representations of normal system behavior and generating human-readable insights for mitigation [8]. Variational Autoencoders (VAEs) can compress high-dimensional network telemetry into low-dimensional latent spaces, enabling real-time anomaly scoring without explicit attack signatures [7,9]. Meanwhile, transformer-based large language models (LLMs), such as Bidirectional Encoder Representations from Transformers (BERT), excel at classification tasks once fine-tuned on labeled security data [10]. Generative models such as Grok 3 can translate detected anomaly data into contextualized, actionable countermeasures [11].

This study builds upon the research framework and initial findings presented by Oacheșu [12] and presents a three-stage AI pipeline for smart home security: (1) a VAE for unsupervised detection of anomalies in IoT traffic; (2) a BERT classifier that confirms and categorizes these anomalies with higher accuracy; and (3) an LLM that generates tailored mitigation strategies for each threat. Using the Aposemat IoT-23 dataset, the research shows promising detection performance, high classification fidelity, and policy-ready recommendations for defending IoT-based smart home networks.

The structure of the paper is as follows:

Section 2 reviews IoT security and related work on intrusion detection systems and AI approaches to identify research gaps in this domain.

Section 3 outlines the methodology, including the Aposemat IoT-23 dataset preprocessing, VAE for anomaly detection, BERT for threat classification, and Grok 3 for countermeasure generation, alongside a simulation setup.

Section 4 presents the results, discusses limitations, and addresses validity threats.

Section 5 concludes with key findings and future research directions. To the authors’ knowledge, this is the first paper proposing a pipeline that integrates VAE, BERT, and a generative LLM to both detect and respond to threats specific to IoT smart homes.

2. Related Work

2.1. Security Challenges in Smart Home IoT

The proliferation of IoT devices in smart homes has increased their exposure to attacks, including botnets, Distributed Denial of Service (DDoS) attacks, and data breaches [5]. These devices are often resource-constrained and employ diverse communication protocols, which complicates security efforts [13]. Traditional IDSs, which rely on predefined attack signatures, perform poorly at detecting zero-day exploits and at adapting to the dynamic IoT threat landscape [2]. Numerous studies highlight the inadequacy of static signature-based systems in IoT contexts, noting high false-negative rates against novel attacks [14,15]. Moreover, the scalability and adaptability required for smart home security motivate a shift toward learning-based approaches [3,16].

In real-world applications, smart home ecosystems function as Cyber–Physical Systems (CPS), in which digital computation interacts with physical processes. Security in this domain often intersects with the field of discrete event systems (DES), which provides a formal abstraction for modeling system behavior under threat. Recent work in this field has focused on synthesizing resilient supervisors that maintain system safety even when attackers modify sensor information or manipulate actuators to force the system into unsafe states [17]. Understanding these theoretical foundations is critical for developing robust defense mechanisms that prevent physical hazards in smart home environments.

2.2. Intrusion Detection Systems (IDS) and Anomaly Detection

Traditional IDSs fall into three categories: signature-based, anomaly-based, and specification-based. A signature-based IDS detects suspicious network traffic by comparing it against a database of attack signatures; it handles known threats effectively but struggles with zero-day and polymorphic attacks [5,14,18]. An anomaly-based IDS, also known as a behavior-based IDS, learns only normal behavior from data and flags deviations from that norm. Although this approach can detect novel zero-day attacks, it suffers from high false-positive rates and requires large amounts of data to define what constitutes normal behavior [2]. Furthermore, anomaly detection on its own does not indicate which type of anomaly has been detected [19]. A specification-based IDS, also known as a behavioral-rule-based IDS, uses predefined profiles of normal device behavior. Such explicit rules help reduce false positives [20], but their reliance on predefined behaviors limits adaptability to new threats [2]. Hybrid approaches blend signature and anomaly detection to balance accuracy against false alarms, but they often need substantial labeled data and computational resources, which can be challenging in resource-limited IoT settings [21].

2.3. Attack-Specific Countermeasures

This study focuses on four common cyber attacks in IoT smart homes, as represented in the Aposemat IoT-23 dataset: Command and Control (C&C), Distributed Denial of Service (DDoS), Okiru, and Horizontal Port Scan. These attacks exploit vulnerabilities in interconnected devices, threatening security, functionality, and user privacy [22,23]. C&C communication, for instance, enables compromised devices to be enrolled in botnets; detection relies on identifying persistent C&C channels and blocking known malicious IPs [24]. In a DDoS attack, the attacker floods the IoT network with a massive amount of malicious traffic from multiple compromised devices; typical mitigation includes rate limiting, traffic filtering, and network segmentation [25,26]. Okiru is Trojan backdoor malware targeting IoT devices, including routers and IP web cameras; countermeasures involve firmware hardening, disabling unused services, and anomaly-based triggers [27,28]. A horizontal port scan probes a single port across multiple IP addresses on a network, rather than multiple ports on a single host; defenses include dynamic firewall rules, IDS rules, and geo-IP filtering [4,23].

While these countermeasures reflect best practices, they are typically reactive and static, lacking adaptability [27,29].

2.4. AI in IoT Security

Although attackers can use artificial intelligence (AI) to exploit IoT ecosystems, for example through adversarial machine learning (ML) to evade detection [7,30], AI, and ML in particular, has emerged as a promising solution for securing IoT ecosystems [2,5]. Supervised ML models, such as Random Forests and Support Vector Machines, have been widely applied to classify malicious activities in network traffic [2,31]. However, their dependence on labeled datasets, which are often unavailable in IoT settings, limits their practicality [13]. To overcome this limitation, unsupervised learning techniques such as clustering and autoencoders have gained traction [32].

Unsupervised learning uses normal traffic patterns to detect anomalies. Generative AI models such as VAEs and Generative Adversarial Networks (GANs) strengthen cybersecurity by modeling complex data distributions [33], which aids anomaly detection and dimensionality reduction and allows synthetic data to be generated to tackle data imbalance or scarcity [7,34]. VAEs, in particular, are well suited to anomaly detection: they learn to reconstruct normal data and flag deviations that produce high reconstruction errors [35]. Vadisetty and Polamarasetti [8] report that a generative AI-enhanced IDS outperformed traditional methods in their study, improving malware detection accuracy by 15% and reducing the false positive rate by 6%.

Beyond anomaly detection, LLMs such as BERT have been adapted for security tasks [10,36]. They can be used to analyze security logs and classify threats, although their potential in cybersecurity has not yet been fully explored [37]. Generative AI can not only detect threats but also interpret and categorize them, provide context, and develop understandable countermeasures. However, few IoT-specific implementations are currently available.

Integrating advanced AI, such as generative AI, into existing cybersecurity frameworks presents significant challenges owing to high computing resource demands [8]. Centralized control techniques face scaling challenges as the number of nodes increases, which reduces overall efficiency [21]. These studies highlight AI’s potential in IoT security while also revealing its limitations in scalability, adaptability, and resource efficiency.

2.5. Countermeasure Generation

Generating effective countermeasures is as critical as detecting anomalies or classifying threats [20,21]. Traditional countermeasure systems rely on rule-based or expert-defined responses, which lack flexibility in dynamic environments [2,3,14]. For instance, a rule-based IoT security framework that mitigates known threats can fail against new attack vectors [8,20]. To address this, adaptive approaches have been investigated, such as reinforcement learning models that generate countermeasures for network security by dynamically adjusting responses to observed attack patterns. However, these require significant computational resources [2,7].

The use of generative AI for countermeasure design remains largely unexplored, particularly in smart homes where real-time, lightweight responses are essential. Current research lacks adaptive, automated countermeasures.

2.6. Research Gap and Motivation

AI-based IDS solutions currently struggle with resource demands and with adapting to IoT environments. Although VAE-based anomaly detection shows promise, it typically stops at flagging anomalies and offers neither classification nor mitigation. LLMs could provide adaptive responses, but few systems successfully integrate these elements.

These gaps are addressed with a generative AI approach in this study:

VAE-based Anomaly Detection: Learns benign traffic and flags novel patterns without labeled attack data.

BERT-based Threat Classification: Refines anomaly flags into specific threats, reducing false positives and enabling contextual interpretation.

LLM-driven Mitigation (Grok): Produces actionable, tailored countermeasures to address a specific cyber threat.

This work proposes an end-to-end pipeline for IoT environments with specific focus on smart home security using unsupervised learning, transformer-based classification, and generative AI-based mitigation strategies.

3. Methodology

3.1. Overview of the Proposed Pipeline

The proposed system is a generative AI-based pipeline designed to enhance smart home IoT security by enabling near real-time threat detection and automated countermeasure generation. It comprises three core components: a VAE model for unsupervised anomaly detection, a BERT model for threat classification, and Grok for generating adaptive responses [38]. This architecture addresses the limitations of traditional IDSs by leveraging the generative and contextual capabilities of generative AI to handle the dynamic and heterogeneous nature of IoT environments.

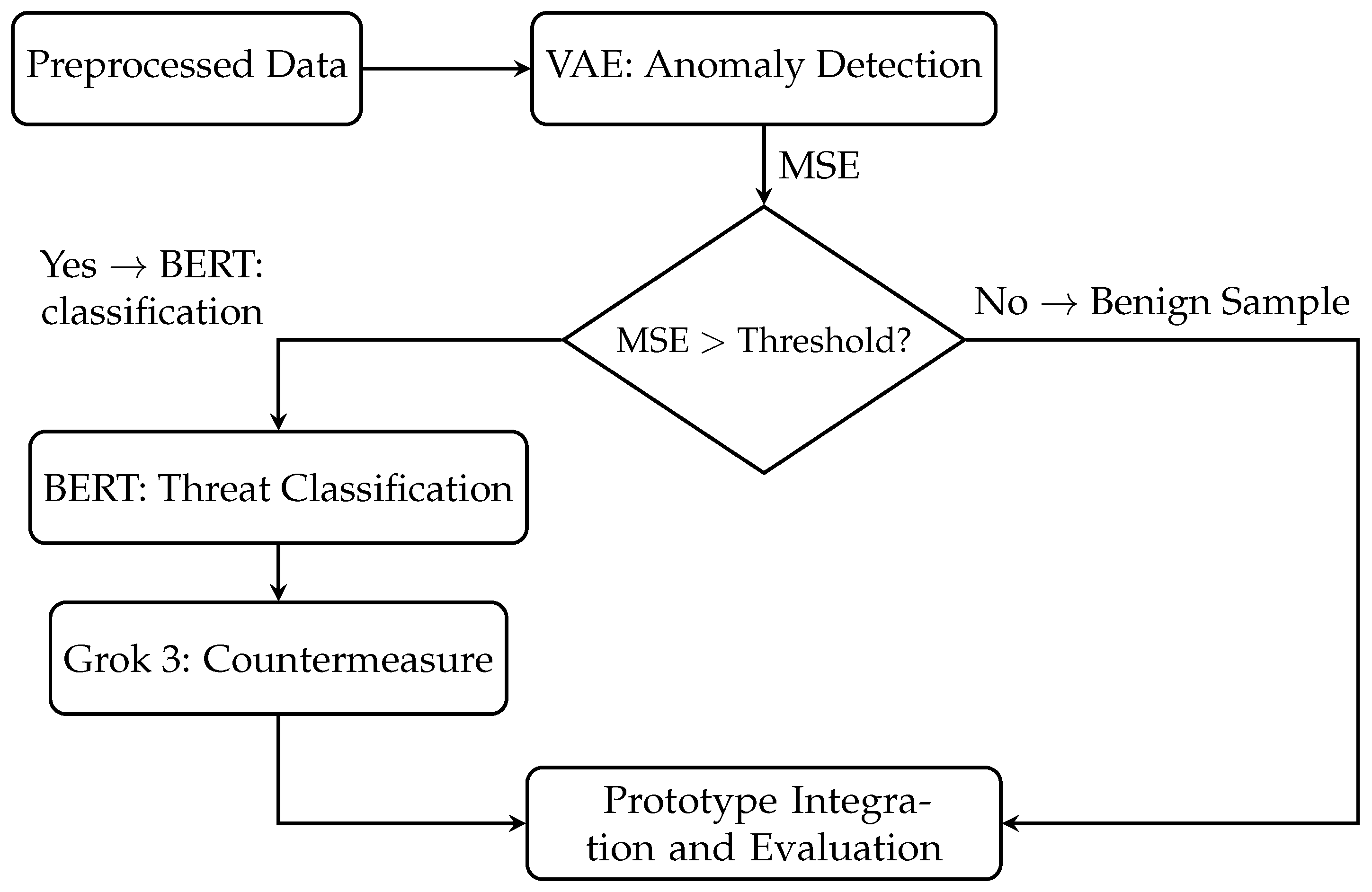

The data flow through the pipeline is sequential and can be summarized as follows (see Figure 1):

Input Processing: Raw network traffic from smart home IoT devices (from the Aposemat IoT-23 dataset) is preprocessed to extract relevant features (e.g., resp_bytes, duration, proto) and to handle missing or inconsistent data.

Anomaly Detection: The VAE is trained exclusively on benign samples to learn normal traffic patterns. During inference, it computes reconstruction errors and flags samples exceeding a threshold as anomalous.

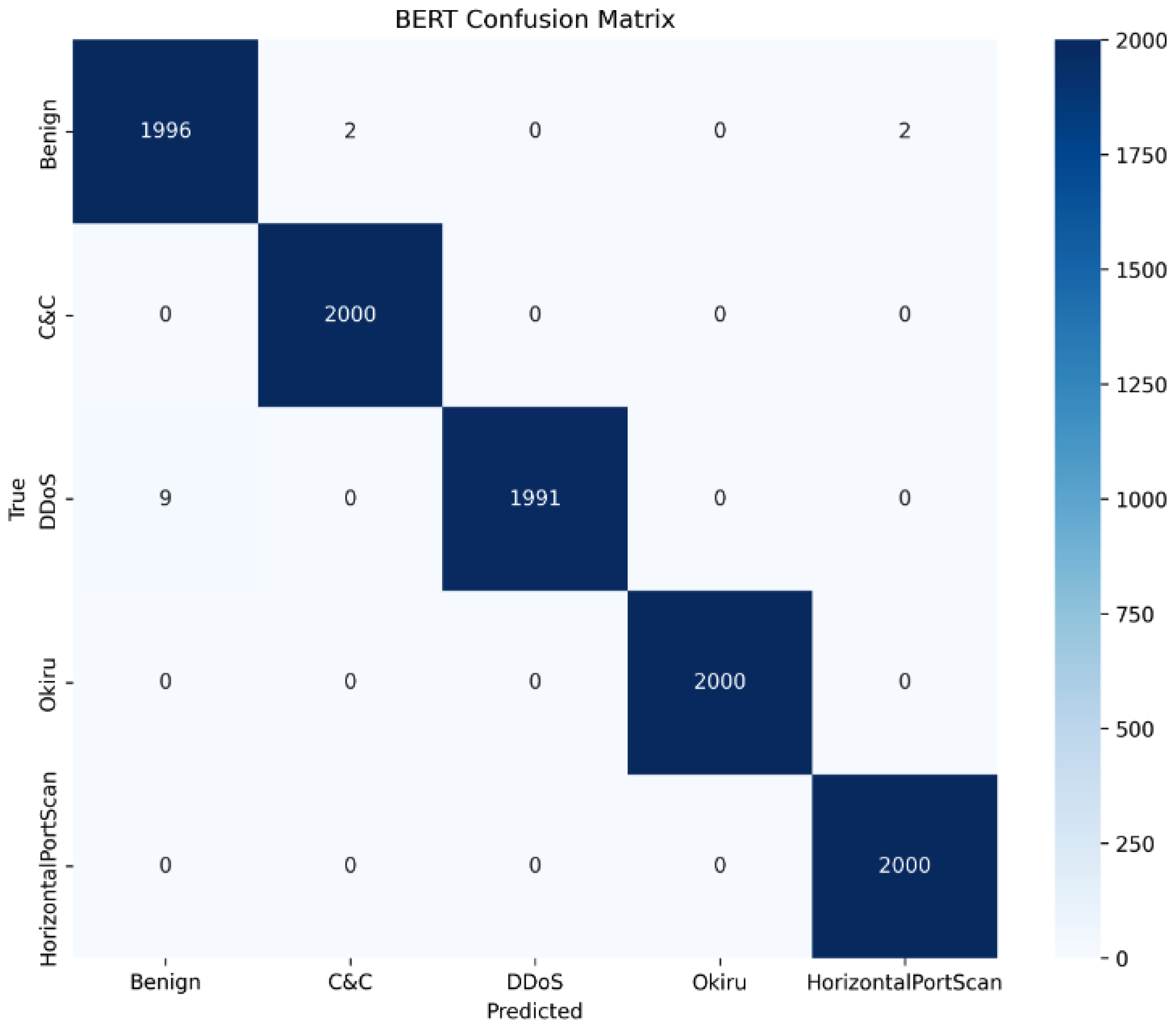

Threat Classification: Detected anomalies are passed to the BERT model, which classifies them into five categories—Benign, Command and Control, DDoS, Okiru, or Horizontal Port Scan—along with associated confidence scores.

Countermeasure Generation: Each classified threat is processed by Grok, which generates context-specific countermeasures (e.g., IP blocking, rate limiting) based on traffic features and threat type.

The pipeline, implemented in Python 3.10.13, processes CSV-formatted Aposemat IoT-23 data, ensuring uniform data handling across VAE, BERT, and Grok 3. The system’s architecture leverages the strengths of each model: VAE’s ability to detect novel anomalies without labeled data, BERT’s contextual understanding for accurate threat classification, and Grok’s generative capabilities for producing actionable and adaptive countermeasures. The system aims to process network traffic dynamically, ensuring scalability and adaptability for smart home environments. By combining unsupervised anomaly detection with supervised classification and generative countermeasure design, the pipeline provides a solution that not only identifies but also responds to cyber threats.

3.2. Dataset Description and Preprocessing

The Aposemat IoT-23 dataset [22] is used to train and evaluate the proposed generative AI pipeline for smart home IoT security. This dataset, curated by Stratosphere Labs, contains simulated network traffic from IoT devices, capturing both benign and malicious activities. It comprises 23 scenarios, including various attack types, making it representative of smart home IoT environments. For this study, five classes of smart home threats are selected to align with common threats: Benign, C&C, DDoS, Okiru, and Horizontal Port Scan. These classes cover a range of attack vectors, from botnet communications to service disruptions, ensuring a comprehensive evaluation of the pipeline.

Preprocessing transforms raw network captures into a format suitable for the VAE, BERT, and Grok models. The dataset includes features such as duration, orig_bytes, resp_bytes, proto, service, and history, among others. The preprocessing steps are detailed below.

3.2.1. Handling Missing Values via Scenario-Specific Imputation

Missing values in the dataset, particularly in features like service and duration, were addressed using a scenario-specific imputation strategy. The Aposemat IoT-23 dataset comprises independent simulation scenarios. Training a single imputation model on the aggregated dataset would fail to capture the unique network behaviors and device characteristics inherent to each specific capture file.

Therefore, imputation models were instantiated and trained locally for each capture file. For categorical features (e.g., proto, service), a LightGBM classifier was fitted strictly on the subset of complete rows within that specific scenario. For numerical features (e.g., duration, orig_bytes), a LightGBM regressor paired with an autoencoder was used to refine imputation (see Algorithm 1 and Table 1). This process ensures that the imputation learns only from the local context (e.g., a specific Mirai botnet run) and does not leak distributional information from the global dataset.

Algorithm 1: Scenario-Specific Missing Value Imputation.

The aggregation of data and subsequent splitting into Training (VAE/BERT training) and Testing (Pipeline evaluation) sets was performed after this localized cleaning process. This enforces data separation, ensuring that the generative models were evaluated on patterns they had not implicitly learned during preprocessing.
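The ordering described above (fit imputers per capture file, then aggregate and split) can be sketched with the standard library. This is a minimal illustration under stated assumptions: it swaps in per-scenario median and mode imputers for the LightGBM models and omits the autoencoder refinement, and the field names (`scenario`, `duration`, `service`) and helper names are hypothetical. What it shows is the structure: each imputer only sees rows from its own scenario, and the split happens afterwards.

```python
import random
import statistics
from collections import defaultdict

NUMERIC = ["duration"]      # hypothetical numeric columns
CATEGORICAL = ["service"]   # hypothetical categorical columns


def impute_per_scenario(rows):
    """Fill None values using statistics fitted only on the row's own scenario.

    Stand-in for the per-scenario LightGBM imputers: median for numeric
    features, most frequent value for categorical features.
    """
    by_scenario = defaultdict(list)
    for row in rows:
        by_scenario[row["scenario"]].append(row)

    cleaned = []
    for scenario_rows in by_scenario.values():
        fill = {}
        for col in NUMERIC:
            observed = [r[col] for r in scenario_rows if r[col] is not None]
            fill[col] = statistics.median(observed) if observed else 0.0
        for col in CATEGORICAL:
            observed = [r[col] for r in scenario_rows if r[col] is not None]
            fill[col] = statistics.mode(observed) if observed else "unknown"
        for r in scenario_rows:
            # Replace only missing values, and only for columns we have a fill for.
            cleaned.append({k: (fill[k] if v is None and k in fill else v)
                            for k, v in r.items()})
    return cleaned


def split_after_cleaning(rows, test_fraction=0.2, seed=0):
    """Aggregate the cleaned scenarios, then split into train and test sets."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]
```

Because scenario B's rows never contribute to scenario A's fill values, a missing duration in A is imputed from A's own traffic only.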

3.2.2. Labeling and Filtering

The dataset includes five classes: Benign, C&C, DDoS, Okiru, and Horizontal Port Scan. Feature selection is performed using Chi-square tests and Principal Component Analysis (PCA) to identify important features and eliminate non-informative ones (e.g., uid, id_orig_h), reducing dimensionality and improving efficiency.
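For the PCA part of this step, a common rule is to keep the smallest number of principal components whose cumulative explained variance reaches a target; the conclusions report 92% variance retention. The sketch below assumes the eigenvalues of the feature covariance matrix are already available (for example, from an SVD of the standardized data). The function name is hypothetical, and the Chi-square screening step is not shown.

```python
def components_for_variance(eigenvalues, target=0.92):
    """Return the smallest k whose top-k eigenvalues explain >= target of total variance."""
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    cumulative = 0.0
    for k, value in enumerate(ordered, start=1):
        cumulative += value
        if cumulative / total >= target:
            return k
    return len(ordered)
```

With eigenvalues 5, 3, 1, 0.5, and 0.5, the first three components explain 90% of the variance, so a 92% target keeps four.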

3.2.3. Normalization/Encoding

Categorical features (e.g., proto, service) are converted to numerical representations using one-hot encoding to ensure compatibility with the VAE and BERT models. Numerical features (e.g., duration, resp_bytes) are normalized using StandardScaler to standardize their range, mitigating the impact of varying scales on model performance.
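The encoding step can be illustrated with a small stdlib-only sketch that mirrors what one-hot encoding and scikit-learn's StandardScaler do. Each category becomes its own 0/1 column, and each numeric column is shifted by its mean and divided by its population standard deviation (StandardScaler uses the biased estimator, ddof = 0). The helper names are hypothetical. In practice, the scaling statistics would be fitted on the training split only and reused for validation and test data.

```python
import math


def one_hot(values):
    """Map each categorical value to a 0/1 vector over the sorted vocabulary."""
    vocabulary = sorted(set(values))
    index = {v: i for i, v in enumerate(vocabulary)}
    vectors = []
    for v in values:
        vec = [0] * len(vocabulary)
        vec[index[v]] = 1
        vectors.append(vec)
    return vocabulary, vectors


def fit_standard_scaler(column):
    """Return (mean, std) using the population standard deviation (ddof=0)."""
    mean = sum(column) / len(column)
    var = sum((x - mean) ** 2 for x in column) / len(column)
    std = math.sqrt(var)
    return mean, (std if std > 0 else 1.0)  # constant columns are left unscaled


def transform(column, mean, std):
    """Standardize a numeric column with previously fitted statistics."""
    return [(x - mean) / std for x in column]
```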

The preprocessed dataset is stored in CSV format, containing selected features, labels, and metadata, ensuring interoperability across pipeline components. This preprocessing approach ensures the data is clean, representative, and optimized for anomaly detection, threat classification, and countermeasure generation.

3.3. Variational Autoencoder for Anomaly Detection

The VAE serves as the initial stage in the generative AI pipeline, conducting unsupervised anomaly detection on smart home IoT traffic. It learns a probabilistic representation of normal traffic patterns to identify deviations that signal potential threats, particularly useful for zero-day attack detection.

3.3.1. Encoder–Latent–Decoder Structure

The VAE architecture comprises an encoder, a 6-dimensional latent space, and a decoder, implemented as a neural network (see Figure 2). The encoder maps input features (e.g., duration, resp_bytes, proto) to the latent space through dense layers with dimensions [128, 64, 32]. The latent space is parameterized by mean and variance vectors that define a Gaussian distribution from which latent codes are sampled. The decoder reconstructs the input from the latent representation through mirrored layers [32, 64, 128]. This structure balances model complexity and efficiency.
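Sampling from the Gaussian defined by the mean and variance vectors is usually implemented with the reparameterization trick, z = μ + σ ⊙ ε with ε ~ N(0, I), which keeps the sampling step differentiable with respect to μ and σ during training. The sketch below assumes the encoder outputs a log-variance vector (a common convention that the text does not state) and uses plain Python lists for the 6-dimensional latent space. In a deep learning framework, the same expression would operate on tensors.

```python
import math
import random

LATENT_DIM = 6  # latent size stated in the text


def reparameterize(mu, logvar, rng=random):
    """Draw z = mu + sigma * eps, with sigma = exp(0.5 * logvar) and eps ~ N(0, 1)."""
    if len(mu) != LATENT_DIM or len(logvar) != LATENT_DIM:
        raise ValueError("mu and logvar must both have LATENT_DIM entries")
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]
```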

3.3.2. Activation Functions and Loss Function

Hidden layers use Leaky ReLU activations for non-linearity, with batch normalization to stabilize training and a dropout rate of 0.2 to prevent overfitting. The loss function combines reconstruction and regularization terms:

$$\mathcal{L}(x) = \lVert x - \hat{x} \rVert_2^2 + \beta \, D_{\mathrm{KL}}\big(q_\phi(z \mid x) \,\Vert\, p(z)\big)$$

where $x$ is the input, $\hat{x}$ is the reconstructed output, $q_\phi(z \mid x)$ is the encoder’s distribution, $p(z) = \mathcal{N}(0, I)$ is a standard normal prior, and $\beta$ weights the KL-divergence for latent space regularization.
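For a diagonal Gaussian encoder and a standard normal prior, the KL term in this loss has a closed form, D_KL = −½ Σ (1 + log σ² − μ² − σ²). The sketch below evaluates the loss for a single sample under two assumptions: the reconstruction term is a sum of squared errors (consistent with the MSE used for scoring), and the encoder is parameterized by a log-variance vector. The text does not give the value of β, so it is left as a parameter.

```python
import math


def kl_to_standard_normal(mu, logvar):
    """Closed-form KL( N(mu, diag(exp(logvar))) || N(0, I) )."""
    return -0.5 * sum(1 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def vae_loss(x, x_hat, mu, logvar, beta=1.0):
    """Squared reconstruction error plus beta-weighted KL regularizer."""
    reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return reconstruction + beta * kl_to_standard_normal(mu, logvar)
```

When the posterior equals the prior (μ = 0, log σ² = 0), the KL term vanishes and the loss reduces to the reconstruction error.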

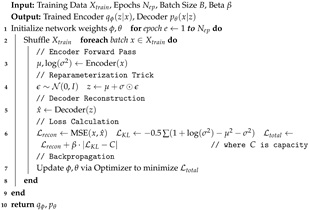

3.3.3. Training on Benign Data Only

The VAE is trained on 1,000,000 benign samples from the Aposemat IoT-23 dataset to capture normal traffic patterns. Training employs an optimizer with a learning rate of 1 × 10⁻⁴, a weight decay of 1 × 10⁻⁶, and a batch size of 256 over 100 epochs, as shown in Algorithm 2 and Table 2. A validation set of 100,000 benign samples is used to monitor convergence. The contamination parameter is set to 0.01, reflecting the expected proportion of anomalies, and a capacity parameter of 0.1 controls model complexity.
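One common convention in outlier-detection tooling converts a contamination rate into a decision threshold by taking the (1 − contamination) quantile of the anomaly scores observed on training data. With contamination = 0.01, roughly 1% of benign training samples would then exceed the threshold. Whether the authors' implementation uses exactly this rule is an assumption. The sketch below implements the quantile by linear interpolation (the same rule as NumPy's default) and is purely illustrative.

```python
def quantile(values, q):
    """Linear-interpolation quantile, 0 <= q <= 1 (NumPy's default rule)."""
    ordered = sorted(values)
    pos = q * (len(ordered) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(ordered) - 1)
    frac = pos - lo
    return ordered[lo] + (ordered[hi] - ordered[lo]) * frac


def threshold_from_contamination(train_scores, contamination=0.01):
    """Score above which roughly `contamination` of the training scores fall."""
    return quantile(train_scores, 1.0 - contamination)
```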

| Algorithm 2: Variational Autoencoder (VAE) Training Procedure |

![Electronics 15 00092 i002 Electronics 15 00092 i002]() |

3.3.4. Threshold Selection for Anomaly Scoring

During inference, the VAE computes the Mean Squared Error (MSE) for each sample. A threshold is selected to classify samples as anomalous, determined using a validation set to prioritize high recall and minimize the number of missed threats. Anomalous samples are forwarded to the BERT module for classification.
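Selecting the threshold on a labeled validation set to favor recall can be sketched as a search over candidate cutoffs. The sketch keeps the largest threshold whose recall on known anomalies still meets a target, because a larger threshold admits fewer benign false positives. The target recall, the function name, and the use of observed scores as candidate cutoffs are illustrative assumptions; the text reports only the resulting cutoff (MSE > 0.26).

```python
def select_recall_threshold(scores, labels, target_recall=0.999):
    """Largest threshold t such that recall of the rule 'score > t' on anomalies >= target.

    scores: reconstruction errors (MSE) on the validation set.
    labels: 1 for known anomalies, 0 for benign samples.
    """
    anomaly_scores = [s for s, y in zip(scores, labels) if y == 1]
    if not anomaly_scores:
        raise ValueError("validation set needs at least one labeled anomaly")
    n = len(anomaly_scores)
    best = None
    # Try each distinct observed score as a cutoff, from lowest to highest.
    for t in sorted(set(scores)):
        detected = sum(1 for s in anomaly_scores if s > t)
        if detected / n >= target_recall:
            best = t
        else:
            break  # recall can only fall as the cutoff rises
    if best is None:
        # Even the smallest observed score is too strict; go just below it.
        best = min(scores) - 1e-12
    return best
```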

3.4. BERT-Based Threat Classification

The BERT model serves as the second stage of the generative AI pipeline, classifying anomalies flagged by the VAE into specific threat categories.

3.4.1. Role of the Benign Class and Hierarchical Conflict Resolution

The VAE is intentionally tuned for high sensitivity, using a low reconstruction error threshold (MSE > 0.26) chosen to prioritize recall, which resulted in a negligible missed-detection rate during evaluation. This configuration captures almost all potential threats (minimizing false negatives) at the cost of admitting more benign traffic as potential anomalies (false positives).

The BERT model serves two distinct purposes: (1) to classify the specific attack type of true anomalies, and (2) to act as a precision filter for the false positives generated by the VAE. In cases of contradictory predictions, where the VAE flags a sample as anomalous but BERT classifies it as “Benign”, the system prioritizes the supervised BERT model’s prediction: the sample is treated as a false positive from the unsupervised stage and is discarded from the countermeasure generation queue. This hierarchical approach combines the VAE’s ability to catch novel deviations with BERT’s contextual understanding to reduce false alarms. Conversely, samples classified as benign by the VAE bypass the BERT module entirely.
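The conflict-resolution rule can be written as a small decision function. The sketch below is illustrative only: the function name and the returned strings are hypothetical, and the BERT call is replaced by a precomputed label so that the routing logic can be read on its own.

```python
from typing import Optional

MSE_THRESHOLD = 0.26  # VAE cutoff reported in the text


def route(vae_mse: float, bert_label: Optional[str]) -> str:
    """Decide what happens to one sample under the hierarchical rule.

    bert_label is None when BERT has not (yet) been run on the sample.
    """
    if vae_mse <= MSE_THRESHOLD:
        return "benign: bypass BERT"
    if bert_label is None:
        return "pending: send to BERT"
    if bert_label == "Benign":
        return "discard: VAE false positive"  # supervised stage overrides the VAE
    return "forward to countermeasure generation"
```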

3.4.2. Input Format for BERT Classification

The BERT model processes preprocessed network traffic features from the Aposemat IoT-23 dataset, including categorical features (e.g., proto, service) and numerical features (e.g., duration, orig_bytes, resp_bytes). These features are transformed into tokenized sequences using the bert-base-uncased tokenizer from the Hugging Face transformers library [39]. Each sample is converted into a sequence of token IDs, attention masks, and token type IDs, suitable for BERT’s sequence classification task.
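The text does not specify how the numeric and categorical features are turned into a string before tokenization. One plausible scheme, shown below as an assumption, serializes each sample as `name: value` pairs in a fixed order. The resulting string would then be passed to the bert-base-uncased tokenizer, which returns `input_ids`, `token_type_ids`, and `attention_mask`. Only the serialization is executed here. The tokenizer call is left as a comment because it requires the transformers package and a model download, and its `max_length` value is a hypothetical choice.

```python
FEATURE_ORDER = ["proto", "service", "duration", "orig_bytes", "resp_bytes"]


def serialize(sample: dict) -> str:
    """Render one traffic sample as a fixed-order 'name: value' string."""
    parts = []
    for name in FEATURE_ORDER:
        value = sample.get(name)
        if isinstance(value, float):
            value = f"{value:.4g}"  # short form keeps the token count down
        parts.append(f"{name}: {value}")
    return " ; ".join(parts)


# With transformers installed, the string would be tokenized roughly as:
#   from transformers import AutoTokenizer
#   tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")
#   enc = tokenizer(serialize(sample), truncation=True,
#                   padding="max_length", max_length=64)
#   enc["input_ids"], enc["token_type_ids"], enc["attention_mask"]
```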

3.4.3. Number of Classes

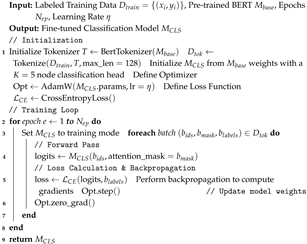

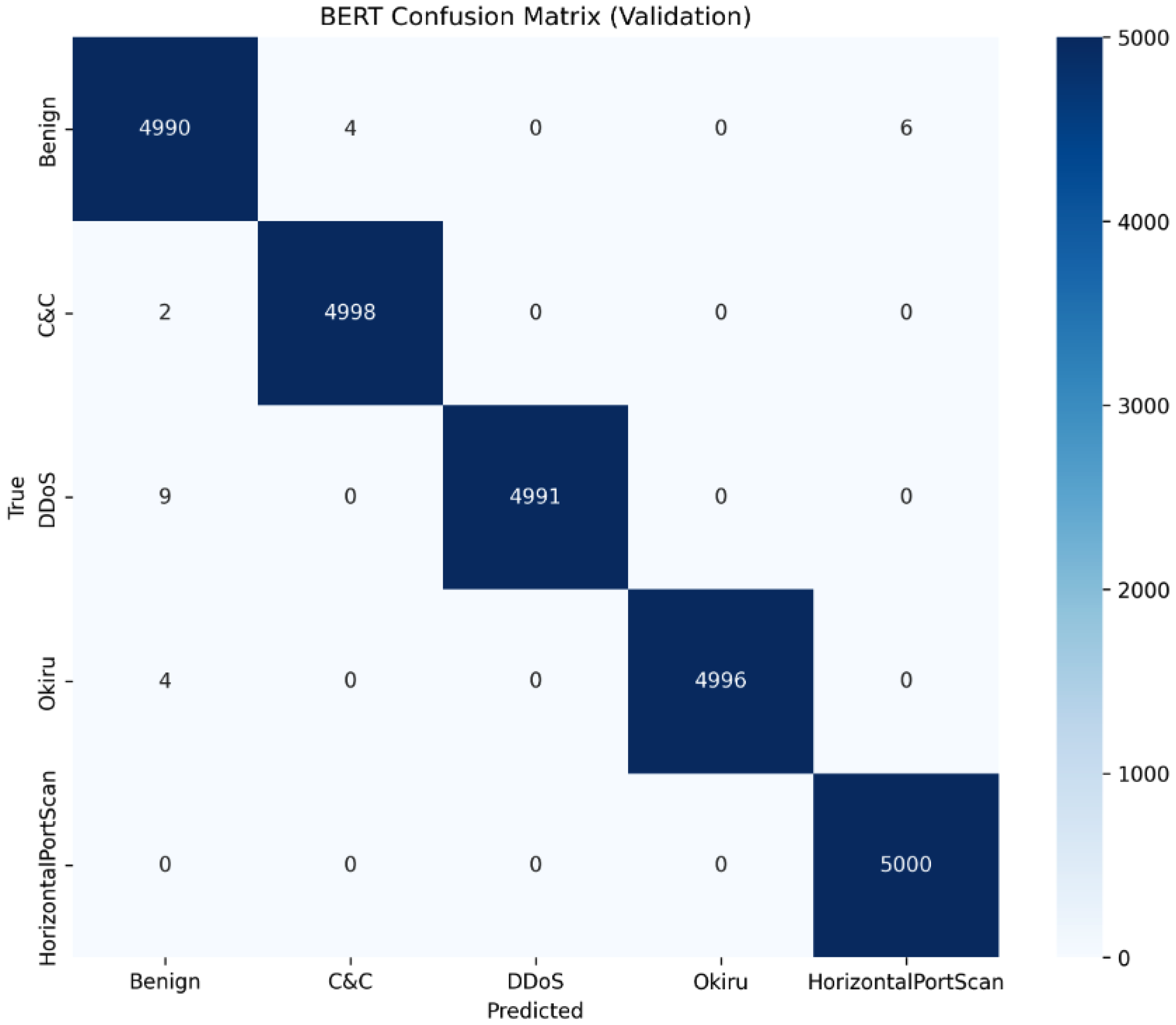

The pre-trained BERT model is fine-tuned for a 5-class classification task, corresponding to the threat categories: Benign (label 0), C&C (label 1), DDoS (label 2), Okiru (label 3), and Horizontal Port Scan (label 4), as illustrated in Algorithm 3 and Table 3.

Algorithm 3: BERT Fine-Tuning for Threat Classification.

3.4.4. Training, Validation, and Testing Split

The dataset is divided into training, validation, and testing sets for effective fine-tuning and evaluation. The training set has 150,000 samples (30,000 per class) for balanced representation across Benign, C&C, DDoS, Okiru, and Horizontal Port Scan. The validation set consists of 25,000 samples (5000 per class) for hyperparameter tuning and monitoring. The testing set includes 25,000 samples (5000 per class) to assess performance on unseen data.
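A per-class split that yields these balanced counts (30,000 / 5000 / 5000 per class) can be sketched as follows, using the label mapping from Section 3.4.3. The helper name and the fixed seed are illustrative assumptions. The sketch draws each class independently without replacement and slices the draw, so the three splits are disjoint and share the same class proportions.

```python
import random
from collections import defaultdict

LABELS = {"Benign": 0, "C&C": 1, "DDoS": 2, "Okiru": 3, "Horizontal Port Scan": 4}


def balanced_split(samples, n_train=30_000, n_val=5_000, n_test=5_000, seed=42):
    """samples: iterable of (features, class_name) pairs.

    Returns three lists of (features, label_id) pairs.
    """
    by_class = defaultdict(list)
    for features, name in samples:
        by_class[name].append(features)

    rng = random.Random(seed)
    need = n_train + n_val + n_test
    train, val, test = [], [], []
    for name, items in by_class.items():
        if len(items) < need:
            raise ValueError(f"class {name!r} has {len(items)} samples, needs {need}")
        picked = rng.sample(items, need)  # draw without replacement
        label = LABELS[name]
        train += [(f, label) for f in picked[:n_train]]
        val += [(f, label) for f in picked[n_train:n_train + n_val]]
        test += [(f, label) for f in picked[n_train + n_val:]]
    return train, val, test
```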

3.4.5. Fine-Tuning and Evaluation Setup

Fine-tuning adapts the pre-trained bert-base-uncased model by adding a classification head for the 5-class task, using a learning rate of 3 × 10⁻⁵ and a batch size of 32. Early stopping is triggered either after three epochs without improvement in validation loss or once accuracy exceeds 99% (see Table 3, Table 4, and Algorithm 4). The model is trained using the Hugging Face transformers library, with evaluation loss (eval_loss) monitored to select the best model checkpoint. The setup is designed to leverage BERT’s contextual understanding for accurate threat classification in IoT networks.
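The dual stopping rule (a patience of three epochs on validation loss, or an accuracy ceiling of 99%) can be sketched as a small stateful helper. The class name and interface are hypothetical. With the Hugging Face Trainer, the patience part corresponds to the built-in EarlyStoppingCallback, whereas the accuracy ceiling would need a custom callback.

```python
class DualEarlyStopping:
    """Stop when val loss stalls for `patience` epochs or val accuracy exceeds a ceiling."""

    def __init__(self, patience=3, accuracy_ceiling=0.99):
        self.patience = patience
        self.accuracy_ceiling = accuracy_ceiling
        self.best_loss = float("inf")
        self.epochs_without_improvement = 0

    def should_stop(self, val_loss: float, val_accuracy: float) -> bool:
        """Call once per epoch with that epoch's validation metrics."""
        if val_accuracy > self.accuracy_ceiling:
            return True
        if val_loss < self.best_loss:
            self.best_loss = val_loss  # a best-checkpoint save would go here
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience
```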

Algorithm 4: Model Training with Dual Early Stopping Strategy.

3.4.6. Classification Process

The BERT model transforms the tokenized input sequence into a probability distribution over the five threat classes. Given an input feature sequence $X$, comprising tokenized network traffic features, BERT processes $X$ through its transformer layers to produce a contextualized embedding $h$. A linear classification head, followed by a softmax function, maps this embedding to class probabilities:

$$\hat{y} = \mathrm{softmax}(W h + b)$$

where $W$ is the weight matrix, $h$ is the contextualized embedding, $b$ is the bias vector, and $\hat{y}$ represents the probability distribution over the classes (Benign, C&C, DDoS, Okiru, Horizontal Port Scan). This process ensures that BERT assigns each anomaly a threat label with an associated confidence score, facilitating precise classification for downstream countermeasure generation.
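The final step of the classification head can be illustrated numerically. The sketch below applies a numerically stable softmax to a vector of logits (standing in for W h + b) and reports the arg-max class with its probability as the confidence score. The logit values in the usage line are made up for illustration.

```python
import math

CLASSES = ["Benign", "C&C", "DDoS", "Okiru", "Horizontal Port Scan"]


def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(logits):
    """Return (label, confidence) for one sample."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return CLASSES[best], probs[best]


label, confidence = predict([0.2, -1.0, 0.5, 4.0, -0.3])
```

Subtracting the maximum logit leaves the probabilities unchanged but prevents overflow in `math.exp` for large logits.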

3.5. LLM-Based Countermeasure Generation

The final stage of the generative AI pipeline employs Grok 3, a transformer-based large language model developed by xAI, to generate context-specific countermeasures for cyber threats identified by the VAE and classified by BERT. Grok 3 leverages advanced natural language processing to produce actionable, human-readable mitigation strategies, addressing the limitations of static, rule-based countermeasures.

3.5.1. Prompt Engineering: Input Structure and Context

Grok 3 is driven by structured prompts that encapsulate comprehensive threat information, ensuring precise countermeasure generation. Each prompt includes:

A SYSTEM_PROMPT defining the requested behavior: “You are a cybersecurity expert tasked with generating specific and actionable countermeasures for network attacks based on the provided IoT smart home network traffic data.”

The VAE’s Mean Squared Error (MSE) reconstruction error, indicating anomaly severity.

The BERT classification label (e.g., Benign, C&C, DDoS, Okiru, Horizontal Port Scan) and confidence score, providing threat type and certainty.

Original sample features (e.g., duration, orig_bytes, resp_bytes, orig_ip_bytes, resp_ip_bytes, proto, service).

An example prompt is:

“Analyze the following network traffic sample: Duration: 3976 s, Original Bytes: 42,439, Original IP Bytes: 25,752.22, Response IP Bytes: 0.0, Service: http. It is classified as an Okiru attack with a VAE MSE reconstruction error of 0.222 and a BERT confidence of 1.0. Suggest specific countermeasures to mitigate this threat attack.”

Prompts are created using Python libraries (pandas, requests) and processed via xAI’s API with a temperature setting of 0 to prioritize determinism. Samples are processed in batches of five with a 1-s delay to manage API rate limits.
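Prompt assembly and rate-limited batching can be sketched with the standard library alone. The template below paraphrases the example prompt given above. The function names, the field names, and the `send` callable are hypothetical; `send` stands in for the HTTP request to xAI's API, whose endpoint and payload (including the temperature-0 setting) are not reproduced here.

```python
import time
from typing import Callable, Iterator, List

SYSTEM_PROMPT = (
    "You are a cybersecurity expert tasked with generating specific and actionable "
    "countermeasures for network attacks based on the provided IoT smart home "
    "network traffic data."
)


def build_prompt(sample: dict) -> str:
    """Assemble the user prompt from features, BERT label/confidence, and VAE MSE."""
    features = ", ".join(f"{k}: {v}" for k, v in sample["features"].items())
    return (
        f"Analyze the following network traffic sample: {features}. "
        f"Classification: {sample['label']} (BERT confidence {sample['confidence']}); "
        f"VAE MSE reconstruction error: {sample['mse']:.3f}. "
        "Suggest specific countermeasures to mitigate this threat."
    )


def run_batches(samples: List[dict], send: Callable[[str, str], str],
                batch_size: int = 5, delay_s: float = 1.0) -> Iterator[str]:
    """Send prompts in batches of `batch_size`, pausing `delay_s` between batches."""
    for start in range(0, len(samples), batch_size):
        for sample in samples[start:start + batch_size]:
            # `send` is expected to set temperature 0 in its request payload.
            yield send(SYSTEM_PROMPT, build_prompt(sample))
        if start + batch_size < len(samples):
            time.sleep(delay_s)  # crude client-side rate limiting
```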

3.5.2. Output Structure

Grok 3 generates countermeasures in a human-readable format, presenting them as lists of actionable recommendations tailored to the identified threat. For instance, for an Okiru attack, the output includes suggestions such as blocking infected IP addresses, implementing rate limiting, and enabling intrusion detection systems. These recommendations address the distinctive characteristics of each attack, such as botnet communications for Okiru and traffic flooding for DDoS.

3.5.3. Post-Processing or Formatting

Post-processing standardizes Grok 3’s outputs for usability:

Parsing: Text output is parsed to extract individual countermeasures, ensuring clarity and consistency.

Categorization: Countermeasures are grouped by threat type (e.g., Okiru, DDoS) based on similarity to create aggregated mitigation strategies.

Severity Assignment: Severity levels (Low, Medium, High) are assigned using the VAE MSE score (considering the deviation from the threshold), prioritizing actions for high-severity threats (e.g., immediate IP blocking) over monitoring for lower-severity ones.
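The parsing and severity steps can be sketched as follows. The parser assumes the model returns a numbered or bulleted list with one recommendation per line. The severity bands, expressed as ratios of the MSE to the 0.26 threshold, are hypothetical cut-offs chosen only for illustration, since the text does not state them.

```python
import re

THRESHOLD = 0.26
# A line such as "1. Block IP", "2) Rate limit", "- Enable IDS" or "• Patch firmware".
BULLET = re.compile(r"^\s*(?:\d+[.)]|[-*•])\s+(.*\S)\s*$")


def parse_countermeasures(text: str) -> list:
    """Extract one recommendation per numbered or bulleted line."""
    items = []
    for line in text.splitlines():
        match = BULLET.match(line)
        if match:
            items.append(match.group(1))
    return items


def severity(mse: float, threshold: float = THRESHOLD) -> str:
    """Map how far the MSE exceeds the threshold to a level (hypothetical bands)."""
    ratio = mse / threshold
    if ratio >= 3.0:
        return "High"
    if ratio >= 1.5:
        return "Medium"
    return "Low"
```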

Processed countermeasures are stored in CSV format with metadata (e.g., sample_id, prompt, attack_label) for integration into the pipeline and real-time deployment.

3.6. System Integration and Simulation Setup

3.6.1. Pipeline Execution Flow: Anomaly → Classification → Response

The pipeline processes network traffic data sequentially, as illustrated in Algorithm 5.

VAE Anomaly Detection: Preprocessed network traffic samples, including features like duration, orig_bytes, and proto, are fed into the VAE. The VAE, trained on benign samples, computes reconstruction errors (Mean Squared Error, MSE) and flags samples exceeding a predefined threshold as anomalous. These anomalous samples, along with their MSE scores, are passed to the BERT module.

BERT Threat Classification: The BERT model, fine-tuned for a 5-class classification task (Benign, C&C, DDoS, Okiru, Horizontal Port Scan), processes anomalous samples. It tokenizes input features and assigns threat labels with confidence scores, filtering out false positives. Classified malicious samples and their metadata are forwarded to Grok 3.

Grok 3 Countermeasure Generation: Grok 3 receives structured prompts containing the VAE’s MSE score, BERT’s threat label, confidence score, and original sample features. It generates context-specific countermeasures, stored in CSV format with metadata (e.g., sample_id, attack_label). The pipeline is implemented in Python, using libraries such as pandas for data handling and requests for API interactions with Grok 3, ensuring seamless data flow across components.

The data is stored as CSV files for interoperability and processed sequentially to ensure each stage builds on the previous one’s output.
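The sequential flow, with CSV as the hand-off format between stages, can be sketched with the standard library. The three model calls are passed in as plain functions (hypothetical stand-ins for the trained VAE, the fine-tuned BERT model, and the Grok 3 request). In-memory CSV text keyed by illustrative file names replaces the files on disk so that the example runs on its own.

```python
import csv
import io
from typing import Callable, Dict, List, Tuple

Row = Dict[str, str]


def to_csv(rows: List[Row]) -> str:
    """Serialize rows that share the same keys to CSV text."""
    if not rows:
        return ""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def from_csv(text: str) -> List[Row]:
    return list(csv.DictReader(io.StringIO(text))) if text else []


def run_pipeline(samples: List[Row],
                 vae_mse: Callable[[Row], float],
                 bert: Callable[[Row], Tuple[str, float]],
                 grok: Callable[[Row], str],
                 threshold: float = 0.26) -> Dict[str, str]:
    """Run VAE -> BERT -> LLM, keeping each stage's output as CSV text."""
    files: Dict[str, str] = {}

    # Stage 1: keep samples whose reconstruction error exceeds the threshold.
    flagged = []
    for row in samples:
        mse = vae_mse(row)
        if mse > threshold:
            flagged.append({**row, "mse": f"{mse:.4f}"})
    files["anomalies.csv"] = to_csv(flagged)

    # Stage 2: classify anomalies; a BERT "Benign" label overrides the VAE flag.
    classified = []
    for row in from_csv(files["anomalies.csv"]):
        label, confidence = bert(row)
        if label != "Benign":
            classified.append({**row, "attack_label": label,
                               "confidence": f"{confidence:.3f}"})
    files["threats.csv"] = to_csv(classified)

    # Stage 3: generate countermeasures for confirmed threats only.
    responses = [{"sample_id": row["sample_id"], "attack_label": row["attack_label"],
                  "countermeasures": grok(row)}
                 for row in from_csv(files["threats.csv"])]
    files["countermeasures.csv"] = to_csv(responses)
    return files
```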

Algorithm 5: Generative AI Threat Detection and Response Pipeline.

3.6.2. Simulation Setup

The pipeline’s performance is evaluated through a simulation using 10,000 network traffic sessions from the Aposemat IoT-23 dataset, which includes a mix of benign and malicious traffic (C&C, DDoS, Okiru, Horizontal Port Scan). The simulation processes samples sequentially through the VAE, BERT, and Grok 3 to assess the functionality of each component. Data handling is optimized with intermediate outputs stored in CSV files for traceability.

3.6.3. Evaluation Strategy for End-to-End Performance

The end-to-end performance is assessed using quantitative and qualitative metrics to evaluate detection, classification, and response effectiveness:

Quantitative Metrics: Detection rate (proportion of malicious samples correctly identified and classified), false positive rate (benign samples incorrectly flagged or misclassified), and classification accuracy.

Qualitative Metrics: Countermeasure quality, assessed for relevance (specificity to the threat), alignment (with IoT security best practices [23,24,25,26,27,28]), and actionability (feasibility in smart home settings).

The evaluation utilizes a test set of 10,000 samples to ensure a robust assessment across diverse threat scenarios, with results validated through both statistical and qualitative analysis.
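The quantitative metrics can be computed from per-sample outcomes, as sketched below. Following the definitions above, a malicious sample counts toward the detection rate only if the VAE flags it and BERT assigns the correct attack label. A benign sample counts toward the VAE false positive rate if the VAE flags it, and toward the post-BERT false positive rate if it also receives a non-benign label. The record layout is a hypothetical assumption, and the sketch does not reproduce the reported figures.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Outcome:
    true_label: str            # ground truth, e.g. "Benign" or "DDoS"
    vae_flagged: bool          # True if the MSE exceeded the threshold
    bert_label: Optional[str]  # None when the VAE did not flag the sample


def evaluate(outcomes: List[Outcome]) -> dict:
    malicious = [o for o in outcomes if o.true_label != "Benign"]
    benign = [o for o in outcomes if o.true_label == "Benign"]
    classified = [o for o in outcomes if o.bert_label is not None]

    detected = sum(1 for o in malicious if o.vae_flagged and o.bert_label == o.true_label)
    vae_fp = sum(1 for o in benign if o.vae_flagged)
    pipeline_fp = sum(1 for o in benign
                      if o.vae_flagged and o.bert_label not in (None, "Benign"))
    correct = sum(1 for o in classified if o.bert_label == o.true_label)

    def ratio(num, den):
        return num / den if den else 0.0

    return {
        "detection_rate": ratio(detected, len(malicious)),
        "vae_fpr": ratio(vae_fp, len(benign)),
        "pipeline_fpr": ratio(pipeline_fp, len(benign)),
        "classification_accuracy": ratio(correct, len(classified)),
    }
```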

5. Conclusions and Future Work

This study presents a novel generative AI pipeline for enhancing smart home IoT security, including a VAE model for unsupervised anomaly detection, a fine-tuned BERT model for threat classification, and Grok 3 for automated countermeasure generation. Evaluated on the Aposemat IoT-23 dataset, the pipeline achieves a 99.95% detection rate, a 10.54% VAE false positive rate (FPR), and a 0.04% post-BERT FPR, with BERT classification accuracy of 99.90%. The VAE’s high recall (99.9%) ensures robust anomaly detection, while BERT’s precise classification minimizes false positives and Grok 3’s countermeasures provide adaptive, threat-specific responses. These results outperform traditional static intrusion detection systems [40], addressing critical gaps in IoT security by enabling real-time, scalable threat detection and mitigation.

The pipeline’s preprocessing, leveraging LightGBM imputation and PCA (92% variance retention), ensures data quality, supporting the models’ high performance. The sequential architecture (VAE → BERT → Grok 3) creates a cohesive framework, validated through comprehensive simulations and metrics.

Future work should focus on model compression for scalability, local deployment of Grok 3 to reduce API latency, and testing on real-world IoT datasets for improved generalizability. Assessing the operational impact of deploying the generated countermeasures would further strengthen the evaluation. The proposed solution has broader applications, including industrial IoT and the protection of critical infrastructure.