BERTSC: A Multi-Modal Fusion Framework for Stablecoin Phishing Detection Based on Graph Convolutional Networks and Soft Prompt Encoding

Abstract

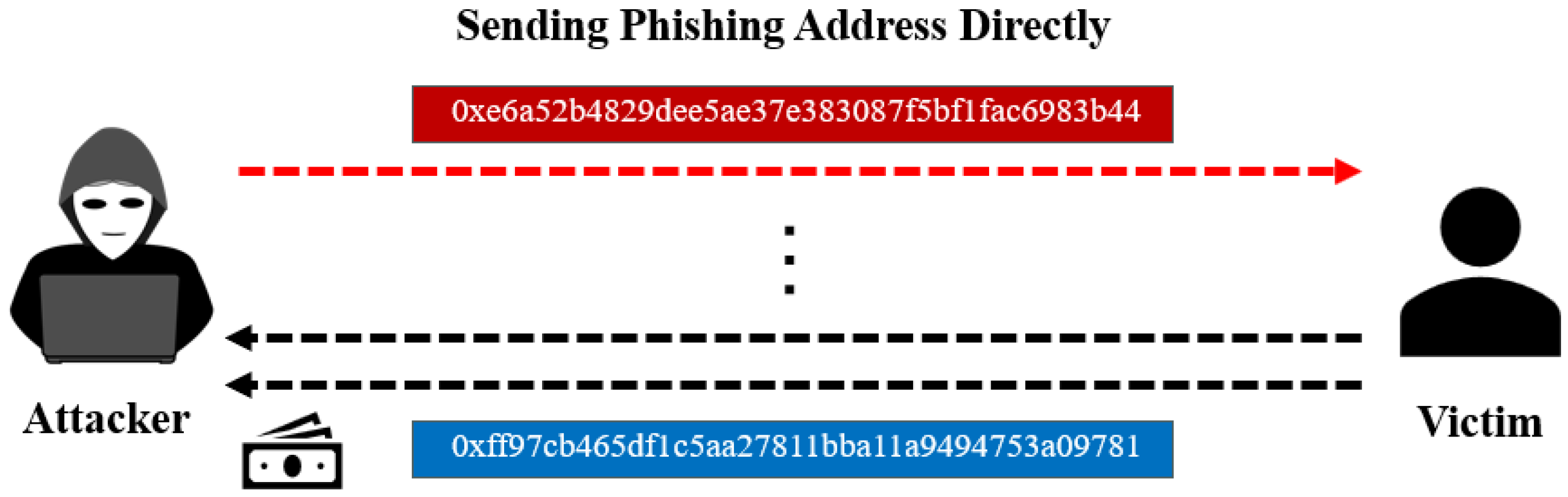

1. Introduction

- Stablecoin phishing detection framework built on a multi-edge heterogeneous graph: This work constructs a directed multi-edge heterogeneous graph of global account interactions and feeds multi-dimensional edge attributes (transaction amount, time difference between transactions, and GasPrice) directly into the Graph Convolutional Network. A soft prompt encoder additionally maps numerical interaction priors into learnable vectors that take part in the multi-modal fusion.

- Hierarchical dynamic three-way gating for iterative multi-modal fusion: A three-way gating (tri-gate) algorithm integrates semantic embeddings, graph topology, and soft-prompt features. Within an iterative fusion architecture, the fused representation is refined by re-integrating the initial semantic and structural cues, with the aim of improving detection reliability and feature expressiveness.

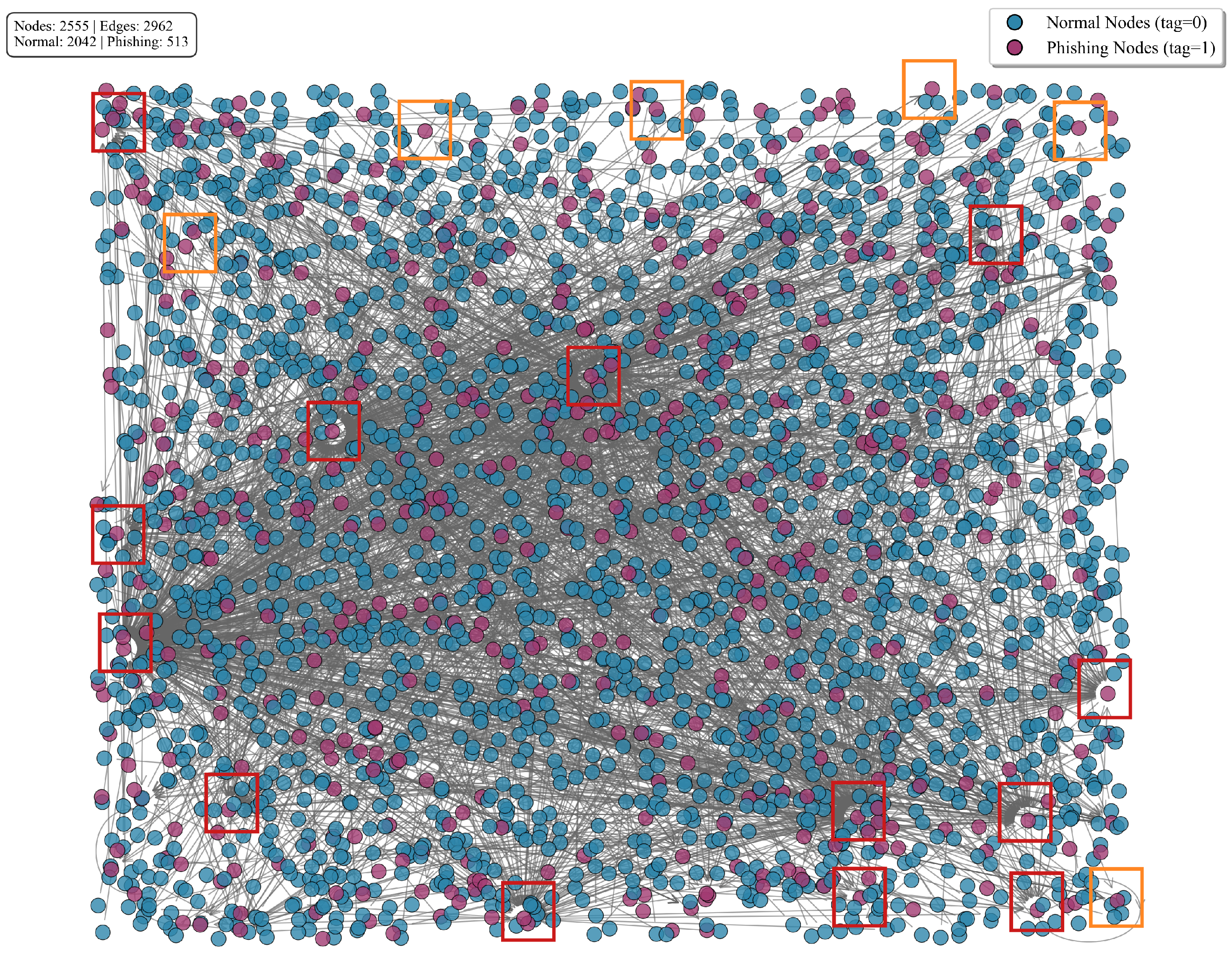

- Real-world dataset construction and empirical validation: A large-scale stablecoin transaction dataset is curated, comprising over 2.5 million nodes, 13 million directed edges with rich attributes, and 1766 phishing nodes. In the comparative evaluation, BERTSC reaches a precision of 89.90%, which is 4.96 percentage points above the strongest baseline (ETH-GBERT, 84.94%), indicating its robustness in detecting phishing scams within decentralized financial ecosystems.
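
The three-way gating named in the contributions above can be illustrated with a minimal sketch. It is not the authors' Algorithm 2: the scalar softmax gates, the `gate_weights` parameters, the number of rounds, and all function names are assumptions made for illustration, and the three input vectors are assumed to be already projected to a common dimension.

```python
import math
from typing import List, Sequence

Vector = List[float]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of gate scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def three_way_gate(inputs: Sequence[Vector], gate_weights: Sequence[Vector]) -> Vector:
    """Fuse three equally sized vectors with softmax-normalised scalar gates.

    Assumption: each gate score is a dot product between an input and its own
    (hypothetical) learned weight vector.
    """
    gates = softmax([dot(w, x) for w, x in zip(gate_weights, inputs)])
    dim = len(inputs[0])
    return [sum(g * x[i] for g, x in zip(gates, inputs)) for i in range(dim)]


def iterative_fusion(semantic: Vector, structural: Vector, prompt: Vector,
                     gate_weights: Sequence[Vector], rounds: int = 2) -> Vector:
    """First fuse all three modalities, then re-integrate the initial
    semantic and structural vectors with the current fused vector."""
    fused = three_way_gate([semantic, structural, prompt], gate_weights)
    for _ in range(rounds - 1):
        fused = three_way_gate([semantic, structural, fused], gate_weights)
    return fused


if __name__ == "__main__":
    zero_gates = [[0.0, 0.0]] * 3  # untrained gates give equal weighting
    print(iterative_fusion([1.0, 0.0], [0.0, 1.0], [1.0, 1.0], zero_gates))
```

Because the gates sum to one, every fused component is a convex combination of the corresponding input components; a trained model would learn the gate parameters jointly with the classifier.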

2. Related Work

2.1. Multi-Semantic Perception Approaches Based on DeepWalk

2.2. Approaches Based on Graph Neural Networks

2.3. Fraud Detection Approaches Based on Time Series Analysis

3. Methods

3.1. Data Acquisition and Preprocessing

3.2. Graph Convolutional Network Feature Extraction

3.2.1. Graph Data Generation Based on Adjacency Matrices

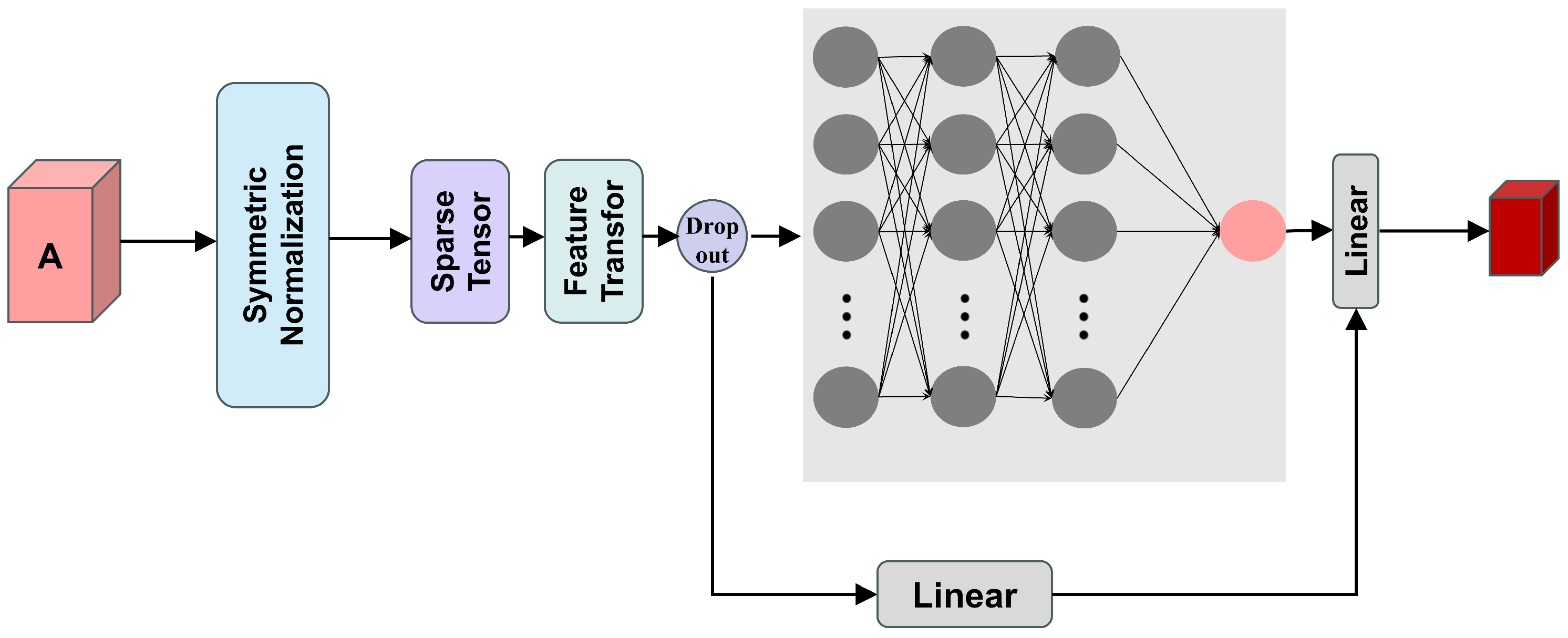

3.2.2. Graph-Based Representation Module

3.3. BERT Semantic Information Module

3.4. Soft Prompt Encoder Based on Account Interaction Features

Algorithm 1 Vocab Graph Convolution (Soft Prompt Encoder)

Account Interaction Feature Extraction

3.5. Three-Way Gate Control Mechanism

3.5.1. Gate-Controlled Network Design

Algorithm 2 GCN-BERT-SoftPrompt Fusion Mechanism (Gating)

3.5.2. Feature Fusion

3.6. Classification Module

4. Experimental Evaluation

4.1. Experimental Techniques and Evaluation Metrics

4.2. Comparative Analysis

- Graph embedding methods based on random walks include DeepWalk [12], Trans2Vec [13], Diff2Vec [17], and Role2Vec [18]. DeepWalk generates node sequences through random walks on the graph and uses a skip-gram model to learn low-dimensional node representations; it is a classic approach to graph embedding. Trans2Vec biases walks towards the semantic and temporal weights of transaction relationships. Diff2Vec extracts sequences from diffusion subgraphs for representation learning. Role2Vec assigns nearby representations to nodes with similar functions (roles).

- Graph neural network-based methods include GCN [7], GAT [26], and GSAGE [31]. GCN employs graph convolution operations for message propagation, updating node representations by aggregating neighbouring node information. GAT introduces an attention mechanism that computes weights between nodes, so that the importance of each neighbour is learned adaptively. GSAGE (GraphSAGE) adopts a sampling-and-aggregation strategy that scales to large graphs and supports inductive learning.

- Mainstream baseline models include BERT4ETH [32], ETH-GBERT [27], TGN [33], and TLMG4Eth [34]. BERT4ETH applies the BERT model to Ethereum transaction data, learning semantic representations of transaction sequences through a pre-trained language model. ETH-GBERT is a hybrid model that combines graph neural networks with BERT, enhancing BERT's semantic understanding with graph structural information. TGN is a generic framework for dynamic graphs represented as sequences of timed events; it combines memory modules with graph-based operators to capture temporal dynamics. TLMG4Eth integrates a transaction language model with graph representation learning, fusing semantic embeddings from transaction sentences with similarity and structural features for Ethereum fraud detection.
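
To make the neighbourhood aggregation performed by the GCN baseline concrete, the following is a minimal sketch of a single graph convolution layer using the symmetric normalisation of [7], i.e. ReLU(D^{-1/2}(A + I)D^{-1/2} H W). The function names and the dense list-of-lists matrices are illustrative assumptions; the sketch treats the adjacency matrix as symmetric and is not the configuration used in the experiments.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Dense matrix product for small illustrative inputs."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def gcn_layer(adj: Matrix, features: Matrix, weight: Matrix) -> Matrix:
    """One GCN layer: add self-loops, normalise symmetrically, aggregate, ReLU."""
    n = len(adj)
    # A + I: every node also keeps its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    degree = [sum(row) for row in a_hat]
    # D^{-1/2} (A + I) D^{-1/2}
    norm = [[a_hat[i][j] / math.sqrt(degree[i] * degree[j]) for j in range(n)]
            for i in range(n)]
    hidden = matmul(matmul(norm, features), weight)
    return [[max(0.0, value) for value in row] for row in hidden]


if __name__ == "__main__":
    adjacency = [[0.0, 1.0], [1.0, 0.0]]   # two mutually connected accounts
    node_features = [[1.0], [3.0]]
    identity_weight = [[1.0]]
    print(gcn_layer(adjacency, node_features, identity_weight))
```

With two connected nodes and an identity weight, both nodes end up with the average of the two input features, which is the smoothing effect that message propagation is meant to provide.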

4.3. Ablation Study

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Pal, A.; Tiwari, C.K.; Behl, A. Blockchain technology in financial services: A comprehensive review of the literature. J. Glob. Oper. Strateg. Sourc. 2021, 14, 61–80.
2. Bhowmik, M.; Chandana, T.S.S.; Rudra, B. Comparative study of machine learning algorithms for fraud detection in blockchain. In Proceedings of the 2021 5th International Conference on Computing Methodologies and Communication (ICCMC), Erode, India, 8–10 April 2021; pp. 539–541.
3. Wenhua, Z.; Qamar, F.; Abdali, T.-A.N.; Hassan, R.; Jafri, S.T.A.; Nguyen, Q.N. Blockchain technology: Security issues, healthcare applications, challenges and future trends. Electronics 2023, 12, 546.
4. Mita, M.; Ito, K.; Ohsawa, S.; Tanaka, H. What is stablecoin? A survey on price stabilization mechanisms for decentralized payment systems. In Proceedings of the 2019 8th International Congress on Advanced Applied Informatics (IIAI-AAI), Toyama, Japan, 7–11 July 2019; pp. 60–66.
5. Aldasoro, I.; Frost, J.; Lim, S.H.; Perez-Cruz, F.; Shin, H.S. An Approach to Anti-Money Laundering Compliance for Cryptoassets; Bank for International Settlements: Basel, Switzerland, 2025.
6. Givargizov, I. Unstable Financial and Economic Factors in the World and Their Influence on the Development of Blockchain Technologies. Int. Humanit. Univ. Her. Econ. Manag. 2023, 55.
7. Kipf, T.N. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.
8. Béres, F.; Seres, I.A.; Benczúr, A.A.; Quintyne-Collins, M. Blockchain is watching you: Profiling and deanonymizing ethereum users. In Proceedings of the 2021 IEEE International Conference on Decentralized Applications and Infrastructures (DAPPS), Online, 23–26 August 2021; pp. 69–78.
9. Ancelotti, A.; Liason, C. Review of blockchain application with graph neural networks, graph convolutional networks and convolutional neural networks. arXiv 2024, arXiv:2410.00875.
10. Mahrous, A.; Caprolu, M.; Di Pietro, R. Stablecoins: Fundamentals, Emerging Issues, and Open Challenges. arXiv 2025, arXiv:2507.13883.
11. Osterrieder, J.; Chan, S.; Chu, J.; Zhang, Y.; Misheva, B.H.; Mare, C. Enhancing security in blockchain networks: Anomalies, frauds, and advanced detection techniques. arXiv 2024, arXiv:2402.11231.
12. Perozzi, B.; Al-Rfou, R.; Skiena, S. Deepwalk: Online learning of social representations. In Proceedings of the 20th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, New York, NY, USA, 24–27 August 2014; pp. 701–710.
13. Wu, J.; Yuan, Q.; Lin, D.; You, W.; Chen, W.; Chen, C.; Zheng, Z. Who are the phishers? Phishing scam detection on ethereum via network embedding. IEEE Trans. Syst. Man Cybern. Syst. 2020, 52, 1156–1166.
14. Belghith, K.; Fournier-Viger, P.; Jawadi, J. Hui2Vec: Learning transaction embedding through high utility itemsets. In Proceedings of the International Conference on Big Data Analytics, Hyderabad, India, 19–22 December 2022; pp. 211–224.
15. Ahmed, U.; Srivastava, G.; Lin, J.C.-W. A federated learning approach to frequent itemset mining in cyber-physical systems. J. Netw. Syst. Manag. 2021, 29, 42.
16. Luo, J.; Qin, J.; Wang, R.; Li, L. A phishing account detection model via network embedding for Ethereum. IEEE Trans. Circuits Syst. II Express Briefs 2023, 71, 622–626.
17. Rozemberczki, B.; Sarkar, R. Fast sequence-based embedding with diffusion graphs. In Proceedings of the International Workshop on Complex Networks, Santiago de Compostela, Spain, 11–13 December 2018; pp. 99–107.
18. Ahmed, N.K.; Rossi, R.; Lee, J.B.; Willke, T.L.; Zhou, R.; Kong, X.; Eldardiry, H. Learning role-based graph embeddings. arXiv 2018, arXiv:1802.02896.
19. Shen, J.; Zhou, J.; Xie, Y.; Yu, S.; Xuan, Q. Identity inference on blockchain using graph neural network. In Proceedings of the International Conference on Blockchain and Trustworthy Systems, Guangzhou, China, 8–10 December 2021; pp. 3–17.
20. Huang, H.; Zhang, X.; Wang, J.; Gao, C.; Li, X.; Zhu, R.; Ma, Q. PEAE-GNN: Phishing detection on Ethereum via augmentation ego-graph based on graph neural network. IEEE Trans. Comput. Soc. Syst. 2024, 11, 4326–4339.
21. Zhou, J.; Hu, C.; Chi, J.; Wu, J.; Shen, M.; Xuan, Q. Behavior-aware account de-anonymization on Ethereum interaction graph. IEEE Trans. Inf. Forensics Secur. 2022, 17, 3433–3448.
22. Chen, Z.; Liu, S.-Z.; Huang, J.; Xiu, Y.-H.; Zhang, H.; Long, H.-X. Ethereum phishing scam detection based on data augmentation method and hybrid graph neural network model. Sensors 2024, 24, 4022.
23. Farrugia, S.; Ellul, J.; Azzopardi, G. Detection of illicit accounts over the Ethereum blockchain. Expert Syst. Appl. 2020, 150, 113318.
24. Hu, T.; Liu, X.; Chen, T.; Zhang, X.; Huang, X.; Niu, W.; Lu, J.; Zhou, K.; Liu, Y. Transaction-based classification and detection approach for Ethereum smart contract. Inf. Process. Manag. 2021, 58, 102462.
25. Li, S.; Gou, G.; Liu, C.; Hou, C.; Li, Z.; Xiong, G. TTAGN: Temporal transaction aggregation graph network for ethereum phishing scams detection. In Proceedings of the ACM Web Conference 2022, Virtual Event, 25–29 April 2022; pp. 661–669.
26. Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; Bengio, Y. Graph attention networks. arXiv 2017, arXiv:1710.10903.
27. Sheng, Z.; Song, L.; Wang, Y. Dynamic Feature Fusion: Combining Global Graph Structures and Local Semantics for Blockchain Phishing Detection. IEEE Trans. Netw. Serv. Manag. 2025; in press.
28. Zhang, J.; Sui, H.; Sun, X.; Ge, C.; Zhou, L.; Susilo, W. GrabPhisher: Phishing scams detection in Ethereum via temporally evolving GNNs. IEEE Trans. Serv. Comput. 2024, 17, 3727–3741.
29. Pan, B.; Stakhanova, N.; Zhu, Z. Ethershield: Time-interval analysis for detection of malicious behavior on ethereum. ACM Trans. Internet Technol. 2024, 21, 1–30.
30. LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.
31. Hamilton, W.; Ying, Z.; Leskovec, J. Inductive representation learning on large graphs. In Advances in Neural Information Processing Systems 30; Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2017.
32. Hu, S.; Zhang, Z.; Luo, B.; Lu, B.; He, S.; Liu, L. Bert4eth: A pre-trained transformer for ethereum fraud detection. In Proceedings of the ACM Web Conference 2023, Austin, TX, USA, 30 April–4 May 2023; pp. 2189–2197.
33. Rossi, E.; Chamberlain, B.; Frasca, F.; Eynard, D.; Monti, F.; Bronstein, M. Temporal graph networks for deep learning on dynamic graphs. arXiv 2020, arXiv:2006.10637.
34. Sun, J.; Jia, Y.; Wang, Y.; Tian, Y.; Zhang, S. Ethereum fraud detection via joint transaction language model and graph representation learning. Inf. Fusion 2025, 120, 103074.
35. Chawla, N.V.; Bowyer, K.W.; Hall, L.O.; Kegelmeyer, W.P. SMOTE: Synthetic minority over-sampling technique. J. Artif. Intell. Res. 2002, 16, 321–357.

| Type | Features |
|---|---|
| Node | Tag (phishing label or normal label) |
| Edge | Timestamp difference (time interval between consecutive transactions) |
| | Amount (transferred stablecoin value in USD equivalent) |
| | GasPrice (transaction fee rate in Gwei) |

| Attribute | Value |
|---|---|
| Time span | 2017–2025 |
| Number of nodes | 2,529,625 |
| Number of directed edges | 13,071,630 |
| Phishing nodes | 1,766 |
| Normal nodes | 2,527,859 |

| Processing Type | Description |
|---|---|
| Address Dictionary Construction | Unique address to integer ID mapping |
| | Transaction direction encoding (send = 1, receive = 0) |
| | Aggregation of historical transaction records per address |
| Transaction-Level Features | Timestamp difference between transactions (seconds) |
| | Transaction amount (in USD equivalent) |
| | GasPrice (in Gwei) |
| | Block number of the transaction |
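
The preprocessing steps listed in the table above can be sketched as follows. The record layout `Tx`, the field names in the per-address history, and the choice to set the first time difference of each address to zero are assumptions made for illustration, not details confirmed by the paper.

```python
from typing import Dict, List, Tuple

# Hypothetical record layout:
# (sender, receiver, unix_timestamp, amount_usd, gas_price_gwei, block_number)
Tx = Tuple[str, str, int, float, float, int]


def build_address_index(txs: List[Tx]) -> Dict[str, int]:
    """Map every unique address to a consecutive integer ID."""
    index: Dict[str, int] = {}
    for sender, receiver, *_ in txs:
        for address in (sender, receiver):
            index.setdefault(address, len(index))
    return index


def account_history(txs: List[Tx], index: Dict[str, int]) -> Dict[int, List[dict]]:
    """Aggregate chronologically ordered transaction records per address.

    direction: 1 if the address sent the transaction, 0 if it received it.
    time_diff: seconds since the previous record of the same address
               (0 for the first record, by assumption).
    """
    history: Dict[int, List[dict]] = {i: [] for i in index.values()}
    for sender, receiver, ts, amount, gas, block in sorted(txs, key=lambda t: t[2]):
        for address, direction in ((sender, 1), (receiver, 0)):
            records = history[index[address]]
            previous_ts = records[-1]["timestamp"] if records else ts
            records.append({
                "direction": direction,
                "time_diff": ts - previous_ts,
                "timestamp": ts,
                "amount": amount,
                "gas_price": gas,
                "block": block,
            })
    return history


if __name__ == "__main__":
    sample = [("0xA", "0xB", 100, 50.0, 20.0, 1),
              ("0xB", "0xA", 160, 10.0, 25.0, 2)]
    ids = build_address_index(sample)
    for account_id, records in account_history(sample, ids).items():
        print(account_id, [(r["direction"], r["time_diff"]) for r in records])
```

Each per-address record list can then be serialised into the transaction sequence consumed by the semantic module.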

| Parameter | Example Value |
|---|---|
| tag | 0 |
| Amount | 1000 |
| Send | 1 |
| 2-gram | 5.3 s |
| 3-gram | 10.25 s |
| 4-gram | 20.13 s |
| 5-gram | 60 s |

| Feature | Description |
|---|---|
| in_out_amount_ratio | Reflecting the account fund flows |
| out_in_amount_ratio | |
| in_out_count_ratio | Transaction frequency patterns |
| out_in_count_ratio | |
| avg_in_gasprice | Reflecting the transaction priority |
| avg_out_gasprice | |
| log_in_amount | Logarithmically transformed features |
| log_out_amount | |
| log_ratio_amount | |
| counterpart_diversity | Measuring the breadth of an account’s interactions with different addresses |
| is_high_in_out_ratio | Marking anomalous patterns of fund flows |
| is_sink_node | Determining whether the account is a sink (hub) node |
| is_source_node | Determining whether the account is a source node |
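
As an illustration of how the account interaction features in the table above might be derived, the sketch below computes all thirteen of them for one account. The exact formulas are assumptions: the smoothing constant `eps`, the use of `log1p`, the `high_ratio` threshold, and the definitions of sink (receives only) and source (sends only) nodes are not specified in the table.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical record layout: (sender, receiver, amount_usd, gas_price_gwei)
Tx = Tuple[str, str, float, float]


def interaction_features(account: str, txs: List[Tx],
                         eps: float = 1e-9,
                         high_ratio: float = 10.0) -> Dict[str, float]:
    """Compute per-account interaction features from a flat transaction list."""
    incoming = [t for t in txs if t[1] == account]
    outgoing = [t for t in txs if t[0] == account]
    in_amount = sum(t[2] for t in incoming)
    out_amount = sum(t[2] for t in outgoing)
    # Distinct addresses the account has interacted with, excluding itself.
    counterparts = {t[0] for t in incoming} | {t[1] for t in outgoing}
    counterparts.discard(account)
    in_out_amount_ratio = in_amount / (out_amount + eps)
    return {
        "in_out_amount_ratio": in_out_amount_ratio,
        "out_in_amount_ratio": out_amount / (in_amount + eps),
        "in_out_count_ratio": len(incoming) / (len(outgoing) + eps),
        "out_in_count_ratio": len(outgoing) / (len(incoming) + eps),
        "avg_in_gasprice": sum(t[3] for t in incoming) / len(incoming) if incoming else 0.0,
        "avg_out_gasprice": sum(t[3] for t in outgoing) / len(outgoing) if outgoing else 0.0,
        "log_in_amount": math.log1p(in_amount),
        "log_out_amount": math.log1p(out_amount),
        "log_ratio_amount": math.log1p(in_amount) - math.log1p(out_amount),
        "counterpart_diversity": float(len(counterparts)),
        "is_high_in_out_ratio": float(in_out_amount_ratio > high_ratio),
        "is_sink_node": float(bool(incoming) and not outgoing),
        "is_source_node": float(bool(outgoing) and not incoming),
    }


if __name__ == "__main__":
    transfers = [("a", "x", 100.0, 10.0), ("b", "x", 300.0, 30.0)]
    for name, value in interaction_features("x", transfers).items():
        print(f"{name}: {value:.4g}")
```

An account that only receives funds, as in the usage example, is flagged both as a sink node and as having a high in/out ratio under these assumed definitions.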

| Hyperparameter | Value |
|---|---|
| Optimizer | BertAdam |
| Learning rate | |
| Batch size | 16 |
| Epochs | 9 |
| Weight decay | 0.001 |
| Dropout rate | 0.2 |
| Warmup proportion | 0.1 |
| Max sequence length | 216 |
| GCN embedding dimension | 16 |
| Number of prompt tokens | 4 |
| Activation function | ReLU |
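
The "Number of prompt tokens" setting above suggests that each account's numerical interaction features are mapped to four prompt vectors. A minimal sketch of such a mapping is given below, assuming a single linear layer followed by tanh; the class name, the random initialisation, the tanh non-linearity, and `hidden_size=8` are illustrative assumptions (a real model would use the hidden size of its BERT encoder and learn the weights during training).

```python
import math
import random
from typing import List


class SoftPromptEncoder:
    """Map a numerical feature vector to `num_prompt_tokens` prompt vectors."""

    def __init__(self, num_features: int, num_prompt_tokens: int = 4,
                 hidden_size: int = 8, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.num_features = num_features
        self.num_prompt_tokens = num_prompt_tokens
        self.hidden_size = hidden_size
        out_dim = num_prompt_tokens * hidden_size
        scale = 1.0 / math.sqrt(num_features)
        # Untrained placeholder parameters; a trained model would learn these.
        self.weight = [[rng.uniform(-scale, scale) for _ in range(num_features)]
                       for _ in range(out_dim)]
        self.bias = [0.0] * out_dim

    def __call__(self, features: List[float]) -> List[List[float]]:
        if len(features) != self.num_features:
            raise ValueError("unexpected number of input features")
        flat = [math.tanh(sum(w * x for w, x in zip(row, features)) + b)
                for row, b in zip(self.weight, self.bias)]
        h = self.hidden_size
        # Split the flat projection into one vector per prompt token.
        return [flat[i * h:(i + 1) * h] for i in range(self.num_prompt_tokens)]


if __name__ == "__main__":
    encoder = SoftPromptEncoder(num_features=13)
    prompts = encoder([0.5] * 13)
    print(len(prompts), len(prompts[0]))
```

The resulting prompt vectors would typically be prepended to the token embeddings of the transaction sequence before they enter the language model.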

| Method | Precision (%) | Recall (%) | F1-Score (%) | ROC-AUC (%) | PR-AUC (%) | FPR (%) |
|---|---|---|---|---|---|---|
| DeepWalk | 30.07 | 46.63 | 36.56 | 45.58 | 38.65 | 76.93 |
| Trans2Vec | 49.17 | 52.36 | 49.58 | 57.53 | 50.76 | 63.60 |
| Diff2Vec | 51.84 | 67.10 | 58.43 | 57.95 | 52.42 | 49.84 |
| Role2Vec | 62.35 | 51.22 | 56.27 | 71.42 | 62.80 | 34.82 |
| GCN | 43.70 | 51.72 | 46.23 | 49.75 | 44.27 | 66.39 |
| GAT | 46.76 | 56.23 | 43.57 | 52.68 | 47.11 | 58.13 |
| GSAGE | 35.06 | 42.39 | 38.38 | 43.12 | 37.21 | 64.82 |
| TGN | 75.32 | 77.19 | 76.32 | 80.17 | 77.42 | 26.34 |
| TLMG4Eth | 75.14 | 84.24 | 79.43 | 91.31 | 82.26 | 14.79 |
| BERT4ETH | 78.58 | 75.67 | 77.04 | 91.97 | 80.79 | 16.42 |
| ETH-GBERT | 84.94 | 85.87 | 85.36 | 93.38 | 85.63 | 13.93 |
| BERTSC (ours) | 89.90 | 89.47 | 89.59 | 94.73 | 90.43 | 10.16 |
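
For reference, the threshold-based metrics reported above (precision, recall, F1-score, and false positive rate) follow from the confusion matrix as sketched below. The function name and the example counts are illustrative, not taken from the experiments, and the reported scores may additionally be averaged across classes, which this binary sketch does not do.

```python
from typing import Dict


def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Binary metrics for the positive (phishing) class, as fractions in [0, 1]."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    fpr = fp / (fp + tn) if fp + tn else 0.0  # normal accounts flagged as phishing
    return {"precision": precision, "recall": recall, "f1": f1, "fpr": fpr}


if __name__ == "__main__":
    # Illustrative counts only.
    scores = classification_metrics(tp=90, fp=10, tn=90, fn=10)
    print({name: round(100 * value, 2) for name, value in scores.items()})
```

ROC-AUC and PR-AUC are not covered here because they require the ranked prediction scores rather than a single confusion matrix.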

| Method | Precision (%) | Recall (%) | F1-Score (%) | ROC-AUC (%) | PR-AUC (%) | FPR (%) |
|---|---|---|---|---|---|---|
| BERT&SOFT | 86.10 | 86.13 | 86.11 | 93.52 | 86.15 | 10.29 |
| GCN&SOFT | 81.34 | 72.63 | 73.41 | 88.68 | 81.62 | 36.72 |
| GCN&BERT | 84.94 | 85.87 | 85.36 | 93.84 | 89.44 | 13.28 |
| BERTSC (ours) | 89.90 | 89.47 | 89.59 | 94.73 | 90.43 | 10.16 |

| Method | Precision (%) | Recall (%) | F1-Score (%) | ROC-AUC (%) | PR-AUC (%) | FPR (%) |
|---|---|---|---|---|---|---|
| Baseline | 84.94 | 85.87 | 85.36 | 93.38 | 85.63 | 13.93 |
| Only Weight | 86.75 | 87.72 | 87.19 | 94.27 | 86.39 | 12.70 |
| IF&W | 89.90 | 89.47 | 89.59 | 94.73 | 90.43 | 10.16 |

| Method | Precision (%) | Recall (%) | F1-Score (%) | ROC-AUC (%) | PR-AUC (%) | FPR (%) |
|---|---|---|---|---|---|---|
| Without SMOTE | 32.67 | 51.15 | 39.52 | 53.69 | 37.41 | 73.52 |
| I&W + SMOTE | 53.15 | 53.36 | 50.02 | 54.00 | 37.92 | 56.91 |
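
The SMOTE technique [35] referred to in the table above creates synthetic minority samples by interpolating between a minority sample and one of its nearest minority neighbours. The following is a simplified sketch of that interpolation step; the function name, the brute-force neighbour search, and the default parameters are assumptions for illustration and do not reproduce the experimental setup.

```python
import math
import random
from typing import List

Point = List[float]


def smote(minority: List[Point], n_new: int, k: int = 5, seed: int = 0) -> List[Point]:
    """Generate `n_new` synthetic points from minority-class samples."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    synthetic: List[Point] = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of the base point (brute force).
        neighbours = sorted((p for p in minority if p is not base),
                            key=lambda p: math.dist(base, p))[:k]
        neighbour = rng.choice(neighbours)
        lam = rng.random()  # interpolation factor in [0, 1)
        synthetic.append([b + lam * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


if __name__ == "__main__":
    phishing_features = [[0.0, 0.0], [1.0, 1.0], [0.0, 1.0]]
    for point in smote(phishing_features, n_new=3, k=2):
        print([round(v, 3) for v in point])
```

Every synthetic point lies on the line segment between two existing minority samples, so the method densifies the minority region without duplicating samples exactly.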

| Method | Precision (%) | Recall (%) | F1-Score (%) | ROC-AUC (%) | PR-AUC (%) | FPR (%) |
|---|---|---|---|---|---|---|
| Oversampling | 88.54 | 86.84 | 87.14 | 93.89 | 89.80 | 15.62 |
| Undersampling | 89.08 | 88.42 | 88.59 | 93.62 | 89.42 | 11.72 |
| Original (no sampling) | 89.90 | 89.47 | 89.59 | 94.73 | 90.43 | 10.16 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Xie, W.; Chen, Q.; Zhu, K.; Feng, C.; Chen, Z. BERTSC: A Multi-Modal Fusion Framework for Stablecoin Phishing Detection Based on Graph Convolutional Networks and Soft Prompt Encoding. Electronics 2026, 15, 179. https://doi.org/10.3390/electronics15010179
