Learning the N-Input Parity Function with a Single-Qubit and Single-Measurement Sampling

Abstract

1. Introduction: The Parity Problem

2. A Single-Qubit Classifier for the Parity Problem

Solution Landscape

3. Configuration of the Training Procedure

4. The ESGD Method: Towards an Optimization with Single Measurement Sampling

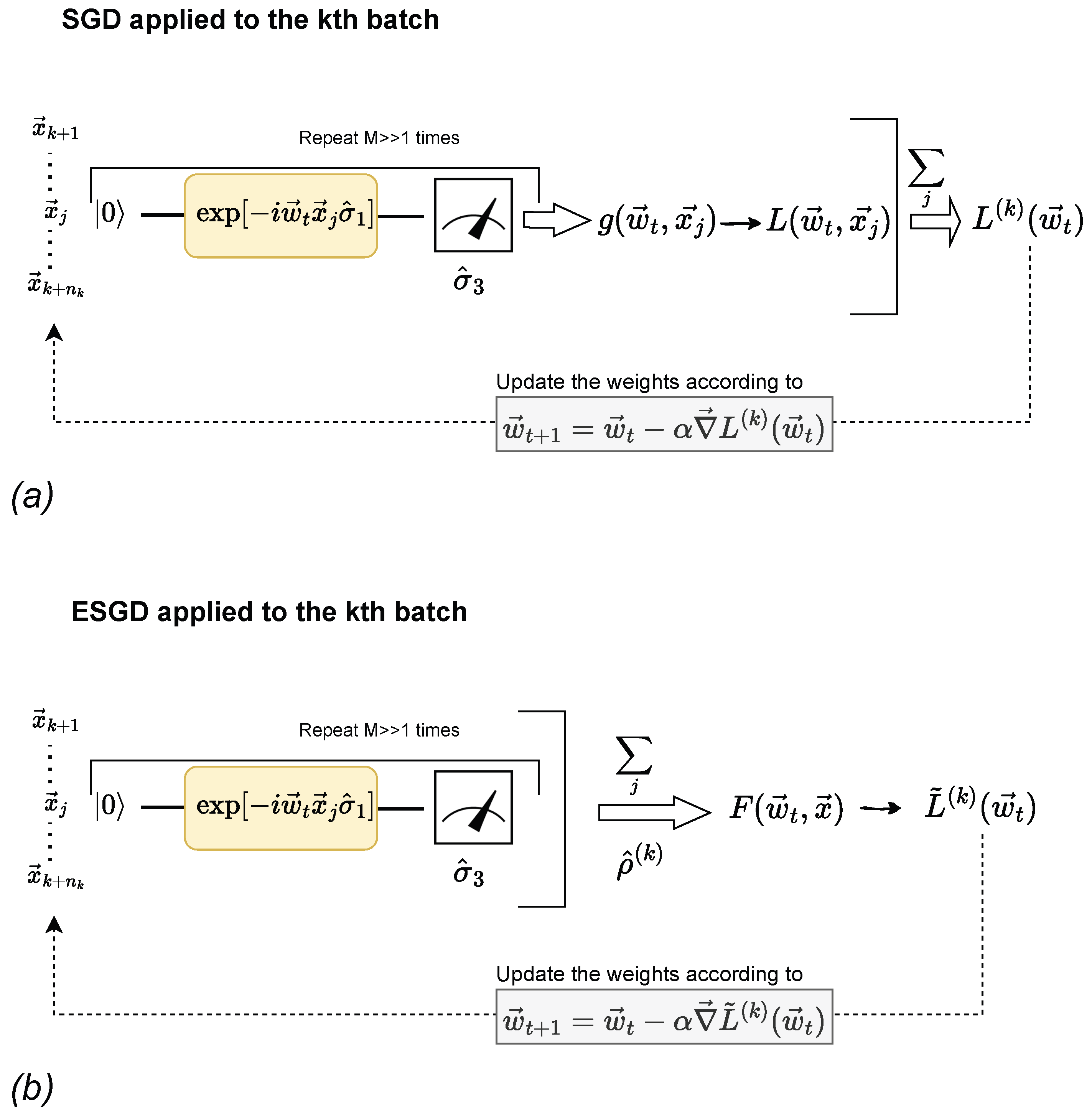

4.1. Application of the Stochastic Gradient Descent (SGD) Method

4.2. The Ensemble Stochastic Gradient Descent (ESGD) Method

4.3. The Doubly Stochastic Gradient Descent (DSGD) Method

4.4. Results of Optimization Methods

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ESGD | Ensemble Stochastic Gradient Descent |
| NN | Neural Network |
| VQC | Variational Quantum Circuit |
| SQC | Single-Qubit Classifier |
| GD | Gradient Descent |
| SGD | Stochastic Gradient Descent |
| DSGD | Doubly Stochastic Gradient Descent |

| N | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|
| | 0.97 | 0.97 | 0.8 | 0.7 | 0.6 |
| | 0.10 | 0.11 | 0.3 | 0.2 | 0.2 |
| | 0.96 | 0.94 | 0.6 | 0.34 | 0.16 |

| # epochs | 2 | 4 | 8 | 16 | 32 | 64 | 128 | 256 | 512 |
|---|---|---|---|---|---|---|---|---|---|
| | 0.7 | 0.7 | 0.6 | 0.6 | 0.7 | 0.58 | 0.56 | 0.50 | 0.55 |
| | 0.23 | 0.23 | 0.2 | 0.2 | 0.2 | 0.18 | 0.17 | 0.02 | 0.15 |
| | 0.24 | 0.28 | 0.28 | 0.24 | 0.32 | 0.16 | 0.12 | 0.0 | 0.10 |

| # epochs | 2 | 4 | 8 | 16 | 32 | 64 | 128 | 256 | 512 |
|---|---|---|---|---|---|---|---|---|---|
| | 0.70 | 0.86 | 0.97 | 0.96 | 0.997 | 0.998 | 0.998 | 0.97 | 0.98 |
| | 0.25 | 0.23 | 0.10 | 0.14 | 0.008 | 0.004 | 0.006 | 0.10 | 0.10 |
| | 0.40 | 0.72 | 0.84 | 0.72 | 0.96 | 0.96 | 0.92 | 0.84 | 0.96 |

| # epochs | 8 | 16 | 32 | 64 | 128 | 256 | 512 |
|---|---|---|---|---|---|---|---|
| | 0.75 | 0.91 | 0.967 | 0.98 | 0.98 | 0.988 | 0.999 |
| | 0.25 | 0.19 | 0.10 | 0.01 | 0.016 | 0.016 | 0.004 |
| | 0.44 | 0.76 | 0.60 | 0.32 | 0.44 | 0.64 | 0.96 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Tsili, A.; Maragkopoulos, G.; Mandilara, A.; Syvridis, D. Learning the N-Input Parity Function with a Single-Qubit and Single-Measurement Sampling. Electronics 2025, 14, 901. https://doi.org/10.3390/electronics14050901
