HyFLM: A Hypernetwork-Based Federated Learning with Multidimensional Trajectory Optimization on Diffusion Paths

Abstract

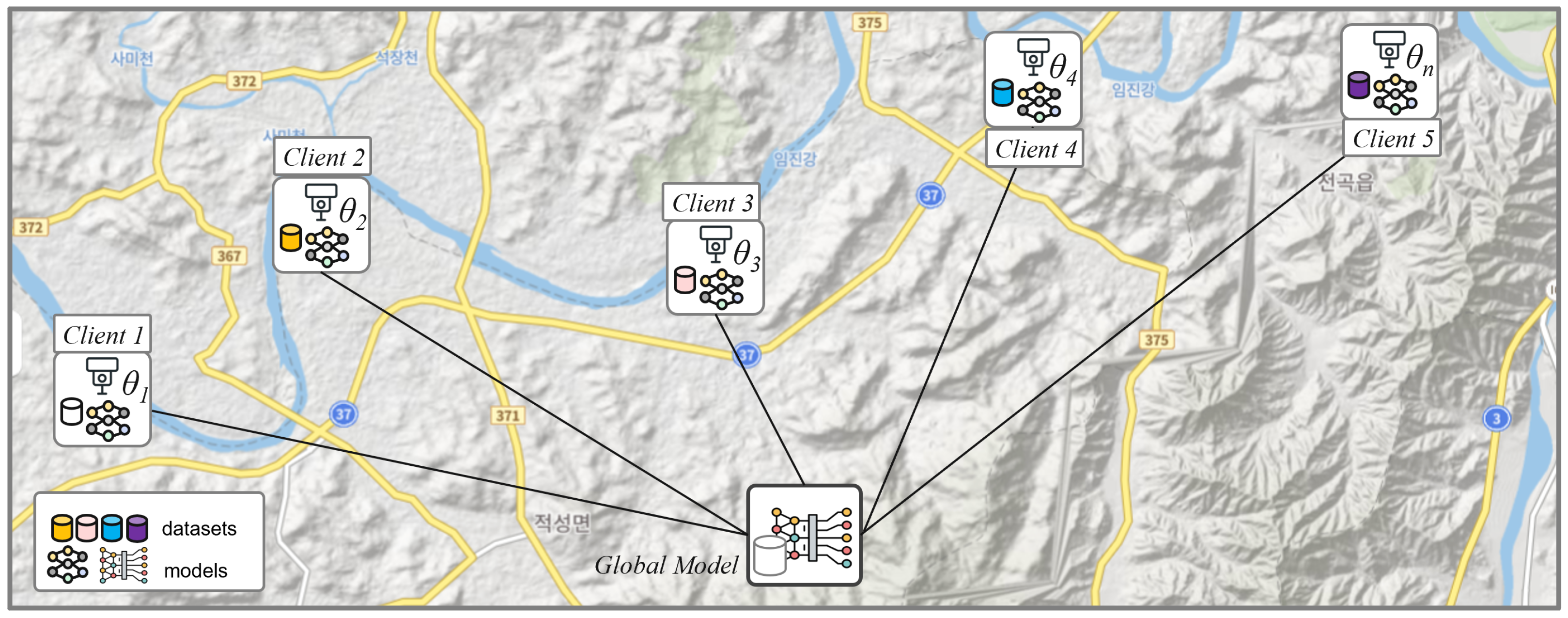

1. Introduction

- We propose a novel federated learning framework that combines diffusion-based aggregation and hypernetwork-driven personalization under the theory of inference trajectory optimization.

- Our empirical results show that the proposed approach achieves superior generalization performance on diverse benchmark datasets compared to well-recognized baselines.

- We demonstrate that HyFLM converges stably under non-IID data and heterogeneous client models while reducing communication cost and preserving security.

2. Related Work

2.1. Federated Learning

2.2. Hypernetwork-Based FL

2.3. Diffusion-Based Federated Learning

3. Preliminaries

3.1. Design of the Diffusion-Based Inference Space

3.2. Multidimensional Adaptive Coefficient (MAC) for Inference Trajectory Optimization

4. Methodology

Algorithm 1. HyFLM: A Hypernetwork-Based Federated Learning with Multidimensional Trajectory Optimization

4.1. Research Methodology Overview

4.2. Diffusion-Based Training and Trajectory Optimization with MAC

- One coefficient scales the magnitude of the diffusion update (drift or score term);

- A second coefficient controls the residual pull toward an anchor (e.g., the previous global model).
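The roles of the two coefficients can be illustrated with a small sketch. It is not the update rule of the paper: the names `gamma` (update scale) and `lam` (anchor pull) are hypothetical stand-ins, and applying one coefficient per coordinate is an assumption made to reflect the "multidimensional" aspect of MAC.

```python
from typing import List, Sequence


def mac_step(
    x: Sequence[float],
    drift: Sequence[float],
    anchor: Sequence[float],
    gamma: Sequence[float],
    lam: Sequence[float],
) -> List[float]:
    """One illustrative step: x + gamma * drift + lam * (anchor - x).

    gamma scales the diffusion update (drift or score term) and lam pulls
    the state back toward the anchor, e.g. the previous global model.
    Both are applied per coordinate (an assumption, not the paper's rule).
    """
    return [
        xi + g * di + l * (ai - xi)
        for xi, di, ai, g, l in zip(x, drift, anchor, gamma, lam)
    ]


# Coordinate 0 follows the drift only; coordinate 1 ignores the drift and
# moves halfway toward the anchor.
state = mac_step(
    x=[1.0, 2.0],
    drift=[0.5, -0.5],
    anchor=[0.0, 0.0],
    gamma=[1.0, 0.0],
    lam=[0.0, 0.5],
)
print(state)
```

Setting `lam` to zero everywhere recovers a plain scaled update, while setting `gamma` to zero leaves only a relaxation toward the anchor.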

4.3. Hypernetwork-Based Personalization

5. Experiments

5.1. Experimental Environment

5.2. Dataset

- EMNIST. Handwritten English letters (26 classes), grayscale at 28×28, which reflects on-device handwriting variability and serves as a classic benchmark.

- Fashion-MNIST. Grayscale clothing images (10 classes) at 28×28. It provides more shape/texture diversity than EMNIST and is suitable for lightweight CNNs.

- CIFAR-10. Natural RGB images (10 classes) at 32×32. It stresses color/texture cues and tests FL robustness under higher visual complexity.

5.3. Experiment

5.3.1. Baseline Methods

- Local: Each client independently trains its own model on local data without any communication or parameter sharing.

- FedAvg: The standard federated averaging algorithm, which maintains a single global model obtained by aggregating the client models.

- FedPer: Partial personalization that shares a common feature extractor while each client keeps a private classification head.

- LG-FedAvg: Layer-grouped FedAvg updates lower layers globally while upper layers remain local, allowing architectural heterogeneity with minimal communication.

- pFedHN: Hypernetwork-based personalization where a server-side hypernetwork generates (parts of) client weights or adapters conditioned on a client embedding.

- pFedGPA: Diffusion-based generative parameter aggregation; the server learns a diffusion model over client parameters, performs parameter inversion, and synthesizes personalized weights by denoising sampling.
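Two of the baseline ideas above are compact enough to sketch in a few lines. The first function is the sample-weighted parameter average used by FedAvg. The second is a deliberately tiny linear hypernetwork that maps a client embedding to a weight vector; it only illustrates the idea behind pFedHN and is an assumption, not the architecture of that method or of HyFLM. All names and numbers are hypothetical.

```python
from typing import List, Sequence


def fedavg(
    client_weights: Sequence[Sequence[float]], num_samples: Sequence[int]
) -> List[float]:
    """Average flat client parameter vectors, weighted by local sample count."""
    total = sum(num_samples)
    dim = len(client_weights[0])
    return [
        sum(w[j] * n for w, n in zip(client_weights, num_samples)) / total
        for j in range(dim)
    ]


def linear_hypernetwork(
    embedding: Sequence[float],
    matrix: Sequence[Sequence[float]],
    bias: Sequence[float],
) -> List[float]:
    """Generate client weights as matrix @ embedding + bias (toy hypernetwork)."""
    return [
        sum(m * e for m, e in zip(row, embedding)) + b
        for row, b in zip(matrix, bias)
    ]


# FedAvg: the client holding 3 samples counts three times as much.
global_model = fedavg([[1.0, 2.0], [3.0, 4.0]], num_samples=[1, 3])

# Hypernetwork: a 2-d client embedding yields a 3-d personalized weight vector.
personal = linear_hypernetwork(
    embedding=[1.0, 0.0],
    matrix=[[2.0, 0.0], [0.0, 3.0], [1.0, 1.0]],
    bias=[0.0, 1.0, 0.5],
)
print(global_model, personal)
```

The contrast is the point of the sketch: FedAvg returns one vector for all clients, whereas the hypernetwork returns a different vector for each embedding.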

5.3.2. Implementation Details

6. Results and Discussion

6.1. Experimental Result

6.1.1. Personalization Performance Comparison

6.1.2. Communication Efficiency and Scalability

6.2. Discussion

6.3. Future Work

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| DL | Deep Learning |
| DDPM | Denoising Diffusion Probabilistic Model |
| FedAvg | Federated Averaging |
| FL | Federated Learning |
| GPU | Graphics Processing Unit |
| ITO | Inference Trajectory Optimization |
| MAC | Multidimensional Adaptive Coefficient |
| ML | Machine Learning |
| MTO | Multidimensional Trajectory Optimization |
| IID | Independent and Identically Distributed |
| non-IID | Non-Independent and Identically Distributed |
| pFL | Personalized Federated Learning |
| NFE | Number of Function Evaluations |
| NLP | Natural Language Processing |
| CNN | Convolutional Neural Network |
| SGD | Stochastic Gradient Descent |


| Dataset | Image Size | Channels | # Classes | Clients |
|---|---|---|---|---|
| MNIST | 28×28 | 1 (grayscale) | 10 | 10/20/100 |
| EMNIST | 28×28 | 1 (grayscale) | 26 | 10/20/100 |
| CIFAR-10 | 32×32 | 3 (RGB) | 10 | 10/20/100 |

| Method | EMNIST (10 clients) | EMNIST (20 clients) | EMNIST (100 clients) | Fashion-MNIST (10 clients) | Fashion-MNIST (20 clients) | Fashion-MNIST (100 clients) | CIFAR-10 (10 clients) | CIFAR-10 (20 clients) | CIFAR-10 (100 clients) |
|---|---|---|---|---|---|---|---|---|---|
| Local-only | 71.75 | 71.26 | 74.84 | 86.24 | 85.24 | 86.14 | 65.45 | 65.21 | 66.12 |
| FedAvg | 72.43 | 73.44 | 75.25 | 82.23 | 84.96 | 84.15 | 65.27 | 68.47 | 70.55 |
| FedPer | 75.24 | 77.45 | 78.65 | 88.24 | 89.41 | 88.15 | 64.54 | 64.88 | 70.21 |
| LG-FedAvg | 73.42 | 71.21 | 76.54 | 86.12 | 86.45 | 84.45 | 65.15 | 65.65 | 66.24 |
| pFedHN | 77.34 | 78.12 | 77.15 | 86.80 | 86.90 | 86.25 | 71.66 | 75.12 | 77.10 |
| pFedGPA | 78.40 | 80.00 | 82.90 | 85.60 | 87.00 | 89.60 | 70.10 | 71.90 | 74.20 |
| HyFLM | 84.52 | 86.00 | 88.50 | 89.00 | 89.80 | 91.10 | 74.67 | 76.08 | 78.10 |
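To read the comparison table more easily, the following sketch computes, for each dataset and client count, the strongest baseline and the margin by which HyFLM exceeds it. The numbers are copied from the table; the helper name `margins_over_best_baseline` and the data layout are illustrative choices, not part of the paper.

```python
# Values copied from the comparison table. Column order:
# EMNIST (10, 20, 100 clients), Fashion-MNIST (10, 20, 100), CIFAR-10 (10, 20, 100).
COLUMNS = [
    (dataset, clients)
    for dataset in ("EMNIST", "Fashion-MNIST", "CIFAR-10")
    for clients in (10, 20, 100)
]

ACCURACY = {
    "Local-only": [71.75, 71.26, 74.84, 86.24, 85.24, 86.14, 65.45, 65.21, 66.12],
    "FedAvg": [72.43, 73.44, 75.25, 82.23, 84.96, 84.15, 65.27, 68.47, 70.55],
    "FedPer": [75.24, 77.45, 78.65, 88.24, 89.41, 88.15, 64.54, 64.88, 70.21],
    "LG-FedAvg": [73.42, 71.21, 76.54, 86.12, 86.45, 84.45, 65.15, 65.65, 66.24],
    "pFedHN": [77.34, 78.12, 77.15, 86.80, 86.90, 86.25, 71.66, 75.12, 77.10],
    "pFedGPA": [78.40, 80.00, 82.90, 85.60, 87.00, 89.60, 70.10, 71.90, 74.20],
    "HyFLM": [84.52, 86.00, 88.50, 89.00, 89.80, 91.10, 74.67, 76.08, 78.10],
}


def margins_over_best_baseline(accuracy, proposed="HyFLM"):
    """Map each (dataset, clients) column to (best baseline, proposed - best)."""
    result = {}
    for j, column in enumerate(COLUMNS):
        best_name, best_value = max(
            ((name, vals[j]) for name, vals in accuracy.items() if name != proposed),
            key=lambda pair: pair[1],
        )
        result[column] = (best_name, round(accuracy[proposed][j] - best_value, 2))
    return result


MARGINS = margins_over_best_baseline(ACCURACY)
for column, (name, margin) in MARGINS.items():
    print(column, name, margin)
```

Computed this way, the strongest baseline differs by dataset (pFedGPA on EMNIST, FedPer or pFedGPA on Fashion-MNIST, pFedHN on CIFAR-10), and the margin is largest on EMNIST and smallest on Fashion-MNIST with 20 clients.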

| Dataset | # Clients | Method | Acc@Last (%) | R@95% | Payload/Round (MB) | Total MB |
|---|---|---|---|---|---|---|
| EMNIST | 10 | pFedGPA | 78.4 | 145 | 2.37 | 236.6 |
| EMNIST | 10 | HyFLM | 83.9 | 28 | 2.37 | 165.6 |
| EMNIST | 20 | pFedGPA | 81.0 | 160 | 4.52 | 542.0 |
| EMNIST | 20 | HyFLM | 85.8 | 32 | 4.52 | 361.3 |
| EMNIST | 100 | pFedGPA | 83.4 | 240 | 21.72 | 3910.2 |
| EMNIST | 100 | HyFLM | 88.1 | 45 | 21.72 | 2606.8 |
| Fashion-MNIST | 10 | pFedGPA | 88.7 | 122 | 0.37 | 31.8 |
| Fashion-MNIST | 10 | HyFLM | 95.3 | 24 | 0.37 | 22.4 |
| Fashion-MNIST | 20 | pFedGPA | 89.4 | 150 | 0.71 | 74.9 |
| Fashion-MNIST | 20 | HyFLM | 97.9 | 19 | 0.71 | 53.5 |
| Fashion-MNIST | 100 | pFedGPA | 91.0 | 184 | 3.43 | 514.9 |
| Fashion-MNIST | 100 | HyFLM | 98.8 | 14 | 3.43 | 377.6 |
| CIFAR-10 | 10 | pFedGPA | 72.5 | 260 | 3.12 | 436.4 |
| CIFAR-10 | 10 | HyFLM | 74.9 | 123 | 3.12 | 374.0 |
| CIFAR-10 | 20 | pFedGPA | 72.5 | 181 | 5.95 | 1071.1 |
| CIFAR-10 | 20 | HyFLM | 75.1 | 158 | 5.95 | 892.6 |
| CIFAR-10 | 100 | pFedGPA | 73.8 | 230 | 28.62 | 6582.5 |
| CIFAR-10 | 100 | HyFLM | 79.7 | 195 | 28.62 | 5437.7 |
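Because the per-round payload is identical for both methods in every setting, the communication comparison comes down to the round counts and the reported totals. The sketch below derives two summary figures from the table: the ratio of R@95% values and the relative reduction in Total MB. The numbers are copied from the table; R@95% is treated simply as a round count, since its precise definition is not restated here, and the field names are illustrative.

```python
# (dataset, clients, pFedGPA R@95%, pFedGPA total MB, HyFLM R@95%, HyFLM total MB)
ROWS = [
    ("EMNIST", 10, 145, 236.6, 28, 165.6),
    ("EMNIST", 20, 160, 542.0, 32, 361.3),
    ("EMNIST", 100, 240, 3910.2, 45, 2606.8),
    ("Fashion-MNIST", 10, 122, 31.8, 24, 22.4),
    ("Fashion-MNIST", 20, 150, 74.9, 19, 53.5),
    ("Fashion-MNIST", 100, 184, 514.9, 14, 377.6),
    ("CIFAR-10", 10, 260, 436.4, 123, 374.0),
    ("CIFAR-10", 20, 181, 1071.1, 158, 892.6),
    ("CIFAR-10", 100, 230, 6582.5, 195, 5437.7),
]


def summarize(rows):
    """Return round ratio and traffic saving (%) of HyFLM relative to pFedGPA."""
    summary = {}
    for dataset, clients, r_base, mb_base, r_new, mb_new in rows:
        summary[(dataset, clients)] = {
            "round_ratio": r_base / r_new,
            "traffic_saving_pct": 100.0 * (1.0 - mb_new / mb_base),
        }
    return summary


SUMMARY = summarize(ROWS)
for key, stats in SUMMARY.items():
    print(key, f"{stats['round_ratio']:.1f}x fewer rounds,",
          f"{stats['traffic_saving_pct']:.1f}% less traffic")
```

On these figures the reduction in total traffic is roughly 27 to 33 percent on EMNIST and Fashion-MNIST and roughly 14 to 17 percent on CIFAR-10.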


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Song, H.-j.; Suh, Y.-J. HyFLM: A Hypernetwork-Based Federated Learning with Multidimensional Trajectory Optimization on Diffusion Paths. Electronics 2025, 14, 4704. https://doi.org/10.3390/electronics14234704
