Effect of Deep Recurrent Architectures on Code Vulnerability Detection: Performance Evaluation for SQL Injection in Python

Abstract

1. Introduction

- Improved detection of SQL injection vulnerabilities in Python-based web applications using deep recurrent architectures, taking their architectural features into account.

- A systematic analysis of Word2Vector embedding hyperparameters that identifies the configuration maximising vulnerability detection performance.

- An evaluation of peephole LSTM and modified GRU architectures (with layer normalisation and zoneout) for SQL injection vulnerability detection in application source code.

2. Related Works

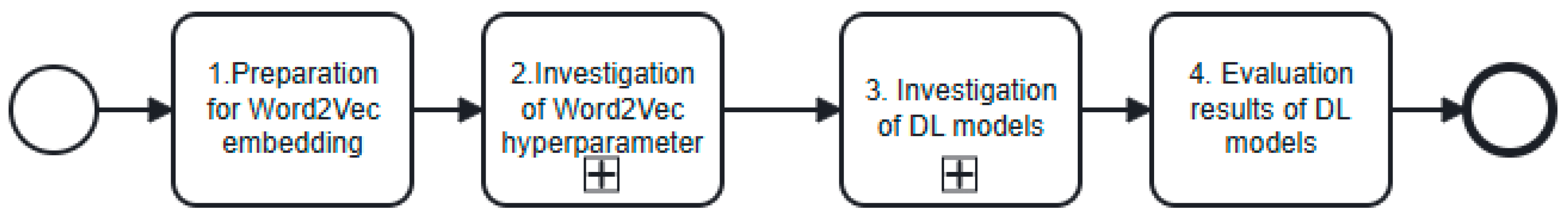

3. Design and Evaluation of RNN Architectures for SQL-Injection Detection

3.1. Research Methodology

3.2. The Preparation of the Data Set for Word2Vector Embedding

3.3. Investigation of the Hyperparameters of the Word2Vector Model

- Number of iterations. The number of iterations refers to the number of batches required to complete the epoch.

- Vector size. When Word2Vector is used, the tokens are transformed into numerical vectors of specified sizes. Increasing the size of these vectors adds more axes to the positioning of words relative to each other, allowing the Word2Vector model to capture more complex relationships.

- Minimum count. The minimum count determines how often a token must appear in the training corpus to be assigned a vector representation. Tokens that appear less frequently are not encoded, and they are skipped later when complete lists of tokens are converted into lists of vectors.
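The effect of the minimum count and the vector size described above can be sketched in plain Python. This is an illustration only: the toy corpus, the helper names `build_vocab` and `to_vectors`, and the random placeholder vectors are assumptions made for the example and are not the authors' pipeline (a real Word2Vector model learns its vectors from token context).

```python
import random
from collections import Counter


def build_vocab(corpus, min_count, vector_size, seed=0):
    """Give a placeholder vector to every token seen at least min_count times."""
    rng = random.Random(seed)
    counts = Counter(token for tokens in corpus for token in tokens)
    return {
        token: [rng.uniform(-1.0, 1.0) for _ in range(vector_size)]
        for token, count in counts.items()
        if count >= min_count
    }


def to_vectors(tokens, vocab):
    """Convert a token list to a vector list, skipping tokens without a vector."""
    return [vocab[token] for token in tokens if token in vocab]


# Hypothetical tokenised code snippets (not taken from the paper's data set).
corpus = [
    ["cursor", ".", "execute", "(", "query", ")"],
    ["cursor", ".", "execute", "(", "sql", "%", "user_id", ")"],
    ["query", "=", "sql", "+", "user_id"],
]

vocab = build_vocab(corpus, min_count=2, vector_size=4)
vectors = to_vectors(corpus[2], vocab)  # "=" and "+" occur once, so they are dropped
```

Raising `min_count` shrinks the vocabulary and therefore shortens the vector lists passed to the downstream model, while `vector_size` only changes the length of each individual vector.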

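1. From the three hyperparameters (vector size, number of iterations, and minimum count), 80 combinations were created by combining the values of:

   - the number of iterations (values listed in Table 3);

   - the vector size (values listed in Table 4);

   - the minimum count (values listed in Table 2).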

- 2.

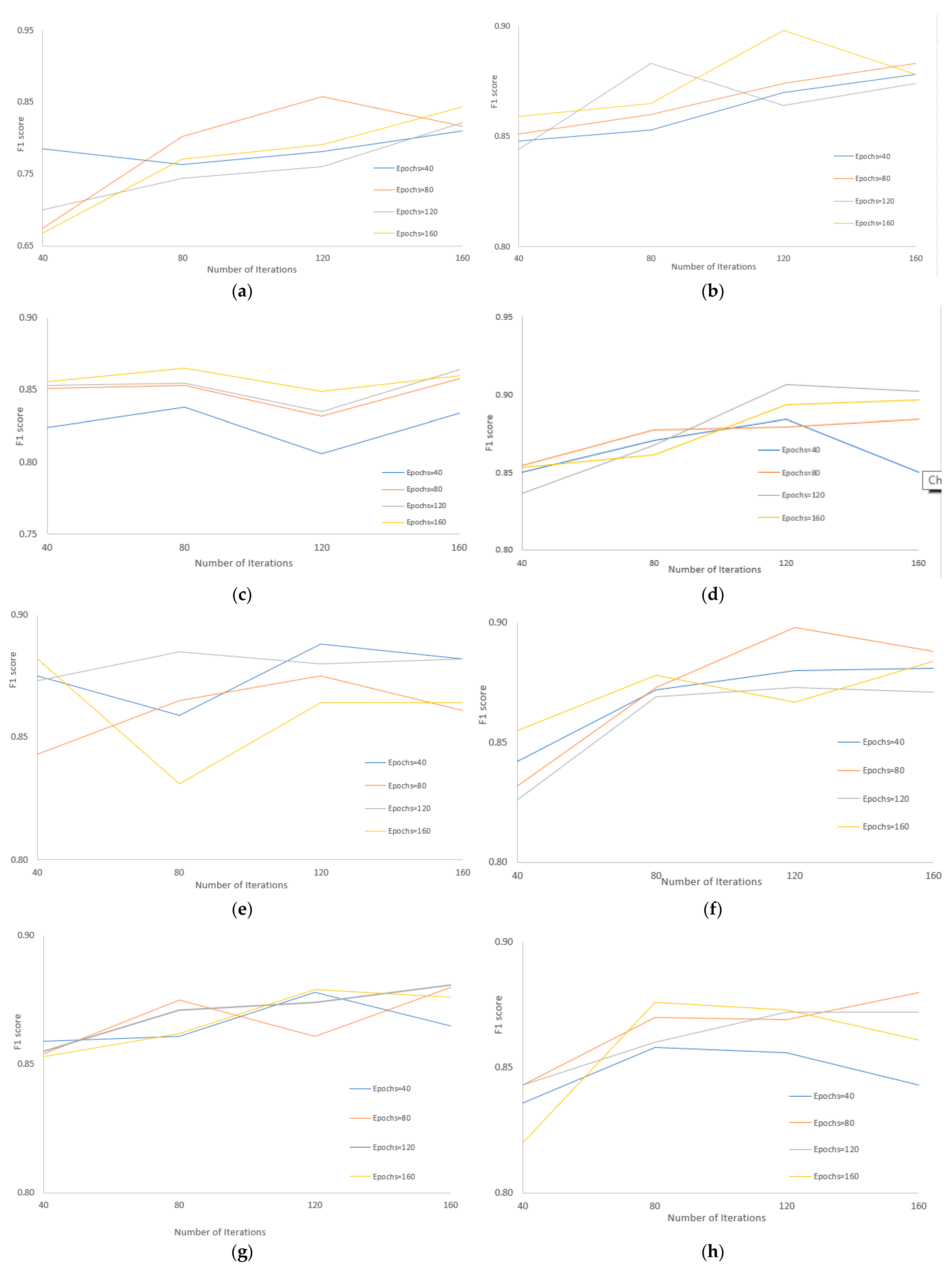

- Based on Shapley values, an analysis was conducted that the greatest impact on the performance of the Word2Vector model was derived when the vector size value was highest (see Table 4, last row). In contrast, the lowest impact was observed when the minimum count value was the lowest (see Table 2, the first three rows), and the highest Shapley value was when the number of iterations ranged from 40 to 80 (see Table 3, the first two rows). Accordingly, it was decided to run only 32 combinations with a continuously augmented count of epochs: 40, 80, 120, 160.

3. Word2Vector model performance (F1 score) was evaluated for each of the 32 hyperparameter combinations (vector size, minimum count, number of iterations) with different numbers of epochs. The results of this investigation are presented in Figure 2.

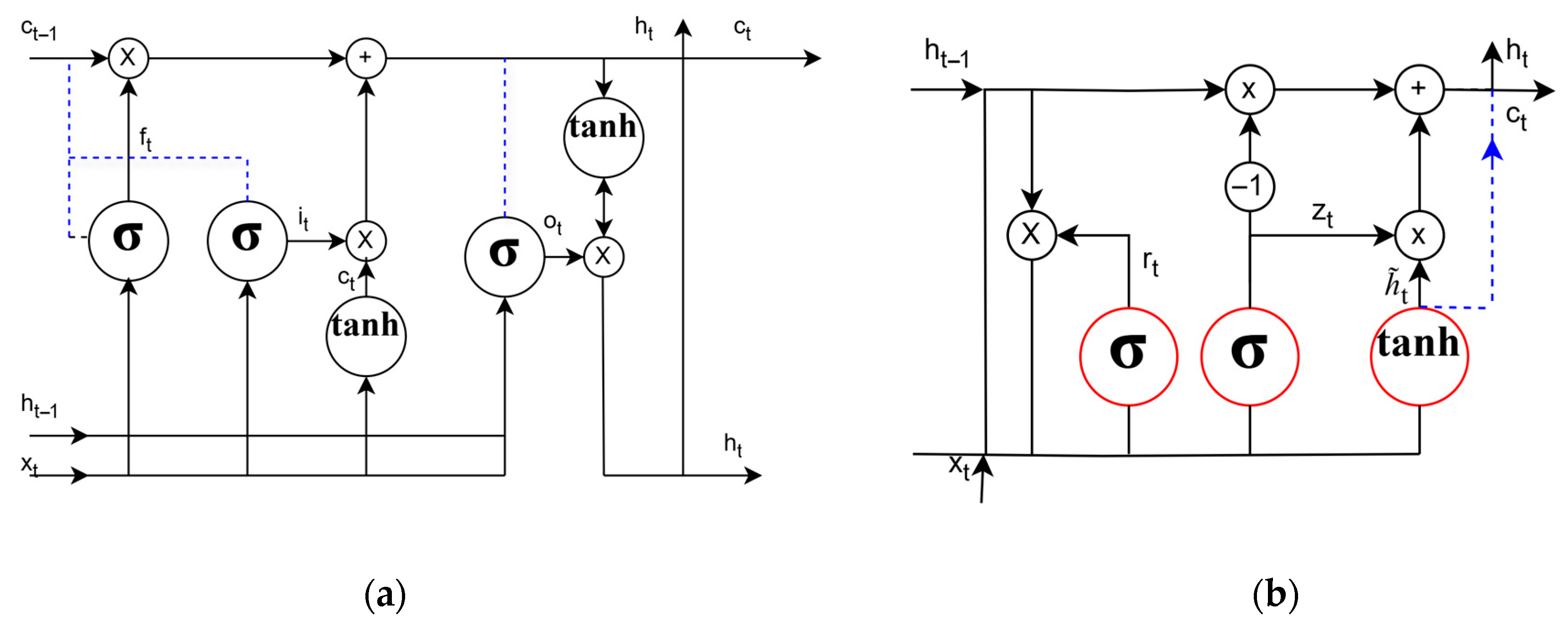
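The initial grid of 80 combinations can be enumerated as in the following sketch. The candidate values are read from the row labels of Tables 2–4 and are assumed here to be the complete grid (5 × 4 × 4 = 80); the function name `hyperparameter_grid` is hypothetical. The text does not state which 32 combinations were retained, so that subset is not reproduced.

```python
from itertools import product

# Candidate values taken from the row labels of Tables 2-4 (assumed full grid).
MIN_COUNTS = [1, 10, 100, 1000, 4000]
ITERATIONS = [40, 80, 120, 160]
VECTOR_SIZES = [10, 100, 150, 200]


def hyperparameter_grid(vector_sizes, min_counts, iterations):
    """Return every (vector_size, min_count, iterations) combination as a dict."""
    return [
        {"vector_size": v, "min_count": m, "iterations": i}
        for v, m, i in product(vector_sizes, min_counts, iterations)
    ]


grid = hyperparameter_grid(VECTOR_SIZES, MIN_COUNTS, ITERATIONS)
print(len(grid))  # 80
```

Each dictionary in `grid` could then be passed to whatever routine trains and scores one embedding model.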

3.4. Recurrent Neural Network Architectures Analysis for SQL Injection Detection

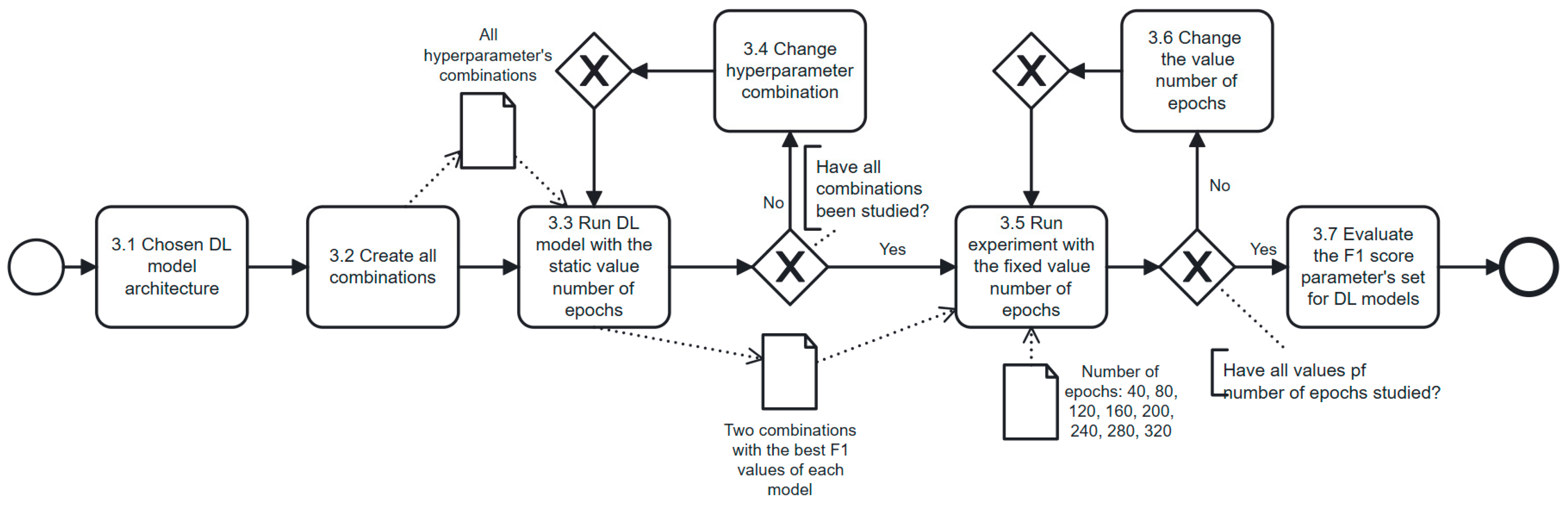

3.5. Deep Learning Models Investigation

4. Results and Discussions

5. Threats to Validity

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Alhazmi, O.; Malaiya, Y.; Ray, I. Measuring, analysing, and predicting security vulnerabilities in software systems. Comput. Secur. 2007, 26, 219–228.

2. Ghaffarian, S.M.; Shahriari, H.R. Software vulnerability analysis and discovery using machine-learning and data-mining techniques: A survey. ACM Comput. Surv. (CSUR) 2017, 50, 56.

3. Medeiros, I.; Neves, N.F.; Correia, M. Automatic detection and correction of web application vulnerabilities using data mining to predict false positives. In Proceedings of the 23rd International Conference on World Wide Web, New York, NY, USA, 7–11 April 2014; pp. 63–74.

4. Russell, R.; Kim, L.; Hamilton, L.; Lazovich, T.; Harer, J.; Ozdemir, O.; Ellingwood, P.; McConley, M. Automated vulnerability detection in source code using deep representation learning. In Proceedings of the 2018 17th IEEE International Conference on Machine Learning and Applications (ICMLA), Orlando, FL, USA, 17–20 December 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 757–762.

5. Coulter, R.; Han, Q.-L.; Pan, L.; Zhang, J.; Xiang, Y. Code analysis for intelligent cyber systems: A data-driven approach. Inf. Sci. 2020, 524, 46–58.

6. Wijekoon, A.; Wiratunga, N. A user-centred evaluation of DisCERN: Discovering counterfactuals for code vulnerability detection and correction. Knowl.-Based Syst. 2023, 278, 110830.

7. Raschka, S.; Patterson, J.; Nolet, C. Machine learning in Python: Main developments and technology trends in data science, machine learning, and artificial intelligence. Information 2020, 11, 193.

8. Goetz, S.; Schaad, A. You still have to study—On the Security of LLM-generated code. arXiv 2024, arXiv:2408.07106.

9. OWASP Top Ten. 2021. Available online: https://owasp.org/Top10/ (accessed on 16 September 2024).

10. Common Weakness Enumeration. Available online: https://cwe.mitre.org/ (accessed on 16 September 2024).

11. Agbakwuru, A.O.; Njoku, D.O. SQL Injection Attack on Web-Based Application: Vulnerability Assessments and Detection Technique. Int. Res. J. Eng. Technol. 2021, 8, 243–252.

12. Kumar, A.; Dutta, S.; Pranav, P. Analysis of SQL injection attacks in the cloud and in WEB applications. Secur. Priv. 2024, 7, e370.

13. Subhan, F.; Wu, X.; Bo, L.; Sun, X.; Rahman, M. A deep learning-based approach for software vulnerability detection using code metrics. IET Softw. 2022, 16, 516–526.

14. Harer, J.A.; Kim, L.Y.; Russell, R.L.; Ozdemir, O.; Kosta, L.R.; Rangamani, A.; Hamilton, L.H.; Centeno, G.I.; Key, J.R.; Ellingwood, P.M.; et al. Automated software vulnerability detection with machine learning. arXiv 2018, arXiv:1803.04497.

15. Bilgin, Z.; Ersoy, M.A.; Soykan, E.U.; Tomur, E.; Comak, P.; Karacay, L. Vulnerability prediction from source code using machine learning. IEEE Access 2020, 8, 150672–150684.

16. Sun, H.; Du, Y.; Li, Q. Deep learning-based detection technology for SQL injection research and implementation. Appl. Sci. 2023, 13, 9466.

17. Kakisim, A.G. A deep learning approach based on multi-view consensus for SQL injection detection. Int. J. Inf. Secur. 2024, 23, 1541–1556.

18. Li, Z.; Zou, D.; Xu, S.; Ou, X.; Jin, H.; Wang, S.; Deng, Z.; Zhong, Y. VulDeePecker: A deep learning-based system for vulnerability detection. arXiv 2018, arXiv:1801.01681.

19. Sestili, C.D.; Snavely, W.S.; VanHoudnos, N.M. Towards security defect prediction with AI. arXiv 2018, arXiv:1808.09897.

20. Dam, H.K.; Tran, T.; Pham, T.; Ng, S.W.; Grundy, J.; Ghose, A. Automatic feature learning for predicting vulnerable software components. IEEE Trans. Softw. Eng. 2018, 47, 67–85.

21. Saccente, N.; Dehlinger, J.; Deng, L.; Chakraborty, S.; Xiong, Y. Project Achilles: A prototype tool for static method-level vulnerability detection of Java source code using a recurrent neural network. In Proceedings of the 2019 34th IEEE/ACM International Conference on Automated Software Engineering Workshop (ASEW), San Diego, CA, USA, 11–19 November 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 114–121.

22. Chakraborty, S.; Krishna, R.; Ding, Y.; Ray, B. Deep learning based vulnerability detection: Are we there yet? IEEE Trans. Softw. Eng. 2021, 48, 3280–3296.

23. Bagheri, A.; Hegedűs, P. A Comparison of Different Source Code Representation Methods for Vulnerability Prediction in Python. arXiv 2021, arXiv:2108.02044.

24. Wartschinski, L.; Noller, Y.; Vogel, T.; Kehrer, T.; Grunske, L. VUDENC: Vulnerability detection with deep learning on a natural codebase for Python. Inf. Softw. Technol. 2022, 144, 106809.

25. Wang, R.; Xu, S.; Ji, X.; Tian, Y.; Gong, L.; Wang, K. An extensive study of the effects of different deep learning models on code vulnerability detection in Python code. Autom. Softw. Eng. 2024, 31, 15.

26. Tran, H.C.; Tran, A.D.; Le, K.H. DetectVul: A statement-level code vulnerability detection for Python. Future Gener. Comput. Syst. 2025, 163, 107504.

27. Mikolov, T. Efficient estimation of word representations in vector space. arXiv 2013, arXiv:1301.3781.

28. Feng, Z.; Guo, D.; Tang, D.; Duan, N.; Feng, X.; Gong, M.; Shou, L.; Qin, B.; Liu, T.; Jiang, D.; et al. CodeBERT: A pre-trained model for programming and natural languages. arXiv 2020, arXiv:2002.08155.

29. Devlin, J. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805.

30. Joulin, A. FastText.zip: Compressing text classification models. arXiv 2016, arXiv:1612.03651.

31. Wartschinski, L. Vudenc—Datasets for Vulnerabilities. 2020. Available online: https://zenodo.org/record/3559841#.XeVaZNVG2Hs (accessed on 16 September 2024).

32. Zhou, Y.; Sharma, A. Automated identification of security issues from commit messages and bug reports. In Proceedings of the ACM SIGSOFT Symposium on the Foundations of Software Engineering, Paderborn, Germany, 4–8 September 2017; Part F130154. pp. 914–919.

33. Lemaître, G.; Nogueira, F.; Aridas, C.K. Imbalanced-learn: A Python toolbox to tackle the curse of imbalanced datasets in machine learning. J. Mach. Learn. Res. 2017, 18, 1–5.

34. García, V.; Sánchez, J.S.; Mollineda, R.A. Exploring the performance of resampling strategies for the class imbalance problem. In Proceedings of the Trends in Applied Intelligent Systems: 23rd International Conference on Industrial Engineering and Other Applications of Applied Intelligent Systems, IEA/AIE 2010, Cordoba, Spain, 1–4 June 2010; Proceedings, Part I 23; Springer: Berlin/Heidelberg, Germany, 2010; pp. 541–549.

35. Thölke, P.; Mantilla-Ramos, Y.-J.; Abdelhedi, H.; Maschke, C.; Dehgan, A.; Harel, Y.; Kemtur, A.; Berrada, L.M.; Sahraoui, M.; Young, T.; et al. Class imbalance should not throw you off balance: Choosing the right classifiers and performance metrics for brain decoding with imbalanced data. NeuroImage 2023, 277, 120253.

36. Zulu, J.; Han, B.; Alsmadi, I.; Liang, G. Enhancing Machine Learning Based SQL Injection Detection Using Contextualized Word Embedding. In Proceedings of the 2024 ACM Southeast Conference, Marietta, GA, USA, 18–20 April 2024; pp. 211–216.

37. Wang, F.; Zhang, G.; Kong, Q.; Fang, L.; Xiao, Y.; Wang, G. Semantic-Based SQL Injection Detection Method. In Proceedings of the 2023 5th International Conference on Artificial Intelligence and Computer Applications (ICAICA), Dalian, China, 28–30 November 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 519–524.

38. Liu, Y.; Dai, Y. Deep Learning in Cybersecurity: A Hybrid BERT–LSTM Network for SQL Injection Attack Detection. IET Inf. Secur. 2024, 2024, 5565950.

39. Dhingra, B.; Liu, H.; Salakhutdinov, R.; Cohen, W.W. A comparative study of word embeddings for reading comprehension. arXiv 2017, arXiv:1703.00993.

40. Lundberg, S.M.; Lee, S.I. A unified approach to interpreting model predictions. Adv. Neural Inf. Process. Syst. 2017, 30.

41. Shapley, L.S. A value for n-person games. Contrib. Theory Games 1953.

42. Fu, L. Time series-oriented load prediction using deep peephole LSTM. In Proceedings of the 2020 12th International Conference on Advanced Computational Intelligence (ICACI), Dali, China, 14–16 August 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 86–91.

43. Essai Ali, M.H.; Abdellah, A.R.; Atallah, H.A.; Ahmed, G.S.; Muthanna, A.; Koucheryavy, A. Deep learning peephole LSTM neural network-based channel state estimators for OFDM 5G and beyond networks. Mathematics 2023, 11, 3386.

44. Garlapati, K.; Kota, N.; Mondreti, Y.S.; Gutha, P.; Nair, A.K. Deep Learning Aided Channel Estimation in OFDM Systems. In Proceedings of the 2022 International Conference on Futuristic Technologies (INCOFT), Belgaum, India, 25–27 November 2022; pp. 1–5.

45. Zhang, Y.; Wu, R.; Dascalu, S.M.; Harris, F.C., Jr. A novel extreme adaptive GRU for multivariate time series forecasting. Sci. Rep. 2024, 14, 2991.

46. Krueger, D.; Maharaj, T.; Kramár, J.; Pezeshki, M.; Ballas, N.; Ke, N.R.; Goyal, A.; Bengio, Y.; Courville, A.; Pal, C. Zoneout: Regularising RNNs by randomly preserving hidden activations. arXiv 2016, arXiv:1606.01305.

47. Nie, X.; Li, N.; Wang, K.; Wang, S.; Luo, X.; Wang, H. Understanding and tackling label errors in deep learning-based vulnerability detection (experience paper). In Proceedings of the 32nd ACM SIGSOFT International Symposium on Software Testing and Analysis, Seattle, WA, USA, 17–23 July 2023; pp. 52–63.

48. Summers, C.; Dinneen, M.J. Nondeterminism and instability in neural network optimization. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 18–24 July 2021; pp. 9913–9922.

49. Zhuang, D.; Zhang, X.; Song, S.; Hooker, S. Randomness in neural network training: Characterizing the impact of tooling. Proc. Mach. Learn. Syst. 2022, 4, 316–336.

| Authors | Research Objective | Vector Embedding Model | ML Model Used | Performance (F1 Score) |
|---|---|---|---|---|
| Bagheri & Hegedűs (2021) [23] | Evaluate different source code representation methods for vulnerability prediction. | Word2Vector, FastText, BERT | LSTM | 0.84–0.86 for all code vulnerabilities |
| Wartschinski et al. (2022) [24] | Propose a DL model for a vulnerability detection system that automatically learns features of vulnerable code from a large, real-world codebase. | Word2Vector | LSTM | 0.80–0.90 for all code vulnerabilities; 80.1% for SQL injection vulnerabilities |
| Wang et al. (2024) [25] | Evaluate the effects of DL architectures derived from combinations of representation learning models on code vulnerability detection. | Word2Vector, FastText, CodeBERT | LSTM, XGBoost, GRU, CNN, MLP | 0.78–0.88 for all code vulnerabilities |
| Tran et al. (2025) [26] | Statement-level code vulnerability detection. | BERT | GNN-based models | 0.74 for all code vulnerabilities |

| Minimum Count Value | Min | Max | Mean |
|---|---|---|---|
| 1 | 0.004052 | 0.004052 | 0.004052 |
| 10 | 0.003995 | 0.003995 | 0.003995 |
| 100 | 0.003424 | 0.003424 | 0.003424 |
| 1000 | −0.00229 | −0.00229 | −0.00229 |
| 4000 | −0.02134 | −0.02134 | −0.02134 |

| Number of Iterations | Min | Max | Mean |
|---|---|---|---|
| 40 | 0.005475 | 0.005475 | 0.005475 |
| 80 | 0.001825 | 0.005475 | 0.001825 |
| 120 | −0.00183 | −0.00183 | −0.00183 |
| 160 | −0.00548 | 0.005475 | −0.00495 |

| Vector Size Value | Min | Max | Mean |
|---|---|---|---|
| 10 | −0.09699 | 0.09699 | −0.09699 |
| 100 | −0.03781 | −0.03781 | −0.03781 |
| 150 | −0.00548 | −0.00493 | −0.00493 |
| 200 | 0.005475 | 0.027946 | 0.023789 |
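Shapley values such as those tabulated above attribute a share of a performance change to each contributing factor [41]. The sketch below computes exact Shapley values for a toy three-player game in which the players are the three hyperparameters. The payoff numbers in `TOY_GAIN` are invented purely for illustration and are not results from this study, and the function name `shapley_values` is hypothetical; the tabulated values were presumably obtained with a dedicated tool rather than this brute-force enumeration.

```python
from itertools import permutations


def shapley_values(players, value):
    """Exact Shapley values: average marginal contribution over all orderings."""
    totals = {p: 0.0 for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        coalition = frozenset()
        for player in order:
            with_player = coalition | {player}
            totals[player] += value(with_player) - value(coalition)
            coalition = with_player
    return {p: total / len(orderings) for p, total in totals.items()}


# Invented payoffs (illustration only, NOT results from the paper): the F1 gain
# over a baseline when a given subset of hyperparameters is tuned.
TOY_GAIN = {
    frozenset(): 0.00,
    frozenset({"vector_size"}): 0.06,
    frozenset({"min_count"}): 0.01,
    frozenset({"iterations"}): 0.02,
    frozenset({"vector_size", "min_count"}): 0.07,
    frozenset({"vector_size", "iterations"}): 0.09,
    frozenset({"min_count", "iterations"}): 0.03,
    frozenset({"vector_size", "min_count", "iterations"}): 0.10,
}

phi = shapley_values(["vector_size", "min_count", "iterations"], TOY_GAIN.__getitem__)
```

By construction the three values in `phi` sum to the gain of tuning all hyperparameters together, which is the efficiency property that makes Shapley values convenient for ranking factors.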

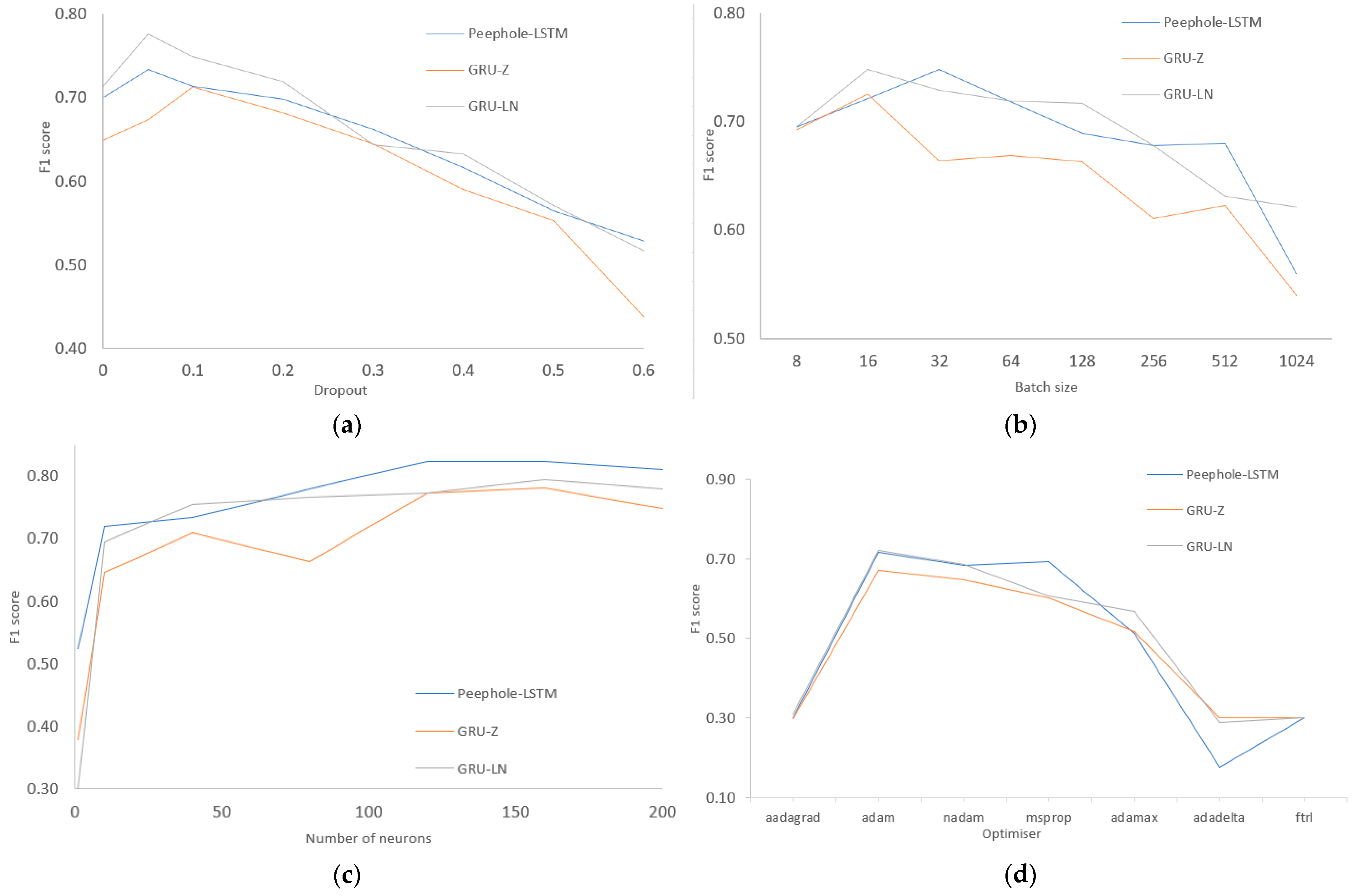

| Name of Hyperparameter | Description and Values in the Experiment |

|---|---|

| Number of neurons | Neurons perform complex computations during training that allow ML models to recognise complex relationships between data and make predictions based on input. A higher number of neurons increases the training time. Initial value = 10. During the experiment, values from the interval [1, 200] were used. |

| Dropout | A regularisation hyperparameter of an ML model, where random neurons are ignored during training to avoid overfitting. This improves the performance of the model by reducing interdependencies between neurons and increasing robustness when handling new unseen data. Initial value = 0.2. During the experiment, the interval values [0, 0.6] were used. |

| Optimizer | Optimizer is one of the most important hyperparameters of an ML model, as it determines how the model learns and updates its parameters during learning to minimise the loss function. Initial value = “adam”. During the experiment, the values from the list [“adagrad”, “adam”, “nadam”, “rmsprop”, “adamax”, “adadelta”, “ftrl”] were used. |

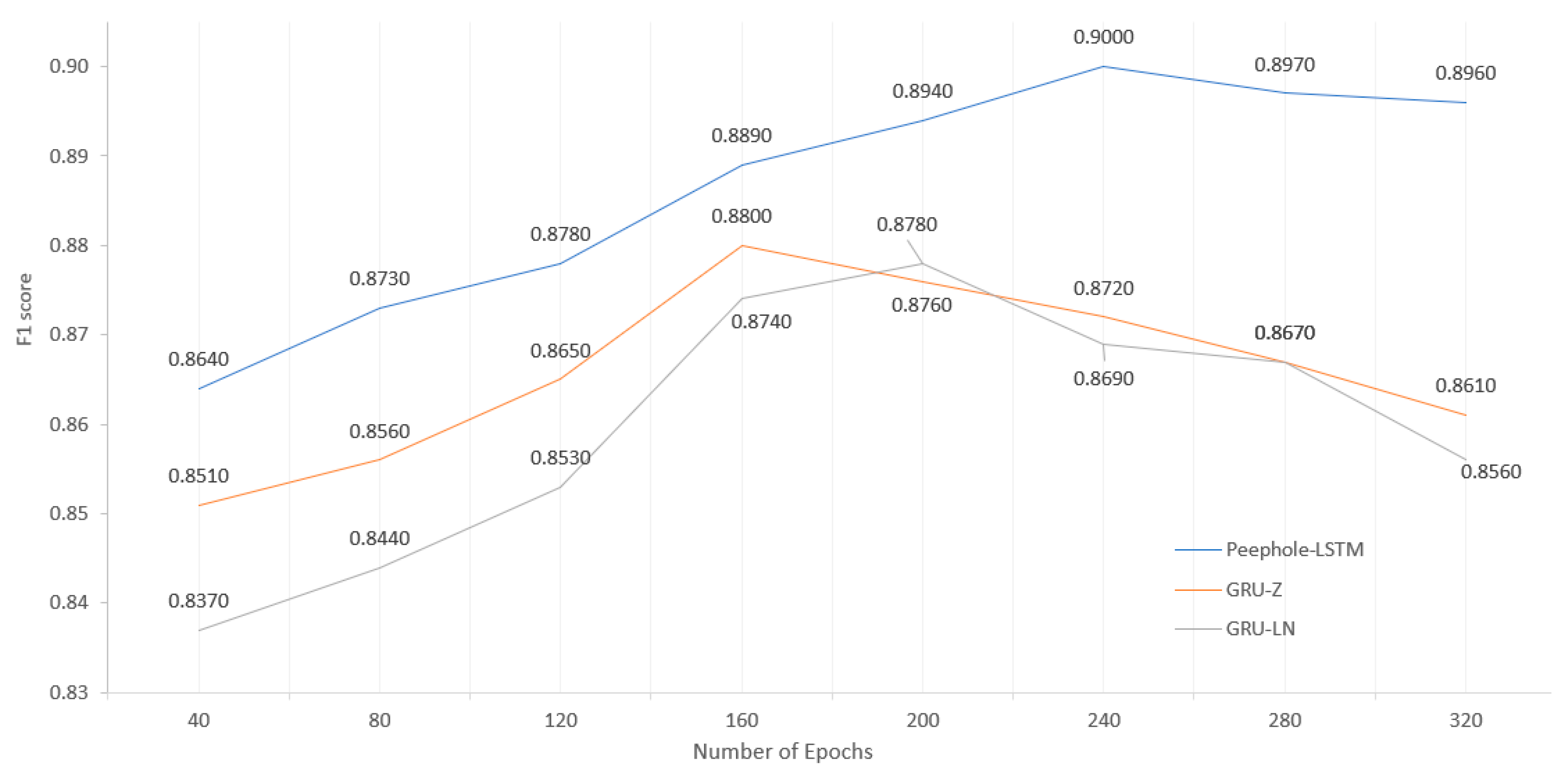

| Number of epochs | The number of epochs determines how many times the entire data set is passed through during training. Choosing the correct number of epochs is critical because it affects how well the model learns the relationships in the data set. Initial value = 15. During the experiment, values of the interval [40, 320] were used. |

| Batch Size | Batch size defines the number of samples processed by the ML model during training before updating its parameters. This hyperparameter is important because it affects the learning speed and memory usage, which are important to balance model performance and computational speed. Initial value = 250. During the experiment, the values from the interval [8, 1024] were used. |

| Zoneout | Zoneout is a state-of-the-art method for regularising RNN by stochastically preserving previous hidden activations [46]. Zone-out can improve performance by capturing longer-term dependencies compared to GRU. Initial value = 0.2. During the experiment, the values of the interval [0, 0.6] were used. |
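The zoneout rule summarised in the table can be sketched for a single time step as follows. This is a minimal illustration of the idea in [46], under stated assumptions: hidden states are plain Python lists, the function name `zoneout_step` is hypothetical, and the sketch does not show where the rule is applied inside the GRU variant evaluated here.

```python
import random


def zoneout_step(h_prev, h_candidate, rate, training=True, rng=random):
    """Combine the previous and candidate hidden states unit by unit.

    Training: each unit keeps its previous value with probability `rate`.
    Inference: each unit takes the expected value of that random choice.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must lie in [0, 1]")
    if training:
        return [
            prev if rng.random() < rate else cand
            for prev, cand in zip(h_prev, h_candidate)
        ]
    return [
        rate * prev + (1.0 - rate) * cand
        for prev, cand in zip(h_prev, h_candidate)
    ]


# Hypothetical hidden states for a three-unit layer.
h_prev = [0.5, -0.2, 0.1]
h_new = [0.9, 0.4, -0.3]

h_train = zoneout_step(h_prev, h_new, rate=0.2, rng=random.Random(0))
h_infer = zoneout_step(h_prev, h_new, rate=0.2, training=False)
```

Unlike dropout, which zeroes a unit, zoneout carries the unit's previous value forward, so a rate of 0 reduces to the unregularised update and a rate of 1 freezes the hidden state.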

| Number of Epochs | F1 of Peephole LSTM | F1 of GRU-LN | F1 of GRU-Z | ΔF1 (Peephole LSTM − GRU-LN) | ΔF1 (Peephole LSTM − GRU-Z) |
|---|---|---|---|---|---|
| 40 | 0.864 | 0.837 | 0.851 | 0.027 | 0.013 |
| 80 | 0.873 | 0.844 | 0.856 | 0.029 | 0.017 |
| 120 | 0.878 | 0.853 | 0.865 | 0.025 | 0.013 |
| 160 | 0.889 | 0.874 | 0.880 | 0.015 | 0.009 |
| 200 | 0.894 | 0.878 | 0.876 | 0.016 | 0.018 |
| 240 | 0.900 | 0.869 | 0.872 | 0.031 | 0.028 |
| 280 | 0.897 | 0.867 | 0.867 | 0.030 | 0.030 |
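The ΔF1 columns above are simple differences between the peephole LSTM score and each GRU variant at the same number of epochs. The following sketch recomputes them from the tabulated F1 scores and reports the epoch count at which each model peaks; the dictionary keys and the function names `delta_f1` and `best_epochs` are hypothetical labels chosen for this example.

```python
# F1 scores copied from the table above, keyed by number of epochs.
F1 = {
    40: {"peephole_lstm": 0.864, "gru_ln": 0.837, "gru_z": 0.851},
    80: {"peephole_lstm": 0.873, "gru_ln": 0.844, "gru_z": 0.856},
    120: {"peephole_lstm": 0.878, "gru_ln": 0.853, "gru_z": 0.865},
    160: {"peephole_lstm": 0.889, "gru_ln": 0.874, "gru_z": 0.880},
    200: {"peephole_lstm": 0.894, "gru_ln": 0.878, "gru_z": 0.876},
    240: {"peephole_lstm": 0.900, "gru_ln": 0.869, "gru_z": 0.872},
    280: {"peephole_lstm": 0.897, "gru_ln": 0.867, "gru_z": 0.867},
}


def delta_f1(scores, baseline, other):
    """F1 difference (baseline minus other) per epoch count, to three decimals."""
    return {
        epochs: round(row[baseline] - row[other], 3)
        for epochs, row in scores.items()
    }


def best_epochs(scores, model):
    """Epoch count at which the given model reaches its highest F1."""
    return max(scores, key=lambda epochs: scores[epochs][model])


delta_ln = delta_f1(F1, "peephole_lstm", "gru_ln")
delta_z = delta_f1(F1, "peephole_lstm", "gru_z")
peaks = {model: best_epochs(F1, model) for model in F1[40]}
```

On these numbers the peephole LSTM leads both GRU variants at every epoch count, and the three models peak at different points (240, 200, and 160 epochs respectively).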


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Slotkienė, A.; Poška, A.; Stefanovič, P.; Ramanauskaitė, S. Effect of Deep Recurrent Architectures on Code Vulnerability Detection: Performance Evaluation for SQL Injection in Python. Electronics 2025, 14, 3436. https://doi.org/10.3390/electronics14173436
