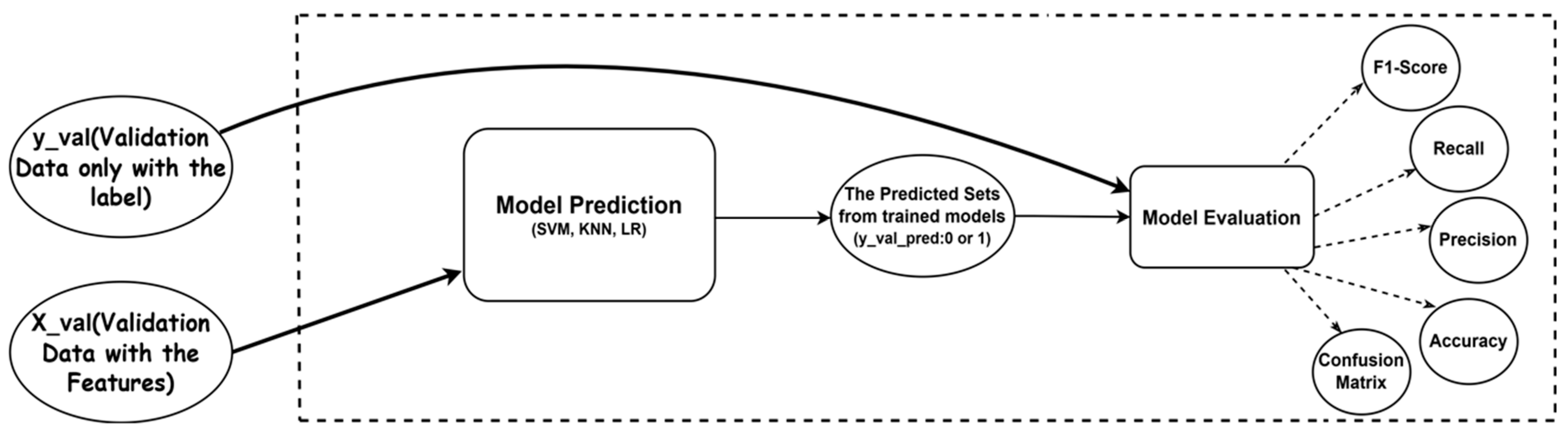

This section presents the outcomes of our experiments and analyzes the performance of three ML models: SVM, LR, and KNN. We first report the performance of the models optimized with Adaptive GridSearchCV on the training and validation sets, and then their performance on the testing set. The section closes by comparing the accuracy and execution time of the models under the different optimization methods on the testing set. The results are presented in figures and tables, each followed by an analysis of the findings.

5.2.1. The Performance on the Training and Validation Sets

The training and validation accuracy of the SVM model, optimized using Adaptive GridSearchCV, is shown in Table 1. As illustrated in the training output, the SVM model attains a training accuracy of 0.999726 and a validation accuracy of 0.999767. The close alignment between these two metrics, with validation accuracy slightly exceeding training accuracy, is a positive indicator that the model generalizes well to unseen data rather than merely memorizing training examples. This indicates that it has learned the underlying patterns in the training data without overfitting to noise or specific instances.

Table 1 also presents the results for the KNN model, which was similarly optimized using Adaptive GridSearchCV.

The optimized KNN model attains a training accuracy of 1.0000 and a validation accuracy of 0.999680, so it classifies every training instance correctly. The validation accuracy is only slightly lower, with a gap of 0.000320, which shows that the model is not overfitting to the training set and still generalizes effectively to unseen data. The consistently high performance on both the training and validation sets indicates that the model is robust on held-out data.

Finally, the table also includes accuracy results for the LR model, which was optimized using Adaptive GridSearchCV. The optimized LR model reaches a training accuracy of 0.990638 and a validation accuracy of 0.986496. The model therefore performs well on the training data and still generalizes effectively to unseen data such as the validation set. The difference between training and validation accuracy is 0.004142, which is relatively small and suggests that the model is not overfitting to the training data. This shows that the LR model has learned the underlying patterns in the training data and can be expected to maintain a good level of predictive accuracy on new, unseen data such as the testing set.
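The gaps quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the accuracies reported in Table 1; the dictionary layout and the helper name `generalization_gap` are illustrative assumptions, not part of the original experiments.

```python
# Training and validation accuracies as reported in Table 1.
reported = {
    "SVM": (0.999726, 0.999767),
    "KNN": (1.0000, 0.999680),
    "LR": (0.990638, 0.986496),
}


def generalization_gap(train_acc, val_acc):
    """Return training accuracy minus validation accuracy, rounded to 6 decimals.

    A negative value means validation accuracy is higher than training accuracy.
    """
    return round(train_acc - val_acc, 6)


# Hypothetical helper structure: one gap per model.
gaps = {name: generalization_gap(t, v) for name, (t, v) in reported.items()}

for name, gap in gaps.items():
    print(f"{name}: train - validation = {gap:+.6f}")
```

A small positive gap (KNN, LR) or a slightly negative one (SVM) is consistent with the reading that none of the three models is overfitting.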

5.2.2. The Performance on the Testing Set

This subsection is divided into three parts. The first part shows the testing-set performance of the models optimized using general GridSearchCV, the second part shows the testing-set performance of the models optimized using Adaptive GridSearchCV, and the last part compares the accuracy and execution time of the models under the different optimization methods on the testing set.

The results of the SVM model evaluated on the testing set using general GridSearchCV are summarized in Table 2. With the optimized hyperparameters, the classification report shows precision, recall, and F1-score of 1.00 (rounded to two decimal places) for both classes (0 and 1), where class 0 contains 49,763 instances and class 1 contains 25,620 instances. The overall accuracy is 0.998647, and the macro and weighted averages are also 1.00 for all metrics. The corresponding confusion matrix, [49,666, 97] and [5, 25,615], shows that the model correctly classifies 49,666 instances of class 0 and 25,615 instances of class 1, with only 97 false positives and 5 false negatives out of 75,383 total instances.

Similarly, the Logistic Regression (LR) model, also optimized using general GridSearchCV, demonstrates strong performance. The classification report indicates that the LR model achieves precision, recall, and F1-score of 1.00 for class 0 (49,763 instances). For class 1, it maintains a precision of 0.99, a recall of 1.00, and an F1-score of 1.00. The number of correctly classified instances is 49,526 for class 0 and 25,618 for class 1. The model attains an overall accuracy of 0.996830, with macro and weighted averages again rounding to 1.00. According to the confusion matrix, there are 237 false positives and 2 false negatives, which is a small number of errors relative to the 75,383 test instances.

The KNN model also exhibits excellent classification capability on the same test set. The classification report shows precision, recall, and F1-score of 1.00 for both class 0 (49,763 instances) and class 1 (25,620 instances). The overall accuracy of the KNN model is 0.998395, with macro and weighted averages also 1.00 for all metrics. Misclassifications are minimal: 119 false positives and 2 false negatives out of 75,383 total instances.
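The error counts and class sizes reported above are enough to rebuild each confusion matrix and to confirm the quoted accuracies. The sketch below assumes the layout [[TN, FP], [FN, TP]] with class 1 as the positive class; the function names are illustrative and not taken from the original code.

```python
def confusion_from_errors(n_neg, n_pos, false_pos, false_neg):
    """Rebuild a binary confusion matrix [[TN, FP], [FN, TP]]
    from the class sizes and the two error counts."""
    return [[n_neg - false_pos, false_pos], [false_neg, n_pos - false_neg]]


def accuracy(matrix):
    """Fraction of correctly classified instances."""
    (tn, fp), (fn, tp) = matrix
    return (tn + tp) / (tn + fp + fn + tp)


# Test-set class sizes: 49,763 instances of class 0 and 25,620 of class 1.
N_NEG, N_POS = 49_763, 25_620

# (false positives, false negatives) reported for general GridSearchCV.
errors = {"SVM": (97, 5), "LR": (237, 2), "KNN": (119, 2)}

matrices = {
    name: confusion_from_errors(N_NEG, N_POS, fp, fn)
    for name, (fp, fn) in errors.items()
}

for name, m in matrices.items():
    print(f"{name}: {m} accuracy = {accuracy(m):.6f}")
```

For the SVM model this reproduces the matrix [49,666, 97] and [5, 25,615], and the three accuracies agree with the reported values to six decimal places.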

Table 3 reports the results of the SVM model on the testing set with Adaptive GridSearchCV. According to the classification report, the SVM model achieves precision, recall, and F1-score of 1.00 for both classes (0 and 1), and the macro and weighted averages are also 1.00 for all metrics. The overall accuracy of the SVM model is 0.998700. The confusion matrix, [49,669, 94] and [4, 25,616], shows only 94 false positives and 4 false negatives among 49,763 class 0 instances and 25,620 class 1 instances (75,383 instances in total).

Table 3 also reports the results of the LR model on the testing set with Adaptive GridSearchCV. The classification report indicates that the LR model attains precision, recall, and F1-score of 1.00 for class 0, and a precision of 0.99, a recall of 1.00, and an F1-score of 1.00 for class 1. The overall testing accuracy is 0.996830, with macro and weighted averages also 1.00 for all metrics. The confusion matrix, [49,526, 237] and [2, 25,618], shows only 237 false positives and 2 false negatives out of 75,383 total instances.

Finally, Table 3 reports the results of the KNN model on the testing set with Adaptive GridSearchCV. The classification report shows that the KNN model achieves precision, recall, and F1-score of 1.00 for both classes (0 and 1). The overall testing accuracy is 0.998395, with macro and weighted averages also 1.00 for all metrics. The confusion matrix, [49,644, 119] and [2, 25,618], shows only 119 false positives and 2 false negatives out of 75,383 total instances.
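Because the classification reports round to two decimal places, a score of 1.00 can coexist with a nonzero number of errors. The following sketch recomputes per-class precision, recall, and F1-score from the confusion matrices quoted for Adaptive GridSearchCV. It assumes the layout [[TN, FP], [FN, TP]] with class 1 as the positive class; `class_report` is a hypothetical helper, not the routine used to produce Table 3.

```python
def class_report(matrix):
    """Per-class (precision, recall, F1) from a matrix [[TN, FP], [FN, TP]]."""
    (tn, fp), (fn, tp) = matrix
    # For each class: correct predictions of that class, instances wrongly
    # predicted as that class, and instances of that class that were missed.
    counts = {0: (tn, fn, fp), 1: (tp, fp, fn)}
    report = {}
    for label, (hit, false_alarm, miss) in counts.items():
        precision = hit / (hit + false_alarm)
        recall = hit / (hit + miss)
        f1 = 2 * precision * recall / (precision + recall)
        report[label] = (precision, recall, f1)
    return report


# Confusion matrices quoted in the text for Adaptive GridSearchCV.
adaptive = {
    "SVM": [[49_669, 94], [4, 25_616]],
    "LR": [[49_526, 237], [2, 25_618]],
    "KNN": [[49_644, 119], [2, 25_618]],
}

for name, matrix in adaptive.items():
    for label, (p, r, f1) in class_report(matrix).items():
        print(f"{name} class {label}: P={p:.4f} R={r:.4f} F1={f1:.4f}")
```

For LR, the class 1 precision is 25,618 / 25,855 ≈ 0.9908, which is why it is the only entry reported as 0.99 rather than 1.00.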

Table 4 shows that the SVM model optimized by our method achieves a testing accuracy of 0.998700 with an execution time of 3949.99 s. The general GridSearchCV attains a testing accuracy of 0.998647 with an execution time of 5518.38 s, and the general GridSearchCV from another paper attains a testing accuracy of 0.95 (execution time not reported). Our method therefore reduces the execution time by 1568.39 s (about 28%) and also improves the testing accuracy by 0.000053, bringing it very close to 1.

Table 5 compares the performance of the LR model optimized by general GridSearchCV and by Adaptive GridSearchCV. The LR model optimized by our method attains a testing accuracy of 0.996830 with an execution time of 16.90 s, while the general GridSearchCV achieves the same testing accuracy of 0.996830 with an execution time of 21.88 s, and the general GridSearchCV from another paper (execution time not reported) reaches a testing accuracy of 0.94. Our method thus reduces the execution time by 4.98 s (about 22.8%) while maintaining the same testing accuracy as the general method. Compared to the general method from the other paper, our method has a testing accuracy that is higher by 0.056830 (about 6% in relative terms). In short, our method matches the testing accuracy of the general method and exceeds that of the other paper, while reducing the execution time.

Table 6 shows that the KNN model optimized by our method achieves a testing accuracy of 0.998395 with an execution time of 2388.89 s, while the general GridSearchCV achieves the same testing accuracy of 0.998395 with an execution time of 6455.22 s, and the general method from another paper (execution time not reported) attains a testing accuracy of 0.9461. Our method thus reduces the execution time by 4066.33 s (about 63%) while maintaining the same testing accuracy. Compared to the general method from the other paper, our method reaches a testing accuracy that is higher by 0.052295 (about 5.5% in relative terms). This indicates that our method is more efficient than the general method while maintaining the same level of testing accuracy.
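The time savings and accuracy differences reported in Tables 4 to 6 follow directly from the listed accuracies and execution times. The sketch below recomputes them; the data layout and the helper name `compare` are illustrative assumptions, and only figures stated in the text are used.

```python
# (testing accuracy, execution time in seconds) as reported in Tables 4 to 6.
runs = {
    "SVM": {"general": (0.998647, 5518.38), "adaptive": (0.998700, 3949.99)},
    "LR": {"general": (0.996830, 21.88), "adaptive": (0.996830, 16.90)},
    "KNN": {"general": (0.998395, 6455.22), "adaptive": (0.998395, 2388.89)},
}


def compare(general, adaptive):
    """Summarize how the adaptive search differs from the general search."""
    g_acc, g_time = general
    a_acc, a_time = adaptive
    saved = g_time - a_time
    return {
        "seconds_saved": round(saved, 2),
        "percent_saved": round(100 * saved / g_time, 1),
        "accuracy_delta": round(a_acc - g_acc, 6),
    }


summary = {name: compare(r["general"], r["adaptive"]) for name, r in runs.items()}

for name, s in summary.items():
    print(
        f"{name}: saved {s['seconds_saved']} s "
        f"({s['percent_saved']}%), accuracy change {s['accuracy_delta']:+.6f}"
    )
```

The relative time reduction is computed against the general GridSearchCV execution time, which is the convention the percentages in the text follow.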