A Hybrid Image Segmentation Method for Accurate Measurement of Urban Environments

Abstract

1. Introduction

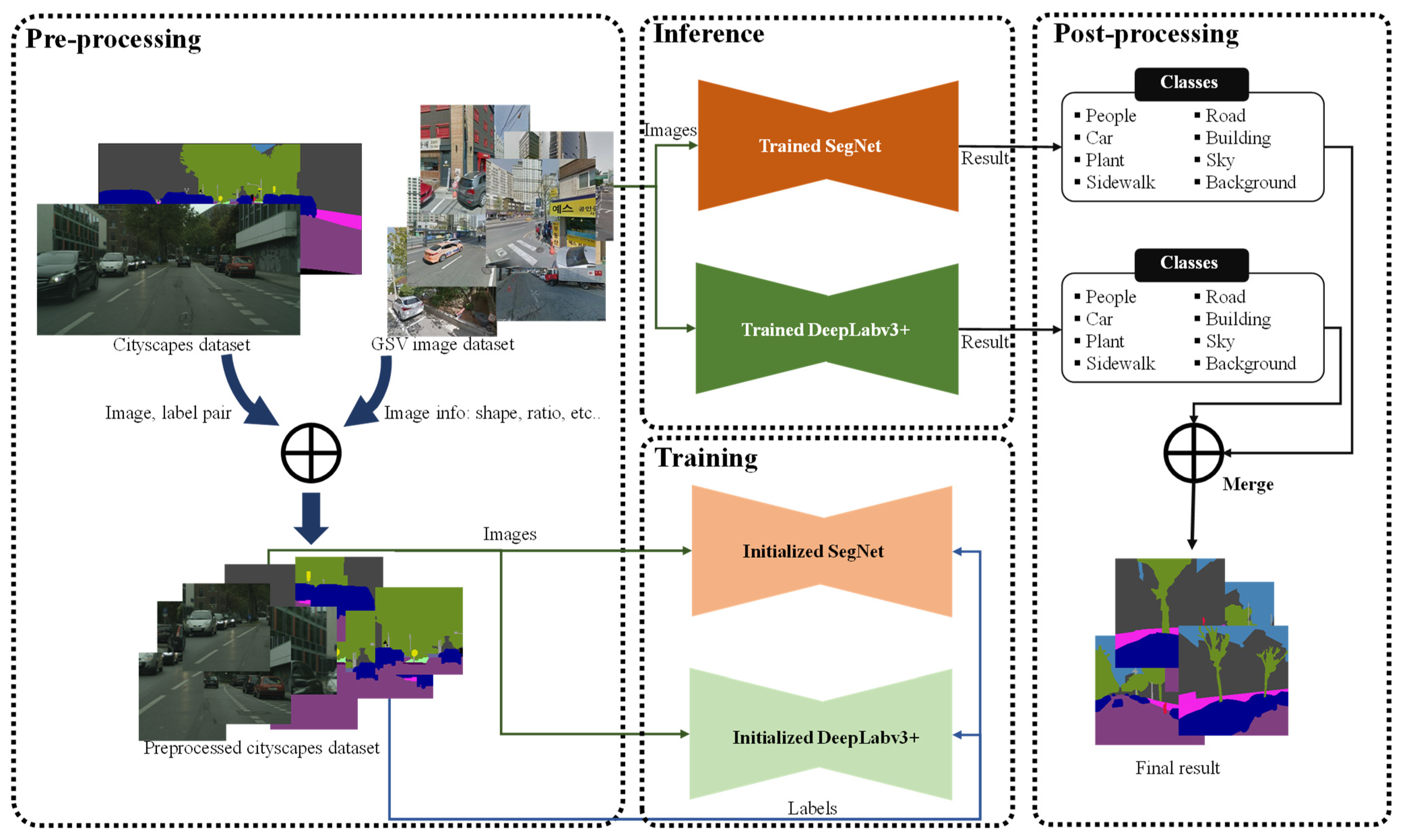

- We propose a hybrid model that combines the outputs of multiple segmentation models to accurately segment unlabeled Google Street View (GSV) images.

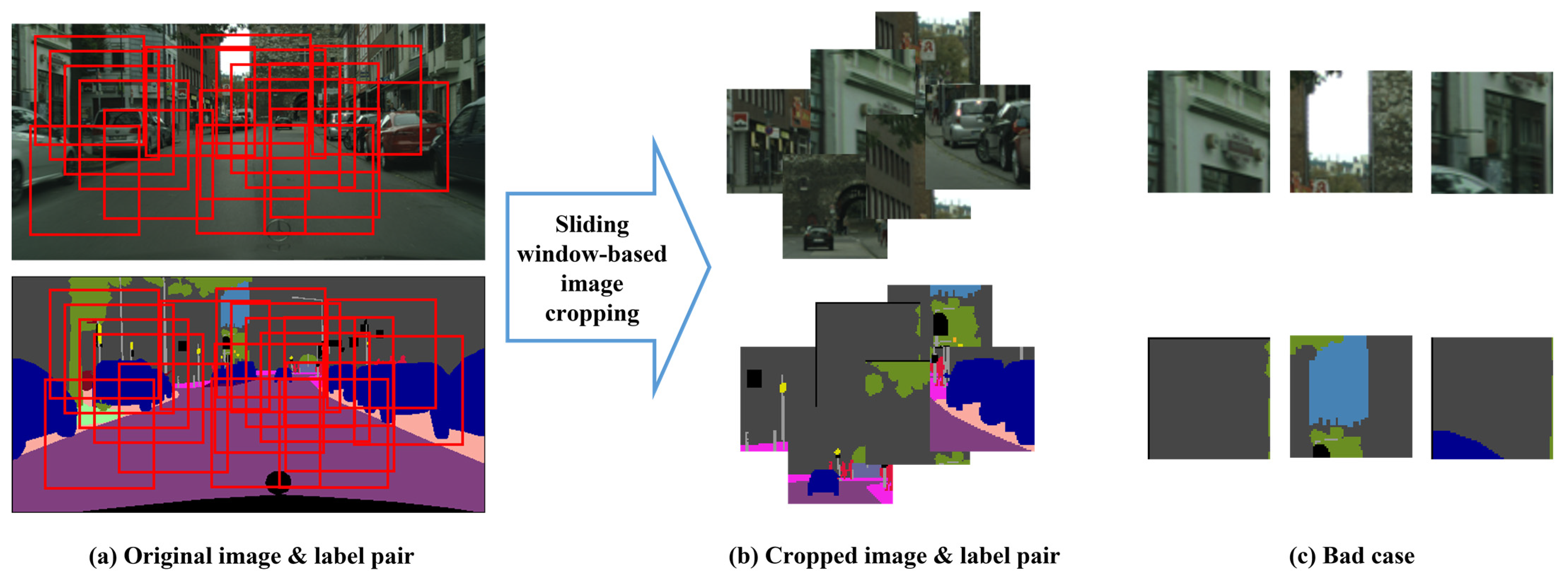

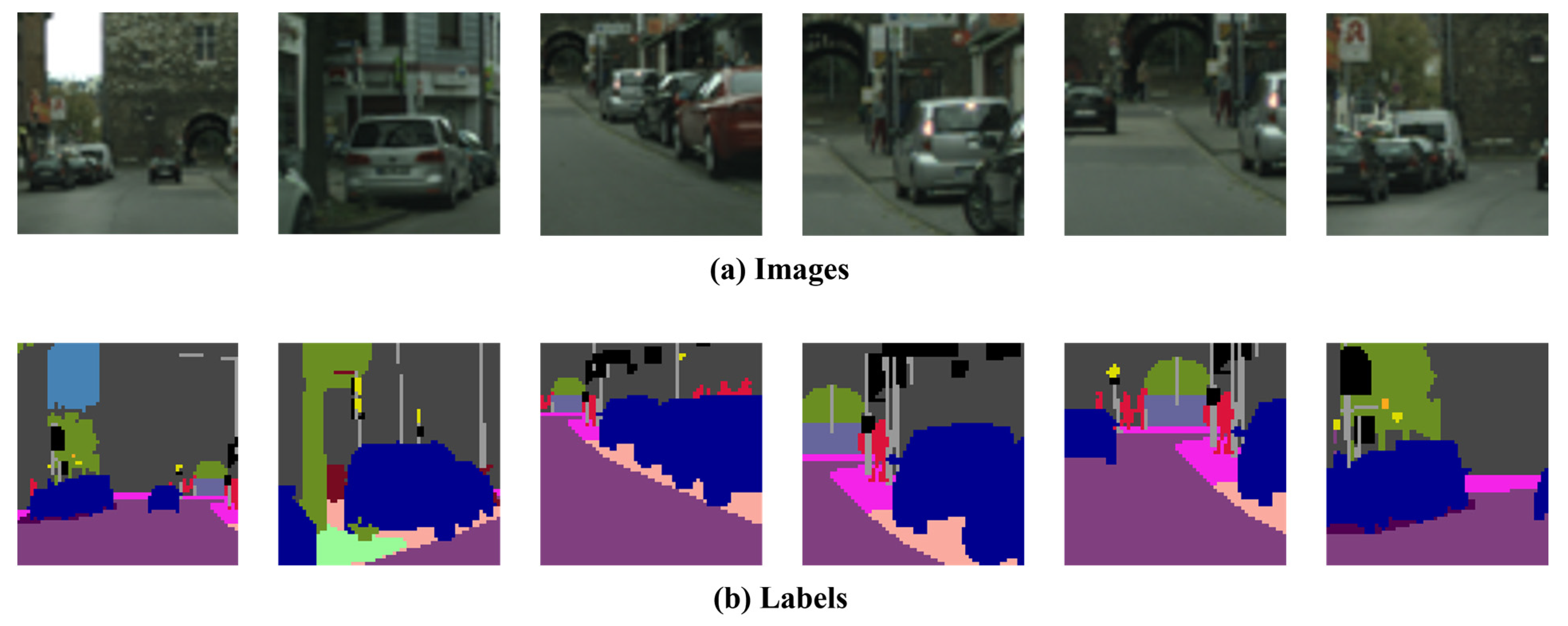

- We adopt a novel training approach: rather than using a pre-trained model, as is conventional, we fully re-train the segmentation model on the Cityscapes dataset after pre-processing it to closely resemble GSV data.

- We improve the accuracy of the segmentation model by applying a weighted sum to the classes on which the two base models perform similarly.

Together, these contributions enable more effective and efficient techniques for analyzing urban environments using GSV images.
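As a rough illustration of the weighted-sum idea in the third contribution, the sketch below fuses the per-class probabilities of two models for a single pixel. It is not the authors' implementation: the function name `fuse_pixel`, the `similar_margin` threshold, the IoU-proportional weights, and the rule of trusting the stronger model outside the margin are all assumptions made for illustration. The per-class IoU values are those reported in this article's Cityscapes evaluation.

```python
from typing import Dict, Tuple

# Per-class IoU on Cityscapes as reported in this article (subset of classes).
SEGNET_IOU = {"People": 0.699, "Plant": 0.702, "Sky": 0.705}
DEEPLAB_IOU = {"People": 0.657, "Plant": 0.706, "Sky": 0.792}


def fuse_pixel(
    p_a: Dict[str, float],
    p_b: Dict[str, float],
    iou_a: Dict[str, float],
    iou_b: Dict[str, float],
    similar_margin: float = 0.03,  # hypothetical threshold, not from the article
) -> Tuple[str, Dict[str, float]]:
    """Fuse two models' class probabilities for one pixel.

    Classes whose IoU gap is within `similar_margin` get an IoU-weighted sum;
    for the other classes the probability of the stronger model is used.
    """
    fused = {}
    for cls in p_a:
        if abs(iou_a[cls] - iou_b[cls]) <= similar_margin:
            # Similar performance: weight each model by its share of the IoU.
            w_a = iou_a[cls] / (iou_a[cls] + iou_b[cls])
            fused[cls] = w_a * p_a[cls] + (1.0 - w_a) * p_b[cls]
        else:
            # Clear winner: take that model's probability unchanged.
            fused[cls] = p_a[cls] if iou_a[cls] > iou_b[cls] else p_b[cls]
    # Fused scores need not sum to 1; only their ranking is used here.
    label = max(fused, key=fused.get)
    return label, fused


# Toy probabilities for one pixel (made up for the example).
segnet_probs = {"People": 0.2, "Plant": 0.5, "Sky": 0.3}
deeplab_probs = {"People": 0.1, "Plant": 0.3, "Sky": 0.6}
label, fused = fuse_pixel(segnet_probs, deeplab_probs, SEGNET_IOU, DEEPLAB_IOU)
print(label, fused)
```

With these toy inputs, only Plant falls inside the margin and is blended; People follows SegNet and Sky follows DeepLabv3+, which mirrors the per-class strengths visible in the reported IoU values.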

2. Related Works

2.1. Green Area Measuring

2.2. Image Segmentation

2.3. Hybrid and Fusion Scheme

3. Proposed Method

3.1. Pre-Processing

3.2. Base Models

3.3. Hybrid Model

4. Experiments

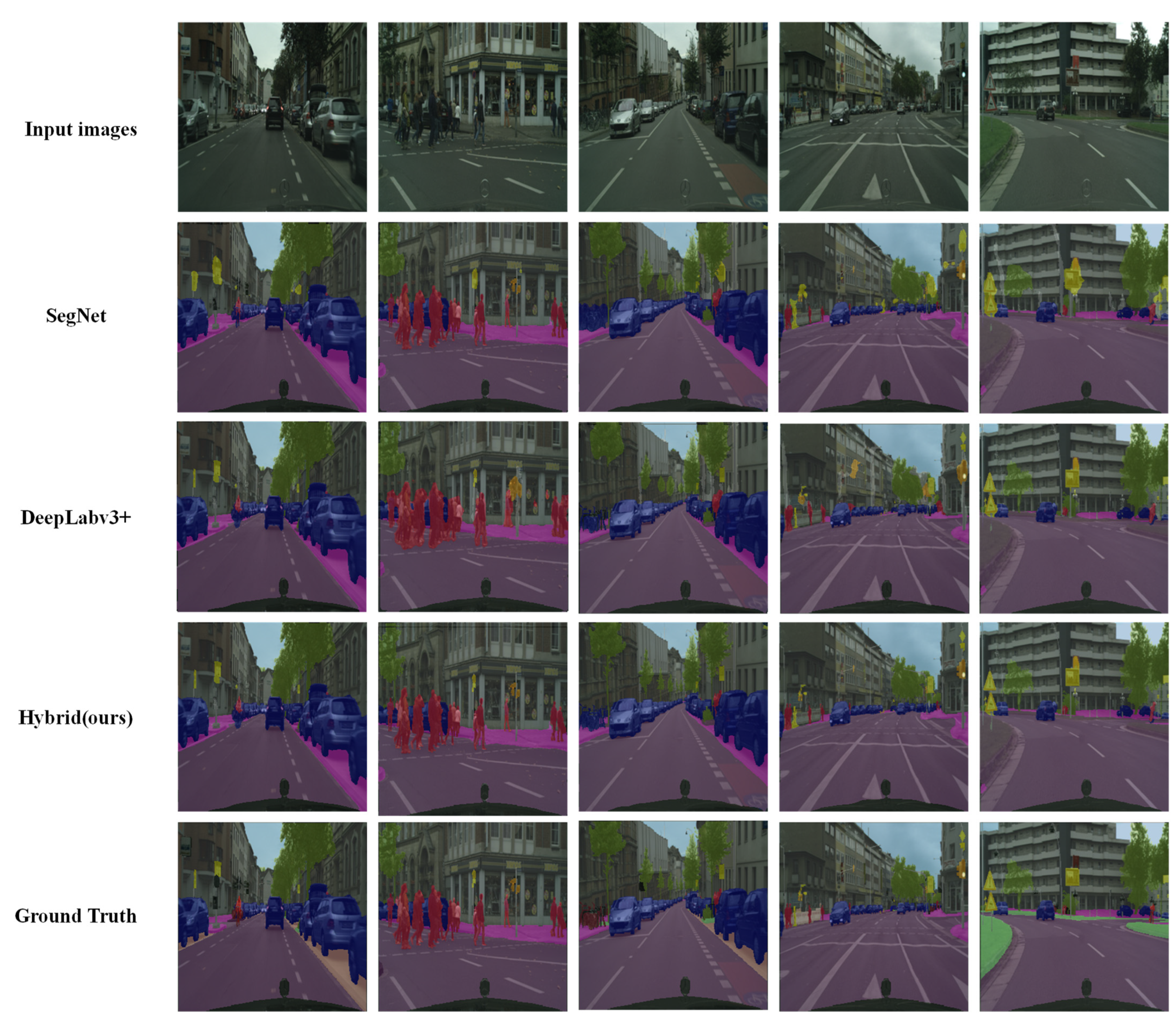

4.1. Evaluation Results for Cityscapes Dataset

4.2. Evaluation Results for GSV Images

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


Evaluation metric: Intersection over Union (IoU)

| Class | SegNet | DeepLabv3+ |
|---|---|---|
| People | 0.699 | 0.657 |
| Car | 0.681 | 0.658 |
| Plant | 0.702 | 0.706 |
| Sky | 0.705 | 0.792 |
| Building | 0.691 | 0.813 |
| Road | 0.705 | 0.796 |
| Sidewalk | 0.721 | 0.809 |
| Background | 0.760 | 0.814 |
| Average | 0.708 | 0.756 |
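For a given class, IoU is the number of pixels labeled with that class in both the prediction and the ground truth, divided by the number labeled with it in either. The following is a minimal sketch of that computation on a toy six-pixel example; the helper names `class_iou` and `mean_iou` and the toy labels are hypothetical. It also checks that averaging the eight per-class SegNet values above reproduces the reported average of 0.708, which suggests the Average row is an unweighted mean over classes.

```python
from typing import List, Sequence


def class_iou(pred: Sequence[str], target: Sequence[str], cls: str) -> float:
    """IoU of one class over flattened per-pixel label sequences."""
    inter = sum(1 for p, t in zip(pred, target) if p == cls and t == cls)
    union = sum(1 for p, t in zip(pred, target) if p == cls or t == cls)
    # A class absent from both prediction and ground truth has no defined IoU.
    return inter / union if union else float("nan")


def mean_iou(pred: Sequence[str], target: Sequence[str], classes: List[str]) -> float:
    """Unweighted mean of per-class IoU, skipping undefined (NaN) classes."""
    values = [class_iou(pred, target, c) for c in classes]
    values = [v for v in values if v == v]  # NaN != NaN, so this drops NaN
    return sum(values) / len(values)


# Toy flattened label maps (six pixels), made up for illustration.
pred = ["Sky", "Sky", "Road", "Plant", "Plant", "Road"]
target = ["Sky", "Road", "Road", "Plant", "Sky", "Road"]

road_iou = class_iou(pred, target, "Road")
miou = mean_iou(pred, target, ["Sky", "Road", "Plant"])

# Per-class SegNet IoU values from the table above.
segnet = [0.699, 0.681, 0.702, 0.705, 0.691, 0.705, 0.721, 0.760]
segnet_average = round(sum(segnet) / len(segnet), 3)

print(road_iou, miou, segnet_average)
```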

Evaluation metric: Intersection over Union (IoU)

| Class | Hybrid | Compared to SegNet | Compared to DeepLabv3+ |
|---|---|---|---|
| People | 0.706 | +0.007 | +0.049 |
| Car | 0.717 | +0.036 | +0.059 |
| Plant | 0.715 | +0.013 | +0.009 |
| Sky | 0.813 | +0.108 | +0.021 |
| Building | 0.815 | +0.124 | +0.002 |
| Road | 0.801 | +0.096 | +0.005 |
| Sidewalk | 0.812 | +0.091 | +0.003 |
| Background | 0.808 | +0.048 | −0.006 |
| Average | 0.773 | +0.065 | +0.018 |

| Model | Score |
|---|---|
| SegNet | 58 |
| DeepLabv3+ | 78 |
| Ensemble (Ours) | 134 |

| Model | Score 5 | Score 3 | Score 1 |
|---|---|---|---|
| SegNet | 10.00% | 26.67% | 63.33% |
| DeepLabv3+ | 13.33% | 53.33% | 33.33% |
| Ensemble (Ours) | 76.67% | 20.00% | 3.33% |

Each percentage is the number of images that received the given score divided by the total number of images.
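The total scores and the score distribution above can be cross-checked arithmetically. The sketch below assumes that the total is the sum of the 5/3/1 ratings over all images and that 30 images were rated; the image count is not stated here and is inferred only because every percentage is a multiple of 1/30. The names `RATING_SHARE`, `N_IMAGES`, and `total_score` are hypothetical.

```python
# Share of images (in percent) that received each rating, from the table above.
RATING_SHARE = {
    "SegNet": {5: 10.00, 3: 26.67, 1: 63.33},
    "DeepLabv3+": {5: 13.33, 3: 53.33, 1: 33.33},
    "Ensemble (Ours)": {5: 76.67, 3: 20.00, 1: 3.33},
}

# Assumption: inferred from the percentages, not stated in the text.
N_IMAGES = 30


def total_score(shares: dict, n_images: int) -> int:
    """Sum of ratings, recovering per-rating image counts from percentages."""
    return sum(
        rating * round(pct / 100 * n_images) for rating, pct in shares.items()
    )


totals = {model: total_score(s, N_IMAGES) for model, s in RATING_SHARE.items()}
print(totals)
```

Under these assumptions the recomputed totals match the reported scores of 58, 78, and 134, so the two tables are mutually consistent.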


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kim, H.; Lee, J.H.; Lee, S. A Hybrid Image Segmentation Method for Accurate Measurement of Urban Environments. Electronics 2023, 12, 1845. https://doi.org/10.3390/electronics12081845
