Recent Advances in Pulse-Coupled Neural Networks with Applications in Image Processing

Abstract

1. Introduction

2. Fundamentals of PCNN

2.1. Original Pulse-Coupled Neural Network

2.2. Intersecting Cortical Model

2.3. Spiking Cortical Model

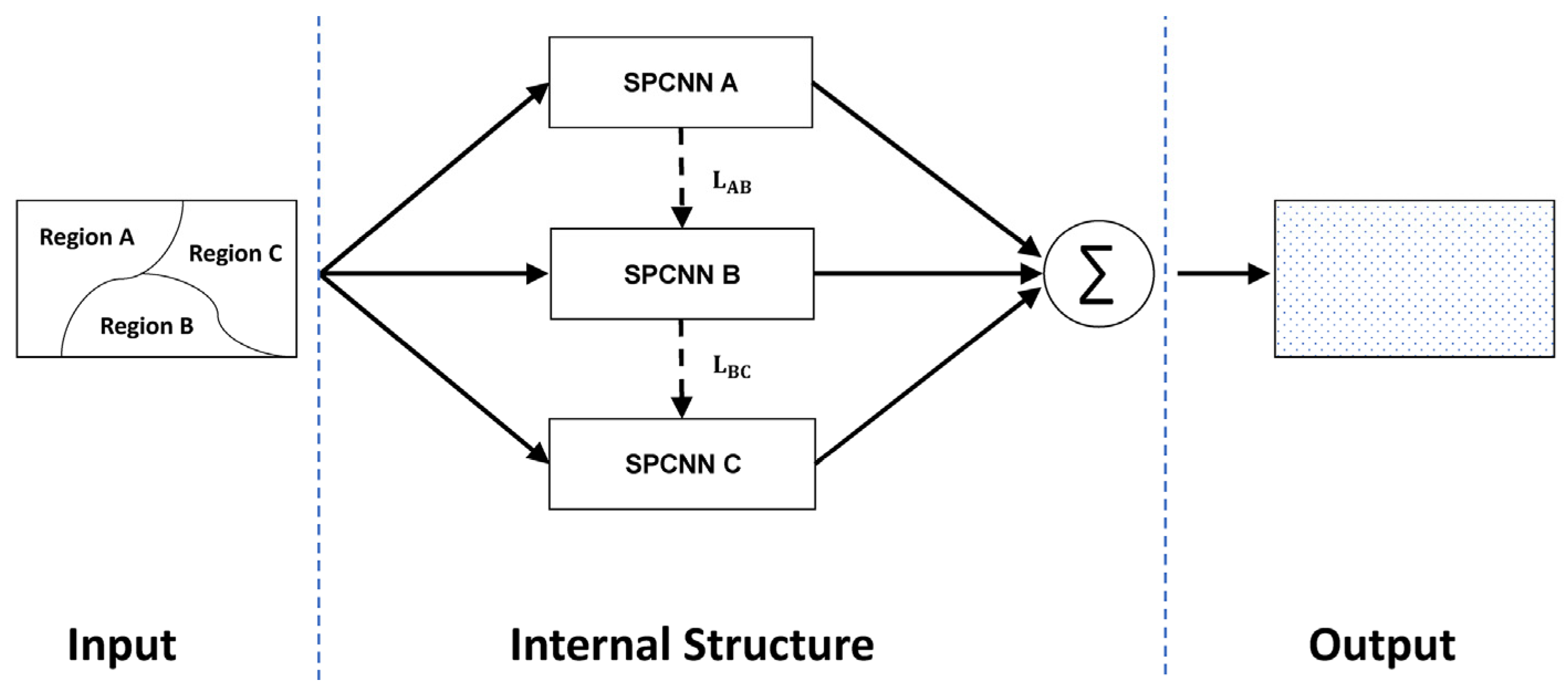

2.4. Modified SPCNN

2.5. Fire-Controlled MSPCNN

2.6. Sine–Cosine PCNN

2.7. Quasi-Continuous Model

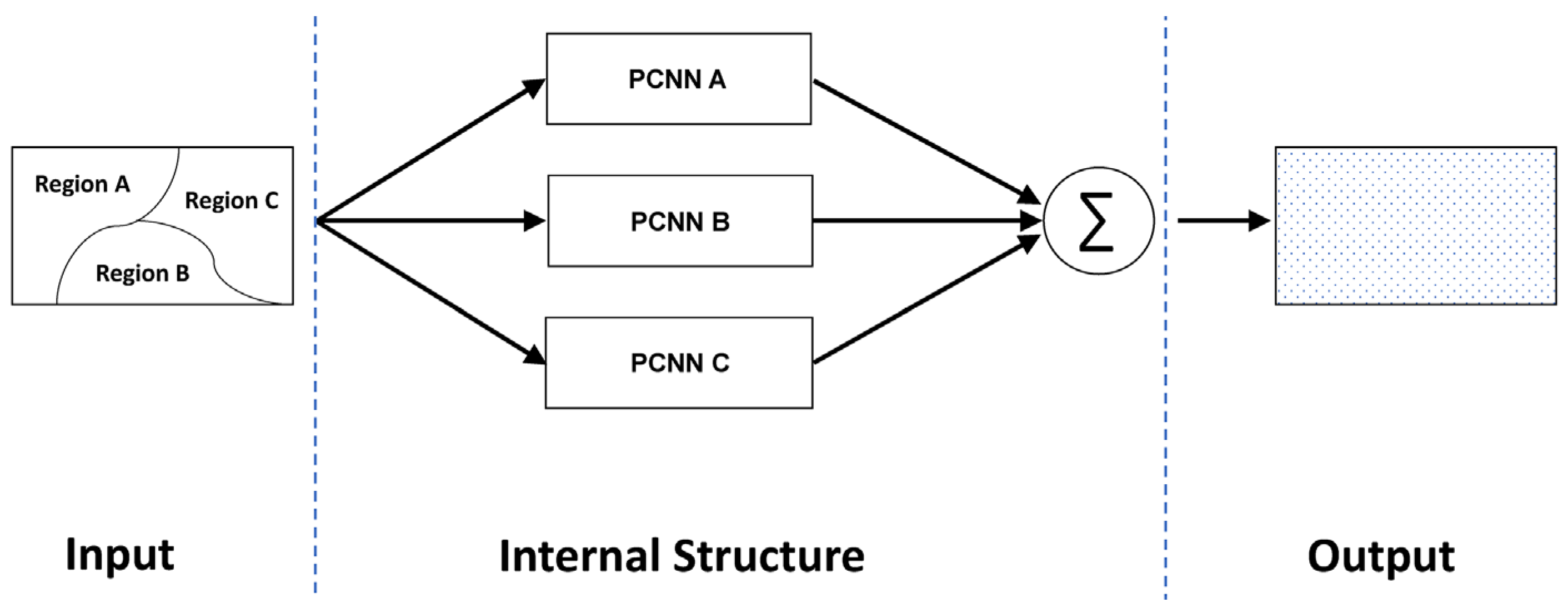

2.8. Heterogeneous PCNN

- Neurons with different weights, but the same structure.

- Neurons with different structures, but the same weight.

- Both structure and weight are different for different neurons.
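The first case above (different weights, same structure) can be sketched in a few lines. This is a minimal illustration and not the formulation used in this article: it assumes a commonly used simplified PCNN update (feeding input equal to the pixel stimulus, linking input equal to the count of 8-connected neighbours that fired in the previous step, and an exponentially decaying dynamic threshold). It also assumes that "weight" can be modelled by a per-neuron linking strength `beta`. The function name `pcnn_step` and the parameters `alpha_e` and `v_e` are hypothetical names chosen for this example.

```python
import math


def pcnn_step(S, Y_prev, E_prev, beta, alpha_e=0.5, v_e=20.0):
    """One iteration of an assumed simplified PCNN on a 2D grid (lists of lists).

    S      : stimulus (pixel intensities)
    Y_prev : binary firing map from the previous iteration
    E_prev : dynamic thresholds from the previous iteration
    beta   : per-neuron linking strengths (the heterogeneous "weights" here)
    """
    rows, cols = len(S), len(S[0])
    Y = [[0] * cols for _ in range(rows)]
    E = [[0.0] * cols for _ in range(rows)]
    decay = math.exp(-alpha_e)
    for i in range(rows):
        for j in range(cols):
            # Linking input: how many 8-connected neighbours fired last step.
            link = 0
            for di in (-1, 0, 1):
                for dj in (-1, 0, 1):
                    if di == 0 and dj == 0:
                        continue
                    ni, nj = i + di, j + dj
                    if 0 <= ni < rows and 0 <= nj < cols:
                        link += Y_prev[ni][nj]
            # Internal activity: the stimulus modulated by the linking input.
            u = S[i][j] * (1.0 + beta[i][j] * link)
            # The neuron fires when its activity exceeds its threshold.
            Y[i][j] = 1 if u > E_prev[i][j] else 0
            # The threshold decays and is raised sharply after a pulse.
            E[i][j] = decay * E_prev[i][j] + v_e * Y[i][j]
    return Y, E


# Same structure for every neuron; only the linking strength differs.
S = [[0.4, 0.9, 0.4]]
Y0 = [[0, 1, 0]]          # the centre neuron fired in the previous step
E0 = [[0.5, 0.5, 0.5]]

beta_hom = [[0.0, 0.0, 0.0]]   # homogeneous: no neuron is coupled
beta_het = [[0.5, 0.0, 0.0]]   # heterogeneous: only the left neuron is coupled

Y_hom, _ = pcnn_step(S, Y0, E0, beta_hom)
Y_het, E_het = pcnn_step(S, Y0, E0, beta_het)
```

With these toy values, the left neuron stays silent in the homogeneous run and is captured by its firing neighbour in the heterogeneous run, while the right neuron, which receives the same stimulus but has zero linking strength, does not fire in either run. The other two cases in the list would need neurons that use different update rules, which this sketch does not attempt.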

2.9. Continuous-Coupled Neural Network

3. Applications

3.1. Color Image Processing

3.2. Diagnosis and Computer Vision

3.3. Image Fusion

3.4. Other Recent Advances

3.5. Summary

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Liu, H.; Liu, M.; Li, D.; Zheng, W.; Yin, L.; Wang, R. Recent Advances in Pulse-Coupled Neural Networks with Applications in Image Processing. Electronics 2022, 11, 3264. https://doi.org/10.3390/electronics11203264