Decision Making for Self-Driving Vehicles in Unexpected Environments Using Efficient Reinforcement Learning Methods

Abstract

1. Introduction

- (1)

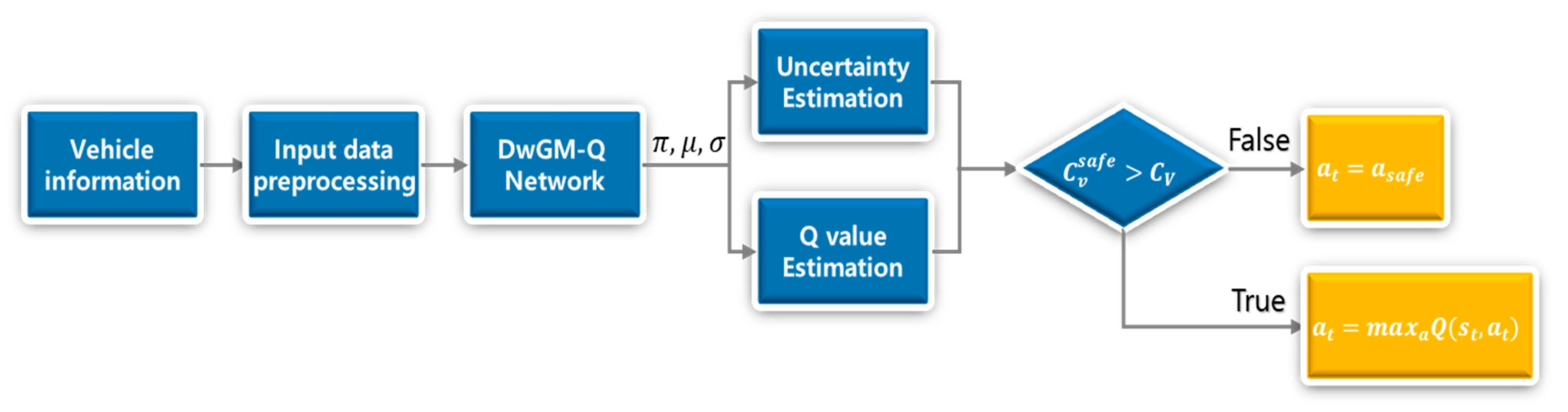

- This paper proposed DeepSet-Q with Gaussian mixture (DwGM-Q), which considered the unexpected environments that the DeepSet-Q agent cannot.

- (2)

- A method different from the existing process was presented, and the estimation speed was increased compared to the uncertainty inference method using the current ensemble.

- (3)

- The uncertainty estimation result was shown through the SUMO simulator.
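
As a minimal sketch of how a Gaussian-mixture output can yield an uncertainty estimate in a single forward pass, rather than by querying an ensemble of networks, the snippet below applies the law of total variance to one mixture. The function name `mixture_uncertainty`, the one-dimensional output, and the use of the between-component variance as the uncertainty signal are assumptions made for illustration; they are not taken from the paper's own equations.

```python
import numpy as np

def mixture_uncertainty(pi, mu, sigma):
    """Mean and variance decomposition of a 1-D Gaussian mixture.

    pi, mu, sigma: mixture weights, component means, and component
    standard deviations, each of shape (K,). Hypothetical helper.
    """
    pi = np.asarray(pi, dtype=float)
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    pi = pi / pi.sum()                        # make sure the weights sum to 1

    mean = np.sum(pi * mu)                    # mixture mean
    within = np.sum(pi * sigma ** 2)          # expected component variance
    between = np.sum(pi * (mu - mean) ** 2)   # spread of the component means
    return mean, within, between

# Two equally weighted unit-variance components centred at 0 and 2.
mean, within, between = mixture_uncertainty([0.5, 0.5], [0.0, 2.0], [1.0, 1.0])
print(mean, within, between)  # 1.0 1.0 1.0
```

When the component means agree, `between` is close to zero; when they disagree, it grows, which is the kind of signal an agent could compare against a threshold before trusting its own action choice.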

2. Related Work

3. Method

3.1. Reinforcement Learning

3.2. Mixture Density Network (MDN)

3.3. Uncertainty Estimation

4. DeepSet with Gaussian Mixture Q Network (DwGM-Q)

4.1. Network Architecture

4.2. Weight Update

| Algorithm 1: Training DwGM-Q |
|---|
| Initialize |
| Set replay buffer |
| for episode = 1, M do |
| initialize random state |
| for step = 1, T do |
| if |
| (Equation (5)) |
| else |
| = |
| select |
| execute and observe reward and |
| Store transition () in |
| Sample random minibatch of transitions () from |
| Compute JTD loss (Equation (8)) |
| Update weights |
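
The loss step of Algorithm 1 can be illustrated with a hedged sketch. It assumes that JTD denotes the Jensen-Tsallis distance used for mixture-of-Gaussians value distributions, and that this distance takes the form of the integrated squared difference between the predicted and target densities, which has a closed form for Gaussian mixtures. Whether Equation (8) has exactly this form is an assumption, and the helper names `gauss_overlap`, `cross_term`, and `squared_l2_distance` are hypothetical.

```python
import numpy as np

def gauss_overlap(mu1, var1, mu2, var2):
    """Closed-form integral over x of N(x; mu1, var1) * N(x; mu2, var2)."""
    var = var1 + var2
    return np.exp(-0.5 * (mu1 - mu2) ** 2 / var) / np.sqrt(2.0 * np.pi * var)

def cross_term(pi_a, mu_a, var_a, pi_b, mu_b, var_b):
    """Integral of the product of two 1-D Gaussian mixtures."""
    # Pairwise overlaps between every component of a and every component of b.
    overlap = gauss_overlap(mu_a[:, None], var_a[:, None],
                            mu_b[None, :], var_b[None, :])
    return float(pi_a @ overlap @ pi_b)

def squared_l2_distance(p, q):
    """Integral of (p(x) - q(x))^2 for mixtures p, q given as (pi, mu, var)."""
    return cross_term(*p, *p) + cross_term(*q, *q) - 2.0 * cross_term(*p, *q)

# Predicted and target mixtures for one state-action pair (illustrative values).
pred = (np.array([0.6, 0.4]), np.array([1.0, 3.0]), np.array([0.5, 1.0]))
target = (np.array([0.5, 0.5]), np.array([1.2, 2.5]), np.array([0.4, 0.8]))
loss = squared_l2_distance(pred, target)
```

In a training loop, a quantity like `loss` would be averaged over the sampled minibatch and differentiated with respect to the network weights; the sketch uses NumPy only to show the closed form and omits automatic differentiation.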

4.3. Decision Procedure

5. Environment Setup

5.1. State Input

5.2. Action

5.3. Reward

6. Experiment

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Parameter | Value |
|---|---|
| Learning rate | 1 × 10⁻⁴ |
| node | 80 |
| node | 20 |
| | 0.1 |
| Batch size | 64 |
| Buffer size | 100,000 |

| Parameter | Value |
|---|---|
| Learning rate | 1 × 10⁻⁴ |
| Number of Gaussians | 5 |
| node | 80 |
| node | 20 |
| | 0.1 |
| Batch size | 64 |
| Buffer size | 100,000 |


| Parameter | Value | Comments |
|---|---|---|
| Ego lane | | |
| Ego vehicle speed | | |
| Lane-change info | | |
| Relative longitudinal position of i-th vehicle | | |
| Relative lateral position of i-th vehicle | | |
| Relative speed of i-th vehicle | | |
| Lane-change state of i-th vehicle | | |

| Method | Normal Scenario: Mean Velocity (m/s) | Normal Scenario: Collision Rate (%) | Normal Scenario: Steps per s (SPS) | Unexpected (Stop Scenario): Collision Rate (%) | Unexpected (Stop Scenario): Steps per s (SPS) | Unexpected (Speeding Scenario): Collision Rate (%) | Unexpected (Speeding Scenario): Steps per s (SPS) |
|---|---|---|---|---|---|---|---|
| DeepSet-Q | 21.9 | 2 | 213.5 | 100 | 213.6 | 100 | 213.6 |
| DeepSet-Q (15–55 m/s) | 21.8 | 3 | 213.6 | 100 | 213.5 | 0 | 213.5 |
| RPF-Ensemble | 23.4 | 2 | 165.5 | 0 | 165.3 | 0 | 165.6 |
| DwGM-Q | 22.1 | 2 | 184.4 | 0 | 184.5 | 0 | 184.5 |
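
To make the speed comparison in the table concrete, the short calculation below uses the normal-scenario steps-per-second values reported above. The dictionary `sps` and the helper `relative_speedup` are hypothetical names introduced only for this illustration.

```python
# Normal-scenario throughput (steps per second) copied from the results table.
sps = {"DeepSet-Q": 213.5, "RPF-Ensemble": 165.5, "DwGM-Q": 184.4}

def relative_speedup(method, baseline, table=sps):
    """Fractional change in steps per second of `method` relative to `baseline`."""
    return table[method] / table[baseline] - 1.0

print(f"{relative_speedup('DwGM-Q', 'RPF-Ensemble'):+.1%}")  # +11.4%
print(f"{relative_speedup('DwGM-Q', 'DeepSet-Q'):+.1%}")     # -13.6%
```

On these figures, DwGM-Q runs roughly 11% faster than the RPF-Ensemble while remaining about 14% slower than plain DeepSet-Q, which provides no uncertainty estimate.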

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Kim, M.-S.; Eoh, G.; Park, T.-H. Decision Making for Self-Driving Vehicles in Unexpected Environments Using Efficient Reinforcement Learning Methods. Electronics 2022, 11, 1685. https://doi.org/10.3390/electronics11111685