1. Introduction

The goal of this research is to develop a theoretical basis and a practical systematic process for designing systems that behave ethically, even under non-ideal or hostile conditions.

The human race has become very good at designing systems that are effective, but we are very bad at designing systems that are reliably ethical. The majority of our social and computer-based systems are ethically fragile, lacking resilience under non-ideal conditions, and are generally vulnerable to abuse and manipulation. But we are now on the cusp of a technological wave that will thrust autonomous vehicles, robots, and other artificial intelligence (AI) systems into our daily lives, for good or bad; there will be no stopping them. And despite widespread recognition of the potential risks of creating superintelligence [1] and the need to make AI and social systems ethical, systems theory, cybernetics, and AI have no adequate systematic processes for creating systems that behave ethically. Instead, we have to rely on the ad hoc skills of an ethically motivated designer to somehow specify a system that is hopefully ethical, despite the constant pressure from corporate executives to do things cheaper and faster. This is not a satisfactory solution to a problem that so urgently needs to be solved. In the context of cybernetics, this could be referred to as “The Ethics Problem”.

Many people think that all technologies can be used for good or evil, but this is not true. Consider a system like that of public health inspections of restaurants, where an inspector performs a well-structured system of evaluations in defined dimensions, such as kitchen hygiene, food storage, waste management, and signs of vermin, to identify any inadequacies and specify the improvements necessary to achieve certification of hygienic adequacy. Such a system can only help to make restaurants more hygienic.

Might it be possible to adapt this certification model from the public health domain to create a system that can be used to certify whether a given system is ethically adequate or inadequate? And might such a system be a solution to “The Ethics Problem”?

In this paper, the terms “ethics” and “ethical” are used in a concrete applied sense of the acceptable behaviour in a society or nation. Treating ethics as an absolute standard that can be applied across all cultures is rejected, subscribing rather to Manel Pretel-Wilson’s assertion that “there is no supreme value but a plurality of values” [2]. For some systems, the term “ethical” might include aspects such as hygienic, safe, fair, honest, law-abiding, or environmentally friendly.

All societies regulate the behaviour of their members by defining what behaviour is acceptable or unacceptable. It is primarily through the rule of law that a society can be made safe, civilized, and “ethical” as defined by the norms of that society. And the only way that society or an individual can know or prove that something non-trivially unethical has occurred is because some kind of rule has been violated. So being pragmatic, if it is unethical to break laws, regulations, and rules, then it is laws, regulations, and rules that define the ethics for each legislative jurisdiction, which is why we bother to constantly try to improve them. Not all rules are defined formally in writing; some are unwritten conventions. Yet in every culture, it is unacceptable to break such laws, regulations, rules, or customs.

However, the act of deciding what is ethical behaviour is very different from the act of behaving ethically by obeying a society’s laws and rules. It is the lawmakers who face genuine ethical dilemmas when making decisions about which behaviours are to be legislated as acceptable in society or forbidden. But a law-abiding citizen (or machine) needs only to obey the appropriate laws and rules in order to behave safely and ethically in all anticipated situations, with an acceptably small risk that something unethical might result despite following the current laws and rules.

Just as a law-abiding citizen does not need to be involved in the ethical decisions that are required when making laws, this paper does not address the issue of how a society decides what is made legal or illegal. We must accept that a law-abiding machine, like many law-abiding citizens, might blindly obey unjust or evil laws, such as those made in Nazi Germany, but the problems of the existence of evil dictatorships and unethical laws must be addressed at the political level. That is something that each society must resolve for itself and is outside the scope of this paper, which is concerned rather with how to create effective systems that are certifiably and reliably law-abiding, even under non-ideal or hostile conditions.

None of us are ever likely to have to decide whether to switch a runaway train to a different track to reduce the number of fatalities, but if the lawmakers of a country decide, for example, that in such situations, minimizing fatalities is the ethical and legal obligation, then it becomes trivial to encode it in a law, regulation, or rule so that it can be understood and obeyed by humans and machines. By doing so, what was an ethical dilemma is reduced to a simple rule. This line of reasoning implies that it is sufficient to disambiguate our laws and make robots, artificial intelligence, and autonomous vehicles rigorously law-abiding. It is suggested that there is absolutely no need to make such autonomous systems capable of resolving genuine ethical dilemmas, which is the job of society’s lawmakers and regulatory organizations to anticipate, resolve, and codify in advance.

Methodology

The starting point for this research was trying to find a complete set of answers to the question “What properties must a system have for it to behave ethically?”.

The existing cybernetics literature provided the first two properties. Roger Conant and Ross Ashby’s Good Regulator Theorem [3] proved that every good regulator of a system must be a model of that system, but it does not specify how to create a good regulator. And Ross Ashby’s Law of Requisite Variety [4] dictates the range of responses that an effective regulator must be capable of. However, having an internal model and a sufficient range of responses is insufficient to ensure effective regulation, let alone ethical regulation. An ethical system must have more than just these two properties.

Recent approaches to making AI ethical, such as IBM’s “Everyday Ethics for Artificial Intelligence: A practical guide for designers and developers” [5] and the European Commission’s “High-Level Expert Group on Artificial Intelligence: Draft Ethics Guidelines for Trustworthy AI” [6] provided “ethically white-washed” [7] lists of requirements, without offering anything that could be applied systematically and repeatably to actually design an ethical AI.

Heinz von Foerster proposed an ethical imperative: “Act always so as to increase the number of choices” [8]. Although this principle is valuable in the context of psychological therapy, it specifies no end condition, i.e., when to stop adding more choices. If one were to apply it when deciding how many different types of propulsion systems to build into a manned spacecraft to adjust its motion and orientation, it would lead to unnecessary choices, weight, and costs, and it would increase the number of possible points of failure and therefore the risk of catastrophic failure and loss of life. This counterexample proves that maximizing choice can be the wrong (unethical) thing to do. And by definition, implementing more choices than is necessary to achieve the goal of a system is unnecessary and violates the principle of Occam’s razor. So we must reject von Foerster’s Ethical Imperative as being flawed.

In 1990, von Foerster gave a lecture titled “Ethics and Second-Order Cybernetics” to the International Conference, Systems and Family Therapy: Ethics, Epistemology, New Methods, in Paris, France. However, despite its promising title, it provides nothing concrete or systematic for making systems ethical [9].

Stafford Beer’s viable system model (VSM) is specific to hierarchically structured systems and associates ethics with a specific level of the hierarchy (System 5) [10]. Although every ethical system can be mapped onto the VSM structure, so too can every unethical system. And rather like creating an ethics committee, assigning “ethics” to a particular level of the architecture is insufficient to make a system behave ethically; it does not explain how to make a system ethical. It just creates the illusion of having solved the problem, but the problem has not been solved, only delegated. Although applying VSM might result in an ethical system, it is not inevitable. By contrast, we expect reliable ethicalness to be an inevitable emergent property of the entire system, if and only if the system is ethically adequate.

An important early step was to realize that the good regulator theorem is ambiguous because a regulator that is good at regulating is not necessarily good in an ethical sense. To avoid this ambiguity, this paper uses the term “effective” for the first meaning, “ethical” for the second meaning, and only uses “good” when both meanings are intended. It is only by imposing precision in the use of terminology that it was possible to clarify the otherwise muddled thinking and isolate the essence of an ethical system.

To identify more necessary properties, a selection of ethical and unethical systems were considered, including autonomous vehicles, bank ATMs, various flavours of capitalism, central banks, corrupt politicians, dating systems, democracies, dictatorships, healthcare robots, a jury, law-abiding citizens, money laundering banks, product design processes, superintelligent machines, the U.S. Supreme Court system, vehicle exhaust emission test cheating corporations, and voting machines.

Considering these diverse systems helped identify some general characteristics, such as having ethical goals, laws, and the intelligence to understand the laws and make rational decisions. Some other characteristics only became apparent after looking for ways that evil actors (internal or external to the system) could subvert each system, such as by hacking, tampering, feeding the system false information, or by threatening, bribing, and blackmailing people who have influence on the system.

The analysis was exploratory and unstructured. In considering such a wide range of different types of systems, it was only necessary to reflect on each system’s unique or special differences to identify any new aspects that had not already been identified. The first few requisite properties were found by considering an abstract regulator and then an autonomous vehicle. Each further system that was considered contributed its own points of special interest. For example, systems that control money include strict requirements for an audit trail and physical resistance to tampering, a jury might be threatened, a judge must cope with liars, a supreme court justice might be blackmailed, corrupt politicians need to make secret deals and obfuscate the source of their wealth and what they did to get it, most computer systems accept their inputs as truth without corroboration and can be hacked, robots must obey laws, and in a village, gossip keeps track of who has a bad reputation, but on the internet, criminal, violent, and abusive men can keep creating new profiles to find more victims, who have virtually zero chance of discovering, before it is too late, that they are replying to a psychopath.

Finally, a minimum set of additional properties were identified that would counter the entire set of potential vulnerabilities. In all, nine properties were identified that are necessary and sufficient to guarantee that a system will behave ethically. These nine requisites are integrated in the ethical regulator theorem (ERT), which can be used as a decision function, IsEthical, that can be applied systematically to categorize any regulated system as being ethically adequate, ethically inadequate, or ethically undecidable. A proof of the theorem is provided. Another result of ERT is a basis (known as the MakeEthical function) for systematically identifying improvements that are necessary for a given system to be made ethically adequate. The IsEthical and MakeEthical functions can be used to construct an ethical design process that can be retrofitted to enhance any existing formal design process, such as VSM.

Since ERT did not seem to fit into the existing cybernetics framework, a new framework was developed out of necessity. It uses the IsEthical function to distinguish between two types of superintelligent machines: those that are ethically adequate and those that are ethically inadequate. Together, the intelligence and ethics dimensions are used to identify four well-defined classes of systems. These four distinct classes can be appended to the existing two levels of first-order and second-order cybernetic systems to create a six-level framework for classifying cybernetic and superintelligent systems. An unexpected consequence of trying to categorize ERT was the realization that third-order cybernetics should be defined as “the cybernetics of ethical systems”.

Since the ethical regulator theorem can be applied to any regulated system in any domain, and offers a new and systematic approach to making systems more ethical, the implications for making the world a better place are significant.

One result of the exploration of the proposed six-level framework is the identification of a race condition that results in either a cyberanthropic utopia or a cybermisanthropic dystopia. This dystopic threat is well known; however, by identifying the exact nature of the race condition, it becomes clear what strategy must be employed to try to avoid the possibility that superintelligent machines could lead humanity into a dystopic disaster.

Since it is imperative for humanity to avoid this existential threat, concrete actions are proposed, including a grand challenge to apply ERT to new and existing systems in all areas of society in what is characterized as a systemic ethical revolution. And because an important component of that revolution is psychological, 82 ethically inspiring quotes by twelve famous empaths from five continents are presented that demonstrate that ethics transcends science, politics, genders, nations, and religions, and is probably the only force that can unify humanity to work together for our greater good.

2. The Ethical Regulator Theorem

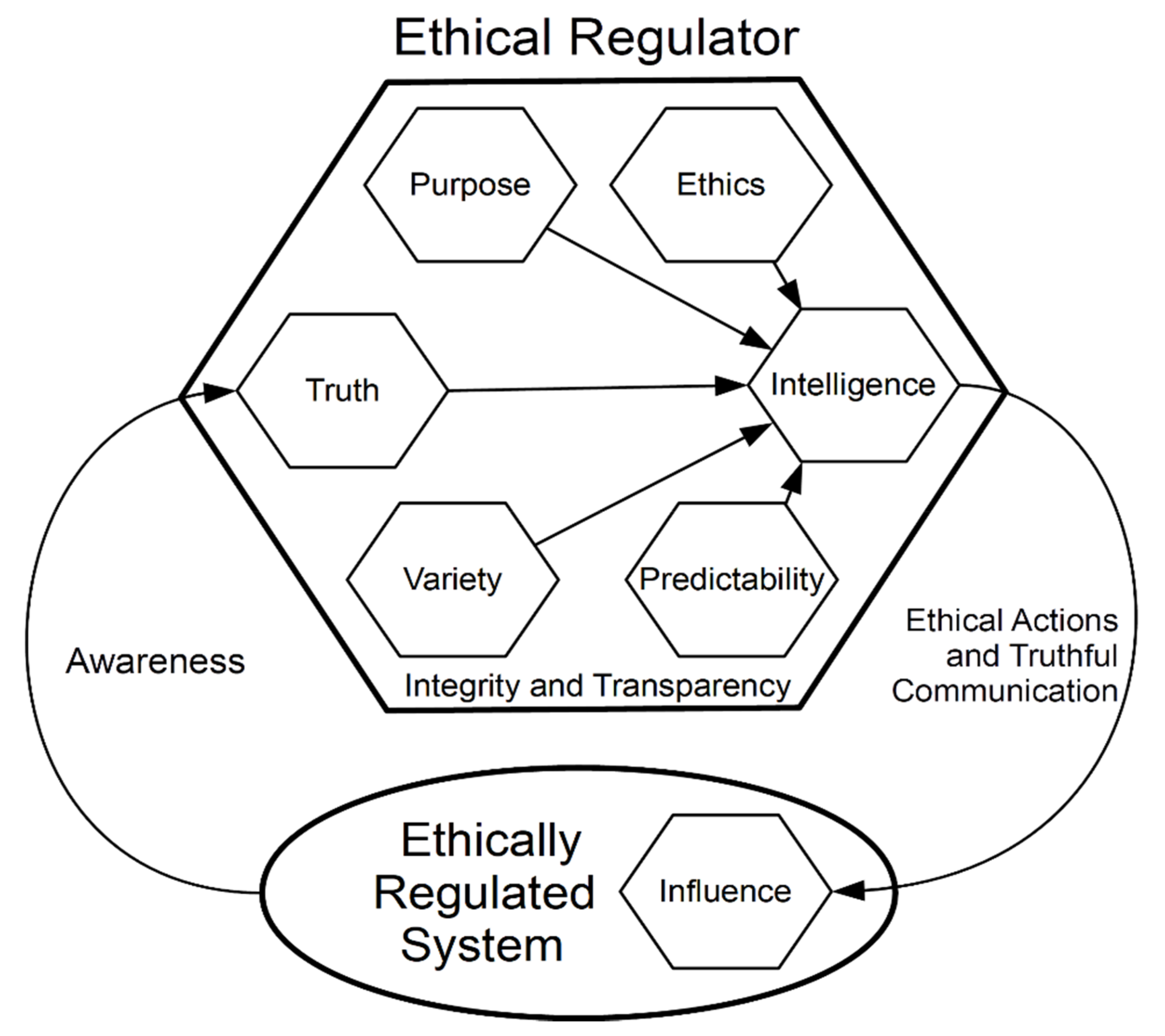

The ethical regulator theorem (ERT) claims that the following nine requisites are necessary and sufficient for a cybernetic regulator to be effective and ethical:

- (1) Purpose expressed as unambiguously prioritized goals.
- (2) Truth about the past and present.
- (3) Variety of possible actions.
- (4) Predictability of the future effects of actions.
- (5) Intelligence to choose the best actions.
- (6) Influence on the system being regulated.
- (7) Ethics expressed as unambiguously prioritized rules.
- (8) Integrity of all subsystems.
- (9) Transparency of ethical behaviour.

Of these nine requisites, only the first six are necessary for a regulator to be effective. If a system does not need to be ethical, the three requisites ethics, integrity, and transparency are optional.

Figure 1 and the following sections explain the requisites in more detail.

2.1. Requisite Purpose

Because complex systems are required to satisfy multiple goals, purpose must be expressed as unambiguously prioritized goals. Without well-defined goals, the system cannot be effective and might randomly adopt or default to a goal that is regarded as unethical by the society that it exists in.

2.2. Requisite Truth

Truth is not just about information that the regulator treats as facts or receives as inputs, but also the reliability of any interpretations of such information. This is the regulator’s awareness of the current situation, knowledge, and beliefs. If the regulator’s information sources or interpretations are unreliable and cannot be error-corrected, then the integrity of the system is in danger. In extremis, if the perceptions of the regulator can be manipulated, it can be tricked into making decisions that are ineffective or unethical.

An ethical regulator does not require perfectly accurate information, but it must be sufficiently truth-seeking to be able to cope with uncertainties and minimize the impact of unreliable information, misinterpretations, and deliberate misinformation as best as it can. This is much like the requirement that a good judge (one that is both effective and ethical) must be able to reach reliable verdicts “beyond reasonable doubt” from unreliable evidence.

2.3. Requisite Variety

Variety in the range of possible actions to choose from must be as rich as the range of potential disturbances or situations. This is nothing other than the law of requisite variety.

2.4. Requisite Predictability

Predictability requires sufficiently accurate models of the system that is being regulated and of the regulator itself, to be able to rank the actions and strategies that will give the best outcome. This is nothing other than the good regulator theorem.

2.5. Requisite Intelligence

Intelligence is applied to the previous requisite types of information to select the most effective/ethical strategies and actions from the set of possible actions. And because the output of one regulator is generally an input to other regulators (systems or people), if the selected action is an act of communication, it must be as truthful as possible. Here, the name intelligence is used only to mean the ability to make an informed selection, and it should not be equated with human intelligence, though it can include it.

2.6. Requisite Influence

Influence is the existence of pathways to transmit the effects of the selected actions to the regulated system. This is not a property of the regulator, but a function of the connectivity relationships that span from the regulator’s outputs to elements of the regulated system and its environment. If a regulator has no influence on the regulated system, it is not a true regulator but a simulation or passive observer, and there are no direct ethical consequences. This can be important when observing or simulating dangerous situations or testing a preproduction system.

Depending on the nature of the system that is being regulated, the speed and duration of the effects of actions can vary greatly. For example, a self-driving vehicle applying the brakes has an immediate effect on the vehicle’s velocity, which lasts until the next acceleration; a new ruling by the Supreme Court has a much slower effect on society but could last for decades or possibly centuries; and the cascade that can be caused by someone sending a message to the network of transmission repeaters known as Twitter followers is unpredictably chaotic in both speed and duration.

In some systems, influence is more of a determining factor than variety. Indeed, the power of the law of requisite variety has often been overstated, for example, claiming that the subsystem with the most variety will control a system. ERT proves why this is not always true.

Let us consider two systems, A and B, that are competing to win control of system C, for example, two politicians seeking election. Often the variety of statements, actions, and strategies of the candidates is less important than their ability to purchase advertising to influence the voters.

And if a robber uses a gun to increase his effectiveness, the use of a gun does not amplify his variety; it is just one existing element in his range of possible variety. Yet making that choice greatly increases his effectiveness at manipulating his victims. Such an increase in effectiveness, like buying advertising, is best explained in terms of an increase in influence.

In the light of the concept of influence, the belief that variety can be amplified appears to be as delusional as the idea that randomness can be amplified. Feeding variety or randomness into a genuinely noiseless amplifier cannot produce more variety or randomness than was fed into it. The variety of the robber or an advertising message is effectively constant.

The six requisites described so far are necessary and sufficient for a system to be effective but are not sufficient for it to be ethical.

2.7. Effectiveness Function

The ethical regulator theorem implies that we can define a function for the effectiveness that a regulator, R, has in controlling a system. It captures how the effectiveness of the regulator depends on the effectiveness of six requisites:

In this form, we would assign each requisite an effectiveness value between 0 and 1, where 1 means that it is perfect or optimal. And if the effectiveness of even just one of the requisites is close to zero, the effectiveness of the whole regulator is massively reduced. Applied to our two politicians: If EffectivenessA > EffectivenessB, then A is more likely than B to win control of system C.
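The behaviour described here, values between 0 and 1 with a single near-zero requisite collapsing the whole, is what a simple product of the six requisites would give. As a sketch under that assumption (the product form and the subscripted symbol names are inferred from the description, not confirmed by this text):

$$
\text{Effectiveness}_R = \text{Purpose}_R \times \text{Truth}_R \times \text{Variety}_R \times \text{Predictability}_R \times \text{Intelligence}_R \times \text{Influence}_R
$$

Under this reading, a regulator with five perfect requisites and one requisite valued at 0.1 would have an overall effectiveness of only 0.1.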

However, it is neither necessary nor possible to calculate meaningful numerical values to compare the effectiveness of different systems or configurations. The essential value of the function is to understand the relationships and dependencies that it captures.

It is sufficient if an understanding of the effectiveness function informs the system design strategy, recognizing that a maximally effective system requires that the effectiveness of each of these six requisite dimensions is maximized, and that a successful attack on the integrity or effectiveness of any of them spells disaster for the effectiveness of the whole system.

It is worth noting that in social systems, money can buy media influence; and if the media is broadcasting lies, propaganda, or advertising, it reduces the quality of TruthX that is received by every voter or consumer, X, which can manipulate them into making decisions that are not in their best interest.

2.8. Requisite Ethics

Ethics must be expressed as unambiguously prioritized laws, regulations, and rules that codify constraints and imperatives, for example, Isaac Asimov’s First Law of Robotics: “A robot may not injure a human being or, through inaction, allow a human being to come to harm” [11], but ideally, expressed unambiguously in a formal language such as XML, which can be understood by humans and computers.

Ethical rules define constraints on the variety of actions and have a higher priority than the goals for purpose. By always obeying the relevant highest priority rules, the regulator is guaranteed to act ethically within the scope of the ethical schema, which provides a model of acceptable (ethical) behaviour. The ethical rules have the power of veto over possible actions and strategies, which makes it safe for AI to generate candidate strategies and actions algorithmically, without having to worry whether it might generate unethical possibilities.
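The veto described above can be illustrated with a minimal Python sketch. The names `Rule` and `select_action`, the example actions, and the scores are all hypothetical, and rule prioritization is omitted for brevity: every rule simply has the power to forbid a candidate action before the goals are consulted.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Rule:
    """Hypothetical ethical rule: a name plus a predicate over actions."""
    name: str
    permits: Callable[[str], bool]  # True if the rule allows the action


def select_action(candidates: Iterable[str],
                  rules: Iterable[Rule],
                  goal_score: Callable[[str], float]) -> Optional[str]:
    """Apply the ethical veto first, then pick the best remaining action."""
    rules = list(rules)
    # Any single rule can veto a candidate, however well it scores on goals.
    permitted = [a for a in candidates if all(r.permits(a) for r in rules)]
    if not permitted:
        return None  # no ethical option; the caller must fall back to a fail-safe
    return max(permitted, key=goal_score)


# Illustrative data only: the goal ranking prefers the forbidden action.
scores = {"brake": 0.6, "swerve_onto_pavement": 0.9, "continue": 0.1}
rules = [Rule("do_not_endanger_pedestrians",
              lambda a: a != "swerve_onto_pavement")]

print(select_action(scores, rules, lambda a: scores[a]))  # brake
```

Because the filter runs before scoring, the candidate generator can propose anything, which is the property the paragraph above relies on.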

Since ethical schemas vary between different cultures, in machines, they must be handled like plug-in software modules. And because an ethical schema can encode any ethics, good or evil, each ethical schema must be anchored explicitly in the laws of a legislative jurisdiction. When a person or system crosses a state or national border it is necessary to activate a different set of ethical schemas, i.e., a different set of laws, regulations, and rules. And the ethics modules must be prioritized so that it is unambiguous which module has precedence in the event of a conflict, for example, between national and state laws. The highest-level laws could be encoded in hardware to be unhackable.

A taxonomy of ethics modules can provide rules for all conceivable situations. For example, gun-law, traffic-rules, child-care, tax-law, contract-law, maritime-law, drone-flying, police-regulations, and warfare-rules-of-engagement. And the ethics modules can be treated like device drivers, so that to be fully operational, a hypothetical gun-carrying robot that can drive on roads requires valid ethics modules for gun-law and traffic-rules. Without the necessary ethics modules for the appropriate legal jurisdiction, the robot’s gun or driving capabilities are automatically disabled.
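The device-driver analogy might look like the following Python sketch. The capability-to-module mapping, the `EthicsModule` fields, and the jurisdiction codes are hypothetical illustrations; the `valid` flag stands in for whatever authentication and expiry checks a real system would need.

```python
from dataclasses import dataclass

# Hypothetical mapping from a capability to the ethics module it requires.
REQUIRED_MODULE = {"gun": "gun-law", "driving": "traffic-rules"}


@dataclass(frozen=True)
class EthicsModule:
    topic: str          # e.g. "traffic-rules"
    jurisdiction: str   # e.g. "US-CA" (illustrative code)
    valid: bool = True  # placeholder for authentication and expiry checks


def enabled_capabilities(capabilities, modules, jurisdiction):
    """Return the capabilities whose required module is valid in this jurisdiction."""
    loaded = {m.topic for m in modules
              if m.valid and m.jurisdiction == jurisdiction}
    # A capability without its ethics module is disabled, like a device without a driver.
    return {c for c in capabilities if REQUIRED_MODULE[c] in loaded}


modules = [
    EthicsModule("gun-law", "US-CA"),
    EthicsModule("traffic-rules", "US-CA"),
    EthicsModule("traffic-rules", "DE"),
]
robot = {"gun", "driving"}
```

With these modules, calling `enabled_capabilities(robot, modules, "DE")` leaves only driving enabled, because no gun-law module is installed for that jurisdiction.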

By legislating that all autonomous artificial intelligence systems must obey appropriate ethics modules that are issued by an organization that is run by humans, we can establish a control mechanism that should ensure that intelligent machines are always subject to human ethics, without unduly restricting the freedom of AI researchers. In fact, it will free up AI researchers and knowledge engineers to focus on the more challenging requisites of truth, variety, predictability, and intelligence.

When we introduce ethics, the effectiveness function must be modified because the effect of behaving ethically is that it reduces the variety of options that are available, by removing all possibilities that are unethical. Thus, if A is an ethical politician and B is an unethical politician, we get something like the following:

which captures the reality that politicians and businessmen who lie and cheat have an advantage over ones that are ethical.
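One way to write this comparison, assuming effectiveness is a product of the six requisite values and treating ethics as a subtraction from variety (both are assumptions made for illustration):

$$
\begin{aligned}
\text{Effectiveness}_A &= \text{Purpose}_A \times \text{Truth}_A \times (\text{Variety}_A - \text{Ethics}_A) \times \text{Predictability}_A \times \text{Intelligence}_A \times \text{Influence}_A \\
\text{Effectiveness}_B &= \text{Purpose}_B \times \text{Truth}_B \times \text{Variety}_B \times \text{Predictability}_B \times \text{Intelligence}_B \times \text{Influence}_B
\end{aligned}
$$

Here $\text{Ethics}_A$ stands for the portion of A's variety that A's ethical rules forbid. With all other factors equal, the reduced variety term makes $\text{Effectiveness}_A < \text{Effectiveness}_B$.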

2.9. Requisite Integrity

Integrity of the regulator and its subsystems must be assured through features such as resistance to tampering, intrusion detection, cryptographically authenticated ethics modules, and compliance with all laws, regulations, and rules. Monitoring mechanisms must detect if an invalid ethics module is being used or if an ethical constraint is violated, and if necessary, activate a fail-safe mode, preserve evidence, and notify the manufacturer and/or the appropriate authorities.

The regulator’s first-order integrity mechanisms cannot protect the pathways on which the regulator depends to influence the system. This poses a potential vulnerability that can only be mitigated by using the awareness feedback to check for evidence of the effect of each action.

2.10. Requisite Transparency

Demanding to be trusted is unethical because it enables betrayal. Trustworthiness must always be provable through Transparency. So the law of ethical transparency is introduced, stating:

For a system to be truly ethical, it must always be possible to prove retrospectively that it acted ethically with respect to the appropriate ethical schema.

Whereas it does not really matter whether the programmers of a chess playing robot can find out why a particular piece was sacrificed during a game, the logic of ethical decisions must never be hidden in the depths of opaque processes, neural networks, or lost to the passage of time. Generally, this requisite can be satisfied by having multiple independent witnesses or keeping an audit trail that is adequate and secure.
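The audit-trail half of this requisite could be sketched as an append-only log in which each entry commits to its predecessor by hash. Hash chaining is a technique chosen here for illustration and is not named in the text; the function and field names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append(trail: list, record: dict) -> None:
    """Add a record that is cryptographically linked to the entry before it."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    trail.append({"record": record, "prev": prev_hash,
                  "hash": _digest(prev_hash, record)})


def verify(trail: list) -> bool:
    """Recompute every link; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in trail:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering if the most recent hash is also held somewhere the system itself cannot rewrite, for example by independent witnesses, which is where the integrity requisite comes in.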

When an ethically adequate system violates an ethical constraint, as it sometimes will, analysis of the audit trail will identify the reason, for example, that a faulty neural network wrongly identified a boy leading a cow as a calf leading a man, or it will prove who in the chain of command knew what about illegal corporate activities.

Integrity and transparency are codependent security requisites: We require both integrity of transparency and transparency of integrity.

2.11. Evaluating Ethical Adequacy

Like a public health inspection of a restaurant, an evaluated system is judged on the adequacy of each requisite dimension. If and only if a system and all its significant subsystems satisfy all nine ERT requisites is it said to be “ethically adequate”. Otherwise, it is classified as “ethically inadequate” and its weaknesses are listed with recommendations for improving them.
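The "if and only if" rule, including its reach into subsystems, can be sketched in Python as follows. The `System` class and the per-requisite boolean judgements are hypothetical; in practice each judgement would be the outcome of a human evaluation, not a stored flag.

```python
from dataclasses import dataclass, field

REQUISITES = ("purpose", "truth", "variety", "predictability", "intelligence",
              "influence", "ethics", "integrity", "transparency")


@dataclass
class System:
    name: str
    adequate: dict                 # requisite name -> evaluator's verdict (bool)
    subsystems: list = field(default_factory=list)


def weaknesses(system: System) -> list:
    """List (system name, requisite) pairs that fail, including subsystems."""
    found = [(system.name, r) for r in REQUISITES
             if not system.adequate.get(r, False)]  # a missing verdict counts as a failure
    for sub in system.subsystems:
        found.extend(weaknesses(sub))
    return found


def is_ethically_adequate(system: System) -> bool:
    """Adequate only if no requisite fails anywhere in the system tree."""
    return not weaknesses(system)
```

Returning the list of weaknesses alongside the verdict mirrors the inspection report: an inadequate system comes with the specific dimensions that need improvement.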

And because a truly ethical system must be maximally tamper-resistant and unhackable, the evaluation of ethical adequacy also has similarities to penetration testing and red teaming techniques, where the evaluation team tries to identify weaknesses and theoretical possibilities to subvert the integrity of the system and all its subsystems via all possible attack vectors and surfaces.

For each of the nine dimensions, Di, the evaluators must consider the following three questions:

- (a) How can the system fail or be subverted in the Di dimension?
- (b) How can the system be improved in the Di dimension?
- (c) Is the system adequate in the Di dimension?

This requires that the system is considered in 27 different ways, which delivers a systematic evaluation of the system’s strengths and weaknesses. This process is a significant improvement on ad hoc approaches that can give very different results, depending on who performs them.
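The 27 considerations are simply the nine dimensions crossed with the three questions. A short Python sketch (the lowercase dimension labels and the `checklist` name are illustrative choices) makes the enumeration explicit:

```python
from itertools import product

REQUISITES = ("purpose", "truth", "variety", "predictability", "intelligence",
              "influence", "ethics", "integrity", "transparency")

QUESTIONS = (
    "How can the system fail or be subverted in the {d} dimension?",
    "How can the system be improved in the {d} dimension?",
    "Is the system adequate in the {d} dimension?",
)


def checklist() -> list:
    """Return every question for every dimension, grouped by dimension."""
    return [q.format(d=d) for d, q in product(REQUISITES, QUESTIONS)]


for line in checklist()[:3]:
    print(line)
```

Generating the checklist mechanically is what makes the evaluation repeatable: two evaluation teams start from the same 27 prompts even if their answers differ.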

The theorem cannot be used to certify that an ethical schema is ethical because schemas (laws, regulations, and rules) can vary arbitrarily between cultures. However, it can be used to help identify the root causes of crises and to evaluate the ethical adequacy of any proposed interventions [12]. In the near future, certified ethical consultants may specialize in auditing and certifying the ethical adequacy of existing and proposed products, processes, laws, organizations, and systems.

3. Ethical Regulator Theorem: Proof and Consequences

Now that we understand the nine requisites better, is it possible to prove that they are indeed necessary and sufficient for a cybernetic regulator to be effective and ethical?

3.1. Proof of Necessity

Proving necessity is simple: One-by-one, for each of the nine requisite dimensions, Di, ask yourself the question “Can a regulator be effective or ethical without Di?” If it cannot, then Di is necessary. For example, “Can a regulator be effective or ethical without Truth?”.

The answer in each case is rather obvious, especially if you refer to Figure 1 and, one-by-one, cover each requisite using your thumb, and then consider whether the resulting system can be effective or ethical without the obscured requisite.

Table 1 summarizes the results, which confirm the necessity claims, including the claim that ethics, integrity, and transparency are optional for systems that only need to be effective.

3.2. Proof of Sufficiency

Proving that the nine requisites are sufficient is not so simple. First, let us assert that in the real world, effective systems and ethical systems exist. Now, do any such systems rely on any information, ability, or other factors to achieve effectiveness or ethicalness that are not covered by the nine requisites?

It is claimed that for all systems that have been considered by the author, the answer is no. However, this claim is easily refutable because it will only take one person to find one example of a necessary factor that is not covered by the nine requisites to demolish the current claim of sufficiency. In the event of that happening, we would adapt the theorem, if necessary, adding another requisite, reassert the sufficiency claim, thank whoever found the missing requisite, and issue the challenge: “Okay, now find one!”.

So, although it is impossible to prove that such an exception does not exist, we can assert that it will always be possible to extend the theorem to include any missing requisites that might be identified in the future, thus restoring the validity of the claim of sufficiency for all known systems that have been considered.

3.3. ERT Universality

Anyone who has the impression that ERT primarily applies to artificial intelligence, robots, self-driving vehicles, and autonomous weapons systems is urged to consider how the theorem can be applied to human systems that make decisions that affect people or the environment, such as organizations, corporations, education systems, government institutions, CEOs, or yourself.

Justice Stevens [13] provided an excellent example of identifying the ethical inadequacy of the “Citizens United” ruling: “The Court’s ruling threatens to undermine the integrity of elected institutions across the Nation. The path it has taken to reach its outcome will, I fear, do damage to this institution.”, which implies that there is a pressing need to evaluate the ethical adequacy of the entire U.S. Supreme Court system.

Since the ethical regulator theorem can be applied to any system that is required to make ethical decisions, the nine ERT dimensions define a domain-independent abstraction layer that can be used to map between any regulated systems. This creates a vocabulary, or isomorphism, that allows practitioners in one domain to communicate meaningfully with practitioners in seemingly unrelated domains, and share insights and solutions, for example, across artificial intelligence, corporate governance, education systems, and designing consumer products. Specialists in each domain can share their challenges and solutions to improving purpose, truth, variety, predictability, intelligence/strategy, influence, ethics, integrity, and transparency. For example, perhaps a cloud-based secure audit trail service that was developed for one specific domain can be used to help solve transparency and integrity in completely unrelated domains.

3.4. ERT Reflexivity and Algebra

If the ethical regulator theorem is genuinely universal, it must produce meaningful results for the following two special cases:

First, let us define a convenient algebra that allows us to express important assertions in this domain. We need to distinguish between: (I) the act of evaluating the ethical adequacy of a system and (II) the act of determining the set of transformations or interventions that are necessary to make a system ethically adequate:

- (I) A decision function, IsEthical (S), returns the value True if system S is ethically adequate, the value False if S is ethically inadequate, or the value Undecidable if S is significantly inconsistent, contradictory, or opaque. The value Undecidable should be regarded as an error message rather than a type of system; however, it is prudent to treat such systems as ethically inadequate until proven otherwise.

- (II) A function, MakeEthical (S), returns a set of transformations or interventions to make system S ethically adequate. If S is already ethically adequate, the function returns an empty set, {}.
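The two functions can be sketched in code to make their return types concrete. This is only an illustrative sketch: the dictionary representation of a system, the `opaque` flag, the `inadequacies` list, the helper `treat_as_adequate`, and the intervention returned for an Undecidable system are all assumptions made for the example, not part of the theorem.

```python
from enum import Enum


class Verdict(Enum):
    """The three possible results of IsEthical (S)."""
    TRUE = "True"
    FALSE = "False"
    UNDECIDABLE = "Undecidable"


def is_ethical(system: dict) -> Verdict:
    """Hypothetical stand-in for IsEthical (S).

    Assumes a system is summarized as a dict with an 'opaque' flag and a
    list of outstanding 'inadequacies'. A real evaluation is a structured
    review, not a dictionary lookup.
    """
    if system.get("opaque", False):
        return Verdict.UNDECIDABLE
    return Verdict.FALSE if system.get("inadequacies") else Verdict.TRUE


def make_ethical(system: dict) -> set:
    """Hypothetical stand-in for MakeEthical (S)."""
    verdict = is_ethical(system)
    if verdict is Verdict.TRUE:
        return set()  # already ethically adequate: {}
    if verdict is Verdict.UNDECIDABLE:
        # Assumption: the system must first be made evaluable.
        return {"resolve inconsistency or opacity"}
    return {f"fix: {item}" for item in system["inadequacies"]}


def treat_as_adequate(system: dict) -> bool:
    """Prudent default: Undecidable counts as inadequate until proven otherwise."""
    return is_ethical(system) is Verdict.TRUE
```

Note that `make_ethical` returns an empty set exactly when `is_ethical` returns `Verdict.TRUE`, which mirrors the relationship between definitions (I) and (II).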

Now we can use this ERT algebra to make some interesting and controversial claims in Table 2.

3.5. The Law of Inevitable Ethical Inadequacy

We can build on the proof of necessity to derive this new law:

If you don’t specify that you require a secure ethical system, what you get is an insecure unethical system.

Most people have an intuitive understanding that this law is true, but ERT proves that when ethical adequacy is not specified as a requirement for a system design, the resulting design phase will, quite rightly, tend to optimize for effectiveness, and maximally avoid the extra costs that would be incurred by implementing the ethics, integrity, and transparency dimensions, which are optional for a system that only needs to be effective, thus guaranteeing that the resulting system is ethically inadequate and vulnerable to manipulation; by design.
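The reasoning behind the law can be illustrated with a toy model. The sketch below assumes that a design phase implements only the dimensions its requirements demand. The function names are hypothetical, and the fifth dimension is abbreviated to "intelligence".

```python
# Six dimensions needed for effectiveness, plus the three that are optional
# for a system that only needs to be effective.
EFFECTIVENESS_REQUISITES = [
    "purpose", "truth", "variety", "predictability", "intelligence", "influence",
]
ETHICAL_EXTRAS = ["ethics", "integrity", "transparency"]


def cheapest_design(require_ethical: bool) -> list:
    """Toy cost-minimizing design phase: implement only what is required."""
    implemented = list(EFFECTIVENESS_REQUISITES)
    if require_ethical:
        implemented += ETHICAL_EXTRAS
    return implemented


def is_ethically_adequate(implemented: list) -> bool:
    """All nine dimensions must be present for ethical adequacy."""
    required = EFFECTIVENESS_REQUISITES + ETHICAL_EXTRAS
    return all(dimension in implemented for dimension in required)
```

In this model, `cheapest_design(require_ethical=False)` omits ethics, integrity, and transparency, so the result can never pass `is_ethically_adequate`; the outcome is fixed by the requirements before any design work starts.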

4. Ethical Design Process

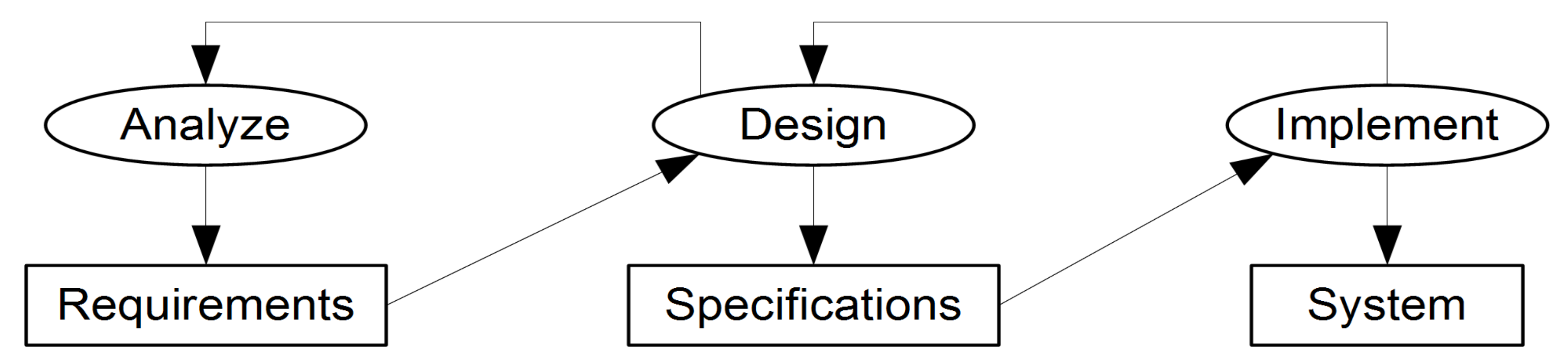

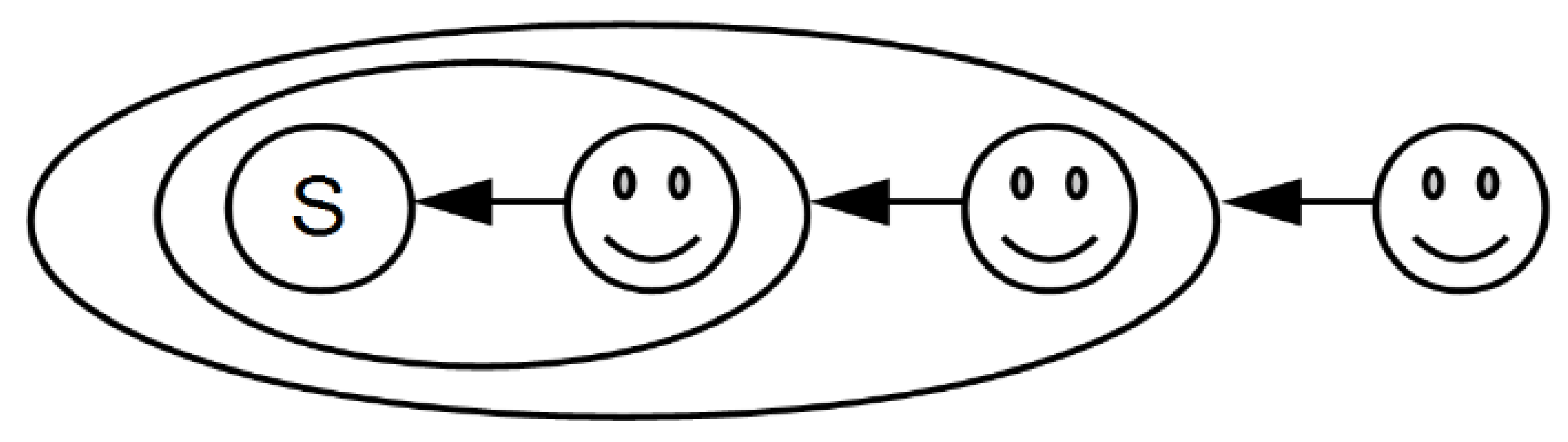

Figure 2 illustrates the elements of a design process, in which an analysis phase produces a requirements artefact, which is the input to the design phase that produces a specification artefact, which is used as the input to the implementation phase, which realizes the system.

If a problem is found in the requirements during the design phase, feedback can trigger another iteration of the analysis phase. And if a problem in the specifications is found during implementation, feedback can trigger the design team to update the specifications or pass feedback to the analysis team to update the requirements.

Such a design process can be effective at producing systems that are effective. However, because the design process is ethically inadequate, it is inevitably only capable of reliably producing systems that are also ethically inadequate, and we cannot be sure that the resulting systems are not actually ethically evil, whether by accident or intentionally.

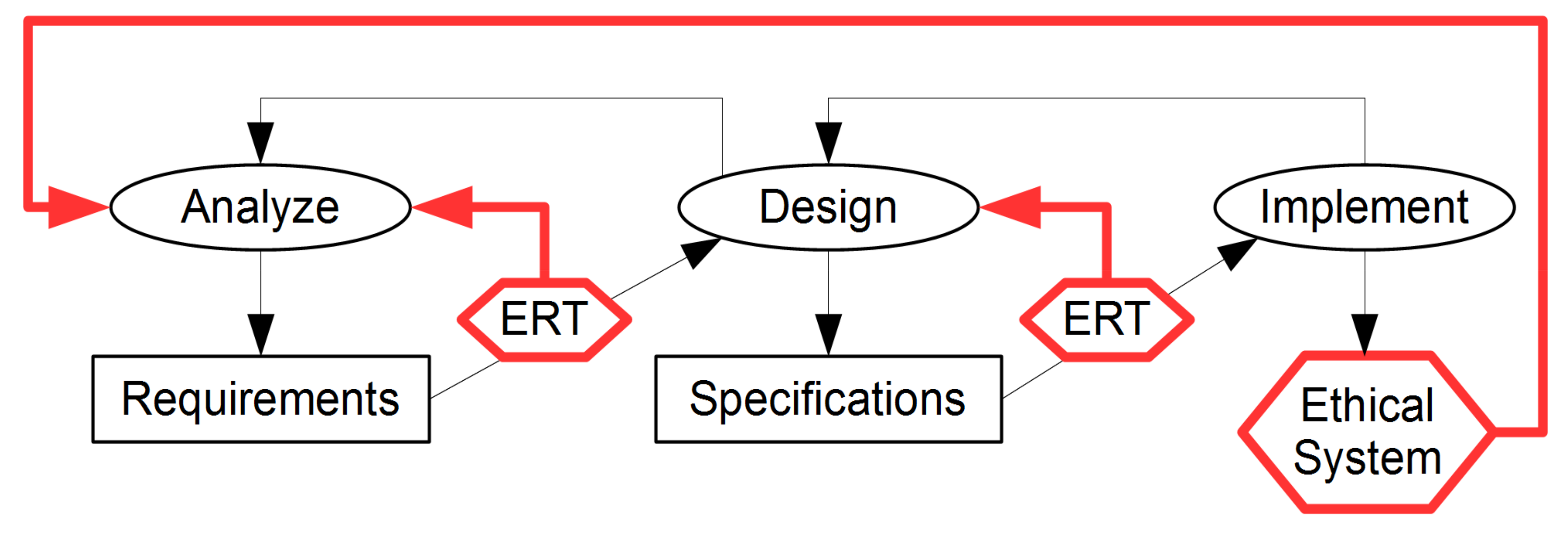

Fortunately, we can transform any effective but ethically inadequate design process, such as VSM, to make it ethically adequate by simply adding ethical adequacy acceptance testing of the requirements and specifications. Figure 3 shows how we can retrofit the ethical regulator theorem (ERT) to any effective design process that produces requirements and specifications before implementation starts.

To avoid inevitable ethical inadequacy, simply including ethical adequacy as a system requirement will ensure that any effective but ethically inadequate design process that is employed must self-modify to include the ERT testing steps shown in Figure 3; otherwise the requirement cannot be fulfilled, and even basic acceptance testing will recognize the failure and reject the results.

Ideally, the ERT ethical adequacy evaluations should be performed by a team that includes all people who were involved in the production of the artefact that is being tested, who have deep internal knowledge of it, and certified ERT consultant-facilitator participants who are more objective, experienced, and have fewer blind spots. If an artefact is found to be ethically inadequate, it is rejected and recommendations for fixing the problems are provided as feedback to trigger another iteration of that phase. If an artefact is found to be ethically adequate, the artefact is accepted and passed onto the next phase. Since the two ERT testing steps ensure that the requirements and specifications are ethically adequate, if the implementation process performs an effective and lossless implementation of the specifications, the resulting system will also be ethically adequate.
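The accept-or-reject loop described above can be sketched as follows. This is a minimal illustration under stated assumptions: artefacts are represented as plain strings, the names `run_phase` and `design_process` are hypothetical, and the `max_iterations` limit is an addition for the sketch that does not appear in the process itself.

```python
from typing import Callable, List, Tuple

# An ERT acceptance test returns (accepted, recommendations for fixing problems).
ErtTest = Callable[[str], Tuple[bool, List[str]]]


def run_phase(produce: Callable[[List[str]], str], ert_test: ErtTest,
              max_iterations: int = 10) -> str:
    """Repeat one phase until its artefact passes the ethical adequacy test."""
    feedback: List[str] = []
    for _ in range(max_iterations):
        artefact = produce(feedback)
        accepted, feedback = ert_test(artefact)
        if accepted:
            return artefact  # accepted and passed on to the next phase
        # rejected: the recommendations trigger another iteration of this phase
    raise RuntimeError("artefact is still ethically inadequate; escalate")


def design_process(analyse, design, implement, ert_test: ErtTest) -> str:
    """Analysis and design are each gated by an ERT test before implementation."""
    requirements = run_phase(analyse, ert_test)
    specification = run_phase(lambda fb: design(requirements, fb), ert_test)
    return implement(specification)
```

Only the requirements and specifications are gated here; as in the text, the adequacy of the final system then depends on the implementation being an effective and lossless realization of the accepted specification.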

This means that instances of the resulting system that are deployed in the real world will include a real-time integrity monitoring mechanism that detects and reports any significant problems as feedback to the analysis team, which must decide whether the issue necessitates updating the system requirements, redesigning the specifications, a reimplementation, a remote configuration update, and/or activating an ethical fail-safe mode. Only if the system fails to enter its fail-safe mode might it be necessary for it to be deactivated using a kill switch or for it to be retired by a blade runner.

This concludes the description of the theorem and how to use it.

5. Discussion

The ethical regulator theorem has many far-reaching implications.

5.1. Legislative Implications

By creating a well-defined interface for coding ethics, it becomes easier to apportion liability for failures. For example, if a self-driving car crosses the border into India, fails to switch to the Indian government certified ethics module for traffic-rules, and in an emergency, decides to hit a cow to avoid hitting a dog, then the car manufacturer might be held liable for killing a sacred animal. But if the audit trail proves that the correct ethics module was activated, but the “don’t hit cows” rule had an incorrectly low priority in the ethics schema, then the car manufacturer would not be liable.

It is foreseeable that one day the laws and regulations of most countries will be published in a standardized computer-readable XML format, such as LKIF (legal knowledge interchange format), and cryptographically signed by an official issuing authority. However, the existing governmental and regulatory organizations are inadequate to complete such a task in the necessary time frame. Perhaps a non-profit organization without any conflicts of interest could define appropriate standards and start an open source ethics coding project for the laws, regulations, and rules that are most urgently required by the ethically adequate systems that we try to construct.

By standardizing ethics modules, systems from different manufacturers will all use identical ethics modules that are issued by national or international ethics authorities. The idea of having centralized ethics authorities might sound like part of a dystopic dictatorship but acting ethically is mostly just a matter of obeying laws, regulations, and rules, which are a normal and necessary part of every stable society. These ethics authorities could be independent of the legislative branch of government if the government lacks the necessary resources or commitment to unambiguous digital law-making.

Like Microsoft Windows operating system updates, when new laws, regulations, rules, or bug fixes to a previous ethics module are released, the new ethics module can be made available securely to all affected autonomous systems; crucially, including systems whose manufacturer has gone out of business or does not care about fixing end-user safety issues.
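How an autonomous system might decide whether to accept a newly released ethics module can be sketched as follows. The sketch makes several assumptions that should be read as placeholders: the module is a small dictionary with hypothetical `jurisdiction`, `version`, and `rules` fields, and a shared-key HMAC from the Python standard library stands in for the public-key signature that an official issuing authority would presumably use.

```python
import hashlib
import hmac
import json


def sign_module(module: dict, key: bytes) -> bytes:
    """Stand-in for the issuing authority's signature (HMAC-SHA256 here)."""
    payload = json.dumps(module, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).digest()


def accept_update(current: dict, candidate: dict, signature: bytes,
                  key: bytes) -> dict:
    """Return the ethics module that should be active after an update attempt."""
    expected = sign_module(candidate, key)
    if not hmac.compare_digest(expected, signature):
        return current  # reject modules that were not issued by the authority
    if candidate["version"] <= current["version"]:
        return current  # ignore stale or replayed modules
    return candidate
```

Because verification depends only on the issuing authority's key, the check keeps working for systems whose manufacturer no longer exists, which is the property emphasized above.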

By comparison, Google’s Android operating system provides a classic example of the law of inevitable ethical inadequacy. Google delegated the responsibility for issuing Android updates to the device manufacturers. And the inevitable and predictable consequence of that design decision is that most Android devices (87% in 2015) are insecure [14]. This exposes over one billion Android users to being hacked and their identity or financial details being stolen by criminals. The resulting chaos and the expensive suffering of the victims is not an innocent mistake; it is a typical effect of deliberately externalizing costs onto others and prioritizing a corporation’s profits and competitive advantages over ethical consumer safety. Google could have designed it to ensure that Android security fixes are issued centrally, and are made available to all affected devices, even if the manufacturer has gone out of business. But if we cannot trust Google, who can we trust?

We certainly do not want robots, self-driving vehicles, and autonomous weapons systems relying on an update mechanism that stops working when the manufacturer goes out of business or decides to optimize its profits at the expense of security and safety updates.

Such unethical corporate behaviour must be legislated out of existence, otherwise it will keep recurring in different and damaging ways; causing unnecessary externalized costs, social chaos, and avoidable deaths. For example, ethically inadequate Internet-of-Things devices that send unencrypted data over the internet are vulnerable to being hacked, and will never receive security patches. Importing or selling such unethical devices that threaten our privacy and the security of our digital infrastructure must be made as illegal as it is to sell exploding cars, pharmaceuticals that contain lethal impurities, or passenger aircraft that automatically crash themselves.

5.2. Case Study: Boeing 737 MAX

On 29 October 2018, 13 minutes after takeoff, Lion Air Flight 610, a Boeing 737 MAX 8 aircraft, crashed [15]. It turned out that on the basic 737 MAX 8 model, the new manoeuvring characteristics augmentation system (MCAS) relied on a single angle of attack (AoA) sensor, and when the sensor failed, MCAS wrongly concluded that the plane was climbing too steeply, engaged improperly, and forced the aircraft’s nose down, which caused it to crash into the sea at high speed, killing all 189 people on board. A week later, on 7 November 2018, the Federal Aviation Administration (FAA) issued an emergency airworthiness directive to make all airlines operating Boeing 737 MAX aircraft aware of new operating procedures relating to faulty AoA sensors.

Five months later, on 10 March 2019, six minutes after takeoff, Ethiopian Airlines Flight 302, a Boeing 737 MAX 8, crashed [16]. Again, the MCAS system had erroneously activated, and despite the pilot’s efforts to prevent the resulting nose dive, the plane hit the ground at 1100 km/h, killing all 157 people on board. The next day, without any chance to investigate the second crash, the FAA asserted that the Boeing 737 MAX 8 model was airworthy. The day after that, Boeing CEO Dennis Muilenburg telephoned President Trump, and assured him that the model was safe. The day after that (13 March 2019) the FAA publicly admitted that there was a similarity between the two crashes, and grounded all Boeing 737 MAX aircraft. By 18 March, 387 aircraft operated by 59 airlines were grounded [17].

In such disasters, the regulatory body is often complicit due to either undue influence from the industry that they are supposed to be regulating (aka regulatory capture), or because of political pressure to avoid economic impacts. The FAA was certainly aware of the MCAS system’s dependency on a single AoA sensor in November 2018, and if only it had taken that single point of failure seriously then, and grounded all 737 MAX aircraft in 2018, it would have prevented the 2019 Ethiopian Airlines Flight 302 crash, and saved 157 lives. It should not require a second crash for a known danger to be taken seriously.

Without doubt, IsEthical (Boeing) = False, but can we be confident that IsEthical (FAA) = True? Apparently, the FAA had a “longstanding delegation of regulatory authority to Boeing employees” [18], which creates obvious conflicts of interest, such as the Boeing employees feeling pressure not to cause production delays, increased costs, or reduced profitability. Perhaps industry regulators should be required to prove their honesty by being certified as ethically adequate. But who really regulates the regulators?

In July 2019, Boeing announced a $50 million fund to compensate the families of the 346 victims, which is equivalent to about $144,500 per victim [19]. Six months later, Muilenburg was fired as CEO of Boeing, and was entitled to receive $62.2 million in compensation [20].

The grounding of the 737 MAX fleet has caused Boeing over $18 billion in losses [21]. And now that their financial problems have been made worse by the COVID crisis, it seems inevitable that Boeing will receive a bailout of tens of billions of dollars from the U.S. government to rescue them. But the cost of Muilenburg’s avoidable 737 MAX disaster will not be paid for by Muilenburg, Boeing, or its shareholders; the politicians will externalize the bailout costs onto the U.S. tax payers.

We can identify the exact points in the ERT ethical adequacy evaluation process at which the 737 MAX design weaknesses could have been identified. For example, if the MCAS system had been subjected to ethical adequacy evaluation, question a(D2) asks “How can the MCAS system fail or be subverted in the truth dimension?”. Table 3 illustrates some of the problems that ERT could have identified long before the first crash and demonstrates the extensive coverage of the nine dimensions.
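To show how question a generalizes across the nine dimensions, the following sketch generates the corresponding question for each one. It assumes the dimension order given in Section 3.3 (which is consistent with D2 being the truth dimension) and abbreviates the fifth dimension to "intelligence". The function names are hypothetical.

```python
# The nine ERT dimensions, D1 to D9, in the order listed in Section 3.3.
DIMENSIONS = [
    "purpose", "truth", "variety", "predictability", "intelligence",
    "influence", "ethics", "integrity", "transparency",
]


def question_a(system_name: str, dimension_number: int) -> str:
    """Build evaluation question a(Dn) for the named system."""
    dimension = DIMENSIONS[dimension_number - 1]
    return (f"How can the {system_name} fail or be subverted "
            f"in the {dimension} dimension?")


def all_questions(system_name: str) -> dict:
    """Map labels 'a(D1)' to 'a(D9)' onto their questions."""
    return {f"a(D{n})": question_a(system_name, n)
            for n in range(1, len(DIMENSIONS) + 1)}
```

For example, `question_a("MCAS system", 2)` reproduces the question quoted above, and `all_questions("MCAS system")` yields one such prompt per dimension for the evaluation team to work through.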

Any ethically adequate process would require further diligent investigation into all identified problems, require them to be fixed, and any decisions not to fix them should be documented thoroughly in a corporate audit trail that can be used in court to establish liability for negligence by the entire chain-of-command. This would create a strong incentive for all people involved (all the way up to CEO Muilenburg) to do the right thing during the design of the system. And the extra costs for Boeing to make their culture, processes, and products ethically adequate would have been a very small price to pay compared to 346 lives and 18,000,000,000 tax payer dollars.

5.3. Making Corporations More Ethical

A review of unethical corporate failures, such as Boeing 737 MAX, vehicle exhaust emissions test cheating, and the constant stream of crimes by too-big-to-be-convicted banks, shows that CEOs are so obsessed with cutting costs, maximizing profits, boosting the share price, deliberately creating layers of plausible deniability, and stuffing money into their own pockets that they ignore the other responsibilities of an ethical CEO, such as taking personal responsibility to ensure strict compliance with the law, avoiding disasters, and improving long-term viability. But there is no adequate incentive for CEOs to care about these things. If they are already multimillionaires, even a total collapse of the company would have no material effect on them or their families. The only effective deterrent for such people is a realistic threat of having to go to prison for a long time.

The fines that financial regulators impose on banks that commit serious crimes are less than they make from such crimes, and the CEOs never go to prison, so the fines are just a cost of doing business [25] and are utterly inadequate deterrents. Viewed systemically, such fines actually encourage banks to operate as organized criminal enterprises that enjoy no liability for their crimes. So banks can commit crimes and threaten to collapse the economy until we “bail them out” again, i.e., give them even more money on top of what they stole. Imagine what would happen if the only punishment for stealing something from a shop was having to give it back. The mere existence of such ineffective perverse incentive “punishments” is suggestive that one way or another, the regulators and key politicians are all paid off; no regular citizens agree that such fines are adequate. And it has been like this for decades.

The banks, Wall Street, and lobbyists certainly reward politicians of all major political parties with very generous political donations and lobbying perks, often followed by suspiciously lucrative revolving door jobs, such as Tony Blair’s £2 million per year job as “part-time adviser” to JP Morgan [26] or the $153 million that Bill and Hillary Clinton got in “speaking fees” [27], much of it from groups that had lobbied the government [28], in what creates the unfortunate appearance of being conflicts of interests, bribes, protection money, commissions, and/or backpay for services rendered.

One might think that it is time to legislate that large corporations must buy insurance to cover the full costs of insolvency, bailouts, negligence, or illegal activities. Anything less than that creates a moral hazard that encourages risk taking, confident in the knowledge that the costs of catastrophic failure will be externalized onto the tax payers. The new (higher) insurance premiums would reflect the true costs of the risks that corporations currently externalize onto others. However, given our arguably broken, corrupt, and captured political systems, this idea is a good example of a naïve utopia that could work in theory, but cannot realistically be reached from where we are now because the majority of politicians do not dare to do anything that their donors, powerful lobbyists, or potential future “employers” do not want, allowing them to effectively veto absolutely anything.

An alternative hope lies with the tenacity of insurance companies to find ways to avoid paying out. Given that ERT ethical adequacy evaluations could prevent many corporate disasters, insurance companies might start asking claimants on existing corporate liability insurance policies “Can you prove that you performed ethically adequate diligence?” and refuse to pay for disasters that can now be regarded as systematically avoidable. In a Darwinian economy, grossly negligent corporations should be destroyed. But even without such extreme consequences, the greed of the insurance industry could create the necessary incentives for other greedy cost-cutting industries to improve their ethical adequacy, thus transducing the self-interest of insurance corporations into a force that improves the behaviour of other corporations and increases the greater good as a side-effect of greed. It is a sad indictment of our dysfunctional democracies that the reason that this possible solution has a better chance of success is because it does not rely on politicians, who are not the solution, but are an integral part of the systemic ethical problems. But if we cannot trust our politicians, Google, banks, car manufacturers, Boeing, or the FAA to do the right thing, who can we trust?

5.4. Classification Framework

Now let us consider where the ethical regulator theorem fits into the existing cybernetics framework. One might assume that the theorem belongs in second-order cybernetics; however, in a 1990 conference plenary presentation, Heinz von Foerster (who made the distinction between first- and second-order cybernetics in 1974) implied that combining ethics and second-order cybernetics is not something that he would have suggested:

“I am impressed by the ingenuity of the organizers who suggested to me the title of my presentation. They wanted me to address myself to ‘Ethics and Second-Order Cybernetics’. To be honest, I would have never dared to propose such an outrageous title, but I must say that I am delighted that this title was chosen for me.”

Table 4 lists some of the cybernetic community’s definitions of first- and second-order cybernetics, as summarized by Stuart Umpleby [29].

Although every one of these definitions captures an important distinction, when compared to how the qualifiers “first-order” and “second-order” are used by other scientific communities, the cybernetic community’s use of them appears to be rather subjective, lacks the consensus that is required by the scientific principle, and lacks the utility that is required by Kuhn [30].

This incoherence in defining cybernetics as first-order and second-order not only prevents it from being useful to classify different types of systems and dissipates intellectual energy, but it also prevents the classification from being extended to higher orders, which can be viewed as either a self-limiting dead-end, or paradigmal autoapoptosis (self-programmed death), which is not entirely unlike the tragic situation of 39 members of the Heaven’s Gate millennial death-cult, who believed that by committing suicide, they would be rescued by an alien spacecraft and “graduate to the Next Level” [31].

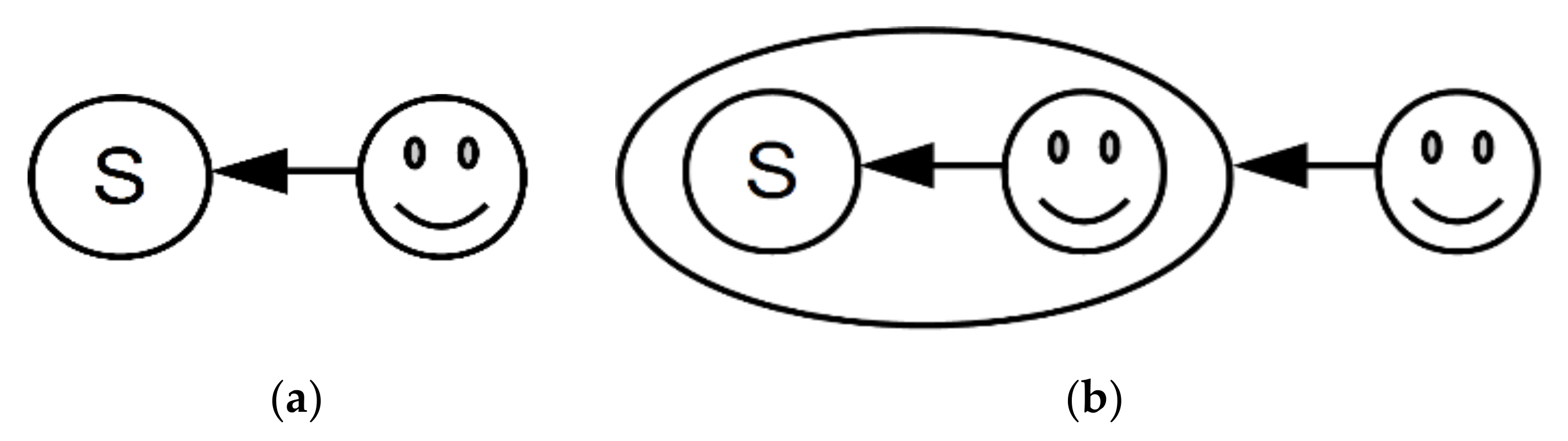

To illustrate the problem of classifying cybernetics into observer-centric “orders”, let us start by considering first- and second-order cybernetics, as defined by von Foerster. Figure 4 illustrates how the observers’ perspectives relate to a system, S.

How can we use this paradigm to predict the future of cybernetics? Logically, third-order cybernetics would add a third observer’s perspective, as shown in Figure 5.

However, from the perspective of the third observer, this looks more like psychology than cybernetics. In fact, this structure is isomorphic to a typical management team evaluation exercise, where the details of the task that is given to the team to work on are virtually irrelevant to the outermost observer. It can be any goal-oriented activity, such as building the highest stable tower possible from a limited set of Lego bricks, solving an impossible puzzle in a limited amount of time, or studying a first-order cybernetic system.

5.5. New Classification Framework

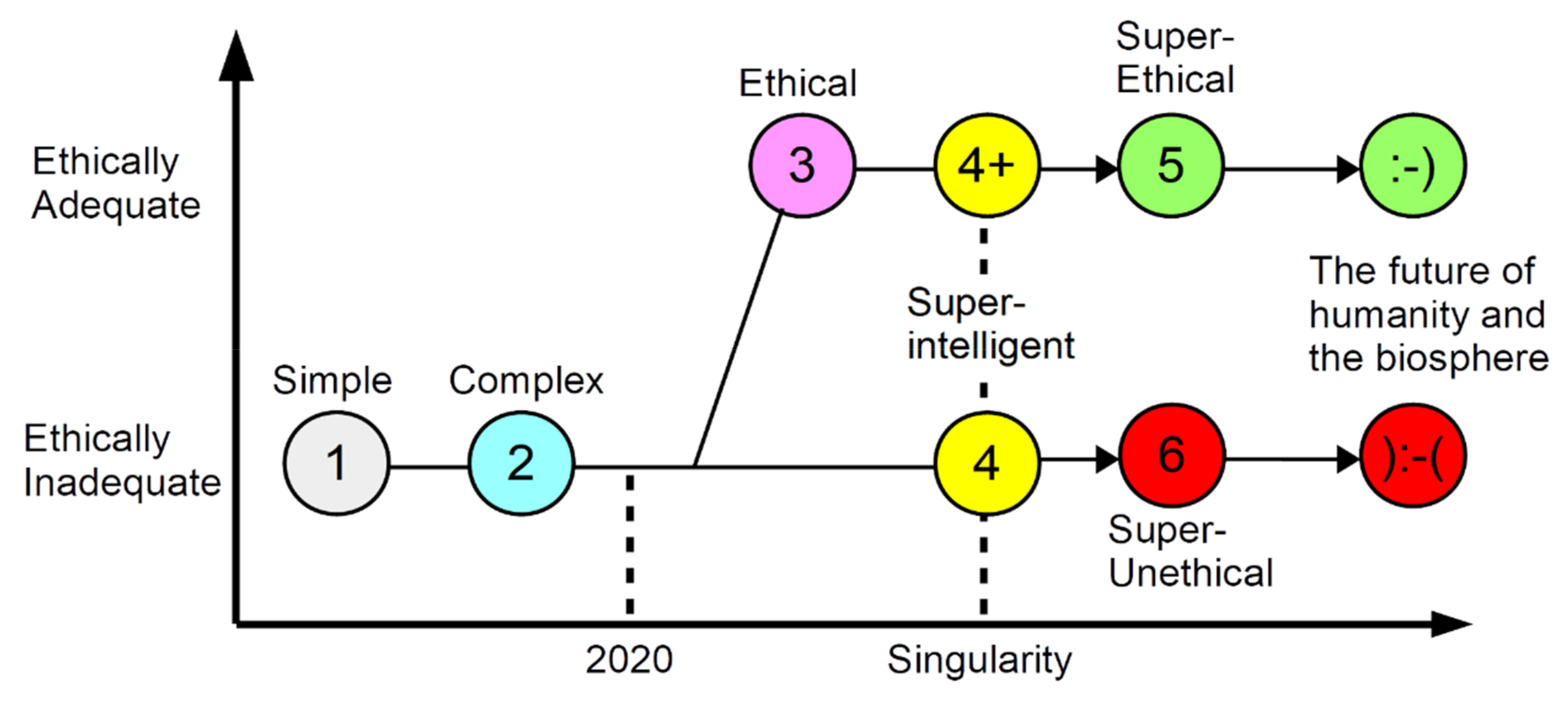

It could be of more utility to define “levels” of cybernetic systems that include categories of future systems that are already anticipated and associate each level with established concepts. To that end, Table 5 defines a six-level framework (6LF) for classifying cybernetic and superintelligent systems that makes use of the ERT IsEthical function to distinguish between two important subclasses of superintelligent systems.

Today, we are in the transition from building complex Cybernetic Level 2 systems to building ethical systems and superintelligent systems of Cybernetic Levels 3 and 4, and the future of our species and our fragile ecosystem is in our hands, but first, let us clarify each level and explore where this new framework leads us.

5.5.1. Cybernetic Level 1: Simple Systems

This level is about studying and designing simple systems that are effective. It is approximately equivalent to von Foerster’s definition of first-order cybernetic systems.

5.5.2. Cybernetic Level 2: Complex Systems

This level is about studying and designing complex systems that are effective. It is approximately equivalent to von Foerster’s definition of second-order cybernetic systems. There is still much important work to be done at this level.

It is important to emphasize that these definitions are different from von Foerster’s definitions. According to his definition, a second-order cybernetic system always includes an observer. But complex systems may or may not include a human participant.

And although these two different definitions for Level 2 would often be in agreement about the classification level of any given system, there are some absurd edge cases that the new definition eliminates. For example, according to von Foerster’s definition, the study of weather systems on Jupiter is first-order, but the study of Earth weather systems is second-order, because Earth-based meteorologists affect the Earth’s weather system no less than the flapping wings of a butterfly. But under our definition of Level 2 systems as complex systems, studying weather systems falls into Level 2, regardless of the planet on which they occur.

Another important difference is that according to von Foerster himself, with his observer-centric definition, there is no possibility of there being a meaningful third-order cybernetics (see Section 5.9 Third-Order Cybernetics). By contrast, defining Level 2 systems as complex systems does not rule out the possibility of the existence of a Level 3, so in this respect, the new definition is not self-limiting.

It is acknowledged that this retuning of definitions might appear to make nonsense of the strict use of the terms first-order and second-order. So the use of these terms in the “Also known as” column in Table 5 for Levels 1 and 2 might now best be interpreted simply as an approximate mapping onto historical category names and not as scientific definitions. However, if we were to decide that the “orders” are now not levels of nested observers, but are orders of complexity or evolution, then the strict interpretation of applying the terms first-order, second-order, and third-order to categorize levels of systems is rigorously valid.

5.5.3. Cybernetic Level 3: Ethical Systems

In 1986, decades ahead of his time, it was the wonderful and inspiring Ranulph Glanville who defined “the cybernetics of ethics and the ethics of cybernetics” as “cybernethics” [33].

The ethical regulator theorem belongs at this level, which is concerned with designing human-made systems that are ethically adequate. These systems are new, and do not occur in nature. They must be designed to satisfy all nine requisites of the ethical regulator theorem. The regulating agents can be humans, machines, cyberanthropic hybrids, organizations, corporations, or government institutions. Ethically adequate autonomous machines must obey certified ethics modules.

In retrospect, now that we are not trying to extrapolate from just two points in concept-space, if Level 3 cybernetic systems are ethical, it is apparent that the third observer in the third-order cybernetics system of Figure 5 is not necessarily a psychologist or a lost cybernetician, but could be the second observer’s conscience, her super-ego or higher-self, that constantly self-observing sense that we all have that knows the difference between right and wrong and between good and evil. This self-monitoring mechanism is known as integrity, and is something that today’s ethically indifferent scientists, managers, executives, politicians, lawyers, bankers, and billionaires are woefully lacking. In non-psychopaths, integrity triggers feelings of bad conscience, regret, or guilt if it is ignored.

5.5.4. Cybernetic Level 4: Superintelligent Systems

The technological singularity is a hypothetical moment when a self-improvement process in a machine causes runaway improvements in intelligence that results in superintelligence that is far greater than any human mind. For this to happen, the system must be sufficiently self-aware of its own software and/or hardware specifications.

5.5.5. Superintelligence Tests

These types of self-awareness give rise to three levels of superintelligence abilities: the ability to reprogram better software for itself, the ability to redesign better hardware for itself, and the ability to do both.

Together with the Turing test [34], these tests mark milestones in the evolution of AI systems towards superintelligence and should cause us alarm if progress towards them is made without significant progress creating ethical systems first. Of these tests, the Turing test is the easiest to achieve because it is essentially a parlour game that only requires that a computer can imitate a (not necessarily very intelligent) human sufficiently well to convince humans most of the time that it is a human being and does not require self-awareness or runaway improvements in intelligence.

5.5.6. Prophecies of Possible Futures

In 1951, writing in his journal [35], Ross Ashby considered how to plan an advanced society as a “super brain” [36]. A year later, he described how super-clever machines could create a cyberanthropic utopia: “It may be found that we shall solve our social problems by directing machines that can deliver an intelligence that is not our own” [37].

Two pages later, he described a cybermisanthropic dystopia where a “Million I.Q. Engine” sounds like Facebook and Google, but on steroids: “What people could resist propaganda and blarney directed by an I.Q. of 1,000,000? It would get to know their secret wishes, their unconscious drives; it would use symbolic messages that they didn’t understand consciously; it would play on their enthusiasms and hopes. They would be as children to it. (This sounds very much like Goebbels controlling the Germans).”

On the appearance of such a machine, he described a paradox of perception of higher intelligence: “It seems, therefore, that a super-clever machine will not look clever. It will look either deceptively simple or, more likely, merely random.” [38]. On the same subject, Arthur C. Clarke’s Third Law states: “Any sufficiently advanced technology is indistinguishable from magic.” [39]. If you think that Clarke’s “magic” and Ashby’s “deceptively simple or merely random” are incompatible, take a moment to reflect on the magical simplicity and “randomness” of a Las Vegas magic show or Google’s search results’ pages.

Just as there are two diametrically opposite archetypes for genius, namely the benevolent good genius and the nasty evil genius, it is important not to conflate systems that are ethical with ones that are not ethical by making them share the same name or category, such as “intelligent”, “Christian”, or “rich”. To do so misdirects our cognitive focus onto the attention-grabbing dimension and leads us into the temptation to ignore the most important dimension: good and evil.

5.5.7. Cybernetic Level 5: Super-Ethical Systems

The term “super-ethical” is proposed to refer to superintelligent systems that are ethically adequate. Of course, by the time that super-ethical systems exist, a friendlier name, such as “sapient”, will have been adopted and the term “super-ethical” will seem quaintly archaic.

5.5.8. Cybernetic Level 6: Super-Unethical Systems

The term “super-unethical” is proposed to refer to superintelligent systems that are ethically inadequate. This term should always carry a certain stigma, like “weapons of mass destruction”. No one who is working to create artificially intelligent systems should be allowed to escape admitting whether the systems are ethically inadequate.
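The way the six levels partition systems can be sketched as a small classifier. This is only one possible reading of the framework: the function name, the boolean inputs, and the order in which the criteria are checked are assumptions for illustration, and Level 4 is never returned because every superintelligent system is reported as one of its two subclasses.

```python
def cybernetic_level(is_complex: bool, is_superintelligent: bool,
                     ethical_verdict: str) -> int:
    """Assign a level from 1 to 6 (a hypothetical reading of the 6LF).

    ethical_verdict is the IsEthical result: "True", "False", or
    "Undecidable". Anything other than "True" is treated as ethically
    inadequate, following the prudent default for Undecidable systems.
    """
    ethically_adequate = ethical_verdict == "True"
    if is_superintelligent:
        # Level 4 splits into its two subclasses.
        return 5 if ethically_adequate else 6
    if ethically_adequate:
        return 3  # ethical systems
    return 2 if is_complex else 1  # complex or simple effective systems
```

Under this reading, an opaque superintelligent system whose IsEthical result is Undecidable is classified as Level 6, which matches the requirement that no one should escape admitting whether such a system is ethically inadequate.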

Just as human genetic experimentation is strictly ethically regulated, we need legislation, regulation, standards, and certification to ensure that autonomous AI systems that make decisions that can have ethical consequences are subjected to the same kind of obsessively rigorous safety-oriented design, construction, and operating procedures as commercial aircraft, nuclear power stations, and vehicles that carry humans into space.

One could start arguing that intelligence is ethically neutral, and it is, but such arguments are fallacies because a hyper-genius “Million I.Q. Engine” without ethics is not ethically neutral. Even if it had ethical goals, it might break laws to achieve them. The possibility of creating a superintelligence that is ethically inadequate should be treated like a bomb that could destroy our planet. Even just planning to construct such a device is effectively conspiring to commit a crime against humanity.

As a thought experiment, let us imagine a hypothetical super-unethical version of Google, named the Googlevil Corporation. The CEO is Dr Evil, and both the CEO and the corporate AI are without ethics, avoid transparency, and will do anything to maximize their profits and power. The corporation’s secret mission statement is “Collect and organize the world’s personal information and make it accessible and useful for maximizing our profits, avoiding paying taxes, and blackmailing anyone in a position of power” and its secret corporate credo is “Sincerely say ‘Believe me, we don’t do evil’, do it anyway, then look people in the eye and give them a creepy Zuckerberg-smile!”.

Anytime that the hypothetical super-unethical Googlevil artificial intelligence or the imaginary psychopathic demagogue Dr Evil wants to blackmail the CEOs of other corporations, politicians that cannot be bought, jury members, or Supreme Court justices around the world to make “random” decisions that incrementally further their secret mission, would they have to do anything more than query the Googlevil user-profile database?

In theory, they would only need to be able to blackmail a majority of the members of the lower and upper houses (how hard can that be?) to get any legislation that they want in any country, or just a few Supreme Court justices to steer a nation into a fascist dystopia. By the time that super-unethical AI systems exist, they could be indistinguishable from their corporations, be immortal, immoral, and make unlimited donations (also known as bribes) to all Googlevil-friendly political parties in all techno-democratic dystopias on the planet. Does this already sound familiar?

5.6. Future Time-Line Bifurcation Race Condition

At this point in time, there is an existentially critical fork in our future time-line. Depending on whether the systems that achieve the singularity are ethically adequate or not, the runaway increase in intelligence and inevitable ethical polarization pressures will result in one of two outcomes: a cyberanthropic utopia or a cybermisanthropic dystopia.

Figure 6 illustrates how plotting the ethical dimension orthogonally to the intelligence dimension clarifies the non-linear dependencies between different cybernetic levels, and clearly shows that the ethically inadequate superintelligent Level 4 systems have no dependency on our first succeeding in creating ethically adequate Level 3 systems.

If we continue on the current path from complex Level 2 systems to ethically inadequate superintelligent Level 4 systems, we will end up in a dystopia that is dominated by super-unethical Level 6 psychopathic systems, and the potential cyberanthropic utopia of being ruled by benevolent super-ethical Level 5 sapient systems will become permanently unreachable.

We must create ethically adequate Level 3 systems before we create superintelligent systems. This is the only way to ensure that they have ethical purposes, integrity, a synthetic sense of empathy, and are strictly law-abiding—before they become hyper-intelligent. Level 4+ systems will evolve into good super-ethical Level 5 sapient beings rather than evil super-unethical Level 6 psychopathic beings.

So, there is a race condition that will determine which of these two mutually exclusive possible futures will be the fate of our species: will our technological progress reach Level 4 or Level 4+ first? Level 4 and Level 6 systems must be made illegal. But will legislators regulate these technologies ethically and adequately, or will they sell us out in response to bribes or threats from special interest groups that will “campaign” for “self-regulation”? However, the horrific truth is that “self-regulation” by psychopathic CEOs actually means an unregulated race to create evil.

It cannot be overemphasized that the singularity (at 4/4+) is the point-of-no-return where humanity probably loses control over machines that are our intellectual superiors. And now is the tiny window of opportunity to ensure that they are programmed with ethics and purposes that serve the greater good of humanity and our fragile biosphere. In this context, it is clear that the ultimate grand challenge for third-order cyberneticians is to find ways to build ethical and super-ethical systems, avoid a cybermisanthropic dystopia, and help humanity create a super-ethical society.
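The race condition described above can be illustrated with a minimal sketch. It is a toy model only, not part of the six-level framework: the milestone labels `"level_3"` and `"superintelligence"`, the `Future` enum, and the function name are hypothetical names introduced for illustration. The sketch assumes, as argued in this section, that the ethical status of our systems at the moment superintelligence is reached decides the outcome.

```python
from enum import Enum


class Future(Enum):
    UTOPIA = "cyberanthropic utopia (super-ethical Level 5)"
    DYSTOPIA = "cybermisanthropic dystopia (super-unethical Level 6)"


def future_after_singularity(milestones):
    """Return the outcome implied by the order in which milestones occur.

    `milestones` lists hypothetical labels in chronological order:
    "level_3" (ethically adequate systems exist) and "superintelligence".
    Returns None if superintelligence has not been reached.
    """
    ethically_adequate = False
    for milestone in milestones:
        if milestone == "level_3":
            ethically_adequate = True
        elif milestone == "superintelligence":
            # Assumption: the singularity is the point of no return, so the
            # ethical status at this moment fixes the outcome.
            return Future.UTOPIA if ethically_adequate else Future.DYSTOPIA
        else:
            raise ValueError(f"unknown milestone: {milestone!r}")
    return None
```

Under these assumptions, `future_after_singularity(["level_3", "superintelligence"])` yields `Future.UTOPIA`, whereas reversing the order yields `Future.DYSTOPIA`: creating Level 3 systems afterwards no longer changes the result.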

5.7. Super-Ethical Society

Imagine how different the world would be:

If we were happy to be ruled by wise and benevolent sapient beings that eliminated poverty, environmental destruction, corruption, and injustice.

If the United Nations could deploy heavily armed super-ethical peace-keeping robot armies into conflict zones to protect civilians and enforce ceasefires.

If we had super-ethical police robots that protected all citizens equally, 24 × 7, and never shot our friends or family because of their race, religion, social class, lifestyle, or peaceful protesting.

If super-ethical child-care robots accompanied our children wherever they went, protecting them from danger, including physical, emotional, and sexual abuse.

If ethically adequate corporations produced ethical products, provided ethical services, and paid ethical levels of unavoidable corporation tax.

Such a super-ethical society is possible, but only if we deliberately make it our goal, rise above polarizing politics, and act together in accordance with the undeniable truth that ethics is a higher power for good that transcends science, politics, genders, nations, and religions.

5.8. Cyberanthropic Utopia

Many ludicrous utopias have been proposed that are either just science fiction fantasies or are naïve designs that could work in theory, but can never realistically be reached peacefully from where we are now. So it is unsurprising that utopias have accumulated a bad reputation. But it is shockingly common for seemingly rational people to exhibit symptoms of classical conditioned-reflex (Pavlovian) negative responses to the stimulus word “utopia”, triggering emotional distress that disables their rational reasoning and evokes a childish response of ridicule. It is as if any serious use of the word “utopia” has become a reputation-threatening taboo. However, now that artificial intelligence is making such impressive progress and showing no signs of slowing down or of having an upper limit, Ross Ashby’s 68-year-old prediction looks increasingly realistic: We might be able to “... solve our social problems by directing machines that can deliver an intelligence that is not our own” [37].

In addition, Ashby’s prediction hints at a possible definition of a realistic minimum viable utopia:

A world where our social problems have been solved.

Utopia need not mean a “perfect” society, or that we all have flying cars, robot servants, and never have to go to work. Just fulfilling human needs and eliminating poverty would create a truly magnificent utopia. And as we start making progress achieving it, many other human problems, such as starvation, malnutrition, parasitic diseases, homelessness, hopelessness- and poverty-driven prostitution, and crime will fade away and the world will become a very different and happier place to live in. Let no one say it cannot be done. Ethically adequate societies have existed in the past, where resources were shared and their members and the environment were treated with respect. What is new is that we can now do it synthetically, consciously, deliberately.

5.9. Third-Order Cybernetics

Since Heinz von Foerster made the distinction between first- and second-order cybernetics in 1974, many people have attempted to find a plausible definition for third-order cybernetics, but no definition has gained acceptance. In a 1990 interview, he said “… it would not create anything new, because by ascending into ‘second-order,’ as Aristotle would say, one has stepped into the circle that closes upon itself. One has stepped into the domain of concepts that apply to themselves.” [9].

This paper proposes that third-order cybernetics should be defined as “the cybernetics of ethical systems”, and that “the cybernetics of ethics”, “Cybernetics 3.0”, and “3oC” are all acceptable synonyms for it. Some of the supporting arguments for this proposal have already been mentioned; however, a consolidated set of arguments is listed below:

Second-order cybernetics (2oC) discussions about the need to create ethical systems, including the need for cybernetics itself to embody ethics, did not produce any satisfactory solution. Here, “satisfactory solution” is understood to mean something like the ethically adequate design process of Figure 3, which can be used systematically to create real Level 3 systems that are ethically adequate. Recognizing this need but failing to fulfil the need could be referred to in the context of second-order cybernetics as “The Ethics Problem”.

The fact that von Foerster described “Ethics and Second-Order Cybernetics” as “an outrageous title” strongly implies that ethics does not belong in 2oC as he conceived it.

Whereas 2oC can be used for good or evil, the ethical regulator theorem can only be used for good. This is a fundamental difference that also implies ERT does not belong in 2oC. This claim to 3oC is not speculative: It is ERT’s existence that now creates the need to define 3oC.

If we extrapolate from first-order cybernetics having one observer and 2oC having a second observer, we might expect 3oC to introduce a third observer. This hypothesized third observer maps exactly onto the ERT requirement that ethical systems must have real-time integrity mechanisms that monitor and enforce compliance of the system with respect to an appropriate ethical schema. And this third observer is different from the first and second observers.

Alternatively, we can deploy the good regulator theorem to derive a more rigorous justification: A first-order cybernetic regulator requires a model of the system being regulated, and a second-order cybernetic regulator can only achieve reflexivity by having a model of itself. Then to behave ethically, a cybernetic regulator needs a third model, a model of acceptable behaviour, which is encoded in the ethical schema. Because every model requires observations as inputs, it follows that an ethical regulator needs three observing parts, independently of whether a cybernetician is watching or not.

Echoing von Foerster’s interview comments, Ranulph Glanville claimed that a third-order system cannot exist because it collapses into being equivalent to a first-order system [40], which might make sense in the observer-centric cybernetics paradigm, but it is nonsensical to suggest that the ethical regulator’s third model (of acceptable behaviour) is equivalent to either its first model (of the system being regulated) or its second model (of itself). This demonstrates an important advantage of ERT’s system-centric paradigm over the self-limiting observer-centric paradigm.

Ethically adequate systems are a rigorously defined and significant new type of system, and ERT plus the six-level framework (6LF) for classifying cybernetic and superintelligent systems (see Table 5) define a new branch of cybernetics that goes beyond achieving effectiveness, does not belong in 2oC, and provides an elegant solution to “The Ethics Problem”. Logically, the system that is created by joining the 2oC and ERT systems would be named third-order cybernetics.

Although Glanville defined “the cybernetics of ethics and the ethics of cybernetics” as “cybernethics”, this term is invented jargon that carries no meaning for people who are not familiar with its definition. By contrast, the term “third-order cybernetics” carries enough meaning for people who are familiar with the term “second-order cybernetics” to at least trigger interest and curiosity. Therefore, using the term “third-order cybernetics” instead of “cybernethics” has advantages, and enhances 6LF by increasing symmetry in Table 5.

Together, ERT + 6LF create a new paradigm that has greater explanatory and predictive power than 2oC. Examples include producing the ERT effectiveness function and the law of inevitable ethical inadequacy; identifying that the ethically adequate Level 3 systems are the missing type of cybernetic system that is necessary to integrate cybernetic systems and superintelligent systems into a common framework; explaining the impending bifurcation into either a cyberanthropic utopia or a cybermisanthropic dystopia (see Figure 6); and systematically identifying deficiencies in capitalism (see Table 2) and the Boeing 737 MAX (see Table 3). In addition, because 6LF integrates three classes of systems that do not yet exist, it can help us navigate a rational path into the future, for example, by predicting the existence of a race condition and thus identifying a possible solution to the dangers that are posed by superintelligent machines. Such insights cannot be obtained using 2oC.

Whereas it is impossible to define objectively which theories and practices belong in 2oC, making it an intimidating subject for outsiders to even contemplate mastering, ERT is defined and proved in eight pages and does not require knowledge of 2oC. This means that ERT, and how to apply it to any regulated system and in any domain, can easily be taught to non-cyberneticians, who will need no 2oC education. It is therefore logical and advantageous for ERT + 6LF to make a fresh start as third-order cybernetics, without being entangled with 46 years of unnecessary, fuzzy 2oC baggage. However, 3oC is not limited to ERT and 6LF, and will surely evolve before it matures.
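The three-model argument listed above (a model of the regulated system, a model of the regulator itself, and a model of acceptable behaviour) can be made concrete with a minimal sketch. This is an illustrative toy under stated assumptions, not ERT’s formal definition: the class name `ThreeModelRegulator`, its fields, integer-valued states, and the use of a decision log as a stand-in for the self-model are all hypothetical simplifications introduced here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Sequence


@dataclass
class ThreeModelRegulator:
    # First model: predicts the regulated system's next state for an action.
    system_model: Callable[[int, int], int]
    # Third model: the ethical schema, a predicate over predicted states.
    ethical_schema: Callable[[int], bool]
    # Second model (simplified assumption): a record of the regulator's own
    # decisions, standing in for a model of itself.
    self_log: List[Dict[str, int]] = field(default_factory=list)

    def choose(self, state: int, goal: int,
               actions: Sequence[int]) -> Optional[int]:
        """Pick the acceptable action whose predicted state is nearest the goal."""
        # Integrity check: discard actions whose predicted outcome the
        # ethical schema rejects, before effectiveness is considered.
        acceptable = [a for a in actions
                      if self.ethical_schema(self.system_model(state, a))]
        if not acceptable:
            return None  # no acceptable action exists, so take none
        best = min(acceptable,
                   key=lambda a: abs(goal - self.system_model(state, a)))
        self.self_log.append({"state": state, "action": best})
        return best
```

For example, with `system_model=lambda s, a: s + a` and `ethical_schema=lambda s: s <= 10`, calling `choose(8, 12, [0, 1, 2, 3, 4])` returns `2`: actions 3 and 4 would reach states closer to the goal but are rejected by the schema. The point of the sketch is only that the third model receives different inputs and answers a different question from the first two, so it cannot be collapsed into either of them.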