“Space, the Final Frontier”: How Good are Agent-Based Models at Simulating Individuals and Space in Cities?

Abstract

1. Introduction

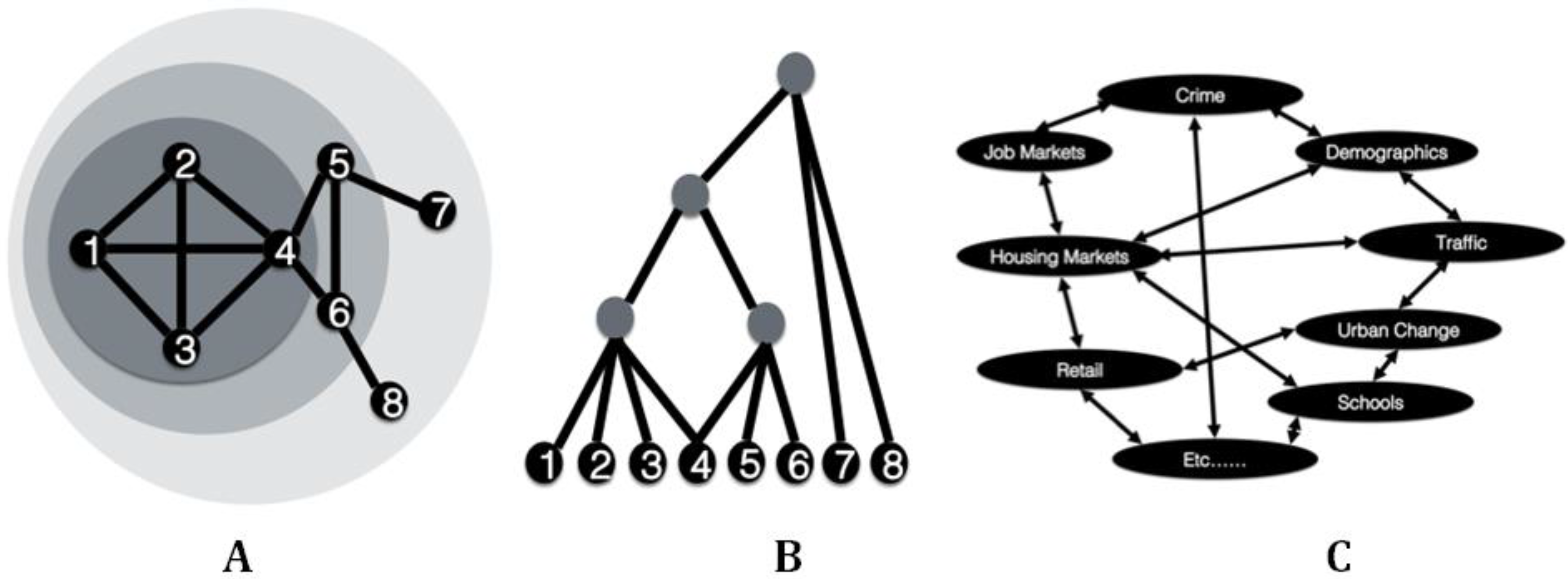

2. Cities as Complex Entities

3. Simulating Individual Behavior in the City

“The appeal is undeniable: it appears obvious that individual-level decision-making is the fundamental driver of social systems…” ([43], p. 113)

- (i) Implicit representation of individual micro-dynamics: statistical models can only represent these interactions if the population is homogeneous or has coordinated or coherent interactions;

- (ii) Representation of potentially multiple spatial relationships;

- (iii) The structure of most ABM platforms is generally flexible enough to incorporate equations, statistical techniques, etc., whereas the converse is often not true.
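The three points above can be illustrated with a minimal sketch. It is not taken from any model reviewed in this paper; the `Agent` class, the `tolerance` trait, the crowding rule, and all parameter values are hypothetical and chosen only for illustration. The sketch shows agents that differ from one another, a simple spatial relationship (a neighbourhood on a grid), and an ordinary equation (a logistic curve) embedded inside the agent's decision rule.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Agent:
    """One individual with its own location and its own parameter value."""
    x: int
    y: int
    tolerance: float  # hypothetical trait; varies across the population


def neighbours(agent, agents, radius):
    """Spatial relationship: other agents within Chebyshev distance `radius`."""
    return [
        other for other in agents
        if other is not agent
        and max(abs(other.x - agent.x), abs(other.y - agent.y)) <= radius
    ]


def move_probability(local_density, tolerance):
    """A plain equation (logistic curve) used inside the agent rule."""
    return 1.0 / (1.0 + math.exp(-(local_density - tolerance)))


def step(agents, size, radius, rng):
    """One tick: each agent may relocate at random, depending on local crowding."""
    for agent in agents:
        density = len(neighbours(agent, agents, radius))
        if rng.random() < move_probability(density, agent.tolerance):
            agent.x = rng.randrange(size)
            agent.y = rng.randrange(size)


def run(n_agents=50, size=10, radius=1, ticks=20, seed=42):
    """Build a heterogeneous population on a size x size grid and run it."""
    rng = random.Random(seed)
    agents = [
        Agent(rng.randrange(size), rng.randrange(size), rng.uniform(1.0, 4.0))
        for _ in range(n_agents)
    ]
    for _ in range(ticks):
        step(agents, size, radius, rng)
    return agents
```

Calling `run()` returns the final list of agents. Because each agent carries its own `tolerance`, the population is heterogeneous by construction, and swapping `neighbours` for a network or distance-decay function would change the spatial relationship without touching the rest of the model.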

4. ABM for City Simulation

| Author | Application | Entity | Behavior | Spatial Scale | Temporal Scale | Verification (Y/N) | Validation (Y/N) | Calibration (Y/N) |
|---|---|---|---|---|---|---|---|---|
| [49] | Public Event | Individuals | Mathematical | Neighborhood | Seconds | N | N | Y |
| [50] | Riots | Individuals | Mathematical | Neighborhood | Seconds | Y | N | N |
| [51] | Indoor Movement | Individuals | Mathematical | Indoor Scene | Seconds | Y | Y | N |
| [52] | Disease propagation | Individuals | Mathematical | City | Minutes | Y | Y | Y |
| [53] | Disease propagation & urban traffic | Individuals | Mathematical | City | Seconds | Y | Y | Y |
| [54] | Crime | Individuals | Cognitive Framework | Neighborhood | Minutes | Y | Y | Y |
| [55] | Crime | Individuals | Mathematical | City | Hours | Y | N | |
| [22] | Traffic | Individuals | Mathematical | City Center | Seconds | N | N | N |
| [56] | Flooding | Individuals | Mathematical | Town | Minutes | N | Y/N | N |
| [57] | Retail | Individuals | Mathematical | City | Days | N | Y | Y |
| [23] | Residential Location | Individuals | Mathematical | Neighborhood | Years | N | N | Y |
| [58] | Informal Settlement Growth | Households | Mathematical | Neighborhood | Days | Y | Y | N |
| [59] | Regeneration | Households | Mathematical | Neighborhood | Years | N | Y | Y |
| [60] | Urban Shrinkage | Households | Mathematical | City | Years | N | Y | Y |
| [61] | Urban Growth | Institutions & Developers | Mathematical | Region | Years | N | N | N |
| [18] | City Systems | City | Mathematical | Countries & Continents | Years | Y | Y | Y |

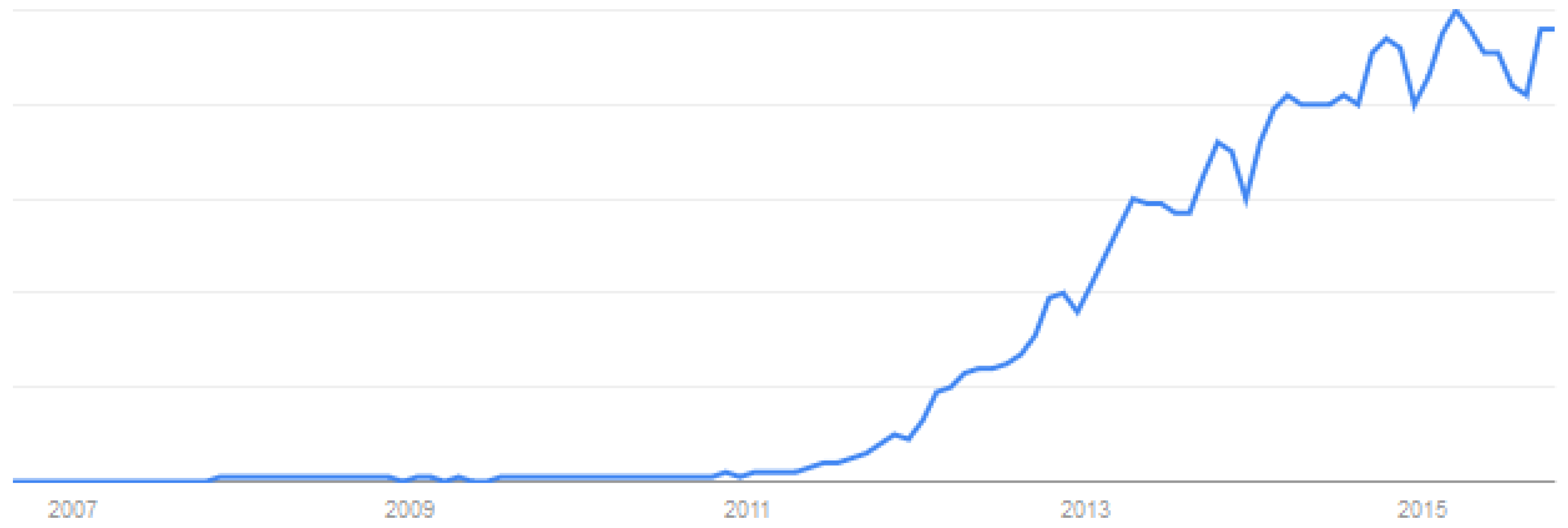

5. Big Data

- Automated data are those that are collected covertly or discreetly, often by a third party. These include records of: individual movement (e.g., travel cards, automatic number plate recognition systems, pedestrian flow counters); websites visited; consumer behavior (e.g., spending on credit/debit cards, loyalty card schemes); environmental conditions (e.g., air quality, light/sound levels); health (e.g., life tracking, activity monitoring); and a wealth of others.

- Volunteered data are those that are donated freely by individual users (this assumes, of course, that contributors are aware that their contributions will be public). These include: messages posted to social media services such as Facebook, Twitter and Foursquare; contributions to collaborative sites such as blogs, wikis, discussions, and OpenStreetMap; and uploaded media (e.g., photos and videos).

6. Discussion

Acknowledgments

Author Contributions

Conflicts of Interest

References

- United Nations. World Urbanization Prospects: The 2014 Revision; Department of Economic and Social Affairs: New York, NY, USA, 2014.

- Batty, M. Urban Modelling: Algorithms, Calibrations, Predictions; Cambridge University Press: Cambridge, UK, 1976.

- Torrens, P.M. How Land-Use-Transportation Models Work; Centre for Advanced Spatial Analysis (University College London): London, UK, 2000.

- Batty, M. Fifty years of urban modelling: Macro-statics to micro-dynamics. In The Dynamics of Complex Urban Systems: An Interdisciplinary Approach; Albeverio, S., Andrey, D., Giordano, P., Vancheri, A., Eds.; Springer Physica-Verlag: New York, NY, USA, 2008; pp. 1–20.

- Torrens, P.M.; O’Sullivan, D. Cellular automata and urban simulation: Where do we go from here? Environ. Plan. B 2001, 28, 163–168.

- Heppenstall, A.J.; Crooks, A.T.; Batty, M.; See, L.M. Agent-Based Models of Geographical Systems; Springer: New York, NY, USA, 2012.

- Alonso, W. Location and Land Use: Toward a General Theory of Land Rent; Harvard University Press: Cambridge, MA, USA, 1964.

- Hagerstrand, T. Innovation Diffusion as a Spatial Process; The University of Chicago Press: Chicago, IL, USA, 1967.

- Wilson, A. Catastrophe Theory and Bifurcation: Applications to Urban and Regional Systems; Routledge: Oxon, UK, 1981.

- Fotheringham, A.S.; O’Kelly, M.E. Spatial Interaction Models: Formulations and Applications; Springer: New York, NY, USA, 1989.

- Gilbert, N.; Troitzsch, K.G. Simulation for the Social Scientist, 2nd ed.; Open University Press: Milton Keynes, UK, 2005.

- Batty, M.; Crooks, A.T.; See, L.M.; Heppenstall, A.J. Perspectives on agent-based modelling. In Agent-Based Models of Geographical Systems; Heppenstall, A., Crooks, A.T., See, L.M., Batty, M., Eds.; Springer: New York, NY, USA, 2012; pp. 1–18.

- Nagel, K.; Schreckenberg, M. A cellular automaton model for freeway traffic. J. Phys. 1992, 1, 2221–2229.

- White, R.; Engelen, G. Cellular automata and fractal urban form: A cellular modelling approach to the evolution of urban land use patterns. Environ. Plan. A 1993, 25, 1175–1199.

- Benenson, I.; Torrens, P.M. Geosimulation: Automata-Based Modelling of Urban Phenomena; John Wiley & Sons: London, UK, 2004.

- Batty, M. Cities and Complexity: Understanding Cities with Cellular Automata, Agent-Based Models, and Fractals; The MIT Press: Cambridge, MA, USA, 2005.

- Bura, S.; Guérin-Pace, F.; Mathian, H.; Pumain, D.; Sanders, L. Multi-agent systems and the dynamics of a settlement system. Geogr. Anal. 1996, 28, 161–178.

- Pumain, D. Multi-agent system modelling for urban systems: The series of SIMPOP models. In Agent-Based Models of Geographical Systems; Heppenstall, A.J., Crooks, A.T., See, L.M., Batty, M., Eds.; Springer: New York, NY, USA, 2012; pp. 721–738.

- O’Sullivan, D. Geographical information science: Agent-based models. Prog. Hum. Geogr. 2008, 32, 541–550.

- Torrens, P.M. Agent-based modeling and the spatial sciences. Geogr. Compass 2010, 4, 428–448.

- Torrens, P.M. Moving agent-pedestrians through space and time. Ann. Assoc. Am. Geogr. 2012, 102, 35–66.

- Manley, E.; Cheng, T.; Penn, A.; Emmonds, A. A framework for simulating large-scale complex urban traffic dynamics through hybrid agent-based modelling. Comput. Environ. Urban Syst. 2014, 44, 27–36.

- Benenson, I.; Omer, I.; Hatna, E. Entity-based modelling of urban residential dynamics: The case of Yaffo, Tel Aviv. Environ. Plan. B 2002, 29, 491–512.

- Parker, D.C.; Manson, S.M.; Janssen, M.A.; Hoffmann, M.J.; Deadman, P. Multi-agent systems for the simulation of land-use and land-cover change: A review. Ann. Assoc. Am. Geogr. 2003, 93, 314–337.

- An, L.; Zvoleff, A.; Liu, J.; Axinn, W. Agent-based modeling in coupled human and natural systems (CHANS): Lessons from a comparative analysis. Ann. Assoc. Am. Geogr. 2014, 104, 723–745.

- Jacobs, J. The Death and Life of Great American Cities; Vintage Books: New York, NY, USA, 1961.

- Crooks, A.T. The use of agent-based modelling for studying the social and physical environment of cities. In Complexity and Planning: Systems, Assemblages and Simulations; De Roo, G., Hiller, J., van Wezemael, J., Eds.; Ashgate: Burlington, VT, USA, 2012; pp. 385–408.

- Batty, M. A generic framework for computational spatial modelling. In Agent-Based Models of Geographical Systems; Heppenstall, A., Crooks, A.T., See, L.M., Batty, M., Eds.; Springer: New York, NY, USA, 2012; pp. 19–50.

- Simon, H.A. The Sciences of the Artificial, 3rd ed.; MIT Press: Cambridge, MA, USA, 1996.

- Cioffi-Revilla, C. Introduction to Computational Social Science: Principles and Applications; Springer: New York, NY, USA, 2014.

- Batty, M. The New Science of Cities; MIT Press: Cambridge, MA, USA, 2013.

- Christaller, W. Die Centralen; Gustav Fischer: Jena, Germany, 1933.

- Liu, X.; Andersson, C. Assessing the impact of temporal dynamics on land-use change modelling. Comput. Environ. Urban Syst. 2004, 28, 107–124.

- Batty, M. Cellular automata and urban form: A primer. J. Am. Plan. Assoc. 1997, 63, 266–274.

- Torrens, P.M. High-fidelity behaviors for model people on model streetscapes. Ann. GIS 2014, 20, 139–157.

- Heckbert, S.; Baynes, T.; Reeson, A. Agent-based modeling in ecological economics. Ann. N. Y. Acad. Sci. 2010, 1185, 39–53.

- Crooks, A.T.; Heppenstall, A. Introduction to agent-based modelling. In Agent-Based Models of Geographical Systems; Heppenstall, A., Crooks, A.T., See, L.M., Batty, M., Eds.; Springer: New York, NY, USA, 2012; pp. 85–108.

- Kennedy, W. Modelling human behaviour in agent-based models. In Agent-Based Models of Geographical Systems; Heppenstall, A., Crooks, A.T., See, L.M., Batty, M., Eds.; Springer: New York, NY, USA, 2012; pp. 167–180.

- Coleman, J.S. Foundations of Social Theory; Harvard University Press: Cambridge, MA, USA, 1990.

- Axelrod, R. Advancing the art of simulation in the social sciences. In Simulating Social Phenomena; Conte, R., Hegselmann, R., Terno, P., Eds.; Springer: Berlin, Germany, 1997; pp. 21–40.

- Bonabeau, E. Agent-based modelling: Methods and techniques for simulating human systems. Proc. Natl. Acad. Sci. USA 2002, 99, 7280–7287.

- Friedkin, N.E.; Johnsen, E.C. Social influence networks and opinion change. Adv. Group Process. 1999, 16, 1–29.

- O’Sullivan, D.; Millington, J.; Perry, G.; Wainwright, J. Agent-based models – Because they are worth it? In Agent-Based Models of Geographical Systems; Heppenstall, A.J., Crooks, A.T., Batty, M., See, L.M., Eds.; Springer: New York, NY, USA, 2012.

- Grimm, V.; Berger, U.; Bastiansen, F.; Eliassen, S.; Ginot, V.; Giske, J.; Goss-Custard, J.; Grand, T.; Heinz, S.; Huse, G.; et al. A standard protocol for describing individual-based and agent-based models. Ecol. Model. 2006, 198, 115–126.

- Grimm, V.; Revilla, E.; Berger, U.; Jeltsch, F.; Mooij, W.M.; Railsback, S.F.; Thulke, H.; Weiner, J.; Wiegand, T.; DeAngelis, D.L. Pattern-oriented modeling of agent-based complex systems: Lessons from ecology. Science 2005, 310, 987–991.

- Crooks, A.T.; Castle, C. The integration of agent-based modelling and geographical information for geospatial simulation. In Agent-Based Models of Geographical Systems; Heppenstall, A., Crooks, A.T., See, L.M., Batty, M., Eds.; Springer: New York, NY, USA, 2012; pp. 219–252.

- Malik, A.; Crooks, A.; Root, H.; Swartz, M. Exploring creativity and urban development with agent-based modeling. J. Artif. Soc. Soc. Simul. 2015, 18, 12.

- Axtell, R.; Epstein, J.M. Agent-based modelling: Understanding our creations. Bull. St. Fe Inst. 1994, 9, 28–32.

- Batty, M.; Desyllas, J.; Duxbury, E. Safety in numbers? Modelling crowds and designing control for the Notting Hill Carnival. Urban Stud. 2003, 40, 1573–1590.

- Torrens, P.M.; McDaniel, A.W. Modeling geographic behavior in riotous crowds. Ann. Assoc. Am. Geogr. 2013, 103, 20–46.

- Crooks, A.T.; Croitoru, A.; Lu, X.; Wise, S.; Irvine, J.M.; Stefanidis, A. Walk this way: Improving pedestrian agent-based models through scene activity analysis. ISPRS Int. J. Geo-Inf. 2015, 4, 1627–1656.

- Crooks, A.T.; Hailegiorgis, A.B. An agent-based modeling approach applied to the spread of cholera. Environ. Model. Softw. 2014, 62, 164–177.

- Eubank, S.; Guclu, H.; Kumar, A.V.S.; Marathe, M.V.; Srinivasan, A.; Toroczkai, Z.; Wang, N. Modelling disease outbreaks in realistic urban social networks. Nature 2004, 429, 180–184.

- Malleson, N.; Heppenstall, A.; See, L.; Evans, A. Using an agent-based crime simulation to predict the effects of urban regeneration on individual household burglary risk. Environ. Plan. B 2013, 40, 405–426.

- Groff, E.R. Simulation for theory testing and experimentation: An example using routine activity theory and street robbery. J. Quant. Criminol. 2007, 23, 75–103.

- Dawson, R.J.; Peppe, R.; Wang, M. An agent-based model for risk-based flood incident management. Nat. Hazards 2011, 59, 167–189.

- Heppenstall, A.J.; Evans, A.J.; Birkin, M.H. Using hybrid agent-based systems to model spatially-influenced retail markets. J. Artif. Soc. Soc. Simul. 2006, 9, 2.

- Augustijn-Beckers, E.; Flacke, J.; Retsios, B. Simulating informal settlement growth in Dar es Salaam, Tanzania: An agent-based housing model. Comput. Environ. Urban Syst. 2011, 35, 93–103.

- Jordan, R.; Birkin, M.; Evans, A. An agent-based model of residential mobility assessing the impacts of urban regeneration policy in the EASEL district. Comput. Environ. Urban Syst. 2014, 48, 49–63.

- Haase, D.; Lautenbach, S.; Seppelt, R. Modeling and simulating residential mobility in a shrinking city using an agent-based approach. Environ. Model. Softw. 2010, 25, 1225–1240.

- Xie, Y.; Fan, S. Multi-city sustainable regional urban growth simulation – MSRUGS: A case study along the mid-section of Silk Road of China. Stoch. Environ. Res. Risk Assess. 2014, 28, 829–841.

- Helbing, D.; Balietti, S. How to Do Agent-Based Simulations in the Future: From Modeling Social Mechanisms to Emergent Phenomena and Interactive Systems Design; Santa Fe Institute: Santa Fe, NM, USA, 2011.

- Helbing, D.; Molnár, P. Social force model for pedestrian dynamics. Phys. Rev. E 1995, 51, 4282–4286.

- Pumain, D.; Sanders, L. Theoretical principles in interurban simulation models: A comparison. Environ. Plan. A 2013, 45, 2243–2260.

- Gode, D.K.; Sunder, S. Allocative efficiency of markets with zero-intelligence traders: Market as a partial substitute for individual rationality. J. Political Econ. 1993, 101, 119–137.

- Crooks, A.T. Constructing and implementing an agent-based model of residential segregation through vector GIS. Int. J. GIS 2010, 24, 661–675.

- Rao, A.S.; Georgeff, M.P. Modeling Rational Agents within a BDI-Architecture. In Proceedings of the Second International Conference on Principles of Knowledge Representation and Reasoning, San Mateo, CA, USA, April 1991.

- Schmidt, B. The modelling of human behaviour: The PECS reference model. In Proceedings of the 14th European Simulation Symposium, Dresden, Germany, 23–26 October 2002.

- Brantingham, P.; Glasser, U.; Kinney, B.; Singh, K.; Vajihollahi, M. A computational model for simulating spatial aspects of crime in urban environments. In Proceedings of the 2005 IEEE International Conference on Systems, Man and Cybernetics, Waikoloa, HI, USA, 10–12 October 2005; pp. 3667–3674.

- Laird, J.E. The Soar Cognitive Architecture; The MIT Press: Cambridge, MA, USA, 2012.

- Anderson, J.R.; Lebiere, C. The Atomic Components of Thought; Psychology Press: Mahwah, NJ, USA, 1998.

- The end of theory: The Data Deluge Makes the Scientific Method Obsolete. Available online: http://www.uvm.edu/~cmplxsys/wordpress/wp-content/uploads/reading-group/pdfs/2008/anderson2008.pdf (accessed on 23 June 2008).

- Law, A.M. Simulation Modelling and Analysis, 5th ed.; McGraw-Hill: New York, NY, USA, 2015.

- Balci, O. Verification, validation, and testing. In Handbook of Simulation: Principles, Methodology, Advances, Applications, and Practice; John Wiley & Sons: New York, NY, USA, 1996; pp. 335–393.

- Lee, J.S.; Filatova, T.; Ligmann-Zielinska, A.; Hassani-Mahmooei, B.; Stonedahl, F.; Lorscheid, I.; Voinov, A.; Polhill, G.; Sun, Z.; Parker, D.C. The complexities of agent-based modeling output analysis. J. Artif. Soc. Soc. Simul. 2015, 18, 4.

- Takadama, K.; Kawai, T.; Koyama, Y. Micro- and macro-level validation in agent-based simulation: Reproduction of human-like behaviours and thinking in a sequential bargaining game. J. Artif. Soc. Soc. Simul. 2008, 11, 9.

- Windrum, P.; Fagiolo, G.; Moneta, A. Empirical validation of agent-based models: Alternatives and prospects. J. Artif. Soc. Soc. Simul. 2007, 10, 8.

- Smajgl, A.; Brown, D.G.; Valbuena, D.; Huigen, M.G.A. Empirical characterisation of agent behaviours in socio-ecological systems. Environ. Model. Softw. 2011, 26, 837–844.

- Moss, S. Alternative approaches to the empirical validation of agent-based models. J. Artif. Soc. Soc. Simul. 2008, 11, 5.

- Kocabas, V.; Dragicevic, S. Agent-based model validation using Bayesian networks and vector spatial data. Environ. Plan. B 2009, 36, 787–801.

- Weinberger, S. Web of war: Can computational social science help to prevent or win wars? Nature 2011, 471, 566–568.

- Crooks, A.T.; Pfoser, D.; Jenkins, A.; Croitoru, A.; Stefanidis, A.; Smith, D.A.; Karagiorgou, S.; Efentakis, A.; Lamprianidis, G. Crowdsourcing urban form and function. Int. J. Geogr. Inf. Sci. 2015, 29, 720–741.

- Laney, D. 3D Data Management: Controlling Data Volume, Velocity and Variety; META Group Inc: Stamford, CT, USA, 2001.

- Kitchin, R. Big data and human geography opportunities, challenges and risks. Dialogues Hum. Geogr. 2013, 3, 262–267.

- Google Trends. Searching for “Big data” in Google Trends. Available online: https://www.google.com/trends/explore#q=big%20data (accessed on 8 September 2015).

- Mayer-Schönberger, V.; Cukier, K. Big Data: A Revolution that will Transform How We Live, Work and Think; John Murray: London, UK, 2013.

- Crosbie, T. Using activity diaries: Some methodological lessons. J. Res. Pract. 2006, 2, 1.

- Cranshaw, J.; Schwartz, R.; Hong, J.I.; Sadeh, N.M. The livehoods project: Utilizing social media to understand the dynamics of a city. In Proceedings of the Sixth International AAAI Conference on Weblogs and Social Media, Dublin, Ireland, 4–7 June 2012.

- Kling, F.; Pozdnoukhov, A. When a City Tells a Story: Urban Topic Analysis. In Proceedings of the 20th ACM SIGSPATIAL International Conference on Advances in Geographic Information Systems, Redondo Beach, CA, USA, 7–9 November 2012; pp. 482–485.

- Frias-Martinez, V.; Frias-Martinez, E. Spectral clustering for sensing urban land use using Twitter activity. Eng. Appl. Artif. Intell. 2014, 35, 237–245.

- Malleson, N.; Andresen, M.A. The impact of using social media data in crime rate calculations: Shifting hot spots and changing spatial patterns. Cartogr. Geogr. Inf. Sci. 2015, 42, 112–121.

- Isaacman, S.; Becker, R.; Cáceres, R.; Kobourov, S.; Martonosi, M.; Rowland, J.; Varshavsky, A. Identifying important places in people’s lives from cellular network data. In Pervasive Computing, Lecture Notes in Computer Science; Lyons, K., Hightower, J., Huang, E.M., Eds.; Springer: Berlin, Germany, 2011; pp. 133–151.

- Crooks, A.T.; Croitoru, A.; Stefanidis, A.; Radzikowski, J. #Earthquake: Twitter as a distributed sensor system. Trans. GIS 2013, 17, 124–147.

- Croitoru, A.; Wayant, N.; Crooks, A.T.; Radzikowski, J.; Stefanidis, A. Linking cyber and physical spaces through community detection and clustering in social media feeds. Comput. Environ. Urban Syst. 2014.

- Qu, Y.; Zhang, J. Regularly visited patches in human mobility. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Paris, France, 27 April–2 May 2009; pp. 395–398.

- Birkin, M.; Harland, K.; Malleson, N.; Cross, P.; Clarke, M. An examination of personal mobility patterns in space and time using Twitter. Int. J. Agric. Environ. Inf. Syst. 2014, 5, 55–72.

- Arribas-Bel, D.; Kourtit, K.; Nijkamp, P.; Steenbruggen, J. Cyber cities: Social media as a tool for understanding cities. Appl. Spat. Anal. Policy 2015, 8, 231–247.

- Lovelace, R.; Birkin, M.; Cross, P.; Clarke, M. From big noise to big data: Toward the verification of large data sets for understanding regional retail flows. Geogr. Anal. 2015.

- Lovelace, R.; Birkin, M.; Malleson, N. Can Social Media Data be Useful in Spatial Modelling? A Case Study of “Museum Tweets” and Visitor Flows. In Proceedings of the 22nd Geographical Information Systems Research UK Conference, Glasgow, UK, 16–18 April 2014.

- Wise, S. Agent-Based Modeling and GIS: Exploring Our New Tools in a Disaster Context. In Proceedings of the 22nd Geographical Information Systems Research UK Conference, Glasgow, UK, 16–18 April 2014.

- Batty, M. Agents, cells, and cities: New representational models for simulating multiscale urban dynamics. Environ. Plan. A 2005, 37, 1373–1394.

- Epstein, J.M. Modelling to contain pandemics. Nature 2009, 460, 687.

- Savage, M.; Burrows, R. The coming crisis of empirical sociology. Sociology 2007, 41, 885–899.

- Yu, L. Understanding information inequality: Making sense of the literature of the information and digital divides. J. Librariansh. Inf. Sci. 2006, 38, 229–252.

- Fuchs, C. The role of income inequality in a multivariate cross-national analysis of the digital divide. Soc. Sci. Comput. Rev. 2008, 27, 41–58.

- Schradie, J. The digital production gap: The digital divide and web 2.0 collide. Poetics 2011, 39, 145–168.

- Chen, W.; Wellman, B. Minding the cyber-gap: The internet and social inequality. In The Blackwell Companion to Social Inequalities; Romero, M., Margolis, E., Eds.; Blackwell Publishing Ltd: London, UK, 2005; pp. 523–545.

- Graham, M.; Shelton, T. Geography and the future of big data, big data and the future of geography. Dialogues Hum. Geogr. 2013, 3, 255–261.

- Glasgow, M.L.; Rudra, C.B.; Yoo, E.H.; Demirbas, M.; Merriman, J.; Nayak, P.; Crabtree-Ide, C.; Szpiro, A.A.; Rudra, A.; Wactawski-Wende, J.; et al. Using smartphones to collect time-activity data for long-term personal-level air pollution exposure assessment. J. Expo. Sci. Environ. Epidemiol. 2014.

- Salzmann-Erikson, R.M.; Eriksson, R.H. Torrenting values, feelings, and thoughts – Cyber nursing and virtual self-care in a breast augmentation forum. Int. J. Qual. Stud. Health Well-Being 2011, 6, 7378.

- Ratti, C.; Pulselli, R.M.; Williams, S.; Frenchman, D. Mobile landscapes: Using location data from cell phones for urban analysis. Environ. Plan. B 2006, 33, 727–748.

- American Sociological Association. Code of Ethics and Policies and Procedures of the ASA Committee on Professional Ethics; American Sociological Association: Washington, DC, USA, 1999.

- Eysenbach, G.; Till, J.E. Ethical issues in qualitative research on internet communities. BMJ 2001, 323, 1103–1105.

- Wilkinson, D.; Thelwall, M. Researching personal information on the public web: Methods and ethics. Soc. Sci. Comput. Rev. 2011, 29, 387–401.

- McKee, R. Ethical issues in using social media for health and health care research. Health Policy 2013, 110, 298–301.

- NSA Prism Program Taps in to User Data of Apple, Google and Others. Available online: http://www.alleanzaperinternet.it/wp-content/uploads/2013/06/guardian.pdf (accessed on 7 June 2013).

- Duhigg, C. How companies learn your secrets. N.Y. Times, 2012; 16, 1–16.

- Aggarwal, C.; Yu, P. Privacy-Preserving Data Mining: Models and Algorithms; Springer: New York, NY, USA, 2008.

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons by Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Heppenstall, A.; Malleson, N.; Crooks, A. “Space, the Final Frontier”: How Good are Agent-Based Models at Simulating Individuals and Space in Cities? Systems 2016, 4, 9. https://doi.org/10.3390/systems4010009
