A Human-Centred Extended Reality (XR) System for Safe Human–Robot Collaboration (HRC) in Smart Logistics

Abstract

1. Introduction

1.1. Industry 4.0 Logistics Systems and the Need for Human-Centred Capability Development

1.2. Architectural Framework and Research Contributions

- RQ1: How can a modular XR architecture support ergonomics-aware training for human–robot collaboration in smart logistics environments?

- RQ2: How can multimodal interaction telemetry and feedback mechanisms contribute to the assessment of safety awareness and ergonomics-related behaviour during XR-based HRC training?

- RQ3: How can an XR-based training framework be integrated within a scalable ecosystem to support accessible collaborative robotics education for non-specialist learners?

2. Related Work on HRC, XR, and Human-Centred Industrial Systems

2.1. Training and Education for HRC

2.2. XR Applications in Vocational and Industrial Training

2.3. Ethical, Safety, and Ergonomic Considerations in HRC

2.4. Multimodal Feedback and Attention-Aware Interaction in XR

2.5. XR Platforms and Educational Ecosystems

3. Design Goals and Pedagogical Mapping of the HRC-XR Trainer

3.1. Pedagogical Framework and Learning Principles

3.2. System Design Goals

3.3. Mapping of Pedagogical Objectives to Instructional Modules

3.4. Alignment with Learning Outcomes

4. System Architecture and Implementation

4.1. Layered System Architecture and Integration Workflow

4.2. Modular SDK Architecture and Toolkit Integration

4.3. Data Flow, Analytics, and Iterative Refinement

5. Instructional Modules and Learning Scenarios in HRC-XR Training

5.1. Module 1: Foundational Awareness and Conceptual Understanding

5.2. Module 2: Experiential Learning and Safe-Behaviour Practice

5.3. Module 3: Task-Based Reflection and Ergonomics-Oriented Coaching

5.4. Learning Objectives and Assessment Mapping Across Modules

6. Evaluation Design and Pilot Protocol

6.1. Participants and Evaluation Design

6.2. Measures and Data Collection

7. Discussion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| HRC | Human–Robot Collaboration |
| XR | Extended Reality |
| AR | Augmented Reality |
| REBA | Rapid Entire Body Assessment |
| RULA | Rapid Upper Limb Assessment |
| SDK | Software Development Kit |

References

1. Pietrantoni, L.; Favilla, M.; Fraboni, F.; Mazzoni, E.; Morandini, S.; Benvenuti, M.; De Angelis, M. Integrating collaborative robots in manufacturing, logistics, and agriculture: Expert perspectives on technical, safety, and human factors. Front. Robot. AI 2024, 11, 1342130.
2. Dhanda, M.; Rogers, B.A.; Hall, S.; Dekoninck, E.; Dhokia, V. Reviewing human-robot collaboration in manufacturing: Opportunities and challenges in the context of industry 5.0. Robot. Comput.-Integr. Manuf. 2025, 93, 102937.
3. Daios, A.; Kladovasilakis, N.; Kelemis, A.; Kostavelis, I. AI applications in supply chain management: A survey. Appl. Sci. 2025, 15, 2775.
4. Daios, A.; Kostavelis, I. Industry 4.0 Technologies in Distribution Centers: A Survey. In Supply Chains, Proceedings of the 5th Olympus International Conference; Springer: Berlin/Heidelberg, Germany, 2024; pp. 3–11.
5. Daios, A.; Xanthopoulos, A.; Folinas, D.; Kostavelis, I. Towards automating stocktaking in warehouses: Challenges, trends, and reliable approaches. Procedia Comput. Sci. 2024, 232, 1437–1445.
6. Daios, A.; Kladovasilakis, N.; Kostavelis, I. Mixed Palletizing for Smart Warehouse Environments: Sustainability Review of Existing Methods. Sustainability 2024, 16, 1278.
7. Sun, X.; Yu, H.; Solvang, W.D.; Wang, Y.; Wang, K. The application of Industry 4.0 technologies in sustainable logistics: A systematic literature review (2012–2020) to explore future research opportunities. Environ. Sci. Pollut. Res. 2022, 29, 9560–9591.
8. Kadir, B.A.; Broberg, O.; da Conceição, C.S. Current research and future perspectives on human factors and ergonomics in Industry 4.0. Comput. Ind. Eng. 2019, 137, 106004.
9. Scorgie, D.; Feng, Z.; Paes, D.; Parisi, F.; Yiu, T.; Lovreglio, R. Virtual reality for safety training: A systematic literature review and meta-analysis. Saf. Sci. 2024, 171, 106372.
10. Freina, L.; Ott, M. A literature review on immersive virtual reality in education: State of the art and perspectives. In Proceedings of the International Scientific Conference Elearning and Software for Education; SCIRP Open Access: Irvine, CA, USA, 2019; Volume 1, pp. 10–1007.
11. Li, S.; Wang, Q.C.; Wei, H.H.; Chen, J.H. Extended reality (XR) training in the construction industry: A content review. Buildings 2024, 14, 414.
12. Rizzuto, M.A.; Sonne, M.W.; Vignais, N.; Keir, P.J. Evaluation of a virtual reality head mounted display as a tool for posture assessment in digital human modelling software. Appl. Ergon. 2019, 79, 1–8.
13. Man, S.S.; Wen, H.; So, B.C.L. Are virtual reality applications effective for construction safety training and education? A systematic review and meta-analysis. J. Saf. Res. 2024, 88, 230–243.
14. Reljić, V.; Milenković, I.; Dudić, S.; Šulc, J.; Bajči, B. Augmented reality applications in industry 4.0 environment. Appl. Sci. 2021, 11, 5592.
15. Mulero-Pérez, D.; Zambrano-Serrano, B.; Ruiz Zúñiga, E.; Fernandez-Vega, M.; Garcia-Rodriguez, J. Enhancing Robotics Education Through XR Simulation: Insights from the X-RAPT Training Framework. Appl. Sci. 2025, 15, 10020.
16. Diego-Mas, J.A.; Alcaide-Marzal, J.; Poveda-Bautista, R. Effects of using immersive media on the effectiveness of training to prevent ergonomics risks. Int. J. Environ. Res. Public Health 2020, 17, 2592.
17. Memmesheimer, V.M.; Ebert, A. Scalable extended reality: A future research agenda. Big Data Cogn. Comput. 2022, 6, 12.
18. Hignett, S.; McAtamney, L. Rapid entire body assessment (REBA). Appl. Ergon. 2000, 31, 201–205.
19. MASTER-XR Project Consortium. MANIPULAY XR: Playfully Learning Basics of Manipulators and Kinematics in Extended Reality, 2026. Available online: https://www.master-xr.eu/oc-project/manipulay-xr/ (accessed on 7 February 2026).
20. MASTER-XR Project Consortium. EMPOWER: Enhancing Workplace Safety Through XR-Based Digital Assistance, 2026. Available online: https://www.master-xr.eu/oc-project/empower/ (accessed on 7 February 2026).
21. MASTER-XR Project Consortium. ERGON-XR: Ergonomics Assessment in XR Environments, 2026. Available online: https://www.master-xr.eu/oc-project/ergon-xr/ (accessed on 7 February 2026).
22. Barz, M.; Karagiannis, P.; Kildal, J.; Rivera Pinto, A.; de Munain, J.R.; Rosel, J.; Madarieta, M.; Salagianni, K.; Aivaliotis, P.; Makris, S.; et al. MASTER-XR: Mixed Reality Ecosystem for Teaching Robotics in Manufacturing. In Integrated Systems: Data Driven Engineering; Springer: Berlin/Heidelberg, Germany, 2024; pp. 167–182.
23. European Commission CORDIS. MASTER—Mixed Reality Ecosystem for Teaching Robotics in Manufacturing (Project 101093079). Available online: https://cordis.europa.eu/project/id/101093079 (accessed on 7 February 2026).
24. Chan, W.P.; Crouch, M.; Hoang, K.; Chen, C.; Robinson, N.; Croft, E. Design and implementation of a human-robot joint action framework using augmented reality and eye gaze. arXiv 2022, arXiv:2208.11856.
25. Xia, G.; Ghrairi, Z.; Wuest, T.; Hribernik, K.; Heuermann, A.; Liu, F.; Liu, H.; Thoben, K.D. Towards human modeling for human-robot collaboration and digital twins in industrial environments: Research status, prospects, and challenges. Robot. Comput.-Integr. Manuf. 2025, 95, 103043.
26. Dodoo, J.E.; Al-Samarraie, H.; Alzahrani, A.I.; Tang, T. XR and Workers’ safety in High-Risk Industries: A comprehensive review. Saf. Sci. 2025, 185, 106804.
27. Wang, Z.; Bai, X.; Zhang, S.; Billinghurst, M.; He, W.; Wang, P.; Lan, W.; Min, H.; Chen, Y. A comprehensive review of augmented reality-based instruction in manual assembly, training and repair. Robot. Comput.-Integr. Manuf. 2022, 78, 102407.
28. Palmarini, R.; Erkoyuncu, J.A.; Roy, R.; Torabmostaedi, H. A systematic review of augmented reality applications in maintenance. Robot. Comput.-Integr. Manuf. 2018, 49, 215–228.
29. ISO 10218-2:2025; Robotics—Safety Requirements—Part 2: Industrial Robot Applications and Robot Cells. International Organization for Standardization: Geneva, Switzerland, 2025. Available online: https://www.iso.org/standard/73934.html (accessed on 7 February 2026).
30. McAtamney, L.; Corlett, E.N. RULA: A survey method for the investigation of work-related upper limb disorders. Appl. Ergon. 1993, 24, 91–99.
31. Winfield, A.F.; Jirotka, M. Ethical governance is essential to building trust in robotics and artificial intelligence systems. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2018, 376, 20180085.
32. Plopski, A.; Hirzle, T.; Norouzi, N.; Qian, L.; Bruder, G.; Langlotz, T. The eye in extended reality: A survey on gaze interaction and eye tracking in head-worn extended reality. ACM Comput. Surv. (CSUR) 2022, 55, 53.
33. Barz, M.; Bhatti, O.S.; Alam, H.M.T.; Nguyen, D.M.H.; Sonntag, D. Interactive fixation-to-AOI mapping for mobile eye tracking data based on few-shot image classification. In Proceedings of the Companion Proceedings of the 28th International Conference on Intelligent User Interfaces; ACM Digital Library: New York, NY, USA, 2023; pp. 175–178.
34. Virtualware. VIROO Studio for Unity—Documentation Overview, 2025. Available online: https://virooportal.virtualwareco.com/docs/2.5/ (accessed on 7 February 2026).
35. Kolb, D.A. Experiential Learning: Experience as the Source of Learning and Development, 2nd ed.; FT Press: Upper Saddle River, NJ, USA, 2014.
36. Kolodner, J.L. An Introduction to Case-Based Reasoning. Artif. Intell. Rev. 1992, 6, 3–34.
37. Lindgren, R.; Tscholl, M.; Wang, S.; Johnson, E. Enhancing learning and engagement through embodied interaction within a mixed reality simulation. Comput. Educ. 2016, 95, 174–187.
38. Radianti, J.; Majchrzak, T.A.; Fromm, J.; Wohlgenannt, I. A systematic review of immersive virtual reality applications for higher education: Design elements, lessons learned, and research agenda. Comput. Educ. 2020, 147, 103778.
39. Coeckelbergh, M. AI Ethics; MIT Press: Cambridge, MA, USA, 2020.
40. Anderson, L.W.; Krathwohl, D.R. A Taxonomy for Learning, Teaching, and Assessing: A Revision of Bloom’s Taxonomy of Educational Objectives, Complete Edition; Addison Wesley Longman, Inc.: Saddle River, NJ, USA, 2001.
41. Brooke, J. SUS: A “Quick and Dirty” Usability Scale. In Usability Evaluation in Industry; Jordan, P.W., Thomas, B., Weerdmeester, B.A., McClelland, I.L., Eds.; Taylor & Francis: London, UK, 1996; pp. 189–194.

| Dimension | Prior XR Training Systems | HRC-XR Trainer Framework |
|---|---|---|
| Ergonomics integration | Partial | Integrated |
| Scalability | Limited | High |
| Multimodal feedback | Partial | Integrated |
| Pedagogical structure | Limited | Structured |

| Goal | Description | Pedagogical Orientation |
|---|---|---|
| G1 Accessibility | Deployment on consumer-grade XR hardware to support participation by non-specialist users across varied logistics contexts. | Supports inclusive access, affordability, and scalable training deployment. |
| G2 Embodied Understanding | Integration of natural interaction mechanisms such as gaze inference, controller-based manipulation, and posture-oriented cues, subject to hardware capabilities. | Reinforces embodied cognition and promotes ergonomics-aware interaction during task execution. |
| G3 Progressive Scaffolding | Structuring of learning activities into staged modules progressing from initial familiarisation to applied practice and reflective evaluation. | Supports constructivist learning progression and gradual competency development [40]. |
| G4 Immediate Multimodal Feedback | Provision of real-time visual and auditory feedback to support behavioural correction and adaptive task execution during interaction. | Strengthens experiential learning through responsive, context-sensitive system feedback. |
| G5 Safety and Responsibility Awareness | Integration of safety-oriented interaction logic designed to promote awareness of personal responsibility and adaptive behaviour in shared human–robot workspaces. | Encourages reflective judgement and safety-conscious conduct within collaborative task contexts. |
| G6 Reusability and Sustainability | Adoption of modular SDK-based integration within the MASTER-XR ecosystem to support extensibility and reuse across training configurations. | Enables interoperability, long-term adaptability, and sustainable system evolution. |
| G7 Evaluation and Analytics | Collection of anonymised interaction and performance data to support analysis of learning progression and ergonomic exposure. | Facilitates evidence-based validation and iterative system refinement. |
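
As an illustration of goal G7, the following minimal Python sketch shows one way an interaction event could be stored without the raw participant identifier. It is not the authors' implementation: the `TelemetryEvent` fields, the function names, and the example values are all hypothetical. A keyed hash of this kind is strictly pseudonymisation; whether it amounts to anonymisation depends on how the key is managed and destroyed.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class TelemetryEvent:
    """Hypothetical record layout; field names are assumptions, not from the paper."""
    participant: str   # pseudonymous token, never the raw identifier
    module: str        # e.g. "M1", "M2", "M3"
    event: str         # free-form event label
    t_seconds: float   # time since session start


def pseudonymise(participant_id: str, secret_key: bytes) -> str:
    """Derive a stable token from an identifier using HMAC-SHA256."""
    digest = hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def make_event(participant_id: str, secret_key: bytes,
               module: str, event: str, t_seconds: float) -> TelemetryEvent:
    """Build an event that carries only the pseudonymous token."""
    token = pseudonymise(participant_id, secret_key)
    return TelemetryEvent(token, module, event, t_seconds)


def to_json_line(ev: TelemetryEvent) -> str:
    """Serialise one event as a single JSON line for append-only logging."""
    return json.dumps(asdict(ev), sort_keys=True)
```

The same identifier always maps to the same token under a given key, so repeated sessions can be linked for analysis of learning progression without the log containing the identifier itself.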

| Module | Learning Focus | Implemented Toolkits | Primary Feedback Modalities |

|---|---|---|---|

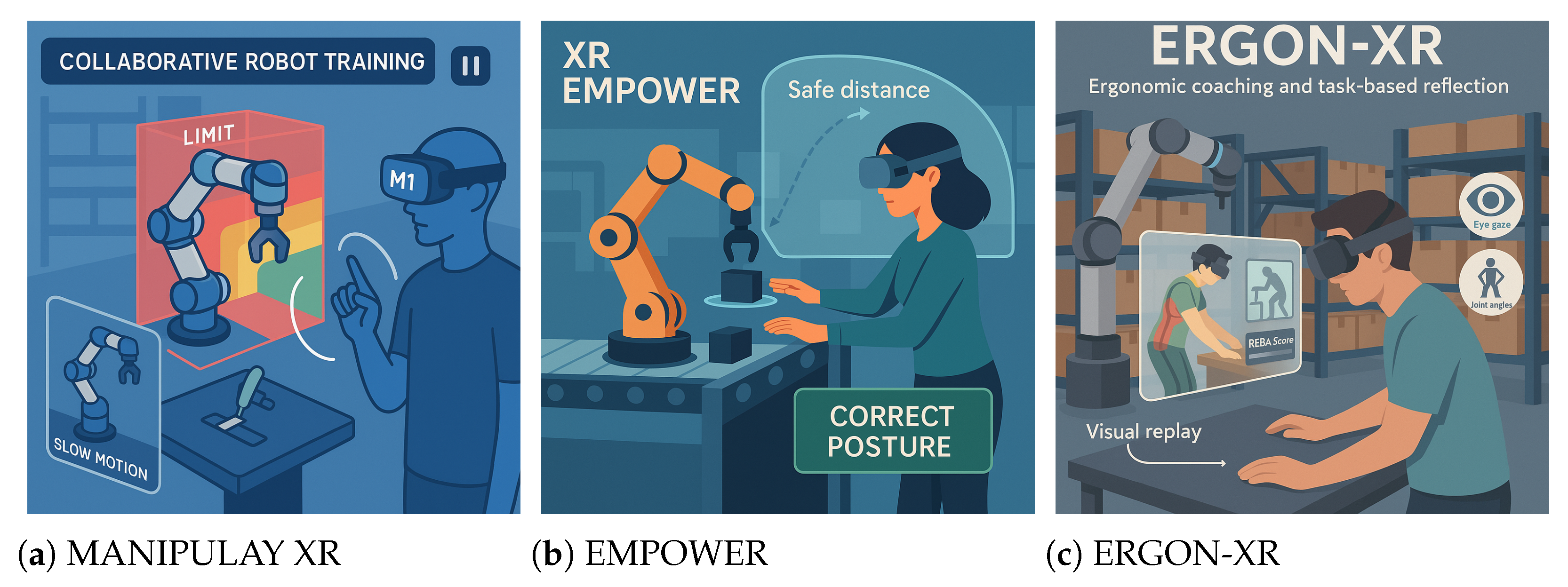

| M1 Foundational Awareness | Understanding robotic structure, joint kinematics, motion limits, and reachable workspace in relation to safe interaction zones | MANIPULAY XR | Visual feedback supporting guided assembly, joint manipulation, reach testing, and spatial reasoning during structured tasks. |

| M2 Experiential Safe-Behaviour Practice | Development of embodied safety awareness and coordination skills in shared human–robot workspaces | EMPOWER | Real-time visual and auditory cues indicating proximity, unsafe posture, and behavioural adaptation during collaborative task execution. |

| M3 Reflective Ergonomic Evaluation | Interpretation of ergonomic exposure and movement patterns during logistics-oriented pick-and-place activities | ERGON-XR | Post-task and near-real-time feedback based on posture indicators, task timing, and REBA-aligned risk visualisations. |
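
The REBA-aligned risk visualisations mentioned for M3 presumably rest on the banding of the final REBA score into action levels defined by Hignett and McAtamney [18]. The sketch below encodes that published banding only. The function name is hypothetical, and the way ERGON-XR actually derives or displays its indicators is not specified here.

```python
def reba_risk_band(score: int) -> str:
    """Map a final REBA score (1 to 15) to its published risk band.

    Bands follow the REBA action levels: 1 negligible, 2-3 low,
    4-7 medium, 8-10 high, 11-15 very high.
    """
    if not 1 <= score <= 15:
        raise ValueError("REBA scores range from 1 to 15")
    if score == 1:
        return "negligible"
    if score <= 3:
        return "low"
    if score <= 7:
        return "medium"
    if score <= 10:
        return "high"
    return "very high"
```

A feedback layer could then attach a colour or cue to each band, which keeps the presentation logic separate from the scoring itself.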

| Module | Learning Objective | Assessment Indicator |
|---|---|---|
| M1 Foundational Awareness | Recognition of robot components, joint constraints, reachable workspace, and designated safety zones. | Task completion success, assembly accuracy, and configuration consistency. |
| M2 Experiential Safe-Behaviour Practice | Execution of safe spatial coordination and ergonomically appropriate posture during shared HRC tasks. | Posture deviation indicators, proximity threshold compliance, and task interruption frequency. |
| M3 Reflective Ergonomic Evaluation | Interpretation of ergonomic exposure and identification of corrective adjustments based on task feedback. | REBA-aligned risk indicators, posture stability measures, and variation across repeated task execution. |
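
One possible reading of the M2 indicator "proximity threshold compliance" is the share of telemetry samples in which the learner stays at or beyond a minimum separation distance from the robot. The sketch below assumes uniformly sampled human–robot distances in metres and a caller-supplied threshold. Both function names and the example threshold are hypothetical, and counting violation episodes is offered only as a rough proxy for how often a task might be interrupted.

```python
from typing import Sequence


def proximity_compliance(distances_m: Sequence[float], threshold_m: float) -> float:
    """Fraction of samples at or beyond the separation threshold."""
    if not distances_m:
        raise ValueError("at least one distance sample is required")
    compliant = sum(1 for d in distances_m if d >= threshold_m)
    return compliant / len(distances_m)


def count_violation_episodes(distances_m: Sequence[float], threshold_m: float) -> int:
    """Count contiguous runs of samples that fall below the threshold."""
    episodes = 0
    in_violation = False
    for d in distances_m:
        violating = d < threshold_m
        if violating and not in_violation:
            episodes += 1  # a new run of below-threshold samples begins
        in_violation = violating
    return episodes


# Example with an assumed 0.5 m threshold (illustrative value only).
samples = [1.0, 0.8, 0.4, 0.3, 0.9, 0.45, 1.2]
compliance = proximity_compliance(samples, 0.5)
episodes = count_violation_episodes(samples, 0.5)
```

In this example four of the seven samples are compliant and the learner enters the restricted zone twice. Reporting both numbers distinguishes a single long incursion from repeated brief ones.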

| Category | Measure | Instrument/Source |
|---|---|---|
| Cognitive Learning | Change in conceptual understanding across training modules | Pre- and post-intervention multiple-choice assessment and structured task checklist |
| Ergonomic Behaviour | REBA-aligned proxy indicators and posture stability measures | Ergonomics assessment supported by the ERGON-XR toolkit |
| User Experience | Perceived usability and interaction clarity | System Usability Scale [41] questionnaire administered post-session |
| Task Performance | Task completion success, execution time, and error occurrence | Application-level performance metrics recorded during training sessions |
| Qualitative Feedback | Participant reflections and observed interaction patterns | Semi-structured interviews and structured observation records |
| System Performance | Frame rate stability and interaction responsiveness | Runtime diagnostics from Unity and device-level telemetry |
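
For the User Experience measure, the System Usability Scale [41] is scored with a fixed rule: each of the ten items is answered on a 1 to 5 scale, odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a score between 0 and 100. A minimal sketch of that standard rule follows; the function name is hypothetical.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Compute the System Usability Scale score (0 to 100) from ten responses."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += r - 1      # odd items are positively worded
        else:
            total += 5 - r      # even items are negatively worded
    return total * 2.5
```

A participant who answers 3 to every item scores 50, which reflects the midpoint of the scale rather than a percentage of satisfied users.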

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Daios, A.; Kostavelis, I. A Human-Centred Extended Reality (XR) System for Safe Human–Robot Collaboration (HRC) in Smart Logistics. Systems 2026, 14, 348. https://doi.org/10.3390/systems14040348
