Machine Learning-Driven Ubiquitous Mobile Edge Computing as a Solution to Network Challenges in Next-Generation IoT

Abstract

1. Introduction

2. Literature Review

| Author and Year | Technique | Objective | Pros | Cons |
|---|---|---|---|---|
| Yu et al., 2021 [10] | Deep imitation learning-driven caching and offloading algorithm | To reduce satellite resource usage and task completion time | Achieved real-time decision-making | Implementation time was high |
| Ai et al., 2023 [11] | Hierarchical spatio-temporal monitoring (HSTM) | To predict dynamic services | Enhanced offloading efficiency | High computational cost and large memory requirement |
| Sood, 2021 [12] | Smart traffic management approach | To predict traffic inflow and enhance smart vehicle navigation | More effective, with better load balancing | Energy demands make it unsuitable for resource-restricted mobile devices |
| Mazumdar et al., 2021 [13] | Load-offloading method | To deliver service within a suitable time, supporting only static IoT devices | Reduces the amount of data sent to the cloud | Communication delay and high network complexity |
| Shah, 2018 [15] | Empirical multi-agent cognitive method | To attain consecutive transition of IoT APIs | Achieves transparency in the heterogeneity and distribution of IoT APIs | Designing future connected ecosystems remains a challenge |
| Bolettieri et al., 2021 [16] | Heuristic algorithm with linear relaxation and rounding techniques | To minimize complexity | High efficiency | Not effective in handling inconsistent traffic demands |
| Chien and Huang, 2021 [14] | Convolutional neural network (CNN) | To reduce the time cost of data transmission to the cloud server | Reduced training time and increased prediction accuracy | Using large amounts of data substantially affected performance |
| Abbasi et al., 2021 [18] | Genetic algorithm (GA) | To enhance management and processing of IoT and smart grid | Reduced delay and power consumption | Deep neural networks were required to solve the multi-objective optimization problems |
| Liao and Cheng, 2023 [19] | Reputation- and voting-based blockchain consensus (RVC) | To address issues such as reduced consensus efficiency | Improved consensus success rate and transaction output; reduced time consumption | Required a large number of nodes |
| Karjee et al., 2022 [20] | Deep neural network (DNN) | To alleviate the issues of partial offloading | Minimizes the overall execution time of each task | Constrained by computational capabilities |
| Mutichiro et al., 2021 [24] | Dynamic pod-scheduling model | To solve the task scheduling problem at the edge | Maximizes node utilization, minimizes cost, and optimizes service time | Considers few constraints on resource capacity (CPU and memory) and total service time |

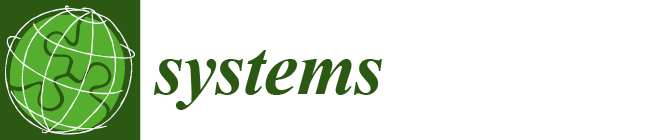

3. Proposed Methodology

3.1. Resource Allocation and QoS Optimization Objective Function

3.2. Hybrid Kernel Random Forest and Ensemble SVM Algorithm

3.2.1. Kernel Random Forest Algorithm

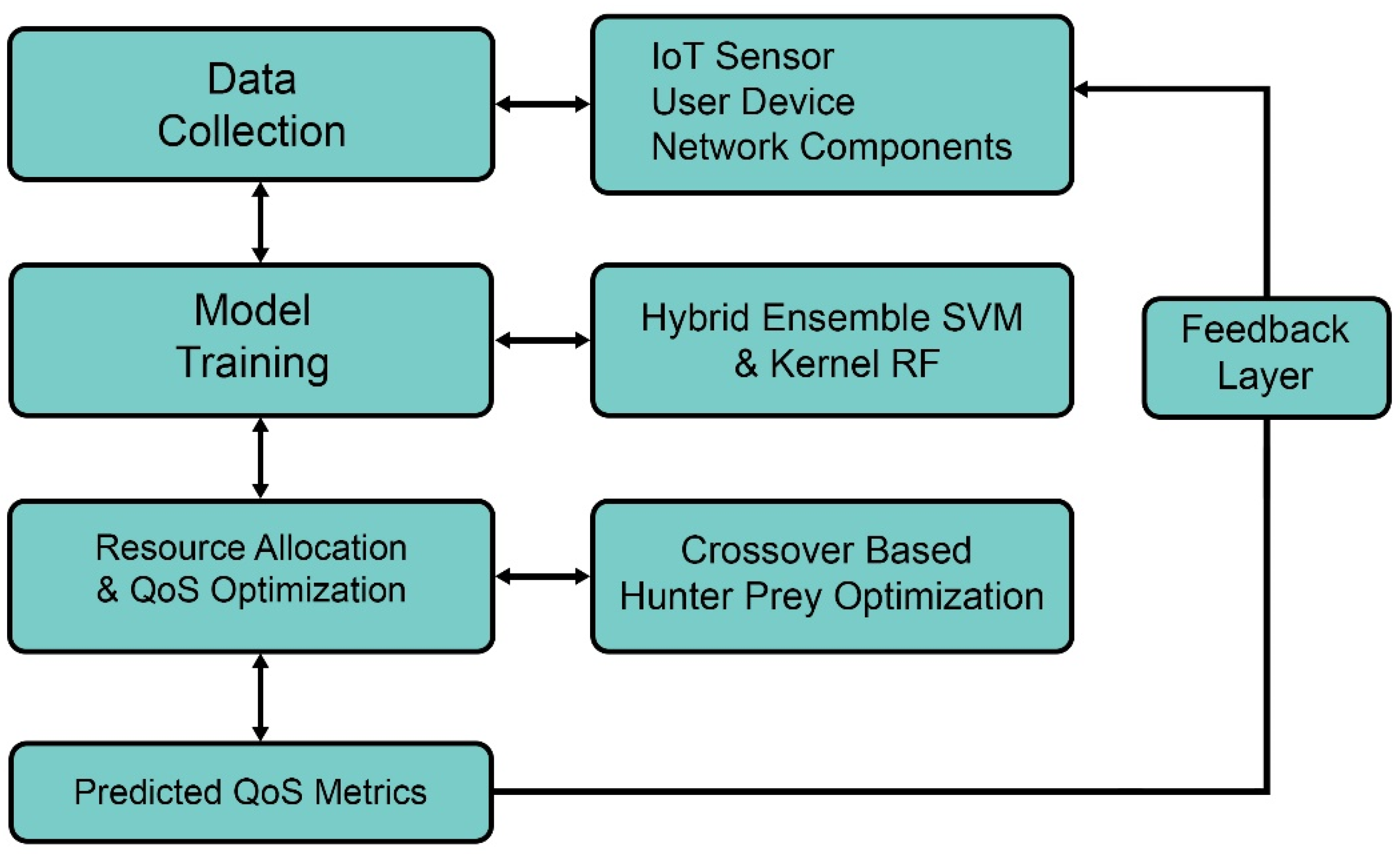

3.2.2. Ensemble SVM Algorithm

3.2.3. Hybrid Kernel Random Forest and Ensemble SVM Algorithm

3.3. Hunter–Prey Optimization (HPO)

Crossover-Based Hunter–Prey Optimization

4. Results and Discussion

4.1. Parameter Settings

4.2. Performance Measures

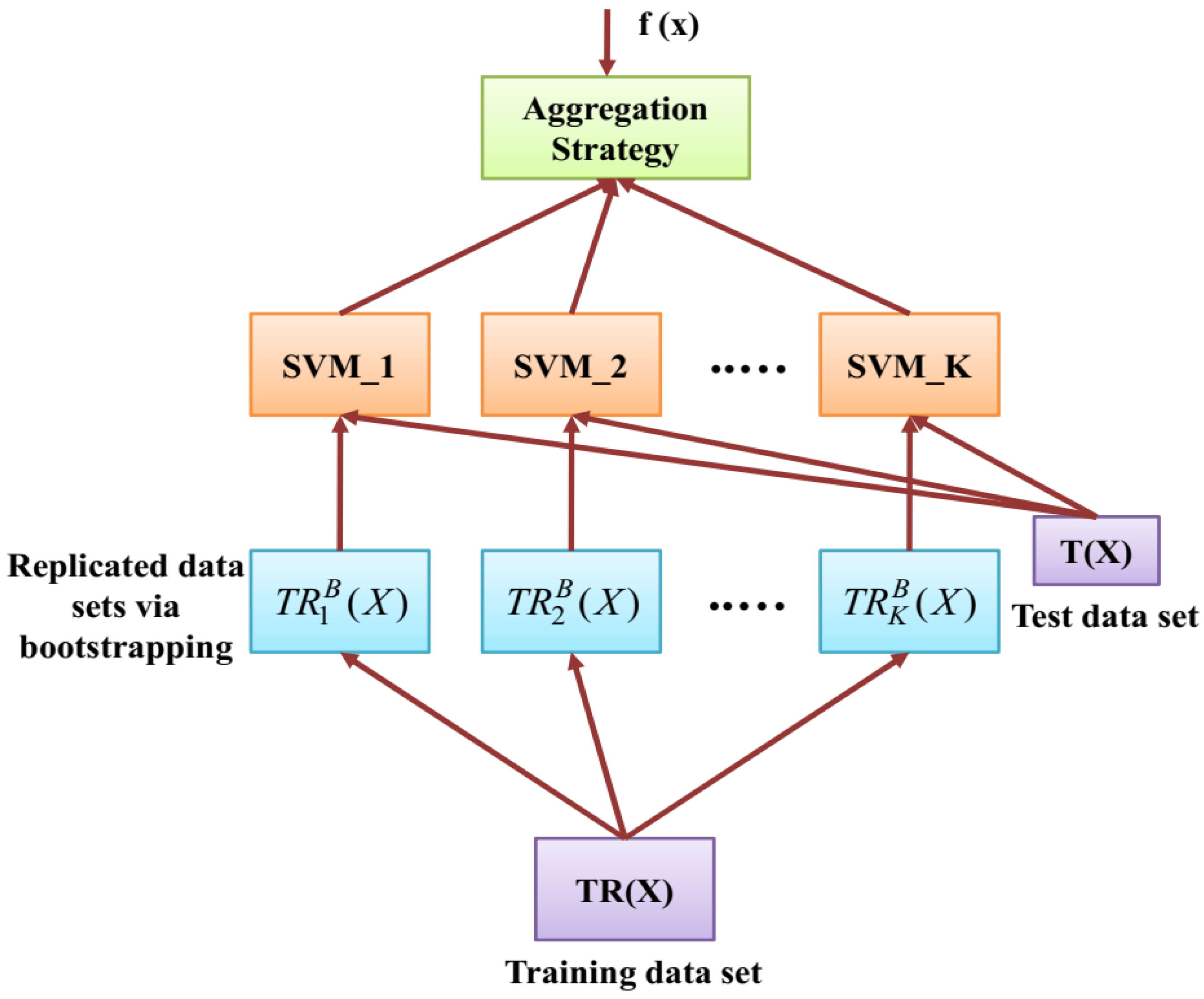

- Throughput

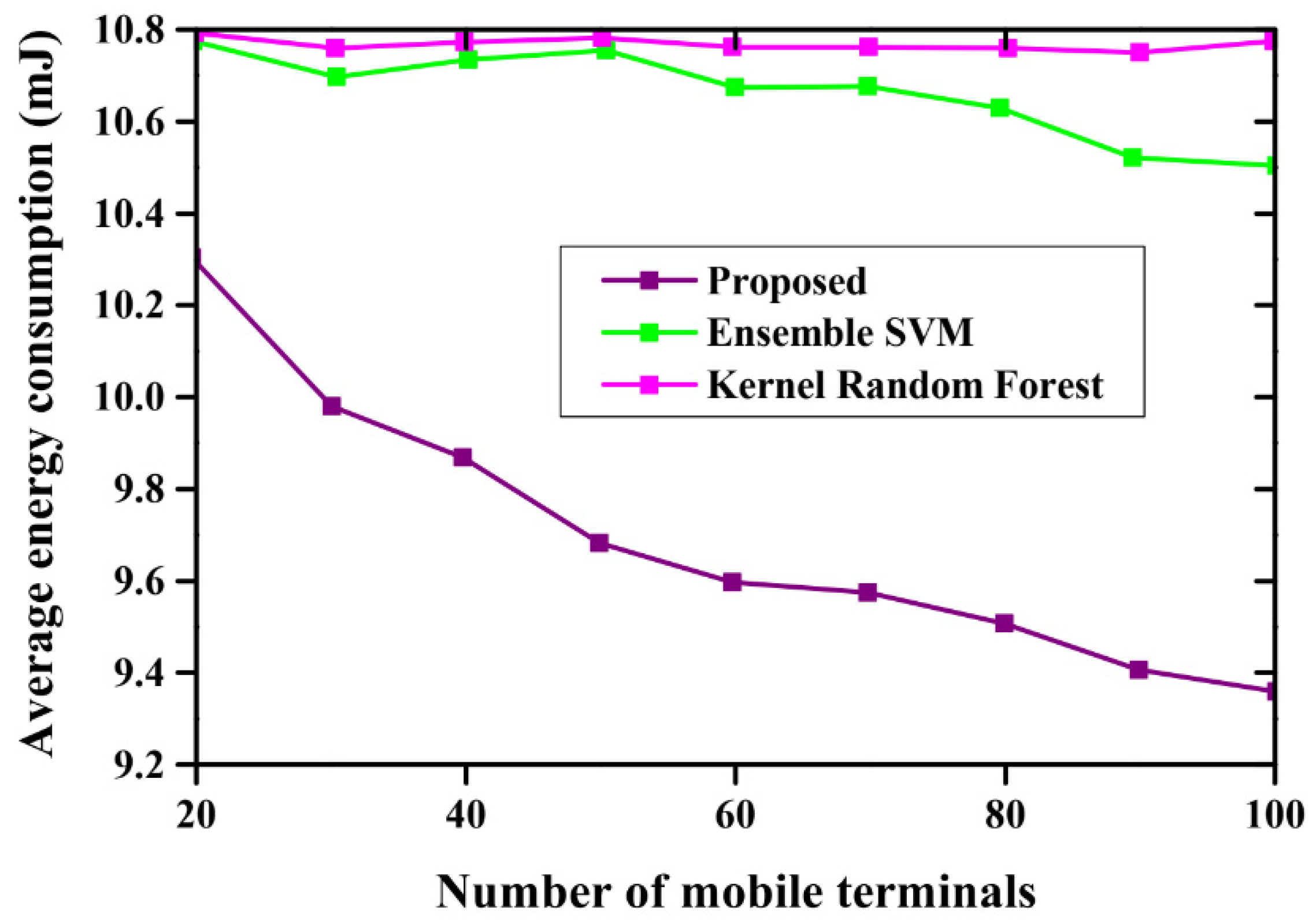

- Energy consumption

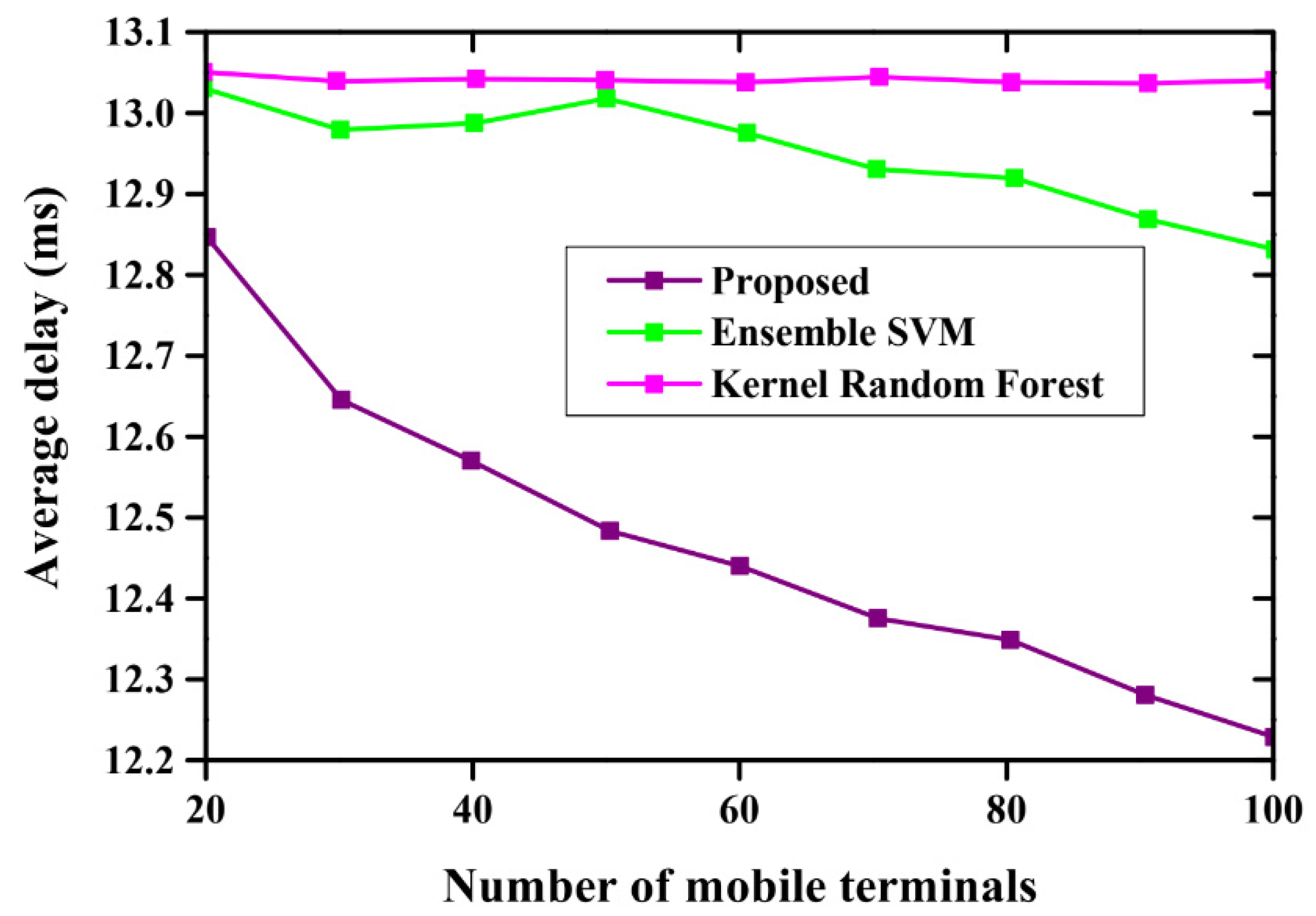

- Delay

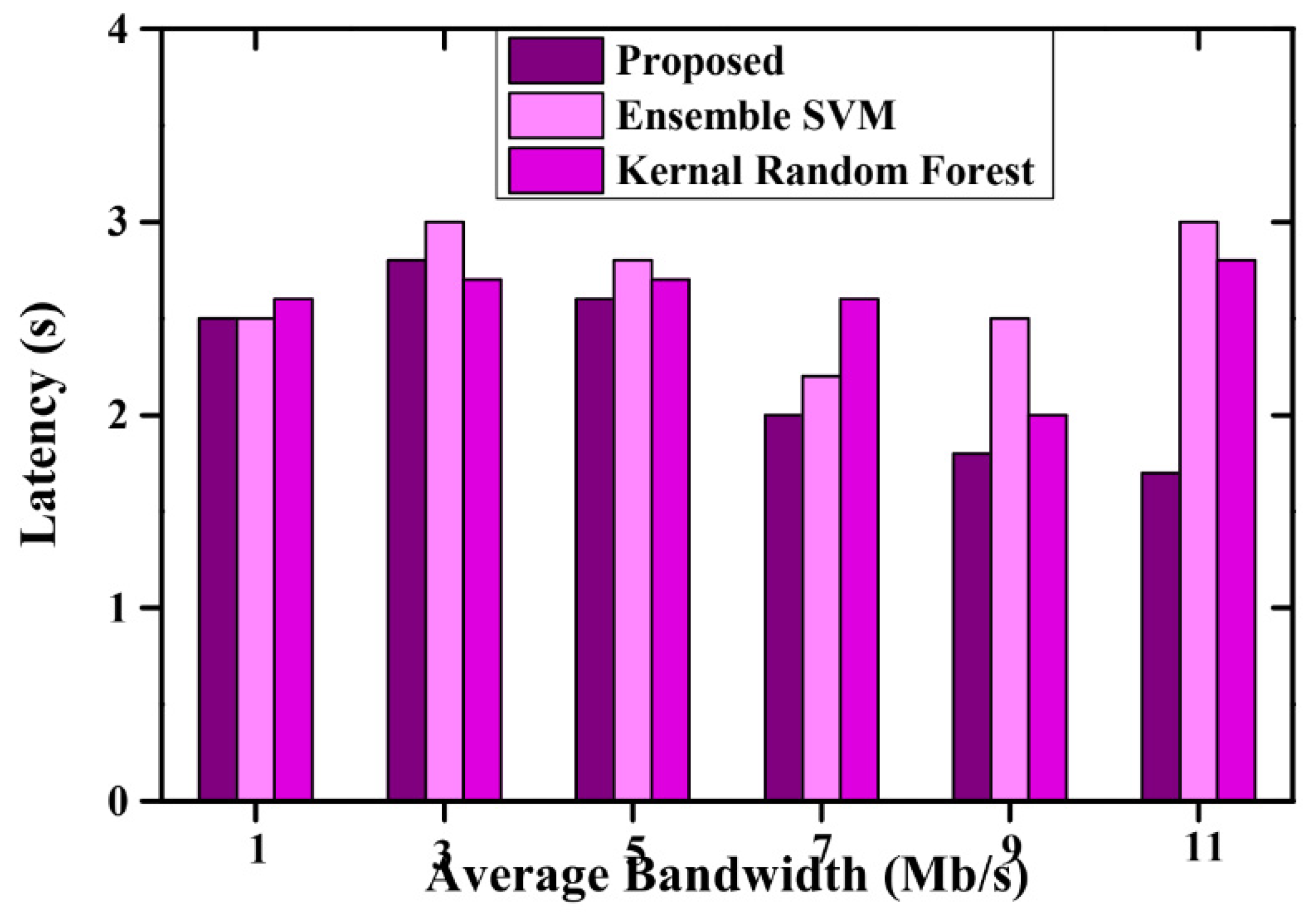

- Latency

4.3. Performance Evaluation

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Shahraki, A.; Ohlenforst, T.; Kreyß, F. When machine learning meets network management and orchestration in Edge-based networking paradigms. J. Netw. Comput. Appl. 2022, 212, 103558.
2. Chen, P.; Liu, H.; Xin, R.; Carval, T.; Zhao, J.; Xia, Y.; Zhao, Z. Effectively Detecting Operational Anomalies in Large-Scale IoT Data Infrastructures by Using A GAN-Based Predictive Model. Comput. J. 2022, 65, 2909–2925.
3. Adil, M. Congestion-free opportunistic multipath routing load balancing scheme for the Internet of Things (IoT). Comput. Netw. 2021, 184, 107707.
4. Shakarami, A.; Ghobaei-Arani, M.; Shahidinejad, A. A survey on the computation offloading approaches in mobile edge computing: A machine learning-based perspective. Comput. Netw. 2020, 182, 107496.
5. Li, J.; Deng, Y.; Sun, W.; Li, W.; Li, R.; Li, Q.; Liu, Z. Resource Orchestration of Cloud-Edge–Based Smart Grid Fault Detection. ACM Trans. Sen. Netw. 2022, 18, 1–26.
6. Wang, Y.; Han, X.; Jin, S. MAP based modeling method and performance study of a task offloading scheme with time-correlated traffic and VM repair in MEC systems. Wirel. Netw. 2022, 29, 47–68.
7. Liu, J.; Lin, C.H.R.; Hu, Y.C.; Donta, P.K. Joint beamforming, power allocation, and splitting control for SWIPT-enabled IoT networks with deep reinforcement learning and game theory. Sensors 2022, 22, 2328.
8. Scornet, E. Random forests and kernel methods. IEEE Trans. Inf. Theory 2016, 62, 1485–1500.
9. Kim, H.C.; Pang, S.; Je, H.M.; Kim, D.; Bang, S.Y. Constructing support vector machine ensemble. Pattern Recognit. 2003, 36, 2757–2767.
10. Yu, S.; Gong, X.; Shi, Q.; Wang, X.; Chen, X. EC-SAGINs: Edge-computing-enhanced space–air–ground-integrated networks for the internet of vehicles. IEEE Internet Things J. 2021, 9, 5742–5754.
11. Ai, Z.; Zhang, W.; Li, M.; Li, P.; Shi, L. A smart collaborative framework for dynamic multi-task offloading in IIoT-MEC networks. Peer-Peer Netw. Appl. 2023, 16, 749–764.
12. Sood, S.K. Smart vehicular traffic management: An edge cloud-centric IoT-based framework. Internet Things 2021, 14, 100140.
13. Mazumdar, N.; Nag, A.; Singh, J.P. Trust-based load-offloading protocol to reduce service delays in fog-computing-empowered IoT. Comput. Electr. Eng. 2021, 93, 107223.
14. Chien, W.C.; Huang, Y.M. A lightweight model with spatial–temporal correlation for cellular traffic prediction in the Internet of Things. J. Supercomput. 2021, 77, 10023–10039.
15. Shah, V.S. Multi-agent cognitive architecture-enabled IoT applications of mobile edge computing. Ann. Telecommun. 2018, 73, 487–497.
16. Bolettieri, S.; Bruno, R.; Mingozzi, E. Application-aware resource allocation and data management for MEC-assisted IoT service providers. J. Netw. Comput. Appl. 2021, 181, 103020.
17. Hashash, O.; Sharafeddine, S.; Dawy, Z.; Mohamed, A.; Yaacoub, E. Energy-aware distributed edge ML for mhealth applications with strict latency requirements. IEEE Wirel. Commun. Lett. 2021, 10, 2791–2794.
18. Abbasi, M.; Mohammadi-Pasand, E.; Khosravi, M.R. Intelligent workload allocation in IoT–Fog–cloud architecture towards mobile edge computing. Comput. Commun. 2021, 169, 71–80.
19. Liao, Z.; Cheng, S. RVC: A reputation and voting based blockchain consensus mechanism for edge computing-enabled IoT systems. J. Netw. Comput. Appl. 2023, 209, 103510.
20. Karjee, J.; Naik, P.; Anand, K.; Bhargav, V.N. Split computing: DNN inference partition with load balancing in IoT-edge platform for beyond 5G. Meas. Sens. 2022, 23, 100409.
21. Elshahed, M.; El-Rifaie, A.M.; Tolba, M.A.; Ginidi, A.; Shaheen, A.; Mohamed, S.A. An Innovative Hunter-Prey-Based Optimization for Electrically Based Single-, Double-, and Triple-Diode Models of Solar Photovoltaic Systems. Mathematics 2022, 10, 4625.
22. Chen, W.; Zhu, Y.; Liu, J.; Chen, Y. Enhancing Mobile Edge Computing with Efficient Load Balancing Using Load Estimation in Ultra-Dense Network. Sensors 2021, 21, 3135.
23. Poryazov, S.A.; Saranova, E.T.; Andonov, V.S. Overall Model Normalization towards Adequate Prediction and Presentation of QoE in Overall Telecommunication Systems. In Proceedings of the 2019 14th International Conference on Advanced Technologies, Systems and Services in Telecommunications (TELSIKS), Nis, Serbia, 23–25 October 2019; pp. 360–363.
24. Mutichiro, B.; Tran, M.N.; Kim, Y.H. QoS-based service-time scheduling in the IoT-edge cloud. Sensors 2021, 21, 5797.
25. Xu, J.; Ma, R.; Stankovski, S.; Liu, X.; Zhang, X. Intelligent Dynamic Quality Prediction of Chilled Chicken with Integrated IoT Flexible Sensing and Knowledge Rules Extraction. Foods 2022, 11, 836.
26. Jiang, H.; Dai, X.; Xiao, Z.; Iyengar, A.K. Joint Task Offloading and Resource Allocation for Energy-Constrained Mobile Edge Computing. IEEE Trans. Mob. Comput. 2022, 22, 4000–4015.
27. Banoth, S.P.R.; Donta, P.K.; Amgoth, T. Target-aware distributed coverage and connectivity algorithm for wireless sensor networks. Wirel. Netw. 2023, 29, 1815–1830.
28. Saab, S., Jr.; Saab, K.; Phoha, S.; Zhu, M.; Ray, A. A multivariate adaptive gradient algorithm with reduced tuning efforts. Neural Netw. 2022, 152, 499–509.
29. Rani, S.; Babbar, H.; Srivastava, G.; Gadekallu, T.R.; Dhiman, G. Security Framework for Internet of Things based Software Defined Networks using Blockchain. IEEE Internet Things J. 2022, 10, 6074–6081.
30. Liu, K.; Wang, P.; Zhang, J.; Fu, Y.; Das, S.K. Modeling the Interaction Coupling of Multi-View Spatiotemporal Contexts for Destination Prediction. In Proceedings of the 2018 SIAM International Conference on Data Mining, San Diego, CA, USA, 3–5 May 2018.

| Parameter | Value |
|---|---|
| Learning rate | 0.1 |
| Total number of trees | 50 |
| Regularization parameter | 1 |
| Maximum depth of each tree | 10 |
| Size of population | 20 |
| Total number of iterations | 50 |
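
The parameter settings above can be collected into a single configuration object so that an experiment script reads them from one place. The following is a minimal sketch, not the authors' implementation: the class name `ExperimentConfig` and all field names are hypothetical, and the evaluation-count estimate assumes that each member of the population is evaluated once per iteration, which the table itself does not state.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ExperimentConfig:
    """Values copied from the parameter table; field names are hypothetical."""

    learning_rate: float = 0.1
    n_trees: int = 50
    regularization: float = 1.0
    max_tree_depth: int = 10
    population_size: int = 20
    n_iterations: int = 50

    def validate(self) -> None:
        """Reject settings that could not describe a meaningful run."""
        if not 0.0 < self.learning_rate <= 1.0:
            raise ValueError("learning_rate must lie in (0, 1]")
        if self.regularization <= 0:
            raise ValueError("regularization must be positive")
        for name in ("n_trees", "max_tree_depth", "population_size", "n_iterations"):
            if getattr(self, name) < 1:
                raise ValueError(f"{name} must be at least 1")


config = ExperimentConfig()
config.validate()

# Assumption: one fitness evaluation per population member per iteration.
max_evaluations = config.population_size * config.n_iterations

print(asdict(config))
print("candidate evaluations (upper bound under the assumption):", max_evaluations)
```

Freezing the dataclass keeps the settings immutable during a run, so any variation for a sensitivity study has to be created explicitly as a new object.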

| Number of Trees (HKRF) | Types of Kernels (HKRF) | Number of Iterations (CHPO) | Accuracy | Computational Efficiency | Convergence Speed | Resource Utilization |
|---|---|---|---|---|---|---|
| 100 | Linear | 10 | 0.85 | High | Fast | Moderate |
| 200 | RBF | 20 | 0.87 | Moderate | Moderate | Moderate |
| 150 | Polynomial | 15 | 0.89 | High | Slow | High |
| 300 | Sigmoid | 25 | 0.82 | Low | Moderate | Low |
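
To make the comparison in the table above easier to reproduce, the rows can be ranked programmatically. This is a small illustrative sketch: the `Trial` type and its field names are hypothetical, only the numeric columns are transcribed, and ranking by accuracy alone is an assumption, since the table also reports qualitative efficiency, convergence, and utilization ratings.

```python
from typing import NamedTuple


class Trial(NamedTuple):
    """One row of the sensitivity table (numeric columns only)."""

    n_trees: int
    kernel: str
    chpo_iterations: int
    accuracy: float


# Values transcribed from the table; nothing here is newly measured.
TRIALS = [
    Trial(100, "Linear", 10, 0.85),
    Trial(200, "RBF", 20, 0.87),
    Trial(150, "Polynomial", 15, 0.89),
    Trial(300, "Sigmoid", 25, 0.82),
]

# Rank configurations from highest to lowest reported accuracy.
ranked = sorted(TRIALS, key=lambda t: t.accuracy, reverse=True)
best = ranked[0]
accuracy_spread = round(best.accuracy - ranked[-1].accuracy, 2)

for trial in ranked:
    print(f"{trial.kernel:<10} trees={trial.n_trees:<4} "
          f"iters={trial.chpo_iterations:<3} acc={trial.accuracy:.2f}")
print("best kernel:", best.kernel, "| accuracy spread:", accuracy_spread)
```

In these rows the configuration with the most trees and iterations (300, Sigmoid, 25) has the lowest accuracy, but the kernel type changes in every row, so the table alone cannot separate the effect of the kernel from that of the tree count.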

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Al Moteri, M.; Khan, S.B.; Alojail, M. Machine Learning-Driven Ubiquitous Mobile Edge Computing as a Solution to Network Challenges in Next-Generation IoT. Systems 2023, 11, 308. https://doi.org/10.3390/systems11060308