The Effectiveness of Interactive Dashboards to Optimise Antibiotic Prescribing in Primary Care: A Systematic Review

Abstract

1. Introduction

2. Results

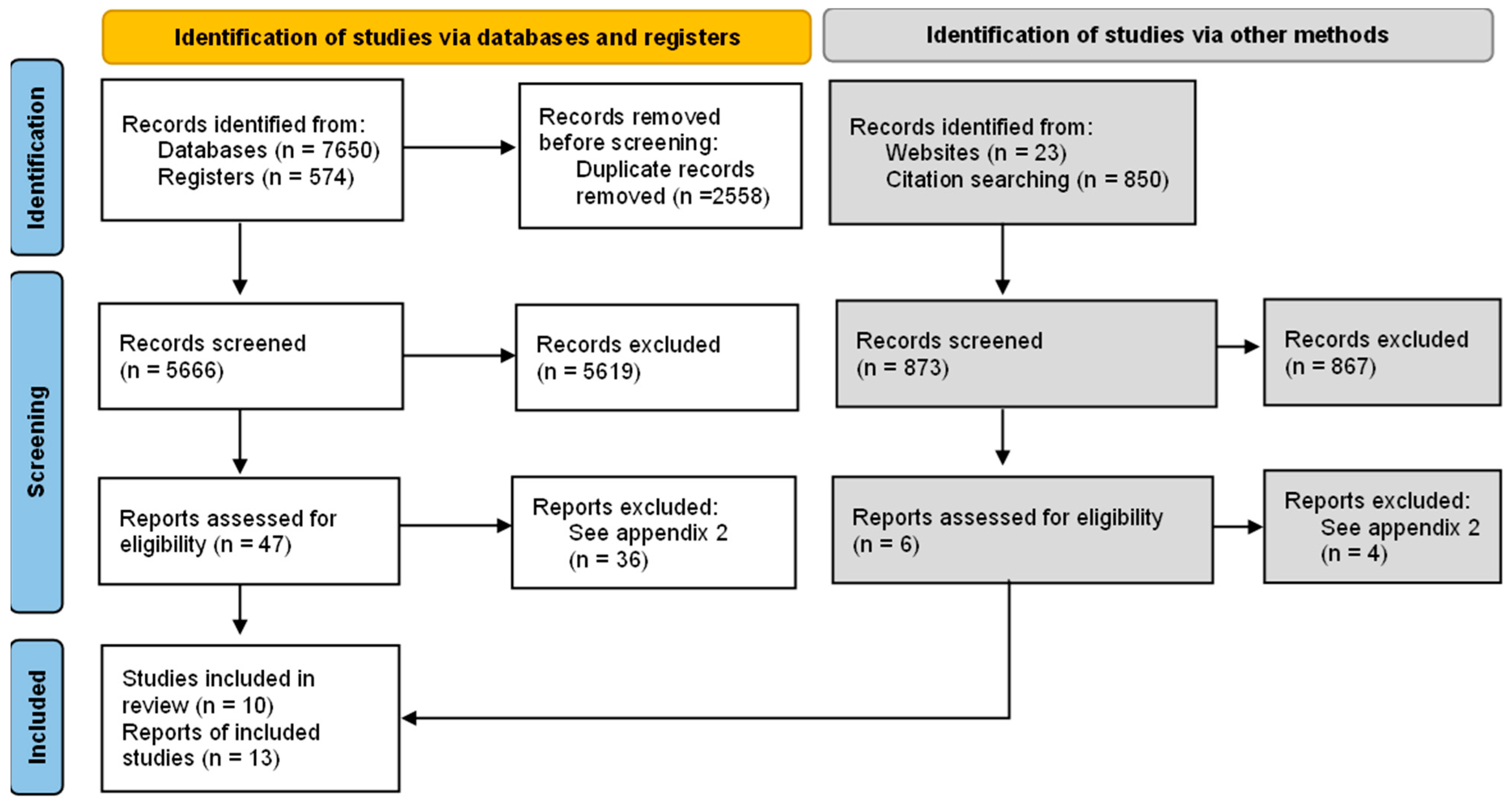

2.1. Included Studies

2.1.1. Study Design

2.1.2. Participants and Settings

2.1.3. Description of the Intervention

2.1.4. Outcomes

2.2. Excluded Studies

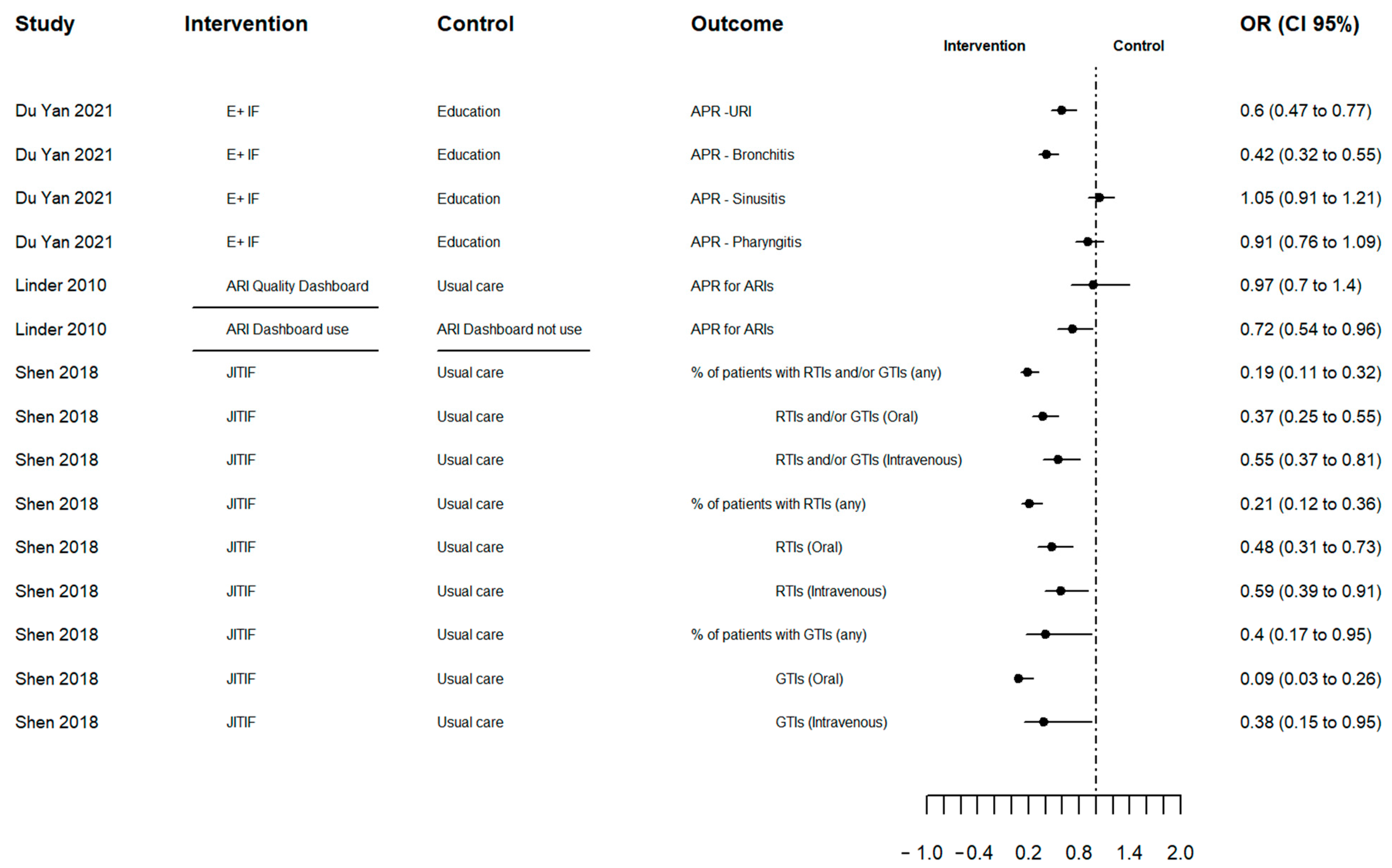

2.3. Effects of the Interventions

2.4. Risk of Bias

2.5. Grading the Quality of Evidence

3. Discussion

4. Materials and Methods

4.1. Criteria for Considering Studies for this Review

- Participants: general practices and primary care settings focused on GPs or other health professionals.

- Intervention: any intervention using prescription data presented in a visual analytical tool (i.e., a dashboard). Decision support tools incorporated as alerts or risk calculators were excluded.

- Comparator: usual care or any other intervention without visual analytical tools (dashboard).

- Outcomes of interest included:

  - Change in AP (primary outcome)

  - Prescribed antibiotic class

  - Change in prescription of inappropriate (i.e., not recommended) antibiotics

  - Antibiotic duration

  - Patients’ re-consultation

  - Dashboard engagement (not initially included in the protocol).

- Types of studies: randomised controlled trials (RCTs) and non-randomised controlled trials (non-RCTs), namely controlled before-and-after studies, interrupted time series (ITS) studies and controlled trials using non-random methods, assessing the effectiveness of dashboards that include prescription data in general practice.
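As an illustration only, the eligibility criteria above can be expressed as a simple screening check. The sketch below is not the review's actual screening tool; the record fields, design labels and function names are hypothetical and were chosen to mirror the participant, intervention, comparator and study-design criteria listed in this section.

```python
from __future__ import annotations

from dataclasses import dataclass

# Study designs accepted under the criteria above (labels are hypothetical).
ELIGIBLE_DESIGNS = {
    "rct",
    "controlled_before_after",
    "interrupted_time_series",
    "non_random_controlled_trial",
}


@dataclass
class StudyRecord:
    """Minimal screening record; all field names are assumptions."""
    primary_care_setting: bool
    uses_prescription_dashboard: bool
    alert_or_risk_calculator_only: bool
    comparator_has_dashboard: bool
    design: str


def exclusion_reasons(study: StudyRecord) -> list[str]:
    """Return every criterion the study fails (empty list means eligible)."""
    reasons = []
    if not study.primary_care_setting:
        reasons.append("setting is not general practice or primary care")
    if not study.uses_prescription_dashboard:
        reasons.append("no dashboard built on prescription data")
    if study.alert_or_risk_calculator_only:
        reasons.append("decision support delivered only as alerts or risk calculators")
    if study.comparator_has_dashboard:
        reasons.append("comparator also uses a visual analytical tool")
    if study.design not in ELIGIBLE_DESIGNS:
        reasons.append(f"ineligible study design: {study.design}")
    return reasons


def is_eligible(study: StudyRecord) -> bool:
    return not exclusion_reasons(study)
```

Returning the list of failed criteria, rather than a single yes/no flag, makes it possible to record a reason for each exclusion, which is what a study flow diagram typically reports.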

4.2. Search Methods for Identification of Studies

4.3. Data Collection and Analysis

4.3.1. Selection of Studies

4.3.2. Data Extraction and Management

4.3.3. Risk of Bias (Quality) Assessment

4.3.4. Measures of Treatment Effect

4.3.5. Missing Data

4.3.6. Assessment of Heterogeneity

4.3.7. Data Synthesis

4.3.8. Grading the Quality of Evidence

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Murray, C.J.; Ikuta, K.S.; Sharara, F.; Swetschinski, L.; Robles Aguilar, G.; Gray, A.; Han, C.; Bisignano, C.; Rao, P.; Wool, E.; et al. Global Burden of Bacterial Antimicrobial Resistance in 2019: A Systematic Analysis. Lancet 2022, 399, 629–655.

2. Klein, E.Y.; Milkowska-Shibata, M.; Tseng, K.K.; Sharland, M.; Gandra, S.; Pulcini, C.; Laxminarayan, R. Assessment of WHO Antibiotic Consumption and Access Targets in 76 Countries, 2000–2015: An Analysis of Pharmaceutical Sales Data. Lancet Infect. Dis. 2021, 21, 107–115.

3. Davies, S.C. Reducing Inappropriate Prescribing of Antibiotics in English Primary Care: Evidence and Outlook. J. Antimicrob. Chemother. 2018, 73, 833–834.

4. Research Reveals Levels of Inappropriate Prescriptions in England—GOV.UK. Available online: https://www.gov.uk/government/news/research-reveals-levels-of-inappropriate-prescriptions-in-england (accessed on 2 October 2022).

5. Richards, A.R.; Linder, J.A. Behavioral Economics and Ambulatory Antibiotic Stewardship: A Narrative Review. Clin. Ther. 2021, 43, 1654–1667.

6. European Centre for Disease Prevention and Control. Antimicrobial Consumption in the EU/EEA (ESAC-Net)—Annual Epidemiological Report 2020; European Centre for Disease Prevention: Stockholm, Sweden, 2021.

7. Tsuchida, R.E.; Haggins, A.N.; Perry, M.; Chen, C.M.; Medlin, R.P.; Meurer, W.J.; Burkhardt, J.; Fung, C.M. Developing an Electronic Health Record–Derived Health Equity Dashboard to Improve Learner Access to Data and Metrics. AEM Educ. Train. 2021, 5, S116–S120.

8. Carvalho, É.; Estrela, M.; Zapata-Cachafeiro, M.; Figueiras, A.; Roque, F.; Herdeiro, M.T. E-Health Tools to Improve Antibiotic Use and Resistances: A Systematic Review. Antibiotics 2020, 9, 505.

9. Holstiege, J.; Mathes, T.; Pieper, D. Effects of Computer-Aided Clinical Decision Support Systems in Improving Antibiotic Prescribing by Primary Care Providers: A Systematic Review. J. Am. Med. Informatics Assoc. 2015, 22, 236–242.

10. Chima, S.; Reece, J.C.; Milley, K.; Milton, S.; McIntosh, J.G.; Emery, J.D. Decision Support Tools to Improve Cancer Diagnostic Decision Making in Primary Care: A Systematic Review. Br. J. Gen. Pract. 2019, 69, e809–e818.

11. Sutton, R.T.; Pincock, D.; Baumgart, D.C.; Sadowski, D.C.; Fedorak, R.N.; Kroeker, K.I. An Overview of Clinical Decision Support Systems: Benefits, Risks, and Strategies for Success. NPJ Digit. Med. 2020, 3, 17.

12. Harada, T.; Miyagami, T.; Kunitomo, K.; Shimizu, T. Clinical Decision Support Systems for Diagnosis in Primary Care: A Scoping Review. Int. J. Environ. Res. Public Health 2021, 18, 8435.

13. Chung, G.W.; Wu, J.E.; Yeo, C.L.; Chan, D.; Hsu, L.Y. Antimicrobial Stewardship: A Review of Prospective Audit and Feedback Systems and an Objective Evaluation of Outcomes. Virulence 2013, 4, 151–157.

14. Tsang, J.Y.; Peek, N.; Buchan, I.; van der Veer, S.N.; Brown, B. Systematic Review and Narrative Synthesis of Computerized Audit and Feedback Systems in Healthcare. J. Am. Med. Inform. Assoc. 2022, 29, 1106–1119.

15. Tuti, T.; Nzinga, J.; Njoroge, M.; Brown, B.; Peek, N.; English, M.; Paton, C.; van der Veer, S.N. A Systematic Review of Electronic Audit and Feedback: Intervention Effectiveness and Use of Behaviour Change Theory. Implement. Sci. 2017, 12, 61.

16. Dowding, D.; Randell, R.; Gardner, P.; Fitzpatrick, G.; Dykes, P.; Favela, J.; Hamer, S.; Whitewood-Moores, Z.; Hardiker, N.; Borycki, E.; et al. Dashboards for Improving Patient Care: Review of the Literature. Int. J. Med. Inform. 2015, 84, 87–100.

17. Xie, C.X.; Chen, Q.; Hincapié, C.A.; Hofstetter, L.; Maher, C.G.; Machado, G.C. Effectiveness of Clinical Dashboards as Audit and Feedback or Clinical Decision Support Tools on Medication Use and Test Ordering: A Systematic Review of Randomized Controlled Trials. J. Am. Med. Inform. Assoc. 2022, 29, 1773–1785.

18. Palin, V.; Tempest, E.; Mistry, C.; Van Staa, T.P. Developing the Infrastructure to Support the Optimisation of Antibiotic Prescribing Using the Learning Healthcare System to Improve Healthcare Services in the Provision of Primary Care in England. BMJ Health Care Inform. 2020, 27, e100147.

19. BRIT Project. Available online: https://www.britanalytics.uk (accessed on 15 February 2022).

20. Daneman, N.; Lee, S.M.; Bai, H.; Bell, C.M.; Bronskill, S.E.; Campitelli, M.A.; Dobell, G.; Fu, L.; Garber, G.; Ivers, N.; et al. Population-Wide Peer Comparison Audit and Feedback to Reduce Antibiotic Initiation and Duration in Long-Term Care Facilities with Embedded Randomized Controlled Trial. Clin. Infect. Dis. 2021, 73, e1296–e1304.

21. Jones, G.F.; Fabre, V.; Hinson, J.; Levin, S.; Toerper, M.; Townsend, J.; Cosgrove, S.E.; Saheed, M.; Klein, E.Y. Improving Antimicrobial Prescribing for Upper Respiratory Infections in the Emergency Department: Implementation of Peer Comparison with Behavioral Feedback. Antimicrob. Steward. Healthc. Epidemiol. 2021, 1, e70.

22. Curtis, H.J.; Bacon, S.; Croker, R.; Walker, A.J.; Perera, R.; Hallsworth, M.; Harper, H.; Mahtani, K.R.; Heneghan, C.; Goldacre, B. Evaluating the Impact of a Very Low-Cost Intervention to Increase Practices’ Engagement with Data and Change Prescribing Behaviour: A Randomized Trial in English Primary Care. Fam. Pract. 2021, 38, 373–380.

23. Linder, J.A.; Schnipper, J.L.; Tsurikova, R.; Yu, D.T.; Volk, L.A.; Melnikas, A.J.; Palchuk, M.B.; Olsha-Yehiav, M.; Middleton, B. Electronic Health Record Feedback to Improve Antibiotic Prescribing for Acute Respiratory Infections. Am. J. Manag. Care 2010, 16, 311–319.

24. Chang, Y.; Sangthong, R.; McNeil, E.B.; Tang, L.; Chongsuvivatwong, V. Effect of a Computer Network-Based Feedback Program on Antibiotic Prescription Rates of Primary Care Physicians: A Cluster Randomized Crossover-Controlled Trial. J. Infect. Public Health 2020, 13, 1297–1303.

25. Elouafkaoui, P.; Young, L.; Newlands, R.; Duncan, E.M.; Elders, A.; Clarkson, J.E.; Ramsay, C.R. An Audit and Feedback Intervention for Reducing Antibiotic Prescribing in General Dental Practice: The RAPiD Cluster Randomised Controlled Trial. PLoS Med. 2016, 13, e1002115.

26. Du Yan, L.; Dean, K.; Park, D.; Thompson, J.; Tong, I.; Liu, C.; Hamdy, R.F. Education vs. Clinician Feedback on Antibiotic Prescriptions for Acute Respiratory Infections in Telemedicine: A Randomized Controlled Trial. J. Gen. Intern. Med. 2021, 36, 305–312.

27. Shen, X.R.; Lu, M.; Feng, R.; Cheng, J.; Chai, J.; Xie, M.; Dong, X.; Jiang, T.; Wang, D. Web-Based Just-in-Time Information and Feedback on Antibiotic Use for Village Doctors in Rural Anhui, China: Randomized Controlled Trial. J. Med. Internet Res. 2018, 20, e53.

28. Hemkens, L.G.; Saccilotto, R.; Reyes, S.L.; Glinz, D.; Zumbrunn, T.; Grolimund, O.; Gloy, V.; Raatz, H.; Widmer, A.; Zeller, A.; et al. Personalized Prescription Feedback Using Routinely Collected Data to Reduce Antibiotic Use in Primary Care: A Randomized Clinical Trial. JAMA Intern. Med. 2017, 177, 176–183.

29. Davidson, L.E.; Gentry, E.M.; Priem, J.S.; Kowalkowski, M.; Spencer, M.D. A Multimodal Intervention to Decrease Inappropriate Outpatient Antibiotic Prescribing for Upper Respiratory Tract Infections in a Large Integrated Healthcare System. Infect. Control Hosp. Epidemiol. 2022, 1–8.

30. Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 Statement: An Updated Guideline for Reporting Systematic Reviews. BMJ 2021, 372, n71.

31. Weisz, D.; Gusmano, M.K.; Wong, G.; Trombley, J. Emergency Department Use: A Reflection of Poor Primary Care Access? Am. J. Manag. Care 2015, 21, e152–e160.

32. Laur, C.; Sribaskaran, T.; Simeoni, M.; Desveaux, L.; Daneman, N.; Mulhall, C.; Lam, J.; Ivers, N.M. Improving Antibiotic Initiation and Duration Prescribing among Nursing Home Physicians Using an Audit and Feedback Intervention: A Theory-Informed Qualitative Analysis. BMJ Open Qual. 2021, 10, e001088.

33. Hemkens, L.G.; Saccilotto, R.; Reyes, S.L.; Glinz, D.; Zumbrunn, T.; Grolimund, O.; Gloy, V.; Raatz, H.; Widmer, A.; Zeller, A.; et al. Personalized Prescription Feedback to Reduce Antibiotic Overuse in Primary Care: Rationale and Design of a Nationwide Pragmatic Randomized Trial. BMC Infect. Dis. 2016, 16, 421.

34. Prior, M.; Elouafkaoui, P.; Elders, A.; Young, L.; Duncan, E.M.; Newlands, R.; Clarkson, J.E.; Ramsay, C.R.; Black, I.; Bonetti, D.; et al. Evaluating an Audit and Feedback Intervention for Reducing Antibiotic Prescribing Behaviour in General Dental Practice (the RAPiD Trial): A Partial Factorial Cluster Randomised Trial Protocol. Implement. Sci. 2014, 9, 50.

35. Hueston, W.J. Improving Quality or Shifting Diagnoses?: What Happens When Antibiotic Prescribing Is Reduced for Acute Bronchitis? Arch. Fam. Med. 2000, 9, 933–935.

36. Meeker, D.; Linder, J.A.; Fox, C.R.; Friedberg, M.W.; Persell, S.D.; Goldstein, N.J.; Knight, T.K.; Hay, J.W.; Doctor, J.N. Effect of Behavioral Interventions on Inappropriate Antibiotic Prescribing Among Primary Care Practices. JAMA 2016, 315, 562.

37. Guyatt, G.H.; Oxman, A.D.; Vist, G.E.; Kunz, R.; Falck-Ytter, Y.; Alonso-Coello, P.; Schünemann, H.J. GRADE: An Emerging Consensus on Rating Quality of Evidence and Strength of Recommendations. BMJ 2008, 336, 924–926.

38. Murad, M.H.; Mustafa, R.A.; Schünemann, H.J.; Sultan, S.; Santesso, N. Rating the Certainty in Evidence in the Absence of a Single Estimate of Effect. Evid. Based. Med. 2017, 22, 85–87.

39. Wasylewicz, A.; Scheepers-Hoeks, A. Chapter 11 Clinical Decision Support Systems. In Fundamentals of Clinical Data Science; SpringerOpen: Cham, Switzerland, 2018; pp. 153–169. ISBN 9783319997131.

40. Zhuang, M.; Concannon, D.; Manley, E. A Framework for Evaluating Dashboards in Healthcare. IEEE Trans. Vis. Comput. Graph. 2022, 28, 1715–1731.

41. Davis, M.E.; Liu, T.L.; Taylor, Y.J.; Davidson, L.; Schmid, M.; Yates, T.; Scotton, J.; Spencer, M.D. Exploring Patient Awareness and Perceptions of the Appropriate Use of Antibiotics: A Mixed-Methods Study. Antibiotics 2017, 6, 23.

42. Yates, T.D.; Davis, M.E.; Taylor, Y.J.; Davidson, L.; Connor, C.D.; Buehler, K.; Spencer, M.D. Not a Magic Pill: A Qualitative Exploration of Provider Perspectives on Antibiotic Prescribing in the Outpatient Setting. BMC Fam. Pract. 2018, 19, 96.

43. Linder, J.A. Moving the Mean with Feedback: Insights from Behavioural Science. npj Prim. Care Respir. Med. 2016, 26, 16018.

44. Perez Jolles, M.; Lengnick-Hall, R.; Mittman, B.S. Core Functions and Forms of Complex Health Interventions: A Patient-Centered Medical Home Illustration. J. Gen. Intern. Med. 2019, 34, 1032–1038.

45. Sterne, J.A.C.; Savović, J.; Page, M.J.; Elbers, R.G.; Blencowe, N.S.; Boutron, I.; Cates, C.J.; Cheng, H.-Y.; Corbett, M.S.; Eldridge, S.M.; et al. RoB 2: A Revised Tool for Assessing Risk of Bias in Randomised Trials. BMJ 2019, 366, l4898.

46. Eldridge, S.; Campbell, M.K.; Campbell, M.J.; Drahota, A.K.; Giraudeau, B.; Reeves, B.C.; Siegfried, N.; Higgins, J.P.T. Revised Cochrane Risk of Bias Tool for Randomized Trials (RoB 2) Additional Considerations for Cluster-Randomized Trials (RoB 2 CRT) Cluster-Randomized Trials in the Context of the Risk of Bias Tool Bias Arising from the Randomization Process. 2021. Available online: https://www.unisa.edu.au/contentassets/72bf75606a2b4abcaf7f17404af374ad/rob2-0_indiv_main_guidance.pdf (accessed on 15 February 2022).

47. Higgins, J.; Li, T.; Sterne, J. Revised Cochrane Risk of Bias Tool for Randomized Trials (RoB 2) Additional Considerations for Crossover Trials. 2021. Available online: https://methods.cochrane.org/bias/resources/rob-2-revised-cochrane-risk-bias-tool-randomized-trials (accessed on 15 February 2022).

48. Sterne, J.A.; Hernán, M.A.; Reeves, B.C.; Savović, J.; Berkman, N.D.; Viswanathan, M.; Henry, D.; Altman, D.G.; Ansari, M.T.; Boutron, I.; et al. ROBINS-I: A Tool for Assessing Risk of Bias in Non-Randomised Studies of Interventions. BMJ 2016, 355, i4919.

49. The Cochrane Collaboration Review Manager (RevMan); Version 5.4 2020; Cochrane: London, UK, 2020.

| Study ID | Data Summarized | Features | Development Details | Data Source | Time Period of Report | Access | Engagement and Reminder Strategies |

|---|---|---|---|---|---|---|---|

| Du Yan 2021 [26] | Rate of antibiotic prescription (AP) and practice-wide prescribing rates for upper respiratory infection (URI), bronchitis, sinusitis, and pharyngitis. | Personalised for each clinician, including a practice summary (practice’s antibiotic prescription rates for target conditions), individual clinician prescription and the difference with their practice. | No detail | Electronic medical record without a separate database. | Report from previous month starting May 2018. | An online dashboard; the paper provided a sample in a figure (see Figure 2 from original paper [26]). | No detail |

| Daneman 2021 [20,32] | Percentages of AP and prolonged antibiotic treatment (longer than seven days). Additionally, antipsychotic, benzodiazepine, and other neurotropic medication prescribing was reported. | A home page (overview) with key messages from prescribing data, peer comparisons (question mark icon if prescriptions were higher, similar or lower than their peers), and two links (to view trend data and change ideas). The antibiotic page allowed comparing their overall rate with Ontario percentiles, showed key changes and answers to important questions (relating to resident characteristics, accurate data, the rate calculated, data limitation, and low AP that was reasonable and safe). | Input from infectious diseases, implementation science, information technology, and quality improvement specialists to improve its design through an iterative, user-centered design process. | Administrative health databases linked with drug, hospitalization, and emergency department databases. | Four quarters of 2018 and four quarters of 2019 | An online dashboard; the paper provided a screenshot of a sample in a supplement (see Supplement S1 from original paper [20]) | No detail. However, an accompanying qualitative study explored how the intervention was perceived by those who engaged with it [32]. |

| Hemkens 2017 [28,33] | Antibiotic prescriptions per 100 consultations in the preceding months, displayed alongside the adjusted average for peer physicians, that is, the entire population of Swiss primary care physicians. | Details on the prescriptions per age group or sex or for certain antibiotic types and answers to frequently asked questions on antibiotic use. | No detail | Data from statutory health insurers for claims of drug prescriptions and health care services. | Quarterly intervals (no further report details) | An online dashboard; the paper provided a screenshot of a sample in a supplement (see Supplement Figure from original paper [28]) | Physicians received personalised prescription feedback updated quarterly. |

| Curtis 2021 [22] | Change in AP | No detail | No detail | National datasets published monthly by NHS Digital (Practice-level prescribing data). | No detail | An online dashboard with a single measure highlighted (a link to their practice dashboard on OpenPrescribing.net.). The study provided a sample image in Supplement (see supplement Figure S1 from original paper [22]) | No detail. However, an update was sent at 5-week intervals. |

| Linder 2010 [23] | The proportion of Acute Respiratory Infection (ARI) visits with antibiotics, the proportion of individual ARI diagnoses (pneumonia, sinusitis, acute bronchitis) with antibiotics, the proportion of broad-spectrum AP, the distribution of ARI visits by evaluation and management billing codes, and individual patient visit details. | Design based on the recommendations of the Centers for Disease Control and Prevention and the American College of Physicians. ASP.NET technology used to build the Dashboard. Option to “drill down” to any patient’s medical record directly from the Dashboard to review patient details and export the report for additional follow-up or analysis. | A pilot to assess user access, understand whether the Dashboard was useful for their antimicrobial prescribing patterns, and validate its reports against primary data from the electronic health record (EHR) by drilling down to individual patient charts. | Electronic health records (EHR) | Dashboard displayed visit and prescribing data for the previous year and was automatically updated monthly. | Physicians accessed the Dashboard from the EHR Reports Central area, which contained about 10 other reports about preventive and chronic disease management. The study provided a screenshot of the dashboard in a figure (see Figure 1 from original paper [23]) | Monthly e-mails reminding physicians about the ARI Quality Dashboard. |

| Shen 2018 [27] | Their performance scores (PSs) and percentages of prescribed antibiotics use (ABU). | The PS and ABU were presented in red, yellow, or green if the value fell below (or above), within, or above (or below) the interquartile range of the same PS or ABU, respectively. Additionally, it illustrated relevant performance feedback, performance scores for the current doctor and their peers in total and by infections, public commitment, bulleted points of the commitment letter, and frequently asked questions. | No detail | Data were based on the records of their management of symptomatic infection patients | No detail | Web-based aid (WBA). The paper provided a slide of the WBA in a Multimedia Appendix (see Appendix A3 from original paper [27]) | No detail |

| Elouafkaoui 2016 [25,34] | Prescribing rate (number of antibiotic items dispensed per 100 claims) and the health board rate (the overall ordinary list prescribing rate for current dentists in non-salaried practices in NHS Example Board) | No detail | No detail | Electronic healthcare datasets held centrally by the Information Services Division of NHS National Services Scotland. | Monthly | This Audit and Feedback included a visualisation (line graph) which was delivered by post. The study provided an example in a supplement (see Supplement Figure S1 from original paper [25]) | No detail |

| Chang 2020 [24] | An individual ranking score of AP (peer comparison) and statistical information about the diagnosis and AP (total and type of antibiotics). | Top of the screen: the top five diseases of patients seen by the physician over the previous 10 days, the start and stop time for the previous 10 days, the number of prescriptions given during this period, and department ranking. Bottom of the screen: statistics on the antibiotic frequency, prescription rate of each antibiotic prescribed, and precautions and contraindications for antibiotics being used. | No detail | Health information system (HIS). | Previous 10 days | A link on the HIS to see the feedback information at any time. The paper provided an example of feedback information displayed on a physician’s computer screen in a figure (see Figure 1 from original paper [24]) | A pop-up window automatically prompted physicians to check the feedback information every 10 days |

| Jones 2021 [21] | Rate of inappropriate prescribing, stratified by diagnosis category. | Top peer comparison (whether or not the clinician was among the top 10% of performers, that is, the clinicians with the lowest prescribing rates). Rolling over each column shows the percentage for each provider and the number of encounters on which the rate of inappropriate prescribing is based. Filters allow the provider to compare data over different timelines and across departments. | No detail | Electronic health record system. | Unclear, but the paper mentioned that the “Dashboard was updated daily” | Tableau dashboard. The paper provided a figure of the provider feedback dashboard in the Supplementary Material (see Supplementary Figure S2 from original paper [21]) | Physicians’ review of their personal data was structured to satisfy the requirements for the American Board of Emergency Medicine Maintenance of Certification Improvement in Medical Practice Requirements. Physicians received biannual e-mails. |

| Davidson 2022 [29] | Prescribing rate, target rate, antimicrobial encounters, total encounters and antimicrobial prescribing rate | Comparing AP behaviours among providers, practices and organisational groupings. Data viewable by indication, antibiotic class, and at the levels of provider, practice site, specialty medical director and administrator. | Developed in Microsoft Power BI. Including coding, targeted indicators, instructional webinar and on-site dashboard navigation education given upon request for practice sites and leaders. The dashboard remains part of continuous, ongoing assessment of feedback from users and leadership. | Electronic health record and administrative data sources. | Prescribing data compared year-to-year and rolling 12 months | Online dashboard; the paper provided a figure of the Dashboard Overview in the Supplementary Material (see Supplementary Figure S8 from original paper [29]) | Antibiotic education campaign (provider-focused resources) |
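Two of the dashboard features summarised in the table lend themselves to a short worked example: a prescribing rate per 100 consultations (as in Hemkens 2017) and colour coding against the interquartile range of peer values (as in Shen 2018). The sketch below is illustrative only and does not reproduce any study's implementation. The function names are hypothetical, and the colour direction assumes that a higher antibiotic prescribing rate is the less desirable result.

```python
from statistics import quantiles


def rate_per_100(prescriptions: int, consultations: int) -> float:
    """Antibiotic prescriptions per 100 consultations."""
    if consultations <= 0:
        raise ValueError("consultations must be positive")
    return 100.0 * prescriptions / consultations


def traffic_light(own_rate: float, peer_rates: list[float]) -> str:
    """Colour a clinician's rate relative to the peer interquartile range.

    Assumption: higher prescribing is worse, so a rate above the upper
    quartile is red, below the lower quartile is green, otherwise yellow.
    """
    q1, _median, q3 = quantiles(peer_rates, n=4)  # quartile cut points
    if own_rate > q3:
        return "red"
    if own_rate < q1:
        return "green"
    return "yellow"


# Example with made-up numbers (not data from any included study).
peers = [10.0, 20.0, 30.0, 40.0, 50.0]
own = rate_per_100(prescriptions=25, consultations=500)
colour = traffic_light(own, peers)
```

For a score where higher is better, such as a performance score, the red and green branches would simply be swapped.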

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Garzón-Orjuela, N.; Parveen, S.; Amin, D.; Vornhagen, H.; Blake, C.; Vellinga, A. The Effectiveness of Interactive Dashboards to Optimise Antibiotic Prescribing in Primary Care: A Systematic Review. Antibiotics 2023, 12, 136. https://doi.org/10.3390/antibiotics12010136
