Examining the Use of Game-Based Assessments for Hiring Autistic Job Seekers

Abstract

1. Introduction

2. Materials and Methods

2.1. Power Analysis

2.2. Participants

2.3. Measures

2.3.1. Disconumbers

2.3.2. Shapedance

2.3.3. Digitspan

2.4. Procedure

3. Results

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Autistic Participant Sample | General Population Sample | |||

|---|---|---|---|---|

| Disconumbers and Shapedance | Digitspan and Shapedance | Disconumbers and Shapedance | Digitspan and Shapedance | |

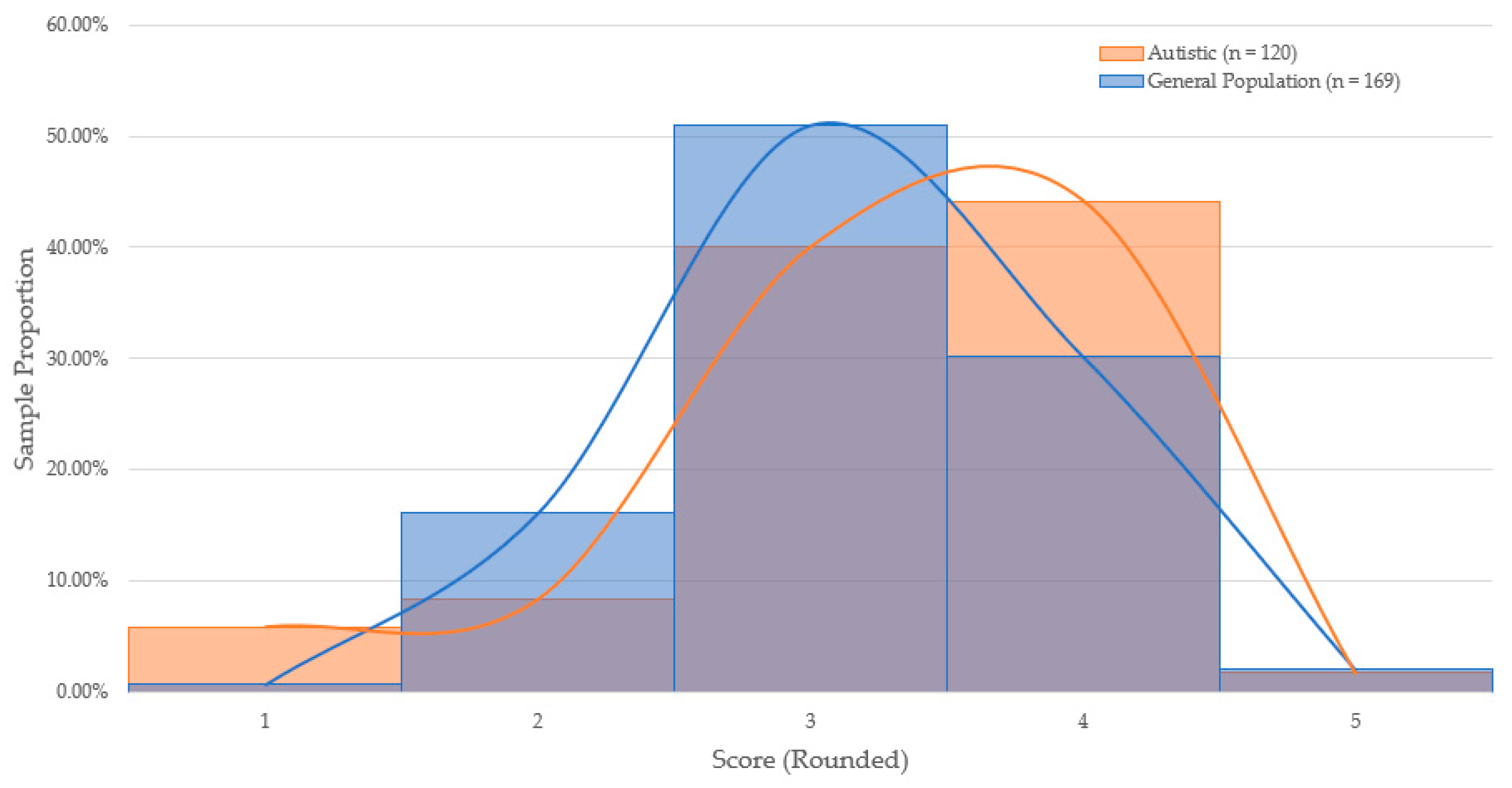

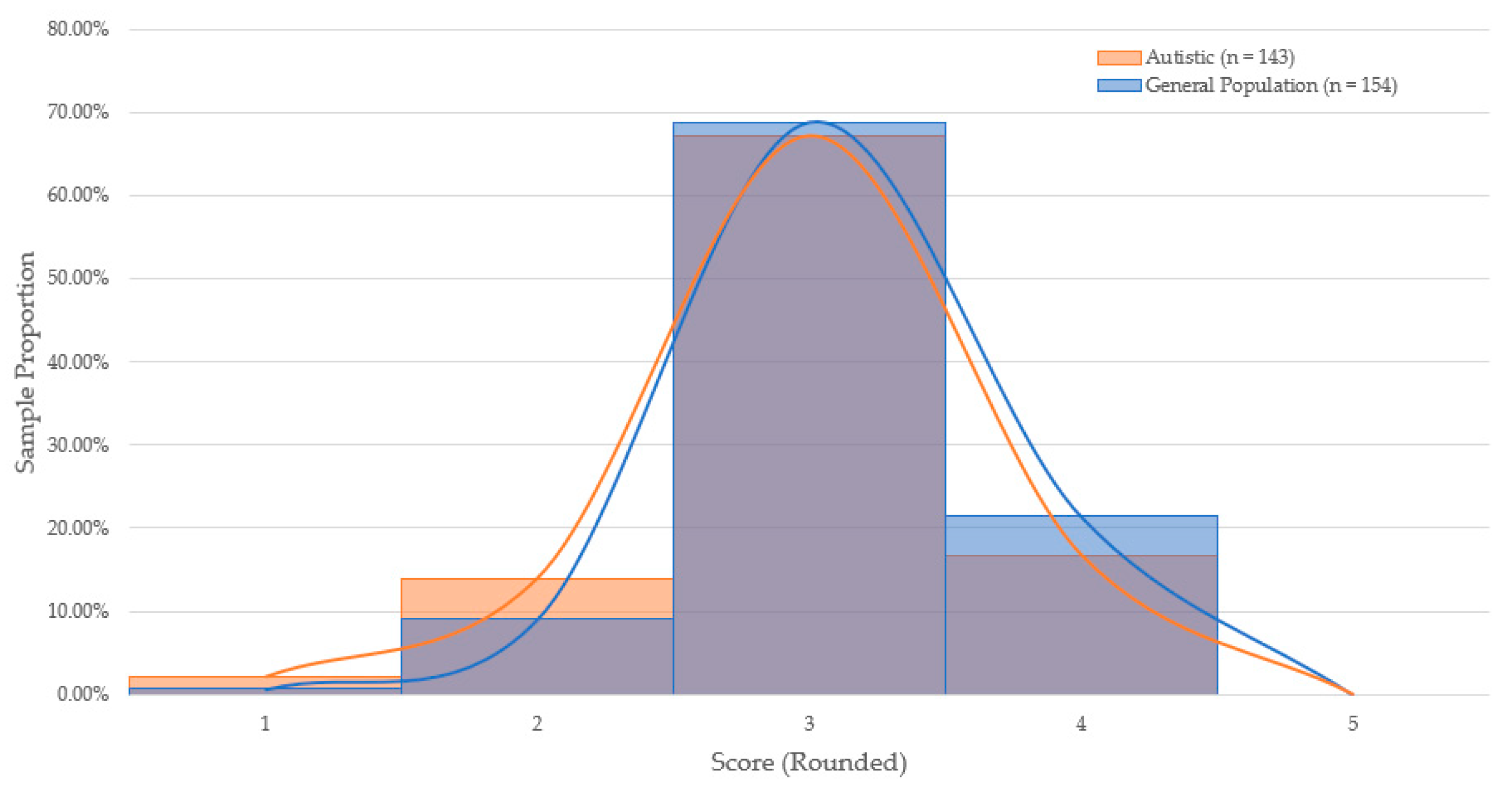

| Total | 120 | 143 | 169 | 154 |

| Gender | ||||

| Male | 81 | 109 | 92 | 101 |

| Female | 26 | 24 | 67 | 40 |

| Undisclosed Gender | 13 | 10 | 0 | 13 |

| Race and Ethnicity | ||||

| White | 79 | 100 | 100 | 179 |

| Black | 5 | 6 | 25 | 22 |

| Hispanic | 12 | 11 | 22 | 22 |

| Asian | 11 | 12 | 12 | 16 |

| Undisclosed Race/Ethnicity | 13 | 15 | 0 | 13 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Willis, C.; Powell-Rudy, T.; Colley, K.; Prasad, J. Examining the Use of Game-Based Assessments for Hiring Autistic Job Seekers. J. Intell. 2021, 9, 53. https://doi.org/10.3390/jintelligence9040053
