Relational Integration and Attentional Control Are Crucial to Fluid Intelligence Together but Not Alone—An Experimental Investigation of Individual Difference in Relational Monitoring Processes

Abstract

1. Introduction

1.1. Relational Integration in RMT

1.2. Attentional Control in RMT

1.3. Analytical Approaches: Operationalising Performance Metrics to Test Processes

1.4. The Current Study

1.5. Hypothesis

1.5.1. Relational Integration (Relational Complexity)

1.5.2. Attentional Control-Inhibition (Visual Interference)

1.5.3. Attentional Control-Scanning (String Preservation)

2. Study 1

2.1. Overview

2.2. Method

2.2.1. Three Potential Criteria to Reduce Response Windows

2.2.2. Relational Monitoring Task

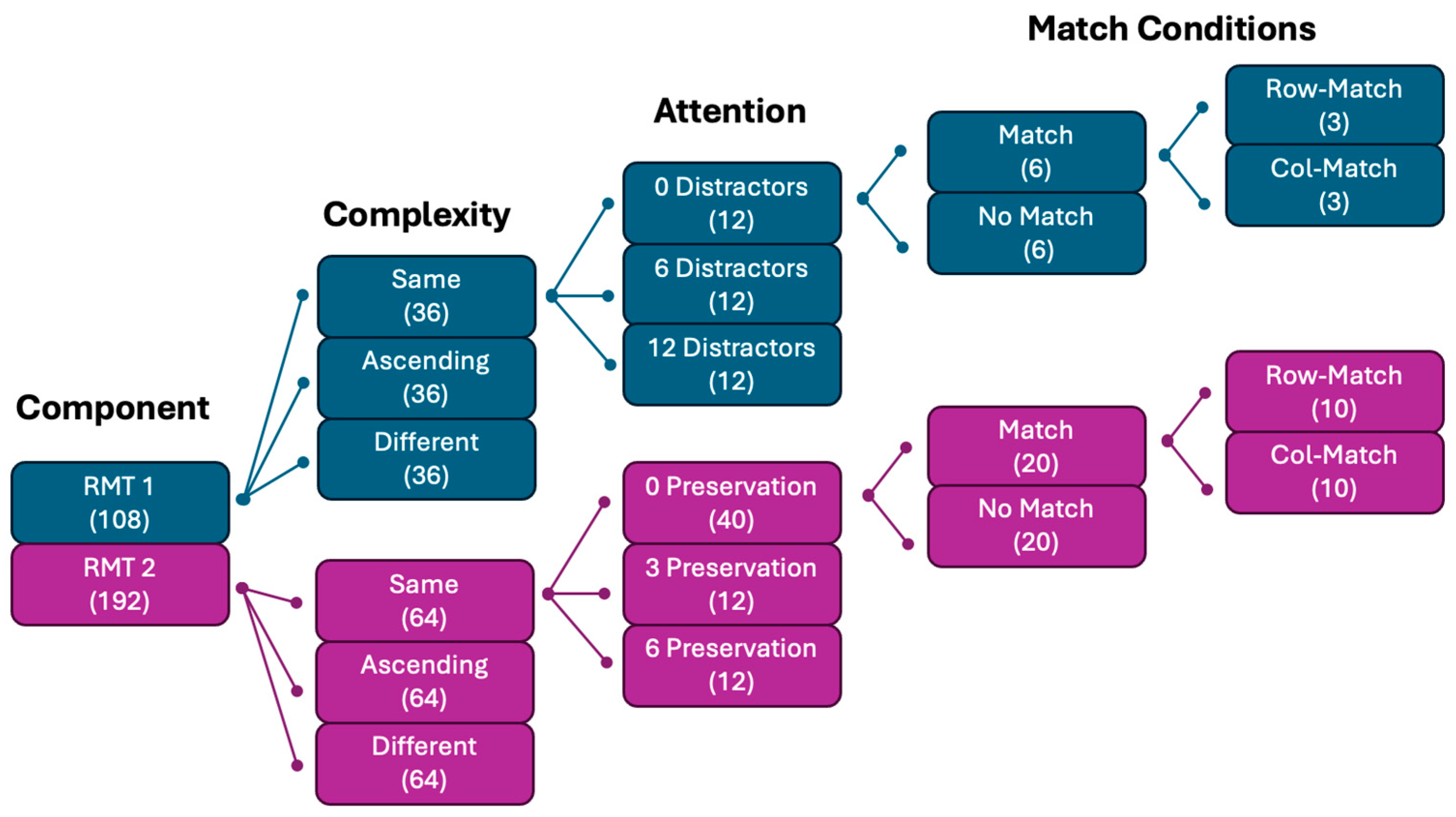

2.2.3. RMT Component 1 (Relational Integration and Inhibition)

2.2.4. RMT Component 2 (Relational Integration and Visual Scanning)

2.3. Participants and Procedure

2.4. Results and Discussion

3. Study 2

3.1. Experimental Design

3.2. Method

3.2.1. Sample

3.2.2. Relational Monitoring Task

3.2.3. Gf Measures

3.2.4. Fluid Intelligence

3.2.5. Procedure

3.3. Results

3.3.1. RMT Contrast Variables

3.3.2. Latent Construct Scores

3.3.3. Simple-Composite Scores

4. Discussion

4.1. RMT Performance (Hypotheses H1, H3, H4, and H6)

4.2. RMT and Gf (Hypotheses H2, H5, and H7)

4.3. Alternative Explanations, Limitations, and Future Research

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Note 1. Re-analysis of Bateman et al. (2019) by Zhan and Birney (2023) suggested that the “same” rule should be classified as a unary relation for the reasons outlined here and in Figure 1, and not binary as Bateman et al. had originally proposed.

References

- Bateman, J. E. (2020). Relational integration in working memory: Determinants of effective task performance and links to individual differences in fluid intelligence [Ph.D. thesis, The University of Sydney]. Available online: https://hdl.handle.net/2123/22361 (accessed on 4 August 2023).

- Bateman, J. E., & Birney, D. P. (2019). The link between working memory and fluid intelligence is dependent on flexible bindings, not systematic access or passive retention. Acta Psychologica, 199, 102893.

- Bateman, J. E., Thompson, K. A., & Birney, D. P. (2019). Validating the relation-monitoring task as a measure of relational integration and predictor of fluid intelligence. Memory & Cognition, 47(8), 1457–1468.

- Bates, D., Mächler, M., Bolker, B., & Walker, S. (2015). Fitting linear mixed-effects models using lme4. Journal of Statistical Software, 67(1), 1–48.

- Birney, D. P., & Beckmann, J. F. (2022). Intelligence is cognitive flexibility: Why multilevel models of within-individual processes are needed to realise this. Journal of Intelligence, 10(3), 49.

- Birney, D. P., Beckmann, J. F., Beckmann, N., & Double, K. S. (2017). Beyond the intellect: Complexity and learning trajectories in Raven’s Progressive Matrices depend on self-regulatory processes and conative dispositions. Intelligence, 61, 63–77.

- Birney, D. P., Bowman, D. B., Beckmann, J. F., & Zhi Seah, Y. (2012). Assessment of processing capacity: Reasoning in Latin square tasks in a population of managers. European Journal of Psychological Assessment, 28(3), 216–226.

- Birney, D. P., & Zhan, Y. (2023, April 12–14). Relational binding and integration in the Latin square task and relational monitoring task [Paper presentation]. Australasian Society for Experimental Psychology Conference, Australian National University, Canberra, Australia.

- Brose, A., Neubauer, A. B., & Schmiedek, F. (2021). Integrating state dynamics and trait change: A tutorial using the example of stress reactivity and change in well-being. European Journal of Personality, 36, 180–199.

- Brunner, J., & Austin, P. C. (2009). Inflation of type I error rates in multiple regression when independent variables are measured with error. The Canadian Journal of Statistics, 37(1), 33–46.

- Chuderski, A. (2014). The relational integration task explains fluid reasoning above and beyond other working memory tasks. Memory & Cognition, 42(3), 448–463.

- Draheim, C., Tsukahara, J. S., & Engle, R. W. (2024). Replication and extension of the toolbox approach to measuring attention control. Behavior Research Methods, 56(3), 2135–2157.

- Draheim, C., Tsukahara, J. S., Martin, J. D., Mashburn, C. A., & Engle, R. W. (2021). A toolbox approach to improving the measurement of attention control. Journal of Experimental Psychology: General, 150(2), 242–275.

- Ecker, U. K. H., Lewandowsky, S., Oberauer, K., & Chee, A. E. H. (2010). The components of working memory updating: An experimental decomposition and individual differences. Journal of Experimental Psychology: Learning, Memory, and Cognition, 36(1), 170–189.

- Engle, R. W., Conway, A. R. A., Tuholski, S. W., & Shisler, R. J. (1995). A resource account of inhibition. Psychological Science, 6(2), 122–125.

- Faul, F., Erdfelder, E., Buchner, A., & Lang, A.-G. (2009). Statistical power analyses using G*Power 3.1: Tests for correlation and regression analyses. Behavior Research Methods, 41, 1149–1160.

- Frischkorn, G. T., & Oberauer, K. (2021). Intelligence test items varying in capacity demands cannot be used to test the causality of working memory capacity for fluid intelligence. Psychonomic Bulletin & Review, 28, 1423–1432.

- Gelman, A., Hill, J., & Yajima, M. (2012). Why we (usually) don’t have to worry about multiple comparisons. Journal of Research on Educational Effectiveness, 5, 189–211.

- Halford, G. S., Wilson, W. H., & Phillips, S. (1998). Processing capacity defined by relational complexity: Implications for comparative, developmental, and cognitive psychology. The Behavioral and Brain Sciences, 21(6), 803–831.

- Halford, G. S., Wilson, W. H., & Phillips, S. (2010). Relational knowledge: The foundation of higher cognition. Trends in Cognitive Sciences, 14(11), 497–505.

- Hox, J. J. (2010). Multilevel analysis: Techniques and applications (2nd ed., p. 382). Routledge.

- Kane, M. J., Hambrick, D. Z., & Conway, A. R. A. (2005). Working memory capacity and fluid intelligence are strongly related constructs: Comment on Ackerman, Beier, and Boyle (2005). Psychological Bulletin, 131(1), 66–71.

- Kovacs, K., & Conway, A. R. A. (2016). Process overlap theory: A unified account of the general factor of intelligence. Psychological Inquiry, 27(3), 151–177.

- Krumm, S., Schmidt-Atzert, L., Buehner, M., Ziegler, M., Michalczyk, K., & Arrow, K. (2009). Storage and non-storage components of working memory predicting reasoning: A simultaneous examination of a wide range of ability factors. Intelligence, 37(4), 347–364.

- Kvist, A. V., & Gustafsson, J.-E. (2008). The relation between fluid intelligence and the general factor as a function of cultural background: A test of Cattell’s investment theory. Intelligence, 36(5), 422–436.

- Lüdecke, D. (2025). sjPlot: Data visualization for statistics in social science. Available online: https://CRAN.R-project.org/package=sjPlot (accessed on 12 December 2025).

- Mashburn, C. A., Tsukahara, J. S., & Engle, R. W. (2021). Individual differences in attention control: Implications for the relationship between working memory capacity and fluid intelligence. In R. H. Logie, V. Camos, & N. Cowan (Eds.), Working memory: State of the science (pp. 175–211). Oxford University Press.

- Meyer, D., & Hornik, K. (2023). sets: Sets, generalized sets, customizable sets and intervals. Available online: https://CRAN.R-project.org/package=sets (accessed on 4 August 2023).

- Oberauer, K. (2009). Design for a working memory. In B. H. Ross (Ed.), The psychology of learning and motivation (pp. 45–100). Elsevier Academic Press.

- Oberauer, K. (2021). Towards a theory of working memory: From metaphors to mechanisms. In R. H. Logie, V. Camos, & N. Cowan (Eds.), Working memory: State of the science (pp. 116–149). Oxford University Press.

- Oberauer, K., & Lewandowsky, S. (2016). Control of information in working memory: Encoding and removal of distractors in the complex-span paradigm. Cognition, 156, 106–128.

- Oberauer, K., Süß, H.-M., Wilhelm, O., & Wittmann, W. W. (2003). The multiple faces of working memory: Storage, processing, supervision, and coordination. Intelligence, 31(2), 167–193.

- Oberauer, K., Süß, H.-M., Wilhelm, O., & Wittmann, W. W. (2008). Which working memory functions predict intelligence? Intelligence, 36(6), 641–652.

- Oberauer, K., Wilhelm, O., Schulze, R., & Süß, H.-M. (2005). Working memory and intelligence: Their correlation and their relation: Comment on Ackerman, Beier, and Boyle (2005). Psychological Bulletin, 131(1), 61–65.

- Pedhazur, E. J., & Schmelkin, L. P. (1991). Measurement, design, and analysis: An integrated approach. Lawrence Erlbaum Associates.

- R Core Team. (2025). R: A language and environment for statistical computing. R Foundation for Statistical Computing. Available online: https://www.R-project.org/ (accessed on 12 December 2025).

- Raven, J. (1989). The Raven Progressive Matrices: A review of national norming studies and ethnic and socioeconomic variation within the United States. Journal of Educational Measurement, 26(1), 1–16.

- Revelle, W. (2023). psych: Procedures for psychological, psychometric, and personality research. Available online: https://cran.r-project.org/web/packages/psych/index.html (accessed on 12 December 2025).

- Rey-Mermet, A., Gade, M., Souza, A. S., von Bastian, C. C., & Oberauer, K. (2019). Is executive control related to working memory capacity and fluid intelligence? Journal of Experimental Psychology: General, 148(8), 1335–1372.

- Shipstead, Z., Harrison, T. L., & Engle, R. W. (2016). Working memory capacity and fluid intelligence: Maintenance and disengagement. Perspectives on Psychological Science, 11(6), 771–779.

- Unsworth, N., & Engle, R. W. (2005). Individual differences in working memory capacity and learning: Evidence from the serial reaction time task. Memory & Cognition, 33(2), 213–220.

- Unsworth, N., & Engle, R. W. (2007). The nature of individual differences in working memory capacity: Active maintenance in primary memory and controlled search from secondary memory. Psychological Review, 114(1), 104–132.

- Wickham, H. (2016). ggplot2: Elegant graphics for data analysis. Available online: https://ggplot2.tidyverse.org (accessed on 12 December 2025).

- Wickham, H. (2022). stringr: Simple, consistent wrappers for common string operations. Available online: https://stringr.tidyverse.org (accessed on 12 December 2025).

- Wickham, H., Averick, M., Bryan, J., Chang, W., McGowan, L., François, R., Grolemund, G., Hayes, A., Henry, L., Hester, J., Kuhn, M., Pedersen, T., Miller, E., Bache, S., Müller, K., Ooms, J., Robinson, D., Seidel, D., Spinu, V., & Yutani, H. (2019). Welcome to the tidyverse. Journal of Open Source Software, 4(43), 1686.

- Wickham, H., & Bryan, J. (2023). readxl: Read Excel files. Available online: https://readxl.tidyverse.org (accessed on 12 December 2025).

- Zhan, Y., & Birney, D. (2023). Exploring functions of relational monitoring: Relational integration and interference control. Proceedings of the Annual Meeting of the Cognitive Science Society, 45, 3116–3122. Available online: https://escholarship.org/uc/item/4sq4d8tg (accessed on 12 December 2025).

| Relational Complexity | Upper Quartile Response Time | Median Response Time | 10% Timeout Rate |
|---|---|---|---|
| Same | 3.80 s | 2.65 s | 4.05 s |
| Ascending | 4.41 s | 3.47 s | 4.72 s |
| Different | 4.71 s | 3.62 s | 5.00 s |
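
The three candidate response windows in the table above can be illustrated with a minimal sketch. It assumes, without confirmation from the text available here, that the "10% Timeout Rate" criterion corresponds to the 90th percentile of response times, so that roughly 10% of responses would exceed the window. The function name `response_window_candidates` and the example values are hypothetical and are not data from the study.

```python
import statistics


def response_window_candidates(rts):
    """Return three candidate response windows (seconds) for a list of RTs.

    Assumption: the 10%-timeout window is taken as the 90th percentile,
    i.e. the cutoff that about 10% of observed responses would exceed.
    """
    quartiles = statistics.quantiles(rts, n=4, method="inclusive")
    deciles = statistics.quantiles(rts, n=10, method="inclusive")
    return {
        "median": statistics.median(rts),
        "upper_quartile": quartiles[2],       # 75th percentile
        "ten_percent_timeout": deciles[8],    # 90th percentile
    }


# Hypothetical response times in seconds (illustrative only).
example_rts = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0, 11.0]
windows = response_window_candidates(example_rts)

# Share of responses that would time out under the strictest-to-loosest windows.
timeout_share = sum(rt > windows["ten_percent_timeout"] for rt in example_rts) / len(example_rts)
print(windows, round(timeout_share, 2))
```

Under this reading, the median window is the most restrictive and the 10%-timeout window the most lenient, which matches the ordering of the values reported for each rule in the table.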

| Measure | M | SD | Correlation with Gf |
|---|---|---|---|
| RMT Grand Total | .70 | .08 | .43 ** |
| RMT Component 1 | | | |
| RMT Same | .81 | .11 | .27 * |
| RMT Ascending | .67 | .12 | .32 ** |
| RMT Different | .62 | .14 | .31 ** |
| RMT 12 Distractors | .71 | .11 | .33 ** |
| RMT 0 Distractor | .69 | .10 | .39 ** |
| RMT 6 Distractors | .69 | .10 | .37 ** |
| RMT Component 2 | | | |
| RMT Same | .81 | .09 | .35 ** |
| RMT Ascending | .66 | .11 | .28 ** |
| RMT Different | .63 | .11 | .27 ** |
| RMT 6 Preservation | .69 | .10 | .23 * |
| RMT 3 Preservation | .69 | .09 | .33 ** |
| RMT 0 Preservation | .70 | .08 | .40 ** |
| Gf Measures | | | |
| RPM | .54 | .19 | .67 ** |
| Number Series | .57 | .19 | .70 ** |
| GLST | .56 | .18 | .74 ** |

| Predictors | Model | Log-Odds | SE | z | CI | p | tau (random effects) |
|---|---|---|---|---|---|---|---|
| RC (linear contrast; H1) | 1 | −0.512 | 0.036 | −14.22 | −0.583, −0.442 | <.001 | 0.17 |
| RC.quad (quadratic contrast) | 1 | 0.287 | 0.038 | 7.58 | 0.213, 0.361 | <.001 | |
| HighD (high distraction contrast; H3) | 1 | −0.075 | 0.038 | −1.96 | −0.151, −0.000 | .050 * | |
| LowD (facilitation vs. baseline; H4) | 1 | −0.121 | 0.045 | −2.70 | −0.209, −0.033 | .007 | |
| RC × HighD | 1 | 0.035 | 0.048 | 0.73 | −0.059, 0.130 | .467 | |
| RC × LowD | 1 | 0.110 | 0.056 | 1.95 | −0.001, 0.220 | .052 | |
| RC (H1) | 2 | −0.490 | 0.027 | −17.83 | −0.544, −0.436 | <.001 | 0.051 |
| PreserveC (preserve cost; H6) | 2 | 0.059 | 0.028 | 2.12 | 0.004, 0.113 | .034 * | |
| PreserveL (preserve levels; H6) | 2 | 0.004 | 0.044 | 0.08 | −0.083, 0.090 | .937 | |
| RC × PreserveC | 2 | 0.023 | 0.035 | 0.67 | −0.045, 0.092 | .503 | |
| RC × PreserveL | 2 | −0.028 | 0.055 | −0.50 | −0.136, 0.081 | .617 | |
| Gf | 3 | 0.271 | 0.047 | 5.74 | 0.178, 0.363 | <.001 | |
| Gf × RC (H2) | 3 | −0.013 | 0.051 | −0.25 | −0.113, 0.088 | .806 | |
| Gf × HighD (H5) | 3 | 0.010 | 0.054 | 0.19 | −0.096, 0.116 | .848 | |
| Gf × LowD (H5) | 3 | −0.057 | 0.063 | −0.91 | −0.181, 0.066 | .365 | |
| Gf | 4 | 0.219 | 0.046 | 4.76 | 0.129, 0.309 | <.001 | |
| Gf × RC (H2) | 4 | −0.047 | 0.036 | −1.32 | −0.118, 0.023 | .187 | |
| Gf × PreserveC (H7) | 4 | 0.049 | 0.039 | 1.26 | −0.027, 0.126 | .207 | |
| Gf × PreserveL (H7) | 4 | 0.056 | 0.062 | 0.89 | −0.066, 0.178 | .371 | |
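
As a reading aid for the fixed-effects rows above, the following minimal sketch shows how a log-odds estimate and its standard error relate to the z statistic, an interval, and an odds ratio. It assumes the reported intervals are 95% Wald intervals (estimate ± 1.96 × SE); the table does not state the interval type, so this is an assumption, and the helper name `wald_summary` is hypothetical. Small discrepancies from the published values are expected because the inputs are already rounded to three decimals.

```python
import math


def wald_summary(log_odds, se, z_crit=1.959964):
    """Return the z statistic, an approximate 95% Wald interval, and the odds ratio.

    Assumption: the interval is computed as estimate +/- z_crit * SE.
    """
    z = log_odds / se
    lower = log_odds - z_crit * se
    upper = log_odds + z_crit * se
    return {"z": z, "ci": (lower, upper), "odds_ratio": math.exp(log_odds)}


# Values copied from the "RC (linear contrast; H1)" row of Model 1.
rc = wald_summary(-0.512, 0.036)
print(round(rc["z"], 2), [round(b, 3) for b in rc["ci"]], round(rc["odds_ratio"], 3))
```

Read this way, an odds ratio below 1 for RC indicates that the odds of a correct response fall as relational complexity increases along the linear contrast.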

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Li, Y.; Birney, D.P. Relational Integration and Attentional Control Are Crucial to Fluid Intelligence Together but Not Alone—An Experimental Investigation of Individual Difference in Relational Monitoring Processes. J. Intell. 2026, 14, 8. https://doi.org/10.3390/jintelligence14010008
