1. Introduction

Numerical simulation and large-scale data analysis have become indispensable tools in all scientific and engineering areas. From modeling astrophysical phenomena and predicting climate change to designing novel materials and simulating biological systems, the ability to implement complex mathematical model descriptions and general-purpose algorithms efficiently and reliably is paramount. The choice of programming language for these demanding computational tasks significantly influences not only the achievable performance, but also the development lifecycle, including coding effort, maintainability and integration capabilities [1].

High-performance computing (HPC) involves a persistent contradiction. On the one hand, Fortran remains unsurpassed for numerical performance due to its compile-time optimization capabilities and straightforward array handling. Vast repositories of high-performance legacy codes in Fortran and C, thoroughly tested and perfected over years of application, remain indispensable for large-scale scientific simulations [2]. This is evidenced by cutting-edge codes like the Fortran-based GESTS, which holds the record for pseudospectral DNS resolution ($32{,}768^3$ grid points) [3], and the C code Tarang, which demonstrates excellent scalability on hundreds of thousands of cores [4,5].

On the other hand, developing production-grade Fortran code is challenging. Implementing complex algorithms in a compiled language demands expertise (despite the relative simplicity of Fortran syntax) and iterative refinement. On top of this, manual derivation of numerical schemes is labor-intensive and error-prone. This motivates a question: Can we retain Fortran’s performance benefits while accelerating development?

This paper advocates a four-stage development methodology that achieves this goal:

Stage 1: Rapid Prototyping in Python (version 3.11). The algorithm to be implemented must be validated. It is practical to employ symbolic computation systems (SymPy, Wolfram Language, Maple) for this purpose. They enable derivation of discretization schemes directly from governing equations. Finite-difference stencils, operator matrices and other components can be generated symbolically, ensuring mathematical exactness. This approach prevents subtle errors from propagating undetected through subsequent implementation stages. The symbolic form also serves as documentation of the mathematical foundation.

Validation must be systematic. Unit tests verify basic operations. The Method of Manufactured Solutions, employing polynomial test functions, confirms that convergence orders match theoretical predictions. Conservation laws must be checked over extended simulation periods. Convergence studies on progressively refined grids provide quantitative evidence of correctness.
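The convergence check described above can be sketched in a few lines. This is a minimal illustration, not the test suite used in this work: the manufactured solution (sin x instead of a polynomial, so that the truncation error does not vanish), the interval [0, 1) and the helper names `central_diff`, `max_error` and `observed_orders` are assumptions made for brevity.

```python
import math


def central_diff(f, x, h):
    """Second-order central difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def max_error(n):
    """Maximum error of the central difference of sin on n points in [0, 1)."""
    h = 1.0 / n
    return max(
        abs(central_diff(math.sin, i * h, h) - math.cos(i * h)) for i in range(n)
    )


def observed_orders(resolutions):
    """Observed convergence order between consecutive grid resolutions."""
    errors = [max_error(n) for n in resolutions]
    orders = []
    for k in range(len(resolutions) - 1):
        ratio_err = errors[k] / errors[k + 1]
        ratio_n = resolutions[k + 1] / resolutions[k]
        orders.append(math.log(ratio_err) / math.log(ratio_n))
    return orders


orders = observed_orders([16, 32, 64, 128])
print("observed orders:", [round(p, 3) for p in orders])
```

For a second-order scheme the printed values should be close to 2; a value that drifts away from the theoretical order is usually the first sign of an indexing or boundary error.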

Profiling follows validation. Modern profiling tools identify which components dominate computation time. Preliminary estimates of achievable acceleration in compiled languages can then be obtained.

The output of Stage 1 is a validated prototype supported by rigorous tests and symbolic derivations. This foundation makes Stage 2 systematic rather than exploratory.

Stage 2: Transition to Fortran and Optimization. Each computationally intensive code component is migrated individually. Fortran implementations are generated from symbolic specifications derived in Stage 1, ensuring mathematical equivalence.

Validation proceeds by direct comparison with Python results to machine precision. Any divergence indicates transcription errors (such as data layout mismatches—column-major versus row-major array ordering) that must be resolved upon detection.
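The data layout mismatch mentioned above can be made concrete with NumPy alone. The following sketch assumes a small 2×3 array; the variable names are illustrative. It shows that a C-ordered and a Fortran-ordered array hold the same logical values but different memory sequences, and what a routine would see if it interpreted a raw row-major buffer as column-major.

```python
import numpy as np

# NumPy default: row-major (C order) storage.
a_c = np.arange(6, dtype=np.float64).reshape(2, 3)

# Same logical values, but stored column-major, as Fortran expects.
a_f = np.asfortranarray(a_c)

# Logical contents agree element by element.
same_values = np.array_equal(a_c, a_f)

# Memory sequences differ: row-by-row versus column-by-column traversal.
sequence_c = a_c.ravel(order="C").tolist()
sequence_f = a_f.ravel(order="F").tolist()

# What a column-major routine sees if it is handed the raw row-major
# buffer together with the shape (2, 3): the entries are scrambled.
misread = a_c.ravel(order="C").reshape(2, 3, order="F")

print(same_values, sequence_c, sequence_f)
print(misread)
```

Converting inputs with `np.asfortranarray` before the call, or declaring the intended layout in the wrapper, is one way to rule out this class of divergence before comparing numerical results.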

Optimization begins with compiler flags appropriate to the target architecture. Vectorization can be verified through assembly inspection. Loop structures are reorganized for cache efficiency: outer loops progress over slower-varying dimensions, inner loops over faster-varying dimensions.

Shared memory parallelization with OpenMP introduces new points of concern. False sharing and load balancing require careful attention.

Profiling after each modification confirms that optimizations produce the expected improvements.

Numerical stability under aggressive compiler optimizations requires revalidation. Operation reordering at high optimization levels can degrade the accuracy of long simulations [6].
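The sensitivity to operation reordering follows from the fact that floating-point addition is not associative. The short sketch below uses only the standard library; the sample values and the helper `running_sum` are illustrative assumptions. It mimics what a compiler may do when it regroups or reorders a reduction.

```python
import math


def running_sum(values):
    """Plain left-to-right accumulation, as in a simple DO loop."""
    total = 0.0
    for v in values:
        total += v
    return total


# Regrouping three terms changes the last bit of the result.
left_to_right = (0.1 + 0.2) + 0.3
regrouped = 0.1 + (0.2 + 0.3)

# Reordering a sum with large cancellation changes the result entirely.
original_order = running_sum([1.0e16, 1.0, -1.0e16, 1.0])
reordered = running_sum([1.0e16, -1.0e16, 1.0, 1.0])
reference = math.fsum([1.0e16, 1.0, -1.0e16, 1.0])  # correctly rounded sum

print(left_to_right == regrouped)
print(original_order, reordered, reference)
```

In a long time integration such differences accumulate, which is why results obtained at a high optimization level should be compared against a conservative build.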

Integration of optimized code components into a complete code is carried out by language bridging tools like f2py, maintaining the identity with the original Python algorithm throughout development.

Stage 3: Final Fortran Implementation. The validated, optimized Fortran implementation must be prepared for production execution. This requires elimination of temporary bridging layers and implementation of the complete I/O and workflow driver in the target language.

Architecture-specific tuning becomes necessary. Compiler flags and preprocessing options vary significantly across CPU families and accelerator platforms. Portability across disparate hardware requires systematic testing on target systems.

GPU acceleration based on directive-based approaches such as OpenACC provides vendor portability at the cost of the constraints of the data access pattern. Graphics processing units impose strict requirements on the memory layout and access patterns. Numerical results may differ due to floating-point rounding differences between CPU and GPU execution. This variation must be quantified and accepted or mitigated.

Distributed-memory parallelization through message passing introduces challenges not encountered in shared-memory optimization. Domain decomposition strategies fundamentally affect communication overhead and load balance. Scaling must be studied and must demonstrate acceptable parallel efficiency before the code is used for production computing.

Besides meeting performance prerequisites, production codes must satisfy reliability requirements. Regular checkpointing enables recovery from hardware failures. Comprehensive logging of iterations, residuals, memory consumption, and conserved quantities enables post-simulation control. Input validation and comprehensive error handling, including that for numerical overflow or underflow, prevent silent corruptions.

Stage 4: Maintenance and Evolution. Sustained use of production codes may reveal additional physics that must be implemented. Rather than being added ad hoc, new models can be integrated following the methodology described above: physics prototyping in Python, symbolic derivation where applicable, automated Fortran implementation and systematic validation, so that extensions maintain the code quality. The modular architecture simplifies the integration of new functionality.

Our approach addresses the following issues of HPC code development:

Development time: Validation in Python may be orders of magnitude faster than implementing tests directly in Fortran.

Correctness: Symbolic generation guarantees mathematical equivalence between the problem specification and the implemented algorithm, eliminating the respective errors.

Maintainability: Production code is pure Fortran with no external dependencies; this simplifies deployment, porting and long-term maintenance.

Performance: The final Fortran code achieves high performance; it is not degraded by runtime overheads from interpreted languages or FFI (Foreign Function Interface) calls.

The main contributions of this work are as follows:

A formalized four-stage development methodology for producing high-performance production-ready Fortran code by employing Python prototyping, symbolic mathematics and LLM assistance.

A critical assessment of where and how LLMs can accelerate development (Python prototyping, wrapper generation, code review) and where symbolic methods or expert development is essential (core code components).

Practical guidelines for implementing this workflow, including best practices for validation, testing and ensuring reproducibility of the final Fortran code.

We have validated the proposed strategy in a full-scale application while developing a Fortran code for simulating convective magnetic dynamo (see a discussion of some key fragments of these algorithms in [7]).

While our methodology focuses on the explicit transition from Python to Fortran, it is important to contextualize it within the broader landscape of automatic code generation and optimization for high-performance systems. A large body of previous research has explored various specification and code generation approaches, such as LIFT, MDH (Multi-Dimensional Homomorphisms), SkelCL, and PACXX [8,9,10,11]. Concurrently, significant advancements have been made in auto-tuning frameworks like ATF (Auto-Tuning Framework) and Schedgehammer [12,13], alongside optimization strategies such as Elevate, OCAL, and dOCAL [14,15,16]. While these sophisticated frameworks excel at automating hardware-specific transformations and performance portability, they often introduce opaque abstraction layers. Our methodology, in contrast, prioritizes a transparent, “white-box” approach, explicitly retaining native Fortran code to ensure long-term maintainability and manual control over architectural parallelization.

The paper is organized as follows.

Section 2 explores the contemporary landscape of high-performance computing and argues why Fortran remains the standard for numerical simulation.

Section 3 discusses symbolic computation as a tool for automatically generating correct numerical code.

Section 4 details the role of Python prototyping in enabling rapid algorithmic exploration and validation before committing to compiled implementation.

Section 5 examines the usage of LLMs within this workflow, showing both capabilities and limitations. Final remarks (Section 6) conclude the paper.

2. Fortran in Production HPC Systems

2.1. Technical Advantages of Fortran for Numerical Computing

Fortran remains the dominant language for production-scale HPC codes due to fundamental technical advantages rooted in its design philosophy.

Restrictive aliasing semantics. Unlike C/C++, Fortran by default lets the compiler assume that procedure arguments do not alias one another [1]. Compilers use this to apply aggressive optimizations (vectorization, loop fusion, interprocedural analysis) without expensive alias analysis. This yields performance gains for array-centric codes.

Modern language features. Modern Fortran (2008+) provides explicit parallelization constructs (DO CONCURRENT, PURE/ELEMENTAL procedures) that enable automated compiler optimization while maintaining compatibility with standard parallelization libraries (OpenMP, MPI, OpenACC).

Mature infrastructure. Decades of development have resulted in highly optimized compilers (Intel, GNU, Cray), standardized libraries (BLAS, LAPACK, FFTW) and vendor-tuned implementations for diverse architectures.

2.2. Alternative Languages: Strengths and Limitations

C and C++ achieve comparable performance to Fortran through careful coding, but permissive pointer aliasing rules require greater explicit effort from the developer to enable aggressive compiler optimization.

Python excels for rapid prototyping, workflow management and data analysis, but executes 10–100 times slower than compiled code for numerically intensive operations [17]. Thus, Python is useful for algorithm development but unsuitable as the primary language for production HPC codes.
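The gap between interpreted and compiled execution can be observed without leaving Python, because NumPy array expressions run in compiled loops. The sketch below is an illustration under stated assumptions: the kernel (a scaled vector addition), the array length and the function names are hypothetical, and the measured ratio depends on the machine and library versions.

```python
import timeit

import numpy as np


def axpy_loop(a, x, y):
    """Interpreted element-by-element evaluation of a*x + y on lists."""
    return [a * xi + yi for xi, yi in zip(x, y)]


def axpy_numpy(a, x, y):
    """Same operation, executed by NumPy's compiled inner loops."""
    return a * x + y


n = 100_000
x = np.linspace(0.0, 1.0, n)
y = np.ones(n)
x_list, y_list = x.tolist(), y.tolist()

t_loop = min(timeit.repeat(lambda: axpy_loop(2.0, x_list, y_list), number=5, repeat=3))
t_numpy = min(timeit.repeat(lambda: axpy_numpy(2.0, x, y), number=5, repeat=3))

print(f"interpreted / compiled time ratio: {t_loop / t_numpy:.1f}")
```

Both variants return the same values; only the place where the loop executes differs.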

Julia is attractive for exploratory research, combining dynamic syntax with JIT compilation and built-in distributed parallelism. However, production HPC codes in Julia remain limited due to runtime dependencies and limited compiler support for advanced hardware architectures.

Our methodology specifically targets the Python–Fortran stack for pragmatic reasons: the scientific community maintains a massive repository of highly optimized, heavily verified legacy Fortran codes that cannot be trivially rewritten. Research groups developing large-scale simulations typically extend and reuse these codebases over decades, while the composition of the team evolves; a single well-established compiled language for production code ensures continuity and reduces the onboarding effort for incoming members. At the same time, Python remains the ubiquitous foundational language for scientific prototyping. Furthermore, fully automated translation of distributed-memory parallel structures (such as MPI) remains an unsolved challenge across all high-level languages. By generating transparent “white-box” Fortran kernels, our approach shifts the global MPI domain decomposition towards the architectural level, allowing developers to manually apply industry-standard distributed parallelism around the generated native code.

2.3. Production HPC Codes: The Fortran Standard

Large-scale production codes consistently employ Fortran for performance-critical components. Representative examples include the following:

GESTS: Fortran 90/95 pseudospectral DNS code allowing $32{,}768^3$ grid resolution with GPU acceleration [3]

Python–Fortran hybrids: Research codes managing high-level workflows in Python while implementing numerically intensive code components in Fortran [7]

This preference reflects fundamental advantages: (1) aggressive compiler optimization for array-centric algorithms; (2) proven scalability to exascale systems; (3) long-term stability and vendor support.

2.4. Strategic Hybrid Approach

Recognizing this reality, we advocate a hybrid methodology: use Python and symbolic mathematics to rapidly develop and validate algorithms, then implement production HPC code in Fortran. This combines Python’s development productivity with Fortran’s execution performance.

3. Symbolic Code Generation: From Mathematics to Correct Implementation

A problem in numerical code development is the gap between mathematical specification and executable implementation. Translating concise mathematical expressions to efficient, correct, numerically stable code requires careful handling of edge cases. Manual translation is often prone to errors.

Symbolic computation systems bridge this gap by automating the translation from mathematical specification to executable code. Systems like SymPy (Python-based), Maple and Mathematica (Wolfram Language) offer capabilities to:

Define mathematical expressions symbolically;

Perform symbolic differentiation, integration and algebraic manipulation;

Automatically generate optimized source code in the desired language (Fortran, C, C++);

Verify properties of the generated code (the truncation error, stability regions, conservation properties).

3.1. Why Symbolic Generation Matters for HPC Code

The benefits are substantial for scientific computing:

Guaranteed correctness. Code generated from a symbolic specification is mathematically correct by construction—it is equivalent to the specification by design. This eliminates entire classes of errors (algebraic mistakes, transcription errors, sign errors) that plague manual derivation and coding.

Reduced development time. Deriving a complex finite-difference stencil manually might require hours of algebraic manipulation. Symbolic systems accomplish this in seconds. For specialized schemes with specific properties (positivity-preserving, energy-stable, etc.), the time savings are significant.

Exploration of alternatives. Developers can easily explore multiple discretization schemes, orders of accuracy or boundary condition implementations without proportionally increasing manual effort.

Optimized code generation. Symbolic systems apply optimizations during code generation: common subexpression elimination (identifying and reusing repeated calculations), operation count minimization and usage of specialized mathematical functions. The generated code may outperform manually written implementations.

Reproducibility and verification. The symbolic specification serves as precise, machine-verifiable documentation. The connection between mathematics and implementation is explicit and verifiable.
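Common subexpression elimination, mentioned among the benefits above, is available in SymPy as `cse`. The following sketch applies it to two hypothetical expressions that share the factor (x² + y²)^(-3/2); the expressions are illustrative and do not come from the code discussed in this paper.

```python
import sympy as sp

x, y = sp.symbols("x y", positive=True)

# Two components of an inverse-square-type field share a costly factor.
r_cubed = (x**2 + y**2) ** sp.Rational(3, 2)
expressions = [x / r_cubed, y / r_cubed]

# cse returns (list of (temporary, subexpression), list of reduced expressions).
replacements, reduced = sp.cse(expressions)

for temp, sub in replacements:
    print(temp, "=", sub)
for k, expr in enumerate(reduced):
    print(f"component {k} =", expr)
```

Each temporary is evaluated once and reused, which is the transformation a code generator applies before emitting Fortran assignments.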

3.2. Workflow: From Mathematics to Production Code

We propose the following workflow:

Step 1: Symbolic specification. The researcher defines the problem in mathematical terms. For example, to implement a finite-difference discretization of a PDE, the researcher specifies the differential operator, boundary conditions and desired accuracy order. The specification is expressed in symbolic form in a computer algebra system.

Step 2: Symbolic derivation. The algebra system performs the necessary manipulations such as Taylor series expansions, algebraic simplification, error analysis and derivation of the discrete approximation. This step is mathematically precise and produces closed-form expressions (for the discrete scheme in the above example).

Step 3: Code generation. The algebra system translates the generated expressions into Fortran (or C/C++) code. This step involves optimizing for the target language and taking account of the numerical properties (avoiding cancellation errors, managing precision).

Step 4: Verification. The generated code is validated: test runs confirm it implements the specification correctly, convergence studies verify the order of accuracy, and performance profiling ensures the efficiency. Because the code was generated from a verified specification, these tests typically do not require reiterating the previous steps.

Step 5: Integration. The generated Fortran code is integrated into the production system. Because it is pure Fortran with no external dependencies, integration is straightforward.
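Steps 1 and 2 of this workflow can be sketched with SymPy for the simplest case, a central difference for the first derivative. This is a minimal sketch under assumptions: the three-point stencil, the symbols `f0` to `f4` standing for a function value and its first four derivatives, and the helper `taylor` are chosen for illustration only.

```python
import sympy as sp

h = sp.symbols("h", positive=True)
offsets = [-1, 0, 1]

# Weights of the first-derivative stencil on the points x-h, x, x+h.
weights = sp.finite_diff_weights(1, offsets, 0)[1][-1]

# f0..f4 stand for f(x), f'(x), ..., f''''(x) at the expansion point.
d = sp.symbols("f0:5")


def taylor(offset):
    """Taylor polynomial of f(x + offset*h) up to fourth order."""
    return sum(d[k] * (offset * h) ** k / sp.factorial(k) for k in range(5))


# Apply the stencil to the Taylor polynomials and divide by the spacing.
approximation = sp.expand(
    sum(w * taylor(o) for w, o in zip(weights, offsets)) / h
)

# Whatever remains after subtracting f'(x) is the truncation error.
truncation_error = sp.expand(approximation - d[1])

print("weights:", weights)
print("leading truncation error:", truncation_error)
```

The result confirms both the stencil weights and the second-order accuracy, so the order expected in the later convergence study is known before any numerical code is written.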

3.3. Limitations and Practical Considerations

Symbolic generation is powerful but not universal:

Algorithms with complex control flow. Codes involving conditional logic, adaptive refinement or complex data structures may be difficult to express symbolically. Symbolic systems excel at mathematical expressions, less so at procedural algorithms.

Domain-specific optimizations. Hardware-specific optimizations (cache blocking, vectorization strategies, memory layout) are unlikely to be automatically captured by symbolic code generation. Expert tuning may be necessary to achieve the highest performance.

Hybrid approach. In practice, symbolic generation is often combined with manual coding: formulae components (stencils, derivatives, matrix operations, etc.) are generated symbolically, while operation management, I/O and complex logic are developed manually or with LLM assistance.

3.4. Integration with Python Prototyping

The connection between Python prototyping and symbolic generation is natural:

The researcher first develops a Python prototype to validate the overall algorithm structure: input/output handling, parameter management, convergence behavior, etc. Once the Python prototype is verified to be algorithmically correct, profiling identifies computationally intensive code fragments.

For each subproblem expressed as a mathematical formula, the symbolic specification is derived from the Python prototype. A symbolic system generates the Fortran code. The researcher verifies that the Fortran-generated code produces numerically identical results to the Python prototype (within the floating-point tolerance), then replaces the Python code with the generated Fortran code.

This approach ensures that the final Fortran code is both mathematically correct (by symbolic construction) and algorithmically sound (validated by Python prototype).

4. Stage 1: Rapid Prototyping in Python

Python has emerged as the de facto language for scientific algorithm development, not by replacing Fortran but by occupying a different niche. Where Fortran excels at execution, Python excels at development. This section describes Python’s role as a development tool in the four-stage methodology—a place to validate ideas before committing to production Fortran implementation.

4.1. Why Use Python for Prototyping

Rapid iteration. Python as an interpreted language eliminates the compile–link–run cycle. Algorithm changes are immediately testable within one interactive session. This tight feedback helps to clarify the subtleties of the problem, particularly in exploratory research where the final algorithm is unknown at the outset.

Extensive scientific libraries. NumPy, SciPy, and scikit-learn provide thousands of reliable numerical algorithms, data structures and utilities. Researchers can focus on domain-specific logic rather than reimplementing standard components. Matplotlib (version 3.8) and Plotly (version 5.9) enable immediate visualization of results, which helps to build insight.

Dynamic typing and flexibility. Python’s dynamic type system and flexible data structures reduce the syntactic overhead of implementation. Complex algorithms can be expressed concisely with less typing compared to statically-typed compiled languages.

Interactive development environment. Jupyter notebooks combine code, narrative, visualization, and results in a single document. This environment is ideal for exploratory research, documentation, and knowledge dissemination.

Infrastructure and community. Python’s scientific community has created extensive tools and tutorials and has established best practices. Finding examples or solutions to common problems is straightforward.

4.2. Python’s Role as a Development Tool

Python serves as a temporary development tool in this methodology—not a component of the final production system. The key principles are as follows:

Validation, not production. Python is used to validate that an algorithm works, i.e., produces correct results for test cases, converges to expected solutions and shows the expected numerical behavior. Once validation is complete, the algorithm is translated into Fortran.

Algorithmic clarity over performance. Python implementations prioritize clarity and correctness over performance. Loop structure, conditionals and data organization are optimized for human understanding, not execution speed. This helps identify algorithmic issues that might be obscured by optimizations.

No runtime dependencies in production. The final production code is pure Fortran. Thus, all Python drawbacks, such as the interpreter dependency, FFI (Foreign Function Interface) overhead or runtime performance penalty from Python bindings, are circumvented.

Parallel development. During Python prototyping, working simultaneously on different aspects is possible. Symbolic derivation and Fortran implementation of different constituent program units can also proceed in parallel.

4.3. The Python Prototyping Workflow

Typically, one proceeds as follows:

Step 1: Algorithm sketch. The researcher sketches the algorithm in Python, using NumPy arrays and standard Python control structures. The focus is on capturing the algorithmic logic clearly.

Step 2: Test case development. Simple test cases are implemented, e.g., analytical solutions for verification, extreme cases for robustness checking, convergence studies for accuracy validation.

Step 3: Profiling and optimization. Python profilers (cProfile, line_profiler) identify which functions consume the most execution time. Typically, 80–90% of time is spent in 2–3 functions, which become candidates for optimization (e.g., by means of the symbolic generation).

Step 4: Comparison against reference implementations. If existing reference implementations are available (from established libraries or prior work), numerical results are compared. This validates not just the correctness, but the overall numerical behavior (such as stability and accuracy).
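The profiling step can be illustrated with the standard-library `cProfile` and `pstats` modules. In the sketch below the functions `hot_kernel`, `cheap_setup` and `run_prototype` are hypothetical stand-ins for a prototype; only the profiling calls themselves are the point.

```python
import cProfile
import io
import pstats


def hot_kernel(n):
    """Stand-in for a numerically intensive routine."""
    total = 0.0
    for i in range(n):
        total += (i % 7) * 0.5
    return total


def cheap_setup(n):
    """Stand-in for inexpensive initialization."""
    return list(range(n))


def run_prototype():
    cheap_setup(1_000)
    return hot_kernel(200_000)


def profile_report(func, top=5):
    """Run func under cProfile and return the top entries by cumulative time."""
    profiler = cProfile.Profile()
    profiler.runcall(func)
    stream = io.StringIO()
    stats = pstats.Stats(profiler, stream=stream)
    stats.sort_stats("cumulative").print_stats(top)
    return stream.getvalue()


report = profile_report(run_prototype)
print(report)
```

Functions that dominate the cumulative time column are the candidates for migration to Fortran; the remainder can stay in Python until later in the workflow.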

4.4. LLM Assistance in Python Prototyping

Large Language Models like ChatGPT (GPT-5.2) can accelerate Python prototyping. The researcher can request the following:

Initial implementation from specification. Given a mathematical description or pseudocode, an LLM can generate a Python skeleton implementing the algorithm.

Standard component generation. LLMs reliably generate common patterns: parameter parsers, file I/O, data structure initializations, standard preprocessing steps. The researcher specifies the structure and LLMs fill in the details.

Test case generation. LLMs can generate reasonable test suites: edge cases, boundary conditions, convergence studies. Based on the domain knowledge, the researcher refines the LLM-generated starting point for tests.

Debugging assistance. When algorithm behavior is unexpected, LLMs can suggest potential issues and propose diagnostic approaches.

The key point is that the LLM assistance accelerates the Python development. The researcher remains in full control, verifying all results and making algorithmic decisions.

4.5. Transition from Python to Fortran

Once the Python prototype is verified, the transition to Fortran production code proceeds by (preferably) automatic interlanguage translation, or by careful manual translation. The Python code then serves three purposes:

Correctness baseline. Fortran code is validated to produce results that are numerically identical (within the floating-point tolerance) to those of the Python prototype on test cases.

Performance reference. Python execution time establishes a baseline, against which Fortran performance is measured to assess how effective optimization efforts are.

Documentation. The Python prototype remains a part of the project documentation, explaining the algorithm in a concise executable form.

This transition preserves the benefits of Python’s clarity and enables Fortran performance of the production code.
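A correctness baseline of this kind needs an explicit tolerance. The following sketch shows one possible comparison helper; the function names, the 64-unit round-off budget and the stand-in arrays (which take the place of prototype and wrapped Fortran outputs) are assumptions, not part of the methodology itself.

```python
import numpy as np


def max_relative_difference(reference, candidate):
    """Largest pointwise difference, scaled by the largest reference value."""
    reference = np.asarray(reference, dtype=np.float64)
    candidate = np.asarray(candidate, dtype=np.float64)
    scale = max(float(np.max(np.abs(reference))), np.finfo(np.float64).tiny)
    return float(np.max(np.abs(candidate - reference))) / scale


def agrees_to_roundoff(reference, candidate, ulps=64):
    """True if the two results differ by at most `ulps` machine epsilons."""
    limit = ulps * np.finfo(np.float64).eps
    return max_relative_difference(reference, candidate) <= limit


# Stand-ins: the same quantity evaluated along two arithmetic paths.
x = np.linspace(1.0, 2.0, 1001)
prototype_result = x
ported_result = np.sqrt(x) * np.sqrt(x)   # differs only by rounding
faulty_result = x + 1.0e-6                # a genuine discrepancy

print(agrees_to_roundoff(prototype_result, ported_result))
print(agrees_to_roundoff(prototype_result, faulty_result))
```

Scaling by the largest reference value avoids spurious failures at points where the field passes through zero.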

Iterative Hybrid Execution

A crucial operational advantage of the proposed methodology is that the transition from Python to Fortran is not a monolithic, blocking step, but rather an iterative, parallelizable workflow. The researcher does not need to wait for the entire codebase to be translated into Fortran to begin large-scale computations.

Once the Python prototype is validated, profiling identifies the most computationally intensive subroutines. Instead of translating the entire application into Fortran, the researcher can opt to first translate only the most time-consuming (or other resource-consuming) subroutines (often applying symbolic generation). These native Fortran subroutines are then wrapped back into the Python environment using tools like f2py, which requires minimal developmental overhead (typically a matter of minutes).

Consequently, the researcher can launch actual production-scale scientific calculations using this hybrid setup. Concurrently, while the computations are running, the developer continues the incremental translation of the remaining Python components into Fortran. This iterative cycle effectively overlaps the computation time with the development time, drastically reducing the time to obtaining the first research result.

Ultimately, this iterative replacement process converges to the final Stage 3: a complete, standalone, transparent “white-box” Fortran application. At this stage, having bypassed black-box frameworks, the developer has full, convenient access to the native Fortran code to implement and optimize advanced distributed-memory parallelization (such as MPI) precisely tailored to the specific numerical algorithms.

4.6. Illustrative Example

To demonstrate the proposed methodology, we consider a core computational kernel from magnetohydrodynamic (MHD) simulations: evaluation of $\nabla \times (\mathbf{v} \times \mathbf{B})$, the leading term in the magnetic induction equation

$$\frac{\partial \mathbf{B}}{\partial t} = \nabla \times (\mathbf{v} \times \mathbf{B}) + \eta \nabla^2 \mathbf{B},$$

where $\mathbf{v}$ is the flow velocity, $\mathbf{B}$ is the magnetic field and $\eta$ is the magnetic diffusivity.

This kernel is representative of the most error-prone and computationally demanding class of operations in spectral and finite-difference MHD codes (see, e.g., [18,19,20,21]): it combines a nonlinear vector product with spatial differentiation, involves multiple array dimensions and stencil neighbors, and offers a nontrivial optimization opportunity that can only be revealed through symbolic analysis. We trace each stage of the methodology below.

4.6.1. Stage 1: Python Prototype

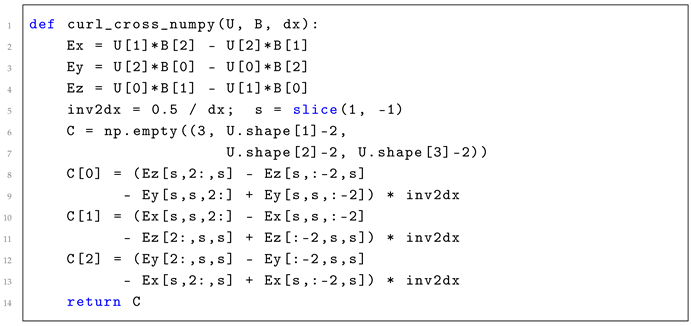

The NumPy implementation follows the mathematical definition directly (Listing 1): first compute the cross product $\mathbf{v} \times \mathbf{B}$ (allocating three temporary arrays), then apply the 2nd-order central difference curl operator to the result.

Listing 1. NumPy prototype for $\nabla \times (\mathbf{v} \times \mathbf{B})$.
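For orientation, a prototype of this kind can be sketched as follows. This is not the authors' listing: the periodic boundary treatment via `np.roll`, the array shape (3, n, n, n), the function names and the simple test fields (a uniform velocity along x and a field B = (0, sin x, 0), for which the exact result is (0, -cos x, 0)) are all assumptions made to keep the example self-contained.

```python
import numpy as np


def central_diff(f, axis, h):
    """2nd-order central difference along one axis (periodic wrap-around)."""
    return (np.roll(f, -1, axis=axis) - np.roll(f, 1, axis=axis)) / (2.0 * h)


def curl_of_cross(v, B, h):
    """curl(v x B) for fields of shape (3, n, n, n) on a uniform grid."""
    # Step 1: cross product E = v x B (three temporary arrays).
    Ex = v[1] * B[2] - v[2] * B[1]
    Ey = v[2] * B[0] - v[0] * B[2]
    Ez = v[0] * B[1] - v[1] * B[0]
    # Step 2: central-difference curl of E.
    cx = central_diff(Ez, 1, h) - central_diff(Ey, 2, h)
    cy = central_diff(Ex, 2, h) - central_diff(Ez, 0, h)
    cz = central_diff(Ey, 0, h) - central_diff(Ex, 1, h)
    return np.stack([cx, cy, cz])


def mms_error(n):
    """Maximum error against the analytic result for the test fields."""
    h = 2.0 * np.pi / n
    x = np.arange(n) * h
    X, _, _ = np.meshgrid(x, x, x, indexing="ij")
    zero, one = np.zeros_like(X), np.ones_like(X)
    v = np.stack([one, zero, zero])           # uniform flow along x
    B = np.stack([zero, np.sin(X), zero])     # B = (0, sin x, 0)
    exact = np.stack([zero, -np.cos(X), zero])
    return float(np.max(np.abs(curl_of_cross(v, B, h) - exact)))


err_coarse, err_fine = mms_error(16), mms_error(32)
order = np.log2(err_coarse / err_fine)
print(f"errors: {err_coarse:.3e}, {err_fine:.3e}; observed order: {order:.2f}")
```

Halving the grid spacing should reduce the error by a factor close to four, consistent with a second-order scheme.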

The prototype is validated by the Method of Manufactured Solutions with analytically prescribed test fields. Table 1 confirms the expected second-order convergence of the central difference scheme.

4.6.2. Stage 2: Symbolic Derivation and Code Generation

Using SymPy, we derive the curl of the cross product from first principles. For the manufactured test fields, this yields the analytical reference solution, which is used to compute the errors in Table 1.

Crucially, the symbolic analysis reveals the key optimization: the cross product and the curl can be fused into a single loop pass. Each interior grid point requires 12 cross-product evaluations at stencil neighbors (4 per curl component), totaling 48 floating-point operations, but no intermediate arrays. This eliminates three temporary allocations and reduces memory traffic. This optimization cannot be expressed in NumPy’s array-at-a-time execution model; it emerges naturally from the symbolic representation of the fused operator.
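The structure of the fused computation can be sketched in Python, even though the explicit loop is far too slow for production use and only mirrors what a compiled kernel would do. The sketch covers the x-component only, skips boundary points in y and z, and uses hypothetical function names and random test data; it is an illustration, not the generated kernel.

```python
import numpy as np


def unfused_curl_x(v, B, h):
    """Reference: form temporaries Ey, Ez first, then difference them."""
    Ey = v[2] * B[0] - v[0] * B[2]
    Ez = v[0] * B[1] - v[1] * B[0]
    out = np.zeros_like(Ey)
    out[:, 1:-1, 1:-1] = (
        (Ez[:, 2:, 1:-1] - Ez[:, :-2, 1:-1])
        - (Ey[:, 1:-1, 2:] - Ey[:, 1:-1, :-2])
    ) / (2.0 * h)
    return out


def fused_curl_x(v, B, h):
    """Single pass: cross products are evaluated at the stencil neighbors."""
    vx, vy, vz = v
    Bx, By, Bz = B
    nx, ny, nz = vx.shape
    out = np.zeros((nx, ny, nz))
    for i in range(nx):
        for j in range(1, ny - 1):
            for k in range(1, nz - 1):
                # Four cross-product evaluations, no temporary arrays.
                ez_jp = vx[i, j + 1, k] * By[i, j + 1, k] - vy[i, j + 1, k] * Bx[i, j + 1, k]
                ez_jm = vx[i, j - 1, k] * By[i, j - 1, k] - vy[i, j - 1, k] * Bx[i, j - 1, k]
                ey_kp = vz[i, j, k + 1] * Bx[i, j, k + 1] - vx[i, j, k + 1] * Bz[i, j, k + 1]
                ey_km = vz[i, j, k - 1] * Bx[i, j, k - 1] - vx[i, j, k - 1] * Bz[i, j, k - 1]
                out[i, j, k] = ((ez_jp - ez_jm) - (ey_kp - ey_km)) / (2.0 * h)
    return out


rng = np.random.default_rng(0)
v = rng.standard_normal((3, 6, 6, 6))
B = rng.standard_normal((3, 6, 6, 6))
difference = float(np.max(np.abs(fused_curl_x(v, B, 0.1) - unfused_curl_x(v, B, 0.1))))
print("max difference between fused and unfused:", difference)
```

The fused variant reads each input value directly where the stencil needs it, which is the property a compiled loop nest exploits to avoid writing and re-reading the intermediate cross-product arrays.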

4.6.3. Stages 3–4: Fortran Generation, Validation and Performance

The Fortran subroutine generated from the symbolic expressions implements the fused computation with OpenMP parallelization (Listing 2; only the x-component of the curl is shown, the other two are analogous).

Listing 2. Generated Fortran kernel: fused $\nabla \times (\mathbf{v} \times \mathbf{B})$ (x-component).

The Fortran kernel is produced in two steps: SymPy generates the stencil arithmetic from the symbolic operator via IndexedBase/fcode(), and then the subroutine skeleton (loops, OpenMP, f2py) is assembled with LLM assistance. Future modifications begin as changes to the SymPy specification and propagate to Fortran through the same generation script (Section 4.5).
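The first of these two steps can be sketched with SymPy's `IndexedBase` and `fcode`. The script below is an assumption-laden illustration rather than the generation script used here: the array names `vx` to `Bz`, the target name `curl_x` and the choice of free-form Fortran 95 output are hypothetical, and only the x-component is produced.

```python
import sympy as sp

# Integer grid indices and the grid spacing.
i, j, k = sp.symbols("i j k", integer=True)
h = sp.Symbol("h", positive=True)

# Indexed arrays standing for the velocity and magnetic field components.
vx, vy, vz, Bx, By, Bz = (
    sp.IndexedBase(name) for name in ("vx", "vy", "vz", "Bx", "By", "Bz")
)


def Ey(a, b, c):
    """y-component of v x B at grid point (a, b, c)."""
    return vz[a, b, c] * Bx[a, b, c] - vx[a, b, c] * Bz[a, b, c]


def Ez(a, b, c):
    """z-component of v x B at grid point (a, b, c)."""
    return vx[a, b, c] * By[a, b, c] - vy[a, b, c] * Bx[a, b, c]


# Fused x-component of the curl: central differences of Ez in y and Ey in z.
curl_x = ((Ez(i, j + 1, k) - Ez(i, j - 1, k))
          - (Ey(i, j, k + 1) - Ey(i, j, k - 1))) / (2 * h)

# contract=False: emit a single assignment, without generated loops.
code = sp.fcode(curl_x, assign_to="curl_x", source_format="free",
                standard=95, contract=False)
print(code)
```

The printed assignment contains only array references at stencil neighbors, so the loop nest, OpenMP directives and wrapper interface can be written around it without touching the arithmetic.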

The generated Fortran code is a transparent “white-box”: the developer retains full control and can directly insert shared-memory directives (!$omp parallel do) or adapt the loop structure for architecture-specific tuning. For distributed-memory parallelization, the transparent index arithmetic of the generated kernel allows the developer to determine the required halo width directly from the stencil and implement the domain decomposition precisely—no framework introspection is needed.

Validation. Compiled with gfortran -O3 -fopenmp and wrapped via f2py, the kernel is validated against the Python prototype on random data: the maximum pointwise difference is at the level of floating-point round-off, confirming numerical equivalence at machine precision. Both implementations reproduce the MMS solution with identical discretization error (Table 1).

Performance. Table 2 summarizes the benchmark (Apple M3 Pro, 11 cores; gfortran 14.2 with -O3 -fopenmp; wall-clock time averaged over 5 iterations, excluding initialization). The NumPy prototype is already fully vectorized, yet the generated Fortran kernel is faster (the measured speedup is reported in Table 2). The gain originates entirely from loop fusion: eliminating the three temporary arrays and reducing memory traffic. On multi-socket HPC nodes with larger core counts and lower memory-bandwidth-per-core ratios, the advantage of the fused kernel is expected to grow further.

This example illustrates the central thesis: Python prototyping rapidly produces a correct, validated baseline; symbolic derivation reveals architectural optimizations invisible at the Python level; and the generated Fortran kernel captures those optimizations in transparent, maintainable code that matches or exceeds hand-written performance.

4.7. Specialized Transpilation Tools

It is often possible to convert expressions that are results of symbolic computations using the tools of the respective symbolic system. For instance, in Mathematica, this can be accomplished by the built-in function FortranForm.

Several specialized tools have been developed for transpiling Python to compiled languages. While these tools vary in maturity and scope, they offer automated approaches to code translation.

The Py2F project represents an experimental research compiler that translates a subset of Python to Fortran 90/95, focusing on numerical code and basic control structures. Similarly, the NumPy to Fortran Translator (NFT) targets array operations specifically, recognizing common NumPy patterns and generating equivalent Fortran 2003+ code with array operations.

Despite these developments, current transpilation tools are subject to significant limitations when applied to complex scientific code. Challenges include handling Python’s dynamic typing, translating advanced control structures, managing external dependencies, and generating architecture-specific optimizations. Most research transpilers also suffer from limited maintenance and incomplete support for modern Python features.

5. Employing LLMs

Large Language Models represent a powerful tool for scientific software development—not a replacement for expertise, but a force multiplier that can accelerate development. Here, we examine where LLMs are helpful and where their limitations demand careful verification or alternative approaches.

5.1. LLM Capabilities and Performance

Some capabilities of modern LLMs (e.g., GPT-5.2, Claude Opus 4.6, and GitHub Copilot, especially when utilized within AI-assisted development environments like Cursor) are relevant to scientific development:

Code generation from specification. When provided with clear problem descriptions or pseudocode, LLMs can generate working implementations with success rates over 80% for well-defined problems [22]. For domain-specific tasks with extensive training data (standard algorithms, common patterns), accuracy is particularly high.

Language-to-language translation. LLMs can translate algorithms between programming languages. However, the quality varies significantly depending on how well each language is represented in the training data. Translation from Python to C++ is far more reliable than that from Python to Fortran, a critical asymmetry discussed below.

Wrapper and interface generation. LLMs are highly reliable for generating boilerplate code, such as f2py signatures and Fortran module interfaces. These patterns are well-represented in training data and LLMs generate correct code with high consistency.

Documentation and test support. LLMs can generate reasonable first-pass unit descriptions, comments and unit tests. Researchers review and refine the LLM output based on domain knowledge, but employing LLMs accelerates the process.

Iterative refinement. When provided with error messages or performance feedback, LLMs can suggest fixes. Success rates for refinement are lower than for initial generation (30–50% for code fixes [23]), but LLM assistance remains valuable in debugging.

5.2. The Python–Fortran Asymmetry in LLM Capabilities

The asymmetry between LLM capabilities for Python versus Fortran originates in fundamental differences in training data:

Python in training data. Python accounts for approximately 30–40% of the code in publicly available repositories and datasets used for LLM training. Python tutorials, blog posts, Stack Overflow answers, and academic examples saturate training corpora. Consequently, LLMs trained on these corpora generate Python code with high reliability.

Fortran scarcity in training data. Modern Fortran (Fortran 2003+) accounts for only 0.1–0.5% of training data. Most Fortran code in public repositories is legacy code written in Fortran 77 or 90. Contemporary Fortran features (modules, derived types, OOP extensions, co-arrays) are severely underrepresented in LLM training.

Consequence for Fortran code generation. Research explicitly examining Fortran code generation by LLMs reveals significant performance differences [24]. When tasked with 540 LeetCode problems translated to Fortran specifications, even specialized fine-tuned models achieved successful execution without human intervention on only 9% of problems [24]. LLMs generate invalid Fortran syntax, misuse module interfaces, confuse Fortran standards and create undefined symbol references [25].

Consequence for Python. By contrast, LLMs achieve 65–84% accuracy on comparable Python problems [26, 27]. For Python code with sufficient specifications, LLM assistance is highly effective.

5.3. Strategic Implications: Three Zones of LLM Usage

This asymmetry suggests a clear strategy for LLM usage in the proposed four-stage methodology:

Zone 1: Python prototyping. LLMs are effective for accelerating Python development. LLMs can be used for:

Generating initial implementations from problem specifications;

Supporting tests and creating standard components;

Generating documentation and explanatory code comments.

In this zone, LLM-generated code is typically correct and productive. Researchers validate results against test cases or reference implementations, but extensive code review is usually unnecessary.

Zone 2: Wrapper and interface generation. LLMs are reliable for generating typical interface code: f2py directives, Fortran module signatures, type conversion code and similar code fragments whose standard structures are well-represented in training data.

In this zone, LLM output is often correct but requires validation by compilation and simple testing.

Zone 3: Production Fortran code units. LLMs are unreliable for generating production-grade Fortran code. Critical issues include:

Errors in modern Fortran syntax (invalid module declarations, incorrect interface syntax);

Confusion between Fortran standards (mixing Fortran 77 and Fortran 2003 features);

Type system errors (incorrect type declarations, array handling mistakes);

Undefined symbol references due to missing declarations or incorrect module usage.

LLMs should not be used for directly generating production Fortran code. The reliable tools include:

Symbolic code generation (SymPy, Maple, Mathematica) for mathematically derivable code;

Expert manual implementation of complex algorithms;

LLM assistance for code review and minor refinement.

5.4. Why This Asymmetry Validates the Four-Stage Methodology

The Python–Fortran asymmetry in LLM capabilities actually validates the four-stage methodology:

It confirms Python’s suitability for prototyping. The high LLM reliability for Python suggests that Python is the natural language for rapid algorithm development. LLMs and Python are well-matched, accelerating exploration and validation.

It demonstrates that developing Fortran production code requires specialized methods. LLM limitations for Fortran show that direct LLM-generated Fortran is unsuitable for production. Instead, production Fortran should be produced by one of the following, and not by direct LLM generation (insufficient reliability):

Symbolic code generation (guaranteed mathematical correctness);

Expert manual implementation (rigorous domain knowledge).

It justifies the separation of concerns. The methodology separates algorithm development (Python + LLM) from production implementation (Fortran + symbolic generation). This separation is not arbitrary but optimal, given current tool capabilities.

5.5. Best Practices for LLM-Assisted Development

When deploying LLMs in this workflow:

For Python prototyping:

Provide clear problem specifications;

Request modular, well-documented code with error handling;

Always validate generated code against test cases;

Refine LLM suggestions based on domain knowledge.
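A test case for a numerical prototype can be as simple as a convergence check against a manufactured solution. The sketch below assumes a periodic second-order central difference as the code under test and sin(x) as the manufactured solution; both are illustrative choices rather than part of the workflow described here.

```python
import numpy as np


def second_derivative(u, h):
    """Periodic second-order central difference (the code under test)."""
    return (np.roll(u, -1) - 2.0 * u + np.roll(u, 1)) / h**2


def observed_order(n_coarse=32):
    """Estimate the convergence order from the errors on two grids."""
    errors = []
    for n in (n_coarse, 2 * n_coarse):
        x = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
        h = x[1] - x[0]
        exact = -np.sin(x)  # second derivative of the manufactured solution sin(x)
        approx = second_derivative(np.sin(x), h)
        errors.append(np.max(np.abs(approx - exact)))
    # Halving h should reduce the error by a factor of 2**order.
    return float(np.log2(errors[0] / errors[1]))


order = observed_order()
print(f"observed order of accuracy: {order:.2f}")
assert abs(order - 2.0) < 0.1
```

A generated implementation that passes a pointwise comparison but fails such an order check usually contains a stencil or scaling error.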

For interface generation:

Provide examples of similar existing interfaces;

Request code that matches established patterns;

Validate by compilation and simple functional testing.

For Fortran code development:

Do not use LLM-generated code directly in production;

Use LLMs for code review and suggestions, not for primary generation;

Prefer symbolic generation for numerical code units;

Rely on expert developers for complex algorithms.

5.6. Future Evolution

As LLM training corpora evolve and specialized models emerge, we can foresee the following:

Improved Fortran support. Specialized LLMs trained specifically on Fortran code are likely to emerge, potentially improving the quality of generated Fortran code. However, the asymmetry (Python superior to Fortran) is likely to persist due to fundamental differences in the composition of the public code corpus.

Better error handling. Future LLMs may integrate compilation feedback more effectively, enabling iterative code refinement with higher success rates.

Domain-specific reasoning. LLMs augmented with domain-specific reasoning could potentially generate Fortran code respecting domain constraints (conservation laws, stability requirements, etc.).

For now, use LLMs strategically in Zones 1 and 2 (Python and interfaces), but rely on symbolic generation or expert development for Zone 3 (production Fortran).

6. Conclusions

We have presented a four-stage methodology (Python prototyping, symbolic code generation, Fortran implementation, and maintenance) for developing high-performance scientific software. The methodology reconciles competing demands by exploiting each tool’s strengths: Python for rapid development, symbolic systems for mathematical rigor, and Fortran for production performance. A practical demonstration on a magnetohydrodynamic kernel (Section 4.6) showed that the approach produces numerically equivalent results to hand-written Fortran while reducing development time from days to hours.

The observed asymmetry in LLM capabilities across languages—strong for Python, weak for modern Fortran—is not a limitation of the methodology but rather a validation of its design: by confining LLM usage to Python prototyping and interface generation (where reliability is high), and relying on symbolic generation for production Fortran kernels (where mathematical correctness is guaranteed by construction), the methodology is well-aligned with the current state of tool capabilities.

Future work. Several directions warrant further investigation. Systematic integration of auto-tuning frameworks (such as ATF [12]) with the generated Fortran code could automate architecture-specific optimization. Furthermore, as LLM capabilities for Fortran improve, the boundary between Zones 2 and 3 of LLM usage may shift, potentially enabling LLM-assisted generation of production Fortran under sufficiently rigorous verification protocols.

For the scientific computing community confronting the challenges of complexity, scale and reproducibility, this methodology offers a practical path toward developing production software that is both high-performance and maintainable.